
# Deep Q-Network (DQN)

---

In this notebook, you will implement a DQN agent with OpenAI Gym's LunarLander-v2 environment.

### 1. Import the Necessary Packages

```

import gym

import random

import torch

import numpy as np

from collections import deque

import matplotlib.pyplot as plt

%matplotlib inline

EXPERIMENT_NAME = "rainbow"

EXPERIMENT_DETAIL = "WiderVRangeEvenMoreAtoms"

```

### 2. Instantiate the Environment and Agent

Initialize the environment in the code cell below.

```

env = gym.make('LunarLander-v2')

env.seed(0)

print('State shape: ', env.observation_space.shape)

print('Number of actions: ', env.action_space.n)

```

Before running the next code cell, familiarize yourself with the code in **Step 2** and **Step 3** of this notebook, along with the code in `dqn_agent.py` and `model.py`. Once you have an understanding of how the different files work together,

- Define a neural network architecture in `model.py` that maps states to action values. This file is mostly empty - it's up to you to define your own deep Q-network!

- Finish the `learn` method in the `Agent` class in `dqn_agent.py`. The sampled batch of experience tuples is already provided for you; you need only use the local and target Q-networks to compute the loss, before taking a step towards minimizing the loss.

Once you have completed the code in `dqn_agent.py` and `model.py`, run the code cell below. (_If you end up needing to make multiple changes and get unexpected behavior, please restart the kernel and run the cells from the beginning of the notebook!_)

You can find the solution files, along with saved model weights for a trained agent, in the `solution/` folder. (_Note that there are many ways to solve this exercise, and the "solution" is just one way of approaching the problem, to yield a trained agent._)
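As a reference point for the `learn` step, the usual one-step DQN target is `r + gamma * max_a' Q_target(s', a')` for non-terminal transitions and just `r` for terminal ones, with a mean-squared-error loss against `Q_local(s, a)`. The sketch below spells this out with plain Python lists so it runs without PyTorch. The names `td_targets` and `mse_loss` are illustrative only and do not come from `dqn_agent.py`; the solution files may compute the loss differently.

```python
def td_targets(rewards, next_q_values, dones, gamma=0.99):
    """One-step DQN targets: r + gamma * max_a' Q_target(s', a') unless s' is terminal."""
    targets = []
    for r, q_next, done in zip(rewards, next_q_values, dones):
        bootstrap = 0.0 if done else gamma * max(q_next)
        targets.append(r + bootstrap)
    return targets


def mse_loss(predicted, targets):
    """Mean squared error between Q_local(s, a) and the TD targets."""
    return sum((p - t) ** 2 for p, t in zip(predicted, targets)) / len(targets)


# Hypothetical batch of two transitions; the second one ends the episode.
rewards = [1.0, -100.0]
next_q = [[0.5, 2.0, 1.0, 0.0], [3.0, 3.0, 3.0, 3.0]]  # Q_target(s', .) per sample
dones = [False, True]
q_taken = [2.5, -90.0]  # Q_local(s, a) for the actions actually taken

targets = td_targets(rewards, next_q, dones)
loss = mse_loss(q_taken, targets)
print(targets, loss)
```

In a PyTorch implementation the same arithmetic would typically be done on batched tensors, with the target network's output detached from the graph before it enters the loss.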

```

# Hyperparameters

hyperparams = {

'seed': 101,

'buffer_size': int(1e5),

'batch_size': 32,

'start_since': 3200,

'gamma': 0.99,

'target_update_every': 4,

'tau': 1e-3,

'lr': 1e-4,

'weight_decay': 0,

'update_every': 4,

'priority_eps': 1e-5,

'a': 0.5,

'n_multisteps': 3,

'v_min': -500,

'v_max': 500,

'clip': None,

'n_atoms': 501,

'initial_sigma': 0.1,

'linear_type': 'noisy'

}

# Training Parameters

train_params = {

'n_episodes': 2000, 'max_t': 1000,

'eps_start': 0., 'eps_end': 0., 'eps_decay': 0.,

'beta_start': 0.4, 'beta_end': 1.0

}

from dqn_agent import Agent

agent = Agent(state_size=8, action_size=4, **hyperparams)

```

### 3. Train the Agent with DQN

Run the code cell below to train the agent from scratch. You are welcome to amend the supplied values of the parameters in the function, to try to see if you can get better performance!

```

def dqn(n_episodes=2000, max_t=1000,
        eps_start=1.0, eps_end=0.01, eps_decay=0.995,
        beta_start=0., beta_end=1.0,
        continue_after_solved=True,
        save_name="checkpoint_dueling_solved.pth"):
    """Deep Q-Learning.

    Params
    ======
        n_episodes (int): maximum number of training episodes
        max_t (int): maximum number of timesteps per episode
        eps_start (float): starting value of epsilon, for epsilon-greedy action selection
        eps_end (float): minimum value of epsilon
        eps_decay (float): multiplicative factor (per episode) for decreasing epsilon
        beta_start (float): starting value of beta (used only with prioritized replay)
        beta_end (float): final value of beta, reached by linear increments per episode
        continue_after_solved (bool): keep training after the average score reaches 200
        save_name (str): file to which the weights are saved once the environment is solved
    """
    scores = []                        # list containing scores from each episode
    scores_window = deque(maxlen=100)  # last 100 scores
    eps = eps_start                    # initialize epsilon
    prioritized = hasattr(agent, 'beta') # if using prioritized experience replay, initialize beta
    if prioritized:
        print("Priority Used")
        agent.beta = beta_start
        beta_increment = (beta_end - beta_start) / n_episodes
    else:
        print("Priority Not Used")
    solved = False
    epi_str_max_len = len(str(n_episodes))
    for i_episode in range(1, n_episodes+1):
        state = env.reset()
        score = 0
        for t in range(max_t):
            action = agent.act(state, eps)
            next_state, reward, done, _ = env.step(action)
            agent.step(state, action, reward, next_state, done)
            state = next_state
            score += reward
            if done:
                break
        else: # if not done (reached max_t)
            agent.memory.reset_multisteps()
        scores_window.append(score)       # save most recent score
        scores.append(score)              # save most recent score
        eps = max(eps_end, eps_decay*eps) # decrease epsilon
        if prioritized:
            agent.beta = min(beta_end, agent.beta + beta_increment)
        print('\rEpisode {:>{epi_max_len}d} | Current Score: {:>7.2f} | Average Score: {:>7.2f} | Epsilon: {:>6.4f}'\
              .format(i_episode, score, np.mean(scores_window), eps, epi_max_len=epi_str_max_len), end="")
        if prioritized:
            print(' | A: {:>6.4f} | Beta: {:>6.4f}'.format(agent.a, agent.beta), end='')
        print(' ', end='')
        if i_episode % 100 == 0:
            print('\rEpisode {:>{epi_max_len}} | Current Score: {:>7.2f} | Average Score: {:>7.2f} | Epsilon: {:>6.4f}'\
                  .format(i_episode, score, np.mean(scores_window), eps, epi_max_len=epi_str_max_len), end='')
            if prioritized:
                print(' | A: {:>6.4f} | Beta: {:>6.4f}'.format(agent.a, agent.beta), end='')
            print(' ')
        if not solved and np.mean(scores_window)>=200.0:
            print('\nEnvironment solved in {:d} episodes!\tAverage Score: {:.2f}'.format(i_episode-100, np.mean(scores_window)))
            torch.save(agent.qnetwork_local.state_dict(), save_name)
            solved = True
            if not continue_after_solved:
                break
    return scores

scores = dqn(**train_params,
             continue_after_solved=True,
             save_name="experiment_{}_{}_solved.pth".format(EXPERIMENT_NAME, EXPERIMENT_DETAIL))

# plot the scores

plt.rcParams['figure.facecolor'] = 'w'

fig = plt.figure()

ax = fig.add_subplot(111)

plt.plot(np.arange(len(scores)), scores)

plt.ylabel('Score')

plt.xlabel('Episode #')

plt.show()

torch.save(agent.qnetwork_local.state_dict(), 'experiment_{}_{}_final.pth'.format(EXPERIMENT_NAME, EXPERIMENT_DETAIL))

agent.qnetwork_local.load_state_dict(torch.load('experiment_{}_{}_final.pth'.format(EXPERIMENT_NAME, EXPERIMENT_DETAIL)))

```
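The training loop uses two per-episode schedules: epsilon decays multiplicatively down to a floor, and beta (when prioritized replay is active) grows linearly towards its end value. The sketch below reproduces just that arithmetic so the curves can be inspected without running the agent. The function names are illustrative, and the defaults are the `dqn` defaults for epsilon and the `train_params` values for beta. Note that `train_params` in this notebook sets all three epsilon values to 0, presumably because the noisy linear layers handle exploration.

```python
def epsilon_schedule(n_episodes, eps_start=1.0, eps_end=0.01, eps_decay=0.995):
    """Epsilon used in each episode under per-episode multiplicative decay."""
    eps, values = eps_start, []
    for _ in range(n_episodes):
        values.append(eps)
        eps = max(eps_end, eps_decay * eps)
    return values


def beta_schedule(n_episodes, beta_start=0.4, beta_end=1.0):
    """Beta used in each episode under linear annealing."""
    increment = (beta_end - beta_start) / n_episodes
    beta, values = beta_start, []
    for _ in range(n_episodes):
        values.append(beta)
        beta = min(beta_end, beta + increment)
    return values


eps_values = epsilon_schedule(2000)
beta_values = beta_schedule(2000)
print(eps_values[0], eps_values[-1], beta_values[0], beta_values[-1])
```

With these defaults epsilon reaches its floor well before the last episode, while beta only approaches `beta_end` at the very end of training.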

### 4. Watch a Smart Agent!

In the next code cell, you will load the trained weights from file to watch a smart agent!

```

agent.qnetwork_local.noise(False)
for i in range(10):
    state = env.reset()
    score = 0
    for j in range(1000):
        action = agent.act(state)
        env.render()
        state, reward, done, _ = env.step(action)
        score += reward
        if done:
            break
    print("Game {} Score: {} in {} steps".format(i, score, j + 1))
agent.qnetwork_local.noise(True)
env.close()

```

### 5. Explore

In this exercise, you have implemented a DQN agent and demonstrated how to use it to solve an OpenAI Gym environment. To continue your learning, you are encouraged to complete any (or all!) of the following tasks:

- Amend the various hyperparameters and network architecture to see if you can get your agent to solve the environment faster. Once you build intuition for the hyperparameters that work well with this environment, try solving a different OpenAI Gym task with discrete actions!

- You may like to implement some improvements such as prioritized experience replay, Double DQN, or Dueling DQN!

- Write a blog post explaining the intuition behind the DQN algorithm and demonstrating how to use it to solve an RL environment of your choosing.

```

ACTION_TITLES = ["do nothing", "left engine", "main engine", "right engine"]

def plot_action_distributions(p):
    """Bar-plot the estimated return distribution of each action."""
    p = p.cpu().squeeze().numpy()
    supports = agent.supports.cpu().numpy()
    plt.rcParams['figure.facecolor'] = 'w'
    fig, axes = plt.subplots(2, 2, figsize=(8, 8))
    for i, ax in enumerate(axes.reshape(-1)):
        ax.grid(True)
        ax.set_ylabel("estimated probability")
        ax.set_xlabel("supports")
        ax.set_title(ACTION_TITLES[i])
        ax.bar(x=supports, height=p[i], width=5)
    plt.tight_layout()

def reset_env():
    state = torch.from_numpy(env.reset()).unsqueeze(0).cuda()
    with torch.no_grad():
        p = agent.qnetwork_local(state).softmax(dim=-1)
    action = np.argmax(agent.supports.mul(p).sum(dim=-1, keepdim=False).cpu().numpy())
    env.render()
    plot_action_distributions(p)
    return action

def step(action, n_steps):
    print(['nothing', 'left', 'main', 'right'][action])
    score_gained = 0
    for _ in range(n_steps):
        state, reward, done, _ = env.step(action)
        score_gained += reward
        with torch.no_grad():
            state = torch.from_numpy(state).unsqueeze(0).cuda()
            p = agent.qnetwork_local(state).softmax(dim=-1)
        action = np.argmax(agent.supports.mul(p).sum(dim=-1, keepdim=False).cpu().numpy())
        env.render()
        if done:
            print(done)
            break
    print(score_gained)
    plot_action_distributions(p)
    return action

action = reset_env()
action = step(action, 50)
env.close()
agent.qnetwork_local(torch.from_numpy(state).unsqueeze(0).cuda()).softmax(dim=-1).mul(agent.supports).sum(dim=-1)

```
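The cell above picks the greedy action from a distributional network: the logits for each action are turned into probabilities over a fixed set of return values (the supports), and the action value is the expectation of that distribution. The sketch below shows the same computation in plain Python. It assumes the supports are evenly spaced between `v_min` and `v_max` with `n_atoms` points, which is the usual categorical-DQN setup but is not shown in this notebook; the logits are made up for illustration.

```python
import math


def make_supports(v_min, v_max, n_atoms):
    """Evenly spaced return values (assumed layout of agent.supports)."""
    step = (v_max - v_min) / (n_atoms - 1)
    return [v_min + i * step for i in range(n_atoms)]


def softmax(logits):
    """Numerically stable softmax over one action's atom logits."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def expected_q(logits_per_action, supports):
    """Q(s, a) = sum_i z_i * p_i(s, a) for every action."""
    return [sum(z * p for z, p in zip(supports, softmax(logits)))
            for logits in logits_per_action]


supports = make_supports(-500, 500, 501)

# Hypothetical logits: action 0 is uniform, action 1 is sharply peaked at the atom worth 300.
uniform = [0.0] * 501
peaked = [0.0] * 501
peaked[400] = 50.0

q_values = expected_q([uniform, peaked], supports)
greedy_action = max(range(len(q_values)), key=q_values.__getitem__)
print(q_values, greedy_action)
```

A uniform distribution over symmetric supports has an expected value of about zero, so the peaked action wins here.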

---

| github_jupyter |

## Compare PODNODE and DMD NIROM results using pre-computed solutions for the flow around a cylinder example

To run this notebook, download the precomputed PODNODE NIROM solution files ```cylinder_online_node_3850824_6.npz``` and ```cylinder_online_node_3851463_7.npz``` from

```

https://drive.google.com/drive/folders/19DEWdoS7Fkh-Cwe7Lbq6pdTdE290gYSS?usp=sharing

```

and place them in the ```../data/cylinder``` directory.

The DMD NIROM solutions can be generated by running the ```DMD_cylinder.ipynb``` notebook.
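Before running the cells below, it can help to confirm that the downloaded files are where the notebook expects them. The following is a small sketch of such a check; `missing_files` is a hypothetical helper, not part of `pynirom`, and the relative path assumes the notebook is run from its own directory.

```python
import os


def missing_files(directory, filenames):
    """Return the names in `filenames` that are not present in `directory`."""
    return [name for name in filenames
            if not os.path.isfile(os.path.join(directory, name))]


NODE_FILES = ["cylinder_online_node_3850824_6.npz",
              "cylinder_online_node_3851463_7.npz"]

missing = missing_files(os.path.join("..", "data", "cylinder"), NODE_FILES)
if missing:
    print("Missing precomputed NODE solutions:", ", ".join(missing))
```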

```

## Load modules

%matplotlib inline

import numpy as np

import scipy

import os

import gc

from scipy import interpolate

import matplotlib

import matplotlib.pyplot as plt

from matplotlib import cm

from matplotlib.ticker import LinearLocator, ScalarFormatter, FormatStrFormatter

from matplotlib import animation

matplotlib.rc('animation', html='html5')

from IPython.display import display

import matplotlib.ticker as ticker

from matplotlib import rcParams

from matplotlib.offsetbox import AnchoredText

# Plot parameters

plt.rc('font', family='serif')

plt.rcParams.update({'font.size': 20,

'lines.linewidth': 2,

'axes.labelsize': 16, # fontsize for x and y labels (was 10)

'axes.titlesize': 20,

'xtick.labelsize': 16,

'ytick.labelsize': 16,

'legend.fontsize': 16,

'axes.linewidth': 2})

import itertools

colors = itertools.cycle(['tab:blue','tab:orange','tab:green','tab:purple',

'tab:brown','tab:olive','tab:cyan','tab:pink','tab:red'])

markers = itertools.cycle(['p','d','o','^','s','x','D','H','v']) #,'*'

base_dir = os.getcwd()

work_dir = os.path.join(base_dir,'../examples/')

data_dir = os.path.join(base_dir,'../data/')

nirom_data_dir = os.path.join(base_dir,'../data')

node_data_dir = os.path.join(base_dir,'../data/cylinder')

fig_dir = os.path.join(base_dir,'../figures/podnode')

import pynirom

from pynirom.pod import pod_utils as pod

from pynirom.utils import data_utils as du

from pynirom.node import main as nd

from pynirom.node import plotting as pu

from pynirom.node import node as node

### ------ Import Snapshot data -------------------

data = np.load(data_dir + 'cylinder_Re100.0_Nn14605_Nt3001.npz')

mesh = np.load(data_dir + 'OF_cylinder_mesh_Nn14605_Ne28624.npz')

print('HFM data has {0} snapshots of dimension {1} for p,u and v, spanning times [{2}, {3}]'.format(

data['time'].shape[0],data['p'].shape[0],

data['time'][0], data['time'][-1]))

## ------- Prepare training snapshots ----------------

print('\n-------Prepare training and testing data---------')

soln_names = ['p', 'v_x', 'v_y']

nodes = mesh['nodes']; node_ind = mesh['node_ind']

triangles = mesh['elems']; elem_ind = mesh['elem_ind']

Nn = nodes.shape[0]

snap_start = 1250

T_end = 5.0 ### 5 seconds

snap_incr = 4

snap_train, times_train = du.prepare_data(data, soln_names, start_skip=snap_start, T_end=T_end, incr=snap_incr)

print('Using {0} training snapshots for time interval [{1},{2}] seconds'.format(times_train.shape[0],

times_train[0], times_train[-1]))

## ------- Prepare testing snapshots ----------------

pred_incr = snap_incr -3

snap_pred_true, times_predict = du.prepare_data(data, soln_names, start_skip=snap_start, incr=pred_incr)

print('Using {0} testing snapshots for time interval [{1},{2}] seconds'.format(times_predict.shape[0],

times_predict[0], times_predict[-1]))

## ------- Save full HFM data without spinup time -----

snap_data, times_offline = du.prepare_data(data, soln_names, start_skip=snap_start,)

DT = (times_offline[1:] - times_offline[:-1]).mean()

Nt = times_offline.size

Nt_online = times_predict.size

## Normalize the time axis. Required for DMD fitting

tscale = DT*snap_incr ### Scaling for DMD ()

times_offline_dmd = times_offline/tscale ## Snapshots DT = 1

times_online_dmd = times_predict/tscale

del data

del mesh

gc.collect()

## Load best NODE models

best_models = [x for x in os.listdir(node_data_dir) if not os.path.isdir(os.path.join(node_data_dir, x))]

unode = {}

for nn,file in enumerate(best_models):
    unode[nn] ={}
    print('%d: '%(nn+1)+"Loading NODE model %s"%(file.split('node_')[1].split('.npz')[0]))
    for key in soln_names:
        unode[nn][key] = np.load(os.path.join(node_data_dir,file))[key]

print("Loaded %d NODE models"%(len(best_models)))

## Load best DMD models

DMD1 = np.load(os.path.join(nirom_data_dir,'cylinder_online_dmd_r20.npz'))

Xdmd1 = DMD1['dmd']; X_true = DMD1['true'];

DMD2 = np.load(os.path.join(nirom_data_dir,'cylinder_online_dmd_r8.npz'))

Xdmd2 = DMD2['dmd'];

interleaved_snapshots = DMD1['interleaved'] ## True if snapshots were interleaved while performing DMD

del DMD1

del DMD2

gc.collect()

## Compute the POD coefficients

# trunc_lvl = 0.9999995

trunc_lvl = 0.99

snap_normalized, snap_mean, U, D, W = pod.compute_pod_multicomponent(snap_train)

nw, U_r = pod.compute_trunc_basis(D, U, eng_cap = trunc_lvl)

Z_train = pod.project_onto_basis(snap_train, U_r, snap_mean)

### ------ Compute the POD coefficients for the truth snapshots on the prediction interval------------------

Z_pred_true = pod.project_onto_basis(snap_pred_true, U_r, snap_mean)

npod_total = np.sum(list(nw.values()))

true_pred_state_array = np.zeros((times_predict.size,npod_total));

ctr=0

for key in soln_names:
    true_pred_state_array[:,ctr:ctr+nw[key]]=Z_pred_true[key].T
    ctr+=nw[key]

tmp = {};znode ={}
for nn in range(len(best_models)):
    tmp[nn] = pod.project_onto_basis(unode[nn],U_r,snap_mean)
    znode[nn]=np.zeros((times_predict.size,npod_total))
    ctr=0
    for key in soln_names:
        znode[nn][:,ctr:ctr+nw[key]]=tmp[nn][key].T
        ctr+=nw[key]

def var_string(ky):
    md = ky
    return md

### --- Visualize POD coefficients of true solution and NODE predictions

mode1=0; mode2=2; mode3=4

x_inx = times_online_dmd*tscale

start_trunc = 10+0*np.searchsorted(times_predict,times_train[-1])//10

end_trunc = 10*np.searchsorted(times_predict,times_train[-1])//10

end_trunc = end_trunc + (Nt_online - end_trunc)//1

tr_mark = np.searchsorted(times_predict, times_train[-1])

time_ind = np.searchsorted(times_offline, times_predict)

ky1 = 'p'; ky2 = 'v_x'; ky3 = 'v_y'

md1 = var_string(ky1); md2 = var_string(ky2); md3 = var_string(ky3)

fig = plt.figure(figsize=(16,10))

ax1 = fig.add_subplot(2, 3, 1)
ax1.plot(x_inx[start_trunc:end_trunc], true_pred_state_array[start_trunc:end_trunc,mode1], color=next(colors),
         marker=next(markers), markersize=8,label='True',lw=2,markevery=100)
for nn in range(len(best_models)):
    ax1.plot(x_inx[start_trunc:end_trunc], znode[nn][start_trunc:end_trunc,mode1], color=next(colors),
             marker=next(markers), markersize=8,label='NODE%d'%(nn+1),lw=2,markevery=100)
# lg=plt.legend(ncol=1,fancybox=True,loc='best')
ax1.set_title(r'$\mathbf{%s}$: $%d$'%(md1,mode1+1))

ax2 = fig.add_subplot(2, 3, 2)
ax2.plot(x_inx[start_trunc:end_trunc], true_pred_state_array[start_trunc:end_trunc,mode2], color=next(colors),
         marker=next(markers), markersize=8,label='True',lw=2,markevery=100)
for nn in range(len(best_models)):
    ax2.plot(x_inx[start_trunc:end_trunc], znode[nn][start_trunc:end_trunc,mode2], color=next(colors),
             marker=next(markers), markersize=8,label='NODE%d'%(nn+1),lw=2,markevery=100)
# lg=plt.legend(ncol=1,fancybox=True,loc='best')
ax2.set_title(r'$\mathbf{%s}$: $%d$'%(md1,mode2+1))

ax3 = fig.add_subplot(2, 3, 3)
ax3.plot(x_inx[start_trunc:end_trunc], true_pred_state_array[start_trunc:end_trunc,mode3], color=next(colors),
         marker=next(markers), markersize=8,label='True',lw=2,markevery=100)
for nn in range(len(best_models)):
    ax3.plot(x_inx[start_trunc:end_trunc], znode[nn][start_trunc:end_trunc,mode3], color=next(colors),
             marker=next(markers), markersize=8,label='NODE%d'%(nn+1),lw=2,markevery=100)
# lg=plt.legend(ncol=1,fancybox=True,loc='best')
ax3.set_title(r'$\mathbf{%s}$: $%d$'%(md1,mode3+1))

ax4 = fig.add_subplot(2, 3, 4)
ax4.plot(x_inx[start_trunc:end_trunc], true_pred_state_array[start_trunc:end_trunc,nw['p']+mode1], color=next(colors),
         marker=next(markers), markersize=8,label='True',lw=2,markevery=100)
for nn in range(len(best_models)):
    ax4.plot(x_inx[start_trunc:end_trunc], znode[nn][start_trunc:end_trunc,nw['p']+mode1], color=next(colors),
             marker=next(markers), markersize=8,label='NODE%d'%(nn+1),lw=2,markevery=100)
# lg=plt.legend(ncol=1,fancybox=True,loc='best')
ax4.set_title(r'$\mathbf{%s}$: $%d$'%(md2,mode1+1))
ax4.set_xlabel('Time (seconds)')

ax5 = fig.add_subplot(2, 3, 5)
ax5.plot(x_inx[start_trunc:end_trunc], true_pred_state_array[start_trunc:end_trunc,nw['p']+mode2], color=next(colors),
         marker=next(markers), markersize=8,label='True',lw=2,markevery=100)
for nn in range(len(best_models)):
    ax5.plot(x_inx[start_trunc:end_trunc], znode[nn][start_trunc:end_trunc,nw['p']+mode2], color=next(colors),
             marker=next(markers), markersize=8,label='NODE%d'%(nn+1),lw=2,markevery=100)
# lg=plt.legend(ncol=1,fancybox=True,loc='best')
ax5.set_title(r'$\mathbf{%s}$: $%d$'%(md2,mode2+1))
ax5.set_xlabel('Time (seconds)')

ax6 = fig.add_subplot(2, 3, 6)
ax6.plot(x_inx[start_trunc:end_trunc], true_pred_state_array[start_trunc:end_trunc,nw['p']+mode3], color=next(colors),
         marker=next(markers), markersize=8,label='True',lw=2,markevery=100)
for nn in range(len(best_models)):
    ax6.plot(x_inx[start_trunc:end_trunc], znode[nn][start_trunc:end_trunc,nw['p']+mode3], color=next(colors),
             marker=next(markers), markersize=8,label='NODE%d'%(nn+1),lw=2,markevery=100)
lg=plt.legend(ncol=5,fancybox=True,bbox_to_anchor=(0.65, -0.18)) #loc='best')
ax6.set_title(r'$\mathbf{%s}$: $%d$'%(md2,mode3+1))
ax6.set_xlabel('Time (seconds)')

# os.chdir(fig_dir)

# plt.savefig('OF_node_comp_proj_tskip%d_oskip%d.png'%(snap_incr,pred_incr),dpi=300,bbox_extra_artists=(lg,), bbox_inches='tight')

### Compute spatial RMS errors

fig = plt.figure(figsize=(16,4))

x_inx = times_online_dmd*tscale

start_trunc = 10+0*np.searchsorted(times_predict,times_train[-1])//10

end_trunc = 10*np.searchsorted(times_predict,times_train[-1])//10

end_trunc = end_trunc + (Nt_online - end_trunc)//1

tr_mark = np.searchsorted(times_predict, times_train[-1])

time_ind = np.searchsorted(times_offline, times_predict)

ky1 = 'p'; ky2 = 'v_x'; ky3 = 'v_y'

md1 = var_string(ky1); md2 = var_string(ky2); md3 = var_string(ky3)

Nc = len(soln_names)

dmd_err1 = {}; dmd_err2 = {}

node_err = {};

for nn in range(len(best_models)):
    node_err[nn] = {}
    for ivar,key in enumerate(soln_names):
        node_err[nn][key] = np.linalg.norm(snap_data[key][:,time_ind]- unode[nn][key][:,:], axis=0)/np.sqrt(Nn) #\

for ivar,key in enumerate(soln_names):
    dmd_err1[key] = np.linalg.norm(X_true[ivar::Nc,:] - Xdmd1[ivar::Nc,:], axis = 0)/np.sqrt(Nn)
    dmd_err2[key] = np.linalg.norm(X_true[ivar::Nc,:] - Xdmd2[ivar::Nc,:], axis = 0)/np.sqrt(Nn)

ax1 = fig.add_subplot(1, 2, 1)

ax1.plot(x_inx[start_trunc:end_trunc], dmd_err1[ky1][start_trunc:end_trunc], color=next(colors),
         marker=next(markers), markersize=8,label=r'DMD(20):$\mathbf{%s}$'%(md1),lw=2,markevery=100)
ax1.plot(x_inx[start_trunc:end_trunc], dmd_err2[ky1][start_trunc:end_trunc], color=next(colors),
         marker=next(markers), markersize=8,label=r'DMD(8):$\mathbf{%s}$'%(md1),lw=2,markevery=100)
for nn in range(len(best_models)):
    ax1.plot(x_inx[start_trunc:end_trunc], node_err[nn][ky1][start_trunc:end_trunc], color=next(colors),
             markersize=8,marker = next(markers),label=r'NODE%d:$\mathbf{%s}$'%(nn+1,md1),lw=2,markevery=100)
ymax_ax1 = dmd_err2[ky1][start_trunc:end_trunc].max()
ax1.vlines(x_inx[tr_mark], 0, ymax_ax1, colors ='k', linestyles='dashdot')
ax1.set_xlabel('Time (seconds)');lg=plt.legend(ncol=2,fancybox=True,loc='best')

ax2 = fig.add_subplot(1, 2, 2)
ax2.plot(x_inx[start_trunc:end_trunc], dmd_err1[ky2][start_trunc:end_trunc], color=next(colors),
         markersize=8,marker = next(markers),label=r'DMD(20):$\mathbf{%s}$'%(md2), lw=2,markevery=100)
ax2.plot(x_inx[start_trunc:end_trunc], dmd_err2[ky2][start_trunc:end_trunc], color=next(colors),
         markersize=8,marker = next(markers),label=r'DMD(8):$\mathbf{%s}$'%(md2), lw=2,markevery=100)

for nn in range(len(best_models)):
    # Plot the same component (ky2) as the DMD curves and the legend label (md2)
    ax2.plot(x_inx[start_trunc:end_trunc], node_err[nn][ky2][start_trunc:end_trunc],color=next(colors),
             markersize=8,marker = next(markers),label=r'NODE%d:$\mathbf{%s}$'%(nn+1,md2), lw=2,markevery=100)

ymax_ax2 = np.maximum(dmd_err2[ky2][start_trunc:end_trunc].max(), dmd_err2[ky3][start_trunc:end_trunc].max())

ax2.vlines(x_inx[tr_mark],0,ymax_ax2, colors = 'k', linestyles ='dashdot')

ax2.set_xlabel('Time (seconds)');lg=plt.legend(ncol=2,fancybox=True,loc='best')

fig.suptitle('Spatial RMS errors of NIROM solutions', fontsize=18)

# os.chdir(fig_dir)

# plt.savefig('cyl_node_comp_rms_tskip%d_oskip%d.pdf'%(snap_incr,pred_incr), bbox_inches='tight')

def plot_nirom_soln(Xtrue, Xdmd, Xnode, Nc, Nt_plot, nodes, elems, times_online, comp_names, seed =100, flag = True):
    np.random.seed(seed)
    itime = np.searchsorted(times_online,2.90) #np.random.randint(0,Nt_plot)
    ivar = 1 #np.random.randint(1,Nc)
    ky = comp_names[ivar]
    tn = times_online[itime]
    n_nodes = nodes.shape[0]

    # Use the Xdmd argument rather than the global Xdmd1
    if flag:     ### for interleaved snapshots
        tmp_dmd = Xdmd[ivar::Nc,itime]
        tmp_true = Xtrue[ivar::Nc,itime]
    else:
        tmp_dmd = Xdmd[ivar*n_nodes:(ivar+1)*n_nodes,itime]
        tmp_true = Xtrue[ivar*n_nodes:(ivar+1)*n_nodes,itime]
    tmp_node = Xnode[ky][:,itime]

    fig = plt.figure(figsize=(18,25));
    ax1 = fig.add_subplot(5, 1, 1)
    surf1 = ax1.tripcolor(nodes[:,0], nodes[:,1],elems, tmp_dmd, cmap=plt.cm.jet)
    ax1.set_title('DMD solution: {0} at t={1:1.2f} seconds, {0} range = [{2:5.3g},{3:4.2g}]'.format(ky,tn,
                  tmp_dmd.min(),tmp_dmd.max()),fontsize=16)
    plt.axis('off')
    plt.colorbar(surf1, orientation='horizontal',shrink=0.6,aspect=40, pad = 0.03)

    ax2 = fig.add_subplot(5, 1, 3)
    surf2 = ax2.tripcolor(nodes[:,0], nodes[:,1],elems, tmp_true, cmap=plt.cm.jet)
    ax2.set_title('HFM solution: {0} at t={1:1.2f} seconds, {0} range = [{2:5.3g},{3:4.2g}]'.format(ky,tn,
                  tmp_true.min(),tmp_true.max()),fontsize=16)
    plt.axis('off')
    plt.colorbar(surf2, orientation='horizontal',shrink=0.6,aspect=40, pad = 0.03)

    err_dmd = tmp_dmd-tmp_true
    ax3 = fig.add_subplot(5, 1, 4)
    surf3 = ax3.tripcolor(nodes[:,0], nodes[:,1],elems, err_dmd, cmap=plt.cm.Spectral)
    ax3.set_title('DMD error: {0} at t={1:1.2f} seconds, error range = [{2:5.3g},{3:4.2g}]'.format(ky,tn,
                  err_dmd.min(),err_dmd.max()),fontsize=16)
    plt.axis('off')
    plt.colorbar(surf3,orientation='horizontal',shrink=0.6,aspect=40, pad = 0.03)

    ax4 = fig.add_subplot(5, 1, 2)
    surf4 = ax4.tripcolor(nodes[:,0], nodes[:,1],elems, tmp_node, cmap=plt.cm.jet)
    ax4.set_title('PODNODE solution: {0} at t={1:1.2f} seconds, {0} range = [{2:5.3g},{3:4.2g}]'.format(ky,tn,
                  tmp_node.min(),tmp_node.max()),fontsize=16)
    plt.axis('off')
    plt.colorbar(surf4, orientation='horizontal',shrink=0.6,aspect=40, pad = 0.03)

    err_node = tmp_node-tmp_true
    ax5 = fig.add_subplot(5, 1, 5)
    surf5 = ax5.tripcolor(nodes[:,0], nodes[:,1],elems, err_node, cmap=plt.cm.Spectral)
    ax5.set_title('PODNODE error: {0} at t={1:1.2f} seconds, error range = [{2:5.3g},{3:4.2g}]'.format(ky,tn,
                  err_node.min(),err_node.max()),fontsize=16)
    plt.axis('off')
    plt.colorbar(surf5,orientation='horizontal',shrink=0.6,aspect=40, pad = 0.03)

    return tn

Nt_plot = np.searchsorted(times_predict, times_train[-1])

itime = plot_nirom_soln(X_true, Xdmd1, unode[0],Nc, Nt_plot, nodes, triangles, times_predict,

soln_names, seed=1990,flag = True)

# os.chdir(fig_dir)

# plt.savefig('cyl_nirom_t%.3f_tskip%d_oskip%d.pdf'%(itime,snap_incr,pred_incr), bbox_inches='tight')

```

| github_jupyter |

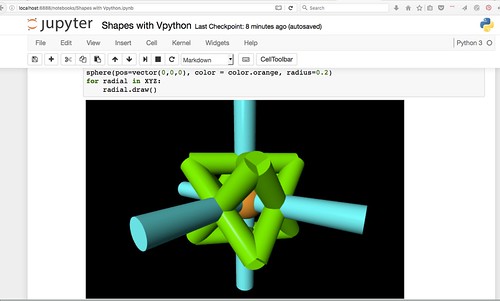

Python for Everyone!<br/>[Oregon Curriculum Network](http://4dsolutions.net/ocn/)

## VPython inside Jupyter Notebooks

### The Vector, Edge and Polyhedron types

The Vector class below is but a thin wrapper around VPython's built-in vector type. One might wonder, why bother? Why not just use vpython.vector and be done with it? Also, if wanting to reimplement, why not just subclass instead of wrap? All good questions.

A primary motivation is to keep the Vector and Edge types somewhat aloof from vpython's vector and more welded to vpython's cylinder instead. We want vectors and edges to materialize as cylinders quite easily.

So whether we subclass, or wrap, we want our vectors to have the ability to self-draw.

The three basis vectors must be negated to give all six spokes of the XYZ apparatus. Here's an opportunity to test our \_\_neg\_\_ operator then.

The overall plan is to have an XYZ "jack" floating in space, around which two tetrahedrons will be drawn, with a common center, as twins.

Their edges will intersect at the respective face centers of the six-faced, twelve-edged hexahedron, our "duo-tet" cube (implied, but could be hard-wired as a next Polyhedron instance, just give it the six faces).

A lot of this wrapper code is about turning vpython.vectors into lists for feeding to Vector, which expects three separate arguments. A star in front of an iterable accomplishes the feat of exploding it into the separate arguments required.

Note that vector operations, including negation, always return fresh vectors. Even color has not been made a mutable property, but maybe could be.
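To see the star-unpacking idiom and the "fresh object" behavior in isolation, here is a minimal sketch that runs without VPython. `Vec3` is a hypothetical stand-in backed by a plain tuple, not the `Vector` class defined below; it only mimics the pattern of building a sequence of components and exploding it into three arguments.

```python
class Vec3:
    """Hypothetical stand-in for the wrapper pattern, backed by a plain tuple."""

    def __init__(self, x, y, z):
        self.xyz = (x, y, z)

    def __neg__(self):
        # Build a list of components, then explode it into three arguments.
        return Vec3(*[-c for c in self.xyz])

    def __add__(self, other):
        return Vec3(*[a + b for a, b in zip(self.xyz, other.xyz)])


x_basis = Vec3(1, 0, 0)
x_neg = -x_basis          # a new object; x_basis itself is unchanged
total = x_basis + x_neg   # also a new object
print(x_basis.xyz, x_neg.xyz, total.xyz)
```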

```

from vpython import *

class Vector:

    def __init__(self, x, y, z):
        self.v = vector(x, y, z)

    def __add__(self, other):
        v_sum = self.v + other.v
        return Vector(*v_sum.value)

    def __neg__(self):
        return Vector(*((-self.v).value))

    def __sub__(self, other):
        V = (self + (-other))
        return Vector(*V.v.value)

    def __mul__(self, scalar):
        V = scalar * self.v
        return Vector(*V.value)

    def norm(self):
        v = norm(self.v)
        return Vector(*v.value)

    def length(self):
        return mag(self.v)

    def draw(self):
        self.the_cyl = cylinder(pos=vector(0,0,0), axis=self.v, radius=0.1)
        self.the_cyl.color = color.cyan

XBASIS = Vector(1,0,0)
YBASIS = Vector(0,1,0)
ZBASIS = Vector(0,0,1)
XNEG = -XBASIS
YNEG = -YBASIS
ZNEG = -ZBASIS

XYZ = [XBASIS, XNEG, YBASIS, YNEG, ZBASIS, ZNEG]
sphere(pos=vector(0,0,0), color = color.orange, radius=0.2)

for radial in XYZ:
    radial.draw()

```

Even though the top code cell contains no instructions to draw, VPython's way of integrating into Jupyter Notebook seems to be by adding a scene right after the first code cell. Look below for the code that made all of the above happen. Yes, that's a bit strange.

```

class Edge:

    def __init__(self, v0, v1):
        self.v0 = v0
        self.v1 = v1

    def draw(self):
        """cylinder wants a starting point, and a direction vector"""
        pointer = (self.v1 - self.v0)
        # call the wrapper's own norm() so we never hand a Vector to vpython's norm()
        direction_v = pointer.norm() * pointer.length() # normalize then stretch
        self.the_cyl = cylinder(pos = self.v0.v, axis=direction_v.v, radius=0.1)
        self.the_cyl.color = color.green

class Polyhedron:

    def __init__(self, faces, corners):
        self.faces = faces
        self.corners = corners
        self.edges = self._get_edges()

    def _get_edges(self):
        """
        take a list of face-tuples and distill
        all the unique edges,
        e.g. ((1,2,3)) => ((1,2),(2,3),(1,3))
        e.g. icosahedron has 20 faces and 30 unique edges
        ( = cubocta 24 + tetra's 6 edges to squares per
        jitterbug)
        """
        uniqueset = set()
        for f in self.faces:
            edgetries = zip(f, f[1:]+ (f[0],))
            for e in edgetries:
                e = tuple(sorted(e)) # keeps out dupes
                uniqueset.add(e)
        return tuple(uniqueset)

    def draw(self):
        for edge in self.edges:
            the_edge = Edge(Vector(*self.corners[edge[0]]),
                            Vector(*self.corners[edge[1]]))
            the_edge.draw()

the_verts = \

{ 'A': (0.35355339059327373, 0.35355339059327373, 0.35355339059327373),

'B': (-0.35355339059327373, -0.35355339059327373, 0.35355339059327373),

'C': (-0.35355339059327373, 0.35355339059327373, -0.35355339059327373),

'D': (0.35355339059327373, -0.35355339059327373, -0.35355339059327373),

'E': (-0.35355339059327373, -0.35355339059327373, -0.35355339059327373),

'F': (0.35355339059327373, 0.35355339059327373, -0.35355339059327373),

'G': (0.35355339059327373, -0.35355339059327373, 0.35355339059327373),

'H': (-0.35355339059327373, 0.35355339059327373, 0.35355339059327373)}

the_faces = (('A','B','C'),('A','C','D'),('A','D','B'),('B','C','D'))

other_faces = (('E','F','G'), ('E','G','H'),('E','H','F'),('F','G','H'))

tetrahedron = Polyhedron(the_faces, the_verts)

inv_tetrahedron = Polyhedron(other_faces, the_verts)

print(tetrahedron._get_edges())

print(inv_tetrahedron._get_edges())

tetrahedron.draw()

inv_tetrahedron.draw()

```

The code above shows how we might capture an Edge as a pair of Vectors marking its endpoints, setting the stage for a Polyhedron as a set of such edges. These edges are derived from faces, which are simply clockwise or counterclockwise circuits of named vertices.

Pass in a dict of vertices or corners you'll need, named by letter, along with the tuple of faces, and you're set. The Polyhedron will distill the edges for you, and render them as vpython.cylinder objects.
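The edge-distilling step can be tried on its own, without any drawing. The sketch below mirrors the logic of `_get_edges` as a free function (`unique_edges` is an illustrative name) and applies it to the first tetrahedron's faces: each face contributes the pairs of consecutive vertices around its circuit, and sorting each pair lets the set discard duplicates shared by neighboring faces.

```python
def unique_edges(faces):
    """Distill the unique edges from a sequence of face tuples."""
    edges = set()
    for face in faces:
        # Pair each vertex with the next one, wrapping around to the first.
        for a, b in zip(face, face[1:] + face[:1]):
            edges.add(tuple(sorted((a, b))))  # sorting keeps out dupes
    return sorted(edges)


tetra_faces = (('A', 'B', 'C'), ('A', 'C', 'D'), ('A', 'D', 'B'), ('B', 'C', 'D'))
edges = unique_edges(tetra_faces)
print(len(edges), edges)
```

Four triangular faces give twelve face-edge pairs, and since every edge is shared by two faces, six unique edges remain.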

Remember to scroll up, to the scene right after the first code cell, to find the actual output of the preceding code cell.

At Oregon Curriculum Network (OCN) you will find material on Quadrays, often used to generate 26 points of interest A-Z, with the A-H above being the beginning of the sequence. From the duo-tet cube we move to its dual, the octahedron, and then the 12 vertices of the cuboctahedron. 8 + 6 + 12 = 26.

When studying Synergetics (a namespace) you will encounter [canonical volume numbers](https://github.com/4dsolutions/Python5/blob/master/Computing%20Volumes.ipynb) for these as well: (Tetrahedron: 1, Cube: 3, Octahedron: 4, Rhombic Dodecahedron 6, Cuboctahedron 20).

<i>For Further Reading:</i>

[Polyhedrons 101](https://github.com/4dsolutions/Python5/blob/master/Polyhedrons%20101.ipynb)<br />

[STEM Mathematics](http://nbviewer.jupyter.org/github/4dsolutions/Python5/blob/master/STEM%20Mathematics.ipynb) -- with nbviewer

| github_jupyter |

```

import pandas as pd

import numpy as np

import matplotlib.pyplot as plt

import seaborn as sns

from scipy.stats import shapiro, normaltest, bartlett, levene, f_oneway, ttest_ind, ranksums, kruskal, mannwhitneyu, spearmanr

from statsmodels.stats.multicomp import pairwise_tukeyhsd, MultiComparison

from statsmodels.graphics import gofplots

from scipy.stats import describe,anderson,t,ttest_rel

```

# Normality Tests

```

def normality_test(data, alpha=0.05):

# print col describe

print("Data describe","\n")

print(describe(data),"\n")

# drop nans and duplicates

# dist plot and qqplot

# plot col distplot

sns.distplot(data).set_title("DistPlot for data")

plt.show()

# qq plot

gofplots.qqplot(data, line='45', fit=True)

plt.title('Q-Q plot for data')

plt.show()

# Shapiro-Wilk test

print('Shapiro-Wilk Test')

stat, p = shapiro(data)

if p > alpha:

print('Accept Ho: Distribution is normal (alpha = {0})'.format(alpha))

else:

print('Reject Ho: Distribution is not normal (alpha = {0})'.format(alpha))

print('P_value: ', p,'\n')

# D’Agostino’s K^2 Test (tests Skewness and Kurtosis)

print('D’Agostino’s Test')

stat, p = normaltest(data)

if p > alpha:

print('Accept Ho: Distribution is normal (alpha = {0})'.format(alpha))

else:

print('Reject Ho: Distribution is not normal (alpha = {0})'.format(alpha))

print('P_value: ', p,'\n')

# Anderson-Darling test

print('Anderson-Darling Test')

result = anderson(data)

print('Statistic: %.3f' % result.statistic)

p = 0

for i in range(len(result.critical_values)):

sl, cv = result.significance_level[i], result.critical_values[i]

if result.statistic < result.critical_values[i]:

print('%.3f: %.3f, Accept Ho: Distribution is normal' % (sl, cv))

else:

print('%.3f: %.3f, Reject Ho: Distribution is not normal (reject H0)' % (sl, cv))

print('======================================================================')

np.random.seed(45)

sample_size = 1000

normal_data = np.random.normal(0,1,size=sample_size)

uniform_data = np.random.uniform(0,10,sample_size)

normality_test(normal_data)

normality_test(uniform_data)

df = 6

t_dist_data = np.random.standard_t(df,size = sample_size)

normality_test(t_dist_data)

```
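D'Agostino's K^2 test used above combines sample skewness and kurtosis. As a rough illustration of those two quantities only (this is not SciPy's implementation, and the helper name `moments_summary` is made up for this sketch), they can be computed from biased central moments with plain Python:

```python
def moments_summary(values):
    """Return (skewness, excess kurtosis) from biased central moments."""
    n = len(values)
    mean = sum(values) / n
    m2 = sum((v - mean) ** 2 for v in values) / n  # variance (biased)
    m3 = sum((v - mean) ** 3 for v in values) / n  # third central moment
    m4 = sum((v - mean) ** 4 for v in values) / n  # fourth central moment
    skewness = m3 / m2 ** 1.5
    excess_kurtosis = m4 / m2 ** 2 - 3.0  # 0 for a normal distribution
    return skewness, excess_kurtosis


# A symmetric, flat sample: no skew, lighter tails than a normal distribution.
skew, kurt = moments_summary([1, 2, 3, 4, 5])
print(skew, kurt)
```

Values of both quantities near zero are what a normal sample would tend to show; the tests above turn that intuition into a p-value.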

# Homogeneity Tests (equal variances)

```

def homogeneity_test(data1,data2, alpha=0.05):

# Bartlett’s test (equal variances)

print("Homogeneity tests","\n")

print("Data1 describe")

print(describe(data1),"\n")

print("Data2 describe")

print(describe(data2),"\n")

print('Bartlett’s test')

stat, p = bartlett(data1, data2)

if p > alpha:

print('Accept Ho: All input samples are from populations with equal variances (alpha = {0})'.format(alpha))

else:

print('Reject Ho: All input samples are not from populations with equal variances (alpha = {0})'.format(alpha))

print('\n')

# Levene test (equal variances)

print('Levene’s test')

stat, p = levene(data1, data2)

if p > alpha:

print('Accept Ho: All input samples are from populations with equal variances (alpha = {0})'.format(alpha))

else:

print('Reject Ho: All input samples are not from populations with equal variances (alpha = {0})'.format(alpha))

print('\n')

print('======================================================================')

np.random.seed(45)

data1 = np.random.normal(10,11,size = sample_size)

data2 = np.random.normal(20,20,size = sample_size)

homogeneity_test(data1,data2)

np.random.seed(45)

data1 = np.random.normal(10,11,size = sample_size)

data2 = np.random.normal(20,11.5,size = sample_size)

homogeneity_test(data1,data2)

```

# Parametric Tests

```

def ind_ttest(data1,data2,alpha=0.05):

print("Data1 describe")

print(describe(data1),"\n")

print("Data2 describe")

print(describe(data2),"\n")

X = np.concatenate((data1.reshape(-1,1),data2.reshape(-1,1)),axis=1)

sns.boxplot(data = X)

plt.show()

print('Independent T-test')

stat, p = ttest_ind(data1, data2)

if p > alpha:

print('Accept Ho: Input samples are from populations with equal means (alpha = {0})'.format(alpha))

else:

print('Reject Ho: Input samples are not from populations with equal means (alpha = {0})'.format(alpha))

print('\n')

print('======================================================================')

np.random.seed(45)

sample_size = 100

data1 = np.random.normal(15,10,size = sample_size)

data2 = np.random.normal(10,10,size = sample_size)

ind_ttest(data1,data2,alpha = 0.05)

```
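With its default `equal_var=True`, `ttest_ind` is based on the pooled-variance Student t statistic. The sketch below works that statistic out by hand with the standard library; the function name `pooled_t_statistic` and the toy samples are assumptions for illustration, and no p-value is computed.

```python
import math
from statistics import mean, variance


def pooled_t_statistic(sample_a, sample_b):
    """Student t statistic for two independent samples, assuming equal variances."""
    n_a, n_b = len(sample_a), len(sample_b)
    var_a, var_b = variance(sample_a), variance(sample_b)  # sample variances (n - 1)
    pooled_var = ((n_a - 1) * var_a + (n_b - 1) * var_b) / (n_a + n_b - 2)
    standard_error = math.sqrt(pooled_var * (1 / n_a + 1 / n_b))
    return (mean(sample_a) - mean(sample_b)) / standard_error


# Means 3 and 4, both sample variances 2.5, so the standard error is 1.
t_stat = pooled_t_statistic([1, 2, 3, 4, 5], [2, 3, 4, 5, 6])
print(t_stat)
```

The sign only reflects which sample has the larger mean; the magnitude is what is compared against the t distribution with `n_a + n_b - 2` degrees of freedom.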

# Paired T-Test (not independent)

```

def rel_indtest(data1,data2,alpha=0.05):

print("Data1 describe")

print(describe(data1),"\n")

print("Data2 describe")

print(describe(data2),"\n")

X = np.concatenate((data1.reshape(-1,1),data2.reshape(-1,1)),axis=1)

sns.boxplot(data = X)

plt.show()

print('Paired T-test')

stat, p = ttest_rel(data1, data2)

if p > alpha:

print('Accept Ho: Input samples are from populations with equal means (alpha = {0})'.format(alpha))

else:

print('Reject Ho: Input samples are not from populations with equal means (alpha = {0})'.format(alpha))

print('\n')

print('======================================================================')

data1 = 5 * np.random.randn(100) + 50

data2 = 5 * np.random.randn(100) + 51

rel_indtest(data1,data2)

```
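A paired t-test reduces to a one-sample t-test on the per-pair differences. A minimal sketch of the statistic, assuming equal-length samples in matching order (the name `paired_t_statistic` and the numbers are illustrative, not taken from the notebook):

```python
import math
from statistics import mean, stdev


def paired_t_statistic(first, second):
    """t statistic of the mean per-pair difference (first - second)."""
    if len(first) != len(second):
        raise ValueError("paired samples must have the same length")
    differences = [a - b for a, b in zip(first, second)]
    standard_error = stdev(differences) / math.sqrt(len(differences))
    return mean(differences) / standard_error


# Differences are [-1, -2, -1, -2]: mean -1.5, sample standard deviation sqrt(1/3).
t_stat = paired_t_statistic([10, 12, 9, 11], [11, 14, 10, 13])
print(t_stat)
```

Because only the differences matter, a consistent shift between pairs can be detected even when the two samples overlap heavily, which is why the paired test is preferred for before/after measurements.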

```

def anova_test(data1,data2,data3,alpha=0.05):

# anova test (equal means)

print("Data1 describe")

print(describe(data1),"\n")

print("Data2 describe")

print(describe(data2),"\n")

X = np.concatenate((data1.reshape(-1,1),data2.reshape(-1,1),data3.reshape(-1,1)),axis=1)

sns.boxplot(data = X)

plt.show()

print("\n")

print('ANOVA test')

stat, p = f_oneway(data1, data2, data3)

if p > alpha:

print('Accept Ho: Input samples are from populations with equal means (alpha = {0})'.format(alpha))

else:

print('Reject Ho: Input samples are not from populations with equal means (alpha = {0})'.format(alpha))

print('\n')

print('======================================================================')

np.random.seed(45)

sample_size = 100

data1 = np.random.normal(15,10,size = sample_size)

data2 = np.random.normal(15,10,size = sample_size)

data3 = np.random.normal(10,10,size = sample_size)

anova_test(data1,data2,data3)

```

# Non-parametric tests

```

def mann_whitney(data1,data2,alpha=0.05):

# MannWhitney test for two independent samples (equal means)

print("Data1 describe")

print(describe(data1),"\n")

print("Data2 describe")

print(describe(data2),"\n")

sns.distplot(data1).set_title("data1")

plt.show()

sns.distplot(data2).set_title("data2")

plt.show()

print('Mann-Whitney test')

stat, p = mannwhitneyu(data1, data2)

if p > alpha:

print('Accept Ho: The distributions of both samples are equal. (alpha = {0})'.format(alpha))

else:

print('Reject Ho: the distributions of both samples are not equal. (alpha = {0})'.format(alpha))

print('\n')

data1 = 5 * np.random.randn(100) + 50

data2 = 5 * np.random.randn(100) + 50

mann_whitney(data1,data2)

data1 = 5 * np.random.randn(100) + 50

data2 = 5 * np.random.randn(100) + 51

mann_whitney(data1,data2)

```
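The Mann-Whitney U statistic can be read as a count over all cross-sample pairs. The sketch below is a brute-force illustration of that definition (quadratic time, fine for small samples); `mann_whitney_u` is a hypothetical helper, not the SciPy routine, and it returns only the statistic.

```python
def mann_whitney_u(sample_x, sample_y):
    """U for sample_x: number of pairs with x > y, ties counted as one half."""
    u = 0.0
    for x in sample_x:
        for y in sample_y:
            if x > y:
                u += 1.0
            elif x == y:
                u += 0.5
    return u


# Completely separated samples give the extreme values 0 and n_x * n_y.
u_x = mann_whitney_u([1, 2, 3], [4, 5, 6])
u_y = mann_whitney_u([4, 5, 6], [1, 2, 3])
print(u_x, u_y)
```

The two statistics always sum to the number of pairs, so a value near half of `len(sample_x) * len(sample_y)` indicates that neither sample tends to exceed the other.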

```

def WilcoxonRankSum(data1,data2, alpha=0.05):

# Wilcoxon Rank-Sum test for two independent samples (equal means)

print("Data1 describe")

print(describe(data1),"\n")

print("Data2 describe")

print(describe(data2),"\n")

sns.distplot(data1).set_title("data1")

plt.show()

sns.distplot(data2).set_title("data2")

plt.show()

print('WilcoxonRankSum')

stat, p = ranksums(data1, data2)

if p > alpha:

print('Accept Ho: The distributions of both samples are equal. (alpha = {0})'.format(alpha))

else:

print('Reject Ho: the distributions of both samples are not equal. (alpha = {0})'.format(alpha))

print('\n')

data1 = 5 * np.random.randn(100) + 50

data2 = 5 * np.random.randn(100) + 50

WilcoxonRankSum(data1,data2)

data1 = 5 * np.random.randn(100) + 50

data2 = 5 * np.random.randn(100) + 55

WilcoxonRankSum(data1,data2)

```

```

def kruskal_wallis_h_test(data1,data2,data3, alpha=0.05):

# Kruskal-Wallis H-test for independent samples (non-parametric version of ANOVA)

print("Data1 describe")

print(describe(data1),"\n")

print("Data2 describe")

print(describe(data2),"\n")

print("Data3 describe")

print(describe(data3),"\n")

sns.distplot(data1).set_title("data1")

plt.show()

sns.distplot(data2).set_title("data2")

plt.show()

sns.distplot(data3).set_title("data3")

plt.show()

print('Kruskal-Wallis H-test')

stat, p = kruskal(data1, data2, data3)

if p > alpha:

print('Accept Ho: The distributions of all samples are equal. (alpha = {0})'.format(alpha))

else:

print('Reject Ho: The distributions of all samples are not equal. (alpha = {0})'.format(alpha))

print('\n')

data1 = 5 * np.random.randn(100) + 50

data2 = 5 * np.random.randn(100) + 53

data3 = 5 * np.random.randn(100) + 50

kruskal_wallis_h_test(data1,data2,data3)

```

| github_jupyter |

##### <small>

Copyright (c) 2017 Andrew Glassner

Permission is hereby granted, free of charge, to any person obtaining a copy of this software and associated documentation files (the "Software"), to deal in the Software without restriction, including without limitation the rights to use, copy, modify, merge, publish, distribute, sublicense, and/or sell copies of the Software, and to permit persons to whom the Software is furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in all copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM, OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE SOFTWARE.

</small>

# Deep Learning From Basics to Practice

## by Andrew Glassner, https://dlbasics.com, http://glassner.com

------

## Chapter 24: Autoencoders

### Notebook 5: Denoising

This notebook is provided as a “behind-the-scenes” look at code used to make some of the figures in this chapter. It is still in the hacked-together form used to develop the figures, and is only lightly commented.

```

# some code adapted from https://blog.keras.io/building-autoencoders-in-keras.html

from keras.datasets import mnist

from keras.models import Sequential, Model

from keras.layers import Dense, Input

from keras.layers.convolutional import Conv2D, UpSampling2D, MaxPooling2D, Conv2DTranspose

import h5py

import numpy as np

import matplotlib.pyplot as plt

#from pathlib import Path

from keras import backend as keras_backend

keras_backend.set_image_data_format('channels_last')

# Make a File_Helper for saving and loading files.

save_files = True

import os, sys, inspect

current_dir = os.path.dirname(os.path.abspath(inspect.getfile(inspect.currentframe())))

sys.path.insert(0, os.path.dirname(current_dir)) # path to parent dir

from DLBasics_Utilities import File_Helper

file_helper = File_Helper(save_files)

def get_mnist_samples():

random_seed = 42

np.random.seed(random_seed)

# Read MNIST data. We won't be using the y_train or y_test data

(X_train, y_train), (X_test, y_test) = mnist.load_data()

pixels_per_image = np.prod(X_train.shape[1:])

# Cast values into the current floating-point type

X_train = keras_backend.cast_to_floatx(X_train)

X_test = keras_backend.cast_to_floatx(X_test)

X_train = np.reshape(X_train, (len(X_train), 28, 28, 1))

X_test = np.reshape(X_test, (len(X_test), 28, 28, 1))

# Normalize the range from [0,255] to [0,1]

X_train /= 255.

X_test /= 255.

return (X_train, X_test)

def add_noise_to_mnist(X_train, X_test, noise_factor=0.5): # add noise to the digits

X_train_noisy = X_train + noise_factor * np.random.normal(loc=0.0, scale=1.0, size=X_train.shape)

X_test_noisy = X_test + noise_factor * np.random.normal(loc=0.0, scale=1.0, size=X_test.shape)

X_train_noisy = np.clip(X_train_noisy, 0., 1.)

X_test_noisy = np.clip(X_test_noisy, 0., 1.)

return (X_train_noisy, X_test_noisy)

def build_autoencoder1():

# build the autoencoder.

model = Sequential()

model.add(Conv2D(32, (3,3), activation='relu', padding='same', input_shape=(28,28,1)))

model.add(MaxPooling2D((2,2,), padding='same'))

model.add(Conv2D(32, (3,3), activation='relu', padding='same'))

model.add(MaxPooling2D((2,2), padding='same'))

# down to 7, 7, 32 now go back up

model.add(Conv2D(32, (3,3), activation='relu', padding='same'))

model.add(UpSampling2D((2,2)))

model.add(Conv2D(32, (3,3), activation='relu', padding='same'))

model.add(UpSampling2D((2,2)))

model.add(Conv2D(1, (3,3), activation='sigmoid', padding='same'))

model.compile(optimizer='adadelta', loss='binary_crossentropy')

return model

def build_autoencoder2():

# build the autoencoder.

model = Sequential()

model.add(Conv2D(32, (3,3), activation='relu', padding='same', strides=2, input_shape=(28,28,1)))

model.add(Conv2D(32, (3,3), activation='relu', padding='same', strides=2))

# down to 7, 7, 32 now go back up

model.add(Conv2D(32, (3,3), activation='relu', padding='same'))

model.add(UpSampling2D((2,2)))

model.add(Conv2D(32, (3,3), activation='relu', padding='same'))

model.add(UpSampling2D((2,2)))

model.add(Conv2D(1, (3,3), activation='sigmoid', padding='same'))

model.compile(optimizer='adadelta', loss='binary_crossentropy')

return model

def build_autoencoder3():

# build the autoencoder.

model = Sequential()

model.add(Conv2D(32, (3,3), activation='relu', padding='same', strides=2, input_shape=(28,28,1)))

model.add(Conv2D(32, (3,3), activation='relu', padding='same', strides=2))

# down to 7, 7, 32 now go back up

model.add(Conv2DTranspose(32, (3,3), activation='relu', strides=2, padding='same'))

model.add(Conv2DTranspose(32, (3,3), activation='relu', strides=2, padding='same'))

model.add(Conv2D(1, (3,3), activation='sigmoid', padding='same'))

model.compile(optimizer='adadelta', loss='binary_crossentropy')

return model

def functional_api_build_autoencoder():

# build the autoencoder.

input_img = Input(shape=(28, 28, 1)) # using `channels_last` image data format

x = Conv2D(32, (3, 3), activation='relu', padding='same')(input_img)

x = MaxPooling2D((2, 2), padding='same')(x)

x = Conv2D(32, (3, 3), activation='relu', padding='same')(x)

encoded = MaxPooling2D((2, 2), padding='same')(x)

# at this point the representation is (7, 7, 32)

x = Conv2D(32, (3, 3), activation='relu', padding='same')(encoded)

x = UpSampling2D((2, 2))(x)

x = Conv2D(32, (3, 3), activation='relu', padding='same')(x)

x = UpSampling2D((2, 2))(x)

decoded = Conv2D(1, (3, 3), activation='sigmoid', padding='same')(x)

model = Model(input_img, decoded)

model.compile(optimizer='adadelta', loss='binary_crossentropy')

return model

(X_train, X_test) = get_mnist_samples()

(X_train_noisy, X_test_noisy) = add_noise_to_mnist(X_train, X_test, 0.5)

plt.figure(figsize=(10,3))

for i in range(8):

plt.subplot(2, 8, i+1)

plt.imshow(X_test[i].reshape(28, 28))

plt.gray()

plt.xticks([], [])

plt.yticks([], [])

plt.subplot(2, 8, i+1+8)

plt.imshow(X_test_noisy[i].reshape(28, 28))

plt.gray()

plt.xticks([], [])

plt.yticks([], [])

plt.tight_layout()

file_helper.save_figure("NB5-AE-noisy-mnist-input")

plt.show()

model1 = build_autoencoder1()

weights_filename = "NB5-Denoising-AE1"

np.random.seed(42)

if not file_helper.load_model_weights(model1, weights_filename):

history1 = model1.fit(X_train_noisy, X_train,

epochs=100,

batch_size=128,

shuffle=True,

validation_data=(X_test_noisy, X_test))

file_helper.save_model_weights(model1, weights_filename)

model2 = build_autoencoder2()

weights_filename = "NB5-Denoising-AE2"

np.random.seed(42)

if not file_helper.load_model_weights(model2, weights_filename):

history2 = model2.fit(X_train_noisy, X_train,

epochs=100,

batch_size=128,

shuffle=True,

validation_data=(X_test_noisy, X_test))

file_helper.save_model_weights(model2, weights_filename)

model3 = build_autoencoder3()

weights_filename = "NB5-Denoising-AE3"

np.random.seed(42)

if not file_helper.load_model_weights(model3, weights_filename):

history3 = model3.fit(X_train_noisy, X_train,

epochs=100,

batch_size=128,

shuffle=True,

validation_data=(X_test_noisy, X_test))

file_helper.save_model_weights(model3, weights_filename)

def draw_noisy_predictions_set(predictions, filename=None):

plt.figure(figsize=(8, 4))

for i in range(5):

plt.subplot(2, 5, i+1)

plt.imshow(X_test_noisy[i].reshape(28, 28), vmin=0, vmax=1, cmap="gray")

ax = plt.gca()

ax.get_xaxis().set_visible(False)

ax.get_yaxis().set_visible(False)

plt.subplot(2, 5, i+6)

plt.imshow(predictions[i,:,:,0].reshape(28, 28), vmin=0, vmax=1, cmap="gray")

ax = plt.gca()

ax.get_xaxis().set_visible(False)

ax.get_yaxis().set_visible(False)

plt.tight_layout()

file_helper.save_figure(filename+'-predictions')

plt.show()

predictions1 = model1.predict(X_test_noisy)

draw_noisy_predictions_set(predictions1, 'NB5-Noisy-Model1')

predictions2 = model2.predict(X_test_noisy)

draw_noisy_predictions_set(predictions2, 'NB5-Noisy-Model2')

predictions3 = model3.predict(X_test_noisy)

draw_noisy_predictions_set(predictions3, 'NB5-Noisy-Model3')

```
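The comments in the builders above note that the encoder goes "down to 7, 7, 32" before going back up. As a quick sanity check of that bookkeeping, the sketch below traces only the spatial size through the layers, assuming `padding='same'` throughout (output size is the input divided by the stride, rounded up) and a factor-2 upsampling or stride-2 transposed convolution on the way back. The helper names are made up for this illustration.

```python
import math


def same_padding_downsample(size, stride):
    """Spatial size after a pooling or strided conv layer with padding='same'."""
    return math.ceil(size / stride)


def upsample(size, factor):
    """Spatial size after UpSampling2D or a stride-`factor` transposed conv ('same')."""
    return size * factor


sizes = [28]                                          # MNIST input width/height
sizes.append(same_padding_downsample(sizes[-1], 2))   # first pool / strided conv
sizes.append(same_padding_downsample(sizes[-1], 2))   # second pool / strided conv
sizes.append(upsample(sizes[-1], 2))                  # first upsampling step
sizes.append(upsample(sizes[-1], 2))                  # second upsampling step
print(sizes)
```

All three architectures follow the same size schedule; they differ only in whether the resizing is done by pooling, strided convolution, or transposed convolution.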

| github_jupyter |

##### Copyright 2019 The TensorFlow Authors.

```

#@title Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# https://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

```

# Use XLA with tf.function

<table class="tfo-notebook-buttons" align="left">

<td>

<a target="_blank" href="https://www.tensorflow.org/xla/tutorials/compile"><img src="https://www.tensorflow.org/images/tf_logo_32px.png" />View on TensorFlow.org</a>

</td>

<td>

<a target="_blank" href="https://colab.research.google.com/github/tensorflow/tensorflow/blob/master/tensorflow/compiler/xla/g3doc/tutorials/jit_compile.ipynb"><img src="https://www.tensorflow.org/images/colab_logo_32px.png" />Run in Google Colab</a>

</td>

<td>

<a href="https://storage.googleapis.com/tensorflow_docs/tensorflow/tensorflow/compiler/xla/g3doc/tutorials/jit_compile.ipynb"><img src="https://www.tensorflow.org/images/download_logo_32px.png" />Download notebook</a>

</td>

<td>

<a target="_blank" href="https://github.com/tensorflow/tensorflow/blob/master/tensorflow/compiler/xla/g3doc/tutorials/jit_compile.ipynb"><img src="https://www.tensorflow.org/images/GitHub-Mark-32px.png" />View source on GitHub</a>

</td>

</table>

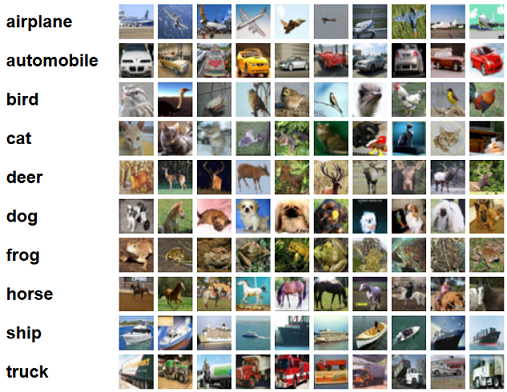

This tutorial trains a TensorFlow model to classify the MNIST dataset, where the training function is compiled using XLA.

First, load TensorFlow and enable eager execution.

```

import tensorflow as tf

tf.compat.v1.enable_eager_execution()

```

Then define some necessary constants and prepare the MNIST dataset.

```

# Size of each input image, 28 x 28 pixels

IMAGE_SIZE = 28 * 28

# Number of distinct number labels, [0..9]

NUM_CLASSES = 10

# Number of examples in each training batch (step)

TRAIN_BATCH_SIZE = 100

# Number of training steps to run

TRAIN_STEPS = 1000

# Loads MNIST dataset.

train, test = tf.keras.datasets.mnist.load_data()

train_ds = tf.data.Dataset.from_tensor_slices(train).batch(TRAIN_BATCH_SIZE).repeat()

# Casting from raw data to the required datatypes.

def cast(images, labels):
    images = tf.cast(
        tf.reshape(images, [-1, IMAGE_SIZE]), tf.float32)
    labels = tf.cast(labels, tf.int64)
    return (images, labels)

```

Finally, define the model and the optimizer. The model uses a single dense layer.

```

layer = tf.keras.layers.Dense(NUM_CLASSES)

optimizer = tf.keras.optimizers.Adam()

```

# Define the training function

In the training function, you get the predicted labels using the layer defined above, compute the gradient of the loss, and let the optimizer apply that gradient to reduce the loss. In order to compile the computation using XLA, place it inside `tf.function` with `jit_compile=True`.

```

@tf.function(jit_compile=True)
def train_mnist(images, labels):
    images, labels = cast(images, labels)

    with tf.GradientTape() as tape:
        predicted_labels = layer(images)
        loss = tf.reduce_mean(tf.nn.sparse_softmax_cross_entropy_with_logits(
            logits=predicted_labels, labels=labels
        ))
    layer_variables = layer.trainable_variables
    grads = tape.gradient(loss, layer_variables)
    optimizer.apply_gradients(zip(grads, layer_variables))

```
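For intuition about the loss used in the training function, here is a plain-Python sketch of sparse softmax cross-entropy for a single example: the negative log-probability that the softmax assigns to the true class. It is an illustration under the usual definition, not TensorFlow's implementation, and the function name is an assumption.

```python
import math


def sparse_softmax_cross_entropy(logits, label):
    """Cross-entropy of one example given raw logits and an integer class label."""
    max_logit = max(logits)  # subtract the maximum for numerical stability
    log_sum_exp = max_logit + math.log(sum(math.exp(z - max_logit) for z in logits))
    return log_sum_exp - logits[label]  # equals -log(softmax(logits)[label])


# Equal logits mean a uniform prediction; a large correct logit drives the loss toward 0.
loss_uniform = sparse_softmax_cross_entropy([0.0, 0.0], 0)
loss_confident = sparse_softmax_cross_entropy([10.0, 0.0], 0)
print(loss_uniform, loss_confident)
```

"Sparse" refers to the label format: an integer class index rather than a one-hot vector, which is why the MNIST labels are cast to `tf.int64` rather than expanded.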

# Train and test the model

Once you have defined the training function, train the model.

```

for images, labels in train_ds:
    if optimizer.iterations > TRAIN_STEPS:
        break
    train_mnist(images, labels)

```

And, finally, check the accuracy:

```

images, labels = cast(test[0], test[1])

predicted_labels = layer(images)

correct_prediction = tf.equal(tf.argmax(predicted_labels, 1), labels)

accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))

print("Prediction accuracy after training: %s" % accuracy)

```

Behind the scenes, the XLA compiler has compiled the entire TF function to HLO, which has enabled fusion optimizations. Using the introspection facilities, we can see the HLO code (other interesting possible values for "stage" are `optimized_hlo` for HLO after optimizations and `optimized_hlo_dot` for a Graphviz graph):

```

print(train_mnist.experimental_get_compiler_ir(images, labels)(stage='hlo'))

```

| github_jupyter |

```

import sys

sys.path.append("/home/ly/workspace/mmsa")

seed = 2245

import numpy as np

import torch

from torch import nn

from torch import optim

np.random.seed(seed)

torch.manual_seed(seed)

torch.cuda.manual_seed(seed)

torch.cuda.manual_seed_all(seed)

from models.mvsa_lymodel5 import *

from utils.train import *

from typing import *

from utils.load_mvsa import *

from utils.dataset import *


config

%%time

train_set, valid_set, test_set= load_glove_data(config)

batch_size = 64

workers = 4

train_loader, valid_loader, test_loader = get_loader(batch_size, workers, get_collate_fn(config), train_set, valid_set, test_set)

model = Model(config).cuda()

loss = nn.CrossEntropyLoss()

print(get_parameter_number(model), loss)

_interval = 5

lr = 1e-3

epoches = 50

stoping_step = 10

optimizer = get_regal_optimizer(model, optim.AdamW, lr)

viz = get_Visdom()

batch_loss_drawer = VisdomScalar(viz, f"batch_loss interval:{_interval}")

epoch_loss_drawer = VisdomScalar(viz, f"Train and valid loss", 2)

acc_drawer = VisdomScalar(viz, "Train and valid accuracy", 2)

text_writer = VisdomTextWriter(viz, "Training")

batch_loss = []

train_loss = []

valid_loss = []

train_acc = []

valid_acc = []

res, model = train_visdom_v2(model, optimizer, loss, viz, train_loader,

valid_loader, epoches, batch_loss, batch_loss_drawer,

train_loss, valid_loss, epoch_loss_drawer,

train_acc, valid_acc, acc_drawer, text_writer,

_interval=_interval, early_stop=stoping_step)

res

eval_model(model, test_loader, loss)

## Hyperparameter tuning

config["embedding_dim"] = 50

config["text_hidden_size"] = 50

config

%%time

train_set, valid_set, test_set= load_glove_data(config)

batch_size = 64

workers = 4

train_loader, valid_loader, test_loader = get_loader(batch_size, workers, get_collate_fn(config), train_set, valid_set, test_set)

model = Model(config).cuda()

loss = nn.CrossEntropyLoss()

print(get_parameter_number(model), loss)

_interval = 5

lr = 1e-3

epoches = 50

stoping_step = 10

optimizer = get_regal_optimizer(model, optim.AdamW, lr)

viz = get_Visdom()

batch_loss_drawer = VisdomScalar(viz, f"batch_loss interval:{_interval}")

epoch_loss_drawer = VisdomScalar(viz, f"Train and valid loss", 2)

acc_drawer = VisdomScalar(viz, "Train and valid accuracy", 2)

text_writer = VisdomTextWriter(viz, "Training")

batch_loss = []

train_loss = []

valid_loss = []

train_acc = []

valid_acc = []

res, model = train_visdom_v2(model, optimizer, loss, viz, train_loader,

valid_loader, epoches, batch_loss, batch_loss_drawer,

train_loss, valid_loss, epoch_loss_drawer,

train_acc, valid_acc, acc_drawer, text_writer,

_interval=_interval, early_stop=stoping_step)

eval_model(model, test_loader, loss)

## Hyperparameter tuning

config["embedding_dim"] = 25

config["text_hidden_size"] = 25

config["attention_nhead"] = 5

config["fusion_nheads"] = 5

config

%%time

train_set, valid_set, test_set= load_glove_data(config)

batch_size = 64

workers = 4

train_loader, valid_loader, test_loader = get_loader(batch_size, workers, get_collate_fn(config), train_set, valid_set, test_set)

model = Model(config).cuda()

loss = nn.CrossEntropyLoss()

print(get_parameter_number(model), loss)

_interval = 5

lr = 1e-3

epoches = 50

stoping_step = 10

optimizer = get_regal_optimizer(model, optim.AdamW, lr)

viz = get_Visdom()

batch_loss_drawer = VisdomScalar(viz, f"batch_loss interval:{_interval}")

epoch_loss_drawer = VisdomScalar(viz, f"Train and valid loss", 2)

acc_drawer = VisdomScalar(viz, "Train and valid accuracy", 2)

text_writer = VisdomTextWriter(viz, "Training")

batch_loss = []

train_loss = []

valid_loss = []

train_acc = []

valid_acc = []

res, model = train_visdom_v2(model, optimizer, loss, viz, train_loader,

valid_loader, epoches, batch_loss, batch_loss_drawer,

train_loss, valid_loss, epoch_loss_drawer,

train_acc, valid_acc, acc_drawer, text_writer,

_interval=_interval, early_stop=stoping_step)

eval_model(model, test_loader, loss)

## Hyperparameter tuning

config["embedding_dim"] = 50

config

%%time

train_set, valid_set, test_set= load_glove_data(config)

batch_size = 64

workers = 4

train_loader, valid_loader, test_loader = get_loader(batch_size, workers, get_collate_fn(config), train_set, valid_set, test_set)

model = Model(config).cuda()

loss = nn.CrossEntropyLoss()

print(get_parameter_number(model), loss)

_interval = 5

lr = 1e-3

epoches = 50

stoping_step = 10

optimizer = get_regal_optimizer(model, optim.AdamW, lr)

viz = get_Visdom()

batch_loss_drawer = VisdomScalar(viz, f"batch_loss interval:{_interval}")

epoch_loss_drawer = VisdomScalar(viz, f"Train and valid loss", 2)

acc_drawer = VisdomScalar(viz, "Train and valid accuracy", 2)

text_writer = VisdomTextWriter(viz, "Training")

batch_loss = []

train_loss = []

valid_loss = []

train_acc = []

valid_acc = []

res, model = train_visdom_v2(model, optimizer, loss, viz, train_loader,

valid_loader, epoches, batch_loss, batch_loss_drawer,

train_loss, valid_loss, epoch_loss_drawer,

train_acc, valid_acc, acc_drawer, text_writer,

_interval=_interval, early_stop=stoping_step)

eval_model(model, test_loader, loss)

```

| github_jupyter |

# Basic data set generation

```

import numpy as np

import os

from scipy.misc import imread, imresize  # deprecated in SciPy 1.0 and removed in later releases; needs an older SciPy plus Pillow

import matplotlib.pyplot as plt

%matplotlib inline

print ("Package loaded")

cwd = os.getcwd()

print ("Current folder is %s" % (cwd) )

```

# SPECIFY THE FOLDER PATHS

## + RESHAPE SIZE + GRAYSCALE

```

# Training set folder

# A list (not a set) keeps the class order, and therefore the labels, deterministic
paths = ["../../img_dataset/celebs/Arnold_Schwarzenegger"
        , "../../img_dataset/celebs/Junichiro_Koizumi"
        , "../../img_dataset/celebs/Vladimir_Putin"
        , "../../img_dataset/celebs/George_W_Bush"]

# The reshape size

imgsize = [64, 64]

# Grayscale

use_gray = 1

# Save name

data_name = "custom_data"

print ("Your images should be at")

for i, path in enumerate(paths):

print (" [%d/%d] %s/%s" % (i, len(paths), cwd, path))

print ("Data will be saved to %s"

% (cwd + '/data/' + data_name + '.npz'))

```

# RGB 2 GRAY FUNCTION

```

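def rgb2gray(rgb):
    # Colour images have three dimensions (height, width, channels)
    if len(rgb.shape) == 3:
        # Weighted sum of the R, G, B channels (any alpha channel is ignored)
        return np.dot(rgb[...,:3], [0.299, 0.587, 0.114])
    else:
        # print ("Current image is GRAY!")
        return rgb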

```
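The weights in `rgb2gray` sum to one, so white stays at full intensity while green contributes most to perceived brightness. A single-pixel version in plain Python makes that easy to check; the names `LUMA_WEIGHTS` and `pixel_to_gray` are assumptions for this sketch.

```python
LUMA_WEIGHTS = (0.299, 0.587, 0.114)  # same R, G, B weights as rgb2gray above


def pixel_to_gray(red, green, blue):
    """Weighted sum of one pixel's channels, each in the range [0, 255]."""
    return sum(w * c for w, c in zip(LUMA_WEIGHTS, (red, green, blue)))


white = pixel_to_gray(255, 255, 255)
pure_green = pixel_to_gray(0, 255, 0)
print(white, pure_green)
```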

# LOAD IMAGES

```

nclass = len(paths)
valid_exts = [".jpg", ".gif", ".png", ".tga", ".jpeg"]
imgcnt = 0
for i, relpath in enumerate(paths):
    path = cwd + "/" + relpath
    flist = os.listdir(path)
    for f in flist:
        # Skip files that are not images
        if os.path.splitext(f)[1].lower() not in valid_exts:
            continue
        fullpath = os.path.join(path, f)
        currimg = imread(fullpath)
        # Convert to grayscale
        if use_gray:
            grayimg = rgb2gray(currimg)
        else:
            grayimg = currimg
        # Resize, scale to [0, 1], and flatten to a row vector
        graysmall = imresize(grayimg, [imgsize[0], imgsize[1]])/255.
        grayvec = np.reshape(graysmall, (1, -1))
        # One-hot label for class i
        curr_label = np.eye(nclass, nclass)[i:i+1, :]
        # Append to the running arrays
        if imgcnt == 0:
            totalimg = grayvec
            totallabel = curr_label
        else:
            totalimg = np.concatenate((totalimg, grayvec), axis=0)
            totallabel = np.concatenate((totallabel, curr_label), axis=0)
        imgcnt = imgcnt + 1
print ("Total %d images loaded." % (imgcnt))

```

# DIVIDE TOTAL DATA INTO TRAINING AND TEST SET

```

def print_shape(string, x):
    print ("Shape of '%s' is %s" % (string, x.shape,))

# Shuffle every index exactly once so the train and test sets do not overlap
randidx = np.random.permutation(imgcnt)
trainidx = randidx[0:int(3*imgcnt/5)]
testidx = randidx[int(3*imgcnt/5):imgcnt]
trainimg = totalimg[trainidx, :]
trainlabel = totallabel[trainidx, :]
testimg = totalimg[testidx, :]
testlabel = totallabel[testidx, :]
print_shape("trainimg", trainimg)
print_shape("trainlabel", trainlabel)
print_shape("testimg", testimg)
print_shape("testlabel", testlabel)

```
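A train/test split should use each index exactly once, so that no image lands in both sets. The following standard-library sketch shows that idea with the same 3/5 training share; the function name `split_indices` and the fixed seed are assumptions for illustration.

```python
import random


def split_indices(n_items, seed=0):
    """Shuffle 0..n_items-1 once and cut it into 3/5 train and 2/5 test."""
    rng = random.Random(seed)                        # fixed seed for a repeatable split
    shuffled = rng.sample(range(n_items), n_items)   # a permutation, no repeats
    n_train = 3 * n_items // 5
    return shuffled[:n_train], shuffled[n_train:]


train_idx, test_idx = split_indices(10)
print(train_idx, test_idx)
```

Drawing indices with replacement instead would let the same image appear in both sets, which inflates test accuracy.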

# SAVE TO NPZ

```

savepath = cwd + "/data/" + data_name + ".npz"

np.savez(savepath, trainimg=trainimg, trainlabel=trainlabel

, testimg=testimg, testlabel=testlabel, imgsize=imgsize, use_gray=use_gray)

print ("Saved to %s" % (savepath))

```

# LOAD TO CHECK!

```

# Load them!

cwd = os.getcwd()

loadpath = cwd + "/data/" + data_name + ".npz"

l = np.load(loadpath)

# See what's in here

l.files

# Parse data

trainimg_loaded = l['trainimg']

trainlabel_loaded = l['trainlabel']

testimg_loaded = l['testimg']

testlabel_loaded = l['testlabel']

print ("%d train images loaded" % (trainimg_loaded.shape[0]))

print ("%d test images loaded" % (testimg_loaded.shape[0]))

print ("Loaded from %s" % (loadpath))

```

# PLOT RANDOMLY SELECTED TRAIN IMAGES

```

ntrain_loaded = trainimg_loaded.shape[0]
batch_size = 10
randidx = np.random.randint(ntrain_loaded, size=batch_size)
for i in randidx:
    currlabel_onehot = trainlabel_loaded[i, :]
    currlabel = np.argmax(currlabel_onehot)
    # Plot from the arrays loaded from the .npz file, not the in-memory originals
    if use_gray:
        currimg = np.reshape(trainimg_loaded[i, :], (imgsize[0], -1))
        plt.matshow(currimg, cmap=plt.get_cmap('gray'))
        plt.colorbar()
    else:
        currimg = np.reshape(trainimg_loaded[i, :], (imgsize[0], imgsize[1], 3))
        plt.imshow(currimg)
    title_string = "[%d] %d-class" % (i, currlabel)
    plt.title(title_string)
    plt.show()

```

# PLOT RANDOMLY SELECTED TEST IMAGES

```

# Do batch stuff using loaded data
ntest_loaded = testimg_loaded.shape[0]
batch_size = 3
randidx = np.random.randint(ntest_loaded, size=batch_size)
for i in randidx:
    currlabel_onehot = testlabel_loaded[i, :]
    currlabel = np.argmax(currlabel_onehot)
    # Plot from the arrays loaded from the .npz file, not the in-memory originals
    if use_gray:
        currimg = np.reshape(testimg_loaded[i, :], (imgsize[0], -1))
        plt.matshow(currimg, cmap=plt.get_cmap('gray'))
        plt.colorbar()
    else:
        currimg = np.reshape(testimg_loaded[i, :], (imgsize[0], imgsize[1], 3))
        plt.imshow(currimg)
    title_string = "[%d] %d-class" % (i, currlabel)
    plt.title(title_string)
    plt.show()

```

| github_jupyter |

# Tutorial about running analysis procedures

Standardized analysis procedures are provided as individual classes in the `locan.analysis` module.

Here we outline the general usage of any analysis class using a mock analysis procedure, the AnalysisExampleAlgorithm_1 class.

```

from pathlib import Path

%matplotlib inline

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

import locan as lc

from locan.analysis.analysis_example import AnalysisExampleAlgorithm_1, AnalysisExampleAlgorithm_2

lc.show_versions(system=False, dependencies=False, verbose=False)

```

## Some localization data

```

localization_dict = {

'Position_x': [0, 0, 1, 4, 5],

'Position_y': [0, 1, 3, 4, 1]

}

df = pd.DataFrame(localization_dict)

dat = lc.LocData.from_dataframe(dataframe=df)

dat.print_summary()

```

## Instantiating and using the Analysis class

### Instantiate the Analysis_example object

```

ae = AnalysisExampleAlgorithm_2()

```

Show the initialized parameters:

```

ae

ae.parameter

```

### Primary results of the analysis procedure

Some random data, a and b, are generated as the primary result of the analysis procedure:

```

ae.compute(locdata=dat)

ae.results.head()

```

The class provides various methods for further dealing with the primary data.

```

methods = [x for x in dir(ae) if callable(getattr(ae, x)) and not x.startswith('_')]

methods

```

### Generating a plot of results

Typically the primary results are further analyzed and represented by visual representations. This can be a plot or a histogram. Secondary results may be generated e.g. by fitting the plot.

```

ae.plot()

ae.plot_2()

```

After a plot or histogram has been generated, secondary results may have been added as attributes.

```

attributes = [x for x in dir(ae) if not callable(getattr(ae, x)) and not x.startswith('_')]

attributes

print('Fit result:\n Center: {}\n Sigma: {}'.format(ae.attribute_center, ae.attribute_sigma))

```
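The workflow above (instantiate with parameters, compute primary results, then derive secondary results that appear as new attributes) can be sketched with a small stand-in class. This is a minimal illustration only: `MockAnalysis`, `summarize`, and the constructor arguments are hypothetical names and do not reflect how locan implements its analysis classes. Only the attribute names `parameter`, `results`, `attribute_center` and `attribute_sigma` are borrowed from the tutorial.

```python
import random
import statistics


class MockAnalysis:
    """Hypothetical stand-in for an analysis class; not part of locan."""

    def __init__(self, n_samples=100, seed=0):
        # Parameters are stored so they can be inspected after instantiation.
        self.parameter = {"n_samples": n_samples, "seed": seed}
        self.results = None

    def compute(self):
        # Primary results: random numbers drawn from a normal distribution.
        rng = random.Random(self.parameter["seed"])
        n_samples = self.parameter["n_samples"]
        self.results = [rng.gauss(0.0, 1.0) for _ in range(n_samples)]
        return self  # returning self allows MockAnalysis().compute()

    def summarize(self):
        # Secondary results are attached as attributes only at this stage.
        self.attribute_center = statistics.mean(self.results)
        self.attribute_sigma = statistics.stdev(self.results)
        return self.attribute_center, self.attribute_sigma


analysis = MockAnalysis(n_samples=500).compute()
print(hasattr(analysis, "attribute_center"))  # False: nothing derived yet
analysis.summarize()
print(hasattr(analysis, "attribute_center"))  # True: added by summarize()
```

Returning `self` from `compute` is what makes one-line construction such as `MockAnalysis().compute()` possible, the same chaining style used later when two datasets are compared.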

### Generating a report

A report contains various plots, histograms and secondary results.

```

ae.report()

```

The report can be saved as a PDF file by providing a path:

```

# ae.report(path)

```

## Modifying plots for publication

### This is the original plot from the analysis class

```

ae.plot()

```

### Save the plot in various formats

```

from pathlib import Path

temp_directory = Path('.') / 'temp'

temp_directory.mkdir(parents=True, exist_ok=True)

path = temp_directory / 'filename.pdf'

path

ae.plot()

plt.savefig(fname=path, dpi=None, facecolor='w', edgecolor='w',

orientation='portrait', format=None,

transparent=False, bbox_inches=None, pad_inches=0.1)

```

Delete the file and the now-empty directory:

```

path.unlink()

temp_directory.rmdir()

```
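`Path.rmdir()` only succeeds on an empty directory, so the manual cleanup above depends on the file being removed first. As an alternative sketch, the standard library's `tempfile.TemporaryDirectory` removes the directory and its contents automatically. The placeholder bytes written here are an assumption standing in for a real `plt.savefig(fname=path)` call.

```python
import tempfile
from pathlib import Path

with tempfile.TemporaryDirectory() as tmp:
    path = Path(tmp) / "filename.pdf"
    # Placeholder bytes stand in for a call such as plt.savefig(fname=path).
    path.write_bytes(b"placeholder")
    existed_inside = path.exists()

# Leaving the with-block removes the directory and everything in it.
removed_after = not path.exists()
print(existed_inside, removed_after)
```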

### Make changes to this figure

```

ae.plot()

fig = plt.gcf()

ax = fig.axes

print('Number of axes: ', len(ax))

ax[0].set_title('This is some other title.', fontsize='large')

plt.show()

```

### Delete axis elements

```

# fig.delaxes()

```

## Comparing different datasets

### Instantiate the Analysis_example objects

```

ae_1 = AnalysisExampleAlgorithm_2().compute(locdata=dat)

ae_2 = AnalysisExampleAlgorithm_2().compute(locdata=dat)

```

### Combine all plots in one

```

fig, ax = plt.subplots(nrows=1, ncols=1)

ae_1.plot(ax=ax)

ae_2.plot(ax=ax)

legend_strings = list(ae_1.meta.identifier)*2 + list(ae_2.meta.identifier)*2

ax.legend(legend_strings, title='Identifier')

plt.show()

```

| github_jupyter |

```

from __future__ import division, print_function, absolute_import

import tflearn

from tflearn.layers.core import input_data, dropout, fully_connected

from tflearn.layers.conv import conv_2d, max_pool_2d

from tflearn.layers.normalization import local_response_normalization

from tflearn.layers.estimator import regression

import numpy as np

import matplotlib.pyplot as plt

import sklearn.utils

import tensorflow as tf

import h5py

from tflearn.data_preprocessing import ImagePreprocessing

from tflearn.data_augmentation import ImageAugmentation

%matplotlib inline

IMG_WIDTH = 32 # Side for each transformed Image

IMG_HEIGHT = 32

IMG_DEPTH = 1 # Single channel (grayscale images)

MAX_DIGITS = 5

imgsAll = np.empty(shape = (0,IMG_HEIGHT, IMG_WIDTH), dtype=float)

labelsAll = np.empty(shape = (0,MAX_DIGITS), dtype=float)

numDigitsAll = np.empty(shape = (0), dtype=float)

for numDigits in range(1,MAX_DIGITS + 1):

    h5FileName = 'svhn_' + str(numDigits) + '.h5'

    data = h5py.File(h5FileName, 'r') # Open read-only

    imgs = np.array(data['images']).astype(float)

    labels = np.array(data['digits'])

    # Pad labels with zeros up to MAX_DIGITS width

    zerosToFill = np.zeros(shape = (labels.shape[0], MAX_DIGITS - numDigits ), dtype = float)

    labels = np.concatenate ((labels, zerosToFill), axis = 1)

    # Concat to full Dataset

    imgsAll = np.concatenate((imgsAll, imgs), axis = 0)

    labelsAll = np.concatenate((labelsAll, labels), axis = 0)

    numDigitsAll = np.concatenate((numDigitsAll, np.full(labels.shape[0], numDigits, dtype= float))) # Add num of digits for this set of images

print (imgsAll.shape)

print (labelsAll.shape)

print (numDigitsAll.shape)

print (labelsAll[100000])

plt.imshow(imgsAll[100000], cmap='gray')

print (numDigitsAll[100000])

def dense_to_one_hot(labels_dense, num_classes=10):

    """Convert class labels from scalars to one-hot vectors."""

    num_labels = labels_dense.shape[0]

    index_offset = np.arange(num_labels) * num_classes

    labels_one_hot = np.zeros((num_labels, num_classes))

    index_update = [int(x) for x in index_offset + labels_dense]

    labels_one_hot.flat[index_update] = 1

    return labels_one_hot

# Get the dataset

X = imgsAll.reshape([-1, IMG_HEIGHT, IMG_WIDTH, IMG_DEPTH])

Y = numDigitsAll

X, Y = sklearn.utils.shuffle(X, Y, random_state=0)

# Generate validation set

ratio = 0.9 # Approximate fraction of samples assigned to the training set

randIdx = np.random.random(imgsAll.shape[0]) <= ratio

#print (sum(map(lambda x: int(x), randIdx)))

X_train = X[randIdx]

Y_train = Y[randIdx]

X_test = X[~randIdx]

Y_test = Y[~randIdx]

Y_train = dense_to_one_hot(Y_train, num_classes = MAX_DIGITS+1)

Y_test = dense_to_one_hot(Y_test, num_classes = MAX_DIGITS+1)

#del X, Y # release some space

print (X_train.shape)

print (Y_train.shape)

# Building convolutional network

with tf.Graph().as_default():

    # Real-time data preprocessing

    img_prep = ImagePreprocessing()

    img_prep.add_featurewise_zero_center()

    img_prep.add_featurewise_stdnorm()

    # Real-time data augmentation

    img_aug = ImageAugmentation()

    #img_aug.add_random_flip_leftright()

    img_aug.add_random_rotation(max_angle=25.)

    network = input_data(shape=[None, IMG_HEIGHT, IMG_WIDTH, IMG_DEPTH], name='input',

                         data_preprocessing=img_prep,

                         data_augmentation=img_aug)

    network = conv_2d(network, 32, 3, activation='relu', regularizer="L2")

    network = max_pool_2d(network, 2)

    network = local_response_normalization(network)

    network = conv_2d(network, 64, 3, activation='relu', regularizer="L2")

    network = max_pool_2d(network, 2)

    network = local_response_normalization(network)

    fc_1 = fully_connected(network, 1024, activation='tanh')

    softmax1 = fully_connected(fc_1, MAX_DIGITS + 1, activation='softmax')

    network = regression(softmax1, optimizer='adam', learning_rate=0.001,

                         loss='categorical_crossentropy', name='target')

    model = tflearn.DNN(network, tensorboard_verbose=3)

    model.fit({'input': X_train}, Y_train,

              validation_set= (X_test, Y_test), n_epoch=1, snapshot_step=100, show_metric=True, run_id='convnet_svhn_numDigits')

numImgEachAxis = 8

f,ax = plt.subplots(numImgEachAxis, numImgEachAxis, figsize=(10,10))

for i in range(numImgEachAxis):

    for j in range(numImgEachAxis):

        #res = np.array([np.argmax(x) for x in model.predict([X_train[i*numImgEachAxis + j]])])

        #print (str(i) + ',' + str(j) + ' -> ' +str(res))

        #ax[i][j].set_title(str([np.round(x,2) for x in res]))

        ax[i][j].imshow(X_train[i*numImgEachAxis + j].reshape((IMG_HEIGHT,IMG_WIDTH)) ,cmap = 'gray')

plt.show() # or display.display(plt.gcf()) if you prefer

# print (model.evaluate(X_test,feedTestList))

```
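Two data-preparation steps in the cell above can be checked in isolation on synthetic data. The sketch below is not the notebook's own code: `one_hot` and `split_exact` are hypothetical helper names. The first builds one-hot vectors by indexing an identity matrix, which for valid integer-valued labels should give the same result as `dense_to_one_hot`. The second cuts a shuffled index array at a fixed position, so the training fraction is exact, whereas the random mask in the notebook only approximates `ratio`.

```python
import numpy as np


def one_hot(labels_dense, num_classes):
    """Build one-hot vectors by selecting rows of an identity matrix."""
    labels_int = np.asarray(labels_dense).astype(int)
    return np.eye(num_classes)[labels_int]


def split_exact(X, Y, ratio, seed=0):
    """Shuffle the sample indices once and cut at a fixed position."""
    rng = np.random.default_rng(seed)
    order = rng.permutation(len(X))
    n_train = int(round(ratio * len(X)))
    train_idx, test_idx = order[:n_train], order[n_train:]
    return X[train_idx], Y[train_idx], X[test_idx], Y[test_idx]


# Synthetic stand-ins for the image array and the digit counts (1..5).
X_demo = np.arange(20, dtype=float).reshape(10, 2)
Y_demo = np.array([1, 2, 3, 4, 5, 1, 2, 3, 4, 5], dtype=float)

X_tr, Y_tr, X_te, Y_te = split_exact(X_demo, Y_demo, ratio=0.9)
Y_tr_onehot = one_hot(Y_tr, num_classes=6)
print(X_tr.shape, X_te.shape, Y_tr_onehot.shape)  # (9, 2) (1, 2) (9, 6)
```

Because the digit counts run from 1 to `MAX_DIGITS`, the one-hot width is `MAX_DIGITS + 1` and column 0 is never set, which matches the `num_classes = MAX_DIGITS+1` argument used in the notebook.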

| github_jupyter |

# *OPTIONAL* Astronomical widget libraries

The libraries demonstrated here are not as mature as the ones we've seen so far. Keep an eye on them for future developments!

## PyWWT - widget interface to the World Wide Telescope

### https://github.com/WorldWideTelescope/pywwt