code

stringlengths 38

801k

| repo_path

stringlengths 6

263

|

|---|---|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

import numpy as np

import matplotlib.pyplot as plt

from scipy import signal

import scipy.fftpack  # explicit submodule import; scipy.fftpack.fft is used below

import scipy.io as sio

import copy

import pylab as pl

import time

from IPython import display

# ## Chirp parameters

start_freq = 770000

band_freq = 80000

duration = 0.0004

samples_one_second = 10000000

rate = samples_one_second / start_freq

sample = start_freq * rate

npnts = int(sample * duration)

print("Samples per cycle", rate, "Samples for one second", samples_one_second, "Total samples", npnts)

# ## Create the chirp

# +

timevec = np.linspace(0, duration, npnts)

adding_freq = np.linspace(0, band_freq, npnts)

chirp = np.sin(2*np.pi * (start_freq + adding_freq * 0.5) * timevec)

# chirp = signal.chirp(timevec,f0=start_freq,t1=duration,f1=start_freq + band_freq)

ciclestoshow = int(rate * 30)

plt.figure(figsize=(16,4))

plt.subplot(211)

plt.plot(timevec[:ciclestoshow], chirp[:ciclestoshow])

plt.title("Time domain Low")

plt.subplot(212)

plt.plot(timevec[-ciclestoshow:], chirp[-ciclestoshow:])

plt.title("Time domain High")

plt.tight_layout()

plt.show()
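# The factor 0.5 on `adding_freq` is what makes the instantaneous frequency sweep from `start_freq` to `start_freq + band_freq`: the phase of a linear chirp is 2*pi*(f0*t + k*t^2/2), and its derivative is 2*pi*(f0 + k*t). The following is a minimal sketch that checks this numerically, assuming the same parameter values as above. The names `f0`, `bandwidth`, `T`, `fs`, `k` and `inst_freq` are illustrative and do not appear in the notebook.

```python
import numpy as np

# Illustrative values mirroring the notebook's chirp parameters (assumption).
f0 = 770_000.0        # start frequency in Hz
bandwidth = 80_000.0  # sweep width in Hz
T = 0.0004            # chirp duration in seconds
fs = 10_000_000.0     # sampling rate in Hz

n = int(fs * T)
t = np.linspace(0, T, n)
k = bandwidth / T     # sweep rate in Hz per second

# Same phase expression as the notebook: adding_freq corresponds to k * t.
phase = 2 * np.pi * (f0 + 0.5 * k * t) * t

# Instantaneous frequency is the time derivative of the phase divided by 2*pi.
inst_freq = np.gradient(phase, t) / (2 * np.pi)

print("frequency at mid sweep:", inst_freq[n // 2])
print("frequency near the end:", inst_freq[-2])
```

# Without the 0.5 factor the derivative would be f0 + 2*k*t, so the sweep would cover twice the intended band.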

# +

hz = np.linspace(0, sample / 2, int(np.floor(npnts / 2) + 1))

spectrum = 2*np.abs(scipy.fftpack.fft(chirp)) / npnts

plt.figure(figsize=(16,4))

plt.stem(hz,spectrum[0:len(hz)])

plt.xlim([start_freq - band_freq,start_freq + band_freq*3])

plt.title('Amplitude spectrum')

plt.show()

# -

# # Distance of an object in km

kilometer = 16.8

# # RX TX Chirp Mix

# +

light_speed_km = 300000

print('Theoretical max distance of a chirp of', light_speed_km * duration / 2, 'km')

smallest_measure_distance = light_speed_km * (1 / samples_one_second) / 2

print('smallest measure distance',smallest_measure_distance, 'km')

shift = int((1 / smallest_measure_distance) * kilometer)

print('shift', shift, 'out of', npnts, 'sample points, for a distance of', np.round(shift * smallest_measure_distance, 3), 'km')

distance_per_herz = (light_speed_km * duration / 2) / band_freq

print('Distance per hertz in the frequency domain', distance_per_herz, 'km per hertz')

print()

chirp_time = np.linspace(0, duration, npnts)

chirp_freq = np.linspace(0, band_freq, npnts)

plt.plot(chirp_time, chirp_freq, label='TX chirp')

plt.plot(chirp_time[shift:], chirp_freq[:-shift], label='RX chirp')

plt.plot([chirp_time[shift],chirp_time[shift]], [0,chirp_freq[shift]], 'g-' , label='St Frequency {} hz'.format(np.round(chirp_freq[shift])))

plt.plot(chirp_time[shift],0, 'gv')

plt.plot(chirp_time[shift],chirp_freq[shift], 'g^')

plt.plot([chirp_time[shift],chirp_time[-1]],[chirp_freq[shift],chirp_freq[shift]], 'c:', label='IF signal')

plt.plot([chirp_time[-1]],[chirp_freq[shift]], 'c>')

plt.ylabel('Frequency')

plt.xlabel('Time')

plt.title('RX Reflection of corresponding object')

plt.legend()

plt.tight_layout()

plt.show()

# -
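# The conversion between target distance and IF (beat) frequency used in this cell can be written as two small helper functions. This is a sketch under the same assumptions as the notebook (a linear sweep and a propagation speed of 300000 km/s); the function names and signatures are hypothetical.

```python
def range_km_to_beat_hz(range_km, bandwidth_hz, duration_s, c_km_s=300_000.0):
    """Beat frequency produced by a target at range_km for a linear chirp."""
    sweep_rate = bandwidth_hz / duration_s   # Hz per second
    delay_s = 2.0 * range_km / c_km_s        # round-trip travel time
    return sweep_rate * delay_s


def beat_hz_to_range_km(beat_hz, bandwidth_hz, duration_s, c_km_s=300_000.0):
    """Inverse mapping: target range for a measured beat frequency."""
    sweep_rate = bandwidth_hz / duration_s
    delay_s = beat_hz / sweep_rate
    return c_km_s * delay_s / 2.0


beat = range_km_to_beat_hz(16.8, bandwidth_hz=80_000, duration_s=0.0004)
print("beat frequency in Hz:", beat)
print("recovered range in km:", beat_hz_to_range_km(beat, 80_000, 0.0004))
```

# Dividing the recovered range by the beat frequency gives the same km-per-hertz factor as `distance_per_herz`.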

# ## Object detection

# +

# local synthesizer

tx = chirp[shift:]

# Object reflection signal

rx = chirp[:-shift]

# mixing all frequencies

mix = tx * rx

plt.figure(figsize=(16,4))

plt.plot(timevec[:-shift], mix)

plt.title("Time domain mix shift chirp")

plt.xlabel('Time')

plt.show()

accuracy = int(npnts * 5)

hz = np.linspace(0, sample / 2, int(np.floor(accuracy / 2) + 1))

# Get IF frequencies spectrum

fftmix = scipy.fftpack.fft(mix, n=accuracy)

ifSpectrum = np.abs(fftmix) / accuracy

ifSpectrum[1:] = ifSpectrum[1:] * 2

# Find local high as detection

hz_band_freq = hz[hz <= band_freq]

testIifSpectrum = ifSpectrum[:len(hz_band_freq)]

# Keep the index array one-dimensional so that a single peak can still be indexed below
localMax = np.where(np.diff(np.sign(np.diff(testIifSpectrum))) < 0)[0] + 1

# Adjust trigger level

meanMax = testIifSpectrum[localMax].mean()

maxSpectrum = testIifSpectrum[localMax].max()

trigger = maxSpectrum * .8

# Frequency detection

valid_local_indexs = localMax[testIifSpectrum[localMax] > trigger]

colors = ['r','g','c','m','y']

plt.figure(figsize=(16,4))

plt.plot(hz_band_freq, testIifSpectrum,'b-o', label='spectrum')

# Convert chirp shift to distance

dist = smallest_measure_distance * shift

# Convert distance to frequency

scale = dist / distance_per_herz

plt.plot([scale,scale], [maxSpectrum, 0],'g--', label='closest distance {}'.format(np.round(dist,3)))

plt.plot([hz_band_freq[0],hz_band_freq[-1]],[trigger,trigger],'--',label='trigger level {}'.format(np.round(trigger,3)))

for i in range(len(valid_local_indexs)):
    pos = valid_local_indexs[i]
    freq = hz_band_freq[pos]
    spect_val = testIifSpectrum[pos]
    plt.plot(freq, spect_val,colors[i] + 'o', label='detection frq {} distance {}'.format(freq, np.round(freq * distance_per_herz, 2)))

plt.xlim([0,hz[valid_local_indexs[-1]] * 2])

plt.title("Frequency domain IF signal")

plt.xlabel('Frequency')

plt.legend()

plt.show()
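# The detection step in this cell finds local maxima from sign changes of the first difference and keeps the peaks above a fraction of the strongest one. A self-contained sketch of that idea on a toy array follows; `local_maxima`, `detect_peaks` and the sample values are illustrative and not part of the notebook.

```python
import numpy as np


def local_maxima(values):
    """Indices where the slope changes from rising to falling."""
    values = np.asarray(values, dtype=float)
    slope_sign = np.sign(np.diff(values))
    # A rising-then-falling pair gives a negative step in the slope sign.
    return np.where(np.diff(slope_sign) < 0)[0] + 1


def detect_peaks(values, fraction=0.8):
    """Local maxima whose height exceeds `fraction` of the highest peak."""
    values = np.asarray(values, dtype=float)
    peaks = local_maxima(values)
    if peaks.size == 0:
        return peaks
    trigger = values[peaks].max() * fraction
    return peaks[values[peaks] > trigger]


toy_spectrum = np.array([0.0, 1.0, 0.0, 5.0, 0.0, 4.5, 0.0, 0.2, 0.0])
print("all local maxima:", local_maxima(toy_spectrum))
print("peaks above trigger:", detect_peaks(toy_spectrum))
```

# Returning a one-dimensional index array in every case keeps the later indexing valid even when only one peak is found.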

# +

# Low pass filter mixed IF

lowCut = band_freq * 1.2

nyquist = sample/2

transw = .1

order = npnts

# To avoid edge effect

longmix = np.concatenate((mix[::-1],mix,mix[::-1]))

# order must be odd

if order%2==0:
    order += 1

shape = [ 1, 1, 0, 0 ]

frex = [ 0, lowCut-lowCut*transw, lowCut, nyquist ]

# define filter shape

# filter kernel

filtkern = signal.firls(order,frex,shape,fs=sample)

filtkern = filtkern * np.hanning(order)

nConv = len(filtkern) + len(longmix) - 1

lenfilter = len(filtkern)

half_filt_len = int(np.floor(lenfilter / 2))

filtkernFft = scipy.fftpack.fft(filtkern,n=nConv)

rowFft = scipy.fftpack.fft(longmix,n=nConv)

ifSignal = np.real(scipy.fftpack.ifft(rowFft * filtkernFft))

ifSignal = ifSignal[half_filt_len:-half_filt_len]

ifSignal = ifSignal[len(mix):-len(mix)]

siglen = len(ifSignal)

plt.figure(figsize=(16,4))

# timevec is already expressed in seconds, so it is plotted without extra scaling
plt.plot(timevec[:siglen], ifSignal)

plt.title("Time domain IF signal (Mix low pass filter)")

plt.xlabel('Time')

plt.show()

# -

|

Notebook/1-Radar-rx-tx-mix.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Encoding Natural Data

#

# One of the primary challenges in natural language processing is properly encoding the text so that mathematical models such as neural networks can extract meaning from it and process it into some kind of result. The field has evolved quite a lot over time, so we're going to briefly present a few of the once-common strategies for language encoding and then spend some time on the current favorite method: embeddings.

#

# ## Rules Based Systems

#

# At first there were rules-based systems. Like many other systems at the time, these were "expert systems" designed with extensive help from linguists and grammarians. These systems would look at a sentence and try to identify its critical aspects (the subject, object, key verb...), label the words by type (adjective, noun, verb, adverb), and map their function (which adjectives describe which nouns?).

#

# These expert systems could produce a data structure representing the sentence or document, and this structure could then be used for some custom-built purpose (grammar check, chat bot...).

#

# Unlike ML systems, these systems had no real use for statistics-based learning. As a result, the data structures didn't need to be strictly numeric, nor did they have to explicitly encode individual words or longer sentences into purely numeric formats.

#

# ## Bag of Words

#

# Once statistical learning methods began advancing, NLP researchers needed pure numeric encodings that could meaningfully represent words, sentences, or other strings of text. Some simple strategies like the classic one-hot encoding were fleetingly popular, but the need for a vector the size of the entire vocabulary just to represent a single word was prohibitively costly for all but the simplest use cases.

#

# The "Bag of Words" encoding came next. Instead of representing single words one at a time, this strategy reduces an entire string of text into a vector. Again the vector is the length of the vocabulary, but instead of being one-hot, each value is the number of times that word appears in the text.

#

# For example, say our vocabulary is only 5 words:

#

# Hello, live, work, to, friend

#

# Each of these words is represented by a position in a vector, and we loop through the text to create the vector for our text.

#

# The sentence, "Hello friend" becomes the vector `[1, 0, 0, 0, 1]`

#

# "Live to work" and "work to live" both become the vector `[0, 1, 1, 1, 0]`
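#
# The encoding described above can be sketched in a few lines. This is a minimal illustration that assumes lower-casing and a very simple tokenizer; the `bag_of_words` function and the `VOCABULARY` constant are names chosen for this example.

```python
import re
from collections import Counter

# The five-word vocabulary from the example, in a fixed order.
VOCABULARY = ["hello", "live", "work", "to", "friend"]


def bag_of_words(text, vocabulary=VOCABULARY):
    """Count how often each vocabulary word occurs in the text."""
    tokens = re.findall(r"[a-z']+", text.lower())
    counts = Counter(tokens)
    # One position per vocabulary word; words outside the vocabulary are ignored.
    return [counts[word] for word in vocabulary]


print(bag_of_words("Hello friend"))
print(bag_of_words("Live to work"))
print(bag_of_words("work to live"))
```

# The last two calls print the same vector, which is exactly the loss of word order discussed in the text.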

#

# For some simple tasks such an encoding can work reasonably well, especially if the input texts are always short, for example when mapping Tweets to a binary positive/negative sentiment. But such a simple system clearly discards much of the semantic meaning of the sentence by completely ignoring the order of the words. It also struggles with words like "live" that have multiple possible meanings ("The new feature is going live tomorrow." vs "She is going to live!").

#

# Such an encoding can indeed be used with a standard ANN. That said, none of the state of the art research is proceeding down this path.

#

#

# ## TF-IDF

#

# TF-IDF, or Term Frequency-Inverse Document Frequency, is a way to turn an individual word into a numeric value rather than process a series of words the way Bag of Words would. The TF-IDF value represents how common a word is within a particular document, weighed against how common the word is across all documents in an entire corpus or dataset. We won't be using it, but you can view the [mathematical details on Wikipedia](https://en.wikipedia.org/wiki/Tf%E2%80%93idf) if you're curious.

#
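# To make the idea concrete, here is a small sketch of one common TF-IDF variant (term frequency normalized by document length, and the plain logarithm of the inverse document frequency). Other weighting schemes exist; the function name, the choice of variant and the toy corpus are assumptions made for illustration.

```python
import math


def tf_idf(term, document, corpus):
    """TF-IDF of `term` in `document`, where documents are lists of tokens."""
    tf = document.count(term) / len(document)
    docs_with_term = sum(1 for doc in corpus if term in doc)
    if docs_with_term == 0:
        return 0.0
    idf = math.log(len(corpus) / docs_with_term)
    return tf * idf


corpus = [
    ["the", "cat", "sat"],
    ["the", "dog", "ran"],
    ["the", "cat", "ran", "home"],
]

for word in ["the", "cat", "sat"]:
    print(word, round(tf_idf(word, corpus[0], corpus), 4))
```

# A word that appears in every document ("the") scores zero, while a word unique to one document ("sat") scores highest.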

# While this is useful in some information retrieval contexts, for many natural language tasks such as machine translation and sentiment analysis, information about a word's commonality isn't especially helpful. Researchers needed a way to create a numeric representation of individual words that could somehow capture the semantic meaning of those words...

#

# ## Word Embeddings

#

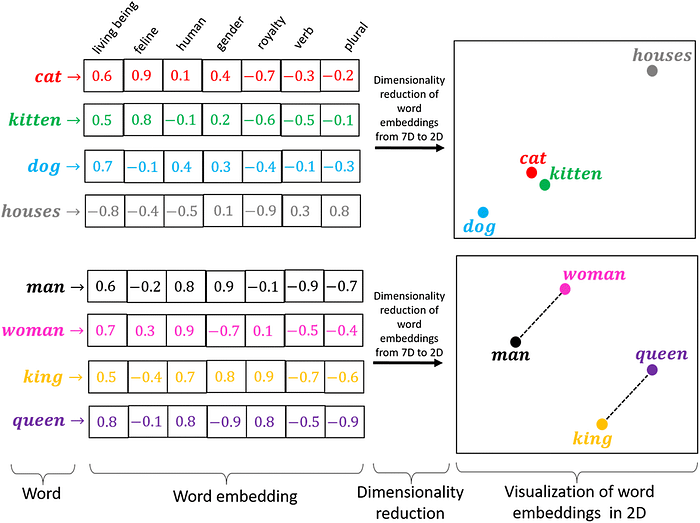

# In 2013, a team of researchers at Google published two papers describing Word2Vec, a neural network that transformed individual words into vectors that could represent the word's semantic meaning. Word2Vec formed a strong foundation for research, and ultimately led to the creation of a now-standard tool: Word Embedding layers.

#

# Word embedding layers provide a generic interface for the first layer of any neural network that wants to process one word at a time as input. Their function is simple: Embedding layers act as a lookup table mapping a word from our vocabulary into a dense vector of a chosen length representing that word.

#

# The values associated with each position in the word-vector are learned during back-propagation just as the weights in a Dense layer would be, but don't require an entire matrix multiply as each word maps to a single vector of the matrix representing the entire vocabulary. This difference saves computational time, but learns through backpropagation very similarly to a Dense layer.
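#
# The lookup-table view can be illustrated with plain NumPy. The sketch below assumes a randomly initialized embedding matrix (in a real network the values would be learned) and shows that indexing a row gives the same result as multiplying a one-hot vector by the matrix. All names are illustrative.

```python
import numpy as np

rng = np.random.default_rng(0)

vocab = ["hello", "live", "work", "to", "friend"]
word_to_index = {word: i for i, word in enumerate(vocab)}

embedding_dim = 3
# One row per vocabulary word; random values stand in for learned weights.
embedding_matrix = rng.normal(size=(len(vocab), embedding_dim))


def embed(word):
    """Embedding lookup: select the row that belongs to the word."""
    return embedding_matrix[word_to_index[word]]


def embed_via_one_hot(word):
    """Equivalent dense computation: one-hot vector times the full matrix."""
    one_hot = np.zeros(len(vocab))
    one_hot[word_to_index[word]] = 1.0
    return one_hot @ embedding_matrix


print(embed("work"))
print(embed_via_one_hot("work"))
```

# Both functions return the same vector, but the lookup touches a single row instead of the whole matrix.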

#

# Sometimes we may use a pre-trained network (such as Word2Vec) to create the word embeddings, although it is now quite common to train an embedding layer as part of the network, which allows the word embeddings to learn patterns that are specific to the task at hand.

#

# Regardless, the result is a lookup that maps words to vectors and (if it works as expected) words that are closely related in the dataset result in vectors that are near each other in vector space. When it REALLY works, these embedding vectors can be thought of as a rich set of features extracted from the word.

#

# The embeddings themselves can also be analyzed. For example, computing the cosine similarity between two words in the resulting vector space frequently reveals that semantically related words like cat and kitty are close to each other, as explained here: [https://medium.com/@hari4om/word-embedding-d816f643140](https://medium.com/@hari4om/word-embedding-d816f643140)

#
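# Cosine similarity itself is simple to compute. The sketch below uses made-up three-dimensional vectors purely for illustration; they are not real learned embeddings, and the `cosine_similarity` helper is defined here rather than taken from a library.

```python
import numpy as np


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))


# Hand-picked toy vectors (assumption): related words point in similar directions.
toy_embeddings = {
    "cat": [0.90, 0.80, 0.10],
    "kitty": [0.85, 0.75, 0.20],
    "car": [0.10, 0.20, 0.95],
}

print("cat vs kitty:", cosine_similarity(toy_embeddings["cat"], toy_embeddings["kitty"]))
print("cat vs car:", cosine_similarity(toy_embeddings["cat"], toy_embeddings["car"]))
```

# Because the measure depends only on direction, it ignores vector length.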

#

#

# > image from linked reading

|

08-recurrent-neural-networks/01-encoding-textual-data.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# ## Getting started

# ### Ranges

# A range is a rectangular group of cells. We define ranges from the top left to the bottom right. For example A1:B2.

# ### Formulas

# In B2, add 29 to 121. You should use the + operator. **It's really important here to put the = in front of the formula**.

# =29+121

# ### Exponents and parentheses

# = (7 + 2)^2 - 16

# ### Percentage

# = 4*21%

#

# ### Comparison operators

# = 0.4 < 1 will show a value of TRUE

# ### Data types: text and numbers

# ="Eggs"+1 will give an error. Hover over the cell to see the details.

#

# Fill in '= 0.40 * 21% in F2, **mind the ' in front**. Spaces are important here. By using the single quote, you ensure the formula will not be calculated.

#

# Plain text is the type used when no other type is recognized. You can force it with a leading ', e.g. 'text or '2. Plain text is aligned to the left by default.

# ### Data types: currency and date

# Google Sheets tries to interpret the correct data type by default. For example, if you start a cell with $, it will be interpreted as a currency. Sometimes, it might be necessary to manually change a data type. You do this by selecting the cells you want to change and click on Format > Number in the menu bar.

#

# Select the values in D2:D5. These currently have type date. Using the format menu, change the format to use / instead of - to separate the date parts. Use the 'More date and time formats...' option in 'Format > Number > More formats'.

#

# Select the values in E2:E5. They currently have type currency, using the dollar $ sign. Change the format of these values to currency (rounded). You can use the format menu, or any shortcut you like.

# ### Data types: logic

# When you enter true or false in a cell, it's also recognized as a logical. Logicals are case insensitive, but Google Sheets will replace the value you entered by the capitalized logical: TRUE or FALSE.

#

# Filling a cell with =true <> false gives TRUE. The <> operator means "not equal", like != in C++.

# ## References

# * Reference and absolute reference

# * Auto filling

# * Reactivity

# ### Cell references

# For example, you could make a reference to cell A1 in another cell: = A1. The cell will then always be the same as what's in A1. As cell references are case insensitive, = a1 would be fine as well.

#

# In D2 cell, write = C2

# In E2 cell, write = D2

# If C2 is changed to 20, then D2 and E2 will be changed to 20 automatically.

# ### Circular references

# But what happens when the referencing cell and the referenced cell are the same? In other words, what happens if a cell references itself? The formula cannot be evaluated, and the spreadsheet reports a circular dependency error.

# ### Copying references

# Referencing becomes especially useful if you start copying the references around. When you copy formulas containing references to neighbouring cells, the references will shift along.

#

# Assume you have = A1 in the cell with address B1. If you copy this cell to B2, the reference will change to = A2.

#

# To copy a cell to neighbouring cells, select the cell and move your mouse to the lower right corner. **The cursor should change to a "+"-sign. Drag and drop to where you want to copy.**

#

# ### Copying horizontally

# Just like you can copy cells vertically, you can just as well copy cells horizontally. Once again, when copying references, they will shift along. This time they will change columns.

#

# ### Copying columns

# Copy columns of references all at once!

#

# Start by filling in a reference to B2 in D2. Make sure to start the formula with a =.

#

# Copy D2 down to D11 vertically by dragging it downwards.

#

# Make sure D2:D11 is selected, copy this column one to the right to E2:E11, also by dragging.

# ### Mathematical operators and references

# Do calculations with references.

#

# Fill in the land area of China in square miles in D2. To convert square kilometers to square miles, divide the values by 2.59. Use a reference to C2. That is: = C2/2.59

# Use the copying technique you learned in the previous exercises to fill down the square miles in D2:D11.

# ### Percentages and references

# First, fill in = B2 * 1.12% in D2. Then find the lower right corner to copy the values until D11 by dragging.

# Fill in = B2 / C2 in E2, then use your copy skills to fill out the columns until E11.

# ### Comparison operators and references

# In F2:F11, fill in a column which is TRUE each time the density is bigger than the world average and FALSE otherwise. The world average is 51. That is, fill with: =E2 > 51

#

# In G2:G11, fill in a column which is TRUE each time the continent is equal to "Asia" and FALSE otherwise. That is fill with: =D2="Asia"

# ### Absolute references

# If we fill one cell with `= B2/B12 * 100` and then copy it by dragging down, we get `B3/B13 * 100`, `B4/B14 * 100`, ... However, B13, B14, ... do not contain any values, so the copied cells will show errors.

#

# We actually want B12 to stay fixed in all the cells. Adding a `$` before B makes column B absolute (it does not change when copying), and adding a `$` before 12 makes row 12 absolute. That is, we fill the first cell with `=B2/$B$12 * 100`. When dragging down, we will have `B2/$B$12*100, B3/$B$12*100, B4/$B$12*100,...`

#
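# The way relative and absolute references behave when a formula is copied can be imitated in a few lines of Python. This is only a simplified model for illustration (it handles plain A1-style references and would also rewrite function names that look like references, such as LOG10); the `shift_formula` helper is hypothetical and not part of any spreadsheet API.

```python
import re

# Optional $, column letters, optional $, row digits.
REFERENCE = re.compile(r"(\$?)([A-Z]+)(\$?)(\d+)")


def column_to_number(column):
    number = 0
    for letter in column:
        number = number * 26 + ord(letter) - ord("A") + 1
    return number


def number_to_column(number):
    column = ""
    while number > 0:
        number, remainder = divmod(number - 1, 26)
        column = chr(ord("A") + remainder) + column
    return column


def shift_formula(formula, rows=0, cols=0):
    """Shift every part of a reference that is not locked with a $."""
    def shift(match):
        col_lock, column, row_lock, row = match.groups()
        if not col_lock:
            column = number_to_column(column_to_number(column) + cols)
        if not row_lock:
            row = str(int(row) + rows)
        return f"{col_lock}{column}{row_lock}{row}"
    return REFERENCE.sub(shift, formula)


print(shift_formula("=B2/B12 * 100", rows=1))
print(shift_formula("=B2/$B$12 * 100", rows=1))
```

# The first call moves both references down one row, while the second keeps the locked denominator in place.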

# ### Absolute references: row

#

# Fill D2 with `=C2/C$12 * 100`. The `$` only makes the row absolute.

# ### Absolute references: column

#

# Fill D2 with `=C2/$C12 * 100`. The `$` only makes the column absolute.

|

dataManipulation/spreadsheets/Part I -- Spreadsheets basics/.ipynb_checkpoints/spreadsheet basics-checkpoint.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Load data

# +

import pickle

train_filename = "C:/Users/behl/Desktop/lung disease/train_data_sample_gray.p"

(train_labels, train_data, train_tensors) = pickle.load(open(train_filename, mode='rb'))

valid_filename = "C:/Users/behl/Desktop/lung disease/valid_data_sample_gray.p"

(valid_labels, valid_data, valid_tensors) = pickle.load(open(valid_filename, mode='rb'))

test_filename = "C:/Users/behl/Desktop/lung disease/test_data_sample_gray.p"

(test_labels, test_data, test_tensors) = pickle.load(open(test_filename, mode='rb'))

# +

def onhotLabels(label):
    from sklearn.preprocessing import OneHotEncoder
    enc = OneHotEncoder()
    enc.fit(label)
    return enc.transform(label).toarray()

train_labels = onhotLabels(train_labels)

valid_labels = onhotLabels(valid_labels)

test_labels = onhotLabels(test_labels)

# -

# # CapsNet model

# +

import os

import argparse

from keras.preprocessing.image import ImageDataGenerator

from tensorflow.keras import callbacks

import numpy as np

from keras import layers, models, optimizers

from keras import backend as K

import matplotlib.pyplot as plt

from PIL import Image

from capsulelayers import CapsuleLayer, PrimaryCap, Length, Mask

def CapsNet(input_shape, n_class, routings):
    """
    A Capsule Network on MNIST.
    :param input_shape: data shape, 3d, [width, height, channels]
    :param n_class: number of classes
    :param routings: number of routing iterations
    :return: Two Keras Models, the first one used for training, and the second one for evaluation.
            `eval_model` can also be used for training.
    """
    x = layers.Input(shape=input_shape)

    # Layer 1: Just a conventional Conv2D layer
    conv1 = layers.Conv2D(filters=256, kernel_size=9, strides=1, padding='valid', activation='relu', name='conv1')(x)

    # Layer 2: Conv2D layer with `squash` activation, then reshape to [None, num_capsule, dim_capsule]
    primarycaps = PrimaryCap(conv1, dim_capsule=8, n_channels=32, kernel_size=9, strides=2, padding='valid')

    # Layer 3: Capsule layer. Routing algorithm works here.
    digitcaps = CapsuleLayer(num_capsule=n_class, dim_capsule=16, routings=routings,
                             name='digitcaps')(primarycaps)

    # Layer 4: This is an auxiliary layer to replace each capsule with its length. Just to match the true label's shape.
    # If using tensorflow, this will not be necessary. :)
    out_caps = Length(name='capsnet')(digitcaps)

    # Decoder network.
    y = layers.Input(shape=(n_class,))
    masked_by_y = Mask()([digitcaps, y])  # The true label is used to mask the output of capsule layer. For training
    masked = Mask()(digitcaps)  # Mask using the capsule with maximal length. For prediction

    # Shared Decoder model in training and prediction
    decoder = models.Sequential(name='decoder')
    decoder.add(layers.Dense(512, activation='relu', input_dim=16*n_class))
    decoder.add(layers.Dense(1024, activation='relu'))
    decoder.add(layers.Dense(np.prod(input_shape), activation='sigmoid'))
    decoder.add(layers.Reshape(target_shape=input_shape, name='out_recon'))

    # Models for training and evaluation (prediction)
    train_model = models.Model([x, y], [out_caps, decoder(masked_by_y)])
    eval_model = models.Model(x, [out_caps, decoder(masked)])

    # manipulate model
    noise = layers.Input(shape=(n_class, 16))
    noised_digitcaps = layers.Add()([digitcaps, noise])
    masked_noised_y = Mask()([noised_digitcaps, y])
    manipulate_model = models.Model([x, y, noise], decoder(masked_noised_y))
    return train_model, eval_model, manipulate_model

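# train_labels is one-hot encoded at this point, so the class count is its number of columns
model, eval_model, manipulate_model = CapsNet(input_shape=train_tensors.shape[1:],
                                              n_class=train_labels.shape[1],
                                              routings=4)

# The decoder is created inside CapsNet, so it is retrieved from the model by its layer name
model.get_layer('decoder').summary()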

model.summary()

# +

from keras import backend as K

def binary_accuracy(y_true, y_pred):
    return K.mean(K.equal(y_true, K.round(y_pred)))

def precision_threshold(threshold = 0.5):
    def precision(y_true, y_pred):
        threshold_value = threshold
        y_pred = K.cast(K.greater(K.clip(y_pred, 0, 1), threshold_value), K.floatx())
        true_positives = K.round(K.sum(K.clip(y_true * y_pred, 0, 1)))
        predicted_positives = K.sum(y_pred)
        precision_ratio = true_positives / (predicted_positives + K.epsilon())
        return precision_ratio
    return precision

def recall_threshold(threshold = 0.5):
    def recall(y_true, y_pred):
        threshold_value = threshold
        y_pred = K.cast(K.greater(K.clip(y_pred, 0, 1), threshold_value), K.floatx())
        true_positives = K.round(K.sum(K.clip(y_true * y_pred, 0, 1)))
        possible_positives = K.sum(K.clip(y_true, 0, 1))
        recall_ratio = true_positives / (possible_positives + K.epsilon())
        return recall_ratio
    return recall

def fbeta_score_threshold(beta = 1, threshold = 0.5):
    def fbeta_score(y_true, y_pred):
        threshold_value = threshold
        beta_value = beta
        p = precision_threshold(threshold_value)(y_true, y_pred)
        r = recall_threshold(threshold_value)(y_true, y_pred)
        bb = beta_value ** 2
        fbeta_score = (1 + bb) * (p * r) / (bb * p + r + K.epsilon())
        return fbeta_score
    return fbeta_score

# +

def margin_loss(y_true, y_pred):
    """
    Margin loss for Eq.(4). When y_true[i, :] contains more than one `1`, this loss should work too, but that case has not been tested.
    :param y_true: [None, n_classes]
    :param y_pred: [None, num_capsule]
    :return: a scalar loss value.
    """
    L = y_true * K.square(K.maximum(0., 0.9 - y_pred)) + \
        0.5 * (1 - y_true) * K.square(K.maximum(0., y_pred - 0.1))

    return K.mean(K.sum(L, 1))

def train(model, data, lr, lr_decay, lam_recon, batch_size, shift_fraction, epochs):
    """
    Training a CapsuleNet
    :param model: the CapsuleNet model
    :param data: a tuple containing training and testing data, like `((x_train, y_train), (x_test, y_test))`
    :param lr: initial learning rate used by the learning-rate schedule
    :param lr_decay: multiplicative decay applied to `lr` after every epoch
    :param lam_recon: weight of the reconstruction loss (accepted, but not used by the compile call below)
    :param batch_size: number of samples per batch
    :param shift_fraction: maximum fraction of the width/height used for random shifts
    :param epochs: number of training epochs
    :return: The trained model
    """
    # unpacking the data
    (x_train, y_train), (x_test, y_test) = data

    # callbacks
    log = callbacks.CSVLogger('saved_models/CapsNet_log.csv')
    tb = callbacks.TensorBoard(log_dir='saved_models/tensorboard-logs',
                               batch_size=batch_size, histogram_freq=0)
    checkpoint = callbacks.ModelCheckpoint(filepath='saved_models/CapsNet.best.from_scratch.hdf5',
                                           verbose=1, save_best_only=True)
    cb_lr_decay = callbacks.LearningRateScheduler(schedule=lambda epoch: lr * (lr_decay ** epoch))

    # compile the model
    model.compile(optimizer='sgd', loss='binary_crossentropy',
                  metrics=[precision_threshold(threshold = 0.5),
                           recall_threshold(threshold = 0.5),
                           fbeta_score_threshold(beta=0.5, threshold = 0.5),
                           'accuracy'])

    # Training without data augmentation:
    # model.fit([x_train, y_train], [y_train, x_train], batch_size=batch_size, epochs=epochs,
    #           validation_data=[[x_test, y_test], [y_test, x_test]], callbacks=[log, tb, checkpoint, cb_lr_decay])

    # Begin: Training with data augmentation ---------------------------------------------------------------------#
    def train_generator(x, y, batch_size, shift_fraction=0.):
        train_datagen = ImageDataGenerator(width_shift_range=shift_fraction,
                                           height_shift_range=shift_fraction)  # shift up to 2 pixel for MNIST
        generator = train_datagen.flow(x, y, batch_size=batch_size)
        while 1:
            x_batch, y_batch = next(generator)
            yield ([x_batch, y_batch], [y_batch, x_batch])

    # Training with data augmentation. If shift_fraction=0., also no augmentation.
    model.fit_generator(generator=train_generator(x_train, y_train, batch_size, shift_fraction),
                        steps_per_epoch=int(y_train.shape[0] / batch_size),
                        epochs=epochs,
                        validation_data=[[x_test, y_test], [y_test, x_test]],
                        callbacks=[log, tb, checkpoint, cb_lr_decay])
    # End: Training with data augmentation -----------------------------------------------------------------------#

    from utils import plot_log
    plot_log('saved_models/CapsNet_log.csv', show=True)

    return model

# -
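# The margin loss defined in this cell can be checked by hand with a NumPy version of the same formula. This is a sketch for illustration only; `margin_loss_np` and its keyword arguments are hypothetical names, with defaults taken from the constants 0.9, 0.1 and 0.5 used above.

```python
import numpy as np


def margin_loss_np(y_true, y_pred, m_plus=0.9, m_minus=0.1, lam=0.5):
    """NumPy version of the margin loss: mean over samples of the per-class sum."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    # Penalty when the capsule of a present class is shorter than m_plus.
    present = y_true * np.square(np.maximum(0.0, m_plus - y_pred))
    # Down-weighted penalty when the capsule of an absent class is longer than m_minus.
    absent = lam * (1.0 - y_true) * np.square(np.maximum(0.0, y_pred - m_minus))
    return float(np.mean(np.sum(present + absent, axis=1)))


print(margin_loss_np([[1, 0]], [[0.95, 0.05]]))
print(margin_loss_np([[1, 0]], [[0.5, 0.3]]))
```

# In the second call the loss is 0.4**2 + 0.5 * 0.2**2, i.e. about 0.18, while a confident correct prediction costs nothing.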

train(model=model, data=((train_tensors, train_labels), (valid_tensors, valid_labels)),

lr=0.001, lr_decay=0.9, lam_recon=0.392, batch_size=32, shift_fraction=0.1, epochs=20)

# # Testing

model.load_weights('saved_models/CapsNet.best.from_scratch.hdf5')

prediction = eval_model.predict(test_tensors)

# +

threshold = 0.5

beta = 0.5

pre = K.eval(precision_threshold(threshold = threshold)(K.variable(value=test_labels),

K.variable(value=prediction[0])))

rec = K.eval(recall_threshold(threshold = threshold)(K.variable(value=test_labels),

K.variable(value=prediction[0])))

fsc = K.eval(fbeta_score_threshold(beta = beta, threshold = threshold)(K.variable(value=test_labels),

K.variable(value=prediction[0])))

# The metrics are ratios in [0, 1], so they are printed without a percent sign
print("Precision: %f\nRecall: %f\nFscore: %f" % (pre, rec, fsc))

# -
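# The thresholded precision, recall and F-beta computed in this cell can be reproduced on a tiny hand-made example with NumPy. The sketch below mirrors the Keras backend functions defined earlier, under the assumption that the smoothing constant is 1e-7; the function name and sample data are illustrative.

```python
import numpy as np


def precision_recall_fbeta(y_true, y_score, threshold=0.5, beta=1.0, eps=1e-7):
    """Micro-averaged precision, recall and F-beta after thresholding the scores."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = (np.asarray(y_score, dtype=float) > threshold).astype(float)
    true_positives = np.sum(y_true * y_pred)
    precision = true_positives / (np.sum(y_pred) + eps)
    recall = true_positives / (np.sum(y_true) + eps)
    bb = beta ** 2
    fbeta = (1 + bb) * precision * recall / (bb * precision + recall + eps)
    return precision, recall, fbeta


# Four samples with one-hot labels; the second sample is misclassified.
labels = [[1, 0], [0, 1], [1, 0], [0, 1]]
scores = [[0.9, 0.1], [0.6, 0.4], [0.8, 0.2], [0.3, 0.7]]

p, r, f = precision_recall_fbeta(labels, scores)
print("precision %.3f, recall %.3f, f1 %.3f" % (p, r, f))
```

# Because the counts are summed over all classes and samples, one wrong sample out of four lowers both precision and recall to about 0.75.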

K.eval(binary_accuracy(K.variable(value=test_labels),

K.variable(value=prediction[0])))

prediction[:30]

|

Capsule Network basic - SampleDataset.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# %matplotlib inline

import tensorflow as tf

import pandas as pd

import numpy as np

from glob import glob

import matplotlib.pyplot as plt

# + _uuid="d629ff2d2480ee46fbb7e2d37f6b5fab8052498a" _cell_guid="79c7e3d0-c299-4dcb-8224-4455121ee9b0"

dataset_path = "/kaggle/input/signlang/ArASL_Database_54K_Final/ArASL_Database_54K_Final"

label_path = "/kaggle/input/signlang/ArSL_Data_Labels.csv"

# -

image = glob(dataset_path + "/*/*.*")

len(image)

# example of pixel normalization

from numpy import asarray

from PIL import Image

# load image

image = Image.open(image[0])

pixels = asarray(image)

# confirm pixel range is 0-255

print('Data Type: %s' % pixels.dtype)

print('Min: %.3f, Max: %.3f' % (pixels.min(), pixels.max()))

# convert from integers to floats

pixels = pixels.astype('float32')

# normalize to the range 0-1

pixels /= 255.0

# confirm the normalization

print('Min: %.3f, Max: %.3f' % (pixels.min(), pixels.max()))

df = pd.read_csv(label_path)

df.head()

classes = df.Class.unique().tolist()

classes

# +

from tensorflow.keras.preprocessing.image import ImageDataGenerator

datagen = ImageDataGenerator(rescale=1.0/255, validation_split=0.2)

training_generator = datagen.flow_from_directory(

dataset_path,

target_size=(64, 64),

batch_size=32,

color_mode="grayscale",

classes = classes,

subset='training')

validation_generator = datagen.flow_from_directory(

dataset_path, # same directory as training data

target_size=(64, 64),

batch_size=32,

color_mode="grayscale",

classes = classes,

subset='validation') # set as validation data

# +

from tensorflow.keras import models

from tensorflow.keras import layers

import tensorflow as tf

with tf.device('/device:GPU:0'):
    # Build a small CNN: three convolutional layers followed by two dense layers
    model = models.Sequential()
    model.add(layers.Conv2D(32, (3, 3), activation='relu', input_shape=(64, 64, 1)))
    model.add(layers.MaxPooling2D((2, 2)))
    model.add(layers.Conv2D(64, (3, 3), activation='relu'))
    model.add(layers.MaxPooling2D((2, 2)))
    model.add(layers.Conv2D(64, (3, 3), activation='relu'))
    model.add(layers.Flatten())
    model.add(layers.Dense(64, activation='relu'))
    model.add(layers.Dense(32, activation='softmax'))

model.summary()
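# The feature-map sizes reported by the summary can be worked out by hand. The sketch below assumes the Keras defaults used above (unpadded 'valid' convolutions with stride 1, and pooling with a stride equal to the window size); the helper names are illustrative.

```python
def conv_valid(size, kernel, stride=1):
    """Output width of an unpadded ('valid') convolution."""
    return (size - kernel) // stride + 1


def max_pool(size, window):
    """Output width of non-overlapping pooling without padding."""
    return size // window


size = 64
trace = [size]
for operation, argument in [("conv", 3), ("pool", 2), ("conv", 3), ("pool", 2), ("conv", 3)]:
    size = conv_valid(size, argument) if operation == "conv" else max_pool(size, argument)
    trace.append(size)

# The last convolution has 64 filters, so Flatten sees size * size * 64 values.
flattened = size * size * 64

print("spatial sizes:", trace)
print("flattened features:", flattened)
```

# The spatial size shrinks from 64 to 12, so the first Dense layer receives 12 * 12 * 64 = 9216 inputs.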

# +

from tensorflow.keras.optimizers import Adam

early_stopping = tf.keras.callbacks.EarlyStopping(patience=10, verbose=1)

checkpointer = tf.keras.callbacks.ModelCheckpoint('asl_char.h5',verbose=1,save_best_only=True)

optimizer = Adam(learning_rate=0.001)

model.compile(optimizer=optimizer, loss='categorical_crossentropy', metrics=['accuracy'])

# The training data comes from `training_generator`, defined in the cell above
history = model.fit(
    training_generator,
    steps_per_epoch = training_generator.samples // 32,
    validation_data = validation_generator,
    validation_steps = validation_generator.samples // 32,
    epochs=50, callbacks=[early_stopping, checkpointer])

# -

converter = tf.lite.TFLiteConverter.from_keras_model(model)

converter.experimental_new_converter = True

tflite_model = converter.convert()

open("asl_char.tflite", "wb").write(tflite_model)

|

train_model/train_char_model.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + [markdown] colab_type="text" id="rX8mhOLljYeM"

# ##### Copyright 2019 The TensorFlow Authors.

# + cellView="form" colab_type="code" id="BZSlp3DAjdYf" colab={}

#@title Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# https://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

# + [markdown] colab_type="text" id="3wF5wszaj97Y"

# # TensorFlow 2.0 quickstart for beginners

# + [markdown] colab_type="text" id="DUNzJc4jTj6G"

# <table class="tfo-notebook-buttons" align="left">

# <td>

# <a target="_blank" href="https://www.tensorflow.org/tutorials/quickstart/beginner"><img src="https://www.tensorflow.org/images/tf_logo_32px.png" />View on TensorFlow.org</a>

# </td>

# <td>

# <a target="_blank" href="https://colab.research.google.com/github/tensorflow/docs-l10n/blob/master/site/vi/tutorials/quickstart/beginner.ipynb"><img src="https://www.tensorflow.org/images/colab_logo_32px.png" />Run in Google Colab</a>

# </td>

# <td>

# <a target="_blank" href="https://github.com/tensorflow/docs-l10n/blob/master/site/vi/tutorials/quickstart/beginner.ipynb"><img src="https://www.tensorflow.org/images/GitHub-Mark-32px.png" />View source on GitHub</a>

# </td>

# <td>

# <a href="https://storage.googleapis.com/tensorflow_docs/docs-l10n/site/vi/tutorials/quickstart/beginner.ipynb"><img src="https://www.tensorflow.org/images/download_logo_32px.png" />Download notebook</a>

# </td>

# </table>

# + [markdown] id="BbU6UaFdS-WR" colab_type="text"

# Note: The Vietnamese TensorFlow community translated these documents from the English originals.
# Because this translation is a best-effort work by volunteers, there is no guarantee that it always stays in sync with the
# [official English documentation](https://www.tensorflow.org/?hl=en).
# If you have suggestions to improve this translation, please open a pull request against the [tensorflow/docs](https://github.com/tensorflow/docs) GitHub repository.
#
# To volunteer to write or review translations, please contact
# [<EMAIL>](https://groups.google.com/a/tensorflow.org/forum/#!forum/docs).

# + [markdown] colab_type="text" id="hiH7AC-NTniF"

# This is a [Google Colaboratory](https://colab.research.google.com/notebooks/welcome.ipynb) notebook file. Python programs run directly in the browser, which makes it easy to learn and use TensorFlow. To follow this tutorial, run the notebook in Google Colab by clicking the button at the top of this page.
#
# 1. In Colab, connect to a Python runtime: at the top-right of the menu bar, select *CONNECT*.
# 2. Run all the notebook code cells: select *Runtime* > *Run all*.

# + [markdown] colab_type="text" id="nnrWf3PCEzXL"

# Download and install the TensorFlow 2.0 RC package. Import TensorFlow into your program:

# + colab_type="code" id="0trJmd6DjqBZ" colab={}

from __future__ import absolute_import, division, print_function, unicode_literals

# Install TensorFlow

try:
  # # %tensorflow_version only exists in Colab.
  # %tensorflow_version 2.x
except Exception:
  pass

import tensorflow as tf

# + [markdown] colab_type="text" id="7NAbSZiaoJ4z"

# Load and prepare the [MNIST dataset](http://yann.lecun.com/exdb/mnist/). Convert the samples from integers to floating-point numbers:

# + colab_type="code" id="7FP5258xjs-v" colab={}

mnist = tf.keras.datasets.mnist

(x_train, y_train), (x_test, y_test) = mnist.load_data()

x_train, x_test = x_train / 255.0, x_test / 255.0

# + [markdown] colab_type="text" id="BPZ68wASog_I"

# Build the `tf.keras.Sequential` model by stacking layers. Choose an optimizer and a loss function for training:

# + colab_type="code" id="h3IKyzTCDNGo" colab={}

model = tf.keras.models.Sequential([
  tf.keras.layers.Flatten(input_shape=(28, 28)),
  tf.keras.layers.Dense(128, activation='relu'),
  tf.keras.layers.Dropout(0.2),
  tf.keras.layers.Dense(10, activation='softmax')
])

model.compile(optimizer='adam',
              loss='sparse_categorical_crossentropy',
              metrics=['accuracy'])
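# The model above ends in a softmax layer and is trained with the
# `sparse_categorical_crossentropy` loss. The following is a minimal sketch of
# what those two pieces compute for a single sample, written in plain Python.
# The helper names and the scores are made up for illustration; this is not
# the Keras implementation.

```python
import math

def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    shift = max(logits)  # subtract the maximum for numerical stability
    exps = [math.exp(v - shift) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def sparse_categorical_crossentropy(label, probs):
    """Negative log-probability assigned to the integer class label."""
    return -math.log(probs[label])

probs = softmax([2.0, 1.0, 0.1])  # made-up scores for three classes
loss = sparse_categorical_crossentropy(0, probs)
print(probs, loss)
```

# "Sparse" only means that the label is an integer class index rather than a
# one-hot vector, which matches the integer labels returned by `mnist.load_data()`.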

# + [markdown] colab_type="text" id="ix4mEL65on-w"

# Train and evaluate the model:

# + colab_type="code" id="F7dTAzgHDUh7" colab={}

model.fit(x_train, y_train, epochs=5)

model.evaluate(x_test, y_test, verbose=2)

# + [markdown] colab_type="text" id="T4JfEh7kvx6m"

# After training on this dataset, the image classifier reaches about 98% accuracy. To learn more, read the [TensorFlow tutorials](https://www.tensorflow.org/tutorials/).

|

site/vi/tutorials/quickstart/beginner.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 2

# language: python

# name: python2

# ---

import numpy as np

import pandas as pd

from pandas import Series,DataFrame

# +

import matplotlib.pyplot as plt

import seaborn as sns

sns.set_style('whitegrid')

# %matplotlib inline

# -

from sklearn.datasets import load_boston

boston = load_boston()

print(boston.DESCR)

# +

plt.hist(boston.target,bins=50)

plt.xlabel('Prices in $1000s')

plt.ylabel('Number of houses')

# +

plt.scatter(boston.data[:,5],boston.target)

plt.ylabel('Price in $1000s')

plt.xlabel('Number of rooms')

# +

boston_df = DataFrame(boston.data)

boston_df.columns = boston.feature_names

boston_df.head()

# +

boston_df['Price'] = boston.target

boston_df.head()

# -

sns.lmplot(x='RM', y='Price', data=boston_df)
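# `lmplot` draws the scatter plot together with a fitted regression line. As a
# rough sketch of the line fit itself, the closed-form least-squares slope and
# intercept can be computed as below. The function name and the numbers are
# made up for illustration and are not taken from the Boston dataset.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept

rooms = [4.0, 5.0, 6.0, 7.0, 8.0]        # made-up number of rooms
prices = [10.0, 15.0, 20.0, 25.0, 30.0]  # made-up prices on the line 5 * rooms - 10
slope, intercept = fit_line(rooms, prices)
print(slope, intercept)
```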

|

Linear_Regrsn_Price_Predict.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

import time

import numpy as np

from keras.models import Sequential

from keras.layers import Dense, Activation

from sklearn.model_selection import StratifiedKFold

def gen_rand(n_size=1):
    '''
    Return an n_size-dimensional random vector drawn uniformly from [0, 1).
    '''
    return np.random.random(n_size)

class NN_DE(object):

    def __init__(self, n_pop=10, n_neurons=5, F=0.4, Cr=0.9, p=1, change_scheme=True, scheme='rand',
                 bounds=[-1, 1], max_sp_evals=int(1e5), sp_tol=1e-2):
        #self.n_gens=n_gens
        self.n_pop=n_pop
        self.n_neurons=n_neurons
        self.F=F*np.ones(self.n_pop)
        self.Cr=Cr*np.ones(self.n_pop)
        self.bounds=bounds
        self.p=p
        self.scheme=scheme
        self.change_scheme=change_scheme
        self.max_sp_evals=max_sp_evals
        self.sp_tol = sp_tol
        self.sp_evals=0
        self.interactions=0
        # Build generic model
        model = Sequential()
        model.add(Dense(self.n_neurons, input_dim=100, activation='tanh'))
        model.add(Dense(1, activation='tanh'))
        model.compile( loss='mean_squared_error', optimizer = 'rmsprop', metrics = ['accuracy'] )
        self.model=model
        # Scheme switching is disabled here regardless of the constructor argument
        self.change_scheme=False
        # One gene per trainable parameter of the network
        self.n_dim=model.count_params()
        #self.population=NN_DE.init_population(self, pop_size=self.n_pop,
        #                                      dim=self.n_dim, bounds=self.bounds)
        #self.train_dataset= train_dataset
        #self.test_dataset= test_dataset

    def init_population(self, pop_size, dim, bounds=[-1,1]):
        '''
        Initialize the population to be used in DE.
        Arguments:
        pop_size - Number of individuals (required, no default value).
        dim - Dimension of the search space (required, no default value).
        bounds - The lower and upper limits, respectively (default is [-1, 1]).
        '''
        return np.random.uniform(low=bounds[0], high=bounds[1], size=(pop_size, dim))

    def keep_bounds(self, pop, bounds, idx):
        '''
        Keep the population inside the search space by clipping it to the bounds.
        Arguments:
        pop - Population;
        bounds - The lower and upper limits, respectively;
        idx - Index of the best individual (only used by the commented-out alternative below).
        '''
        #up_ = np.where(pop>bounds[1])
        #down_ = np.where(pop<bounds[1])
        #best_ = pop[idx]
        #print(pop[pop<bounds[0]])
        #print(down_)
        #print(best_.shape)
        pop[pop<bounds[0]] = bounds[0]
        pop[pop>bounds[1]] = bounds[1]
        #pop[pop<bounds[0]] = 0.5*(bounds[0]+best_[down_]); pop[pop>bounds[1]] = 0.5*(bounds[1]+best_[up_])
        return pop

    # Define the fitness to be used in DE
    def sp_fitness(self, target, score):
        '''
        Calculate the SP index along the ROC curve and return the values at the best operating point.
        Arguments:
        target: True labels
        score: The predicted scores
        Returns:
        The best SP, and the TPR (PD) and FPR (FA) at that point.
        '''
        from sklearn.metrics import roc_curve
        fpr, tpr, thresholds = roc_curve(target, score)
        jpr = 1. - fpr
        sp = np.sqrt( (tpr + jpr)*.5 * np.sqrt(jpr*tpr) )
        idx = np.argmax(sp)
        return sp[idx], tpr[idx], fpr[idx]#sp, idx, sp[idx], tpr[idx], fpr[idx]

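    def convert_vector_weights(self, pop, nn_model):
        '''
        Convert one flat individual into the list of arrays expected by model.set_weights().
        Layout of the vector: hidden weights, hidden biases, output weights, output bias.
        '''
        model = nn_model
        generic_weights = model.get_weights()
        # Number of weights between the input and the hidden layer
        hl_lim = generic_weights[0].shape[0]*generic_weights[0].shape[1]
        w = []
        hl = pop[:hl_lim]
        # Output weights come right after the hidden biases (one weight per hidden neuron)
        ol = pop[hl_lim+generic_weights[1].shape[0]:hl_lim+generic_weights[1].shape[0]+generic_weights[1].shape[0]]
        w.append(hl.reshape(generic_weights[0].shape))
        w.append(pop[hl_lim:hl_lim+generic_weights[1].shape[0]])
        w.append(ol.reshape(generic_weights[2].shape))
        w.append(np.array(pop[-1]).reshape(generic_weights[-1].shape))
        return w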

    def set_weights_to_keras_model_and_compute_fitness(self,pop, data, nn_model):
        '''
        Set the weights of each individual on the given model and compute its fitness.
        Arguments:
        pop - The population of weights, or a single individual (1-D vector).
        data - Tuple (samples, targets) used for the evaluation.
        nn_model - The Keras model that receives the weights.
        '''
        fitness = np.zeros((pop.shape[0],3))
        #test_fitness = np.zeros((pop.shape[0],3))
        model=nn_model
        if pop.ndim == 1:
            # Single individual (used by the local search)
            w = NN_DE.convert_vector_weights(self, pop=pop, nn_model=model)
            model.set_weights(w)
            y_score = model.predict(data[0])
            fitness = NN_DE.sp_fitness(self, target=data[1], score=y_score)
            # Compute the SP for test in the same call to minimize the evals
            #test_y_score = model.predict(test_data[0])
            #test_fitness = NN_DE.sp_fitness(self, target=test_data[1], score=test_y_score)
            return fitness#, test_fitness
        for ind in range(pop.shape[0]):
            w = NN_DE.convert_vector_weights(self, pop=pop[ind], nn_model=model)
            model.set_weights(w)
            y_score = model.predict(data[0])
            fitness[ind] = NN_DE.sp_fitness(self, target=data[1], score=y_score)
            # Compute the SP for test in the same call to minimize the evals
            #test_y_score = model.predict(test_data[0])
            #test_fitness[ind] = NN_DE.sp_fitness(self, target=test_data[1], score=test_y_score)
            #print('Population ind: {} - SP: {} - PD: {} - PF: {}'.format(ind, fitness[ind][0], fitness[ind][1], fitness[ind][2]))
        return fitness#, test_fitness

    def evolution(self, dataset):
        # Reset the counters so that every call starts a fresh run
        self.sp_evals = 0
        self.interactions = 0
        self.population=NN_DE.init_population(self, pop_size=self.n_pop,
                                              dim=self.n_dim, bounds=self.bounds)
        r_NNDE = {}
        fitness = NN_DE.set_weights_to_keras_model_and_compute_fitness(self, pop=self.population,
                                                                       data=dataset,
                                                                       nn_model=self.model)
        best_idx = np.argmax(fitness[:,0])
        # Create the vectors F and Cr to be adapted during the interactions
        NF = np.zeros_like(self.F)
        NCr = np.zeros_like(self.Cr)
        # Create a log
        r_NNDE['log'] = []
        r_NNDE['log'].append((self.sp_evals, fitness[best_idx], np.mean(fitness, axis=0),
                              np.std(fitness, axis=0), np.min(fitness, axis=0), np.median(fitness, axis=0), self.F, self.Cr))
        #r_NNDE['test_log'] = []
        #r_NNDE['test_log'].append((self.sp_evals, test_fitness[best_idx], np.mean(test_fitness, axis=0),
        #np.std(test_fitness, axis=0), np.min(test_fitness, axis=0), np.median(test_fitness, axis=0), self.F, self.Cr))

        while self.sp_evals < self.max_sp_evals:
            # ============ Mutation Step ===============
            mutant = np.zeros_like(self.population)
            for ind in range(self.population.shape[0]):
                # With probability 0.1, draw a new candidate F in [0.2, 0.4)
                if gen_rand() < 0.1:
                    NF[ind] = 0.2 +0.2*gen_rand()
                else:
                    NF[ind] = self.F[ind]
                tmp_pop = np.delete(self.population, ind, axis=0)
                choices = np.random.choice(tmp_pop.shape[0], 1+2*self.p, replace=False)
                diffs = 0
                for idiff in range(1, len(choices), 2):
                    diffs += NF[ind]*((tmp_pop[choices[idiff]]-tmp_pop[choices[idiff+1]]))
                if self.scheme=='rand':
                    mutant[ind] = tmp_pop[choices[0]] + diffs
                elif self.scheme=='best':
                    mutant[ind] = self.population[best_idx] + diffs
            # keep the bounds
            mutant = NN_DE.keep_bounds(self, mutant, bounds=self.bounds, idx=best_idx)

            # ============ Crossover Step =============
            trial_pop = np.copy(self.population)
            # Gene that is always taken from the mutant
            K = np.random.choice(trial_pop.shape[1])
            for ind in range(trial_pop.shape[0]):
                # With probability 0.1, draw a new candidate Cr in [0.8, 1.0)
                if gen_rand() < 0.1:
                    NCr[ind] = 0.8 +0.2*gen_rand()
                else:
                    NCr[ind] = self.Cr[ind]
                for jnd in range(trial_pop.shape[1]):
                    if jnd == K or gen_rand()<NCr[ind]:
                        trial_pop[ind][jnd] = mutant[ind][jnd]
            # keep the bounds
            trial_pop = NN_DE.keep_bounds(self, trial_pop, bounds=self.bounds, idx=best_idx)
            trial_fitness = NN_DE.set_weights_to_keras_model_and_compute_fitness(self, pop=trial_pop,
                                                                                 data=dataset,
                                                                                 nn_model=self.model)
            self.sp_evals += self.population.shape[0]

            # ============ Selection Step ==============
            winners = np.where(trial_fitness[:,0]>fitness[:,0])
            # Auto-adaptation of F and Cr like NSSDE
            self.F[winners] = NF[winners]
            self.Cr[winners] = NCr[winners]
            # Greedy Selection
            fitness[winners] = trial_fitness[winners]
            self.population[winners] = trial_pop[winners]
            best_idx = np.argmax(fitness[:,0])
            # Only report during the last 5% of the interactions
            if self.interactions > 0.95*self.max_sp_evals/self.n_pop:
                print('=====Interaction: {}====='.format(self.interactions+1))
                print('Best NN found - SP: {} / PD: {} / FA: {}'.format(fitness[best_idx][0],
                                                                       fitness[best_idx][1],
                                                                       fitness[best_idx][2]))
                #print('Test > Mean - SP: {} +- {}'.format(np.mean(test_fitness,axis=0)[0],
                #                                           np.std(test_fitness,axis=0)[0]))

            # Local search like NSSDE
            a_1 = gen_rand(); a_2 = gen_rand()
            a_3 = 1.0 - a_1 - a_2
            k, r1, r2 = np.random.choice(self.population.shape[0], size=3)
            # Combination of a random individual, the best one and a difference vector
            V = a_1*self.population[k] + a_2*self.population[best_idx] + a_3*(self.population[r1] - self.population[r2])
            V = NN_DE.keep_bounds(self, V, bounds=self.bounds, idx=best_idx)
            V_train_fitness = NN_DE.set_weights_to_keras_model_and_compute_fitness(self, pop=V,
                                                                                   data=dataset,
                                                                                   nn_model=self.model)
            self.sp_evals += 1
            if V_train_fitness[0] > fitness[k][0]:
                #print('Found best model using local search...')
                self.population[k] = V
                # Keep the stored fitness in sync with the replaced individual
                fitness[k] = V_train_fitness
                if V_train_fitness[0] > fitness[best_idx][0]:
                    best_idx = k

            # ======== Done interaction ===========
            self.interactions += 1
            r_NNDE['log'].append((self.sp_evals, fitness[best_idx], np.mean(fitness, axis=0),
                                  np.std(fitness, axis=0), np.min(fitness, axis=0), np.median(fitness, axis=0), self.F, self.Cr))
            #r_NNDE['test_log'].append((self.sp_evals, test_fitness[best_idx], np.mean(test_fitness, axis=0),
            #               np.std(test_fitness, axis=0), np.min(test_fitness, axis=0), np.median(test_fitness, axis=0), self.F, self.Cr))
            #print('Fitness: ', fitness[:,0])
            #print('Mean: ',np.mean(fitness[:,0]))
            # Stop early when the mean SP is high and close to the best SP
            if np.mean(fitness[:,0]) > .9 and np.abs(np.mean(fitness[:,0])-fitness[best_idx][0])< self.sp_tol:
                print('Stop by Mean Criteria...')
                break
        # Compute the test
        #test_fitness = NN_DE.set_weights_to_keras_model_and_compute_fitness(self, pop=self.population,
        #                                                                    data=self.test_dataset, nn_model=self.model)
        r_NNDE['champion weights'] = NN_DE.convert_vector_weights(self, self.population[best_idx], self.model)
        r_NNDE['model'] = self.model
        r_NNDE['best index'] = best_idx
        r_NNDE['Best NN'] = fitness[best_idx]
        r_NNDE['fitness'] = fitness
        #r_NNDE['test_fitness'] = test_fitness
        r_NNDE['population'] = self.population
        return r_NNDE
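
# The class above follows the usual mutation, crossover and greedy selection
# loop of differential evolution. The block below is a minimal, self-contained
# sketch of that loop (the DE/rand/1/bin variant) on a toy fitness function,
# using only the standard library. The names `sphere_fitness` and
# `de_rand_1_bin` are hypothetical, there is no neural network, no F/Cr
# adaptation and no local search, and individuals are updated one at a time
# instead of building a full trial population first.

```python
import random

def sphere_fitness(x):
    """Toy fitness to maximize: the optimum is 0 at the origin."""
    return -sum(v * v for v in x)

def de_rand_1_bin(fitness_fn, dim=5, n_pop=20, F=0.4, Cr=0.9,
                  bounds=(-1.0, 1.0), n_gens=50, seed=0):
    rng = random.Random(seed)
    lo, hi = bounds
    pop = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(n_pop)]
    fit = [fitness_fn(ind) for ind in pop]
    history = [max(fit)]  # best fitness after each generation
    for _ in range(n_gens):
        for i in range(n_pop):
            # Mutation: a random base vector plus one scaled difference vector
            r0, r1, r2 = rng.sample([j for j in range(n_pop) if j != i], 3)
            mutant = [pop[r0][d] + F * (pop[r1][d] - pop[r2][d]) for d in range(dim)]
            mutant = [min(max(v, lo), hi) for v in mutant]  # clip to the bounds
            # Binomial crossover: one forced gene plus genes taken with probability Cr
            k = rng.randrange(dim)
            trial = [mutant[d] if (d == k or rng.random() < Cr) else pop[i][d]
                     for d in range(dim)]
            # Greedy selection: keep the trial only if it is strictly better
            trial_fit = fitness_fn(trial)
            if trial_fit > fit[i]:
                pop[i], fit[i] = trial, trial_fit
        history.append(max(fit))
    best = max(range(n_pop), key=lambda i: fit[i])
    return pop[best], fit[best], history

best_x, best_fit, history = de_rand_1_bin(sphere_fitness)
print(best_fit)
```

# Because selection is greedy, the best fitness recorded in `history` can
# never decrease from one generation to the next.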

# +

data = np.load('/home/micael/MyWorkspace/RingerRepresentation/2channels/data17-18_13TeV.sgn_lhmedium_probes.EGAM2.bkg.vetolhvloose.EGAM7.samples.npz')

sgn = data['signalPatterns_etBin_2_etaBin_0']

bkg = data['backgroundPatterns_etBin_2_etaBin_0']

# Balance the classes to make a controlled test

bkg = bkg[np.random.choice(bkg.shape[0], size=sgn.shape[0]),:]

#print(sgn.shape, bkg.shape)

sgn_trgt = np.ones(sgn.shape[0])

bkg_trgt = -1*np.ones(bkg.shape[0])

# Normalize every sample by the absolute value of its sum
sgn_normalized = np.zeros_like(sgn)
for ind in range(sgn.shape[0]):
    sgn_normalized[ind] = sgn[ind]/np.abs(np.sum(sgn[ind]))

bkg_normalized = np.zeros_like(bkg)
for ind in range(bkg.shape[0]):
    bkg_normalized[ind] = bkg[ind]/np.abs(np.sum(bkg[ind]))

data_ = np.append(sgn_normalized, bkg_normalized, axis=0)

trgt = np.append(sgn_trgt, bkg_trgt)

# -

n_runs = 10

# +

# %%time

# The cells below read the results from `return_dict`
return_dict = {}
nn_de = NN_DE(n_pop=20, max_sp_evals=2e3, scheme='best', sp_tol=1e-3)
for irun in range(n_runs):
    init_run_time = time.time()
    print('Begin Run {}'.format(irun+1))
    return_dict['Run {}'.format(irun+1)] = nn_de.evolution(dataset=(data_, trgt))
    end_run_time = time.time()
    print('Run {} - Time: {}'.format(irun+1, end_run_time - init_run_time))

# -

return_dict.keys()

r = []

m = []

for ifold in return_dict.keys():
    print(ifold, '>', return_dict[ifold]['fitness'][return_dict[ifold]['best index']])
    r.append(return_dict[ifold]['fitness'][return_dict[ifold]['best index']])
    m.append(np.mean(return_dict[ifold]['fitness'], axis=0))
    #print('population: {}+-{}'.format(np.around(np.mean(return_dict[ifold]['fitness'], axis=0),7),
    #                                  np.around(np.std(return_dict[ifold]['fitness'], axis=0),7)))

print(np.around(100*np.mean(r, axis=0),7), np.around(100*np.std(r, axis=0),7))

print('Pop: ', np.around(100*np.mean(m, axis=0),7), np.around(100*np.std(m, axis=0),7))

r_ = {}

for ifold in return_dict.keys():
    print(len(return_dict[ifold]['log']))
    checks = list(range(0,len(return_dict[ifold]['log'])))
    r_[ifold]={}
    r_[ifold]['train'] = []
    #r_[ifold]['test'] = []
    for icheck in checks:
        #print(ifold, '>', return_dict[ifold]['log'][icheck][2])
        # Entry 2 of each log record is the population mean of (SP, PD, FA)
        r_[ifold]['train'].append(return_dict[ifold]['log'][icheck][2])
        #r_[ifold]['test'].append(return_dict[ifold]['test_log'][icheck][2])

merits = {

'SP' : 'SP Index',

'PD' : 'PD',

'FA' : 'FA'

}
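
# The three figures of merit above are the values returned by `sp_fitness`:
# the SP index, the detection rate (PD, the true positive rate) and the
# false-alarm rate (FA, the false positive rate). As a small sketch, the SP
# index for a single operating point can be written with the standard library
# as follows. The function name `sp_index` is made up for illustration.

```python
import math

def sp_index(pd, fa):
    """SP index for a detection rate `pd` and a false-alarm rate `fa`."""
    rejection = 1.0 - fa                       # rate of correctly rejected background
    arithmetic_mean = 0.5 * (pd + rejection)
    geometric_mean = math.sqrt(pd * rejection)
    return math.sqrt(arithmetic_mean * geometric_mean)

print(sp_index(1.0, 0.0))  # perfect separation
print(sp_index(0.9, 0.1))
print(sp_index(1.0, 1.0))  # everything accepted, including all background
```

# The geometric mean makes the index drop to zero as soon as either the
# detection rate or the background rejection is zero, so a classifier cannot
# score well by favouring a single class.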

# +

import matplotlib.pyplot as plt

plt.style.use('classic')

folds = ['Run 1', 'Run 2', 'Run 3', 'Run 4', 'Run 5', 'Run 6', 'Run 7', 'Run 8', 'Run 9', 'Run 10']

#x_axis = np.array([0., 20, 100, 1000,2000,3000, 4000, 5000, 6000, 7000, 8000, 9000, 10000])

for idx, imerit in enumerate(merits.keys()):
    print('Plot: ', imerit)
    #f, (ax1, ax2) = plt.subplots(1, 2, sharey=False, figsize=(15,5))
    for ifold in folds:
        plt.plot(np.array(r_[ifold]['train'])[:,idx], label=ifold)
    plt.legend(fontsize='large', loc='best')
    plt.title(merits[imerit]+' - Train', fontsize=15)
    plt.xlabel('Interactions', fontsize=10)
    plt.ylabel('Mean '+merits[imerit], fontsize=10)
    plt.grid(True)
    #ax2.plot(np.array(r_[ifold]['test'])[:,idx], label=ifold)
    #ax2.legend(fontsize='large', loc='best')
    #ax2.set_title(merits[imerit]+' - Test', fontsize=15)
    #ax2.set_xlabel('Interactions', fontsize=10)
    #ax2.set_ylabel('Mean '+merits[imerit], fontsize=10)
    #ax2.grid(True)
    #plt.savefig(merits[imerit]+'.rand1bin.2000evals.withLS.MeanStopCriteria.pdf',)
    #plt.savefig(merits[imerit]+'.rand1bin.2000evals.withLS.MeanStopCriteria.png', dpi=150)
    plt.show()

# -

print(type(return_dict))

return_dict = dict(return_dict)

print(type(return_dict))

return_dict.keys()

return_dict['CVO'] = CVO

return_dict['CVO']

# +

import pickle

with open('nnde.5neurons.rand1bin.2000evals.withLS.MeanStopCriteria.pickle', 'wb') as handle:

pickle.dump(return_dict, handle, protocol=pickle.HIGHEST_PROTOCOL)

# -

# # Backpropagation starts here

#

# Steps:

#

# 1. Get the champions and set the model.

# 2. Fit the model in each fold.

# 3. Get the results.

number_of_epoch = 100

# %%time

for i in range(number_of_epoch):
    pass

return_dict['Fold 1'].keys()

return_dict['Fold 1']['champion weights']

# +

import time

inicio = time.time()

nn_de = NN_DE(n_pop=20, max_sp_evals=1e4, scheme='rand')

resultado = {}

for ifold, (train_index, test_index) in enumerate(CVO):
    print("TRAIN:", train_index, "TEST:", test_index, "Fold: ", ifold)
    # evolution() only accepts a single dataset, so the training split is passed here
    resultado['Fold {}'.format(ifold+1)] = nn_de.evolution(dataset=(data_[train_index], trgt[train_index]))
fim=time.time()
print('Elapsed - {} seconds'.format(fim-inicio))

# -

resultado['Fold 1']

resultado = {}

for train_index, test_index in skf.split(data_, trgt):

print("TRAIN:", train_index, "TEST:", test_index)

teste = NN_DE(n_pop=20,max_sp_evals=2e3, scheme='rand', sp_tol=0.1)

ev = teste.evolution(dataset=(data_, trgt))

ev.keys()

ev['log'][-1]

np.mean(ev['fitness'],axis=0), np.std(ev['fitness'], axis=0)

np.argmin(ev['fitness'][:,0]), ev['best index']

ev['fitness'][ev['best index']], ev['fitness'][np.argmin(ev['fitness'][:,0])]

|

dev_notebooks/classNN-DE.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# # Example curve fitting

# ## Error of the curve

#

# This script also shows the error of the curve fit, that is, how far the actual data and the fitted curve are apart from each other. Five types of error are shown:

#

# ### Max error

# The maximum error shows the highest difference between the actual data and the curve fit data at a certain point in the graph.

#

# ### Minimum error

# The minimum error shows the lowest difference between the actual data and the curve fit data at a certain point in the graph.

#

# ### Total error

# The total error shows the sum of all the differences between the actual data and the curve fit data.

#

# ### Average error

# The average error shows the average difference between the actual data and the curve fit data through the entire graph.

#

# ### Root mean squared error

# The root mean squared error (RMSE) indicates how accurately the fitted data matches the actual data. It is the most important statistic for our curve fitting model.
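
# As a quick illustration of the five error measures described above, here is
# a minimal sketch in plain Python. It assumes that "difference" means the
# absolute difference at each data point; the helper name `fit_errors` and the
# numbers are made up, and the classes in `curveFitAlgorithm` may compute
# these values differently.

```python
import math

def fit_errors(actual, fitted):
    """Summarize the point-wise absolute differences between data and fit."""
    diffs = [abs(a - f) for a, f in zip(actual, fitted)]
    return {
        'max': max(diffs),
        'min': min(diffs),
        'total': sum(diffs),
        'average': sum(diffs) / len(diffs),
        'rmse': math.sqrt(sum(d * d for d in diffs) / len(diffs)),
    }

errors = fit_errors([1.0, 2.0, 3.0, 4.0], [1.0, 2.5, 2.0, 6.0])
print(errors)
```

# The RMSE squares each difference before averaging, so a few large misses
# weigh more heavily than they do in the average error.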

# ## Import libraries

from numpy import arange

from numpy import sin

import numpy as np

from pandas import read_csv

from scipy.optimize import curve_fit

from matplotlib import pyplot

import math

from curveFitAlgorithm import *

# ## Dataset we are working with in the examples

#

# This dataset contains information about population vs employed

# +

# link of the tutorial https://machinelearningmastery.com/curve-fitting-with-python/

# plot "Population" vs "Employed"

# load the dataset

url = 'https://raw.githubusercontent.com/jbrownlee/Datasets/master/longley.csv'

dataframe = read_csv(url, header=None)

data = dataframe.values

# choose the input and output variables

x, y = data[:, 4], data[:, -1]

# plot input vs output

pyplot.scatter(x, y)

pyplot.show()

# -

# ## Polynomial regression curve fitting

#

# In statistics, polynomial regression is a form of regression analysis in which the relationship between the independent variable x and the dependent variable y is modelled as an nth degree polynomial in x. Polynomial regression fits a nonlinear relationship between the value of x and the corresponding conditional mean of y, denoted E(y |x). Although polynomial regression fits a nonlinear model to the data, as a statistical estimation problem it is linear, in the sense that the regression function E(y | x) is linear in the unknown parameters that are estimated from the data. For this reason, polynomial regression is considered to be a special case of multiple linear regression.
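
# The statement that polynomial regression is linear in its unknown parameters
# can be made concrete: the coefficients solve a linear system (the normal
# equations) built from powers of x. The block below is a small sketch of
# that idea using only the standard library. The helper names are made up and
# this is not the implementation behind `PolynomialRegressionFit`.

```python
def solve_linear_system(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        s = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - s) / m[r][r]
    return x

def polyfit(xs, ys, degree):
    """Least-squares coefficients [c0, c1, ...] of c0 + c1*x + c2*x**2 + ..."""
    powers = [[x ** k for k in range(degree + 1)] for x in xs]  # design matrix
    ata = [[sum(row[i] * row[j] for row in powers) for j in range(degree + 1)]
           for i in range(degree + 1)]
    aty = [sum(row[i] * y for row, y in zip(powers, ys)) for i in range(degree + 1)]
    return solve_linear_system(ata, aty)

xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1 + 2 * x + 3 * x ** 2 for x in xs]  # synthetic data from a known quadratic
coeffs = polyfit(xs, ys, degree=2)
print(coeffs)
```

# The model is nonlinear in x but the unknowns c0, c1, c2 only appear
# linearly, which is why a single linear solve is enough.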

# +

#polynomial regression curve fitting

# choose the input and output variables

x, y = data[:, 4], data[:, -1]

curve_fit_algorithm = PolynomialRegressionFit(x, y)

y_line = curve_fit_algorithm.get_y_line()

x_line = curve_fit_algorithm.get_x_line()

# plot input vs output

pyplot.scatter(x, y)

# create a line plot for the mapping function

pyplot.plot(x_line, y_line, '-', color='red')

pyplot.show()

print('rmse: ', curve_fit_algorithm.get_rmse())

print('total error: ', curve_fit_algorithm.get_total_error())

print('max error: ', curve_fit_algorithm.get_max_error())

print('min error: ', curve_fit_algorithm.get_min_error())

print('average error: ', curve_fit_algorithm.get_average_error())

# -

# ## Sine wave curve fitting

#

# The sine-fit algorithm is a fitting algorithm based on parameter estimation. The signal is modelled as a sine function sampled at equal intervals, and the least squares method is used to fit the sampled sequence and determine the amplitude, frequency, phase and DC component of the sine wave, which yields a sine function expression.

#

# +

#Sine wave curve fitting

# choose the input and output variables

x, y = data[:, 4], data[:, -1]

curve_fit_algorithm = SineWaveFit(x, y)

y_line = curve_fit_algorithm.get_y_line()

x_line = curve_fit_algorithm.get_x_line()

# plot input vs output

pyplot.scatter(x, y)

# create a line plot for the mapping function

pyplot.plot(x_line, y_line, '-', color='red')

pyplot.show()

print('rmse: ', curve_fit_algorithm.get_rmse())

print('total error: ', curve_fit_algorithm.get_total_error())

print('max error: ', curve_fit_algorithm.get_max_error())

print('min error: ', curve_fit_algorithm.get_min_error())

print('average error: ', curve_fit_algorithm.get_average_error())

# -

# ## Non-linear least squares curve fitting

#

# Non-linear least squares is the form of least squares analysis used to fit a set of m observations with a model that is non-linear in n unknown parameters (m ≥ n). It is used in some forms of nonlinear regression. The basis of the method is to approximate the model by a linear one and to refine the parameters by successive iterations.
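
# The idea of approximating the model by a linear one and refining the
# parameters iteratively can be sketched with the Gauss-Newton method. The
# example below fits the two-parameter model y = a * exp(b * x) to synthetic,
# noise-free data using only the standard library. The model, the starting
# values and the function name are assumptions chosen for illustration;
# `NonLinearLeastSquaresFit` may use a different model and solver.

```python
import math

def gauss_newton_exp(xs, ys, a, b, n_iter=20):
    """Fit y = a * exp(b * x) by repeatedly solving the linearized problem."""
    for _ in range(n_iter):
        # Residuals and Jacobian columns of the model at the current parameters
        r = [y - a * math.exp(b * x) for x, y in zip(xs, ys)]
        ja = [math.exp(b * x) for x in xs]          # d(model)/da
        jb = [a * x * math.exp(b * x) for x in xs]  # d(model)/db
        # Normal equations (J^T J) delta = J^T r, solved directly for the 2x2 case
        saa = sum(v * v for v in ja)
        sab = sum(u * v for u, v in zip(ja, jb))
        sbb = sum(v * v for v in jb)
        ga = sum(u * v for u, v in zip(ja, r))
        gb = sum(u * v for u, v in zip(jb, r))
        det = saa * sbb - sab * sab
        da = (ga * sbb - sab * gb) / det
        db = (saa * gb - sab * ga) / det
        a, b = a + da, b + db
    return a, b

xs = [0.0, 0.5, 1.0, 1.5, 2.0]
ys = [2.0 * math.exp(0.5 * x) for x in xs]  # synthetic data with a = 2, b = 0.5
a_fit, b_fit = gauss_newton_exp(xs, ys, a=1.5, b=0.4)
print(a_fit, b_fit)
```

# Gauss-Newton needs a reasonable starting point; far from the solution the
# plain update can overshoot, which is why practical solvers add damping.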

#

# +

#non-linear least squares curve fitting

# choose the input and output variables

x, y = data[:, 4], data[:, -1]

curve_fit_algorithm = NonLinearLeastSquaresFit(x, y)

y_line = curve_fit_algorithm.get_y_line()

x_line = curve_fit_algorithm.get_x_line()

# plot input vs output

pyplot.scatter(x, y)

# create a line plot for the mapping function

pyplot.plot(x_line, y_line, '-', color='red')

pyplot.show()

print('rmse: ', curve_fit_algorithm.get_rmse())

print('total error: ', curve_fit_algorithm.get_total_error())

print('max error: ', curve_fit_algorithm.get_max_error())

print('min error: ', curve_fit_algorithm.get_min_error())

print('average error: ', curve_fit_algorithm.get_average_error())

# -

# ## Fifth degree polynomial

#

# Fifth degree polynomials are also known as quintic polynomials. Quintics have these characteristics:

#

# * One to five roots.

# * Zero to four extrema.

# * One to three inflection points.

# * No general symmetry.

# * It takes six points or six pieces of information to describe a quintic function.
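
# The last point in the list above, that six points describe a quintic, can
# be checked with Lagrange interpolation: the interpolating polynomial through
# six samples of a quintic reproduces it everywhere. This is a small sketch
# with exact rational arithmetic; the example polynomial and the function
# names are arbitrary choices for illustration.

```python
from fractions import Fraction

def lagrange_eval(points, x):
    """Evaluate the unique polynomial of degree < len(points) through `points` at `x`."""
    total = Fraction(0)
    for i, (xi, yi) in enumerate(points):
        term = Fraction(yi)
        for j, (xj, _) in enumerate(points):
            if i != j:
                term *= Fraction(x - xj, xi - xj)
        total += term
    return total

def quintic(x):
    return x**5 - 3 * x**3 + 2 * x - 7  # arbitrary example polynomial

samples = [(x, quintic(x)) for x in range(-2, 4)]  # six points at x = -2..3
print(lagrange_eval(samples, 10), quintic(10))
```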

# +

#Fifth degree polynomial

# choose the input and output variables

x, y = data[:, 4], data[:, -1]

curve_fit_algorithm = FifthDegreePolynomialFit(x, y)

y_line = curve_fit_algorithm.get_y_line()

x_line = curve_fit_algorithm.get_x_line()

# plot input vs output

pyplot.scatter(x, y)

# create a line plot for the mapping function

pyplot.plot(x_line, y_line, '-', color='red')

pyplot.show()

print('rmse: ', curve_fit_algorithm.get_rmse())

print('total error: ', curve_fit_algorithm.get_total_error())

print('max error: ', curve_fit_algorithm.get_max_error())

print('min error: ', curve_fit_algorithm.get_min_error())

print('average error: ', curve_fit_algorithm.get_average_error())

# -

# ## Linear curve fitting

#

#

# +

#linear curve fitting

# choose the input and output variables

x, y = data[:, 4], data[:, -1]

# plot input vs output

pyplot.scatter(x, y)

# For details about the algorithm, read the curveFitAlgorithm.py file or the technical documentation

curve_fit_algorithm = LinearFit(x, y)

y_line = curve_fit_algorithm.get_y_line()

x_line = curve_fit_algorithm.get_x_line()

# create a line plot for the mapping function

pyplot.plot(x_line, y_line, '-', color='red')

pyplot.show()

print('rmse: ', curve_fit_algorithm.get_rmse())

print('total error: ', curve_fit_algorithm.get_total_error())

print('max error: ', curve_fit_algorithm.get_max_error())

print('min error: ', curve_fit_algorithm.get_min_error())

print('average error: ', curve_fit_algorithm.get_average_error())

# -

|

docs/curve fitting/example.ipynb

|

# ##### Copyright 2020 The OR-Tools Authors.

# Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

#

# # least_diff

# <table align="left">

# <td>

# <a href="https://colab.research.google.com/github/google/or-tools/blob/master/examples/notebook/contrib/least_diff.ipynb"><img src="https://raw.githubusercontent.com/google/or-tools/master/tools/colab_32px.png"/>Run in Google Colab</a>

# </td>

# <td>

# <a href="https://github.com/google/or-tools/blob/master/examples/contrib/least_diff.py"><img src="https://raw.githubusercontent.com/google/or-tools/master/tools/github_32px.png"/>View source on GitHub</a>

# </td>

# </table>

# First, you must install [ortools](https://pypi.org/project/ortools/) package in this colab.

# !pip install ortools

# +

# Copyright 2010 <NAME> <EMAIL>

#

# Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

"""

Least diff problem in Google CP Solver.

This model solves the following problem:

What is the smallest difference between two numbers X - Y

if you must use all the digits (0..9) exactly once.

Compare with the following models:

* Choco : http://www.hakank.org/choco/LeastDiff2.java

* ECLiPSE : http://www.hakank.org/eclipse/least_diff2.ecl

* Comet : http://www.hakank.org/comet/least_diff.co

* Tailor/Essence': http://www.hakank.org/tailor/leastDiff.eprime

* Gecode : http://www.hakank.org/gecode/least_diff.cpp

* Gecode/R: http://www.hakank.org/gecode_r/least_diff.rb

* JaCoP : http://www.hakank.org/JaCoP/LeastDiff.java

* MiniZinc: http://www.hakank.org/minizinc/least_diff.mzn

* SICStus : http://www.hakank.org/sicstus/least_diff.pl

* Zinc : http://hakank.org/minizinc/least_diff.zinc

This model was created by <NAME> (<EMAIL>)

Also see my other Google CP Solver models:

http://www.hakank.org/google_cp_solver/

"""

from __future__ import print_function

from ortools.constraint_solver import pywrapcp

# Create the solver.

solver = pywrapcp.Solver("Least diff")

#

# declare variables

#

digits = list(range(0, 10))

a = solver.IntVar(digits, "a")

b = solver.IntVar(digits, "b")

c = solver.IntVar(digits, "c")

d = solver.IntVar(digits, "d")

e = solver.IntVar(digits, "e")

f = solver.IntVar(digits, "f")

g = solver.IntVar(digits, "g")

h = solver.IntVar(digits, "h")

i = solver.IntVar(digits, "i")

j = solver.IntVar(digits, "j")

letters = [a, b, c, d, e, f, g, h, i, j]

digit_vector = [10000, 1000, 100, 10, 1]

x = solver.ScalProd(letters[0:5], digit_vector)

y = solver.ScalProd(letters[5:], digit_vector)

diff = x - y

#

# constraints

#

solver.Add(diff > 0)

solver.Add(solver.AllDifferent(letters))

# objective

objective = solver.Minimize(diff, 1)

#

# solution

#

solution = solver.Assignment()

solution.Add(letters)

solution.Add(x)

solution.Add(y)

solution.Add(diff)

# last solution since it's a minimization problem

collector = solver.LastSolutionCollector(solution)

search_log = solver.SearchLog(100, diff)

# Note: I'm not sure what CHOOSE_PATH does, but it is fast:
# it finds the solution in just 4 steps

solver.Solve(

solver.Phase(letters, solver.CHOOSE_PATH, solver.ASSIGN_MIN_VALUE),

[objective, search_log, collector])

# get the first (and only) solution

xval = collector.Value(0, x)

yval = collector.Value(0, y)

diffval = collector.Value(0, diff)

print("x:", xval)

print("y:", yval)

print("diff:", diffval)

print(xval, "-", yval, "=", diffval)

print([("abcdefghij" [i], collector.Value(0, letters[i])) for i in range(10)])

print()

print("failures:", solver.Failures())

print("branches:", solver.Branches())

print("WallTime:", solver.WallTime())

print()
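
# As an independent cross-check of the constraint model above, the same
# problem can be solved by a direct search with the standard library only.
# This sketch does not use OR-tools: for every split of the ten digits into
# two groups of five it looks up, for each X, the largest Y below it. The
# function names are made up for illustration.

```python
from bisect import bisect_left
from itertools import combinations, permutations

def to_number(digits):
    """Combine a sequence of digits into an integer, most significant first."""
    value = 0
    for d in digits:
        value = value * 10 + d
    return value

def least_diff():
    """Return (diff, x, y) with the smallest positive x - y using digits 0..9 once."""
    all_digits = set(range(10))
    best = None
    for x_digits in combinations(range(10), 5):
        y_digits = sorted(all_digits - set(x_digits))
        x_values = [to_number(p) for p in permutations(x_digits)]
        y_values = sorted(to_number(p) for p in permutations(y_digits))
        for x in x_values:
            pos = bisect_left(y_values, x)  # y_values[pos - 1] is the largest y below x
            if pos > 0:
                y = y_values[pos - 1]
                if best is None or x - y < best[0]:
                    best = (x - y, x, y)
    return best

diff_value, x_value, y_value = least_diff()
print(x_value, "-", y_value, "=", diff_value)
```

# The smallest difference is 247, reached for example by 50123 - 49876.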

|

examples/notebook/contrib/least_diff.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# + active=""

# Explore indicator names to identify indicators that are not documented each year and indicators named differently in consecutive years, to support renaming or exclusion decisions.

# -

import pandas as pd

import os

import re

years=['2014','2015','2016','2017']

dirpath='//Users/rony/Dropbox/2_projects/Health_Geo/tables/'

#check indicator names

indicators=[]

filenames=[]

indicator_names={}

for year in years:
    input_path=dirpath+'{year}/prevalence/cleaned/'.format(year=year)
    filenames=os.listdir(path=input_path)
    filenames=[y for y in filenames if y.endswith('.csv')] #keep only .csv files
    indicators=[os.path.splitext(x)[0] for x in filenames] #strip the .csv extension
    indicator_names[year]=indicators

#double iteration over the indicator list dictionary to identify names that differ for the same indicator
for year, indicators in indicator_names.items():
    #print (year,indicators)
    if int(year)<2017:
        year_1=str(int(year)+1)
        indicators_1=indicator_names[year_1]
        for other_year, other_indicators in indicator_names.items():
            if other_year!=year_1:
                set1=set (other_indicators)
                set2=set (indicators_1)
                #an empty set is falsy, so only non-empty differences are printed
                if set1 - set2:
                    print ('indicator names difference , {year} and {year1}:'.format (year=other_year, year1=year_1), set1 - set2)
                if set2 - set1:
                    print ('indicator names difference, {year1} and {year}:'.format (year=other_year, year1=year_1), set2 - set1)
                print ('-----------------')

#single iteration over the indicator list dictionary, comparing each year with the following year
for year, indicators in indicator_names.items():
    #print (year,indicators)
    if int(year)<2017:
        year_1=str(int(year)+1)
        indicators_1=indicator_names[year_1]
        set1=set (indicators)
        set2=set (indicators_1)
        print ('indicator names difference , {year} and {year1}:'.format (year=year, year1=year_1), set1 - set2)
        print ('indicator names difference, {year1} and {year}:'.format (year=year, year1=year_1), set2 - set1)
        print ()
        print ('indicators:', indicators)
        print ()
        print ('indicators_1:', indicators_1)
        print ()
        print ('-----------------')

# + active=""

# indicator names difference , 2014 and 2015: {'Learning_Disabilities_(18+)', 'Cardiovascular_Disease_-_Primary_Prevention', 'Hypothyroidism'}

# indicator names difference, 2015 and 2014: {'Learning_Disabilities', 'Heart_Failure_Due_To_Left_Ventrical_Systolic_Dysfunction', 'Cardiovascular_Disease_-_Primary_Prevention_(30-74)', 'Obesity', 'Learning_Disabilities_All_Ages'}

# + active=""

# Summary of manual checks and decisions: indicators_names_differences.xlsx

# -

decisions=pd.read_csv('indicators_names_differences.csv')

decisions
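
# The set differences listed above can also be turned into candidate rename pairs automatically, as a starting point for the manual check. This is a minimal sketch using the standard library's `difflib.get_close_matches`; the function name `suggest_renames` and the `cutoff` of 0.6 are assumptions chosen for illustration, and the suggestions still need a human decision.

```python
import difflib


def suggest_renames(dropped, added, cutoff=0.6):
    """Map each name in `dropped` to its closest names in `added` (possibly none)."""
    candidates = sorted(added)
    return {
        name: difflib.get_close_matches(name, candidates, n=3, cutoff=cutoff)
        for name in sorted(dropped)
    }


# Names copied from the 2014 vs 2015 differences printed earlier in this notebook
only_2014 = {
    'Learning_Disabilities_(18+)',
    'Cardiovascular_Disease_-_Primary_Prevention',
    'Hypothyroidism',
}
only_2015 = {
    'Learning_Disabilities',
    'Heart_Failure_Due_To_Left_Ventrical_Systolic_Dysfunction',
    'Cardiovascular_Disease_-_Primary_Prevention_(30-74)',
    'Obesity',
    'Learning_Disabilities_All_Ages',
}

for name, matches in suggest_renames(only_2014, only_2015).items():
    print(name, '->', matches)
```

# A name with an empty suggestion list has no similar counterpart in the other year, which makes it a candidate for exclusion rather than renaming.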

|

preparation/data/prevalence/indicator_names.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

import gzip

import json

import pandas as pd

path_data = '../dataset/reviews_Cell_Phones_and_Accessories_5.json.gz'

path_data_prepaired = '../dataset/dataset.json'

# +

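def parse(path):
    # Yield one review (a dict) per line of the gzipped file.
    # NOTE: eval() executes whatever the line contains; use it only on trusted data files.
    with gzip.open(path, 'rb') as g:
        for l in g:
            yield eval(l)

def getDF(path):
    # Collect the parsed reviews into a DataFrame, one row per review.
    i = 0
    df = {}
    for d in parse(path):
        df[i] = d
        i += 1
    return pd.DataFrame.from_dict(df, orient='index')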

# %time df = getDF(path_data)

# -

# %time df.to_json(path_data_prepaired, orient='records')

df.shape
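
# If every line of the review file is strict JSON, `json.loads` can replace `eval` in the reader. The sketch below is a hedged alternative, not the loader used above: `parse_json_lines` is a hypothetical name, the strict-JSON format is an assumption about the file, and the demo records are made up rather than taken from the dataset.

```python
import gzip
import json
import os
import tempfile


def parse_json_lines(path):
    """Yield one dict per non-empty line of a gzipped JSON-lines file."""
    with gzip.open(path, 'rt', encoding='utf-8') as handle:
        for line in handle:
            line = line.strip()
            if line:
                yield json.loads(line)


# Tiny demo on a temporary file with made-up records (not the real dataset)
sample = [{"asin": "A1", "overall": 5.0}, {"asin": "B2", "overall": 3.0}]
demo_path = os.path.join(tempfile.mkdtemp(), 'demo.json.gz')
with gzip.open(demo_path, 'wt', encoding='utf-8') as handle:
    for record in sample:
        handle.write(json.dumps(record) + '\n')

records = list(parse_json_lines(demo_path))
print(records)
```

# The resulting list of dicts can be passed to `pd.DataFrame(records)`. If a line is not valid JSON, `json.loads` raises an error instead of executing the line, which is the main reason to prefer it over `eval`.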

# # References (links):

#

# - 1. https://www.kaggle.com/varun08/sentiment-analysis-using-word2vec

#

# - 2. http://www.aclweb.org/anthology/C14-1008

#

# - 3. datasets are here http://jmcauley.ucsd.edu/data/amazon/

#

|

notebooks/preprocessing.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Convolutional Neural Networks

#

# ## Project: Write an Algorithm for a Dog Identification App

#

# ---

#

# In this notebook, some template code has already been provided for you, and you will need to implement additional functionality to successfully complete this project. You will not need to modify the included code beyond what is requested. Sections that begin with **'(IMPLEMENTATION)'** in the header indicate that the following block of code will require additional functionality which you must provide. Instructions will be provided for each section, and the specifics of the implementation are marked in the code block with a 'TODO' statement. Please be sure to read the instructions carefully!

#

# > **Note**: Once you have completed all of the code implementations, you need to finalize your work by exporting the Jupyter Notebook as an HTML document. Before exporting the notebook to html, all of the code cells need to have been run so that reviewers can see the final implementation and output. You can then export the notebook by using the menu above and navigating to **File -> Download as -> HTML (.html)**. Include the finished document along with this notebook as your submission.

#

# In addition to implementing code, there will be questions that you must answer which relate to the project and your implementation. Each section where you will answer a question is preceded by a **'Question X'** header. Carefully read each question and provide thorough answers in the following text boxes that begin with **'Answer:'**. Your project submission will be evaluated based on your answers to each of the questions and the implementation you provide.

#

# >**Note:** Code and Markdown cells can be executed using the **Shift + Enter** keyboard shortcut. Markdown cells can be edited by double-clicking the cell to enter edit mode.

#

# The rubric contains _optional_ "Stand Out Suggestions" for enhancing the project beyond the minimum requirements. If you decide to pursue the "Stand Out Suggestions", you should include the code in this Jupyter notebook.

#

#

#

# ---

# ### Why We're Here

#

# In this notebook, you will make the first steps towards developing an algorithm that could be used as part of a mobile or web app. At the end of this project, your code will accept any user-supplied image as input. If a dog is detected in the image, it will provide an estimate of the dog's breed. If a human is detected, it will provide an estimate of the dog breed that the person most resembles. The image below displays potential sample output of your finished project (... but we expect that each student's algorithm will behave differently!).

#

#

#

# In this real-world setting, you will need to piece together a series of models to perform different tasks; for instance, the algorithm that detects humans in an image will be different from the CNN that infers dog breed. There are many points of possible failure, and no perfect algorithm exists. Your imperfect solution will nonetheless create a fun user experience!
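
# The way the separate models fit together can be sketched as a small dispatch function. This is only an illustration under stated assumptions: `describe_image`, the three callables it receives, the stub detectors and the file names are all hypothetical placeholders for the models built in later steps, and the message returned when neither a dog nor a human is found is an assumed fallback.

```python
def describe_image(img_path, detects_dog, detects_human, predicts_breed):
    """Return a short message for one image using the dog / human / neither logic."""
    if detects_dog(img_path):
        return "Dog detected; estimated breed: {}".format(predicts_breed(img_path))
    if detects_human(img_path):
        return "Human detected; most resembling breed: {}".format(predicts_breed(img_path))
    return "Neither a dog nor a human was detected."


# Stub models for demonstration only; real detectors would inspect the image itself
def fake_dog_detector(path):
    return 'dog' in path


def fake_human_detector(path):
    return 'human' in path


def fake_breed_predictor(path):
    return 'Beagle'


for path in ['images/dog_01.jpg', 'images/human_01.jpg', 'images/car_01.jpg']:
    print(path, '->', describe_image(path, fake_dog_detector, fake_human_detector, fake_breed_predictor))
```

# Passing the models in as arguments keeps the decision logic independent of how each detector is implemented, so any one of them can be swapped without touching the others.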

#

# ### The Road Ahead

#

# We break the notebook into separate steps. Feel free to use the links below to navigate the notebook.

#

# * [Step 0](#step0): Import Datasets

# * [Step 1](#step1): Detect Humans

# * [Step 2](#step2): Detect Dogs

# * [Step 3](#step3): Create a CNN to Classify Dog Breeds (from Scratch)

# * [Step 4](#step4): Create a CNN to Classify Dog Breeds (using Transfer Learning)

# * [Step 5](#step5): Write your Algorithm

# * [Step 6](#step6): Test Your Algorithm

#

# ---

# <a id='step0'></a>

# ## Step 0: Import Datasets

#

# Make sure that you've downloaded the required human and dog datasets:

#