code stringlengths 38 801k | repo_path stringlengths 6 263 |

|---|---|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# %matplotlib inline

import numpy as np

import pandas as pd

from os.path import join

import matplotlib.pyplot as plt

from glob import glob

from keras.models import load_model

from matplotlib.colors import LogNorm

from scipy.ndimage import gaussian_filter, maximum_filter, minimum_filter

from deepsky.gan import unnormalize_multivariate_data

from skimage.morphology import disk

import pickle

data_path = "/glade/work/dgagne/spatial_storm_results_20171220/"

#data_path = "/Users/dgagne/data/spatial_storm_results_20171220/"

scores = ["auc", "bss"]

models = ["conv_net", "logistic_mean", "logistic_pca"]

imp_scores = {}

for model in models:
    imp_scores[model] = {}
    for score in scores:
        score_files = sorted(glob(data_path + "var_importance_{0}_{1}_*.csv".format(model, score)))
        imp_score_list = []
        for score_file in score_files:
            print(score_file)
            imp_data = pd.read_csv(score_file, index_col="Index")
            # Subtract the scores in rows 1 onward from the first score, then average per variable.
            imp_score_list.append(((imp_data.iloc[0,0] - imp_data.loc[1:])).mean(axis=0))
        imp_scores[model][score] = pd.concat(imp_score_list, axis=1).T
        # Remove the "_prev" suffix with an anchored regex; str.rstrip("_prev") would
        # strip any trailing run of the characters "_", "p", "r", "e", "v".
        imp_scores[model][score].columns = (
            imp_scores[model][score].columns.str.replace("_prev$", "", regex=True)
            .str.replace("_", " ")
            .str.replace("-component of", "")
            .str.replace("dew point temperature", "dewpoint")
            .str.capitalize()
        )
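# The loop above appears to treat the first row of each file as the unpermuted score and the remaining rows as scores obtained after permuting one input variable. A minimal sketch of that calculation, using made-up numbers rather than values from the result files:

```python
from statistics import mean

# Hypothetical scores for a single variable (not taken from the CSV files).
baseline_auc = 0.90                 # score with the inputs left intact
permuted_auc = [0.80, 0.82, 0.78]   # scores after shuffling that variable

# Importance is the mean decrease in score caused by the permutation.
importance = mean(baseline_auc - score for score in permuted_auc)
print(f"mean decrease in AUC: {importance:.2f}")
```

# A larger decrease suggests that the model relies more heavily on that variable.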

fig, axes = plt.subplots(3, 2, figsize=(12, 12))

plt.subplots_adjust(wspace=0.6)

model_titles = ["Conv. Net", "Logistic Mean", "Logistic PCA"]

for m, model in enumerate(models):
    for s, score in enumerate(scores):
        rankings = imp_scores[model][score].mean(axis=0).sort_values().index
        axes[m, s].boxplot(imp_scores[model][score].loc[:, rankings].values, vert=False,
                           boxprops={"color":"k"}, whiskerprops={"color":"k"},
                           medianprops={"color":"k"}, flierprops={"marker":".", "markersize":3}, whis=[2.5, 97.5])
        axes[m, s].set_yticklabels(imp_scores[model][score].loc[:, rankings].columns.str.replace(" mb", " hPa"))
        axes[m, s].set_title(model_titles[m] + " " + score.upper())
        axes[m, s].grid()
        #axes[m, s].set_xscale("log")
        if m == len(model_titles) - 1:
            axes[m, s].set_xlabel("Decrease in " + score.upper(), fontsize=12)
plt.savefig("var_imp_box.pdf", dpi=300, bbox_inches="tight")

input_cols = imp_scores[model][score].columns

log_pca_coefs = np.zeros((30, 75))

for i in range(30):
    with open("/Users/dgagne/data/spatial_storm_results_20171220/" + "hail_logistic_pca_sample_{0:03d}.pkl".format(i), "rb") as pca_pkl:
        log_pca_model = pickle.load(pca_pkl)
    log_pca_coefs[i] = log_pca_model.model.coef_

log_gan_coefs = np.zeros((30, 64))

for i in range(30):
    with open("/Users/dgagne/data/spatial_storm_results_20171220/" + "logistic_gan_{0:d}_logistic.pkl".format(i), "rb") as gan_pkl:
        log_gan_model = pickle.load(gan_pkl)
    log_gan_coefs[i] = log_gan_model.coef_.ravel()

plt.boxplot(np.abs(log_gan_coefs.T))

np.abs(log_pca_coefs).min()

plt.figure(figsize=(6, 10))

plt.pcolormesh(np.abs(log_pca_coefs).T, norm=LogNorm(0.0001, 1))

plt.yticks(np.arange(0, 75, 5), input_cols)

plt.barh(np.arange(15), np.abs(log_pca_coefs).mean(axis=0).reshape(15, 5).mean(axis=1))

plt.yticks(np.arange(15), input_cols)

mean_imp_matrix = pd.DataFrame(index=imp_scores["conv_net"]["bss"].columns, columns=models, dtype=float)

mean_imp_rank_matrix = pd.DataFrame(index=imp_scores["conv_net"]["bss"].columns, columns=models, dtype=int)

for model in models:
    mean_imp_matrix.loc[:, model] = imp_scores[model]["bss"].values.mean(axis=0)
    rank = np.argsort(imp_scores[model]["bss"].values.mean(axis=0))
    for r in range(rank.size):
        mean_imp_rank_matrix.loc[mean_imp_rank_matrix.index[rank[r]], model] = rank.size - r

mean_imp_matrix["conv_net"].values[np.argsort(mean_imp_matrix["conv_net"].values)]

mean_imp_rank_matrix
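# The ranking loop above sorts the mean importances in ascending order and assigns `rank.size - r`, so the most important variable receives rank 1. A minimal standard-library sketch of the same idea, with hypothetical variable names and values:

```python
# Hypothetical mean importances (not values from the experiments).
mean_importance = {"Temperature": 0.02, "Dewpoint": 0.10, "Wind": 0.05}

# Order the variables from most to least important, then number them from 1.
ordered = sorted(mean_importance, key=mean_importance.get, reverse=True)
ranks = {name: position for position, name in enumerate(ordered, start=1)}
print(ranks)
```

# Ties are broken by the sort order here; the notebook's `np.argsort` version may break ties differently.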

| notebooks/spatial_hail_feature_importance.ipynb |

# -*- coding: utf-8 -*-

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python

# language: python3

# name: python3

# ---

#

# <a id='matplotlib'></a>

# <div id="qe-notebook-header" align="right" style="text-align:right;">

# <a href="https://quantecon.org/" title="quantecon.org">

# <img style="width:250px;display:inline;" width="250px" src="https://assets.quantecon.org/img/qe-menubar-logo.svg" alt="QuantEcon">

# </a>

# </div>

# # Matplotlib

#

#

# <a id='index-1'></a>

# ## Contents

#

# - [Matplotlib](#Matplotlib)

# - [Overview](#Overview)

# - [The APIs](#The-APIs)

# - [More Features](#More-Features)

# - [Further Reading](#Further-Reading)

# - [Exercises](#Exercises)

# - [Solutions](#Solutions)

# ## Overview

#

# We’ve already generated quite a few figures in these lectures using [Matplotlib](http://matplotlib.org/).

#

# Matplotlib is an outstanding graphics library, designed for scientific computing, with

#

# - high-quality 2D and 3D plots

# - output in all the usual formats (PDF, PNG, etc.)

# - LaTeX integration

# - fine-grained control over all aspects of presentation

# - animation, etc.

# ### Matplotlib’s Split Personality

#

# Matplotlib is unusual in that it offers two different interfaces to plotting.

#

# One is a simple MATLAB-style API (Application Programming Interface) that was written to help MATLAB refugees find a ready home.

#

# The other is a more “Pythonic” object-oriented API.

#

# For reasons described below, we recommend that you use the second API.

#

# But first, let’s discuss the difference.

# ## The APIs

#

#

# <a id='index-2'></a>

# ### The MATLAB-style API

#

# Here’s the kind of easy example you might find in introductory treatments

# + hide-output=false

# %matplotlib inline

import matplotlib.pyplot as plt

plt.rcParams["figure.figsize"] = (10, 6) #set default figure size

import numpy as np

x = np.linspace(0, 10, 200)

y = np.sin(x)

plt.plot(x, y, 'b-', linewidth=2)

plt.show()

# -

# This is simple and convenient, but also somewhat limited and un-Pythonic.

#

# For example, in the function calls, a lot of objects get created and passed around without making themselves known to the programmer.

#

# Python programmers tend to prefer a more explicit style of programming (run `import this` in a code block and look at the second line).

#

# This leads us to the alternative, object-oriented Matplotlib API.

# ### The Object-Oriented API

#

# Here’s the code corresponding to the preceding figure using the object-oriented API

# + hide-output=false

fig, ax = plt.subplots()

ax.plot(x, y, 'b-', linewidth=2)

plt.show()

# -

# Here the call `fig, ax = plt.subplots()` returns a pair, where

#

# - `fig` is a `Figure` instance—like a blank canvas.

# - `ax` is an `Axes` instance (shown as `AxesSubplot` in older Matplotlib releases); think of it as a frame for plotting in.

#

#

# The `plot()` function is actually a method of `ax`.

#

# While there’s a bit more typing, the more explicit use of objects gives us better control.

#

# This will become clearer as we go along.
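# To illustrate what "better control" can mean, here is a small sketch that keeps a handle on the objects and modifies them after creation. The `Agg` backend and the variable names `lines` and `line` are choices made for this sketch only, so that it runs without a display.

```python
import matplotlib
matplotlib.use("Agg")  # non-interactive backend, assumed here so no window is needed
import matplotlib.pyplot as plt
import numpy as np

x = np.linspace(0, 10, 200)
fig, ax = plt.subplots()

# ax.plot returns a list of Line2D objects, one per plotted curve.
lines = ax.plot(x, np.sin(x), 'b-', linewidth=2)
line = lines[0]

# Because the objects are explicit, they can be changed after the fact.
line.set_color("red")
ax.set_xlabel("x")
print(type(fig).__name__, type(line).__name__, ax.get_xlabel())
```

# With the MATLAB-style API the same objects exist, but they are created and held behind the scenes.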

# ### Tweaks

#

# Here we’ve changed the line to red and added a legend

# + hide-output=false

fig, ax = plt.subplots()

ax.plot(x, y, 'r-', linewidth=2, label='sine function', alpha=0.6)

ax.legend()

plt.show()

# -

# We’ve also used `alpha` to make the line slightly transparent—which makes it look smoother.

#

# The location of the legend can be changed by replacing `ax.legend()` with `ax.legend(loc='upper center')`.

# + hide-output=false

fig, ax = plt.subplots()

ax.plot(x, y, 'r-', linewidth=2, label='sine function', alpha=0.6)

ax.legend(loc='upper center')

plt.show()

# -

# If everything is properly configured, then adding LaTeX is trivial

# + hide-output=false

fig, ax = plt.subplots()

ax.plot(x, y, 'r-', linewidth=2, label=r'$y=\sin(x)$', alpha=0.6)

ax.legend(loc='upper center')

plt.show()

# -

# Controlling the ticks, adding titles and so on is also straightforward

# + hide-output=false

fig, ax = plt.subplots()

ax.plot(x, y, 'r-', linewidth=2, label=r'$y=\sin(x)$', alpha=0.6)

ax.legend(loc='upper center')

ax.set_yticks([-1, 0, 1])

ax.set_title('Test plot')

plt.show()

# -

# ## More Features

#

# Matplotlib has a huge array of functions and features, which you can discover

# over time as you have need for them.

#

# We mention just a few.

# ### Multiple Plots on One Axis

#

#

# <a id='index-3'></a>

# It’s straightforward to generate multiple plots on the same axes.

#

# Here’s an example that randomly generates three normal densities and adds a label with their mean

# + hide-output=false

from scipy.stats import norm

from random import uniform

fig, ax = plt.subplots()

x = np.linspace(-4, 4, 150)

for i in range(3):
    m, s = uniform(-1, 1), uniform(1, 2)
    y = norm.pdf(x, loc=m, scale=s)
    # Raw f-string so that "\mu" is not read as an escape sequence.
    current_label = rf'$\mu = {m:.2}$'
    ax.plot(x, y, linewidth=2, alpha=0.6, label=current_label)

ax.legend()

plt.show()

# -

# ### Multiple Subplots

#

#

# <a id='index-4'></a>

# Sometimes we want multiple subplots in one figure.

#

# Here’s an example that generates 6 histograms

# + hide-output=false

num_rows, num_cols = 3, 2

fig, axes = plt.subplots(num_rows, num_cols, figsize=(10, 12))

for i in range(num_rows):
    for j in range(num_cols):
        m, s = uniform(-1, 1), uniform(1, 2)
        x = norm.rvs(loc=m, scale=s, size=100)
        axes[i, j].hist(x, alpha=0.6, bins=20)
        # Raw f-string so that the LaTeX backslashes are kept literally.
        t = rf'$\mu = {m:.2}, \quad \sigma = {s:.2}$'
        axes[i, j].set(title=t, xticks=[-4, 0, 4], yticks=[])

plt.show()

# -

# ### 3D Plots

#

#

# <a id='index-5'></a>

# Matplotlib does a nice job of 3D plots — here is one example

# + hide-output=false

from mpl_toolkits.mplot3d.axes3d import Axes3D

from matplotlib import cm

def f(x, y):
    return np.cos(x**2 + y**2) / (1 + x**2 + y**2)

xgrid = np.linspace(-3, 3, 50)

ygrid = xgrid

x, y = np.meshgrid(xgrid, ygrid)

fig = plt.figure(figsize=(10, 6))

ax = fig.add_subplot(111, projection='3d')

ax.plot_surface(x,

y,

f(x, y),

rstride=2, cstride=2,

cmap=cm.jet,

alpha=0.7,

linewidth=0.25)

ax.set_zlim(-0.5, 1.0)

plt.show()

# -

# ### A Customizing Function

#

# Perhaps you will find a set of customizations that you regularly use.

#

# Suppose we usually prefer our axes to go through the origin, and to have a grid.

#

# Here’s a nice example from [<NAME>](https://github.com/xcthulhu) of how the object-oriented API can be used to build a custom `subplots` function that implements these changes.

#

# Read carefully through the code and see if you can follow what’s going on

# + hide-output=false

def subplots():
    "Custom subplots with axes through the origin"
    fig, ax = plt.subplots()

    # Set the axes through the origin
    for spine in ['left', 'bottom']:
        ax.spines[spine].set_position('zero')
    for spine in ['right', 'top']:
        ax.spines[spine].set_color('none')

    ax.grid()
    return fig, ax

fig, ax = subplots() # Call the local version, not plt.subplots()

x = np.linspace(-2, 10, 200)

y = np.sin(x)

ax.plot(x, y, 'r-', linewidth=2, label='sine function', alpha=0.6)

ax.legend(loc='lower right')

plt.show()

# -

# The custom `subplots` function

#

# 1. calls the standard `plt.subplots` function internally to generate the `fig, ax` pair,

# 1. makes the desired customizations to `ax`, and

# 1. passes the `fig, ax` pair back to the calling code.

# ## Further Reading

#

# - The [Matplotlib gallery](http://matplotlib.org/gallery.html) provides many examples.

# - A nice [Matplotlib tutorial](http://scipy-lectures.org/intro/matplotlib/index.html) by <NAME>, <NAME> and <NAME>.

# - [mpltools](http://tonysyu.github.io/mpltools/index.html) allows easy

# switching between plot styles.

# - [Seaborn](https://github.com/mwaskom/seaborn) facilitates common statistics plots in Matplotlib.

# ## Exercises

# ### Exercise 1

#

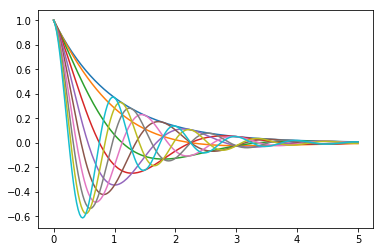

# Plot the function

#

# $$

# f(x) = \cos(\pi \theta x) \exp(-x)

# $$

#

# over the interval $ [0, 5] $ for each $ \theta $ in `np.linspace(0, 2, 10)`.

#

# Place all the curves in the same figure.

#

# The output should look like this

#

#

# ## Solutions

# ### Exercise 1

#

# Here’s one solution

# + hide-output=false

def f(x, θ):
    return np.cos(np.pi * θ * x ) * np.exp(- x)

θ_vals = np.linspace(0, 2, 10)
x = np.linspace(0, 5, 200)
fig, ax = plt.subplots()

for θ in θ_vals:
    ax.plot(x, f(x, θ))

plt.show()

| tests/project/ipynb/matplotlib.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 2

# language: python

# name: python2

# ---

# # Introduction to Data Analysis

# **Data Analyst Nanodegree P2: Investigate a Dataset**

#

# **<NAME>**

#

# [List of Resources](#Resources)

#

# ## Introduction

#

# For the final project, you will conduct your own data analysis and create a file to share that documents your findings. You should start by taking a look at your dataset and brainstorming what questions you could answer using it. Then you should use Pandas and NumPy to answer the questions you are most interested in, and create a report sharing the answers. You will not be required to use statistics or machine learning to complete this project, but you should make it clear in your communications that your findings are tentative. This project is open-ended in that we are not looking for one right answer.

#

# ## Step One - Choose Your Data Set

#

# **Titanic Data** - Contains demographics and passenger information from 891 of the 2224 passengers and crew on board the Titanic. You can view a description of this dataset on the [Kaggle website](https://www.kaggle.com/c/titanic/data), where the data was obtained.

#

# From the Kaggle website:

#

# VARIABLE DESCRIPTIONS:

# survival Survival

# (0 = No; 1 = Yes)

# pclass Passenger Class

# (1 = 1st; 2 = 2nd; 3 = 3rd)

# name Name

# sex Sex

# age Age

# sibsp Number of Siblings/Spouses Aboard

# parch Number of Parents/Children Aboard

# ticket Ticket Number

# fare Passenger Fare

# cabin Cabin

# embarked Port of Embarkation

# (C = Cherbourg; Q = Queenstown; S = Southampton)

#

# SPECIAL NOTES:

# Pclass is a proxy for socio-economic status (SES)

# 1st ~ Upper; 2nd ~ Middle; 3rd ~ Lower

#

# Age is in Years; Fractional if Age less than One (1)

# If the Age is Estimated, it is in the form xx.5

#

# With respect to the family relation variables (i.e. sibsp and parch), some relations were ignored. The following are the definitions used for sibsp and parch.

#

# Sibling: Brother, Sister, Stepbrother, or Stepsister of Passenger Aboard Titanic

# Spouse: Husband or Wife of Passenger Aboard Titanic (Mistresses and Fiances Ignored)

# Parent: Mother or Father of Passenger Aboard Titanic

# Child: Son, Daughter, Stepson, or Stepdaughter of Passenger Aboard Titanic

#

# Other family relatives excluded from this study include cousins, nephews/nieces, aunts/uncles, and in-laws.

# Some children travelled only with a nanny, therefore parch=0 for them. As well, some travelled with very close friends or neighbors in a village, however, the definitions do not support such relations.

#

# ## Step Two - Get Organized

#

# Eventually you’ll want to submit your project (and share it with friends, family, and employers). Get organized before you begin. We recommend creating a single folder that will eventually contain:

#

# I am using an IPython notebook, which contains both the code and the report of findings in the same document.

# ## Step Three - Analyze Your Data

#

# Brainstorm some questions you could answer using the data set you chose, then start answering those questions. Here are some ideas to get you started:

#

# Titanic Data

# What factors made people more likely to survive?

# +

import pandas as pd

import numpy as np

from scipy import stats

import matplotlib.pyplot as plt

import matplotlib

# %pylab inline

matplotlib.style.use('ggplot')

# -

titanic_data = pd.read_csv('titanic_data.csv')

titanic_data.head()
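# One way to start on the question "What factors made people more likely to survive?" is to compare survival rates across groups. The sketch below uses a tiny made-up table instead of `titanic_data.csv`; the column names `Sex` and `Survived` are assumed to match the Kaggle file and should be checked against the output of `titanic_data.head()`.

```python
import pandas as pd

# Hypothetical four-passenger sample, only to show the mechanics.
sample = pd.DataFrame({
    "Sex": ["female", "male", "female", "male"],
    "Survived": [1, 0, 1, 1],
})

# The mean of a 0/1 column is the fraction of survivors in each group.
survival_rate = sample.groupby("Sex")["Survived"].mean()
print(survival_rate)
```

# The same pattern applies to other grouping columns, such as passenger class. Any differences found this way are tentative, since no statistical test is involved.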

# ## Step Four - Share Your Findings

#

# Once you have finished analyzing the data, create a report that shares the findings you found most interesting. You might wish to use IPython notebook to share your findings alongside the code you used to perform the analysis, but you can also use another tool if you wish.

#

# ## Step Five - Review

#

# Use the Project Rubric to review your project. If you are happy with your submission, then you're ready to submit your project. If you see room for improvement, keep working to improve your project.

# ## <a id='Resources'></a>List of Resources

#

# 1. Pandas documentation: http://pandas.pydata.org/pandas-docs/stable/index.html

# 2. Scipy ttest documentation: http://docs.scipy.org/doc/scipy-0.14.0/reference/generated/scipy.stats.ttest_rel.html

# 3. t-table: https://s3.amazonaws.com/udacity-hosted-downloads/t-table.jpg

# 4. Stroop effect Wikipedia page: https://en.wikipedia.org/wiki/Stroop_effect

| P2/p2_investigate_a_dataset.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Controlled Abstention Networks (CAN) for regression tasks

# * authors: <NAME>, <NAME>

# * published in Barnes, <NAME>. and <NAME>: Controlled abstention neural networks for identifying skillful predictions for regression problems, submitted to JAMES, 04/2021.

# * code updated: April 22, 2021

# +

import os

import time

import sys

import pprint

import imp

import glob

from sklearn import preprocessing

import tensorflow as tf

import numpy as np

import data1d

import metrics

import abstentionloss

import network

import plots

import matplotlib.pyplot as plt

import matplotlib as mpl

mpl.rcParams['figure.facecolor'] = 'white'

mpl.rcParams['figure.dpi']= 150

import warnings

warnings.filterwarnings('ignore', category=np.VisibleDeprecationWarning)

tf.print(f"sys.version = {sys.version}", output_stream=sys.stdout)

tf.print(f"tf.version.VERSION = {tf.version.VERSION}", output_stream=sys.stdout)

# -

# # Initialize Experiment

# +

checkpointDir = 'checkpoints/'

EXP_LIST = {

'data1d_constant':

{'exp_name': 'data1d_constant',

'loss': 'AbstentionLogLoss',

'updater': 'Constant', # set updater to Constant to run with fixed alpha

'nupd': np.nan,

'numClasses': 2,

'n_samples': [4000, 1000], #(number of noisy points, number of not noisy points)

'noise': [.5, .05],

'slope': [1., .7],

'yint': [-2., .6],

'x_sigma': [.25, .5],

'undersample': False,

'spinup': 0,

'hiddens': [5, 5],

'lr_init': .0001,

'batch_size': 32,

'np_seed': 99,

'act_fun': 'relu',

'kappa_setpoint': .1,

'fixed_alpha': .1, # fixed alpha (not using PID controller)

'n_spinup_epochs': 225,

'n_coarse_epochs': 0,

'n_epochs': 1000,

'patience': 200,

'boxcox': False,

'ridge_param': 0.,

},

}

EXPINFO = EXP_LIST['data1d_constant']

EXP_NAME = EXPINFO['exp_name']

pprint.pprint(EXPINFO, width=60)

# -

NP_SEED = EXPINFO['np_seed']

np.random.seed(NP_SEED)

tf.random.set_seed(NP_SEED)

# ## Internal functions

def get_long_name(exp_name, loss_str, setpoint, network_seed, np_seed):
    # set experiment name
    LONG_NAME = (
        exp_name
        + '_' + loss_str
        + '_setpoint' + str(setpoint)
        + '_networkSeed' + str(network_seed)
        + '_npSeed' + str(np_seed)
    )

    return LONG_NAME

# +

def make_model(loss_str = 'RegressLogLoss', updater_str='Colorado', kappa=1.0e5, tau=1.0e5, spinup_epochs=0, coarse_epochs=0, setpoint=.5, nupd=10, network_seed=0):

# Define and train the model

tf.keras.backend.clear_session()

model = network.defineNN(hiddens=HIDDENS,

input_shape=X_train_std.shape[1],

output_shape=NUM_CLASSES,

ridge_penalty=RIDGE,

act_fun=ACT_FUN,

network_seed=network_seed)

if(loss_str=='AbstentionLogLoss'):

if(updater_str=='Constant'):

updater = getattr(abstentionloss, updater_str)(setpoint=setpoint,

alpha_init=FIXED_ALPHA,

)

loss_function = getattr(abstentionloss, loss_str)(kappa=kappa,

tau_fine=kappa,

updater=updater,

spinup_epochs=spinup_epochs,

coarse_epochs=coarse_epochs,

)

else:

updater = getattr(abstentionloss, updater_str)(setpoint=setpoint,

alpha_init=0.1,

length=nupd)

loss_function = getattr(abstentionloss, loss_str)(

kappa=kappa,

tau_fine=tau,

updater=updater,

spinup_epochs=spinup_epochs,

coarse_epochs=coarse_epochs,

)

model.compile(

optimizer=tf.keras.optimizers.SGD(learning_rate=LR_INIT, momentum=0.9, nesterov=True),

loss = loss_function,

metrics=[

alpha_value,

metrics.AbstentionFraction(tau=tau),

metrics.MAE(),

metrics.MAECovered(tau=tau),

metrics.LikelihoodCovered(tau=tau),

metrics.LogLikelihoodCovered(tau=tau),

metrics.SigmaCovered(tau=tau),

]

)

else:

loss_function = getattr(abstentionloss, loss_str)()

model.compile(

optimizer=tf.keras.optimizers.SGD(learning_rate=LR_INIT, momentum=0.9, nesterov=True),

loss = loss_function,

metrics=[

metrics.MAE(),

metrics.Likelihood(),

metrics.LogLikelihood(),

metrics.Sigma(),

]

)

# model.summary()

return model, loss_function

# +

def get_tau_vector(model,X):
    y_pred = model.predict(X)

    tau_dict = {}
    for perc in np.around(np.arange(.1, 1.1, .1), 3):
        tau_dict[perc] = np.percentile(y_pred[:, -1], 100-perc*100.)

    return tau_dict

def alpha_value(y_true,y_pred):
    return loss_function.updater.alpha

def scheduler(epoch, lr):
    if epoch < LR_EPOCH_BOUND:
        return lr
    else:
        return LR_INIT/2. # lr*tf.math.exp(-0.1)

class EarlyStoppingCAN(tf.keras.callbacks.Callback):

"""Stop training when the loss is at its min, i.e. the loss stops decreasing.

Arguments:

patience: Number of epochs to wait after the minimum has been hit. Training

stops after this many epochs without improvement.

"""

def __init__(self, patience=0, updater_str='Colorado'):

super(EarlyStoppingCAN, self).__init__()

self.patience = patience

self.updater_str = updater_str

# best_weights to store the weights at which the minimum loss occurs.

self.best_weights = None

def on_train_begin(self, logs=None):

# The number of epochs waited since the loss was last at its minimum.

self.wait = 0

# The epoch the training stops at.

self.stopped_epoch = 0

# Initialize the best to be the worst possible.

self.best = np.inf

self.best_epoch = 0

# initialize best_weights to non-trained model

self.best_weights = self.model.get_weights()

def on_epoch_end(self, epoch, logs=None):

current = logs.get("val_loss")

if np.less(current, self.best):

if(self.updater_str=='Constant'):

abstention_error = 0.

else:

abstention_error = np.abs(logs.get("val_abstention_fraction") - setpoint)

if np.less(abstention_error, .1):

if (epoch >= EXPINFO['n_spinup_epochs']):

self.best = current

self.wait = 0

# Record the best weights if the current validation loss is better (lower).

self.best_weights = self.model.get_weights()

self.best_epoch = epoch

else:

self.wait += 1

if self.wait >= self.patience:

self.stopped_epoch = epoch

self.model.stop_training = True

print("Restoring model weights from the end of the best epoch.")

self.model.set_weights(self.best_weights)

def on_train_end(self, logs=None):

if self.stopped_epoch > 0:

print("Early stopping, setting to best_epoch = " + str(self.best_epoch + 1))

else:

self.best_epoch = np.nan

# -
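# `get_tau_vector` picks, for each fraction `perc`, the `100 - 100*perc` percentile of the last output column, so that roughly a fraction `perc` of the predictions lie above the threshold. The sketch below checks this on made-up values; `last_output` is a hypothetical stand-in for `y_pred[:, -1]`.

```python
import numpy as np

# Hypothetical stand-in for the last column of the network output.
last_output = np.arange(1.0, 101.0)   # the values 1, 2, ..., 100

perc = 0.2
tau = np.percentile(last_output, 100 - perc * 100.)

# Fraction of values that lie strictly above the threshold.
fraction_above = np.mean(last_output > tau)
print(tau, fraction_above)
```

# With real predictions the fraction only matches `perc` approximately, since it depends on ties and on the percentile interpolation.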

# ## Make the data

X_train_std, onehot_train, X_val_std, onehot_val, X_test_std, onehot_test, xmean, xstd, tr_train, tr_val, tr_test = data1d.get_data(EXPINFO,to_plot=True)

# # Train the model

# +

#---------------------

LOSS = EXPINFO['loss']

UPDATER = EXPINFO['updater']

ACT_FUN = EXPINFO['act_fun']

NUPD = EXPINFO['nupd']

HIDDENS = EXPINFO['hiddens']

BATCH_SIZE = EXPINFO['batch_size']

LR_INIT = EXPINFO['lr_init']

NUM_CLASSES = EXPINFO['numClasses']

RIDGE = EXPINFO['ridge_param']

KAPPA_SEPOINT = EXPINFO['kappa_setpoint']

N_SPINUP_EPOCHS = EXPINFO['n_spinup_epochs']

N_COARSE_EPOCHS = EXPINFO['n_coarse_epochs']

PATIENCE = EXPINFO['patience']

FIXED_ALPHA = EXPINFO['fixed_alpha']

#---------------------

# Set parameters

LR_EPOCH_BOUND = 10000 # don't use the learning rate scheduler, but keep as an option

NETWORK_SEED = 0

SETPOINT_LIST = [-1., 0., .2,] # -1. = fit model for spinup period only with RegressionLogLoss

# 0. = fit model with RegressionLogLoss

# (0,1) = fit with AbstentionLogLoss for setpoint coverage,

# choose any value (0,1) if running with UPDATER = 'Constant'

for isetpoint, setpoint in enumerate(SETPOINT_LIST):

# set loss function to use ----

N_EPOCHS = EXPINFO['n_epochs']

if(setpoint==0.):

if(LOSS == 'AbstentionLogLoss'):

RUN_LOSS = 'RegressLogLoss'

else:

RUN_LOSS = LOSS

elif(setpoint==-1.):

if(LOSS == 'AbstentionLogLoss'):

RUN_LOSS = 'RegressLogLoss'

N_EPOCHS = EXPINFO['n_spinup_epochs']

else:

continue

else:

if(LOSS != 'AbstentionLogLoss' ):

continue

else:

RUN_LOSS = LOSS

#-------------------

LONG_NAME = get_long_name(EXP_NAME, RUN_LOSS, setpoint, NETWORK_SEED, NP_SEED)

model_name = 'saved_models/model_' + LONG_NAME

print(LONG_NAME)

#-------------------------------

# load the baseline spin-up model

if(setpoint>0):

spinup_file = 'saved_models/model_' + get_long_name(exp_name=EXP_NAME,

loss_str='RegressLogLoss',

setpoint=-1.,

network_seed=NETWORK_SEED,

np_seed=NP_SEED)

model_spinup, __ = make_model(loss_str = RUN_LOSS,

network_seed=NETWORK_SEED,

)

model_spinup.load_weights(spinup_file + '.h5')

tau_dict = get_tau_vector(model_spinup,X_val_std)

KAPPA = tau_dict[KAPPA_SEPOINT]

TAU = tau_dict[setpoint]

print(' kappa = ' + str(KAPPA) + ', tau = ' + str(TAU))

else:

TAU = np.nan

KAPPA = np.nan

#-------------------------------

# set random seed again

np.random.seed(NP_SEED)

# get the model

tf.keras.backend.clear_session()

# callbacks

lr_callback = tf.keras.callbacks.LearningRateScheduler(scheduler,verbose=0)

cp_callback = tf.keras.callbacks.ModelCheckpoint(

filepath = checkpointDir + 'model_' + LONG_NAME + '_epoch{epoch:03d}.h5',

verbose=0,

save_weights_only=True,

)

# define the model and loss function

if(RUN_LOSS=='AbstentionLogLoss'): # run with AbstentionLogLoss

es_can_callback = EarlyStoppingCAN(patience=PATIENCE,

updater_str=UPDATER,

)

model, loss_function = make_model(loss_str = RUN_LOSS,

updater_str=UPDATER,

kappa = KAPPA,

tau = TAU,

spinup_epochs=N_SPINUP_EPOCHS,

coarse_epochs=N_COARSE_EPOCHS,

setpoint=setpoint,

nupd=NUPD,

network_seed=NETWORK_SEED,

)

callbacks = [abstentionloss.AlphaUpdaterCallback(),

lr_callback,

cp_callback,

es_can_callback,

]

else: # run with RegressLogLoss

es_callback = tf.keras.callbacks.EarlyStopping(monitor='val_loss',

mode='min',

patience=PATIENCE,

restore_best_weights=True,

verbose=1)

model, loss_function = make_model(loss_str = RUN_LOSS,

network_seed=NETWORK_SEED,

)

callbacks = [lr_callback,

cp_callback,

es_callback,

]

#-------------------------------

# Train the model

start_time = time.time()

history = model.fit(

X_train_std,

onehot_train,

validation_data=(X_val_std, onehot_val),

batch_size=BATCH_SIZE,

epochs=N_EPOCHS,

shuffle=True,

verbose=0,

callbacks=callbacks

)

stop_time = time.time()

tf.print(f" elapsed time during fit = {stop_time - start_time:.2f} seconds\n")

model.save_weights(model_name + '.h5')

for f in glob.glob(checkpointDir + 'model_' + LONG_NAME + "_epoch*.h5"):

os.remove(f)

#-------------------------------

# Display the results

if(RUN_LOSS=='AbstentionLogLoss'):

best_epoch = es_can_callback.best_epoch

elif(setpoint==-1):

best_epoch = N_EPOCHS

else:

best_epoch = np.argmin(history.history['val_loss'])

exp_info=(RUN_LOSS, N_EPOCHS, setpoint, N_SPINUP_EPOCHS, HIDDENS, LR_INIT, LR_EPOCH_BOUND, BATCH_SIZE, NETWORK_SEED, best_epoch)

#---- plot nice predictions ----

y_pred = model.predict(X_test_std)

plots.plot_predictionscatter(X_test_std, y_pred, onehot_test[:,0], tr_test, LONG_NAME, showplot = True)

# -

| regression/main_1D.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + [markdown] id="view-in-github" colab_type="text"

# <a href="https://colab.research.google.com/github/chuducthang77/coronavirus/blob/main/Recurring_mutation.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

# + [markdown] id="I8UjHlnvKsB4"

# # Problem:

# We’ll identify mutations as recurring if they occurred earlier, then disappeared, and later came back in other virus sequences. By definition, recurring means something that happens over and over again at regular intervals. Here the gap is 3 months: for example, a mutation that first occurred in January 2020, was absent in February, March, and April, appeared again in May, and then kept repeating with the same gap counts as recurring.

# + colab={"base_uri": "https://localhost:8080/"} id="jAMOy7aTLWo9" outputId="aa3cb446-0081-4a19-cb57-f73c56dd13ef"

from google.colab import drive

drive.mount('/content/gdrive')

# + colab={"base_uri": "https://localhost:8080/"} id="kwPLYta6LXIZ" outputId="5f6ac725-0f5d-41db-a8af-d77c7f4cd659"

# %cd 'gdrive/MyDrive/Machine Learning/coronavirus/analysis'

# !ls

# + id="eP06VEZSLGYI"

import pandas as pd

import numpy as np

# + colab={"base_uri": "https://localhost:8080/", "height": 419} id="szLm6PP9LY2E" outputId="8401f46d-a918-4151-8256-66511c205e89"

#Read the csv

df = pd.read_csv('mutations_spike_msa_apr21.csv')

df

# + colab={"base_uri": "https://localhost:8080/", "height": 419} id="vy9NLzEdlWcI" outputId="8417ed54-7f34-4d99-b8d7-f5fa364cb262"

#Eliminate the empty row at the end of the file

df = df[df['Collection Date'].notna()]

df

# + colab={"base_uri": "https://localhost:8080/", "height": 453} id="y-CUpnwUMT1a" outputId="f565a981-b092-4843-e9e6-e4f5776b13f6"

#Create the column Month based on Collection Date

pd.options.mode.chained_assignment = None

dates = pd.to_datetime(df['Collection Date'], format='%Y-%m-%d')

dates = dates.dt.strftime('%m')

df['Month'] = dates

df['Month'] = df['Month'].astype(str).astype(int)

df

# + colab={"base_uri": "https://localhost:8080/", "height": 487} id="euN6wpsk8LFR" outputId="29b5e622-c356-4fa3-9c3d-b1da019cc57c"

#Convert the mutations columns to the list and expand for each item in the list to individual row

df = df.assign(names=df['Mutations'].str.split(',')).explode('names')

df = df.rename(columns={'names': 'Individual mutation'})

df

# + colab={"base_uri": "https://localhost:8080/"} id="HsRbfVVZ2sR6" outputId="75e956e7-f1e7-4fdf-9e71-8490e72f9da3"

#Check the mutation for the given interval

intervals = [{1,5,9},{2,6,10},{3,7,11},{4,8,12}]

result = []

for interval in intervals:
    #Group the mutation by their individual mutation and create the set of month it occurs
    temp_df = df.groupby('Individual mutation')['Month'].apply(set).reset_index()

    #Keep only the set matching the given interval
    temp_df = temp_df[temp_df['Month'] == interval]

    #Keep only the unique individual mutation
    result += list(temp_df['Individual mutation'].unique())

print(result)
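# The check above can be sketched without pandas: collect the set of months in which each mutation was seen and keep the mutations whose set equals one of the allowed patterns. The mutation names and months below are hypothetical.

```python
from collections import defaultdict

# Hypothetical (mutation, month) observations.
records = [
    ("mut_A", 1), ("mut_A", 5), ("mut_A", 9),
    ("mut_B", 1), ("mut_B", 2),
    ("mut_C", 2), ("mut_C", 6), ("mut_C", 10), ("mut_C", 6),
]

# Build the set of months per mutation; repeated months collapse automatically.
months_by_mutation = defaultdict(set)
for mutation, month in records:
    months_by_mutation[mutation].add(month)

intervals = [{1, 5, 9}, {2, 6, 10}, {3, 7, 11}, {4, 8, 12}]

# A mutation qualifies only if its month set matches a pattern exactly.
recurring = sorted(m for m, months in months_by_mutation.items() if months in intervals)
print(recurring)
```

# Note that grouping by month number alone merges observations from different years, so a dataset spanning more than one year may need the year included in the key.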

# + id="jBpRGsc8WbMA"

#Save the output to the txt file

with open('output.txt', 'w') as output:
    output.write(str(result))

| analysis/Recurring_mutation.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# %matplotlib inline

import pandas as pd

import numpy as np

import heapq

import matplotlib.pyplot as plt

from sklearn.feature_extraction.text import CountVectorizer

from sklearn.feature_extraction.text import TfidfTransformer

from sklearn.pipeline import Pipeline

from sklearn.naive_bayes import MultinomialNB

from sklearn.model_selection import train_test_split

from sklearn import metrics

from time import time

from sklearn.datasets import fetch_20newsgroups

from sklearn.feature_extraction.text import TfidfVectorizer

from sklearn.feature_extraction.text import HashingVectorizer

from sklearn.feature_selection import SelectKBest, chi2

from sklearn.linear_model import RidgeClassifier

from sklearn.svm import LinearSVC

from sklearn.linear_model import SGDClassifier

from sklearn.linear_model import Perceptron

from sklearn.linear_model import PassiveAggressiveClassifier

from sklearn.naive_bayes import BernoulliNB, MultinomialNB

from sklearn.neighbors import KNeighborsClassifier

from sklearn.neighbors import NearestCentroid

from sklearn.ensemble import RandomForestClassifier

from sklearn.utils.extmath import density

def get_sample(ds, field, num=50):
    # Draw a balanced sample: `num` random rows for each of the labels 1, 0 and -1.
    # pd.concat replaces DataFrame.append, which was removed in pandas 2.0.
    return pd.concat([ds[ds[field] == label].sample(num) for label in (1, 0, -1)])

def build_model(X, y):

model = Pipeline([('vect', CountVectorizer())

,('tfidf', TfidfTransformer())

,('clf', MultinomialNB()),

])

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.5, random_state=42)

model = model.fit(X_train, y_train)

return model

def benchmark(clf,X_train, X_test, y_train, y_test):

#print('_' * 80)

#print("Training: ")

#print(clf)

t0 = time()

clf.fit(X_train, y_train)

train_time = time() - t0

#print("train time: %0.3fs" % train_time)

t0 = time()

pred = clf.predict(X_test)

test_time = time() - t0

#print("test time: %0.3fs" % test_time)

score = metrics.accuracy_score(y_test, pred)

#print("accuracy: %0.3f" % score)

if hasattr(clf, 'coef_'):

#print("dimensionality: %d" % clf.coef_.shape[1])

#print("density: %f" % density(clf.coef_))

if False and feature_names is not None:

print("top 10 keywords per class:")

for i, label in enumerate(target_names):

top10 = np.argsort(clf.coef_[i])[-10:]

print(trim("%s: %s" % (label, " ".join(feature_names[top10]))))

#print()

if False:

#print("classification report:")

print(metrics.classification_report(y_test, pred,

target_names=target_names))

if False:

#print("confusion matrix:")

print(metrics.confusion_matrix(y_test, pred))

#print()

clf_descr = str(clf).split('(')[0]

return clf, clf_descr, score, train_time, test_time, pred

def benchmark_models(X_train, X_test, y_train, y_test, vectorizer, path):

X_train = vectorizer.fit_transform(X_train)

X_test = vectorizer.transform(X_test)

results = []

for penalty in ["l2", "l1"]:

print('=' * 80)

print("%s penalty" % penalty.upper())

# Train Liblinear model ('squared_hinge' is the current name of the former 'l2' loss)

results.append(benchmark(LinearSVC(loss='squared_hinge', penalty=penalty,

dual=False, tol=1e-3),

X_train, X_test, y_train, y_test))

# Train SGD model (max_iter with tol=None replaces the removed n_iter argument)

results.append(benchmark(SGDClassifier(alpha=.0001, max_iter=50, tol=None,

penalty=penalty),

X_train, X_test, y_train, y_test))

# Train SGD with Elastic Net penalty

#print('=' * 80)

#print("Elastic-Net penalty")

results.append(benchmark(SGDClassifier(alpha=.0001, max_iter=50, tol=None,

penalty="elasticnet"),

X_train, X_test, y_train, y_test))

# Train sparse Naive Bayes classifiers

#print('=' * 80)

#print("Naive Bayes")

results.append(benchmark(MultinomialNB(alpha=.01),

X_train, X_test, y_train, y_test))

results.append(benchmark(MultinomialNB(),

X_train, X_test, y_train, y_test))

results.append(benchmark(BernoulliNB(alpha=.01),

X_train, X_test, y_train, y_test))

#plot_scores(results,path)

return results

def plot_scores(results,path):

# make some plots

indices = np.arange(len(results))

results = [[x[i] for x in results] for i in range(6)]

clfs, clf_names, score, training_time, test_time, preds = results

training_time = np.array(training_time) / np.max(training_time)

test_time = np.array(test_time) / np.max(test_time)

plt.figure(figsize=(12, 8))

plt.title("Score")

plt.barh(indices, score, .2, label="score", color='navy')

plt.barh(indices + .3, training_time, .2, label="training time",

color='c')

plt.barh(indices + .6, test_time, .2, label="test time", color='darkorange')

plt.yticks(())

plt.legend(loc='best')

plt.subplots_adjust(left=.25)

plt.subplots_adjust(top=.95)

plt.subplots_adjust(bottom=.05)

for i, c in zip(indices, clf_names):

plt.text(-.3, i, c)

plt.savefig(path, format='eps')

def test_sample(model):

print('evaluating sample data')

docs_new = ['i agree with you', 'i disagree with you']

predicted = model.predict(docs_new)

for doc, stance in zip(docs_new, predicted):

print('%r => %s' % (doc, stance))

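# Note: this helper reads the notebook-level globals `ds_train` and `model`; its arguments are unused.

def calc_stats(clf_names, preds):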

print('calculating f-scores')

ds_train['stance_pred'] = model.predict(ds_train.text)

types = ds_train.groupby(['type'])

for name, group in types:

#TODO: use only pos and neg inside groups

fscore=metrics.f1_score(group.stance, group.stance_pred, average='micro')

#f1_macro=metrics.f1_score(group.stance, group.stance_pred, labels=[-1,1], average='macro')

#print(name, fscore)

ds_train.loc[ds_train.type==name, 'fscore_nb'] = fscore

#ds_train.loc[ds_train.type==name, 'fscore_macro'] = f1_macro

f1_micro=metrics.f1_score(ds_train.stance, ds_train.stance_pred, average='micro')

f1_macro=metrics.f1_score(ds_train.stance, ds_train.stance_pred, average='macro')

ds_train['fscore_nb_micro'] = f1_micro

ds_train['fscore_nb_macro'] = f1_macro

fscores = ds_train.groupby('type').agg({'fscore_nb': 'mean'})

fscores = fscores.reset_index()

fscores.rename(columns={'fscore_nb': 'NB'}, inplace=True)

fscores

# fscores.loc[fscores.shape[0]] = ['F micro' , ds_train.fscore_nb_micro[0]]

# fscores.loc[fscores.shape[0]] = ['F macro' , ds_train.fscore_nb_macro[0]]

fscores['type'] = fscores['type'].str.replace('_', ' ')

#fscores.to_csv('../results/fscores.csv', index=False)

#print(fscores)

fmscores = ds_train[['fscore_nb_micro', 'fscore_nb_macro']].mean()

fmscores = fmscores.reset_index(name='NB')

f2 = ds_train[['fscore_nb_micro', 'fscore_nb_macro']].mean()

fmscores['index'] = fmscores['index'].str.replace('fscore_nb_' ,'F ')

fmscores['alg2'] = f2.values

fmscores.rename(columns={'index':'F score'}, inplace=True)

#fmscores.to_csv('../results/fmscores.csv', index=False)

#print(fmscores)

return fscores, fmscores

def benchmark_stats(X_test, y_test, results):

print('calculating f-scores')

ds_train = X_test.copy()

ds_train['y_test'] = y_test

print(y_test.shape)

results = heapq.nlargest(5, results, key=lambda x: x[2])

for r in results:

clf_name =r[1]

pred = r[5]

ds_train[clf_name] = pred

types = ds_train.groupby(['type'])

micro_stats = []

macro_stats = []

for r in results:

clf_name = r[1]

for name, group in types:

#TODO: use only pos and neg inside groups

#print(clf_name, group[clf_name].shape)

fscore=metrics.f1_score(group.y_test, group[clf_name], average='micro')

#f1_macro=metrics.f1_score(group.stance, group.stance_pred, labels=[-1,1], average='macro')

#print(name, fscore)

stat_name='fscore_'+clf_name

if stat_name not in micro_stats:

micro_stats.append(stat_name)

ds_train.loc[ds_train.type==name, stat_name] = fscore

#ds_train.loc[ds_train.type==name, 'fscore_macro'] = f1_macro

#print(len(ds_train.columns))

f1_micro=metrics.f1_score(ds_train.y_test, ds_train[clf_name], average='micro')

f1_macro=metrics.f1_score(ds_train.y_test, ds_train[clf_name], average='macro')

stat_name='fscore_micro'+clf_name

macro_stats.append(stat_name)

ds_train[stat_name] = f1_micro

stat_name='fscore_macro'+clf_name

macro_stats.append(stat_name)

ds_train[stat_name] = f1_macro

micro_stats.append('type')

fscores = ds_train[micro_stats].groupby('type').mean()

fscores = fscores.reset_index()

#fscores.rename(columns={'fscore_nb': 'NB'}, inplace=True)

fscores['type'] = fscores['type'].str.replace('_', ' ')

fscores['type'] = fscores['type'].str.replace('+', ' and ', regex=False)

cols = [c.replace('fscore_', '') for c in fscores.columns]

fscores.columns = cols

#print(len(fscores.columns))

fmscores = ds_train[macro_stats].mean()

fmscores = fmscores.reset_index()

fmscores.columns = ['stat', 'value']

fmscores['stat'] = fmscores['stat'].str.replace('fscore_micro' ,'F micro ')

fmscores['stat'] = fmscores['stat'].str.replace('fscore_macro' ,'F macro ')

# fmscores['alg2'] = f2.values

# fmscores.rename(columns={'index':'F score'}, inplace=True)

#fmscores.to_csv('../results/fmscores.csv', index=False)

#print(fmscores)

return fscores, fmscores
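
# benchmark_stats reports both micro- and macro-averaged F1. The difference can
# be sketched by hand: micro pools the true/false positive counts over all
# classes, while macro averages the per-class F1 values. This is a minimal
# illustration, not the scikit-learn implementation; `demo_f1_scores`,
# `demo_true` and `demo_pred` are hypothetical names and the labels are made up.

```python
def demo_f1_scores(y_true, y_pred):
    """Return (micro_f1, macro_f1) for single-label predictions."""
    labels = sorted(set(y_true) | set(y_pred))
    pairs = list(zip(y_true, y_pred))
    per_class_f1 = []
    tp_sum = fp_sum = fn_sum = 0
    for label in labels:
        tp = sum(1 for t, p in pairs if t == label and p == label)
        fp = sum(1 for t, p in pairs if t != label and p == label)
        fn = sum(1 for t, p in pairs if t == label and p != label)
        denom = 2 * tp + fp + fn
        # F1 = 2*TP / (2*TP + FP + FN); define it as 0 when the class never occurs
        per_class_f1.append(2 * tp / denom if denom else 0.0)
        tp_sum += tp
        fp_sum += fp
        fn_sum += fn
    micro = 2 * tp_sum / (2 * tp_sum + fp_sum + fn_sum)
    macro = sum(per_class_f1) / len(per_class_f1)
    return micro, macro


# Made-up stance labels (-1, 0, 1) for six documents
demo_true = [1, 1, 0, 0, -1, -1]
demo_pred = [1, 0, 0, 0, -1, 1]
demo_micro, demo_macro = demo_f1_scores(demo_true, demo_pred)
print(demo_micro, demo_macro)
```

# For single-label multiclass data the micro-averaged F1 equals plain accuracy
# (4 of 6 correct here), which is why the per-type 'micro' scores above behave
# like per-type accuracies.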

# +

print('our english dataset...')

ds = pd.read_csv('../dataset/wiki/opinions_annotated.csv')

ds = ds[ds.lang=='en']

print('stance classification')

ds_train = get_sample(ds, 'stance')

X = ds_train[['text', 'type']]

y = ds_train.stance

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.5, random_state=42)

#vectorizer = TfidfVectorizer(sublinear_tf=True, max_df=0.5,stop_words='english')

vectorizer = TfidfVectorizer()

results = benchmark_models(X_train.text, X_test.text, y_train, y_test, vectorizer, '../results/opinions_stance_score_en.eps')

fscores, fmscores = benchmark_stats(X_test, y_test, results)

#choose the best model

#model = build_model(X,y)

model = heapq.nlargest(1, results, key=lambda x: x[2])[0][0]

print('best model: ' + str(model))

#test_sample(model)

X = vectorizer.transform(ds.text.values)

#X = ds.text

predicted = model.predict(X)

ds['stance_pred'] = predicted

fscores.to_csv('../results/opinions_fscores_stance_en.csv', index=False)

fmscores.to_csv('../results/opinions_fmscores_stance_en.csv', index=False)

print('sentiment classification')

ds_train = get_sample(ds, 'sentiment', 8)

X = ds_train[['text', 'type']]

y = ds_train.sentiment

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.5, random_state=42)

#vectorizer = TfidfVectorizer(sublinear_tf=True, max_df=0.5,stop_words='english')

vectorizer = TfidfVectorizer()

results = benchmark_models(X_train.text, X_test.text, y_train, y_test, vectorizer, '../results/opinions_sentiment_score_en.eps')

fscores, fmscores = benchmark_stats(X_test, y_test, results)

#choose the best model

#model = build_model(X,y)

model = heapq.nlargest(1, results, key=lambda x: x[2])[0][0]

print('best model: ' + str(model))

#test_sample(model)

X = vectorizer.transform(ds.text.values)

#X = ds.text

predicted = model.predict(X)

ds['sentiment_pred'] = predicted

fscores.to_csv('../results/opinions_fscores_sent_en.csv', index=False)

fmscores.to_csv('../results/opinions_fmscores_sent_en.csv', index=False)

ds.to_csv('../dataset/wiki/opinions_predicted_en.csv', index=False)
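
# The cell above picks the best classifier with heapq.nlargest on the accuracy
# stored at index 2 of each benchmark tuple. A minimal sketch of that selection
# on made-up tuples follows; `demo_results` and its scores are hypothetical
# placeholders, not results from this notebook.

```python
import heapq

# (classifier object, description, accuracy): strings stand in for fitted models
demo_results = [
    ("clf_a", "LinearSVC", 0.71),
    ("clf_b", "SGDClassifier", 0.68),
    ("clf_c", "MultinomialNB", 0.74),
]

# nlargest(1, ...) returns a one-element list; [0] unwraps the winning tuple
demo_best = heapq.nlargest(1, demo_results, key=lambda r: r[2])[0]

# For a single winner, max() with the same key gives the same tuple
demo_best_via_max = max(demo_results, key=lambda r: r[2])
print(demo_best[1], demo_best[2])
```

# nlargest is mainly useful when more than one model is needed, as in
# benchmark_stats, which keeps the top five.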

# +

print('our spanish dataset...')

ds = pd.read_csv('../dataset/wiki/opinions_annotated.csv')

ds = ds[ds.lang=='es']

print('stance classification')

ds_train = get_sample(ds, 'stance', 50)

X = ds_train[['text', 'type']]

y = ds_train.stance

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.5, random_state=42)

#vectorizer = TfidfVectorizer(sublinear_tf=True, max_df=0.5,stop_words='english')

vectorizer = TfidfVectorizer()

results = benchmark_models(X_train.text, X_test.text, y_train, y_test, vectorizer, '../results/opinions_stance_score_es.eps')

fscores, fmscores = benchmark_stats(X_test, y_test, results)

#choose the best model

#model = build_model(X,y)

model = heapq.nlargest(1, results, key=lambda x: x[2])[0][0]

print('best model: ' + str(model))

#test_sample(model)

X = vectorizer.transform(ds.text.values)

#X = ds.text

predicted = model.predict(X)

ds['stance_pred'] = predicted

fscores.to_csv('../results/opinions_fscores_stance_es.csv', index=False)

fmscores.to_csv('../results/opinions_fmscores_stance_es.csv', index=False)

print('sentiment classification')

ds_train = get_sample(ds, 'sentiment', 4)

X = ds_train[['text', 'type']]

y = ds_train.sentiment

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.5, random_state=42)

#vectorizer = TfidfVectorizer(sublinear_tf=True, max_df=0.5,stop_words='english')

vectorizer = TfidfVectorizer()

results = benchmark_models(X_train.text, X_test.text, y_train, y_test, vectorizer, '../results/opinions_sentiment_score_es.eps')

fscores, fmscores = benchmark_stats(X_test, y_test, results)

#choose the best model

#model = build_model(X,y)

model = heapq.nlargest(1, results, key=lambda x: x[2])[0][0]

print('best model: ' + str(model))

#test_sample(model)

X = vectorizer.transform(ds.text.values)

#X = ds.text

predicted = model.predict(X)

ds['sentiment_pred'] = predicted

fscores.to_csv('../results/opinions_fscores_sent_es.csv', index=False)

fmscores.to_csv('../results/opinions_fmscores_sent_es.csv', index=False)

ds.to_csv('../dataset/wiki/opinions_predicted_es.csv', index=False)

# +

print('aawd dataset...')

ds = pd.read_csv('../dataset/wiki/aawd_preprocessed.csv')

#ds = ds[ds.lang=='en']

ds_train = get_sample(ds, 'stance', 300)

X = ds_train[['text', 'type']]

print('stance classification')

y = ds_train.stance

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.5, random_state=42)

#vectorizer = TfidfVectorizer(sublinear_tf=True, max_df=0.5,stop_words='english')

vectorizer = TfidfVectorizer()

results = benchmark_models(X_train.text, X_test.text, y_train, y_test, vectorizer, '../results/aawd_stance_score.eps')

fscores, fmscores = benchmark_stats(X_test, y_test, results)

#choose the best model

model = build_model(X=ds_train.text,y = ds_train.stance)

#model = heapq.nlargest(1, results, key=lambda x: x[2])

test_sample(model)

#X = vectorizer.transform(ds.text.values)

X = ds.text

predicted = model.predict(X)

ds['stance_pred'] = predicted

ds.to_csv('../dataset/wiki/aawd_predicted.csv', index=False)

fscores.to_csv('../results/aawd_fscores_stance.csv', index=False)

fmscores.to_csv('../results/aawd_fmscores_stance.csv', index=False)

| books/3model .ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: PySpark

# language: python

# name: pyspark

# ---

spark.version

# + id="6hKq-n7G62-2"

from pyspark.sql import SparkSession

import matplotlib.pyplot as plt

# %matplotlib inline

import numpy as np

# + [markdown] id="V5_jvhfhtFCj"

# 1. Data loading

# -

spark = SparkSession.builder.appName("mvp-prediction").getOrCreate()

print('spark session created')

data_path = 'gs://6893-data/player_stats_2020_2021.csv'

data = spark.read.format("csv").option("header", "true").option("inferschema", "true").load(data_path)

data.show(3)

data.printSchema()

# + [markdown] id="CeRTQAUE6VfO"

# 2. Data preprocessing

# + id="3TKctNhO6bHG"

from pyspark.ml import Pipeline

from pyspark.ml.feature import OneHotEncoder, StringIndexer, VectorAssembler

# + id="_83QyptU_nDE"

#stages in our Pipeline

stages = []

# + id="BB4TOB6MBCJ3"

# Transform all features into a vector using VectorAssembler

numericCols = ["reb", "ast", "stl", "blk", "tov", "pts"]

assemblerInputs = numericCols

assembler = VectorAssembler(inputCols=assemblerInputs, outputCol="features")

stages += [assembler]

# + id="Ab0WDG00Bqc0"

pipeline = Pipeline(stages=stages)

pipelineModel = pipeline.fit(data)

preppedDataDF = pipelineModel.transform(data)

# + id="-x6nXJUiByOE"

preppedDataDF.take(3)

# + id="NPONX19OB2Tu"

# Keep relevant columns

cols = data.columns

selectedcols = ["features"] + cols

dataset = preppedDataDF.select(selectedcols)

display(dataset)

# + id="ZYB1oCw4CJuc"

### Randomly split the data into training and test sets; set a seed for reproducibility

#=====your code here==========

trainingData, testData = dataset.randomSplit([.70, .30], seed=100)

#===============================

print(trainingData.count())

print(testData.count())

# + [markdown] id="STxwMITSBLEH"

# 3. Modeling

# -

from pyspark.ml.classification import LogisticRegression, RandomForestClassifier

from pyspark.ml.evaluation import MulticlassClassificationEvaluator

# + id="2mej0dQPC22x"

# Fit model to prepped data

#LogisticRegression model, maxIter=10

#=====your code here==========

lrModel = LogisticRegression(featuresCol="features", labelCol="label", maxIter=10).fit(trainingData)

#===============================

# select example rows to display.

predictions = lrModel.transform(testData)

predictions.show()

# compute accuracy on the test set

evaluator = MulticlassClassificationEvaluator(labelCol="label", predictionCol="prediction", metricName="accuracy")

accuracy = evaluator.evaluate(predictions)

print("Test set accuracy = " + str(accuracy))

acc_hist = []

acc_hist.append(accuracy)

# + id="7AwIbeIwbpsY"

from pyspark.ml.classification import RandomForestClassifier

#Random Forest

#=====your code here==========

rfModel = RandomForestClassifier(featuresCol="features", labelCol="label").fit(trainingData)

#===============================

# select example rows to display.

predictions = rfModel.transform(testData)

predictions.show()

# compute accuracy on the test set

# evaluator = MulticlassClassificationEvaluator(labelCol="label", predictionCol="prediction", metricName="accuracy")

accuracy = evaluator.evaluate(predictions)

print("Test set accuracy = " + str(accuracy))

acc_hist.append(accuracy)

# + id="PHc1qAd6Skf1"

#NaiveBayes

#=====your code here==========

from pyspark.ml.classification import NaiveBayes

nbModel = NaiveBayes(featuresCol="features", labelCol="label").fit(trainingData)

#===============================

# select example rows to display.

predictions = nbModel.transform(testData)

predictions.show()

# compute accuracy on the test set

evaluator = MulticlassClassificationEvaluator(labelCol="label", predictionCol="prediction", metricName="accuracy")

accuracy = evaluator.evaluate(predictions)

print("Test set accuracy = " + str(accuracy))

acc_hist.append(accuracy)

# + id="PBbr8btnbyV3"

#Decision Tree

#=====your code here==========

from pyspark.ml.classification import DecisionTreeClassifier

dtModel = DecisionTreeClassifier(featuresCol="features", labelCol="label").fit(trainingData)

#===============================

# select example rows to display.

predictions = dtModel.transform(testData)

predictions.show()

# compute accuracy on the test set

evaluator = MulticlassClassificationEvaluator(labelCol="label", predictionCol="prediction", metricName="accuracy")

accuracy = evaluator.evaluate(predictions)

print("Test set accuracy = " + str(accuracy))

acc_hist.append(accuracy)

# + id="4nccBiy_b8KT"

#Gradient Boosting Trees

#=====your code here==========

from pyspark.ml.classification import GBTClassifier

gbtModel = GBTClassifier(featuresCol="features", labelCol="label").fit(trainingData)

#===============================

# select example rows to display.

predictions = gbtModel.transform(testData)

predictions.show()

# compute accuracy on the test set

evaluator = MulticlassClassificationEvaluator(labelCol="label", predictionCol="prediction", metricName="accuracy")

accuracy = evaluator.evaluate(predictions)

print("Test set accuracy = " + str(accuracy))

acc_hist.append(accuracy)

# + id="O9sNFLH0b_LH"

# Multi-layer Perceptron

#=====your code here==========

from pyspark.ml.classification import MultilayerPerceptronClassifier

mlpModel = MultilayerPerceptronClassifier(layers=[6, 5, 5, 2], seed=123, featuresCol="features", labelCol="label").fit(trainingData)

#===============================

# select example rows to display.

predictions = mlpModel.transform(testData)

predictions.show()

# compute accuracy on the test set

evaluator = MulticlassClassificationEvaluator(labelCol="label", predictionCol="prediction", metricName="accuracy")

accuracy = evaluator.evaluate(predictions)

print("Test set accuracy = " + str(accuracy))

acc_hist.append(accuracy)

# + id="AG_EmZcfcCIU"

# Linear Support Vector Machine

#=====your code here==========

from pyspark.ml.classification import LinearSVC

svmModel = LinearSVC(maxIter=10, regParam=0.1).fit(trainingData)

#===============================

# select example rows to display.

predictions = svmModel.transform(testData)

predictions.show()

# compute accuracy on the test set

evaluator = MulticlassClassificationEvaluator(labelCol="label", predictionCol="prediction", metricName="accuracy")

accuracy = evaluator.evaluate(predictions)

print("Test set accuracy = " + str(accuracy))

acc_hist.append(accuracy)

# + id="IvJc9VXrcGFU"

# One-vs-Rest

#=====your code here==========

from pyspark.ml.classification import LogisticRegression, OneVsRest

lr = LogisticRegression(maxIter=10, tol=1E-6, fitIntercept=True)

ovrModel = OneVsRest(classifier=lr).fit(trainingData)

#===============================

# select example rows to display.

predictions = ovrModel.transform(testData)

predictions.show()

# compute accuracy on the test set

evaluator = MulticlassClassificationEvaluator(labelCol="label", predictionCol="prediction", metricName="accuracy")

accuracy = evaluator.evaluate(predictions)

print("Test set accuracy = " + str(accuracy))

acc_hist.append(accuracy)

# -

print(len(acc_hist), acc_hist)

models = ['lr', 'rf', 'nb', 'dt', 'GBT', 'MLP', 'LSVM', 'ovr']

results = dict(zip(models, acc_hist))

results = sorted(results.items(), key=lambda x: x[1])

print(results)

# Unpack the sorted (name, accuracy) pairs into two parallel lists

names = [name for name, _ in results]

accs = [acc for _, acc in results]

print(accs, names)
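
# The ranking step above (zip names with accuracies, sort by accuracy, split
# back into two sequences) can be sketched in plain Python. The accuracy values
# below are illustrative placeholders, not results from this notebook, and the
# `demo_` names are hypothetical.

```python
# Placeholder accuracies keyed by model name
demo_acc = {"lr": 0.91, "rf": 0.88, "nb": 0.80}

# Sort (name, accuracy) pairs in ascending order of accuracy
demo_ranked = sorted(demo_acc.items(), key=lambda item: item[1])

# zip(*pairs) transposes the list of pairs into two parallel tuples
demo_names, demo_accs = zip(*demo_ranked)
print(demo_names, demo_accs)
```

# Because the pairs are sorted before unpacking, the bar labels and bar heights
# stay aligned when they are passed to the plot.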

# + [markdown] id="_WIIE8pEDSR9"

# 4. Comparison and analysis

# + id="LpoclCFXD7tV"

# Rank models according to Test set accuracy

#=====your code here==========

fig = plt.figure()

ax = fig.add_axes([0,0,1,1])

ax.bar(range(len(results)), accs, align='center', tick_label=names, width=0.7)

#plt.xticks(range(len(results)), list(results.keys()))

plt.show()

#===============================

# + [markdown] id="HL3j030aa7M8"

# *your analysis*

# <br>

# The models are listed in ascending order of test accuracy:

# <br>

# MLP < Naive Bayes < Linear SVM < Random Forest < Decision Tree < One-vs-Rest < Logistic Regression < GBT

| algorithm/project_algor.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

## OPERATION

# -

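class Operation():

    def __init__(self, input_nodes=None):
        # Use None instead of a shared mutable default argument
        self.input_nodes = input_nodes if input_nodes is not None else []
        self.output_nodes = []

        # Register this operation as a consumer of each of its inputs
        for node in self.input_nodes:
            node.output_nodes.append(self)

        # Add the operation to the currently active default graph
        _default_graph.operations.append(self)

    def compute(self):
        # Overridden by each concrete operation
        pass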

class add(Operation):

def __init__(self,x,y):

super().__init__([x,y])

def compute(self,x_var,y_var):

self.inputs = [x_var,y_var]

return x_var + y_var

class multiply(Operation):

def __init__(self,x,y):

super().__init__([x,y])

def compute(self,x_var,y_var):

self.inputs = [x_var,y_var]

return x_var * y_var

class matmul(Operation):

def __init__(self,x,y):

super().__init__([x,y])

def compute(self,x_var,y_var):

self.inputs = [x_var,y_var]

return x_var.dot(y_var)

class Placeholder():

def __init__(self):

self.output_nodes = []

_default_graph.placeholders.append(self)

class Variable():

    def __init__(self, initial_value=None):
        self.value = initial_value
        self.output_nodes = []

        # Register the variable with the currently active default graph
        _default_graph.variables.append(self)

class Graph():

def __init__(self):

self.operations = []

self.placeholders = []

self.variables = []

def set_as_default(self):

global _default_graph

_default_graph = self

# +

# Target expression: z = A*x + b

# With A = 10 and b = 1 this becomes:

# z = 10*x + 1

# -

g = Graph()

g.set_as_default()

A = Variable(10)

b = Variable(1)

x = Placeholder()

y = multiply(A,x)

z = add(y,b)

def traverse_postorder(operation):

"""

PostOrder Traversal of Nodes. Basically makes sure computations are done in

the correct order (Ax first , then Ax + b). Feel free to copy and paste this code.

It is not super important for understanding the basic fundamentals of deep learning.

"""

nodes_postorder = []

def recurse(node):

if isinstance(node, Operation):

for input_node in node.input_nodes:

recurse(input_node)

nodes_postorder.append(node)

recurse(operation)

return nodes_postorder
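
# The post-order idea can be seen on its own with a stripped-down node type:
# every input is visited before the node that consumes it, so A*x is evaluated
# before A*x + b. This is a minimal standalone sketch; `DemoNode`,
# `demo_postorder` and the `demo_` variables are hypothetical names, separate
# from the Operation/Variable/Placeholder classes above.

```python
class DemoNode:
    """A graph node that only knows its name and its input nodes."""

    def __init__(self, name, inputs=()):
        self.name = name
        self.inputs = list(inputs)


def demo_postorder(node):
    """Return node names so that every input precedes its consumer."""
    order = []

    def recurse(current):
        for child in current.inputs:
            recurse(child)
        order.append(current.name)

    recurse(node)
    return order


# Build z = (A * x) + b
demo_A = DemoNode("A")
demo_x = DemoNode("x")
demo_b = DemoNode("b")
demo_y = DemoNode("y=A*x", [demo_A, demo_x])
demo_z = DemoNode("z=y+b", [demo_y, demo_b])

demo_order = demo_postorder(demo_z)
print(demo_order)
```

# Leaves come first and the requested node comes last, which is the order a
# session needs to fill in outputs one node at a time.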

# +

import numpy as np

class Session:

    def run(self, operation, feed_dict=None):
        """
        operation: The operation to compute
        feed_dict: Dictionary mapping placeholders to input values (the data)
        """
        # Use None instead of a shared mutable default argument
        feed_dict = feed_dict if feed_dict is not None else {}

        # Puts nodes in correct order
        nodes_postorder = traverse_postorder(operation)

        for node in nodes_postorder:

            if isinstance(node, Placeholder):
                node.output = feed_dict[node]

            elif isinstance(node, Variable):
                node.output = node.value

            else:  # Operation
                node.inputs = [input_node.output for input_node in node.input_nodes]
                node.output = node.compute(*node.inputs)

            # Convert lists to numpy arrays
            if isinstance(node.output, list):
                node.output = np.array(node.output)

        # Return the requested node value
        return operation.output

# -

sess = Session()

result = sess.run(operation=z,feed_dict={x:10})

result

| FBTA/ANN/Perceptron.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

from keras.datasets import mnist

import matplotlib.pyplot as plt

(X_train, y_train), (X_test, y_test) = mnist.load_data()

X_test.shape

y_test.shape

plt.imshow(X_test[90], cmap="gray")

y_test[90]

X = X_test.reshape(-1, 28*28)

y = y_test

X.shape

# +

# Step 1 - Preprocessing

# -

from sklearn.preprocessing import StandardScaler

sc = StandardScaler()

X_ = sc.fit_transform(X)

X_.shape

plt.imshow(X_[90].reshape(28,28) , cmap="gray")

# ## Sklearn PCA

from sklearn.decomposition import PCA

pca = PCA(n_components=2)

Z_pca = pca.fit_transform(X_)

Z_pca.shape

Z_pca

pca.explained_variance_

# ## Custom PCA

import numpy as np

# +

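# Step 2 - Compute the covariance matrix

# -

# X_ is already standardized (zero mean), so the sample covariance is X_.T @ X_ / (n - 1)
covar = np.dot(X_.T, X_) / (X_.shape[0] - 1)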

covar.shape

# +

# Step 3 - Compute the eigenvectors using SVD

# -

from numpy.linalg import svd

U, S, Vh = svd(covar)  # numpy returns the third factor already transposed

U.shape

Ured = U[:, :2]

Ured.shape

# +

# Step 4 - Project the data onto the new axes (principal components)

# -

Z = np.dot(X_, Ured)

Z.shape

Z
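
# A quick way to sanity-check the custom PCA steps is to run them on small
# synthetic data: for centered data, the variance of the projection onto each
# leading eigenvector of the sample covariance should equal the corresponding
# singular value of that covariance matrix. This is a minimal sketch on random
# data, assuming only NumPy; all `demo_` names are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)

# Correlated synthetic data: 200 samples, 5 features, then centered
demo_X = rng.normal(size=(200, 5)) @ rng.normal(size=(5, 5))
demo_X = demo_X - demo_X.mean(axis=0)

# Sample covariance matrix (features x features)
demo_cov = demo_X.T @ demo_X / (demo_X.shape[0] - 1)

# For a symmetric positive semi-definite matrix, the SVD gives the
# eigenvectors (columns of U) and eigenvalues (S), sorted in descending order
demo_U, demo_S, _ = np.linalg.svd(demo_cov)

# Project onto the first two principal directions
demo_Z = demo_X @ demo_U[:, :2]

# Variance along each projected axis should match the top two eigenvalues
demo_var = demo_Z.var(axis=0, ddof=1)
print(np.allclose(demo_var, demo_S[:2]))
```

# Signs of individual components are arbitrary, so projections from different
# PCA implementations can differ by a sign flip per axis while the variances agree.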

# ## Visualize Dataset

import pandas as pd

new_dataset = np.hstack((Z, y.reshape(-1,1)))

dataframe = pd.DataFrame(new_dataset , columns=["PC1", "PC2", "label"])

dataframe.head()

import seaborn as sns

plt.figure(figsize=(15,15))

fg = sns.FacetGrid(dataframe, hue="label", height=10)

fg.map(plt.scatter, "PC1", "PC2")

fg.add_legend()

plt.show()

# # PCA with all 784 components

pca = PCA()

Z_pca= pca.fit_transform(X_)

Z_pca.shape

cum_var_explained = np.cumsum(pca.explained_variance_ratio_)

cum_var_explained

plt.figure(figsize=(8,6))

plt.plot(cum_var_explained)

plt.grid()

plt.xlabel("n_components")

plt.ylabel("Cummulative explained variance")

plt.show()
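
# The cumulative curve above is typically read to choose how many components to
# keep for a target share of variance. A minimal sketch of that lookup follows,
# using placeholder ratios rather than the MNIST values; `demo_ratios`,
# `demo_target` and `demo_n_components` are hypothetical names.

```python
from bisect import bisect_left
from itertools import accumulate

# Placeholder explained-variance ratios, sorted in descending order
demo_ratios = [0.6, 0.25, 0.1, 0.05]
demo_target = 0.9

# Running total of explained variance after 1, 2, 3, ... components
demo_cumulative = list(accumulate(demo_ratios))

# Index of the first running total that reaches the target, plus one
demo_n_components = bisect_left(demo_cumulative, demo_target) + 1
print(demo_n_components)
```

# With NumPy arrays the same lookup can be written with np.searchsorted on the
# cumulative sum.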

| ml_repo/PCA/PCA.ipynb |

# -*- coding: utf-8 -*-

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .jl

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Julia O3 1.6.0

# language: julia

# name: julia-o3-1.6

# ---

using Rocket

using ReactiveMP

using GraphPPL

using Distributions

using Plots

@model function kalman_filter()

x_t_min_mean = datavar(Float64)

x_t_min_var = datavar(Float64)

x_t_min ~ NormalMeanVariance(x_t_min_mean, x_t_min_var)

x_t ~ NormalMeanVariance(x_t_min, 1.0) + 1.0

γ_shape = datavar(Float64)

γ_rate = datavar(Float64)

γ ~ GammaShapeRate(γ_shape, γ_rate)

y = datavar(Float64)

y ~ NormalMeanPrecision(x_t, γ) where { q = MeanField() }

return x_t_min_mean, x_t_min_var, x_t_min, x_t, γ_shape, γ_rate, γ, y

end

function start_inference(data_stream)

model, (x_t_min_mean, x_t_min_var, x_t_min, x_t, γ_shape, γ_rate, γ, y) = kalman_filter()

x_t_min_prior = NormalMeanVariance(0.0, 1e7)

γ_prior = GammaShapeRate(0.001, 0.001)

x_t_stream = Subject(Marginal)

γ_stream = Subject(Marginal)

x_t_subscribtion = subscribe!(getmarginal(x_t, IncludeAll()), (x_t_posterior) -> begin

next!(x_t_stream, x_t_posterior)

update!(x_t_min_mean, mean(x_t_posterior))

update!(x_t_min_var, var(x_t_posterior))

end)

γ_subscription = subscribe!(getmarginal(γ, IncludeAll()), (γ_posterior) -> begin

next!(γ_stream, γ_posterior)

update!(γ_shape, shape(γ_posterior))

update!(γ_rate, rate(γ_posterior))

end)

setmarginal!(x_t, x_t_min_prior)

setmarginal!(γ, γ_prior)

data_subscription = subscribe!(data_stream, (d) -> update!(y, d))

return x_t_stream, γ_stream, () -> begin

unsubscribe!(x_t_subscribtion)

unsubscribe!(γ_subscription)

unsubscribe!(data_subscription)

end

end

# +

mutable struct DataGenerationProcess

previous :: Float64

process_noise :: Float64

observation_noise :: Float64

history :: Vector{Float64}

observations :: Vector{Float64}

end

function getnext!(process::DataGenerationProcess)

next = process.previous

process.previous = rand(Normal(process.previous, process.process_noise)) + 1.0

observation = next + rand(Normal(0.0, process.observation_noise))

push!(process.history, next)

push!(process.observations, observation)

return observation

end

function gethistory(process::DataGenerationProcess)

return process.history

end

function getobservations(process::DataGenerationProcess)

return process.observations

end

# +

n = 100

process = DataGenerationProcess(0.0, 1.0, 1.0, Float64[], Float64[])

stream = timer(100, 100) |> map_to(process) |> map(Float64, getnext!) |> take(n)

x_t_stream, γ_stream, stop_cb = start_inference(stream);

plot_callback = (posteriors) -> begin

IJulia.clear_output(true)

p = plot(mean.(posteriors), ribbon = std.(posteriors), label = "Estimation")

p = plot!(gethistory(process), label = "Real states")

p = scatter!(getobservations(process), ms = 2, label = "Observations")

p = plot(p, size = (1000, 400), legend = :bottomright)

display(p)

end

sub = subscribe!(x_t_stream |> scan(Vector{Marginal}, vcat, Marginal[]) |> map(Vector{Marginal}, reverse), lambda(

on_next = plot_callback,

on_error = (e) -> println(e)

))

# -

stop_cb()

IJulia.clear_output(false);

| demo/Infinite Data Stream.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + id="H3ZPVmgmMGHg" colab_type="code" colab={}

# Import libraries

# %matplotlib inline

import matplotlib.pyplot as plt

import numpy as np

from sklearn.tree import DecisionTreeRegressor

from sklearn.datasets import load_boston

# + id="lrY8TMglUGtE" colab_type="code" outputId="fdd9257c-2772-4ff0-950a-949d9367fe2e" executionInfo={"status": "ok", "timestamp": 1566453518531, "user_tz": -540, "elapsed": 1133, "user": {"displayName": "\u6bdb\u5229\u62d3\u4e5f", "photoUrl": "", "userId": "17854120745961292401"}} colab={"base_uri": "https://localhost:8080/", "height": 102}

# Load the Boston house-price dataset (load_boston was removed in scikit-learn 1.2, so this needs an older version)

boston = load_boston()

# Select the percentage of lower-status population (LSTAT) as the feature and keep the first 100 rows

X = boston.data[:100,[12]]

# Use the house price (MEDV) as the target and keep the first 100 rows

y = boston.target[:100]

# Create the decision tree regression model

model = DecisionTreeRegressor(criterion='mse', max_depth=3, random_state=0)

# Train the model

model.fit(X, y)

# + id="BTOo3xzfaD0C" colab_type="code" outputId="0f7389d8-7bcb-462e-d237-91fce0c1f577" executionInfo={"status": "ok", "timestamp": 1566453520064, "user_tz": -540, "elapsed": 894, "user": {"displayName": "\u6bdb\u5229\u62d3\u4e5f", "photoUrl": "", "userId": "17854120745961292401"}} colab={"base_uri": "https://localhost:8080/", "height": 295}

plt.figure(figsize=(8,4))  # set the figure size

# Build X_plt from the training minimum to the maximum in steps of 0.01 and predict house prices

X_plt = np.arange(X.min(), X.max(), 0.01)[:, np.newaxis]

y_pred = model.predict(X_plt)

# Scatter plot of the training data (LSTAT vs. house price) with the decision tree regression curve

plt.scatter(X, y, color='blue', label='data')

plt.plot(X_plt, y_pred, color='red',label='Decision tree')

plt.ylabel('Price in $1000s [MEDV]')

plt.xlabel('lower status of the population [LSTAT]')

plt.title('Boston house-prices')

plt.legend(loc='upper right')

plt.show()

# + id="MQOcQC5Y9U37" colab_type="code" colab={}

| chapter3/section3_7_1.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# ## part 1 ##

testdata = '''2199943210
3987894921
9856789892
8767896789
9899965678'''.split('\n')

testdata

with open('day9.txt') as fp:
    puzzledata = fp.read().split('\n')[:-1]

puzzledata[-1]

def heightmap(data):
    """Parse rows of digits into a {(row, col): height} dictionary."""
    hmap = {}
    nrows = len(data)
    ncols = len(data[0])
    for i, row in enumerate(data):
        for j, c in enumerate(row):
            height = int(c)
            hmap[(i, j)] = height
    return nrows, ncols, hmap

def isnbor(pos, nrows, ncols):
    """Return True if pos lies inside the grid."""
    r, c = pos
    return (0 <= r < nrows) and (0 <= c < ncols)

# +

def islow(pos, hmap, nrows, ncols):
    """Return True if every in-grid neighbour of pos is strictly higher."""
    h = hmap[pos]
    row, col = pos
    nbors = [(r, c) for (r, c) in [(row-1, col), (row+1, col), (row, col-1), (row, col+1)]
             if isnbor((r, c), nrows, ncols)]
    return all(hmap[nb] > h for nb in nbors)

# -

def lowpts(hmap, nrows, ncols):
    return [pos for pos in hmap if islow(pos, hmap, nrows, ncols)]

def totrisk(pts, hmap):
    return sum(hmap[pt]+1 for pt in pts)

testrows, testcols, testhmap = heightmap(testdata)

totrisk(lowpts(testhmap, testrows, testcols), testhmap)

puzzlerows, puzzlecols, puzzlehmap = heightmap(puzzledata)

totrisk(lowpts(puzzlehmap, puzzlerows, puzzlecols), puzzlehmap)

# ## part 2 ##

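def walk(pos, hmap, basin):
    """Recursively collect strictly higher neighbours of pos, stopping at height 9."""
    r, c = pos
    h = hmap[pos]
    nbors = [(r-1, c), (r+1, c), (r, c-1), (r, c+1)]
    for nb in nbors:
        # Skip positions outside the grid or already in the basin
        if (nb not in hmap) or (nb in basin):
            continue
        nbh = hmap[nb]
        # Height 9 marks a basin boundary
        if nbh == 9:
            continue
        # Only walk uphill from the current position
        if (h < nbh):
            basin.append(nb)
            walk(nb, hmap, basin)
    return basin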

def getbasin(pt, hmap):
    return walk(pt, hmap, [pt])

import math

def solve(hmap, nrows, ncols):
    """Multiply together the sizes of the three largest basins."""
    basin_sizes = []
    for pt in lowpts(hmap, nrows, ncols):
        basin = getbasin(pt, hmap)
        basin_sizes.append(len(basin))
    return math.prod(sorted(basin_sizes)[-3:])

solve(testhmap, testrows, testcols)

solve(puzzlehmap, puzzlerows, puzzlecols)
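
# The recursive `walk` above can also be written as an iterative flood fill.
# The block below is a sketch of that alternative, not the notebook's own
# method: it uses a queue instead of recursion, tracks visited cells in a set,
# and treats height 9 as the only boundary (it does not compare heights as
# `walk` does). The names `parse`, `neighbours`, `low_points` and `basin_size`
# are hypothetical; the grid is the sample from part 1.

```python
from collections import deque
from math import prod

SAMPLE = ["2199943210", "3987894921", "9856789892", "8767896789", "9899965678"]


def parse(rows):
    """Map (row, col) to the integer height at that cell."""
    return {(i, j): int(ch) for i, row in enumerate(rows) for j, ch in enumerate(row)}


def neighbours(pos, grid):
    """Return the orthogonal neighbours of pos that exist in the grid."""
    r, c = pos
    return [nb for nb in ((r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1)) if nb in grid]


def low_points(grid):
    """Cells that are strictly lower than all of their neighbours."""
    return [p for p in grid if all(grid[nb] > grid[p] for nb in neighbours(p, grid))]


def basin_size(start, grid):
    """Breadth-first flood fill from start, bounded by cells of height 9."""
    seen = {start}
    queue = deque([start])
    while queue:
        pos = queue.popleft()
        for nb in neighbours(pos, grid):
            if nb not in seen and grid[nb] != 9:
                seen.add(nb)
                queue.append(nb)
    return len(seen)


grid = parse(SAMPLE)
lows = low_points(grid)
risk = sum(grid[p] + 1 for p in lows)
sizes = sorted(basin_size(p, grid) for p in lows)
product = prod(sizes[-3:])
print(risk, product)
```

# Using a set for `seen` keeps the membership test constant-time, whereas
# `nb in basin` on a list grows with the basin size; the queue also avoids
# hitting Python's recursion limit on large basins.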

| day9.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3 (ipykernel)

# language: python

# name: python3

# ---

# ## Libs

# +

import os

import shap

import pickle

import warnings

import numpy as np

import pandas as pd

import xgboost as xgb

import lightgbm as lgbm

from tqdm.notebook import tqdm

import matplotlib.pyplot as plt

from utils.general import viz_performance

from sklearn.tree import DecisionTreeClassifier

from sklearn.linear_model import LogisticRegression

from sklearn.metrics import classification_report, confusion_matrix

from sklearn.ensemble import RandomForestClassifier, AdaBoostClassifier, ExtraTreesClassifier

from sklearn.model_selection import cross_validate, cross_val_score, KFold, train_test_split

warnings.filterwarnings('ignore')

# -

# ## Constants

SEED = 1

# ## Data Ingestion

DATA_RAW_PATH = os.path.join('..','data','raw')

MODEL_PATH = os.path.join('..', 'models')

DATA_RAW_NAME = ['abono.csv', 'aposentados.csv']

DATA_IMG_PATH = os.path.join('..', 'figures')

DATA_INTER_PATH = os.path.join('..','data','interim')

DATA_PROCESSED_PATH = os.path.join('..','data','processed')

DATA_INTER_NAME = 'interim.csv'

DATA_PROCESSED_NAME = 'processed.csv'

MODEL_NAME = 'model.pkl'

df = pd.read_csv(os.path.join(DATA_PROCESSED_PATH, DATA_PROCESSED_NAME), sep='\t')

df.head(3)

df.duplicated().sum()

# ## Modeling

df.RENDIMENTO_TOTAL.value_counts(normalize=True)

train = df.drop('RENDIMENTO_TOTAL', axis=1)

X_train, X_test, y_train, y_test = train_test_split(train, df.RENDIMENTO_TOTAL,
                                                    test_size=.3,
                                                    random_state=SEED,
                                                    stratify=df.RENDIMENTO_TOTAL)

# ### Baseline

reglog = LogisticRegression(
    class_weight='balanced',
    solver='saga',
    random_state=SEED
)

viz_performance(X_train, X_test, y_train, y_test, reglog, ['0', '1'], figsize=(14,14))

plt.savefig(os.path.join(DATA_IMG_PATH,'baseline-reglog-metrics.png'), format='png')

# ### Models

models = [
    ('DecisionTree', DecisionTreeClassifier(random_state=SEED)),
    ('RandomForest', RandomForestClassifier(random_state=SEED)),
    ('ExtraTree', ExtraTreesClassifier(random_state=SEED)),
    ('Adaboost', AdaBoostClassifier(random_state=SEED)),
    ('XGBoost', xgb.XGBClassifier(random_state=SEED, verbosity=0)),
    ('LightGBM', lgbm.LGBMClassifier(random_state=SEED))
]

# +

original = pd.DataFrame()

for name, model in tqdm(models):
    kfold = KFold(n_splits=5, random_state=SEED, shuffle=True)
    score = cross_validate(model, X_train, y_train, cv=kfold,
                           scoring=['precision_weighted', 'recall_weighted', 'f1_weighted'],
                           return_train_score=True)
    additional = pd.DataFrame({
        'precision_train': np.mean(score['train_precision_weighted']),
        'precision_test': np.mean(score['test_precision_weighted']),
        'recall_train': np.mean(score['train_recall_weighted']),
        'recall_test': np.mean(score['test_recall_weighted']),
        'f1_train': np.mean(score['train_f1_weighted']),
        'f1_test': np.mean(score['test_f1_weighted']),
    }, index=[name])
    new = pd.concat([original, additional], axis=0)
    original = new

original
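
# The `*_weighted` scorers used above average the per-class metric with each
# class weighted by its support (the number of true samples of that class).
# The block below is a minimal pure-Python sketch of that averaging, meant
# only to show the arithmetic; `weighted_scores` and the toy labels are
# hypothetical and are not part of the pipeline above.

```python
from collections import Counter


def weighted_scores(y_true, y_pred):
    """Return support-weighted (precision, recall, f1) over all true labels."""
    support = Counter(y_true)
    total = len(y_true)
    precision = recall = f1 = 0.0
    for label, count in support.items():
        # True positives and number of predictions for this label.
        tp = sum(1 for t, p in zip(y_true, y_pred) if t == label and p == label)
        predicted = sum(1 for p in y_pred if p == label)
        p_label = tp / predicted if predicted else 0.0
        r_label = tp / count
        denom = p_label + r_label
        f_label = 2 * p_label * r_label / denom if denom else 0.0
        # Weight each class by its share of the true labels.
        weight = count / total
        precision += weight * p_label
        recall += weight * r_label
        f1 += weight * f_label
    return precision, recall, f1


# Hypothetical toy labels: three samples of class 0, one of class 1.
toy_true = [0, 0, 0, 1]
toy_pred = [0, 0, 1, 1]
w_precision, w_recall, w_f1 = weighted_scores(toy_true, toy_pred)
print(w_precision, w_recall, w_f1)
```

# Because the weights follow the class frequencies, the majority class
# dominates these scores on imbalanced targets, which is worth keeping in
# mind when comparing the models in the table.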

# +

results = []

names = []

for name, model in tqdm(models):