code stringlengths 38 801k | repo_path stringlengths 6 263 |

|---|---|

# -*- coding: utf-8 -*-

# +

using DelimitedFiles

A = readdlm("sketches/ww15mgh.grd", skipstart = 1)

A1 = permutedims(A); #transpose into column major order

a1 = vec(A1); #concatenate into a vector to remove unwanted string elements

a1_clean = filter(x -> !(x isa AbstractString), a1);

a_f32 = convert(Array{Float32, 1}, a1_clean) #cast to Float32

#we know that each sequence of 1441 elements corresponds to a constant-latitude

#set of values. after reshaping, each column is a constant-latitude set of

#values running over lon [-pi, pi]

A_F32 = reshape(a_f32, 1441, :);

A_F32 = permutedims(A_F32); #transpose so that each row corresponds to a latitude value

#in the original text file latitude decreases from π/2 to -π/2, we need to flip

#it!

A_final = reverse(A_F32, dims = 1);

#write as big endian

open("ww15mgh_be.bin", "w") do f #opens the specified file, returns it as f, and applies to it the function in the block

write(f, hton.(A_final))

end

#write as little endian

open("ww15mgh_le.bin", "w") do f #opens the specified file, returns it as f, and applies to it the function in the block

write(f, htol.(A_final))

end

#read from big endian

A_tmp = Matrix{Float32}(undef, 721, 1441)

open("ww15mgh_be.bin", "r") do f

read!(f, A_tmp)

end

A_retrieved = ntoh.(A_tmp)

@assert all(A_retrieved[end,:] .== Float32(13.606))

@assert all(A_retrieved[1,:] .== Float32(-29.534))

A_tmp = Matrix{Float32}(undef, 721, 1441)

open("ww15mgh_le.bin", "r") do f

read!(f, A_tmp)

end

A_retrieved = ltoh.(A_tmp)

@assert all(A_retrieved[end,:] .== Float32(13.606))

@assert all(A_retrieved[1,:] .== Float32(-29.534))

# -

B = []

| sketches/geoid96.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3 (ipykernel)

# language: python

# name: python3

# ---

# # OpenAlex

# ## Getting Started with the APS dataset

# ### <NAME> (<EMAIL>)

# ### Mar 1, 2022

# This notebook is a getting started guide for getting familiar with the OpenAlex databases.

# The OpenAlex database has over 200 million papers and ~2 billion citations.

#

# To keep things manageable, we will focus on a smaller snapshot of the dataset which only covers the papers published by the APS.

# + tags=[]

# load libraries

import pandas as pd

from pathlib import Path

import igraph as ig

import seaborn as sns

import matplotlib.pyplot as plt

from tqdm.auto import tqdm

import networkx as nx

import numpy as np

tqdm.pandas()

plt.rcParams.update({'font.size': 22})

sns.set(style="ticks", context="talk")

plt.style.use("dark_background")

# + tags=[]

# set paths

basepath: Path = Path('/N/project/openalex')

aps_csv_path = basepath / 'APS' / 'csvs' # directory containing CSVs

aps_parq_path = basepath / 'APS' / 'parquets' # directory containing parquet files

# + tags=[]

# some helper functions

def read_parquet(name, **args):

path = aps_parq_path / name

df = pd.read_parquet(path, engine='pyarrow')

df.reset_index(inplace=True)

if '__null_dask_index__' in df.columns:

df.drop(['__null_dask_index__'], axis=1, inplace=True)

if 'index' in df.columns:

df.drop(['index'], axis=1, inplace=True)

df.drop_duplicates(inplace=True)

if 'index_col' in args:

df.set_index(args['index_col'], inplace=True)

print(f'Read {len(df):,} rows from {path.stem!r}')

return df

def read_csv(name, **args):

path = aps_csv_path / f'{name}.csv.gz'

df = pd.read_csv(path, **args)

df.drop_duplicates(inplace=True)

print(f'Read {len(df):,} rows from {path.stem!r}')

return df

def to_parquet(df, path, **args):

print(f'Writing {len(df):,} rows to {path.stem!r}')

df.to_parquet(path, engine='pyarrow', index=False, **args)

return

# -

# ## Dataframes

# #### Notes:

# 1. Every table exists in two formats: CSV and Parquet. CSVs are good for humans while Parquets are more efficient. You may use either format.

#

# 2. Each work, venue, author, institution, and concept are assigned **unique** IDs.

#

# 4. The following relationship between the objects are defined.

# * `works_authorships`: relates works and authors (with institution info). Note: a (work_id, author_id) does not uniquely identify a row because an author may have multiple affiliations on a single paper.

#

# * `aps_referenced_aps`: relates works `w_1` and `w_2` if `w_1` cites `w_2`. Important: both `w_1` and `w_2` are published in the APS.

#

# * `works_concepts`: relates works with concepts. Note: a work may have multiple concepts.

#

# * `concepts_ancestors`: relates a pair of concepts `c_1` and `c_2` if `c_2` is an ancestor of `c_1` in the hierarchy of concepts

#

# * `concepts_related_concepts`: relates a pair of concepts `c_1` and `c_2` if `c_1` is related to `c_2`

# + tags=[]

aps_works_df = read_parquet('works') # read the works db

## or equivalently aps_works_df = read_csv('works')

aps_works_df.head(3)

# + tags=[]

concepts_df = read_parquet('concepts')

concepts_df.head()

# + tags=[]

aps_authors_df = read_parquet('works_authorships')

aps_authors_df.head(3)

# + tags=[]

aps_refs_df = read_parquet('aps_referenced_aps')

aps_refs_df.head(3)

# + tags=[]

works_concepts_df = read_parquet('works_concepts')

works_concepts_df.head(3)

# + tags=[]

concepts_ancestors_df = read_parquet('concepts_ancestors')

concepts_ancestors_df

# + [markdown] jp-MarkdownHeadingCollapsed=true tags=[]

# ## Making graphs using iGraph

#

# We have a few options on what graphs we want to construct. To keep things simple, we'll construct a citation graph using `igraph`.

# `aps_refs_df` is essentially an edge list.

#

# Let's create a node dataframe which would have necessary node info.

# -

aps_works_df

# + tags=[]

nodes_df = aps_works_df[['work_id', 'venue_id', 'venue_name', 'title', 'publication_year', 'abstract']] # change this according to your needs

# + tags=[]

nodes_df

# + tags=[]

cite_g = ig.Graph.DataFrame(edges=aps_refs_df, vertices=nodes_df, directed=True)

print(cite_g.summary())

# -

# We have a graph!

#

# Node attributes can be accessed using the `vertex_property` dictionary. Refer to [this link](https://igraph.org/python/tutorial/latest/tutorial.html#querying-vertices-and-edges-based-on-attributes) for more info.

# + tags=[]

print(cite_g.vs.attribute_names()) # name has the work_ids

# + tags=[]

example_work_id = 'https://openalex.org/W3086477784'

print('Node IDs:', cite_g.neighbors(example_work_id, mode='out')) # list the outgoing neighbors of this node

# to get names of the nodes, use the cite_g.vs

print('Node names:', [cite_g.vs[u]['name'] for u in cite_g.neighbors(example_work_id, mode='out')]) # similarly for titles, abstract, etc.

# + tags=[]

# compute the degree distributions

in_degrees= [cite_g.degree(v, 'in') for v in cite_g.vs]

out_degrees= [cite_g.degree(v, 'out') for v in cite_g.vs]

fig, (ax1, ax2) = plt.subplots(figsize=(17, 8), ncols=2)

sns.histplot(in_degrees, bins=10, ax=ax1);

sns.histplot(out_degrees, bins=10, ax=ax2);

ax1.set_xlabel('In-degree')

ax1.set_yscale('log');

ax2.set_xlabel('Out-degree')

ax2.set_yscale('log');

# -

clusters = cite_g.clusters(mode='weak')

lcc_g = clusters.giant()

print(lcc_g.summary())

# + [markdown] jp-MarkdownHeadingCollapsed=true tags=[]

# ## Co-authorship graphs

# -

aps_authors_df

# + tags=[]

work_id = 'https://openalex.org/W3134780308'

aps_authors_df.query(f'work_id==@work_id')[['author_id', 'author_name']]

# + tags=[]

author_counts = aps_authors_df.groupby('work_id').count()['author_id'].to_dict() # no of authors for a paper

# -

aps_authors_df.groupby('author_id').count()['work_id'] # no of authors for a paper

# + tags=[]

df = aps_authors_df.groupby('work_id')['author_id'].apply(list).reset_index()

# + tags=[]

df

# -

aps_refs_df

# + tags=[]

aps_refs_df.loc[:, 'work_authors_count'] = aps_refs_df.work_id.apply(lambda w:

author_counts.get(w, 0))

aps_refs_df.loc[:, 'ref_work_authors_count'] = aps_refs_df.referenced_work_id.apply(lambda w:

author_counts.get(w, 0))

aps_refs_df

# + tags=[]

aps_refs_df.work_authors_count.value_counts().reset_index().sort_values(by='index')

# -

aps_refs_df.ref_work_authors_count.value_counts().reset_index().sort_values(by='index')

aps_refs_df

import numpy as np

def calculate_weights(row):

m = row.work_authors_count

n = row.ref_work_authors_count

if m == 0 or n == 0:

return np.nan

else:

return 1 / (m * n)

# + tags=[]

aps_refs_df.loc[:, 'weight'] = aps_refs_df.progress_apply(lambda row: calculate_weights(row),axis=1)

# -

aps_refs_df

# +

aps_refs_df[aps_refs_df.weight.isna()]

# + tags=[]

df = aps_authors_df.head(100)

pd.crosstab(df.author_id, df.work_id)

# + tags=[]

auth_id1 = 'https://openalex.org/A2259130185'

auth_id2 = 'https://openalex.org/A2489500432'

work_ids = aps_authors_df.query('author_id==@auth_id1')['work_id']

aps_authors_df.query('author_id==@auth_id2 & work_id.isin(@work_ids)').count()

# + tags=[]

cleaned_authors_df = aps_authors_df.drop_duplicates(subset=['work_id', 'author_id']) # throw out authors with multiple affils

cleaned_authors_df

# -

cleaned_authors_df.query('work_id==@work_id & author_id==@auth_id')

# + tags=[]

df = cleaned_authors_df.head(500_000)

pair_count_df = pd.crosstab(df.author_id, df.author_id)

# + tags=[]

pair_count_df.unstack()

# + tags=[]

x = pair_count_df.to_numpy()

np.count_nonzero(x - np.diag(np.diagonal(x)))

# -

# ## NetworkX

#

# Let's use NetworkX to construct bipartite graphs -- co-authorships, work-concepts

aps_authors_df

# + tags=[]

cleaned_authors_df = aps_authors_df.drop_duplicates(subset=('work_id', 'author_id')) # drops affils, each paper author pair appears exactly once

# + tags=[]

authorship_g = nx.from_pandas_edgelist(cleaned_authors_df, source='work_id', target='author_id', edge_attr=['title', 'publication_year', 'author_name'])

print(nx.info(authorship_g))

# + tags=[]

nx.is_bipartite(authorship_g) # should be True

# + tags=[]

degree_sequence_auth = sorted((d for n, d in authorship_g.degree() if 'A' in n), reverse=True)

degree_sequence_works = sorted((d for n, d in authorship_g.degree() if 'W' in n), reverse=True)

# + tags=[]

fig, ax = plt.subplots(figsize=(15, 6))

ax.plot(*np.unique(degree_sequence_auth, return_counts=True), label='Authors')

ax.plot(*np.unique(degree_sequence_works, return_counts=True), label='Works')

ax.set_title("Degree histogram");

ax.set_xlabel("Degree");

ax.set_ylabel("Counts");

ax.set_xscale('log');

ax.set_yscale('log');

ax.legend();

# -

# discard works with > 50 authors

K = 50

discard_nodes = [n for n in authorship_g.nodes() if 'W' in n and authorship_g.degree(n) > K]

len(discard_nodes)

# + tags=[]

authorship_g.remove_nodes_from(discard_nodes)

# -

nx.is_connected(authorship_g)

# + tags=[]

comp_sizes = sorted((len(c) for c in nx.connected_components(authorship_g)), reverse=True)

comp_sizes[: 10]

# -

lcc = max(nx.connected_components(authorship_g), key=len)

lcc_auth_g = authorship_g.subgraph(lcc).copy()

print(authorship_g)

print(lcc_auth_g)

# ### Read references

# + tags=[]

refs_g = nx.from_pandas_edgelist(aps_refs_df, source='work_id', target='referenced_work_id', create_using=nx.DiGraph)

print(refs_g)

# + tags=[]

# assign weights to citation graph -- if a paper with i authors cite a paper with j authors, weight is 1 / (i * j)

edge_wts = {}

for u, v in refs_g.edges():

if authorship_g.has_node(u) and authorship_g.has_node(v):

w = 1 / (authorship_g.degree(u) * authorship_g.degree(v))

else:

w = 0

edge_wts[(u, v)] = w

nx.set_edge_attributes(refs_g, name='weight', values=edge_wts)

# + tags=[]

zeros = [k for k, v in edge_wts.items() if v == 0]

zeros[: 2]

# -

refs_g.remove_edges_from(zeros) # remove the 0 weighted edges

# + tags=[]

author_nodes = [n for n in lcc_auth_g if 'A' in n]

len(author_nodes)

# + tags=[]

coauth_g = nx.bipartite.weighted_projected_graph(lcc_auth_g, nodes=author_nodes)

print(coauth_g)

# + tags=[]

wts = [v for v in nx.get_edge_attributes(coauth_g, 'weight').values()]

len(wts)

# + tags=[]

fig, ax = plt.subplots(figsize=(15, 8))

sns.histplot(wts, bins=100, ax=ax);

ax.set_yscale('log');

# -

| notebooks/getting-started.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

#

# # Qiskit Chemistry, Programmatic Approach

#

# ### Introduction

# In the [declarative_approach](declarative_approach.ipynb) example, we show how to configure different parameters in an input dictionary for different experiments in Qiskit Chemistry. However, many users might be interested in experimenting with new algorithms or algorithm components, or in programming an experiment step by step using the Qiskit Chemistry APIs. This notebook illustrates how to use Qiskit Chemistry's programmatic APIs.

#

# In this notebook, we decompose the computation of the ground state energy of a molecule into 4 steps:

# 1. Define a molecule and get integrals from a computational chemistry driver (PySCF in this case)

# 2. Construct a Fermionic Hamiltonian and map it onto a qubit Hamiltonian

# 3. Instantiate and initialize dynamically-loaded algorithmic components, such as the quantum algorithm VQE, the optimizer and variational form it will use, and the initial_state to initialize the variational form

# 4. Run the algorithm on a quantum backend and retrieve the results

# +

# import common packages

import numpy as np

from qiskit import Aer

# lib from Qiskit Aqua

from qiskit.aqua import QuantumInstance

from qiskit.aqua.algorithms import VQE, ExactEigensolver

from qiskit.aqua.operators import Z2Symmetries

from qiskit.aqua.components.optimizers import COBYLA

# lib from Qiskit Aqua Chemistry

from qiskit.chemistry import FermionicOperator

from qiskit.chemistry.drivers import PySCFDriver, UnitsType

from qiskit.chemistry.aqua_extensions.components.variational_forms import UCCSD

from qiskit.chemistry.aqua_extensions.components.initial_states import HartreeFock

# -

# ### Step 1: Define a molecule

# Here, we use LiH in the sto3g basis with the PySCF driver as an example.

# The `molecule` object records the information from the PySCF driver.

# using driver to get fermionic Hamiltonian

# PySCF example

driver = PySCFDriver(atom='Li .0 .0 .0; H .0 .0 1.6', unit=UnitsType.ANGSTROM,

charge=0, spin=0, basis='sto3g')

molecule = driver.run()

# ### Step 2: Prepare qubit Hamiltonian

# Here, we setup the **to-be-frozen** and **to-be-removed** orbitals to reduce the problem size when we map to the qubit Hamiltonian. Furthermore, we define the **mapping type** for the qubit Hamiltonian.

# For the particular `parity` mapping, we can further reduce the problem size.

# +

# please be aware that the idx here with respective to original idx

freeze_list = [0]

remove_list = [-3, -2] # negative number denotes the reverse order

map_type = 'parity'

h1 = molecule.one_body_integrals

h2 = molecule.two_body_integrals

nuclear_repulsion_energy = molecule.nuclear_repulsion_energy

num_particles = molecule.num_alpha + molecule.num_beta

num_spin_orbitals = molecule.num_orbitals * 2

print("HF energy: {}".format(molecule.hf_energy - molecule.nuclear_repulsion_energy))

print("# of electrons: {}".format(num_particles))

print("# of spin orbitals: {}".format(num_spin_orbitals))

# +

# prepare full idx of freeze_list and remove_list

# convert all negative idx to positive

remove_list = [x % molecule.num_orbitals for x in remove_list]

freeze_list = [x % molecule.num_orbitals for x in freeze_list]

# update the idx in remove_list of the idx after frozen, since the idx of orbitals are changed after freezing

remove_list = [x - len(freeze_list) for x in remove_list]

remove_list += [x + molecule.num_orbitals - len(freeze_list) for x in remove_list]

freeze_list += [x + molecule.num_orbitals for x in freeze_list]

# prepare fermionic hamiltonian with orbital freezing and eliminating, and then map to qubit hamiltonian

# and if PARITY mapping is selected, reduction qubits

energy_shift = 0.0

qubit_reduction = True if map_type == 'parity' else False

ferOp = FermionicOperator(h1=h1, h2=h2)

if len(freeze_list) > 0:

ferOp, energy_shift = ferOp.fermion_mode_freezing(freeze_list)

num_spin_orbitals -= len(freeze_list)

num_particles -= len(freeze_list)

if len(remove_list) > 0:

ferOp = ferOp.fermion_mode_elimination(remove_list)

num_spin_orbitals -= len(remove_list)

qubitOp = ferOp.mapping(map_type=map_type, threshold=0.00000001)

qubitOp = Z2Symmetries.two_qubit_reduction(qubitOp, num_particles) if qubit_reduction else qubitOp

qubitOp.chop(10**-10)

print(qubitOp.print_details())

print(qubitOp)

# -

# We use the classical eigen decomposition to get the smallest eigenvalue as a reference.

# Using exact eigensolver to get the smallest eigenvalue

exact_eigensolver = ExactEigensolver(qubitOp, k=1)

ret = exact_eigensolver.run()

print('The computed energy is: {:.12f}'.format(ret['eigvals'][0].real))

print('The total ground state energy is: {:.12f}'.format(ret['eigvals'][0].real + energy_shift + nuclear_repulsion_energy))

# ### Step 3: Initiate and configure dynamically-loaded instances

# To run VQE with the UCCSD variational form, we require

# - VQE algorithm

# - Classical Optimizer

# - UCCSD variational form

# - Prepare the initial state in the HartreeFock state

# ### [Optional] Setup token to run the experiment on a real device

# If you would like to run the experiment on a real device, you need to setup your account first.

#

# Note: If you did not store your token yet, use `IBMQ.save_account('MY_API_TOKEN')` to store it first.

# +

# from qiskit import IBMQ

# provider = IBMQ.load_account()

# -

backend = Aer.get_backend('statevector_simulator')

# +

# setup COBYLA optimizer

max_eval = 200

cobyla = COBYLA(maxiter=max_eval)

# setup HartreeFock state

HF_state = HartreeFock(qubitOp.num_qubits, num_spin_orbitals, num_particles, map_type,

qubit_reduction)

# setup UCCSD variational form

var_form = UCCSD(qubitOp.num_qubits, depth=1,

num_orbitals=num_spin_orbitals, num_particles=num_particles,

active_occupied=[0], active_unoccupied=[0, 1],

initial_state=HF_state, qubit_mapping=map_type,

two_qubit_reduction=qubit_reduction, num_time_slices=1)

# setup VQE

vqe = VQE(qubitOp, var_form, cobyla)

quantum_instance = QuantumInstance(backend=backend)

# -

# ### Step 4: Run algorithm and retrieve the results

# The smallest eigenvalue is stored in the first entry of the `eigvals` key.

results = vqe.run(quantum_instance)

print('The computed ground state energy is: {:.12f}'.format(results['eigvals'][0]))

print('The total ground state energy is: {:.12f}'.format(results['eigvals'][0] + energy_shift + nuclear_repulsion_energy))

print("Parameters: {}".format(results['opt_params']))

import qiskit.tools.jupyter

# %qiskit_version_table

# %qiskit_copyright

| qiskit/advanced/aqua/chemistry/programmatic_approach.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Emboss Filter #

# This is a Emboss Filter

#

# First you download the bit file.

# +

from pynq.overlays.video import *

from pynq.lib.video import *

base = VideoOverlay("video.bit")

hdmi_in = base.video.hdmi_in

hdmi_out = base.video.hdmi_out

# -

# Then start up the PRControl, the video will not work otherwise. It initailzes the Video Axi Switch so HDMI runs through the VDMA.

from pynq.overlays.video import PRControl

pr_inst = PRControl()

# The best video sources are computers where you can control the resoltuion.

hdmi_in.configure()

hdmi_out.configure(hdmi_in.mode,PIXEL_BGR)

hdmi_out.start()

hdmi_in.start()

hdmi_in.tie(hdmi_out)

# Here is a frame in VDMA.

import PIL.Image

import cv2

frame = hdmi_in.readframe()

frame = cv2.cvtColor(frame,cv2.COLOR_BGR2RGB)

image = PIL.Image.fromarray(frame)

image

# The Dilate Filter has to be loaded in. In can fit into L0,M0,M1,M2,S0,S1,S2,S3,S4,S5

# Connect the HDMI_IN to S0 and S0 to VDMA and VDMA to HDMI_OUT

pr_inst.connect("HDMI_IN","L0")

pr_inst.connect("L0","VDMA")

pr_inst.connect("VDMA","HDMI_OUT")

PartialBitstream("emboss_l0.bit").download()

import PIL.Image

import cv2

frame = hdmi_in.readframe()

frame = cv2.cvtColor(frame,cv2.COLOR_BGR2RGB)

image = PIL.Image.fromarray(frame)

image

# There are no settings for Emboss

# Try other filter locations or two emboss in a row

hdmi_out.close()

hdmi_in.close()

| Video_notebooks/Emboss Filter.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# + [markdown] id="DWJm-HoPI4Zh"

# # Desafio Python e E-mail

#

# ### Descrição

#

# Digamos que você trabalha em uma indústria e está responsável pela área de inteligência de negócio.

#

# Todo dia, você, a equipe ou até mesmo um programa, gera um report diferente para cada área da empresa:

# - Financeiro

# - Logística

# - Manutenção

# - Marketing

# - Operações

# - Produção

# - Vendas

#

# Cada um desses reports deve ser enviado por e-mail para o Gerente de cada Área.

#

# Crie um programa que faça isso automaticamente. A relação de Gerentes (com seus respectivos e-mails) e áreas está no arquivo 'Enviar E-mails.xlsx'.

#

# Dica: Use o pandas read_excel para ler o arquivo dos e-mails que isso vai facilitar.

# + id="Fc4oggaQI4Zx"

import pandas as pd

import win32com.client as win32

outlook = win32.Dispatch('outlook.application')

gerentes_df = pd.read_excel('Enviar E-mails.xlsx')

#gerentes_df.info()

for i, email in enumerate(gerentes_df['E-mail']):

gerente = gerentes_df.loc[i, 'Gerente']

area = gerentes_df.loc[i, 'Relatório']

mail = outlook.CreateItem(0)

mail.To = '<EMAIL>'

mail.Subject = 'Relatório de {}'.format(area)

mail.Body = '''

Prezado {},

Segue em anexo o Relatório de {}, conforme solicitado.

Qualquer dúvida estou à disposição.

Att.,

'''.format(gerente, area)

attachment = r'C:\Users\Maki\Downloads\e-mail\{}.xlsx'.format(area)

mail.Attachments.Add(attachment)

mail.Send()

| desafio.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: 'Python 3.6.13 64-bit (''python-chilla'': conda)'

# language: python

# name: python3

# ---

# ## Student Detials

# > Title= "Mr"\

# > Name= "<NAME>"\

# > email = "<EMAIL>"\

# > whatsapp = "03358043653"

# ### In this Notebook I am are going to Explore OpenCV with Python

Layer

# ### Layout

# > + Introduction to images

# > + installation

# > + Read Data

# > + Images

# > + Videos

# > + Webcam (live)

# > + Importatnt function to be used in Opencv

# > + Scalling or normalizing images

# > + Adding objects to images

# > + Wrap and Persecpetives

# > + Joining images

# > + Color detection

# > + Edge detection

# > + Face Detection

# > + Projcet

# > + Car Counter

# > + Face Detection and much more

#

# # What is Pixel:

# A pixel is the smallest unit of a digital image or graphic that can be displayed and represented on a digital display device.

#

# A pixel is the basic logical unit in digital graphics. Pixels are combined to form a complete image, video, text, or any visible thing on a computer display.

#

# A pixel is also known as a picture element (pix = picture, el = element).

# ### Pixel may refer to any of the following:

#

# ## Pixels

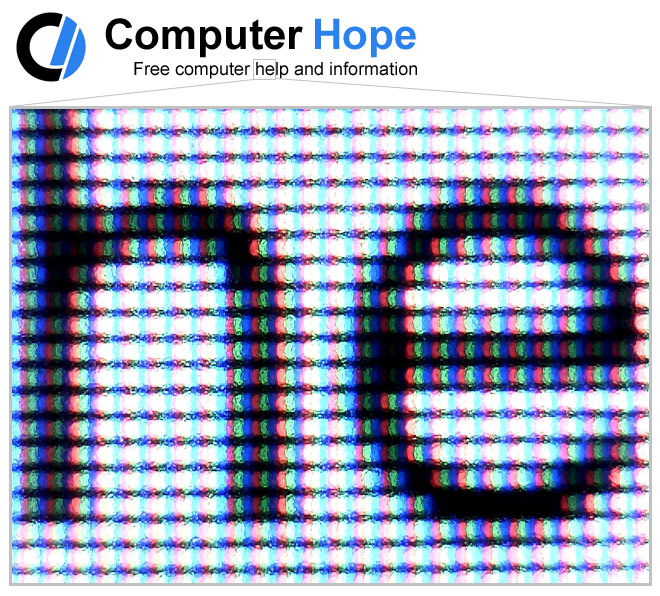

# 1. Term that comes from the words PEL (picture element). A px (pixel) is the smallest portion of an image or display that a computer is capable of printing or displaying. You can get a better understanding of what a pixel is when zooming into an image as seen in the picture.

#

# As you can see in this example, the character image in this picture is zoomed into at 1600%. Each of the blocks seen in the example picture is a single pixel of this image. Everything on the computer display looks similar to this when zoomed in upon. The same is true with printed images, which are created by several little dots that are measured in DPI.

#

# ## Screen pixels

# In the picture below is an example of a close up of pixels on an LCD screen. As shown in the picture, we've zoomed into the "he" part of the word "help" to illustrate how the display works. Each pixel has RGB (red, green, and blue) color components. The brightness of each component is increased or decreased to produce the variance of colors you see on the screen.

#

# # Screen Size

# We have different types of Scren sizes

# 1. VGA

# 2. SXGA

# 3. HDTV

# 4. 4K

#

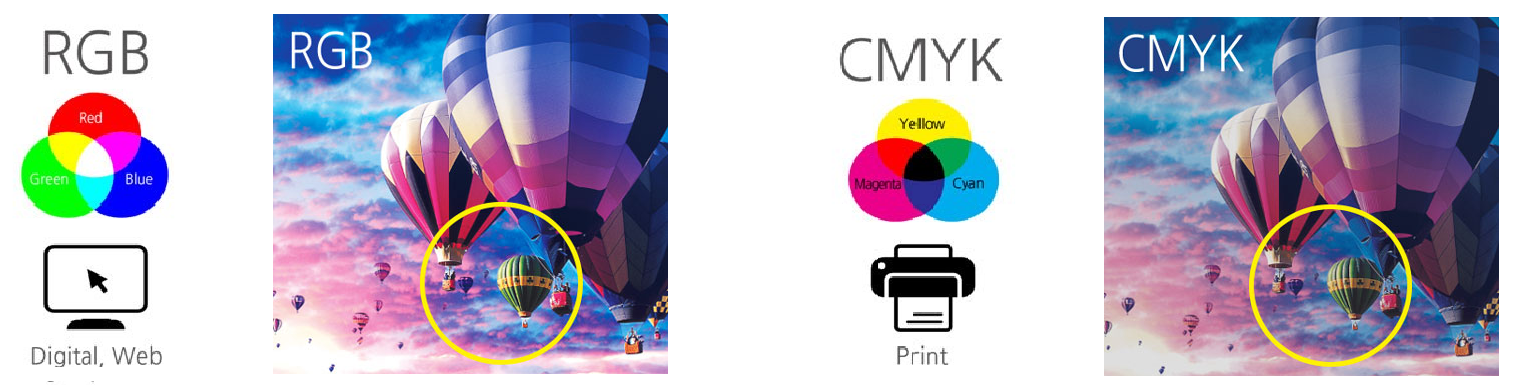

# ## More than just RGB

# Let’s talk about color modes a little bit more. A color model is a system for creating a full range of colors

# using the primary colors. There are two different color models here: additive color models and subtractive color

# models. Additive models use light to represent colors in computer screens while subtractive models use inks to

# print those digital images on papers. The primary colors are red, green and blue (RGB) for the first one and

# cyan, magenta, yellow and black (CMYK) for the latter one. All the other colors we see on images are made by

# combining or mixing these primary colors. So the pictures can be depicted a little bit differently when they

# are represented in RGB and CMYK.

#

#

# You would be pretty accustomed to these two kinds of models. In the world of color models, however, there are more than two kinds of models. Among them, grayscale, HSV and HLS are the ones you’re going to see quite often in computer vision.

#

# A grayscale is simple. It represents images and morphologies by the intensity of black and white, which means it has only one channel. To see images in grayscale, we need to convert the color mode into gray just as what we did with the BGR image earlier.

# ## Bits or Bit Depth

# Bit depth refers to the color information stored in an image. The higher the bit depth of an image, the more colors it can store. The simplest image, a 1 bit image, can only show two colors, black and white. That is because the 1 bit can only store one of two values, 0 (white) and 1 (black).

#

#

# Take a look at the images above. The three images show you how each channel is composed of. In the R channel picture, the part with the high saturation of red colors looks white. Why is that? This is because the values in the red color parts will be near 255. And in grayscale mode, the higher the value is, the whiter the color becomes. You can also check this with G or B channels and compare how certain parts differ one from another.

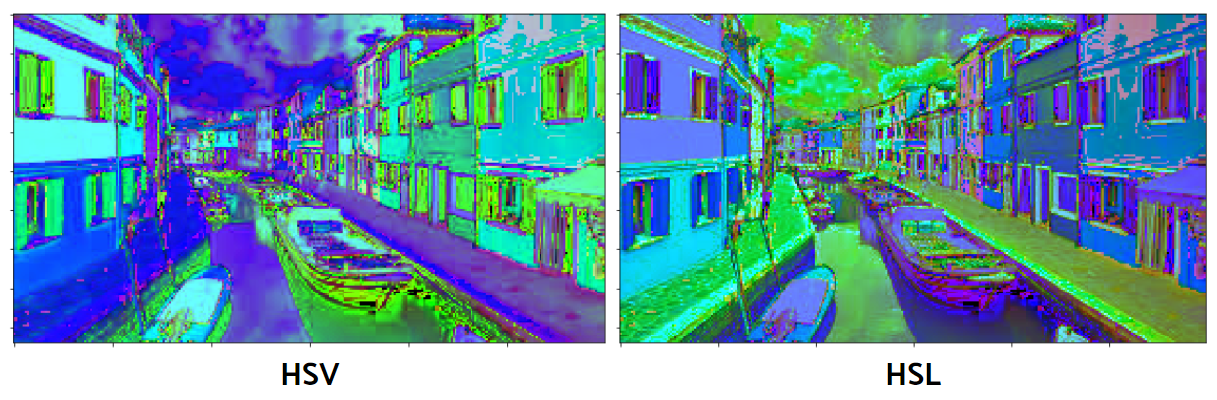

#

# HSV and HLS take a bit different aspect. As you can see above, they have a three-dimensional representation, and it’s more similar to the way of human perception. HSV stands for hue, saturation and value. HSL stands for hue, saturation and lightness. The center axis for HSV is the value of colors while that for HSL is the amount of light. Along the angles from the center axis, there is hue, the actual colors. And the distance from the center axis belongs to saturation.

#

# ## Gray Scale

# Multilevel

# ## Black = 0

# ## Gray = 1-255

# ## White = 256

# 8 bit 2**8= 256

# ## Bits or Bit Depth

# A colr image is typically represented by a bit dept ranign from 8 to 24 or hhiher

#

# With a 24-bit image the bit are often divied into three groping: 8 for red and 8 for green and 8 for blue channel.

#

# Combinaing pf bits are used to reprent those bit.

#

# A 24 bit images offers 16.7 milion 2**24 colors

# ## Three kind of images

#

#

# **Bit Depth:** Left to right - 1-bit bitonal, 8-bit grayscale, and 24-bit color images.

#

# Binary calculations for the number of tones represented by common bit depths:

#

# 1. 1 bit (21) = 2 tones

# 2. 2 bits (22) = 4 tones

# 3. 3 bits (23) = 8 tones

# 4. 4 bits (24) = 16 tones

# 5. 8 bits (28) = 256 tones

# 6. 16 bits (216) = 65,536 tones

# 7. 24 bits (224) = 16.7 million tones

# ## Videos

# its a continous sequence of images

# like:

#

#

# ## Frame per Second (fps)

# Frames Per Second or FPS is the rate at which back to back images called frames appear in a display and form moving imagery.

#

# Video content that we consume daily isn’t actually moving. In fact, they are still images that play one after the other. If a video is shot at 24fps, this means that 24 individual frames are played back in a second. They change at a different rate across mediums depending on a lot of other factors.

# ## calculated FPS in video:

# * for 60 fps: 3600 frames

# * for 24 fps: 1440 frames

# * for 15 fps: 900 frames

# ## Detecting Images is Similir to videos:

#

# So images is move during video and you have to track. E.g selfi tracking

# ### Installing python and opencv

# ```

#

# # !pip install python

# # !pip install python-opencv

#

# ```

#

import numpy as np

import cv2

import matplotlib.pyplot as plt

# %matplotlib inline

# Import the image

img = cv2.imread('data/img.jpg')

plt.imshow(img)

# or open with cv2 not using plt

cv2.imshow('Image Window', img)

cv2.waitKey(0)

| opencv_with_python/01_cv_with_python.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# ## enum

# ### enum is used to create symbols for values instead of using strings and interger.

# +

# Creating enum class

import enum

class PlaneStatus(enum.Enum):

standing = 0

enroute_runway = 1

takeoff = 2

in_air = 3

landing = 4

print('\nMember name: {}'.format(PlaneStatus.enroute_runway.name))

print('Member value: {}'.format(PlaneStatus.enroute_runway.value))

# +

#Iterating over enums

for member in PlaneStatus:

print('{} = {}'.format(member.name, member.value))

# +

#Comparing enums - compare using identity and equality

actual_state = PlaneStatus.enroute_runway

desired_state = PlaneStatus.in_air

#comparison through equality

print('Equality: ', actual_state == desired_state, actual_state == PlaneStatus.enroute_runway)

#comparison through identity

print('Identity: ', actual_state is desired_state, actual_state is PlaneStatus.enroute_runway)

# +

'''NO SUPPORT FOR ORDERED SORTING AND COMPARISON'''

print('Ordered by value:')

try:

print('\n'.join('' + s.name for s in sorted(PlaneStatus)))

except TypeError as err:

print('Cannot sort:{}'.format(err))

# -

# ## Use IntEnum for order support

# +

# Ordered by value

class NewPlaneStatus(enum.IntEnum):

standing = 0

enroute_runway = 1

takeoff = 2

in_air = 3

landing = 4

print('\n'.join(' ' + s.name for s in sorted(NewPlaneStatus)))

# -

# ## Unique Ebumeration values

# +

#Aliases for other members, do not appear separately in the output when iterating over the Enum.

#The canonical name for a member is the first name attached to the value.

class SamePlaneStatus(enum.Enum):

standing = 0

enroute_runway = 1

takeoff = 2

in_air = 3

landing = 4

maintainance = 0

fueling = 3

for status in SamePlaneStatus:

print('{} = {}'.format(status.name, status.value))

print('\nSame: standing is maintainance: ', SamePlaneStatus.standing is SamePlaneStatus.maintainance)

print('Same: in_air is fueling: ', SamePlaneStatus.in_air is SamePlaneStatus.fueling)

# +

# Add @unique decorator to the Enum

@enum.unique

class UniPlaneStatus(enum.Enum):

standing = 0

enroute_runway = 1

takeoff = 2

in_air = 3

landing = 4

#error triggered here

maintainance = 0

fueling = 3

for status in SamePlaneStatus:

print('{} = {}'.format(status.name, status.value))

# -

# ## Creating Enums programmatically

# +

PlaneStatus = enum.Enum(

value = 'PlaneStatus',

names = ('standing', 'enroute_runway', 'takeoff', 'in_air', 'landing')

)

print('Member:{}'.format(PlaneStatus.in_air))

print('\nAll Members:')

for status in PlaneStatus:

print('{} = {}'.format(status.name, status.value))

# +

PlaneStatus = enum.Enum(

value = 'PlaneStatus',

names = [

('standing', 1),

('enroute_runway', 2),

('takeoff', 3),

('in_air', 4),

('landing', 5)

]

)

print('\nAll Members:')

for status in PlaneStatus:

print('{} = {}'.format(status.name, status.value))

# -

| data structures/enum.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

from pyspark.sql import SparkSession

from pyspark.sql.types import StructType,StructField,StringType

spark = (SparkSession.builder.appName("Challenge 2").getOrCreate())

# ### tại sao khi load ta lại để hết StringType

# #### Vì rất có thể dữ liệu đang bị sai mà load vào sẽ không nhận và bị lỗi trong khi load

# #### trong khi đó stringtype nhận mọi loại dữ liệu, ta có thể load vào và xử lý từ từ

sale_df = StructType([

StructField("Order ID",StringType(),True),

StructField("Product",StringType(),True),

StructField("Quantity",StringType(),True),

StructField("Price",StringType(),True),

StructField("Order Date",StringType(),True),

StructField("Address",StringType(),True)

])

sale_path = "salesdata"

sale_df = (spark.read.format("csv")

.option("header",True)

.schema(sale_df)

.load(sale_path))

sale_df.show(10)

from pyspark.sql.functions import col

sale_df.filter(col("Order ID") == "Order ID").show(10)

# ### Xoá các hàng bị null và sai

# #### bây giờ ta sẽ thực hiện xoá các cột bị null

# #### tiếp theo sẽ xoá các cột có giá trị không mong muốn như sai type, sai kiểu dữ liệu

sale_df.filter(col("Order ID").isNull()==True).show(10)

sale_df = sale_df.na.drop("any")

sale_df.filter(col("Order ID").isNull()==True).show(10)

sale_df.describe("Order ID","Product","Quantity","Price","Order Date","Address").show()

# ### ta thấy rằng có một vài vấn đề không đúng ở đây, ví dụ

# #### cột id đáng ra chỉ có số nhưng lại có những giá trị như "Order ID"

# #### và các cột còn lại có rất nhiều giá trị bất hợp lý ở hàng max

# #### đầu tiên ở dưới ta thực hiện xoá những bản ghi trùng lặp

distinct_df = sale_df.distinct()

distinct_df.filter(col("Order ID")== "Order ID").show(10)

clean_df = distinct_df.filter(col("Order ID") != "Order ID")

clean_df.filter(col("Order ID") == "Order ID").show(10)

clean_df.describe("Order ID","Product","Quantity","Price","Order Date","Address").show()

# #### bây giờ ở các cột ta đã thấy rằng giá trị đúng cần tìm đúng là số, chữ hay địa chỉ chứ không phải sai thể loại nữa

# ### Trích xuất những dữ liệu nhỏ hơn

# trong ý tưởng ta có thể nghĩ ra rằng chúng ta sẽ rút chuỗi dựa trên dấu phẩy sau đó trích xuất tương ứng

clean_df.show(10, truncate = False)

from pyspark.sql.functions import split

clean_df.select("Address").show(10,False)

clean_df.select("Address",split(col("Address"),",")).show(10,False)

# Bây giờ ta có thẻ hiểu rằng cột thứ 2 như là một list, ta có thể thoải mái lấy thông tin dựa trên index

clean_df.select("Address",split(col("Address"),",").getItem(1)).show(10,False)

clean_df.select("Address",split(col("Address"),",").getItem(2)).show(10,False)

clean_df.select("Address",split(split(col("Address"),",").getItem(2)," ")).show(10,False)

new_col = (sale_df.withColumn("City",split(col("Address"),",").getItem(1))

.withColumn("State",split(split(col("Address"),",").getItem(2)," ").getItem(1)))

new_col.show(10)

# ### Rename and change DataType

# bây giờ ta đã thấy dữ liệu đã sạch đẹp, bước tiếp theo là trả về đúng kiểu dữ liệu

from pyspark.sql.functions import to_timestamp, year, month

from pyspark.sql.types import IntegerType,FloatType

new_df = (new_col.withColumn("Order_ID",col("Order ID").cast(IntegerType()))

.withColumn("Quantity_pro",col("Quantity").cast(IntegerType()))

.withColumn("Price_pro",col("Price").cast(IntegerType()))

.withColumn("Order_Date",to_timestamp(col("Order Date"),"MM/dd/yy HH:mm"))

.withColumnRenamed("Address","StoreAddress")

.drop("Order ID")

.drop("Quantity")

.drop("Price")

.drop("Order Date"))

new_df.show(10)

new_df.printSchema()

new_df = (new_df.withColumn("Year",year(col("Order_Date")))

.withColumn("Month",month(col("Order_Date"))))

new_df.show(10)

# ### lưu vào file parquet

# Tại sao ta không lưu vào một định dạng dễ nhìn như csv hay pgadmin mà phải lưu vào parquet<br>

# ta có thể hiểu rằng file parquet phân vùng dữ liệu cực kì tốt và có thể chia nhỏ những dữ liệu theo mong muốn của mình<br>

# việc này giúp khi lưu dữ liệu xuống nó được sắp xếp ngăn nắp trong các folder riêng việt<br>

# khi muốn lấy dữ liệu nào ta chỉ cần lấy đúng vị trí và địa chỉ đó không phải load một khối lượng data to đùng<br>

output_path = "challenge2"

new_df.write.mode("overwrite").partitionBy("Year","Month").parquet(output_path)

# vậy là ta sẽ thấy được dữ liệu lưu xuống theo 2 năm là 2019 và 2020<br>

# trong folder 2019 và 2020 lại được chia ra folder nhỏ với 12 tháng<br>

| Section4/Challenge2.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .jl

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Julia 1.3.0-rc4

# language: julia

# name: julia-1.3

# ---

# # Systematic comparison of MendelImpute against Minimac4 and Beagle5

using Revise

using VCFTools

using MendelImpute

using GeneticVariation

using Random

using Suppressor

# # Simulate data of various sizes

#

# ## Step 0. Install `msprime`

#

# [msprime download Link](https://msprime.readthedocs.io/en/stable/installation.html).

#

# Some people might need to activate conda environment via `conda config --set auto_activate_base True`. You can turn it off once simulation is done by executing `conda config --set auto_activate_base False`.

#

#

# ## Step 1. Simulate data in terminal

# ```

# python3 msprime_script.py 50000 10000 10000000 2e-8 2e-8 2020 > ./compare_sys/data1.vcf

# python3 msprime_script.py 50000 10000 10000000 4e-8 4e-8 2020 > ./compare_sys/data2.vcf

# python3 msprime_script.py 50000 10000 100000000 2e-8 2e-8 2020 > ./compare_sys/data3.vcf

# python3 msprime_script.py 50000 10000 5000000 2e-8 2e-8 2020 > ./compare_sys/data4.vcf

# ```

# ### Arguments:

# + Number of haplotypes = 40000

# + Effective population size = 10000 ([source](https://www.the-scientist.com/the-nutshell/ancient-humans-more-diverse-43556))

# + Sequence length = 10 million (same as Beagle 5's choice)

# + Rrecombination rate = 2e-8 (default)

# + mutation rate = 2e-8 (default)

# + seed = 2019

@show nsamples("./compare_sys/data1.vcf.gz")

@show nrecords("./compare_sys/data1.vcf.gz")

@show nsamples("./compare_sys/data2.vcf.gz")

@show nrecords("./compare_sys/data2.vcf.gz")

@show nsamples("./compare_sys/data3.vcf.gz")

@show nrecords("./compare_sys/data3.vcf.gz")

@show nsamples("./compare_sys/data4.vcf.gz")

@show nrecords("./compare_sys/data4.vcf.gz")

# ## Step 2: Compress files to .gz

# run these in terminal

run(`cat data1.vcf | gzip > data1.vcf.gz`)

run(`cat data2.vcf | gzip > data2.vcf.gz`)

run(`cat data3.vcf | gzip > data3.vcf.gz`)

run(`cat data4.vcf | gzip > data4.vcf.gz`)

compress_vcf_to_gz("./compare_sys/data1.vcf"); rm("./compare_sys/data1.vcf", force=true)

compress_vcf_to_gz("./compare_sys/data2.vcf"); rm("./compare_sys/data2.vcf", force=true)

compress_vcf_to_gz("./compare_sys/data3.vcf"); rm("./compare_sys/data3.vcf", force=true)

# # Move over to hoffman now.

#

# Put scripts below in under `/u/home/b/biona001/haplotype_comparisons/data`:

#

# ## `filter_and_mask.jl`

# ```Julia

# using VCFTools

# using MendelImpute

# using Random

#

# """

# filter_and_mask(data::String, samples::Int)

#

# Creates reference haplotypes and (unphased) target genotype files from `data`.

#

# # Inputs

# `data`: The full (phased) data simulated by msprime.

# `samples`: Number of samples (genotypes) desired in target file. Remaining haplotypes will become the reference panel

# """

# function filter_and_mask(data::String, samples::Int)

# missingprop = 0.1

# n = nsamples(data)

# p = nrecords(data)

# samples > n && error("requested samples exceed total number of genotypes in $data.")

#

# # output filenames (tgt_data1.vcf.gz, ref_data1.vcf.gz, and tgt_masked_data1.vcf.gz)

# tgt = "./tgt_" * data

# ref = "./ref_" * data

# tgt_mask = "./tgt_masked_" * data

# tgt_mask_unphase = "./tgt_masked_unphased_" * data

#

# # compute target and reference index

# tgt_index = falses(n)

# tgt_index[1:samples] .= true

# ref_index = .!tgt_index

# record_index = 1:p # save all records (SNPs)

#

# # generate masking matrix with `missingprop`% of trues (true = convert to missing)

# Random.seed!(2020)

# masks = falses(p, samples)

# for j in 1:samples, i in 1:p

# rand() < missingprop && (masks[i, j] = true)

# end

#

# # create outputs

# VCFTools.filter(data, record_index, tgt_index, des = tgt)

# VCFTools.filter(data, record_index, ref_index, des = ref)

# mask_gt(tgt, masks, des=tgt_mask)

#

# # finally, unphase the target data

# unphase(tgt_mask, outfile=tgt_mask_unphase)

# end

#

# data = ARGS[1]

# samples = parse(Int, ARGS[2])

# filter_and_mask(data, samples)

# ```

#

# ## `mendel_fast.jl`

# ```Julia

# using VCFTools

# using MendelImpute

# using GeneticVariation

#

# function run(data::String, width::Int)

# tgtfile = "./tgt_masked_unphased_" * data

# reffile = "./ref_" * data

# outfile = "./mendel_imputed_" * data

# phase(tgtfile, reffile, outfile = outfile, width = width, fast_method=true)

# end

#

# data = ARGS[1]

# width = parse(Int, ARGS[2])

# run(data, width)

# ```

#

# ## `mendel_dp.jl`

# ```Julia

# using VCFTools

# using MendelImpute

# using GeneticVariation

#

# function run(data::String, width::Int)

# tgtfile = "./tgt_masked_unphased_" * data

# reffile = "./ref_" * data

# outfile = "./mendel_imputed_" * data

# phase(tgtfile, reffile, outfile = outfile, width = width, fast_method=false)

# end

#

# data = ARGS[1]

# width = parse(Int, ARGS[2])

# run(data, width)

# ```

# ## Step 1: filter files

#

# + `ref_data1.vcf.gz`: haplotype reference files

# + `tgt_data1.vcf.gz`: complete genotype information

# + `tgt_masked_data1.vcf.gz`: the same as `tgt_data1.vcf.gz` except some entries are masked

# + `tgt_masked_unphased_data1.vcf.gz`: the same as `tgt_data1.vcf.gz` except some entries are masked and heterzygotes are unphased.

# specify simulation parameters

target_data = ["data1.vcf.gz", "data4.vcf.gz"]

memory = 47

missingprop = 0.1

samples = 1000

cd("/u/home/b/biona001/haplotype_comparisons/data")

for data in target_data

# if unphased target genotype file exist already, move to next step

if isfile(data * "./tgt_masked_unphased_" * data)

continue

end

open("filter.sh", "w") do io

println(io, "#!/bin/bash")

println(io, "#\$ -cwd")

println(io, "# error = Merged with joblog")

println(io, "#\$ -o joblog.\$JOB_ID")

println(io, "#\$ -j y")

println(io, "#\$ -l arch=intel-X5650,exclusive,h_rt=24:00:00,h_data=$(memory)G")

println(io, "# Email address to notify")

println(io, "#\$ -M \$USER@mail")

println(io, "# Notify when")

println(io, "#\$ -m a")

println(io)

println(io, "echo \"Job \$JOB_ID started on: \" `hostname -s`")

println(io, "echo \"Job \$JOB_ID started on: \" `date `")

println(io)

println(io, "# load the job environment:")

println(io, ". /u/local/Modules/default/init/modules.sh")

println(io, "module load julia/1.2.0")

println(io, "module load R/3.5.1")

println(io, "module load java/1.8.0_111")

println(io)

println(io, "# filter/mask data")

println(io, "julia ./filter_and_mask.jl $data $samples")

println(io)

println(io, "echo \"Job \$JOB_ID ended on: \" `hostname -s`")

println(io, "echo \"Job \JOB_ID ended on: \" `date `")

println(io)

end

# submit job

run(`qsub filter.sh`)

rm("filter.sh", force=true)

sleep(2)

end

# ## Step 2: prephasing using beagle 4.1

#

# + data1: 7 hours 18 minutes 19 seconds

# +

# +

# + data4: 3 hours 48 minutes 36 seconds

cd("/u/home/b/biona001/haplotype_comparisons/data")

for data in target_data

open("prephase.sh", "w") do io

println(io, "#!/bin/bash")

println(io, "#\$ -cwd")

println(io, "# error = Merged with joblog")

println(io, "#\$ -o joblog.\$JOB_ID")

println(io, "#\$ -j y")

println(io, "#\$ -l arch=intel-X5650,exclusive,h_rt=24:00:00,h_data=$(memory)G")

println(io, "# Email address to notify")

println(io, "#\$ -M \$USER@mail")

println(io, "# Notify when")

println(io, "#\$ -m a")

println(io)

println(io, "echo \"Job \$JOB_ID started on: \" `hostname -s`")

println(io, "echo \"Job \$JOB_ID started on: \" `date `")

println(io)

println(io, "# load the job environment:")

println(io, ". /u/local/Modules/default/init/modules.sh")

println(io, "module load julia/1.2.0")

println(io, "module load R/3.5.1")

println(io, "module load java/1.8.0_111")

println(io)

println(io, "# run prephasing using beagle 4.1")

println(io, "java -Xss5m -Xmx$(memory)g -jar beagle4.1.jar gt=./tgt_masked_unphased_$(data) ref=./ref_$(data) niterations=0 out=./tgt_masked_phased_$(data)")

println(io)

println(io, "echo \"Job \$JOB_ID ended on: \" `hostname -s`")

println(io, "echo \"Job \$JOB_ID ended on: \" `date `")

println(io)

end

# submit job

run(`qsub prephase.sh`)

rm("prephase.sh", force=true)

sleep(2)

end

# ## Step 3: run MendelImpute

widths = [400; 800; 1600]

cd("/u/home/b/biona001/haplotype_comparisons/data")

for data in target_data, width in widths

# fast version

open("mendel$width.sh", "w") do io

println(io, "#!/bin/bash")

println(io, "#\$ -cwd")

println(io, "# error = Merged with joblog")

println(io, "#\$ -o joblog.\$JOB_ID")

println(io, "#\$ -j y")

println(io, "#\$ -l arch=intel-X5650,exclusive,h_rt=24:00:00,h_data=$(memory)G")

println(io, "# Email address to notify")

println(io, "#\$ -M \$USER@mail")

println(io, "# Notify when")

println(io, "#\$ -m a")

println(io)

println(io, "echo \"Job \$JOB_ID started on: \" `hostname -s`")

println(io, "echo \"Job \$JOB_ID started on: \" `date `")

println(io)

println(io, "# load the job environment:")

println(io, ". /u/local/Modules/default/init/modules.sh")

println(io, "module load julia/1.2.0")

println(io, "module load R/3.5.1")

println(io, "module load java/1.8.0_111")

println(io)

println(io, "# run MendelImpute (fast)")

println(io, "julia mendel_fast.jl $data $width")

println(io)

println(io, "echo \"Job \$JOB_ID ended on: \" `hostname -s`")

println(io, "echo \"Job \$JOB_ID ended on: \" `date `")

println(io)

end

# submit job

run(`qsub mendel$width.sh`)

rm("mendel$width.sh", force=true)

sleep(2)

# dynamic programming version

open("dp_mendel$width.sh", "w") do io

println(io, "#!/bin/bash")

println(io, "#\$ -cwd")

println(io, "# error = Merged with joblog")

println(io, "#\$ -o joblog.\$JOB_ID")

println(io, "#\$ -j y")

println(io, "#\$ -l arch=intel-X5650,exclusive,h_rt=24:00:00,h_data=$(memory)G")

println(io, "# Email address to notify")

println(io, "#\$ -M \$USER@mail")

println(io, "# Notify when")

println(io, "#\$ -m a")

println(io)

println(io, "echo \"Job \$JOB_ID started on: \" `hostname -s`")

println(io, "echo \"Job \$JOB_ID started on: \" `date `")

println(io)

println(io, "# load the job environment:")

println(io, ". /u/local/Modules/default/init/modules.sh")

println(io, "module load julia/1.2.0")

println(io, "module load R/3.5.1")

println(io, "module load java/1.8.0_111")

println(io)

println(io, "# run MendelImpute (dynamic programming)")

println(io, "julia mendel_dp.jl $data $width")

println(io)

println(io, "echo \"Job \$JOB_ID ended on: \" `hostname -s`")

println(io, "echo \"Job \$JOB_ID ended on: \" `date `")

println(io)

end

# submit job

run(`qsub dp_mendel$width.sh`)

rm("dp_mendel$width.sh", force=true)

sleep(2)

end

# ## Step 3: run Beagle 5

cd("/u/home/b/biona001/haplotype_comparisons/data")

for data in target_data

open("beagle.sh", "w") do io

println(io, "#!/bin/bash")

println(io, "#\$ -cwd")

println(io, "# error = Merged with joblog")

println(io, "#\$ -o joblog.\$JOB_ID")

println(io, "#\$ -j y")

println(io, "#\$ -l arch=intel-X5650,exclusive,h_rt=24:00:00,h_data=$(memory)G")

println(io, "# Email address to notify")

println(io, "#\$ -M \$USER@mail")

println(io, "# Notify when")

println(io, "#\$ -m a")

println(io)

println(io, "echo \"Job \$JOB_ID started on: \" `hostname -s`")

println(io, "echo \"Job \$JOB_ID started on: \" `date `")

println(io)

println(io, "# load the job environment:")

println(io, ". /u/local/Modules/default/init/modules.sh")

println(io, "module load julia/1.2.0")

println(io, "module load R/3.5.1")

println(io, "module load java/1.8.0_111")

println(io)

println(io, "# run beagle 5.0 for imputation")

println(io, "java -Xmx$(memory)g -jar beagle5.0.jar gt=tgt_masked_phased_$(data).vcf.gz.vcf.gz ref=ref_$(data).vcf.gz out=beagle_imputed_$(data)")

println(io)

println(io, "echo \"Job \$JOB_ID ended on: \" `hostname -s`")

println(io, "echo \"Job \$JOB_ID ended on: \" `date `")

println(io)

end

# submit job

run(`qsub beagle.sh`)

rm("beagle.sh", force=true)

sleep(2)

end

# ## Run Minimac4

cd("/u/home/b/biona001/haplotype_comparisons/data")

for data in target_data

# first convert vcf files to m3vcf files using minimac3

open("minimac3.sh", "w") do io

println(io, "#!/bin/bash")

println(io, "#\$ -cwd")

println(io, "# error = Merged with joblog")

println(io, "#\$ -o joblog.\$JOB_ID")

println(io, "#\$ -j y")

println(io, "#\$ -l arch=intel-X5650,exclusive,h_rt=24:00:00,h_data=$(memory)G")

println(io, "# Email address to notify")

println(io, "#\$ -M \$USER@mail")

println(io, "# Notify when")

println(io, "#\$ -m a")

println(io)

println(io, "echo \"Job \$JOB_ID started on: \" `hostname -s`")

println(io, "echo \"Job \$JOB_ID started on: \" `date `")

println(io)

println(io, "# load the job environment:")

println(io, ". /u/local/Modules/default/init/modules.sh")

println(io, "module load julia/1.2.0")

println(io, "module load R/3.5.1")

println(io, "module load java/1.8.0_111")

println(io)

println(io, "# run minimac3 to convert vcf files to m3vcf files")

println(io, "minimac3 --refHaps ref_$(data).vcf.gz --processReference --prefix ref_$(data)")

println(io)

println(io, "echo \"Job \$JOB_ID ended on: \" `hostname -s`")

println(io, "echo \"Job \$JOB_ID ended on: \" `date `")

println(io)

end

# submit job

run(`qsub minimac3.sh`)

rm("minimac3.sh", force=true)

sleep(2)

# run minimac4 for imputation

open("minimac4.sh", "w") do io

println(io, "#!/bin/bash")

println(io, "#\$ -cwd")

println(io, "# error = Merged with joblog")

println(io, "#\$ -o joblog.\$JOB_ID")

println(io, "#\$ -j y")

println(io, "#\$ -l arch=intel-X5650,exclusive,h_rt=24:00:00,h_data=$(memory)G")

println(io, "# Email address to notify")

println(io, "#\$ -M \$USER@mail")

println(io, "# Notify when")

println(io, "#\$ -m a")

println(io)

println(io, "echo \"Job \$JOB_ID started on: \" `hostname -s`")

println(io, "echo \"Job \$JOB_ID started on: \" `date `")

println(io)

println(io, "# load the job environment:")

println(io, ". /u/local/Modules/default/init/modules.sh")

println(io, "module load julia/1.2.0")

println(io, "module load R/3.5.1")

println(io, "module load java/1.8.0_111")

println(io)

println(io, "# run minimac 4 for imputation")

println(io, "minimac4 --refHaps ref_$(data).m3vcf --haps tgt_masked_phased_$(data).vcf.gz.vcf.gz --prefix minimac_imputed_$(data) --format GT")

println(io)

println(io, "echo \"Job \$JOB_ID ended on: \" `hostname -s`")

println(io, "echo \"Job \$JOB_ID ended on: \" `date `")

println(io)

end

# submit job

run(`qsub minimac4.sh`)

rm("minimac4.sh", force=true)

sleep(2)

end

| simulation/compare_sys.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

from sklearn import datasets

from sklearn.naive_bayes import GaussianNB, MultinomialNB

import pandas as pd

import numpy as np

from sklearn import preprocessing

from sklearn import datasets

from sklearn.model_selection import train_test_split

from sklearn.metrics import accuracy_score

cancer = datasets.load_breast_cancer()

x = cancer.data

y = cancer.target

x[:2]

# +

x_train, x_test, y_train, y_test = train_test_split(x, y, test_size=0.2)

scaler = preprocessing.StandardScaler().fit(x_train)

x_train = scaler.transform(x_train)

model = GaussianNB()

model.fit(x_train, y_train)

x_test = scaler.transform(x_test)

y_pred = model.predict(x_test)

accuracy = accuracy_score(y_test, y_pred)

num_correct_samples = accuracy_score(y_test, y_pred, normalize=False)

print('number of correct sample: {}'.format(num_correct_samples))

print('accuracy: {}'.format(accuracy))

| .ipynb_checkpoints/mod12-checkpoint.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: '''Python Interactive'''

# language: python

# name: 0273d3a9-be6d-4326-9d0d-fafd7eacc490

# ---

# # Monte Carlo Methods

# Import relevant libraries

import numpy as np

import matplotlib.pyplot as plt

from scipy.linalg import sqrtm

# Initialize variables; these are the settings for the simulation

mu1 = 0.1

mu2 = 0.2

sg1 = 0.05

sg2 = 0.1

dt = 1 / 252

T = 1

L = int(T / dt)

rho = 0.5

S0 = 1

# Create the Monte Carlo Simulation. Two random walks are created, both representing stock price paths.

# +

# %matplotlib notebook

plt.figure()

S1 = [S0]

S2 = [S0]

eps1 = np.random.normal(size=(L))

e12 = np.random.normal(size=(L))

eps2 = rho * eps1 + np.sqrt(1 - rho ** 2) * e12

for i in range(1, L):

S1.append(S1[-1] * np.exp((mu1 - 0.5 * sg1 ** 2) * dt + sg1 * eps1[i] * np.sqrt(dt)))

S2.append(S2[-1] * np.exp((mu2 - 0.5 * sg2 ** 2) * dt + sg2 * eps2[i] * np.sqrt(dt)))

plt.plot(S1)

plt.plot(S2)

plt.show()

# -

R = np.array([[1, 0.4, 0.4], [0.4, 1, 0.2], [-0.4, 0.2, 1]])

X = sqrtm(R) @ np.random.normal(size=(3, int(1e5)))

phi = np.corrcoef(X)

# ## Antitheic MC simulation, basic idea

#

# The variance (STD) of the antitheic MC is less than half of the basic MC.

f = lambda x: np.exp(x)

x = np.random.normal(size=(100000))

np.mean(f(x)), np.std(f(x)), np.mean((f(x) + f(-x)) / 2), np.std((f(x) + f(-x)) / 2)

| Python/monte_carlo/Monte Carlo Simulations of Multiple Correlated Stocks.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# + [markdown] id="Q5aSQdvJ4C4Y"

# # <NAME>

# + [markdown] editable=true id="hyUP-UXo4C4Z"

# Hasta ahora, nos hemos centrado principalmente en datos unidimensionales y bidimensionales, almacenados en objetos Pandas `` Series `` y `` DataFrame ``, respectivamente.

#

# A menudo, es útil ir más allá y almacenar datos de mayor dimensión, es decir, datos indexados por más de una o dos claves.

#

# Si bien Pandas proporciona objetos `` Panel `` y `` Panel4D `` (que mencionaremos más adelante) que manejan de forma nativa datos tridimensionales y tetradimensionales, un método mucho más común en la práctica es hacer uso de la *indexación jerárquica* (también conocida como *indexación múltiple*) para incorporar múltiples índices (o niveles) dentro de un solo índice.

#

# De esta manera, los datos de mayor dimensión se pueden representar de forma compacta dentro de los objetos familiares unidimensionales `` Series `` y bidimensionales `` DataFrame ``.

#

# En esta sección, exploraremos la creación directa de objetos `` MultiIndex ``, consideraciones al indexar, dividir y calcular estadísticas a través de datos indexados múltiples, y rutinas útiles para realizar conversiones entre representaciones simples e indexadas jerárquicamente.

#

# Comenzamos con las importaciones estándar:

# + editable=true id="iK4FG9Zo4C4a"

import pandas as pd

import numpy as np

# + [markdown] editable=true id="iDvudf7_4C4d"

# ## Series con índices múltiples

#

# Comencemos por considerar cómo podríamos representar datos bidimensionales dentro de una `` Serie `` unidimensional.

# Para mayor concreción, consideraremos una serie de datos donde cada punto tiene un carácter y una clave numérica.

# + [markdown] editable=true id="mKbJFTwe4C4e"

# ### El camino que deberías evitar

#

# Supongamos que nos gustaría rastrear datos sobre los estados de USA para dos años diferentes.

# Con las herramientas de Pandas que ya hemos cubierto, es posible que tengamos la tentación de usar simplemente tuplas de Python como claves:

# + editable=true id="qW5y5hdD4C4e" jupyter={"outputs_hidden": false} outputId="8b304f78-9ffe-428c-96bb-a018d6c8d0e3"

index = [('California', 2000), ('California', 2010),

('New York', 2000), ('New York', 2010),

('Texas', 2000), ('Texas', 2010)]

populations = [33871648, 37253956,

18976457, 19378102,

20851820, 25145561]

pop = pd.Series(populations, index=index)

pop

# + [markdown] editable=true id="1p-gv66R4C4i"

# Con este esquema de indexación, podemos indexar o dividir directamente la serie en función de este índice múltiple:

# + editable=true id="nJBmNB8R4C4i" jupyter={"outputs_hidden": false} outputId="add786c1-4473-432a-b749-e4613ba4a948"

pop[('California', 2010):('Texas', 2000)]

# + [markdown] editable=true id="PC0T6Yu_4C4k"

# Pero la conveniencia termina ahí. Por ejemplo, si necesitásemos seleccionar todos los valores de 2010, necesitaríamos hacer algunas tareas desordenadas (y lentas) para conseguirlo:

# + editable=true id="YtzVNVTC4C4l" jupyter={"outputs_hidden": false} outputId="9c206ce5-4e0c-41ca-d3d9-fc9205a5962a"

pop[[i for i in pop.index if i[1] == 2010]]

# + [markdown] editable=true id="mC25gfZl4C4n"

# Con esto conseguimos el resultado deseado, pero no es tan limpio (o tan eficiente para grandes conjuntos de datos) como la sintaxis de segmentación que nos proporciona Pandas.

# + [markdown] editable=true id="c4A7XVpc4C4o"

# ### Un camino mejor: Pandas MultiIndex

#

# Afortunadamente, Pandas ofrece una manera mucho mejor, que será más eficiente y nos evitará posibles quebraderos de cabeza.

#

# Nuestra indexación basada en tuplas es esencialmente un índice múltiple rudimentario, y el tipo Pandas `` MultiIndex `` nos ofrece el tipo de operaciones que deseamos tener.

#

# Podemos crear un MultiIndex a partir de las tuplas de la siguiente manera:

# + editable=true id="3dvz98ZI4C4o" jupyter={"outputs_hidden": false} outputId="f941d9c4-3eac-47c4-eb95-e502cae604f2"

index = pd.MultiIndex.from_tuples(index)

index

# + [markdown] editable=true id="fkqqKEJc4C4r"

# Fíjate que el `` MultiIndex `` contiene múltiples niveles de indexación. En este caso, los nombres de los estados y los años. Pero el nivel no termina ahí, sino que podríamos enlazar más niveles.

#

# Si volvemos a indexar nuestra serie con este `` MultiIndex ``, podremos ver la representación jerárquica de los datos:

# + editable=true id="FFz0H-CS4C4r" jupyter={"outputs_hidden": false} outputId="5f96d61a-9503-4a80-e94c-70deca6a3b9d"

pop = pop.reindex(index)

pop

# + [markdown] editable=true id="5HM_OgOe4C4u"

# Aquí, las dos primeras columnas de la representación de la `` Serie `` muestran los valores del MultiIndex, mientras que la tercera columna muestra los datos.

# Fíjate que faltan algunas entradas en la primera columna: en esta representación de índices múltiples, cualquier entrada en blanco indica el mismo valor que la línea que está encima.

# + [markdown] editable=true id="JupNsBd24C4u"

# Ahora, para acceder a todos los datos para los que el segundo índice es 2010, simplemente podemos usar la indexación de Pandas que hemos viso hasta ahora:

# + editable=true id="CtvYBxkG4C4v" jupyter={"outputs_hidden": false} outputId="de061ec4-092a-4bfd-f171-b6e0f8528519"

pop[:, 2010]

# + [markdown] editable=true id="ysxQV0h74C4x"

# El resultado es una matriz indexada individualmente con solo las claves que nos interesan.

#

# Esta sintaxis es mucho más apropiada (además de eficiente) que la solución de indexación múltiple basada en tuplas caseras que hemos visto antes.

#

# A continuación, analizaremos más a fondo este tipo de operación de indexación en datos indexados jerárquicamente.

# + [markdown] editable=true id="ZLg3mxg94C4x"

# ### MultiIndex como dimensión extra

#

# Si pensamos un poco, podríamos haber almacenado fácilmente los mismos datos utilizando un simple `` DataFrame `` con sus etiquetas de índice y columna.

#

# De hecho, Pandas se construye con esta equivalencia en mente. El método `unstack ()` convertirá rápidamente una `Serie` indexada de forma múltiple en un ``DataFrame`` indexado convencionalmente:

# + editable=true id="SQiwqeuA4C4y" jupyter={"outputs_hidden": false} outputId="e000ac83-965e-4a01-d8fd-865b7a375634"

pop_df = pop.unstack()

pop_df

# + [markdown] editable=true id="3zpwSh4i4C40"

# De forma análoga, el método ``stack()`` hace exactamente lo contrario:

# + editable=true id="xS9vIP1g4C40" jupyter={"outputs_hidden": false} outputId="9bc147fd-8e27-4be6-e562-8be9a54da249"

pop_df.stack()

# + [markdown] editable=true id="EF7B7Xlu4C43"

# Al ver esto, es posible preguntarse por qué utilizar la indexación jerárquica.

#

# La razón es simple: así como pudimos usar la indexación múltiple para representar datos bidimensionales dentro de una `` Serie `` unidimensional, también podemos usarla para representar datos de tres o más dimensiones en una `` Serie `` o `` DataFrame ``.

#

# Cada nivel adicional en un índice múltiple representa una dimensión adicional de datos; aprovechar esta propiedad nos da mucha más flexibilidad en los tipos de datos que podemos representar. Concretamente, podríamos querer agregar otra columna de datos demográficos para cada estado de cada año (por ejemplo, población menor de 18 años). Con un `` MultiIndex `` esto es tan sencillo como agregar otra columna al `` DataFrame ``:

# + editable=true id="EMahoI114C43" jupyter={"outputs_hidden": false} outputId="d9b9ffe4-5f9f-4b99-f49d-c4ffcc7e5f4b"

pop_df = pd.DataFrame({'total': pop,

'under18': [9267089, 9284094,

4687374, 4318033,

5906301, 6879014]})

pop_df

# + [markdown] editable=true id="z60a1aEc4C45"

# Además, todas las ufuncs que hemos visto, así como otras funcionalidades comentadas, también funcionan con los índices jerárquicos.

#

# A continuación, calculamos la fracción de personas menores de 18 años por año, dados los datos anteriores:

# + editable=true id="zJyAJoUP4C46" jupyter={"outputs_hidden": false} outputId="00d81b10-f9ce-4154-f1b3-2552c7844fe3"

f_u18 = pop_df['under18'] / pop_df['total']

f_u18.unstack()

# + [markdown] editable=true id="cnxvoAwU4C48"

# Esto nos permite manipular y realizar una exploración de manera rápida y fácil, incluso con datos de alta dimensión.

# + [markdown] editable=true id="NMFLAhz64C48"

# ## Métodos de creación con MultiIndex

#

# La forma más sencilla de construir una `` Serie `` o `` DataFrame `` indexados de forma múltiple es simplemente pasar una lista de dos o más matrices de índices al constructor. Por ejemplo:

# + editable=true id="MPpeGESV4C48" jupyter={"outputs_hidden": false} outputId="272b5222-f627-441f-95a3-abbabf31ccd9"

df = pd.DataFrame(np.random.rand(4, 2),

index=[['a', 'a', 'b', 'b'], [1, 2, 1, 2]],

columns=['data1', 'data2'])

df

# + [markdown] editable=true id="5rh7RwYH4C4-"

# El trabajo de crear el `` MultiIndex `` se realiza en segundo plano.

#

# De manera similar, si pasamos un diccionario con las tuplas apropiadas como claves, Pandas lo reconocerá automáticamente y usará un `` MultiIndex `` por defecto:

# + editable=true id="wb1-jroZ4C4_" jupyter={"outputs_hidden": false} outputId="75383707-743d-49d2-9397-f85c465dc3f7"

data = {('California', 2000): 33871648,

('California', 2010): 37253956,

('Texas', 2000): 20851820,

('Texas', 2010): 25145561,

('New York', 2000): 18976457,

('New York', 2010): 19378102}

pd.Series(data)

# + [markdown] editable=true id="9o8f8pLv4C5E"

# Sin embargo, a veces es útil crear explícitamente un `` MultiIndex ``. Veamos un par de estos métodos:

# + [markdown] editable=true id="ugSyyTVA4C5E"

# ### Constructores explícitos MultiIndex

#

# Para mayor flexibilidad en la forma en que se construye el índice, en su lugar puede utilizar los constructores de métodos de clase disponibles en el objeto `` pd.MultiIndex ``.

#

# Por ejemplo, como hemos hecho antes, podríamos construir el `` MultiIndex `` a partir de una lista simple de matrices que dan los valores de índice dentro de cada nivel:

# + editable=true id="xDr_swbL4C5F" jupyter={"outputs_hidden": false} outputId="381cdef7-c0b2-4af6-aa72-0a20ec2c25e1"

pd.MultiIndex.from_arrays([['a', 'a', 'b', 'b'], [1, 2, 1, 2]])

# + [markdown] editable=true id="Gjoh_w5z4C5H"

# Podemos construirlo a partir de una lista de tuplas, especificando cada posible valor de la tupla para cada punto:

# + editable=true id="qUBCiGXw4C5I" jupyter={"outputs_hidden": false} outputId="ac33a470-c3d0-4e7f-a203-3bf4b4f14722"

pd.MultiIndex.from_tuples([('a', 1), ('a', 2), ('b', 1), ('b', 2)])

# + [markdown] editable=true id="dwExBtYe4C5K"

# Podríamos incluso construirlo a partir del producto cartesiano de los índices únicos:

# + editable=true id="r2aeDnv24C5K" jupyter={"outputs_hidden": false} outputId="fb650f91-9197-4147-9e93-2175d2a28ec9"

pd.MultiIndex.from_product([['a', 'b'], [1, 2]])

# + [markdown] editable=true id="1NRCYVho4C5M"

# De manera similar, podríamos construir un ``MultiIndex`` directamente usando su codificación interna pasándole los ``levels`` (una lista de listas que contienen valores de índice disponibles para cada nivel) y `` codes `` (una lista de listas que hacen referencia a estas etiquetas):

# + editable=true id="sud6ly8U4C5M" jupyter={"outputs_hidden": false} outputId="9c6126dc-0c85-4d7b-cbdf-a9e7c739ce6e"

pd.MultiIndex(levels=[['a', 'b'], [1, 2]], codes=[[0, 0, 1, 1], [0, 1, 0, 1]])

# + [markdown] editable=true id="ll90pPKl4C5O"

# Cualquiera de estos objetos se puede pasar como el argumento "índice" al crear una ``Serie`` o un ``DataFrame``, o se puede pasar al método `` reindex `` de una `` Serie`` o `` DataFrame ``.

# + [markdown] editable=true id="5TGFpClg4C5O"

# ### MultiIndex: nombrando los niveles

#

# A veces, es conveniente nombrar los niveles del `` MultiIndex ``.

#

# Esto se puede lograr pasando el argumento `` names `` a cualquiera de los constructores `` MultiIndex `` anteriores, o configurando el atributo `` names `` del índice después de haberlo contruido:

# + editable=true id="3fcikBaN4C5P" jupyter={"outputs_hidden": false} outputId="f607d7e4-eadd-4c20-f3fe-41a76b566f68"

pop.index.names = ['state', 'year']

pop

# + [markdown] editable=true id="MpRRmtTE4C5Q"

# Con conjuntos de datos más complicados, esta puede ser una forma útil de realizar un seguimiento del significado de varios valores de índice.

# + [markdown] editable=true id="R6bZC5cj4C5R"

# ### MultiIndex para columnas

#

# En un ``DataFrame``, las filas y columnas son completamente simétricas, y así como las filas pueden tener múltiples niveles de índices, las columnas también pueden tener múltiples niveles.

#

# Creemos una maqueta de algunos datos médicos:

# + editable=true id="phtS-Wvb4C5R" jupyter={"outputs_hidden": false} outputId="3f5ac3dd-763c-48b3-e872-e8a7eacae309"

# hierarchical indices and columns

index = pd.MultiIndex.from_product([[2013, 2014], [1, 2]],

names=['year', 'visit'])

columns = pd.MultiIndex.from_product([['Bob', 'Guido', 'Sue'], ['HR', 'Temp']],

names=['subject', 'type'])

# mock some data

data = np.round(np.random.randn(4, 6), 1)

data[:, ::2] *= 10

data += 37

# create the DataFrame

health_data = pd.DataFrame(data, index=index, columns=columns)

health_data

# + [markdown] editable=true id="OjkcbQrR4C5T"

# Aquí vemos dónde la indexación múltiple para filas y columnas puede ser especialmente útil.

#

# Se trata fundamentalmente de datos de cuatro dimensiones, donde las dimensiones son el sujeto (subject), el tipo de medida (type), el año (year) y el número de visita (visit).

#

# Con esto en su lugar, podemos, por ejemplo, indexar la columna de nivel superior por el nombre de la persona y obtener un `` DataFrame `` completo que contenga solo la información de esa persona:

# + editable=true id="PMXmcANH4C5T" jupyter={"outputs_hidden": false} outputId="3f320e2e-9961-4c79-860a-7e8d18e71444"

health_data['Guido']

# + [markdown] editable=true id="xgaC9CWC4C5V"

# Para registros complicados que contengan múltiples mediciones etiquetadas en múltiples momentos para muchos sujetos (personas, países, ciudades, etc.), el uso de filas y columnas jerárquicas puede ser extremadamente conveniente.

# + [markdown] editable=true id="vWCdaI004C5V"

# ## Indexing y Slicing en MultiIndex

#

# La indexación y la división en un `` MultiIndex `` está diseñada para ser intuitiva, pensando en los índices como dimensiones adicionales.

#

# Primero, veremos la indexación de `` Series `` indexadas de forma múltiple, y luego los `` DataFrame `` indexados de forma múltiple.

# + [markdown] editable=true id="IVHjt-pM4C5W"

# ### Seeries indexadas de manera múltiple

#

# Consideremos la `` Serie `` de poblaciones estatales con índices múltiples que vimos anteriormente:

# + editable=true id="zF5LHkGw4C5W" jupyter={"outputs_hidden": false} outputId="38f6d65f-eb3b-4e31-8497-9dcca9998569"

pop

# + [markdown] editable=true id="Qb3a-PHA4C5Y"

# Podemos acceder a elementos individuales indexando con varios términos:

# + editable=true id="cuyBixt74C5Y" jupyter={"outputs_hidden": false} outputId="33548699-219e-4d6b-b2fa-9fe66dbb8bf6"

pop['California', 2000]

# + [markdown] editable=true id="bUC_hzzq4C5Z"

# El `` MultiIndex `` también admite indexación parcial, o indexar solo uno de los niveles del índice.

#

# El resultado es otra `` Serie `` manteniendo los índices de nivel inferior:

# + editable=true id="mGeP3ElL4C5a" jupyter={"outputs_hidden": false} outputId="5d042b80-2e8c-4446-8cc7-70186f8d3571"

pop['California']

# + [markdown] editable=true id="RM5E-0oZ4C5c"

# También se puede usar el slicing parcial, siempre que el `` Índice múltiple `` esté ordenado:

# + editable=true id="V4Qatdkm4C5e" jupyter={"outputs_hidden": false} outputId="90135a07-cf56-4e4d-cdd5-0ccb67bb3637"

pop.loc['California':'New York']

# + [markdown] editable=true id="EzF6CkJQ4C5f"