code stringlengths 38 801k | repo_path stringlengths 6 263 |

|---|---|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# %matplotlib inline

import matplotlib.pyplot as plt

# # Fundamental Building Blocks

#

# Based on the ideas presented by Cleveland, Ware, and the Gestalt Principles of Visual Perception, we're going to start talking about the types of charts we want to build and the types of customizations we would entertain in order to take advantage of these principles (or at least not violate them).

#

# For <NAME>, this limits our basic elements to just four (4!):

#

# 1. Points

# 2. Lines

# 3. Bars

# 4. Boxes

#

# That's it, at least in terms of *quantitative* encoding. For now, we will ignore boxes, which leaves us with points, lines, bars, and some combinations.

#

# ## Points

#

# From a `matplotlib` point of view, these do not map exactly onto the functions available. For example, using points for *categorical* data is different than using points for *numerical* data. Additionally, you have to do things differently if you want your chart to be horizontal or vertical.

#

# Here's a categorical chart that uses points, a *Dot Chart*. A Dot Chart is preferable to a Bar Chart when you do not wish to include 0 on the y-axis. The dotted lines are visual guidelines; they can stop at each point or, especially in horizontal versions, run all the way through like gridlines. Both versions are provided here.

#

# Once you understand how `matplotlib` works, you will see that I have essentially used the regular `plot` function and made up fake x-axis data and gave it categorical labels.

# +

figure = plt.figure(figsize=(5, 3))

xs = [1, 2, 3, 4, 5]

data = [100, 87, 23, 47, 57]

axes = figure.add_subplot(1, 1, 1)

axes.plot(xs, data, "o", color="dimgray")

axes.vlines(xs, [0], data, linestyles='dotted', lw=2)

axes.set_xticks(xs)

axes.set_xlim((0, 6))

axes.set_ylim((0, 110))

axes.set_xticklabels(["A", "B", "C", "D", "E"])

axes.xaxis.grid(False)

# +

figure = plt.figure(figsize=(5, 3))

xs = [1, 2, 3, 4, 5]

data = [100, 87, 23, 47, 57]

axes = figure.add_subplot(1, 1, 1)

axes.plot(xs, data, "o", color="dimgray")

axes.vlines(xs, [0], [110], linestyles='dotted', lw=2)

axes.set_xticks(xs)

axes.set_xlim((0, 6))

axes.set_ylim((0, 110))

axes.set_xticklabels(["A", "B", "C", "D", "E"])

axes.xaxis.grid(False)

# -

# To use points with numerical data only, we use the same command `plot` and create a *Scatter Plot* or *XY-Plot*.

# +

figure = plt.figure(figsize=(5, 3))

xs = [50, 42, 39, 19, 27]

ys = [100, 87, 23, 47, 57]

axes = figure.add_subplot(1, 1, 1)

axes.plot(xs, ys, "o", color="dimgray")

axes.set_xlim((10, 55))

axes.set_ylim((10, 110))

# -

# Notice that there's no specific reason to start at 0 on the x or y scale.
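# Since neither axis has to start at 0, one option is to derive the limits from the data instead of hard-coding them. The sketch below is only an illustration: `padded_limits` and its `pad_fraction` parameter are hypothetical names, not part of `matplotlib`.

```python
def padded_limits(values, pad_fraction=0.1):
    """Return (low, high) limits that extend the data range by pad_fraction on each side."""
    low, high = min(values), max(values)
    pad = (high - low) * pad_fraction
    return (low - pad, high + pad)


xs = [50, 42, 39, 19, 27]
ys = [100, 87, 23, 47, 57]

# These tuples could be passed to axes.set_xlim and axes.set_ylim.
x_limits = padded_limits(xs)
y_limits = padded_limits(ys)
print(x_limits, y_limits)
```

# The padding keeps the extreme points from sitting directly on the frame of the chart.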

#

# ## Lines

#

# Unless these points follow each other, we should not connect them with lines. If, however, these were readings of two variables that occurred in time, we might connect the points with lines. Note that this uses the Gestalt Principle of Connection...if there is no connection, don't use lines!

# +

figure = plt.figure(figsize=(5, 3))

xs = [50, 42, 39, 19, 27]

ys = [100, 87, 23, 47, 57]

axes = figure.add_subplot(1, 1, 1)

axes.plot(xs, ys, "o-", color="dimgray")

axes.set_xlim((10,60))

axes.set_ylim((10, 110))

# The x values are numeric readings, so the default numeric tick labels are kept.

# -

# A single value recorded over time is often shown with a line, points, or both, and there can be various reasons for each. A line alone is often used when the implication is that the numerical measurement is *continuous*. Points alone are often used when there are breaks in the measurement (for example, no values for weekends). Points and lines can be used together when we want to emphasize both the continuity and the specific values, especially if we are plotting multiple lines.

#

#

# Note that in these examples we treat time as categorical: we create an index (or use the actual millisecond timestamps) for the x-axis and supply tick labels for it. `matplotlib` can also plot `datetime` values directly.
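# One way to build such an index and its tick labels from real dates is sketched here using only the standard library. The dates and values in `readings` are made up for illustration.

```python
from datetime import date

# Hypothetical monthly readings keyed by date.
readings = {
    date(2018, 3, 1): 23,
    date(2018, 1, 1): 100,
    date(2018, 2, 1): 87,
    date(2018, 5, 1): 57,
    date(2018, 4, 1): 47,
}

# Sort by date so that the index follows time order.
dates = sorted(readings)
positions = list(range(1, len(dates) + 1))   # 1-based index used as x values
labels = [d.strftime("%b") for d in dates]   # short month names for the ticks
values = [readings[d] for d in dates]

# positions would go to axes.plot and axes.set_xticks,
# labels to axes.set_xticklabels.
print(positions, labels, values)
```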

# +

figure = plt.figure(figsize=(5, 3))

xs = [1, 2, 3, 4, 5]

ys = [100, 87, 23, 47, 57]

axes = figure.add_subplot(1, 1, 1)

axes.plot(xs, ys, "o-", color="dimgray")

axes.set_xlim((0, 6))

axes.set_ylim((10, 110))

axes.set_xticks(xs)

axes.set_xticklabels(["Jan", "Feb", "Mar", "Apr", "May"])

# -

# Notice how the Gestalt Principle of Closure describes how your perception projects the line out to June.

#

# ## Bars

#

# We've already done Bars. We will reproduce the chart from above.

# +

sales = [29, 23, 18, 17, 13]

width = 1/1.5

x = range( len( sales))

figure, axes = plt.subplots()

axes.bar(x, sales, width, color="dimgray", align="center")

axes.set_xticks([0, 1, 2, 3, 4])

axes.set_xticklabels(["A", "D", "B", "C", "E"])

axes.yaxis.grid(True, which="major")

axes.set_ylim((0, 30))

plt.show()

# -

# So those are the basic chart types. Which one you use, and what secondary changes you make (labels, color, "small multiples") depends on what you're trying to accomplish. Additionally, there will be times when you need to use specialized charts (for example, we'll see a "box-and-whiskers plot" soon) but you should always start with these.

# ## Multiple Data Series

#

# The Playfair Trade Balance chart is an example of a chart with multiple data series (they don't always have to be time related). The two series are exports and imports (and we could derive a 3rd which would be the trade balance). As another example, we can have sales and expenditures for our ten business units.

#

# We can also have data series for different things at different points in time. For example, monthly unemployment rates by state over the last 60 months.

#

# So far our charts have shown a single data series. How do we deal with multiple data series? This largely depends on the base chart (line, bar, dots) and exactly how many series we have...two or 50?

#

# Here's a typical line plot with three data series:

# +

figure = plt.figure(figsize=(5, 3))

xs = [1, 2, 3, 4, 5]

ys1 = [100, 87, 23, 47, 57]

ys2 = [55, 98, 91, 72, 89]

ys3 = [78, 93, 52, 80, 69]

axes = figure.add_subplot(1, 1, 1)

axes.plot(xs, ys1, "o-", color="dimgray", label="ys1")

axes.plot(xs, ys2, "o-", color="dimgray", label="ys2")

axes.plot(xs, ys3, "o-", color="dimgray", label="ys3")

axes.set_xlim((0, 6))

axes.set_ylim((10, 110))

axes.set_xticks(xs)

axes.set_xticklabels(["Jan", "Feb", "Mar", "Apr", "May"])

axes.legend()

plt.show()

plt.close()

# -

# Of course, we can't tell which one is which. We haven't talked about *color* yet but we can use different markers for the lines:

# +

figure = plt.figure(figsize=(5, 3))

xs = [1, 2, 3, 4, 5]

ys1 = [100, 87, 23, 47, 57]

ys2 = [55, 98, 91, 72, 89]

ys3 = [78, 93, 52, 80, 69]

axes = figure.add_subplot(1, 1, 1)

axes.plot(xs, ys1, "o-", color="dimgray", label="ys1")

axes.plot(xs, ys2, "x-", color="dimgray", label="ys2")

axes.plot(xs, ys3, "d-", color="dimgray", label="ys3")

axes.set_xlim((0, 6))

axes.set_ylim((10, 110))

axes.set_xticks(xs)

axes.set_xticklabels(["Jan", "Feb", "Mar", "Apr", "May"])

axes.legend()

plt.show()

plt.close()

# -

# This is still pretty difficult to decode. We have to keep looking at the legend and the points and then back. One of the easier solutions is small multiples...at least once you learn how to get multiple charts in a single plot area.

# +

figure = plt.figure(figsize=(20, 6))

xs = [1, 2, 3, 4, 5]

ys1 = [100, 87, 23, 47, 57]

ys2 = [55, 98, 91, 72, 89]

ys3 = [78, 93, 52, 80, 69]

axes = figure.add_subplot(1, 3, 1)

axes.plot(xs, ys1, "o-", color="dimgray", label="ys1")

axes.set_xlim((0, 6))

axes.set_ylim((10, 110))

axes.set_xticks(xs)

axes.set_xticklabels(["Jan", "Feb", "Mar", "Apr", "May"])

axes.set_xlabel("ys1")

axes = figure.add_subplot(1, 3, 2)

axes.plot(xs, ys2, "o-", color="dimgray", label="ys2")

axes.set_xlim((0, 6))

axes.set_ylim((10, 110))

axes.set_xticks(xs)

axes.set_xticklabels(["Jan", "Feb", "Mar", "Apr", "May"])

axes.set_xlabel("ys2")

axes = figure.add_subplot(1, 3, 3)

axes.plot(xs, ys3, "o-", color="dimgray", label="ys3")

axes.set_xlim((0, 6))

axes.set_ylim((10, 110))

axes.set_xticks(xs)

axes.set_xticklabels(["Jan", "Feb", "Mar", "Apr", "May"])

axes.set_xlabel("ys3")

plt.show()

plt.close()

# -

# Amazingly, it's fairly effortless to detect differences in the series. We can also arrange them vertically if we think that'll make for a better comparison:

# +

figure = plt.figure(figsize=(15, 8))

xs = [1, 2, 3, 4, 5]

ys1 = [100, 87, 23, 47, 57]

ys2 = [55, 98, 91, 72, 89]

ys3 = [78, 93, 52, 80, 69]

axes = figure.add_subplot(3, 1, 1)

axes.plot(xs, ys1, "o-", color="dimgray", label="ys1")

axes.set_xlim((0, 6))

axes.set_ylim((10, 110))

axes.set_xticks(xs)

axes.set_xticklabels(["Jan", "Feb", "Mar", "Apr", "May"])

axes.set_ylabel("ys1")

axes = figure.add_subplot(3, 1, 2)

axes.plot(xs, ys2, "o-", color="dimgray", label="ys2")

axes.set_xlim((0, 6))

axes.set_ylim((10, 110))

axes.set_xticks(xs)

axes.set_xticklabels(["Jan", "Feb", "Mar", "Apr", "May"])

axes.set_ylabel("ys2")

axes = figure.add_subplot(3, 1, 3)

axes.plot(xs, ys3, "o-", color="dimgray", label="ys3")

axes.set_xlim((0, 6))

axes.set_ylim((10, 110))

axes.set_xticks(xs)

axes.set_xticklabels(["Jan", "Feb", "Mar", "Apr", "May"])

axes.set_ylabel("ys3")

plt.show()

plt.close()

# -

# This works for bar charts as well, although if you only have two series for a bar chart, there is an alternative.

| fundamentals_2018.9/visualization/blocks.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # kNN Clustering - DOHMH New York City Restaurant Inspection Results

#

# Find groups of different business names that might be alternative representations of the same venue. This is an example for the **kNN clustering** supported by **openclean**.

# +

# Open the downloaded dataset to extract the relevant columns and records.

import os

from openclean.pipeline import stream

df = stream(os.path.join('data', '43nn-pn8j.tsv.gz'))

# -

# ## Extract Relevant Records

#

# Get set of distinct business names from *DBA* column.

# +

# Get the distinct set of business names. By computing the distinct set of
# business names first, we avoid computing keys for the same business
# name multiple times.

dba = df.select('DBA').distinct()

print('{} distinct business names (for {} total values)'.format(len(dba), sum(dba.values())))

# +

# Cluster business names using kNN clusterer (with the default n-gram setting)

# using the Levenshtein distance as the similarity measure.

# Remove clusters that contain fewer than `minsize` distinct values (for display
# purposes).

from openclean.cluster.knn import knn_clusters

from openclean.function.similarity.base import SimilarityConstraint

from openclean.function.similarity.text import LevenshteinDistance

from openclean.function.value.threshold import GreaterThan

# Minimum cluster size. Use five here (to limit
# the number of clusters that are printed in the next cell).

minsize = 5

clusters = knn_clusters(

values=dba,

sim=SimilarityConstraint(func=LevenshteinDistance(), pred=GreaterThan(0.9)),

minsize=minsize

)

print('{} clusters of size {} or greater'.format(len(clusters), minsize))
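# To get a feel for what a Levenshtein-based similarity above 0.9 means, here is a minimal standalone sketch. It does not use openclean; the normalization (one minus distance divided by the longer length) is an assumption and may differ from what `LevenshteinDistance` does internally. The business names are made up.

```python
def levenshtein(a, b):
    """Edit distance between a and b (insert, delete and substitute each cost 1)."""
    previous = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        current = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            current.append(min(previous[j] + 1,          # delete ca
                               current[j - 1] + 1,       # insert cb
                               previous[j - 1] + cost))  # substitute ca by cb
        previous = current
    return previous[-1]


def similarity(a, b):
    """Normalized similarity in [0, 1]; 1.0 means the strings are identical."""
    longest = max(len(a), len(b))
    if longest == 0:
        return 1.0
    return 1.0 - levenshtein(a, b) / longest


pairs = [("DUNKIN DONUTS", "DUNKIN' DONUTS"), ("STARBUCKS", "SUBWAY")]
for a, b in pairs:
    s = similarity(a, b)
    print(a, "|", b, "->", round(s, 3), s > 0.9)
```

# With a threshold this strict, only names that differ by a character or two over a fairly long string end up in the same cluster.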

# +

# For each cluster print cluster values, their frequency counts,

# and the suggested common value for the cluster.

def print_cluster(cnumber, cluster):
    print('Cluster {} (of size {})\n'.format(cnumber, len(cluster)))
    for val, count in cluster.items():
        print('{} ({})'.format(val, count))
    print('\nSuggested value: {}\n\n'.format(cluster.suggestion()))


# Sort clusters by decreasing number of distinct values.
clusters.sort(key=lambda c: len(c), reverse=True)

for i, cluster in enumerate(clusters):
    print_cluster(i + 1, cluster)

# +

# Perform normalization of business names first to get an

# initial set of clusters using key collision clustering.

# Then run kNN clustering on the collision keys.

from collections import Counter

from openclean.cluster.knn import knn_collision_clusters

clusters = knn_collision_clusters(

values=dba,

sim=SimilarityConstraint(func=LevenshteinDistance(), pred=GreaterThan(0.9)),

minsize=minsize

)

print('{} clusters of size {} or greater'.format(len(clusters), minsize))
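# The idea of key collision clustering can be sketched without openclean: values that normalize to the same key fall into the same group. The `fingerprint` function below is a hypothetical key (lowercase, punctuation removed, unique tokens sorted) and is not necessarily the key openclean uses by default; the names are made up.

```python
import string
from collections import defaultdict


def fingerprint(value):
    """Hypothetical collision key: lowercase, drop punctuation, sort unique tokens."""
    cleaned = value.lower().translate(str.maketrans("", "", string.punctuation))
    return " ".join(sorted(set(cleaned.split())))


names = ["Joe's Pizza", "JOES PIZZA", "Pizza Joes", "Blue Bottle Coffee"]

# Values with the same key collide and form one group.
groups = defaultdict(list)
for name in names:
    groups[fingerprint(name)].append(name)

for key, members in groups.items():
    print(repr(key), members)
```

# Running the similarity search on the keys instead of the raw values means that spelling variants already merged by the key are only compared once.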

# +

# Print resulting clusters.

clusters.sort(key=lambda c: len(c), reverse=True)

for i, cluster in enumerate(clusters):
    print_cluster(i + 1, cluster)

| examples/notebooks/nyc-restaurant-inspections/NYC Restaurant Inspections - kNN Clusters for Business Name.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# In this part of the exercise, you will implement regularized logistic regression to predict whether microchips from a fabrication plant pass quality assurance (QA). During QA, each microchip goes through various tests to ensure it is functioning correctly.

# Suppose you are the product manager of the factory and you have the test results for some microchips on two different tests. From these two tests, you would like to determine whether the microchips should be accepted or rejected. To help you make the decision, you have a dataset of test results on past microchips, from which you can build a logistic regression model.

#import the libraries

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

# %matplotlib inline

#Check out the data file

# !head ../data/ex2data2.txt

data = pd.read_csv('../data/ex2data2.txt', sep=',', header=None, names=['Test1', 'Test2', 'Pass'])

data.head()

data.describe()

# The scales of the two test values are similar, so I will not apply standardization. If the means and standard deviations were far apart, it might have made sense to standardize the data to help the optimization. We can check this on a box plot.

plt.figure()

plt.boxplot([data['Test1'], data['Test2']]);

# Let's change the visual style:

plt.style.use('ggplot')

# Finally, let's visualize the data at hand:

# +

fig, ax = plt.subplots(figsize=(12, 8))

ax.scatter(data[data["Pass"] == 0]["Test1"], data[data["Pass"] == 0]["Test2"],

marker='x', label='Failed')

ax.scatter(data[data["Pass"] == 1]["Test1"], data[data["Pass"] == 1]["Test2"],

label='Passed')

ax.legend(frameon = True, fontsize="large", facecolor = "White", framealpha = 0.7)

ax.set_xlabel('Test1')

ax.set_ylabel('Test2');

# -

# A linear separator will not cut it here, so we are going to build polynomial features up to the 6th degree. That is overkill, but we will use regularization to shrink the unnecessary terms.

#

# There is a MapFeature module in the homework written in Octave code. Using this code and the code from @jdwittenauer repo, I will transform the Dataset at hand to have the following transformation on our dataframe:

#

# $mapFeature(x) = [x_1, x_2, x_1^2, x_1 x_2,x_2^2,..., x_1x_2^5,x_2^6]$
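# The number of terms in this mapping can be counted with a short sketch. `polynomial_exponents` is a hypothetical helper written for this illustration; it lists the pair (power of $x_1$, power of $x_2$) for every term of total degree 1 to 6.

```python
def polynomial_exponents(max_degree):
    """List (power of x1, power of x2) for every term of total degree 1..max_degree."""
    return [(i - j, j) for i in range(1, max_degree + 1) for j in range(i + 1)]


exponents = polynomial_exponents(6)
n_terms = len(exponents)       # polynomial terms only
n_features = n_terms + 1       # plus the constant column of ones
print(n_terms, n_features)
print(exponents[:5])
```

# The first five pairs correspond to $x_1$, $x_2$, $x_1^2$, $x_1 x_2$ and $x_2^2$, in the same order as the formula.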

# +

#let's first keep original data set as I will modify the dataframe

data_or = data.copy()

#There is the MapFeature.m

#I think there was a mistake in his implementation, I corrected it

# range(1, degree) stops before 7, so this generates every term of total degree 1 to 6
degree = 7
for i in range(1, degree):
    for j in range(0, i+1):
        data["T" + str(i-j) + str(j)] = np.power(data["Test1"], i-j) * np.power(data["Test2"], j)

# -

data.drop(['Test1','Test2'], axis=1, inplace=True)

data.insert(1, "Ones", 1)

data.head()

# "As a result of this mapping, our vector of two features (the scores on two QA tests) has been transformed into a 28-dimensional vector. "

#

# We have 28 columns + 1 column for results as well. Finally define the numpy input and output arrays, initial theta value and check the dimensions for them

# +

X = data.iloc[:,1:]

y = data.iloc[:,0]

X = np.asarray(X.values)

y = np.asarray(y.values)

theta = np.zeros(X.shape[1])

#check the dimensions

X.shape, y.shape, theta.shape

# -

# **Regularization**

#

# Regularization means we will add the following item to our old cost function:

#

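# $J(\theta) = J_{\text{old}}(\theta) + \frac{\lambda}{2n} \sum_{j=1}^{k-1}\theta_j^2$

# Here $n$ is the number of samples and $\theta$ has $k$ elements, $\theta_0, \dots, \theta_{k-1}$; the intercept $\theta_0$ is not penalized.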

# +

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def h(theta, X):
    """
    Hypothesis Function where
    X is an n x k dimensional array of explanatory variables
    theta is an array with k elements of multipliers for linear function
    Result will be one dimensional vector of n variables
    """
    return sigmoid(np.dot(X, theta))

# -

#Now the cost function

def cost_function(theta, Lambda, X, y):
    """
    This is a cost function that returns the cost of theta given X and y
    X is an n x k dimensional array of explanatory variables
    y is a one dimensional array with n elements of explained variables
    theta is a vector with k elements of multipliers for linear function
    """
    item1 = - y.flatten() * np.log(h(theta, X))
    item2 = -(1 - y.flatten()) * np.log(1 - h(theta, X))
    item3 = Lambda/(2*X.shape[0]) * np.sum(np.power(theta, 2)[1:])
    return np.sum(item1 + item2) / (X.shape[0]) + item3

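# $\frac{\partial J(\theta)}{\partial \theta_{j}} = \frac{1}{n} \sum\limits_{i=1}^n (h_{\theta}(x^{i}) - y^i)x_j^i + \frac{\lambda}{n}\theta_j$ for $j \geq 1$; the $\frac{\lambda}{n}\theta_j$ term is dropped for $j = 0$.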

def gradient(theta, Lambda, X, y):
    """
    This function will take in theta value and calculate the gradient
    descent values.
    X is an n x k matrix of explanatory variables
    y is a n sized array of explained variables
    theta is a vector with k elements of multipliers for linear function
    """
    #errors will be used more than once, so let's compute it once and store it
    errors = h(theta, X) - y
    correction2 = (Lambda/X.shape[0]) * theta
    correction2[0] = 0.0
    correction = np.sum(errors.reshape((X.shape[0], 1)) * X, axis=0) * (1.0 / X.shape[0])
    return correction + correction2

# The cost for Lambda=1 and theta=0 is:

theta=np.zeros(X.shape[1])

cost_function(theta, 1, X, y)
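# With theta = 0 every prediction is 0.5 and the penalty vanishes, so the cost should equal ln 2 (about 0.693) whatever the data. A second sanity check is to compare the analytic gradient with finite differences. The sketch below does both in plain Python on a tiny made-up dataset; `cost`, `grad` and `numeric_grad` are stand-ins written for this illustration, not the notebook's NumPy functions.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def cost(theta, lam, X, y):
    """Regularized logistic cost; theta[0] (the intercept) is not penalized."""
    n = len(X)
    total = 0.0
    for row, label in zip(X, y):
        p = sigmoid(sum(t * x for t, x in zip(theta, row)))
        total += -label * math.log(p) - (1 - label) * math.log(1 - p)
    penalty = lam / (2 * n) * sum(t * t for t in theta[1:])
    return total / n + penalty


def grad(theta, lam, X, y):
    """Analytic gradient of cost()."""
    n = len(X)
    g = [0.0] * len(theta)
    for row, label in zip(X, y):
        error = sigmoid(sum(t * x for t, x in zip(theta, row))) - label
        for j, x in enumerate(row):
            g[j] += error * x / n
    for j in range(1, len(theta)):
        g[j] += lam / n * theta[j]
    return g


def numeric_grad(theta, lam, X, y, eps=1e-6):
    """Central finite differences, one coordinate at a time."""
    g = []
    for j in range(len(theta)):
        up = theta[:j] + [theta[j] + eps] + theta[j + 1:]
        down = theta[:j] + [theta[j] - eps] + theta[j + 1:]
        g.append((cost(up, lam, X, y) - cost(down, lam, X, y)) / (2 * eps))
    return g


# Tiny made-up dataset; the first column is the constant 1.
X_demo = [[1.0, 0.5, -1.2], [1.0, -0.3, 0.8], [1.0, 1.5, 0.1], [1.0, -1.0, -0.4]]
y_demo = [1, 0, 1, 0]
theta_demo = [0.1, -0.2, 0.3]

cost_at_zero = cost([0.0, 0.0, 0.0], 1.0, X_demo, y_demo)
max_diff = max(abs(a - b) for a, b in zip(grad(theta_demo, 1.0, X_demo, y_demo),
                                          numeric_grad(theta_demo, 1.0, X_demo, y_demo)))
print(cost_at_zero, max_diff)
```

# A large difference between the two gradients usually points to a sign error or to the intercept being penalized by mistake.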

# **Finding the Parameter**

# Finally let's apply our optimization method and find the optimal theta values

# +

import scipy.optimize as opt

Lambda = 1.0

theta = np.zeros(X.shape[1])

result = opt.minimize(fun=cost_function, method='TNC',

jac= gradient, x0=theta, args=(Lambda, X,y), options={'maxiter':400})

result

# -

# Interesting tidbit: if you don't pass the gradient function, the optimization takes about 750 iterations, but with it only about 32. The resulting costs are very close.

# Let's check the accuracy rate of our prediction function.

# +

theta_opt = result['x']

def prediction_function(theta, X):
    return h(theta, X) >= 0.5

total_corrects = np.sum((y.flatten() == prediction_function(theta_opt, X)))

total_dpoints = X.shape[0]

accuracy_rate = total_corrects/total_dpoints

accuracy_rate

# -

# **Plotting the decision boundary**

#

# We will plot the decision boundary. In order to do that, I will create a grid of Test1 and Test2 values and compute the corresponding $h_{\theta}(X)$ at each grid point (actually we only need $\theta X$, since the sigmoid $h$ is a one-to-one function). With this data we can use the contour plotting functions to find where $\theta X = 0$, since this is where the decision boundary is.
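# The reason it is enough to look at $\theta X = 0$ can be checked numerically: the sigmoid is strictly increasing and equals 0.5 at 0, so $h \geq 0.5$ exactly when $\theta X \geq 0$. A minimal sketch with the standard library:

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


# The prediction rule h >= 0.5 agrees with the sign test z >= 0 for every z.
samples = [-2.0, -0.1, 0.0, 0.1, 2.0]
for z in samples:
    print(z, round(sigmoid(z), 3), sigmoid(z) >= 0.5, z >= 0)
```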

#

# In order to do this, we need to write the MapFeature function explicitly. It should take in arrays or values and return their polynomial terms.

def MapFeature(x1, x2):
    """
    This takes in two n elements vector arrays, then builds a
    n x 28 dimensional array of features
    """
    #flatten the vectors in case:
    x1 = x1.flatten()
    x2 = x2.flatten()
    num_ele = len(x1)
    degrees = 6
    res_ar = np.ones( (len(x1), 1) )
    for i in range(1, degrees+1):
        for j in range(0, i+1):
            res1 = np.power(x1, i-j)
            res2 = np.power(x2, j)
            res3 = np.multiply(res1, res2).reshape( (num_ele, 1) )
            res_ar = np.hstack(( res_ar, res3 ))
    return res_ar

# Let's check that our function works properly:

#The following code checks if there are any non-equal elements.

np.count_nonzero(MapFeature(X[:,1],X[:,2]) != X)

# Now let's define a function to draw contours. I have used some of the code from the homework and also from @kaleko's website. To reproduce the image from the homework, I had to transpose zvals as Prof Ng did in his code. The reason is that the loop fills `zvals[i, j]` for `xvals[i]` and `yvals[j]`, whereas `contour` expects rows to correspond to y values and columns to x values.

#

# Our function will also show the accuracy rate before it shows the graph it drew. Quite handy.

def Draw_Contour(X, y, Lambda):
    #First we need to find optimal Theta
    theta_initial = np.zeros(X.shape[1])
    result = opt.minimize(fun=cost_function, method='TNC', jac=gradient,
                          x0=theta_initial, args=(Lambda, X, y),
                          options={'maxiter': 400, 'disp': False})
    theta = result['x']

    #Next define the grids
    xvals = np.linspace(-1, 1.5, 50)
    yvals = np.linspace(-1, 1.5, 50)
    zvals = np.zeros((len(xvals), len(yvals)))
    for i, xv in enumerate(xvals):
        for j, yv in enumerate(yvals):
            features = MapFeature(np.array(xv), np.array(yv))
            #features has a single row, so take the single value of the product
            zvals[i, j] = np.dot(features, theta)[0]
    #contour expects zvals[row, col] to match (yvals[row], xvals[col]), but the
    #loop above stored zvals[i, j] for (xvals[i], yvals[j]), hence the transpose.
    zvals = zvals.transpose()

    #Now draw the graph. I reused some code from before.
    fig, ax = plt.subplots(figsize=(12, 8))
    ax.scatter(X[(y == 0), 1], X[(y == 0), 2], marker='x', label='Failed')
    ax.scatter(X[(y == 1), 1], X[(y == 1), 2], label='Passed')
    mycontour = ax.contour(xvals, yvals, zvals, [0])
    myfmt = {0: 'Lambda = %d' % Lambda}
    ax.clabel(mycontour, inline=1, fontsize=15, fmt=myfmt)
    ax.legend(frameon=True, fontsize="large", facecolor="White", framealpha=0.7)
    ax.set_xlabel('Test1')
    ax.set_ylabel('Test2')

    #Our function will also show the accuracy rate as it draws the graph
    total_corrects = np.sum((y.flatten() == prediction_function(theta, X)))
    total_dpoints = X.shape[0]
    accuracy_rate = total_corrects/total_dpoints
    return accuracy_rate

# Let's try our function for different Lambda values: close to 0 (0.00001), 1 and 10

Draw_Contour(X, y, 0.00001)

Draw_Contour(X, y, 1.0)

Draw_Contour(X, y, 10)

| Ex 2 - Logistic Regression/Logistic Regression with Regularization.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Graphs: binary search trees and quadtrees

# Graphs are a very important notion in computer science. They will be very useful to us for representing networks (transport, energy distribution, etc.) and also for building "search spaces".

# ## What is a graph?

# The history of graphs begins in 1735 in the city of Königsberg (today the Russian exclave of Kaliningrad).

#

#

#

# The mathematician <NAME> asked whether there is a walk through the streets of Königsberg that, starting from any chosen point, crosses each bridge (there are 7) exactly once and returns to its starting point.

# The answer is no, but the mathematical modelling consists of representing the problem of the seven bridges of Königsberg as a graph:

#

#

# A graph is made of *nodes* (here A, B, C, D and E) and *edges* (I, II, III, IV, V, VI and VII). It is possible, for example, to go from node A to node B and then come back to A. This is called a *cycle*.

# A *tree* is a connected graph without cycles.

#

#

# How can we code a graph in Python? We can use a dictionary:

# dictionary konigsberg = {...}

# We can of course also use classes.
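# As an illustration of the dictionary idea, here is a minimal sketch. It uses the classic four-land-mass formulation of the bridge problem, which is an assumption: the node names (`island`, `north`, `south`, `east`) and the variable `bridges` are hypothetical and do not follow the labels of the figure. Each entry maps a land mass to the number of bridges leading to each neighbour.

```python
# Multigraph as a dictionary of dictionaries: bridges[a][b] is the number
# of bridges between land masses a and b (assumed classic layout).
bridges = {
    "island": {"north": 2, "south": 2, "east": 1},
    "north": {"island": 2, "east": 1},
    "south": {"island": 2, "east": 1},
    "east": {"island": 1, "north": 1, "south": 1},
}


def degree(graph, node):
    """Number of bridge ends touching node."""
    return sum(graph[node].values())


def has_eulerian_circuit(graph):
    """For a connected graph, a closed walk that uses every edge exactly once
    exists exactly when every node has an even degree (Euler's criterion)."""
    return all(degree(graph, node) % 2 == 0 for node in graph)


degrees = {node: degree(bridges, node) for node in bridges}
total_bridges = sum(degrees.values()) // 2   # each bridge is counted at both ends
print(degrees, total_bridges, has_eulerian_circuit(bridges))
```

# Every land mass has an odd number of bridges, which is why the walk asked for in the problem does not exist.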

#

# ## Node

class Node:
    """Definition of a node"""

    # Node constructor
    def __init__(self, label = "NaN", content = 0):
        self.label = label
        self.content = content

    # Method used by print()
    def __str__(self):
        t = "Node(" + self.label + ", " + str(self.content) + ")"
        return t

a = Node(label = "A", content = 6)

type(a)

print(a)

# ## Binary search tree

#

# Let's start by modelling a binary search tree (this will also be covered in the algorithms course):

#

#

class ArbreBin:
    """Binary tree"""

    # Binary tree constructor
    # (arbre_g: left subtree, racine: root node, arbre_d: right subtree)
    def __init__(self, arbre_g, racine, arbre_d):
        self.arbre_g = arbre_g
        self.racine = racine
        self.arbre_d = arbre_d

    # To do!
    def __str__(self):
        pass

af = ArbreBin(None, Node('F', 8), None)

ag = ArbreBin(None, Node('G', 11), None)

ad = ArbreBin(af, Node('D', 9), ag)

ae = ArbreBin(None, Node('E', 24), None)

ac = ArbreBin(ad, Node('C', 12), ae)

ab = ArbreBin(None, Node('B', 4), None)

aa = ArbreBin(ab, Node('A', 6), ac)

type(aa)

# +

# print(aa) should give:

# ArbreBin(ArbreBin(None, Node(B, 4), None), Node(A, 6), ArbreBin(ArbreBin(ArbreBin(None, Node(F, 8), None), Node(D, 9), ArbreBin(None, Node(G, 11), None)), Node(C, 12), ArbreBin(None, Node(E, 24), None)))

# -

class ArbreBin:
    """Binary tree"""

    # Binary tree constructor
    def __init__(self, arbre_g, racine, arbre_d):
        self.arbre_g = arbre_g
        self.racine = racine
        self.arbre_d = arbre_d

    def __str__(self):
        if (self.arbre_g is None):
            t_g = "None"
        else:
            t_g = self.arbre_g.__str__()
        if (self.arbre_d is None):
            t_d = "None"
        else:
            t_d = self.arbre_d.__str__()
        return "ArbreBin(" + t_g + ", " + self.racine.__str__() + ", " + t_d + ")"

    # Adds a node to the tree (skeleton to complete)
    #def add(self, node):
        # If strictly smaller, add to the left
        #if (node.content < self.racine.content):
            #if self.arbre_g is None:
                #left = ...
            #else:
                #left = ...
            #return ArbreBin(...)
        # Otherwise (greater or equal), add to the right
        #else:
            #if self.arbre_d is None:
                #right = ArbreBin(...)
            #else:
                #right = ...
            #return ArbreBin(...)

aa = ArbreBin(None, Node('A', 6), None)

#new_aa = aa.add(Node('B', 7))

# +

#print(new_aa)

# -

class ArbreBin:
    """Binary tree"""

    # Binary tree constructor
    def __init__(self, arbre_g, racine, arbre_d):
        self.arbre_g = arbre_g
        self.racine = racine
        self.arbre_d = arbre_d

    def __str__(self):
        if (self.arbre_g is None):
            t_g = "None"
        else:
            t_g = self.arbre_g.__str__()
        if (self.arbre_d is None):
            t_d = "None"
        else:
            t_d = self.arbre_d.__str__()
        return "ArbreBin(" + t_g + ", " + self.racine.__str__() + ", " + t_d + ")"

    # Adds a node to the tree and returns the new tree
    def add(self, node):
        # If strictly smaller, add to the left
        if (node.content < self.racine.content):
            if self.arbre_g is None:
                left = ArbreBin(None, node, None)
            else:
                left = self.arbre_g.add(node)
            return ArbreBin(left, self.racine, self.arbre_d)
        # Otherwise (greater or equal), add to the right
        else:
            if self.arbre_d is None:
                right = ArbreBin(None, node, None)
            else:
                right = self.arbre_d.add(node)
            return ArbreBin(self.arbre_g, self.racine, right)

    # Tests whether the tree contains content (skeleton to complete)
    #def contains(self, content):
        #if (content == self.racine.content):
            #return ...
        #elif (content < self.racine.content):
            #return ...
        #else:
            #return ...

aa = ArbreBin(None, Node('A', 6), None)

#new_aa = aa.add(Node('D', 9)).add(Node('E', 24)).add(Node('F', 8)).add(Node('G', 11))

# +

#new_aa.contains(8)

# +

#new_aa.contains(21)

# -

# ## OK, but what are binary trees for?

#

# Let's go back to the sequential search algorithm seen in lab session 3 (TP3).

def rechercher(tableau, valeur):
    isFound = False
    j = 0
    # Scan the list from left to right until the value is found
    while (j < len(tableau)) and not isFound:
        x = tableau[j]
        j += 1
        if (x == valeur):
            isFound = True
    return isFound

rechercher([1, 4, 67, 8, 5], 8)

rechercher([1, 4, 67, 8, 5], 0)

# Exercise: add to the ArbreBin class a method that builds a binary tree from an array (list).

class ArbreBin:
    """Binary tree"""

    # Binary tree constructor
    def __init__(self, arbre_g, racine, arbre_d):
        self.arbre_g = arbre_g
        self.racine = racine
        self.arbre_d = arbre_d

    def __str__(self):
        if (self.arbre_g is None):
            t_g = "None"
        else:
            t_g = self.arbre_g.__str__()
        if (self.arbre_d is None):
            t_d = "None"
        else:
            t_d = self.arbre_d.__str__()
        return "ArbreBin(" + t_g + ", " + self.racine.__str__() + ", " + t_d + ")"

    # Adds a node to the tree and returns the new tree
    def add(self, node):
        # If strictly smaller, add to the left
        if (node.content < self.racine.content):
            if self.arbre_g is None:
                left = ArbreBin(None, node, None)
            else:
                left = self.arbre_g.add(node)
            return ArbreBin(left, self.racine, self.arbre_d)
        # Otherwise (greater or equal), add to the right
        else:
            if self.arbre_d is None:
                right = ArbreBin(None, node, None)
            else:
                right = self.arbre_d.add(node)
            return ArbreBin(self.arbre_g, self.racine, right)

    # Adds a list of integers to a tree
    # To do!
    def addL(self, tableau):
        pass

    # Tests whether the tree contains content
    def contains(self, content):
        if (content == self.racine.content):
            return True
        elif (content < self.racine.content):
            return (not (self.arbre_g is None)) and self.arbre_g.contains(content)
        else:
            return (not (self.arbre_d is None)) and self.arbre_d.contains(content)

aa = ArbreBin(None, Node('Root'), None)

#new_aa = aa.addL([0, 15, 27])

# +

#print(new_aa)

# -

print(aa)

# Now let's see which algorithm is faster: rechercher or contains?

# +

# To do, in the same way as the comparison of the sorting algorithms

# -

# We therefore see that searching in a binary tree is much more efficient than a sequential search, provided the tree stays reasonably balanced.
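# One way to make this comparison concrete, without timing anything, is to count how many elements each search inspects. The sketch below is standalone and does not use `ArbreBin`: the tree is a plain nested list `[left, value, right]`, and the helper names (`sequential_steps`, `bst_insert`, `bst_steps`) are hypothetical. The data is random, so the tree is only balanced on average.

```python
import random


def sequential_steps(values, target):
    """Number of elements inspected by a left-to-right scan."""
    for steps, value in enumerate(values, start=1):
        if value == target:
            return steps
    return len(values)


def bst_insert(tree, value):
    """tree is None or a list [left, value, right]; returns the root."""
    if tree is None:
        return [None, value, None]
    index = 0 if value < tree[1] else 2   # smaller goes left, otherwise right
    tree[index] = bst_insert(tree[index], value)
    return tree


def bst_steps(tree, target):
    """Number of nodes visited while searching for target."""
    steps = 0
    while tree is not None:
        steps += 1
        if target == tree[1]:
            break
        tree = tree[0] if target < tree[1] else tree[2]
    return steps


random.seed(0)
values = random.sample(range(100_000), 1000)   # distinct values in random order
tree = None
for value in values:
    tree = bst_insert(tree, value)

# Search for every stored value once with each method.
seq_total = sum(sequential_steps(values, v) for v in values)
bst_total = sum(bst_steps(tree, v) for v in values)
print(seq_total / len(values), bst_total / len(values))
```

# If the values were inserted in sorted order instead, the tree would degenerate into a chain and lose this advantage.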

# ## OK, but what does this have to do with geomatics?

#

# Ideas similar to binary trees (quadtrees, R-trees, etc.) are used in many fields (databases, image synthesis and compression, simulations, video games and geomatics) to "index" data and considerably improve performance.

#

#

#

# Suppose we want to find which objects are close to B. We have to compute as many distances as there are objects, even though some objects are obviously not "close". And if we want to find all the objects that lie within a given distance of one another (for example, where are all the cell towers located less than 100 m from a school?), the number of computations grows even faster, as the square of the number of objects: 100 for 10 objects, 10,000 for 100 objects, 1,000,000 for 1,000 objects, etc.
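# The quadratic growth is easy to observe with a brute-force sketch that counts every ordered pair it examines, which is the counting convention behind the figures above. The function name `close_pairs` and the random points are made up for this illustration.

```python
import math
import random


def close_pairs(points, radius):
    """Brute force: examine every ordered pair (i, j) and keep the close ones."""
    examined = 0
    pairs = []
    for i, (xa, ya) in enumerate(points):
        for j, (xb, yb) in enumerate(points):
            examined += 1
            if i != j and math.hypot(xa - xb, ya - yb) <= radius:
                pairs.append((i, j))
    return pairs, examined


random.seed(1)
counts = {}
for n in (10, 100, 1000):
    points = [(random.uniform(0, 10), random.uniform(0, 10)) for _ in range(n)]
    _, counts[n] = close_pairs(points, radius=1.0)
print(counts)
```

# A spatial index aims to avoid most of these comparisons by only looking at objects stored in nearby cells.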

#

#

#

# Quadtrees are quaternary trees: each internal node has 4 children. They make it possible to index objects in the plane. When the number of objects in the region considered exceeds an arbitrary threshold (1 in our example), the region is cut into 4 sub-regions, and so on recursively. To find the points "close" to B, we only consider the points placed in the same node as B, or those belonging to "nearby" nodes (which is easy to compute because we know the coordinates of the corners of each region).

# (the code below is not mine; I adapted it from [here](https://jrtechs.net/data-science/implementing-a-quadtree-in-python))

class Point():
    def __init__(self, x, y):
        self.x = x
        self.y = y


class Node():
    def __init__(self, x0, y0, w, h, points):
        self.x0 = x0
        self.y0 = y0
        self.width = w
        self.height = h
        self.points = points
        self.children = []

    def get_width(self):
        return self.width

    def get_height(self):
        return self.height

    def get_points(self):
        return self.points

# +

def recursive_subdivide(node, k):
    if len(node.points) > k:
        w_ = float(node.width/2)
        h_ = float(node.height/2)

        p = contains(node.x0, node.y0, w_, h_, node.points)
        x1 = Node(node.x0, node.y0, w_, h_, p)
        recursive_subdivide(x1, k)

        p = contains(node.x0, node.y0+h_, w_, h_, node.points)
        x2 = Node(node.x0, node.y0+h_, w_, h_, p)
        recursive_subdivide(x2, k)

        p = contains(node.x0+w_, node.y0, w_, h_, node.points)
        x3 = Node(node.x0 + w_, node.y0, w_, h_, p)
        recursive_subdivide(x3, k)

        p = contains(node.x0+w_, node.y0+h_, w_, h_, node.points)
        x4 = Node(node.x0+w_, node.y0+h_, w_, h_, p)
        recursive_subdivide(x4, k)

        node.children = [x1, x2, x3, x4]


def contains(x, y, w, h, points):
    pts = []
    for point in points:
        if (point.x >= x) and (point.x <= x + w) and (point.y >= y) and (point.y <= y + h):
            pts.append(point)
    return pts


def find_children(node):
    if not node.children:
        return [node]
    else:
        children = []
        for child in node.children:
            children += (find_children(child))
    return children

# +

import random

import matplotlib.pyplot as plt # plotting libraries

import matplotlib.patches as patches

class QTree():
    def __init__(self, k, n):
        self.threshold = k
        self.points = [Point(random.uniform(0, 10), random.uniform(0, 10)) for x in range(n)]
        self.root = Node(0, 0, 10, 10, self.points)

    def add_point(self, x, y):
        self.points.append(Point(x, y))

    def get_points(self):
        return self.points

    def subdivide(self):
        recursive_subdivide(self.root, self.threshold)

    def graph(self):
        fig = plt.figure(figsize=(12, 8))
        plt.title("Quadtree")
        c = find_children(self.root)
        print("Number of segments: %d" %len(c))
        areas = set()
        for el in c:
            areas.add(el.width*el.height)
        print("Minimum segment area: %.3f units" %min(areas))
        for n in c:
            plt.gcf().gca().add_patch(patches.Rectangle((n.x0, n.y0), n.width, n.height, fill=False))
        x = [point.x for point in self.points]
        y = [point.y for point in self.points]
        plt.plot(x, y, 'ro') # plots the points as red dots
        plt.show()

# -

test = QTree(3, 100)

test.subdivide()

test.graph()
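# The notebook builds and draws the tree but does not show the "nearby points" lookup it was introduced for. Below is a minimal, self-contained sketch of one way to do it. `Pt`, `Leaf`, `rect_distance` and `neighbours` are hypothetical names invented for this example; `Leaf` only mimics the attributes (`x0`, `y0`, `width`, `height`, `points`) of the leaf nodes returned by `find_children`.

```python
import math
from collections import namedtuple

# Hypothetical stand-ins for the notebook's Point and leaf Node objects
Pt = namedtuple("Pt", "x y")
Leaf = namedtuple("Leaf", "x0 y0 width height points")


def rect_distance(leaf, x, y):
    """Distance from (x, y) to the nearest point of the leaf's rectangle (0 if inside)."""
    dx = max(leaf.x0 - x, 0.0, x - (leaf.x0 + leaf.width))
    dy = max(leaf.y0 - y, 0.0, y - (leaf.y0 + leaf.height))
    return math.hypot(dx, dy)


def neighbours(leaves, x, y, radius):
    """Return the points within `radius` of (x, y), skipping cells that are too far away."""
    found = []
    for leaf in leaves:
        if rect_distance(leaf, x, y) > radius:
            continue  # the whole cell is out of range: none of its points are tested
        for p in leaf.points:
            if math.hypot(p.x - x, p.y - y) <= radius:
                found.append(p)
    return found


leaves = [
    Leaf(0, 0, 5, 5, [Pt(1, 1), Pt(4, 4)]),
    Leaf(5, 5, 5, 5, [Pt(9, 9)]),
]
print(neighbours(leaves, 1.5, 1.5, 1.0))
```

# This sketch still loops over every leaf; a fuller version would descend from the root and prune whole subtrees. Because `contains` uses inclusive bounds, a point lying exactly on a cell border can belong to two leaves and would then be reported twice.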

# + active=""

#

| programmation_python/TP6.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .jl

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Julia 0.6.2

# language: julia

# name: julia-0.6

# ---

# # Types

# The **type** of a variable tells us the "shape" of the variable, i.e. how to treat / interpret the data stored in the block of memory associated with that variable.

#

# Although it is possible to do much in Julia without thinking or worrying about types, they are always just under the surface, and the true power of the language becomes available through their use.

x = 3

typeof(x)

# For basic ("primitive") types, we can see the raw bits that are associated to a variable:

bits(x) # bitstring in Julia 0.7

y = 3.0

bits(y)

# The internal representations are different, corresponding to the different types.

# We can treat the storage as being of a different type:

z = reinterpret(Int, y)

h = hex(z)

z1 = parse(Int, h, 16) # base 16

bits(z1)

# # Defining our own types

# We have previously seen some examples of how to define a new type in Julia.

#

# A type definition can be thought of as a template for a kind (type) of box that contains certain **fields** holding data.

#

# One of the simplest examples is a "volume" type, representing the volume of some physical or mathematical object:

struct Vol

value

end

Vol

# This defines a new type, called `Vol`, containing one field, called `value`.

#

# It does not yet create an object that has that type. That is done by calling a **constructor** -- a special function with the same name as the type:

V = Vol(3)

V

# `V` is a Julia variable that is of type `Vol`:

typeof(V)

# Its "shape" is thus that of a box that itself contains a single variable, which we can access as

V.value

# Since we defined the type `Vol` as `struct`, we cannot modify the contents of the object once it has been created:

V.value = 10

# We could instead make a mutable object:

mutable struct Vol1

value

end

V = Vol1(3)

V.value

V.value = 10

V

# We can change how our objects look by defining a new method of the `show` function:

# +

import Base: show

show(io::IO, V::Vol1) = print(io, "Volume with value ", V.value)

# -

V = Vol1(3)

# We can define e.g. the sum of two volumes:

# +

import Base: +

+(V1::Vol1, V2::Vol1) = Vol1(V1.value + V2.value)

# -

V + V

# But the following does not work, since we haven't defined `*` yet on our type:

2V

# **Exercise**: Define `*` of two `Vol`s and of a `Vol` and a number.

# ## Type annotations

# There is a problem with our definition:

Vol1("hello")

# It doesn't make sense to have a string as a volume. So we should **restrict** which kinds of `value` are allowed, by specifying ("annotating") the type of `value` in the type definition:

struct Vol2

value::Float64

end

Vol2(3.1)

Vol2("hello")

# # Parameterizing a type

# In different contexts, we may want integer volumes, or rational volumes, rather than `Vol`s which contain a floating-point number, e.g. for a 3D printer that makes everything out of cubes of the same size.

#

# We could define the following sequence of different types.

# +

struct Vol_Int
    value::Int
end

struct Vol_Float
    value::Float64
end

struct Vol_Rational
    value::Rational{Int64}
end

# -

Vol_Int(3)

Vol_Int(3.1)

Vol_Float(3.1)

# But clearly this is the wrong way to do it, since we're repeating ourselves, leading to inefficiency and buggy code. (https://en.wikipedia.org/wiki/Don't_repeat_yourself).

#

# Can't Julia itself automatically generate all of these different types?

# ## Specifying type parameters

# What we would like to do is tell Julia that the **type** itself (here, `Int`, `Float64` or `Rational{Int64}`)

# is a special kind of **parameter** that we will specify.

#

# To do so, we use curly braces (`{`, `}`) to specify such **type parameter** `T`:

struct Vol3{T}

value

end

# We can now create objects of type `Vol3` with any type `T`:

V = Vol3{Float64}(3.1)

typeof(V)

V2 = Vol3{Int64}(4)

typeof(V2)

# We see that the types of `V` and `V2` are *different* (but related), and we have achieved what we wanted.

# The type `Vol3` is called a **parametric type**, with **type parameter** `T`. Parametric types may have several type parameters, as we have already seen with `Array`s:

a = [3, 4, 5]

typeof(a)

# The type parameters here are `Int64`, which is itself a type, and the number `1`.

# ## Improving the solution

# The problem with this solution is the following, which echoes what happened at the start of the notebook:

V = Vol3{Int64}(3.1)

typeof(V.value)

# The type `Float64` of the field `V.value` is distinct from the type parameter `Int64` we specified.

# So we have not yet actually captured the pattern of `Vol2`,

# which restricted the `value` field to be of the desired type.

#

# We solve this by specifying the field to **also be of type `T`**, with the **same `T`**:

struct Vol4{T}

value::T

end

# For example,

V = Vol4{Int64}(3)

# If necessary and possible, the argument to the constructor will be converted to the parametric type `T` that we specify:

V = Vol4{Int64}(3.0)

typeof(V.value)

# Now when we try to do

Vol4{Int64}(3.1)

# Julia throws an error, namely an `InexactError()`.

# This means that we are trying to "force" the number 3.1 into a "smaller" type `Int64`, i.e. one in which it can't be represented.

# However, now we seem to be repeating ourselves again: We know that `3.1` is of type `Float64`, and in fact Julia knows this too; so it seems redundant to have to specify it. Can't Julia just work it out? Indeed, it can!:

Vol4(3.1)

# Here, Julia has **inferred** the type `T` from the "inside out". That is, it performed pattern matching to realise that `value::T` is **matched** if `T` is chosen to be `Float64`, and then propagated this same value of `T` **upwards** to the type parameter.

# ## More fields

# **Exercise**: Define a `Point` type that represents a point in 2D, with two fields. What are the options for this type, mirroring the types `Vol1` through `Vol4`?

# ## Summary

# With parametric types, we have the following possibilities:

#

# 1. Julia converts (if possible) to the header type

#

# 2. Julia infers the header type from the inside (through the argument)

#

# # Constructors

# When we define a type, Julia also defines the **constructor functions** that we have been using above. These are functions with exactly the same name as the type.

#

# They can be discovered using `methods`:

mutable struct Vol1

value

end

methods(Vol1)

# We see that Julia provides two default constructors. [Note that the output has changed in Julia 0.7.]

# For parametric types, it is a bit more complicated:

methods(Vol4)

methods(Vol4{Float64})

# ## Outer constructors

# Julia allows us to provide our own constructor functions.

# E.g.

mutable struct Vol1

value

end

struct Vol2

value::Float64

end

Vol2(3)

Vol2("3.1")

# Here, we have tried to provide a numeric string, which is not allowed, since the string is not a number. We can add a constructor to allow this:

Vol2(s::String) = Vol2(parse(Float64, s))

Vol2("3.1")

Vol2("hello")

# We have added a new constructor outside the type definition, so it is called an **outer constructor**.

# ## Constructors that impose a restriction: **inner constructors**

# Now consider the following:

Vol4(3)

V = Vol4(3.5)

V.value

Vol4(-1)

# Oops! A volume cannot be negative, but the constructors so far have no restrictions, and so allow us to make a negative volume. To prevent this, Julia allows us to provide our own constructor, in which any restrictions are enforced.

#

# These constructors are written **within the type definition itself**, and hence are called **inner constructors**.

#

# [In Julia, these are the **only methods** that may be defined inside the type definition. Unlike in object-oriented languages, methods **do not belong to types** in Julia; rather, they exist outside any particular type, and (multiple) dispatch is used instead.]

#

# For example:

struct Vol5

value::Float64

function Vol5(V)

if V < 0

throw(ArgumentError("Volumes cannot be negative"))

end

new(V)

end

end

Vol5(3)

Vol5(-34)

# If we define an inner constructor, then Julia no longer defines the standard constructors; this is why defining an inner constructor gives us exclusive control over how our objects are created.

# Note that we use a special function `new` to actually create the object by filling in the values of the fields.

# If we use an immutable object (defined using `struct`), there is no way of changing the value of the field stored inside the object, so the invariant that `value` must be non-negative can never be violated.

# # Parametric functions

# Since we now have the ability to make parametric types, we may wish to define parametric functions on those types. E.g.

struct Length{T}

length::T

end

l = Length(10)

function square_area(l)

return l^2

end

# Suppose that we wish to round the area of squares with floating-point side length:

function square_area(l::T) where {T <: AbstractFloat} # method for types T that are subtypes of AbstractFloat

return ceil(Int, l^2)

end

square_area(11.1)

# # Inner constructors for parametric types

struct Vol6{T<:Real}

value::T

function Vol6{T}(V) where {T<:Real} # where specifies that T is a parameter of the parametric function Vol6

if V < 0

throw(ArgumentError("Negative"))

end

return new{T}(V)

end

end

# Here, we have used the syntax for parametric functions to specify a parametric inner constructor

Vol6(3)

Vol6{Float64}(3.1)

# We see that so far, we must explicitly specify the parametric type.

methods(Vol6)

# We can again make Julia infer the type for us:

Vol6(x::T) where {T<:Real} = Vol6{T}(x)

Vol6(3.1)

Vol6(3)

methods(Vol6)

x = 3//4 # rational number

Vol6(x)

# # Fixed-size objects are efficient

struct Vec{T}

x::T

y::T

end

# How efficient is this?

# +

import Base: +

+(f::Vec{T}, g::Vec{T}) where {T} = Vec(f.x + g.x, f.y + g.y)

# -

using BenchmarkTools

@btime +(Vec(1.0, 2.0), Vec(1.0, 2.0))

@btime [1.0, 2.0] + [1.0, 2.0]

# Using fixed-size objects is much more efficient (about 50 times faster in this benchmark)! General-purpose fixed-size vectors and matrices are provided by the `StaticArrays.jl` package.

| 08. User-defined types and parametric types.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# ## Improving model performance

# * IBM sample datasets

# https://www.kaggle.com/blastchar/telco-customer-churn

#

# * Demographic info:

# * Gender, SeniorCitizen, Partner, Dependents

# * Services subscribed:

# * PhoneService, MultipleLine, InternetService, OnlineSecurity, OnlineBackup, DeviceProtection, TechSupport, StreamingTV, StreamingMovies

# * Customer account info:

# * CustomerID, Contract, PaperlessBilling, PaymentMethod, MonthlyCharges, TotalCharges, Tenure

import pandas as pd

import numpy as np

import seaborn as sns

import matplotlib.pyplot as plt

# +

from IPython.display import set_matplotlib_formats

set_matplotlib_formats('retina')

# -

# ## Loading the data

# * Load the preprocessed data

df = pd.read_csv("data/telco_feature.csv")

df.shape

# Set customerID as the index

df = df.set_index("customerID")

df.head()

# ## Preprocessing

# Missing values could be imputed instead, but for now we simply drop them.

df = df.dropna()

df["Churn"].value_counts()

# ## Splitting into training and prediction datasets

# ### Columns used for training and prediction

# Specify the columns to use as features

feature_names = df.columns.tolist()

feature_names.remove("Churn")

feature_names

# ### The label: the value to be predicted

# +

# Store the name of the column to predict in a variable called label_name.

label_name = "Churn"

label_name

# -

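# ### Splitting into questions (features) and answers (label)

#

# * Create X and y.

# * X is the features, the independent variables (e.g. the exam questions)

# * y is the label, the dependent variable (e.g. the exam answers)

# Create X and y.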

X = df.drop(label_name, axis=1)

y = df[label_name]

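# ### Creating the training and prediction datasets

# * X_train: the training set; a matrix, a pandas DataFrame, a 2-D list (array) structure (e.g. past exam questions)

# * y_train: the answers used for training; a vector, a pandas Series, a 1-D list (array) structure (e.g. the answers to the past exam questions)

# * X_test: the dataset used for prediction (e.g. the questions of the real exam)

# * y_test: the correct answers for the predictions (e.g. the answers to the real exam)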

# +

# Split the dataset with train_test_split

from sklearn.model_selection import train_test_split

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=42)

# -

X_train.shape, X_test.shape, y_train.shape, y_test.shape

X_train.head(3)

X_test.head(3)

y_train.head(2)

# ## Predicting with machine learning models

# +

from sklearn.tree import DecisionTreeClassifier

from sklearn.ensemble import RandomForestClassifier, GradientBoostingClassifier

estimators = [DecisionTreeClassifier(random_state=42),

RandomForestClassifier(random_state=42),

GradientBoostingClassifier(random_state=42)

]

estimators

# -

results = []

for estimator in estimators:
    result = []
    result.append(estimator.__class__.__name__)
    results.append(result)

results

# +

from sklearn.model_selection import RandomizedSearchCV

results = []
for estimator in estimators:
    result = []

    max_depth = np.random.randint(2, 20, 10)
    max_features = np.random.uniform(0.3, 1.0, 10)

    param_distributions = {"max_depth": max_depth,
                           "max_features": max_features}

    if estimator.__class__.__name__ != 'DecisionTreeClassifier':
        param_distributions["n_estimators"] = np.random.randint(100, 200, 10)

    clf = RandomizedSearchCV(estimator,
                             param_distributions,
                             n_iter=5,
                             scoring="accuracy",
                             n_jobs=-1,
                             cv=5,
                             verbose=2
                             )

    clf.fit(X_train, y_train)
    result.append(estimator.__class__.__name__)
    result.append(clf.best_params_)
    result.append(clf.best_score_)
    result.append(clf.score(X_test, y_test))
    result.append(clf.cv_results_)
    results.append(result)

# -

df_result = pd.DataFrame(results,

columns=["estimator", "best_params", "train_score", "test_score", "cv_result"])

df_result

# ## Training

model = DecisionTreeClassifier(max_depth=6, max_features=0.9, random_state=42)

model

# Train the model

model.fit(X_train, y_train)

# Predict

y_predict = model.predict(X_test)

y_predict

# ## Evaluating the model

# +

# Extract the feature importances

importances = pd.DataFrame({"importances" : model.feature_importances_,

"feature_names" :feature_names})

importances = importances.sort_values("importances", ascending=False)

# -

# Visualise the feature importances

plt.figure(figsize=(10, 20))

sns.barplot(data=importances, x="importances", y="feature_names")

# ### Measuring scores

# #### Accuracy

# +

from sklearn.metrics import accuracy_score

accuracy_score(y_test, y_predict)

# -

(y_test == y_predict).mean()

# #### F1 score

# * The harmonic mean of precision and recall

# * [Precision and recall - Korean Wikipedia](https://ko.wikipedia.org/wiki/%EC%A0%95%EB%B0%80%EB%8F%84%EC%99%80_%EC%9E%AC%ED%98%84%EC%9C%A8)
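# To make the harmonic-mean definition concrete, here is a small worked sketch. The helper name `f1_from_counts` and the confusion-matrix counts are made up for illustration; they are not results from this dataset.

```python
def f1_from_counts(tp, fp, fn):
    """Compute precision, recall and F1 from confusion-matrix counts."""
    precision = tp / (tp + fp)  # share of predicted positives that are correct
    recall = tp / (tp + fn)     # share of actual positives that were found
    f1 = 2 * precision * recall / (precision + recall)  # harmonic mean of the two
    return precision, recall, f1


# Invented counts: 60 true positives, 20 false positives, 40 false negatives
precision, recall, f1 = f1_from_counts(tp=60, fp=20, fn=40)
print(precision, recall, round(f1, 4))  # 0.75 0.6 0.6667
```

# The harmonic mean is pulled towards the smaller of the two values, so a model cannot get a high F1 score by doing well on precision alone or on recall alone.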

# +

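# Plot the confusion matrix on the held-out test set.

# (plot_confusion_matrix was removed in scikit-learn 1.2; ConfusionMatrixDisplay replaces it.)

from sklearn.metrics import ConfusionMatrixDisplay

ConfusionMatrixDisplay.from_estimator(model, X_test, y_test)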

# +

from sklearn.metrics import classification_report

report = classification_report(y_test, y_predict)

print(report)

# -

| telco-customer-churn/telco_prediction_04_random_search.ipynb |

// ---

// jupyter:

// jupytext:

// text_representation:

// extension: .ts

// format_name: light

// format_version: '1.5'

// jupytext_version: 1.14.4

// kernelspec:

// display_name: Typescript 3.3

// language: typescript

// name: typescript

// ---

// # Asynchronous output

//

// TypeScript and Node.js make heavy use of asynchronous execution. ITypescript lets you exercise these asynchronous capabilities in two ways:

//

// - by updating `stdout` and `stderr` asynchronously, or

// - by updating the ITypescript output asynchronously.

//

// **Note**: These functions come from IJavascript; ITypescript ports them as static functions.

// ## Updating `stdout` and `stderr` asynchronously

//

// Both streams `stdout` and `stderr` can be written asynchronously. Any text written to these streams will be forwarded back to the latest request from the frontend:

// +

class Counter {

private _n: number = 1;

private _intervalObject: any;

start(n: number, millisec: number){

this._n = n;

this._intervalObject = setInterval(() => {

console.log(this._n--);

if(this._n < 0){

clearInterval(this._intervalObject);

console.warn("Done!");

}

}, millisec);

}

}

let c = new Counter();

c.start(5, 1000);

// -

// ## Updating the ITypescript output asynchronously

//

// ITypescript offers two global definitions to help produce an output asynchronously:

// * IJavascript style: `$$.async()` and `$$.sendResult(result: any)` or `$$.done()`.

// * ITypescript style: `%async on` and `$TS.retrieve(result?: any)`.

//

// When you call `$$.async()`, the ITypescript kernel is instructed not to produce an output. Instead, an output can be produced by calling `$$.sendResult()` or `$TS.retrieve()`.

//

// **Note**: `%async on` should be present at the top of the cell.

// +

// IJavascript style

$$.async();

console.log("Hello!");

setTimeout(

() => {

$$.sendResult("And good bye!");

},

1000

);

// -

// It is also possible to produce a graphical output asynchronously, the same way it is done synchronously, with the difference that `$$.done()` (or `$TS.done()` in the ITypescript style used below) has to be called to tell the ITypescript kernel that the output is ready:

// +

// %async on

// ITypescript style

console.log("Hello!");

setTimeout(

() => {

$TS.svg("<svg><circle cx='30px' cy='30px' r='20px'/></svg>");

$TS.done();

},

1000

);

// -

| doc/async.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

from jupyter_plotly_dash import JupyterDash

import dash_core_components as dcc

import dash_html_components as html

import dash

from dash.dependencies import Input, Output

from pymongo import MongoClient

import urllib.parse

from bson.json_util import dumps

#TODO: import for their CRUD module

from animalsCRUD import AnimalShelter

# this is a Jupyter Dash application

app = JupyterDash('ModuleFive')

# the application interfaces are declared here

# this application has two input boxes, a submit button, a horizontal line and div for output

app.layout = html.Div(
    [
        dcc.Input(
            id="input_user".format("text"),
            type="text",
            placeholder="input type {}".format("text")),
        dcc.Input(
            id="input_passwd".format("password"),
            type="password",
            placeholder="input type {}".format("password")),
        html.Button('Execute', id='submit-val', n_clicks=0),
        html.Hr(),
        html.Div(id="query-out"),
        #TODO: insert unique identifier code here
        html.Header("Header"),
        html.Tfoot("CS 340.")
    ]
)

# this is area to define application responses or callback routines

# this one callback will take the entered text and if the submit button is clicked then call the

# mongo database with the find_one query and return the result to the output div

@app.callback(

Output("query-out", "children"),

[Input("input_user".format("text"), "value"),

Input("input_passwd".format("password"),"value"),

Input('submit-val', 'n_clicks')],

[dash.dependencies.State('input_passwd', 'value')]

)

def cb_render(userValue,passValue,n_clicks,buttonValue):
    if n_clicks > 0:
        ###########################
        # Data Manipulation / Model
        # use CRUD module to access MongoDB
        ##########################
        username = "aacuser"
        password = "password"

        #TODO: Instantiate CRUD object with above authentication username and password values
        testMod5 = AnimalShelter(username, password)

        # note that MongoDB returns BSON, the pyMongo JSON utility function dumps is used to convert to text
        #TODO: Return example query results
        tester = testMod5.locate({"animal_type":"Dog","name":"Lucy"})
        for y in tester:
            # return only the first matching document, converted from BSON to text
            return dumps(y)

app

# -

| Undergrad/CS-340/Project One/python.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python [conda env:new]

# language: python

# name: conda-env-new-py

# ---

# As <NAME> failed to deliver all the legacy *Spitzer* MIPS maps, I have had to collect certain maps myself. For the Bootes field, these have come from Mattia Vaccari's [Datafusion folders](http://mattiavaccari.net/df/m24/). The files available are:

# ls

from astropy.io import fits

# Let's check the format of one of the previous maps

elais_n1=fits.open('../../dmu26/data/ELAIS_N1/MIPS/wp4_elais-n1_mips24_map_v1.0.fits.gz')

elais_n1

elais_n1[0].header

elais_n1[1].header

elais_n1[2].header

elais_n1[3].header

# OK, so there is a primary header, an image map, an uncertainty map and a coverage map. Let's create the FITS file for Bootes.

hdr = fits.Header()

hdr['PRODUCT'] = 'WP4_Bootes_OFFICIAL_MIPS_24'

hdr['VERSION'] = 'V1.0 '

hdr['FIELD'] = 'Bootes'

hdr['SFILTERS']= 'MIPS_24 '

hdr['DESC'] = 'image, uncertainty & coverage'

hdr['RIGHTS'] = 'Public '

primary_hdu = fits.PrimaryHDU(header=hdr)

hd1=fits.open('./data/bootes_24um_v2_mosaic.fits.gz')

hd2=fits.open('./data/bootes_24um_v2_mosaic_unc.fits.gz')

hd3=fits.open('./data/bootes_24um_v2_mosaic_cov.fits.gz')

hdul=fits.HDUList([primary_hdu,fits.ImageHDU(hd1[0].data,

header=hd1[0].header),fits.ImageHDU(hd2[0].data, header=hd2[0].header),

fits.ImageHDU(hd3[0].data, header=hd3[0].header)])

hdul.writeto('wp4_bootes_mips24_map_v1.0.fits.gz')

| dmu17/dmu17_Bootes/create_HELP_format_MIPS_map.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Box plots: An investigation into box plots and their use, with example plots.

# ### Notebook structure

# The notebook is split up into the following sections which are based on the project problem statement requirements.

#

# * Section One - Getting started with all necessary python libraries/packages

# * Section Two - Summarise the history of the box plot and situations in which it is used, explaining relevant terminology. (I have combined two of the project statement requirements in section two as I felt they fitted well together).

# * Section Three - Demonstrate the use of the box plot, using data of your choosing.

# * Section Four - Compare the box plot to alternatives.

# * Section Five - References and conclusion

# ### Section one - getting started: importing packages

# The following Python packages are imported for use in this notebook:

# * Matplotlib.pyplot [matplotlib.org](https://matplotlib.org). Matplotlib is a Python plotting library, and pyplot is a Matplotlib module that provides a MATLAB-style interface.

# * NumPy [NumPy](http://www.numpy.org/). NumPy is a Python package for mathematical computing.

# * Seaborn [Seaborn](https://seaborn.pydata.org). Seaborn is a Python package used for plotting data.

# * Pandas [Pandas](https://pandas.pydata.org). Pandas is a Python package for use with data frames.

#

#command below ensures plots display correctly in the notebook

# %matplotlib inline

#below imports all necessary python packages for this notebook

import matplotlib.pyplot as plt

import numpy as np

import seaborn as sns

import pandas as pd

# ### Section Two - Summarise the history of the box plot and uses. Explain the structure of a boxplot and related terminology.

# Boxplots were first used in 1970 by the mathematician [<NAME>](https://en.wikipedia.org/wiki/John_Tukey).

# <NAME> was an American mathematician and the founding chairman of the Princeton statistics department.

# Amongst his many contributions to mathematics and statistics one which most people might recognise was the coining of the term "bit" for Binary digits (this, whilst researching at Bell laboratories).

#

# Although he first used them himself in 1970, Tukey formally introduced boxplots in his 1977 book, "Exploratory Data Analysis."

# They are a simple way of visually displaying data distribution based on the five number summary: minimum, first quartile, median, third quartile, and maximum.

#

# In the intervening years they have become one of the most used graphs for statistical display, and there are also variations of the original plot that display the distribution within the box better than the original does. Some of the more common variations are the violin plot and the variable-width boxplot, both of which give a feel for data density. The variations stay true to Tukey's original five-number summary. It is probably fair to say that advances in computing, and in the ability to display data with computers, have led to the many more sophisticated variations of the original box plot, which could be drawn by hand. A paper from 2011, *40 Years of Boxplots by <NAME> and <NAME>*$^{1}$, outlines many of these variations.

#

# Tukey pioneered exploratory data analysis (EDA), believing that too great an emphasis was put on confirmatory data analysis (CDA). During my research on Tukey I came across an interesting paper by *<NAME>* on *Exploratory Data Analysis*$^{2}$ in which he refers to Tukey's likening of EDA to detective work and also highlights the complementary relationship between EDA and CDA.

#

#

#

# The boxplot is a primary tool in EDA, displaying the variability in the data graphically. Boxplots are easily constructed, and during my research I came across an online lesson from the *University of Texas-Houston*$^{3}$ covering boxplots, which I would highly recommend.

#

# Boxplots show the distribution of data through the following five points, which are summary statistics of a dataset:

#

# * data minimum - this is the minimum data point excluding outliers.

# * first quartile - this is the 25$^{th}$ percentile, 25% of data points are below this point.

# * median - this is the 50$^{th}$ percentile, 50% of data points will be above and 50% of points below this point.

# * third quartile - this is the 75$^{th}$ percentile, 75% of data points are below this point.

# * data maximum - this is the maximum data point excluding outliers.

#

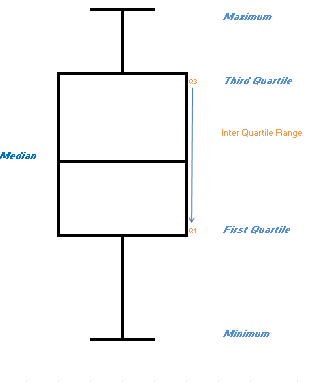

# Below I have represented the main features of a box plot.

#

#

#

#

# It consists of a rectangular box with whiskers extending from each end to the minimum and maximum data points. The rectangle itself goes from the first quartile to the third quartile (called the interquartile range) and a line within the box shows the median value of the data. It allows a simple but highly visually effective method of displaying and comparing data distributions.

#

# For example, if the median is not in the middle of the box the distribution is skewed. Where the median is closer to the bottom, the distribution is skewed right. Where the median is closer to the top, the distribution is skewed left. Therefore, the shape of the boxplot (whisker length and median position) can be used to predict the likely data distribution.

#

# To create a boxplot, the data is split into four equal-sized groups.

# The lowest 25% goes from the minimum to the first quartile **Q1** - **the end of the whisker to the bottom of the box**.

#

# The **box itself shows the next 50% of data** with the **median** (middle value) marked as a line within the box.

#

# The top 25% of data goes from the third quartile **Q3** to the maximum - **the top of the box to the end of the top whisker.**

#

# The range of data from the Third Quartile to First Quartile (Q3-Q1) is called the **interquartile range** (IQR).

#

# Any data points that are more than 1.5 times the box length (IQR) above Q3 or below Q1 may be outliers. Data points more than 3 times the IQR above Q3 or below Q1 are extreme values. Outliers and extreme values are plotted as separate data points rather than as part of the whiskers.
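# The outlier rule above can be checked numerically. The sketch below uses only the standard library and a small set of invented points values (not the CSV file used later in this notebook). It assumes the "inclusive" quartile method of `statistics.quantiles`, which interpolates linearly between data points.

```python
import statistics

# Invented points data for illustration only
points = [365, 380, 390, 400, 410, 420, 430, 440, 530]

# Quartiles and interquartile range
q1, median, q3 = statistics.quantiles(points, n=4, method="inclusive")
iqr = q3 - q1

# Possible outliers: more than 1.5 * IQR below Q1 or above Q3
lower_fence = q1 - 1.5 * iqr
upper_fence = q3 + 1.5 * iqr
outliers = [p for p in points if p < lower_fence or p > upper_fence]

# Extreme values: more than 3 * IQR below Q1 or above Q3
extreme_low = q1 - 3 * iqr
extreme_high = q3 + 3 * iqr
extremes = [p for p in points if p < extreme_low or p > extreme_high]

print("Q1, median, Q3:", q1, median, q3)
print("IQR:", iqr)
print("possible outliers:", outliers)
print("extreme values:", extremes)
```

# With these values the fences sit at 330 and 490, so 530 is flagged as a possible outlier, but it lies inside the 3 x IQR limit of 550 and so is not an extreme value.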

#

# Some possible uses of boxplots would be

# * to compare data from duplicate production lines (to ensure all machines/tools working correctly)

# * to look at change over time where external factors might influence data (as I will do in my examples below).

# * A simple example might be to compare standardised test results of different schools.

# * For large datasets it provides a good first look and would assist in the identification of data that needs cleaning (as I will demonstrate in my second scenario below).

# ### Section Three - Demonstrate the use of the box plot using data of your choosing.

#

# For this I decided to use a few simple examples based on CAO points.

#

# Each year Irish Students undergo a state examination called the Leaving Certificate (LC), see a Wikipedia page about the examination here, [Leaving Cert](https://en.wikipedia.org/wiki/Leaving_Certificate_(Ireland)). For these LC students entry to third level courses in Ireland is via a central application system [CAO](http://www.cao.ie/).

#

# For the purpose of allocating college places, Leaving Cert results are converted to **applicant points**. Third-level institutions review applicant points and available course places and determine the minimum entry points for each course. Courses are then offered to eligible applicants based on their points, in descending order, until the course places are all allocated. If an applicant does not take the offered place, subsequent rounds of offers are made until the course is filled, with course points being reduced if necessary. The **course points** will therefore be the total points obtained by the last applicant allocated a course place.

#

# This method means that course popularity and number of available places can result in points changing from year to year, therefore it is to a large extent an example of supply and demand. It also means that the spread of student points can vary from year to year.

#

# In the first simulated example below, example one, a course had a points requirement in 2016 of 365 with a max points of 530, in 2017 a points requirement of 397 with max 495. Intake is unchanged with 39 places allocated in each year.

#

# Below I have plotted the points and also used NumPy to calculate the five boxplot points and the interquartile ranges.

#

# I then used Seaborn to generate the same plot and generated some summary statistics; this is to demonstrate how easily a smart looking plot and summary statistics can be generated by even the most novice user. Something that I feel is extremely relevant in current workplace environments where working with IT systems and data is no longer the sole preserve of the IT department!

#

# In the final part of this section I will bring in a second dataset, which represents the variation of points for a different programme, Programme B, across six years. This is intended to show the effect of outliers and unclean data, and how a quick visual review with a box plot can highlight data cleansing requirements.

#

# #### Box Plot Example One

## Sample Data One - below I am reading in a file of mocked up CAO points for a course A

df = pd.read_csv("https://raw.githubusercontent.com/Hudsonsue/BOXPLOTS/master/mock%20up%20points.csv", header =0)

#df

# +

## using matplotlib (via the pandas boxplot method) I am going to create a boxplot of the dataset

boxplot = df.boxplot(column=['201600','201700'], return_type='axes')

plt.title ('Program A, Points Comparison 2016 & 2017')

plt.ylabel('points')

plt.xlabel('term code')

## using numpy I am going to calculate the five points of the box plot and the interquartile range

## minimum

min_pts = np.min(df.to_numpy(), axis=0)  # plain array, so min_pts[0] is a positional lookup

print("Min 201600", min_pts[0], " --- Min 201700",min_pts[1])

## Q1

Q1 =np.percentile(df, 25, axis =0)

print ("Q1 201600", Q1[0], "--- Q1 201700", Q1[1])

## median

med =np.median(df,axis =0)

print ("Median 201600", med[0], "--- Median 201700", med[1])

## Q3

Q3 =np.percentile(df, 75, axis=0)

print ("Q3 201600", Q3[0], "--- Q3 201700", Q3[1])

## Maximum

max_pts = np.max(df.to_numpy(), axis=0)  # plain array, so max_pts[0] is a positional lookup

print("Max 201600", max_pts[0], " --- Max 201700",max_pts[1])

##Inter Quartile Range

IR = Q3-Q1

print()

print("Interquartile range 201600", (IR[0]))

print("Interquartile range 201700", (IR[1]))
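
## Note that the minimum and maximum computed above are the extremes of the data.
## With matplotlib's default setting (whis=1.5) the whiskers stop at the most extreme
## observations lying within 1.5 x IQR of the box, and anything beyond is drawn as a
## separate point. The sketch below shows that calculation on a small hypothetical
## array (the values in `points` are made up and are not the course A data).

```python
import numpy as np

# Hypothetical points for one intake (assumption, not the course A data).
points = np.array([365, 380, 395, 400, 410, 420, 435, 450, 530])

q1, q3 = np.percentile(points, [25, 75])
iqr = q3 - q1

# Limits beyond which an observation is drawn as an individual point.
lower_fence = q1 - 1.5 * iqr
upper_fence = q3 + 1.5 * iqr

inside = points[(points >= lower_fence) & (points <= upper_fence)]
fliers = points[(points < lower_fence) | (points > upper_fence)]

print("fences:", lower_fence, upper_fence)
print("whisker ends:", inside.min(), inside.max())
print("points drawn as outliers:", fliers)
```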

# +

## below I am replicating the above using seaborn.

## I just want to highlight how easily a nice looking plot can be generated.

df1 = pd.DataFrame(data = df, columns = ['201600','201700'])

sns.set(style="darkgrid")

sns.boxplot(x="variable",y = "value", hue = "variable",data=pd.melt(df1), palette="Set3").set_title("CAO Points Course A, Comparison of 2016 & 2017")

plt.legend()

plt.ylabel('points')

plt.xlabel('term code')

# below are descriptive statistics for the data. The min, 25%, 50%, 75% and max are the five points of the boxplot

df.describe()

# -

# The 2016 example above demonstrates two common scenarios in CAO points. At the higher end there is a student who has an excess of points but has stuck with the course they want. At the lower end, demand was not as high as expected, so to fill the course and keep it viable the points were dropped to pull in the last few students in a later round of offers.

#

# In both years the data is skewed right, with the part of the box below the median smaller than the part above it. This tells me that the points of students in the lower part of the box are less varied (i.e. closer together).
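
#
# The same reading of the box can be made numerically by comparing the two halves of the interquartile range. The sketch below is a rough quartile-based heuristic rather than a formal skewness statistic; the helper name `box_skew` and both samples are hypothetical.

```python
import numpy as np


def box_skew(values):
    """Describe the skew suggested by the two halves of the box."""
    q1, med, q3 = np.percentile(values, [25, 50, 75])
    lower_half = med - q1  # height of the box below the median
    upper_half = q3 - med  # height of the box above the median
    if upper_half > lower_half:
        return "right"
    if lower_half > upper_half:
        return "left"
    return "symmetric"


# Hypothetical samples: one bunched at the low end, one bunched at the high end.
bunched_low = np.array([400, 405, 410, 415, 420, 440, 470, 500, 530])
bunched_high = np.array([400, 430, 460, 480, 510, 515, 520, 525, 530])

print(box_skew(bunched_low))
print(box_skew(bunched_high))
```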

#

# When plotted as boxplots it is apparent that, whilst the overall spread of points and the maximum were greater in 2016, the 2017 median was higher, as were the entry points (the bottom of the lower whisker).

#

# The box plots provide a nice visualisation of the course intake across the two years.

# #### Box Plot Example Two

# +

## I am going to read in a second csv to represent the change in points for a programme across a longer timeframe

## of six years.

df3= pd.read_csv("https://raw.githubusercontent.com/Hudsonsue/BOXPLOTS/master/boxplotdata.csv", header = 0)

df3.head(2)

# -

## seaborn box plot of dataset for Course B across six years

## outliers / incorrect data make the plot impossible to review

df4 = pd.DataFrame(data = df3, columns = [ '2012','2013', '2014', '2015','2016','2017'] )

sns.boxplot(x="variable", y="value", data= pd.melt(df4), palette="bright").set_title("CAO Points Course B 2012-2017")

plt.ylabel('points')

plt.xlabel('term code')

plt.show()

# Having pulled in the data there are some possible outliers showing:

#

# In 2012 I can see one point sitting far below the rest of the data. Upon investigation it appears to be a typo, as it is 41 when all other points in 2012 are three digits and in the range 400-565.

#

# In 2014 I can see some outliers, but upon investigation I decided they are valid data points as they are within the range of possible LC points, so I would not remove them.

#

# In 2017 I can see some outliers sitting around 900 and 1000. As I am familiar with CAO coding I immediately recognise these as data that should not be in the dataset: they are codes used by CAO for mature and previously deferred students, not the student LC points that I wish to use.

# I hope the above demonstrates how boxplots can be used to perform some quick data analysis and to avoid in-depth analysis of inaccurate datasets. In the next part of the notebook I will demonstrate how the data looks once cleaned and replotted.
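
#
# One way such values could be flagged programmatically is a simple range check, sketched below. This is not necessarily how the cleaned file used in the next cell was produced. The bounds `MIN_PLAUSIBLE` and `MAX_PLAUSIBLE` and the small `raw` table are assumptions made purely for illustration, and a range check is no substitute for the domain knowledge used above.

```python
import pandas as pd

# Hypothetical bounds for plausible LC points (assumptions for illustration).
MIN_PLAUSIBLE = 100
MAX_PLAUSIBLE = 625

# Made-up values mimicking the problems seen in the plot: a typo (41)
# and administrative codes (900, 1000) mixed in with genuine points.
raw = pd.DataFrame({
    "2012": [41, 400, 455, 565],
    "2017": [430, 470, 900, 1000],
})

# True where a value lies inside the plausible range.
plausible = raw.ge(MIN_PLAUSIBLE) & raw.le(MAX_PLAUSIBLE)

# Count of flagged values per year, then blank them out (NaN) rather than
# dropping whole rows, so the other year's value in that row is kept.
flagged_counts = (~plausible).sum().to_dict()
cleaned = raw.where(plausible)

print(flagged_counts)
print(cleaned)
```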

# +

## I am going to read in the cleaned up version of the dataset for programme B, points for a programme

##across a longer timeframe of six years.

df4= pd.read_csv("https://raw.githubusercontent.com/Hudsonsue/BOXPLOTS/master/boxplotdatacleaned.csv", header = 0)

df4.head(2)

# +

## seaborn box plot of dataset for Course B across six years following removal of incorrect data points.

## I have also plotted a strip plot to show how it could be used in conjunction with the box plot to show

##the distribution within the box

# box plot of the cleaned dataset

df5 = pd.DataFrame(data = df4, columns = [ '201200','201300', '201400', '201500','201600','201700'] )

sns.boxplot(x="variable", y="value", palette = "bright", data= pd.melt(df5)).set_title("CAO Points Course B 2012-2017")

plt.ylabel('points')

plt.xlabel('term code')

plt.show()

# strip plot of the cleaned dataset

df6 = pd.DataFrame(data = df5, columns = [ '201200','201300', '201400', '201500','201600','201700']) #making a dataframe for the plot

sns.stripplot(x="variable",y = "value",data=pd.melt(df6), palette="bright").set_title("CAO Points Course B 2012-2017")

plt.ylabel('points') # title of the y axis

plt.xlabel('term code') # title of the x axis

plt.show()

# -

# Once the dataset is cleaned the plots are much easier to review. I added the strip plot as it complements the boxplot. The rising points for the course from 2012 through to 2016 can be seen from the end of the lower whisker.

# For example, in 2012 the data has an outlier and a large spread. The data is skewed right, with the points of the students in the lower section of the box closer to each other than those in the upper section.

#

# In 2014 the data is much closer together (with two outliers identified) but skewed left, with the points of the students in the top section of the box closer together.

#

# The most symmetrical of all the yearly plots is 2016, with the points reasonably evenly spread throughout the range.

# In 2017 the data is again skewed left, with the points of students in the top section of the box closer together.

# ### Section Four - Compare the box plot to alternatives.

#