Readme: adding installation info


README.md

CHANGED

|

@@ -1,86 +1,102 @@

|

|

| 1 |

-

---

|

| 2 |

-

license: cc-by-4.0

|

| 3 |

-

---

|

| 4 |

-

|

| 5 |

-

# OSMa-Bench Dataset

|

| 6 |

-

|

| 7 |

-

[](https://be2rlab.github.io/OSMa-Bench/)

|

| 8 |

-

|

| 9 |

-

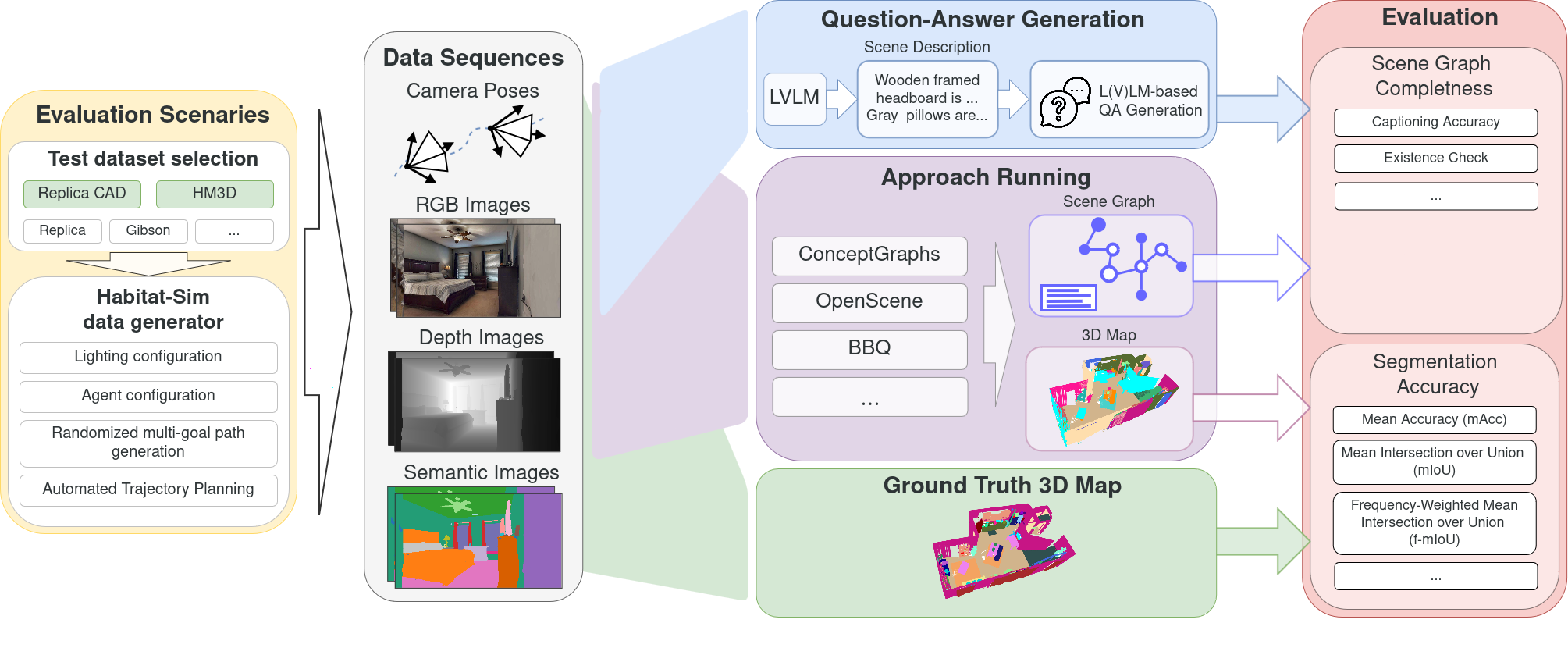

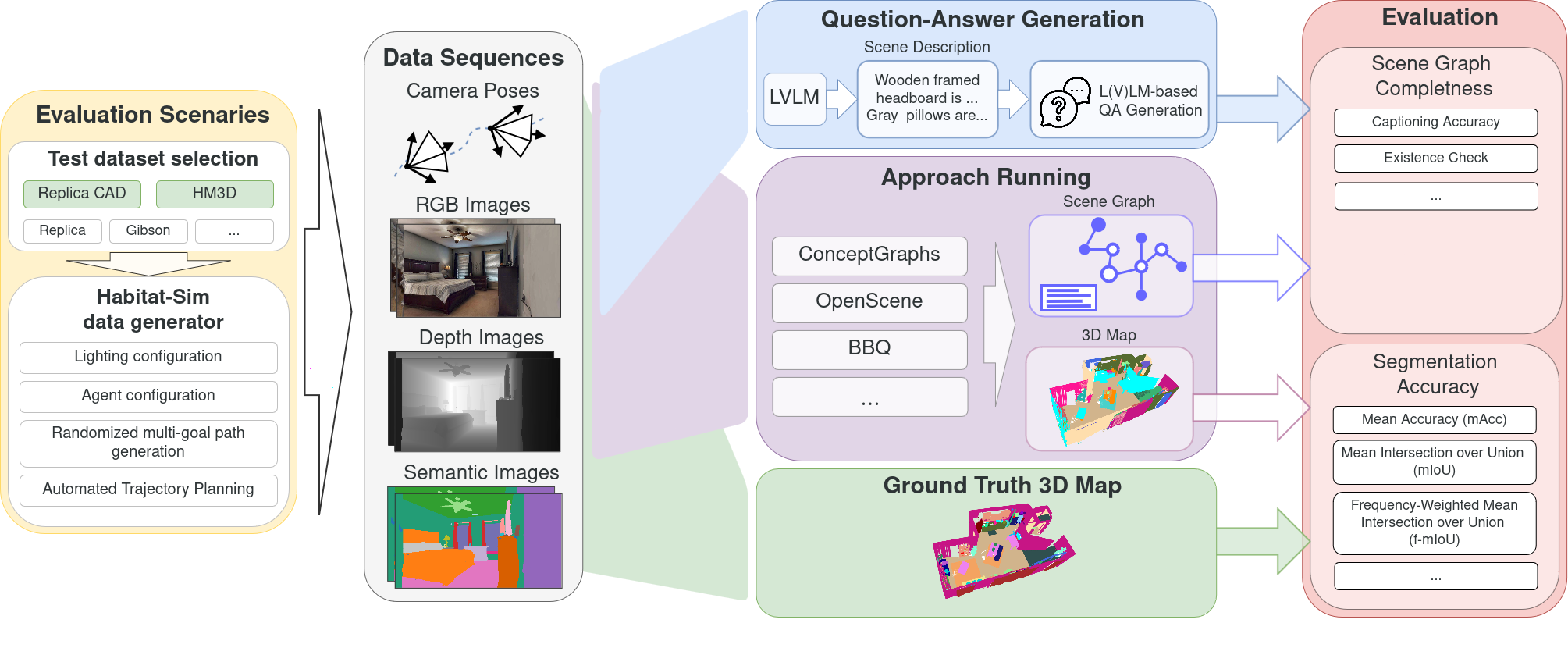

The OSMa-Bench (Open Semantic Mapping Benchmark) dataset is a fully automatically generated dataset for evaluating the robustness of open semantic mapping and segmentation systems under varying indoor lighting conditions and robot movement dynamics. It is part of the [OSMa-Bench](https://be2rlab.github.io/OSMa-Bench/) pipeline.

|

| 10 |

-

|

| 11 |

-

- **Homepage:** [OSMa-Bench Project Page](https://be2rlab.github.io/OSMa-Bench/)

|

| 12 |

-

|

| 13 |

-

## Dataset Summary

|

| 14 |

-

|

| 15 |

-

This dataset provides simulated RGB-D and semantically annotated posed sequences for evaluation of semantic mapping and segmentation, with a particular focus on handling dynamic lighting—a critical but often overlooked factor in existing benchmarks. It also includes a collection of automatically generated question–answer pairs across multiple categories to support the evaluation of scene-graph–based reasoning, offering a task-driven measure of how well a system’s reconstructed scene captures semantic relationships between objects.

|

| 16 |

-

|

| 17 |

-

The data is built upon two base datasets:

|

| 18 |

-

- **ReplicaCAD**: 22 scenes with 4 lighting configurations and a velocity modifier.

|

| 19 |

-

- **Habitat Matterport 3D (HM3D)**: 8 scenes with 2 lighting configurations and a velocity modifier.

|

| 20 |

-

|


|

|

|

|

| 1 |

+

---

|

| 2 |

+

license: cc-by-4.0

|

| 3 |

+

---

|

| 4 |

+

|

| 5 |

+

# OSMa-Bench Dataset

|

| 6 |

+

|

| 7 |

+

[](https://be2rlab.github.io/OSMa-Bench/)

|

| 8 |

+

|

| 9 |

+

The OSMa-Bench (Open Semantic Mapping Benchmark) dataset is a fully automatically generated dataset for evaluating the robustness of open semantic mapping and segmentation systems under varying indoor lighting conditions and robot movement dynamics. It is part of the [OSMa-Bench](https://be2rlab.github.io/OSMa-Bench/) pipeline.

|

| 10 |

+

|

| 11 |

+

- **Homepage:** [OSMa-Bench Project Page](https://be2rlab.github.io/OSMa-Bench/)

|

| 12 |

+

|

| 13 |

+

## Dataset Summary

|

| 14 |

+

|

| 15 |

+

This dataset provides simulated RGB-D and semantically annotated posed sequences for evaluation of semantic mapping and segmentation, with a particular focus on handling dynamic lighting—a critical but often overlooked factor in existing benchmarks. It also includes a collection of automatically generated question–answer pairs across multiple categories to support the evaluation of scene-graph–based reasoning, offering a task-driven measure of how well a system’s reconstructed scene captures semantic relationships between objects.

|

| 16 |

+

|

| 17 |

+

The data is built upon two base datasets:

|

| 18 |

+

- **ReplicaCAD**: 22 scenes with 4 lighting configurations and a velocity modifier.

|

| 19 |

+

- **Habitat Matterport 3D (HM3D)**: 8 scenes with 2 lighting configurations and a velocity modifier.

|

| 20 |

+

|

| 21 |

+

## Installation

|

| 22 |

+

|

| 23 |

+

We offer two versions of the dataset: one with separate files and one as a single compressed archive. Use the following commands to download the separate files (may be slow):

|

| 24 |

+

|

| 25 |

+

```bash

|

| 26 |

+

git xet install

|

| 27 |

+

git clone https://huggingface.co/datasets/warmhammer/OSMa-Bench_dataset -b main

|

| 28 |

+

```

|

| 29 |

+

Use the following commands to download the compressed version (faster):

|

| 30 |

+

|

| 31 |

+

```bash

|

| 32 |

+

git xet install

|

| 33 |

+

git clone https://huggingface.co/datasets/warmhammer/OSMa-Bench_dataset -b compressed

|

| 34 |

+

unzip data.zip  # run from the cloned directory that contains data.zip

|

| 35 |

+

```

|

| 36 |

+

|

| 37 |

+

## Data Configurations

|

| 38 |

+

|

| 39 |

+

The dataset includes the following configurations for the ReplicaCAD and HM3D scenes:

|

| 40 |

+

|

| 41 |

+

| Configuration | Description |

|

| 42 |

+

| :--- | :--- |

|

| 43 |

+

| `baseline` | Static, non-uniformly distributed light sources (ReplicaCAD only) |

|

| 44 |

+

| `dynamic_lighting` | Lighting conditions change along the robot's path (ReplicaCAD only) |

|

| 45 |

+

| `nominal_lights` | The mesh itself emits light without added light sources |

|

| 46 |

+

| `camera_light` | An extra directed light source is attached to the camera |

|

| 47 |

+

| `velocity` | Sequences recorded at doubled nominal velocity |
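Read together with the scene counts in the Dataset Summary, the table suggests which configurations apply to which base dataset. The following is a minimal Python sketch of that mapping, not an official API: the names `CONFIGS` and `configs_for` are hypothetical, and the availability of `nominal_lights`, `camera_light`, and `velocity` for HM3D is inferred from the table and the summary rather than stated explicitly.

```python
# Hypothetical mapping inferred from the configuration table; not an official API.
# Each configuration lists the base datasets it is assumed to be available for.
CONFIGS = {
    "baseline": {"ReplicaCAD"},
    "dynamic_lighting": {"ReplicaCAD"},
    "nominal_lights": {"ReplicaCAD", "HM3D"},
    "camera_light": {"ReplicaCAD", "HM3D"},
    "velocity": {"ReplicaCAD", "HM3D"},
}


def configs_for(base_dataset: str) -> list[str]:
    """Return the configuration names assumed available for a base dataset."""
    return [name for name, bases in CONFIGS.items() if base_dataset in bases]


if __name__ == "__main__":
    for base in ("ReplicaCAD", "HM3D"):
        print(base, configs_for(base))
```

Under these assumptions ReplicaCAD gets four lighting configurations plus `velocity`, and HM3D gets two plus `velocity`, which matches the counts given in the Dataset Summary.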

|

| 48 |

+

|

| 49 |

+

---

|

| 50 |

+

|

| 51 |

+

## Data Structure

|

| 52 |

+

|

| 53 |

+

The dataset provides structured data for each scene, suitable for tasks like 3D scene understanding, visual question answering, and robotics. Each scene contains the following components:

|

| 54 |

+

|

| 55 |

+

| Component | Description | Format / Example |

|

| 56 |

+

| ------------------------- | ------------------------------------------------------------------------------------------------- | --------------------------------------|

|

| 57 |

+

| **RGB Images** | Standard color images captured from different camera viewpoints. | `frame000000.jpg`, ... |

|

| 58 |

+

| **Depth Images** | Depth maps aligned with RGB images. Each pixel encodes depth in meters. | `depth000000.png`, ... |

|

| 59 |

+

| **Semantic Masks** | Pixel-wise semantic segmentation labels. Each pixel corresponds to a semantic class ID. | `semantic000000.png`, ... |

|

| 60 |

+

| **Camera Trajectories** | Flattened 4×4 transformation matrices representing camera poses for each frame. | `traj.txt` (one 4×4 matrix per line) |

|

| 61 |

+

| **Question-Answer Pairs** | Validated question-answer pairs related to the scene, optionally associated with specific frames. | `validated_questions.json` |
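The components in the table can be read with the Python standard library alone. The sketch below is illustrative and rests on several assumptions that the table does not confirm: that the three image files of a frame sit in one scene directory, that the values in `traj.txt` are whitespace-separated, and that each flattened matrix is stored in row-major order. The function names `frame_files` and `parse_trajectory` are hypothetical.

```python
from pathlib import Path


def frame_files(scene_dir: str, index: int) -> dict[str, Path]:
    """Build the RGB, depth and semantic file paths for one frame index."""
    root = Path(scene_dir)  # assumption: all three files share one directory
    stem = f"{index:06d}"  # six-digit zero padding, as in frame000000.jpg
    return {
        "rgb": root / f"frame{stem}.jpg",
        "depth": root / f"depth{stem}.png",
        "semantic": root / f"semantic{stem}.png",
    }


def parse_trajectory(text: str) -> list[list[list[float]]]:
    """Parse the content of traj.txt into a list of 4x4 pose matrices."""
    poses = []
    for line_no, line in enumerate(text.splitlines(), start=1):
        if not line.strip():
            continue  # tolerate blank lines
        values = [float(v) for v in line.split()]
        if len(values) != 16:
            raise ValueError(f"line {line_no}: expected 16 values, got {len(values)}")
        # Assumption: row-major order, so values[0:4] is the first matrix row.
        poses.append([values[r * 4:(r + 1) * 4] for r in range(4)])
    return poses


if __name__ == "__main__":
    identity_line = "1 0 0 0 0 1 0 0 0 0 1 0 0 0 0 1"
    print(parse_trajectory(identity_line)[0])
    print(frame_files("some_scene", 0)["rgb"])
```

If the poses turn out to be stored column-major, transposing each parsed matrix is enough; checking that the last row reads `0 0 0 1` is a quick way to tell the two layouts apart.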

|

| 62 |

+

|

| 63 |

+

|

| 64 |

+

## VQA Question Categories

|

| 65 |

+

The dataset includes automatically generated question-answer pairs of the following types:

|

| 66 |

+

1. **Binary General** – Yes/No questions about the presence of objects and general scene characteristics

|

| 67 |

+

*Example:* `Is there a blue sofa?`

|

| 68 |

+

|

| 69 |

+

2. **Binary Existence-Based** – Yes/No questions designed to track false positives by querying non-existent objects

|

| 70 |

+

*Example:* `Is there a piano?`

|

| 71 |

+

|

| 72 |

+

3. **Binary Logical** – Yes/No questions with logical operators such as AND/OR

|

| 73 |

+

*Example:* `Is there a chair AND a table?`

|

| 74 |

+

|

| 75 |

+

4. **Measurement** – Questions requiring numerical answers related to object counts or scene attributes

|

| 76 |

+

*Example:* `How many windows are present?`

|

| 77 |

+

|

| 78 |

+

5. **Object Attributes** – Queries about object properties, including color, shape, and material

|

| 79 |

+

*Example:* `What color is the door?`

|

| 80 |

+

|

| 81 |

+

6. **Object Relations (Functional)** – Questions about functional relationships between objects

|

| 82 |

+

*Example:* `Which object supports the table?`

|

| 83 |

+

|

| 84 |

+

7. **Object Relations (Spatial)** – Queries about spatial placement of objects within the scene

|

| 85 |

+

*Example:* `What is in front of the staircase?`

|

| 86 |

+

|

| 87 |

+

8. **Comparison** – Questions that compare object properties such as size, color, and position

|

| 88 |

+

*Example:* `Which is taller: the bookshelf or the lamp?`
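When reporting results it can help to break questions down by the eight types listed above. The sketch below tallies records per category. The schema of `validated_questions.json` is not described here, so the `category` field name and the exact category label strings are hypothetical, and the sample records are made up for illustration only.

```python
# The eight question types listed above; the label spellings are an assumption.
CATEGORIES = (
    "Binary General",
    "Binary Existence-Based",
    "Binary Logical",
    "Measurement",
    "Object Attributes",
    "Object Relations (Functional)",
    "Object Relations (Spatial)",
    "Comparison",
)


def tally_by_category(records: list[dict]) -> dict[str, int]:
    """Count questions per category and reject unknown category labels."""
    counts = {name: 0 for name in CATEGORIES}
    for record in records:
        category = record["category"]  # hypothetical field name
        if category not in counts:
            raise ValueError(f"unknown category: {category!r}")
        counts[category] += 1
    return counts


if __name__ == "__main__":
    # Made-up records that reuse the example questions from the list above.
    sample = [
        {"category": "Binary General", "question": "Is there a blue sofa?"},
        {"category": "Measurement", "question": "How many windows are present?"},
        {"category": "Binary General", "question": "Is there a piano?"},
    ]
    print(tally_by_category(sample))
```

Keeping zero counts for unused categories makes per-category tables comparable across scenes.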

|

| 89 |

+

|

| 90 |

+

|

| 91 |

+

## Citing OSMa-Bench Dataset

|

| 92 |

+

|

| 93 |

+

Using the OSMa-Bench dataset in your research? Please cite the following paper: [OSMa-Bench on arXiv](https://arxiv.org/abs/2503.10331).

|

| 94 |

+

|

| 95 |

+

```bibtex

|

| 96 |

+

@inproceedings{popov2025osmabench,

|

| 97 |

+

title = {OSMa-Bench: Evaluating Open Semantic Mapping Under Varying Lighting Conditions},

|

| 98 |

+

author = {Popov, Maxim and Kurkova, Regina and Iumanov, Mikhail and Mahmoud, Jaafar and Kolyubin, Sergey},

|

| 99 |

+

booktitle = {2025 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS)},

|

| 100 |

+

year = {2025}

|

| 101 |

+

}

|

| 102 |

```

|