How to use daviddavidlu/DAPO-with-prompt-augmentation-step2820 with libraries and local apps.

- Libraries

- Transformers

How to use daviddavidlu/DAPO-with-prompt-augmentation-step2820 with Transformers:

```python
# Use a pipeline as a high-level helper
from transformers import pipeline

pipe = pipeline("text-generation", model="daviddavidlu/DAPO-with-prompt-augmentation-step2820")
messages = [
    {"role": "user", "content": "Who are you?"},
]
pipe(messages)
```

```python
# Load model directly
from transformers import AutoTokenizer, AutoModelForCausalLM

tokenizer = AutoTokenizer.from_pretrained("daviddavidlu/DAPO-with-prompt-augmentation-step2820")
model = AutoModelForCausalLM.from_pretrained("daviddavidlu/DAPO-with-prompt-augmentation-step2820")
messages = [
    {"role": "user", "content": "Who are you?"},
]
inputs = tokenizer.apply_chat_template(
    messages,
    add_generation_prompt=True,
    tokenize=True,
    return_dict=True,
    return_tensors="pt",
).to(model.device)

outputs = model.generate(**inputs, max_new_tokens=40)
# Decode only the newly generated tokens, skipping the prompt
print(tokenizer.decode(outputs[0][inputs["input_ids"].shape[-1]:]))
```

- Local Apps

- vLLM

How to use daviddavidlu/DAPO-with-prompt-augmentation-step2820 with vLLM:

Install from pip and serve model

```bash
# Install vLLM from pip:
pip install vllm

# Start the vLLM server:
vllm serve "daviddavidlu/DAPO-with-prompt-augmentation-step2820"

# Call the server using curl (OpenAI-compatible API):
curl -X POST "http://localhost:8000/v1/chat/completions" \
  -H "Content-Type: application/json" \
  --data '{
    "model": "daviddavidlu/DAPO-with-prompt-augmentation-step2820",
    "messages": [
      {
        "role": "user",
        "content": "What is the capital of France?"
      }
    ]
  }'
```

Use Docker

docker model run hf.co/daviddavidlu/DAPO-with-prompt-augmentation-step2820

- SGLang

How to use daviddavidlu/DAPO-with-prompt-augmentation-step2820 with SGLang:

Install from pip and serve model

```bash
# Install SGLang from pip:
pip install sglang

# Start the SGLang server:
python3 -m sglang.launch_server \
  --model-path "daviddavidlu/DAPO-with-prompt-augmentation-step2820" \
  --host 0.0.0.0 \
  --port 30000

# Call the server using curl (OpenAI-compatible API):
curl -X POST "http://localhost:30000/v1/chat/completions" \
  -H "Content-Type: application/json" \
  --data '{
    "model": "daviddavidlu/DAPO-with-prompt-augmentation-step2820",
    "messages": [
      {
        "role": "user",
        "content": "What is the capital of France?"
      }
    ]
  }'
```

Use Docker images

```bash
docker run --gpus all \
  --shm-size 32g \
  -p 30000:30000 \
  -v ~/.cache/huggingface:/root/.cache/huggingface \
  --env "HF_TOKEN=<secret>" \
  --ipc=host \
  lmsysorg/sglang:latest \
  python3 -m sglang.launch_server \
    --model-path "daviddavidlu/DAPO-with-prompt-augmentation-step2820" \
    --host 0.0.0.0 \
    --port 30000

# Call the server using curl (OpenAI-compatible API):
curl -X POST "http://localhost:30000/v1/chat/completions" \
  -H "Content-Type: application/json" \
  --data '{
    "model": "daviddavidlu/DAPO-with-prompt-augmentation-step2820",
    "messages": [
      {
        "role": "user",
        "content": "What is the capital of France?"
      }
    ]
  }'
```

- Docker Model Runner

How to use daviddavidlu/DAPO-with-prompt-augmentation-step2820 with Docker Model Runner:

docker model run hf.co/daviddavidlu/DAPO-with-prompt-augmentation-step2820
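The OpenAI-compatible chat endpoint exposed by the vLLM and SGLang servers above can also be called from Python. The following is a minimal sketch using only the standard library; the helper names `build_chat_request` and `ask` and the `max_tokens` default are illustrative assumptions, and the base URL assumes the vLLM default port 8000 (use port 30000 for the SGLang commands).

```python
import json
import urllib.request

MODEL_ID = "daviddavidlu/DAPO-with-prompt-augmentation-step2820"


def build_chat_request(question, base_url="http://localhost:8000", max_tokens=512):
    """Build a POST request for the /v1/chat/completions endpoint.

    Hypothetical helper: mirrors the JSON body of the curl examples and
    adds an explicit max_tokens limit.
    """
    payload = {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": question}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        url=f"{base_url}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(question, base_url="http://localhost:8000"):
    """Send the question to a running server and return the reply text."""
    request = build_chat_request(question, base_url=base_url)
    with urllib.request.urlopen(request) as response:
        body = json.load(response)
    # OpenAI-compatible responses put the reply in choices[0].message.content
    return body["choices"][0]["message"]["content"]
```

With a server running, `ask("What is the sum of the first 10 positive integers?")` returns the model's reply as a string.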

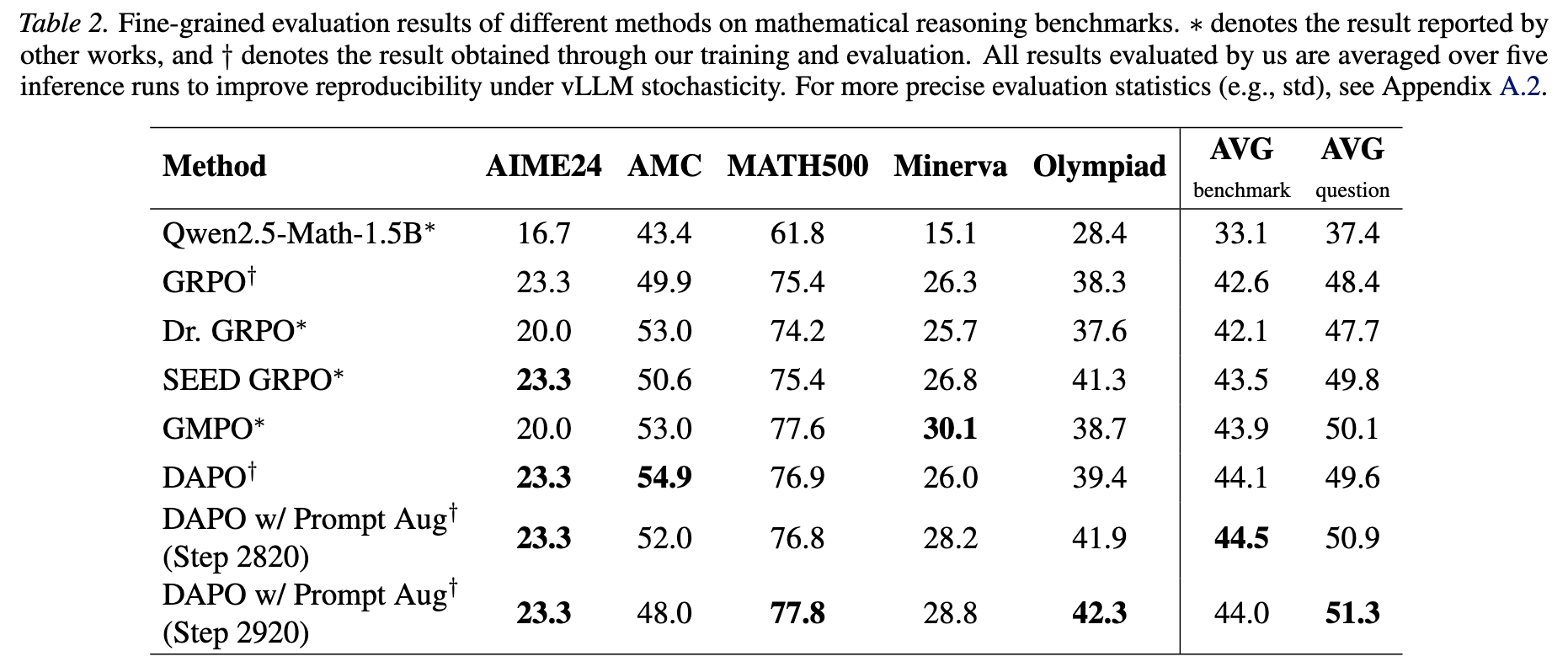

Model Card for PrAg-PO Qwen2.5-Math-1.5B step2820 (Outdated)

If you use this model, a star on our GitHub repo would be much appreciated! 😊

For checkpoints with better performance, see step 2720 and step 2480.

This is the step-2820 checkpoint from training Qwen2.5-Math-1.5B on the MATH Level 3-to-5 dataset using PrAg-PO. The training procedure is described in the paper PrAg-PO: Prompt Augmented Policy Optimization for Robust and Diverse Mathematical Reasoning.

Model Sources

- Repository 🤖: https://github.com/wenquanlu/PrAg-PO

- Paper 📝: PrAg-PO: Prompt Augmented Policy Optimization for Robust and Diverse Mathematical Reasoning

Uses

This model is intended for mathematical reasoning tasks. It was trained with prompt augmentation, which generates reasoning traces under diverse prompt templates to increase rollout diversity and stability during RL training.
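As a rough illustration of the prompt augmentation idea, the sketch below wraps one question in a randomly chosen template for each rollout. The templates and the helper names `augment_prompt` and `build_rollout_prompts` are hypothetical; this card does not list the templates or sampling scheme used in the paper, so treat this as a toy example and not the PrAg-PO implementation.

```python
import random

# Hypothetical templates for illustration only; the templates actually used
# in PrAg-PO are not listed in this model card.
TEMPLATES = [
    "{question}\nPlease reason step by step, and put your final answer within \\boxed{{}}.",
    "Solve the following math problem. Show your work.\n\nProblem: {question}",
    "Question: {question}\nThink carefully, then state the final answer.",
]


def augment_prompt(question, rng):
    """Wrap the question in one randomly chosen template."""
    template = rng.choice(TEMPLATES)
    return template.format(question=question)


def build_rollout_prompts(question, num_rollouts, seed=0):
    """Return one augmented prompt per rollout for the same question."""
    rng = random.Random(seed)  # seeded so the sampled templates are reproducible
    return [augment_prompt(question, rng) for _ in range(num_rollouts)]


prompts = build_rollout_prompts("What is 2 + 3?", num_rollouts=8)
for prompt in prompts:
    print(repr(prompt))
```

Each rollout sees the same question under a possibly different instruction format, which is the source of the added diversity described above.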

Results

Citation

@misc{lu2026pragpopromptaugmentedpolicy,
  title={PrAg-PO: Prompt Augmented Policy Optimization for Robust and Diverse Mathematical Reasoning},
  author={Wenquan Lu and Hai Huang and Enqi Liu and Randall Balestriero},
  journal={arXiv preprint arXiv:2602.03190},
  url={https://arxiv.org/abs/2602.03190},
  year={2026},
}
