---
license: apache-2.0
tags:
- generated_from_keras_callback
model-index:
- name: Intent-Classification-Bert-Base-Cased
  results: []
---

Intent-Classification-Bert-Base-Cased

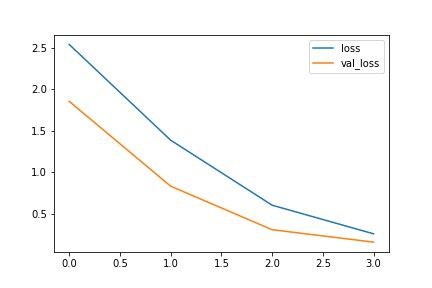

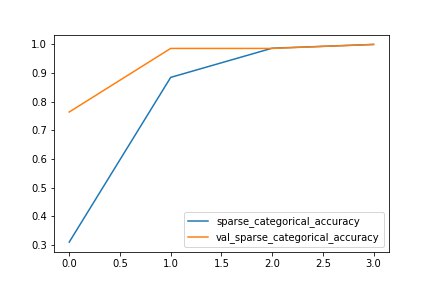

This model is a fine-tuned version of bert-base-cased on the Intent-Classification-Commands dataset. After the final training epoch, it achieves the following results:

- Train Loss: 0.6110

- Train Sparse Categorical Accuracy: 0.9836

- Validation Loss: 0.4073

- Validation Sparse Categorical Accuracy: 0.9583

- Epoch: 3

Model description

This model is fine-tuned from the base model 'bert-base-cased' for intent classification. It was trained on the Intent-Classification-Commands dataset, which has the following 18 classes:

{

"0": "asking date",

"1": "asking time",

"2": "asking weather",

"3": "check internet speed",

"4": "click photo",

"5": "covid cases",

"6": "download youtube video",

"7": "goodbye",

"8": "greet",

"9": "open website",

"10": "play games",

"11": "play on youtube",

"12": "send email",

"13": "send whatsapp message",

"14": "take screenshot",

"15": "tell me about",

"16": "tell me joke",

"17": "tell me news"

}
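The sketch below shows how the classifier's output could be turned into one of the labels above. It assumes the model returns one raw logit per class, in the index order of the mapping (the usual layout for a Transformers sequence-classification head). `ID2LABEL`, `softmax`, and `decode_intent` are hypothetical helper names, the logits in the usage example are made up rather than real model output, and loading the model itself is left out because its Hub repository id is not stated here.

```python
import math
from typing import Dict, List, Tuple

# Index -> intent name, copied from the class list above.
ID2LABEL: Dict[int, str] = {
    0: "asking date", 1: "asking time", 2: "asking weather",
    3: "check internet speed", 4: "click photo", 5: "covid cases",
    6: "download youtube video", 7: "goodbye", 8: "greet",
    9: "open website", 10: "play games", 11: "play on youtube",
    12: "send email", 13: "send whatsapp message", 14: "take screenshot",
    15: "tell me about", 16: "tell me joke", 17: "tell me news",
}


def softmax(logits: List[float]) -> List[float]:
    """Convert raw logits to probabilities (shifted by the max for stability)."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def decode_intent(logits: List[float]) -> Tuple[str, float]:
    """Return the most likely intent and its softmax probability.

    Hypothetical helper: assumes one logit per class, ordered as in ID2LABEL.
    """
    if len(logits) != len(ID2LABEL):
        raise ValueError(f"expected {len(ID2LABEL)} logits, got {len(logits)}")
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    return ID2LABEL[best], probs[best]


if __name__ == "__main__":
    # Made-up logits (not real model output) that favour class 2.
    fake_logits = [0.0] * 18
    fake_logits[2] = 5.0
    print(decode_intent(fake_logits))
```

With the real model, the logits would come from the TensorFlow sequence-classification model's `logits` output for one tokenized sentence.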

Intended uses & limitations

Intent classification for chatbots and virtual assistants. The model supports English only, and it can only predict the 18 classes listed above: a sentence outside these intents will still be assigned to one of them. You can fine-tune it further for your own intents.
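Because the classifier always picks one of its 18 classes, an out-of-scope sentence still receives an in-scope label. One common workaround, sketched here as an assumption rather than something this model does on its own, is to treat low-confidence predictions as unknown. The threshold of 0.7, the `"unknown"` fallback label, and the helper names are hypothetical; a real threshold would need tuning on held-out data. The code assumes the same class order as the list above.

```python
import math
from typing import List, Sequence

# Class names in index order, copied from the class list above.
LABELS: List[str] = [
    "asking date", "asking time", "asking weather", "check internet speed",
    "click photo", "covid cases", "download youtube video", "goodbye",
    "greet", "open website", "play games", "play on youtube", "send email",
    "send whatsapp message", "take screenshot", "tell me about",
    "tell me joke", "tell me news",
]


def softmax(logits: Sequence[float]) -> List[float]:
    """Convert raw logits to probabilities (shifted by the max for stability)."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def classify_with_fallback(
    logits: Sequence[float],
    labels: Sequence[str] = LABELS,
    threshold: float = 0.7,  # hypothetical value, tune on held-out data
    fallback: str = "unknown",  # hypothetical out-of-scope label
) -> str:
    """Return the top label, or `fallback` if its probability is below `threshold`."""
    if len(logits) != len(labels):
        raise ValueError("need exactly one logit per label")
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    return labels[best] if probs[best] >= threshold else fallback


if __name__ == "__main__":
    flat = [0.0] * 18        # no clear winner: every class gets 1/18
    confident = [0.0] * 18
    confident[13] = 8.0      # strongly favours "send whatsapp message"
    print(classify_with_fallback(flat))
    print(classify_with_fallback(confident))
```

A flat distribution falls back to the unknown label, while a clearly peaked one keeps its predicted intent.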

Training and evaluation data

Dataset Used: Intent-Classification-Commands

Training procedure

The full training notebook is available on Google Colab: https://colab.research.google.com/drive/1KHg14glvhdV_ziOcY0pHm66PBYoBZMS0?usp=sharing

Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'Adam', 'learning_rate': 5e-05, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-07, 'amsgrad': False}

- training_precision: float32
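To make the optimizer entry concrete, the sketch below applies the Adam update rule to a single scalar parameter with the listed values (learning rate 5e-05, beta_1 0.9, beta_2 0.999, epsilon 1e-07, no AMSGrad, decay 0.0). It is written in the form that, to our understanding, Keras's TF 2.x `Adam` uses, where the bias correction is folded into the step size and epsilon is added to the uncorrected square root of the second moment. `AdamState` and `adam_step` are hypothetical names, and the gradient values are made up.

```python
import math
from dataclasses import dataclass


@dataclass
class AdamState:
    """Running moment estimates and step count for one scalar parameter."""
    m: float = 0.0  # first moment (moving average of gradients)
    v: float = 0.0  # second moment (moving average of squared gradients)
    t: int = 0      # number of steps taken so far


def adam_step(
    param: float,
    grad: float,
    state: AdamState,
    lr: float = 5e-05,
    beta_1: float = 0.9,
    beta_2: float = 0.999,
    epsilon: float = 1e-07,
) -> float:
    """Return the updated parameter after one Adam step (amsgrad=False)."""
    state.t += 1
    state.m = beta_1 * state.m + (1.0 - beta_1) * grad
    state.v = beta_2 * state.v + (1.0 - beta_2) * grad * grad
    # Bias correction folded into the step size; decay=0.0 keeps lr constant.
    lr_t = lr * math.sqrt(1.0 - beta_2 ** state.t) / (1.0 - beta_1 ** state.t)
    return param - lr_t * state.m / (math.sqrt(state.v) + epsilon)


if __name__ == "__main__":
    state = AdamState()
    p = 1.0
    for _ in range(3):
        p = adam_step(p, grad=2.0, state=state)  # made-up constant gradient
    print(p)
```

Running it with a constant gradient shows each step moving the parameter by roughly the learning rate, largely independent of the gradient's magnitude.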

Training results

Framework versions

- Transformers 4.19.2

- TensorFlow 2.8.0

- Datasets 2.2.2

- Tokenizers 0.12.1

Connect with me:

Subscribe on YouTube: https://youtube.com/techportofficial

DM me on Instagram (for a quick response): https://instagram.com/dipesh_pal17