
How to use gardner/TinyLlama-1.1B-Instruct-3T with Transformers:

# Use a pipeline as a high-level helper

from transformers import pipeline

pipe = pipeline("text-generation", model="gardner/TinyLlama-1.1B-Instruct-3T")

# Load model directly

from transformers import AutoTokenizer, AutoModelForCausalLM

tokenizer = AutoTokenizer.from_pretrained("gardner/TinyLlama-1.1B-Instruct-3T")

model = AutoModelForCausalLM.from_pretrained("gardner/TinyLlama-1.1B-Instruct-3T")

How to use gardner/TinyLlama-1.1B-Instruct-3T with vLLM:

# Install vLLM from pip:

pip install vllm

# Start the vLLM server:

vllm serve "gardner/TinyLlama-1.1B-Instruct-3T"

# Call the server using curl (OpenAI-compatible API):

curl -X POST "http://localhost:8000/v1/completions" \
  -H "Content-Type: application/json" \
  --data '{
    "model": "gardner/TinyLlama-1.1B-Instruct-3T",
    "prompt": "Once upon a time,",
    "max_tokens": 512,
    "temperature": 0.5
  }'

# Alternatively, run the model with Docker:

docker model run hf.co/gardner/TinyLlama-1.1B-Instruct-3T
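The curl request in this section can also be issued from Python. The following is a minimal sketch using only the standard library; the helper names `build_completion_request` and `complete` are hypothetical, and it assumes the server returns the usual OpenAI-style completions response with a `choices` list.

```python
import json
import urllib.request


def build_completion_request(
    prompt,
    base_url="http://localhost:8000",
    model="gardner/TinyLlama-1.1B-Instruct-3T",
    max_tokens=512,
    temperature=0.5,
):
    """Build (without sending) a POST request for the /v1/completions endpoint."""
    payload = {
        "model": model,
        "prompt": prompt,
        "max_tokens": max_tokens,
        "temperature": temperature,
    }
    return urllib.request.Request(
        url=f"{base_url}/v1/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def complete(prompt, **kwargs):
    """Send the request and return the text of the first completion.

    Assumes an OpenAI-compatible response body: {"choices": [{"text": ...}]}.
    """
    request = build_completion_request(prompt, **kwargs)
    with urllib.request.urlopen(request) as response:
        body = json.load(response)
    return body["choices"][0]["text"]
```

With a server running, `complete("Once upon a time,")` should return the generated continuation. Passing `base_url="http://localhost:30000"` would target a server listening on port 30000 instead.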

How to use gardner/TinyLlama-1.1B-Instruct-3T with SGLang:

# Install SGLang from pip:

pip install sglang

# Start the SGLang server:

python3 -m sglang.launch_server \
  --model-path "gardner/TinyLlama-1.1B-Instruct-3T" \
  --host 0.0.0.0 \
  --port 30000

# Call the server using curl (OpenAI-compatible API):

curl -X POST "http://localhost:30000/v1/completions" \
  -H "Content-Type: application/json" \
  --data '{
    "model": "gardner/TinyLlama-1.1B-Instruct-3T",
    "prompt": "Once upon a time,",
    "max_tokens": 512,
    "temperature": 0.5
  }'

# Alternatively, start the SGLang server with Docker:

docker run --gpus all \
  --shm-size 32g \
  -p 30000:30000 \
  -v ~/.cache/huggingface:/root/.cache/huggingface \
  --env "HF_TOKEN=<secret>" \
  --ipc=host \
  lmsysorg/sglang:latest \
  python3 -m sglang.launch_server \
    --model-path "gardner/TinyLlama-1.1B-Instruct-3T" \
    --host 0.0.0.0 \
    --port 30000

# Call the server using curl (OpenAI-compatible API):

curl -X POST "http://localhost:30000/v1/completions" \
  -H "Content-Type: application/json" \
  --data '{
    "model": "gardner/TinyLlama-1.1B-Instruct-3T",
    "prompt": "Once upon a time,",
    "max_tokens": 512,
    "temperature": 0.5
  }'

How to use gardner/TinyLlama-1.1B-Instruct-3T with Docker Model Runner:

docker model run hf.co/gardner/TinyLlama-1.1B-Instruct-3T

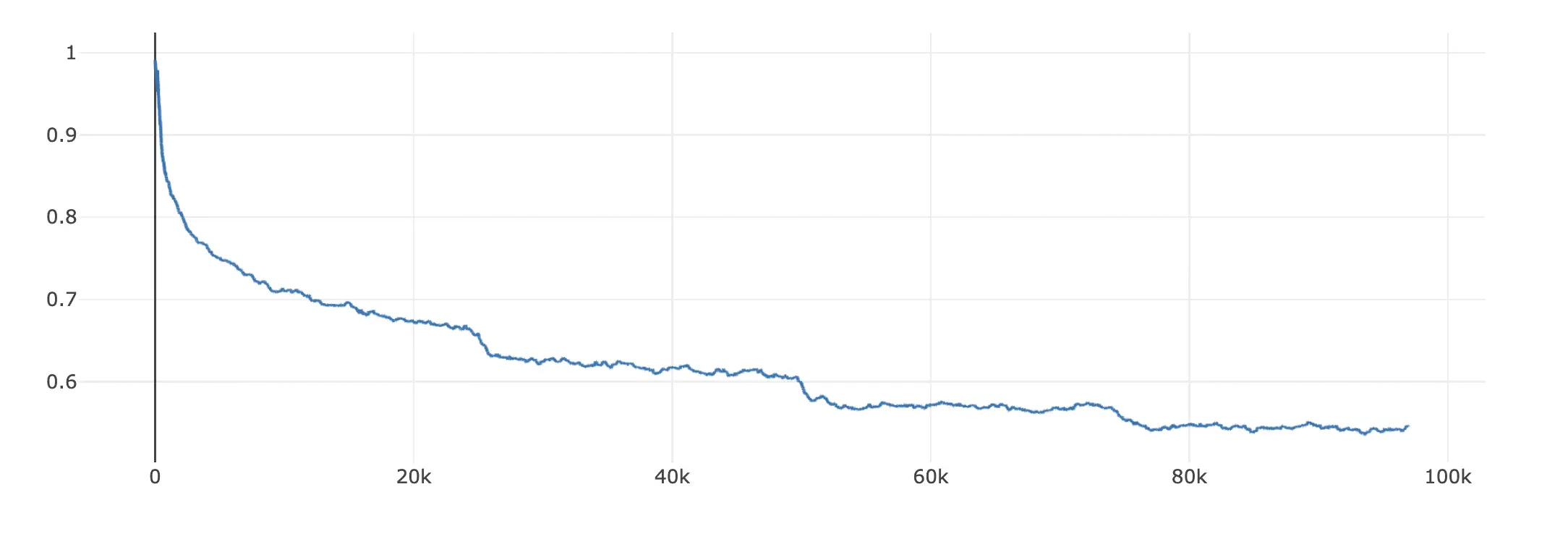

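This is the TinyLlama 1.1B 3T base model (TinyLlama/TinyLlama-1.1B-intermediate-step-1431k-3T) fine-tuned on the teknium/openhermes instruct dataset for 4 epochs. It is intended as a starting point for further fine-tuning. The training configuration was: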
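Because the training config lists the dataset with `type: alpaca`, prompts at inference time probably work best in an Alpaca-style layout. The sketch below is an assumption, not something this card states: the template strings are the widely used Alpaca wording, and the helper names `build_alpaca_prompt` and `generate` are hypothetical. Check the exact template against the training tool before relying on it.

```python
# Assumed Alpaca-style templates; the model card does not state the exact prompt.
ALPACA_NO_INPUT = (
    "Below is an instruction that describes a task. "
    "Write a response that appropriately completes the request.\n\n"
    "### Instruction:\n{instruction}\n\n### Response:\n"
)
ALPACA_WITH_INPUT = (
    "Below is an instruction that describes a task, paired with an input "
    "that provides further context. "
    "Write a response that appropriately completes the request.\n\n"
    "### Instruction:\n{instruction}\n\n### Input:\n{input}\n\n### Response:\n"
)


def build_alpaca_prompt(instruction, input_text=""):
    """Format an instruction (and optional input) as an Alpaca-style prompt."""
    if input_text:
        return ALPACA_WITH_INPUT.format(instruction=instruction, input=input_text)
    return ALPACA_NO_INPUT.format(instruction=instruction)


def generate(instruction, input_text="", max_new_tokens=256):
    """Load the model with Transformers and return only the newly generated text."""
    # Imported here so the prompt helper works without transformers installed.
    from transformers import AutoModelForCausalLM, AutoTokenizer

    model_id = "gardner/TinyLlama-1.1B-Instruct-3T"
    tokenizer = AutoTokenizer.from_pretrained(model_id)
    model = AutoModelForCausalLM.from_pretrained(model_id)

    prompt = build_alpaca_prompt(instruction, input_text)
    inputs = tokenizer(prompt, return_tensors="pt")
    output_ids = model.generate(**inputs, max_new_tokens=max_new_tokens)

    # Drop the prompt tokens so only the model's response is decoded.
    new_tokens = output_ids[0][inputs["input_ids"].shape[1]:]
    return tokenizer.decode(new_tokens, skip_special_tokens=True)
```

Calling `generate("Name three primary colors.")` downloads the weights on first use and returns the model's response text.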

base_model: TinyLlama/TinyLlama-1.1B-intermediate-step-1431k-3T

model_type: LlamaForCausalLM

tokenizer_type: LlamaTokenizer

is_llama_derived_model: true

load_in_8bit: true

load_in_4bit: false

strict: false

datasets:

  - path: teknium/openhermes

    type: alpaca

dataset_prepared_path:

val_set_size: 0.05

output_dir: ./tiny-llama-instruct-lora

sequence_len: 4096

sample_packing: true

pad_to_sequence_len: true

adapter: lora

lora_model_dir:

lora_r: 32

lora_alpha: 16

lora_dropout: 0.05

lora_target_linear: true

lora_fan_in_fan_out:

gradient_accumulation_steps: 4

micro_batch_size: 2

num_epochs: 4

optimizer: adamw_bnb_8bit

lr_scheduler: cosine

learning_rate: 0.0002

train_on_inputs: false

group_by_length: false

bf16: true

fp16: false

tf32: false

gradient_checkpointing: true

early_stopping_patience:

resume_from_checkpoint:

local_rank:

logging_steps: 1

xformers_attention:

flash_attention: true

warmup_steps: 10

evals_per_epoch: 4

saves_per_epoch: 1

debug:

deepspeed:

weight_decay: 0.0

fsdp:

fsdp_config:

special_tokens:
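A few quantities implied by this configuration can be worked out directly. The sketch below copies values from the config and makes some assumptions that the card does not state: the number of devices (`num_devices`), the total number of optimizer steps (`total_steps`), the standard LoRA scaling factor `lora_alpha / lora_r`, and a textbook linear-warmup plus cosine-decay schedule, which may differ in detail from what the training tool actually applied.

```python
import math

# Values copied from the training configuration above.
MICRO_BATCH_SIZE = 2
GRADIENT_ACCUMULATION_STEPS = 4
SEQUENCE_LEN = 4096
LORA_R = 32
LORA_ALPHA = 16
LEARNING_RATE = 0.0002
WARMUP_STEPS = 10


def effective_batch_size(num_devices=1):
    """Sequences per optimizer step; num_devices is an assumption."""
    return MICRO_BATCH_SIZE * GRADIENT_ACCUMULATION_STEPS * num_devices


def max_tokens_per_step(num_devices=1):
    """Upper bound on tokens per optimizer step with packed, padded sequences."""
    return effective_batch_size(num_devices) * SEQUENCE_LEN


def lora_scaling():
    """Standard LoRA scaling factor applied to the low-rank update."""
    return LORA_ALPHA / LORA_R


def learning_rate_at(step, total_steps):
    """Linear warmup followed by cosine decay to zero (textbook form).

    total_steps is hypothetical; the card does not report it.
    """
    if step < WARMUP_STEPS:
        return LEARNING_RATE * step / WARMUP_STEPS
    progress = (step - WARMUP_STEPS) / max(1, total_steps - WARMUP_STEPS)
    return LEARNING_RATE * 0.5 * (1.0 + math.cos(math.pi * progress))
```

On a single device this gives 8 sequences (at most 32768 tokens) per optimizer step and a LoRA scaling factor of 0.5.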