V-Reflection (7B)

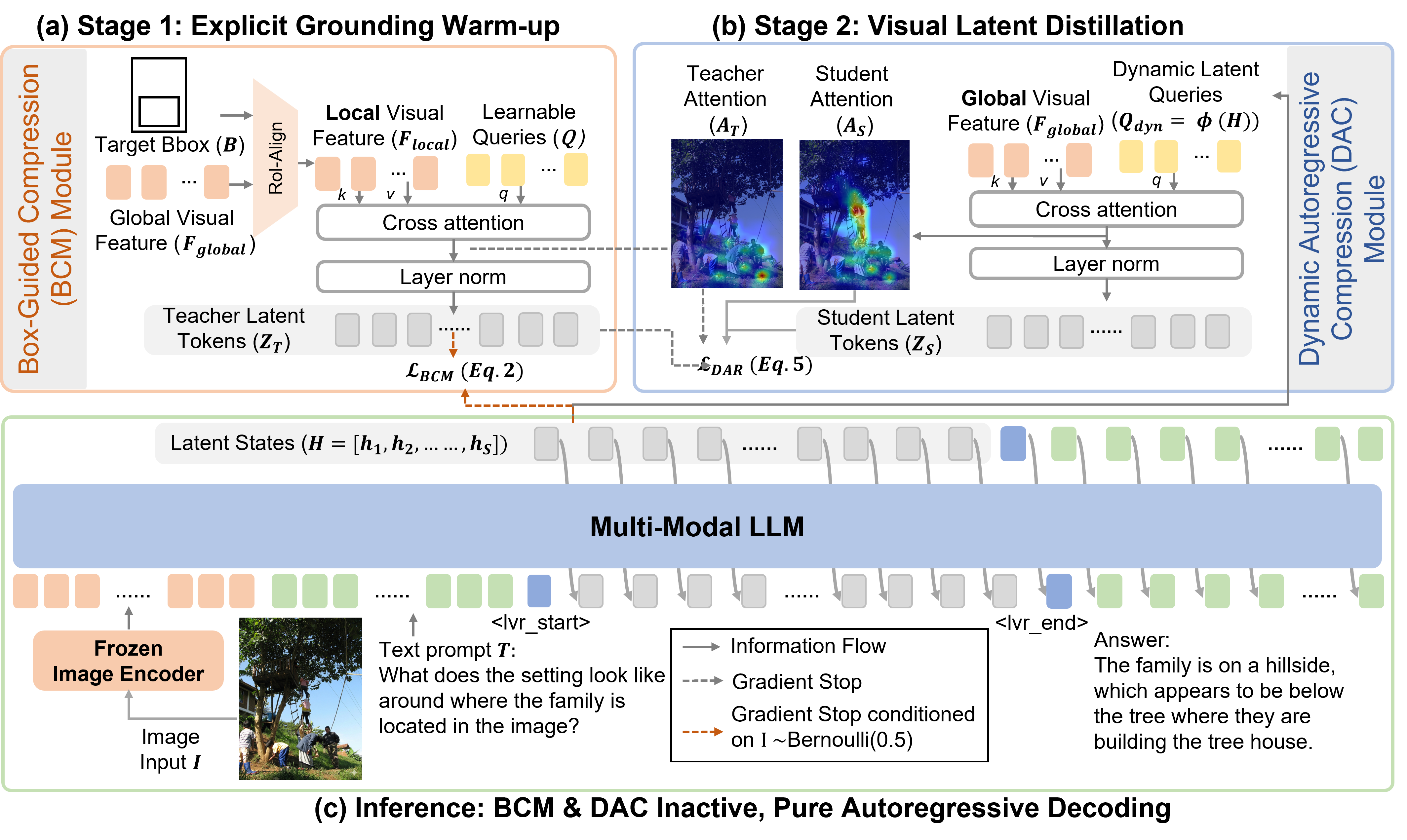

V-Reflection is a Qwen2.5-VL-based multimodal model that turns the MLLM into an active interrogator of the image through a "think-then-look" visual reflection mechanism: before answering, it performs a fixed number of latent visual reasoning steps.

| Resource | Link |
|---|---|
| Paper | arxiv.org/abs/2604.03307 |
| Project page | idea-research.github.io/V-Reflection |
| Code & scripts | github.com/IDEA-Research/V-Reflection |

Model summary

- Architecture: Built on Qwen2.5-VL-7B-Instruct with Box-Guided Compression (BCM) and Dynamic Autoregressive Compression (DAC) for latent visual probing.

- Stage 1 (BCM): Box-guided compression produces stable pixel-to-latent targets; stochastic decoupled alignment trains the resampler and LLM jointly.

- Stage 2 (DAC): A student module maps LLM hidden states into dynamic probes over the global visual map and is trained with MSE distillation from a frozen BCM teacher.

- Inference: The BCM and DAC modules are inactive at inference; decoding is end-to-end autoregressive in latent space (8 latent reasoning steps by default).
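The summary above mentions two mechanisms: an MSE distillation objective in Stage 2 and a fixed-length latent loop at inference. The toy sketch below illustrates both in plain Python. It is a hypothetical illustration, not the V-Reflection implementation: `toy_forward` stands in for a real LLM forward pass, and feeding each hidden state back as the next input is one common way to realize latent autoregression, assumed here for clarity.

```python
LATENT_STEPS = 8  # default number of latent reasoning steps stated in this card


def mse(a: list[float], b: list[float]) -> float:
    """Mean squared error between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def distillation_loss(student_probes: list[list[float]], teacher_targets: list[list[float]]) -> float:
    """Stage 2 style objective (toy): mean per-step MSE between student probes
    and targets from a frozen teacher, which are treated as constants."""
    if len(student_probes) != len(teacher_targets):
        raise ValueError("need one teacher target per student probe")
    return sum(mse(s, t) for s, t in zip(student_probes, teacher_targets)) / len(student_probes)


def toy_forward(hidden: list[float]) -> list[float]:
    """Hypothetical stand-in for one LLM forward pass on a continuous input."""
    return [0.5 * h + 0.1 for h in hidden]


def latent_reasoning(hidden: list[float], steps: int = LATENT_STEPS) -> list[list[float]]:
    """Run a fixed number of latent steps: each output hidden state is fed back
    as the next input, and no text tokens are decoded in between."""
    trajectory = []
    for _ in range(steps):
        hidden = toy_forward(hidden)
        trajectory.append(hidden)
    return trajectory


if __name__ == "__main__":
    student = latent_reasoning([0.0, 1.0])
    # Pretend frozen-teacher targets, offset slightly from the student probes.
    teacher = [[h + 0.05 for h in step] for step in student]
    print(len(student), distillation_loss(student, teacher))
```

Fixing the number of steps keeps inference cost predictable, which matches the card's description of "fixed-length" latent reasoning.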

Repository contents

This Hub repo includes the model weights (sharded Safetensors) and the pre-formatted LVR training annotations used in the paper:

| File(s) | Role |
|---|---|
| `*.safetensors`, `config.json`, tokenizer assets | Fine-tuned V-Reflection checkpoint |
| `meta_data_lvr_sft_stage1.json` | Meta config for the default SROIE + DUDE mix |
| `viscot_sroie_dude_lvr_formatted.json` | SROIE + DUDE subset |
| `viscot_363k_lvr_formatted.json` | Full Visual CoT-style 363K split |

Download the images for Visual CoT and the other listed datasets from their official sources (see the data section of the code repository).
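The annotation schema is not documented in this card, so the sketch below does not assume one: it loads a JSON file and reports only its top-level structure (record count and keys). The function names are hypothetical helpers, and the file names are the ones listed in the table above.

```python
import json
from pathlib import Path


def load_json(path: str):
    """Load a JSON file as UTF-8."""
    with open(path, encoding="utf-8") as f:
        return json.load(f)


def summarize(data) -> dict:
    """Describe the top-level structure of parsed JSON without assuming a schema."""
    summary = {"type": type(data).__name__}
    if isinstance(data, list):
        summary["num_records"] = len(data)
        if data and isinstance(data[0], dict):
            summary["first_record_keys"] = sorted(data[0].keys())
    elif isinstance(data, dict):
        summary["top_level_keys"] = sorted(data.keys())
    return summary


if __name__ == "__main__":
    for name in ("meta_data_lvr_sft_stage1.json", "viscot_sroie_dude_lvr_formatted.json"):
        if Path(name).exists():  # only inspect files already downloaded locally
            print(name, summarize(load_json(name)))
```

Checking the keys first is a cheap way to confirm that the image paths in the annotations match the local layout of the images you downloaded.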

Intended use

- Research on visual reasoning, high-resolution understanding, and latent multimodal reasoning.

- Not a drop-in replacement for stock Qwen2.5-VL in generic chat UIs: correct behavior requires the official custom model class and generation path.

How to run

Loading and evaluation rely on the QwenWithLVR implementation and scripts in the GitHub repository (training, packing, and benchmarks are documented there).

- Clone IDEA-Research/V-Reflection and follow its `README.md` (environment, data layout).

- Download this checkpoint into a local directory (or rely on `hf_hub_download` from their evaluation scripts).

- Point `EVAL_CHECKPOINT_PATH` (or the checkpoint arguments of the training scripts) to the unpacked folder.

Example evaluation entrypoint (from upstream docs):

```bash
bash scripts_release/evaluation/evaluation_7b_stage2.sh
```
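The steps above can be scripted roughly as follows. This is a sketch under assumptions: the repo id `garlandchou/V-Reflection` is taken from this Hub page, the local directory name is hypothetical, and it assumes the evaluation script reads `EVAL_CHECKPOINT_PATH` from the environment (if the script defines it internally, edit it there instead). `snapshot_download` is the `huggingface_hub` download function; the ignore patterns skip the large annotation JSONs.

```python
import os
import subprocess
from typing import Mapping, Optional


def build_eval_env(checkpoint_dir: str, base_env: Optional[Mapping[str, str]] = None) -> dict:
    """Copy the environment and point EVAL_CHECKPOINT_PATH at the local checkpoint."""
    env = dict(os.environ if base_env is None else base_env)
    env["EVAL_CHECKPOINT_PATH"] = os.path.abspath(checkpoint_dir)
    return env


def download_checkpoint(local_dir: str = "checkpoints/V-Reflection") -> str:
    """Fetch weights, config, and tokenizer files; skip the training annotations."""
    from huggingface_hub import snapshot_download  # pip install huggingface_hub

    return snapshot_download(
        repo_id="garlandchou/V-Reflection",
        local_dir=local_dir,
        ignore_patterns=["viscot_*.json", "meta_data_*.json"],
    )


if __name__ == "__main__":
    ckpt = download_checkpoint()
    # Run from the root of a clone of IDEA-Research/V-Reflection.
    subprocess.run(
        ["bash", "scripts_release/evaluation/evaluation_7b_stage2.sh"],
        env=build_eval_env(ckpt),
        check=True,  # raise if the evaluation script exits with an error
    )
```

Passing a modified copy of the environment, rather than mutating `os.environ`, keeps the variable scoped to the evaluation subprocess.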

Results (reported in project materials)

| Benchmark | V-Reflection | Qwen2.5-VL-7B |
|---|---|---|
| MMVP | 72.3 | 66.7 |
| BLINK | 56.4 | 54.5 |
| V* | 81.7 | 78.5 |
| HRBench-4K | 72.6 | 68.0 |
| HRBench-8K | 66.3 | 63.8 |
| MME-RealWorld-Lite | 53.9 | 45.8 |
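To read the table above as gains over the base model, the short snippet below computes the per-benchmark difference and the unweighted mean across the six benchmarks. It uses only the reported scores; the helper names are illustrative.

```python
# Reported scores from the results table: (V-Reflection, Qwen2.5-VL-7B)
RESULTS = {
    "MMVP": (72.3, 66.7),
    "BLINK": (56.4, 54.5),
    "V*": (81.7, 78.5),
    "HRBench-4K": (72.6, 68.0),
    "HRBench-8K": (66.3, 63.8),
    "MME-RealWorld-Lite": (53.9, 45.8),
}


def deltas(results: dict) -> dict:
    """Per-benchmark gain over the base model, rounded to one decimal."""
    return {name: round(ours - base, 1) for name, (ours, base) in results.items()}


def mean_delta(results: dict) -> float:
    """Unweighted mean gain across benchmarks."""
    gains = [ours - base for ours, base in results.values()]
    return sum(gains) / len(gains)


if __name__ == "__main__":
    for name, d in deltas(RESULTS).items():
        print(f"{name:>20}: {d:+.1f}")
    print(f"{'mean':>20}: {mean_delta(RESULTS):+.2f}")
```

The gains range from 1.9 points (BLINK) to 8.1 points (MME-RealWorld-Lite), with an unweighted mean of about 4.3 points.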

Limitations

- Requires the official codebase for correct loading and latent reasoning behavior.

- Performance and safety have not been audited for unrestricted production deployment; use judgment for real-world products.

- Biases may mirror those of the base model and the training data distributions.

License

Apache-2.0 (same as the codebase). See the license file in the GitHub repository.

Acknowledgements
