# deit_tiny_patch16_224

Implementation of DeiT, proposed in [Training data-efficient image transformers & distillation through attention](https://arxiv.org/pdf/2012.12877.pdf).

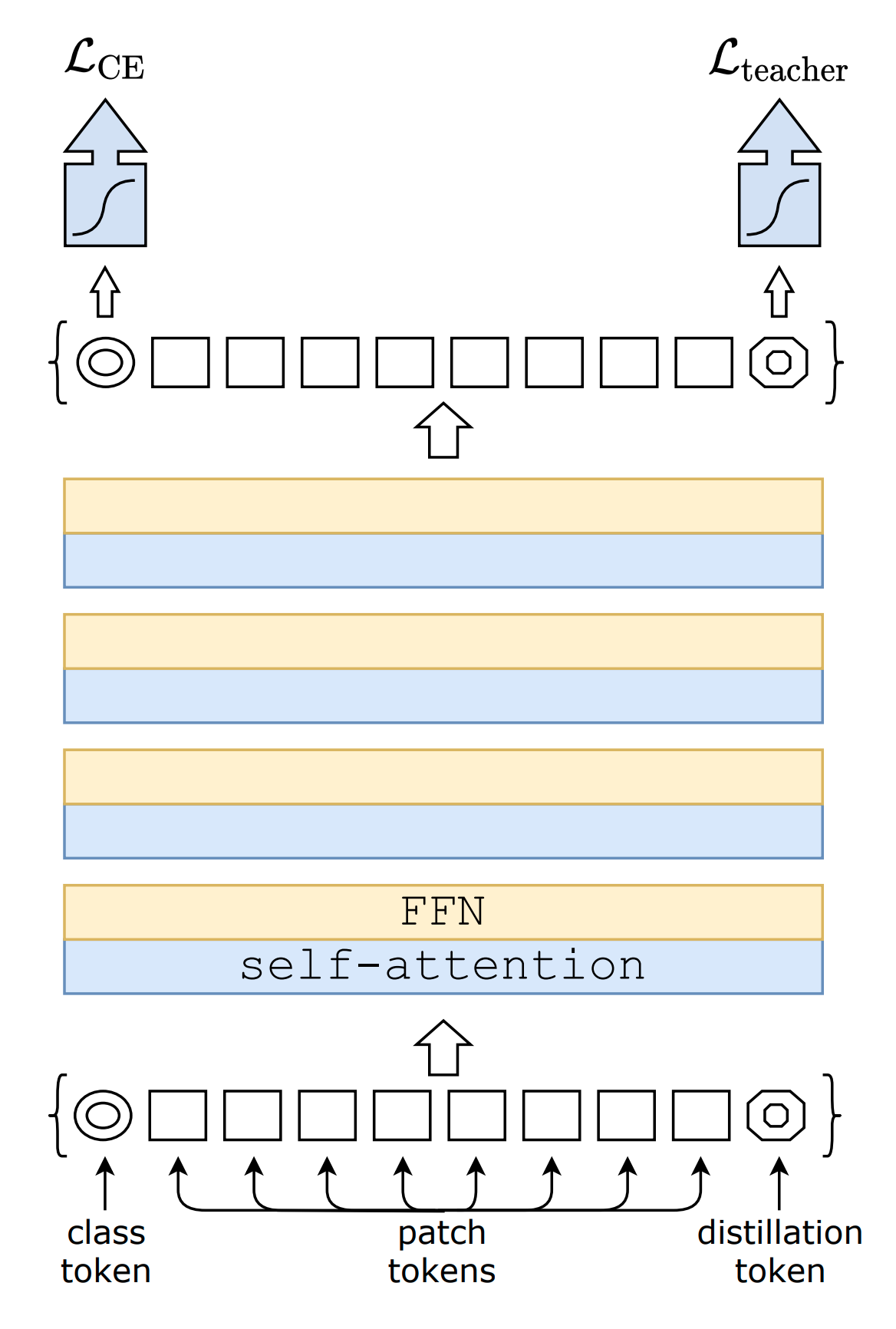

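An attention-based distillation is proposed in which a new token, the `dist` token, is added to the model. It interacts with the class and patch tokens through self-attention and is trained to reproduce the teacher's prediction.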
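As a rough illustration of what adding a token means for the input sequence, here is a minimal pure-Python sketch. The function name `add_special_tokens` is hypothetical. The ordering (class token first, then `dist`, then patches) is an assumption and may not match this implementation. Plain lists stand in for learned embedding vectors.

```python
def add_special_tokens(patch_tokens, cls_token, dist_token):
    """Build the token sequence passed to the transformer encoder.

    Assumption: both special tokens are prepended, class token first.
    """
    return [cls_token, dist_token] + list(patch_tokens)


# Toy data: 4 patch embeddings of width 3 (real models use learned vectors).
patches = [[0.1 * i] * 3 for i in range(4)]
cls_tok = [1.0, 1.0, 1.0]   # stands in for the learned class token
dist_tok = [2.0, 2.0, 2.0]  # stands in for the learned distillation token

tokens = add_special_tokens(patches, cls_tok, dist_tok)
print(len(tokens))  # 6: four patches plus the two special tokens
```

The encoder then treats the `dist` token like any other position in the sequence. Only the loss applied to its output differs from the class token's.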

```python
DeiT.deit_tiny_patch16_224()
DeiT.deit_small_patch16_224()
DeiT.deit_base_patch16_224()
DeiT.deit_base_patch16_384()
```
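The variant names appear to encode the patch size and the input resolution (for example, `patch16_224` for 16×16 patches on a 224×224 image). Under that assumption, and assuming two extra tokens (class and `dist`), the encoder's sequence length can be estimated as follows. The helper `sequence_length` is hypothetical and not part of the library.

```python
import re


def sequence_length(variant, extra_tokens=2):
    """Estimate the token count for a name like 'deit_tiny_patch16_224'.

    Assumes a square input, non-overlapping square patches, and
    `extra_tokens` special tokens (class + dist by default).
    """
    match = re.fullmatch(r"deit_\w+_patch(\d+)_(\d+)", variant)
    if match is None:
        raise ValueError(f"unrecognised variant name: {variant!r}")
    patch, size = int(match.group(1)), int(match.group(2))
    if size % patch != 0:
        raise ValueError("image size must be divisible by patch size")
    return (size // patch) ** 2 + extra_tokens


for name in ("deit_tiny_patch16_224", "deit_base_patch16_384"):
    print(name, sequence_length(name))
# deit_tiny_patch16_224 198
# deit_base_patch16_384 578
```

Going from 224 to 384 pixels roughly triples the number of tokens, which is worth keeping in mind since self-attention cost grows quadratically with sequence length.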