Install from brew

```shell
brew install llama.cpp

# Start a local OpenAI-compatible server with a web UI:
llama-server -hf google/codegemma-1.1-2b-GGUF:F16

# Run inference directly in the terminal:
llama-cli -hf google/codegemma-1.1-2b-GGUF:F16
```

Install from WinGet (Windows)

```shell
winget install llama.cpp

# Start a local OpenAI-compatible server with a web UI:
llama-server -hf google/codegemma-1.1-2b-GGUF:F16

# Run inference directly in the terminal:
llama-cli -hf google/codegemma-1.1-2b-GGUF:F16
```

Use pre-built binary

```shell
# Download a pre-built binary from:
# https://github.com/ggerganov/llama.cpp/releases

# Start a local OpenAI-compatible server with a web UI:
./llama-server -hf google/codegemma-1.1-2b-GGUF:F16

# Run inference directly in the terminal:
./llama-cli -hf google/codegemma-1.1-2b-GGUF:F16
```

Build from source code

```shell
git clone https://github.com/ggerganov/llama.cpp.git
cd llama.cpp
cmake -B build
cmake --build build -j --target llama-server llama-cli

# Start a local OpenAI-compatible server with a web UI:
./build/bin/llama-server -hf google/codegemma-1.1-2b-GGUF:F16

# Run inference directly in the terminal:
./build/bin/llama-cli -hf google/codegemma-1.1-2b-GGUF:F16
```

Use Docker

```shell
docker model run hf.co/google/codegemma-1.1-2b-GGUF:F16
```

Access CodeGemma on Hugging Face

This repository is publicly accessible, but you have to accept the conditions to access its files and content.

To access Gemma on Hugging Face, you must review and agree to Google's usage license while logged in to Hugging Face. Requests are processed immediately.


CodeGemma

Model Page: CodeGemma

Resources and Technical Documentation: Technical Report, Responsible Generative AI Toolkit

Terms of Use: Terms

Authors: Google

In llama.cpp and related tools such as Ollama and LM Studio, make sure the sampling flags used in the Sample Usage section below are set correctly, especially the repeat penalty (`--repeat-penalty` in llama.cpp). Georgi Gerganov (llama.cpp's author) shared his experience in https://huggingface.co/google/gemma-7b-it/discussions/38#65d7b14adb51f7c160769fa1.
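Code legitimately repeats tokens (braces, keywords, identifiers), so a repetition penalty can push a code model away from the correct continuation. As we understand llama.cpp's sampler, the penalty is applied once to each distinct token in the recent window: a positive logit is divided by the penalty and a non-positive logit is multiplied by it, so a value of 1.0 leaves the logits unchanged. The Rust sketch below illustrates that rule together with greedy selection, which is effectively what `--temp 0` does. `apply_repeat_penalty`, `greedy`, and the toy logits are hypothetical and not part of llama.cpp's API.

```rust
use std::collections::HashSet;

/// Hypothetical helper (not llama.cpp's API): penalize every distinct token
/// that appears in `recent_tokens`, using the CTRL-style rule that llama.cpp's
/// repeat penalty is understood to follow.
fn apply_repeat_penalty(logits: &mut [f32], recent_tokens: &[usize], penalty: f32) {
    let mut seen = HashSet::new();
    for &tok in recent_tokens {
        // Skip out-of-range ids and tokens that were already penalized once.
        if tok >= logits.len() || !seen.insert(tok) {
            continue;
        }
        let logit = &mut logits[tok];
        if *logit <= 0.0 {
            *logit *= penalty; // non-positive logits become more negative
        } else {
            *logit /= penalty; // positive logits shrink toward zero
        }
    }
}

/// Greedy selection: index of the largest logit (ties keep the first one).
fn greedy(logits: &[f32]) -> usize {
    let mut best = 0;
    for (i, &logit) in logits.iter().enumerate() {
        if logit > logits[best] {
            best = i;
        }
    }
    best
}

fn main() {
    // Toy vocabulary of three tokens; token 0 (say, `}`) was just emitted.
    let logits = vec![2.0_f32, 1.5, -0.5];
    let recent = [0_usize];

    let mut unpenalized = logits.clone();
    apply_repeat_penalty(&mut unpenalized, &recent, 1.0);
    assert_eq!(unpenalized, logits); // a penalty of 1.0 is a no-op

    let mut penalized = logits.clone();
    apply_repeat_penalty(&mut penalized, &recent, 1.5);

    println!("greedy, penalty 1.0: token {}", greedy(&unpenalized));
    println!("greedy, penalty 1.5: token {}", greedy(&penalized));
}
```

With a penalty of 1.5, the just-emitted token 0 falls from 2.0 to about 1.33, below token 1's 1.5, so greedy decoding picks token 1 instead; with 1.0 the choice is unchanged.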

Description

CodeGemma is a collection of lightweight open code models built on top of Gemma. CodeGemma models are text-to-text and text-to-code decoder-only models, available as a 7 billion parameter pretrained variant that specializes in code completion and code generation tasks, a 7 billion parameter instruction-tuned variant for code chat and instruction following, and a 2 billion parameter pretrained variant for fast code completion.

|  | codegemma-2b | codegemma-7b | codegemma-7b-it |
|---|---|---|---|
| Code Completion | ✅ | ✅ |  |
| Generation from natural language |  | ✅ | ✅ |
| Chat |  |  | ✅ |
| Instruction Following |  |  | ✅ |

For the detailed model card, refer to https://huggingface.co/google/codegemma-1.1-2b.

Sample Usage

The session below was recorded with llama.cpp's `main` binary, which was later renamed to `llama-cli`.

```shell
$ cat non_prime
/// Write a rust function to identify non-prime numbers.
///
/// Examples:
/// >>> is_not_prime(2)
/// False
/// >>> is_not_prime(10)
/// True
pub fn is_not_prime(n: i32) -> bool {
$ main -m codegemma-1.1-2b.gguf --temp 0 --top-k 0 -f non_prime --log-disable --repeat-penalty 1.0
/// Write a rust function to identify non-prime numbers.
///
/// Examples:
/// >>> is_not_prime(2)
/// False
/// >>> is_not_prime(10)
/// True
pub fn is_not_prime(n: i32) -> bool {
    for i in 2..n {
        if n % i == 0 {
            return true;
        }
    }
    false
}
<|file_separator|>
```
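The completion satisfies both examples in the prompt's doc comment. One detail worth checking before reusing it: for `n < 2` the range `2..n` is empty, so the function returns `false` for 0 and 1 even though neither is prime. A minimal Rust harness is sketched below; it copies the generated function body verbatim and adds a hypothetical `is_not_prime_checked` variant (our own, not model output) that treats `n < 2` as non-prime and only trial-divides up to √n.

```rust
/// Generated by the model in the session above (body copied verbatim).
pub fn is_not_prime(n: i32) -> bool {
    for i in 2..n {
        if n % i == 0 {
            return true;
        }
    }
    false
}

/// Hypothetical hardened variant: 0, 1, and negatives count as non-prime,
/// and trial division stops at sqrt(n). Using i64 avoids overflow in `i * i`.
pub fn is_not_prime_checked(n: i32) -> bool {
    if n < 2 {
        return true;
    }
    let n = n as i64;
    let mut i: i64 = 2;
    while i * i <= n {
        if n % i == 0 {
            return true;
        }
        i += 1;
    }
    false
}

fn main() {
    // The two examples from the prompt's doc comment.
    assert!(!is_not_prime(2));
    assert!(is_not_prime(10));

    // For n >= 2 both versions agree.
    for n in 2..1_000 {
        assert_eq!(is_not_prime(n), is_not_prime_checked(n), "n = {n}");
    }

    // They differ below 2: the generated code reports 1 as prime.
    println!("is_not_prime(1) = {}", is_not_prime(1));
    println!("is_not_prime_checked(1) = {}", is_not_prime_checked(1));
}
```

Running it prints `false` for the generated function and `true` for the checked variant at `n = 1`.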

Coding Benchmarks

| Benchmark | 2B | 2B (1.1) | 7B | 7B-IT | 7B-IT (1.1) |
|---|---|---|---|---|---|
| HumanEval | 31.1 | 37.8 | 44.5 | 56.1 | 60.4 |
| MBPP | 43.6 | 49.2 | 56.2 | 54.2 | 55.6 |
| HumanEval Single Line | 78.4 | 79.3 | 76.1 | 68.3 | 77.4 |
| HumanEval Multi Line | 51.4 | 51.0 | 58.4 | 20.1 | 23.7 |
| BC HE C++ | 24.2 | 19.9 | 32.9 | 42.2 | 46.6 |
| BC HE C# | 10.6 | 26.1 | 22.4 | 26.7 | 54.7 |
| BC HE Go | 20.5 | 18.0 | 21.7 | 28.6 | 34.2 |
| BC HE Java | 29.2 | 29.8 | 41.0 | 48.4 | 50.3 |
| BC HE JavaScript | 21.7 | 28.0 | 39.8 | 46.0 | 48.4 |
| BC HE Kotlin | 28.0 | 32.3 | 39.8 | 51.6 | 47.8 |
| BC HE Python | 21.7 | 36.6 | 42.2 | 48.4 | 54.0 |
| BC HE Rust | 26.7 | 24.2 | 34.1 | 36.0 | 37.3 |
| BC MBPP C++ | 47.1 | 38.9 | 53.8 | 56.7 | 63.5 |
| BC MBPP C# | 28.7 | 45.3 | 32.5 | 41.2 | 62.0 |
| BC MBPP Go | 45.6 | 38.9 | 43.3 | 46.2 | 53.2 |
| BC MBPP Java | 41.8 | 49.7 | 50.3 | 57.3 | 62.9 |
| BC MBPP JavaScript | 45.3 | 45.0 | 58.2 | 61.4 | 61.4 |
| BC MBPP Kotlin | 46.8 | 49.7 | 54.7 | 59.9 | 62.6 |
| BC MBPP Python | 38.6 | 52.9 | 59.1 | 62.0 | 60.2 |
| BC MBPP Rust | 45.3 | 47.4 | 52.9 | 53.5 | 52.3 |

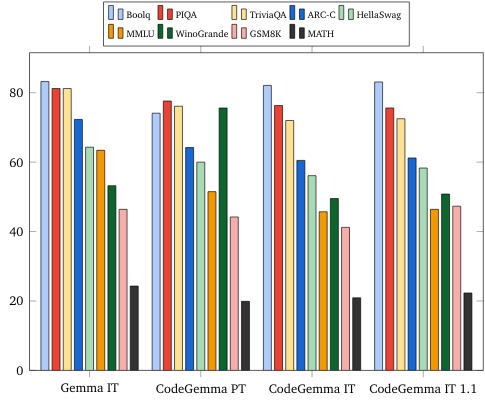

Natural Language Benchmarks


```shell
# Gated model: log in with a Hugging Face token that has gated-access permission
hf auth login
```