Instructions to use google/ul2 with libraries, inference providers, notebooks, and local apps. Follow these links to get started.

- Libraries
  - Transformers

How to use google/ul2 with Transformers:

    # Load model directly
    from transformers import AutoTokenizer, AutoModelForSeq2SeqLM

    tokenizer = AutoTokenizer.from_pretrained("google/ul2")
    model = AutoModelForSeq2SeqLM.from_pretrained("google/ul2")

- Notebooks
  - Google Colab
  - Kaggle

Update README.md #10

by HarBat - opened

README.md CHANGED

|

@@ -9,7 +9,7 @@ license: apache-2.0

|

|

| 9 |

|

| 10 |

# Introduction

|

| 11 |

|

| 12 |

-

UL2 is a unified framework for pretraining models that are universally effective across datasets and setups. UL2 uses Mixture-of-Denoisers (MoD),

|

| 13 |

|

| 14 |

|

| 15 |

|

|

|

|

| 9 |

|

| 10 |

# Introduction

|

| 11 |

|

| 12 |

+

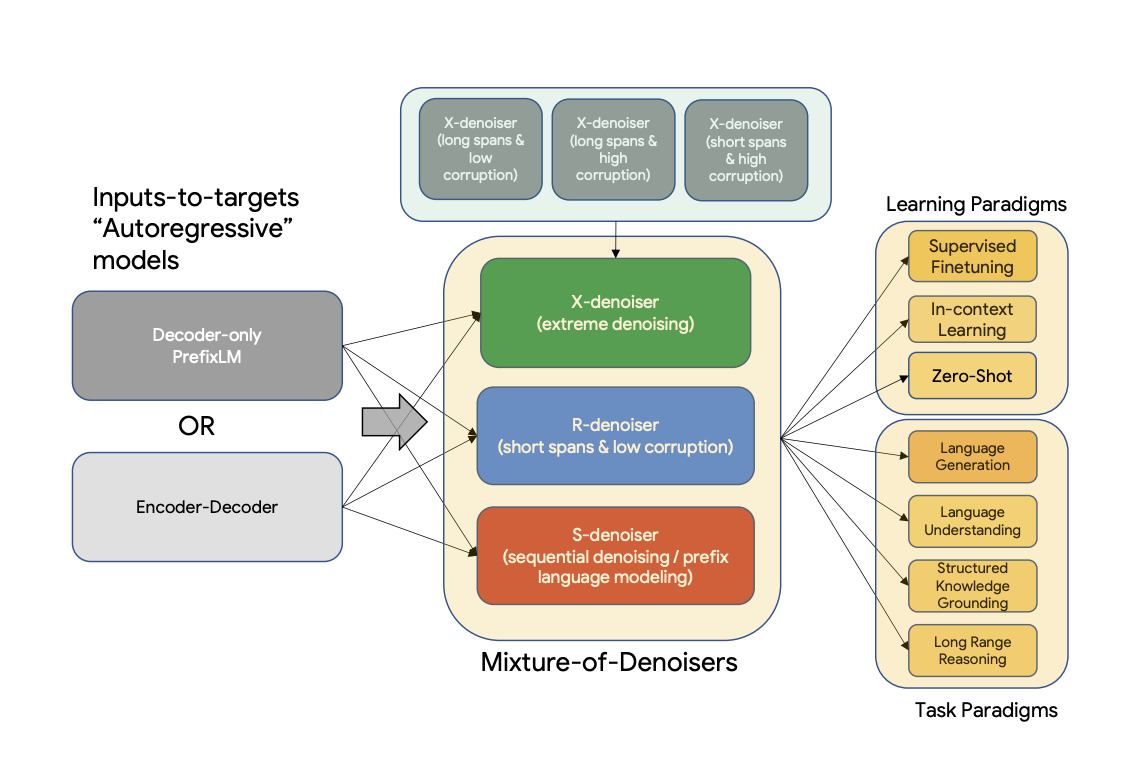

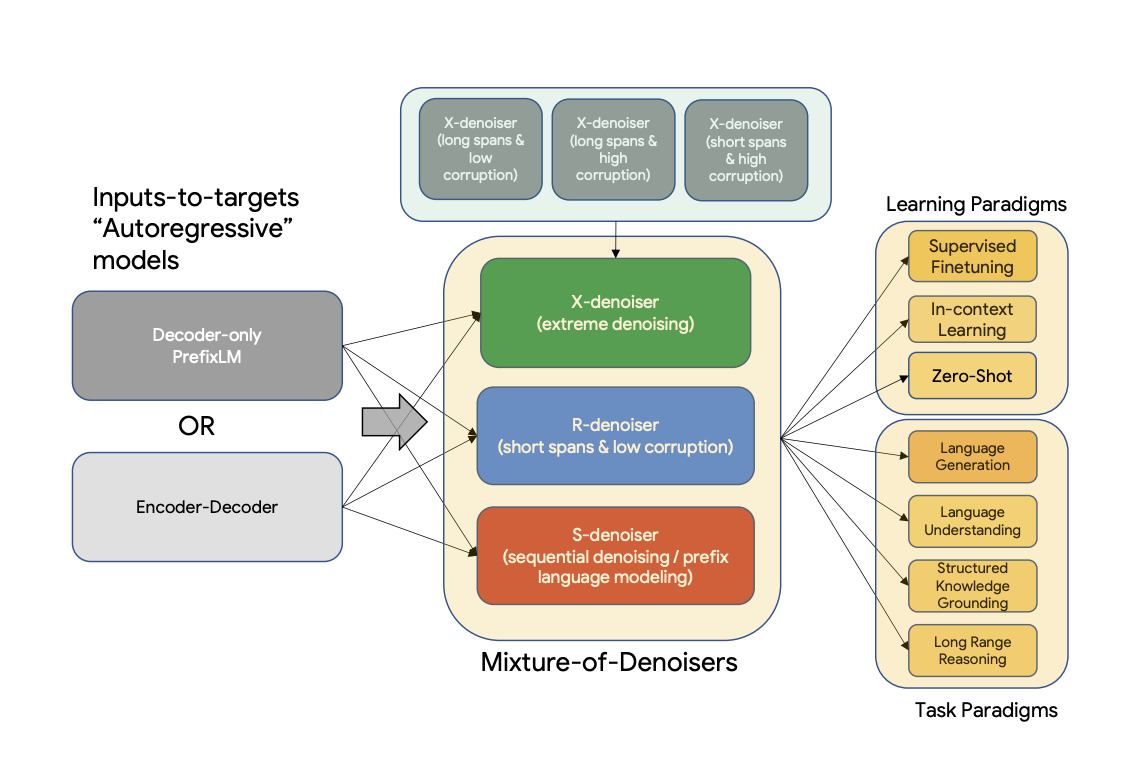

UL2 is a unified framework for pretraining models that are universally effective across datasets and setups. UL2 uses Mixture-of-Denoisers (MoD), a pre-training objective that combines diverse pre-training paradigms together. UL2 introduces a notion of mode switching, wherein downstream fine-tuning is associated with specific pre-training schemes.

|

| 13 |

|

| 14 |

|

| 15 |

|
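The changed README line describes mode switching, where an input is tied to a specific pre-training scheme. A minimal sketch of how that might look on the input side is given below. It assumes the checkpoint recognises the prefixes `[NLU]`, `[NLG]` and `[S2S]`; those strings, the `MODE_TOKENS` table and the `with_mode` helper are illustrative assumptions, not something stated in this diff and not part of the Transformers API.

```python
# Hypothetical helper for illustration only; not part of the README or of Transformers.
# Assumption: the checkpoint understands the prefixes "[NLU]", "[NLG]" and "[S2S]".
MODE_TOKENS = {"nlu": "[NLU]", "nlg": "[NLG]", "s2s": "[S2S]"}


def with_mode(prompt: str, mode: str) -> str:
    """Return `prompt` with the mode token for `mode` prepended."""
    try:
        token = MODE_TOKENS[mode.lower()]
    except KeyError:
        raise ValueError(
            f"unknown mode {mode!r}; expected one of {sorted(MODE_TOKENS)}"
        ) from None
    # Strip surrounding whitespace so the prefix is always followed by one space.
    return f"{token} {prompt.strip()}"


if __name__ == "__main__":
    print(with_mode("The capital of France is <extra_id_0>.", "nlu"))
```

The returned string could then be passed to a tokenizer loaded with `AutoTokenizer.from_pretrained("google/ul2")` before generation. Whether a given prefix helps on a particular task is something to check empirically.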