- 🧠 MIDAS-PYTORCH

- 📂 Folder Structure

- 📖 What is Depth Estimation?

- 🤖 What is MiDaS?

- ⚠️ Relative vs Absolute Depth (Important)

- ✨ Project Features

- 🛠️ Tech Stack

- ⚙️ Installation

- 🧩 How the System Works

- ▶️ Running the Application

- 🖼️ Output Explanation

- 🧠 Model Used

- 🚀 Performance Notes

- ❌ Limitations

- 🌍 Applications

- 🔮 Future Improvements

- 🎯 Interview One-Liner

🧠 MIDAS-PYTORCH

Real-Time Monocular Depth Estimation using PyTorch & OpenCV

This project demonstrates real-time depth estimation from a single RGB camera using the MiDaS deep learning model. It shows how depth can be inferred without stereo cameras or LiDAR, using only computer vision and deep learning.

📂 Folder Structure

MIDAS-PYTORCH/

├── app.py

├── requirements.txt

└── README.md

📖 What is Depth Estimation?

Depth estimation is the task of determining how far objects are from a camera.

Traditional approaches use:

- Stereo cameras

- LiDAR sensors

- RGB-D cameras

This project uses monocular depth estimation, meaning:

Depth is predicted from a single RGB image.

🤖 What is MiDaS?

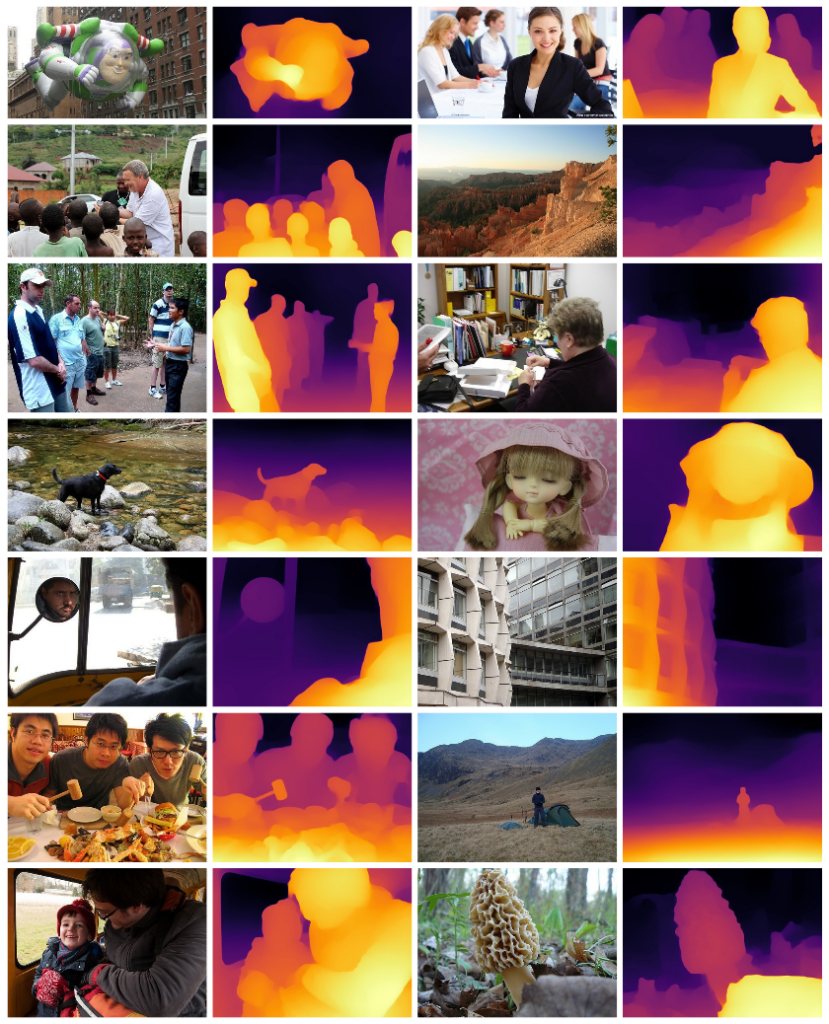

MiDaS (Mixed Datasets for Monocular Depth Estimation) is a pretrained deep learning model that predicts a relative (inverse) depth map from a single RGB image.

- Input: RGB image

- Output: Depth map

- Bright pixels: Closer objects

- Dark pixels: Farther objects

MiDaS generalizes well to unseen scenes because it was trained on a mix of diverse datasets.

⚠️ Relative vs Absolute Depth (Important)

❌ MiDaS does NOT give:

- Exact distance in meters

- Physical measurements

✅ MiDaS DOES give:

- Relative depth ordering

- Scene geometry understanding

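Example (nearest to farthest):

Person → Chair → Wall

In the raw MiDaS output, nearer objects receive larger values, so Person > Chair > Wall.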
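MiDaS predictions are only defined up to an unknown scale and shift, which is why they cannot be read as meters. The NumPy sketch below illustrates this. The `normalize` helper and the three raw values are made up for illustration and are not taken from `app.py`.

```python
import numpy as np


def normalize(depth: np.ndarray) -> np.ndarray:
    """Min-max scale a relative depth map to the range [0, 1]."""
    lo, hi = float(depth.min()), float(depth.max())
    if hi - lo < 1e-12:  # flat map: avoid division by zero
        return np.zeros_like(depth, dtype=np.float64)
    return (depth.astype(np.float64) - lo) / (hi - lo)


# Hypothetical raw values for three regions; larger means closer.
raw = np.array([900.0, 450.0, 120.0])  # person, chair, wall

# Applying a different positive scale and shift yields the same
# normalized map, so the numbers encode ordering, not distance.
rescaled = 0.01 * raw + 3.0

print(normalize(raw))
print(normalize(rescaled))
print(np.argsort(-raw))  # region indices from nearest to farthest
```

Both `print(normalize(...))` calls show the same array, which is the sense in which the depth is "relative".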

✨ Project Features

- Real-time webcam depth estimation

- Lightweight MiDaS_small model

- OpenCV-based visualization

- CPU compatible (GPU optional)

- Beginner-friendly implementation

🛠️ Tech Stack

- Python

- PyTorch

- OpenCV

- NumPy

- MiDaS (Intel-ISL)

⚙️ Installation

1️⃣ Clone the repository

git clone <repository-url>

cd MIDAS-PYTORCH

2️⃣ Install dependencies

pip install -r requirements.txt

Recommended Python version: 3.10+

🧩 How the System Works

High-level pipeline:

Webcam Frame

↓

BGR → RGB Conversion

↓

MiDaS Image Transform

↓

Neural Network Inference

↓

Depth Prediction

↓

Interpolation (Resize)

↓

Normalization

↓

Color-Mapped Depth Output
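The steps above can be sketched roughly as follows. This is a minimal sketch under stated assumptions, not the project's actual `app.py`: the function names, the window titles, and the JET colormap are illustrative choices. The `torch.hub` entry points (`intel-isl/MiDaS`, `MiDaS_small`, `transforms.small_transform`) are the ones published with MiDaS. The first call downloads the weights, so it needs network access.

```python
import numpy as np


def load_midas(device):
    """Load MiDaS_small and its matching input transform from PyTorch Hub."""
    import torch  # imported lazily: to_uint8 below only needs NumPy

    model = torch.hub.load("intel-isl/MiDaS", "MiDaS_small")
    model.to(device).eval()
    transform = torch.hub.load("intel-isl/MiDaS", "transforms").small_transform
    return model, transform


def predict_depth(frame_bgr, model, transform, device):
    """Run one BGR frame through the model; return a float depth map."""
    import cv2
    import torch

    rgb = cv2.cvtColor(frame_bgr, cv2.COLOR_BGR2RGB)   # BGR -> RGB
    batch = transform(rgb).to(device)                  # MiDaS image transform
    with torch.no_grad():
        pred = model(batch)                            # network inference
        pred = torch.nn.functional.interpolate(        # resize to frame size
            pred.unsqueeze(1), size=rgb.shape[:2],
            mode="bicubic", align_corners=False,
        ).squeeze()
    return pred.cpu().numpy()


def to_uint8(depth):
    """Min-max normalize a depth map to 0..255 for display."""
    depth = depth.astype(np.float32)
    span = float(depth.max() - depth.min())
    if span < 1e-6:  # flat map: avoid division by zero
        return np.zeros(depth.shape, dtype=np.uint8)
    return ((depth - depth.min()) / span * 255.0).astype(np.uint8)


def main():
    import cv2
    import torch

    device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
    model, transform = load_midas(device)
    cap = cv2.VideoCapture(0)
    try:
        while cap.isOpened():
            ok, frame = cap.read()
            if not ok:
                break
            depth = predict_depth(frame, model, transform, device)
            # Colormap choice is an assumption; JET maps high values to red.
            colored = cv2.applyColorMap(to_uint8(depth), cv2.COLORMAP_JET)
            cv2.imshow("Original Webcam Feed", frame)
            cv2.imshow("Depth Map Visualization", colored)
            if cv2.waitKey(1) & 0xFF == ord("q"):
                break
    finally:
        cap.release()
        cv2.destroyAllWindows()
```

Calling `main()` opens the default webcam and shows both windows until Q is pressed. Normalization is done per frame, so colors are comparable within a frame but not across frames.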

▶️ Running the Application

python app.py

- Press Q to quit.

🖼️ Output Explanation

Two windows are displayed:

- Original Webcam Feed

- Depth Map Visualization

Color meaning:

- 🔴 Red / yellow → closer objects

- 🔵 Blue / dark → farther objects

Depth values are relative, not real-world distances.

🧠 Model Used

| Model | Description |
|---|---|
| MiDaS_small | Fast, lightweight, suitable for real-time webcam inference |

🚀 Performance Notes

- Runs in real time on CPU with MiDaS_small; the actual frame rate depends on the hardware

- FPS can be improved by lowering webcam resolution

- GPU acceleration supported if CUDA is available

- OpenCV used for fast real-time visualization
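To check whether lowering the resolution actually helps on a given machine, it is useful to measure the frame rate. The sketch below is one possible way to do that. `FPSMeter` and `open_camera` are hypothetical helpers, not part of `app.py`, and the 640×480 default is only an example value.

```python
import time
from typing import Optional


class FPSMeter:
    """Exponentially smoothed frames-per-second estimate."""

    def __init__(self, smoothing: float = 0.9):
        self.smoothing = smoothing
        self.fps = 0.0
        self._last: Optional[float] = None

    def tick(self, now: Optional[float] = None) -> float:
        """Call once per frame; returns the current FPS estimate."""
        now = time.perf_counter() if now is None else now
        if self._last is not None and now > self._last:
            inst = 1.0 / (now - self._last)
            if self.fps == 0.0:
                self.fps = inst  # first measurement seeds the average
            else:
                self.fps = (self.smoothing * self.fps
                            + (1.0 - self.smoothing) * inst)
        self._last = now
        return self.fps


def open_camera(index: int = 0, width: int = 640, height: int = 480):
    """Open a webcam and request a lower capture resolution."""
    import cv2  # imported lazily so FPSMeter works without OpenCV

    cap = cv2.VideoCapture(index)
    # Drivers may ignore or round these requests, so read the values
    # back with cap.get(...) if the exact size matters.
    cap.set(cv2.CAP_PROP_FRAME_WIDTH, width)
    cap.set(cv2.CAP_PROP_FRAME_HEIGHT, height)
    return cap
```

In a capture loop, calling `meter.tick()` once per processed frame and drawing the returned value with `cv2.putText` gives a live readout to compare resolutions or CPU against GPU.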

❌ Limitations

- No metric (meter-level) depth; the output is relative depth only

- Struggles with reflective or transparent surfaces

🌍 Applications

- Robotics obstacle avoidance

- AR / VR scene understanding

- Autonomous driving research

- 3D scene reconstruction

- Computer vision learning projects

🔮 Future Improvements

- Combine MiDaS with object detection (YOLO)

- Approximate real-world distance estimation

- Web deployment using Streamlit or FastAPI

- Depth-based segmentation
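For the approximate real-world distance idea, one common approach is to fit a scale and shift that map the relative inverse-depth prediction onto a few hand-measured reference distances. The sketch below assumes at least two reference points at different distances are available. `fit_scale_shift` and `to_meters` are hypothetical helpers, and the fit is only meaningful for the frame it was computed on, since scale and shift can change between frames.

```python
import numpy as np


def fit_scale_shift(rel_inv_depth, known_dist_m):
    """Least-squares fit of 1 / distance ~= s * prediction + t."""
    d = np.asarray(rel_inv_depth, dtype=np.float64)
    target = 1.0 / np.asarray(known_dist_m, dtype=np.float64)
    A = np.stack([d, np.ones_like(d)], axis=1)  # columns: prediction, 1
    (s, t), *_ = np.linalg.lstsq(A, target, rcond=None)
    return float(s), float(t)


def to_meters(rel_inv_depth, s, t, eps=1e-6):
    """Convert predictions to approximate meters using the fitted s, t."""
    inv = s * np.asarray(rel_inv_depth, dtype=np.float64) + t
    return 1.0 / np.maximum(inv, eps)  # clamp to avoid division by zero


# Synthetic example: predictions at three pixels whose true distances
# (1 m, 2 m, 4 m) are assumed to have been measured by hand.
pred = np.array([450.0, 200.0, 75.0])
dist = np.array([1.0, 2.0, 4.0])

s, t = fit_scale_shift(pred, dist)
print(to_meters(pred, s, t))
```

The fit is done in inverse-depth space because that is the space in which MiDaS predictions are linear up to scale and shift. Errors grow quickly for far objects, where the inverse depth approaches zero.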

🎯 Interview One-Liner

“This project performs real-time monocular depth estimation from a single RGB webcam feed using the MiDaS deep learning model with PyTorch and OpenCV.”

⭐ If this project helps you, consider starring the repository!