Instructions to use joelbarmettler/md-reheader with libraries, inference providers, notebooks, and local apps. Follow these links to get started.

- Libraries

- Transformers

How to use joelbarmettler/md-reheader with Transformers:

# Use a pipeline as a high-level helper from transformers import pipeline pipe = pipeline("text-generation", model="joelbarmettler/md-reheader") messages = [ {"role": "user", "content": "Who are you?"}, ] pipe(messages)# Load model directly from transformers import AutoTokenizer, AutoModelForCausalLM tokenizer = AutoTokenizer.from_pretrained("joelbarmettler/md-reheader") model = AutoModelForCausalLM.from_pretrained("joelbarmettler/md-reheader") messages = [ {"role": "user", "content": "Who are you?"}, ] inputs = tokenizer.apply_chat_template( messages, add_generation_prompt=True, tokenize=True, return_dict=True, return_tensors="pt", ).to(model.device) outputs = model.generate(**inputs, max_new_tokens=40) print(tokenizer.decode(outputs[0][inputs["input_ids"].shape[-1]:])) - Inference

- Notebooks

- Google Colab

- Kaggle

- Local Apps

- vLLM

How to use joelbarmettler/md-reheader with vLLM:

Install from pip and serve model

# Install vLLM from pip: pip install vllm # Start the vLLM server: vllm serve "joelbarmettler/md-reheader" # Call the server using curl (OpenAI-compatible API): curl -X POST "http://localhost:8000/v1/chat/completions" \ -H "Content-Type: application/json" \ --data '{ "model": "joelbarmettler/md-reheader", "messages": [ { "role": "user", "content": "What is the capital of France?" } ] }'Use Docker

docker model run hf.co/joelbarmettler/md-reheader

- SGLang

How to use joelbarmettler/md-reheader with SGLang:

Install from pip and serve model

# Install SGLang from pip: pip install sglang # Start the SGLang server: python3 -m sglang.launch_server \ --model-path "joelbarmettler/md-reheader" \ --host 0.0.0.0 \ --port 30000 # Call the server using curl (OpenAI-compatible API): curl -X POST "http://localhost:30000/v1/chat/completions" \ -H "Content-Type: application/json" \ --data '{ "model": "joelbarmettler/md-reheader", "messages": [ { "role": "user", "content": "What is the capital of France?" } ] }'Use Docker images

docker run --gpus all \ --shm-size 32g \ -p 30000:30000 \ -v ~/.cache/huggingface:/root/.cache/huggingface \ --env "HF_TOKEN=<secret>" \ --ipc=host \ lmsysorg/sglang:latest \ python3 -m sglang.launch_server \ --model-path "joelbarmettler/md-reheader" \ --host 0.0.0.0 \ --port 30000 # Call the server using curl (OpenAI-compatible API): curl -X POST "http://localhost:30000/v1/chat/completions" \ -H "Content-Type: application/json" \ --data '{ "model": "joelbarmettler/md-reheader", "messages": [ { "role": "user", "content": "What is the capital of France?" } ] }' - Docker Model Runner

How to use joelbarmettler/md-reheader with Docker Model Runner:

docker model run hf.co/joelbarmettler/md-reheader

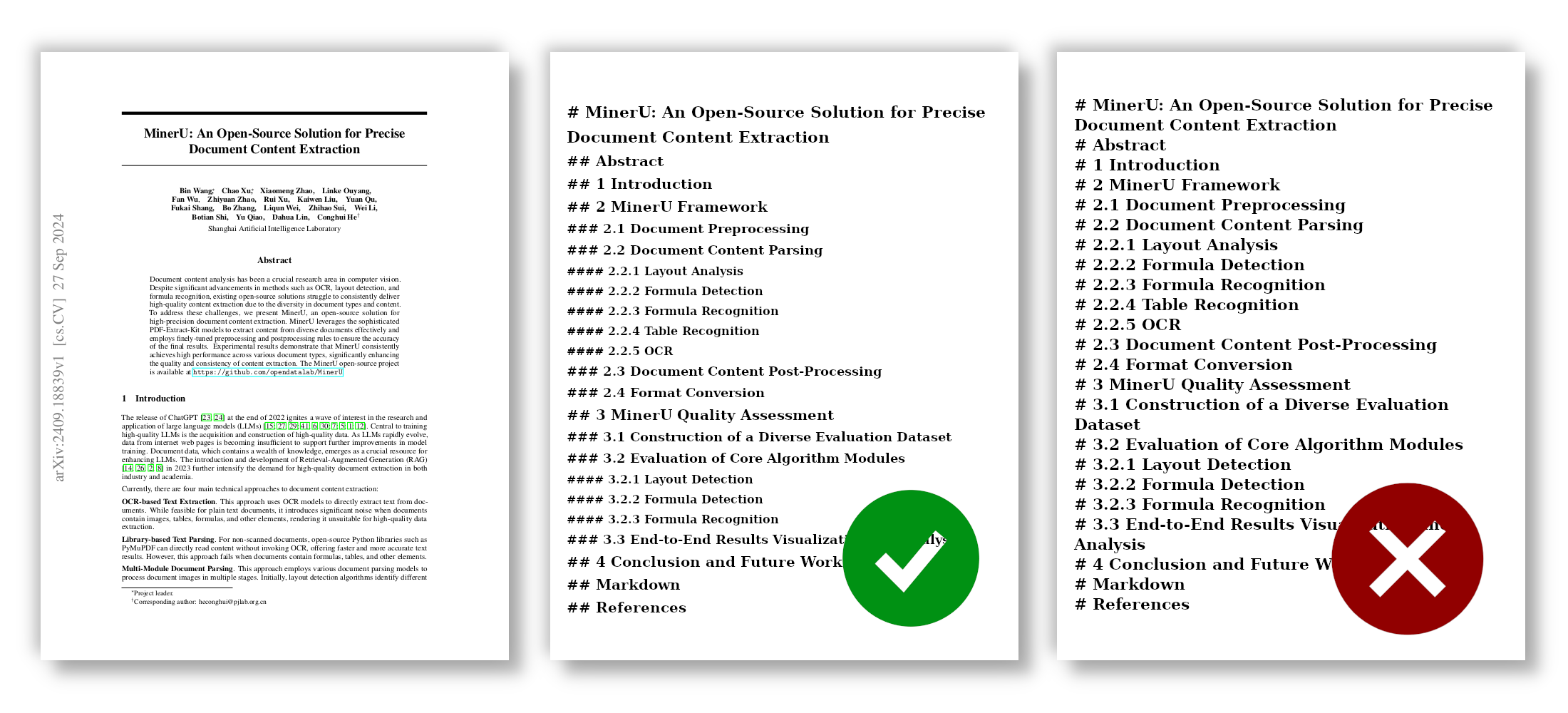

The problem

PDF-to-markdown tools like MinerU, Docling, and Marker do great text extraction — then collapse your document structure. Every heading becomes # or ##. TOCs break. RAG chunking breaks. Navigation breaks.

md-reheader fixes it. A 0.6B-parameter Qwen3 fine-tune reads the document and predicts the correct H1–H6 level for every heading in a single forward pass.

Quick start

CLI

pip install md-reheader

rehead --input flat.md --output fixed.md

Auto-detects CUDA. Use --cpu or --gpu to override. Omit --output to stream to stdout (pipe-friendly).

rehead -i flat.md | tee fixed.md # pipe

rehead -i flat.md --gpu -o out/fixed.md # creates nested dirs

rehead --help # all flags

Python API

from md_reheader.inference.predict import load_model, reheader_document

model, tokenizer = load_model("joelbarmettler/md-reheader")

flat = open("document.md").read()

fixed = reheader_document(flat, model, tokenizer)

The package handles preprocessing (flattening + body stripping) and postprocessing (applying predicted levels back to the original document) automatically.

Direct transformers usage

import torch

from transformers import AutoModelForCausalLM, AutoTokenizer

tokenizer = AutoTokenizer.from_pretrained("joelbarmettler/md-reheader")

model = AutoModelForCausalLM.from_pretrained(

"joelbarmettler/md-reheader",

dtype=torch.bfloat16,

device_map="auto",

)

messages = [

{"role": "system", "content": "You are a markdown document structure expert. Given a markdown document with incorrect or flattened heading levels, output each heading with its correct markdown prefix (# for level 1, ## for level 2, etc.), one per line."},

{"role": "user", "content": "# Introduction\n\nSome text...\n\n# Background\n\nMore text...\n\n# Methods"},

]

input_text = tokenizer.apply_chat_template(

messages, tokenize=False, add_generation_prompt=True, enable_thinking=False,

)

inputs = tokenizer(input_text, return_tensors="pt").to(model.device)

with torch.no_grad():

outputs = model.generate(**inputs, max_new_tokens=4096, do_sample=False)

generated = outputs[0][inputs["input_ids"].shape[1]:]

print(tokenizer.decode(generated, skip_special_tokens=True))

# # Introduction

# ## Background

# ## Methods

Important: pass

enable_thinking=Falsetoapply_chat_template. Without it, the model enters a repetition loop because training used the non-thinking chat format.

Self-host with vLLM

md-reheader exposes the standard OpenAI-compatible chat endpoint when served with vLLM — higher throughput than raw transformers, and drop-in client compatibility.

pip install vllm

vllm serve joelbarmettler/md-reheader --dtype bfloat16 --max-model-len 8192

On <10 GB cards (e.g. RTX 2000/3060), add --enforce-eager --gpu-memory-utilization 0.70 to skip CUDA-graph allocations that otherwise OOM.

Remote inference (vLLM or any OpenAI-compatible endpoint)

Once a server is running, use md-reheader as a thin client — no local weights needed.

CLI:

rehead -i flat.md -o fixed.md --endpoint http://localhost:8000/v1

# With auth:

rehead -i flat.md -o fixed.md --endpoint https://api.example.com/v1 --api-key sk-xxx

# or set MD_REHEADER_API_KEY in the environment

Python:

from md_reheader.inference.remote import reheader_document_remote

fixed = reheader_document_remote(

open("flat.md").read(),

endpoint="http://localhost:8000/v1",

model="joelbarmettler/md-reheader",

api_key=None, # or a bearer token

)

The remote client preprocesses locally (flatten + strip), sends a chat completion to the server with chat_template_kwargs={"enable_thinking": false} to match training, and applies predicted levels back to the original document. Identical output to local inference.

How it works

flat markdown ──► flatten headings to # ──► strip body to 128+128 tokens

│

▼

restored markdown ◄── apply predicted levels ◄── Qwen3-0.6B (fine-tuned)

- Extract headings with markdown-it-py (correctly skips code blocks).

- Flatten every heading to

#— the model ignores input levels. - Strip each section's body to its first 128 + last 128 tokens — preserves structural cues, kills the context bloat.

- Qwen3-0.6B predicts the correct

#prefix per heading. - Levels get mapped back to the original document.

Evaluation

Benchmarked on 7,321 held-out documents from GitHub markdown and Wikipedia.

| Metric | All-H1 baseline | Heuristic | md-reheader |

|---|---|---|---|

| Exact match | 0.0% | 14.5% | 56.1% |

| Per-heading accuracy | 13.1% | 49.1% | 80.6% |

| Hierarchy preservation | 61.3% | 68.6% | 91.0% |

| Mean absolute error | 1.38 | 0.62 | 0.22 |

Per-level accuracy

| H1 | H2 | H3 | H4 | H5 | H6 | |

|---|---|---|---|---|---|---|

| Accuracy | 77% | 85% | 78% | 68% | 45% | 50% |

H1–H3 land in the 77–85% band; H5/H6 drop but still beat baselines. Most deep-level errors are off-by-one — the relative structure survives.

By document depth

| Max depth | Exact match | Per-heading accuracy | Hierarchy |

|---|---|---|---|

| Depth 2 | 83% | 91% | 95% |

| Depth 3 | 54% | 82% | 90% |

| Depth 4 | 32% | 70% | 88% |

| Depth 5-6 | 33% | 65% | 89% |

By source

| Source | Exact match | Per-heading accuracy |

|---|---|---|

| GitHub markdown | 49.5% | 74.0% |

| Wikipedia | 71.3% | 95.5% |

Speed

| Document size | RTX 4090 (BF16) | CPU (fp32) |

|---|---|---|

| < 1k tokens | 0.4s | 5s |

| 1k–2k tokens | 0.8s | 10s |

| 2k–4k tokens | 1.4s | ~20s |

| 4k–8k tokens | 3.4s | ~60s |

Documents longer than ~8k tokens (after stripping) are truncated from the tail.

Training

| Item | Value |

|---|---|

| Base model | Qwen/Qwen3-0.6B (text-only) |

| Framework | Axolotl |

| Training data | ~197k markdown docs (GitHub + Wikipedia, depth 4+ oversampled 2–8×) |

| Hardware | 2× RTX 4090, DDP, BF16 |

| Sequence length | 8,192 tokens with sample packing |

| Learning rate | 5e-5, cosine schedule |

| Epochs | 2 (epoch-1 checkpoint — epoch 2 overfits) |

| Effective batch | 24 |

Input format during training

- All headings flattened to

#. - Body text per section truncated to first 128 + last 128 tokens.

- Document truncated to 8k tokens.

- Assistant output: one heading per line with its correct

#prefix.

Limitations

- Deep nesting (H5/H6): accuracy drops to 45–50%. Relative structure is preserved but absolute depth gets compressed by 1–2 levels.

- Ambiguous structure: heading levels are subjective. The model learns common conventions; it can't resolve genuine ambiguity.

- Long documents: >8k tokens (after stripping) get truncated. Headings past the cutoff retain their input levels.

- English-centric: trained primarily on English content.

Author

Built by Joel Barmettler.

Citation

@software{barmettler2026mdreheader,

author = {Barmettler, Joel},

title = {md-reheader: Restoring Heading Hierarchy in Markdown Documents},

year = {2026},

url = {https://github.com/joelbarmettlerUZH/md-reheader}

}

- Downloads last month

- 67