onevision-encoder-large-tf57transformers 5.7+ idiomatic variant of

lmms-lab-encoder/onevision-encoder-large. Weights are byte-identical to the upstream model (samesafetensorsSHA-256). Onlymodeling_onevision_encoder.pyandconfig.json(transformers_versionfield) differ.Why this variant

Upstream

modeling_onevision_encoder.pyis written against thetransformers 4.xAPI surface and does not load correctly undertransformers >= 5.0:

_supports_flash_attn_2was renamed to_supports_flash_attn.- The v5 fast-init / meta-tensor path skips re-initialization of

persistent=Falsebuffers, leavingVideoRotaryEmbeddingSplit466.inv_freq_{t,h,w}filled with uninitialized memory. RoPE then produces garbage and downstream attention diverges (max diff up to 700+ vs upstream).add_start_docstrings*/replace_return_docstringsdecorators are removed in v5.- Manual eager-only attention is replaced by the v5

ALL_ATTENTION_FUNCTIONSinterface dispatching acrosseager,sdpa,flash_attention_2,flex_attention.v5-only notice

This variant requires

transformers >= 5.7.0and will not load undertransformers 4.x. Use the upstream model dir for v4 environments.Diff vs upstream

File Change model.safetensorsunchanged (byte-identical) config.jsontransformers_version: 4.57.3->5.7.0configuration_onevision_encoder.pyunchanged preprocessor_config.jsonunchanged modeling_onevision_encoder.pyfull v5-idiom rewrite: _supports_flash_attn/_supports_sdpa/_supports_flex_attn/_supports_attention_backend,ALL_ATTENTION_FUNCTIONS.get_interface(...)dispatch,@auto_docstring+@can_return_tuple, removed v4 docstring decorators anduse_return_dictbranches,_init_weightshook callsVideoRotaryEmbeddingSplit466.reset_inv_freqs()to fix the inv_freq init bug.Usage

from transformers import AutoModel model = AutoModel.from_pretrained( "path/to/onevision-encoder-large-tf57", trust_remote_code=True, attn_implementation="eager", # or "sdpa", "flash_attention_2", "flex_attention" )Tested with

transformers==5.7.0,torch>=2.4.Equivalence verification

Cross-version (upstream tf 4.57.3 vs this tf 5.7.0) on 11 input shapes (single image / multi-frame video / batched / non-square /

visible_indices):

dtype attn result fp32 eager bit-identical (max_diff = 0.0 across all 22 tensors) bf16 eager bit-identical (max_diff = 0.0 across all 22 tensors) Plus 7 v5-only scenario tests, all PASSED:

- eager vs sdpa equivalence (max=7.5e-5)

- save_pretrained then from_pretrained bit-identical round-trip

- cpu vs cuda equivalence (max=4.1e-5)

- fp32/bf16/fp16 dtype preservation

- gradient flow (389/399 params receive non-zero grad)

- runtime

_attn_implementationswitchfrom_pretrainedidempotency (two loads bit-identical)Plus real-input end-to-end tests on a real JPEG (1332x725) and a real MP4 (decord, 4 frames @ 512x512), preprocessed through

AutoImageProcessor(CLIPImageProcessor):

path result image: PIL -> processor -> model fwd finite, lhs=(1,1024,1024), pool=(1,1024) video: decord -> 5D (1,3,4,448,448) -> model fwd finite, lhs=(1,4096,1024), pool=(1,1024) model-only equivalence on identical pixel_values (v4 vs v5) bit-identical (max_diff = 0.0 on image+video) Note: Raw

pixel_valuesfromCLIPImageProcessordiffer by ~1e-2 between transformers 4.57.3 and 5.7.0 due to upstream resize/normalize changes intransformersitself (independent of this variant). When the same pixel_values are fed to both versions, this model is bit-identical.Reproduce with

tools/upgrade_v5/run_all.shfrom the OneVision-Encoder repo.Changelog

- tf57: full v5-idiom rewrite; weights unchanged.

The original model card from upstream follows.

Model Card

| Property | Value |

|---|---|

| Model Type | Vision Transformer (ViT) |

| Architecture | HEVC-Style Vision Transformer |

| Hidden Size | 1024 |

| Intermediate Size | 4096 |

| Number of Layers | 24 |

| Number of Attention Heads | 16 |

| Patch Size | 16 |

| Image Resolution | 448×448 (pre-trained) |

| Video Resolution | 224×224 with 256 tokens per frame |

| Positional Encoding | 3D RoPE (4:6:6 split for T:H:W) |

| Normalization | Layer Normalization |

| Activation Function | GELU |

| License | Apache 2.0 |

Key Features

- Codec-Style Patch Selection: Instead of sampling sparse frames densely (all patches from few frames), OneVision Encoder samples dense frames sparsely (important patches from many frames).

- 3D Rotary Position Embedding: Uses a 4:6:6 split for temporal, height, and width dimensions to capture spatiotemporal relationships.

- Native Resolution Support: Supports native resolution input without tiling or cropping.

- Flash Attention 2: Efficient attention implementation for improved performance and memory efficiency.

Intended Use

Primary Use Cases

- Video Understanding: Action recognition, video captioning, video question answering

- Image Understanding: Document understanding (DocVQA), chart understanding (ChartQA), OCR tasks

- Vision-Language Models: As the vision encoder backbone for multimodal large language models

Downstream Tasks

- Video benchmarks: MVBench, VideoMME, Perception Test

- Image understanding: DocVQA, ChartQA, OCRBench

- Action recognition: SSv2, UCF101, Kinetics

Quick Start

Note: This model supports native resolution input. For optimal performance:

- Image: 448×448 resolution (pre-trained)

- Video: 224×224 resolution with 256 tokens per frame (pre-trained)

from transformers import AutoModel, AutoImageProcessor

from PIL import Image

import torch

# Load model and preprocessor

model = AutoModel.from_pretrained(

"lmms-lab-encoder/onevision-encoder-large",

trust_remote_code=True,

attn_implementation="flash_attention_2"

).to("cuda").eval()

preprocessor = AutoImageProcessor.from_pretrained(

"lmms-lab-encoder/onevision-encoder-large",

trust_remote_code=True

)

# Image inference: [B, C, H, W]

image = Image.open("path/to/your/image.jpg") # Replace with your image path

pixel_values = preprocessor(images=image, return_tensors="pt")["pixel_values"].to("cuda")

with torch.no_grad():

outputs = model(pixel_values)

# outputs.last_hidden_state: [B, num_patches, hidden_size]

# outputs.pooler_output: [B, hidden_size]

# Video inference: [B, C, T, H, W] with visible_indices

num_frames, frame_tokens, target_frames = 16, 256, 64

# Load video frames and preprocess each frame (replace with your video frame paths)

frames = [Image.open(f"path/to/frame_{i}.jpg") for i in range(num_frames)]

video_pixel_values = preprocessor(images=frames, return_tensors="pt")["pixel_values"]

# Reshape from [T, C, H, W] to [B, C, T, H, W]

video = video_pixel_values.unsqueeze(0).permute(0, 2, 1, 3, 4).to("cuda")

# Build visible_indices for temporal sampling

frame_pos = torch.linspace(0, target_frames - 1, num_frames).long().cuda()

visible_indices = (frame_pos.unsqueeze(-1) * frame_tokens + torch.arange(frame_tokens).cuda()).reshape(1, -1)

# visible_indices example (with 256 tokens per frame):

# Frame 0 (pos=0): indices [0, 1, 2, ..., 255]

# Frame 1 (pos=4): indices [1024, 1025, 1026, ..., 1279]

# Frame 2 (pos=8): indices [2048, 2049, 2050, ..., 2303]

# ...

# Frame 15 (pos=63): indices [16128, 16129, ..., 16383]

with torch.no_grad():

outputs = model(video, visible_indices=visible_indices)

LMM Probe Results

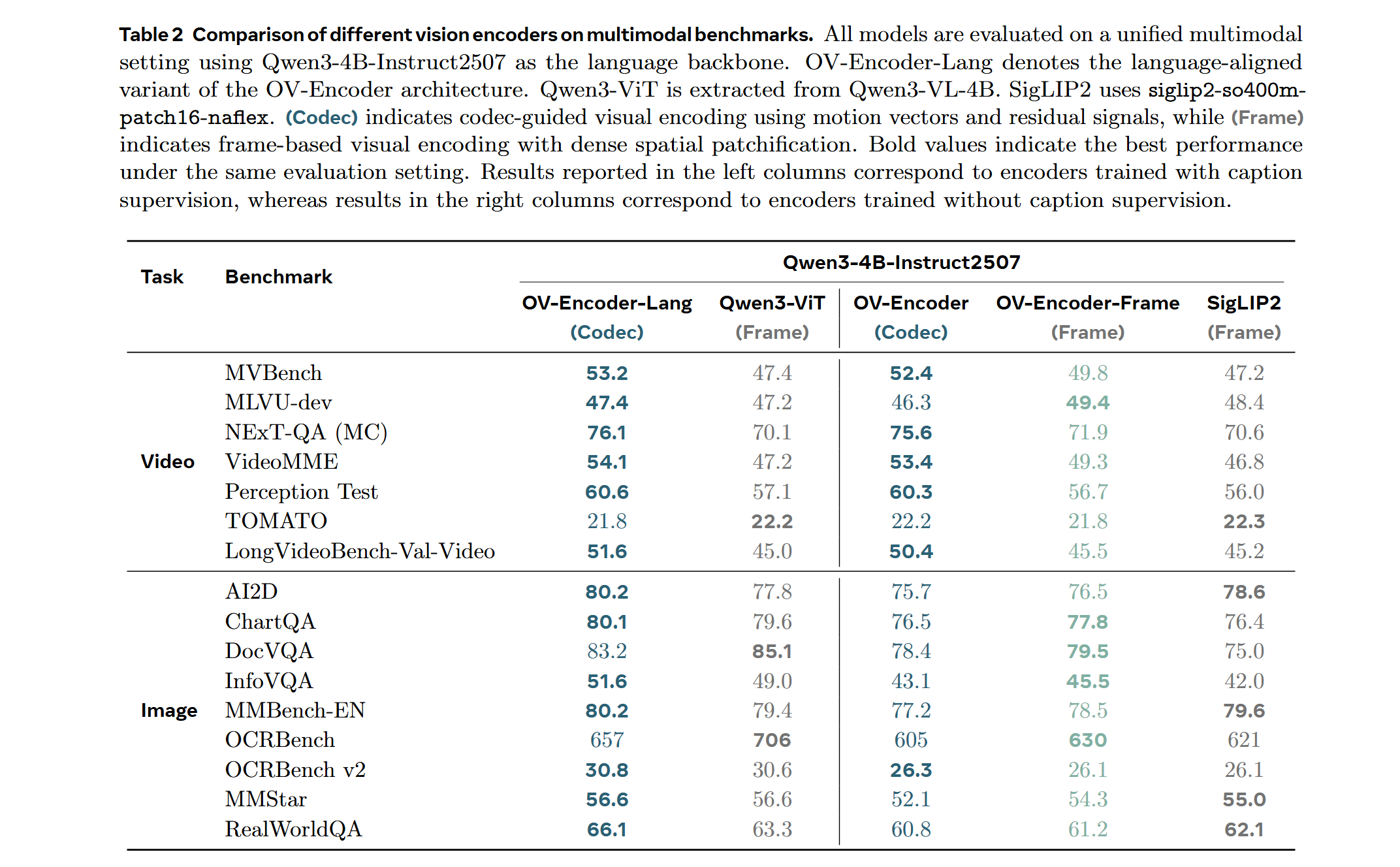

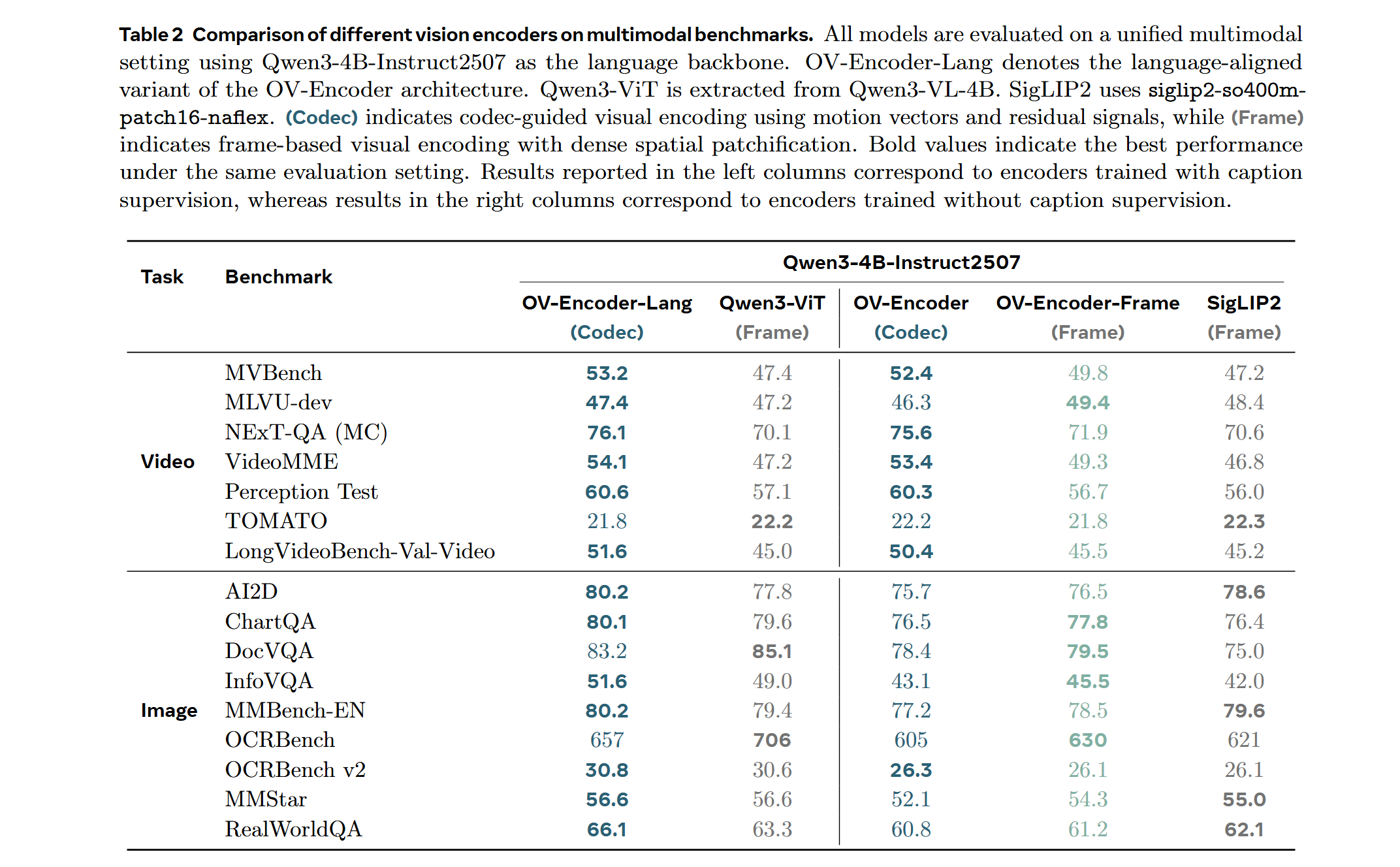

Training on a mixed dataset of 740K samples from LLaVA-OneVision and 800K samples from LLaVA-Video SFT. The training pipeline proceeds directly to Stage 2 fine-tuning. We adopt a streamlined native-resolution strategy inspired by LLaVA-OneVision: when the input frame resolution matches the model's native input size, it is fed directly—without tiling or cropping—to evaluate the ViT's native resolution capability.

Attentive Probe Results

Performance comparison of different vision encoders using Attentive Probe evaluation. Models are evaluated using single clip input and trained for 10 epochs across 8 action recognition datasets. Results show average performance and per-dataset scores for 8-frame and 16-frame configurations.

Codec Input

TODO: Add codec-style input documentation for temporal saliency-based patch selection.

- Downloads last month

- -