Add model-index evaluation metadata

README.md CHANGED

@@ -23,6 +23,69 @@ tags:

|

|

| 23 |

- english

|

| 24 |

- vision-language

|

| 25 |

- custom-code

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 26 |

---

|

| 27 |

|

| 28 |

# M2-Encoder-0.4B

|

|

@@ -165,9 +228,7 @@ image_embeds = image_session.run(

|

|

| 165 |

Runnable script:

|

| 166 |

|

| 167 |

```bash

|

| 168 |

-

python examples/run_onnx_inference.py

|

| 169 |

-

--image pokemon.jpeg \

|

| 170 |

-

--text 杰尼龟 妙蛙种子 小火龙 皮卡丘

|

| 171 |

```

|

| 172 |

|

| 173 |

## Inference Endpoints

|

|

@@ -209,6 +270,8 @@ According to the official project README and paper, the M2-Encoder series is tra

|

|

| 209 |

|

| 210 |

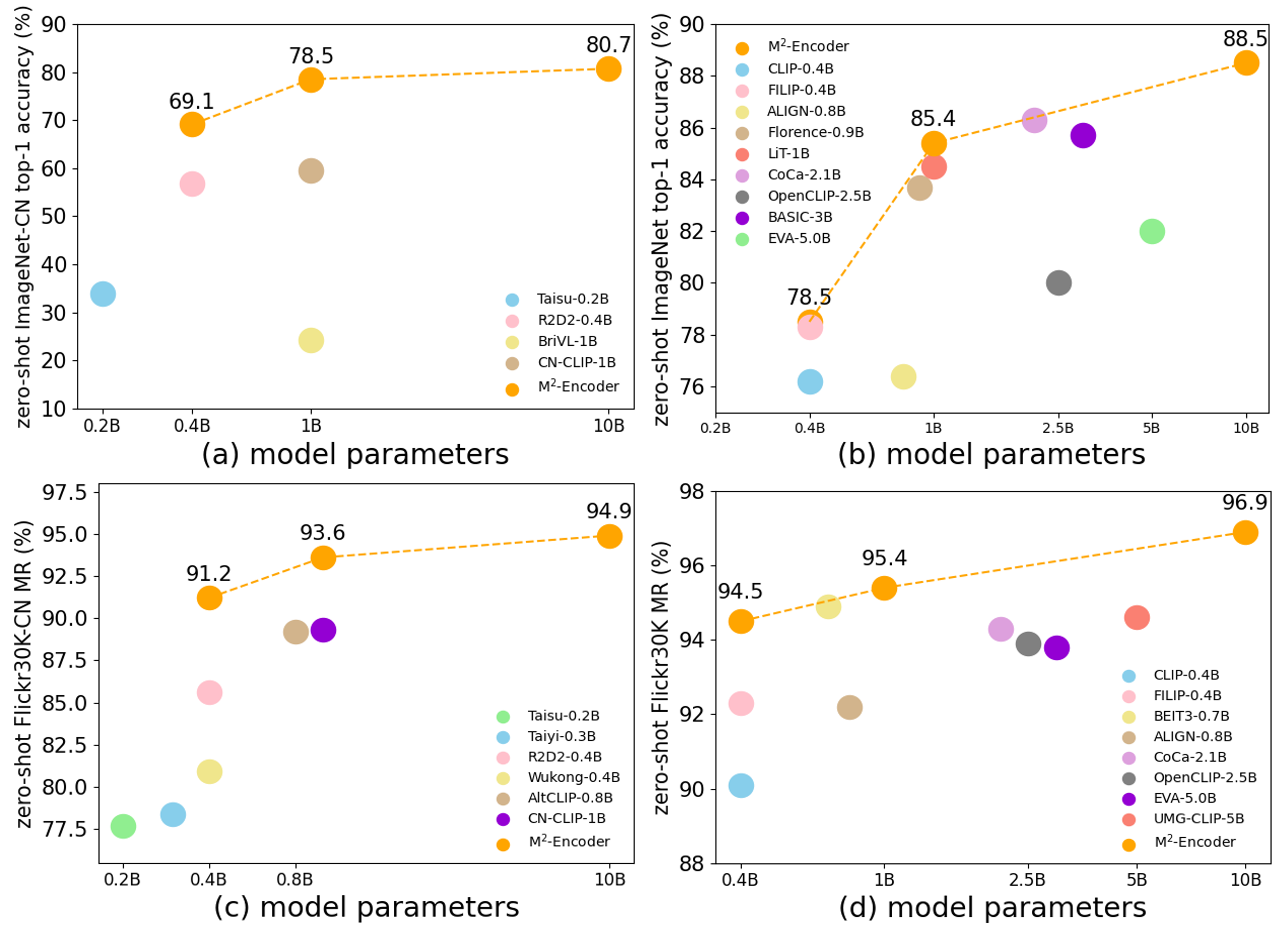

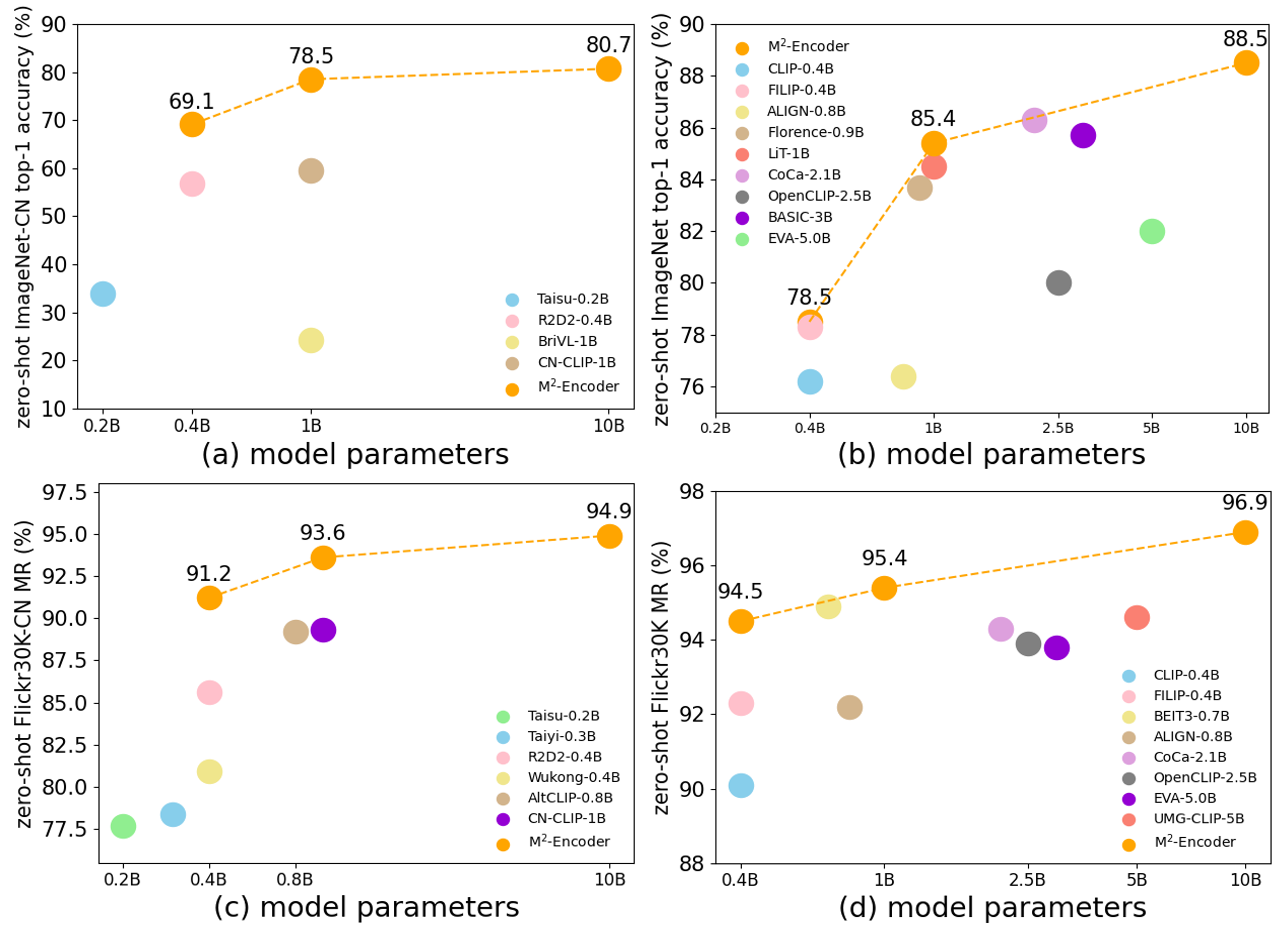

The official project reports that the M2-Encoder family sets strong bilingual retrieval and zero-shot classification results, and that the 10B variant reaches 88.5 top-1 on ImageNet and 80.7 top-1 on ImageNet-CN in the zero-shot setting. See the paper for exact cross-variant comparisons.

|

| 211 |

|

|

|

|

|

|

|

| 212 |

|

| 213 |

|

| 214 |

## Notes

|

|

|

|

| 23 |

- english

|

| 24 |

- vision-language

|

| 25 |

- custom-code

|

| 26 |

+

model-index:

|

| 27 |

+

- name: M2-Encoder-0.4B

|

| 28 |

+

results:

|

| 29 |

+

- task:

|

| 30 |

+

type: zero-shot-image-classification

|

| 31 |

+

name: Zero-Shot Image Classification

|

| 32 |

+

dataset:

|

| 33 |

+

name: ImageNet

|

| 34 |

+

type: ImageNet

|

| 35 |

+

metrics:

|

| 36 |

+

- type: accuracy

|

| 37 |

+

value: 78.5

|

| 38 |

+

name: Top-1 Accuracy

|

| 39 |

+

- task:

|

| 40 |

+

type: zero-shot-image-classification

|

| 41 |

+

name: Zero-Shot Image Classification

|

| 42 |

+

dataset:

|

| 43 |

+

name: ImageNet-CN

|

| 44 |

+

type: ImageNet-CN

|

| 45 |

+

metrics:

|

| 46 |

+

- type: accuracy

|

| 47 |

+

value: 69.1

|

| 48 |

+

name: Top-1 Accuracy

|

| 49 |

+

- task:

|

| 50 |

+

type: image-text-retrieval

|

| 51 |

+

name: Zero-Shot Image-Text Retrieval

|

| 52 |

+

dataset:

|

| 53 |

+

name: Flickr30K

|

| 54 |

+

type: Flickr30K

|

| 55 |

+

metrics:

|

| 56 |

+

- type: mean_recall

|

| 57 |

+

value: 94.5

|

| 58 |

+

name: MR

|

| 59 |

+

- task:

|

| 60 |

+

type: image-text-retrieval

|

| 61 |

+

name: Zero-Shot Image-Text Retrieval

|

| 62 |

+

dataset:

|

| 63 |

+

name: COCO

|

| 64 |

+

type: COCO

|

| 65 |

+

metrics:

|

| 66 |

+

- type: mean_recall

|

| 67 |

+

value: 75.2

|

| 68 |

+

name: MR

|

| 69 |

+

- task:

|

| 70 |

+

type: image-text-retrieval

|

| 71 |

+

name: Zero-Shot Image-Text Retrieval

|

| 72 |

+

dataset:

|

| 73 |

+

name: Flickr30K-CN

|

| 74 |

+

type: Flickr30K-CN

|

| 75 |

+

metrics:

|

| 76 |

+

- type: mean_recall

|

| 77 |

+

value: 91.2

|

| 78 |

+

name: MR

|

| 79 |

+

- task:

|

| 80 |

+

type: image-text-retrieval

|

| 81 |

+

name: Zero-Shot Image-Text Retrieval

|

| 82 |

+

dataset:

|

| 83 |

+

name: COCO-CN

|

| 84 |

+

type: COCO-CN

|

| 85 |

+

metrics:

|

| 86 |

+

- type: mean_recall

|

| 87 |

+

value: 87.8

|

| 88 |

+

name: MR

|

| 89 |

---

|

| 90 |

|

| 91 |

# M2-Encoder-0.4B

|

|

|

|

| 228 |

Runnable script:

|

| 229 |

|

| 230 |

```bash

|

| 231 |

+

python examples/run_onnx_inference.py --image pokemon.jpeg --text 杰尼龟 妙蛙种子 小火龙 皮卡丘

|

|

|

|

|

|

|

| 232 |

```

|

| 233 |

|

| 234 |

## Inference Endpoints

|

|

|

|

| 270 |

|

| 271 |

The official project reports that the M2-Encoder family sets strong bilingual retrieval and zero-shot classification results, and that the 10B variant reaches 88.5 top-1 on ImageNet and 80.7 top-1 on ImageNet-CN in the zero-shot setting. See the paper for exact cross-variant comparisons.

|

| 272 |

|

| 273 |

+

The structured `model-index` metadata in this card is taken from the official paper tables for this released variant. On the Hugging Face page, those results should surface in the evaluation panel once the metadata is parsed.

|

| 274 |

+

|

| 275 |

|

| 276 |

|

| 277 |

## Notes

|