Instructions to use monsterapi/zephyr-7b-alpha_metamathqa with libraries, inference providers, notebooks, and local apps. Follow these links to get started.

- Libraries

- Transformers

How to use monsterapi/zephyr-7b-alpha_metamathqa with Transformers:

    # Use a pipeline as a high-level helper
    from transformers import pipeline

    pipe = pipeline("text-generation", model="monsterapi/zephyr-7b-alpha_metamathqa")
    messages = [
        {"role": "user", "content": "Who are you?"},
    ]
    pipe(messages)

    # Load model directly
    from transformers import AutoTokenizer, AutoModelForCausalLM

    tokenizer = AutoTokenizer.from_pretrained("monsterapi/zephyr-7b-alpha_metamathqa")
    model = AutoModelForCausalLM.from_pretrained("monsterapi/zephyr-7b-alpha_metamathqa")
    messages = [
        {"role": "user", "content": "Who are you?"},
    ]
    inputs = tokenizer.apply_chat_template(
        messages,
        add_generation_prompt=True,
        tokenize=True,
        return_dict=True,
        return_tensors="pt",
    ).to(model.device)

    outputs = model.generate(**inputs, max_new_tokens=40)
    print(tokenizer.decode(outputs[0][inputs["input_ids"].shape[-1]:]))

- Notebooks

- Google Colab

- Kaggle

- Local Apps

- vLLM

How to use monsterapi/zephyr-7b-alpha_metamathqa with vLLM:

Install from pip and serve model

    # Install vLLM from pip:
    pip install vllm

    # Start the vLLM server:
    vllm serve "monsterapi/zephyr-7b-alpha_metamathqa"

    # Call the server using curl (OpenAI-compatible API):
    curl -X POST "http://localhost:8000/v1/chat/completions" \
        -H "Content-Type: application/json" \
        --data '{
            "model": "monsterapi/zephyr-7b-alpha_metamathqa",
            "messages": [
                {
                    "role": "user",
                    "content": "What is the capital of France?"
                }
            ]
        }'

Use Docker

docker model run hf.co/monsterapi/zephyr-7b-alpha_metamathqa

- SGLang

How to use monsterapi/zephyr-7b-alpha_metamathqa with SGLang:

Install from pip and serve model

    # Install SGLang from pip:
    pip install sglang

    # Start the SGLang server:
    python3 -m sglang.launch_server \
        --model-path "monsterapi/zephyr-7b-alpha_metamathqa" \
        --host 0.0.0.0 \
        --port 30000

    # Call the server using curl (OpenAI-compatible API):
    curl -X POST "http://localhost:30000/v1/chat/completions" \
        -H "Content-Type: application/json" \
        --data '{
            "model": "monsterapi/zephyr-7b-alpha_metamathqa",
            "messages": [
                {
                    "role": "user",
                    "content": "What is the capital of France?"
                }
            ]
        }'

Use Docker images

    docker run --gpus all \
        --shm-size 32g \
        -p 30000:30000 \
        -v ~/.cache/huggingface:/root/.cache/huggingface \
        --env "HF_TOKEN=<secret>" \
        --ipc=host \
        lmsysorg/sglang:latest \
        python3 -m sglang.launch_server \
            --model-path "monsterapi/zephyr-7b-alpha_metamathqa" \
            --host 0.0.0.0 \
            --port 30000

    # Call the server using curl (OpenAI-compatible API):
    curl -X POST "http://localhost:30000/v1/chat/completions" \
        -H "Content-Type: application/json" \
        --data '{
            "model": "monsterapi/zephyr-7b-alpha_metamathqa",
            "messages": [
                {
                    "role": "user",
                    "content": "What is the capital of France?"
                }
            ]
        }'

- Docker Model Runner

How to use monsterapi/zephyr-7b-alpha_metamathqa with Docker Model Runner:

docker model run hf.co/monsterapi/zephyr-7b-alpha_metamathqa
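The curl calls shown for vLLM and SGLang can also be issued from Python. The following is a minimal sketch using only the standard library; the helper names `build_chat_request` and `ask`, and the `max_tokens` default, are illustrative assumptions rather than part of this model card. It assumes a server is already running on the port shown in the corresponding section.

```python
import json
import urllib.request

MODEL_ID = "monsterapi/zephyr-7b-alpha_metamathqa"


def build_chat_request(question, base_url="http://localhost:8000", max_tokens=256):
    """Build (but do not send) a POST request for /v1/chat/completions.

    max_tokens=256 is an arbitrary illustrative default.
    """
    payload = {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": question}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        url=f"{base_url}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(question, base_url="http://localhost:8000"):
    """Send the request and return the assistant's reply text."""
    request = build_chat_request(question, base_url)
    with urllib.request.urlopen(request) as response:
        body = json.load(response)
    # OpenAI-compatible servers return the reply under choices[0].message.content.
    return body["choices"][0]["message"]["content"]
```

With the vLLM server from above running, `ask("What is the capital of France?")` should return the reply text; for the SGLang server, pass `base_url="http://localhost:30000"`.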

Finetuning Overview:

Model Used: HuggingFaceH4/zephyr-7b-alpha

Dataset: meta-math/MetaMathQA

Dataset Insights:

The MetaMathQA dataset is a newly created dataset specifically designed for enhancing the mathematical reasoning capabilities of large language models (LLMs). It is built by bootstrapping mathematical questions and rewriting them from multiple perspectives, providing a comprehensive and challenging environment for LLMs to develop and refine their mathematical problem-solving skills.

Finetuning Details:

Using MonsterAPI's LLM finetuner, this finetuning:

- Was conducted with efficiency and cost-effectiveness in mind.

- Completed in 10.9 hours for 0.5 epochs on an A6000 48GB GPU.

- Cost $22.01 for the entire finetuning process.
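As a rough check on the figures above, the reported duration and cost imply an effective hourly rate, and a full epoch can be estimated by scaling linearly. The linear scaling is an assumption made here for illustration; it is not a quoted MonsterAPI price.

```python
# Figures reported in this model card.
HOURS = 10.9
COST_USD = 22.01
EPOCHS_RUN = 0.5

# Effective rate implied by the reported totals.
hourly_rate = COST_USD / HOURS

# Assumption: time and cost scale linearly with the number of epochs.
hours_per_epoch = HOURS / EPOCHS_RUN
cost_per_epoch = COST_USD / EPOCHS_RUN

print(f"Effective rate: ${hourly_rate:.2f}/hour")
print(f"Estimated full epoch: {hours_per_epoch:.1f} hours, ${cost_per_epoch:.2f}")
```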

Hyperparameters & Additional Details:

- Epochs: 0.5

- Total Finetuning Cost: $22.01

- Model Path: HuggingFaceH4/zephyr-7b-alpha

- Learning Rate: 0.0001

- Data Split: 95% train 5% validation

- Gradient Accumulation Steps: 4
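The 95% / 5% data split listed above can be illustrated with a small standard-library sketch. How MonsterAPI's finetuner actually performs the split (shuffling, seed, rounding) is not stated in this card, so the function name `split_train_val` and its defaults are assumptions.

```python
import random


def split_train_val(examples, val_fraction=0.05, seed=0):
    """Shuffle examples deterministically and hold out val_fraction for validation.

    The seed and the shuffle-then-slice strategy are illustrative assumptions.
    """
    shuffled = list(examples)
    random.Random(seed).shuffle(shuffled)
    n_val = round(len(shuffled) * val_fraction)
    # First n_val shuffled items form the validation set; the rest is training data.
    return shuffled[n_val:], shuffled[:n_val]


train, val = split_train_val(range(100))
print(len(train), len(val))
```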

Prompt Structure

Below is an instruction that describes a task. Write a response that appropriately completes the request.

###Instruction:[query]

###Response:[response]
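A prompt in this structure can be assembled with a small helper. This is a sketch: the blank lines between sections follow the layout shown above, but the exact whitespace used during finetuning is an assumption, and `build_prompt` is a hypothetical name.

```python
PROMPT_TEMPLATE = (
    "Below is an instruction that describes a task. "
    "Write a response that appropriately completes the request.\n\n"
    "###Instruction:{query}\n\n"
    "###Response:"
)


def build_prompt(query):
    """Fill the [query] slot and leave the response open for the model to complete."""
    return PROMPT_TEMPLATE.format(query=query.strip())


print(build_prompt("What is 12 * 7?"))
```

The resulting string can be passed to a plain text-completion call; the model's continuation after `###Response:` plays the role of `[response]`.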

Training loss:

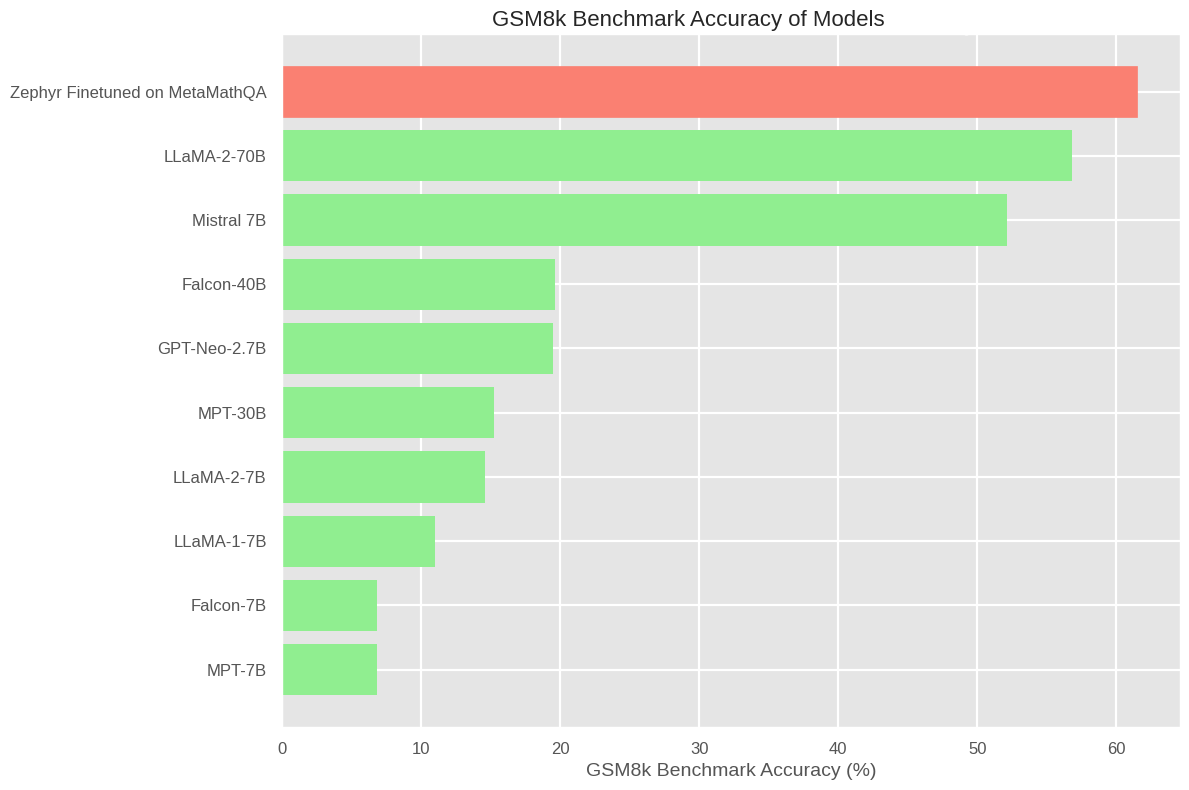

Benchmark Results:

GSM8K is a dataset of 8.5K high-quality, linguistically diverse grade school math word problems. These problems take between 2 and 8 steps to solve, and solutions primarily involve performing a sequence of elementary calculations using basic arithmetic operations (+ − × ÷) to reach the final answer. A bright middle school student should be able to solve every problem. It is a widely used industry benchmark for testing an LLM's multi-step mathematical reasoning.
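One common way to score a model on GSM8K is exact match on the final number. The sketch below is an illustration, not the evaluation procedure used for this model: it relies on the fact that GSM8K reference solutions end with `#### <answer>`, and it assumes the last number in the model output is its final answer. The function names are hypothetical.

```python
import re

# Matches integers and decimals, optionally negative, with optional thousands commas.
_NUMBER = re.compile(r"-?\d[\d,]*(?:\.\d+)?")


def extract_final_number(text):
    """Return the last number found in text as a float, or None if there is none."""
    matches = _NUMBER.findall(text)
    if not matches:
        return None
    return float(matches[-1].replace(",", ""))


def gsm8k_reference(answer_field):
    """Read the final answer that follows '####' in a GSM8K reference solution."""
    return extract_final_number(answer_field.split("####")[-1])


def exact_match_accuracy(predictions, references):
    """Fraction of predictions whose final number equals the reference answer."""
    correct = 0
    for prediction, reference in zip(predictions, references):
        predicted = extract_final_number(prediction)
        if predicted is not None and predicted == gsm8k_reference(reference):
            correct += 1
    return correct / len(references)
```

Taking the last number is a simple heuristic; it will misjudge outputs that mention further numbers after the final answer.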

license: apache-2.0


Model tree for monsterapi/zephyr-7b-alpha_metamathqa

Base model

mistralai/Mistral-7B-v0.1