Consistency Decoder

This is a decoder that can be used in place of the standard Stable Diffusion VAE decoder to improve decoding quality. To learn more, refer to the DALL-E 3 technical report.

The original code repository can be found here.

Usage in 🧨 diffusers

import torch

from diffusers import ConsistencyDecoderVAE, StableDiffusionPipeline

# Load the consistency decoder in the same dtype as the pipeline.

vae = ConsistencyDecoderVAE.from_pretrained("openai/consistency-decoder", torch_dtype=torch.float16)

# Pass it as the pipeline's VAE, replacing the default Stable Diffusion VAE.

pipe = StableDiffusionPipeline.from_pretrained(

    "runwayml/stable-diffusion-v1-5", vae=vae, torch_dtype=torch.float16

).to("cuda")

pipe("horse", generator=torch.manual_seed(0)).images
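The call above returns its results as a list of PIL images in `.images`. The following is a minimal sketch of how one might generate the same prompt with and without the consistency decoder and write the results to disk for comparison. The helper names `generate` and `save_images`, the `outputs` directory, and the file prefix are hypothetical and not part of diffusers. The diffusers calls are the same ones used in the snippet above.

```python
from pathlib import Path


def generate(prompt="horse", seed=0, use_consistency_decoder=True):
    """Run Stable Diffusion v1.5, optionally swapping in the consistency decoder.

    Hypothetical helper: needs a CUDA GPU and downloads the checkpoints.
    """
    # Heavy imports stay inside the function so save_images() works on its own.
    import torch
    from diffusers import ConsistencyDecoderVAE, StableDiffusionPipeline

    kwargs = {"torch_dtype": torch.float16}
    if use_consistency_decoder:
        kwargs["vae"] = ConsistencyDecoderVAE.from_pretrained(
            "openai/consistency-decoder", torch_dtype=torch.float16
        )
    pipe = StableDiffusionPipeline.from_pretrained(
        "runwayml/stable-diffusion-v1-5", **kwargs
    ).to("cuda")
    # Use the same seed for both variants so the outputs are comparable.
    return pipe(prompt, generator=torch.manual_seed(seed)).images


def save_images(images, out_dir="outputs", prefix="horse"):
    """Save each image (any object with a .save(path) method) and return the paths."""
    out = Path(out_dir)
    out.mkdir(parents=True, exist_ok=True)
    paths = []
    for index, image in enumerate(images):
        path = out / f"{prefix}_{index}.png"
        image.save(path)  # PIL.Image.Image.save accepts a path
        paths.append(path)
    return paths
```

For example, `save_images(generate(use_consistency_decoder=True), prefix="consistency")` followed by `save_images(generate(use_consistency_decoder=False), prefix="default")` would write both variants side by side, assuming a CUDA device is available.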

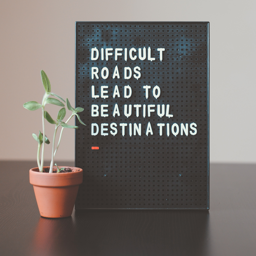

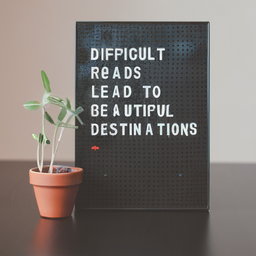

Results

(Taken from the original code repository)

Examples
