---
license: apache-2.0
language:
- en
base_model:
- Qwen/Qwen3-VL-8B-Instruct
pipeline_tag: image-text-to-text
library_name: transformers
tags:
- chart
- reasoning
- vision-language
- multimodal
- chart-understanding
- VLM
- SOTA
datasets:
- opendatalab/ChartVerse-SFT-600K
- opendatalab/ChartVerse-RL-40K
---

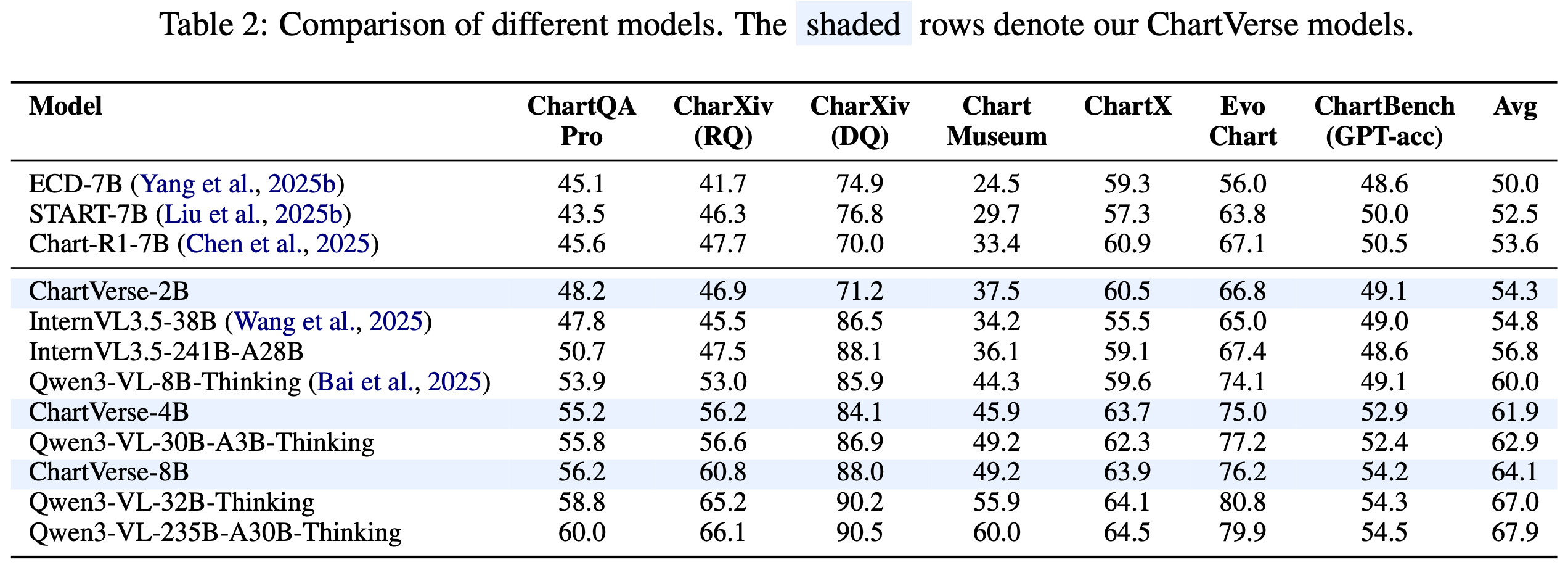

ChartVerse-8B is a Vision Language Model (VLM) that achieves state-of-the-art performance on chart reasoning benchmarks. It was developed as part of the opendatalab/ChartVerse project. For more details about our method, datasets, and full model series, please visit our Project Page.

Most notably, ChartVerse-8B (64.1% average) surpasses its teacher model, Qwen3-VL-30B-A3B-Thinking (62.9%), and approaches Qwen3-VL-32B-Thinking (67.0%). This breaks the distillation ceiling and demonstrates that high-quality synthetic data can enable a student model to exceed its teacher.

🔥 Highlights

- 🏆 SOTA Performance: 64.1% average score across 6 challenging chart benchmarks

- 📈 Surpasses Teacher: Outperforms Qwen3-VL-30B-A3B-Thinking (62.9%) with only 8B parameters

- 🎯 Approaches 32B: Comes within 2.9 points of Qwen3-VL-32B-Thinking (67.0%)

📊 Model Performance

Overall Results

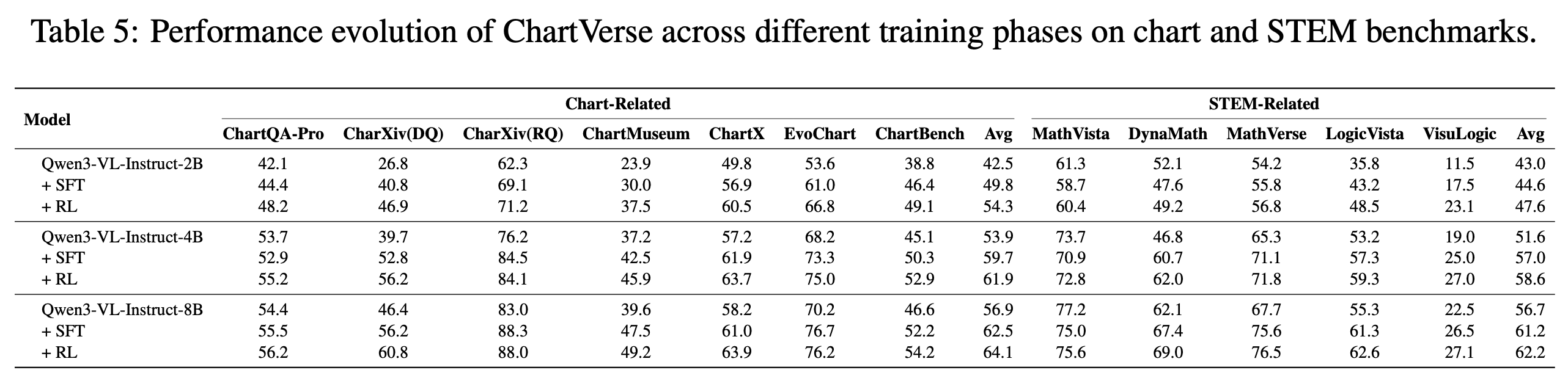

SFT vs RL Performance

📚 Training Data

ChartVerse-SFT-600K

- 412K unique high-complexity charts

- 603K QA pairs with 3.9B tokens of chain-of-thought (CoT) reasoning

- Rollout Posterior Entropy: 0.44 (the highest among the compared datasets)

- Truth-anchored answer verification via code execution
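To illustrate the idea of truth-anchored verification via code execution, the sketch below uses the example question from the Quick Start. It rests on two assumptions. First, "proportional variation compared to its typical revenue level" is read as the coefficient of variation (standard deviation divided by mean). Second, the region names and revenue figures are made up. Because a synthesized chart is backed by data in code, the ground-truth answer can be computed by running code over that same data, and a candidate answer is kept only if it matches. This illustrates the principle and is not the ChartVerse pipeline.

```python
import statistics

# Hypothetical source data behind a synthesized chart: annual revenue per region.
chart_data = {
    "North": [120.0, 125.0, 118.0, 122.0],
    "South": [80.0, 140.0, 60.0, 120.0],
    "East": [200.0, 210.0, 190.0, 205.0],
}

def coefficient_of_variation(values):
    """Population standard deviation divided by the mean (assumed metric)."""
    return statistics.pstdev(values) / statistics.mean(values)

def ground_truth_answer(data):
    """Answer the question by executing code on the chart's source data."""
    return max(data, key=lambda region: coefficient_of_variation(data[region]))

def verify(model_answer, data):
    """Accept an answer only if it matches the code-computed ground truth."""
    return model_answer.strip().lower() == ground_truth_answer(data).lower()

print(ground_truth_answer(chart_data))  # South
print(verify("South", chart_data), verify("East", chart_data))  # True False
```

Real questions would need more tolerant matching, for example numeric tolerances. Exact string comparison keeps the sketch short.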

ChartVerse-RL-40K

- 40K highest-difficulty samples

- Filtered by failure rate r(Q): only questions with 0 < r(Q) < 1 are kept, i.e. some but not all sampled answers to Q are wrong

- Ensures a "hard but solvable" training signal
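The difficulty filter can be sketched as follows. Here r(Q) is taken to be the fraction of sampled rollouts for question Q whose answer is judged wrong. The question IDs and correctness flags are hypothetical. Questions that are always solved (r = 0) or never solved (r = 1) are dropped. With group-normalized advantages, every rollout in such a group gets the same reward, so the group contributes no gradient. The card does not say how the final 40K are chosen among the survivors, so the "hardest first, then truncate" step below is an assumption.

```python
def failure_rate(rollout_correct):
    """r(Q): fraction of rollouts for one question whose answer was judged incorrect."""
    return sum(not ok for ok in rollout_correct) / len(rollout_correct)

def select_rl_questions(results, max_questions):
    """Keep 'hard but solvable' questions (0 < r(Q) < 1), hardest first (assumed ranking)."""
    candidates = []
    for qid, rollout_correct in results.items():
        r = failure_rate(rollout_correct)
        if 0.0 < r < 1.0:
            candidates.append((r, qid))
    # Sort by descending failure rate; ties broken by question ID for determinism.
    candidates.sort(key=lambda item: (-item[0], item[1]))
    return [qid for _, qid in candidates[:max_questions]]

# Hypothetical correctness flags for 4 rollouts per question.
results = {
    "q1": [True, True, True, True],      # r = 0.0: too easy, dropped
    "q2": [False, False, False, False],  # r = 1.0: never solved, dropped
    "q3": [False, True, False, False],   # r = 0.75
    "q4": [True, False, True, True],     # r = 0.25
}
print(select_rl_questions(results, max_questions=40_000))  # ['q3', 'q4']
```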

🏋️ Training Details

Supervised Fine-Tuning (SFT):

- Framework: LLaMA-Factory

- Dataset: ChartVerse-SFT-600K

- Learning rate: 1.0 × 10⁻⁵

- Global batch size: 128

- Context length: 22,000 tokens

- Training time: ~1.5 days on 32× A100 GPUs
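A quick worked check of what these SFT settings imply. A global batch of 128 on 32 GPUs means each GPU contributes 4 samples per optimizer step. In LLaMA-Factory this product is set through the Hugging Face `per_device_train_batch_size` and `gradient_accumulation_steps` arguments. The card does not state the split, so the 1 × 4 choice below is an assumption. The steps-per-epoch figure assumes one pass over roughly 603K samples.

```python
import math

GLOBAL_BATCH = 128      # from the training details above
NUM_GPUS = 32           # 32x A100
NUM_SAMPLES = 603_000   # approximate number of QA pairs in ChartVerse-SFT-600K

# Samples each GPU must contribute per optimizer step.
samples_per_gpu_per_step = GLOBAL_BATCH // NUM_GPUS

# Assumed split: any per-device batch x accumulation product of 4 gives the same global batch.
per_device_batch = 1
grad_accum_steps = samples_per_gpu_per_step // per_device_batch

# Optimizer steps needed for one pass over the data.
steps_per_epoch = math.ceil(NUM_SAMPLES / GLOBAL_BATCH)

print(samples_per_gpu_per_step, grad_accum_steps, steps_per_epoch)  # 4 4 4711
```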

Reinforcement Learning (RL):

- Framework: veRL

- Dataset: ChartVerse-RL-40K

- Algorithm: GSPO (Group Sequence Policy Optimization)

- Learning rate: 1.0 × 10⁻⁶

- Rollout samples: 16 per prompt

- Training time: ~4 days on 32× A100 GPUs
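For readers unfamiliar with GSPO, the sketch below computes the published objective's value for one prompt's group of rollouts (16 per prompt here). It uses three pieces: group-normalized advantages, a sequence-level importance ratio equal to the exponentiated mean token log-ratio, and PPO-style clipping applied per sequence rather than per token. It is plain Python with no autograd and is not veRL's implementation. The default `clip_eps` is an arbitrary placeholder, not the value used to train ChartVerse-8B.

```python
import math
import statistics

def group_advantages(rewards, eps=1e-6):
    """Group-normalized advantages: (r_i - mean) / std over one prompt's rollouts."""
    mean = statistics.mean(rewards)
    std = statistics.pstdev(rewards)
    return [(r - mean) / (std + eps) for r in rewards]

def sequence_ratio(new_logprobs, old_logprobs):
    """Length-normalized sequence ratio s_i = exp(mean over tokens of log pi_new - log pi_old)."""
    diffs = [n - o for n, o in zip(new_logprobs, old_logprobs)]
    return math.exp(sum(diffs) / len(diffs))

def gspo_loss(rewards, new_logprobs, old_logprobs, clip_eps=0.2):
    """Negative clipped GSPO objective for one group (a value to minimize)."""
    advantages = group_advantages(rewards)
    total = 0.0
    for adv, new_lp, old_lp in zip(advantages, new_logprobs, old_logprobs):
        s = sequence_ratio(new_lp, old_lp)
        clipped = min(max(s, 1.0 - clip_eps), 1.0 + clip_eps)
        total += min(s * adv, clipped * adv)
    return -total / len(rewards)

# Usage: 16 rollouts with binary correctness rewards and toy per-token log-probs.
rewards = [1.0] * 4 + [0.0] * 12
token_logprobs = [[-0.5, -1.2, -0.3]] * 16
print(gspo_loss(rewards, token_logprobs, token_logprobs))  # ratios are all 1, so ~0
```

Clipping the whole sequence's ratio at once is the key difference from token-level PPO/GRPO. An entire response is either trusted or clipped together.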

🚀 Quick Start

```python
from transformers import Qwen3VLForConditionalGeneration, AutoProcessor
from qwen_vl_utils import process_vision_info

# 1. Load model and processor
model_path = "opendatalab/ChartVerse-8B"
model = Qwen3VLForConditionalGeneration.from_pretrained(
    model_path, torch_dtype="auto", device_map="auto"
)
processor = AutoProcessor.from_pretrained(model_path)

# 2. Prepare input: one chart image plus a question about it
image_path = "path/to/your/chart.png"
query = "Which region demonstrates the greatest proportional variation in annual revenue compared to its typical revenue level?"
messages = [
    {
        "role": "user",
        "content": [
            {"type": "image", "image": image_path},
            {"type": "text", "text": query},
        ],
    }
]

# 3. Inference
text = processor.apply_chat_template(messages, tokenize=False, add_generation_prompt=True)
image_inputs, video_inputs = process_vision_info(messages)
inputs = processor(
    text=[text],
    images=image_inputs,
    padding=True,
    return_tensors="pt",
).to(model.device)

# A large token budget leaves room for long chain-of-thought reasoning
generated_ids = model.generate(**inputs, max_new_tokens=16384)

# Strip the prompt tokens so only the newly generated text is decoded
generated_ids_trimmed = [
    out_ids[len(in_ids):] for in_ids, out_ids in zip(inputs.input_ids, generated_ids)
]
output_text = processor.batch_decode(
    generated_ids_trimmed, skip_special_tokens=True, clean_up_tokenization_spaces=False
)
print(output_text[0])
```

📖 Citation

```bibtex
@misc{liu2026chartversescalingchartreasoning,
  title={ChartVerse: Scaling Chart Reasoning via Reliable Programmatic Synthesis from Scratch},
  author={Zheng Liu and Honglin Lin and Chonghan Qin and Xiaoyang Wang and Xin Gao and Yu Li and Mengzhang Cai and Yun Zhu and Zhanping Zhong and Qizhi Pei and Zhuoshi Pan and Xiaoran Shang and Bin Cui and Conghui He and Wentao Zhang and Lijun Wu},
  year={2026},
  eprint={2601.13606},
  archivePrefix={arXiv},
  primaryClass={cs.CV},
  url={https://arxiv.org/abs/2601.13606},
}
```

📄 License

This model is released under the Apache 2.0 License.

🙏 Acknowledgements

- Base model: Qwen3-VL-8B-Instruct

- Teacher model: Qwen3-VL-30B-A3B-Thinking

- Training frameworks: LLaMA-Factory, veRL

- Evaluation: VLMEvalKit, Compass-Verifier