import torch

from diffusers import DiffusionPipeline

# switch to "mps" for apple devices

pipe = DiffusionPipeline.from_pretrained("stabilityai/stable-diffusion-xl-base-1.0", dtype=torch.bfloat16, device_map="cuda")

pipe.load_lora_weights("ostris/ikea-instructions-lora-sdxl")

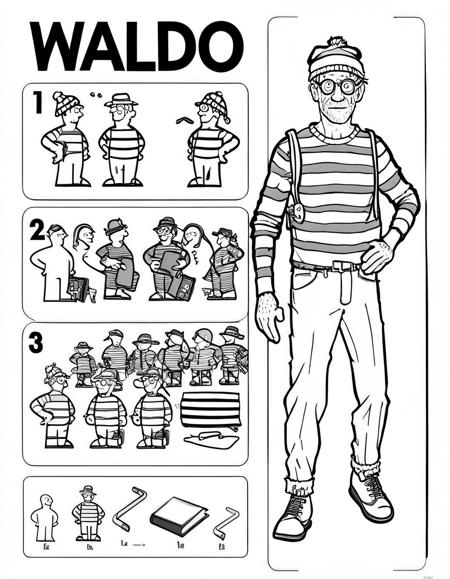

prompt = "where is waldo "

image = pipe(prompt).images[0]Ikea Instructions - LoRA - SDXL

where is waldo

No trigger word is needed. A LoRA weight of 1.0 works well on the SDXL 1.0 base model. Negative prompts are usually unnecessary, but "blurry" and "low quality" seem to help. You can use simple prompts such as "hamburger" or describe the steps you want it to show; SDXL does a pretty good job of figuring out the steps on its own.
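The settings described above can be expressed with diffusers roughly as follows. This is a sketch, assuming a recent diffusers release with the `peft` package installed (needed for `set_adapters`). The adapter name `ikea` and the helper `build_call_kwargs` are hypothetical names chosen for illustration, and the negatives are left optional, as the card suggests.

```python
BASE_REPO = "stabilityai/stable-diffusion-xl-base-1.0"
LORA_REPO = "ostris/ikea-instructions-lora-sdxl"
# Negatives the card says "seem to help"; usually not needed.
SUGGESTED_NEGATIVES = ["blurry", "low quality"]


def build_call_kwargs(prompt: str, use_negatives: bool = False) -> dict:
    """Hypothetical helper: assemble keyword arguments for the pipeline call.

    No trigger word is prepended, since the card says none is needed.
    """
    kwargs = {"prompt": prompt}
    if use_negatives:
        kwargs["negative_prompt"] = ", ".join(SUGGESTED_NEGATIVES)
    return kwargs


def main(device: str = "cuda", lora_weight: float = 1.0) -> None:
    # Heavy imports kept local so the helper above works without a GPU.
    import torch
    from diffusers import DiffusionPipeline

    pipe = DiffusionPipeline.from_pretrained(BASE_REPO, torch_dtype=torch.bfloat16)
    pipe.to(device)  # use "mps" on Apple silicon
    # "ikea" is an arbitrary adapter label chosen for this sketch.
    pipe.load_lora_weights(LORA_REPO, adapter_name="ikea")
    # 1.0 is the weight the card reports working well on the SDXL 1.0 base.
    pipe.set_adapters(["ikea"], adapter_weights=[lora_weight])

    image = pipe(**build_call_kwargs("hamburger", use_negatives=True)).images[0]
    image.save("hamburger.png")
```

Call `main()` on a machine with a CUDA GPU, or `main(device="mps")` on Apple silicon. Lowering `lora_weight` below 1.0 should weaken the LoRA's effect; the card itself only reports that 1.0 works well.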

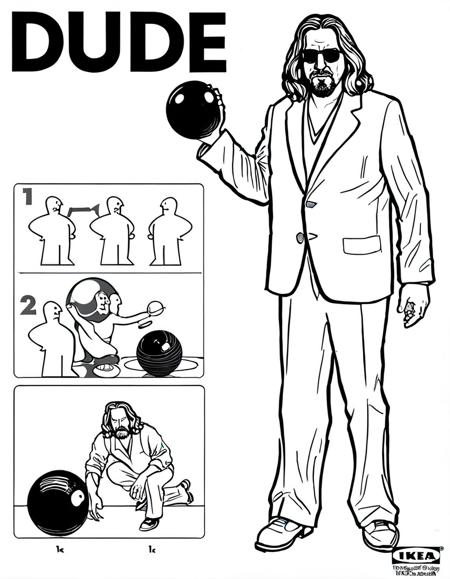

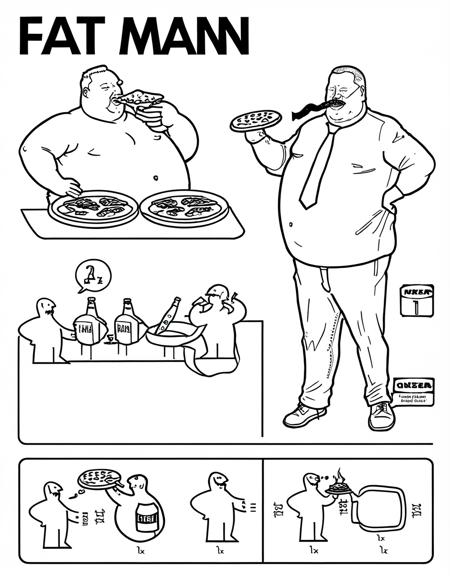

Image examples for the model:

- sleep
- hamburger,, lettuce, mayo, lettuce, no tomato
- barbie and ken
- back to the future
- the dude, form the movie the big lebowski, drinking, rug wet, bowling ball
- hippie
- fat man, eat pizza, eat hamburgers, drink beer
- dayman, fighter of the night man, champion of the sun
- nightman, pay troll toll to get into that boys hole
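To try the example prompts above yourself, a loop like the following is one option. It is a sketch with assumptions: the card does not state seeds, step counts, or guidance settings, so the seeds here are arbitrary and the outputs will not reproduce the gallery images. `slugify`, `make_jobs`, and `render` are hypothetical helpers, and only a subset of the prompts is included.

```python
import re

# A subset of the example prompts above, copied verbatim.
EXAMPLE_PROMPTS = [
    "sleep",
    "hamburger,, lettuce, mayo, lettuce, no tomato",
    "barbie and ken",
    "back to the future",
    "hippie",
]


def slugify(text: str) -> str:
    """Turn a prompt into a file-name stem, e.g. 'back to the future' -> 'back_to_the_future'."""
    return re.sub(r"[^a-z0-9]+", "_", text.lower()).strip("_")


def make_jobs(prompts, base_seed: int = 0):
    """Pair each prompt with its own fixed (arbitrary) seed and an output file name."""
    return [
        (prompt, base_seed + i, f"{i:02d}_{slugify(prompt)}.png")
        for i, prompt in enumerate(prompts)
    ]


def render(jobs, device: str = "cuda") -> None:
    # Heavy imports are local so slugify/make_jobs work without a GPU.
    import torch
    from diffusers import DiffusionPipeline

    pipe = DiffusionPipeline.from_pretrained(
        "stabilityai/stable-diffusion-xl-base-1.0", torch_dtype=torch.bfloat16
    )
    pipe.to(device)  # "mps" on Apple silicon
    pipe.load_lora_weights("ostris/ikea-instructions-lora-sdxl")
    for prompt, seed, filename in jobs:
        # A CPU generator keeps seeds portable; diffusers draws the initial
        # noise on the CPU and moves it to the pipeline's device.
        generator = torch.Generator("cpu").manual_seed(seed)
        image = pipe(prompt, generator=generator).images[0]
        image.save(filename)
```

Run `render(make_jobs(EXAMPLE_PROMPTS))` on a CUDA machine. Reusing the same `base_seed` should give the same images on the same hardware and library versions.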


Base model: stabilityai/stable-diffusion-xl-base-1.0