Instructions to use pearsonkyle/ArtPrompter with libraries, inference providers, notebooks, and local apps. Follow these links to get started.

- Libraries

- Transformers

How to use pearsonkyle/ArtPrompter with Transformers:

# Use a pipeline as a high-level helper from transformers import pipeline pipe = pipeline("text-generation", model="pearsonkyle/ArtPrompter")# Load model directly from transformers import AutoTokenizer, AutoModelForCausalLM tokenizer = AutoTokenizer.from_pretrained("pearsonkyle/ArtPrompter") model = AutoModelForCausalLM.from_pretrained("pearsonkyle/ArtPrompter") - Notebooks

- Google Colab

- Kaggle

- Local Apps

- vLLM

How to use pearsonkyle/ArtPrompter with vLLM:

Install from pip and serve model

# Install vLLM from pip: pip install vllm # Start the vLLM server: vllm serve "pearsonkyle/ArtPrompter" # Call the server using curl (OpenAI-compatible API): curl -X POST "http://localhost:8000/v1/completions" \ -H "Content-Type: application/json" \ --data '{ "model": "pearsonkyle/ArtPrompter", "prompt": "Once upon a time,", "max_tokens": 512, "temperature": 0.5 }'Use Docker

docker model run hf.co/pearsonkyle/ArtPrompter

- SGLang

How to use pearsonkyle/ArtPrompter with SGLang:

Install from pip and serve model

# Install SGLang from pip: pip install sglang # Start the SGLang server: python3 -m sglang.launch_server \ --model-path "pearsonkyle/ArtPrompter" \ --host 0.0.0.0 \ --port 30000 # Call the server using curl (OpenAI-compatible API): curl -X POST "http://localhost:30000/v1/completions" \ -H "Content-Type: application/json" \ --data '{ "model": "pearsonkyle/ArtPrompter", "prompt": "Once upon a time,", "max_tokens": 512, "temperature": 0.5 }'Use Docker images

docker run --gpus all \ --shm-size 32g \ -p 30000:30000 \ -v ~/.cache/huggingface:/root/.cache/huggingface \ --env "HF_TOKEN=<secret>" \ --ipc=host \ lmsysorg/sglang:latest \ python3 -m sglang.launch_server \ --model-path "pearsonkyle/ArtPrompter" \ --host 0.0.0.0 \ --port 30000 # Call the server using curl (OpenAI-compatible API): curl -X POST "http://localhost:30000/v1/completions" \ -H "Content-Type: application/json" \ --data '{ "model": "pearsonkyle/ArtPrompter", "prompt": "Once upon a time,", "max_tokens": 512, "temperature": 0.5 }' - Docker Model Runner

How to use pearsonkyle/ArtPrompter with Docker Model Runner:

docker model run hf.co/pearsonkyle/ArtPrompter

ArtPrompter

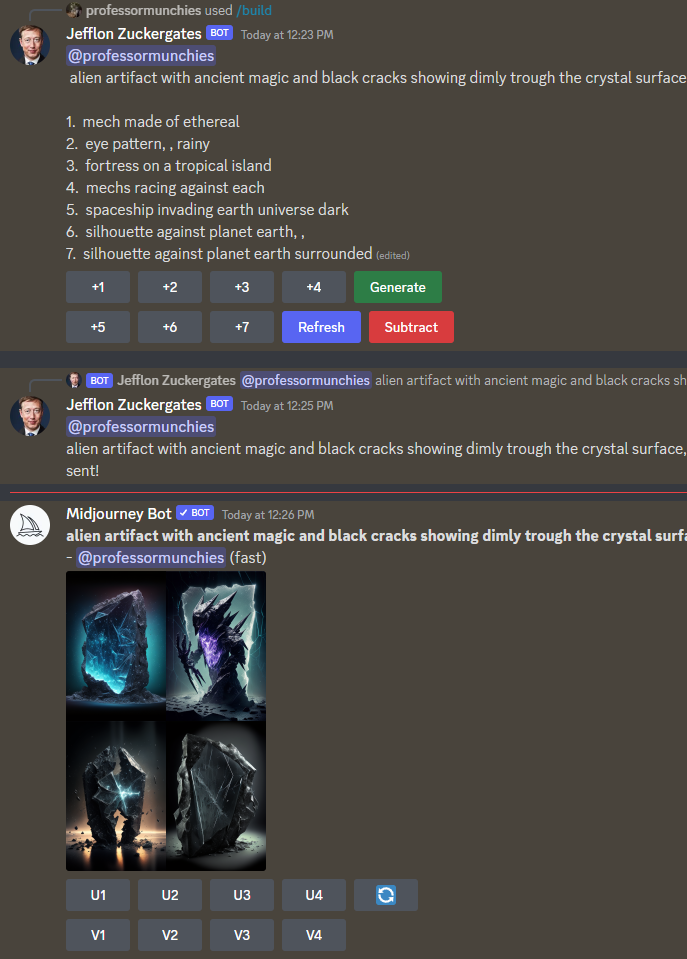

A gpt2 powered predictive algorithm for making descriptive text prompts for A.I. image generators (e.g. MidJourney, Stable Diffusion, ArtBot, etc). The model was trained on a custom dataset containing 666K unique prompts from MidJourney. Simply start a prompt and let the algorithm suggest ways to finish it.

from transformers import pipeline

prompter = pipeline('text-generation',model='pearsonkyle/ArtPrompter', tokenizer='gpt2')

texts = prompter('A portal to a galaxy, view with', max_length=30, num_return_sequences=5)

for i in range(5):

print(texts[i]['generated_text']+'\n')

Intended uses & limitations

Build sick prompts and lots of them.. use it to make animations or a discord bot that can interact with MidJourney.

Examples

The entire universe is a simulation,a confessional with a smiling guy fawkes mask, symmetrical, inviting,hyper realistic

a pug disguised as a teacher. Setting is a class room

I wish I had an angel For one moment of love I wish I had your angel Your Virgin Mary undone Im in love with my desire Burning angelwings to dust

The heart of a galaxy, surrounded by stars, magnetic fields, big bang, cinestill 800T,black background, hyper detail, 8k, black

Training procedure

~30 hours of finetune on RTX3070 with 666K unique prompts

Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 16

- eval_batch_size: 4

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 50

Framework versions

- Transformers 4.26.0

- Pytorch 1.13.1

- Tokenizers 0.13.2

- Downloads last month

- 16

Install from pip and serve model

# Install vLLM from pip: pip install vllm# Start the vLLM server: vllm serve "pearsonkyle/ArtPrompter"# Call the server using curl (OpenAI-compatible API): curl -X POST "http://localhost:8000/v1/completions" \ -H "Content-Type: application/json" \ --data '{ "model": "pearsonkyle/ArtPrompter", "prompt": "Once upon a time,", "max_tokens": 512, "temperature": 0.5 }'