fp8 @ - models

Qwen-Image-Edit-AIO-fp8

Qwen-Image-Edit-AIO-fp8 is an FP8-compressed Transformers-only release built from Qwen-Image-Edit-2511 and Qwen-Image-Edit-2509. This repository provides FP8 (W8A8 · F8_E4M3) compressed transformer weights compatible with both Transformers and Diffusers pipelines, optimized for lower VRAM usage and higher throughput while maintaining strong editing fidelity.

Diffusers Usage

Qwen-Image-Edit-2509-fp8

import torch

from diffusers.models import QwenImageTransformer2DModel

from diffusers import QwenImageEditPlusPipeline

from diffusers.utils import load_image

# Load transformer (2509 version)

transformer = QwenImageTransformer2DModel.from_pretrained(

"prithivMLmods/FireRed-Image-Edit-1.0-fp8",

subfolder="Qwen-Image-Edit-2509-fp8/transformer",

torch_dtype=torch.bfloat16

)

# Load pipeline

pipeline = QwenImageEditPlusPipeline.from_pretrained(

"Qwen/Qwen-Image-Edit-2509",

transformer=transformer,

torch_dtype=torch.bfloat16

)

pipeline.to("cuda")

# Load image

image1 = load_image("grumpycat.png")

prompt = "turn the cat into an orange cat"

# Inference inputs

inputs = {

"image": [image1],

"prompt": prompt,

"generator": torch.manual_seed(42),

"true_cfg_scale": 1.0,

"negative_prompt": " ",

"num_inference_steps": 40,

"guidance_scale": 1.0,

"num_images_per_prompt": 1,

}

# Run pipeline

output = pipeline(**inputs)

output_image = output.images[0]

output_image.save("output_image_2509.png")

Qwen-Image-Edit-2511-fp8

import torch

from diffusers.models import QwenImageTransformer2DModel

from diffusers import QwenImageEditPlusPipeline

from diffusers.utils import load_image

# Load transformer (2511 version)

transformer = QwenImageTransformer2DModel.from_pretrained(

"prithivMLmods/FireRed-Image-Edit-1.0-fp8",

subfolder="Qwen-Image-Edit-2511-fp8/transformer",

torch_dtype=torch.bfloat16

)

# Load pipeline

pipeline = QwenImageEditPlusPipeline.from_pretrained(

"Qwen/Qwen-Image-Edit-2511",

transformer=transformer,

torch_dtype=torch.bfloat16

)

pipeline.to("cuda")

# Load image

image1 = load_image("grumpycat.png")

prompt = "turn the cat into an orange cat"

# Inference inputs

inputs = {

"image": [image1],

"prompt": prompt,

"generator": torch.manual_seed(42),

"true_cfg_scale": 1.0,

"negative_prompt": " ",

"num_inference_steps": 40,

"guidance_scale": 1.0,

"num_images_per_prompt": 1,

}

# Run pipeline

output = pipeline(**inputs)

output_image = output.images[0]

output_image.save("output_image_2511.png")

About the Base Models

Qwen-Image-Edit-2511 is an advanced iteration over Qwen-Image-Edit-2509, a production-grade 20B-parameter image editing model developed by Alibaba's Qwen team.

It is built on the Qwen-Image MMDiT architecture with VL-Qwen2.5 integration and VAE encoding for high-fidelity real-world editing workflows.

Architecture Overview

|

DiT / MMDiT Block |

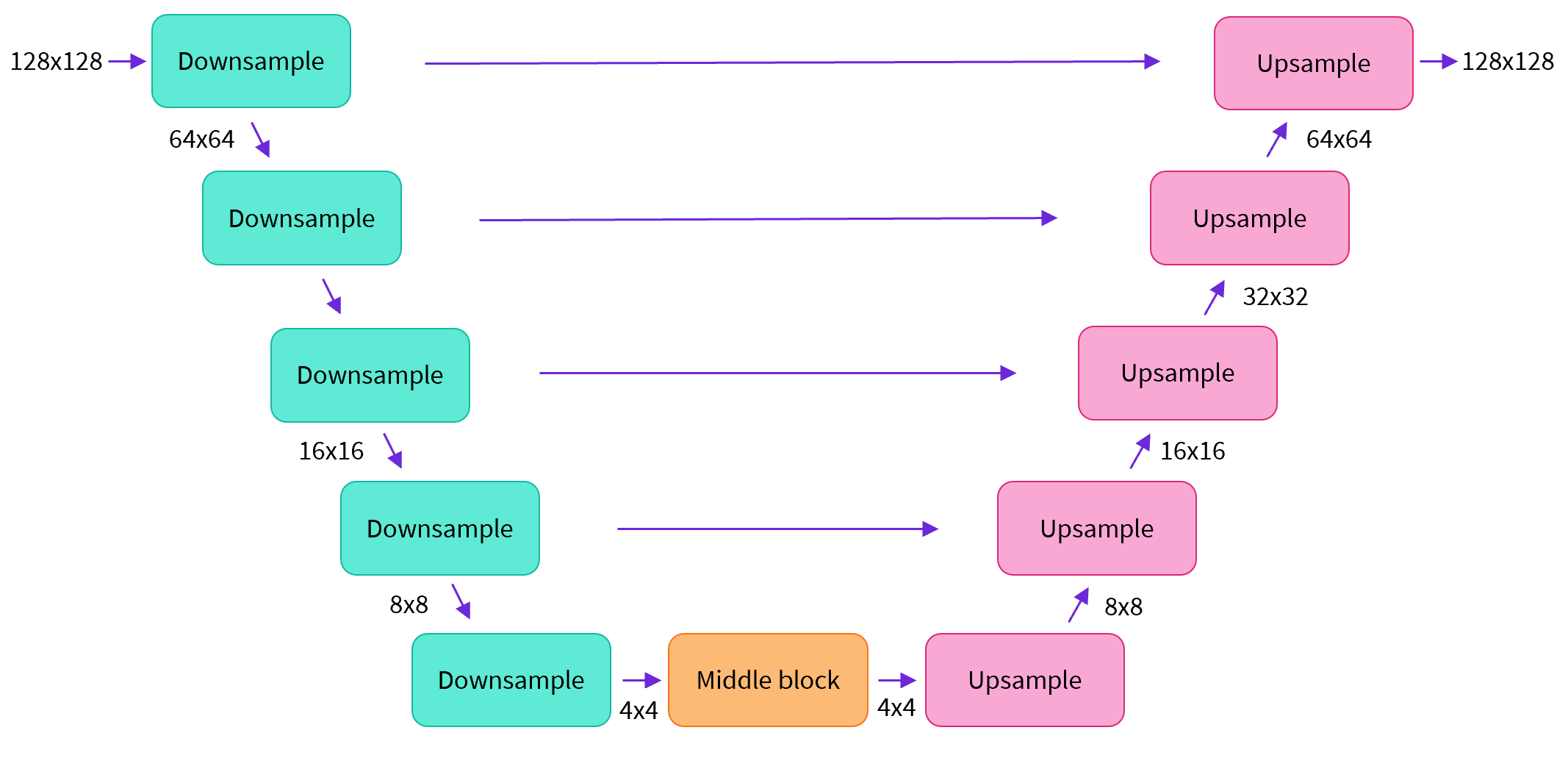

U-Net Architecture

|

Cross-Attention Mechanism

|

Variational Autoencoder (VAE)

|

- Qwen-Image MMDiT backbone

- VL-Qwen2.5 multimodal integration

- Latent VAE encoding

- Diffusion-based editing pipeline

- Compatible with Diffusers and ComfyUI workflows

What Makes 2511 Stronger Than 2509

Multi-Person & Identity Consistency

- Reduced identity swaps in group photos

- Improved pose consistency

- Stable multi-subject generation

High-Fidelity Iterative Editing

- Strong identity preservation across multiple edits

- Reduced image drift

- Reliable prompt alignment

Dual-Mode Editing Control

- Appearance editing for localized modifications

- Semantic editing for global transformations

Precise Text Handling

- Natural typography rendering

- Accurate on-image text replacement

- Reduced distortions in signage or UI text

Enhanced Structural Reasoning

- Better geometric alignment

- Accurate object replacement

- Material-aware transformations

Industrial & Commercial Use

- Product design material swaps

- Clean geometry preservation

- Suitable for e-commerce and marketing workflows

- Reliable outputs for creative production pipelines

What This FP8 AIO Version Provides

Qwen-Image-Edit-AIO-fp8 introduces:

- FP8 (W8A8 · F8_E4M3) transformer compression

- Reduced VRAM requirements

- Higher inference throughput

- Transformers-native loading

- Diffusers-compatible transformer weights

- Optimized for Hopper-class and compatible GPUs

This release focuses strictly on compressed transformer weights while preserving original editing capabilities.

Intended Workflows

- ComfyUI pipelines

- Diffusers-based applications

- Production-grade editing systems

- Batch commercial pipelines

- E-commerce product editing

- Marketing creative generation

Limitations

- FP8 acceleration requires compatible GPU architectures.

- Extremely fine-grained edge cases may show minor precision differences compared to full BF16.

- Users are responsible for lawful and ethical deployment.

- Downloads last month

- -

Model tree for prithivMLmods/Qwen-Image-Edit-AIO-fp8

Base model

Qwen/Qwen-Image-Edit-2509