---
library_name: pytorch
license: other
tags:
- android
pipeline_tag: object-detection
---

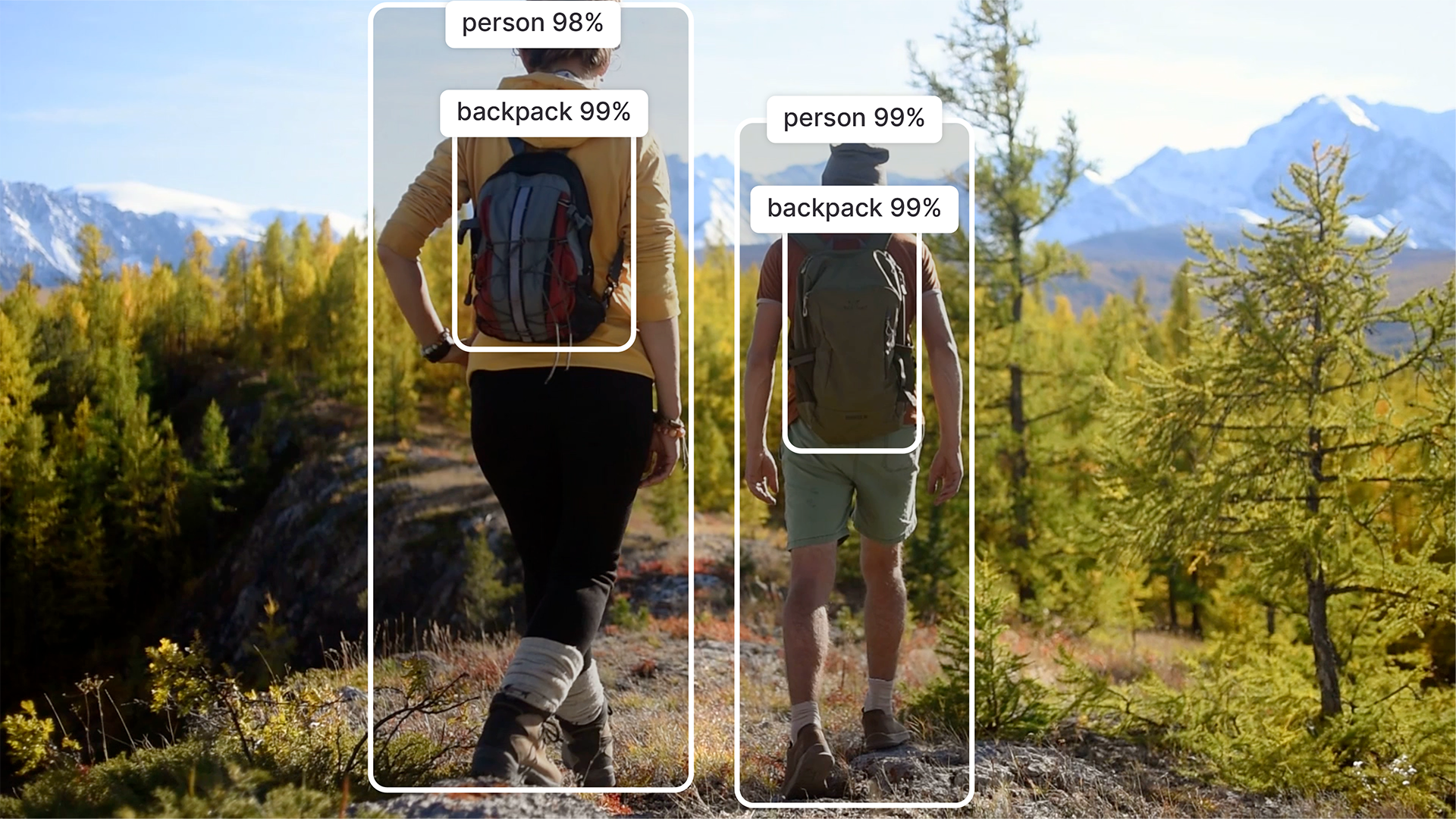

# ResNet34-SSD: Optimized for Qualcomm Devices

ResNet34-SSD is a single-stage object detection model that combines a ResNet34 backbone with the SSD (Single Shot MultiBox Detector) framework. It is optimized for real-time detection tasks and supports multiple deployment backends including PyTorch, TensorFlow, and ONNX.

This is based on the implementation of ResNet34-SSD found [here](https://github.com/mlcommons/inference/tree/33894a19c4af6207f7cfdda75f84570f04836de5/vision/classification_and_detection).
This repository contains pre-exported model files optimized for Qualcomm® devices. You can use the [Qualcomm® AI Hub Models](https://github.com/qualcomm/ai-hub-models/blob/main/src/qai_hub_models/models/resnet34_ssd1200) library to export with custom configurations. More details on model performance across various devices can be found [here](#performance-summary).

Qualcomm AI Hub Models uses [Qualcomm AI Hub Workbench](https://workbench.aihub.qualcomm.com) to compile, profile, and evaluate this model. [Sign up](https://myaccount.qualcomm.com/signup) to run these models on a hosted Qualcomm® device.

## Getting Started
There are two ways to deploy this model on your device:

### Option 1: Download Pre-Exported Models

Below are pre-exported model assets ready for deployment.

| Runtime | Precision | Chipset | SDK Versions | Download |
|---|---|---|---|---|
| ONNX | float | Universal | QAIRT 2.42, ONNX Runtime 1.24.3 | [Download](https://qaihub-public-assets.s3.us-west-2.amazonaws.com/qai-hub-models/models/resnet34_ssd1200/releases/v0.53.1/resnet34_ssd1200-onnx-float.zip) |
| QNN_DLC | float | Universal | QAIRT 2.45 | [Download](https://qaihub-public-assets.s3.us-west-2.amazonaws.com/qai-hub-models/models/resnet34_ssd1200/releases/v0.53.1/resnet34_ssd1200-qnn_dlc-float.zip) |
| TFLITE | float | Universal | QAIRT 2.45 | [Download](https://qaihub-public-assets.s3.us-west-2.amazonaws.com/qai-hub-models/models/resnet34_ssd1200/releases/v0.53.1/resnet34_ssd1200-tflite-float.zip) |

For more device-specific assets and performance metrics, visit **[ResNet34-SSD on Qualcomm® AI Hub](https://aihub.qualcomm.com/models/resnet34_ssd1200)**.
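The download links in the table above share one URL pattern, so fetching an asset can be scripted. The following is a minimal sketch using only the Python standard library. The helper names `asset_url` and `download_and_extract` and the destination directory are hypothetical, and the layout of files inside each zip is not documented here, so inspect the extracted folder before wiring it into an application.

```python
import urllib.request
import zipfile
from pathlib import Path

# Base URL and release tag copied from the download table above.
BASE_URL = (
    "https://qaihub-public-assets.s3.us-west-2.amazonaws.com/"
    "qai-hub-models/models/resnet34_ssd1200/releases/v0.53.1"
)
# Lowercase runtime names as they appear in the asset file names.
RUNTIMES = ("onnx", "qnn_dlc", "tflite")


def asset_url(runtime: str, precision: str = "float") -> str:
    """Build the download URL for one pre-exported asset (hypothetical helper)."""
    if runtime not in RUNTIMES:
        raise ValueError(f"unknown runtime {runtime!r}; expected one of {RUNTIMES}")
    return f"{BASE_URL}/resnet34_ssd1200-{runtime}-{precision}.zip"


def download_and_extract(runtime: str, dest: str = "resnet34_ssd1200_assets") -> Path:
    """Download the zip for `runtime` and unpack it under `dest/runtime`."""
    out_dir = Path(dest) / runtime
    out_dir.mkdir(parents=True, exist_ok=True)
    zip_path = out_dir / "model.zip"
    urllib.request.urlretrieve(asset_url(runtime), zip_path)
    with zipfile.ZipFile(zip_path) as archive:
        archive.extractall(out_dir)
    return out_dir


# Example (requires network access):
# download_and_extract("tflite")
```

Building the URL in a separate function keeps the network call isolated, which makes the URL logic easy to check without downloading anything.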

### Option 2: Export with Custom Configurations

Use the [Qualcomm® AI Hub Models](https://github.com/qualcomm/ai-hub-models/blob/main/src/qai_hub_models/models/resnet34_ssd1200) Python library to compile and export the model with your own:
- Custom weights (e.g., fine-tuned checkpoints)
- Custom input shapes
- Target device and runtime configurations

This option is ideal if you need to customize the model beyond the default configuration provided here.

See [ResNet34-SSD on GitHub](https://github.com/qualcomm/ai-hub-models/blob/main/src/qai_hub_models/models/resnet34_ssd1200) for usage instructions.

## Model Details

**Model Type:** Object detection

**Model Stats:**
- Model checkpoint: resnet34-ssd1200
- Input resolution: 1x3x1200x1200
- Number of parameters: 20.0M
- Model size (float): 76.2 MB
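As a sanity check on the stats above, the sketch below estimates the memory footprint of one input tensor and of the weights. It assumes that "float" means 32-bit floats (4 bytes per value), which this card does not state explicitly, and it uses the rounded parameter count, so small differences from the listed size are expected.

```python
# Figures taken from the Model Stats list above.
INPUT_SHAPE = (1, 3, 1200, 1200)  # batch x channels x height x width
NUM_PARAMS = 20.0e6               # rounded parameter count
BYTES_PER_FLOAT32 = 4             # assumption: "float" means 32-bit floats


def num_elements(shape):
    """Multiply all dimensions to get the number of values in a tensor."""
    total = 1
    for dim in shape:
        total *= dim
    return total


input_bytes = num_elements(INPUT_SHAPE) * BYTES_PER_FLOAT32
weight_bytes = NUM_PARAMS * BYTES_PER_FLOAT32

print(f"input tensor: {input_bytes / 1e6:.2f} MB")
print(f"weights: {weight_bytes / 1e6:.1f} MB ({weight_bytes / 2**20:.1f} MiB)")
# prints:
# input tensor: 17.28 MB
# weights: 80.0 MB (76.3 MiB)
```

The 76.3 MiB estimate is close to the listed 76.2 MB, which is consistent with 32-bit weights and a size reported in binary megabytes. The small gap is plausibly due to rounding in the parameter count.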

## Performance Summary
| Model | Runtime | Precision | Chipset | Inference Time (ms) | Peak Memory Range (MB) | Primary Compute Unit |
|---|---|---|---|---|---|---|
| ResNet34-SSD | ONNX | float | Snapdragon® 8 Elite Gen 5 Mobile | 38.733 ms | 17 - 513 MB | NPU |
| ResNet34-SSD | ONNX | float | Snapdragon® 8 Elite Mobile | 50.177 ms | 2 - 428 MB | NPU |
| ResNet34-SSD | ONNX | float | Snapdragon® X2 Elite | 43.419 ms | 30 - 30 MB | NPU |
| ResNet34-SSD | ONNX | float | Snapdragon® X Elite | 91.464 ms | 29 - 29 MB | NPU |
| ResNet34-SSD | ONNX | float | Snapdragon® 8 Gen 3 Mobile | 62.899 ms | 0 - 517 MB | NPU |
| ResNet34-SSD | ONNX | float | Qualcomm® QCS8550 (Proxy) | 90.698 ms | 0 - 573 MB | NPU |
| ResNet34-SSD | ONNX | float | Qualcomm® QCS9075 | 152.711 ms | 16 - 36 MB | NPU |
| ResNet34-SSD | ONNX | float | Snapdragon® 8 Elite For Galaxy Mobile | 50.177 ms | 2 - 428 MB | NPU |
| ResNet34-SSD | QNN_DLC | float | Snapdragon® 8 Elite Gen 5 Mobile | 52.76 ms | 15 - 553 MB | NPU |
| ResNet34-SSD | QNN_DLC | float | Snapdragon® 8 Elite Mobile | 66.869 ms | 16 - 391 MB | NPU |
| ResNet34-SSD | QNN_DLC | float | Snapdragon® X2 Elite | 61.836 ms | 17 - 17 MB | NPU |
| ResNet34-SSD | QNN_DLC | float | Snapdragon® X Elite | 128.96 ms | 17 - 17 MB | NPU |
| ResNet34-SSD | QNN_DLC | float | Snapdragon® 8 Gen 3 Mobile | 84.568 ms | 15 - 606 MB | NPU |
| ResNet34-SSD | QNN_DLC | float | Qualcomm® QCS8275 (Proxy) | 481.914 ms | 16 - 385 MB | NPU |
| ResNet34-SSD | QNN_DLC | float | Qualcomm® QCS8550 (Proxy) | 128.396 ms | 17 - 19 MB | NPU |
| ResNet34-SSD | QNN_DLC | float | Qualcomm® QCS9075 | 193.951 ms | 17 - 35 MB | NPU |
| ResNet34-SSD | QNN_DLC | float | Qualcomm® QCS8450 (Proxy) | 262.739 ms | 3 - 511 MB | NPU |
| ResNet34-SSD | QNN_DLC | float | Snapdragon® 8 Elite For Galaxy Mobile | 66.869 ms | 16 - 391 MB | NPU |
| ResNet34-SSD | TFLITE | float | Snapdragon® 8 Elite Gen 5 Mobile | 76.117 ms | 0 - 564 MB | NPU |
| ResNet34-SSD | TFLITE | float | Snapdragon® 8 Elite Mobile | 88.158 ms | 0 - 402 MB | NPU |
| ResNet34-SSD | TFLITE | float | Snapdragon® 8 Gen 3 Mobile | 107.7 ms | 0 - 543 MB | NPU |
| ResNet34-SSD | TFLITE | float | Qualcomm® QCS8275 (Proxy) | 513.324 ms | 0 - 378 MB | NPU |
| ResNet34-SSD | TFLITE | float | Qualcomm® QCS8550 (Proxy) | 146.036 ms | 0 - 3 MB | NPU |
| ResNet34-SSD | TFLITE | float | Qualcomm® QCS9075 | 199.375 ms | 0 - 64 MB | NPU |
| ResNet34-SSD | TFLITE | float | Qualcomm® QCS8450 (Proxy) | 234.949 ms | 1 - 617 MB | NPU |
| ResNet34-SSD | TFLITE | float | Snapdragon® 8 Elite For Galaxy Mobile | 88.158 ms | 0 - 402 MB | NPU |
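To relate these latencies to a frame-rate budget, the sketch below converts a few rows from the table above into frames per second and picks the fastest runtime for a chipset. It assumes single-image, back-to-back inference with no pre- or post-processing cost, so the results are an upper bound rather than a measured throughput. The dictionary layout and function names are illustrative only.

```python
# A few (chipset, runtime) -> inference time in ms entries copied from the table above.
LATENCY_MS = {
    ("Snapdragon 8 Elite Gen 5 Mobile", "ONNX"): 38.733,
    ("Snapdragon 8 Elite Gen 5 Mobile", "QNN_DLC"): 52.76,
    ("Snapdragon 8 Elite Gen 5 Mobile", "TFLITE"): 76.117,
    ("Qualcomm QCS8275 (Proxy)", "QNN_DLC"): 481.914,
    ("Qualcomm QCS8275 (Proxy)", "TFLITE"): 513.324,
}


def frames_per_second(latency_ms: float) -> float:
    """Upper-bound throughput if inferences run back to back."""
    return 1000.0 / latency_ms


def fastest_runtime(chipset: str) -> str:
    """Return the runtime with the lowest listed latency for a chipset."""
    candidates = {rt: ms for (chip, rt), ms in LATENCY_MS.items() if chip == chipset}
    if not candidates:
        raise KeyError(f"no rows recorded for chipset {chipset!r}")
    return min(candidates, key=candidates.get)


for (chipset, runtime), ms in LATENCY_MS.items():
    print(f"{chipset:32s} {runtime:8s} {ms:8.3f} ms  ~{frames_per_second(ms):.1f} FPS")
```

For example, the 38.733 ms ONNX result on Snapdragon 8 Elite Gen 5 Mobile corresponds to roughly 25.8 frames per second under these assumptions.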

## License
* The license for the original implementation of ResNet34-SSD can be found [here](https://github.com/mlcommons/inference/blob/33894a19c4af6207f7cfdda75f84570f04836de5/LICENSE.md).

## References
* [Source Model Implementation](https://github.com/mlcommons/inference/tree/33894a19c4af6207f7cfdda75f84570f04836de5/vision/classification_and_detection)

## Community
* Join [our AI Hub Slack community](https://aihub.qualcomm.com/community/slack) to collaborate, post questions, and learn more about on-device AI.
* For questions or feedback, please [reach out to us](mailto:ai-hub-support@qti.qualcomm.com).