AttributioNet: A Fine-Tuned RoBERTa Model for Attribution Classification

Overview

This repository contains a fine-tuned RoBERTa model designed for multi-label classification of attributions for self and others. The model predicts four attribution categories (in this order):

- Self-Dispositional

- Self-Situational

- Other-Dispositional

- Other-Situational

The training process and evaluation results, including calibration, loss curves, and ROC curves, are documented below.

Model Details

- Base Model: roberta-base

- Fine-Tuning Approach: Multi-label classification

- Number of Labels: 4

- Loss Function: Binary Cross-Entropy with Logits (BCEWithLogitsLoss)

- Optimizer: AdamW

- Batch Size: 16

- Learning Rate: 2e-5

- Epochs: 3
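As a rough illustration of what the loss listed above computes, the sketch below evaluates binary cross-entropy directly from raw logits, treating each of the four labels as an independent yes/no decision. The logits and targets are made-up values, and averaging over labels mirrors the default `mean` reduction of PyTorch's `BCEWithLogitsLoss`; whether the actual training run used class weights or a different reduction is not stated here.

```python
import math

def bce_with_logits(logits, targets):
    """Mean binary cross-entropy over independent labels, computed from raw logits."""
    total = 0.0
    for z, y in zip(logits, targets):
        # Numerically stable form of -[y*log(sigmoid(z)) + (1-y)*log(1-sigmoid(z))]
        total += max(z, 0.0) - z * y + math.log1p(math.exp(-abs(z)))
    return total / len(logits)

# Hypothetical logits for one sentence, in the label order listed in the Overview
logits = [2.0, -1.0, 0.5, -3.0]
targets = [1, 0, 0, 0]  # in a multi-label setup, more than one entry may be 1

loss = bce_with_logits(logits, targets)
print(f"loss = {loss:.4f}")
```

Because each label gets its own sigmoid rather than sharing a softmax, a sentence can be assigned several attribution categories at once, or none.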

Dataset

The dataset consists of ~217,000 sentences labeled with attributions. Labels are provided as binary indicators for each category. The data was split into:

- Training Set: 60%

- Validation Set: 20%

- Test Set: 20%
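A 60/20/20 split like the one above could be produced along the following lines. This is only a sketch: the function name, the seed, and the use of a plain random shuffle are assumptions, since the card does not say whether the actual split was random, stratified, or seeded.

```python
import random

def split_60_20_20(items, seed=0):
    """Shuffle items and cut them into 60% train, 20% validation, 20% test."""
    items = list(items)
    random.Random(seed).shuffle(items)  # seeded so the split is reproducible
    n_train = len(items) * 60 // 100
    n_val = len(items) * 20 // 100
    train = items[:n_train]
    val = items[n_train:n_train + n_val]
    test = items[n_train + n_val:]      # remainder goes to the test set
    return train, val, test

# Stand-in for sentence indices; the real dataset has roughly 217,000 sentences
train, val, test = split_60_20_20(range(1000))
print(len(train), len(val), len(test))
```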

Training & Evaluation

Performance Metrics

- Overall ROC AUC Score: 0.9439

- Overall PR AUC Score: 0.8479

- Per-Class Performance:

  - Self-Dispositional: ROC AUC: 0.9643, PR AUC: 0.8062

  - Self-Situational: ROC AUC: 0.9534, PR AUC: 0.8871

  - Other-Dispositional: ROC AUC: 0.9421, PR AUC: 0.8771

  - Other-Situational: ROC AUC: 0.9159, PR AUC: 0.8211
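The overall scores reported above are consistent with an unweighted (macro) average of the four per-class values. The short check below reproduces that arithmetic from the numbers in this card; that the overall figures were actually computed this way is an inference from the numbers, not something the card states.

```python
# Per-class (ROC AUC, PR AUC) values copied from the list above
per_class = {
    "Self-Dispositional": (0.9643, 0.8062),
    "Self-Situational": (0.9534, 0.8871),
    "Other-Dispositional": (0.9421, 0.8771),
    "Other-Situational": (0.9159, 0.8211),
}

# Unweighted mean across the four classes
macro_roc = sum(roc for roc, _ in per_class.values()) / len(per_class)
macro_pr = sum(pr for _, pr in per_class.values()) / len(per_class)

print(f"macro ROC AUC = {macro_roc:.4f}")  # 0.9439
print(f"macro PR AUC  = {macro_pr:.4f}")   # 0.8479
```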

Evaluation Metrics

- Classification report (saved as

classification_report.csv) - Calibration curve (

calibration_curve.png) - ROC curves (

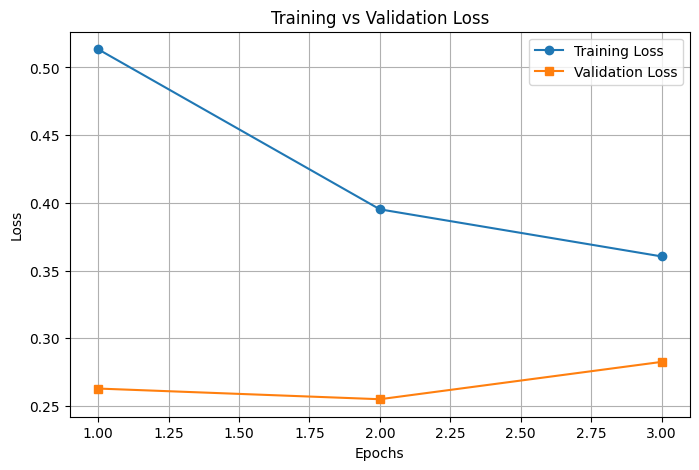

per_class_roc_curves.png) - Training and validation loss (

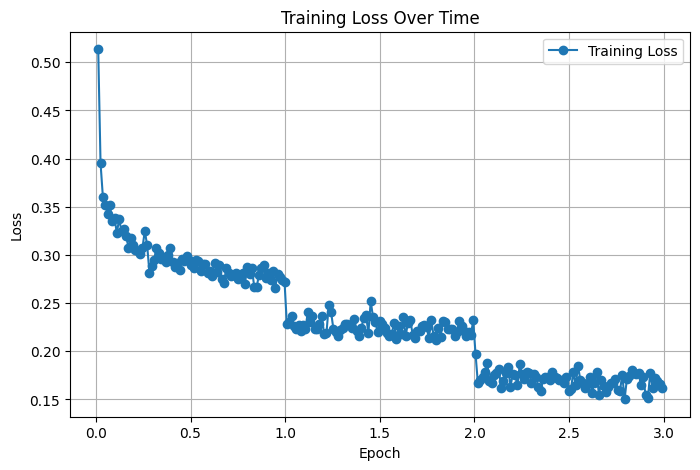

learning_curve.png) - Training loss progression (

training_loss_plot.png)

Usage

Installation

Ensure you have transformers, datasets, and torch installed:

pip install transformers datasets torch

Loading the Model

You can load the model and tokenizer using the transformers library:

from transformers import RobertaTokenizer, RobertaForSequenceClassification

tokenizer = RobertaTokenizer.from_pretrained("ryanboyd/AttributioNet")

model = RobertaForSequenceClassification.from_pretrained("ryanboyd/AttributioNet")

Alternatively, you can use the companion Python package, blamegame, which downloads the model for you and applies it to individual texts or batch-processes CSV files.

For more information, see: https://pypi.org/project/blamegame/

Inference

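import torch

def predict(text):
    # Reuses the tokenizer and model loaded in "Loading the Model"
    inputs = tokenizer(text, return_tensors="pt", padding=True, truncation=True, max_length=128)
    # Gradients are not needed at inference time
    with torch.no_grad():
        outputs = model(**inputs)
    # Independent sigmoid per label (multi-label), then threshold at 0.5
    probs = torch.sigmoid(outputs.logits).numpy()
    predictions = (probs > 0.5).astype(int)
    return predictions

sample_text = "The situation was beyond my control."
predictions = predict(sample_text)
print(predictions)  # Binary labels for each class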
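The binary vector returned above is easier to read once it is mapped back to category names. The helper below is a minimal sketch: `name_predictions` is a hypothetical function, and the label order is assumed to follow the order given in the Overview, so it is worth checking against the model's own configuration before relying on it.

```python
# Label order as listed in the Overview (assumed to match the model's output order)
LABELS = [
    "Self-Dispositional",
    "Self-Situational",
    "Other-Dispositional",
    "Other-Situational",
]

def name_predictions(row, labels=LABELS):
    """Return the names of the labels flagged 1 in one row of binary predictions."""
    return [name for name, flag in zip(labels, row) if int(flag) == 1]

example_row = [0, 1, 0, 0]  # hypothetical output row, not a real model prediction
print(name_predictions(example_row))  # ['Self-Situational']
```

Since `predict` returns one row per input text, a single sentence would be decoded with `name_predictions(predict(sample_text)[0])`.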

Fine-Tuning Details

The fine-tuning process was carried out using the Hugging Face Trainer API with custom modifications:

- Custom loss function for multi-label classification

- Per-class F1-score computation for evaluation

- Model checkpointing based on best macro F1-score
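To make the evaluation criterion concrete, the sketch below computes per-class F1 and their macro average for multi-label predictions. It is written in plain Python on toy data and is not the author's evaluation code; the function names and the example labels are illustrative assumptions.

```python
def per_class_f1(y_true, y_pred):
    """F1 score for each label column of two equally shaped 0/1 matrices."""
    n_labels = len(y_true[0])
    scores = []
    for j in range(n_labels):
        tp = sum(1 for t, p in zip(y_true, y_pred) if t[j] == 1 and p[j] == 1)
        fp = sum(1 for t, p in zip(y_true, y_pred) if t[j] == 0 and p[j] == 1)
        fn = sum(1 for t, p in zip(y_true, y_pred) if t[j] == 1 and p[j] == 0)
        denom = 2 * tp + fp + fn
        # F1 = 2TP / (2TP + FP + FN); defined as 0 when the label never occurs
        scores.append(2 * tp / denom if denom else 0.0)
    return scores

def macro_f1(y_true, y_pred):
    """Unweighted mean of the per-class F1 scores."""
    scores = per_class_f1(y_true, y_pred)
    return sum(scores) / len(scores)

# Toy labels and predictions for four sentences (made-up values)
y_true = [[1, 0, 0, 0], [0, 1, 0, 0], [0, 0, 1, 1], [1, 0, 0, 1]]
y_pred = [[1, 0, 0, 0], [0, 1, 1, 0], [0, 0, 1, 0], [0, 0, 0, 1]]

print(per_class_f1(y_true, y_pred))
print(macro_f1(y_true, y_pred))
```

With the Trainer API, selecting checkpoints on a metric like this is typically configured through `metric_for_best_model` and `load_best_model_at_end` in `TrainingArguments`; the exact settings used for this model are not given here.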

Training was performed using the following command:

trainer.train()

Results Visualization

Calibration Curve

Training vs Validation Loss

Per-Class ROC Curves

Training Loss Over Time

Citation

If you use this model, please cite this work appropriately. An official citation will be coming soon.
