Update README.md

README.md

CHANGED

|

@@ -1,84 +1,84 @@

|

|

| 1 |

-

---

|

| 2 |

-

base_model: stabilityai/stable-diffusion-2-1

|

| 3 |

-

license: openrail++

|

| 4 |

-

model_creator: stabilityai

|

| 5 |

-

model_name: stable-diffusion-2-1

|

| 6 |

-

quantized_by: Second State Inc.

|

| 7 |

-

tags:

|

| 8 |

-

- stable-diffusion

|

| 9 |

-

- text-to-image

|

| 10 |

-

---

|

| 11 |

-

|

| 12 |

-

<!-- header start -->

|

| 13 |

-

<!-- 200823 -->

|

| 14 |

-

<div style="width: auto; margin-left: auto; margin-right: auto">

|

| 15 |

-

<img src="https://github.com/LlamaEdge/LlamaEdge/raw/dev/assets/logo.svg" style="width: 100%; min-width: 400px; display: block; margin: auto;">

|

| 16 |

-

</div>

|

| 17 |

-

<hr style="margin-top: 1.0em; margin-bottom: 1.0em;">

|

| 18 |

-

<!-- header end -->

|

| 19 |

-

|

| 20 |

-

# stable-diffusion-2-1-GGUF

|

| 21 |

-

|

| 22 |

-

## Original Model

|

| 23 |

-

|

| 24 |

-

[stabilityai/stable-diffusion-2-1](https://huggingface.co/stabilityai/stable-diffusion-2-1)

|

| 25 |

-

|

| 26 |

-

## Run with

|

| 27 |

-

|

| 28 |

-

|

| 29 |

-

|

| 30 |

-

<!-- - LlamaEdge version: [v0.12.2](https://github.com/LlamaEdge/LlamaEdge/releases/tag/0.12.2) and above

|

| 31 |

-

|

| 32 |

-

- Prompt template

|

| 33 |

-

|

| 34 |

-

- Prompt type: `chatml`

|

| 35 |

-

|

| 36 |

-

- Prompt string

|

| 37 |

-

|

| 38 |

-

```text

|

| 39 |

-

<|im_start|>system

|

| 40 |

-

{system_message}<|im_end|>

|

| 41 |

-

<|im_start|>user

|

| 42 |

-

{prompt}<|im_end|>

|

| 43 |

-

<|im_start|>assistant

|

| 44 |

-

```

|

| 45 |

-

|

| 46 |

-

- Context size: `4096`

|

| 47 |

-

|

| 48 |

-

- Run as LlamaEdge service

|

| 49 |

-

|

| 50 |

-

```bash

|

| 51 |

-

wasmedge --dir .:. --nn-preload default:GGML:AUTO:stablelm-2-12b-chat-Q5_K_M.gguf \

|

| 52 |

-

llama-api-server.wasm \

|

| 53 |

-

--prompt-template chatml \

|

| 54 |

-

--ctx-size 4096 \

|

| 55 |

-

--model-name stablelm-2-12b-chat

|

| 56 |

-

```

|

| 57 |

-

|

| 58 |

-

- Run as LlamaEdge command app

|

| 59 |

-

|

| 60 |

-

```bash

|

| 61 |

-

wasmedge --dir .:. \

|

| 62 |

-

--nn-preload default:GGML:AUTO:stablelm-2-12b-chat-Q5_K_M.gguf \

|

| 63 |

-

llama-chat.wasm \

|

| 64 |

-

--prompt-template chatml \

|

| 65 |

-

--ctx-size 4096

|

| 66 |

-

``` -->

|

| 67 |

-

|

| 68 |

-

## Quantized GGUF Models

|

| 69 |

-

|

| 70 |

-

Using formats of different precisions will yield results of varying quality.

|

| 71 |

-

|

| 72 |

-

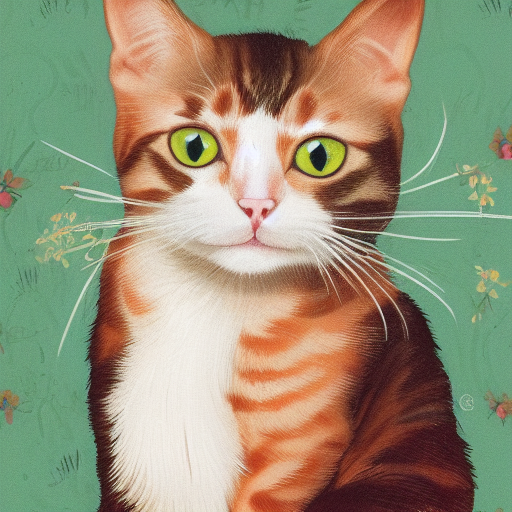

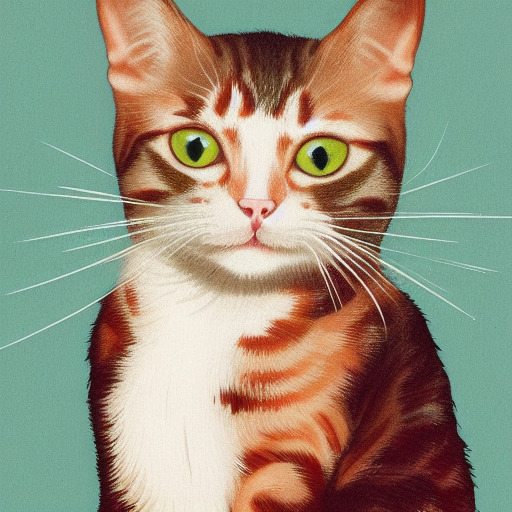

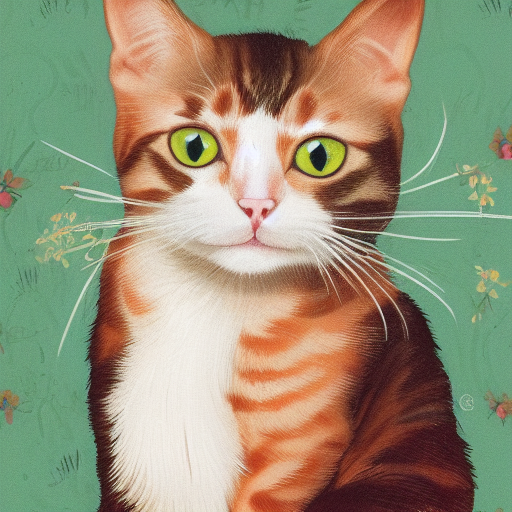

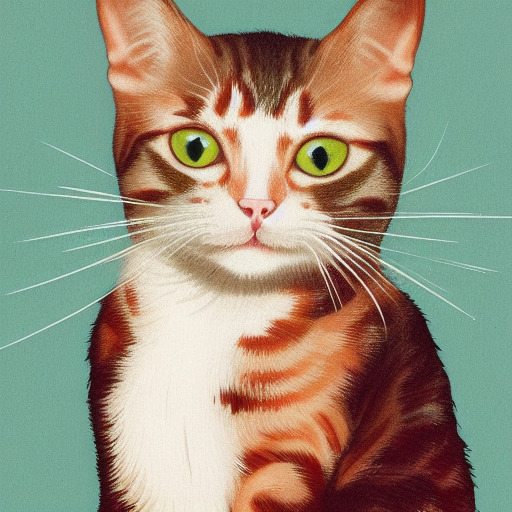

| f32 | f16 |q8_0 |q5_0 |q5_1 |q4_0 |q4_1 |

|

| 73 |

-

| ---- |---- |---- |---- |---- |---- |---- |

|

| 74 |

-

|  | | | | | | |

|

| 75 |

-

|

| 76 |

-

| Name | Quant method | Bits | Size | Use case |

|

| 77 |

-

| ---- | ---- | ---- | ---- | ----- |

|

| 78 |

-

| [v2-1_768-nonema-pruned-Q4_0.gguf](https://huggingface.co/second-state/stable-diffusion-2-1-GGUF/blob/main/v2-1_768-nonema-pruned-Q4_0.gguf) | Q4_0 | 2 | 1.70 GB | |

|

| 79 |

-

| [v2-1_768-nonema-pruned-Q4_1.gguf](https://huggingface.co/second-state/stable-diffusion-2-1-GGUF/blob/main/v2-1_768-nonema-pruned-Q4_1.gguf) | Q4_1 | 3 | 1.74 GB | |

|

| 80 |

-

| [v2-1_768-nonema-pruned-Q5_0.gguf](https://huggingface.co/second-state/stable-diffusion-2-1-GGUF/blob/main/v2-1_768-nonema-pruned-Q5_0.gguf) | Q5_0 | 3 | 1.78 GB | |

|

| 81 |

-

| [v2-1_768-nonema-pruned-Q5_1.gguf](https://huggingface.co/second-state/stable-diffusion-2-1-GGUF/blob/main/v2-1_768-nonema-pruned-Q5_1.gguf) | Q5_1 | 3 | 1.82 GB | |

|

| 82 |

-

| [v2-1_768-nonema-pruned-Q8_0.gguf](https://huggingface.co/second-state/stable-diffusion-2-1-GGUF/blob/main/v2-1_768-nonema-pruned-Q8_0.gguf) | Q8_0 | 4 | 2.01 GB | |

|

| 83 |

-

| [v2-1_768-nonema-pruned-f16.gguf](https://huggingface.co/second-state/stable-diffusion-2-1-GGUF/blob/main/v2-1_768-nonema-pruned-f16.gguf) | f16 | 4 | 2.61 GB | |

|

| 84 |

-

| [v2-1_768-nonema-pruned-f32.gguf](https://huggingface.co/second-state/stable-diffusion-2-1-GGUF/blob/main/v2-1_768-nonema-pruned-f32.gguf) | f32 | 4 | 5.21 GB | |

|

|

|

|

| 1 |

+

---

|

| 2 |

+

base_model: stabilityai/stable-diffusion-2-1

|

| 3 |

+

license: openrail++

|

| 4 |

+

model_creator: stabilityai

|

| 5 |

+

model_name: stable-diffusion-2-1

|

| 6 |

+

quantized_by: Second State Inc.

|

| 7 |

+

tags:

|

| 8 |

+

- stable-diffusion

|

| 9 |

+

- text-to-image

|

| 10 |

+

---

|

| 11 |

+

|

| 12 |

+

<!-- header start -->

|

| 13 |

+

<!-- 200823 -->

|

| 14 |

+

<div style="width: auto; margin-left: auto; margin-right: auto">

|

| 15 |

+

<img src="https://github.com/LlamaEdge/LlamaEdge/raw/dev/assets/logo.svg" style="width: 100%; min-width: 400px; display: block; margin: auto;">

|

| 16 |

+

</div>

|

| 17 |

+

<hr style="margin-top: 1.0em; margin-bottom: 1.0em;">

|

| 18 |

+

<!-- header end -->

|

| 19 |

+

|

| 20 |

+

# stable-diffusion-2-1-GGUF

|

| 21 |

+

|

| 22 |

+

## Original Model

|

| 23 |

+

|

| 24 |

+

[stabilityai/stable-diffusion-2-1](https://huggingface.co/stabilityai/stable-diffusion-2-1)

|

| 25 |

+

|

| 26 |

+

## Run with `sd-api-server`

|

| 27 |

+

|

| 28 |

+

Go to the [sd-api-server](https://github.com/LlamaEdge/sd-api-server/blob/main/README.md) repository for more information.

|

| 29 |

+

|

| 30 |

+

<!-- - LlamaEdge version: [v0.12.2](https://github.com/LlamaEdge/LlamaEdge/releases/tag/0.12.2) and above

|

| 31 |

+

|

| 32 |

+

- Prompt template

|

| 33 |

+

|

| 34 |

+

- Prompt type: `chatml`

|

| 35 |

+

|

| 36 |

+

- Prompt string

|

| 37 |

+

|

| 38 |

+

```text

|

| 39 |

+

<|im_start|>system

|

| 40 |

+

{system_message}<|im_end|>

|

| 41 |

+

<|im_start|>user

|

| 42 |

+

{prompt}<|im_end|>

|

| 43 |

+

<|im_start|>assistant

|

| 44 |

+

```

|

| 45 |

+

|

| 46 |

+

- Context size: `4096`

|

| 47 |

+

|

| 48 |

+

- Run as LlamaEdge service

|

| 49 |

+

|

| 50 |

+

```bash

|

| 51 |

+

wasmedge --dir .:. --nn-preload default:GGML:AUTO:stablelm-2-12b-chat-Q5_K_M.gguf \

|

| 52 |

+

llama-api-server.wasm \

|

| 53 |

+

--prompt-template chatml \

|

| 54 |

+

--ctx-size 4096 \

|

| 55 |

+

--model-name stablelm-2-12b-chat

|

| 56 |

+

```

|

| 57 |

+

|

| 58 |

+

- Run as LlamaEdge command app

|

| 59 |

+

|

| 60 |

+

```bash

|

| 61 |

+

wasmedge --dir .:. \

|

| 62 |

+

--nn-preload default:GGML:AUTO:stablelm-2-12b-chat-Q5_K_M.gguf \

|

| 63 |

+

llama-chat.wasm \

|

| 64 |

+

--prompt-template chatml \

|

| 65 |

+

--ctx-size 4096

|

| 66 |

+

``` -->

|

| 67 |

+

|

| 68 |

+

## Quantized GGUF Models

|

| 69 |

+

|

| 70 |

+

Using formats of different precisions will yield results of varying quality.

|

| 71 |

+

|

| 72 |

+

| f32 | f16 |q8_0 |q5_0 |q5_1 |q4_0 |q4_1 |

|

| 73 |

+

| ---- |---- |---- |---- |---- |---- |---- |

|

| 74 |

+

|  | | | | | | |

|

| 75 |

+

|

| 76 |

+

| Name | Quant method | Bits | Size | Use case |

|

| 77 |

+

| ---- | ---- | ---- | ---- | ----- |

|

| 78 |

+

| [v2-1_768-nonema-pruned-Q4_0.gguf](https://huggingface.co/second-state/stable-diffusion-2-1-GGUF/blob/main/v2-1_768-nonema-pruned-Q4_0.gguf) | Q4_0 | 2 | 1.70 GB | |

|

| 79 |

+

| [v2-1_768-nonema-pruned-Q4_1.gguf](https://huggingface.co/second-state/stable-diffusion-2-1-GGUF/blob/main/v2-1_768-nonema-pruned-Q4_1.gguf) | Q4_1 | 3 | 1.74 GB | |

|

| 80 |

+

| [v2-1_768-nonema-pruned-Q5_0.gguf](https://huggingface.co/second-state/stable-diffusion-2-1-GGUF/blob/main/v2-1_768-nonema-pruned-Q5_0.gguf) | Q5_0 | 3 | 1.78 GB | |

|

| 81 |

+

| [v2-1_768-nonema-pruned-Q5_1.gguf](https://huggingface.co/second-state/stable-diffusion-2-1-GGUF/blob/main/v2-1_768-nonema-pruned-Q5_1.gguf) | Q5_1 | 3 | 1.82 GB | |

|

| 82 |

+

| [v2-1_768-nonema-pruned-Q8_0.gguf](https://huggingface.co/second-state/stable-diffusion-2-1-GGUF/blob/main/v2-1_768-nonema-pruned-Q8_0.gguf) | Q8_0 | 4 | 2.01 GB | |

|

| 83 |

+

| [v2-1_768-nonema-pruned-f16.gguf](https://huggingface.co/second-state/stable-diffusion-2-1-GGUF/blob/main/v2-1_768-nonema-pruned-f16.gguf) | f16 | 4 | 2.61 GB | |

|

| 84 |

+

| [v2-1_768-nonema-pruned-f32.gguf](https://huggingface.co/second-state/stable-diffusion-2-1-GGUF/blob/main/v2-1_768-nonema-pruned-f32.gguf) | f32 | 4 | 5.21 GB | |

|
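
Note on the file links in the quantized-models table: they point at `/blob/main/` pages, which open the Hugging Face file viewer rather than the file itself. As a minimal sketch, assuming the Hub's usual `/resolve/main/` route for direct downloads, the snippet below builds a download URL for each quant method listed in the table. The helper names `gguf_filename` and `download_url` are hypothetical and not part of the README or of any library; only the repository id and file-name pattern come from the table.

```python
# Sketch only: helper names are illustrative, not part of the README or any library.
# The repo id and file-name pattern are copied from the quantized-models table.
REPO_ID = "second-state/stable-diffusion-2-1-GGUF"
QUANT_METHODS = ("Q4_0", "Q4_1", "Q5_0", "Q5_1", "Q8_0", "f16", "f32")


def gguf_filename(quant: str) -> str:
    """Return the GGUF file name for one of the quant methods in the table."""
    if quant not in QUANT_METHODS:
        raise ValueError(f"unknown quant method: {quant!r}")
    return f"v2-1_768-nonema-pruned-{quant}.gguf"


def download_url(quant: str) -> str:
    """Build a direct-download URL (assumes the Hub's /resolve/main/ route)."""
    return f"https://huggingface.co/{REPO_ID}/resolve/main/{gguf_filename(quant)}"


if __name__ == "__main__":
    # Print one URL per file listed in the table.
    for quant in QUANT_METHODS:
        print(download_url(quant))
```

Each printed URL can be passed to any HTTP client. Which file to fetch depends on the size and quality trade-off shown in the table.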