---
title: Complexity Framework
emoji: 🐢
colorFrom: purple
colorTo: blue
sdk: static
pinned: true
thumbnail: >-
  https://cdn-uploads.huggingface.co/production/uploads/643222d9f76c34519e96a299/8j1GHX24MV3-sv-4zl7ZB.png
---

# Complexity Framework

**Modular Python framework for building LLMs with INL Dynamics stability**

## What is Complexity Framework?

Complexity Framework is a complete toolkit for building transformer architectures with built-in training stability. It provides:

- **INL Dynamics** - Second-order dynamical system for training stability

- **Token-Routed MLP (MoE)** - Efficient sparse activation

- **CUDA/Triton Optimizations** - Flash Attention, Sliding Window, Sparse, Linear

- **O(N) Architectures** - Mamba, RWKV, RetNet

- **Small Budget Training** - Quantization, Mixed Precision, Gradient Checkpointing

## Key Innovation: INL Dynamics

INL Dynamics tracks a velocity alongside the hidden state to keep training from exploding after 400k+ steps:

```python
from complexity.api import INLDynamics

# CRITICAL: beta in [0, 2], NOT [0, inf)!
dynamics = INLDynamics(
    hidden_size=768,
    beta_max=2.0,       # Clamp beta for stability
    velocity_max=10.0,  # Limit velocity
)

h_next, v_next = dynamics(hidden_states, velocity)
```

**The bug we fixed**: `softplus` is unbounded above, so without a clamp beta could grow without limit, causing NaN after 400k steps. Clamping beta to [0, 2] keeps training stable.
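To make the clamp concrete, here is a minimal pure-Python sketch of the idea. It is not the framework's implementation: `softplus`, `clamped_beta`, and `BETA_MAX` are hypothetical names, and the only thing taken from the text above is the [0, 2] range.

```python
import math

BETA_MAX = 2.0  # mirrors the beta_max=2.0 argument shown above


def softplus(x: float) -> float:
    """Numerically stable softplus: log(1 + exp(x)). Unbounded as x grows."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def clamped_beta(raw: float, beta_max: float = BETA_MAX) -> float:
    """Map an unconstrained value into [0, beta_max] (hypothetical helper)."""
    return min(softplus(raw), beta_max)


# softplus keeps growing with its input; the clamped version saturates.
for raw in (-5.0, 0.0, 5.0, 50.0):
    print(f"raw={raw:6.1f}  softplus={softplus(raw):8.3f}  clamped={clamped_beta(raw):.3f}")
```

Since `softplus` is already non-negative, only the upper bound needs an explicit clamp.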

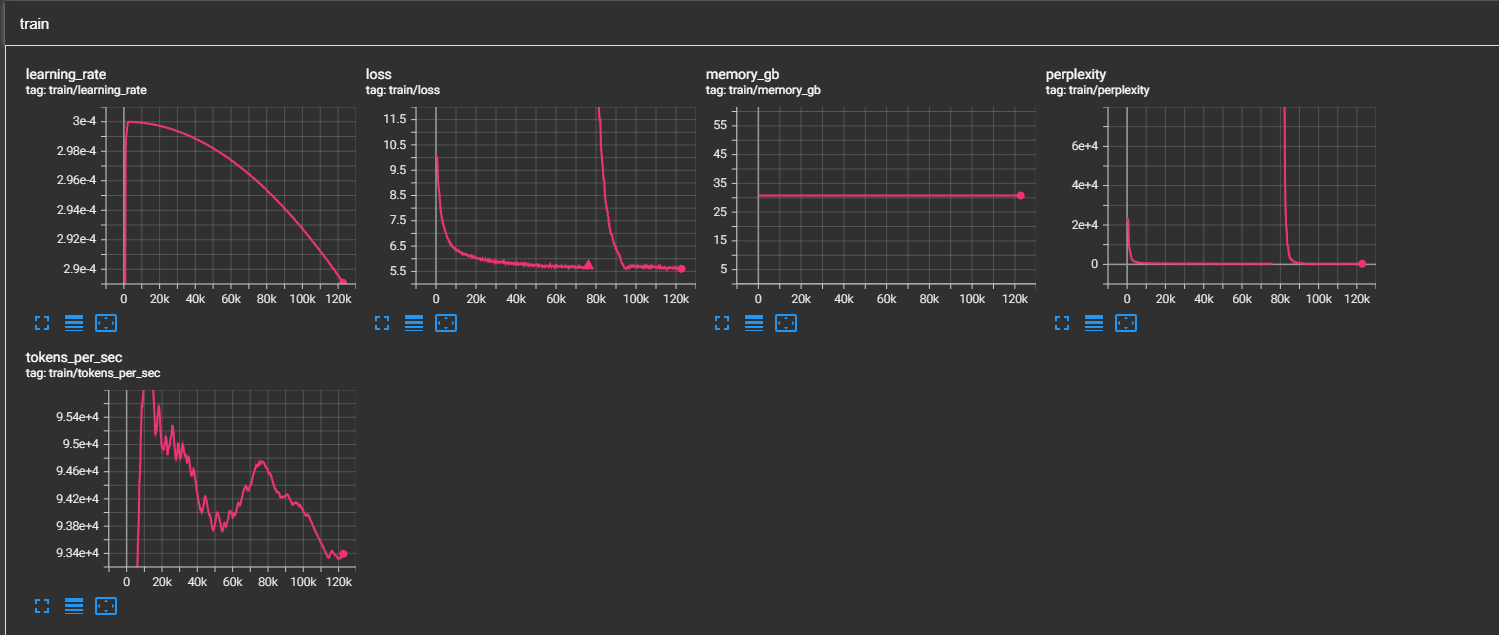

## Loss Spike Recovery

*INL Dynamics recovers from loss spikes thanks to velocity damping.*

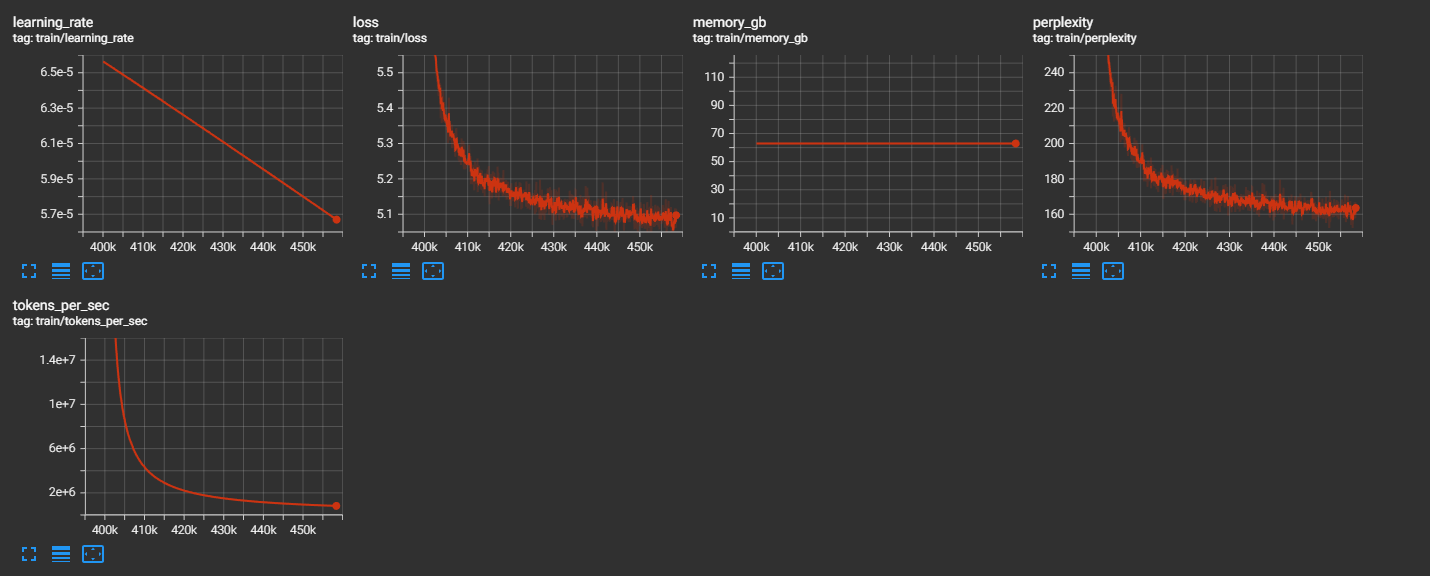

## Stability at 400k+ Steps

*After the beta clamping fix, training remains stable past 400k steps, where it previously exploded.*
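A toy scalar simulation can illustrate why an upper bound on beta matters. The update rule below (`v = alpha * v - beta * (h - mu)`, then `h = h + v`) is an assumed stand-in for a second-order system with velocity. It is not taken from the framework's source, and `simulate`, `alpha`, and `mu` are hypothetical names. For this particular rule with `alpha = 0.9`, the deviation from `mu` decays when 0 < beta < 3.8 and grows otherwise.

```python
def simulate(beta: float, alpha: float = 0.9, mu: float = 0.0,
             h0: float = 1.0, steps: int = 200) -> float:
    """Return |h - mu| after `steps` updates of the toy second-order rule."""
    h, v = h0, 0.0
    for _ in range(steps):
        v = alpha * v - beta * (h - mu)  # damped velocity, pulled toward mu
        h = h + v                        # state follows the velocity
    return abs(h - mu)


# beta inside the clamped range decays; a larger beta diverges.
print(f"beta=2.0 -> final deviation {simulate(2.0):.2e}")
print(f"beta=5.0 -> final deviation {simulate(5.0):.2e}")
```

The toy omits any velocity limit, so it shows only the effect of beta. The stable range depends on the assumed rule and on `alpha`, so the 3.8 threshold should not be read as a property of the framework.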

## Quick Start

```bash
pip install complexity-framework
```

```python
from complexity.api import (
    # Building blocks
    Attention, MLP, RMSNorm, RoPE, INLDynamics,
    # Optimizations
    CUDA, Efficient,
    # Architectures O(N)
    Architecture, Mamba, RWKV,
)

# Flash Attention
attn = CUDA.flash(hidden_size=4096, num_heads=32)

# INL Dynamics (training stability)
dynamics = INLDynamics(hidden_size=768, beta_max=2.0)
h, velocity = dynamics(hidden_states, velocity)

# Small budget model
model = Efficient.tiny_llm(vocab_size=32000)  # ~125M params
```

## Features

| Module | Description |
|--------|-------------|
| **Core** | Attention (GQA/MHA/MQA), MLP (SwiGLU/GeGLU/MoE), Position (RoPE/YaRN/ALiBi) |
| **INL Dynamics** | Velocity tracking for training stability |
| **CUDA/Triton** | Flash Attention, Sliding Window, Sparse, Linear |
| **Efficient** | Quantization, Mixed Precision, Small Models |
| **O(N) Architectures** | Mamba, RWKV, RetNet |
| **Multimodal** | Vision, Audio, Fusion |

## Token-Routed MLP (MoE)

```python
from complexity.api import MLP, TokenRoutedMLP

# Via factory
moe = MLP.moe(hidden_size=4096, num_experts=8, top_k=2)

# Direct
moe = TokenRoutedMLP(
    hidden_size=4096,
    num_experts=8,
    top_k=2,
)

output, aux_loss = moe(hidden_states)
```
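For readers new to sparse activation, the sketch below shows generic top-k gating for a single token, as commonly used in MoE layers. It is an illustration only: `top_k_gate` and the router logits are made up, and `TokenRoutedMLP` may route tokens differently internally.

```python
import math


def top_k_gate(logits: list[float], top_k: int = 2) -> dict[int, float]:
    """Pick the top_k experts for one token and softmax-normalize their logits."""
    chosen = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:top_k]
    peak = max(logits[i] for i in chosen)  # subtract the max for numerical stability
    exps = {i: math.exp(logits[i] - peak) for i in chosen}
    total = sum(exps.values())
    return {i: e / total for i, e in exps.items()}


# One token, 8 experts (matching num_experts=8, top_k=2 above); logits are invented.
router_logits = [0.1, 2.0, -1.0, 0.5, 1.0, 0.0, -0.3, 1.2]
weights = top_k_gate(router_logits)
print(weights)  # only experts 1 and 7 receive non-zero weight
```

Only the selected experts would run for this token, which is where the compute savings come from. The auxiliary loss returned by the layer is not covered by this sketch.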

## Small Budget Training

```python
from complexity.api import Efficient

# Pre-configured models
model = Efficient.nano_llm(vocab_size=32000)   # ~10M params
model = Efficient.micro_llm(vocab_size=32000)  # ~30M params
model = Efficient.tiny_llm(vocab_size=32000)   # ~125M params
model = Efficient.small_llm(vocab_size=32000)  # ~350M params

# Memory optimizations
Efficient.enable_checkpointing(model)
# `optimizer` is assumed to have been created for `model` beforehand
model, optimizer, scaler = Efficient.mixed_precision(model, optimizer)
```
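As a sanity check on parameter budgets like those above, a back-of-the-envelope count for a decoder-only transformer can be sketched as follows. The shape used here (768 hidden, 12 layers) is a hypothetical example, not the documented configuration of any preset, and `estimate_params` is not part of the framework.

```python
def estimate_params(vocab_size: int, hidden_size: int, num_layers: int,
                    tie_embeddings: bool = True) -> int:
    """Rough decoder-only parameter count, ignoring norms and biases."""
    embedding = vocab_size * hidden_size
    attention = 4 * hidden_size ** 2   # Q, K, V and output projections (MHA)
    mlp = 8 * hidden_size ** 2         # two matrices with a 4x expansion
    total = embedding + num_layers * (attention + mlp)
    if not tie_embeddings:
        total += embedding             # separate output projection
    return total


# Hypothetical shape; the presets' real dimensions are not stated in this README.
tied = estimate_params(vocab_size=32000, hidden_size=768, num_layers=12)
untied = estimate_params(vocab_size=32000, hidden_size=768, num_layers=12,
                         tie_embeddings=False)
print(f"tied: {tied / 1e6:.1f}M, untied: {untied / 1e6:.1f}M")
```

Under these assumptions the count lands between roughly 110M and 134M, the same order of magnitude as the ~125M preset. The actual presets may use different widths, depths, or MLP variants.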

## O(N) Architectures

For very long sequences:

```python
from complexity.api import Architecture

model = Architecture.mamba(hidden_size=768, num_layers=12)
model = Architecture.rwkv(hidden_size=768, num_layers=12)
model = Architecture.retnet(hidden_size=768, num_layers=12)
```
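To show where the O(N) cost comes from, here is a simplified sketch of a retention-style recurrence in pure Python. It keeps a fixed-size state that is updated once per token, and checks the result against the equivalent quadratic form. This is a stripped-down illustration (no position rotation, normalization, or multi-head handling), and the function names are hypothetical rather than the framework's API.

```python
def retention_recurrent(q, k, v, gamma=0.9):
    """O(N) form: S_n = gamma * S_{n-1} + k_n^T v_n, o_n = q_n S_n."""
    d_k, d_v = len(k[0]), len(v[0])
    state = [[0.0] * d_v for _ in range(d_k)]  # fixed size, independent of N
    outputs = []
    for q_n, k_n, v_n in zip(q, k, v):
        for i in range(d_k):
            for j in range(d_v):
                state[i][j] = gamma * state[i][j] + k_n[i] * v_n[j]
        outputs.append([sum(q_n[i] * state[i][j] for i in range(d_k))
                        for j in range(d_v)])
    return outputs


def retention_quadratic(q, k, v, gamma=0.9):
    """O(N^2) reference: o_n = sum over m <= n of gamma^(n-m) (q_n . k_m) v_m."""
    outputs = []
    for n, q_n in enumerate(q):
        out = [0.0] * len(v[0])
        for m in range(n + 1):
            score = gamma ** (n - m) * sum(a * b for a, b in zip(q_n, k[m]))
            out = [o + score * x for o, x in zip(out, v[m])]
        outputs.append(out)
    return outputs


# Three tokens with 2-dimensional queries, keys, and values (made-up numbers).
q = [[1.0, 0.0], [0.5, 0.5], [0.0, 1.0]]
k = [[1.0, 1.0], [0.0, 1.0], [1.0, 0.0]]
v = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
fast, slow = retention_recurrent(q, k, v), retention_quadratic(q, k, v)
print(all(abs(a - b) < 1e-9 for x, y in zip(fast, slow) for a, b in zip(x, y)))
```

The recurrent form does a constant amount of work per token, so total cost grows linearly with sequence length, while the reference loop revisits every earlier token.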

## Documentation

- [Getting Started](https://github.com/Complexity-ML/complexity-framework/blob/main/docs/getting-started.md)

- [API Reference](https://github.com/Complexity-ML/complexity-framework/blob/main/docs/api.md)

- [INL Dynamics](https://github.com/Complexity-ML/complexity-framework/blob/main/docs/dynamics.md)

- [MoE / Token-Routed MLP](https://github.com/Complexity-ML/complexity-framework/blob/main/docs/moe.md)

- [CUDA Optimizations](https://github.com/Complexity-ML/complexity-framework/blob/main/docs/cuda.md)

- [Efficient Training](https://github.com/Complexity-ML/complexity-framework/blob/main/docs/efficient.md)

- [O(N) Architectures](https://github.com/Complexity-ML/complexity-framework/blob/main/docs/architectures.md)

## Links

- [GitHub](https://github.com/Complexity-ML/complexity-framework)

- [PyPI](https://pypi.org/project/complexity-framework/)

## License

CC BY-NC 4.0 (Creative Commons Attribution-NonCommercial 4.0)

## Citation

```bibtex
@software{complexity_framework_2024,
  title={Complexity Framework: Modular LLM Building Blocks with INL Dynamics},
  author={Complexity-ML},
  year={2024},
  url={https://github.com/Complexity-ML/complexity-framework}
}
```

---

**Build stable LLMs. Train with confidence.**
