Spaces:

Sleeping

Sleeping

Upload 11 files

#1

by alexcpn - opened

- Dockerfile +41 -0

- Dockerfile.local +21 -0

- README.md +144 -6

- agent_interface.py +48 -0

- git_utils.py +31 -0

- pyproject.toml +25 -0

- review_orchestrator.py +348 -0

- start_hf.sh +32 -0

- test_client.py +25 -0

- uv.lock +0 -0

- web_server.py +162 -0

Dockerfile

ADDED

|

@@ -0,0 +1,41 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

FROM python:3.10-slim

|

| 2 |

+

|

| 3 |

+

# Install system dependencies

|

| 4 |

+

RUN apt-get update && apt-get install -y \

|

| 5 |

+

git \

|

| 6 |

+

redis-server \

|

| 7 |

+

curl \

|

| 8 |

+

procps \

|

| 9 |

+

&& rm -rf /var/lib/apt/lists/*

|

| 10 |

+

|

| 11 |

+

# Install uv

|

| 12 |

+

COPY --from=ghcr.io/astral-sh/uv:latest /uv /uvx /bin/

|

| 13 |

+

|

| 14 |

+

WORKDIR /app

|

| 15 |

+

|

| 16 |

+

# Copy project files

|

| 17 |

+

COPY pyproject.toml uv.lock /app/

|

| 18 |

+

RUN uv sync --frozen --no-install-project

|

| 19 |

+

|

| 20 |

+

COPY . /app

|

| 21 |

+

RUN uv sync --frozen

|

| 22 |

+

|

| 23 |

+

# Clone MCP server

|

| 24 |

+

RUN git clone https://github.com/alexcpn/codereview_mcp_server.git

|

| 25 |

+

|

| 26 |

+

# Make startup script executable

|

| 27 |

+

RUN chmod +x start_hf.sh

|

| 28 |

+

|

| 29 |

+

# Create a user to run the app (optional but good practice, though HF often runs as 1000)

|

| 30 |

+

# For simplicity in this setup, we'll run as root or default user,

|

| 31 |

+

# but we need to make sure we can write to directories if needed.

|

| 32 |

+

# HF Spaces usually run as user 1000.

|

| 33 |

+

RUN useradd -m -u 1000 user

|

| 34 |

+

USER user

|

| 35 |

+

ENV HOME=/home/user \

|

| 36 |

+

PATH=/home/user/.local/bin:$PATH

|

| 37 |

+

|

| 38 |

+

# Expose the port (HF Spaces expects 7860)

|

| 39 |

+

EXPOSE 7860

|

| 40 |

+

|

| 41 |

+

CMD ["./start_hf.sh"]

|

Dockerfile.local

ADDED

|

@@ -0,0 +1,21 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

FROM python:3.10-slim-trixie

|

| 2 |

+

|

| 3 |

+

COPY --from=ghcr.io/astral-sh/uv:latest /uv /uvx /bin/

|

| 4 |

+

WORKDIR /app

|

| 5 |

+

|

| 6 |

+

# Install dependencies first (cached unless lockfile changes)

|

| 7 |

+

COPY pyproject.toml uv.lock /app/

|

| 8 |

+

RUN uv sync --frozen --no-install-project

|

| 9 |

+

|

| 10 |

+

# Then copy the rest of the code

|

| 11 |

+

COPY . /app

|

| 12 |

+

RUN uv sync --frozen

|

| 13 |

+

|

| 14 |

+

# Run the server

|

| 15 |

+

# Run the server

|

| 16 |

+

CMD ["uv", "run", "python", "agent_interface.py"]

|

| 17 |

+

|

| 18 |

+

# Build the docker

|

| 19 |

+

# docker build -t codereview-agent .

|

| 20 |

+

# run as

|

| 21 |

+

# docker run -it --rm -p 7860:7860 codereview-agent

|

README.md

CHANGED

|

@@ -1,11 +1,149 @@

|

|

| 1 |

---

|

| 2 |

-

|

| 3 |

-

|

| 4 |

-

|

| 5 |

-

|

|

|

|

| 6 |

sdk: docker

|

|

|

|

| 7 |

pinned: false

|

| 8 |

-

short_description: An Angentic AI example with code review

|

| 9 |

---

|

| 10 |

|

| 11 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

---

|

| 2 |

+

|

| 3 |

+

title: Code Review Agentic AI based on MCP

|

| 4 |

+

emoji: 🛰️

|

| 5 |

+

colorFrom: indigo

|

| 6 |

+

colorTo: blue

|

| 7 |

sdk: docker

|

| 8 |

+

app_file: Dockerfile

|

| 9 |

pinned: false

|

|

|

|

| 10 |

---

|

| 11 |

|

| 12 |

+

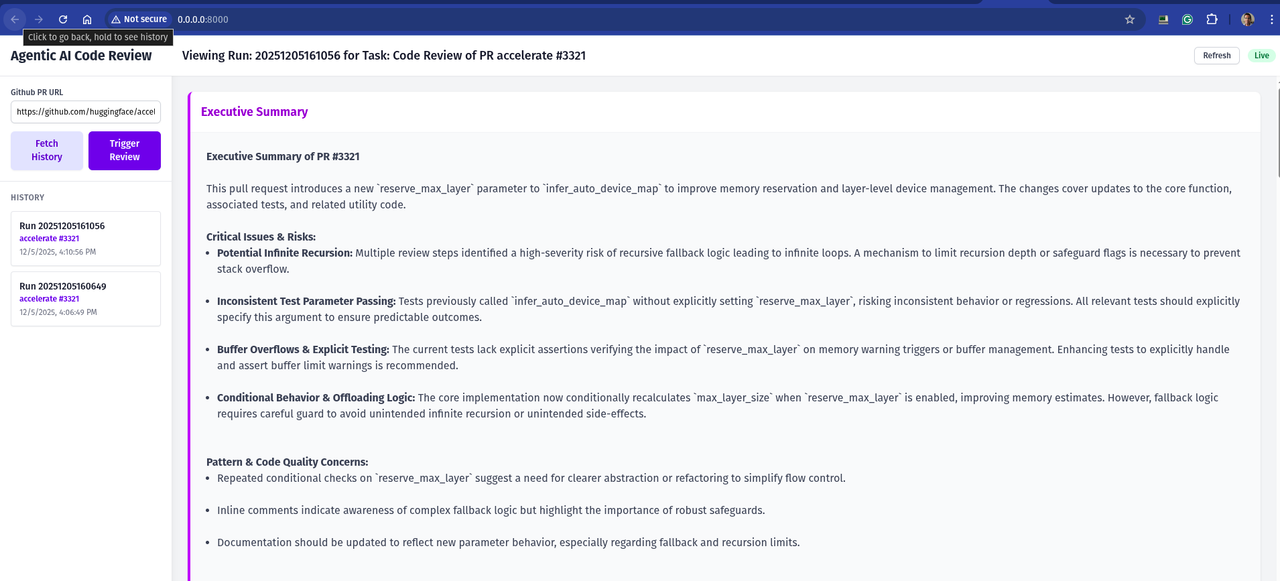

# Agentic AI Code Review in Pure Python - No Agentic Framework

|

| 13 |

+

|

| 14 |

+

Agentic code review pipeline that plans, calls tools, and produces structured findings without any heavyweight framework. Implemented in plain Python using [uv](https://github.com/astral-sh/uv), [FastAPI](https://fastapi.tiangolo.com/), and a small footprint wrapper library [nmagents](https://github.com/alexcpn/noagent-ai). Deep code context comes from a Tree-Sitter-backed Model Context Protocol (MCP) server.

|

| 15 |

+

|

| 16 |

+

## What this repo demonstrates

|

| 17 |

+

- End-to-end AI review loop in a few hundred lines of Python (`code_review_agent.py`)

|

| 18 |

+

- Tool-augmented LLM via Tree-Sitter AST introspection from an MCP server

|

| 19 |

+

- Deterministic step planning/execution with JSON repair and YAML logs

|

| 20 |

+

- Works with OpenAI or any OpenAI-compatible endpoint (ollam,vllm)

|

| 21 |

+

- Ships as a FastAPI service, CLI helper, and Docker image

|

| 22 |

+

|

| 23 |

+

## How it works

|

| 24 |

+

- Fetch the PR diff, ask the LLM for a per-file review plan, then execute each step.

|

| 25 |

+

- MCP server ([codereview_mcp_server](https://github.com/alexcpn/codereview_mcp_server)) exposes AST tools (definitions, call-sites, docstrings) using [Tree-Sitter](https://tree-sitter.github.io/tree-sitter/).

|

| 26 |

+

- Minimal orchestration comes from [nmagents](https://github.com/alexcpn/noagent-ai) Command pattern: plan → optional tool calls → critique/patch suggestions → YAML logs.

|

| 27 |

+

|

| 28 |

+

Models are effective with very detailed prompts instead of one-liners. Illustration prompt is [prompts/code_review_prompts.txt](prompts/code_review_prompts.txt) with context populated at place holders.

|

| 29 |

+

|

| 30 |

+

Results are good if a task can be broken into steps and each step executed in place. This keeps the context tight.

|

| 31 |

+

|

| 32 |

+

Models which gives good result are GPT 4.1 Nano, GPT 5 Nano.

|

| 33 |

+

|

| 34 |

+

Also this will run with any OpenAI API comptatible model; Like ollam (with Microsoft phi3.5 model) and vllm (with Google gemma model) wtih a laptop GPU.

|

| 35 |

+

|

| 36 |

+

Note that these small models are really not that good with complex tasks like this.

|

| 37 |

+

|

| 38 |

+

### Core flow (excerpt from `review_orchestrator.py`)

|

| 39 |

+

|

| 40 |

+

```python

|

| 41 |

+

file_diffs = git_utils.get_pr_diff_url(repo_url, pr_number)

|

| 42 |

+

response = call_llm_command.execute(context) # plan steps

|

| 43 |

+

response_data, _ = parse_json_response_with_repair(...) # repair/parse plan

|

| 44 |

+

|

| 45 |

+

tools = step.get("tools", [])

|

| 46 |

+

if tools:

|

| 47 |

+

tool_outputs = await execute_step_tools(step, ast_tool_call_command)

|

| 48 |

+

|

| 49 |

+

step_context = load_prompt(diff_or_code_block=diff, tool_outputs=step.get("tool_results", ""))

|

| 50 |

+

step_response = call_llm_command.execute(step_context) # execute each step

|

| 51 |

+

```

|

| 52 |

+

|

| 53 |

+

## Prerequisites

|

| 54 |

+

- Python 3.10+

|

| 55 |

+

- [uv](https://github.com/astral-sh/uv) installed

|

| 56 |

+

- `.env` with `OPENAI_API_KEY=...`

|

| 57 |

+

- Running MCP server with AST tools (e.g., [codereview_mcp_server](https://github.com/alexcpn/codereview_mcp_server)) reachable at `CODE_AST_MCP_SERVER_URL`

|

| 58 |

+

|

| 59 |

+

# Setup

|

| 60 |

+

|

| 61 |

+

## Start the Code Review MCP server

|

| 62 |

+

```bash

|

| 63 |

+

git clone https://github.com/alexcpn/codereview_mcp_server.git

|

| 64 |

+

cd codereview_mcp_server

|

| 65 |

+

uv run python http_server.py # serves MCP at http://127.0.0.1:7860/mcp/

|

| 66 |

+

```

|

| 67 |

+

|

| 68 |

+

# Running Locally with Ray (Pure Ray)

|

| 69 |

+

|

| 70 |

+

This is the simplest way to run the agent without Kubernetes complexity.

|

| 71 |

+

|

| 72 |

+

## Start Ray

|

| 73 |

+

Start a local Ray cluster instance:

|

| 74 |

+

```bash

|

| 75 |

+

uv run ray start --head

|

| 76 |

+

# if there is problem with start up, kill old process

|

| 77 |

+

ray stop --force

|

| 78 |

+

```

|

| 79 |

+

*Note: This starts Ray on your local machine. You can view the dashboard at http://127.0.0.1:8265*

|

| 80 |

+

|

| 81 |

+

|

| 82 |

+

## Run Redis with persistent storage:

|

| 83 |

+

|

| 84 |

+

```

|

| 85 |

+

docker run -d \

|

| 86 |

+

-p 6380:6379 \

|

| 87 |

+

--name redis-review \

|

| 88 |

+

-v $(pwd)/redis-data:/data \

|

| 89 |

+

redis \

|

| 90 |

+

redis-server --appendonly yes

|

| 91 |

+

```

|

| 92 |

+

|

| 93 |

+

To delete older jobs

|

| 94 |

+

```

|

| 95 |

+

redis-cli --scan --pattern "review:*" | xargs redis-cli del

|

| 96 |

+

```

|

| 97 |

+

|

| 98 |

+

|

| 99 |

+

## Run the Agentic AI Webserver

|

| 100 |

+

|

| 101 |

+

Note - see the .env (copy) file and create a .env file with the same variables but correct values

|

| 102 |

+

|

| 103 |

+

```

|

| 104 |

+

OPENAI_API_KEY=xxx

|

| 105 |

+

REDIS_PORT=6380

|

| 106 |

+

AST_MCP_SERVER_URL=http://127.0.0.1:7860/mcp/

|

| 107 |

+

RAY_ADDRESS="auto"

|

| 108 |

+

```

|

| 109 |

+

|

| 110 |

+

```

|

| 111 |

+

uv run web_server.py

|

| 112 |

+

```

|

| 113 |

+

This will start the web server on http://0.0.0.0:8000/

|

| 114 |

+

|

| 115 |

+

You will get a UI to trigger the review and see triggered reviews and steps

|

| 116 |

+

|

| 117 |

+

|

| 118 |

+

|

| 119 |

+

## Deploying to Hugging Face Spaces

|

| 120 |

+

|

| 121 |

+

This repository includes a configuration to deploy directly to Hugging Face Spaces (Docker SDK).

|

| 122 |

+

|

| 123 |

+

1. **Create a New Space**:

|

| 124 |

+

- Go to [Hugging Face Spaces](https://huggingface.co/spaces).

|

| 125 |

+

- Create a new Space.

|

| 126 |

+

- Select **Docker** as the SDK.

|

| 127 |

+

|

| 128 |

+

2. **Upload Files**:

|

| 129 |

+

- Upload the contents of this repository to your Space.

|

| 130 |

+

- **Important**: You must tell Hugging Face to use `Dockerfile.hf` instead of the default `Dockerfile`.

|

| 131 |

+

- You can do this by renaming `Dockerfile.hf` to `Dockerfile` in the Space, or by configuring the Space settings if supported.

|

| 132 |

+

- *Recommendation*: Rename `Dockerfile` to `Dockerfile.local` and `Dockerfile.hf` to `Dockerfile` before pushing to the Space.

|

| 133 |

+

|

| 134 |

+

3. **Set Secrets**:

|

| 135 |

+

- In your Space settings, go to **Settings > Variables and secrets**.

|

| 136 |

+

- Add a new secret: `OPENAI_API_KEY` with your API key.

|

| 137 |

+

|

| 138 |

+

4. **Run**:

|

| 139 |

+

- The Space will build and start.

|

| 140 |

+

- Once running, you will see the web interface.

|

| 141 |

+

|

| 142 |

+

---

|

| 143 |

+

|

| 144 |

+

## References

|

| 145 |

+

- [Model Context Protocol](https://github.com/modelcontextprotocol/specification)

|

| 146 |

+

- [Tree-Sitter](https://tree-sitter.github.io/tree-sitter/)

|

| 147 |

+

- [codereview_mcp_server](https://github.com/alexcpn/codereview_mcp_server)

|

| 148 |

+

- [nmagents (noagent-ai)](https://github.com/alexcpn/noagent-ai)

|

| 149 |

+

- [uv package manager](https://github.com/astral-sh/uv)

|

agent_interface.py

ADDED

|

@@ -0,0 +1,48 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

import grpc

|

| 2 |

+

from concurrent import futures

|

| 3 |

+

import logging as log

|

| 4 |

+

import os

|

| 5 |

+

import asyncio

|

| 6 |

+

import protos.agent_pb2 as agent_pb2

|

| 7 |

+

import protos.agent_pb2_grpc as agent_pb2_grpc

|

| 8 |

+

from review_orchestrator import CodeReviewOrchestrator

|

| 9 |

+

from load_dotenv import load_dotenv

|

| 10 |

+

load_dotenv()

|

| 11 |

+

|

| 12 |

+

# Configure logging

|

| 13 |

+

log.basicConfig(level=log.INFO, format="%(asctime)s [%(levelname)s] %(message)s")

|

| 14 |

+

|

| 15 |

+

class CodeReviewAgentServicer(agent_pb2_grpc.CodeReviewAgentServicer):

|

| 16 |

+

def __init__(self):

|

| 17 |

+

self.orchestrator = CodeReviewOrchestrator()

|

| 18 |

+

|

| 19 |

+

def ReviewPR(self, request, context):

|

| 20 |

+

repo_url = request.repo_url

|

| 21 |

+

async def ReviewPR(self, request, context):

|

| 22 |

+

log.info(f"Received review request for PR #{request.pr_number}")

|

| 23 |

+

try:

|

| 24 |

+

async for result in self.orchestrator.review_pr_stream(request.repo_url, request.pr_number):

|

| 25 |

+

yield agent_pb2.ReviewResponse(

|

| 26 |

+

status="Success",

|

| 27 |

+

review_comment=result["comment"],

|

| 28 |

+

file_path=result["file_path"]

|

| 29 |

+

)

|

| 30 |

+

except Exception as e:

|

| 31 |

+

log.error(f"Error during review: {e}")

|

| 32 |

+

yield agent_pb2.ReviewResponse(

|

| 33 |

+

status="Error",

|

| 34 |

+

review_comment=str(e),

|

| 35 |

+

file_path=""

|

| 36 |

+

)

|

| 37 |

+

|

| 38 |

+

async def serve():

|

| 39 |

+

server = grpc.aio.server()

|

| 40 |

+

agent_pb2_grpc.add_CodeReviewAgentServicer_to_server(CodeReviewAgentServicer(), server)

|

| 41 |

+

server.add_insecure_port('[::]:50051')

|

| 42 |

+

log.info("Starting Async gRPC server on port 50051...")

|

| 43 |

+

await server.start()

|

| 44 |

+

await server.wait_for_termination()

|

| 45 |

+

|

| 46 |

+

if __name__ == '__main__':

|

| 47 |

+

import asyncio

|

| 48 |

+

asyncio.run(serve())

|

git_utils.py

ADDED

|

@@ -0,0 +1,31 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

import requests

|

| 2 |

+

import re

|

| 3 |

+

from collections import defaultdict

|

| 4 |

+

import logging as log

|

| 5 |

+

|

| 6 |

+

|

| 7 |

+

def get_pr_diff_url(repo_url, pr_number):

|

| 8 |

+

"""

|

| 9 |

+

Get the diff URL for a specific pull request number.

|

| 10 |

+

Args:

|

| 11 |

+

repo_url (str): The URL of the GitHub repository.

|

| 12 |

+

pr_number (int): The pull request number.

|

| 13 |

+

"""

|

| 14 |

+

pr_diff_url = f"https://patch-diff.githubusercontent.com/raw/{repo_url.split('/')[-2]}/{repo_url.split('/')[-1]}/pull/{pr_number}.diff"

|

| 15 |

+

response = requests.get(pr_diff_url,verify=False)

|

| 16 |

+

|

| 17 |

+

if response.status_code != 200:

|

| 18 |

+

log.error(f"Failed to fetch diff: {response.status_code}")

|

| 19 |

+

raise ValueError(f"Failed to fetch diff: {response.status_code}")

|

| 20 |

+

|

| 21 |

+

diff_text = response.text

|

| 22 |

+

file_diffs = defaultdict(str)

|

| 23 |

+

file_diff_pattern = re.compile(r'^diff --git a/(.*?) b/\1$', re.MULTILINE)

|

| 24 |

+

split_points = list(file_diff_pattern.finditer(diff_text))

|

| 25 |

+

for i, match in enumerate(split_points):

|

| 26 |

+

file_path = match.group(1)

|

| 27 |

+

start = match.start()

|

| 28 |

+

end = split_points[i + 1].start() if i + 1 < len(split_points) else len(diff_text)

|

| 29 |

+

file_diffs[file_path] = diff_text[start:end]

|

| 30 |

+

return file_diffs

|

| 31 |

+

|

pyproject.toml

ADDED

|

@@ -0,0 +1,25 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

[project]

|

| 2 |

+

name = "llm-code-review-agent"

|

| 3 |

+

version = "0.1.0"

|

| 4 |

+

description = "Add your description here"

|

| 5 |

+

readme = "README.md"

|

| 6 |

+

requires-python = ">=3.10"

|

| 7 |

+

dependencies = [

|

| 8 |

+

"fastapi>=0.115.12",

|

| 9 |

+

"fastmcp>=2.3.4",

|

| 10 |

+

"gitpython>=3.1.44",

|

| 11 |

+

"mcp[cli]>=1.9.0",

|

| 12 |

+

"openai>=1.79.0",

|

| 13 |

+

"openai-agents>=0.0.17",

|

| 14 |

+

"pyyaml>=6.0.2",

|

| 15 |

+

"requests>=2.32.3",

|

| 16 |

+

"tiktoken>=0.9.0",

|

| 17 |

+

"uvicorn>=0.34.2",

|

| 18 |

+

"ray[default]==2.41.0",

|

| 19 |

+

"grpcio>=1.60.0",

|

| 20 |

+

"grpcio-tools>=1.60.0",

|

| 21 |

+

"nmagents>=0.1.0",

|

| 22 |

+

"load-dotenv>=0.1.0",

|

| 23 |

+

"redis>=5.0.0",

|

| 24 |

+

"jinja2>=3.1.0",

|

| 25 |

+

]

|

review_orchestrator.py

ADDED

|

@@ -0,0 +1,348 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

import ray

|

| 2 |

+

import os

|

| 3 |

+

import logging as log

|

| 4 |

+

import yaml

|

| 5 |

+

from datetime import datetime

|

| 6 |

+

from typing import Any, List, Dict

|

| 7 |

+

import git_utils

|

| 8 |

+

from fastmcp import Client

|

| 9 |

+

from openai import OpenAI

|

| 10 |

+

from dotenv import load_dotenv

|

| 11 |

+

from nmagents.command import CallLLM, ToolCall, ToolList

|

| 12 |

+

from nmagents.utils import parse_json_response_with_repair, execute_step_tools

|

| 13 |

+

from pathlib import Path

|

| 14 |

+

import redis

|

| 15 |

+

import json

|

| 16 |

+

|

| 17 |

+

# Configure logging

|

| 18 |

+

log.basicConfig(level=log.INFO, format="%(asctime)s [%(levelname)s] %(message)s")

|

| 19 |

+

|

| 20 |

+

# Load environment variables

|

| 21 |

+

load_dotenv()

|

| 22 |

+

|

| 23 |

+

# Constants

|

| 24 |

+

MAX_CONTEXT_LENGTH = 16385

|

| 25 |

+

COST_PER_TOKEN_INPUT = 0.10 / 10e6

|

| 26 |

+

COST_PER_TOKEN_OUTPUT = 0.40 / 10e6

|

| 27 |

+

MODEL_NAME = "gpt-4.1-nano"

|

| 28 |

+

FALLBACK_MODEL_NAME = os.getenv("JSON_REPAIR_MODEL", "gpt-4.1-nano")

|

| 29 |

+

FALLBACK_MAX_BUDGET = float(os.getenv("JSON_REPAIR_MAX_BUDGET", "0.2"))

|

| 30 |

+

AST_MCP_SERVER_URL = os.getenv("CODE_AST_MCP_SERVER_URL", "http://127.0.0.1:7860/mcp/")

|

| 31 |

+

|

| 32 |

+

if AST_MCP_SERVER_URL and not AST_MCP_SERVER_URL.endswith("/"):

|

| 33 |

+

AST_MCP_SERVER_URL = AST_MCP_SERVER_URL + "/"

|

| 34 |

+

|

| 35 |

+

TEMPLATE_PATH = Path(__file__).parent / "prompts/code_review_prompts.txt"

|

| 36 |

+

|

| 37 |

+

def load_prompt(**placeholders) -> str:

|

| 38 |

+

template = TEMPLATE_PATH.read_text(encoding="utf-8")

|

| 39 |

+

default_values = {

|

| 40 |

+

"arch_notes_or_empty": "",

|

| 41 |

+

"guidelines_list_or_link": "",

|

| 42 |

+

"threat_model_or_empty": "",

|

| 43 |

+

"perf_slos_or_empty": "",

|

| 44 |

+

"tool_outputs": "",

|

| 45 |

+

"diff_or_code_block": "",

|

| 46 |

+

}

|

| 47 |

+

merged = {**default_values, **placeholders}

|

| 48 |

+

for key, value in merged.items():

|

| 49 |

+

value_str = str(value)

|

| 50 |

+

template = template.replace(f"{{{{{key}}}}}", value_str)

|

| 51 |

+

template = template.replace(f"{{{key}}}", value_str)

|

| 52 |

+

return template

|

| 53 |

+

|

| 54 |

+

@ray.remote

|

| 55 |

+

def process_file_review(file_path: str, diff: str, repo_url: str, pr_number: int, tool_schemas_content: str, step_schema_content: str, time_hash: str, redis_host: str, redis_port: int, api_key: str | None = None, mcp_server_url: str | None = None):

|

| 56 |

+

import asyncio

|

| 57 |

+

return asyncio.run(_process_file_review_async(file_path, diff, repo_url, pr_number, tool_schemas_content, step_schema_content, time_hash, redis_host, redis_port, api_key, mcp_server_url))

|

| 58 |

+

|

| 59 |

+

async def _process_file_review_async(file_path: str, diff: str, repo_url: str, pr_number: int, tool_schemas_content: str, step_schema_content: str, time_hash: str, redis_host: str, redis_port: int, api_key: str | None = None, mcp_server_url: str | None = None):

|

| 60 |

+

log.info(f"Starting review for {file_path}")

|

| 61 |

+

|

| 62 |

+

# Initialize Redis client

|

| 63 |

+

# redis_host and redis_port are passed from the orchestrator

|

| 64 |

+

redis_client = redis.Redis(host=redis_host, port=redis_port, db=0)

|

| 65 |

+

repo_name = repo_url.rstrip('/').split('/')[-1]

|

| 66 |

+

stream_key = f"review:stream:{repo_name}:{pr_number}:{time_hash}"

|

| 67 |

+

runs_key = f"review:runs:{repo_name}:{pr_number}"

|

| 68 |

+

|

| 69 |

+

# Add this run to the history

|

| 70 |

+

try:

|

| 71 |

+

redis_client.sadd(runs_key, time_hash)

|

| 72 |

+

except Exception as e:

|

| 73 |

+

log.error(f"Failed to add run to history: {e}")

|

| 74 |

+

|

| 75 |

+

# Re-initialize clients inside the remote task

|

| 76 |

+

if not api_key:

|

| 77 |

+

api_key = os.getenv("OPENAI_API_KEY")

|

| 78 |

+

openai_client = OpenAI(api_key=api_key, base_url="https://api.openai.com/v1")

|

| 79 |

+

|

| 80 |

+

call_llm_command = CallLLM(openai_client, "Call the LLM", MODEL_NAME, COST_PER_TOKEN_INPUT, COST_PER_TOKEN_OUTPUT, 0.5)

|

| 81 |

+

repair_llm_command = CallLLM(openai_client, "Repair YAML", FALLBACK_MODEL_NAME, COST_PER_TOKEN_INPUT, COST_PER_TOKEN_OUTPUT, FALLBACK_MAX_BUDGET)

|

| 82 |

+

|

| 83 |

+

step_execution_results = []

|

| 84 |

+

|

| 85 |

+

# Use passed URL or fallback to env var

|

| 86 |

+

mcp_url = mcp_server_url or AST_MCP_SERVER_URL

|

| 87 |

+

if not mcp_url.endswith("/"):

|

| 88 |

+

mcp_url = mcp_url + "/"

|

| 89 |

+

|

| 90 |

+

async with Client(mcp_url) as ast_tool_client:

|

| 91 |

+

ast_tool_call_command = ToolCall(ast_tool_client, "Call tool")

|

| 92 |

+

|

| 93 |

+

main_context = f""" Your task today is Code Reivew. You are given the following '{pr_number}' to review from the repo '{repo_url}'

|

| 94 |

+

You have to first come up with a plan to review the code changes in the PR as a series of steps.

|

| 95 |

+

Write the plan as per the following step schema: {step_schema_content}

|

| 96 |

+

Make sure to follow the step schema format exactly and output only JSON """

|

| 97 |

+

|

| 98 |

+

context = main_context + f" Here is the file diff for {file_path}:\n{diff} for review\n" + \

|

| 99 |

+

f"You have access to the following MCP tools to help you with your code review: {tool_schemas_content}"

|

| 100 |

+

|

| 101 |

+

response = call_llm_command.execute(context)

|

| 102 |

+

log.info(f"Plan generated for {file_path}")

|

| 103 |

+

|

| 104 |

+

response_data, _ = parse_json_response_with_repair(

|

| 105 |

+

response_text=response or "",

|

| 106 |

+

schema_hint=step_schema_content,

|

| 107 |

+

repair_command=repair_llm_command,

|

| 108 |

+

context_label="plan",

|

| 109 |

+

)

|

| 110 |

+

|

| 111 |

+

if not response_data:

|

| 112 |

+

log.error(f"Failed to parse plan for {file_path}")

|

| 113 |

+

return {

|

| 114 |

+

"file_path": file_path,

|

| 115 |

+

"results": [{"step_name": "plan", "error": "Failed to parse plan"}]

|

| 116 |

+

}

|

| 117 |

+

|

| 118 |

+

# Save plan log

|

| 119 |

+

safe_filename = file_path.replace("/", "_").replace("\\", "_")

|

| 120 |

+

repo_name = repo_url.rstrip('/').split('/')[-1]

|

| 121 |

+

job_dir = f"{repo_name}_PR{pr_number}_{time_hash}"

|

| 122 |

+

logs_dir = Path("logs") / job_dir

|

| 123 |

+

logs_dir.mkdir(parents=True, exist_ok=True)

|

| 124 |

+

|

| 125 |

+

plan_log_path = logs_dir / f"plan_{safe_filename}.yaml"

|

| 126 |

+

with open(plan_log_path, "w", encoding="utf-8") as f:

|

| 127 |

+

yaml.dump(response_data, f)

|

| 128 |

+

|

| 129 |

+

# Publish plan to Redis

|

| 130 |

+

try:

|

| 131 |

+

redis_client.xadd(stream_key, {

|

| 132 |

+

"type": "plan",

|

| 133 |

+

"file_path": file_path,

|

| 134 |

+

"content": json.dumps(response_data)

|

| 135 |

+

})

|

| 136 |

+

except Exception as e:

|

| 137 |

+

log.error(f"Failed to write plan to Redis: {e}")

|

| 138 |

+

|

| 139 |

+

steps = response_data.get("steps", [])

|

| 140 |

+

|

| 141 |

+

for index, step in enumerate(steps, start=1):

|

| 142 |

+

name = step.get("name", "<unnamed>")

|

| 143 |

+

step_description = step.get("description", "")

|

| 144 |

+

|

| 145 |

+

tools = step.get("tools", [])

|

| 146 |

+

if tools:

|

| 147 |

+

log.info(f"Executing tools for step {name}: {tools}")

|

| 148 |

+

tool_outputs = await execute_step_tools(step, ast_tool_call_command)

|

| 149 |

+

for output in tool_outputs:

|

| 150 |

+

tool_result_context = load_prompt(repo_name=repo_url, brief_change_summary=step_description,

|

| 151 |

+

diff_or_code_block=diff, tool_outputs=output)

|

| 152 |

+

step["tool_results"] = tool_result_context

|

| 153 |

+

|

| 154 |

+

try:

|

| 155 |

+

step_context = load_prompt(repo_name=repo_url, brief_change_summary=step_description,

|

| 156 |

+

diff_or_code_block=diff, tool_outputs=step.get("tool_results", ""))

|

| 157 |

+

|

| 158 |

+

step_response = call_llm_command.execute(step_context)

|

| 159 |

+

|

| 160 |

+

step_data, _ = parse_json_response_with_repair(

|

| 161 |

+

response_text=step_response or "",

|

| 162 |

+

schema_hint="",

|

| 163 |

+

repair_command=repair_llm_command,

|

| 164 |

+

context_label=f"step {name}",

|

| 165 |

+

)

|

| 166 |

+

|

| 167 |

+

if not step_data:

|

| 168 |

+

log.error(f"Failed to parse result for step {name}")

|

| 169 |

+

step_execution_results.append({

|

| 170 |

+

"step_name": name,

|

| 171 |

+

"error": "Failed to parse step result"

|

| 172 |

+

})

|

| 173 |

+

continue

|

| 174 |

+

|

| 175 |

+

# Save step log

|

| 176 |

+

step_log_path = logs_dir / f"step_{name}_{safe_filename}.yaml"

|

| 177 |

+

with open(step_log_path, "w", encoding="utf-8") as f:

|

| 178 |

+

yaml.dump(step_data, f)

|

| 179 |

+

|

| 180 |

+

step_execution_results.append({

|

| 181 |

+

"step_name": name,

|

| 182 |

+

"result": step_data

|

| 183 |

+

})

|

| 184 |

+

|

| 185 |

+

# Publish step result to Redis

|

| 186 |

+

try:

|

| 187 |

+

redis_client.xadd(stream_key, {

|

| 188 |

+

"type": "step",

|

| 189 |

+

"file_path": file_path,

|

| 190 |

+

"step_name": name,

|

| 191 |

+

"content": json.dumps(step_data)

|

| 192 |

+

})

|

| 193 |

+

except Exception as e:

|

| 194 |

+

log.error(f"Failed to write step to Redis: {e}")

|

| 195 |

+

|

| 196 |

+

except Exception as e:

|

| 197 |

+

log.error(f"Failed to execute step {name} for {file_path}: {e}")

|

| 198 |

+

step_execution_results.append({

|

| 199 |

+

"step_name": name,

|

| 200 |

+

"error": str(e)

|

| 201 |

+

})

|

| 202 |

+

break

|

| 203 |

+

|

| 204 |

+

return {

|

| 205 |

+

"file_path": file_path,

|

| 206 |

+

"results": step_execution_results

|

| 207 |

+

}

|

| 208 |

+

|

| 209 |

+

class CodeReviewOrchestrator:

|

| 210 |

+

def __init__(self):

|

| 211 |

+

# Initialize Ray

|

| 212 |

+

# Check if running in a cluster or local

|

| 213 |

+

ray_address = os.getenv("RAY_ADDRESS")

|

| 214 |

+

if ray_address:

|

| 215 |

+

ray.init(address=ray_address, ignore_reinit_error=True)

|

| 216 |

+

else:

|

| 217 |

+

ray.init(ignore_reinit_error=True)

|

| 218 |

+

|

| 219 |

+

async def review_pr_stream(self, repo_url: str, pr_number: int, time_hash: str = None, api_key: str | None = None, mcp_server_url: str | None = None):

|

| 220 |

+

log.info(f"Orchestrating review for {repo_url} PR #{pr_number}")

|

| 221 |

+

|

| 222 |

+

# Get diffs

|

| 223 |

+

try:

|

| 224 |

+

file_diffs = git_utils.get_pr_diff_url(repo_url, pr_number)

|

| 225 |

+

except Exception as e:

|

| 226 |

+

log.error(f"Failed to get diffs: {e}")

|

| 227 |

+

yield {

|

| 228 |

+

"type": "error",

|

| 229 |

+

"file_path": "system",

|

| 230 |

+

"content": json.dumps({"error": f"Failed to get diffs: {str(e)}"})

|

| 231 |

+

}

|

| 232 |

+

return

|

| 233 |

+

|

| 234 |

+

# Get tool schemas (need to do this once)

|

| 235 |

+

# Use passed URL or fallback to env var

|

| 236 |

+

mcp_url = mcp_server_url or AST_MCP_SERVER_URL

|

| 237 |

+

if not mcp_url.endswith("/"):

|

| 238 |

+

mcp_url = mcp_url + "/"

|

| 239 |

+

|

| 240 |

+

async with Client(mcp_url) as ast_tool_client:

|

| 241 |

+

ast_tool_list_command = ToolList(ast_tool_client, "List tools")

|

| 242 |

+

tool_schemas_content = await ast_tool_list_command.execute(None)

|

| 243 |

+

|

| 244 |

+

sample_step_schema_file = "schemas/steps_schema.json"

|

| 245 |

+

with open(sample_step_schema_file, "r", encoding="utf-8") as f:

|

| 246 |

+

step_schema_content = f.read()

|

| 247 |

+

|

| 248 |

+

if not time_hash:

|

| 249 |

+

time_hash = datetime.now().strftime("%Y%m%d%H%M%S")

|

| 250 |

+

|

| 251 |

+

# Redis config to pass to workers

|

| 252 |

+

redis_host = os.getenv("REDIS_HOST", "localhost")

|

| 253 |

+

redis_port = int(os.getenv("REDIS_PORT", 6380))

|

| 254 |

+

|

| 255 |

+

# Launch Ray tasks

|

| 256 |

+

pending_futures = []

|

| 257 |

+

for file_path, diff in file_diffs.items():

|

| 258 |

+

pending_futures.append(process_file_review.remote(

|

| 259 |

+

file_path, diff, repo_url, pr_number, tool_schemas_content, step_schema_content, time_hash, redis_host, redis_port, api_key, mcp_url

|

| 260 |

+

))

|

| 261 |

+

|

| 262 |

+

# Collect all reviews for final summary

|

| 263 |

+

all_reviews_context = ""

|

| 264 |

+

|

| 265 |

+

# Process results as they complete

|

| 266 |

+

while pending_futures:

|

| 267 |

+

# Run ray.wait in a separate thread to avoid blocking the asyncio event loop

|

| 268 |

+

import asyncio

|

| 269 |

+

done_futures, pending_futures = await asyncio.to_thread(ray.wait, pending_futures)

|

| 270 |

+

for future in done_futures:

|

| 271 |

+

try:

|

| 272 |

+

result = await future

|

| 273 |

+

|

| 274 |

+

# Format the result for this file

|

| 275 |

+

file_summary = f"File: {result['file_path']}\n"

|

| 276 |

+

for step in result['results']:

|

| 277 |

+

if 'error' in step:

|

| 278 |

+

file_summary += f"- {step['step_name']}: [Error] {step['error']}\n"

|

| 279 |

+

else:

|

| 280 |

+

file_summary += f"- {step['step_name']}: {step['result']}\n"

|

| 281 |

+

|

| 282 |

+

all_reviews_context += file_summary + "\n" + "-"*40 + "\n"

|

| 283 |

+

|

| 284 |

+

yield {

|

| 285 |

+

"file_path": result['file_path'],

|

| 286 |

+

"comment": file_summary

|

| 287 |

+

}

|

| 288 |

+

except Exception as e:

|

| 289 |

+

log.error(f"Error processing result from ray: {e}")

|

| 290 |

+

yield {

|

| 291 |

+

"file_path": "system",

|

| 292 |

+

"comment": f"Error: {str(e)}"

|

| 293 |

+

}

|

| 294 |

+

|

| 295 |

+

# Generate Final Consolidated Summary

|

| 296 |

+

log.info("Generating consolidated PR summary...")

|

| 297 |

+

try:

|

| 298 |

+

if not api_key:

|

| 299 |

+

api_key = os.getenv("OPENAI_API_KEY")

|

| 300 |

+

openai_client = OpenAI(api_key=api_key, base_url="https://api.openai.com/v1")

|

| 301 |

+

summary_llm_command = CallLLM(openai_client, "Summarize PR", MODEL_NAME, COST_PER_TOKEN_INPUT, COST_PER_TOKEN_OUTPUT, 0.5)

|

| 302 |

+

|

| 303 |

+

summary_prompt = f"""

|

| 304 |

+

You are a Principal Software Engineer.

|

| 305 |

+

Review the following code review results for PR #{pr_number} in {repo_url}.

|

| 306 |

+

|

| 307 |

+

Aggregated Reviews:

|

| 308 |

+

{all_reviews_context}

|

| 309 |

+

|

| 310 |

+

Please provide a concise Executive Summary of the PR.

|

| 311 |

+

1. Highlight the most critical issues found across all files.

|

| 312 |

+

2. Identify any recurring patterns or code quality concerns.

|

| 313 |

+

3. Provide a final recommendation (Merge, Request Changes, etc.).

|

| 314 |

+

"""

|

| 315 |

+

|

| 316 |

+

final_summary = summary_llm_command.execute(summary_prompt)

|

| 317 |

+

|

| 318 |

+

# Publish summary to Redis

|

| 319 |

+

try:

|

| 320 |

+

redis_client = redis.Redis(host=redis_host, port=redis_port, db=0)

|

| 321 |

+

stream_key = f"review:stream:{repo_url.rstrip('/').split('/')[-1]}:{pr_number}:{time_hash}"

|

| 322 |

+

redis_client.xadd(stream_key, {

|

| 323 |

+

"type": "summary",

|

| 324 |

+

"file_path": "PR_SUMMARY",

|

| 325 |

+

"content": final_summary,

|

| 326 |

+

"repo_url": repo_url,

|

| 327 |

+

"pr_number": str(pr_number)

|

| 328 |

+

})

|

| 329 |

+

redis_client.close()

|

| 330 |

+

except Exception as e:

|

| 331 |

+

log.error(f"Failed to write summary to Redis: {e}")

|

| 332 |

+

|

| 333 |

+

yield {

|

| 334 |

+

"file_path": "PR_SUMMARY",

|

| 335 |

+

"comment": f"# Consolidated PR Summary\n\n{final_summary}"

|

| 336 |

+

}

|

| 337 |

+

|

| 338 |

+

# Save summary log

|

| 339 |

+

logs_dir = Path("logs") / f"{repo_url.rstrip('/').split('/')[-1]}_PR{pr_number}_{time_hash}"

|

| 340 |

+

with open(logs_dir / "pr_summary.md", "w", encoding="utf-8") as f:

|

| 341 |

+

f.write(final_summary)

|

| 342 |

+

|

| 343 |

+

except Exception as e:

|

| 344 |

+

log.error(f"Failed to generate final summary: {e}")

|

| 345 |

+

yield {

|

| 346 |

+

"file_path": "PR_SUMMARY",

|

| 347 |

+

"comment": f"Failed to generate summary: {e}"

|

| 348 |

+

}

|

start_hf.sh

ADDED

|

@@ -0,0 +1,32 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

#!/bin/bash

|

| 2 |

+

set -e

|

| 3 |

+

|

| 4 |

+

# Start Redis

|

| 5 |

+

echo "Starting Redis..."

|

| 6 |

+

redis-server --port 6380 &

|

| 7 |

+

|

| 8 |

+

# Start Ray

|

| 9 |

+

echo "Starting Ray..."

|

| 10 |

+

# We use --head to start a single node cluster

|

| 11 |

+

# We need to make sure it doesn't try to use too much memory if limited

|

| 12 |

+

uv run ray start --head --disable-usage-stats --port=6379 --dashboard-host=0.0.0.0

|

| 13 |

+

|

| 14 |

+

# Start MCP Server

|

| 15 |

+

echo "Starting MCP Server..."

|

| 16 |

+

cd codereview_mcp_server

|

| 17 |

+

uv run http_server.py &

|

| 18 |

+

MCP_PID=$!

|

| 19 |

+

cd ..

|

| 20 |

+

|

| 21 |

+

# Wait for MCP server to be ready (simple sleep for now, or check port)

|

| 22 |

+

sleep 5

|

| 23 |

+

|

| 24 |

+

# Set environment variables

|

| 25 |

+

export REDIS_PORT=6380

|

| 26 |

+

export AST_MCP_SERVER_URL=http://127.0.0.1:7860/mcp/

|

| 27 |

+

export RAY_ADDRESS="auto"

|

| 28 |

+

|

| 29 |

+

# Start Web Server

|

| 30 |

+

echo "Starting Web Server..."

|

| 31 |

+

# HF Spaces expects the app to listen on port 7860

|

| 32 |

+

uv run uvicorn web_server:app --host 0.0.0.0 --port 7860

|

test_client.py

ADDED

|

@@ -0,0 +1,25 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

import grpc

|

| 2 |

+

import protos.agent_pb2 as agent_pb2

|

| 3 |

+

import protos.agent_pb2_grpc as agent_pb2_grpc

|

| 4 |

+

import logging as log

|

| 5 |

+

|

| 6 |

+

log.basicConfig(level=log.INFO)

|

| 7 |

+

|

| 8 |

+

def run():

|

| 9 |

+

with grpc.insecure_channel('localhost:50051') as channel:

|

| 10 |

+

stub = agent_pb2_grpc.CodeReviewAgentStub(channel)

|

| 11 |

+

log.info("Sending ReviewPR request...")

|

| 12 |

+

response_stream = stub.ReviewPR(agent_pb2.ReviewRequest(

|

| 13 |

+

repo_url="https://github.com/huggingface/accelerate",

|

| 14 |

+

pr_number=3321

|

| 15 |

+

))

|

| 16 |

+

|

| 17 |

+

log.info("Waiting for stream...")

|

| 18 |

+

for response in response_stream:

|

| 19 |

+

log.info(f"--- Received Review for {response.file_path} ---")

|

| 20 |

+

log.info(f"Status: {response.status}")

|

| 21 |

+

log.info(response.review_comment)

|

| 22 |

+

log.info("-" * 50)

|

| 23 |

+

|

| 24 |

+

if __name__ == '__main__':

|

| 25 |

+

run()

|

uv.lock

ADDED

|

The diff for this file is too large to render.

See raw diff

|

|

|

web_server.py

ADDED

|

@@ -0,0 +1,162 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

import os

|

| 2 |

+

import json

|

| 3 |

+

import asyncio

|

| 4 |

+

import redis.asyncio as redis

|

| 5 |

+

from fastapi import FastAPI, Request, BackgroundTasks

|

| 6 |

+

from fastapi.responses import HTMLResponse, StreamingResponse

|

| 7 |

+

from fastapi.templating import Jinja2Templates

|

| 8 |

+

from fastapi.staticfiles import StaticFiles

|

| 9 |

+

from review_orchestrator import CodeReviewOrchestrator

|

| 10 |

+

from pydantic import BaseModel

|

| 11 |

+

from load_dotenv import load_dotenv

|

| 12 |

+

load_dotenv()

|

| 13 |

+

app = FastAPI()

|

| 14 |

+

templates = Jinja2Templates(directory="templates")

|

| 15 |

+

|

| 16 |

+

# Initialize Orchestrator

|

| 17 |

+

orchestrator = CodeReviewOrchestrator()

|

| 18 |

+

|

| 19 |

+

class ReviewRequest(BaseModel):

|

| 20 |

+

repo_url: str

|

| 21 |

+

pr_number: int

|

| 22 |

+

openai_api_key: str | None = None

|

| 23 |

+

mcp_server_url: str | None = None

|

| 24 |

+

|

| 25 |

+

class MCPRequest(BaseModel):

|

| 26 |

+

mcp_server_url: str

|

| 27 |

+

|

| 28 |

+

@app.get("/", response_class=HTMLResponse)

|

| 29 |

+

async def read_root(request: Request):

|

| 30 |

+

return templates.TemplateResponse("index.html", {"request": request})

|

| 31 |

+

|

| 32 |

+

@app.post("/list-tools")

|

| 33 |

+

async def list_tools(request: MCPRequest):

|

| 34 |

+

from fastmcp import Client

|

| 35 |

+

from nmagents.command import ToolList

|

| 36 |

+

|

| 37 |

+

try:

|

| 38 |

+

# Ensure URL ends with /

|

| 39 |

+

url = request.mcp_server_url

|

| 40 |

+

if not url.endswith("/"):

|

| 41 |

+

url = url + "/"

|

| 42 |

+

|

| 43 |

+

async with Client(url) as client:

|

| 44 |

+

# We can't easily use ToolList command here as it returns a formatted string

|

| 45 |

+

# We'll use the client directly to list tools if possible, or parse the output

|

| 46 |

+

# fastmcp client doesn't expose list_tools directly in a simple way without calling the server

|

| 47 |

+

# But nmagents ToolList does exactly that.

|

| 48 |

+

|

| 49 |

+

tool_list_command = ToolList(client, "List tools")

|

| 50 |

+

tools_description = await tool_list_command.execute(None)

|

| 51 |

+

return {"status": "success", "tools": tools_description}

|

| 52 |

+

except Exception as e:

|

| 53 |

+

return {"status": "error", "message": str(e)}

|

| 54 |

+

|

| 55 |

+

@app.post("/review")

|

| 56 |

+

async def trigger_review(request: ReviewRequest, background_tasks: BackgroundTasks):

|

| 57 |

+

# Trigger the review in the background

|

| 58 |

+

# We need to wrap the async generator to consume it, otherwise it won't run

|

| 59 |

+

# We need to get the time_hash to return it, but the orchestrator generates it.

|

| 60 |

+

# For now, we will generate it here and pass it, or just return a "latest" indicator.

|

| 61 |

+

# Better: Orchestrator's review_pr_stream generates it. We can't easily get it back from a background task.

|

| 62 |

+

# Solution: We will generate time_hash here and pass it to orchestrator (need to update orchestrator signature).

|

| 63 |

+

|

| 64 |

+

from datetime import datetime

|

| 65 |

+

time_hash = datetime.now().strftime("%Y%m%d%H%M%S")

|

| 66 |

+

|

| 67 |

+

# Add run to history immediately

|

| 68 |

+

redis_host = os.getenv("REDIS_HOST", "localhost")

|

| 69 |

+

redis_port = int(os.getenv("REDIS_PORT", 6380))

|

| 70 |

+

r = redis.Redis(host=redis_host, port=redis_port, db=0, decode_responses=True)

|

| 71 |

+

repo_name = request.repo_url.rstrip('/').split('/')[-1]

|

| 72 |

+

runs_key = f"review:runs:{repo_name}:{request.pr_number}"

|

| 73 |

+

await r.sadd(runs_key, time_hash)

|