title: Snowman AI

emoji: ⛄

colorFrom: indigo

colorTo: purple

sdk: gradio

sdk_version: 5.50.0

app_file: app.py

pinned: false

license: mit

tags:

- mcp-in-action-track-consumer

- building-mcp-track-consumer

- mcp

- literature-review

- langgraph

- academic-research

- openai

short_description: Autonomous Literature Review Agent with MCP

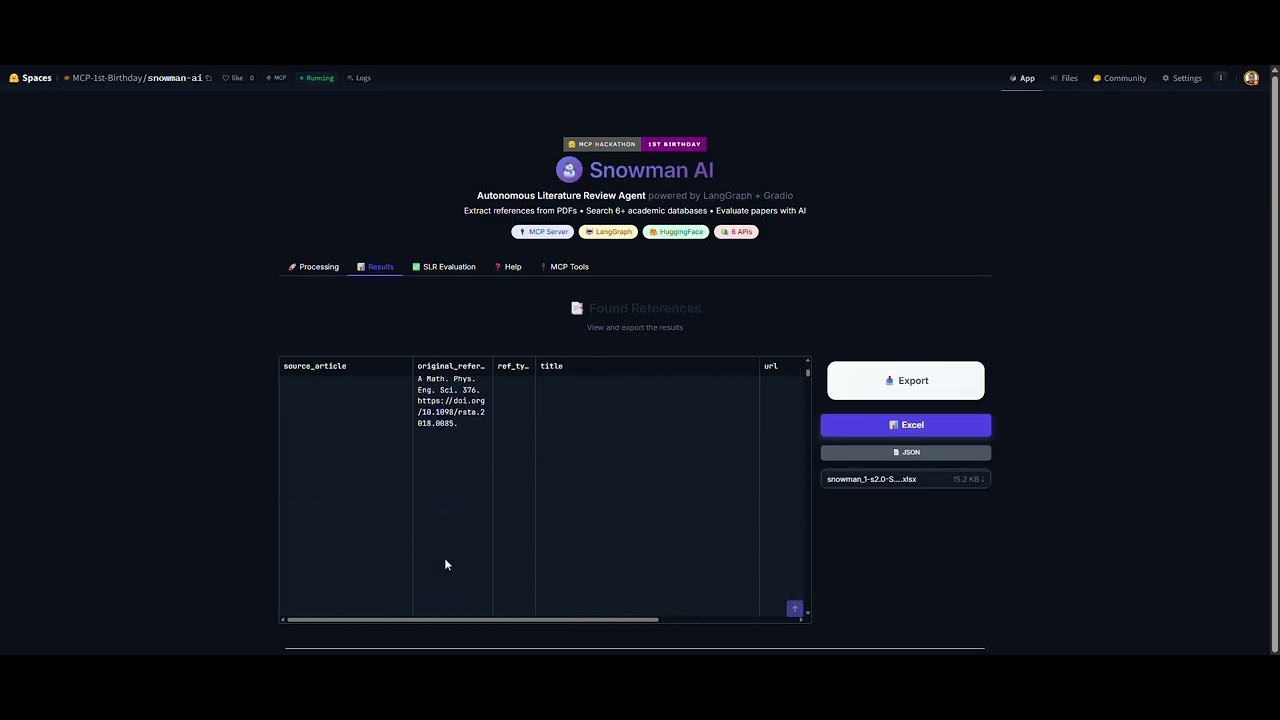

☃️ Snowman AI

Autonomous Literature Review Agent powered by LangGraph + Gradio MCP Server


🏆 Hackathon Submission

Tracks:

- 🤖 Track 2: MCP in Action - Consumer (mcp-in-action-track-consumer)

- 🔧 Track 1: Building MCP - Consumer (building-mcp-track-consumer)

⛄ This app qualifies for both tracks: it's an autonomous agent with planning, reasoning, and execution (Track 2) that also exposes a fully functional MCP Server with 6 tools (Track 1).

Team Members:

- @nextmarte - Marcus Antonio Cardoso Ramalho

Social Media Post: LinkedIn Post

🏅 Sponsor Integration:

- ✅ OpenAI GPT-4o - Powers intelligent reference parsing and SLR relevance evaluation

🎥 Demo Video

📹 Click to watch the full demo (2 minutes) - See Snowman extract 100+ references from a PDF and search them across 6 databases!

📖 What is Snowman?

Snowman AI is an autonomous AI agent that speeds up systematic literature reviews (SLR) by automating two of their most tedious steps: reference extraction and abstract retrieval.

The Problem

Researchers spend hundreds of hours manually:

- Reading PDFs to extract bibliographic references

- Searching multiple databases for each reference

- Finding and reading abstracts

- Evaluating relevance for inclusion/exclusion

The Solution

Snowman is a multi-agent system that processes each PDF in stages:

📄 PDF Upload → 🤖 AI Reference Extraction → 🔍 Cascade Search → ✅ SLR Evaluation

- PDF Extractor Agent: Intelligently finds the References section in academic PDFs

- Parser Agent: Uses GPT-4o to identify and clean bibliographic references

- Cascade Search Agent: Searches 6 academic APIs in parallel

- Deduplication Agent: Removes duplicates by DOI and title similarity

- SLR Evaluation Agent: Automatically evaluates papers against your inclusion/exclusion criteria
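The deduplication step described above (match by DOI, then by title similarity) can be sketched with the standard library alone. This is an illustration, not the app's actual code: the `deduplicate` and `normalize_title` names, the dict fields, and the 0.9 similarity threshold are all assumptions.

```python
import re
from difflib import SequenceMatcher


def normalize_title(title: str) -> str:
    """Lowercase a title and collapse punctuation and whitespace."""
    return re.sub(r"[^a-z0-9]+", " ", title.lower()).strip()


def deduplicate(references: list[dict], threshold: float = 0.9) -> list[dict]:
    """Keep the first occurrence of each reference.

    Two references count as duplicates when they share a DOI
    (case-insensitive) or when their normalized titles are at least
    `threshold` similar. The threshold value is an assumption.
    """
    unique: list[dict] = []
    seen_dois: set[str] = set()
    for ref in references:
        doi = (ref.get("doi") or "").strip().lower()
        if doi and doi in seen_dois:
            continue  # exact DOI match
        title = normalize_title(ref.get("title") or "")
        if title and any(
            SequenceMatcher(None, title, normalize_title(kept.get("title") or "")).ratio()
            >= threshold
            for kept in unique
        ):
            continue  # near-identical title
        if doi:
            seen_dois.add(doi)
        unique.append(ref)
    return unique


refs = [
    {"doi": "10.1000/xyz123", "title": "Deep Learning for Text Mining"},
    {"doi": "10.1000/XYZ123", "title": "Deep learning for text mining."},
    {"doi": None, "title": "Deep Learning for Text-Mining"},
    {"doi": None, "title": "A Survey of Citation Analysis"},
]
print(len(deduplicate(refs)))  # 2
```

Checking the DOI first keeps the common case cheap; the pairwise title comparison is quadratic, which is usually acceptable for the few hundred references found in a single paper.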

✨ Key Features

🤖 Autonomous Agent Behavior

- Planning: Analyzes PDF structure to find references

- Reasoning: Uses LLM to parse unstructured reference text

- Execution: Parallel searches across multiple APIs

- Self-correction: Falls back to alternative sources if the primary source fails

🔍 Cascade Search Strategy

Searches multiple academic databases in order of reliability:

| Priority | Source | Type | Features |

|---|---|---|---|

| 1️⃣ | CrossRef | Free API | DOI resolution, metadata |

| 2️⃣ | Semantic Scholar | Free API | Best abstracts, citations |

| 3️⃣ | OpenAlex | Open API | Comprehensive coverage |

| 4️⃣ | DuckDuckGo | Web Search | Fallback web scraping |

| 5️⃣ | Tavily | Paid API | Last resort, high quality |
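The priority order in the table can be read as a simple fallback loop. The sketch below assumes that a source "succeeds" only when it returns an abstract, and uses hypothetical stub functions in place of real API clients; neither detail is taken from the app's code.

```python
from typing import Callable, Optional

# A source takes a reference string and returns a result dict, or None on a miss.
Source = Callable[[str], Optional[dict]]


def cascade_search(reference: str, sources: list[tuple[str, Source]]) -> Optional[dict]:
    """Try each source in priority order and return the first usable hit."""
    for name, search in sources:
        try:
            result = search(reference)
        except Exception:
            # A failing source should not abort the cascade; try the next one.
            continue
        if result and result.get("abstract"):
            return {**result, "source": name}
    return None


# Hypothetical stand-ins for real API clients.
def crossref_stub(reference: str) -> Optional[dict]:
    return {"doi": "10.1000/example", "abstract": None}  # metadata, no abstract


def semantic_scholar_stub(reference: str) -> Optional[dict]:
    raise TimeoutError("simulated network failure")


def openalex_stub(reference: str) -> Optional[dict]:
    return {"doi": "10.1000/example", "abstract": "An example abstract."}


sources = [
    ("CrossRef", crossref_stub),
    ("Semantic Scholar", semantic_scholar_stub),
    ("OpenAlex", openalex_stub),
]
hit = cascade_search("Example, A. (2020). An example paper.", sources)
print(hit["source"])  # OpenAlex
```

Here the first source returns metadata without an abstract and the second raises, so the loop only stops at the third entry.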

📊 Smart Caching

- SQLite-based persistent cache

- Avoids redundant API calls

- Caches both positive and negative results

- PDF parsing cache for repeated uploads
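Caching negative results matters because a reference that no database knows would otherwise trigger the full cascade on every run. A minimal sketch of such a cache with `sqlite3` follows; the `SearchCache` class, table name, and column layout are assumptions made for illustration.

```python
import json
import sqlite3
from typing import Optional


class SearchCache:
    """Tiny persistent cache keyed by the reference string."""

    def __init__(self, path: str = ":memory:") -> None:
        # Pass a file path instead of ":memory:" to persist between runs.
        self.conn = sqlite3.connect(path)
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS search_cache ("
            "reference TEXT PRIMARY KEY, found INTEGER NOT NULL, payload TEXT)"
        )

    def get(self, reference: str) -> Optional[tuple[bool, Optional[dict]]]:
        """Return (found, payload) for a cached lookup, or None if never cached."""
        row = self.conn.execute(
            "SELECT found, payload FROM search_cache WHERE reference = ?",
            (reference,),
        ).fetchone()
        if row is None:
            return None
        found, payload = row
        return bool(found), (json.loads(payload) if payload is not None else None)

    def put(self, reference: str, result: Optional[dict]) -> None:
        """Store a hit (dict) or a miss (None) so neither is searched again."""
        payload = json.dumps(result) if result is not None else None
        self.conn.execute(
            "INSERT OR REPLACE INTO search_cache VALUES (?, ?, ?)",
            (reference, int(result is not None), payload),
        )
        self.conn.commit()


cache = SearchCache()
cache.put("Known paper", {"doi": "10.1000/example"})
cache.put("Unknown paper", None)
print(cache.get("Known paper"))     # (True, {'doi': '10.1000/example'})
print(cache.get("Unknown paper"))   # (False, None)
print(cache.get("Never searched"))  # None
```

The three return shapes let the caller tell a cached hit, a cached miss, and an uncached reference apart.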

✅ SLR Evaluation

- Define inclusion/exclusion criteria

- AI evaluates each paper automatically

- Export decisions with justifications

- Excel/JSON export for further analysis
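To make the export step concrete, here is one possible shape for a decision record and its JSON export. The `Decision` fields and the `export_decisions` helper are hypothetical; the app's real schema may differ, and the LLM call that produces each decision is left out.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class Decision:
    title: str
    include: bool
    justification: str


def export_decisions(decisions: list[Decision]) -> str:
    """Serialize decisions to a JSON string, included papers first."""
    ordered = sorted(decisions, key=lambda d: not d.include)
    return json.dumps([asdict(d) for d in ordered], indent=2, ensure_ascii=False)


decisions = [
    Decision("Paper A", False, "Does not meet inclusion criterion 1."),
    Decision("Paper B", True, "Meets all inclusion criteria."),
]
exported = export_decisions(decisions)
print(json.loads(exported)[0]["title"])  # Paper B
```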

🛠️ Technology Stack

| Component | Technology |

|---|---|

| Orchestration | LangGraph (StateGraph) |

| LLM | OpenAI GPT-4o |

| UI Framework | Gradio (the Space metadata pins sdk_version 5.50.0) |

| PDF Processing | PyMuPDF |

| APIs | CrossRef, Semantic Scholar, OpenAlex, Tavily |

| Caching | SQLite |

| Parallelism | ThreadPoolExecutor |
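The Parallelism row refers to `ThreadPoolExecutor` from Python's standard library, which suits network-bound work such as API lookups. The sketch below shows the general pattern only; `lookup` is a hypothetical placeholder that does no network I/O, and the worker count is an assumption.

```python
from concurrent.futures import ThreadPoolExecutor


def lookup(reference: str) -> dict:
    """Placeholder for one network-bound search; a real one would call an API."""
    return {"reference": reference, "found": "unknown" not in reference.lower()}


def search_all(references: list[str], max_workers: int = 8) -> list[dict]:
    """Run lookups concurrently; executor.map returns results in input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as executor:
        return list(executor.map(lookup, references))


results = search_all(["Smith 2020", "Unknown source", "Lee 2019"])
print([r["found"] for r in results])  # [True, False, True]
```

Because `executor.map` preserves input order, results can be matched back to the extracted references by position.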

🚀 Quick Start

Prerequisites

- Python 3.12+

- OpenAI API Key

- (Optional) Tavily API Key for enhanced search

Installation

# Clone the repository

git clone https://github.com/nextmarte/snowball.git

cd snowball

# Install with uv (recommended)

uv sync

# Or with pip

pip install -e .

Configuration

Create a .env file:

OPENAI_API_KEY=sk-your-key-here

TAVILY_API_KEY=tvly-your-key-here # Optional
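As a rough illustration of how these two keys might be consumed, the sketch below treats the OpenAI key as required and the Tavily key as optional. It assumes the .env values have already been loaded into the process environment (for example by a dotenv loader); `load_settings` is a hypothetical helper, not a function from this repository.

```python
import os
from collections.abc import Mapping
from typing import Optional


def load_settings(env: Optional[Mapping[str, str]] = None) -> dict:
    """Read API keys from the environment; OpenAI is required, Tavily optional."""
    env = os.environ if env is None else env
    openai_key = env.get("OPENAI_API_KEY", "").strip()
    if not openai_key:
        raise RuntimeError("OPENAI_API_KEY is not set; add it to your .env file")
    tavily_key = env.get("TAVILY_API_KEY", "").strip() or None
    return {"openai_api_key": openai_key, "tavily_enabled": tavily_key is not None}


settings = load_settings({"OPENAI_API_KEY": "sk-your-key-here"})
print(settings["tavily_enabled"])  # False
```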

Run

uv run app.py

Open http://localhost:7860 in your browser.

🏗️ Architecture

┌─────────────────────────────────────────────────────────────┐
│                          GRADIO UI                          │
│  ┌─────────┐  ┌──────────┐  ┌───────────┐  ┌────────────┐   │
│  │ Upload  │  │ Pipeline │  │  Results  │  │  SLR Eval  │   │
│  │   Tab   │  │   View   │  │   Table   │  │    Tab     │   │
│  └────┬────┘  └────┬─────┘  └─────┬─────┘  └─────┬──────┘   │
└───────┼────────────┼──────────────┼──────────────┼──────────┘
        │            │              │              │
        ▼            ▼              ▼              ▼
┌─────────────────────────────────────────────────────────────┐
│                     LANGGRAPH WORKFLOW                      │
│                                                             │
│   ┌──────────┐    ┌──────────┐    ┌────────────┐            │
│   │ Extractor│───►│  Parser  │───►│ Researcher │───► END    │
│   │   Node   │    │   Node   │    │    Node    │            │
│   └──────────┘    └──────────┘    └────────────┘            │
│        │               │                │                   │
│        ▼               ▼                ▼                   │
│     PyMuPDF        GPT-4o LLM       ThreadPool              │
│                                      Executor               │
└─────────────────────────────────────────────────────────────┘
                               │
                               ▼
┌─────────────────────────────────────────────────────────────┐
│                   CASCADE SEARCH SERVICES                   │
│                                                             │
│    CrossRef → Semantic Scholar → OpenAlex → DDG → Tavily    │
│                                                             │
└─────────────────────────────────────────────────────────────┘
                               │
                               ▼
┌─────────────────────────────────────────────────────────────┐
│                        SQLITE CACHE                         │
│        ┌─────────────────┐      ┌──────────────────┐        │
│        │  Search Cache   │      │ PDF Parse Cache  │        │
│        └─────────────────┘      └──────────────────┘        │
└─────────────────────────────────────────────────────────────┘
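The workflow layer of the diagram is a linear chain of nodes that pass a shared state along. The dependency-free sketch below mimics that flow with plain functions; it deliberately swaps out LangGraph, PyMuPDF, and GPT-4o for simple stand-ins (a regex split instead of the LLM parser, a placeholder instead of API calls), and every name in it is an assumption.

```python
import re
from typing import Callable, TypedDict


class PipelineState(TypedDict, total=False):
    text: str               # full text pulled from the PDF
    references_block: str   # raw text of the References section
    references: list[str]   # one entry per parsed reference
    results: list[dict]     # search outcome per reference


def extractor_node(state: PipelineState) -> PipelineState:
    """Keep everything after the last 'References' heading line."""
    parts = re.split(r"(?im)^\s*references\s*$", state["text"])
    block = parts[-1].strip() if len(parts) > 1 else ""
    return {**state, "references_block": block}


def parser_node(state: PipelineState) -> PipelineState:
    """Split numbered entries like '[1] ...'; the app itself uses GPT-4o here."""
    entries = re.split(r"(?m)^\[\d+\]\s*", state["references_block"])
    return {**state, "references": [e.strip() for e in entries if e.strip()]}


def researcher_node(state: PipelineState) -> PipelineState:
    """Attach a placeholder result to each reference instead of calling APIs."""
    results = [{"reference": r, "abstract": None} for r in state["references"]]
    return {**state, "results": results}


def run_pipeline(text: str) -> PipelineState:
    """Run Extractor -> Parser -> Researcher in order over one shared state."""
    state: PipelineState = {"text": text}
    nodes: list[Callable[[PipelineState], PipelineState]] = [
        extractor_node,
        parser_node,
        researcher_node,
    ]
    for node in nodes:
        state = node(state)
    return state


sample = (
    "Intro text.\n"
    "References\n"
    "[1] Smith, J. (2020). A paper.\n"
    "[2] Lee, K. (2019). Another paper.\n"
)
final = run_pipeline(sample)
print(final["references"])
# ['Smith, J. (2020). A paper.', 'Lee, K. (2019). Another paper.']
```

Each node returns a new state rather than mutating its input, which keeps the stages easy to test in isolation.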

🎯 Use Cases

📚 Academic Researchers

- Conduct systematic literature reviews faster

- Find abstracts for hundreds of references in minutes

- Export to Excel for further analysis

🏫 Graduate Students

- Speed up thesis literature review

- Ensure comprehensive coverage of references

- Automatic relevance evaluation

📊 Research Teams

- Batch process multiple PDFs

- Collaborative review workflows

- Consistent evaluation criteria

🔮 Roadmap

- MCP Server integration for AI tools (Claude Desktop, Cursor, etc.): already available, see MCP Server Integration

- HuggingFace Papers API integration

- Citation network visualization

- Full-text PDF analysis

- Multi-language support (PT-BR, ES)

- Zotero/Mendeley export

🔌 MCP Server Integration

This app exposes an MCP (Model Context Protocol) server that can be consumed by AI tools like Claude Desktop, Cursor, and other MCP-compatible clients.

Available MCP Tools

| Tool | Description |

|---|---|

| search_reference | Search academic databases for a reference |

| get_doi_abstract | Get abstract and metadata by DOI |

| classify_reference | Classify reference type (article, book, etc.) |

| evaluate_relevance | Evaluate paper relevance for a research topic |

| batch_search | Search multiple references at once |

| cache_stats | Get cache statistics |
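When a Gradio app is launched with `mcp_server=True`, Gradio builds each tool's description from the Python function's type hints and docstring, so a tool is essentially a well-documented function. The sketch below shows what a function in the spirit of classify_reference could look like; the keyword heuristics are invented for illustration and are not the app's actual classifier.

```python
import re


def classify_reference(reference: str) -> str:
    """Classify a bibliographic reference by type.

    Args:
        reference: The full reference string as it appears in a bibliography.

    Returns:
        One of "article", "book", "thesis", "web", or "unknown".
    """
    text = reference.lower()
    # Hypothetical keyword rules, checked from most to least specific.
    if re.search(r"\b(thesis|dissertation)\b", text):
        return "thesis"
    if re.search(r"https?://|\bretrieved from\b", text) and "doi.org" not in text:
        return "web"
    if re.search(r"\b(journal|vol\.|\d+\(\d+\))|doi", text):
        return "article"
    if re.search(r"\b(press|publisher|edition|ed\.)", text):
        return "book"
    return "unknown"


print(classify_reference("Smith, J. (2020). A paper. Journal of Examples, 12(3), 45-67."))
# article
```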

MCP Server URL

When running the app, the MCP server is available at:

http://127.0.0.1:7860/gradio_api/mcp/

Connecting Claude Desktop

Add to your Claude Desktop config (claude_desktop_config.json):

{
  "mcpServers": {
    "snowman": {
      "url": "http://127.0.0.1:7860/gradio_api/mcp/sse"
    }
  }
}

🤝 Contributing

Contributions are welcome! Please feel free to submit a Pull Request.

📄 License

MIT License - see LICENSE for details.

🙏 Acknowledgments

- LangChain for LangGraph

- Gradio for the amazing UI framework

- Anthropic for the Model Context Protocol (MCP)

- HuggingFace for hosting the hackathon

- All the open academic APIs: CrossRef, Semantic Scholar, OpenAlex

Built with ❤️ for the MCP 1st Birthday Hackathon

November 2025