| # MNIST Examples for GGML | |

| These are simple examples of how to use GGML for inference. | |

| The first example uses a convolutional neural network (CNN); the second uses a fully connected neural network. | |

| ## Building the examples | |

| ```bash | |

| git clone https://github.com/ggerganov/ggml | |

| cd ggml | |

| mkdir build && cd build | |

| cmake .. | |

| make -j4 mnist-cnn mnist | |

| ``` | |

| ## MNIST with CNN | |

| This implementation achieves ~99% accuracy on the MNIST test set. | |

| ### Training the model | |

| Use the `mnist-cnn.py` script to train the model: | |

| ``` | |

| $ python3 ../examples/mnist/mnist-cnn.py train mnist-cnn-model | |

| ... | |

| Keras model saved to 'mnist-cnn-model' | |

| ``` | |

| Convert the model to GGUF format: | |

| ``` | |

| $ python3 ../examples/mnist/mnist-cnn.py convert mnist-cnn-model | |

| ... | |

| Model converted and saved to 'mnist-cnn-model.gguf' | |

| ``` | |

| ### Running the example | |

| ```bash | |

| $ ./bin/mnist-cnn mnist-cnn-model.gguf ../examples/mnist/models/mnist/t10k-images.idx3-ubyte | |

| main: loaded model in 5.17 ms | |

| _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ | |

| _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ | |

| _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ | |

| _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ | |

| _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ * * * * * _ _ _ _ _ _ _ | |

| _ _ _ _ _ _ _ _ _ _ _ _ _ _ * * * * * * * * _ _ _ _ _ _ | |

| _ _ _ _ _ _ _ _ _ _ _ _ * * * * * _ _ _ * * _ _ _ _ _ _ | |

| _ _ _ _ _ _ _ _ _ _ _ _ * * _ _ _ _ _ _ _ _ _ _ _ _ _ _ | |

| _ _ _ _ _ _ _ _ _ _ _ * * * _ _ _ _ _ _ _ _ _ _ _ _ _ _ | |

| _ _ _ _ _ _ _ _ _ _ _ * * * _ _ _ _ _ _ _ _ * _ _ _ _ _ | |

| _ _ _ _ _ _ _ _ _ _ _ * * * _ _ _ _ _ _ _ _ * * _ _ _ _ | |

| _ _ _ _ _ _ _ _ _ _ _ * * * _ _ _ _ _ _ _ _ * * _ _ _ _ | |

| _ _ _ _ _ _ _ _ _ _ _ _ * * * _ _ _ _ * * * * * _ _ _ _ | |

| _ _ _ _ _ _ _ _ _ _ _ _ * * * * * * * * * _ _ _ _ _ _ _ | |

| _ _ _ _ _ _ _ _ * * * * * * * * * * _ _ _ _ _ _ _ _ _ _ | |

| _ _ _ _ _ _ _ * * * * * * _ _ * * * _ _ _ _ _ _ _ _ _ _ | |

| _ _ _ _ _ _ * * * _ _ _ _ _ _ _ * * * _ _ _ _ _ _ _ _ _ | |

| _ _ _ _ _ _ * * _ _ _ _ _ _ _ _ _ * * _ _ _ _ _ _ _ _ _ | |

| _ _ _ _ _ _ * * _ _ _ _ _ _ _ _ _ * * * _ _ _ _ _ _ _ _ | |

| _ _ _ _ _ _ * * _ _ _ _ _ _ _ _ _ * * * _ _ _ _ _ _ _ _ | |

| _ _ _ _ _ _ * * * _ _ _ _ _ _ _ _ * * * _ _ _ _ _ _ _ _ | |

| _ _ _ _ _ _ _ _ * * * _ _ _ _ _ _ * * * _ _ _ _ _ _ _ _ | |

| _ _ _ _ _ _ _ _ _ * * * * * * * * * * _ _ _ _ _ _ _ _ _ | |

| _ _ _ _ _ _ _ _ _ _ _ * * * * * * _ _ _ _ _ _ _ _ _ _ _ | |

| _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ | |

| _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ | |

| _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ | |

| _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ | |

| ggml_graph_dump_dot: dot -Tpng mnist-cnn.dot -o mnist-cnn.dot.png && open mnist-cnn.dot.png | |

| main: predicted digit is 8 | |

| ``` | |
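The `t10k-images.idx3-ubyte` file passed to the example is in the IDX format: a 16-byte header of four big-endian 32-bit integers (magic number 2051, image count, rows, columns) followed by one unsigned byte per pixel. As a rough sketch of how such a file can be parsed and drawn in the `_`/`*` style shown above, the following Python may help. The function names and the brightness threshold of 128 are assumptions made for illustration, not taken from the example's source.

```python
import struct


def parse_idx_images(data: bytes):
    """Parse an IDX3 image buffer into (rows, cols, list of per-image bytes)."""
    # Header: four big-endian unsigned 32-bit integers.
    magic, count, rows, cols = struct.unpack(">IIII", data[:16])
    if magic != 2051:  # 0x00000803: unsigned-byte data with 3 dimensions
        raise ValueError(f"unexpected IDX magic: {magic}")
    size = rows * cols
    images = [data[16 + i * size: 16 + (i + 1) * size] for i in range(count)]
    return rows, cols, images


def render_digit(pixels: bytes, rows: int, cols: int, threshold: int = 128) -> str:
    """Draw one image with '*' for bright pixels and '_' for dark ones.

    The threshold is a hypothetical choice for this sketch.
    """
    lines = []
    for r in range(rows):
        row = pixels[r * cols: (r + 1) * cols]
        lines.append(" ".join("*" if p > threshold else "_" for p in row))
    return "\n".join(lines)


if __name__ == "__main__":
    # A tiny synthetic buffer (one 2x3 image) so the sketch runs without the dataset.
    demo = struct.pack(">IIII", 2051, 1, 2, 3) + bytes([0, 255, 0, 255, 255, 0])
    rows, cols, images = parse_idx_images(demo)
    print(render_digit(images[0], rows, cols))
```

To try it on the real test set, read the file with `open(path, "rb").read()` and pass the bytes to `parse_idx_images`.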

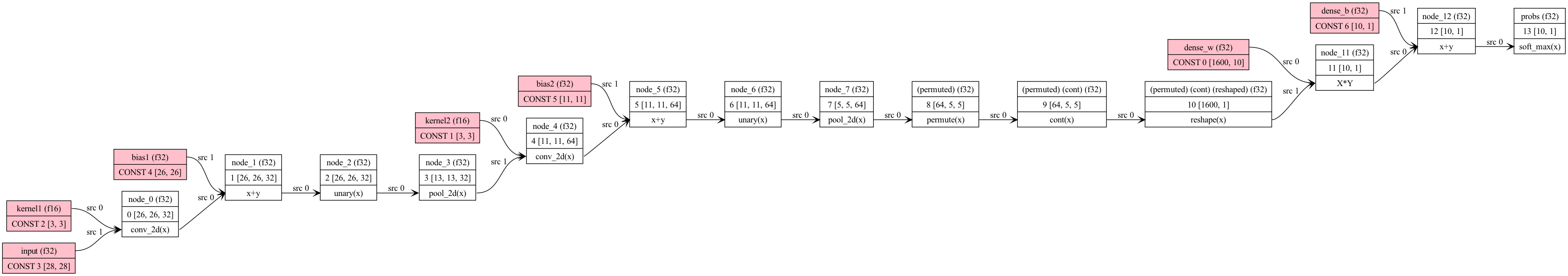

| Computation graph: | |

|  | |

| ## MNIST with fully connected network | |

| The network is a fully connected layer with ReLU activation, followed by a fully connected layer with softmax. | |
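The forward pass of such a two-layer network can be sketched in plain Python as below. This is only an illustration of the architecture described above, not the example's GGML code; the function names and the toy layer sizes are assumptions chosen to keep the sketch short (a real MNIST network takes 784 inputs and produces 10 outputs).

```python
import math


def linear(x, weights, bias):
    """Fully connected layer: one output per weight row, y[j] = w[j] . x + b[j]."""
    return [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weights, bias)]


def relu(x):
    """Clamp negative activations to zero."""
    return [max(0.0, v) for v in x]


def softmax(x):
    """Turn logits into probabilities; subtracting the max avoids overflow."""
    m = max(x)
    exps = [math.exp(v - m) for v in x]
    total = sum(exps)
    return [e / total for e in exps]


def forward(x, w1, b1, w2, b2):
    """Fully connected + ReLU, then fully connected + softmax."""
    hidden = relu(linear(x, w1, b1))
    return softmax(linear(hidden, w2, b2))


if __name__ == "__main__":
    # Toy sizes (3 inputs, 2 hidden units, 2 classes), purely illustrative.
    w1 = [[1.0, 0.0, -1.0], [0.5, 0.5, 0.5]]
    b1 = [0.0, -1.0]
    w2 = [[1.0, 0.0], [0.0, 1.0]]
    b2 = [0.0, 0.0]
    probs = forward([2.0, 1.0, 0.0], w1, b1, w2, b2)
    print("predicted class:", probs.index(max(probs)))
```

The predicted digit is the index of the largest probability, which is what the example reports as its result.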

| ### Training the model | |

| A [Google Colab notebook](https://colab.research.google.com/drive/12n_8VNJnolBnX5dVS0HNWubnOjyEaFSb?usp=sharing) trains a simple two-layer network to recognize digits. You can use it to save a PyTorch model for conversion to ggml format. | |

| The GGML "format" is whatever you choose for efficient loading. In our case, we save only the hyperparameters used plus the model weights and biases. Run `convert-h5-to-ggml.py` to convert your PyTorch model. The output format is: | |

| - magic constant (int32) | |

| - repeated list of tensors, each stored as: | |

|   - number of dimensions of tensor (int32) | |

|   - tensor dimensions (int32, repeated once per dimension) | |

|   - values of tensor (float32, as the `f32` in the output file name indicates) | |
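A minimal round-trip sketch of this layout in Python is shown below. It rests on several assumptions that are not stated above and should be checked against `convert-h5-to-ggml.py`: the magic value `0x67676d6c`, little-endian byte order, dimensions written in the order given, and tensor values stored as 32-bit floats (as the `f32` in `ggml-model-f32.bin` suggests). The function names `write_model` and `read_model` are hypothetical.

```python
import io
import math
import struct

MAGIC = 0x67676D6C  # assumed magic value ("ggml" in ASCII); verify against the script


def write_model(out, tensors):
    """Write (shape, flat_values) pairs in the layout listed above."""
    out.write(struct.pack("<i", MAGIC))
    for shape, values in tensors:
        out.write(struct.pack("<i", len(shape)))            # number of dimensions
        out.write(struct.pack(f"<{len(shape)}i", *shape))   # each dimension
        out.write(struct.pack(f"<{len(values)}f", *values)) # values, assumed float32


def read_model(inp):
    """Read the tensors back until the end of the stream."""
    (magic,) = struct.unpack("<i", inp.read(4))
    if magic != MAGIC:
        raise ValueError(f"bad magic: {magic:#x}")
    tensors = []
    while True:
        header = inp.read(4)
        if not header:  # no more tensors
            break
        (n_dims,) = struct.unpack("<i", header)
        shape = list(struct.unpack(f"<{n_dims}i", inp.read(4 * n_dims)))
        count = math.prod(shape)
        values = list(struct.unpack(f"<{count}f", inp.read(4 * count)))
        tensors.append((shape, values))
    return tensors


if __name__ == "__main__":
    buf = io.BytesIO()
    # One 2x2 weight matrix and one bias vector of length 2, as toy data.
    write_model(buf, [([2, 2], [0.5, -1.25, 2.0, 0.0]), ([2], [1.0, -1.0])])
    buf.seek(0)
    for shape, values in read_model(buf):
        print(shape, values)
```

Because the file carries no tensor names, the reader has to consume tensors in the same order the writer emitted them.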

| Run `convert-h5-to-ggml.py mnist_model.state_dict`, where `mnist_model.state_dict` is the PyTorch model saved from the Google Colab notebook. For a quick start, a saved model is included in the `examples/mnist/models/mnist` directory. | |

| ```bash | |

| mkdir -p models/mnist | |

| python3 ../examples/mnist/convert-h5-to-ggml.py ../examples/mnist/models/mnist/mnist_model.state_dict | |

| ``` | |

| ### Running the example | |

| ```bash | |

| ./bin/mnist ./models/mnist/ggml-model-f32.bin ../examples/mnist/models/mnist/t10k-images.idx3-ubyte | |

| ``` | |

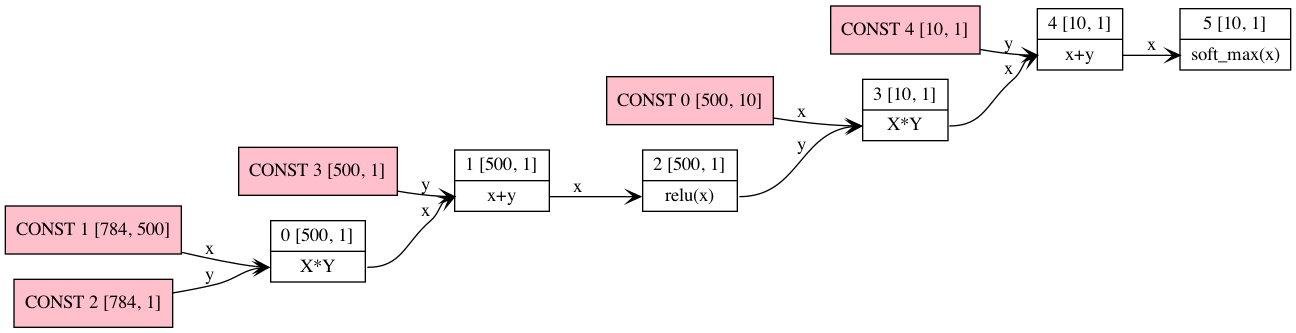

| Computation graph: | |

|  | |

| ## Web demo | |

| The example can be compiled with Emscripten like this: | |

| ```bash | |

| cd examples/mnist | |

| emcc -I../../include -I../../include/ggml -I../../examples ../../src/ggml.c main.cpp -o web/mnist.js -s EXPORTED_FUNCTIONS='["_wasm_eval","_wasm_random_digit","_malloc","_free"]' -s EXPORTED_RUNTIME_METHODS='["ccall"]' -s ALLOW_MEMORY_GROWTH=1 --preload-file models/mnist | |

| ``` | |

| Online demo: https://mnist.ggerganov.com | |