Typhoon Whisper Large v3

Typhoon Whisper Large v3 is a Thai Automatic Speech Recognition (ASR) model fine-tuned from OpenAI Whisper Large v3. Trained on diverse Thai audio data, it reports state-of-the-art accuracy on Thai speech recognition benchmarks.

- Paper: Typhoon ASR Real-time: FastConformer-Transducer for Thai Automatic Speech Recognition

- Project Page: opentyphoon.ai

- GitHub: scb-10x/typhoon-asr

The model was trained on approximately 10 million samples (~11,000 hours) of Thai audio, curated and normalized using the Typhoon data pipeline to ensure consistent handling of Thai numbers, repetition markers, and context-dependent ambiguities.

Model Overview

- Architecture: Whisper Large v3 (32 decoder layers, full model)

- Language: Thai

- Dataset: ~10M training samples of normalized Thai speech (Gigaspeech2, CommonVoice, Internal Curated Public Media)

- Task: Automatic Speech Recognition (ASR)

- License: MIT (inherited from OpenAI Whisper)

Performance

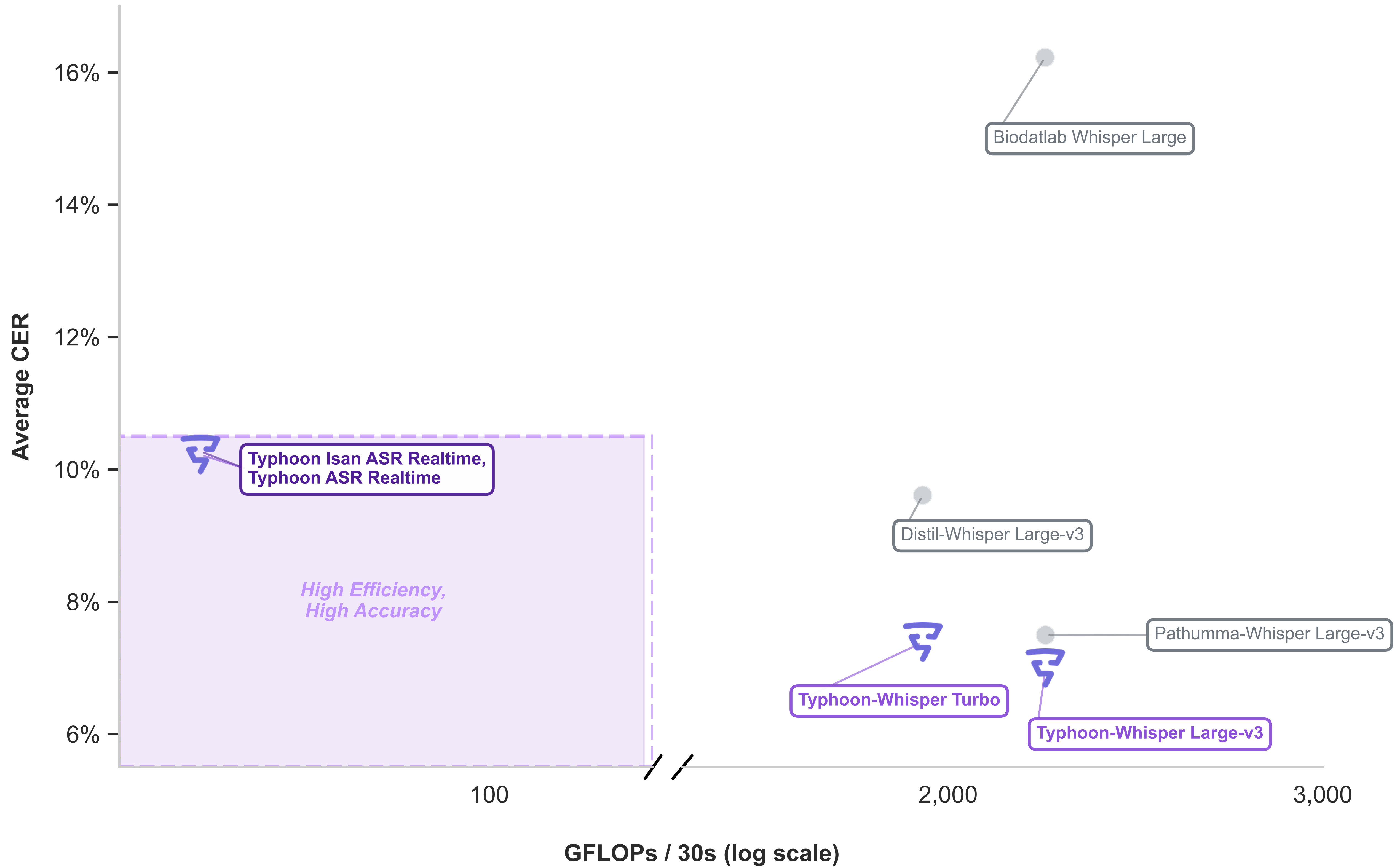

Typhoon Whisper Large v3 achieves state-of-the-art performance on Thai speech recognition benchmarks.

Note: Lower CER (Character Error Rate) is better. Results are reported on the Gigaspeech2 (clean/academic), TVSpeech (noisy/in-the-wild), and Google Fleurs (Thai) test sets.
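For readers unfamiliar with the metric, the sketch below shows one common way to compute CER: the character-level Levenshtein (edit) distance between reference and hypothesis, divided by the reference length. The function names `edit_distance` and `cer` are illustrative, and the exact text normalization used for the reported results (for example, whitespace handling) is not specified here, so treat this as an approximation of the metric rather than the evaluation script.

```python
def edit_distance(ref: str, hyp: str) -> int:
    """Levenshtein distance between two strings, counted in characters."""
    prev = list(range(len(hyp) + 1))  # distance from empty ref prefix to each hyp prefix
    for i, r in enumerate(ref, start=1):
        curr = [i]  # distance from ref[:i] to empty hyp prefix
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(
                prev[j] + 1,         # deletion (character missing from hyp)
                curr[j - 1] + 1,     # insertion (extra character in hyp)
                prev[j - 1] + cost,  # substitution or match
            ))
        prev = curr
    return prev[-1]


def cer(reference: str, hypothesis: str) -> float:
    """Character Error Rate: edit distance divided by reference length."""
    if not reference:
        raise ValueError("reference must be non-empty")
    return edit_distance(reference, hypothesis) / len(reference)


# One substituted character out of four
print(cer("abcd", "abxd"))
```

Note that Python strings are indexed by Unicode code point, so Thai vowel signs and tone marks each count as a separate character in this sketch.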

Usage

You can use this model directly with the Hugging Face transformers library.

Installation

pip install transformers torch accelerate

Example Code

import torch
from transformers import AutoModelForSpeechSeq2Seq, AutoProcessor, pipeline

# Use the first GPU with bfloat16 when CUDA is available; otherwise CPU with float32
device = "cuda:0" if torch.cuda.is_available() else "cpu"
torch_dtype = torch.bfloat16 if torch.cuda.is_available() else torch.float32

model_id = "typhoon-ai/typhoon-whisper-large-v3"

# Load the model weights and move them to the selected device
model = AutoModelForSpeechSeq2Seq.from_pretrained(
    model_id, torch_dtype=torch_dtype, low_cpu_mem_usage=True, use_safetensors=True
)
model.to(device)

# The processor bundles the tokenizer and the audio feature extractor
processor = AutoProcessor.from_pretrained(model_id)

# Long audio is split into 30-second chunks and decoded in batches
pipe = pipeline(
    "automatic-speech-recognition",
    model=model,
    tokenizer=processor.tokenizer,
    feature_extractor=processor.feature_extractor,
    max_new_tokens=448,
    chunk_length_s=30,
    batch_size=16,
    return_timestamps=True,
    torch_dtype=torch_dtype,
    device=device,
)

# Transcribe
result = pipe("path_to_audio.wav", generate_kwargs={"language": "thai"})
print(result["text"])
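Because the pipeline above is created with `return_timestamps=True`, the result also carries a `chunks` list in which each entry has a `timestamp` pair and a `text` field. The sketch below formats those segments as readable lines. The helper names `format_timestamp` and `format_chunks` are illustrative, and `example_result` is a hand-written stand-in for real pipeline output, not an actual transcription.

```python
def format_timestamp(seconds):
    """Format seconds as HH:MM:SS.mmm; None (open-ended segment) becomes a placeholder."""
    if seconds is None:
        return "--:--:--.---"
    total_ms = round(seconds * 1000)
    hours, rem = divmod(total_ms, 3_600_000)
    minutes, rem = divmod(rem, 60_000)
    secs, ms = divmod(rem, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d}.{ms:03d}"


def format_chunks(result):
    """Turn the 'chunks' of an ASR pipeline result into one line per segment."""
    lines = []
    for chunk in result.get("chunks", []):
        start, end = chunk["timestamp"]
        text = chunk["text"].strip()
        lines.append(f"[{format_timestamp(start)} -> {format_timestamp(end)}] {text}")
    return lines


# Hand-written stand-in for pipeline output, for illustration only
example_result = {
    "text": " สวัสดีครับ ยินดีต้อนรับ",
    "chunks": [
        {"timestamp": (0.0, 1.5), "text": " สวัสดีครับ"},
        {"timestamp": (1.5, None), "text": " ยินดีต้อนรับ"},
    ],
}

for line in format_chunks(example_result):
    print(line)
```

The end time of the final segment can be `None` when the audio is cut off mid-segment, which is why the formatter handles that case explicitly.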

Training Data

The model was trained on approximately 10 million training samples (~11,000 hours) of Thai audio data, including:

- Gigaspeech2: Clean, academic-style speech

- CommonVoice: Crowd-sourced diverse speech samples

- Internal Curated Public Media: Proprietary datasets curated by Typhoon Team, SCB 10X

All data was normalized using the Typhoon data pipeline to ensure:

- Consistent handling of Thai numbers

- Proper treatment of repetition markers

- Resolution of context-dependent ambiguities

Model Architecture

Typhoon Whisper Large v3 is based on OpenAI Whisper Large v3, the full-scale model featuring:

- Full architecture: 32 decoder layers for maximum representational capacity

- State-of-the-art accuracy: Optimized for best possible transcription quality

- Robust performance: Handles diverse acoustic conditions and speaking styles

This is the flagship model in the Typhoon ASR family, prioritizing accuracy over inference speed.

Limitations

- The model is optimized specifically for Thai language speech recognition

- Performance may vary on dialects or accents not well-represented in the training data

- Requires more computational resources than lighter variants (e.g., Typhoon Whisper Turbo)

- Best suited for offline transcription where accuracy is prioritized over latency
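To give a rough sense of the resource requirement mentioned above, the sketch below estimates the memory needed for the model weights alone at different precisions. It assumes the roughly 1.55 billion parameters that OpenAI publishes for the Whisper large models and that fine-tuning left the architecture unchanged. The figures exclude activations, decoding caches, and batch buffers, so actual usage will be higher.

```python
# Approximate parameter count published for Whisper large models (an assumption
# for this fine-tuned checkpoint, which keeps the same architecture)
PARAMS_LARGE_V3 = 1.55e9

# Bytes used to store one parameter at each precision
BYTES_PER_PARAM = {"float32": 4, "bfloat16": 2, "float16": 2}


def weight_memory_gib(num_params, dtype):
    """Estimate memory for the weights only, in GiB."""
    return num_params * BYTES_PER_PARAM[dtype] / 1024**3


for dtype in ("float32", "bfloat16"):
    print(f"{dtype}: ~{weight_memory_gib(PARAMS_LARGE_V3, dtype):.1f} GiB")
```

Under these assumptions, loading in `bfloat16` on GPU, as the usage example does, halves the weight footprint relative to `float32`.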

License

This model is released under the MIT License, inherited from OpenAI Whisper.

Citation

If you use this model in your research or application, please cite our technical report:

@misc{warit2026typhoonasr,
  title={Typhoon ASR Real-time: FastConformer-Transducer for Thai Automatic Speech Recognition},
  author={Warit Sirichotedumrong and Adisai Na-Thalang and Potsawee Manakul and Pittawat Taveekitworachai and Sittipong Sripaisarnmongkol and Kunat Pipatanakul},
  year={2026},
  eprint={2601.13044},
  archivePrefix={arXiv},
  primaryClass={cs.CL},
  url={https://arxiv.org/abs/2601.13044},
}

Contact

For questions or feedback, please visit our website or open an issue on the model's repository.

Developed by: Typhoon Team, SCB 10X

Model Card Version: 1.0

Last Updated: January 2026
