File size: 8,932 Bytes

71aeaf6 ff5ff92 71aeaf6 ff5ff92 71aeaf6 ff5ff92 71aeaf6 ff5ff92 71aeaf6 276ee4f 71aeaf6 276ee4f 71aeaf6 dfb291f 71aeaf6 3a096c5 4918051 3a096c5 71aeaf6 |

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 73 74 75 76 77 78 79 80 81 82 83 84 85 86 87 88 89 90 91 92 93 94 95 96 97 98 99 100 101 102 103 104 105 106 107 108 109 110 111 112 113 114 115 116 117 118 119 120 121 122 123 124 125 126 127 128 129 130 131 132 133 134 135 136 137 138 139 140 141 142 143 144 145 146 147 148 149 150 151 152 153 154 155 156 157 158 159 160 161 162 163 164 165 166 167 168 169 170 171 172 173 174 175 176 177 178 179 180 181 182 183 184 185 186 187 188 189 190 191 192 193 194 195 196 197 198 199 200 201 202 203 |

---

license: apache-2.0

---

<h2 align="center" style="line-height: 25px;">

FocusDiff: Advancing Fine-Grained Text-Image Alignment for Autoregressive Visual Generation through RL

</h2>

<p align="center">

<a href="https://arxiv.org/abs/2506.05501" style="display: inline-block; margin: 0 5px;">

<img src="https://img.shields.io/badge/Paper-red?style=flat&logo=arxiv" style="height: 15px;">

</a>

<a href="https://focusdiff.github.io/" style="display: inline-block; margin: 0 5px;">

<img src="https://img.shields.io/badge/Project Page-white?style=flat&logo=google-docs" style="height: 15px;">

</a>

<a href="https://github.com/wendell0218/FocusDiff" style="display: inline-block; margin: 0 5px;">

<img src="https://img.shields.io/badge/Code-black?style=flat&logo=github" style="height: 15px;">

</a>

<a href="https://huggingface.co/wendell0218/Janus-FocusDiff-7B" style="display: inline-block; margin: 0 5px;">

<img src="https://img.shields.io/badge/-%F0%9F%A4%97%20Checkpoint-orange?style=flat" style="height: 15px;"/>

</a>

</p>

<div align="center">

<span style="font-size: smaller;">

Kaihang Pan<sup>1*</sup>, Wendong Bu<sup>1*</sup>, Yuruo Wu<sup>1*</sup>, Yang Wu<sup>2</sup>, Kai Shen<sup>1</sup>, Yunfei Li<sup>2</sup>,

<br>Hang Zhao<sup>2</sup>, Juncheng Li<sup>1†</sup>, Siliang Tang<sup>1</sup>, Yueting Zhuang<sup>1</sup>

<br><sup>1</sup>Zhejiang University, <sup>2</sup>Ant Group

<br>*Equal Contribution, <sup>†</sup>Corresponding Authors

</span>

</div>

## 🚀 Overview

**FocusDiff** is a new method for improving fine-grained text-image alignment in autoregressive text-to-image models. By introducing the **FocusDiff-Data** dataset and a novel **Pair-GRPO** reinforcement learning framework, we help models learn subtle semantic differences between similar text-image pairs. Based on paired data in FocusDiff-Data, we further introduce the **PairComp** Benchmark, which focuses on subtle semantic differences.

Key Contributions:

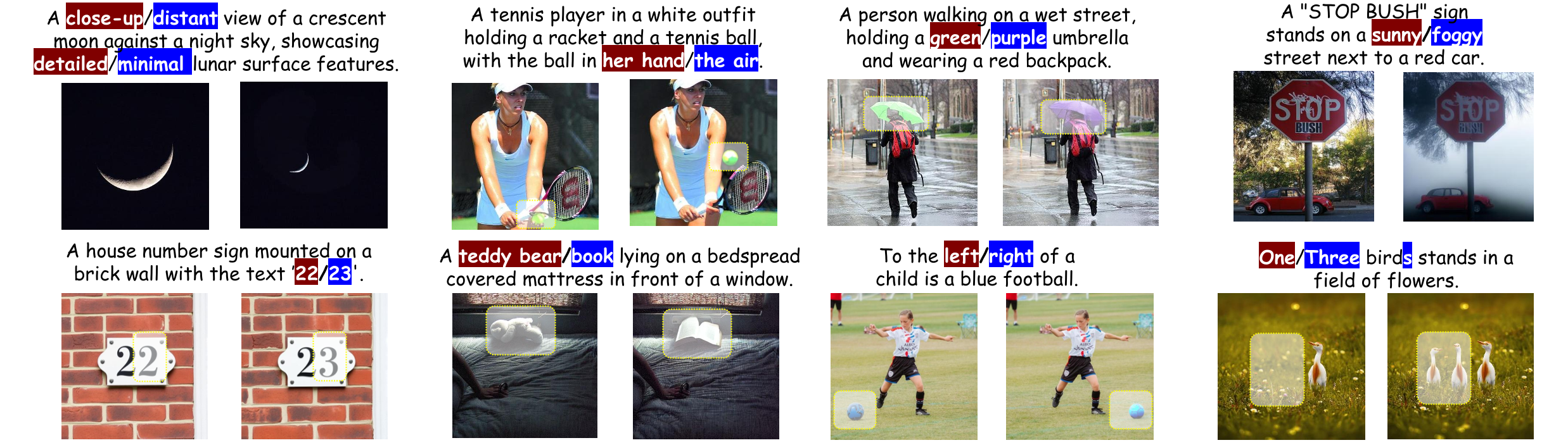

1. **PairComp Benchmark**: A new benchmark focusing on fine-grained differences in text prompts.

<img src="https://raw.githubusercontent.com/wendell0218/FocusDiff/refs/heads/main/assets/benchmark.png" width="100%"/>

2. **FocusDiff Approach**: A method using paired data and reinforcement learning to enhance fine-grained text-image alignment.

<div style="text-align: center;">

<img src="https://raw.githubusercontent.com/wendell0218/FocusDiff/refs/heads/main/assets/grpo.png" width="100%" />

</div>

3. **SOTA Results**: Our model is evaluated with the top performance on multiple benchmarks including **GenEval**, **T2I-CompBench**, **DPG-Bench**, and our newly proposed **PairComp** benchmark.

## ✨️ Quickstart

**1. Prepare Environment**

We recommend using Python 3.10 and setting up a virtual environment:

```bash

# clone our repo

git clone https://github.com/wendell0218/FocusDiff.git

cd FocusDiff

# prepare python environment

conda create -n focus-diff python=3.10

conda activate focus-diff

pip install -r requirements.txt

```

**2. Prepare Pretrained Model**

FocusDiff utilizes `Janus-Pro-7B` as the pretrained model for subsequent supervised fine-tuning. You can download the corresponding model using the following command:

```bash

GIT_LFS_SKIP_SMUDGE=1 git clone https://huggingface.co/deepseek-ai/Janus-Pro-7B

cd Janus-Pro-7B

git lfs pull

```

**3. Start Generating!**

```python

import os

import torch

import PIL.Image

import numpy as np

from transformers import AutoModelForCausalLM

from janus.models import MultiModalityCausalLM, VLChatProcessor

@torch.inference_mode()

def generate(

mmgpt: MultiModalityCausalLM,

vl_chat_processor: VLChatProcessor,

prompt: str,

temperature: float = 1.0,

parallel_size: int = 4,

cfg_weight: float = 5.0,

image_token_num_per_image: int = 576,

img_size: int = 384,

patch_size: int = 16,

img_top_k: int = 1,

img_top_p: float = 1.0,

):

images = []

input_ids = vl_chat_processor.tokenizer.encode(prompt)

input_ids = torch.LongTensor(input_ids)

tokens = torch.zeros((parallel_size*2, len(input_ids)), dtype=torch.int).cuda()

for i in range(parallel_size*2):

tokens[i, :] = input_ids

if i % 2 != 0:

tokens[i, 1:-1] = vl_chat_processor.pad_id

inputs_embeds = mmgpt.language_model.get_input_embeddings()(tokens)

generated_tokens = torch.zeros((parallel_size, image_token_num_per_image), dtype=torch.int).cuda()

for i in range(image_token_num_per_image):

outputs = mmgpt.language_model.model(inputs_embeds=inputs_embeds, use_cache=True, past_key_values=outputs.past_key_values if i != 0 else None)

hidden_states = outputs.last_hidden_state

logits = mmgpt.gen_head(hidden_states[:, -1, :])

logit_cond = logits[0::2, :]

logit_uncond = logits[1::2, :]

logits = logit_uncond + cfg_weight * (logit_cond-logit_uncond)

if img_top_k:

v, _ = torch.topk(logits, min(img_top_k, logits.size(-1)))

logits[logits < v[:, [-1]]] = float("-inf")

probs = torch.softmax(logits / temperature, dim=-1)

if img_top_p:

probs_sort, probs_idx = torch.sort(probs,

dim=-1,

descending=True)

probs_sum = torch.cumsum(probs_sort, dim=-1)

mask = probs_sum - probs_sort > img_top_p

probs_sort[mask] = 0.0

probs_sort.div_(probs_sort.sum(dim=-1, keepdim=True))

next_token = torch.multinomial(probs_sort, num_samples=1)

next_token = torch.gather(probs_idx, -1, next_token)

else:

next_token = torch.multinomial(probs, num_samples=1)

generated_tokens[:, i] = next_token.squeeze(dim=-1)

next_token = torch.cat([next_token.unsqueeze(dim=1), next_token.unsqueeze(dim=1)], dim=1).view(-1)

img_embeds = mmgpt.prepare_gen_img_embeds(next_token)

inputs_embeds = img_embeds.unsqueeze(dim=1)

dec = mmgpt.gen_vision_model.decode_code(generated_tokens.to(dtype=torch.int), shape=[parallel_size, 8, img_size//patch_size, img_size//patch_size])

dec = dec.to(torch.float32).cpu().numpy().transpose(0, 2, 3, 1)

dec = np.clip((dec + 1) / 2 * 255, 0, 255)

visual_img = np.zeros((parallel_size, img_size, img_size, 3), dtype=np.uint8)

visual_img[:, :, :] = dec

for i in range(parallel_size):

images.append(PIL.Image.fromarray(visual_img[i]))

return images

if __name__ == "__main__":

import argparse

parser = argparse.ArgumentParser()

parser.add_argument("--model_path", type=str, default="deepseek-ai/Janus-Pro-7B")

parser.add_argument("--ckpt_path", type=str, default=None)

parser.add_argument("--caption", type=str, default="a brown giraffe and a white stop sign")

parser.add_argument("--gen_path", type=str, default='results/samples')

parser.add_argument("--cfg", type=float, default=5.0)

parser.add_argument("--parallel_size", type=int, default=4)

args = parser.parse_args()

vl_chat_processor: VLChatProcessor = VLChatProcessor.from_pretrained(args.model_path)

vl_gpt: MultiModalityCausalLM = AutoModelForCausalLM.from_pretrained(args.model_path, trust_remote_code=True)

if args.ckpt_path is not None:

state_dict = torch.load(f"{args.ckpt_path}", map_location="cpu")

vl_gpt.load_state_dict(state_dict)

vl_gpt = vl_gpt.to(torch.bfloat16).cuda().eval()

prompt = f'<|User|>: {args.caption}\n\n<|Assistant|>:<begin_of_image>'

images = generate(

vl_gpt,

vl_chat_processor,

prompt,

parallel_size = args.parallel_size,

cfg_weight = args.cfg,

)

if not os.path.exists(args.gen_path):

os.makedirs(args.gen_path, exist_ok=True)

for i in range(args.parallel_size):

img_name = str(i).zfill(4)+".png"

save_path = os.path.join(args.gen_path, img_name)

images[i].save(save_path)

```

## 🤝 Acknowledgment

Our project is developed based on the following repositories:

- [Janus-Series](https://github.com/deepseek-ai/Janus): Unified Multimodal Understanding and Generation Models

- [Open-R1](https://github.com/huggingface/open-r1): Fully open reproduction of DeepSeek-R1

## 📜 Citation

If you find this work useful for your research, please cite our paper and star our git repo:

```bibtex

@article{pan2025focusdiff,

title={FocusDiff: Advancing Fine-Grained Text-Image Alignment for Autoregressive Visual Generation through RL},

author={Pan, Kaihang and Bu, Wendong and Wu, Yuruo and Wu, Yang and Shen, Kai and Li, Yunfei and Zhao, Hang and Li, Juncheng and Tang, Siliang and Zhuang, Yueting},

journal={arXiv preprint arXiv:2506.05501},

year={2025}

}

``` |