DeltaDorsal

Model repository for the paper "DeltaDorsal: Enhancing Hand Pose Estimation with Dorsal Features in Egocentric Views", published at ACM CHI 2026.

[GitHub] [DOI (UNDER CONSTRUCTION)] [arXiv]

Overview

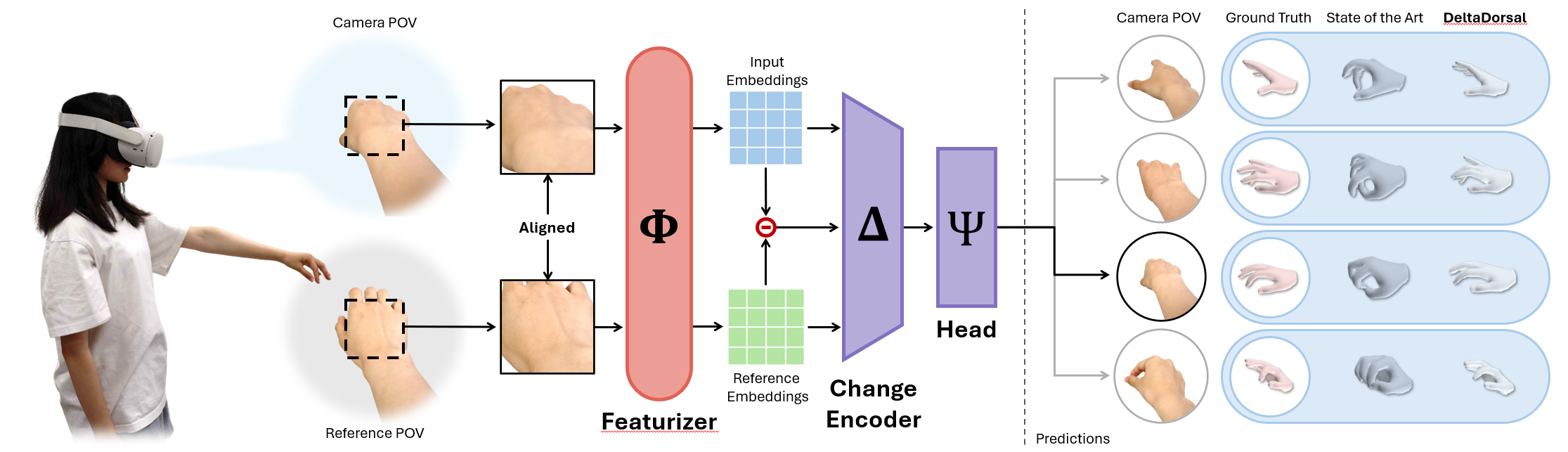

DeltaDorsal is a 3D hand pose estimation model using dorsal features of the hand. DeltaDorsal uses a delta encoding approach where features from a base (neutral) hand image are compared with features from the current hand image to predict 3D hand pose and force. Our repo consists of the following components:

- DeltaDorsalNet: A backbone network using DINOv3 features with our delta encoding

  - DINOv3 Backbone: Extracts features from input images

  - Change Encoder: Computes delta features between current and base images

  - Residual Pose Head: Predicts pose parameter residuals from prior

  - Force Head: Classifies finger activation and force levels

- Pose Estimation: Predicts 3D hand pose parameters

- Force Estimation: Classifies finger activation and force levels
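The delta encoding and residual prediction described above can be sketched in a few lines. This is a toy illustration, not the repository's implementation: plain Python lists stand in for feature tensors, the comparison is assumed to be an element-wise difference (the README does not say how features are compared), and the function names and weights are hypothetical.

```python
# Toy illustration only: lists stand in for feature tensors, and the "head"
# is a fixed linear map. None of these names come from the repository.

def delta_features(current, base):
    """Element-wise difference between current and base (neutral) features."""
    if len(current) != len(base):
        raise ValueError("feature vectors must have the same length")
    return [c - b for c, b in zip(current, base)]


def residual_pose(prior, delta, weights):
    """Add a predicted residual to a prior pose.

    weights[i][j] maps delta feature j to pose parameter i (hypothetical head).
    """
    residual = [sum(w * d for w, d in zip(row, delta)) for row in weights]
    return [p + r for p, r in zip(prior, residual)]


base = [0.25, 0.5, 1.0]      # features of the neutral hand image
current = [0.75, 0.5, 0.5]   # features of the current hand image
delta = delta_features(current, base)

prior = [0.0, 1.0]           # prior pose parameters
weights = [[1.0, 0.0, 0.0],  # hypothetical learned weights
           [0.0, 0.0, 2.0]]
pose = residual_pose(prior, delta, weights)
print(delta, pose)
```

The point of the residual formulation is that the head only has to predict a correction to the prior, driven by what changed relative to the neutral image.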

Installation

Prerequisites

- Python >= 3.10

- uv or any other package manager of your choice

Setup

- Clone the repository:

git clone --recursive [REPO_URL]

cd wrinklesense

- Create a virtual environment and install PyTorch:

uv venv

uv pip install torch --torch-backend=auto

- Install all dependencies:

uv sync

- Download DINOv3

Follow all of the installation instructions provided by the DINOv3 project.

Download all model weights and place them into _DATA/dinov3.

- Download MANO

Our repo requires the MANO hand model. Access must be requested from the MANO project.

Only MANO_RIGHT.pkl is needed; place it under the _DATA/mano folder.

- (Optional) Set up Weights & Biases for logging:

wandb login
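Before training, it may help to confirm that the downloaded assets are where the setup steps expect them. The following is a minimal sketch, assuming it is run from the repository root. The function name `missing_assets` is hypothetical, and because the README does not name the DINOv3 weight files, the check only verifies that _DATA/dinov3 is non-empty.

```python
from pathlib import Path


def missing_assets(root="_DATA"):
    """Return a list of human-readable problems with the asset layout."""
    root = Path(root)
    problems = []

    dinov3_dir = root / "dinov3"
    # The weight file names are not documented, so only check non-emptiness.
    if not dinov3_dir.is_dir() or not any(dinov3_dir.iterdir()):
        problems.append(f"no DINOv3 weights found in {dinov3_dir}")

    mano_file = root / "mano" / "MANO_RIGHT.pkl"
    if not mano_file.is_file():
        problems.append(f"missing {mano_file}")

    return problems


if __name__ == "__main__":
    for problem in missing_assets():
        print("WARNING:", problem)
```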

Training

DeltaDorsal uses Hydra for configuration management. Config files are located in configs/.

Modifying Configs

You can override any config parameter via command line:

python src/train_pose.py model.optimizer.lr=0.0001 data.batch_size=32 max_epochs=100

Or create a new config file in configs/experiments/ and reference it:

python src/train_pose.py experiment=my_experiment
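To make the dotted override syntax concrete, the sketch below shows how keys such as model.optimizer.lr address nested config entries. It is not Hydra's parser (Hydra has its own override grammar), and the default values in `config` are made up for illustration.

```python
import ast


def parse_value(text):
    """Best-effort conversion of an override value to a Python object."""
    try:
        return ast.literal_eval(text)
    except (ValueError, SyntaxError):
        return text  # anything that is not a Python literal stays a string


def apply_overrides(config, overrides):
    """Apply 'a.b.c=value' overrides to a nested dict in place."""
    for item in overrides:
        key, _, value = item.partition("=")
        *parents, leaf = key.split(".")
        node = config
        for name in parents:
            node = node.setdefault(name, {})
        node[leaf] = parse_value(value)
    return config


# Hypothetical defaults; the real values live in the repo's config files.
config = {
    "model": {"optimizer": {"lr": 0.001}},
    "data": {"batch_size": 16},
    "max_epochs": 10,
}
apply_overrides(
    config,
    ["model.optimizer.lr=0.0001", "data.batch_size=32", "max_epochs=100"],
)
print(config)
```

Each dot descends one level into the config tree, so an override changes a single leaf and leaves sibling values untouched.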

Pose Model Training

Train the pose estimation model:

python src/train_pose.py

Common Configuration Overrides

Override config parameters via command line:

# Change number of epochs

python src/train_pose.py max_epochs=50

# Change batch size

python src/train_pose.py data.batch_size=32

# Change learning rate

python src/train_pose.py model.optimizer.lr=1e-4

# Resume from checkpoint

python src/train_pose.py ckpt_path=path/to/checkpoint.ckpt

# Train only (skip testing)

python src/train_pose.py test=False

# Test only (skip training)

python src/train_pose.py train=False test=True ckpt_path=path/to/checkpoint.ckpt

Training with Different Data Splits

# Use leave-one-out cross-validation (quote list values so the shell does not treat [ ] as a glob)

python src/train_pose.py data.leave_one_out=True 'data.val_participants=[1]' 'data.test_participants=[3]'

# Train on specific participants

python src/train_pose.py 'data.all_participant_ids=[1,2,3,4,5]'
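The participant-based split above can be pictured as a simple partition of IDs. This is a sketch under the assumption that participants not listed for validation or testing are used for training. How the repository's data module actually interprets these options is not stated here, and `split_participants` is a hypothetical helper.

```python
def split_participants(all_ids, val_ids, test_ids):
    """Partition participant IDs into train/val/test with no overlap."""
    val, test = set(val_ids), set(test_ids)
    if val & test:
        raise ValueError("validation and test participants overlap")
    unknown = (val | test) - set(all_ids)
    if unknown:
        raise ValueError(f"unknown participant IDs: {sorted(unknown)}")
    # Everyone not held out is assumed to be a training participant.
    train = [pid for pid in all_ids if pid not in val and pid not in test]
    return {"train": train, "val": sorted(val), "test": sorted(test)}


split = split_participants([1, 2, 3, 4, 5], val_ids=[1], test_ids=[3])
print(split)
```

Keeping whole participants out of the training set tests generalization to unseen hands rather than to unseen frames of a hand the model has already seen.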

Force Model Training

Train the force estimation model:

python src/train_force.py

Common Configuration Overrides

# Change number of epochs

python src/train_force.py max_epochs=50

# Change batch size

python src/train_force.py data.batch_size=64

# Resume from checkpoint

python src/train_force.py ckpt_path=path/to/checkpoint.ckpt

# Train only

python src/train_force.py test=False

# Test only

python src/train_force.py train=False test=True ckpt_path=path/to/checkpoint.ckpt

Inference and Testing

Running Test Set Evaluation

After training, evaluate on the test set:

# Pose model evaluation

python src/train_pose.py train=False test=True ckpt_path=path/to/checkpoint.ckpt

# Force model evaluation

python src/train_force.py train=False test=True ckpt_path=path/to/checkpoint.ckpt
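When evaluating several checkpoints, the two commands above can be assembled programmatically. The sketch below only builds and prints the argument lists. The helper name `eval_command` and the checkpoint paths are placeholders, not part of the repository.

```python
import shlex
import sys


def eval_command(script, ckpt_path, extra_overrides=()):
    """Build the argv for a test-only run of one of the training scripts."""
    return [
        sys.executable,
        script,
        "train=False",
        "test=True",
        f"ckpt_path={ckpt_path}",
        *extra_overrides,
    ]


commands = [
    eval_command("src/train_pose.py", "path/to/pose.ckpt"),    # placeholder path
    eval_command("src/train_force.py", "path/to/force.ckpt"),  # placeholder path
]
for argv in commands:
    print(shlex.join(argv))
    # To actually launch the run: subprocess.run(argv, check=True)
```

Passing an argument list to `subprocess.run` bypasses the shell, so overrides containing brackets need no extra quoting.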

Citing

If you use or extend our work, please cite the following papers:

@misc{huangDeltaDorsalEnhancingHand2026,

title = {{{DeltaDorsal}}: {{Enhancing Hand Pose Estimation}} with {{Dorsal Features}} in {{Egocentric Views}}},

shorttitle = {{{DeltaDorsal}}},

author = {Huang, William and Pei, Siyou and Zou, Leyi and Gonzalez, Eric J. and Chatterjee, Ishan and Zhang, Yang},

year = 2026,

month = jan,

number = {arXiv:2601.15516},

eprint = {2601.15516},

primaryclass = {cs},

publisher = {arXiv},

doi = {10.48550/arXiv.2601.15516},

urldate = {2026-02-08},

archiveprefix = {arXiv}

}

@article{MANO:SIGGRAPHASIA:2017,

title = {Embodied Hands: Modeling and Capturing Hands and Bodies Together},

author = {Romero, Javier and Tzionas, Dimitrios and Black, Michael J.},

journal = {ACM Transactions on Graphics, (Proc. SIGGRAPH Asia)},

publisher = {ACM},

month = nov,

year = {2017},

url = {http://doi.acm.org/10.1145/3130800.3130883},

month_numeric = {11}

}

@misc{simeoniDINOv32025,

title = {{{DINOv3}}},

author = {Sim{\'e}oni, Oriane and Vo, Huy V. and Seitzer, Maximilian and Baldassarre, Federico and Oquab, Maxime and Jose, Cijo and Khalidov, Vasil and Szafraniec, Marc and Yi, Seungeun and Ramamonjisoa, Micha{\"e}l and Massa, Francisco and Haziza, Daniel and Wehrstedt, Luca and Wang, Jianyuan and Darcet, Timoth{\'e}e and Moutakanni, Th{\'e}o and Sentana, Leonel and Roberts, Claire and Vedaldi, Andrea and Tolan, Jamie and Brandt, John and Couprie, Camille and Mairal, Julien and J{\'e}gou, Herv{\'e} and Labatut, Patrick and Bojanowski, Piotr},

year = 2025,

month = aug,

number = {arXiv:2508.10104},

eprint = {2508.10104},

primaryclass = {cs},

publisher = {arXiv},

doi = {10.48550/arXiv.2508.10104},

urldate = {2025-08-25},

archiveprefix = {arXiv}

}