Moroccan Arabic — Full Ablation Study & Research Report

Detailed evaluation of all model variants trained on Moroccan Arabic Wikipedia data by Wikilangs.

📋 Repository Contents

Models & Assets

- Tokenizers (8k, 16k, 32k, 64k)

- N-gram models (2, 3, 4, 5-gram)

- Markov chains (context of 1, 2, 3, 4 and 5)

- Subword N-gram and Markov chains

- Embeddings in various sizes and dimensions (aligned and unaligned)

- Language Vocabulary

- Language Statistics

Analysis and Evaluation

- 1. Tokenizer Evaluation

- 2. N-gram Model Evaluation

- 3. Markov Chain Evaluation

- 4. Vocabulary Analysis

- 5. Word Embeddings Evaluation

- 6. Morphological Analysis (Experimental)

- 7. Summary & Recommendations

- Metrics Glossary

- Visualizations Index

1. Tokenizer Evaluation

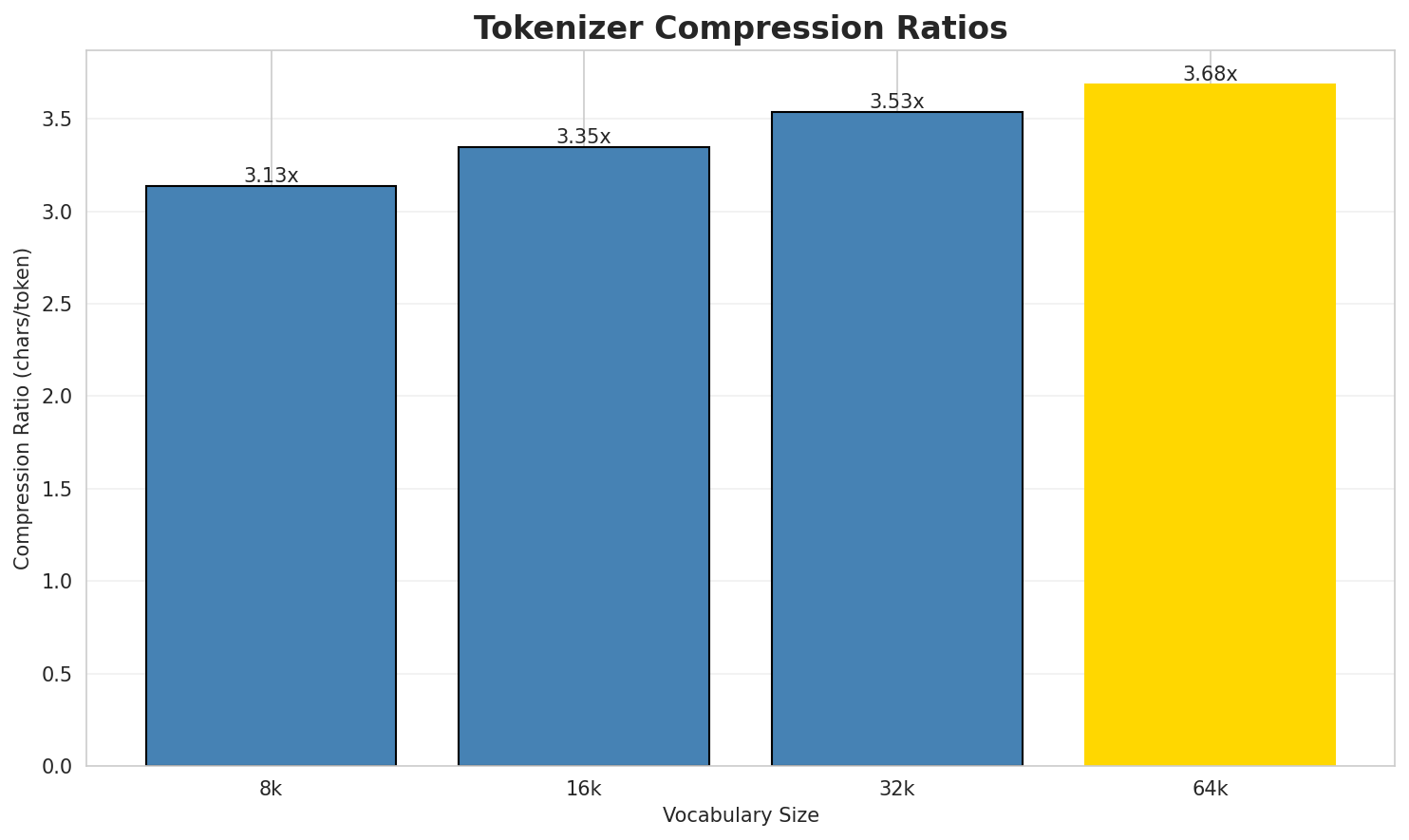

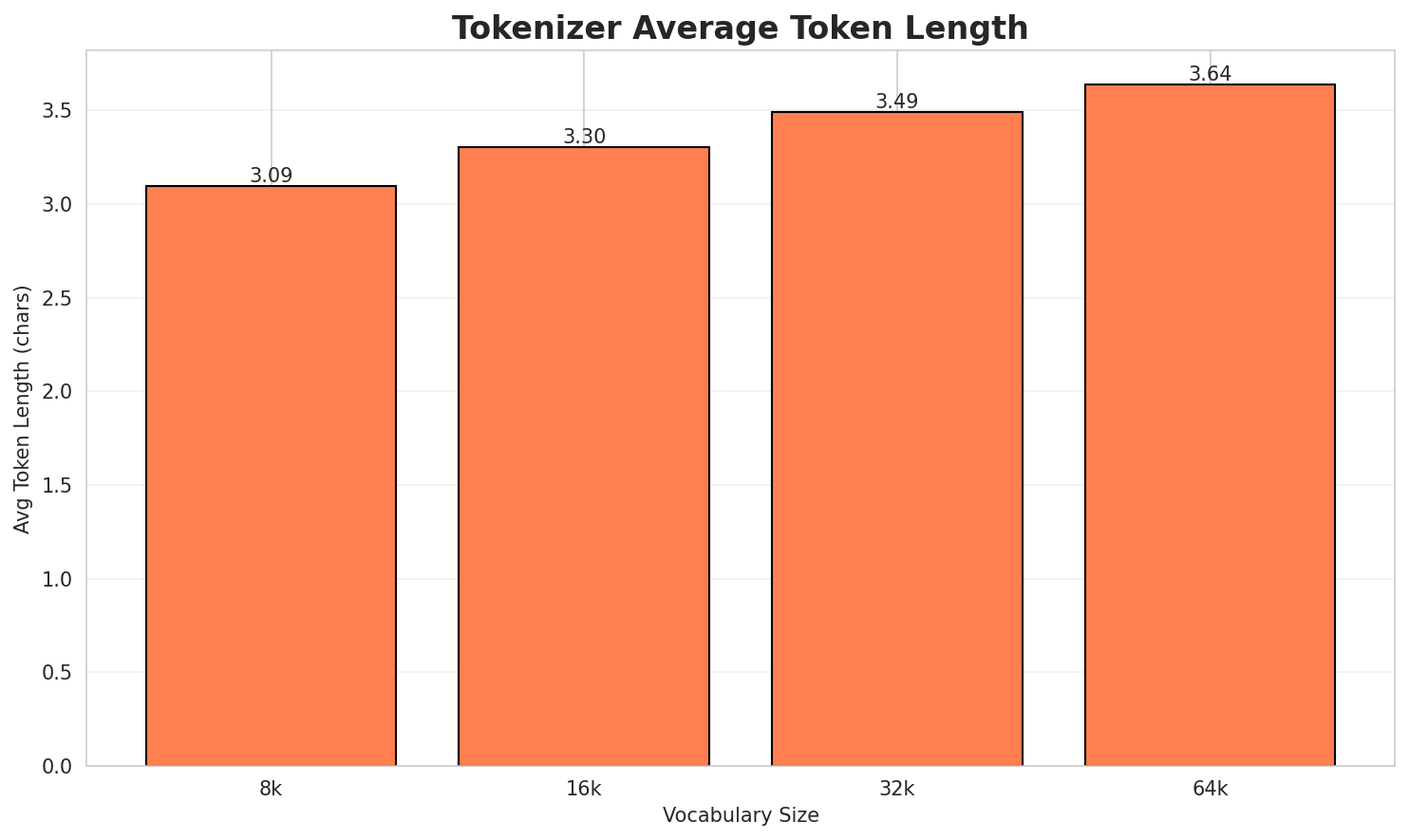

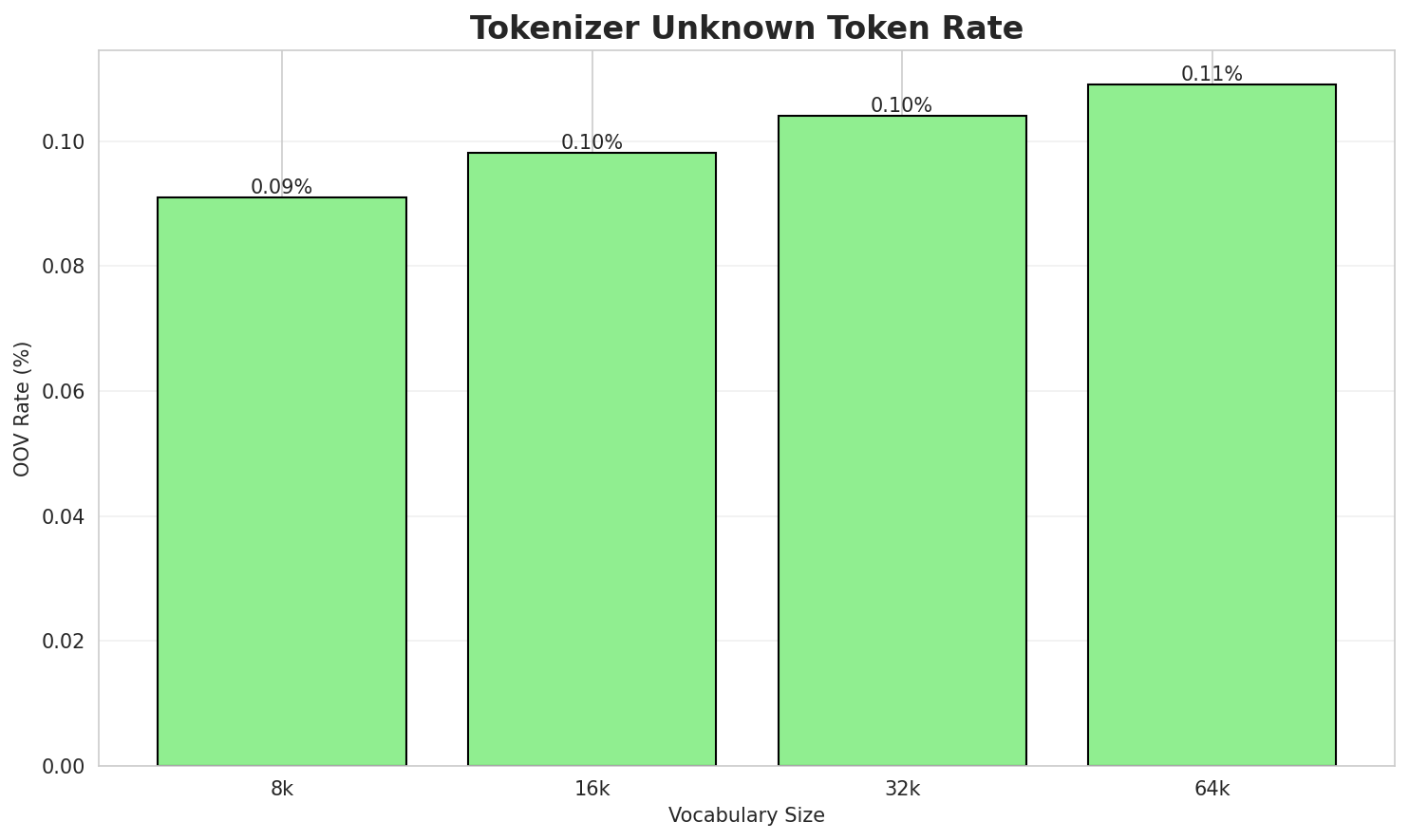

Results

| Vocab Size | Compression | Avg Token Len | UNK Rate | Total Tokens |

|---|---|---|---|---|

| 8k | 3.481x | 3.48 | 0.0910% | 300,053 |

| 16k | 3.755x | 3.76 | 0.0982% | 278,145 |

| 32k | 3.985x | 3.99 | 0.1041% | 262,127 |

| 64k | 4.172x 🏆 | 4.18 | 0.1090% | 250,361 |

Tokenization Examples

Below are sample sentences tokenized with each vocabulary size:

Sample 1: قريش هيا قبيلة ؤلا أجموع قبلي لي، علا حساب لمصادر لإسلامية، كانت ف مكة ؤ كاينتام...

| Vocab | Tokens | Count |
|---|---|---|
| 8k | ▁ق ريش ▁هيا ▁قبيلة ▁ؤلا ▁أج موع ▁ق بلي ▁لي ... (+19 more) | 29 |
| 16k | ▁قريش ▁هيا ▁قبيلة ▁ؤلا ▁أج موع ▁ق بلي ▁لي ، ... (+16 more) | 26 |
| 32k | ▁قريش ▁هيا ▁قبيلة ▁ؤلا ▁أجموع ▁ق بلي ▁لي ، ▁علا ... (+15 more) | 25 |
| 64k | ▁قريش ▁هيا ▁قبيلة ▁ؤلا ▁أجموع ▁قبلي ▁لي ، ▁علا ▁حساب ... (+14 more) | 24 |

Sample 2: آيت ميلك جماعة ترابية قروية كاينة في إقليم اشتوكة آيت باها، جهة سوس ماسة، ساكنين...

| Vocab | Tokens | Count |
|---|---|---|
| 8k | ▁آيت ▁ميل ك ▁جماعة ▁ترابية ▁قروية ▁كاينة ▁في ▁إقليم ▁اشتوكة ... (+16 more) | 26 |
| 16k | ▁آيت ▁ميل ك ▁جماعة ▁ترابية ▁قروية ▁كاينة ▁في ▁إقليم ▁اشتوكة ... (+16 more) | 26 |
| 32k | ▁آيت ▁ميل ك ▁جماعة ▁ترابية ▁قروية ▁كاينة ▁في ▁إقليم ▁اشتوكة ... (+16 more) | 26 |
| 64k | ▁آيت ▁ميلك ▁جماعة ▁ترابية ▁قروية ▁كاينة ▁في ▁إقليم ▁اشتوكة ▁آيت ... (+15 more) | 25 |

Sample 3: خديجة بنت علي بن أبي طالب، هي بنت علي بن أبي طالب. مصادر د نسا

| Vocab | Tokens | Count |
|---|---|---|
| 8k | ▁خديجة ▁بنت ▁علي ▁بن ▁أبي ▁طالب ، ▁هي ▁بنت ▁علي ... (+7 more) | 17 |
| 16k | ▁خديجة ▁بنت ▁علي ▁بن ▁أبي ▁طالب ، ▁هي ▁بنت ▁علي ... (+7 more) | 17 |
| 32k | ▁خديجة ▁بنت ▁علي ▁بن ▁أبي ▁طالب ، ▁هي ▁بنت ▁علي ... (+7 more) | 17 |
| 64k | ▁خديجة ▁بنت ▁علي ▁بن ▁أبي ▁طالب ، ▁هي ▁بنت ▁علي ... (+7 more) | 17 |

Key Findings

- Best Compression: 64k achieves 4.172x compression

- Lowest UNK Rate: 8k with 0.0910% unknown tokens

- Trade-off: Larger vocabularies improve compression but increase model size

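- Recommendation: 32k is a reasonable balance between compression and vocabulary size for production use; if compression is the only criterion, 64k is the best of the four (4.172x) and is the choice listed in Section 7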

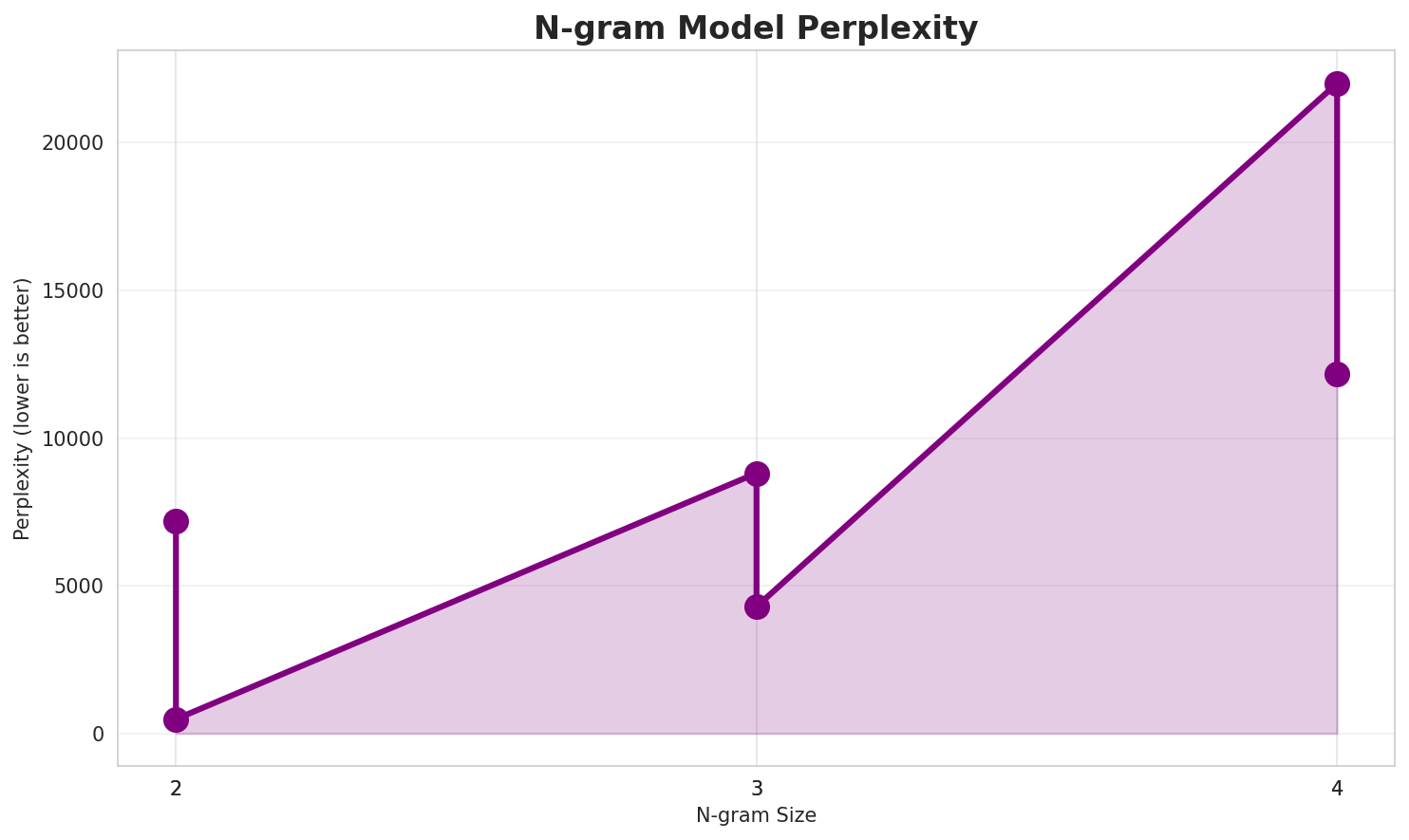

2. N-gram Model Evaluation

Results

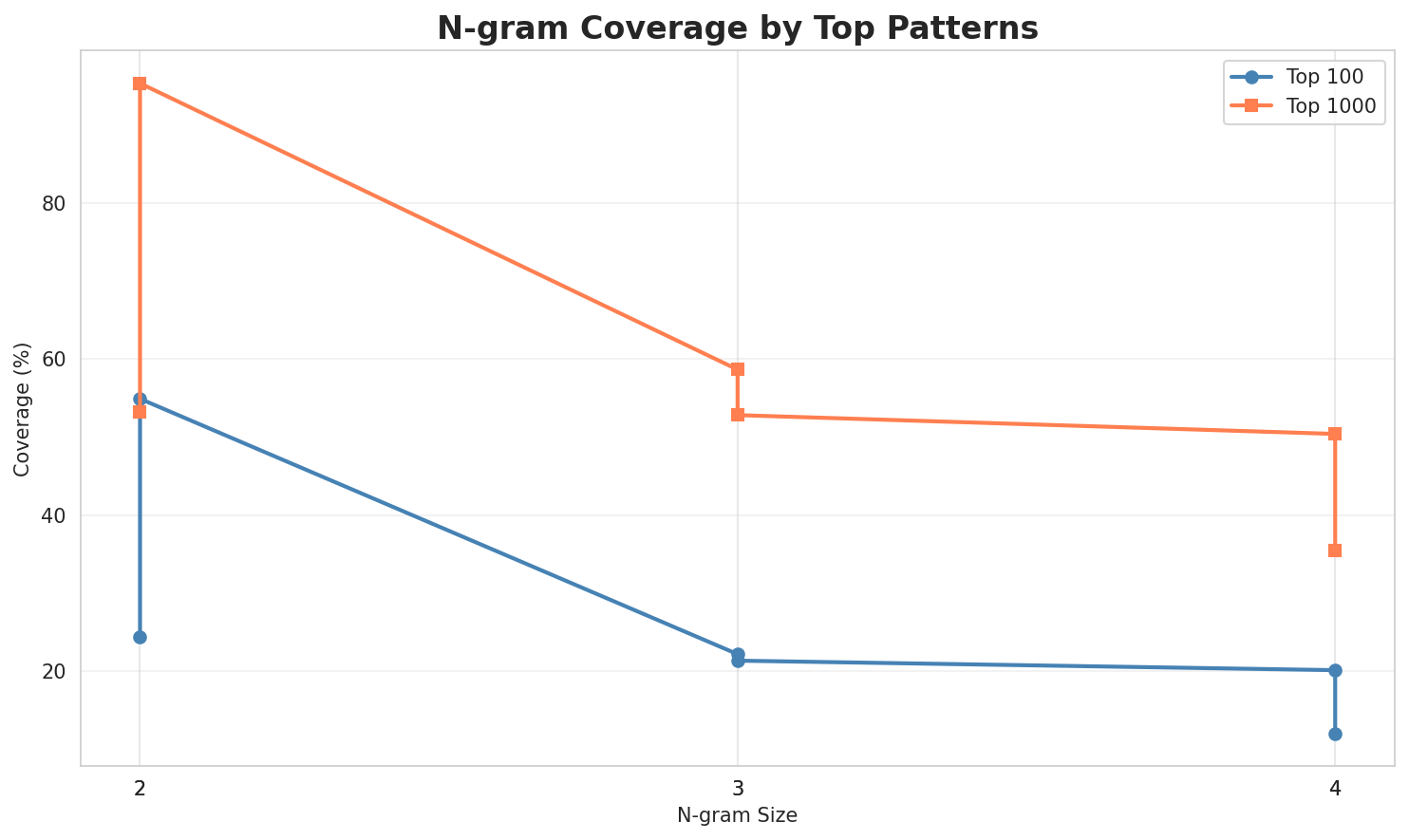

| N-gram | Variant | Perplexity | Entropy | Unique N-grams | Top-100 Coverage | Top-1000 Coverage |

|---|---|---|---|---|---|---|

| 2-gram | Word | 7,415 | 12.86 | 40,208 | 22.8% | 50.4% |

| 2-gram | Subword | 428 🏆 | 8.74 | 5,913 | 57.8% | 96.3% |

| 3-gram | Word | 5,775 | 12.50 | 44,139 | 27.3% | 56.7% |

| 3-gram | Subword | 3,823 | 11.90 | 44,840 | 23.0% | 60.5% |

| 4-gram | Word | 8,149 | 12.99 | 71,489 | 27.3% | 53.3% |

| 4-gram | Subword | 20,320 | 14.31 | 222,645 | 11.9% | 35.8% |

| 5-gram | Word | 7,702 | 12.91 | 59,669 | 28.3% | 52.6% |

| 5-gram | Subword | 63,356 | 15.95 | 533,903 | 7.3% | 24.8% |

Top 5 N-grams by Size

2-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | واصلة ل | 8,540 |
| 2 | نسبة د | 7,170 |
| 3 | ف لمغريب | 6,310 |
| 4 | ف إقليم | 6,015 |
| 5 | ف نسبة | 4,265 |

3-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | ف نسبة د | 4,264 |
| 2 | فيها مصدر و | 3,235 |
| 3 | و نسبة د | 2,894 |
| 4 | مصدر و بايت | 2,855 |
| 5 | اللي خدامين ف | 2,761 |

4-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | فيها مصدر و بايت | 2,855 |
| 2 | نسبة نّاس اللي خدامين | 2,705 |
| 3 | نّاس اللي خدامين ف | 2,595 |
| 4 | على حساب لإحصاء الرسمي | 2,501 |
| 5 | لمغريب هاد دّوار كينتامي | 2,500 |

5-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | نسبة نّاس اللي خدامين ف | 2,594 |
| 2 | ف لمغريب هاد دّوار كينتامي | 2,500 |
| 3 | لمغريب هاد دّوار كينتامي ل | 2,500 |
| 4 | هاد دّوار كينتامي ل مشيخة | 2,500 |
| 5 | حساب لإحصاء الرسمي د عام | 2,500 |

2-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | ا ل | 348,897 |
| 2 | _ ل | 282,523 |
| 3 | ة _ | 230,243 |
| 4 | _ ا | 221,714 |
| 5 | _ م | 157,830 |

3-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | _ ا ل | 216,894 |
| 2 | _ ف _ | 84,068 |
| 3 | ا ت _ | 64,715 |
| 4 | _ و _ | 60,577 |
| 5 | ي ة _ | 60,370 |

4-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | _ د ي ا | 48,269 |
| 2 | د ي ا ل | 48,014 |
| 3 | ي ا ل _ | 33,434 |
| 4 | د _ ا ل | 33,075 |
| 5 | _ م ن _ | 29,173 |

5-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | _ د ي ا ل | 47,884 |
| 2 | د ي ا ل _ | 33,006 |
| 3 | _ ع ل ى _ | 19,658 |
| 4 | _ ا ل ل ي | 18,939 |
| 5 | ا ل ل ي _ | 18,733 |

Key Findings

- Best Perplexity: 2-gram (subword) with 428

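- Entropy Trend: Word-level entropy stays roughly flat across n (12.50 to 12.99 bits), while subword entropy rises with n (8.74 bits for 2-grams to 15.95 bits for 5-grams)

- Coverage: Top-1000 coverage ranges from 24.8% (5-gram subword) to 96.3% (2-gram subword); word models sit between 50.4% and 56.7%

- Recommendation: By perplexity, the subword 2-gram (428) is the strongest model, consistent with Section 7; among word models the 3-gram has the lowest perplexity (5,775)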

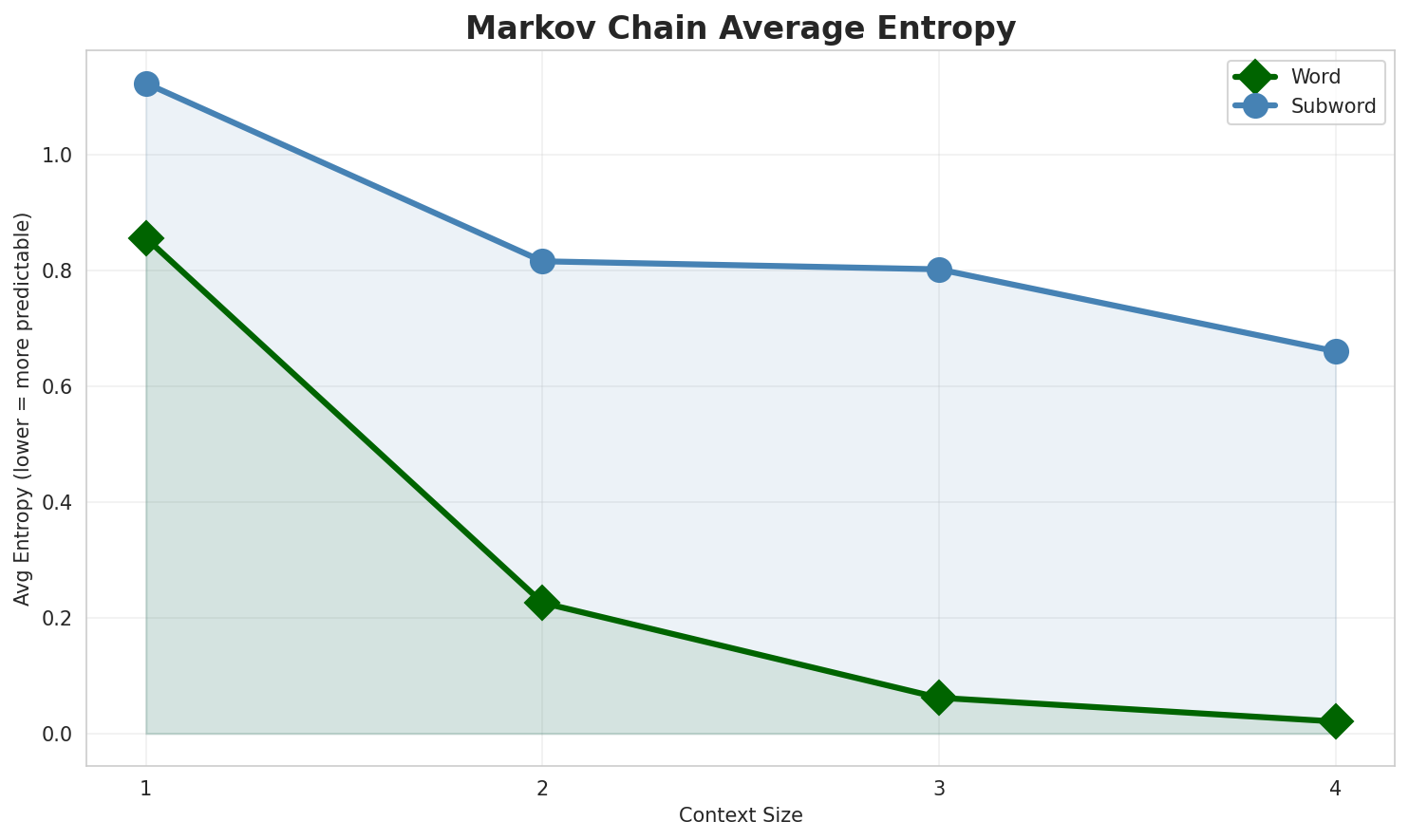

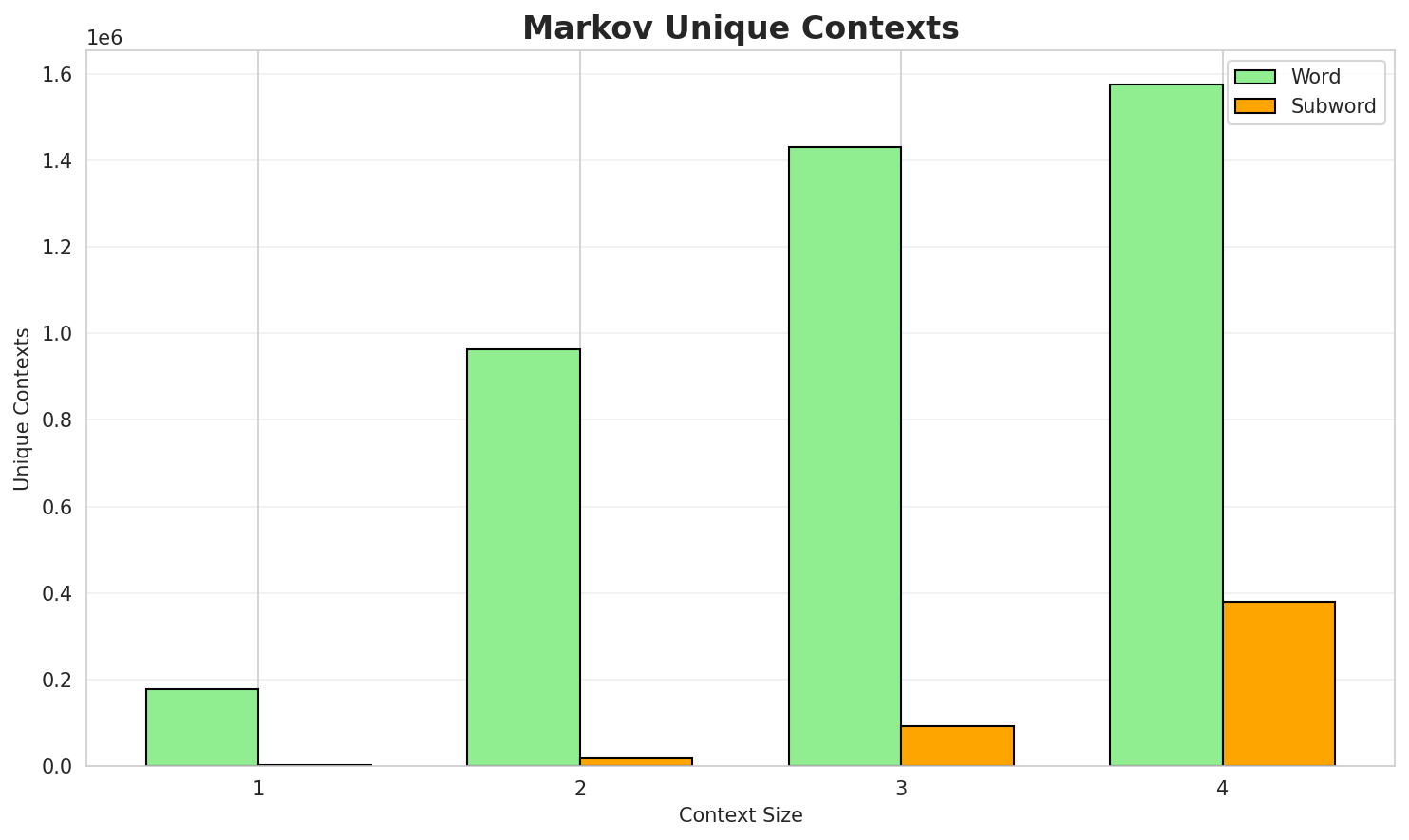

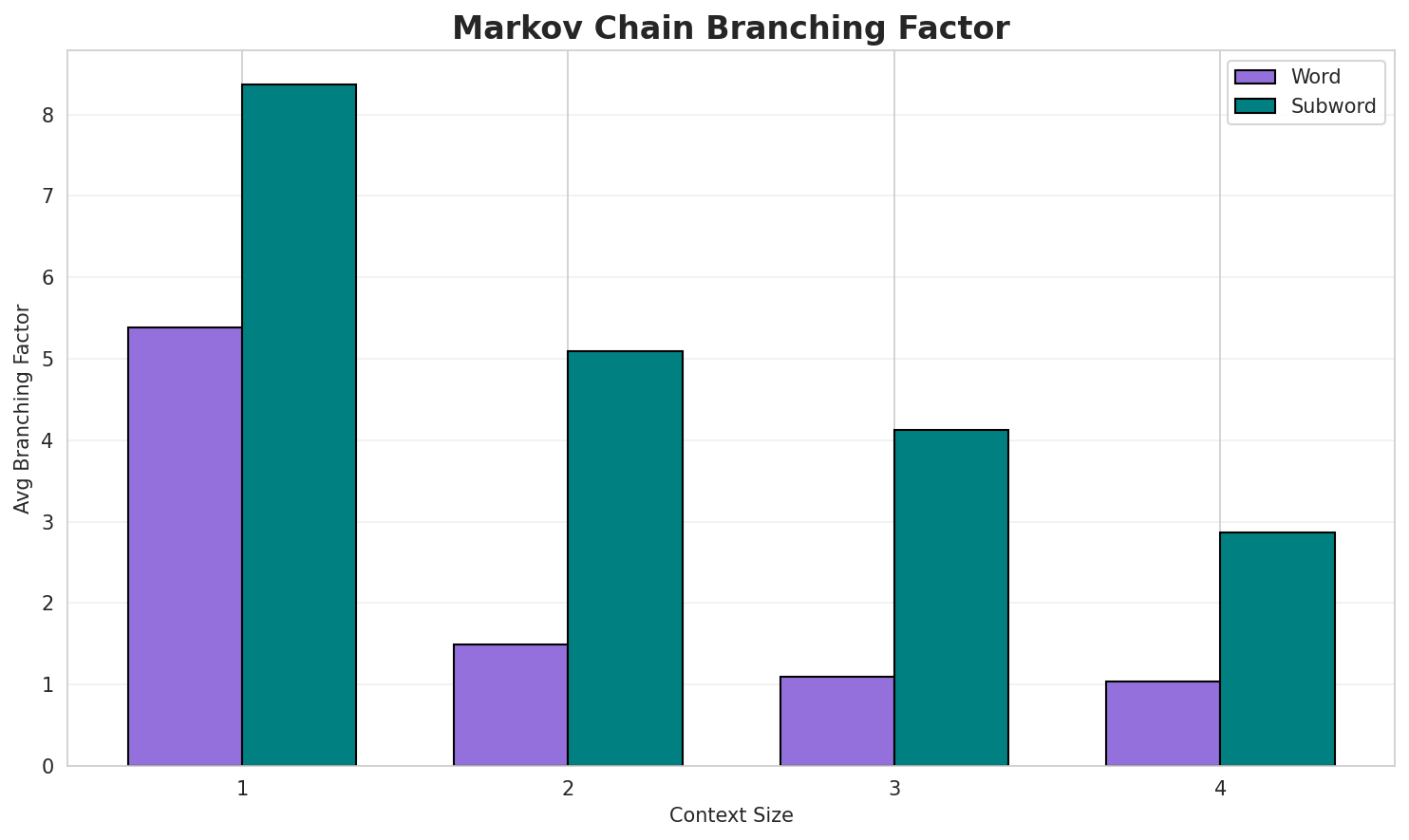

3. Markov Chain Evaluation

Results

| Context | Variant | Avg Entropy | Perplexity | Branching Factor | Unique Contexts | Predictability |

|---|---|---|---|---|---|---|

| 1 | Word | 0.8581 | 1.813 | 5.40 | 180,421 | 14.2% |

| 1 | Subword | 1.1243 | 2.180 | 8.36 | 2,159 | 0.0% |

| 2 | Word | 0.2267 | 1.170 | 1.49 | 973,633 | 77.3% |

| 2 | Subword | 0.8165 | 1.761 | 5.10 | 18,051 | 18.4% |

| 3 | Word | 0.0619 | 1.044 | 1.10 | 1,450,643 | 93.8% |

| 3 | Subword | 0.8035 | 1.745 | 4.14 | 92,103 | 19.7% |

| 4 | Word | 0.0207 🏆 | 1.014 | 1.04 | 1,595,675 | 97.9% |

| 4 | Subword | 0.6627 | 1.583 | 2.87 | 381,563 | 33.7% |

Generated Text Samples (Word-based)

Below are text samples generated from each word-based Markov chain model:

Context Size 1:

ف ايكنان نقص ب سباب غتيال لماليك لأمازيغي ؤ ولّا على المنطق والبحث كان حتا زلزالو نسبة نّاس اللي سبق ليهوم مصادر ربحو جايزة أحسن 10 سنين موراها تولّا لحكم الداتيد لميداليات ف إقليم لحوز جهة مراكش آسفي ف المغرب من بعد باللي كان نتر خيالي

Context Size 2:

واصلة ل 3 ف لعقد ديال عوام كيوافق ف تّقويم لهيجري ؤ ف تّقويم لڭريڭوري بدا نهار

نسبة د الشوماج واصلة ل 6 6 044 0 290 يوكطوتانية هيدروجين 7 7 و لخصوبة لكاملة

ف لمغريب هاد دّوار كينتامي ل مشيخة أيت قضني لي كتضم 9 د دّواور لعاداد د سّكان

Context Size 3:

ف نسبة د التسكويل واصلة ل 90 8 و نسبة د لأمية واصلة ل 50 33 لخدمة ف

فيها مصدر و بايت على حساب النوع د لحنش التشلال التنفوسي فشلان لكبدة لكوما و bites a d

و نسبة د الشوماج واصلة ل 18 4 و لموعدّال د لعمر عند الجواج اللولاني هوّ 23 87

Context Size 4:

نسبة نّاس اللي خدامين في لقطاع لخاص 39 1 مصادر الرباط سلا القنيطرة قروية ف إقليم لخميسات مسكونين ف

نّاس اللي خدامين ف لپريڤي 57 1 مصادر الرباط سلا القنيطرة قروية ف إقليم سيدي إيفني جهة ݣلميم واد

على حساب لإحصاء الرسمي د عام نوطات مصادر ف لمغريب ف إقليم تارودانت زادهوم داريجابوت

Generated Text Samples (Subword-based)

Below are text samples generated from each subword-based Markov chain model:

Context Size 1:

_أو_جة_م_-اسبش_دالاف_ف،_عية_لحدالعة_ل_وعبر،_اليب

Context Size 2:

الجديات)._عنصاد_ا_لخمسيوسيحطولا_صرة_ديال_لهي_بزرقة_

Context Size 3:

_اللي_خمائيات_ديال_ف_لجمهورية_الطابلات_(گاع_ل_من_مابين

Context Size 4:

_ديال_المرسى_ديال_اديالهوم_مصادر_فيهم_يال_شيحد_من_بعد_فـ_

Key Findings

- Best Predictability: Context-4 (word) with 97.9% predictability

- Branching Factor: Decreases with context size (more deterministic)

- Memory Trade-off: Larger contexts require more storage (381,563 unique contexts for subword Context-4; 1,595,675 for word Context-4)

- Recommendation: Context-3 or Context-4 for text generation

4. Vocabulary Analysis

Statistics

| Metric | Value |

|---|---|

| Vocabulary Size | 79,667 |

| Total Tokens | 2,057,009 |

| Mean Frequency | 25.82 |

| Median Frequency | 4 |

| Frequency Std Dev | 518.98 |

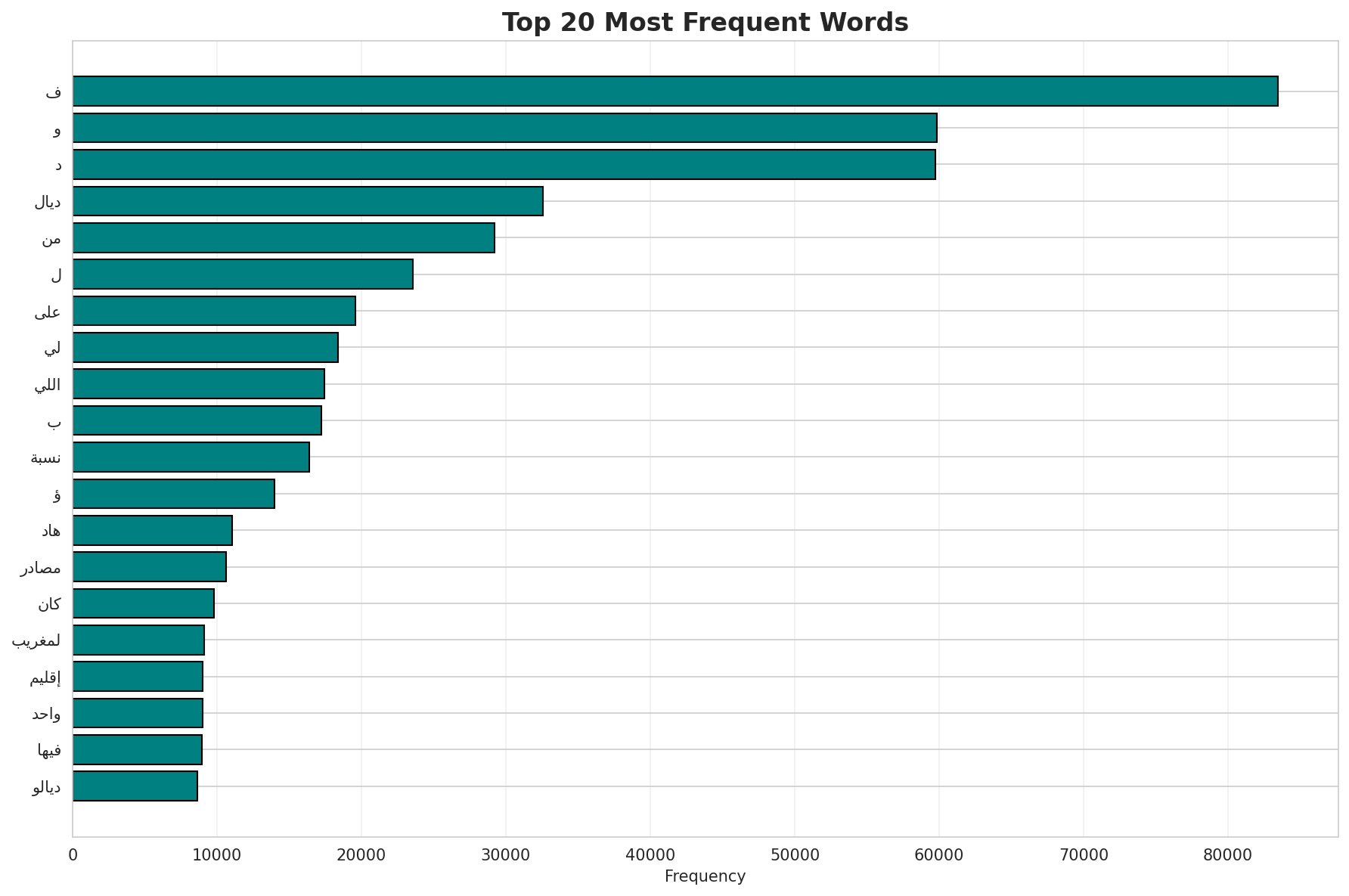

Most Common Words

| Rank | Word | Frequency |

|---|---|---|

| 1 | ف | 84,381 |

| 2 | و | 60,856 |

| 3 | د | 60,420 |

| 4 | ديال | 32,966 |

| 5 | من | 29,503 |

| 6 | ل | 23,808 |

| 7 | على | 19,757 |

| 8 | لي | 18,777 |

| 9 | ب | 17,745 |

| 10 | اللي | 17,410 |

Least Common Words (from vocabulary)

| Rank | Word | Frequency |

|---|---|---|

| 1 | ختيلال | 2 |

| 2 | تسطية | 2 |

| 3 | التخمار | 2 |

| 4 | لمركزين | 2 |

| 5 | تعلاف | 2 |

| 6 | الروضيو | 2 |

| 7 | رِد | 2 |

| 8 | وينغز | 2 |

| 9 | تايغرز | 2 |

| 10 | كلتة | 2 |

Zipf's Law Analysis

| Metric | Value |

|---|---|

| Zipf Coefficient | 1.0203 |

| R² (Goodness of Fit) | 0.998917 |

| Adherence Quality | excellent |

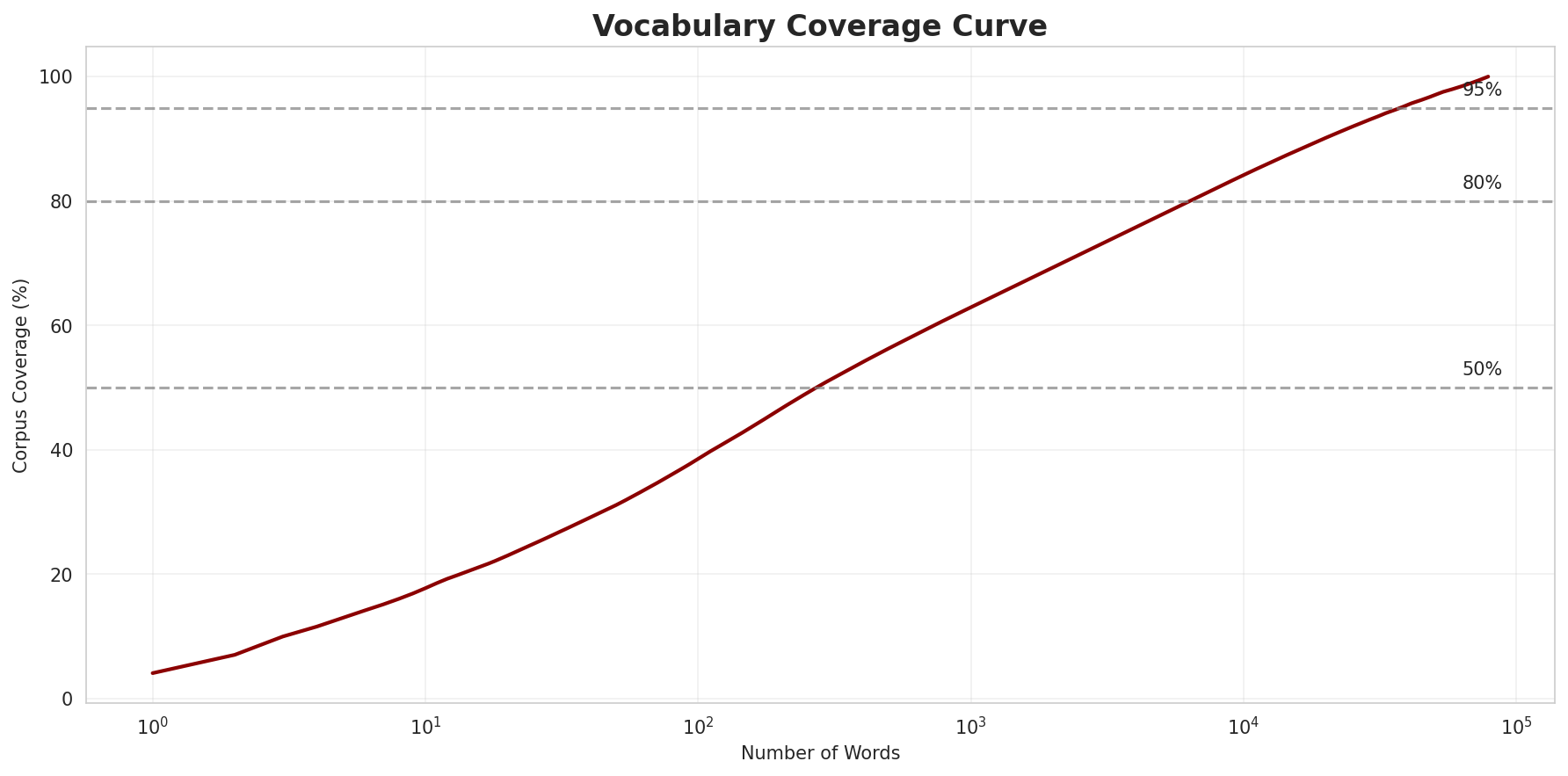

Coverage Analysis

| Top N Words | Coverage |

|---|---|

| Top 100 | 38.4% |

| Top 1,000 | 62.8% |

| Top 5,000 | 77.7% |

| Top 10,000 | 84.1% |

Key Findings

- Zipf Compliance: R²=0.9989 indicates excellent adherence to Zipf's law

- High Frequency Dominance: Top 100 words cover 38.4% of corpus

- Long Tail: The 69,667 words outside the top 10,000 account for the remaining 15.9% of the corpus
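The figures in this section can be cross-checked with simple arithmetic. The sketch below recomputes the mean frequency and the long-tail numbers from values copied out of the tables above; the variable names are chosen here for illustration and are not part of the Wikilangs pipeline.

```python
# Figures copied from the Statistics and Coverage Analysis tables above.
vocabulary_size = 79_667
total_tokens = 2_057_009
top_10k_coverage_pct = 84.1

# Mean frequency is total tokens divided by the number of distinct words.
mean_frequency = total_tokens / vocabulary_size

# Words outside the top 10,000 and the share of the corpus they account for.
tail_words = vocabulary_size - 10_000
tail_coverage_pct = 100.0 - top_10k_coverage_pct

print(f"mean frequency: {mean_frequency:.2f}")
print(f"tail: {tail_words:,} words cover {tail_coverage_pct:.1f}% of tokens")
```

The recomputed mean agrees with the reported 25.82, and the tail figures match the Long Tail finding.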

5. Word Embeddings Evaluation

5.1 Cross-Lingual Alignment

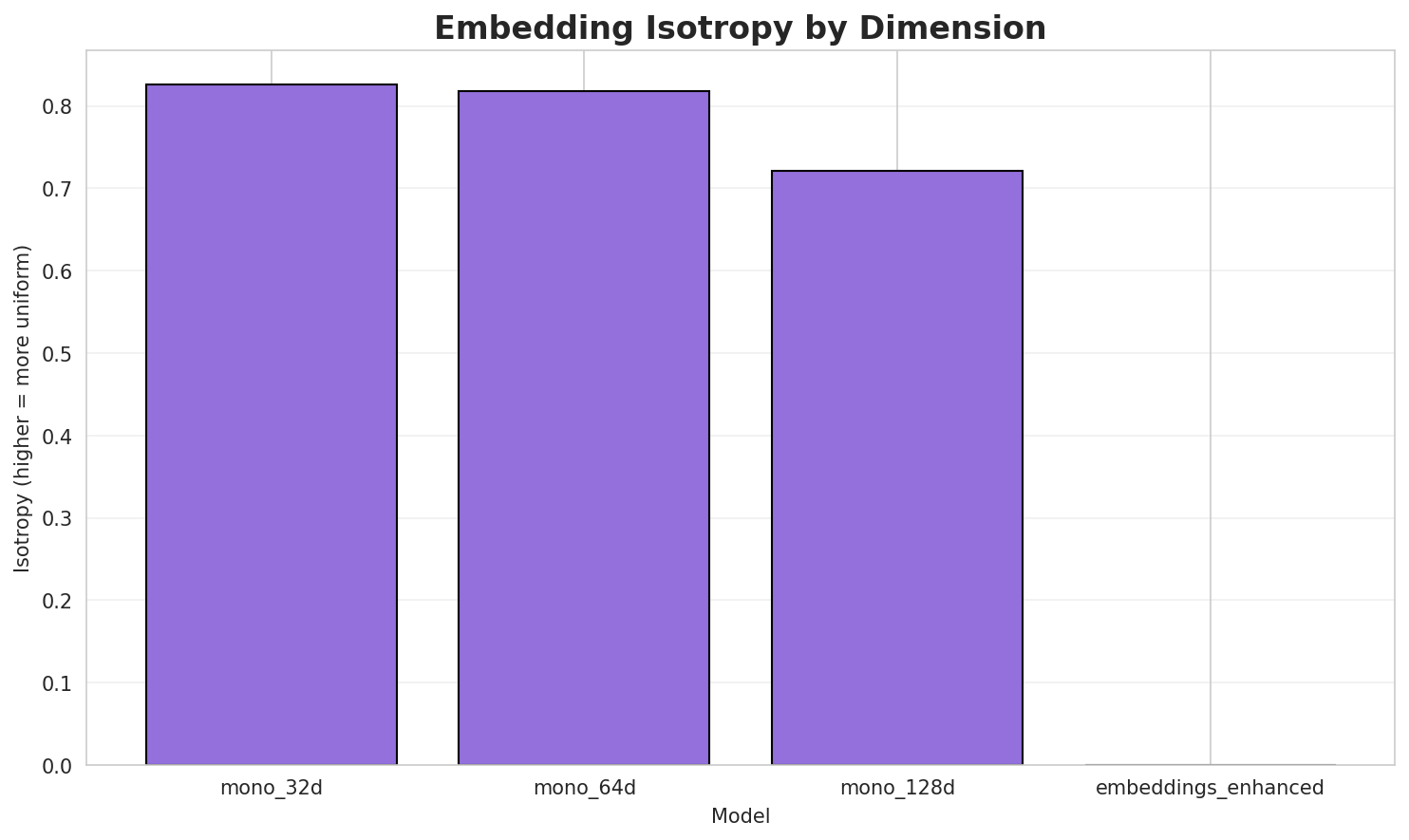

5.2 Model Comparison

| Model | Dimension | Isotropy | Semantic Density | Alignment R@1 | Alignment R@10 |

|---|---|---|---|---|---|

| mono_32d | 32 | 0.8215 🏆 | 0.3275 | N/A | N/A |

| mono_64d | 64 | 0.8006 | 0.2538 | N/A | N/A |

| mono_128d | 128 | 0.6555 | 0.2039 | N/A | N/A |

| aligned_32d | 32 | 0.8215 | 0.3276 | 0.0080 | 0.1080 |

| aligned_64d | 64 | 0.8006 | 0.2565 | 0.0380 | 0.2000 |

| aligned_128d | 128 | 0.6555 | 0.2044 | 0.0440 | 0.2420 |

Key Findings

- Best Isotropy: mono_32d with 0.8215 (more uniform distribution)

- Semantic Density: Average pairwise similarity of 0.2623 across the six models, ranging from 0.2039 (mono_128d) to 0.3276 (aligned_32d). Lower values indicate better semantic separation.

- Alignment Quality: Aligned models achieve up to 4.4% R@1 in cross-lingual retrieval.

- Recommendation: 128d aligned for best cross-lingual performance
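The report does not spell out how Alignment R@1 and R@10 are computed. A minimal sketch of the usual retrieval definition follows, assuming cosine similarity as the ranking score and a gold dictionary of translation pairs; the function names, the data layout, and the toy vectors are all hypothetical.

```python
import math

def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0

def recall_at_k(source_vecs, target_vecs, gold, k):
    """Fraction of source words whose gold target is among the k nearest targets.

    source_vecs / target_vecs: dict mapping word -> vector (hypothetical layout).
    gold: dict mapping source word -> correct target word.
    """
    hits = 0
    for src_word, tgt_word in gold.items():
        query = source_vecs[src_word]
        ranked = sorted(target_vecs,
                        key=lambda w: cosine(query, target_vecs[w]),
                        reverse=True)
        if tgt_word in ranked[:k]:
            hits += 1
    return hits / len(gold)

# Toy 2-d vectors, invented for illustration only.
src = {"a": [1.0, 0.0], "b": [0.0, 1.0]}
tgt = {"x": [0.9, 0.1], "y": [0.8, 0.3], "z": [0.1, 0.9]}
gold = {"a": "y", "b": "z"}

r_at_1 = recall_at_k(src, tgt, gold, k=1)
r_at_2 = recall_at_k(src, tgt, gold, k=2)
print(r_at_1, r_at_2)
```

Under this definition, the reported R@1 of 0.0440 would mean that 4.4% of test words have their gold translation as the single nearest neighbour.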

6. Morphological Analysis (Experimental)

This section presents an automated morphological analysis derived from the statistical divergence between word-level and subword-level models. By analyzing where subword predictability spikes and where word-level coverage fails, we can infer linguistic structures without supervised data.

6.1 Productivity & Complexity

| Metric | Value | Interpretation | Recommendation |

|---|---|---|---|

| Productivity Index | 5.000 | High morphological productivity | Reliable analysis |

| Idiomaticity Gap | 1.121 | High formulaic/idiomatic content | - |

6.2 Affix Inventory (Productive Units)

These are the most productive prefixes and suffixes identified by sampling the vocabulary for global substitutability patterns. A unit is considered an affix if stripping it leaves a valid stem that appears in other contexts.
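The stripping criterion described above can be illustrated with a simplified sketch. It only checks whether the remainder is itself a vocabulary word, which is a much cruder test than the substitutability sampling the report describes; the function name, thresholds, and toy vocabulary are assumptions made for this example.

```python
from collections import Counter

def productive_prefixes(vocabulary, max_len=3, min_stems=2):
    """For each candidate prefix, count words whose remainder is also a vocabulary word."""
    vocab = set(vocabulary)
    counts = Counter()
    for word in vocab:
        # Try every prefix length that still leaves a non-empty stem.
        for n in range(1, min(max_len, len(word) - 1) + 1):
            prefix, stem = word[:n], word[n:]
            if stem in vocab:
                counts[prefix] += 1
    # Keep only prefixes that attach to at least `min_stems` distinct stems.
    return {p: c for p, c in counts.items() if c >= min_stems}

# Toy vocabulary; the bare stems are added here so the example has something to match.
toy_vocab = ["الناس", "ناس", "المغرب", "مغرب", "لمغرب"]
found = productive_prefixes(toy_vocab)
print(found)
```

On this toy input only the definite article ال survives the threshold, since it is the only prefix that leaves a valid stem for two different words.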

Productive Prefixes

| Prefix | Examples |
|---|---|
| -ال | القوميين, الاحتياطية, الطابلة |
| -ل | لعضان, لكرواتي, لعاميد |
| -ت | تقرا, تحقيقات, تشارلي |
| -م | ميطاكا, معاهد, موليكيلة |
| -لم | لمحلولة, لمقبولين, لمطلوق |
| -و | والهيئات, والطرقان, وبطريقة |
| -الم | المركب, المعروفين, المناخية |
| -ب | بنشليخة, بيئات, بلمارشالية |

Productive Suffixes

| Suffix | Examples |
|---|---|
| -ت | والهيئات, تحقيقات, لبويرات |
| -ة | بنشليخة, وبطريقة, عشبة |
| -ات | والهيئات, تحقيقات, لبويرات |
| -ن | لعضان, والطرقان, القوميين |
| -ية | أكترية, الاحتياطية, والاشتراكية |
| -ا | ميطاكا, تقرا, سيينا |
| -ي | ؤطوماتيكي, لكرواتي, سينتشي |
| -ين | القوميين, پيسّين, مشهورين |

6.3 Bound Stems (Lexical Roots)

Bound stems are high-frequency subword units that are semantically cohesive but rarely appear as standalone words. These often correspond to the 'core' of a word that requires inflection or derivation to be valid.

| Stem | Cohesion | Substitutability | Examples |
|---|---|---|---|
| انية | 1.84x | 68 contexts | سانية, تانية, غانية |
| النا | 1.79x | 63 contexts | الناي, الناس, النار |
| لمغر | 2.03x | 30 contexts | لمغرب, المغرب, لمغربي |
| جماع | 1.89x | 37 contexts | جماعة, إجماع, جماعي |
| اللو | 1.66x | 61 contexts | اللون, اللور, اللوز |
| الات | 1.59x | 65 contexts | صالات, حالات, سالات |
| مغري | 2.11x | 18 contexts | مغرية, مغريب, لمغريب |
| دهوم | 2.19x | 16 contexts | ضدهوم, يردهوم, جهدهوم |
| إحصا | 2.09x | 17 contexts | إحصاء, لإحصا, إحصائي |
| حصاء | 2.23x | 14 contexts | إحصاء, ليحصاء, لإحصاء |
| قليم | 2.08x | 16 contexts | إقليم, فقليم, اقليم |
| لجوا | 1.76x | 26 contexts | لجواب, الجوا, لجواد |

6.4 Affix Compatibility (Co-occurrence)

This table shows which prefixes and suffixes most frequently co-occur on the same stems, revealing the 'stacking' rules of the language's morphology.

| Prefix | Suffix | Frequency | Examples |
|---|---|---|---|
| -ال | -ة | 281 words | الرواقية, القهوة |
| -ل | -ة | 184 words | لفريسة, للمنصة |
| -ال | -ت | 170 words | المجموعات, الصوتيات |
| -ال | -ات | 164 words | المجموعات, الصوتيات |
| -ال | -ية | 142 words | الرواقية, السيادية |
| -ل | -ت | 131 words | لقمقومات, لپوطوات |
| -ل | -ات | 125 words | لقمقومات, لپوطوات |
| -ل | -ن | 124 words | لعيّان, لخيشوميين |
| -ال | -ن | 119 words | الكربون, الفريقين |
| -ل | -ية | 116 words | لعدمية, لبيولوجية |

6.5 Recursive Morpheme Segmentation

Using Recursive Hierarchical Substitutability, we decompose complex words into their constituent morphemes. This approach handles nested affixes (e.g., prefix-prefix-root-suffix).
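The report does not publish the segmentation algorithm itself. As a rough illustration of what recursive decomposition of nested prefixes can look like, the sketch below peels known prefixes off the front of a word until the remainder is a known stem. It handles prefixes only, and the prefix set, stem set, and function name are hypothetical choices for this example.

```python
def segment(word, prefixes, vocabulary, max_depth=3):
    """Peel known prefixes off the front of `word` until the remainder is a known stem.

    Returns a list of morphemes, or [word] if no split ends in a vocabulary stem.
    """
    if word in vocabulary or max_depth == 0:
        return [word]
    # Try longer prefixes first so that a two-letter prefix wins over a one-letter one.
    for prefix in sorted(prefixes, key=len, reverse=True):
        if word.startswith(prefix) and len(word) > len(prefix):
            rest = segment(word[len(prefix):], prefixes, vocabulary, max_depth - 1)
            # Accept the split only if it bottoms out in a known stem.
            if rest[-1] in vocabulary:
                return [prefix] + rest
    return [word]

# Hypothetical prefix inventory and stem list for the example.
prefixes = {"و", "ال", "ب", "ف"}
stems = {"عمالات"}

parts = segment("والعمالات", prefixes, stems)
print("-".join(parts))
```

For the first word in the table below, this yields the same three-part split (conjunction, article, stem); words with no matching prefix are returned unsplit.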

| Word | Suggested Split | Confidence | Stem |
|---|---|---|---|
| والعمالات | و-ال-عمالات | 7.5 | عمالات |
| والراشيدية | و-ال-راشيدية | 7.5 | راشيدية |
| والمشروبات | و-ال-مشروبات | 7.5 | مشروبات |
| والمؤرخين | و-ال-مؤرخين | 7.5 | مؤرخين |
| والمسيحية | و-ال-مسيحية | 7.5 | مسيحية |
| فالسعودية | ف-ال-سعودية | 7.5 | سعودية |
| بالفرنسية | ب-ال-فرنسية | 7.5 | فرنسية |
| بالكيلوݣرام | ب-ال-كيلوݣرام | 7.5 | كيلوݣرام |
| والأساتذة | و-ال-أساتذة | 7.5 | أساتذة |
| والأقاليم | و-ال-أقاليم | 7.5 | أقاليم |
| باللاتينية | ب-ال-لاتينية | 7.5 | لاتينية |
| باليونانية | ب-ال-يونانية | 7.5 | يونانية |
| لبزقوليين | لبزقول-ي-ين | 7.5 | ي |
| فالجورنال | ف-ال-جورنال | 7.5 | جورنال |
| بالصيناعة | ب-ال-صيناعة | 7.5 | صيناعة |

6.6 Linguistic Interpretation

Automated Insight: Moroccan Arabic shows high morphological productivity (Productivity Index 5.000). The subword models are significantly more efficient than the word models, suggesting a rich system of affixation or compounding.

Note on Idiomaticity: The high Idiomaticity Gap suggests a large number of frequent multi-word expressions or formulaic sequences that are statistically distinct from their component parts.

7. Summary & Recommendations

Production Recommendations

| Component | Recommended | Rationale |

|---|---|---|

| Tokenizer | 64k BPE | Best compression (4.17x) |

| N-gram | 2-gram (subword) | Lowest perplexity (428) |

| Markov | Context-4 (word) | Highest predictability (97.9%) |

| Embeddings | 128d (aligned) | Best cross-lingual retrieval of the three evaluated sizes (R@1 4.4%, R@10 24.2%), at the cost of the lowest isotropy (0.6555) |

Appendix: Metrics Glossary & Interpretation Guide

This section provides definitions, intuitions, and guidance for interpreting the metrics used throughout this report.

Tokenizer Metrics

Compression Ratio

Definition: The ratio of characters to tokens (chars/token). Measures how efficiently the tokenizer represents text.

Intuition: Higher compression means fewer tokens needed to represent the same text, reducing sequence lengths for downstream models. A 3x compression means ~3 characters per token on average.

What to seek: Higher is generally better for efficiency, but extremely high compression may indicate overly aggressive merging that loses morphological information.
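As a sanity check on this definition, the compression ratio multiplied by the total token count should recover roughly the same character count for every vocabulary size, provided all four tokenizers were scored on the same text (an assumption; the report does not state it). The helper name below is illustrative.

```python
def compression_ratio(text, tokens):
    """Characters per token, the definition used in this glossary."""
    return len(text) / len(tokens)

# (vocab label, compression ratio, total tokens) from the Tokenizer Evaluation table.
results = [
    ("8k", 3.481, 300_053),
    ("16k", 3.755, 278_145),
    ("32k", 3.985, 262_127),
    ("64k", 4.172, 250_361),
]

# If every tokenizer saw the same text, ratio * tokens should be nearly constant.
implied_chars = {name: ratio * tokens for name, ratio, tokens in results}
for name, chars in implied_chars.items():
    print(f"{name}: ~{chars:,.0f} characters")

spread = max(implied_chars.values()) - min(implied_chars.values())
relative_spread = spread / min(implied_chars.values())
```

All four rows imply about 1.04 million characters, so the reported ratios and token counts are mutually consistent.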

Average Token Length (Fertility)

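Definition: Mean number of characters per token produced by the tokenizer. Note that "fertility" more commonly denotes tokens per word; this report uses the term for token length.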

Intuition: Reflects the granularity of tokenization. Longer tokens capture more context but may struggle with rare words; shorter tokens are more flexible but increase sequence length.

What to seek: Balance between 2-5 characters for most languages. Arabic/morphologically-rich languages may benefit from slightly longer tokens.

Unknown Token Rate (OOV Rate)

Definition: Percentage of tokens that map to the unknown/UNK token, indicating words the tokenizer cannot represent.

Intuition: Lower OOV means better vocabulary coverage. High OOV indicates the tokenizer encounters many unseen character sequences.

What to seek: Below 1% is excellent; below 5% is acceptable. BPE tokenizers typically achieve very low OOV due to subword fallback.

N-gram Model Metrics

Perplexity

Definition: Measures how "surprised" the model is by test data. Mathematically: 2^(cross-entropy). Lower values indicate better prediction.

Intuition: If perplexity is 100, the model is as uncertain as if choosing uniformly among 100 options at each step. A perplexity of 10 means effectively choosing among 10 equally likely options.

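What to seek: Lower is better. Perplexity usually decreases with larger n-grams (more context), but this does not always hold: in Section 2 the subword perplexity rises from 428 (2-gram) to 63,356 (5-gram). Values vary widely by language and corpus size.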

Entropy

Definition: Average information content (in bits) needed to encode the next token given the context. Related to perplexity: perplexity = 2^entropy.

Intuition: High entropy means high uncertainty/randomness; low entropy means predictable patterns. Natural language typically has entropy between 1-4 bits per character.

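What to seek: Lower entropy indicates more predictable text patterns. Entropy is generally expected to decrease as n-gram size increases, although the subword models in Section 2 show the opposite (8.74 bits for 2-grams, 15.95 bits for 5-grams).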
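The relation perplexity = 2^entropy can be checked against the N-gram results table. The sketch below recomputes perplexity from each reported entropy; small differences are expected because the table rounds entropy to two decimals.

```python
# (label, reported perplexity, reported entropy in bits) from the N-gram results table.
rows = [
    ("2-gram word", 7415, 12.86), ("2-gram subword", 428, 8.74),
    ("3-gram word", 5775, 12.50), ("3-gram subword", 3823, 11.90),
    ("4-gram word", 8149, 12.99), ("4-gram subword", 20320, 14.31),
    ("5-gram word", 7702, 12.91), ("5-gram subword", 63356, 15.95),
]

def perplexity_from_entropy(bits):
    """Perplexity implied by an entropy given in bits."""
    return 2.0 ** bits

relative_errors = {}
for label, reported_ppl, entropy_bits in rows:
    implied = perplexity_from_entropy(entropy_bits)
    relative_errors[label] = abs(implied - reported_ppl) / reported_ppl
    print(f"{label}: reported {reported_ppl}, implied {implied:.0f}")
```

Every row agrees to within half a percent, which is what two-decimal rounding of the entropy allows.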

Coverage (Top-K)

Definition: Percentage of corpus occurrences explained by the top K most frequent n-grams.

Intuition: High coverage with few patterns indicates repetitive/formulaic text; low coverage suggests diverse vocabulary usage.

What to seek: Depends on use case. For language modeling, moderate coverage (40-60% with top-1000) is typical for natural text.
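A minimal sketch of how Top-K coverage can be computed from raw n-gram counts is shown below. The function names and the toy token sequence are illustrative and not taken from the pipeline.

```python
from collections import Counter

def ngrams(tokens, n):
    """All contiguous n-grams of a token sequence, as tuples."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]

def top_k_coverage(items, k):
    """Share of all occurrences accounted for by the k most frequent items."""
    counts = Counter(items)
    total = sum(counts.values())
    covered = sum(count for _, count in counts.most_common(k))
    return covered / total if total else 0.0

# Toy sequence: bigrams are (a,b) x2, (b,a) x2, (a,c) x1.
tokens = "a b a b a c".split()
bigrams = ngrams(tokens, 2)

print(top_k_coverage(bigrams, 1))
print(top_k_coverage(bigrams, 2))
```

The same function applies to single words, which gives the vocabulary coverage figures reported in Section 4.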

Markov Chain Metrics

Average Entropy

Definition: Mean entropy across all contexts, measuring average uncertainty in next-word prediction.

Intuition: Lower entropy means the model is more confident about what comes next. Context-1 has high entropy (many possible next words); Context-4 has low entropy (few likely continuations).

What to seek: Decreasing entropy with larger context sizes. Very low entropy (<0.1) indicates highly deterministic transitions.

Branching Factor

Definition: Average number of unique next tokens observed for each context.

Intuition: High branching = many possible continuations (flexible but uncertain); low branching = few options (predictable but potentially repetitive).

What to seek: Branching factor should decrease with context size. Values near 1.0 indicate nearly deterministic chains.
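Average entropy and branching factor can both be read off a table of context-to-next-token counts. The sketch below assumes an unweighted mean over contexts, which the report does not confirm; the function name and toy sequence are hypothetical.

```python
import math
from collections import Counter, defaultdict

def markov_stats(tokens, order):
    """Return (average entropy in bits, branching factor) for a chain of the given order."""
    transitions = defaultdict(Counter)
    for i in range(len(tokens) - order):
        context = tuple(tokens[i:i + order])
        transitions[context][tokens[i + order]] += 1
    if not transitions:
        return 0.0, 0.0

    entropies, branches = [], []
    for next_counts in transitions.values():
        total = sum(next_counts.values())
        # Shannon entropy of the next-token distribution for this context.
        entropies.append(-sum((c / total) * math.log2(c / total)
                              for c in next_counts.values()))
        # Branching: number of distinct continuations seen for this context.
        branches.append(len(next_counts))
    n = len(transitions)
    return sum(entropies) / n, sum(branches) / n

tokens = "a b a c a b a c".split()
avg_entropy_1, branching_1 = markov_stats(tokens, order=1)
avg_entropy_2, branching_2 = markov_stats(tokens, order=2)
print(avg_entropy_1, branching_1)
print(avg_entropy_2, branching_2)
```

In the toy sequence, one token of context leaves "a" ambiguous between two continuations, while two tokens of context make every transition deterministic, mirroring the trend in the Markov results table.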

Predictability

Definition: Derived metric: (1 - normalized_entropy) × 100%. Indicates how deterministic the model's predictions are.

Intuition: 100% predictability means the next word is always certain; 0% means completely random. Real text falls between these extremes.

What to seek: Higher predictability for text generation quality, but too high (>98%) may produce repetitive output.
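The report does not say how entropy is normalized. The values in the Markov results table are reproduced if the average entropy is simply clamped to the range 0 to 1 before being subtracted from 1; the sketch below tests that reading, which is an inference from the numbers and not a documented formula.

```python
# (context, variant, reported average entropy, reported predictability in %)
rows = [
    (1, "word", 0.8581, 14.2), (1, "subword", 1.1243, 0.0),
    (2, "word", 0.2267, 77.3), (2, "subword", 0.8165, 18.4),
    (3, "word", 0.0619, 93.8), (3, "subword", 0.8035, 19.7),
    (4, "word", 0.0207, 97.9), (4, "subword", 0.6627, 33.7),
]

def predictability(avg_entropy):
    # Assumption: "normalized entropy" means the entropy clamped to [0, 1].
    clamped = min(max(avg_entropy, 0.0), 1.0)
    return (1.0 - clamped) * 100.0

deviations = []
for context, variant, entropy, reported in rows:
    computed = predictability(entropy)
    deviations.append(abs(computed - reported))
    print(f"context {context} ({variant}): computed {computed:.2f}%, reported {reported}%")
```

All eight rows match to within rounding, including the subword Context-1 row, where an entropy above 1 bit yields 0.0%.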

Vocabulary & Zipf's Law Metrics

Zipf's Coefficient

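Definition: The slope of the log-log plot of word frequency vs. rank. Zipf's law predicts this should be approximately -1. The Vocabulary Analysis table lists the magnitude (1.0203), which corresponds to a slope of about -1.02.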

Intuition: A coefficient near -1 indicates the corpus follows natural language patterns where a few words are very common and most words are rare.

What to seek: Values between -0.8 and -1.2 indicate healthy natural language distribution. Deviations may suggest domain-specific or artificial text.

R² (Coefficient of Determination)

Definition: Measures how well the linear fit explains the frequency-rank relationship. Ranges from 0 to 1.

Intuition: R² near 1.0 means the data closely follows Zipf's law; lower values indicate deviation from expected word frequency patterns.

What to seek: R² > 0.95 is excellent; > 0.99 indicates near-perfect Zipf adherence typical of large natural corpora.
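A minimal sketch of how a Zipf slope and its R² can be obtained with an ordinary least-squares fit in log-log space follows. Whether the pipeline fits all ranks or only a subset is not stated, so this is an illustration of the technique and not a reproduction of the reported 1.0203.

```python
import math

def zipf_fit(frequencies):
    """Least-squares line through (log rank, log frequency); returns (slope, r_squared)."""
    freqs = sorted(frequencies, reverse=True)
    xs = [math.log(rank) for rank in range(1, len(freqs) + 1)]
    ys = [math.log(f) for f in freqs]
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    syy = sum((y - mean_y) ** 2 for y in ys)
    slope = sxy / sxx
    # For a simple linear fit, R^2 equals the squared correlation coefficient.
    r_squared = (sxy * sxy) / (sxx * syy)
    return slope, r_squared

# Synthetic counts that follow frequency = 1000 / rank exactly.
ideal = [1000 / rank for rank in range(1, 201)]
slope, r2 = zipf_fit(ideal)
print(f"slope={slope:.4f}, R^2={r2:.6f}")
```

On the synthetic input the fit returns a slope of -1 and an R² of 1, the ideal case that real corpora only approximate.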

Vocabulary Coverage

Definition: Cumulative percentage of corpus tokens accounted for by the top N words.

Intuition: Shows how concentrated word usage is. If top-100 words cover 50% of text, the corpus relies heavily on common words.

What to seek: Top-100 covering 30-50% is typical. Higher coverage indicates more repetitive text; lower suggests richer vocabulary.

Word Embedding Metrics

Isotropy

Definition: Measures how uniformly distributed vectors are in the embedding space. Computed as the ratio of minimum to maximum singular values.

Intuition: High isotropy (near 1.0) means vectors spread evenly in all directions; low isotropy means vectors cluster in certain directions, reducing expressiveness.

What to seek: Higher isotropy generally indicates better-quality embeddings. Values > 0.1 are reasonable; > 0.3 is good. Lower-dimensional embeddings tend to have higher isotropy.

Average Norm

Definition: Mean magnitude (L2 norm) of word vectors in the embedding space.

Intuition: Indicates the typical "length" of vectors. Consistent norms suggest stable training; high variance may indicate some words are undertrained.

What to seek: Relatively consistent norms across models. The absolute value matters less than consistency (low std deviation).

Cosine Similarity

Definition: Measures angular similarity between vectors, ranging from -1 (opposite) to 1 (identical direction).

Intuition: Words with similar meanings should have high cosine similarity. This is the standard metric for semantic relatedness in embeddings.

What to seek: Semantically related words should score > 0.5; unrelated words should be near 0. Synonyms often score > 0.7.
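Cosine similarity is also what an "average pairwise similarity" such as the Semantic Density in Section 5 would be built on. The sketch below shows both computations on toy vectors; treating Semantic Density as the mean over all vector pairs is an assumption based on the wording in the Key Findings, and the function names are illustrative.

```python
import math
from itertools import combinations

def cosine_similarity(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0  # convention chosen here for zero vectors
    return dot / (norm_u * norm_v)

def mean_pairwise_similarity(vectors):
    """Mean cosine similarity over all unordered pairs of vectors."""
    pairs = list(combinations(vectors, 2))
    return sum(cosine_similarity(u, v) for u, v in pairs) / len(pairs)

# Toy vectors, invented for illustration only.
vectors = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]
density = mean_pairwise_similarity(vectors)
print(cosine_similarity([1.0, 2.0], [2.0, 4.0]), density)
```

For a real vocabulary the number of pairs grows quadratically, so a sampled subset of words is the practical choice.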

t-SNE Visualization

Definition: t-Distributed Stochastic Neighbor Embedding - a dimensionality reduction technique that preserves local structure for visualization.

Intuition: Clusters in t-SNE plots indicate groups of semantically related words. Spread indicates vocabulary diversity; tight clusters suggest semantic coherence.

What to seek: Meaningful clusters (e.g., numbers together, verbs together). Avoid over-interpreting distances - t-SNE preserves local, not global, structure.

General Interpretation Guidelines

- Compare within model families: Metrics are most meaningful when comparing models of the same type (e.g., 8k vs 64k tokenizer).

- Consider trade-offs: Better performance on one metric often comes at the cost of another (e.g., compression vs. OOV rate).

- Context matters: Optimal values depend on downstream tasks. Text generation may prioritize different metrics than classification.

- Corpus influence: All metrics are influenced by corpus characteristics. Wikipedia text differs from social media or literature.

- Language-specific patterns: Morphologically rich languages (like Arabic) may show different optimal ranges than analytic languages.

Visualizations Index

| Visualization | Description |

|---|---|

| Tokenizer Compression | Compression ratios by vocabulary size |

| Tokenizer Fertility | Average token length by vocabulary |

| Tokenizer OOV | Unknown token rates |

| Tokenizer Total Tokens | Total tokens by vocabulary |

| N-gram Perplexity | Perplexity by n-gram size |

| N-gram Entropy | Entropy by n-gram size |

| N-gram Coverage | Top pattern coverage |

| N-gram Unique | Unique n-gram counts |

| Markov Entropy | Entropy by context size |

| Markov Branching | Branching factor by context |

| Markov Contexts | Unique context counts |

| Zipf's Law | Frequency-rank distribution with fit |

| Vocab Frequency | Word frequency distribution |

| Top 20 Words | Most frequent words |

| Vocab Coverage | Cumulative coverage curve |

| Embedding Isotropy | Vector space uniformity |

| Embedding Norms | Vector magnitude distribution |

| Embedding Similarity | Word similarity heatmap |

| Nearest Neighbors | Similar words for key terms |

| t-SNE Words | 2D word embedding visualization |

| t-SNE Sentences | 2D sentence embedding visualization |

| Position Encoding | Encoding method comparison |

| Model Sizes | Storage requirements |

| Performance Dashboard | Comprehensive performance overview |

Generated by Wikilangs Pipeline · 2026-03-02 12:03:50