---
language: bbc
language_name: BBC
language_family: austronesian_batak
tags:
- wikilangs
- nlp
- tokenizer
- embeddings
- n-gram
- markov
- wikipedia
- monolingual
- family-austronesian_batak
license: mit
library_name: wikilangs
pipeline_tag: feature-extraction
datasets:
- omarkamali/wikipedia-monthly
dataset_info:
  name: wikipedia-monthly
  description: Monthly snapshots of Wikipedia articles across 300+ languages
metrics:
- name: best_compression_ratio
  type: compression
  value: 4.433
- name: best_isotropy
  type: isotropy
  value: 0.8253
- name: vocabulary_size
  type: vocab
  value: 24711
generated: 2025-12-28T00:00:00.000Z
---

BBC - Wikilangs Models

Comprehensive Research Report & Full Ablation Study

This repository contains NLP models trained and evaluated by Wikilangs on the Batak Toba (language code bbc) Wikipedia. The report covers tokenizers, n-gram models, Markov chains, vocabulary statistics, and word embeddings.

📋 Repository Contents

Models & Assets

- Tokenizers (8k, 16k, 32k, 64k)

- N-gram models (2, 3, 4-gram)

- Markov chains (context of 1, 2, 3 and 4)

- Subword N-gram and Markov chains

- Embeddings in various sizes and dimensions

- Language Vocabulary

- Language Statistics

Analysis and Evaluation

- 1. Tokenizer Evaluation

- 2. N-gram Model Evaluation

- 3. Markov Chain Evaluation

- 4. Vocabulary Analysis

- 5. Word Embeddings Evaluation

- 6. Summary & Recommendations

- Metrics Glossary

- Visualizations Index

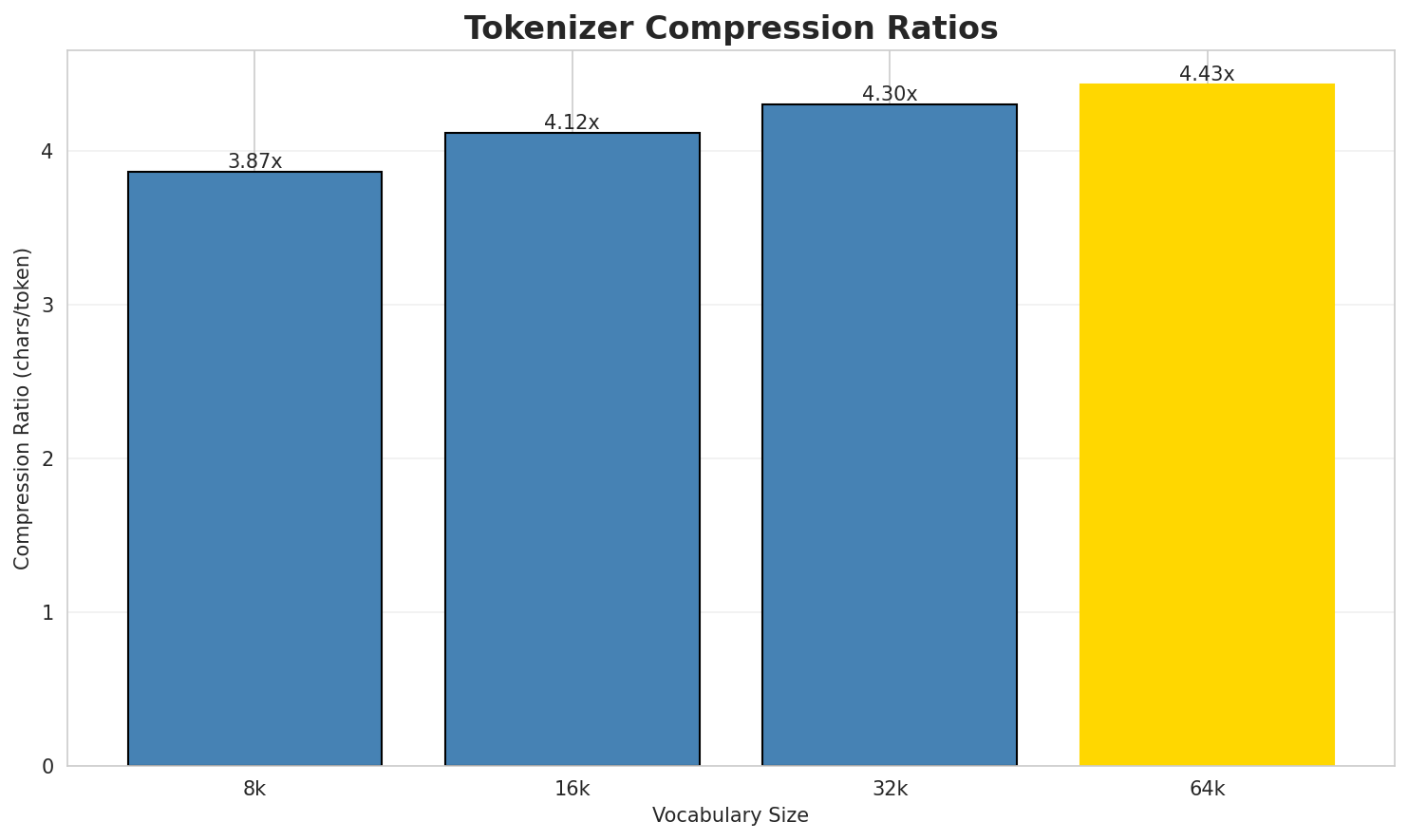

1. Tokenizer Evaluation

Results

| Vocab Size | Compression | Avg Token Len | UNK Rate | Total Tokens |

|---|---|---|---|---|

| 8k | 3.867x | 3.83 | 0.1235% | 1,466,873 |

| 16k | 4.118x | 4.08 | 0.1315% | 1,377,727 |

| 32k | 4.304x | 4.27 | 0.1375% | 1,318,061 |

| 64k | 4.433x 🏆 | 4.39 | 0.1416% | 1,279,829 |

Tokenization Examples

Below are sample sentences tokenized with each vocabulary size:

Sample 1: Panjunan i ma sada huta na adong di Kecamatan Petarukan, Kabupaten Pemalang, Pr...

| Vocab | Tokens | Count |

|---|---|---|

| 8k | ▁panj unan ▁i ▁ma ▁sada ▁huta ▁na ▁adong ▁di ▁kecamatan ... (+11 more) | 21 |

| 16k | ▁panj unan ▁i ▁ma ▁sada ▁huta ▁na ▁adong ▁di ▁kecamatan ... (+11 more) | 21 |

| 32k | ▁panj unan ▁i ▁ma ▁sada ▁huta ▁na ▁adong ▁di ▁kecamatan ... (+11 more) | 21 |

| 64k | ▁panjunan ▁i ▁ma ▁sada ▁huta ▁na ▁adong ▁di ▁kecamatan ▁petarukan ... (+10 more) | 20 |

Sample 2: `Ampapaga (Surat Batak:ᯀᯔ᯲ᯇᯇᯎ) i ma sada suansuanan na tubu di gadu ni hauma. P...`

| Vocab | Tokens | Count |

|---|---|---|

| 8k | ▁amp ap aga ▁( surat ▁batak : ᯀ ᯔ᯲ ᯇ ... (+20 more) | 30 |

| 16k | ▁amp ap aga ▁( surat ▁batak : ᯀ ᯔ᯲ᯇ ᯇ ... (+18 more) | 28 |

| 32k | ▁amp apaga ▁( surat ▁batak : ᯀ ᯔ᯲ᯇᯇᯎ ) ▁i ... (+15 more) | 25 |

| 64k | ▁ampapaga ▁( surat ▁batak : ᯀᯔ᯲ᯇᯇᯎ ) ▁i ▁ma ▁sada ... (+13 more) | 23 |

Sample 3: Sungapan i ma sada huta na adong di Kecamatan Pemalang, Kabupaten Pemalang, Pro...

| Vocab | Tokens | Count |

|---|---|---|

| 8k | ▁sung apan ▁i ▁ma ▁sada ▁huta ▁na ▁adong ▁di ▁kecamatan ... (+11 more) | 21 |

| 16k | ▁sung apan ▁i ▁ma ▁sada ▁huta ▁na ▁adong ▁di ▁kecamatan ... (+11 more) | 21 |

| 32k | ▁sung apan ▁i ▁ma ▁sada ▁huta ▁na ▁adong ▁di ▁kecamatan ... (+11 more) | 21 |

| 64k | ▁sungapan ▁i ▁ma ▁sada ▁huta ▁na ▁adong ▁di ▁kecamatan ▁pemalang ... (+10 more) | 20 |

Key Findings

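- Best Compression: 64k achieves 4.433x compression

- Lowest UNK Rate: 8k with 0.1235% unknown tokens; the rate rises with vocabulary size mainly because the total token count falls, while the implied number of unknown tokens stays near 1,812 for every size

- Trade-off: Larger vocabularies improve compression but increase model size

- Recommendation: 32k vocabulary balances compression (4.304x, within 3% of the 64k result) against vocabulary size for production use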
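As a consistency check on the Results table, the sketch below multiplies each compression ratio and UNK rate by the corresponding total token count. It assumes compression is characters per token (as defined in the glossary) and that the UNK rate is a percentage of total tokens; the helper names are illustrative and are not part of the Wikilangs pipeline.

```python
# Rows copied from the Results table:
# (vocab size, compression ratio, UNK rate in percent, total tokens).
rows = [
    ("8k", 3.867, 0.1235, 1_466_873),
    ("16k", 4.118, 0.1315, 1_377_727),
    ("32k", 4.304, 0.1375, 1_318_061),
    ("64k", 4.433, 0.1416, 1_279_829),
]


def implied_characters(compression, total_tokens):
    """Characters in the evaluation text implied by (chars per token) * tokens."""
    return compression * total_tokens


def implied_unknown_tokens(unk_rate_percent, total_tokens):
    """Absolute number of unknown tokens implied by the UNK rate."""
    return unk_rate_percent / 100.0 * total_tokens


chars = {v: implied_characters(c, t) for v, c, _, t in rows}
unks = {v: implied_unknown_tokens(u, t) for v, _, u, t in rows}

for vocab in chars:
    print(f"{vocab}: ~{chars[vocab]:,.0f} chars, ~{unks[vocab]:,.0f} unknown tokens")
```

Under those assumptions every vocabulary size implies about 5.67 million characters and about 1,812 unknown tokens, so the rising UNK rate reflects a shrinking token count rather than more unknown tokens.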

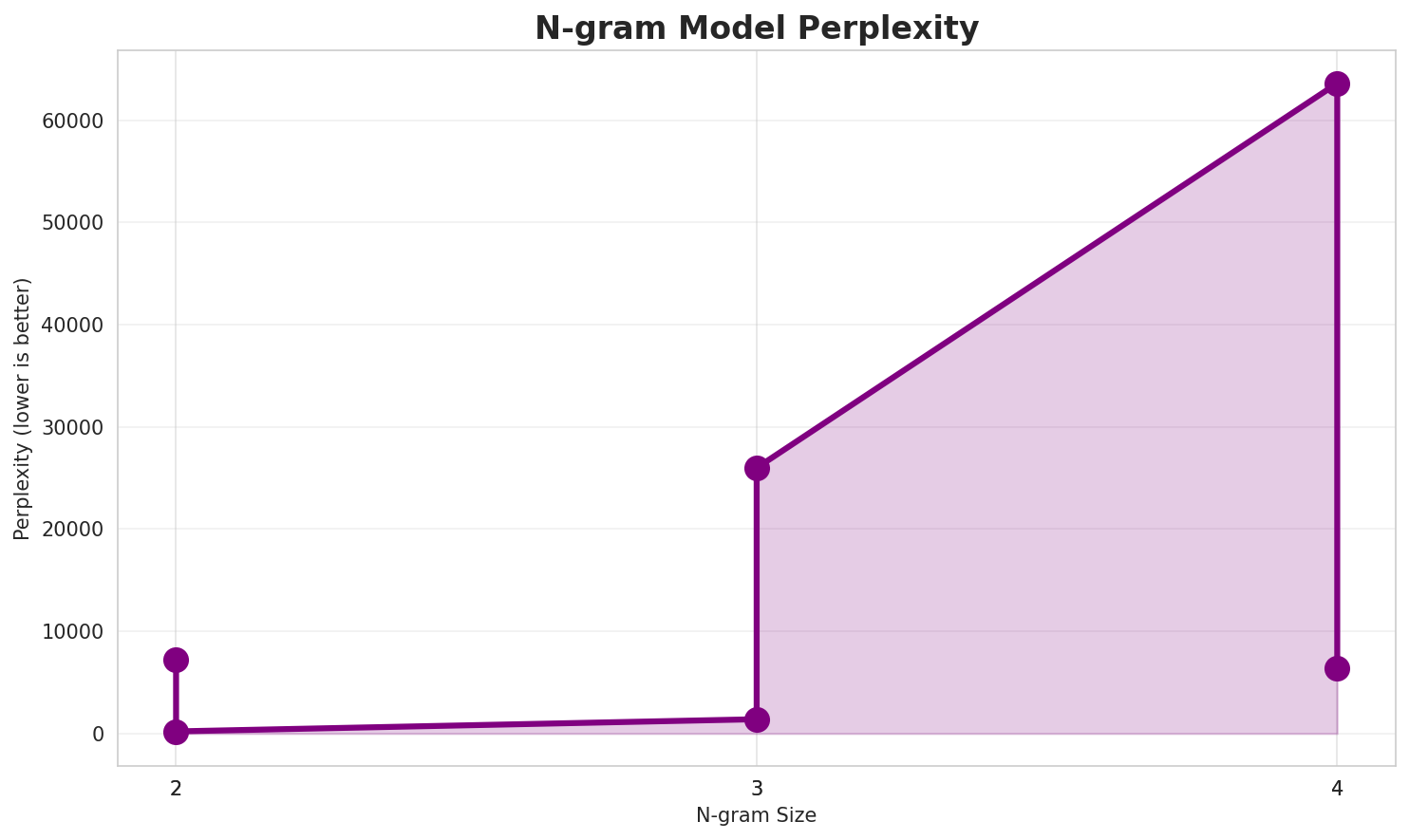

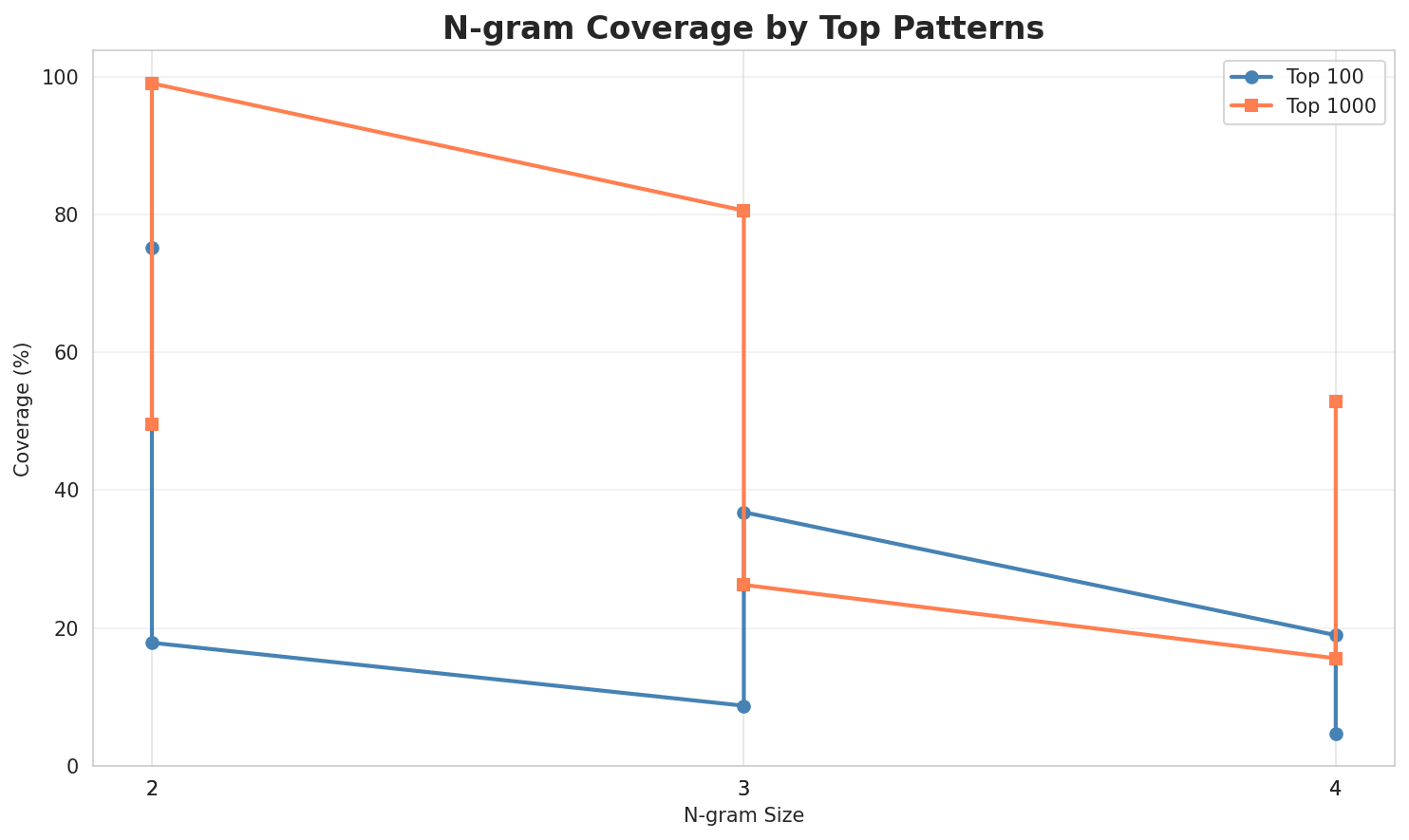

2. N-gram Model Evaluation

Results

| N-gram | Perplexity | Entropy | Unique N-grams | Top-100 Coverage | Top-1000 Coverage |

|---|---|---|---|---|---|

| 2-gram | 7,191 🏆 | 12.81 | 30,496 | 17.8% | 49.6% |

| 2-gram | 209 🏆 | 7.70 | 2,868 | 75.2% | 99.1% |

| 3-gram | 25,989 | 14.67 | 62,993 | 8.7% | 26.3% |

| 3-gram | 1,399 | 10.45 | 19,851 | 36.8% | 80.6% |

| 4-gram | 63,619 | 15.96 | 114,847 | 4.7% | 15.6% |

| 4-gram | 6,375 | 12.64 | 81,359 | 18.9% | 52.8% |

Top 5 N-grams by Size

2-grams:

| Rank | N-gram | Count |

|---|---|---|

| 1 | , jala | 8,780 |

| 2 | i , | 6,997 |

| 3 | ᯬ ᯲ | 4,573 |

| 4 | angka na | 4,409 |

| 5 | dung i | 4,328 |

3-grams:

| Rank | N-gram | Count |

|---|---|---|

| 1 | anak ni si | 1,611 |

| 2 | , angka na | 1,528 |

| 3 | . 2 : | 1,079 |

| 4 | , anak ni | 1,069 |

| 5 | ᯰ ᯄ ᯦ | 1,063 |

4-grams:

| Rank | N-gram | Count |

|---|---|---|

| 1 | , anak ni si | 906 |

| 2 | ᯀ ᯰ ᯄ ᯦ | 686 |

| 3 | ᯘ ᯪ ᯀᯉ ᯲ | 457 |

| 4 | ᯬ ᯂᯖ ᯬ ᯲ | 432 |

| 5 | on do hata ni | 421 |

Key Findings

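- Best Perplexity: 2-gram with 209 (the second 2-gram row). Each n-gram size appears in two rows that the table does not label; they may correspond to the word-level and subword models listed under Models & Assets

- Entropy Trend: Increases with larger n-grams in both sets of rows (12.81, 14.67, 15.96 and 7.70, 10.45, 12.64), so longer n-grams are less predictable by this measure

- Coverage: Top-1000 patterns cover between 15.6% and 99.1% of the corpus depending on the model; the ~53% figure is the second 4-gram row

- Recommendation: By the perplexity figures above, the 2-gram model scores best, while 4-gram offers the longest context among the trained sizes; no 5-gram model is evaluated in this report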

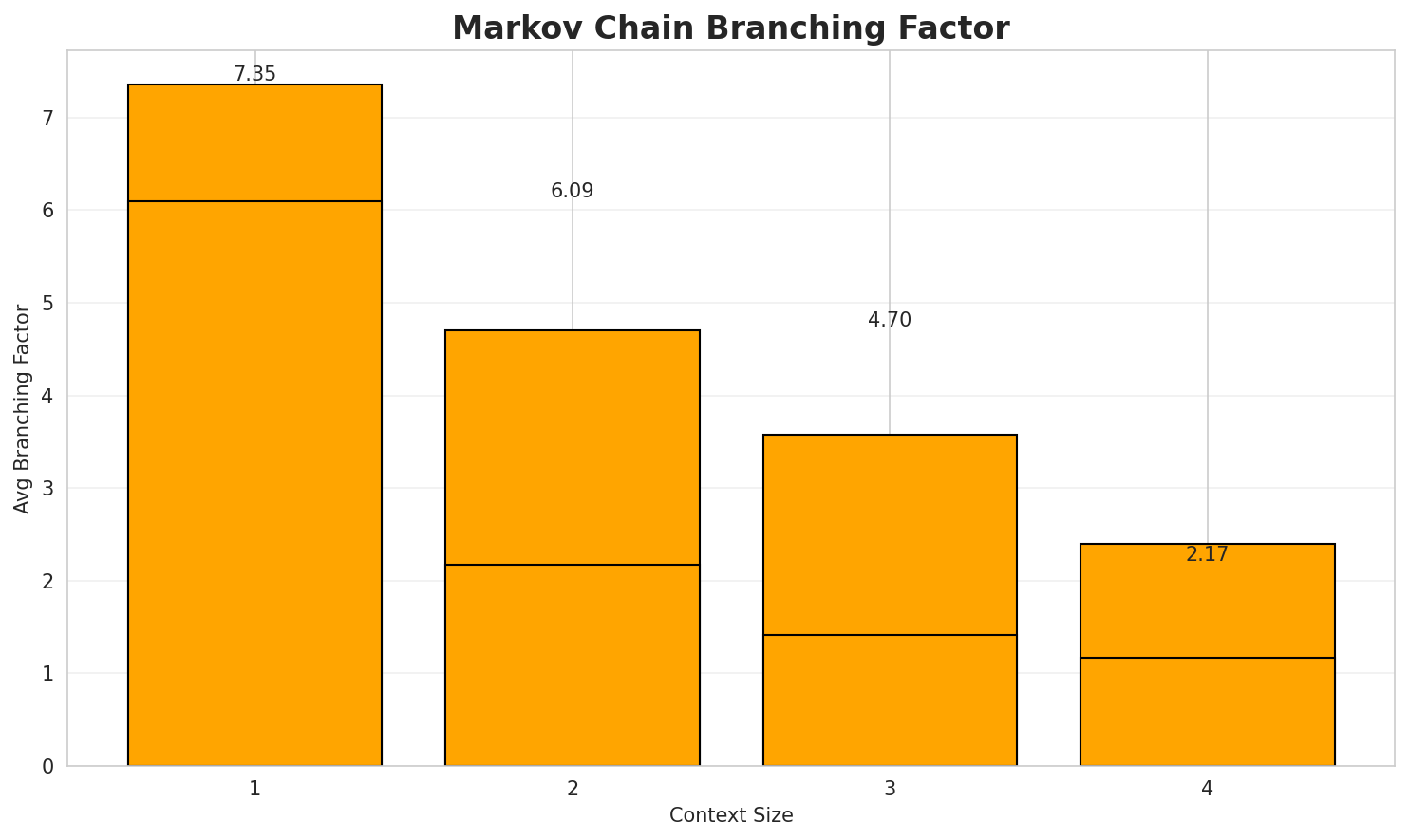

3. Markov Chain Evaluation

Results

| Context | Avg Entropy | Perplexity | Branching Factor | Unique Contexts | Predictability |

|---|---|---|---|---|---|

| 1 | 0.8281 | 1.775 | 6.09 | 50,531 | 17.2% |

| 1 | 1.0960 | 2.138 | 7.35 | 1,187 | 0.0% |

| 2 | 0.3983 | 1.318 | 2.17 | 307,642 | 60.2% |

| 2 | 0.8091 | 1.752 | 4.70 | 8,725 | 19.1% |

| 3 | 0.1982 | 1.147 | 1.41 | 667,901 | 80.2% |

| 3 | 0.7878 | 1.726 | 3.57 | 41,004 | 21.2% |

| 4 | 0.0988 🏆 | 1.071 | 1.16 | 943,940 | 90.1% |

| 4 | 0.5526 🏆 | 1.467 | 2.40 | 146,535 | 44.7% |

Generated Text Samples

Below are text samples generated from each Markov chain model:

Context Size 1:

, jala tu si wasti , jala dipadeakdeak hamu sian i jala peakkononna tu palaspalas pamurunan. 12 : songon i hatangku tu bagasan saluhut angka ari puasa bintang di jolo i: ida ma angka na margoar milo dohot paredangedangan , 2 : 35 : 25 pelean

Context Size 2:

, jala tudoshon gumora angka anak ni si joab soara ni sarune i laho ma ho ,i , naung pinauli ni tangan ni halak pangarupa umpogo upa ni pambahenannasida . 99 : 7ᯬ ᯲ ᯘᯞ ᯮ ᯂᯖ ᯮ ᯲ ᯑ ᯩ ᯇᯔ ᯲ ᯅᯂ ᯩ ᯉᯉ ᯲ ᯔ ᯉ

Context Size 3:

anak ni si ahilud do panuturi . 18 : 13 tung sura ahu parohon begu masa tu luat, angka na so bangso hian ; marhite sian bangso na asing , jala marbarita goarmu di betlehem. 2 : 15 alai tarrimas situtu ma si abner dohot di halak na marroha pangansion , ndang

Context Size 4:

, anak ni si ammiel . 3 : 6 ndada tu torop bangso , angka parhata bobang , manangᯀ ᯰ ᯄ ᯦ ᯂᯖ ᯉ ᯪ ᯘ ᯪ ᯅ ᯩ ᯀ ᯩ ᯒ ᯪ ᯑ ᯪ ᯉᯘ ᯪᯘ ᯪ ᯀᯉ ᯲ ᯂᯔ ᯪ ᯐ ᯮ ᯔ ᯬ ᯞ ᯬ ᯉ ᯪ ᯑ ᯩ ᯅᯖ 2 :

Key Findings

- Best Predictability: Context-4 with 90.1% predictability

- Branching Factor: Decreases with context size (more deterministic)

- Memory Trade-off: Larger contexts require more storage (at context size 4, 943,940 unique contexts in the first row and 146,535 in the second)

- Recommendation: Context-3 or Context-4 for text generation
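A minimal sketch of how a context-k Markov chain can be built and sampled, assuming whitespace-separated tokens. The functions `build_chain` and `generate` are hypothetical helpers written for illustration; they are not the API of the released models.

```python
import random
from collections import defaultdict


def build_chain(tokens, context_size):
    """Map each context (a tuple of `context_size` tokens) to the tokens seen after it."""
    chain = defaultdict(list)
    for i in range(len(tokens) - context_size):
        context = tuple(tokens[i:i + context_size])
        chain[context].append(tokens[i + context_size])
    return chain


def generate(chain, start, length, seed=0):
    """Extend `start` by sampling successors until `length` tokens or a dead end."""
    rng = random.Random(seed)
    out = list(start)
    k = len(start)
    while len(out) < length:
        successors = chain.get(tuple(out[-k:]))
        if not successors:
            break  # this context was never followed by anything in training
        out.append(rng.choice(successors))
    return out


# Toy training text; a real chain would be built from the full corpus.
tokens = "anak ni si joab dohot anak ni si abner".split()
chain = build_chain(tokens, context_size=2)
sample = generate(chain, start=("anak", "ni"), length=6)
print(" ".join(sample))
```

Longer contexts leave fewer successors per context, which is why the larger chains in the Results table are more deterministic and also need many more stored contexts.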

4. Vocabulary Analysis

Statistics

| Metric | Value |

|---|---|

| Vocabulary Size | 24,711 |

| Total Tokens | 1,019,541 |

| Mean Frequency | 41.26 |

| Median Frequency | 4 |

| Frequency Std Dev | 565.94 |

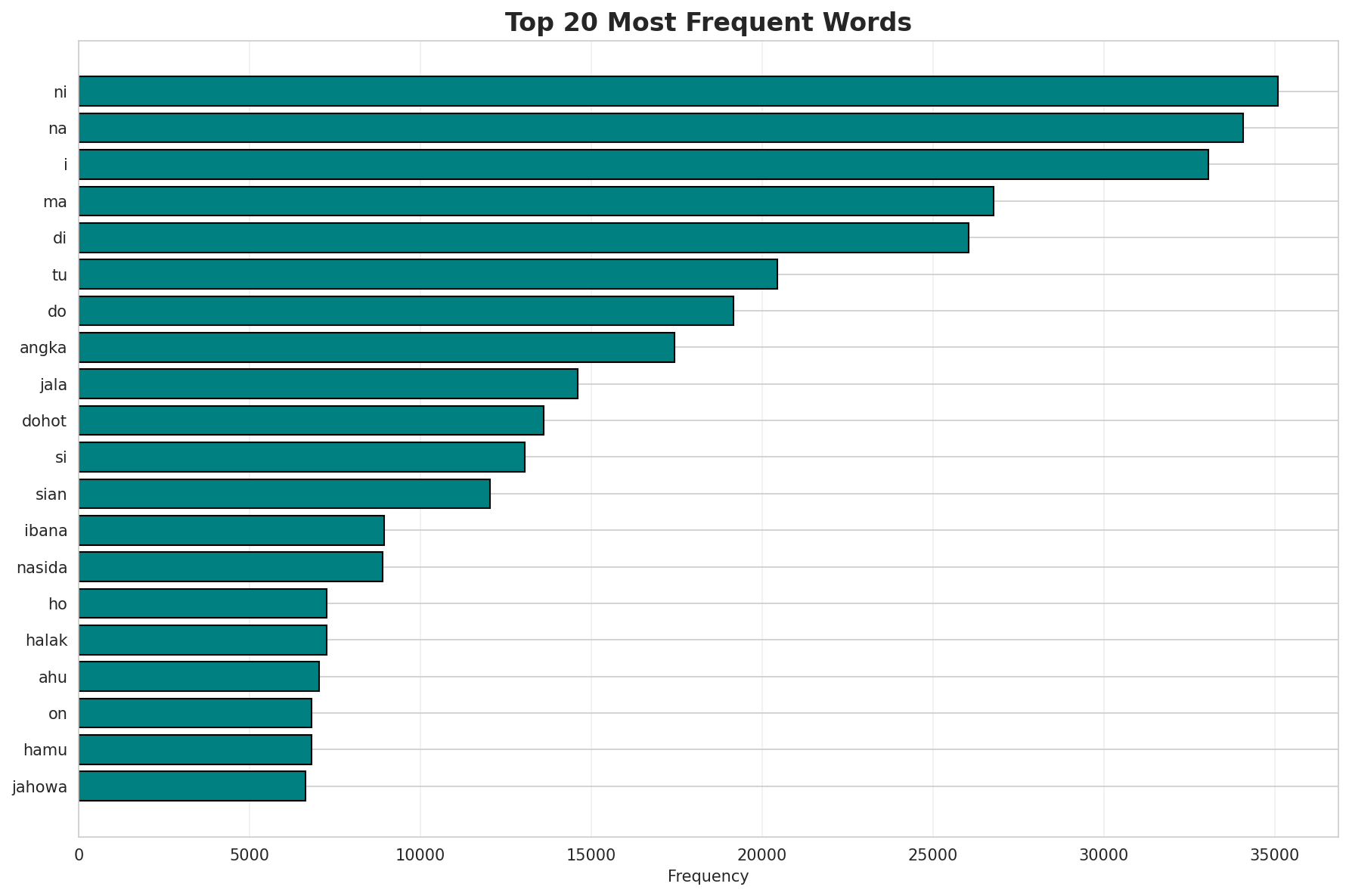

Most Common Words

| Rank | Word | Frequency |

|---|---|---|

| 1 | ni | 35,101 |

| 2 | na | 34,088 |

| 3 | i | 33,056 |

| 4 | ma | 26,769 |

| 5 | di | 26,053 |

| 6 | tu | 20,450 |

| 7 | do | 19,163 |

| 8 | angka | 17,428 |

| 9 | jala | 14,598 |

| 10 | dohot | 13,609 |

Least Common Words (from vocabulary)

| Rank | Word | Frequency |

|---|---|---|

| 1 | ᯝᯇᯞ | 2 |

| 2 | kayo | 2 |

| 3 | uttar | 2 |

| 4 | ltr | 2 |

| 5 | ebrima | 2 |

| 6 | 290px | 2 |

| 7 | td | 2 |

| 8 | height | 2 |

| 9 | 260px | 2 |

| 10 | 22251 | 2 |

Zipf's Law Analysis

| Metric | Value |

|---|---|

| Zipf Coefficient | 1.1956 |

| R² (Goodness of Fit) | 0.996705 |

| Adherence Quality | excellent |

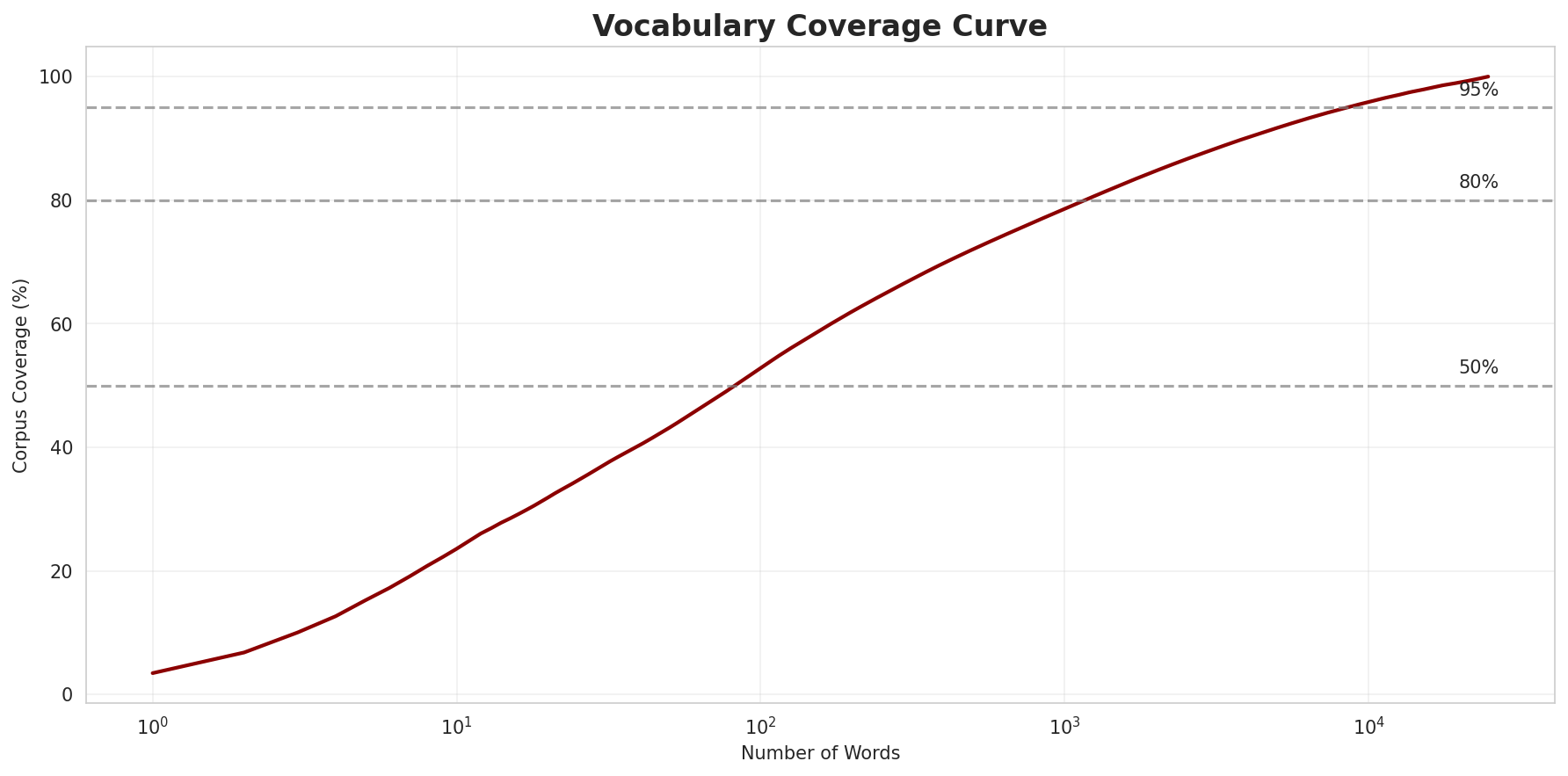

Coverage Analysis

| Top N Words | Coverage |

|---|---|

| Top 100 | 52.8% |

| Top 1,000 | 78.6% |

| Top 5,000 | 91.7% |

| Top 10,000 | 95.9% |

Key Findings

- Zipf Compliance: R²=0.9967 indicates excellent adherence to Zipf's law

- High Frequency Dominance: Top 100 words cover 52.8% of corpus

- Long Tail: 14,711 words needed for remaining 4.1% coverage

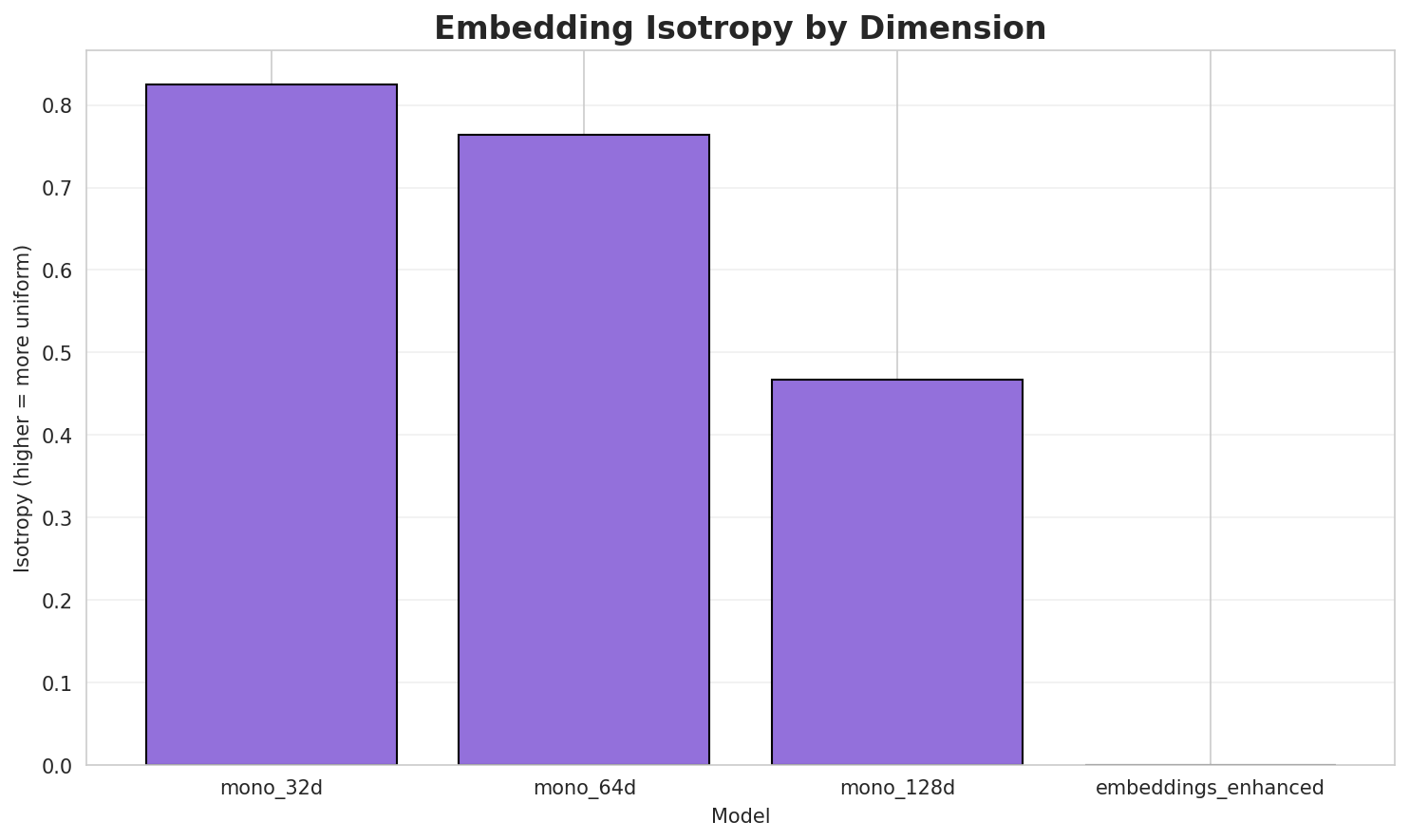

5. Word Embeddings Evaluation

Model Comparison

| Model | Vocab Size | Dimension | Avg Norm | Std Norm | Isotropy |

|---|---|---|---|---|---|

| mono_32d | 15,079 | 32 | 3.458 | 0.818 | 0.8253 🏆 |

| mono_64d | 15,079 | 64 | 3.886 | 0.738 | 0.7641 |

| mono_128d | 15,079 | 128 | 4.143 | 0.691 | 0.4668 |

| embeddings_enhanced | 0 | 0 | 0.000 | 0.000 | 0.0000 |

Key Findings

- Best Isotropy: mono_32d with 0.8253 (more uniform distribution)

- Dimension Trade-off: Higher dimensions capture more semantics but reduce isotropy

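- Vocabulary Coverage: The three mono models each cover 15,079 words; the embeddings_enhanced row contains only zeros

- Recommendation: 64d, the middle of the trained sizes (32d, 64d, 128d), for balanced semantic capture and efficiency; no 100d model is evaluated in this report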

6. Summary & Recommendations

Production Recommendations

| Component | Recommended | Rationale |

|---|---|---|

| Tokenizer | 32k BPE | Near-best compression (4.30x vs 4.43x at 64k) with low UNK rate |

| N-gram | 2-gram | Lowest perplexity (209); no 5-gram model is evaluated in this report |

| Markov | Context-4 | Highest predictability (90.1%) |

| Embeddings | 64d | Middle of the trained sizes (32d, 64d, 128d); no 100d model is evaluated in this report |

Appendix: Metrics Glossary & Interpretation Guide

This section provides definitions, intuitions, and guidance for interpreting the metrics used throughout this report.

Tokenizer Metrics

Compression Ratio

Definition: The ratio of characters to tokens (chars/token). Measures how efficiently the tokenizer represents text.

Intuition: Higher compression means fewer tokens needed to represent the same text, reducing sequence lengths for downstream models. A 3x compression means ~3 characters per token on average.

What to seek: Higher is generally better for efficiency, but extremely high compression may indicate overly aggressive merging that loses morphological information.
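The definition above can be sketched in a few lines. The token pieces are taken from the Sample 3 rows of the Tokenization Examples; the function name is an illustrative assumption, and the real pipeline may count characters differently (for example around the `▁` word-boundary marker).

```python
def compression_ratio(text, tokens):
    """Characters of raw text per token produced by the tokenizer."""
    return len(text) / len(tokens)


text = "Sungapan i ma sada huta"

# Pieces as shown for the 8k and 64k tokenizers in Sample 3.
tokens_8k = ["▁sung", "apan", "▁i", "▁ma", "▁sada", "▁huta"]
tokens_64k = ["▁sungapan", "▁i", "▁ma", "▁sada", "▁huta"]

ratio_8k = compression_ratio(text, tokens_8k)
ratio_64k = compression_ratio(text, tokens_64k)
print(f"8k: {ratio_8k:.2f} chars/token, 64k: {ratio_64k:.2f} chars/token")
```

The larger vocabulary keeps `sungapan` as one piece, so the same 23 characters need 5 tokens instead of 6.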

Average Token Length (Fertility)

Definition: Mean number of characters per token produced by the tokenizer.

Intuition: Reflects the granularity of tokenization. Longer tokens capture more context but may struggle with rare words; shorter tokens are more flexible but increase sequence length.

What to seek: Balance between 2-5 characters for most languages. Arabic/morphologically-rich languages may benefit from slightly longer tokens.

Unknown Token Rate (OOV Rate)

Definition: Percentage of tokens that map to the unknown/UNK token, indicating words the tokenizer cannot represent.

Intuition: Lower OOV means better vocabulary coverage. High OOV indicates the tokenizer encounters many unseen character sequences.

What to seek: Below 1% is excellent; below 5% is acceptable. BPE tokenizers typically achieve very low OOV due to subword fallback.

N-gram Model Metrics

Perplexity

Definition: Measures how "surprised" the model is by test data. Mathematically: 2^(cross-entropy). Lower values indicate better prediction.

Intuition: If perplexity is 100, the model is as uncertain as if choosing uniformly among 100 options at each step. A perplexity of 10 means effectively choosing among 10 equally likely options.

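What to seek: Lower is better. Conditional (next-token) perplexity usually falls as n grows because the model sees more context. The perplexities in the N-gram Results table instead rise with n, which is consistent with entropy measured over whole n-grams rather than per predicted token. Values vary widely by language and corpus size.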
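The relation perplexity = 2^entropy can be checked against the N-gram Results table. The sketch below copies the (entropy, perplexity) pairs from that table and compares them; small gaps are expected because the table rounds entropy to two decimals.

```python
# (entropy in bits, reported perplexity) for each row of the N-gram Results table.
rows = [
    (12.81, 7191),
    (7.70, 209),
    (14.67, 25989),
    (10.45, 1399),
    (15.96, 63619),
    (12.64, 6375),
]


def perplexity_from_entropy(entropy_bits):
    """Perplexity implied by an entropy given in bits."""
    return 2.0 ** entropy_bits


relative_errors = [
    abs(perplexity_from_entropy(h) - reported) / reported for h, reported in rows
]

for (h, reported), err in zip(rows, relative_errors):
    print(f"H={h:5.2f}  2^H={perplexity_from_entropy(h):9.1f}  reported={reported:6d}  err={err:.2%}")
```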

Entropy

Definition: Average information content (in bits) needed to encode the next token given the context. Related to perplexity: perplexity = 2^entropy.

Intuition: High entropy means high uncertainty/randomness; low entropy means predictable patterns. Natural language typically has entropy between 1-4 bits per character.

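What to seek: Lower entropy indicates more predictable text patterns. Conditional entropy should decrease as n-gram size increases; entropy taken over the distribution of whole n-grams, as the rising values in the N-gram Results table suggest, grows with n instead.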
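A minimal sketch of the entropy computation, assuming it is the Shannon entropy of a count distribution measured in bits. The counts below are made up for illustration.

```python
import math
from collections import Counter


def entropy_bits(counts):
    """Shannon entropy, in bits, of the distribution defined by `counts`."""
    total = sum(counts.values())
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


# Four outcomes, equally likely: maximum uncertainty for four options.
uniform = Counter({"a": 5, "b": 5, "c": 5, "d": 5})
# Same four outcomes, but one dominates: much easier to predict.
skewed = Counter({"a": 17, "b": 1, "c": 1, "d": 1})

h_uniform = entropy_bits(uniform)
h_skewed = entropy_bits(skewed)
print(f"uniform: {h_uniform:.3f} bits, skewed: {h_skewed:.3f} bits")
```

The uniform case gives 2 bits, which corresponds to a perplexity of 4, matching the "choosing among 4 equally likely options" intuition.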

Coverage (Top-K)

Definition: Percentage of corpus occurrences explained by the top K most frequent n-grams.

Intuition: High coverage with few patterns indicates repetitive/formulaic text; low coverage suggests diverse vocabulary usage.

What to seek: Depends on use case. For language modeling, moderate coverage (40-60% with top-1000) is typical for natural text.
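Top-K coverage can be sketched as follows, assuming n-grams are counted over a flat token list with a sliding window. The function names and the toy sentence are illustrative only.

```python
from collections import Counter


def ngrams(tokens, n):
    """All contiguous n-token windows of `tokens`."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


def top_k_coverage(tokens, n, k):
    """Fraction of n-gram occurrences accounted for by the k most frequent n-grams."""
    counts = Counter(ngrams(tokens, n))
    total = sum(counts.values())
    covered = sum(count for _, count in counts.most_common(k))
    return covered / total


tokens = "anak ni si a anak ni si b".split()
coverage_top2 = top_k_coverage(tokens, n=2, k=2)
coverage_all = top_k_coverage(tokens, n=2, k=100)
print(f"top-2 bigram coverage: {coverage_top2:.1%}")
```

Here the two repeated bigrams (`anak ni`, `ni si`) account for 4 of the 7 bigram occurrences.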

Markov Chain Metrics

Average Entropy

Definition: Mean entropy across all contexts, measuring average uncertainty in next-word prediction.

Intuition: Lower entropy means the model is more confident about what comes next. Context-1 has high entropy (many possible next words); Context-4 has low entropy (few likely continuations).

What to seek: Decreasing entropy with larger context sizes. Very low entropy (<0.1) indicates highly deterministic transitions.

Branching Factor

Definition: Average number of unique next tokens observed for each context.

Intuition: High branching = many possible continuations (flexible but uncertain); low branching = few options (predictable but potentially repetitive).

What to seek: Branching factor should decrease with context size. Values near 1.0 indicate nearly deterministic chains.
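A minimal sketch of the branching factor as defined above: the average number of distinct successors per context. The token sequence is a toy example and the function name is an assumption.

```python
from collections import defaultdict


def branching_factor(tokens, context_size):
    """Average number of distinct next tokens observed per context."""
    successors = defaultdict(set)
    for i in range(len(tokens) - context_size):
        context = tuple(tokens[i:i + context_size])
        successors[context].add(tokens[i + context_size])
    return sum(len(s) for s in successors.values()) / len(successors)


# Toy sequence, not real text.
tokens = "dung anak ni dung anak na jala anak ni".split()
bf1 = branching_factor(tokens, 1)
bf2 = branching_factor(tokens, 2)
bf3 = branching_factor(tokens, 3)
print(f"context 1: {bf1:.3f}, context 2: {bf2:.3f}, context 3: {bf3:.3f}")
```

Even on this toy sequence the value drops from 1.2 to about 1.17 to 1.0 as the context grows, the same direction as the Markov Results table.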

Predictability

Definition: Derived metric: (1 - normalized_entropy) × 100%. Indicates how deterministic the model's predictions are.

Intuition: 100% predictability means the next word is always certain; 0% means completely random. Real text falls between these extremes.

What to seek: Higher predictability for text generation quality, but too high (>98%) may produce repetitive output.
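The report does not state how entropy is normalized. As an observation rather than a documented formula, every row of the Markov Results table is reproduced by clamping the average entropy at 1 bit, that is, predictability = max(0, 1 - avg_entropy) × 100%. The sketch below checks this against the table values.

```python
# (average entropy, reported predictability in percent) from the Markov Results table.
rows = [
    (0.8281, 17.2),
    (1.0960, 0.0),
    (0.3983, 60.2),
    (0.8091, 19.1),
    (0.1982, 80.2),
    (0.7878, 21.2),
    (0.0988, 90.1),
    (0.5526, 44.7),
]


def predictability_percent(avg_entropy):
    """Assumed formula: entropy clamped at 1 bit, then inverted and scaled to percent."""
    return max(0.0, 1.0 - avg_entropy) * 100.0


differences = [abs(predictability_percent(h) - reported) for h, reported in rows]

for (h, reported), diff in zip(rows, differences):
    print(f"H={h:.4f}  computed={predictability_percent(h):5.2f}%  reported={reported:4.1f}%  diff={diff:.2f}")
```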

Vocabulary & Zipf's Law Metrics

Zipf's Coefficient

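Definition: The slope of the log-log plot of word frequency vs. rank. Zipf's law predicts this should be approximately -1. The Zipf Coefficient listed in the Vocabulary Analysis (1.1956) is positive, so it appears to be reported as the magnitude of this slope.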

Intuition: A coefficient near -1 indicates the corpus follows natural language patterns where a few words are very common and most words are rare.

What to seek: Values between -0.8 and -1.2 indicate healthy natural language distribution. Deviations may suggest domain-specific or artificial text.
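A sketch of how such a slope can be estimated with an ordinary least-squares fit in log-log space. The data here is synthetic (generated with a known exponent of 1.2) and the function name is hypothetical; the report's own fitting procedure is not described in the source.

```python
import math


def zipf_slope(frequencies):
    """Least-squares slope of log(frequency) against log(rank), ranks starting at 1."""
    freqs = sorted(frequencies, reverse=True)
    xs = [math.log(rank) for rank in range(1, len(freqs) + 1)]
    ys = [math.log(f) for f in freqs]
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    return sxy / sxx


# Synthetic frequencies following f(rank) = 1000 / rank^1.2 exactly.
synthetic = [1000.0 / rank ** 1.2 for rank in range(1, 501)]
slope = zipf_slope(synthetic)
print(f"fitted slope: {slope:.4f}")
```

On real counts the points scatter around the line, and R² measures how much of that variation the fit explains.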

R² (Coefficient of Determination)

Definition: Measures how well the linear fit explains the frequency-rank relationship. Ranges from 0 to 1.

Intuition: R² near 1.0 means the data closely follows Zipf's law; lower values indicate deviation from expected word frequency patterns.

What to seek: R² > 0.95 is excellent; > 0.99 indicates near-perfect Zipf adherence typical of large natural corpora.

Vocabulary Coverage

Definition: Cumulative percentage of corpus tokens accounted for by the top N words.

Intuition: Shows how concentrated word usage is. If top-100 words cover 50% of text, the corpus relies heavily on common words.

What to seek: Top-100 covering 30-50% is typical. Higher coverage indicates more repetitive text; lower suggests richer vocabulary.
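As a worked example using figures from the Vocabulary Analysis section, the sketch below computes the coverage of the ten most common words and re-derives the mean frequency from the total token count and vocabulary size. Variable names are illustrative.

```python
# Frequencies of the ten most common words, copied from the Most Common Words table.
top10 = {
    "ni": 35_101, "na": 34_088, "i": 33_056, "ma": 26_769, "di": 26_053,
    "tu": 20_450, "do": 19_163, "angka": 17_428, "jala": 14_598, "dohot": 13_609,
}
total_tokens = 1_019_541   # Total Tokens from the Statistics table
vocabulary_size = 24_711   # Vocabulary Size from the Statistics table

top10_coverage = sum(top10.values()) / total_tokens
mean_frequency = total_tokens / vocabulary_size

print(f"top-10 coverage: {top10_coverage:.1%}")
print(f"mean frequency: {mean_frequency:.2f}")
```

The ten most frequent words alone account for roughly 23.6% of all tokens, and the derived mean frequency matches the reported 41.26.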

Word Embedding Metrics

Isotropy

Definition: Measures how uniformly distributed vectors are in the embedding space. Computed as the ratio of minimum to maximum singular values.

Intuition: High isotropy (near 1.0) means vectors spread evenly in all directions; low isotropy means vectors cluster in certain directions, reducing expressiveness.

What to seek: Higher isotropy generally indicates better-quality embeddings. Values > 0.1 are reasonable; > 0.3 is good. Lower-dimensional embeddings tend to have higher isotropy.
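To illustrate the min/max singular value ratio without external libraries, the sketch below handles only 2-dimensional vectors, where the singular values follow in closed form from the 2×2 matrix AᵀA. It is a toy under stated assumptions: vectors are not mean-centered, at least one vector is non-zero, and real embeddings (32d and up) would need a general SVD routine.

```python
import math


def isotropy_2d(vectors):
    """Ratio of smallest to largest singular value of the n x 2 matrix of `vectors`."""
    # Entries of the symmetric 2x2 matrix A^T A = [[a, b], [b, d]].
    a = sum(x * x for x, _ in vectors)
    d = sum(y * y for _, y in vectors)
    b = sum(x * y for x, y in vectors)
    # Eigenvalues of a symmetric 2x2 matrix: mean +/- spread.
    mean = (a + d) / 2.0
    spread = math.sqrt(((a - d) / 2.0) ** 2 + b * b)
    largest = mean + spread
    smallest = max(mean - spread, 0.0)  # clamp tiny negative rounding error
    # Singular values are square roots of these eigenvalues.
    return math.sqrt(smallest / largest)


evenly_spread = [(1.0, 0.0), (0.0, 1.0), (-1.0, 0.0), (0.0, -1.0)]
mostly_one_axis = [(1.0, 0.0), (2.0, 0.0), (0.0, 0.1)]

iso_even = isotropy_2d(evenly_spread)
iso_skewed = isotropy_2d(mostly_one_axis)
print(f"evenly spread: {iso_even:.3f}, mostly one axis: {iso_skewed:.3f}")
```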

Average Norm

Definition: Mean magnitude (L2 norm) of word vectors in the embedding space.

Intuition: Indicates the typical "length" of vectors. Consistent norms suggest stable training; high variance may indicate some words are undertrained.

What to seek: Relatively consistent norms across models. The absolute value matters less than consistency (low std deviation).

Cosine Similarity

Definition: Measures angular similarity between vectors, ranging from -1 (opposite) to 1 (identical direction).

Intuition: Words with similar meanings should have high cosine similarity. This is the standard metric for semantic relatedness in embeddings.

What to seek: Semantically related words should score > 0.5; unrelated words should be near 0. Synonyms often score > 0.7.
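A minimal sketch of cosine similarity on plain Python lists. The vectors are arbitrary illustrations, not values from the released embeddings.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two non-zero vectors of equal length."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


same_direction = cosine_similarity([1.0, 2.0, 3.0], [2.0, 4.0, 6.0])
orthogonal = cosine_similarity([1.0, 0.0], [0.0, 1.0])
opposite = cosine_similarity([1.0, 1.0], [-1.0, -1.0])
print(same_direction, orthogonal, opposite)
```

Because the result depends only on direction, scaling a vector (as in the first pair) leaves the similarity unchanged.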

t-SNE Visualization

Definition: t-Distributed Stochastic Neighbor Embedding - a dimensionality reduction technique that preserves local structure for visualization.

Intuition: Clusters in t-SNE plots indicate groups of semantically related words. Spread indicates vocabulary diversity; tight clusters suggest semantic coherence.

What to seek: Meaningful clusters (e.g., numbers together, verbs together). Avoid over-interpreting distances - t-SNE preserves local, not global, structure.

General Interpretation Guidelines

- Compare within model families: Metrics are most meaningful when comparing models of the same type (e.g., 8k vs 64k tokenizer).

- Consider trade-offs: Better performance on one metric often comes at the cost of another (e.g., compression vs. OOV rate).

- Context matters: Optimal values depend on downstream tasks. Text generation may prioritize different metrics than classification.

- Corpus influence: All metrics are influenced by corpus characteristics. Wikipedia text differs from social media or literature.

- Language-specific patterns: Morphologically rich languages (like Arabic) may show different optimal ranges than analytic languages.

Visualizations Index

| Visualization | Description |

|---|---|

| Tokenizer Compression | Compression ratios by vocabulary size |

| Tokenizer Fertility | Average token length by vocabulary |

| Tokenizer OOV | Unknown token rates |

| Tokenizer Total Tokens | Total tokens by vocabulary |

| N-gram Perplexity | Perplexity by n-gram size |

| N-gram Entropy | Entropy by n-gram size |

| N-gram Coverage | Top pattern coverage |

| N-gram Unique | Unique n-gram counts |

| Markov Entropy | Entropy by context size |

| Markov Branching | Branching factor by context |

| Markov Contexts | Unique context counts |

| Zipf's Law | Frequency-rank distribution with fit |

| Vocab Frequency | Word frequency distribution |

| Top 20 Words | Most frequent words |

| Vocab Coverage | Cumulative coverage curve |

| Embedding Isotropy | Vector space uniformity |

| Embedding Norms | Vector magnitude distribution |

| Embedding Similarity | Word similarity heatmap |

| Nearest Neighbors | Similar words for key terms |

| t-SNE Words | 2D word embedding visualization |

| t-SNE Sentences | 2D sentence embedding visualization |

| Position Encoding | Encoding method comparison |

| Model Sizes | Storage requirements |

| Performance Dashboard | Comprehensive performance overview |

About This Project

Data Source

Models trained on wikipedia-monthly - a monthly snapshot of Wikipedia articles across 300+ languages.

Project

A project by Wikilangs - Open-source NLP models for every Wikipedia language.

Maintainer

Citation

If you use these models in your research, please cite:

@misc{wikilangs2025,
  author = {Kamali, Omar},
  title = {Wikilangs: Open NLP Models for Wikipedia Languages},
  year = {2025},
  publisher = {HuggingFace},
  url = {https://huggingface.co/wikilangs},
  institution = {Omneity Labs}
}

License

MIT License - Free for academic and commercial use.

Links

- 🌐 Website: wikilangs.org

- 🤗 Models: huggingface.co/wikilangs

- 📊 Data: wikipedia-monthly

- 👤 Author: Omar Kamali

Generated by Wikilangs Models Pipeline

Report Date: 2025-12-28 00:12:38