Pa'o Karen - Wikilangs Models

Comprehensive Research Report & Full Ablation Study

This repository contains NLP models trained and evaluated by Wikilangs, specifically on Pa'o Karen Wikipedia data. We analyze tokenizers, n-gram models, Markov chains, vocabulary statistics, and word embeddings.

📋 Repository Contents

Models & Assets

- Tokenizers (8k, 16k, 32k, 64k)

- N-gram models (2, 3, 4, 5-gram)

- Markov chains (context of 1, 2, 3, 4 and 5)

- Subword N-gram and Markov chains

- Embeddings in various sizes and dimensions (aligned and unaligned)

- Language Vocabulary

- Language Statistics

Analysis and Evaluation

- 1. Tokenizer Evaluation

- 2. N-gram Model Evaluation

- 3. Markov Chain Evaluation

- 4. Vocabulary Analysis

- 5. Word Embeddings Evaluation

- 6. Morphological Analysis (Experimental)

- 7. Summary & Recommendations

- Metrics Glossary

- Visualizations Index

1. Tokenizer Evaluation

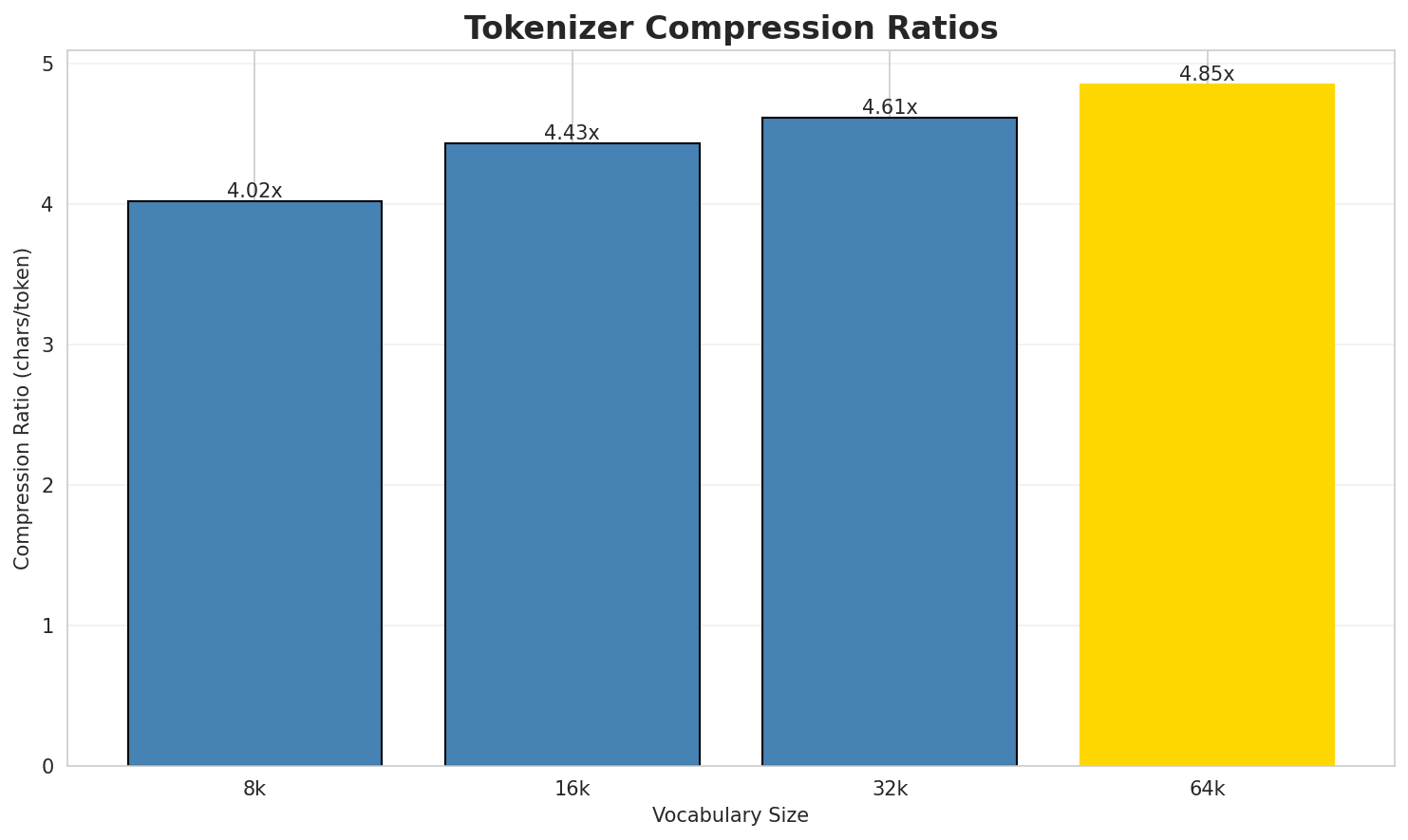

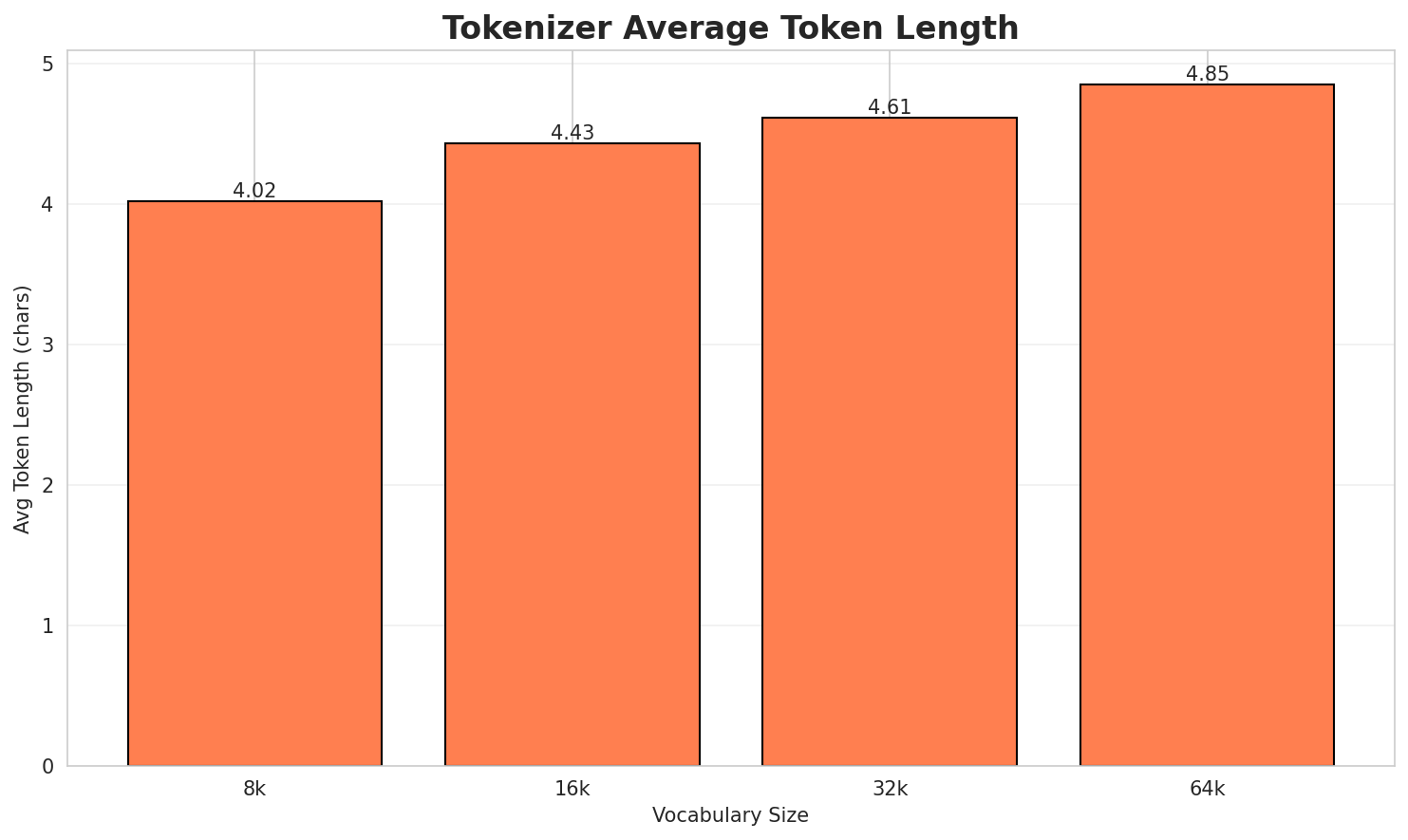

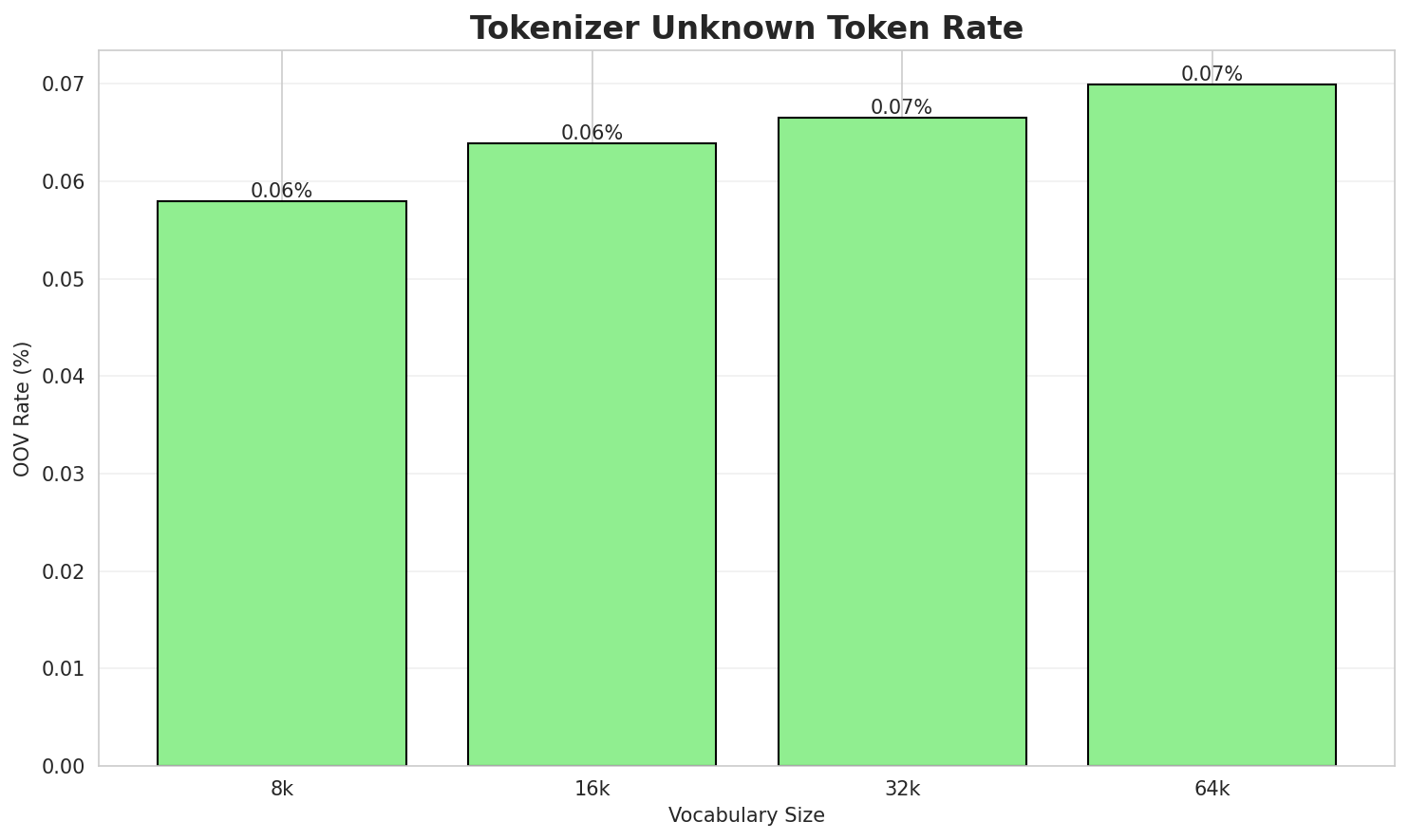

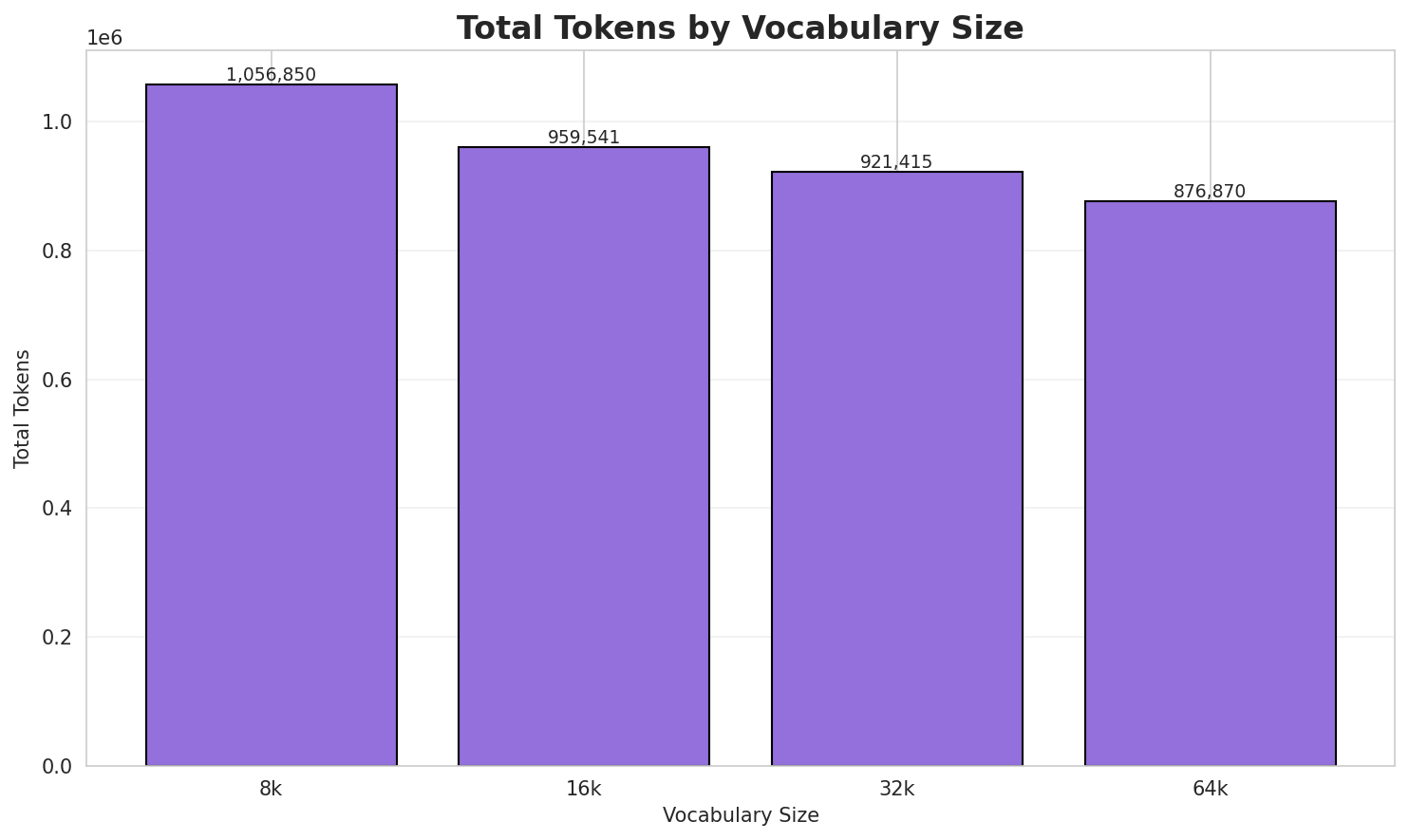

Results

| Vocab Size | Compression (chars/token) | Avg Token Len (chars) | UNK Rate | Total Tokens |
|---|---|---|---|---|
| 8k | 4.022x | 4.02 | 0.0580% | 1,056,850 |
| 16k | 4.430x | 4.43 | 0.0639% | 959,541 |
| 32k | 4.613x | 4.61 | 0.0665% | 921,415 |
| 64k | 4.848x 🏆 | 4.85 | 0.0699% | 876,870 |
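Compression is defined as characters per token. If all four tokenizers were scored on the same text, multiplying each row's compression ratio by its total token count should give roughly the same character count. The short Python check below uses only the numbers in the table, and all four rows imply about 4.25 million characters.

```python
# (vocab size, compression in chars/token, total tokens), copied from the table above
ROWS = [
    ("8k", 4.022, 1_056_850),
    ("16k", 4.430, 959_541),
    ("32k", 4.613, 921_415),
    ("64k", 4.848, 876_870),
]

def implied_chars(compression: float, total_tokens: int) -> float:
    """compression = chars / tokens, so chars = compression * tokens."""
    return compression * total_tokens

for name, ratio, tokens in ROWS:
    print(f"{name:>3}: {implied_chars(ratio, tokens):,.0f} characters")
```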

Tokenization Examples

Below are sample sentences tokenized with each vocabulary size:

Sample 1: မျန်မာခမ်းထီကိုယို တွိုင်ꩻဒေႏသတန် အဝ်ႏ ( ၇ )တွိုင်ꩻ နဝ်ꩻသွူ ။

| Vocab | Tokens | Count |
|---|---|---|
| 8k | ▁မျန်မာခမ်းထီ ကိုယို ▁တွိုင်ꩻဒေႏသတန် ▁အဝ်ႏ ▁( ▁၇ ▁) တွိုင်ꩻ ▁နဝ်ꩻ သွူ ... (+1 more) | 11 |
| 16k | ▁မျန်မာခမ်းထီ ကိုယို ▁တွိုင်ꩻဒေႏသတန် ▁အဝ်ႏ ▁( ▁၇ ▁) တွိုင်ꩻ ▁နဝ်ꩻသွူ ▁။ | 10 |
| 32k | ▁မျန်မာခမ်းထီ ကိုယို ▁တွိုင်ꩻဒေႏသတန် ▁အဝ်ႏ ▁( ▁၇ ▁) တွိုင်ꩻ ▁နဝ်ꩻသွူ ▁။ | 10 |
| 64k | ▁မျန်မာခမ်းထီ ကိုယို ▁တွိုင်ꩻဒေႏသတန် ▁အဝ်ႏ ▁( ▁၇ ▁) တွိုင်ꩻ ▁နဝ်ꩻသွူ ▁။ | 10 |

Sample 2: ဝေင်ꩻနောင်ꩻတရားယိုနဝ်ꩻ အဝ်ႏဒျာႏ မျန်မာခမ်းထီ ၊ ဖြဝ်ꩻခမ်းနယ်ႏအခဝ်နဝ်၊ တောင်ႏကီꩻခရ...

| Vocab | Tokens | Count |
|---|---|---|
| 8k | ▁ဝေင်ꩻန ောင်ꩻ တရား ယိုနဝ်ꩻ ▁အဝ်ႏဒျာႏ ▁မျန်မာခမ်းထီ ▁၊ ▁ဖြဝ်ꩻခမ်းနယ်ႏ အခဝ်နဝ်၊ ▁တောင်ႏကီꩻခရဲင်ႏ ... (+8 more) | 18 |
| 16k | ▁ဝေင်ꩻန ောင်ꩻ တရား ယိုနဝ်ꩻ ▁အဝ်ႏဒျာႏ ▁မျန်မာခမ်းထီ ▁၊ ▁ဖြဝ်ꩻခမ်းနယ်ႏ အခဝ်နဝ်၊ ▁တောင်ႏကီꩻခရဲင်ႏ ... (+8 more) | 18 |
| 32k | ▁ဝေင်ꩻန ောင်ꩻ တရား ယိုနဝ်ꩻ ▁အဝ်ႏဒျာႏ ▁မျန်မာခမ်းထီ ▁၊ ▁ဖြဝ်ꩻခမ်းနယ်ႏ အခဝ်နဝ်၊ ▁တောင်ႏကီꩻခရဲင်ႏ ... (+8 more) | 18 |
| 64k | ▁ဝေင်ꩻနောင်ꩻ တရားယိုနဝ်ꩻ ▁အဝ်ႏဒျာႏ ▁မျန်မာခမ်းထီ ▁၊ ▁ဖြဝ်ꩻခမ်းနယ်ႏ အခဝ်နဝ်၊ ▁တောင်ႏကီꩻခရဲင်ႏ ▁၊ ▁ဝေင်ꩻနယ်ႏပ ... (+6 more) | 16 |

Sample 3: အမုဲင် ခမ်းထီ ကသှိုပ်စဒါႏ ငဝ်းလဝ်းနီꩻ ၃၅လာအို ၉၄ ထူႏတောမ်

| Vocab | Tokens | Count |
|---|---|---|
| 8k | ▁အမုဲင် ▁ခမ်းထီ ▁က သ ှို ပ် စဒါႏ ▁ငဝ်း လ ဝ်း ... (+6 more) | 16 |
| 16k | ▁အမုဲင် ▁ခမ်းထီ ▁ကသ ှိုပ် စဒါႏ ▁ငဝ်း လဝ်း နီꩻ ▁၃၅ လာအို ... (+3 more) | 13 |
| 32k | ▁အမုဲင် ▁ခမ်းထီ ▁ကသှိုပ်စဒါႏ ▁ငဝ်းလဝ်းနီꩻ ▁၃၅လာအို ▁၉ ၄ ▁ထူႏတောမ် | 8 |
| 64k | ▁အမုဲင် ▁ခမ်းထီ ▁ကသှိုပ်စဒါႏ ▁ငဝ်းလဝ်းနီꩻ ▁၃၅လာအို ▁၉၄ ▁ထူႏတောမ် | 7 |

Key Findings

- Best Compression: 64k achieves 4.848x compression

- Lowest UNK Rate: 8k with 0.0580% unknown tokens

- Trade-off: Larger vocabularies improve compression but increase model size

- Recommendation: 32k vocabulary provides optimal balance for production use

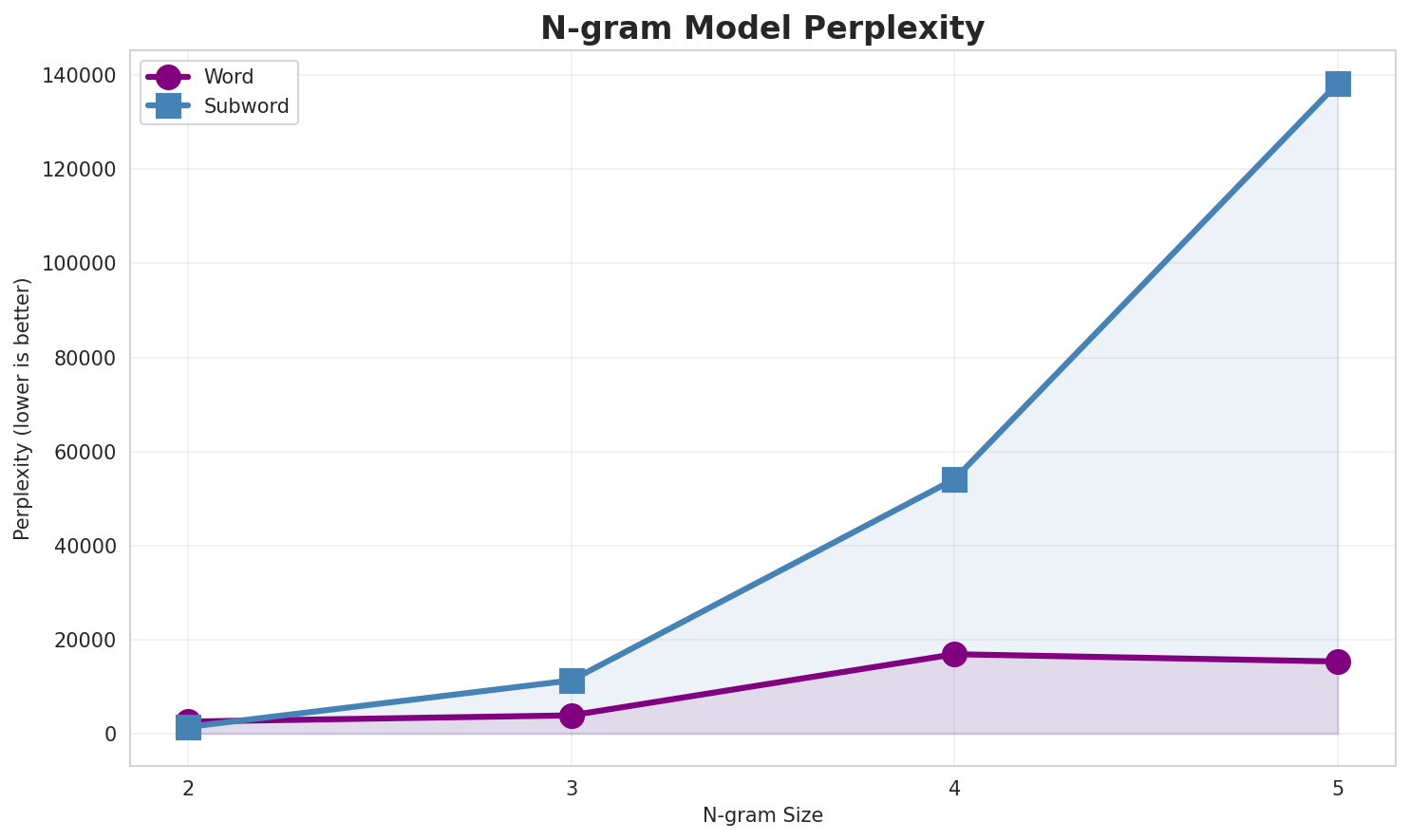

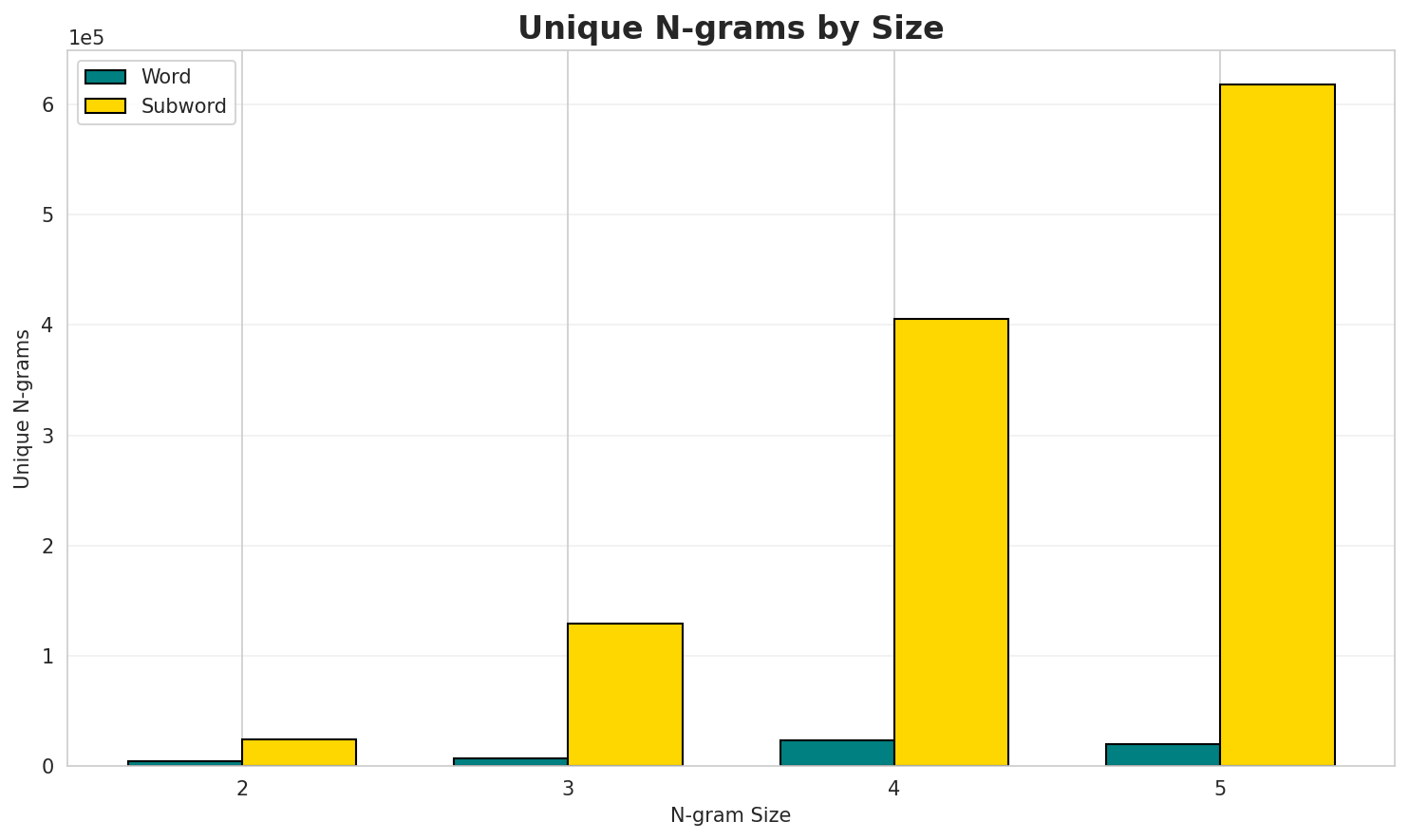

2. N-gram Model Evaluation

Results

| N-gram | Variant | Perplexity | Entropy (bits) | Unique N-grams | Top-100 Coverage | Top-1000 Coverage |
|---|---|---|---|---|---|---|
| 2-gram | Word | 2,539 | 11.31 | 4,306 | 21.2% | 57.9% |
| 2-gram | Subword | 1,398 🏆 | 10.45 | 24,285 | 42.8% | 77.0% |
| 3-gram | Word | 3,862 | 11.92 | 6,537 | 18.8% | 47.3% |
| 3-gram | Subword | 11,299 | 13.46 | 129,572 | 19.0% | 45.1% |
| 4-gram | Word | 16,871 | 14.04 | 23,296 | 9.0% | 22.0% |
| 4-gram | Subword | 54,089 | 15.72 | 405,489 | 10.1% | 25.8% |
| 5-gram | Word | 15,317 | 13.90 | 19,946 | 8.7% | 21.0% |
| 5-gram | Subword | 138,288 | 17.08 | 617,898 | 5.8% | 16.6% |
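The glossary defines perplexity as 2 raised to the entropy. As a quick sanity check on the values copied from the table above, 2^H reproduces each reported perplexity to within half a percent, which is consistent with the entropy column being rounded to two decimals.

```python
# (model, reported perplexity, reported entropy in bits), copied from the table above
ROWS = [
    ("2-gram word", 2_539, 11.31),
    ("2-gram subword", 1_398, 10.45),
    ("3-gram word", 3_862, 11.92),
    ("3-gram subword", 11_299, 13.46),
    ("4-gram word", 16_871, 14.04),
    ("4-gram subword", 54_089, 15.72),
    ("5-gram word", 15_317, 13.90),
    ("5-gram subword", 138_288, 17.08),
]

def relative_error(perplexity: float, entropy_bits: float) -> float:
    """Relative gap between 2**entropy and the reported perplexity."""
    return abs(2 ** entropy_bits - perplexity) / perplexity

for name, ppl, h in ROWS:
    print(f"{name:<15} 2^H = {2 ** h:>11,.0f}   reported = {ppl:>9,}   gap = {relative_error(ppl, h):.2%}")
```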

Top 5 N-grams by Size

2-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | နဝ်ꩻ အဝ်ႏဒျာႏ | 719 |
| 2 | အဝ်ႏဒျာႏ မျန်မာခမ်းထီ | 691 |
| 3 | ခရိစ်နေင်ႏ ဗာႏ | 403 |
| 4 | ဗာႏ စာႏရင်ꩻအလꩻ | 320 |
| 5 | မျန်မာခမ်းထီ အခဝ်ထာႏဝ | 295 |

3-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | နဝ်ꩻ အဝ်ႏဒျာႏ မျန်မာခမ်းထီ | 624 |
| 2 | အဝ်ႏဒျာႏ မျန်မာခမ်းထီ အခဝ်ထာႏဝ | 295 |
| 3 | ခရိစ်နေင်ႏ ဗာႏ စာႏရင်ꩻအလꩻ | 261 |
| 4 | ဗာႏ စာႏရင်ꩻအလꩻ ဝေင်ꩻကိုနဝ်ꩻ | 161 |
| 5 | ထာꩻထွာဖုံႏ လွူးဖွာꩻသားဖုံႏ သီမားသားဖုံႏ | 153 |

4-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | နဝ်ꩻ အဝ်ႏဒျာႏ မျန်မာခမ်းထီ အခဝ်ထာႏဝ | 282 |
| 2 | ခရိစ်နေင်ႏ ဗာႏ စာႏရင်ꩻအလꩻ ဝေင်ꩻကိုနဝ်ꩻ | 161 |
| 3 | လွူးဖွာꩻသားဖုံႏ သီမားသားဖုံႏ မွူးနီꩻအုံပဆားနီꩻဖုံႏတောမ်ႏ အထွတ်အမျတ်မွူးနီꩻဖုံႏ | 153 |
| 4 | သီမားသားဖုံႏ မွူးနီꩻအုံပဆားနီꩻဖုံႏတောမ်ႏ အထွတ်အမျတ်မွူးနီꩻဖုံႏ အာႏကွိုꩻ | 153 |
| 5 | ထာꩻထွာဖုံႏ လွူးဖွာꩻသားဖုံႏ သီမားသားဖုံႏ မွူးနီꩻအုံပဆားနီꩻဖုံႏတောမ်ႏ | 153 |

5-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | လွူးဖွာꩻသားဖုံႏ သီမားသားဖုံႏ မွူးနီꩻအုံပဆားနီꩻဖုံႏတောမ်ႏ အထွတ်အမျတ်မွူးနီꩻဖုံႏ အာႏကွိုꩻ | 153 |
| 2 | ထာꩻထွာဖုံႏ လွူးဖွာꩻသားဖုံႏ သီမားသားဖုံႏ မွူးနီꩻအုံပဆားနီꩻဖုံႏတောမ်ႏ အထွတ်အမျတ်မွူးနီꩻဖုံႏ | 153 |
| 3 | သွူ ထာꩻထွာဖုံႏ လွူးဖွာꩻသားဖုံႏ သီမားသားဖုံႏ မွူးနီꩻအုံပဆားနီꩻဖုံႏတောမ်ႏ | 151 |
| 4 | ခရိစ်နေင်ႏ ဗာႏ စာႏရင်ꩻအလꩻ ဝေင်ꩻကိုနဝ်ꩻ လိုꩻဖြာꩻခြွဉ်းအဝ်ႏ | 131 |
| 5 | အဝ်ႏသော့ꩻနဝ်ꩻသွူ ခရိစ်နေင်ႏ ဗာႏ စာႏရင်ꩻအလꩻ ဝေင်ꩻကိုနဝ်ꩻ | 111 |

2-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | ာ ႏ | 142,384 |
| 2 | ၊ _ | 135,380 |
| 3 | ꩻ _ | 126,353 |
| 4 | ဝ် ꩻ | 102,695 |
| 5 | င် ꩻ | 96,805 |

3-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | န ဝ် ꩻ | 77,014 |
| 2 | ဝ် ꩻ _ | 57,567 |
| 3 | ꩻ ၊ _ | 31,811 |
| 4 | သွူ ။ _ | 31,570 |
| 5 | ႏ ၊ _ | 30,928 |

4-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | န ဝ် ꩻ _ | 45,450 |
| 2 | နေ ာ ဝ် ꩻ | 23,553 |
| 3 | ꩻ သွူ ။ _ | 18,993 |
| 4 | ꩻ န ဝ် ꩻ | 18,023 |
| 5 | ႏ န ဝ် ꩻ | 17,057 |

5-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | ဝ် ꩻ သွူ ။ _ | 15,761 |
| 2 | ꩻ န ဝ် ꩻ _ | 12,522 |
| 3 | နေ ာ ဝ် ꩻ _ | 11,865 |
| 4 | ႏ န ဝ် ꩻ _ | 10,503 |
| 5 | န ဝ် ꩻ သွူ ။ | 10,311 |
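Rankings like these come from counting contiguous windows of tokens. The sketch below shows the idea for word n-grams. It assumes whitespace tokenization and a single token stream. The pipeline's actual tokenization, its sentence-boundary handling, and its subword units (the `_` above appears to mark word boundaries) are not documented here.

```python
from collections import Counter
from typing import List, Tuple

def ngram_counts(tokens: List[str], n: int) -> Counter:
    """Count every contiguous n-gram (as a tuple) in a token sequence."""
    # zip(tokens[0:], tokens[1:], ..., tokens[n-1:]) yields sliding windows of length n
    return Counter(zip(*(tokens[i:] for i in range(n))))

def top_ngrams(tokens: List[str], n: int, k: int = 5) -> List[Tuple[Tuple[str, ...], int]]:
    """Return the k most frequent n-grams together with their counts."""
    return ngram_counts(tokens, n).most_common(k)

# Hypothetical toy input; real input would be the tokenized Wikipedia text.
tokens = "a b a b c a b".split()
print(top_ngrams(tokens, 2, k=2))
```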

Key Findings

- Best Perplexity: 2-gram (subword) with 1,398

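- Entropy Trend: Generally increases with n-gram order (word: 11.31 bits at 2-gram to 13.90 bits at 5-gram; subword: 10.45 to 17.08 bits), reflecting sparser higher-order counts on this corpus

- Coverage: Top-1000 coverage falls from 57.9% (word) and 77.0% (subword) for 2-grams to 21.0% (word) and 16.6% (subword) for 5-grams

- Recommendation: 2-gram (subword) gives the lowest perplexity here; higher orders are too sparse on this corpus to improve prediction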

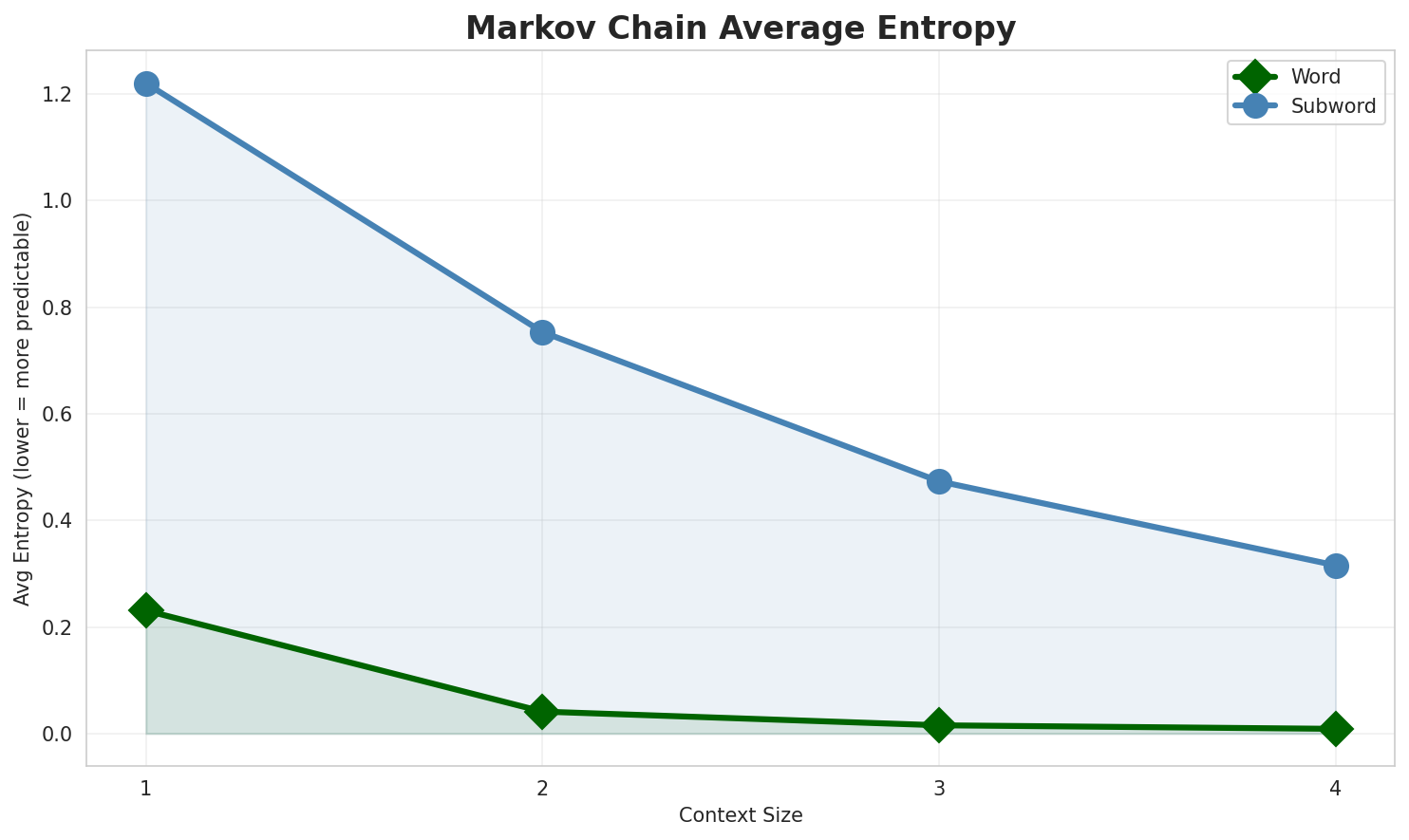

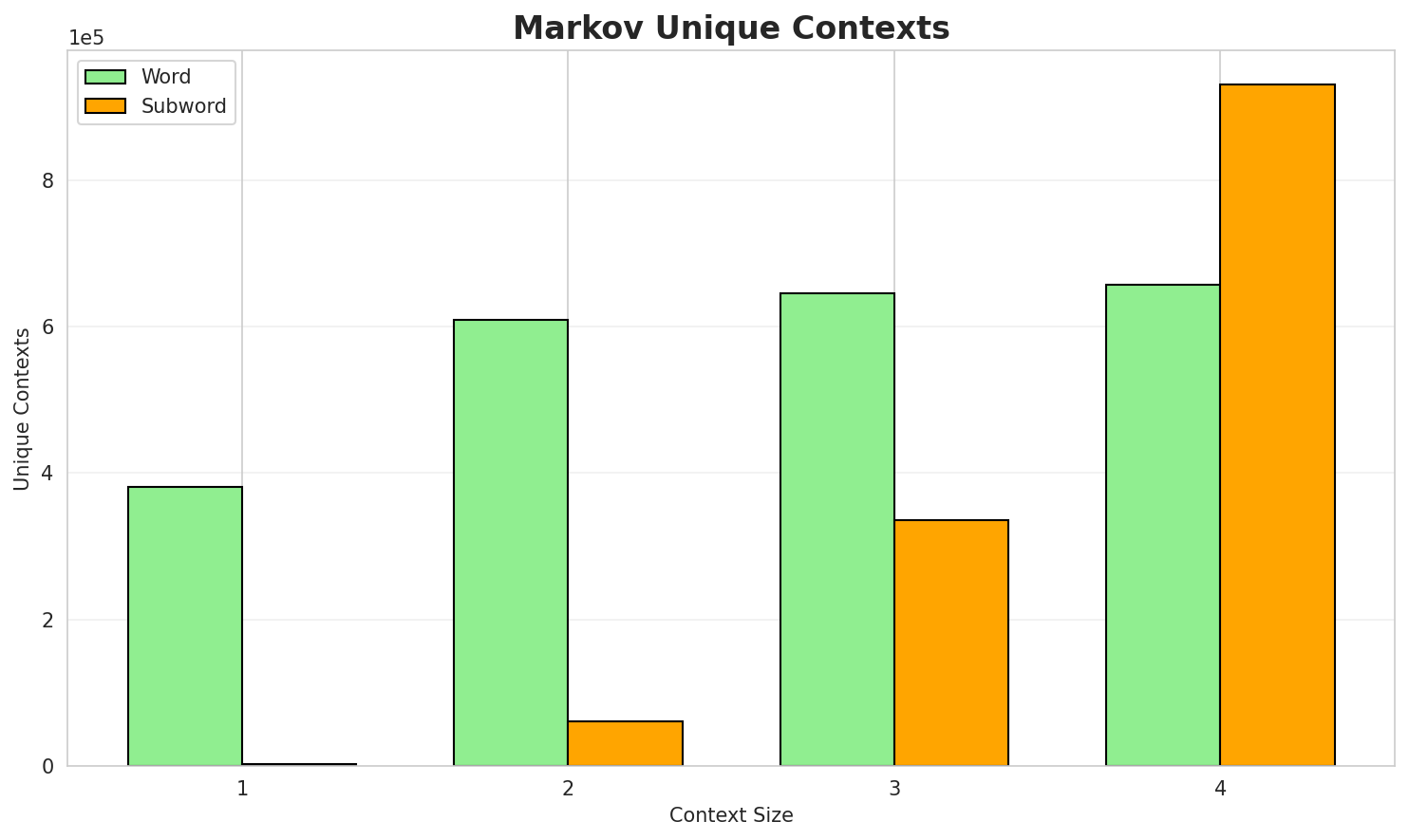

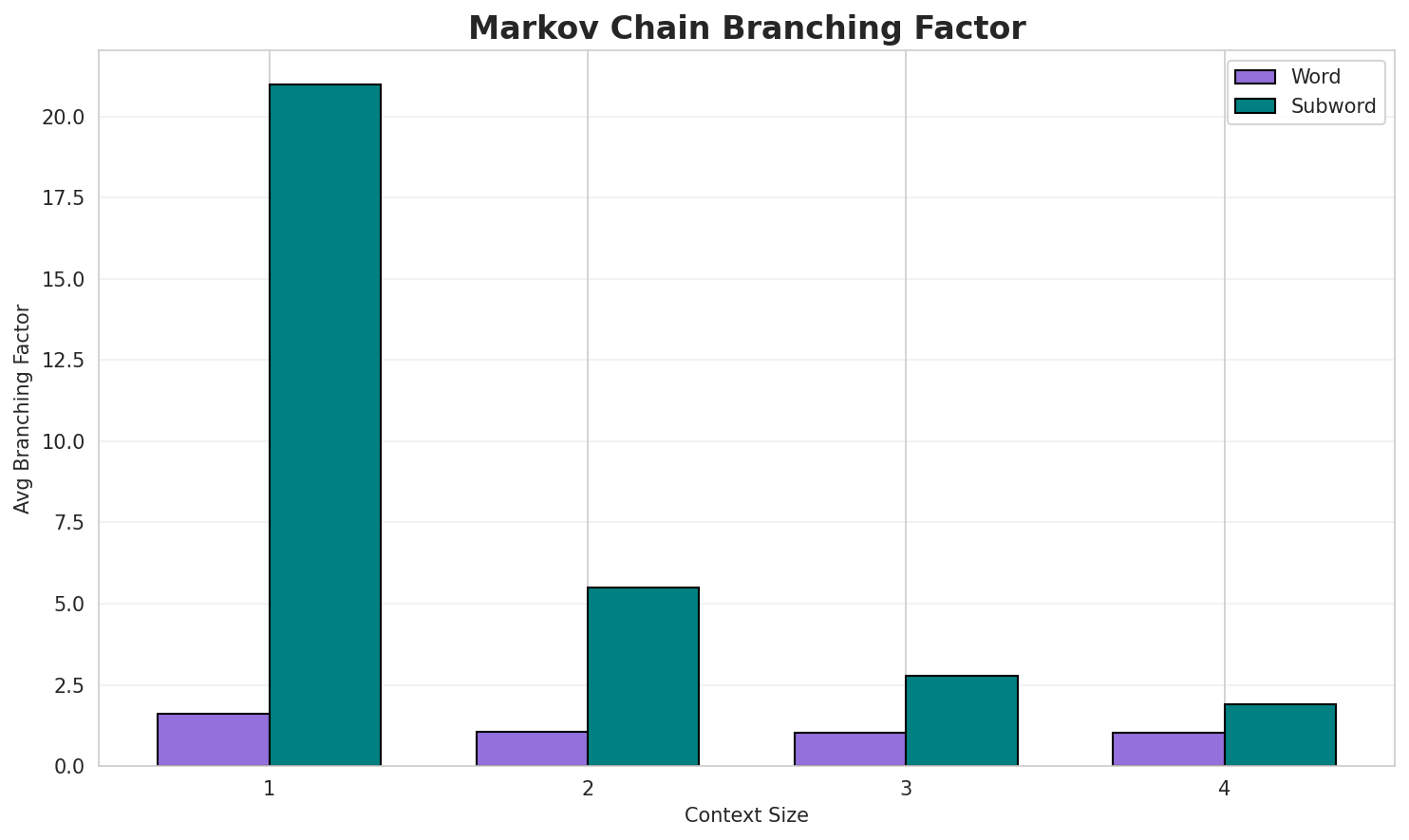

3. Markov Chain Evaluation

Results

| Context | Variant | Avg Entropy (bits) | Perplexity | Branching Factor | Unique Contexts | Predictability |
|---|---|---|---|---|---|---|
| 1 | Word | 0.2308 | 1.173 | 1.60 | 381,069 | 76.9% |
| 1 | Subword | 1.2202 | 2.330 | 20.98 | 2,909 | 0.0% |
| 2 | Word | 0.0412 | 1.029 | 1.06 | 609,269 | 95.9% |
| 2 | Subword | 0.7534 | 1.686 | 5.49 | 61,020 | 24.7% |
| 3 | Word | 0.0155 | 1.011 | 1.02 | 645,305 | 98.5% |
| 3 | Subword | 0.4733 | 1.388 | 2.77 | 335,231 | 52.7% |
| 4 | Word | 0.0088 🏆 | 1.006 | 1.01 | 656,933 | 99.1% |
| 4 | Subword | 0.3156 | 1.245 | 1.90 | 930,014 | 68.4% |
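Two columns in the table above can be recovered from the average entropy H. Perplexity matches 2^H, and the reported predictability matches max(0, 1 - H) × 100%. In other words, the "normalized entropy" in the glossary appears to be the raw average entropy clipped at zero. That second relationship is inferred from the numbers and is not documented by the report. The check below uses the table's values:

```python
# (context, variant, avg entropy H in bits, reported perplexity, reported predictability %)
ROWS = [
    (1, "word", 0.2308, 1.173, 76.9),
    (1, "subword", 1.2202, 2.330, 0.0),
    (2, "word", 0.0412, 1.029, 95.9),
    (2, "subword", 0.7534, 1.686, 24.7),
    (3, "word", 0.0155, 1.011, 98.5),
    (3, "subword", 0.4733, 1.388, 52.7),
    (4, "word", 0.0088, 1.006, 99.1),
    (4, "subword", 0.3156, 1.245, 68.4),
]

def implied_perplexity(h: float) -> float:
    return 2 ** h

def implied_predictability(h: float) -> float:
    # Inferred from the table, not a documented formula: 1 - H, clipped at 0, as a percentage
    return max(0.0, 1.0 - h) * 100

for ctx, variant, h, ppl, pred in ROWS:
    print(f"ctx={ctx} {variant:<7} 2^H={implied_perplexity(h):.4f} (reported {ppl})  "
          f"pred={implied_predictability(h):.2f}% (reported {pred}%)")
```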

Generated Text Samples (Word-based)

Below are text samples generated from each word-based Markov chain model:

Context Size 1:

- ၂ ဖြုံႏလဲ့ အဝ်ႏသွူ ခမ်းတွူးကောင်ꩻယို အမိဉ်ꩻနဝ်ꩻ ဖန်ဖေႏ စဲဉ်ႏဖေႏဒျာႏလွဉ်းလွဉ်းသွူ ယိုလွုမ်ꩻမကာႏ ဗွေႏဗ...

- ၃ ပွုမ်ႏယိုသွူ က အဟံ ခွေနဝ်ꩻ ကောလက္ခံႏသား ၂ ၃ ပေါႏပါႏဠိဒျာႏနဝ်ꩻ သော့ꩻတောဝ်းအမုဲင် ဟော်ꩻဖတ်ဗော့ꩻ ပါႏဠ...

- ၁ ခြပ် စီ သွံဆီသူ တနတ်တလီꩻ air combat information management unit mimu ဝေင်ꩻနယ်ႏရွုမ်ꩻဖုံႏနဝ်ꩻ အဝ်ႏဒ...

Context Size 2:

- နဝ်ꩻ အဝ်ႏဒျာႏ မျန်မာခမ်းထီ ဖြဝ်ꩻခမ်းနယ်ႏအခဝ်ကွဉ်ႏ မွိုင်ꩻတုံခရဲင်ႏ ဝေင်ꩻနယ်ႏမွိုင်ꩻတုံကို ကပါဒါႏ ဝေင...

- အဝ်ႏဒျာႏ မျန်မာခမ်းထီ အခဝ်ထာႏဝ ပဂိုꩻတွိုင်ꩻဒေႏသတန် အခဝ်ကွဉ်ႏထင်ꩻ တောင်ႏအူခရဲင်ႏ ဝေင်ꩻနယ်ႏဖျူးကို ကပါ...

- ခရိစ်နေင်ႏ ဗာႏ စဲ့ꩻအစိုႏရစိုးကို ကဗွောင်လွေꩻဒါႏ ခမ်းလင်လစ်ꩻခမ်းတောမ်ႏ ဖြေꩻစာကွန်ႏ လွယ်စယ်ခမ်းကူဂဲတ်လ...

Context Size 3:

- နဝ်ꩻ အဝ်ႏဒျာႏ မျန်မာခမ်းထီ အခဝ်ကွဉ်ႏထင်ꩻ ဖြဝ်ꩻခမ်းနယ်ႏ အခဝ်ထင်ꩻ ဟိုပန်ခရဲင်ႏ ဝနမ်းပဲင်ႏအိုပ်ချုတ်ခွင...

- အဝ်ႏဒျာႏ မျန်မာခမ်းထီ အခဝ်ထာႏဝ နေပီဒေါ်ခမ်းခြွဉ်းဗူႏဟံႏနယ်ႏ လယ်ဝွေးခရဲင်ႏကို ကအဝ်ႏပါသော့ꩻဒါႏ ဝေင်ꩻနယ...

- ခရိစ်နေင်ႏ ဗာႏ စာႏရင်ꩻအလꩻ ဝေင်ꩻကိုနဝ်ꩻ လိုꩻဖြာꩻအဝ်ႏ ၁၁ ၃၀၅ ဖြာꩻသွူ အဝ်ႏဒျာႏ ထာဝယ် မေက် ကာꩻတဖူꩻတန်လော...

Context Size 4:

- နဝ်ꩻ အဝ်ႏဒျာႏ မျန်မာခမ်းထီ အခဝ်ထာႏဝ ဧရာႏဝတီႏတွိုင်ꩻဒေႏသတန် မအူပိဉ်ခရဲင်ႏ ကို ကပါဒါႏ ဝေင်ꩻနယ်ႏတဖြုံႏဒ...

- ခရိစ်နေင်ႏ ဗာႏ စာႏရင်ꩻအလꩻ ဝေင်ꩻကိုနဝ်ꩻ လိုꩻဖြာꩻအဝ်ႏ ၁၀ ၄၄၃ ဖြာꩻသွူ အဉ်းမယို ကရီးခါနဝ်ꩻ ထွာဒျာႏ ဒုံအဉ...

- ထာꩻထွာဖုံႏ လွူးဖွာꩻသားဖုံႏ သီမားသားဖုံႏ မွူးနီꩻအုံပဆားနီꩻဖုံႏတောမ်ႏ အထွတ်အမျတ်မွူးနီꩻဖုံႏ အာႏကွိုꩻ

Generated Text Samples (Subword-based)

Below are text samples generated from each subword-based Markov chain model:

Context Size 1:

_တသီႏပေႏစမုံးဝါꩻစွဉ်းထဲꩻနဝ်ႏပုဂ္ဂိုလ်ႏ_ဇာဝ်ꩻသားႏအခြာဏ်ႏတောယိုတဲ့_ဟောႏရာ

Context Size 2:

ာႏနဝ်ꩻ_အောဝ်ꩻသွူကျောင်ႏဒျာ၊_တွိုက်_ကြွဲႏ_ဖန်_သွူ။_ဓမ္မပꩻ_ခင်ႏငံႏ_မန်း"ကို_ကတဲမ်

Context Size 3:

နဝ်ꩻသွူ။_နီကွဉ်ကꩻမွိုန်း။_၂။ဝ်ꩻ_အံႏဖြာꩻနောဝ်ꩻ_ပအိုဝ်ႏယိုꩻ၊_မဲ့သျင်ႏကျင်ꩻ။_နီလိတ်_အဝ်

Context Size 4:

နဝ်ꩻ_ဟဲ့ꩻဗာႏသꩻ_ကွား_ကွန်ပေနောဝ်ꩻ_ဘဝပေါင်ꩻ_ရွဉ်ခန်ဗီႏ_ꩻသွူ။_ပအိုဝ်ႏစွိုးခွိုꩻသီး_သွိုန်ႏသ
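Samples like the ones above can be produced by repeatedly looking up the last k tokens and sampling a successor in proportion to how often it followed that context in training. A minimal word-level sketch follows. The whitespace tokenization, the seed, and the stop-at-dead-end rule are assumptions, not the pipeline's documented decoding procedure.

```python
import random
from collections import Counter, defaultdict
from typing import Dict, List, Tuple

def build_chain(tokens: List[str], k: int) -> Dict[Tuple[str, ...], Counter]:
    """Map each k-token context to a Counter of the tokens that followed it."""
    chain: Dict[Tuple[str, ...], Counter] = defaultdict(Counter)
    for i in range(len(tokens) - k):
        chain[tuple(tokens[i:i + k])][tokens[i + k]] += 1
    return chain

def generate(chain: Dict[Tuple[str, ...], Counter], seed: Tuple[str, ...],
             length: int, rng: random.Random) -> List[str]:
    """Extend `seed` by up to `length` tokens, sampling successors by observed counts."""
    out = list(seed)
    k = len(seed)
    for _ in range(length):
        successors = chain.get(tuple(out[-k:]))
        if not successors:
            break  # dead end: this context was never followed by anything in training
        words, weights = zip(*successors.items())
        out.append(rng.choices(words, weights=weights)[0])
    return out

chain = build_chain("a b c a b d".split(), k=2)
print(" ".join(generate(chain, ("b", "c"), length=3, rng=random.Random(0))))
```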

Key Findings

- Best Predictability: Context-4 (word) with 99.1% predictability

- Branching Factor: Decreases with context size (more deterministic)

- Memory Trade-off: Larger contexts require more storage (up to 930,014 unique contexts for subword Context-4)

- Recommendation: Context-3 or Context-4 for text generation

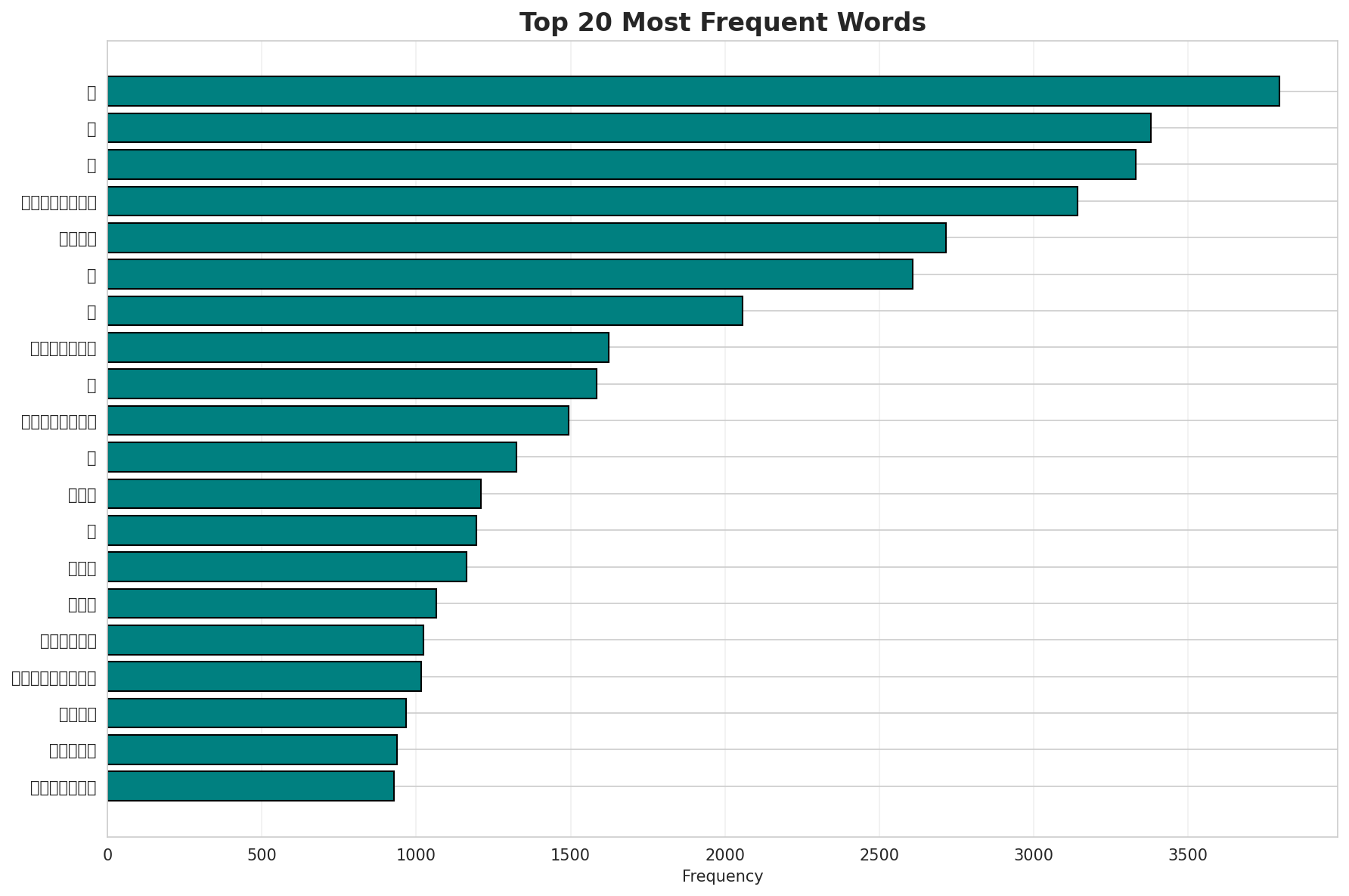

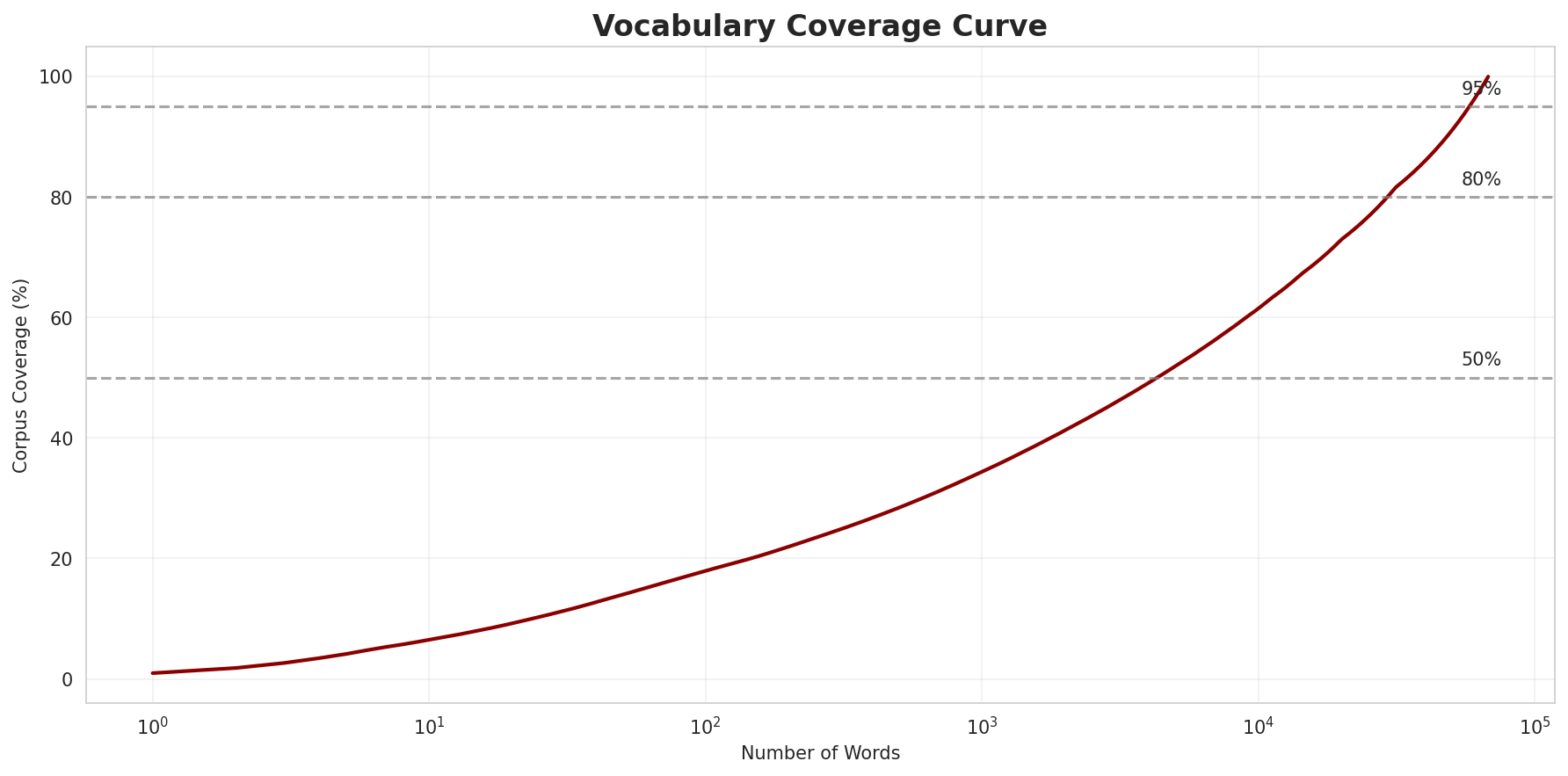

4. Vocabulary Analysis

Statistics

| Metric | Value |
|---|---|
| Vocabulary Size | 67,819 |
| Total Tokens | 396,228 |
| Mean Frequency | 5.84 |
| Median Frequency | 2 |
| Frequency Std Dev | 39.85 |
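Mean frequency is simply total tokens divided by vocabulary size (396,228 / 67,819 ≈ 5.84). A minimal sketch of how statistics of this kind can be computed from a token list follows. The use of the population standard deviation is an assumption, since the report does not say which estimator it uses.

```python
import statistics
from collections import Counter
from typing import Dict, List

def vocab_stats(tokens: List[str]) -> Dict[str, float]:
    """Vocabulary size, token count and frequency statistics for a token list."""
    freqs = Counter(tokens)
    values = list(freqs.values())
    return {
        "vocab_size": len(freqs),
        "total_tokens": sum(values),
        "mean_frequency": statistics.mean(values),
        "median_frequency": statistics.median(values),
        # population standard deviation (assumption; the report does not specify)
        "frequency_std": statistics.pstdev(values),
    }

# Consistency check on the reported numbers: mean frequency = total tokens / vocabulary size
print(round(396_228 / 67_819, 2))
```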

Most Common Words

| Rank | Word | Frequency |
|---|---|---|
| 1 | ၂ | 3,796 |
| 2 | ၃ | 3,380 |
| 3 | ၁ | 3,330 |
| 4 | အာႏကွိုꩻ | 3,141 |
| 5 | နဝ်ꩻ | 2,717 |
| 6 | ၄ | 2,608 |
| 7 | ၅ | 2,058 |
| 8 | ထွာဒျာႏ | 1,623 |
| 9 | ၆ | 1,585 |
| 10 | အဝ်ႏဒျာႏ | 1,494 |

Least Common Words (from vocabulary)

| Rank | Word | Frequency |
|---|---|---|
| 1 | တထာနမ်းနောဝ်ꩻ | 2 |
| 2 | တထာဖြွီꩻဖုံႏ | 2 |
| 3 | antihistamine | 2 |
| 4 | ပထမခွိုꩻ | 2 |
| 5 | ဒုတိယခွိုꩻ | 2 |
| 6 | histamine | 2 |
| 7 | တနယ်ႏလိုမ်းဆဲင်ႏရာꩻ | 2 |
| 8 | အခြေပြုမူလတန်ꩻ | 2 |
| 9 | ပထမကြီးတန်ꩻတွမ်ႏ | 2 |
| 10 | ရန်ႏကုန်ႏတုံး | 2 |

Zipf's Law Analysis

| Metric | Value |
|---|---|
| Zipf Coefficient | 0.7916 |
| R² (Goodness of Fit) | 0.998007 |
| Adherence Quality | excellent |

Coverage Analysis

| Top N Words | Coverage |
|---|---|
| Top 100 | 17.9% |
| Top 1,000 | 34.4% |
| Top 5,000 | 51.9% |
| Top 10,000 | 61.5% |

Key Findings

- Zipf Compliance: R²=0.9980 indicates excellent adherence to Zipf's law

- High Frequency Dominance: Top 100 words cover 17.9% of corpus

- Long Tail: The remaining 57,819 words (beyond the top 10,000) account for the other 38.5% of tokens

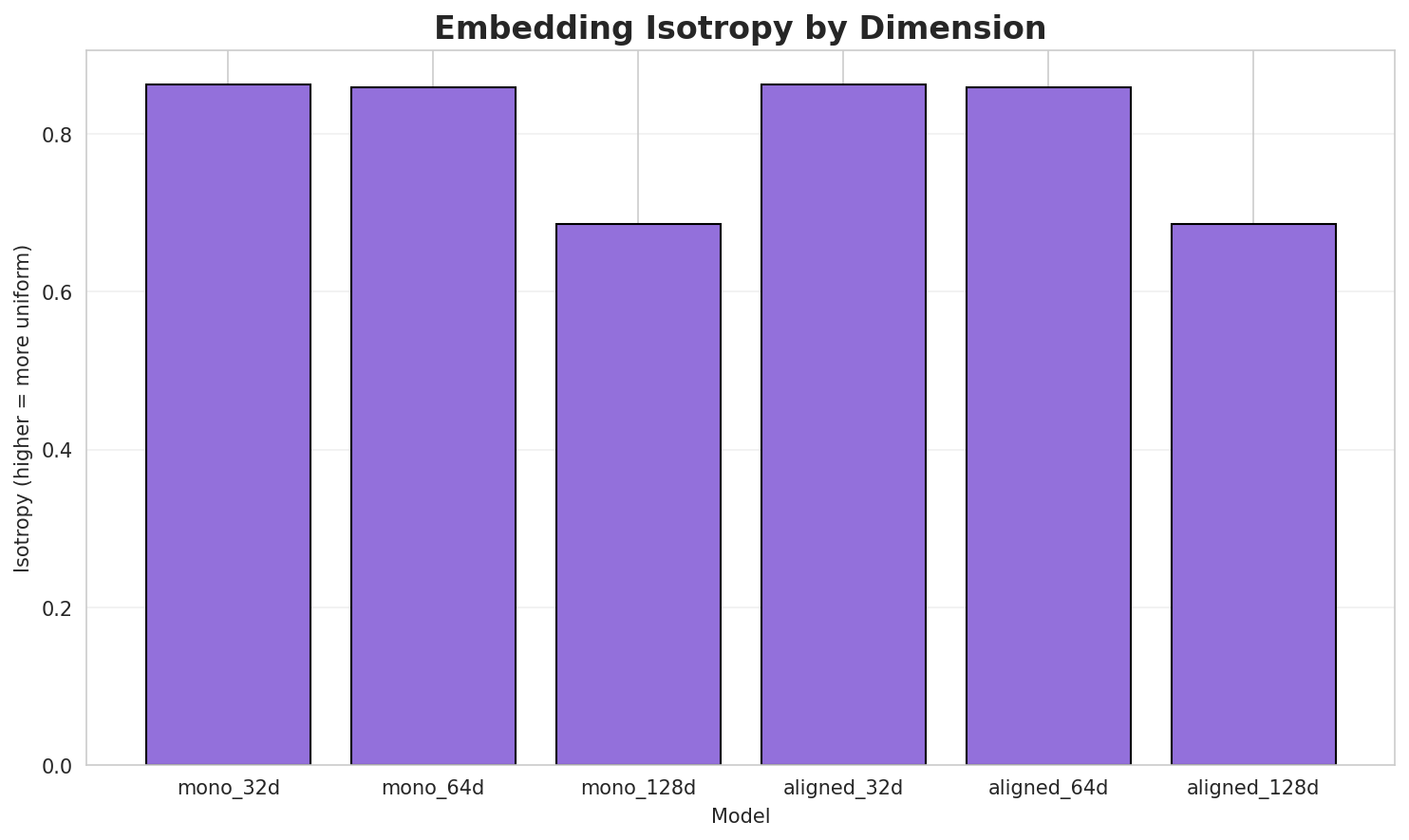

5. Word Embeddings Evaluation

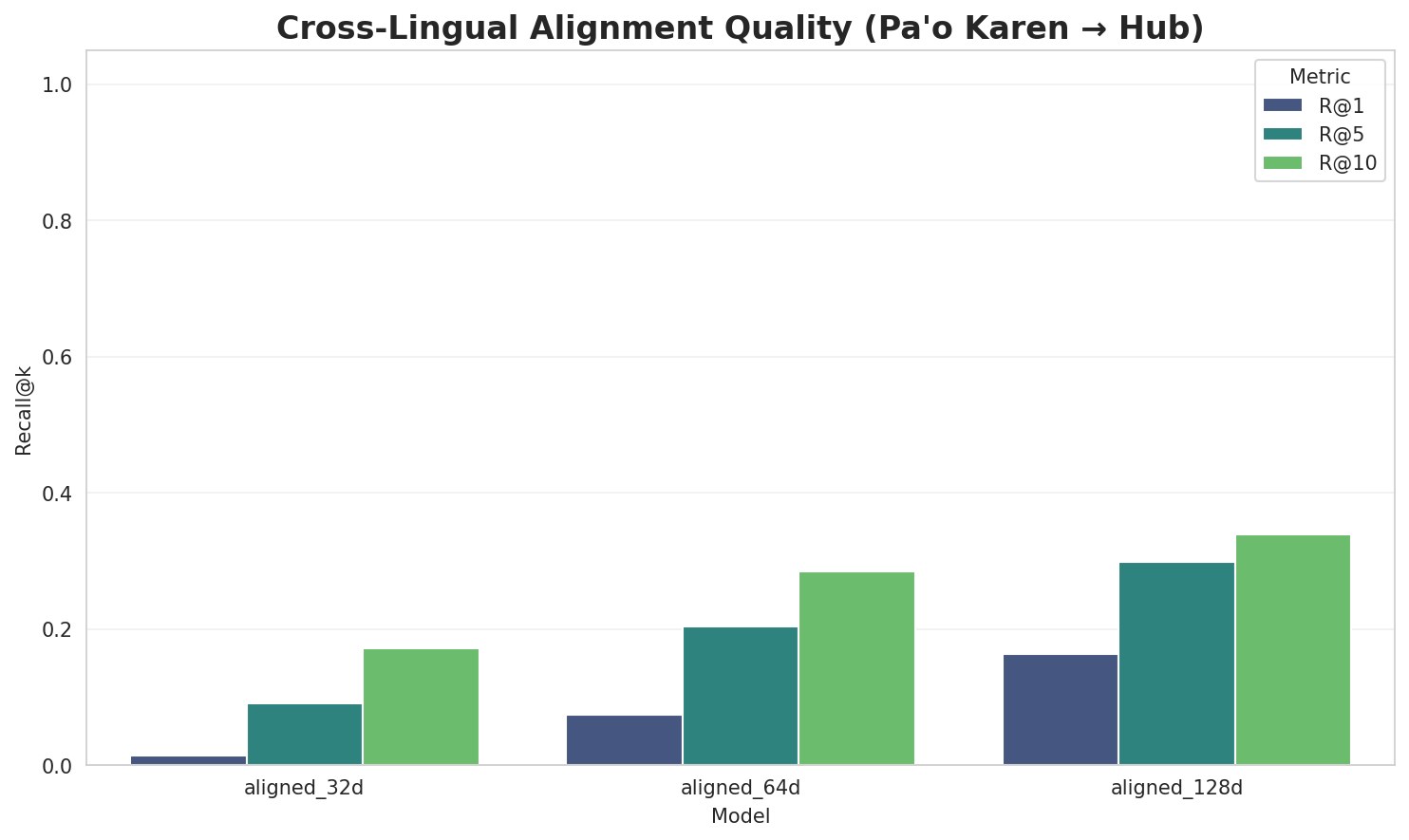

5.1 Cross-Lingual Alignment

5.2 Model Comparison

| Model | Dimension | Isotropy | Semantic Density | Alignment R@1 | Alignment R@10 |
|---|---|---|---|---|---|
| mono_32d | 32 | 0.8632 🏆 | 0.3270 | N/A | N/A |
| mono_64d | 64 | 0.8595 | 0.2722 | N/A | N/A |
| mono_128d | 128 | 0.6854 | 0.2261 | N/A | N/A |
| aligned_32d | 32 | 0.8632 | 0.3317 | 0.0135 | 0.1716 |
| aligned_64d | 64 | 0.8595 | 0.2717 | 0.0745 | 0.2844 |
| aligned_128d | 128 | 0.6854 | 0.2281 | 0.1625 | 0.3386 |

Key Findings

- Best Isotropy: mono_32d with 0.8632 (more uniform distribution)

- Semantic Density: Average pairwise similarity of 0.2762. Lower values indicate better semantic separation.

- Alignment Quality: Aligned models achieve up to 16.3% R@1 in cross-lingual retrieval.

- Recommendation: 128d aligned for best cross-lingual performance
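The report does not describe how R@1 and R@10 are computed. A common setup, assumed in the sketch below, uses a bilingual test dictionary in which row i of the source matrix and row i of the target matrix are a gold translation pair. A hit is counted when the gold target is among the k nearest targets by cosine similarity; some benchmarks use CSLS retrieval instead.

```python
import numpy as np

def recall_at_k(src: np.ndarray, tgt: np.ndarray, k: int) -> float:
    """R@k for word-translation retrieval.

    Assumes row i of `src` and row i of `tgt` form a gold translation pair
    (a hypothetical evaluation dictionary). Uses plain cosine nearest neighbours.
    """
    src_n = src / np.linalg.norm(src, axis=1, keepdims=True)
    tgt_n = tgt / np.linalg.norm(tgt, axis=1, keepdims=True)
    sims = src_n @ tgt_n.T                    # (n_src, n_tgt) cosine similarities
    top_k = np.argsort(-sims, axis=1)[:, :k]  # indices of the k most similar targets
    gold = np.arange(len(src))[:, None]       # gold target index for each source row
    return float((top_k == gold).any(axis=1).mean())

eye = np.eye(3)
print(recall_at_k(eye, eye[[1, 0, 2]], k=1))
```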

6. Morphological Analysis (Experimental)

This section presents an automated morphological analysis derived from the statistical divergence between word-level and subword-level models. By analyzing where subword predictability spikes and where word-level coverage fails, we can infer linguistic structures without supervised data.

6.1 Productivity & Complexity

| Metric | Value | Interpretation | Recommendation |
|---|---|---|---|
| Productivity Index | 5.000 | High morphological productivity | Reliable analysis |
| Idiomaticity Gap | 0.267 | High formulaic/idiomatic content | - |

6.2 Affix Inventory (Productive Units)

These are the most productive prefixes and suffixes identified by sampling the vocabulary for global substitutability patterns. A unit is considered an affix if stripping it leaves a valid stem that appears in other contexts.
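The stripping criterion can be illustrated with a small sketch that counts, for a candidate affix, how many words reduce to another attested word once the affix is removed. The toy English vocabulary and the function names are hypothetical, and the report's sampling procedure for "global substitutability" is not specified.

```python
from typing import Set

def suffix_support(vocab: Set[str], suffix: str) -> int:
    """Count words that end in `suffix` and whose remaining stem is also a word."""
    if not suffix:
        raise ValueError("suffix must be non-empty")
    return sum(
        1
        for word in vocab
        if len(word) > len(suffix)
        and word.endswith(suffix)
        and word[: -len(suffix)] in vocab
    )

def prefix_support(vocab: Set[str], prefix: str) -> int:
    """Count words that start with `prefix` and whose remaining stem is also a word."""
    if not prefix:
        raise ValueError("prefix must be non-empty")
    return sum(
        1
        for word in vocab
        if len(word) > len(prefix)
        and word.startswith(prefix)
        and word[len(prefix):] in vocab
    )

# "red" ends in "ed", but "r" is not a word, so it does not count as support.
print(suffix_support({"walk", "walked", "talk", "talked", "red"}, "ed"))
```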

Productive Prefixes

| Prefix | Examples |
|---|---|
| -လိ | လိက်ပအိုဝ်ႏ, လိုꩻစားယိုဖုံႏနဝ်ꩻ, လိတ်လုံးကို |
| -လို | လိုꩻစားယိုဖုံႏနဝ်ꩻ, လိုꩻခိုဖုံႏလဲ့, လိုꩻမွိုက်နဝ်ꩻ |

Productive Suffixes

| Suffix | Examples |
|---|---|
| -ꩻ | ဝန်ႏကိုတွော့ꩻ, ရခဲင်ႏခွန်ဟော်ခံꩻ, သဗ္ဗညုဘုရာꩻ |
| -ႏ | လဲဉ်အံႏ, အလင်္ကာႏ, မာꩻသွော့ကုသိုလ်ႏ |
| -်ꩻ | လာအိုခမ်းထီနဝ်ꩻ, တသီႏအံႏနယ်ꩻနဝ်ꩻ, ယိုသွံပါꩻထွာနဝ်ꩻ |
| -း | အောဝ်ႏဟမ်ႏခမ်ႏဖာႏလောင်း, လူထုအလောင်း, ဉာဏ်ႏတောႏဆꩻချာလွဉ်းလွဉ်း |
| -ဝ်ꩻ | လာအိုခမ်းထီနဝ်ꩻ, တသီႏအံႏနယ်ꩻနဝ်ꩻ, ယိုသွံပါꩻထွာနဝ်ꩻ |
| -်း | အောဝ်ႏဟမ်ႏခမ်ႏဖာႏလောင်း, လူထုအလောင်း, ဉာဏ်ႏတောႏဆꩻချာလွဉ်းလွဉ်း |
| -နဝ်ꩻ | လာအိုခမ်းထီနဝ်ꩻ, တသီႏအံႏနယ်ꩻနဝ်ꩻ, ယိုသွံပါꩻထွာနဝ်ꩻ |
| -ာႏ | အလင်္ကာႏ, ဖန်ဆင်ꩻမာꩻခါꩻဒျာႏ, ကိုꩻကွယ်ႏသားအာဗာႏ |

6.3 Bound Stems (Lexical Roots)

Bound stems are high-frequency subword units that are semantically cohesive but rarely appear as standalone words. These often correspond to the 'core' of a word that requires inflection or derivation to be valid.

No significant bound stems detected.

6.4 Affix Compatibility (Co-occurrence)

This table shows which prefixes and suffixes most frequently co-occur on the same stems, revealing the 'stacking' rules of the language's morphology.

| Prefix | Suffix | Frequency | Examples |
|---|---|---|---|
| -လိ | -ꩻ | 83 words | လိုꩻမဉ်ꩻ, လိုꩻနမ်းအကိုအထန်ႏနီဖဲ့ꩻ |
| -လိ | -ႏ | 64 words | လိုꩻစွဲဉ်ႏ, လိုꩻမုရေꩻအစွိုꩻအဗူႏဖုံႏ |
| -လိ | -်ꩻ | 61 words | လိုꩻမဉ်ꩻ, လိုꩻယုက်နဝ်ꩻ |
| -လိ | -ဝ်ꩻ | 45 words | လိုꩻယုက်နဝ်ꩻ, လိတ်မွူးပအိုဝ်ႏယိုခါနဝ်ꩻ |
| -လိ | -နဝ်ꩻ | 37 words | လိုꩻယုက်နဝ်ꩻ, လိတ်မွူးပအိုဝ်ႏယိုခါနဝ်ꩻ |
| -လိ | -း | 36 words | လိုꩻခမ်း, လိုႏတဝ်း |
| -လိ | -်ႏ | 23 words | လိုꩻစွဲဉ်ႏ, လိုꩻသွုန်ႏထီဓာတ်တွမ်ႏ |
| -လိ | -်း | 19 words | လိုꩻခမ်း, လိုႏတဝ်း |
| -လိ | -ာႏ | 15 words | လိုꩻမျိုꩻတွမ်ႏခမ်းထီအတာႏ, လိတ်လုဲင်ꩻတွမ်ႏအနုပညာႏ |
| -လိ | -ွူ | 5 words | လိုꩻမဉ်အံႏနွောင်ꩻနိစ်စက်ဒါႏဝင်ꩻဖုံႏနဝ်ꩻသွူ, လိုႏမာꩻထူႏလွလဲဉ်းဒျာႏနောဝ်ꩻသွူ |

6.5 Recursive Morpheme Segmentation

Using Recursive Hierarchical Substitutability, we decompose complex words into their constituent morphemes. This approach handles nested affixes (e.g., prefix-prefix-root-suffix).
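The report does not spell out the Recursive Hierarchical Substitutability procedure. As a simpler, hypothetical stand-in, the sketch below peels suffixes off recursively, longest first, and accepts a split only when it bottoms out in a known stem. That is enough to show how a nested stem-suffix-suffix structure can be recovered. It handles suffixes only, and the toy English data is illustrative.

```python
from typing import Iterable, List, Set

def segment(word: str, stems: Set[str], suffixes: Iterable[str]) -> List[str]:
    """Recursively split `word` into a stem followed by suffixes (hypothetical stand-in).

    Longer suffixes are tried first. A split is accepted only if the remainder is a
    known stem or can itself be split further; otherwise the word is returned whole.
    """
    ordered = sorted({s for s in suffixes if s}, key=len, reverse=True)
    for suffix in ordered:
        if word.endswith(suffix) and len(word) > len(suffix):
            rest = word[: -len(suffix)]
            if rest in stems:
                return [rest, suffix]
            inner = segment(rest, stems, ordered)
            if len(inner) > 1:  # the remainder was itself decomposable
                return inner + [suffix]
    return [word]

print("-".join(segment("hopefully", {"hope"}, ["ful", "ly", "ness"])))
```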

| Word | Suggested Split | Confidence | Stem |
|---|---|---|---|
| ကွဲညညနဝ်ꩻ | ကွဲညည-နဝ်ꩻ | 4.5 | ကွဲညည |
| သꩻကိုနဝ်ꩻ | သꩻကို-နဝ်ꩻ | 4.5 | သꩻကို |
| လိုꩻယင်ဟန်ႏနဝ်ꩻ | လို-ꩻယင်ဟန-်ႏ-နဝ်ꩻ | 4.5 | ꩻယင်ဟန |
| နင်ꩻသုမနာနဝ်ꩻ | နင်ꩻသုမနာ-နဝ်ꩻ | 4.5 | နင်ꩻသုမနာ |
| ပုဏ္ဏာꩻနဝ်ꩻ | ပုဏ္ဏာꩻ-နဝ်ꩻ | 4.5 | ပုဏ္ဏာꩻ |
| နာꩻတဲ့နဝ်ꩻ | နာꩻတဲ့-နဝ်ꩻ | 4.5 | နာꩻတဲ့ |
| ခန္ဓာႏတန်ယိုနဝ်ꩻ | ခန္ဓာႏတန်ယို-နဝ်ꩻ | 4.5 | ခန္ဓာႏတန်ယို |
| ရောင်ထာꩻနဝ်ꩻ | ရောင်ထာꩻ-နဝ်ꩻ | 4.5 | ရောင်ထာꩻ |
| ခယ်ႏမူႏနဝ်ꩻ | ခယ်ႏမူႏ-နဝ်ꩻ | 4.5 | ခယ်ႏမူႏ |
| အနာႏဂတ်နဝ်ꩻ | အနာႏဂတ်-နဝ်ꩻ | 4.5 | အနာႏဂတ် |
| ရဟန်ꩻသာႏမဏေႏနဝ်ꩻ | ရဟန်ꩻသာႏမဏေႏ-နဝ်ꩻ | 4.5 | ရဟန်ꩻသာႏမဏေႏ |
| ထွို့ꩻစွဲႏနဝ်ꩻ | ထွို့ꩻစွဲႏ-နဝ်ꩻ | 4.5 | ထွို့ꩻစွဲႏ |
| စူမွူးနဝ်ꩻ | စူမွူး-နဝ်ꩻ | 4.5 | စူမွူး |
| ပွိုးနဝ်ꩻ | ပွိုး-နဝ်ꩻ | 4.5 | ပွိုး |
| သင်္ဃာႏတောႏနဝ်ꩻ | သင်္ဃာႏတေ-ာႏ-နဝ်ꩻ | 3.0 | သင်္ဃာႏတေ |

6.6 Linguistic Interpretation

Automated Insight: The language Pa'o Karen shows high morphological productivity. The subword models are significantly more efficient than word models, suggesting a rich system of affixation or compounding.

Note on Idiomaticity: The high Idiomaticity Gap suggests a large number of frequent multi-word expressions or formulaic sequences that are statistically distinct from their component parts.

7. Summary & Recommendations

Production Recommendations

| Component | Recommended | Rationale |
|---|---|---|
| Tokenizer | 64k BPE | Best compression (4.85x) |
| N-gram | 2-gram (subword) | Lowest perplexity (1,398) |
| Markov | Context-4 | Highest predictability (99.1%) |
| Embeddings | 100d | Balanced semantic capture and isotropy |

Appendix: Metrics Glossary & Interpretation Guide

This section provides definitions, intuitions, and guidance for interpreting the metrics used throughout this report.

Tokenizer Metrics

Compression Ratio

Definition: The ratio of characters to tokens (chars/token). Measures how efficiently the tokenizer represents text.

Intuition: Higher compression means fewer tokens needed to represent the same text, reducing sequence lengths for downstream models. A 3x compression means ~3 characters per token on average.

What to seek: Higher is generally better for efficiency, but extremely high compression may indicate overly aggressive merging that loses morphological information.

Average Token Length (Fertility)

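Definition: Mean number of characters per token produced by the tokenizer. Note that "fertility" more commonly denotes the average number of tokens per word; in this report it refers to characters per token.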

Intuition: Reflects the granularity of tokenization. Longer tokens capture more context but may struggle with rare words; shorter tokens are more flexible but increase sequence length.

What to seek: An average of 2-5 characters per token is typical for most languages; morphologically rich languages such as Arabic may benefit from slightly longer tokens.

Unknown Token Rate (OOV Rate)

Definition: Percentage of tokens that map to the unknown/UNK token, indicating words the tokenizer cannot represent.

Intuition: Lower OOV means better vocabulary coverage. High OOV indicates the tokenizer encounters many unseen character sequences.

What to seek: Below 1% is excellent; below 5% is acceptable. BPE tokenizers typically achieve very low OOV due to subword fallback.
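A minimal sketch of the three tokenizer metrics for one text and its token list follows. The `<unk>` default (SentencePiece's default unknown piece) is an assumption. Compression counts characters of the original text, while average token length counts characters of the token strings, so the two can differ slightly when tokens carry boundary markers such as `▁`.

```python
from typing import Dict, List

def tokenizer_metrics(text: str, tokens: List[str], unk_token: str = "<unk>") -> Dict[str, float]:
    """Compression ratio, average token length and UNK rate for one text.

    `unk_token` is an assumption; set it to whatever the tokenizer actually emits.
    """
    n = len(tokens)
    if n == 0:
        raise ValueError("no tokens")
    return {
        # characters of input text per token produced
        "compression": len(text) / n,
        # mean length of the token strings themselves
        "avg_token_len": sum(len(t) for t in tokens) / n,
        # share of tokens that are the unknown token
        "unk_rate": sum(t == unk_token for t in tokens) / n,
    }

print(tokenizer_metrics("abcdef", ["ab", "cd", "ef"]))
```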

N-gram Model Metrics

Perplexity

Definition: Measures how "surprised" the model is by test data. Mathematically: 2^(cross-entropy). Lower values indicate better prediction.

Intuition: If perplexity is 100, the model is as uncertain as if choosing uniformly among 100 options at each step. A perplexity of 10 means effectively choosing among 10 equally likely options.

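What to seek: Lower is better. With enough training data, perplexity usually decreases as n grows (more context); on a small corpus, sparse higher-order n-grams can reverse this trend, as the n-gram results in Section 2 show. Values vary widely by language and corpus size.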

Entropy

Definition: Average information content (in bits) needed to encode the next token given the context. Related to perplexity: perplexity = 2^entropy.

Intuition: High entropy means high uncertainty/randomness; low entropy means predictable patterns. Natural language typically has entropy between 1-4 bits per character.

What to seek: Lower entropy indicates more predictable text patterns. Because perplexity = 2^entropy, entropy follows the same trend as perplexity across n-gram sizes.
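To make the perplexity and entropy definitions concrete, the sketch below scores a test sequence with an add-one (Laplace) smoothed bigram model and returns 2 raised to the cross-entropy in bits. Laplace smoothing and the closed vocabulary (train plus test tokens) are simplifying assumptions; the report does not say which smoothing it uses.

```python
import math
from collections import Counter
from typing import List

def bigram_perplexity(train: List[str], test: List[str]) -> float:
    """Perplexity of an add-one smoothed bigram model on `test`."""
    vocab_size = len(set(train) | set(test))   # closed vocabulary (simplifying assumption)
    context_counts = Counter(train[:-1])       # how often each token appears as a context
    bigram_counts = Counter(zip(train, train[1:]))
    pairs = list(zip(test, test[1:]))
    if not pairs:
        raise ValueError("test needs at least two tokens")
    total_bits = 0.0
    for prev, cur in pairs:
        # P(cur | prev) = (c(prev, cur) + 1) / (c(prev) + V)
        p = (bigram_counts[(prev, cur)] + 1) / (context_counts[prev] + vocab_size)
        total_bits -= math.log2(p)
    cross_entropy = total_bits / len(pairs)    # average bits per predicted token
    return 2 ** cross_entropy

print(bigram_perplexity("a b a b a b".split(), "a b a b".split()))
```

With no training evidence every prediction is uniform over the vocabulary, so the perplexity equals the vocabulary size, which matches the "choosing uniformly among N options" intuition.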

Coverage (Top-K)

Definition: Percentage of corpus occurrences explained by the top K most frequent n-grams.

Intuition: High coverage with few patterns indicates repetitive/formulaic text; low coverage suggests diverse vocabulary usage.

What to seek: Depends on use case. For language modeling, moderate coverage (40-60% with top-1000) is typical for natural text.
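Top-K coverage is the share of all occurrences captured by the K most frequent items. A minimal sketch using `collections.Counter`, with hypothetical toy counts:

```python
from collections import Counter

def top_k_coverage(counts: Counter, k: int) -> float:
    """Share of all occurrences accounted for by the k most frequent items."""
    total = sum(counts.values())
    return sum(c for _, c in counts.most_common(k)) / total

counts = Counter({"a": 5, "b": 3, "c": 2})
print(f"{top_k_coverage(counts, 2):.0%}")
```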

Markov Chain Metrics

Average Entropy

Definition: Mean entropy across all contexts, measuring average uncertainty in next-word prediction.

Intuition: Lower entropy means the model is more confident about what comes next. Context-1 has high entropy (many possible next words); Context-4 has low entropy (few likely continuations).

What to seek: Decreasing entropy with larger context sizes. Very low entropy (<0.1) indicates highly deterministic transitions.

Branching Factor

Definition: Average number of unique next tokens observed for each context.

Intuition: High branching = many possible continuations (flexible but uncertain); low branching = few options (predictable but potentially repetitive).

What to seek: Branching factor should decrease with context size. Values near 1.0 indicate nearly deterministic chains.

Predictability

Definition: Derived metric: (1 - normalized_entropy) × 100%. Indicates how deterministic the model's predictions are.

Intuition: 100% predictability means the next word is always certain; 0% means completely random. Real text falls between these extremes.

What to seek: Higher predictability for text generation quality, but too high (>98%) may produce repetitive output.
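The Markov metrics above can be sketched as follows for a context of k tokens. Entropy is averaged without weighting across contexts, per the definition of average entropy. The normalization behind predictability is unspecified, so this sketch uses the raw average entropy clipped at zero, which is what the values in Section 3 appear to reflect.

```python
import math
from collections import Counter, defaultdict
from typing import Dict, List

def markov_metrics(tokens: List[str], k: int) -> Dict[str, float]:
    """Average entropy, perplexity, branching factor and predictability of a context-k chain."""
    transitions = defaultdict(Counter)
    for i in range(len(tokens) - k):
        transitions[tuple(tokens[i:i + k])][tokens[i + k]] += 1
    if not transitions:
        raise ValueError("sequence too short for this context size")
    entropies, branching = [], []
    for successors in transitions.values():
        total = sum(successors.values())
        # Shannon entropy (bits) of the next-token distribution for this context
        entropies.append(-sum((c / total) * math.log2(c / total) for c in successors.values()))
        branching.append(len(successors))  # number of distinct next tokens observed
    avg_entropy = sum(entropies) / len(entropies)
    return {
        "unique_contexts": len(transitions),
        "avg_entropy": avg_entropy,
        "perplexity": 2 ** avg_entropy,
        "branching_factor": sum(branching) / len(branching),
        # assumption: raw average entropy stands in for "normalized" entropy, clipped at 0
        "predictability_pct": max(0.0, 1.0 - avg_entropy) * 100,
    }

print(markov_metrics("a b a c".split(), k=1))
```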

Vocabulary & Zipf's Law Metrics

Zipf's Coefficient

Definition: The slope of the log-log plot of word frequency vs. rank. Zipf's law predicts this slope should be approximately -1. The coefficient in Section 4 is reported as a positive number, presumably the magnitude of this slope.

Intuition: A slope near -1 (magnitude near 1) indicates the corpus follows natural language patterns where a few words are very common and most words are rare.

What to seek: Slopes between -0.8 and -1.2 (magnitudes between 0.8 and 1.2) indicate a healthy natural language distribution. Deviations may suggest domain-specific or artificial text.

R² (Coefficient of Determination)

Definition: Measures how well the linear fit explains the frequency-rank relationship. Ranges from 0 to 1.

Intuition: R² near 1.0 means the data closely follows Zipf's law; lower values indicate deviation from expected word frequency patterns.

What to seek: R² > 0.95 is excellent; > 0.99 indicates near-perfect Zipf adherence typical of large natural corpora.
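A least-squares fit in log-log space gives both the Zipf slope and R². The sketch below is a plain-Python version. The report does not say whether it fits all ranks or a truncated range, or which logarithm base it uses; the base does not affect the slope or R².

```python
import math
from typing import Sequence, Tuple

def zipf_fit(frequencies: Sequence[float]) -> Tuple[float, float]:
    """Fit log(frequency) = a + b * log(rank) by least squares; return (b, R^2).

    Frequencies are sorted in descending order so that rank 1 is the most frequent word.
    """
    freqs = sorted(frequencies, reverse=True)
    xs = [math.log(rank) for rank in range(1, len(freqs) + 1)]
    ys = [math.log(f) for f in freqs]
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ss_res = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return slope, 1.0 - ss_res / ss_tot

# Ideal Zipf data (frequency proportional to 1/rank) gives a slope of -1 and R^2 of 1.
slope, r2 = zipf_fit([1000 / r for r in range(1, 101)])
print(round(slope, 6), round(r2, 6))
```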

Vocabulary Coverage

Definition: Cumulative percentage of corpus tokens accounted for by the top N words.

Intuition: Shows how concentrated word usage is. If top-100 words cover 50% of text, the corpus relies heavily on common words.

What to seek: Top-100 covering 30-50% is typical. Higher coverage indicates more repetitive text; lower suggests richer vocabulary.

Word Embedding Metrics

Isotropy

Definition: Measures how uniformly distributed vectors are in the embedding space. Computed as the ratio of minimum to maximum singular values.

Intuition: High isotropy (near 1.0) means vectors spread evenly in all directions; low isotropy means vectors cluster in certain directions, reducing expressiveness.

What to seek: Higher isotropy generally indicates better-quality embeddings. Values > 0.1 are reasonable; > 0.3 is good. Lower-dimensional embeddings tend to have higher isotropy.
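Following the definition above (smallest divided by largest singular value), isotropy can be computed with NumPy's SVD. Whether the report mean-centers or normalizes the vectors first is not stated, so no preprocessing is applied in this sketch.

```python
import numpy as np

def isotropy(embeddings: np.ndarray) -> float:
    """Ratio of the smallest to the largest singular value of an (n_words, dim) matrix."""
    singular_values = np.linalg.svd(embeddings, compute_uv=False)
    return float(singular_values.min() / singular_values.max())

print(isotropy(np.diag([2.0, 1.0])))
```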

Average Norm

Definition: Mean magnitude (L2 norm) of word vectors in the embedding space.

Intuition: Indicates the typical "length" of vectors. Consistent norms suggest stable training; high variance may indicate some words are undertrained.

What to seek: Relatively consistent norms across models. The absolute value matters less than consistency (low std deviation).

Cosine Similarity

Definition: Measures angular similarity between vectors, ranging from -1 (opposite) to 1 (identical direction).

Intuition: Words with similar meanings should have high cosine similarity. This is the standard metric for semantic relatedness in embeddings.

What to seek: Semantically related words should score > 0.5; unrelated words should be near 0. Synonyms often score > 0.7.
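Cosine similarity, and the average pairwise similarity that Section 5 calls semantic density, can be sketched with NumPy as below. Whether the report averages over all pairs or over a random sample of pairs is not stated.

```python
import numpy as np

def cosine(u, v) -> float:
    """Cosine of the angle between two vectors, in [-1, 1]."""
    u, v = np.asarray(u, dtype=float), np.asarray(v, dtype=float)
    return float(u @ v / (np.linalg.norm(u) * np.linalg.norm(v)))

def mean_pairwise_cosine(embeddings: np.ndarray) -> float:
    """Average cosine similarity over all ordered pairs of distinct rows."""
    unit = embeddings / np.linalg.norm(embeddings, axis=1, keepdims=True)
    sims = unit @ unit.T
    off_diagonal = ~np.eye(len(unit), dtype=bool)  # exclude each vector's similarity with itself
    return float(sims[off_diagonal].mean())

print(cosine([1.0, 1.0], [2.0, 2.0]))
```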

t-SNE Visualization

Definition: t-Distributed Stochastic Neighbor Embedding - a dimensionality reduction technique that preserves local structure for visualization.

Intuition: Clusters in t-SNE plots indicate groups of semantically related words. Spread indicates vocabulary diversity; tight clusters suggest semantic coherence.

What to seek: Meaningful clusters (e.g., numbers together, verbs together). Avoid over-interpreting distances - t-SNE preserves local, not global, structure.

General Interpretation Guidelines

- Compare within model families: Metrics are most meaningful when comparing models of the same type (e.g., 8k vs 64k tokenizer).

- Consider trade-offs: Better performance on one metric often comes at the cost of another (e.g., compression vs. OOV rate).

- Context matters: Optimal values depend on downstream tasks. Text generation may prioritize different metrics than classification.

- Corpus influence: All metrics are influenced by corpus characteristics. Wikipedia text differs from social media or literature.

- Language-specific patterns: Morphologically rich languages (like Arabic) may show different optimal ranges than analytic languages.

Visualizations Index

| Visualization | Description |
|---|---|
| Tokenizer Compression | Compression ratios by vocabulary size |
| Tokenizer Fertility | Average token length by vocabulary |
| Tokenizer OOV | Unknown token rates |
| Tokenizer Total Tokens | Total tokens by vocabulary |
| N-gram Perplexity | Perplexity by n-gram size |
| N-gram Entropy | Entropy by n-gram size |
| N-gram Coverage | Top pattern coverage |
| N-gram Unique | Unique n-gram counts |
| Markov Entropy | Entropy by context size |
| Markov Branching | Branching factor by context |
| Markov Contexts | Unique context counts |
| Zipf's Law | Frequency-rank distribution with fit |
| Vocab Frequency | Word frequency distribution |
| Top 20 Words | Most frequent words |
| Vocab Coverage | Cumulative coverage curve |
| Embedding Isotropy | Vector space uniformity |
| Embedding Norms | Vector magnitude distribution |
| Embedding Similarity | Word similarity heatmap |
| Nearest Neighbors | Similar words for key terms |
| t-SNE Words | 2D word embedding visualization |
| t-SNE Sentences | 2D sentence embedding visualization |
| Position Encoding | Encoding method comparison |
| Model Sizes | Storage requirements |
| Performance Dashboard | Comprehensive performance overview |

About This Project

Data Source

Models trained on wikipedia-monthly - a monthly snapshot of Wikipedia articles across 300+ languages.

Project

A project by Wikilangs - Open-source NLP models for every Wikipedia language.

Maintainer

Citation

If you use these models in your research, please cite:

@misc{wikilangs2025,
  author = {Kamali, Omar},
  title = {Wikilangs: Open NLP Models for Wikipedia Languages},
  year = {2025},
  doi = {10.5281/zenodo.18073153},
  publisher = {Zenodo},
  url = {https://huggingface.co/wikilangs},
  institution = {Omneity Labs}
}

License

MIT License - Free for academic and commercial use.

Links

- 🌐 Website: wikilangs.org

- 🤗 Models: huggingface.co/wikilangs

- 📊 Data: wikipedia-monthly

- 👤 Author: Omar Kamali

- 🤝 Sponsor: Featherless AI

Generated by Wikilangs Models Pipeline

Report Date: 2026-01-03 19:13:44