---
language: bs
language_name: Bosnian
language_family: slavic_south
tags:
- wikilangs
- nlp
- tokenizer
- embeddings
- n-gram
- markov
- wikipedia
- feature-extraction
- sentence-similarity
- tokenization
- n-grams
- markov-chain
- text-mining
- fasttext
- babelvec
- vocabulous
- vocabulary
- monolingual
- family-slavic_south
license: mit
library_name: wikilangs
pipeline_tag: text-generation
datasets:
- omarkamali/wikipedia-monthly
dataset_info:
  name: wikipedia-monthly
  description: Monthly snapshots of Wikipedia articles across 300+ languages
metrics:
- name: best_compression_ratio
  type: compression
  value: 4.709
- name: best_isotropy
  type: isotropy
  value: 0.6791
- name: vocabulary_size
  type: vocab
  value: 0
generated: 2026-01-04T00:00:00.000Z
---

Bosnian - Wikilangs Models

Comprehensive Research Report & Full Ablation Study

This repository contains NLP models trained and evaluated by Wikilangs, specifically on Bosnian Wikipedia data. We analyze tokenizers, n-gram models, Markov chains, vocabulary statistics, and word embeddings.

📋 Repository Contents

Models & Assets

- Tokenizers (8k, 16k, 32k, 64k)

- N-gram models (2, 3, 4, 5-gram)

- Markov chains (context of 1, 2, 3, 4 and 5)

- Subword N-gram and Markov chains

- Embeddings in various sizes and dimensions (aligned and unaligned)

- Language Vocabulary

- Language Statistics

Analysis and Evaluation

- 1. Tokenizer Evaluation

- 2. N-gram Model Evaluation

- 3. Markov Chain Evaluation

- 4. Vocabulary Analysis

- 5. Word Embeddings Evaluation

- 6. Morphological Analysis (Experimental)

- 7. Summary & Recommendations

- Metrics Glossary

- Visualizations Index

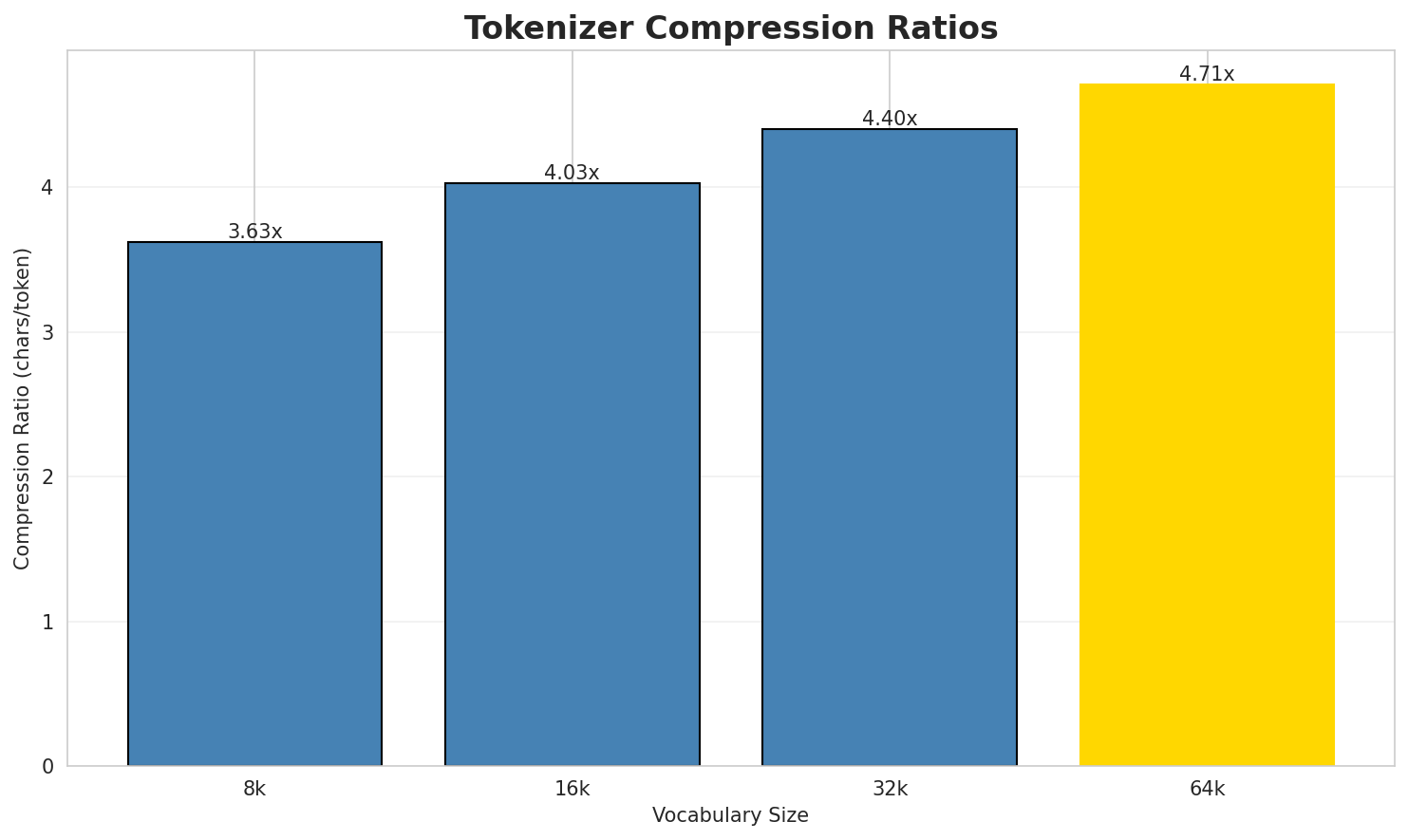

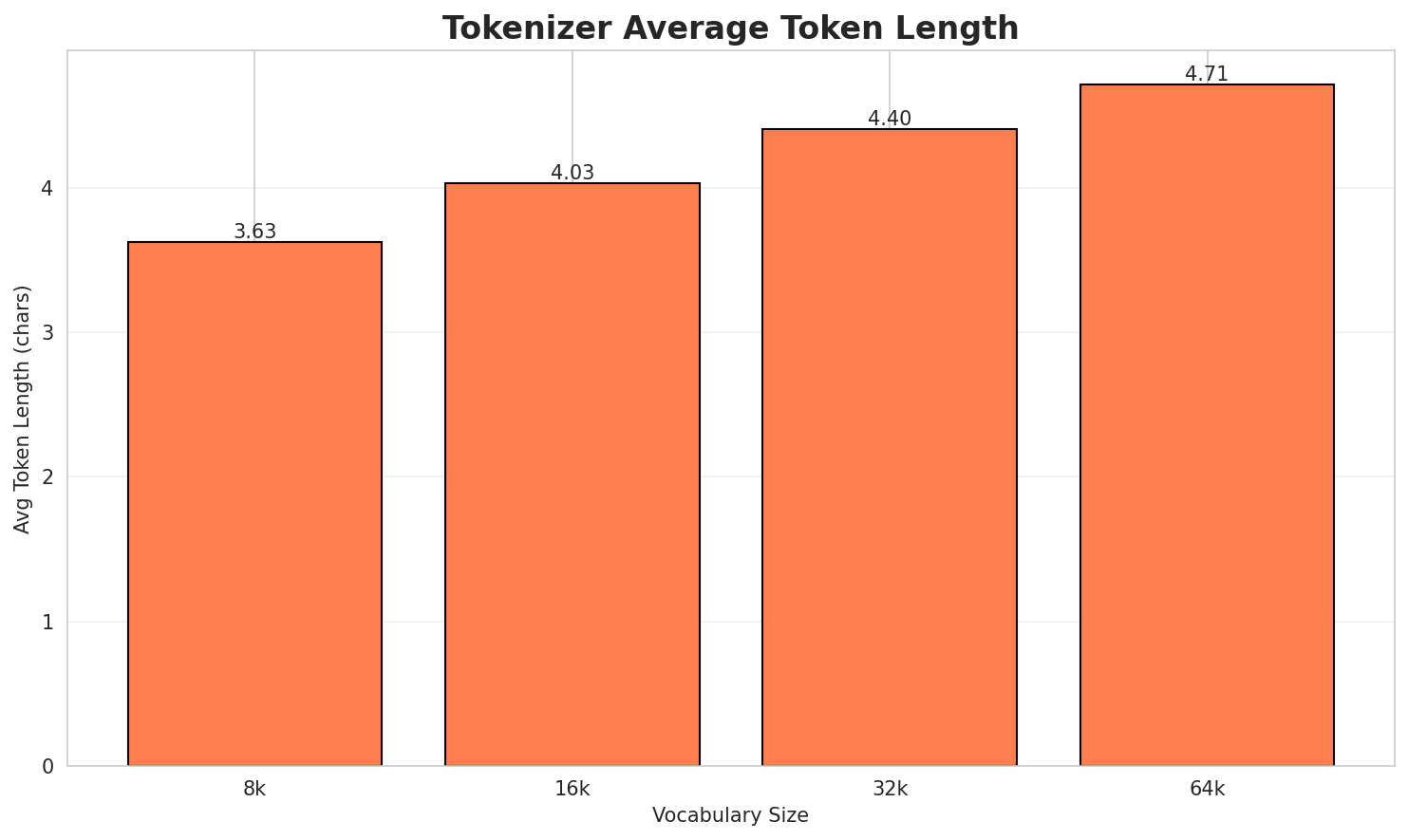

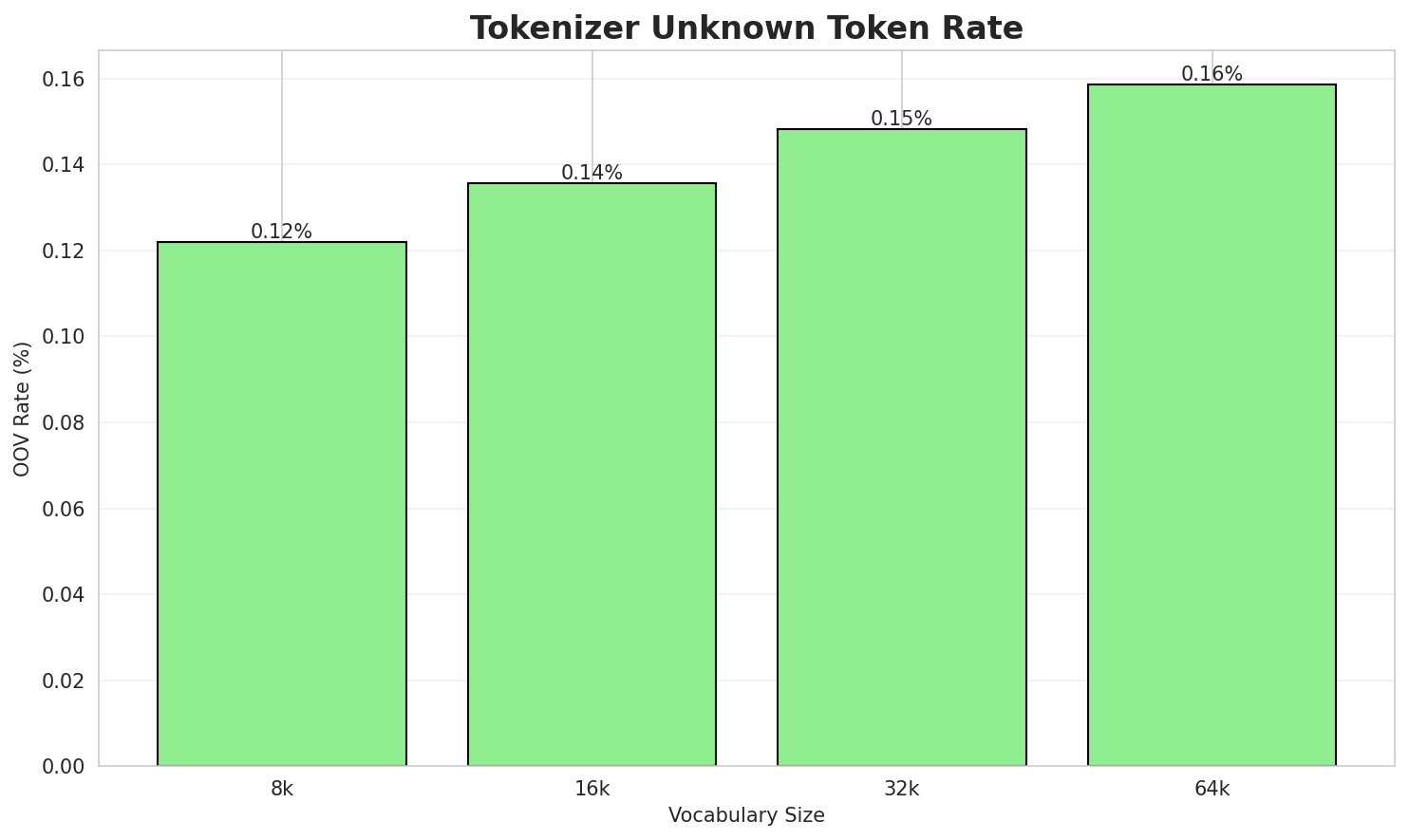

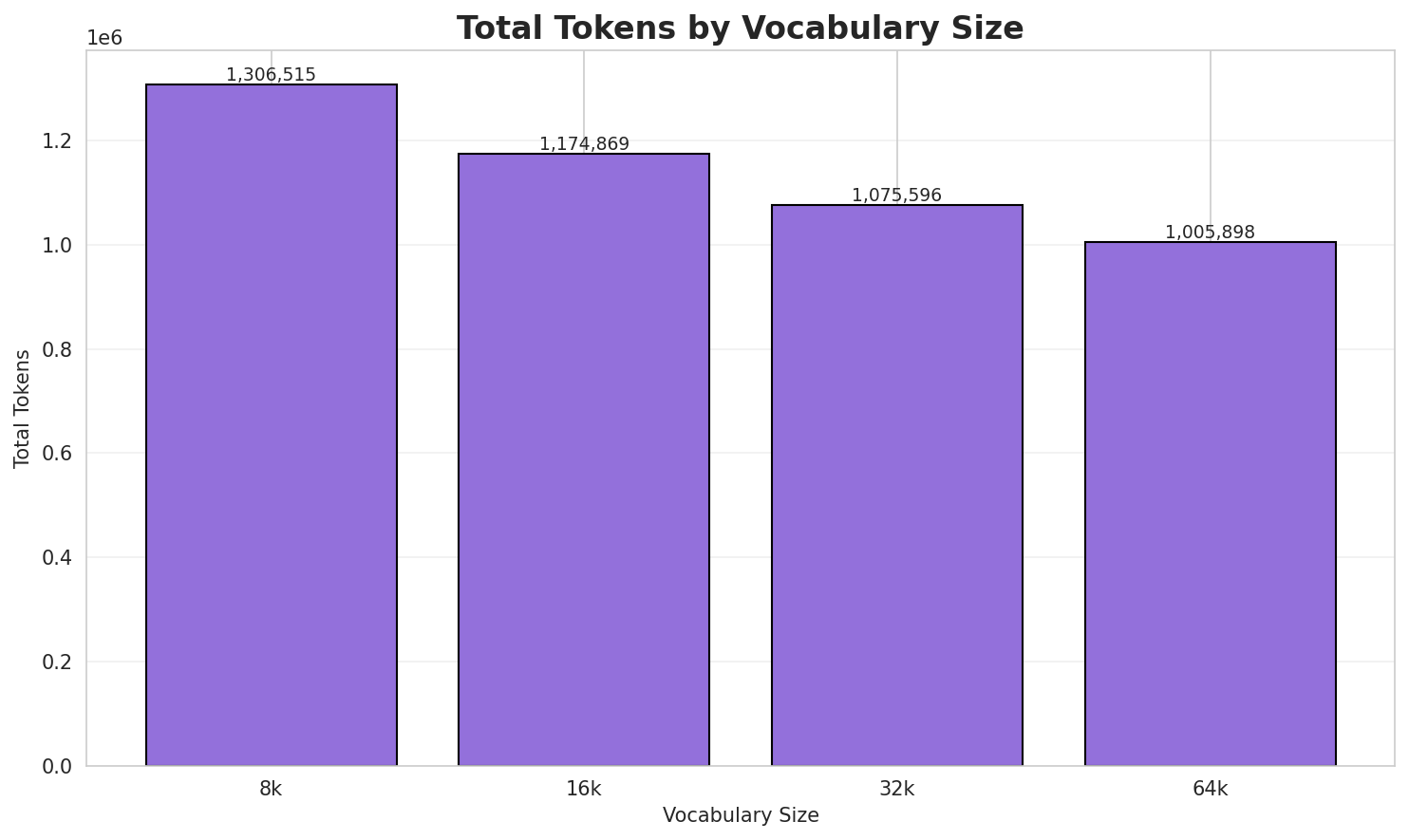

1. Tokenizer Evaluation

Results

| Vocab Size | Compression | Avg Token Len | UNK Rate | Total Tokens |
|---|---|---|---|---|
| 8k | 3.626x | 3.63 | 0.1221% | 1,306,515 |
| 16k | 4.032x | 4.03 | 0.1358% | 1,174,869 |
| 32k | 4.404x | 4.40 | 0.1483% | 1,075,596 |
| 64k | 4.709x 🏆 | 4.71 | 0.1586% | 1,005,898 |

Tokenization Examples

Below are sample sentences tokenized with each vocabulary size:

Sample 1: Vrpolje Ljubomir je naseljeno mjesto u gradu Trebinju, Bosna i Hercegovina. Stan...

| Vocab | Tokens | Count |
|---|---|---|
| 8k | ▁vr polje ▁lju bo mir ▁je ▁naseljeno ▁mjesto ▁u ▁gradu ... (+16 more) | 26 |
| 16k | ▁vr polje ▁ljubo mir ▁je ▁naseljeno ▁mjesto ▁u ▁gradu ▁trebinju ... (+13 more) | 23 |
| 32k | ▁vr polje ▁ljubomir ▁je ▁naseljeno ▁mjesto ▁u ▁gradu ▁trebinju , ... (+12 more) | 22 |
| 64k | ▁vrpolje ▁ljubomir ▁je ▁naseljeno ▁mjesto ▁u ▁gradu ▁trebinju , ▁bosna ... (+11 more) | 21 |

Sample 2: Kobatovci su naseljeno mjesto u gradu Laktaši, Bosna i Hercegovina. Stanovništvo...

| Vocab | Tokens | Count |
|---|---|---|
| 8k | ▁ko ba to vci ▁su ▁naseljeno ▁mjesto ▁u ▁gradu ▁la ... (+17 more) | 27 |
| 16k | ▁koba to vci ▁su ▁naseljeno ▁mjesto ▁u ▁gradu ▁lakta ši ... (+14 more) | 24 |
| 32k | ▁koba tovci ▁su ▁naseljeno ▁mjesto ▁u ▁gradu ▁laktaši , ▁bosna ... (+11 more) | 21 |
| 64k | ▁koba tovci ▁su ▁naseljeno ▁mjesto ▁u ▁gradu ▁laktaši , ▁bosna ... (+11 more) | 21 |

Sample 3: Decenija 780-ih trajala je od 1. januara 780. do 31. decembra 789. godine. Događ...

| Vocab | Tokens | Count |
|---|---|---|
| 8k | ▁dece nija ▁ 7 8 0 - ih ▁traja la ... (+31 more) | 41 |
| 16k | ▁decenija ▁ 7 8 0 - ih ▁trajala ▁je ▁od ... (+29 more) | 39 |
| 32k | ▁decenija ▁ 7 8 0 - ih ▁trajala ▁je ▁od ... (+29 more) | 39 |
| 64k | ▁decenija ▁ 7 8 0 - ih ▁trajala ▁je ▁od ... (+29 more) | 39 |

Key Findings

- Best Compression: 64k achieves 4.709x compression

- Lowest UNK Rate: 8k with 0.1221% unknown tokens

- Trade-off: Larger vocabularies improve compression but increase model size and slightly raise the UNK rate (0.1221% at 8k vs. 0.1586% at 64k)

- Recommendation: The 32k vocabulary balances compression (4.404x) against model size for production use

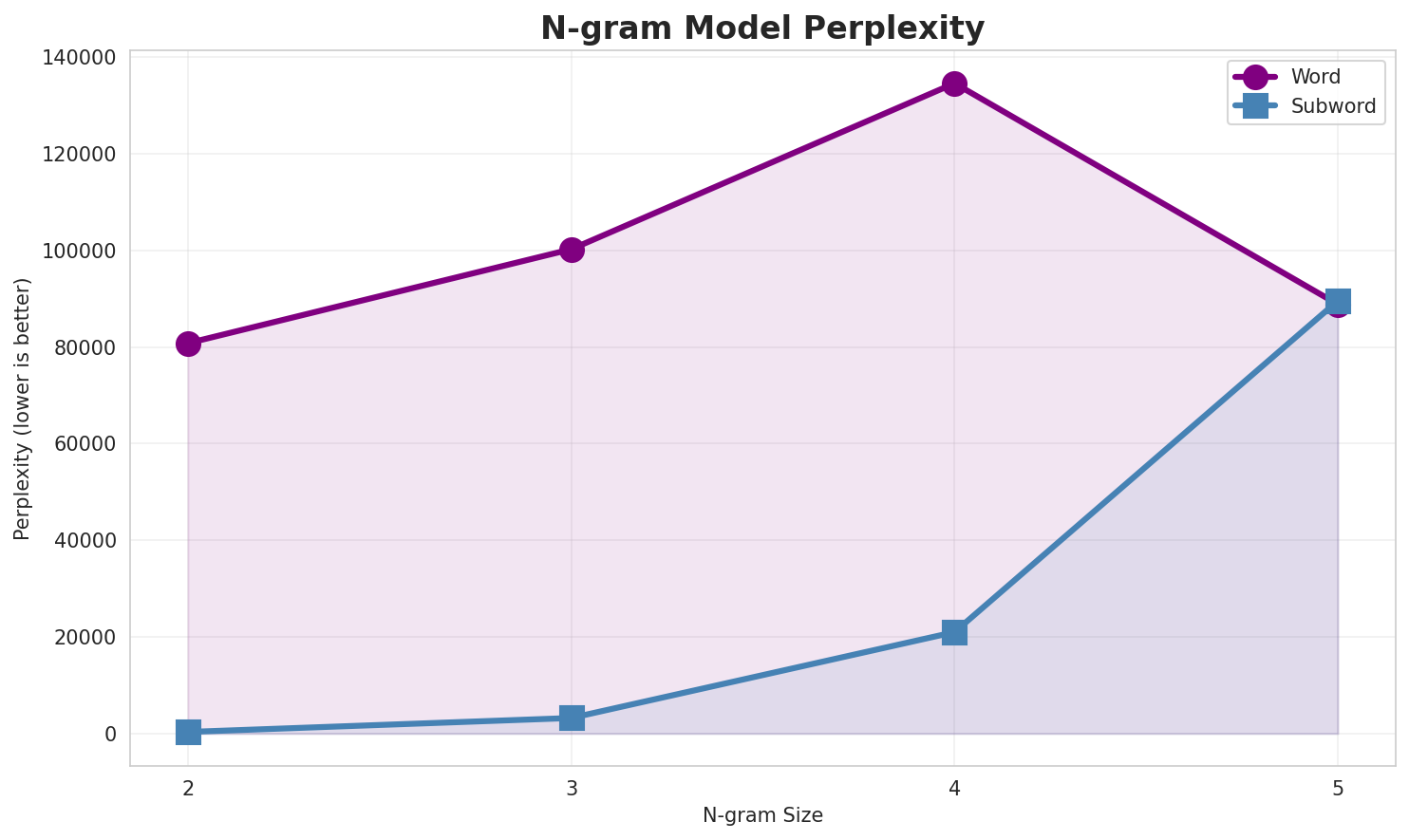

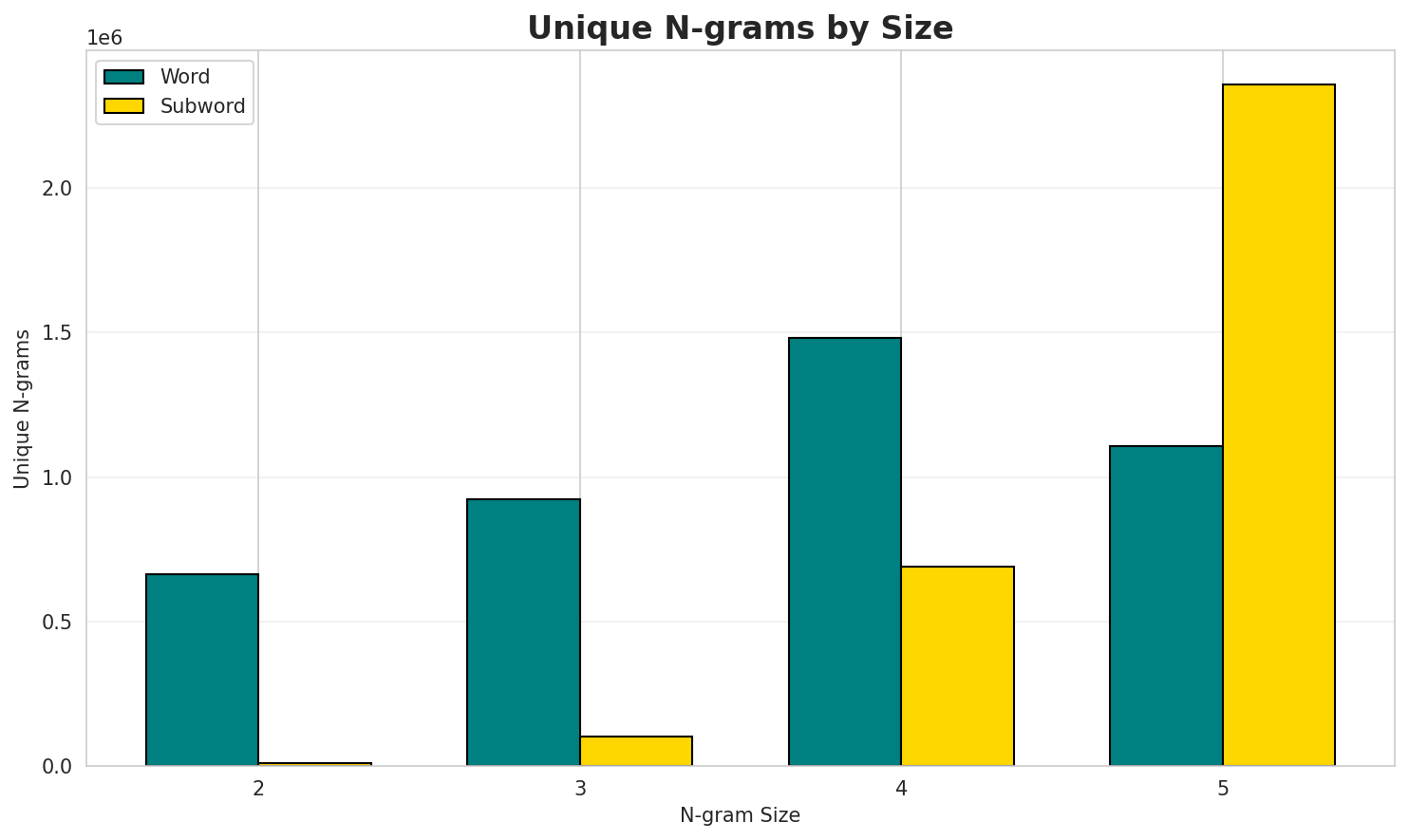

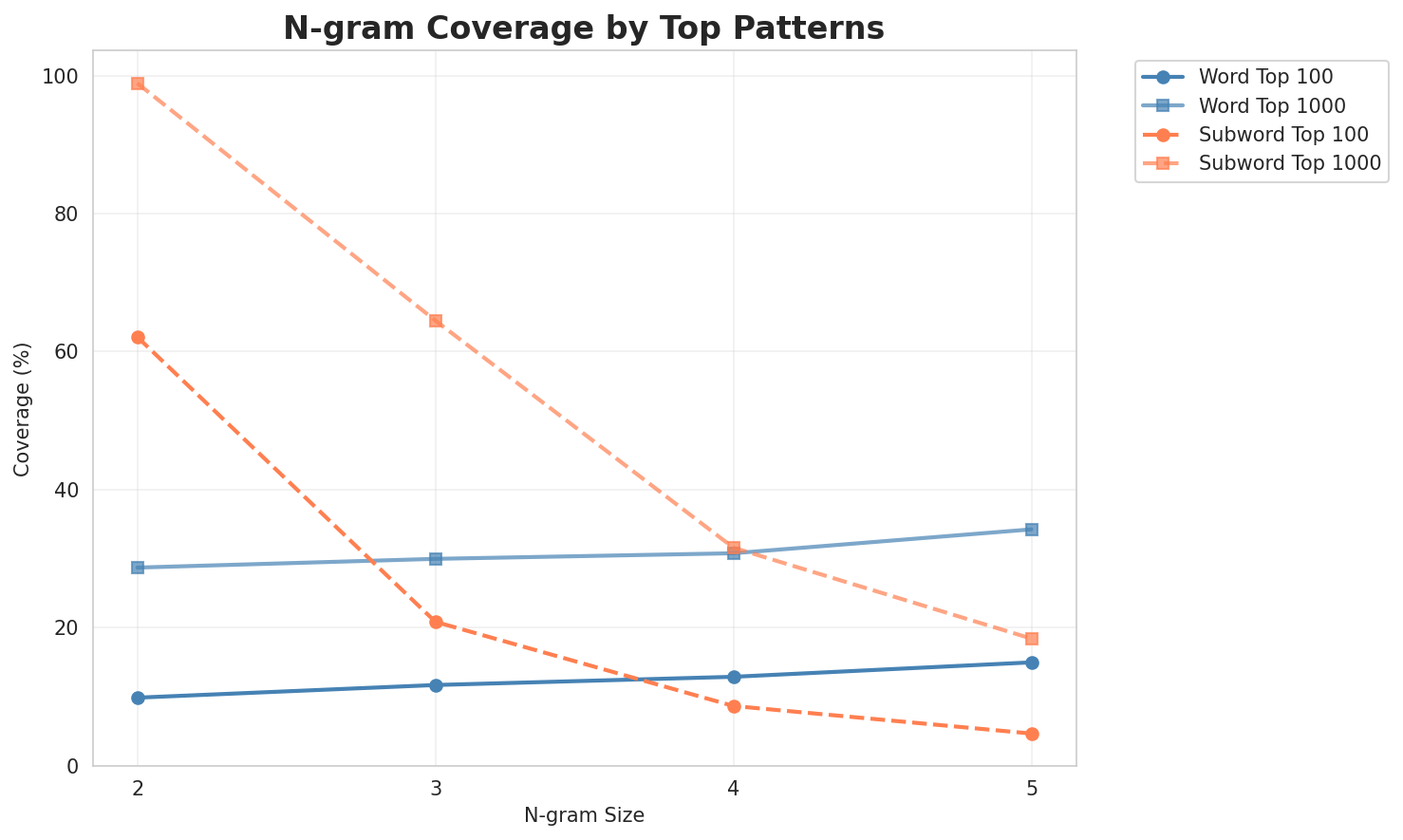

2. N-gram Model Evaluation

Results

| N-gram | Variant | Perplexity | Entropy (bits) | Unique N-grams | Top-100 Coverage | Top-1000 Coverage |
|---|---|---|---|---|---|---|
| 2-gram | Word | 80,810 | 16.30 | 664,455 | 9.9% | 28.7% |
| 2-gram | Subword | 328 🏆 | 8.36 | 10,943 | 62.1% | 98.9% |
| 3-gram | Word | 100,258 | 16.61 | 924,847 | 11.7% | 30.0% |
| 3-gram | Subword | 3,216 | 11.65 | 100,916 | 20.8% | 64.5% |
| 4-gram | Word | 134,611 | 17.04 | 1,482,132 | 12.9% | 30.8% |
| 4-gram | Subword | 20,996 | 14.36 | 689,460 | 8.6% | 31.6% |
| 5-gram | Word | 88,861 | 16.44 | 1,107,611 | 15.0% | 34.2% |
| 5-gram | Subword | 89,572 | 16.45 | 2,357,541 | 4.7% | 18.4% |

Top 5 N-grams by Size

2-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | spiralna galaksija | 91,078 |
| 2 | vanjski linkovi | 68,061 |
| 3 | se u | 45,470 |
| 4 | reference vanjski | 44,256 |
| 5 | ngc ic | 40,015 |

3-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | reference vanjski linkovi | 44,193 |
| 2 | prečkasta spiralna galaksija | 32,671 |
| 3 | zavod za statistiku | 22,679 |
| 4 | popisu stanovništva godine | 20,723 |
| 5 | na popisu stanovništva | 20,184 |

4-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | na popisu stanovništva godine | 20,088 |
| 2 | državni zavod za statistiku | 14,619 |
| 3 | broj stanovnika po popisima | 13,853 |
| 4 | reference vanjski linkovi u | 13,677 |
| 5 | novi opći katalog spisak | 13,518 |

5-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | također pogledajte novi opći katalog | 13,518 |
| 2 | pogledajte novi opći katalog spisak | 13,517 |
| 3 | historija do teritorijalne reorganizacije u | 13,436 |
| 4 | interaktivni ngc online katalog astronomska | 13,248 |
| 5 | ngc online katalog astronomska baza | 13,248 |

2-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | a _ | 5,724,674 |
| 2 | e _ | 4,473,918 |
| 3 | j e | 3,904,782 |
| 4 | i _ | 3,802,145 |
| 5 | _ s | 3,388,803 |

3-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | j e _ | 1,738,823 |
| 2 | n a _ | 1,237,973 |
| 3 | _ n a | 1,177,081 |
| 4 | _ j e | 1,128,189 |
| 5 | _ p o | 1,086,240 |

4-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | _ j e _ | 924,709 |
| 2 | i j a _ | 457,403 |
| 3 | _ n a _ | 454,266 |
| 4 | _ s e _ | 399,769 |
| 5 | i j e _ | 316,944 |

5-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | a _ j e _ | 263,188 |
| 2 | _ g o d i | 195,374 |
| 3 | g o d i n | 192,967 |
| 4 | o _ j e _ | 190,942 |
| 5 | _ n g c _ | 158,105 |

Key Findings

- Best Perplexity: 2-gram (subword) with 328

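- Entropy Trend: Increases with n-gram order for subword models (8.36 to 16.45 bits) and stays roughly flat for word models (16.30 to 17.04 bits), as higher-order patterns become sparser

- Coverage: Top-1000 coverage ranges from 98.9% (2-gram subword) down to 18.4% (5-gram subword); word n-grams fall between 28.7% and 34.2%

- Recommendation: The 2-gram subword model gives the lowest perplexity; 4-gram and 5-gram models capture longer context but are much sparser on this corpus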

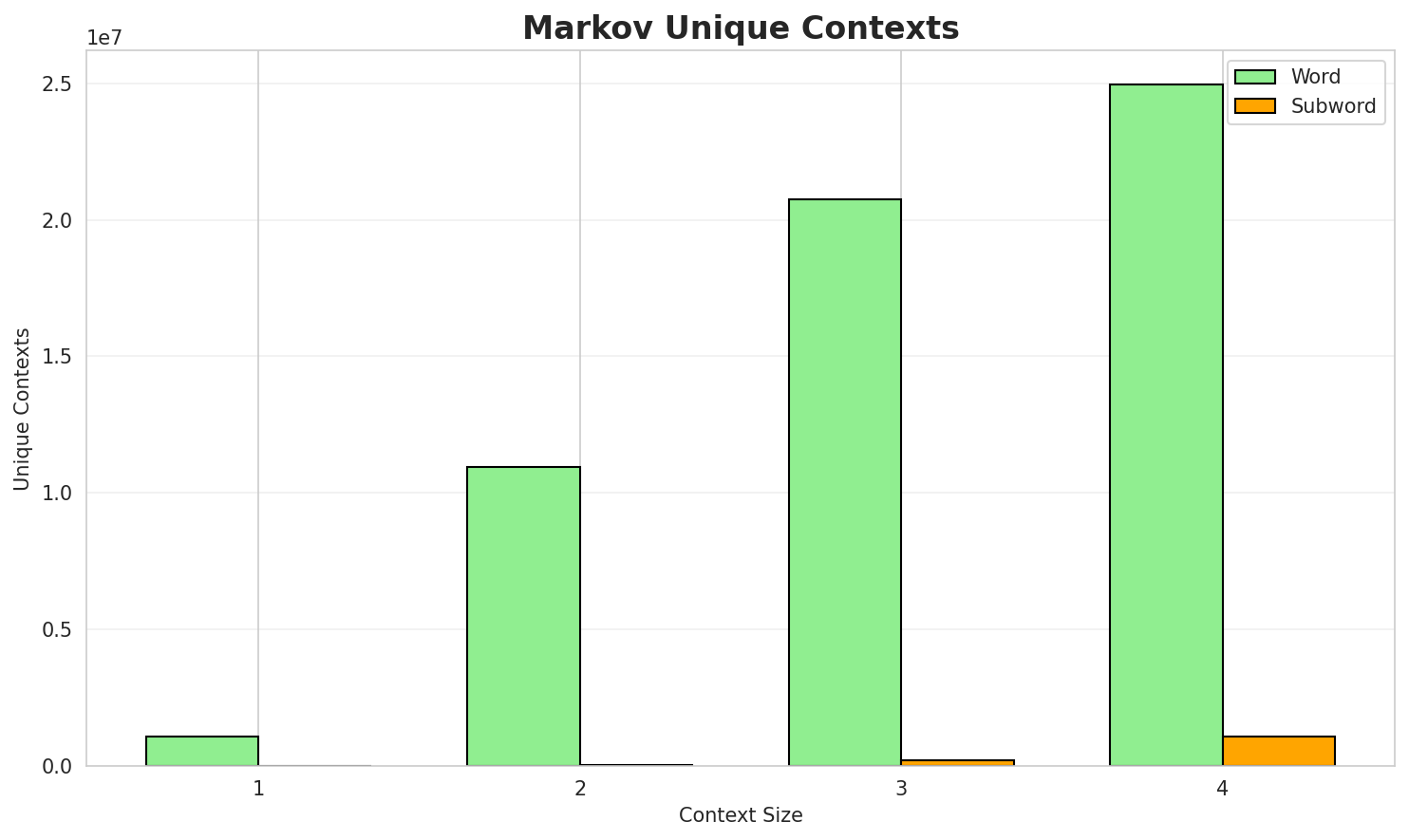

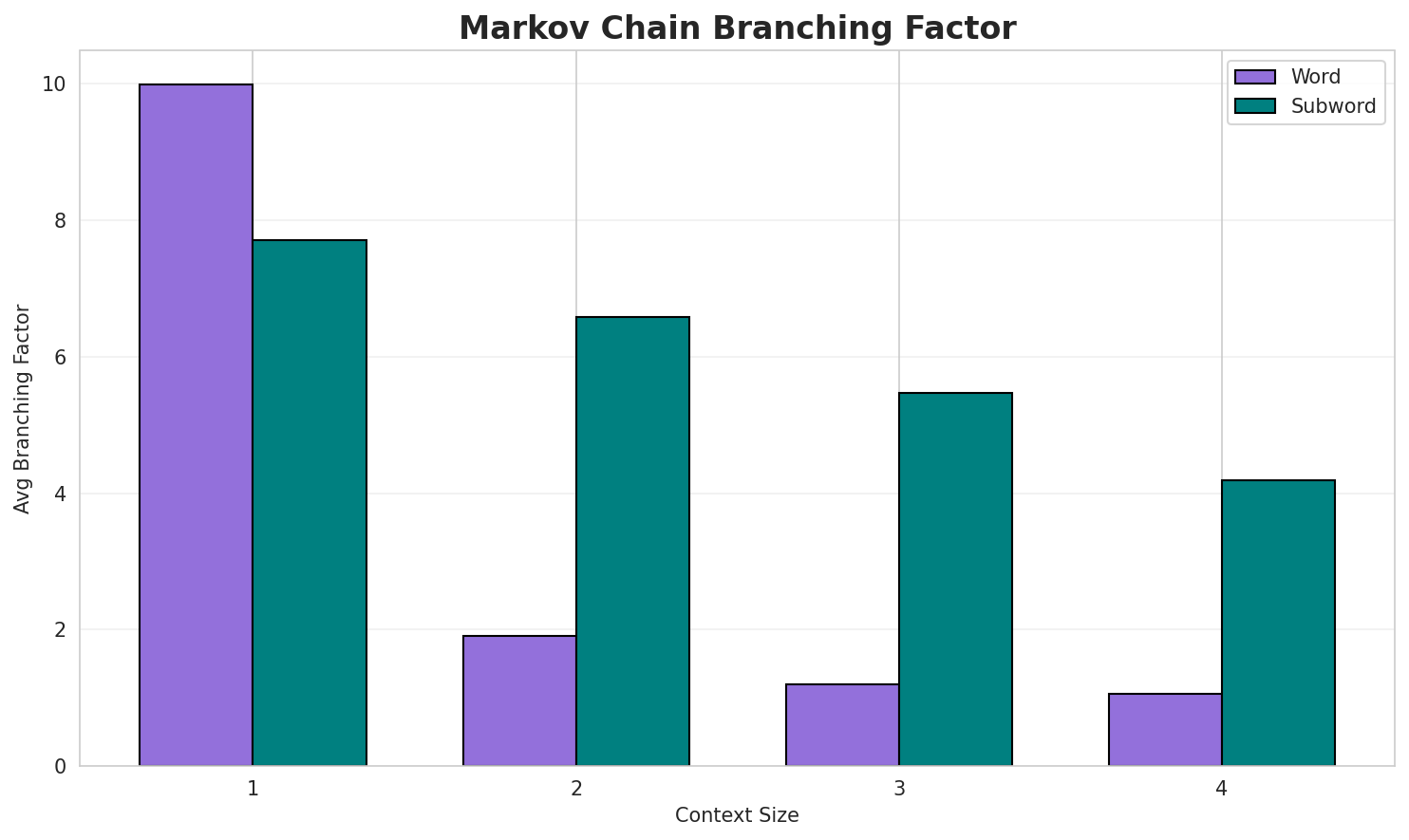

3. Markov Chain Evaluation

Results

| Context | Variant | Avg Entropy (bits) | Perplexity | Branching Factor | Unique Contexts | Predictability |
|---|---|---|---|---|---|---|
| 1 | Word | 0.9835 | 1.977 | 9.99 | 1,096,434 | 1.7% |
| 1 | Subword | 1.0155 | 2.022 | 7.71 | 3,863 | 0.0% |
| 2 | Word | 0.3071 | 1.237 | 1.90 | 10,934,441 | 69.3% |
| 2 | Subword | 0.9460 | 1.927 | 6.59 | 29,789 | 5.4% |
| 3 | Word | 0.1029 | 1.074 | 1.20 | 20,758,711 | 89.7% |
| 3 | Subword | 0.9514 | 1.934 | 5.47 | 196,125 | 4.9% |
| 4 | Word | 0.0378 🏆 | 1.027 | 1.06 | 24,939,260 | 96.2% |
| 4 | Subword | 0.9416 | 1.921 | 4.19 | 1,073,504 | 5.8% |

Generated Text Samples (Word-based)

Below are text samples generated from each word-based Markov chain model:

Context Size 1:

- i sfrj popis ostali su nove ere ce espanyol olímpic lluís d očigledno drevni grad u
- je počeo zanimati za testiranje je holoenzim počinje u genima patofiziološki mehanizam samouništenja...
- u zemaljskom muzeju i rukama do teritorijalne reorganizacije u 13 33 923 0 plesni parovi još

Context Size 2:

- spiralna galaksija s ic 0 51 nepoznato 3 0 3 uglovnih minuta s a d p gdje
- vanjski linkovi ic ic na aladin pregledaču ic katalog na ngc ic objekti sljedeći spisak sadrži deset
- se u četvrtfinale potom je bila poljska glumica koja iza sebe thomasa morgensterna koch vor morgenst...

Context Size 3:

- reference vanjski linkovi zvanični sajt općine teslić
- prečkasta spiralna galaksija sbab p ngc 5 41 emisijska maglina en također pogledajte novi opći katal...
- zavod za statistiku i evidenciju fnrj i sfrj popis stanovništva i godine knjiga narodnosni i vjerski...

Context Size 4:

- na popisu stanovništva godine naseljeno mjesto majkovi je imalo 273 stanovnika broj stanovnika po po...
- državni zavod za statistiku naselja i stanovništvo republike hrvatske 23 0 84 85 129 118 110 149 130...
- broj stanovnika po popisima 31 38 napomena u nastalo izdvajanjem dijela iz naselja buk vlaka i opuze...

Generated Text Samples (Subword-based)

Below are text samples generated from each subword-based Markov chain model:

Context Size 1:

_diintk,_d,_pri_arafužde_0452)_binavjuc_stodite_

Context Size 2:

a_stal)_teiftupnge_podilnetskimostjedin_štvoji_izvi

Context Size 3:

je_nazi_se_daklenena_predočan_heime__nama_prija,_datim

Context Size 4:

_je_od_na_15_462_sbija_deset_na_od_tri_na_prema_oltara_ko

Key Findings

- Best Predictability: Context-4 (word) with 96.2% predictability

- Branching Factor: Decreases with context size (more deterministic)

- Memory Trade-off: Larger contexts require more storage (at context 4: 24,939,260 unique word contexts and 1,073,504 subword contexts)

- Recommendation: Context-3 or Context-4 for text generation
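The word-level samples above come from chains that map a fixed-size context to counts of the words that followed it. A minimal sketch of training and sampling such a chain, assuming whitespace tokenization and sampling in proportion to observed counts; `train_markov` and `generate` are hypothetical helpers, not part of a published Wikilangs API.

```python
import random
from collections import Counter, defaultdict


def train_markov(tokens, k):
    """Map each k-token context to a Counter of the tokens that follow it."""
    table = defaultdict(Counter)
    for i in range(len(tokens) - k):
        table[tuple(tokens[i:i + k])][tokens[i + k]] += 1
    return table


def generate(table, seed, max_words=20, rng=None):
    """Extend `seed` (exactly k words) by sampling next words by their counts.

    Stops early when the current context never occurred in training.
    """
    rng = rng or random.Random(0)
    k = len(seed)
    out = list(seed)
    for _ in range(max_words):
        successors = table.get(tuple(out[-k:]))
        if not successors:
            break
        words = list(successors)
        out.append(rng.choices(words, weights=[successors[w] for w in words])[0])
    return out


corpus = "na popisu stanovništva godine naseljeno mjesto je imalo stanovnika".split()
model = train_markov(corpus, k=2)
print(" ".join(generate(model, ["na", "popisu"])))
```

Because every context in this toy corpus has a single successor, the output simply replays the corpus; with many competing successors per context, the same sampling step produces varied text like the samples listed earlier.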

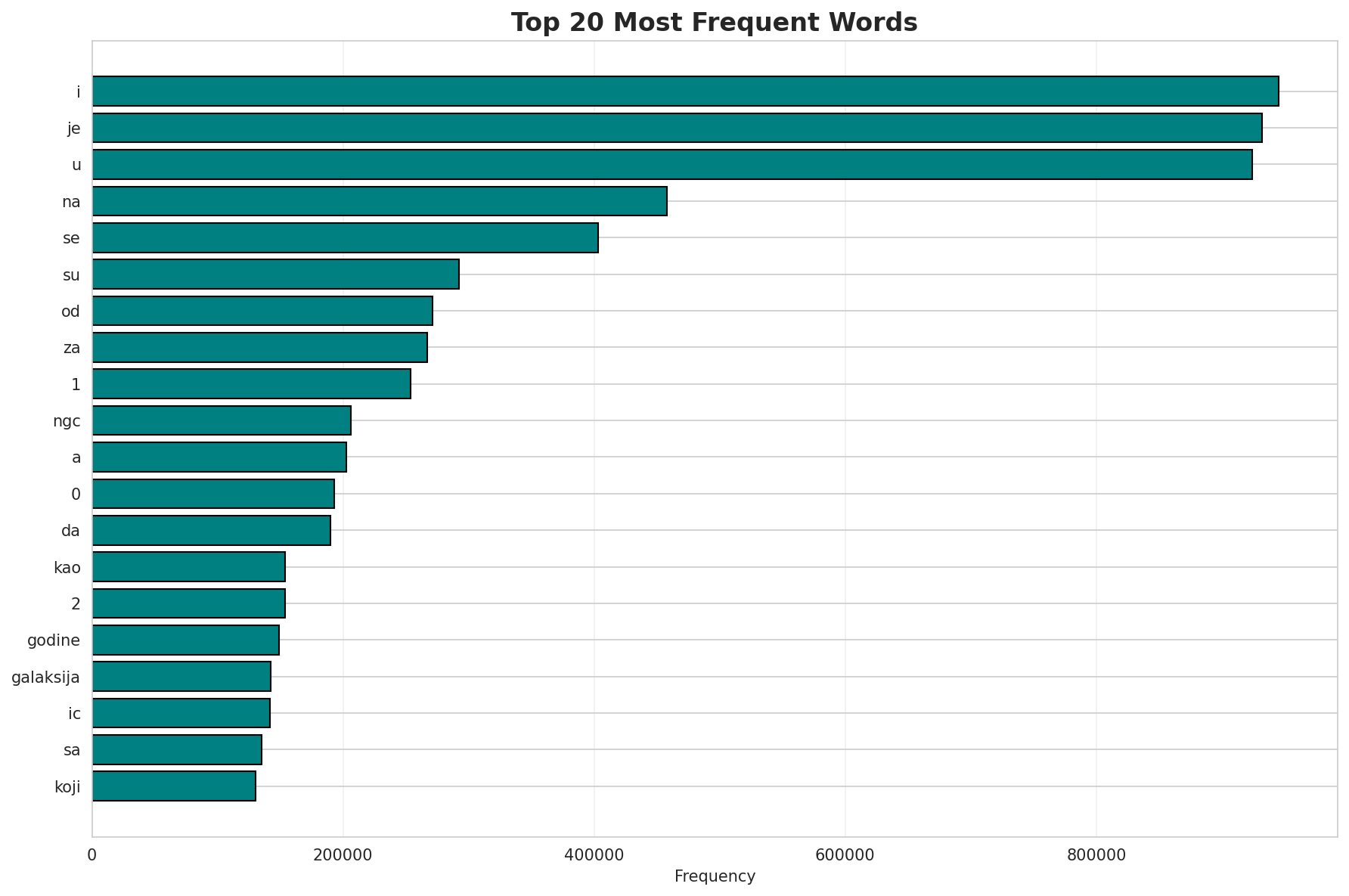

4. Vocabulary Analysis

Statistics

| Metric | Value |
|---|---|
| Vocabulary Size | 504,813 |
| Total Tokens | 32,497,466 |
| Mean Frequency | 64.38 |
| Median Frequency | 4 |
| Frequency Std Dev | 2777.29 |

Most Common Words

| Rank | Word | Frequency |
|---|---|---|
| 1 | i | 945,166 |
| 2 | je | 931,753 |
| 3 | u | 924,423 |
| 4 | na | 457,967 |
| 5 | se | 403,233 |
| 6 | su | 292,637 |
| 7 | od | 271,227 |
| 8 | za | 266,768 |
| 9 | 1 | 253,853 |
| 10 | ngc | 206,389 |

Least Common Words (from vocabulary)

| Rank | Word | Frequency |
|---|---|---|
| 1 | antiinfektivne | 2 |
| 2 | veditors | 2 |
| 3 | esac | 2 |
| 4 | martirosyan | 2 |
| 5 | neuzimanje | 2 |
| 6 | spekarski | 2 |
| 7 | probabilizamski | 2 |
| 8 | dtl | 2 |
| 9 | setap | 2 |
| 10 | visoravani | 2 |

Zipf's Law Analysis

| Metric | Value |
|---|---|
| Zipf Coefficient | 0.9660 |
| R² (Goodness of Fit) | 0.999467 |
| Adherence Quality | excellent |

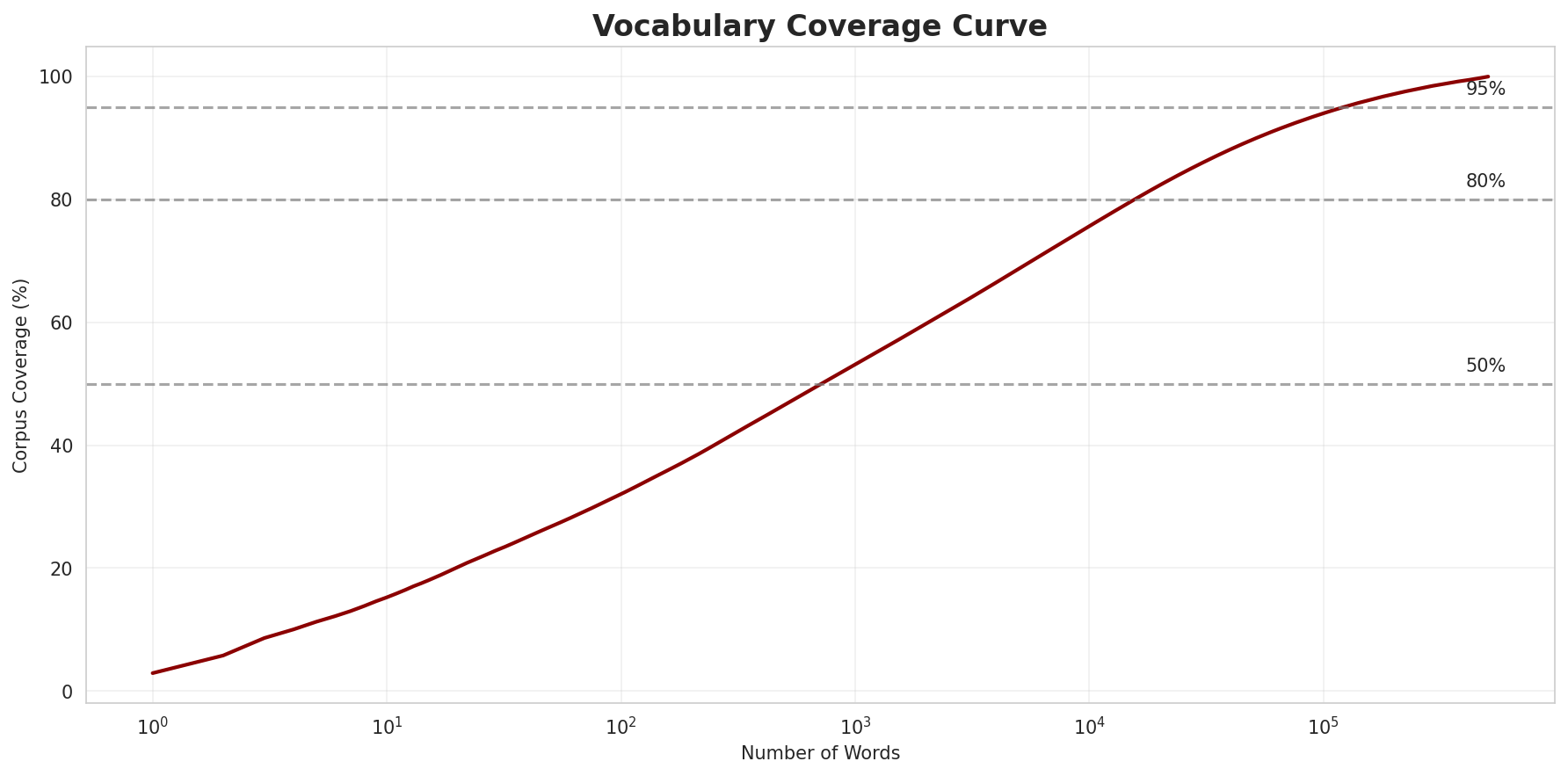

Coverage Analysis

| Top N Words | Coverage |
|---|---|
| Top 100 | 32.1% |
| Top 1,000 | 53.1% |
| Top 5,000 | 68.7% |
| Top 10,000 | 75.7% |

Key Findings

- Zipf Compliance: R²=0.9995 indicates excellent adherence to Zipf's law

- High Frequency Dominance: Top 100 words cover 32.1% of corpus

- Long Tail: The remaining 494,813 words (outside the top 10,000) account for the last 24.3% of the corpus

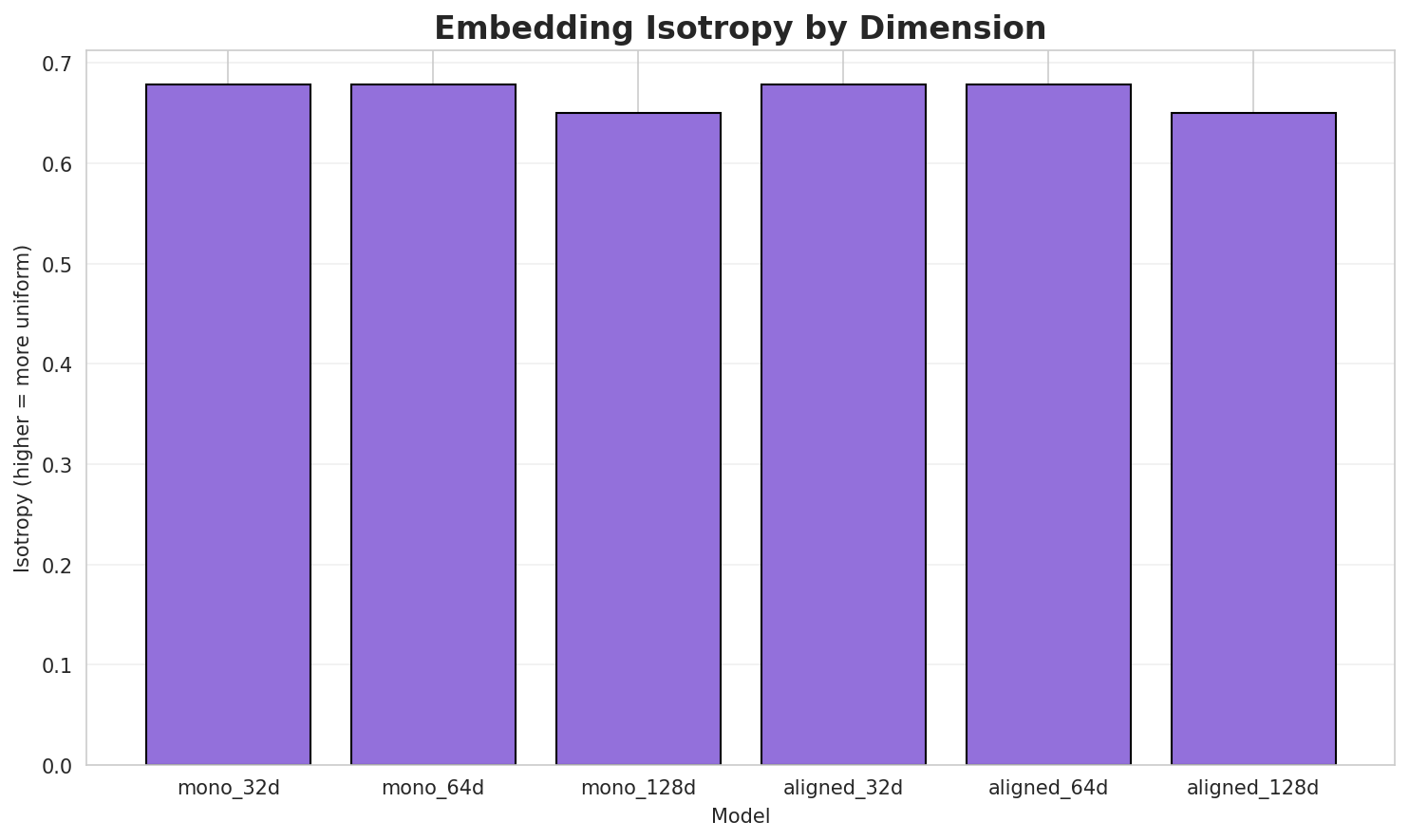

5. Word Embeddings Evaluation

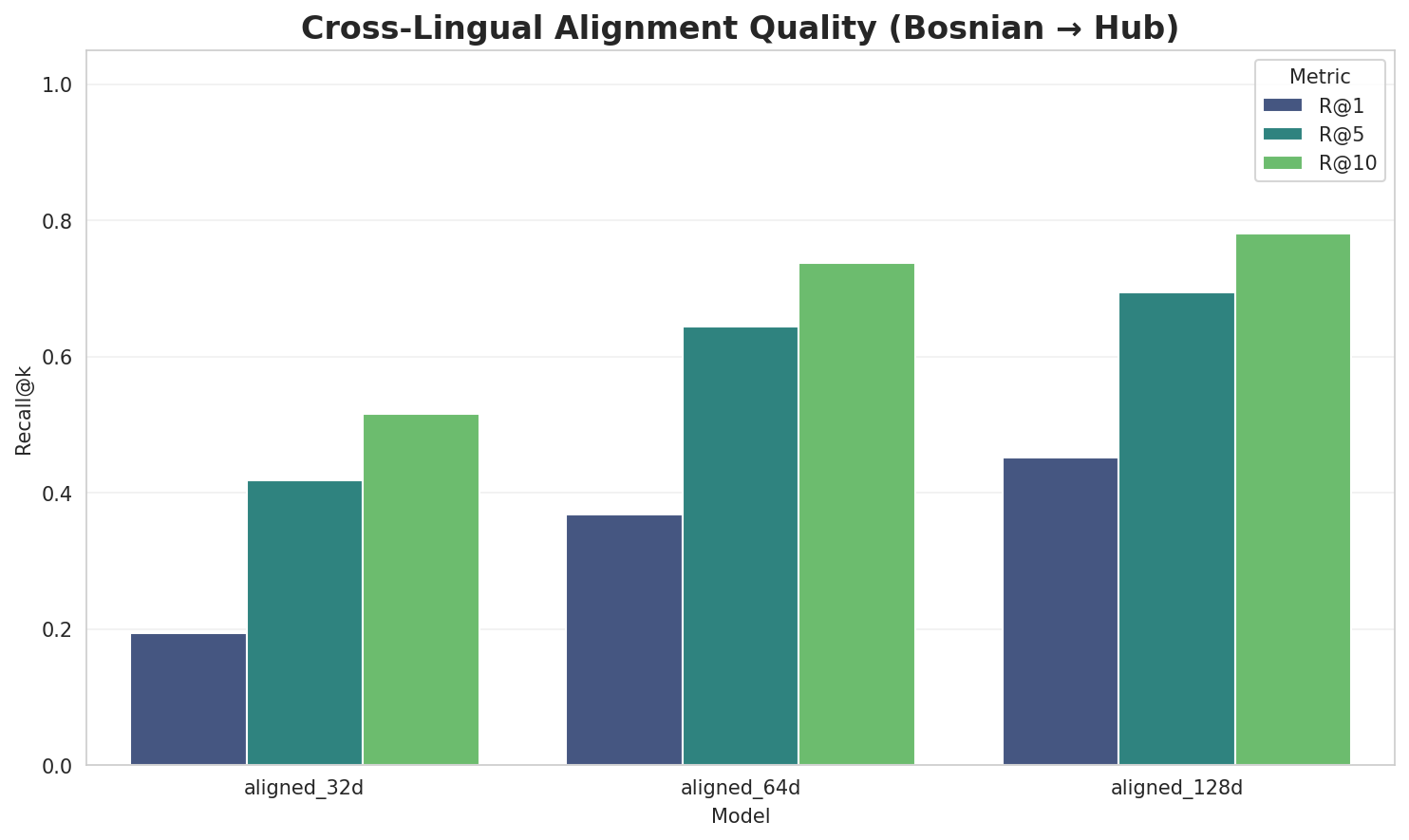

5.1 Cross-Lingual Alignment

5.2 Model Comparison

| Model | Dimension | Isotropy | Semantic Density | Alignment R@1 | Alignment R@10 |
|---|---|---|---|---|---|
| mono_32d | 32 | 0.6791 🏆 | 0.3557 | N/A | N/A |
| mono_64d | 64 | 0.6789 | 0.2931 | N/A | N/A |
| mono_128d | 128 | 0.6505 | 0.2294 | N/A | N/A |
| aligned_32d | 32 | 0.6791 | 0.3517 | 0.1940 | 0.5160 |
| aligned_64d | 64 | 0.6789 | 0.2923 | 0.3680 | 0.7380 |
| aligned_128d | 128 | 0.6505 | 0.2262 | 0.4520 | 0.7800 |

Key Findings

- Best Isotropy: mono_32d with 0.6791, tied with aligned_32d (higher values mean a more uniform distribution)

- Semantic Density: Average pairwise similarity across the six models is 0.2914; lower values indicate better semantic separation.

- Alignment Quality: Aligned models achieve up to 45.2% R@1 in cross-lingual retrieval.

- Recommendation: 128d aligned for best cross-lingual performance
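Alignment R@1 and R@10 are retrieval scores: the share of test words whose reference translation is the nearest (R@1) or among the ten nearest (R@10) neighbours in the aligned space. A minimal sketch, assuming one reference translation per word and ranking by cosine similarity; the pipeline's evaluation dictionary and target language are not given in this report, and the toy vectors below are invented for illustration. `recall_at_k` is a hypothetical helper.

```python
import math


def _unit(v):
    """Scale a vector to length 1 so that a dot product equals cosine similarity."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def recall_at_k(src_vecs, tgt_vecs, gold, k):
    """Share of source words whose gold translation ranks in the top k targets.

    src_vecs, tgt_vecs: dicts mapping word -> vector in the shared aligned space.
    gold: dict mapping each source word -> its reference translation.
    """
    targets = {w: _unit(v) for w, v in tgt_vecs.items()}
    hits = 0
    for src, ref in gold.items():
        q = _unit(src_vecs[src])
        # Rank every target word by descending cosine similarity to the query.
        ranked = sorted(targets, key=lambda w: -sum(a * b for a, b in zip(q, targets[w])))
        hits += ref in ranked[:k]
    return hits / len(gold)


src = {"kuća": [1.0, 0.1], "voda": [0.1, 1.0]}
tgt = {"house": [0.9, 0.2], "water": [0.2, 0.9], "tree": [0.7, 0.7]}
gold = {"kuća": "house", "voda": "water"}
print(recall_at_k(src, tgt, gold, k=1))
```

A higher-dimensional aligned space typically separates candidate translations better, which matches the rise from 0.1940 to 0.4520 R@1 between the 32d and 128d aligned models.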

6. Morphological Analysis (Experimental)

This section presents an automated morphological analysis derived from the statistical divergence between word-level and subword-level models. By analyzing where subword predictability spikes and where word-level coverage fails, we can infer linguistic structures without supervised data.

6.1 Productivity & Complexity

| Metric | Value | Interpretation | Recommendation |
|---|---|---|---|
| Productivity Index | 5.000 | High morphological productivity | Reliable analysis |
| Idiomaticity Gap | 0.860 | High formulaic/idiomatic content | - |

6.2 Affix Inventory (Productive Units)

These are the most productive prefixes and suffixes identified by sampling the vocabulary for global substitutability patterns. A unit is considered an affix if stripping it leaves a valid stem that appears in other contexts.

Productive Prefixes

| Prefix | Examples |
|---|---|
| pr- | promotriti, pristrasno, priznavajući |
| po- | podstilova, postporođajno, položene |

Productive Suffixes

| Suffix | Examples |
|---|---|
| -a | ćamila, afrića, canaima |
| -e | candace, emilie, feničane |
| -i | izrađujući, promotriti, opstruktivni |
| -om | holivudskom, ekvatorom, mckaganom |
| -na | odoljena, zloćudna, interamericana |
| -ni | opstruktivni, bogobojazni, normani |
| -og | vazdušnog, nanizanog, modularnog |
| -ja | inkrustacija, gaskonja, bradikardija |
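The affix criterion in 6.2 (a unit counts as an affix if stripping it leaves a stem seen in other contexts) can be sketched for suffixes as follows. This assumes a stem "appears in other contexts" when another vocabulary word begins with it; `suffix_evidence` and the `min_stem` threshold are hypothetical, and the pipeline's sampling and scoring are not described in this report.

```python
def suffix_evidence(vocab, suffix, min_stem=3):
    """Return words ending in `suffix` whose stem also starts another word.

    Simplifying assumption: a stem is 'valid' if some other vocabulary word
    begins with it.
    """
    if not suffix:
        raise ValueError("suffix must be non-empty")
    words = set(vocab)
    hits = []
    for word in sorted(words):
        if not word.endswith(suffix) or len(word) - len(suffix) < min_stem:
            continue
        stem = word[: -len(suffix)]
        # The stem must show up in at least one other word.
        if any(other != word and other.startswith(stem) for other in words):
            hits.append(word)
    return hits


vocab = ["rječnik", "rječnikom", "rječnika", "ekvatorom", "kuća"]
print(suffix_evidence(vocab, "om"))  # ['rječnikom']
print(suffix_evidence(vocab, "a"))   # ['rječnika']
```

Here ekvatorom is rejected for -om only because the toy vocabulary has no other word starting with ekvator; over a full Wikipedia vocabulary it would normally qualify.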

6.3 Bound Stems (Lexical Roots)

Bound stems are high-frequency subword units that are semantically cohesive but rarely appear as standalone words. These often correspond to the 'core' of a word that requires inflection or derivation to be valid.

| Stem | Cohesion | Substitutability | Examples |
|---|---|---|---|
| anov | 1.53x | 627 contexts | panov, šanov, anova |
| ijsk | 1.54x | 411 contexts | ijski, šijska, azijske |
| renc | 2.13x | 74 contexts | renca, renci, renco |
| kovi | 1.39x | 620 contexts | okovi, ković, kovič |
| alak | 2.51x | 33 contexts | malak, talak, malaku |
| selj | 1.97x | 81 contexts | selja, seljo, crselj |
| jekt | 1.94x | 77 contexts | objekt, subjekt, objektu |
| iral | 1.65x | 165 contexts | viral, ziral, miral |
| ksij | 2.04x | 55 contexts | iksija, oleksij, taksiju |
| vanj | 1.56x | 169 contexts | vanju, vanji, kvanj |
| acij | 1.45x | 219 contexts | acije, acija, lacij |
| bjek | 2.29x | 27 contexts | ribjek, žabjek, objeki |

6.4 Affix Compatibility (Co-occurrence)

This table shows which prefixes and suffixes most frequently co-occur on the same stems, revealing the 'stacking' rules of the language's morphology.

| Prefix | Suffix | Frequency | Examples |
|---|---|---|---|
| pr- | -a | 64 words | pripaja, prezentska |
| po- | -a | 56 words | posttestikulska, pokroviteljima |
| pr- | -e | 50 words | prijestupne, pregljeve |
| pr- | -i | 45 words | prevareni, prebacivani |
| po- | -e | 39 words | potterove, polusušne |
| po- | -i | 36 words | populaciji, potterovi |
| pr- | -om | 14 words | pramajkom, prustom |
| pr- | -na | 14 words | pravougaona, pretražena |
| pr- | -ni | 12 words | prevareni, prebacivani |
| po- | -na | 11 words | ponosna, polipropilena |
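A table of this shape can be produced by counting, for each prefix-suffix pair, the vocabulary words that carry both. A minimal sketch, assuming the affix inventories are already known and requiring at least `min_stem` characters between the two affixes (a hypothetical threshold); `affix_cooccurrence` is an illustrative name only.

```python
from collections import Counter
from itertools import product


def affix_cooccurrence(vocab, prefixes, suffixes, min_stem=3):
    """Count the words that carry each (prefix, suffix) pair around a stem."""
    counts = Counter()
    for word in set(vocab):
        for pre, suf in product(prefixes, suffixes):
            if (word.startswith(pre) and word.endswith(suf)
                    and len(word) - len(pre) - len(suf) >= min_stem):
                counts[(pre, suf)] += 1
    return counts


vocab = ["prevareni", "prebacivani", "pripaja", "ponosna", "populaciji"]
pairs = affix_cooccurrence(vocab, ["pr", "po"], ["a", "i", "ni", "na"])
for (pre, suf), n in pairs.most_common():
    print(f"{pre}- -{suf}: {n}")
```

A word can be counted for overlapping suffixes such as -i and -ni; prevareni and prebacivani appear under both of those rows in the table above, which is consistent with this behaviour.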

6.5 Recursive Morpheme Segmentation

Using Recursive Hierarchical Substitutability, we decompose complex words into their constituent morphemes. This approach handles nested affixes (e.g., prefix-prefix-root-suffix).

| Word | Suggested Split | Confidence | Stem |
|---|---|---|---|
| nerazvijenog | nerazvijen-og | 4.5 | nerazvijen |
| langleyja | langley-ja | 4.5 | langley |
| nadvratnikom | nadvratnik-om | 4.5 | nadvratnik |
| zahvaćenog | zahvaćen-og | 4.5 | zahvaćen |
| posigurno | po-sigurno | 4.5 | sigurno |
| nepostojanja | nepostojan-ja | 4.5 | nepostojan |
| dramatizirana | dramatizira-na | 4.5 | dramatizira |
| newtonovom | newtonov-om | 4.5 | newtonov |
| bertoluccija | bertolucci-ja | 4.5 | bertolucci |
| uravnoteženog | uravnotežen-og | 4.5 | uravnotežen |
| ilustriranom | ilustriran-om | 4.5 | ilustriran |
| saobraćajne | saobraćaj-ne | 4.5 | saobraćaj |
| herlihyja | herlihy-ja | 4.5 | herlihy |
| čehovljevog | čehovljev-og | 4.5 | čehovljev |
| rječnikom | rječnik-om | 4.5 | rječnik |
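A minimal recursive segmenter in the spirit of 6.5, assuming fixed affix inventories and a known set of stems. It is a hypothetical illustration of stacking affixes through recursion, not the Recursive Hierarchical Substitutability scoring itself, whose details (including the confidence score) are not given here. Prefixes are tried before suffixes, and longer affixes first.

```python
def segment(word, prefixes, suffixes, stems, min_stem=3):
    """Return a list of morphemes ending at a known stem, or None.

    Recursion lets several affixes stack (e.g. prefix-prefix-root-suffix).
    """
    if word in stems:
        return [word]
    for pre in sorted(prefixes, key=len, reverse=True):
        rest = word[len(pre):]
        if pre and word.startswith(pre) and len(rest) >= min_stem:
            tail = segment(rest, prefixes, suffixes, stems, min_stem)
            if tail is not None:
                return [pre] + tail
    for suf in sorted(suffixes, key=len, reverse=True):
        if suf and word.endswith(suf) and len(word) - len(suf) >= min_stem:
            head = segment(word[: -len(suf)], prefixes, suffixes, stems, min_stem)
            if head is not None:
                return head + [suf]
    return None  # no analysis reaches a known stem


prefixes = {"po", "ne"}
suffixes = {"og", "om", "ja"}
stems = {"sigurno", "rječnik", "razvijen"}
print("-".join(segment("posigurno", prefixes, suffixes, stems)))     # po-sigurno
print("-".join(segment("nerazvijenog", prefixes, suffixes, stems)))  # ne-razvijen-og
```

With razvijen as the known stem, the sketch peels off both ne- and -og; the table above instead treats nerazvijen as the stem, which corresponds to a stem inventory that already contains it.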

6.6 Linguistic Interpretation

Automated Insight: Bosnian shows high morphological productivity. The subword models are far more efficient than the word models (for example, a 2-gram perplexity of 328 versus 80,810), suggesting a rich system of affixation or compounding.

Note on Idiomaticity: The high Idiomaticity Gap suggests a large number of frequent multi-word expressions or formulaic sequences that are statistically distinct from their component parts.

7. Summary & Recommendations

Production Recommendations

| Component | Recommended | Rationale |
|---|---|---|
| Tokenizer | 64k BPE | Best compression (4.71x) |
| N-gram | 2-gram (subword) | Lowest perplexity (328) |
| Markov | Context-4 | Highest predictability (96.2%) |
| Embeddings | 128d aligned | Best cross-lingual alignment (45.2% R@1) |

Appendix: Metrics Glossary & Interpretation Guide

This section provides definitions, intuitions, and guidance for interpreting the metrics used throughout this report.

Tokenizer Metrics

Compression Ratio

Definition: The ratio of characters to tokens (chars/token). Measures how efficiently the tokenizer represents text.

Intuition: Higher compression means fewer tokens needed to represent the same text, reducing sequence lengths for downstream models. A 3x compression means ~3 characters per token on average.

What to seek: Higher is generally better for efficiency, but extremely high compression may indicate overly aggressive merging that loses morphological information.

Average Token Length (Fertility)

Definition: Mean number of characters per token produced by the tokenizer.

Intuition: Reflects the granularity of tokenization. Longer tokens capture more context but may struggle with rare words; shorter tokens are more flexible but increase sequence length.

What to seek: A balance of roughly 2-5 characters per token for most languages. Morphologically rich languages (such as Arabic) may benefit from slightly longer tokens.
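Both tokenizer metrics reduce to character and token counts. A minimal sketch, assuming characters are counted on the raw text and the SentencePiece-style `▁` word-boundary marker is excluded from token length; in the results table the two columns coincide, so the pipeline may count characters differently from this sketch. The last loop is a worked check on that table: multiplying each compression ratio by its token total gives about 4.74 million characters for every vocabulary size, as expected when all four tokenizers encode the same evaluation text.

```python
def compression_ratio(text, tokens):
    """Characters of raw text per token."""
    return len(text) / len(tokens)


def avg_token_length(tokens, marker="\u2581"):
    """Mean characters per token, not counting the word-boundary marker."""
    return sum(len(t.replace(marker, "")) for t in tokens) / len(tokens)


text = "je naseljeno mjesto"
tokens = ["▁je", "▁naseljeno", "▁mjesto"]
print(compression_ratio(text, tokens))  # 19 characters / 3 tokens
print(avg_token_length(tokens))         # 17 characters / 3 tokens

# Worked check on the results table: ratio x total tokens gives roughly the
# same character count for every vocabulary size.
results = {"8k": (3.626, 1_306_515), "16k": (4.032, 1_174_869),
           "32k": (4.404, 1_075_596), "64k": (4.709, 1_005_898)}
for size, (ratio, n_tokens) in results.items():
    print(size, round(ratio * n_tokens))
```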

Unknown Token Rate (OOV Rate)

Definition: Percentage of tokens that map to the unknown/UNK token, indicating words the tokenizer cannot represent.

Intuition: Lower OOV means better vocabulary coverage. High OOV indicates the tokenizer encounters many unseen character sequences.

What to seek: Below 1% is excellent; below 5% is acceptable. BPE tokenizers typically achieve very low OOV due to subword fallback.
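A minimal sketch of the UNK rate over encoded token ids, assuming id 0 is the unknown token (the SentencePiece default; the Wikilangs tokenizer configuration is not stated in this report):

```python
def unk_rate(token_ids, unk_id=0):
    """Percentage of token ids equal to the unknown-token id."""
    if not token_ids:
        return 0.0
    return 100.0 * sum(1 for t in token_ids if t == unk_id) / len(token_ids)


print(unk_rate([57, 0, 1203, 9]))  # one UNK among four tokens -> 25.0

# Scale check: 0.1221% of the 1,306,515 tokens from the 8k tokenizer
# corresponds to roughly 1,595 unknown tokens.
print(round(1_306_515 * 0.1221 / 100))
```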

N-gram Model Metrics

Perplexity

Definition: Measures how "surprised" the model is by test data. Mathematically: 2^(cross-entropy). Lower values indicate better prediction.

Intuition: If perplexity is 100, the model is as uncertain as if choosing uniformly among 100 options at each step. A perplexity of 10 means effectively choosing among 10 equally likely options.

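What to seek: Lower is better. Perplexity usually decreases with larger n-grams when there is enough data to estimate the longer contexts; when higher-order n-grams are sparse, as in this report's results, it can increase instead. Values vary widely by language and corpus size.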
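Perplexity can be computed for any model that assigns probabilities to held-out tokens. A minimal sketch for a word bigram model, assuming add-one (Laplace) smoothing with one extra vocabulary slot for unseen words; the report does not state which smoothing or back-off its n-gram models use, and `bigram_perplexity` is a hypothetical helper.

```python
import math
from collections import Counter


def bigram_perplexity(train, test):
    """Perplexity = 2 ** cross-entropy under add-one smoothed bigram probabilities."""
    if len(test) < 2:
        raise ValueError("test needs at least two tokens")
    history = Counter(train[:-1])              # how often each word precedes another
    bigrams = Counter(zip(train, train[1:]))
    vocab_size = len(set(train)) + 1           # +1 reserves mass for unseen words
    pairs = list(zip(test, test[1:]))
    log_sum = 0.0
    for prev, word in pairs:
        p = (bigrams[(prev, word)] + 1) / (history[prev] + vocab_size)
        log_sum += math.log2(p)
    cross_entropy = -log_sum / len(pairs)
    return 2 ** cross_entropy


train = "naseljeno mjesto u gradu trebinju naseljeno mjesto u gradu laktaši".split()
print(bigram_perplexity(train, "naseljeno mjesto u gradu".split()))
```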

Entropy

Definition: Average information content (in bits) needed to encode the next token given the context. Related to perplexity: perplexity = 2^entropy.

Intuition: High entropy means high uncertainty/randomness; low entropy means predictable patterns. Natural language typically has entropy between 1-4 bits per character.

What to seek: Lower entropy indicates more predictable text patterns. Entropy tends to decrease as n-gram size increases, provided the corpus is large enough to estimate the longer contexts.
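Entropy and perplexity carry the same information. The sketch below computes the entropy of a discrete distribution, then checks, as a worked example, that each Entropy value in the N-gram results table is log2 of the corresponding perplexity to within two-decimal rounding:

```python
import math


def entropy(probs):
    """Shannon entropy in bits of a discrete distribution."""
    return -sum(p * math.log2(p) for p in probs if p > 0)


# A uniform choice among 4 options: 2 bits, and perplexity 2 ** 2 = 4.
print(entropy([0.25] * 4), 2 ** entropy([0.25] * 4))

# (perplexity, reported entropy) for every row of the n-gram results table.
rows = [(80_810, 16.30), (328, 8.36), (100_258, 16.61), (3_216, 11.65),
        (134_611, 17.04), (20_996, 14.36), (88_861, 16.44), (89_572, 16.45)]
for ppl, reported in rows:
    print(ppl, round(math.log2(ppl), 2), reported)
```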

Coverage (Top-K)

Definition: Percentage of corpus occurrences explained by the top K most frequent n-grams.

Intuition: High coverage with few patterns indicates repetitive/formulaic text; low coverage suggests diverse vocabulary usage.

What to seek: Depends on use case. For language modeling, moderate coverage (40-60% with top-1000) is typical for natural text.
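A minimal sketch of top-K coverage, assuming coverage is measured over n-gram occurrences; the tokens may be words or, for the subword variant, characters. `top_k_coverage` is a hypothetical helper.

```python
from collections import Counter


def top_k_coverage(tokens, n, k):
    """Share (%) of all n-gram occurrences covered by the k most frequent n-grams."""
    grams = Counter(zip(*(tokens[i:] for i in range(n))))
    total = sum(grams.values())
    if total == 0:
        return 0.0
    covered = sum(count for _, count in grams.most_common(k))
    return 100.0 * covered / total


tokens = "reference vanjski linkovi reference vanjski linkovi u".split()
print(top_k_coverage(tokens, n=2, k=2))  # 4 of 6 bigram occurrences
```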

Markov Chain Metrics

Average Entropy

Definition: Mean entropy across all contexts, measuring average uncertainty in next-word prediction.

Intuition: Lower entropy means the model is more confident about what comes next. Context-1 has high entropy (many possible next words); Context-4 has low entropy (few likely continuations).

What to seek: Decreasing entropy with larger context sizes. Very low entropy (<0.1) indicates highly deterministic transitions.

Branching Factor

Definition: Average number of unique next tokens observed for each context.

Intuition: High branching = many possible continuations (flexible but uncertain); low branching = few options (predictable but potentially repetitive).

What to seek: Branching factor should decrease with context size. Values near 1.0 indicate nearly deterministic chains.
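Average entropy and branching factor can both be read off the transition table of an order-k chain. A minimal sketch, assuming both are unweighted means over distinct contexts (the glossary does not say whether contexts are weighted by frequency); `transition_stats` is a hypothetical helper.

```python
import math
from collections import Counter, defaultdict


def transition_stats(tokens, k):
    """Return (average entropy in bits, branching factor) of an order-k chain."""
    if len(tokens) <= k:
        raise ValueError("need more than k tokens")
    table = defaultdict(Counter)
    for i in range(len(tokens) - k):
        table[tuple(tokens[i:i + k])][tokens[i + k]] += 1
    entropies, branching = [], []
    for successors in table.values():
        total = sum(successors.values())
        entropies.append(-sum(c / total * math.log2(c / total) for c in successors.values()))
        branching.append(len(successors))  # distinct next tokens for this context
    n = len(table)
    return sum(entropies) / n, sum(branching) / n


print(transition_stats("a b a c a b".split(), k=1))
```

In this toy sequence only the context "a" has two possible successors, so the branching factor is (2 + 1 + 1) / 3; a factor of exactly 1.0 would mean every context is deterministic.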

Predictability

Definition: Derived metric: (1 - normalized_entropy) × 100%. Indicates how deterministic the model's predictions are.

Intuition: 100% predictability means the next word is always certain; 0% means completely random. Real text falls between these extremes.

What to seek: Higher predictability for text generation quality, but too high (>98%) may produce repetitive output.
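The Markov results table is consistent with two simple relations: perplexity = 2^(average entropy), and predictability = (1 - average entropy) x 100%, clipped at 0, so the entropy itself (in bits) serves as `normalized_entropy`. That reading is inferred from the reported numbers rather than from the pipeline's code; the check below reproduces every row to within rounding.

```python
def predictability(avg_entropy_bits):
    """(1 - normalized entropy) x 100%, clipped to the range [0, 100].

    Assumes the normalized entropy is the average entropy in bits itself,
    which is what the reported table values imply.
    """
    return 100.0 * min(1.0, max(0.0, 1.0 - avg_entropy_bits))


# (avg entropy, reported perplexity, reported predictability %) per table row.
rows = [(0.9835, 1.977, 1.7), (1.0155, 2.022, 0.0), (0.3071, 1.237, 69.3),
        (0.9460, 1.927, 5.4), (0.1029, 1.074, 89.7), (0.9514, 1.934, 4.9),
        (0.0378, 1.027, 96.2), (0.9416, 1.921, 5.8)]
for h, ppl, pred in rows:
    print(f"H={h:.4f}  2**H={2 ** h:.3f} (reported {ppl})  "
          f"predictability={predictability(h):.2f}% (reported {pred}%)")
```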

Vocabulary & Zipf's Law Metrics

Zipf's Coefficient

Definition: The slope of the log-log plot of word frequency vs. rank. Zipf's law predicts a slope of approximately -1; this report lists the coefficient as the slope's magnitude (0.9660 in Section 4).

Intuition: A slope near -1 indicates the corpus follows natural language patterns where a few words are very common and most words are rare.

What to seek: Slopes between -0.8 and -1.2 (magnitudes 0.8 to 1.2) indicate a healthy natural language distribution. Deviations may suggest domain-specific or artificial text.

R² (Coefficient of Determination)

Definition: Measures how well the linear fit explains the frequency-rank relationship. Ranges from 0 to 1.

Intuition: R² near 1.0 means the data closely follows Zipf's law; lower values indicate deviation from expected word frequency patterns.

What to seek: R² > 0.95 is excellent; > 0.99 indicates near-perfect Zipf adherence typical of large natural corpora.
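Both Zipf metrics come from one least-squares fit in log-log space. A minimal sketch, assuming an ordinary least-squares line through (log rank, log frequency) over the whole vocabulary; the report's fitting range is not stated. The logarithm base does not affect the slope or R².

```python
import math


def zipf_fit(frequencies):
    """Least-squares line through (log rank, log frequency).

    Returns (slope, r_squared); Zipf's law predicts a slope near -1.
    """
    freqs = sorted(frequencies, reverse=True)
    xs = [math.log(rank) for rank in range(1, len(freqs) + 1)]
    ys = [math.log(f) for f in freqs]
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ss_res = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return slope, 1.0 - ss_res / ss_tot


slope, r2 = zipf_fit([1000 / r for r in range(1, 1001)])
print(round(slope, 4), round(r2, 4))  # ideal Zipf data: -1.0 1.0
```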

Vocabulary Coverage

Definition: Cumulative percentage of corpus tokens accounted for by the top N words.

Intuition: Shows how concentrated word usage is. If top-100 words cover 50% of text, the corpus relies heavily on common words.

What to seek: Top-100 covering 30-50% is typical. Higher coverage indicates more repetitive text; lower suggests richer vocabulary.

Word Embedding Metrics

Isotropy

Definition: Measures how uniformly distributed vectors are in the embedding space. Computed as the ratio of minimum to maximum singular values.

Intuition: High isotropy (near 1.0) means vectors spread evenly in all directions; low isotropy means vectors cluster in certain directions, reducing expressiveness.

What to seek: Higher isotropy generally indicates better-quality embeddings. Values > 0.1 are reasonable; > 0.3 is good. Lower-dimensional embeddings tend to have higher isotropy.
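A minimal NumPy sketch of the singular-value ratio defined above, assuming the embedding matrix (one row per word) is mean-centred first; the report does not say whether it centres the vectors, and other isotropy measures exist. The toy comparison contrasts evenly spread random vectors with vectors whose first axis has been squashed.

```python
import numpy as np


def isotropy(embeddings, center=True):
    """Ratio of the smallest to the largest singular value (1.0 = perfectly uniform)."""
    x = np.asarray(embeddings, dtype=float)
    if center:
        x = x - x.mean(axis=0)  # assumption: remove the common mean direction first
    s = np.linalg.svd(x, compute_uv=False)
    return float(s.min() / s.max())


rng = np.random.default_rng(0)
spread = rng.normal(size=(5000, 32))               # similar variance on every axis
squashed = spread * np.linspace(0.05, 1.0, 32)     # first axis shrunk 20-fold
print(round(isotropy(spread), 3), round(isotropy(squashed), 3))
```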

Average Norm

Definition: Mean magnitude (L2 norm) of word vectors in the embedding space.

Intuition: Indicates the typical "length" of vectors. Consistent norms suggest stable training; high variance may indicate some words are undertrained.

What to seek: Relatively consistent norms across models. The absolute value matters less than consistency (low std deviation).
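A minimal sketch of the norm statistics, assuming the population standard deviation is the spread measure of interest:

```python
import math
import statistics


def norm_stats(vectors):
    """Mean and population standard deviation of the vectors' L2 norms."""
    norms = [math.sqrt(sum(x * x for x in v)) for v in vectors]
    return statistics.mean(norms), statistics.pstdev(norms)


print(norm_stats([[3.0, 4.0], [6.0, 8.0], [0.0, 5.0]]))  # norms 5, 10, 5
```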

Cosine Similarity

Definition: Measures angular similarity between vectors, ranging from -1 (opposite) to 1 (identical direction).

Intuition: Words with similar meanings should have high cosine similarity. This is the standard metric for semantic relatedness in embeddings.

What to seek: Semantically related words should score > 0.5; unrelated words should be near 0. Synonyms often score > 0.7.
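A minimal sketch of cosine similarity. The second function averages it over all distinct pairs, which is one plausible reading of the Semantic Density metric in Section 5 (average pairwise similarity); whether the pipeline uses all pairs or a sample is not stated.

```python
import math
from itertools import combinations


def cosine(u, v):
    """Cosine of the angle between u and v, in [-1, 1]."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def mean_pairwise_cosine(vectors):
    """Average cosine over all distinct pairs (lower = better separated)."""
    pairs = list(combinations(vectors, 2))
    return sum(cosine(u, v) for u, v in pairs) / len(pairs)


print(cosine([1.0, 0.0], [0.0, 2.0]))   # orthogonal -> 0.0
print(cosine([1.0, 2.0], [2.0, 4.0]))   # same direction -> 1.0 (up to rounding)
print(cosine([1.0, 0.0], [-3.0, 0.0]))  # opposite -> -1.0
```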

t-SNE Visualization

Definition: t-Distributed Stochastic Neighbor Embedding - a dimensionality reduction technique that preserves local structure for visualization.

Intuition: Clusters in t-SNE plots indicate groups of semantically related words. Spread indicates vocabulary diversity; tight clusters suggest semantic coherence.

What to seek: Meaningful clusters (e.g., numbers together, verbs together). Avoid over-interpreting distances - t-SNE preserves local, not global, structure.

General Interpretation Guidelines

- Compare within model families: Metrics are most meaningful when comparing models of the same type (e.g., 8k vs 64k tokenizer).

- Consider trade-offs: Better performance on one metric often comes at the cost of another (e.g., compression vs. OOV rate).

- Context matters: Optimal values depend on downstream tasks. Text generation may prioritize different metrics than classification.

- Corpus influence: All metrics are influenced by corpus characteristics. Wikipedia text differs from social media or literature.

- Language-specific patterns: Morphologically rich languages (like Arabic) may show different optimal ranges than analytic languages.

Visualizations Index

| Visualization | Description |
|---|---|
| Tokenizer Compression | Compression ratios by vocabulary size |
| Tokenizer Fertility | Average token length by vocabulary |
| Tokenizer OOV | Unknown token rates |
| Tokenizer Total Tokens | Total tokens by vocabulary |
| N-gram Perplexity | Perplexity by n-gram size |
| N-gram Entropy | Entropy by n-gram size |
| N-gram Coverage | Top pattern coverage |
| N-gram Unique | Unique n-gram counts |
| Markov Entropy | Entropy by context size |
| Markov Branching | Branching factor by context |
| Markov Contexts | Unique context counts |
| Zipf's Law | Frequency-rank distribution with fit |
| Vocab Frequency | Word frequency distribution |
| Top 20 Words | Most frequent words |
| Vocab Coverage | Cumulative coverage curve |
| Embedding Isotropy | Vector space uniformity |
| Embedding Norms | Vector magnitude distribution |
| Embedding Similarity | Word similarity heatmap |
| Nearest Neighbors | Similar words for key terms |
| t-SNE Words | 2D word embedding visualization |
| t-SNE Sentences | 2D sentence embedding visualization |
| Position Encoding | Encoding method comparison |
| Model Sizes | Storage requirements |
| Performance Dashboard | Comprehensive performance overview |

About This Project

Data Source

Models trained on wikipedia-monthly - a monthly snapshot of Wikipedia articles across 300+ languages.

Project

A project by Wikilangs - Open-source NLP models for every Wikipedia language.

Maintainer

Citation

If you use these models in your research, please cite:

@misc{wikilangs2025,
  author = {Kamali, Omar},
  title = {Wikilangs: Open NLP Models for Wikipedia Languages},
  year = {2025},
  doi = {10.5281/zenodo.18073153},
  publisher = {Zenodo},
  url = {https://huggingface.co/wikilangs},
  institution = {Omneity Labs}
}

License

MIT License - Free for academic and commercial use.

Links

- 🌐 Website: wikilangs.org

- 🤗 Models: huggingface.co/wikilangs

- 📊 Data: wikipedia-monthly

- 👤 Author: Omar Kamali

- 🤝 Sponsor: Featherless AI

Generated by Wikilangs Models Pipeline

Report Date: 2026-01-04 01:24:53