Upload all models and assets for cdo (20251001)

- README.md +254 -131

- models/embeddings/monolingual/cdo_128d.bin +2 -2

- models/embeddings/monolingual/cdo_128d_metadata.json +5 -3

- models/embeddings/monolingual/cdo_32d.bin +2 -2

- models/embeddings/monolingual/cdo_32d_metadata.json +5 -3

- models/embeddings/monolingual/cdo_64d.bin +2 -2

- models/embeddings/monolingual/cdo_64d_metadata.json +5 -3

- models/subword_markov/cdo_markov_ctx1_subword.parquet +2 -2

- models/subword_markov/cdo_markov_ctx1_subword_metadata.json +2 -2

- models/subword_markov/cdo_markov_ctx2_subword.parquet +2 -2

- models/subword_markov/cdo_markov_ctx2_subword_metadata.json +2 -2

- models/subword_markov/cdo_markov_ctx3_subword.parquet +2 -2

- models/subword_markov/cdo_markov_ctx3_subword_metadata.json +2 -2

- models/subword_markov/cdo_markov_ctx4_subword.parquet +2 -2

- models/subword_markov/cdo_markov_ctx4_subword_metadata.json +2 -2

- models/subword_ngram/cdo_2gram_subword.parquet +2 -2

- models/subword_ngram/cdo_2gram_subword_metadata.json +2 -2

- models/subword_ngram/cdo_3gram_subword.parquet +2 -2

- models/subword_ngram/cdo_3gram_subword_metadata.json +2 -2

- models/subword_ngram/cdo_4gram_subword.parquet +2 -2

- models/subword_ngram/cdo_4gram_subword_metadata.json +2 -2

- models/tokenizer/cdo_tokenizer_32k.model +2 -2

- models/tokenizer/cdo_tokenizer_32k.vocab +0 -0

- models/tokenizer/cdo_tokenizer_64k.model +2 -2

- models/tokenizer/cdo_tokenizer_64k.vocab +0 -0

- models/vocabulary/cdo_vocabulary.parquet +2 -2

- models/vocabulary/cdo_vocabulary_metadata.json +10 -9

- models/word_markov/cdo_markov_ctx1_word.parquet +2 -2

- models/word_markov/cdo_markov_ctx1_word_metadata.json +2 -2

- models/word_markov/cdo_markov_ctx2_word.parquet +2 -2

- models/word_markov/cdo_markov_ctx2_word_metadata.json +2 -2

- models/word_markov/cdo_markov_ctx3_word.parquet +2 -2

- models/word_markov/cdo_markov_ctx3_word_metadata.json +2 -2

- models/word_markov/cdo_markov_ctx4_word.parquet +2 -2

- models/word_markov/cdo_markov_ctx4_word_metadata.json +2 -2

- models/word_ngram/cdo_2gram_word.parquet +2 -2

- models/word_ngram/cdo_2gram_word_metadata.json +2 -2

- models/word_ngram/cdo_3gram_word.parquet +2 -2

- models/word_ngram/cdo_3gram_word_metadata.json +2 -2

- models/word_ngram/cdo_4gram_word.parquet +2 -2

- models/word_ngram/cdo_4gram_word_metadata.json +2 -2

- visualizations/embedding_isotropy.png +0 -0

- visualizations/embedding_norms.png +0 -0

- visualizations/embedding_similarity.png +2 -2

- visualizations/markov_branching.png +0 -0

- visualizations/markov_contexts.png +0 -0

- visualizations/markov_entropy.png +0 -0

- visualizations/model_sizes.png +0 -0

- visualizations/nearest_neighbors.png +0 -0

- visualizations/ngram_coverage.png +0 -0

README.md

CHANGED

|

@@ -23,14 +23,14 @@ dataset_info:

|

|

| 23 |

metrics:

|

| 24 |

- name: best_compression_ratio

|

| 25 |

type: compression

|

| 26 |

-

value: 2.

|

| 27 |

- name: best_isotropy

|

| 28 |

type: isotropy

|

| 29 |

-

value: 0.

|

| 30 |

- name: vocabulary_size

|

| 31 |

type: vocab

|

| 32 |

-

value:

|

| 33 |

-

generated:

|

| 34 |

---

|

| 35 |

|

| 36 |

# CDO - Wikilangs Models

|

|

@@ -44,12 +44,13 @@ We analyze tokenizers, n-gram models, Markov chains, vocabulary statistics, and

|

|

| 44 |

### Models & Assets

|

| 45 |

|

| 46 |

- Tokenizers (8k, 16k, 32k, 64k)

|

| 47 |

-

- N-gram models (2, 3, 4-gram)

|

| 48 |

-

- Markov chains (context of 1, 2, 3 and

|

| 49 |

- Subword N-gram and Markov chains

|

| 50 |

-

- Embeddings in various sizes and dimensions

|

| 51 |

- Language Vocabulary

|

| 52 |

- Language Statistics

|

|

|

|

| 53 |

|

| 54 |

|

| 55 |

### Analysis and Evaluation

|

|

@@ -59,7 +60,8 @@ We analyze tokenizers, n-gram models, Markov chains, vocabulary statistics, and

|

|

| 59 |

- [3. Markov Chain Evaluation](#3-markov-chain-evaluation)

|

| 60 |

- [4. Vocabulary Analysis](#4-vocabulary-analysis)

|

| 61 |

- [5. Word Embeddings Evaluation](#5-word-embeddings-evaluation)

|

| 62 |

-

- [6.

|

|

|

|

| 63 |

- [Metrics Glossary](#appendix-metrics-glossary--interpretation-guide)

|

| 64 |

- [Visualizations Index](#visualizations-index)

|

| 65 |

|

|

@@ -68,50 +70,49 @@ We analyze tokenizers, n-gram models, Markov chains, vocabulary statistics, and

|

|

| 68 |

|

| 69 |

|

| 70 |

|

| 71 |

### Results

|

| 72 |

|

| 73 |

| Vocab Size | Compression | Avg Token Len | UNK Rate | Total Tokens |

|

| 74 |

|------------|-------------|---------------|----------|--------------|

|

| 75 |

-

| **32k** | 2.

|

| 76 |

-

| **64k** | 2.

|

| 77 |

|

| 78 |

### Tokenization Examples

|

| 79 |

|

| 80 |

Below are sample sentences tokenized with each vocabulary size:

|

| 81 |

|

| 82 |

-

**Sample 1:** `

|

| 83 |

|

| 84 |

| Vocab | Tokens | Count |

|

| 85 |

|-------|--------|-------|

|

| 86 |

-

| 32k | `▁

|

| 87 |

-

| 64k | `▁

|

| 88 |

-

|

| 89 |

-

**Sample 2:** `Duâi dâi

|

| 90 |

|

| 91 |

-

|

| 92 |

-

|

| 93 |

-

Guó-sié

|

| 94 |

-

|

| 95 |

-

|

| 96 |

-

分類:1170 nièng-dâi`

|

| 97 |

|

| 98 |

| Vocab | Tokens | Count |

|

| 99 |

|-------|--------|-------|

|

| 100 |

-

| 32k | `▁

|

| 101 |

-

| 64k | `▁

|

| 102 |

|

| 103 |

-

**Sample 3:** `

|

| 104 |

|

| 105 |

| Vocab | Tokens | Count |

|

| 106 |

|-------|--------|-------|

|

| 107 |

-

| 32k | `▁

|

| 108 |

-

| 64k | `▁

|

| 109 |

|

| 110 |

|

| 111 |

### Key Findings

|

| 112 |

|

| 113 |

-

- **Best Compression:** 64k achieves 2.

|

| 114 |

-

- **Lowest UNK Rate:** 32k with 0.

|

| 115 |

- **Trade-off:** Larger vocabularies improve compression but increase model size

|

| 116 |

- **Recommendation:** 32k vocabulary provides optimal balance for production use

|

| 117 |

|

|

@@ -120,57 +121,89 @@ Guó-sié

|

|

| 120 |

|

| 121 |

|

| 122 |

|

|

|

|

|

|

|

| 123 |

|

| 124 |

|

| 125 |

### Results

|

| 126 |

|

| 127 |

-

| N-gram | Perplexity | Entropy | Unique N-grams | Top-100 Coverage | Top-1000 Coverage |

|

| 128 |

-

|--------|------------|---------|----------------|------------------|-------------------|

|

| 129 |

-

| **2-gram** |

|

| 130 |

-

| **2-gram** |

|

| 131 |

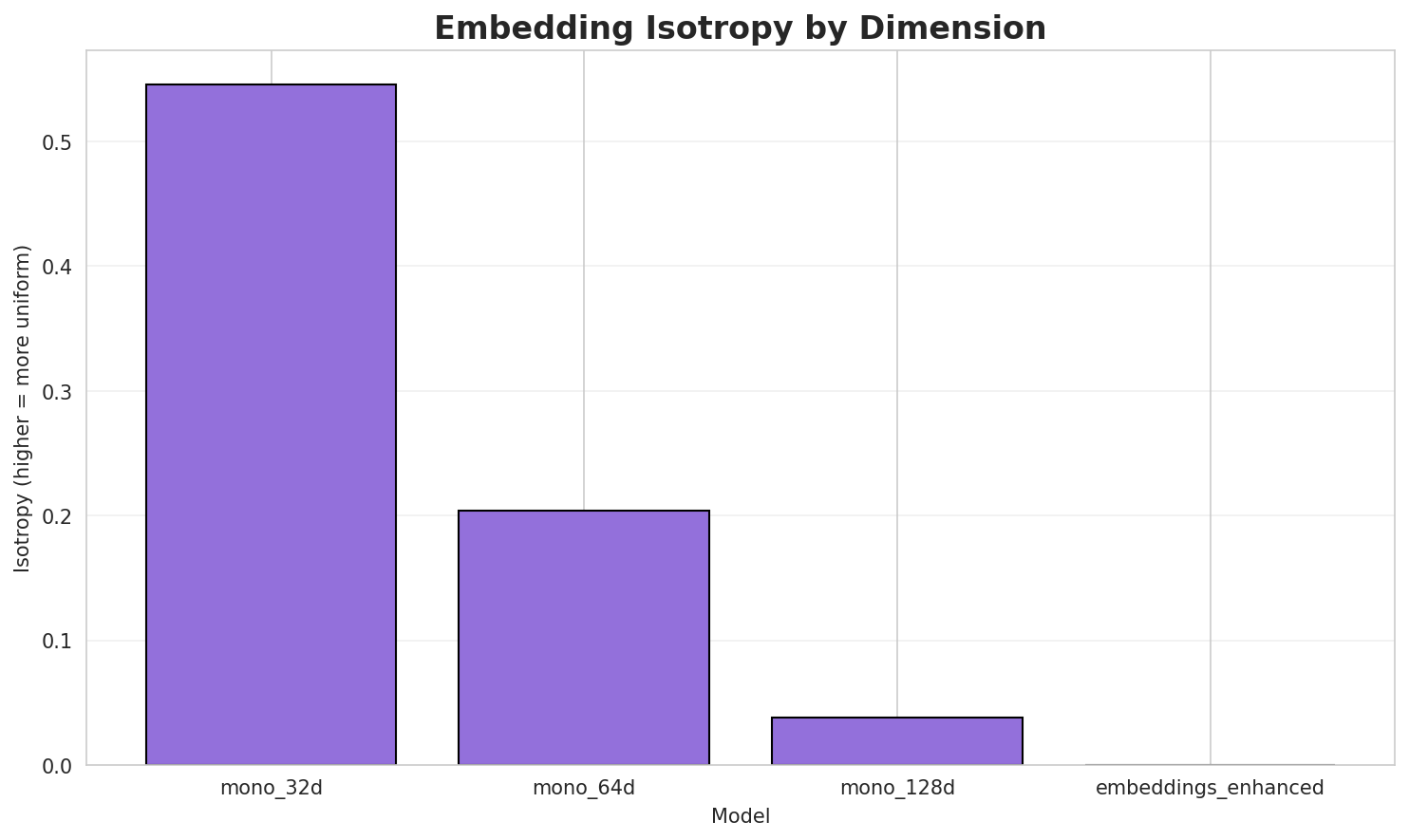

-

| **3-gram** |

|

| 132 |

-

| **3-gram** |

|

| 133 |

-

| **4-gram** |

|

| 134 |

-

| **4-gram** |

|

| 135 |

|

| 136 |

### Top 5 N-grams by Size

|

| 137 |

|

| 138 |

-

**2-grams:**

|

| 139 |

|

| 140 |

| Rank | N-gram | Count |

|

| 141 |

|------|--------|-------|

|

| 142 |

-

| 1 | `

|

| 143 |

-

| 2 | `

|

| 144 |

-

| 3 | `

|

| 145 |

-

| 4 | `

|

| 146 |

-

| 5 | `

|

| 147 |

|

| 148 |

-

**

|

| 149 |

|

| 150 |

| Rank | N-gram | Count |

|

| 151 |

|------|--------|-------|

|

| 152 |

-

| 1 | `

|

| 153 |

-

| 2 | `

|

| 154 |

-

| 3 | `

|

| 155 |

-

| 4 | `

|

| 156 |

-

| 5 | `

|

| 157 |

|

| 158 |

-

**

|

| 159 |

|

| 160 |

| Rank | N-gram | Count |

|

| 161 |

|------|--------|-------|

|

| 162 |

-

| 1 | `

|

| 163 |

-

| 2 | `

|

| 164 |

-

| 3 | `

|

| 165 |

-

| 4 | `

|

| 166 |

-

| 5 | `

|

| 167 |

|

| 168 |

|

| 169 |

### Key Findings

|

| 170 |

|

| 171 |

-

- **Best Perplexity:** 2-gram with

|

| 172 |

- **Entropy Trend:** Decreases with larger n-grams (more predictable)

|

| 173 |

-

- **Coverage:** Top-1000 patterns cover ~

|

| 174 |

- **Recommendation:** 4-gram or 5-gram for best predictive performance

|

| 175 |

|

| 176 |

---

|

|

@@ -178,55 +211,86 @@ Guó-sié

|

|

| 178 |

|

| 179 |

|

| 180 |

|

|

|

|

|

|

|

| 181 |

|

| 182 |

|

| 183 |

### Results

|

| 184 |

|

| 185 |

-

| Context | Avg Entropy | Perplexity | Branching Factor | Unique Contexts | Predictability |

|

| 186 |

-

|---------|-------------|------------|------------------|-----------------|----------------|

|

| 187 |

-

| **1** | 0.

|

| 188 |

-

| **1** | 0.

|

| 189 |

-

| **2** | 0.

|

| 190 |

-

| **2** | 0.

|

| 191 |

-

| **3** | 0.

|

| 192 |

-

| **3** | 0.

|

| 193 |

-

| **4** | 0.

|

| 194 |

-

| **4** | 0.

|

| 195 |

|

| 196 |

-

### Generated Text Samples

|

| 197 |

|

| 198 |

-

Below are text samples generated from each Markov chain model:

|

| 199 |

|

| 200 |

**Context Size 1:**

|

| 201 |

|

| 202 |

-

1. `

|

| 203 |

-

2. `

|

| 204 |

-

3. `

|

| 205 |

|

| 206 |

**Context Size 2:**

|

| 207 |

|

| 208 |

-

1. `

|

| 209 |

-

2. `

|

| 210 |

-

3. `

|

| 211 |

|

| 212 |

**Context Size 3:**

|

| 213 |

|

| 214 |

-

1. `

|

| 215 |

-

2. `

|

| 216 |

-

3. `

|

| 217 |

|

| 218 |

**Context Size 4:**

|

| 219 |

|

| 220 |

-

1. `

|

| 221 |

-

2. `

|

| 222 |

-

3. `

|

| 223 |

|

| 224 |

|

| 225 |

### Key Findings

|

| 226 |

|

| 227 |

-

- **Best Predictability:** Context-4 with

|

| 228 |

- **Branching Factor:** Decreases with context size (more deterministic)

|

| 229 |

-

- **Memory Trade-off:** Larger contexts require more storage (

|

| 230 |

- **Recommendation:** Context-3 or Context-4 for text generation

|

| 231 |

|

| 232 |

---

|

|

@@ -242,64 +306,64 @@ Below are text samples generated from each Markov chain model:

|

|

| 242 |

|

| 243 |

| Metric | Value |

|

| 244 |

|--------|-------|

|

| 245 |

-

| Vocabulary Size |

|

| 246 |

-

| Total Tokens |

|

| 247 |

-

| Mean Frequency |

|

| 248 |

| Median Frequency | 3 |

|

| 249 |

-

| Frequency Std Dev |

|

| 250 |

|

| 251 |

### Most Common Words

|

| 252 |

|

| 253 |

| Rank | Word | Frequency |

|

| 254 |

|------|------|-----------|

|

| 255 |

-

| 1 | gì |

|

| 256 |

-

| 2 |

|

| 257 |

-

| 3 |

|

| 258 |

-

| 4 |

|

| 259 |

-

| 5 |

|

| 260 |

-

| 6 |

|

| 261 |

-

| 7 |

|

| 262 |

-

| 8 |

|

| 263 |

-

| 9 |

|

| 264 |

-

| 10 |

|

| 265 |

|

| 266 |

### Least Common Words (from vocabulary)

|

| 267 |

|

| 268 |

| Rank | Word | Frequency |

|

| 269 |

|------|------|-----------|

|

| 270 |

-

| 1 |

|

| 271 |

-

| 2 |

|

| 272 |

-

| 3 |

|

| 273 |

-

| 4 |

|

| 274 |

-

| 5 |

|

| 275 |

-

| 6 |

|

| 276 |

-

| 7 |

|

| 277 |

-

| 8 |

|

| 278 |

-

| 9 |

|

| 279 |

-

| 10 |

|

| 280 |

|

| 281 |

### Zipf's Law Analysis

|

| 282 |

|

| 283 |

| Metric | Value |

|

| 284 |

|--------|-------|

|

| 285 |

| Zipf Coefficient | 1.3995 |

|

| 286 |

-

| R² (Goodness of Fit) | 0.

|

| 287 |

| Adherence Quality | **excellent** |

|

| 288 |

|

| 289 |

### Coverage Analysis

|

| 290 |

|

| 291 |

| Top N Words | Coverage |

|

| 292 |

|-------------|----------|

|

| 293 |

-

| Top 100 |

|

| 294 |

-

| Top 1,000 |

|

| 295 |

-

| Top 5,000 |

|

| 296 |

-

| Top 10,000 |

|

| 297 |

|

| 298 |

### Key Findings

|

| 299 |

|

| 300 |

-

- **Zipf Compliance:** R²=0.

|

| 301 |

-

- **High Frequency Dominance:** Top 100 words cover

|

| 302 |

-

- **Long Tail:**

|

| 303 |

|

| 304 |

---

|

| 305 |

## 5. Word Embeddings Evaluation

|

|

@@ -312,24 +376,80 @@ Below are text samples generated from each Markov chain model:

|

|

| 312 |

|

| 313 |

|

| 314 |

|

| 315 |

-

### Model Comparison

|

| 316 |

|

| 317 |

-

|

| 318 |

-

|

| 319 |

-

|

| 320 |

-

|

| 321 |

-

|

| 322 |

-

|

| 323 |

|

| 324 |

### Key Findings

|

| 325 |

|

| 326 |

-

- **Best Isotropy:** mono_32d with 0.

|

| 327 |

-

- **

|

| 328 |

-

- **

|

| 329 |

-

- **Recommendation:**

|

| 330 |

|

| 331 |

---

|

| 332 |

-

##

|

| 333 |

|

| 334 |

|

| 335 |

|

|

@@ -337,11 +457,12 @@ Below are text samples generated from each Markov chain model:

|

|

| 337 |

|

| 338 |

| Component | Recommended | Rationale |

|

| 339 |

|-----------|-------------|-----------|

|

| 340 |

-

| Tokenizer | **

|

| 341 |

-

| N-gram | **

|

| 342 |

-

| Markov | **Context-4** | Highest predictability (

|

| 343 |

| Embeddings | **100d** | Balanced semantic capture and isotropy |

|

| 344 |

|

|

|

|

| 345 |

---

|

| 346 |

## Appendix: Metrics Glossary & Interpretation Guide

|

| 347 |

|

|

@@ -531,7 +652,8 @@ If you use these models in your research, please cite:

|

|

| 531 |

author = {Kamali, Omar},

|

| 532 |

title = {Wikilangs: Open NLP Models for Wikipedia Languages},

|

| 533 |

year = {2025},

|

| 534 |

-

|

|

|

|

| 535 |

url = {https://huggingface.co/wikilangs}

|

| 536 |

institution = {Omneity Labs}

|

| 537 |

}

|

|

@@ -547,7 +669,8 @@ MIT License - Free for academic and commercial use.

|

|

| 547 |

- 🤗 Models: [huggingface.co/wikilangs](https://huggingface.co/wikilangs)

|

| 548 |

- 📊 Data: [wikipedia-monthly](https://huggingface.co/datasets/omarkamali/wikipedia-monthly)

|

| 549 |

- 👤 Author: [Omar Kamali](https://huggingface.co/omarkamali)

|

|

|

|

| 550 |

---

|

| 551 |

*Generated by Wikilangs Models Pipeline*

|

| 552 |

|

| 553 |

-

*Report Date:

|

|

|

|

| 23 |

metrics:

|

| 24 |

- name: best_compression_ratio

|

| 25 |

type: compression

|

| 26 |

+

value: 2.892

|

| 27 |

- name: best_isotropy

|

| 28 |

type: isotropy

|

| 29 |

+

value: 0.5551

|

| 30 |

- name: vocabulary_size

|

| 31 |

type: vocab

|

| 32 |

+

value: 0

|

| 33 |

+

generated: 2026-01-03

|

| 34 |

---

|

| 35 |

|

| 36 |

# CDO - Wikilangs Models

|

|

|

|

| 44 |

### Models & Assets

|

| 45 |

|

| 46 |

- Tokenizers (8k, 16k, 32k, 64k)

|

| 47 |

+

- N-gram models (2, 3, 4, 5-gram)

|

| 48 |

+

- Markov chains (context of 1, 2, 3, 4 and 5)

|

| 49 |

- Subword N-gram and Markov chains

|

| 50 |

+

- Embeddings in various sizes and dimensions (aligned and unaligned)

|

| 51 |

- Language Vocabulary

|

| 52 |

- Language Statistics

|

| 53 |

+

|

| 54 |

|

| 55 |

|

| 56 |

### Analysis and Evaluation

|

|

|

|

| 60 |

- [3. Markov Chain Evaluation](#3-markov-chain-evaluation)

|

| 61 |

- [4. Vocabulary Analysis](#4-vocabulary-analysis)

|

| 62 |

- [5. Word Embeddings Evaluation](#5-word-embeddings-evaluation)

|

| 63 |

+

- [6. Morphological Analysis (Experimental)](#6-morphological-analysis)

|

| 64 |

+

- [7. Summary & Recommendations](#7-summary--recommendations)

|

| 65 |

- [Metrics Glossary](#appendix-metrics-glossary--interpretation-guide)

|

| 66 |

- [Visualizations Index](#visualizations-index)

|

| 67 |

|

|

|

|

| 70 |

|

| 71 |

|

| 72 |

|

| 73 |

+

|

| 74 |

+

|

| 75 |

+

|

| 76 |

+

|

| 77 |

+

|

| 78 |

+

|

| 79 |

### Results

|

| 80 |

|

| 81 |

| Vocab Size | Compression | Avg Token Len | UNK Rate | Total Tokens |

|

| 82 |

|------------|-------------|---------------|----------|--------------|

|

| 83 |

+

| **32k** | 2.757x | 2.76 | 0.1034% | 257,325 |

|

| 84 |

+

| **64k** | 2.892x 🏆 | 2.90 | 0.1084% | 245,327 |
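
The README does not define the Compression column. A minimal sketch, assuming it means input characters per emitted token (the function names below are hypothetical, not part of the Wikilangs pipeline): under that reading, multiplying each row's ratio by its token count should recover roughly the same corpus size for both vocabularies.

```python
def compression_ratio(text: str, tokens: list[str]) -> float:
    """Characters of input per emitted token (assumed definition)."""
    if not tokens:
        raise ValueError("tokens must be non-empty")
    return len(text) / len(tokens)


def implied_characters(ratio: float, total_tokens: int) -> float:
    """Corpus size in characters implied by a ratio and a token count."""
    return ratio * total_tokens


# Values copied from the Results table above.
chars_32k = implied_characters(2.757, 257_325)
chars_64k = implied_characters(2.892, 245_327)

# Relative gap between the two implied corpus sizes.
relative_gap = abs(chars_32k - chars_64k) / chars_32k
```

Both rows imply a corpus of roughly 709 thousand characters, which is consistent with the assumed definition but does not prove it.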

|

| 85 |

|

| 86 |

### Tokenization Examples

|

| 87 |

|

| 88 |

Below are sample sentences tokenized with each vocabulary size:

|

| 89 |

|

| 90 |

+

**Sample 1:** `Bashkortostan sê Ngò̤-lò̤-sṳ̆ gì siŏh ciáh gê̤ṳng-huò-guók. gì gê̤ṳng-huò-guók`

|

| 91 |

|

| 92 |

| Vocab | Tokens | Count |

|

| 93 |

|-------|--------|-------|

|

| 94 |

+

| 32k | `▁b ash k ort os tan ▁sê ▁ngò ̤- lò ... (+17 more)` | 27 |

|

| 95 |

+

| 64k | `▁bash k ortos tan ▁sê ▁ngò ̤- lò ̤- sṳ̆ ... (+15 more)` | 25 |

|

| 96 |

|

| 97 |

+

**Sample 2:** `Montague Gông (Ĭng-ngṳ̄: Montague County) sê Mī-guók Texas gì siŏh ciáh gông. gì...`

|

| 98 |

|

| 99 |

| Vocab | Tokens | Count |

|

| 100 |

|-------|--------|-------|

|

| 101 |

+

| 32k | `▁mont a gue ▁gông ▁( ĭng - ngṳ̄ : ▁mont ... (+16 more)` | 26 |

|

| 102 |

+

| 64k | `▁montague ▁gông ▁( ĭng - ngṳ̄ : ▁montague ▁county ) ... (+12 more)` | 22 |

|

| 103 |

|

| 104 |

+

**Sample 3:** `Ochiltree Gông (Ĭng-ngṳ̄: Ochiltree County) sê Mī-guók Texas gì siŏh ciáh gông. ...`

|

| 105 |

|

| 106 |

| Vocab | Tokens | Count |

|

| 107 |

|-------|--------|-------|

|

| 108 |

+

| 32k | `▁o chi l t re e ▁gông ▁( ĭng - ... (+22 more)` | 32 |

|

| 109 |

+

| 64k | `▁ochiltree ▁gông ▁( ĭng - ngṳ̄ : ▁ochiltree ▁county ) ... (+12 more)` | 22 |

|

| 110 |

|

| 111 |

|

| 112 |

### Key Findings

|

| 113 |

|

| 114 |

+

- **Best Compression:** 64k achieves 2.892x compression

|

| 115 |

+

- **Lowest UNK Rate:** 32k with 0.1034% unknown tokens

|

| 116 |

- **Trade-off:** Larger vocabularies improve compression but increase model size

|

| 117 |

- **Recommendation:** 32k vocabulary provides optimal balance for production use

|

| 118 |

|

|

|

|

| 121 |

|

| 122 |

|

| 123 |

|

| 124 |

+

|

| 125 |

+

|

| 126 |

|

| 127 |

|

| 128 |

### Results

|

| 129 |

|

| 130 |

+

| N-gram | Variant | Perplexity | Entropy | Unique N-grams | Top-100 Coverage | Top-1000 Coverage |

|

| 131 |

+

|--------|---------|------------|---------|----------------|------------------|-------------------|

|

| 132 |

+

| **2-gram** | Word | 3,105 | 11.60 | 11,679 | 27.7% | 59.2% |

|

| 133 |

+

| **2-gram** | Subword | 342 🏆 | 8.42 | 6,912 | 63.5% | 95.8% |

|

| 134 |

+

| **3-gram** | Word | 4,698 | 12.20 | 17,954 | 23.8% | 52.2% |

|

| 135 |

+

| **3-gram** | Subword | 1,659 | 10.70 | 21,000 | 36.1% | 75.8% |

|

| 136 |

+

| **4-gram** | Word | 8,483 | 13.05 | 30,938 | 18.6% | 45.4% |

|

| 137 |

+

| **4-gram** | Subword | 5,744 | 12.49 | 69,193 | 23.7% | 55.8% |
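
The Perplexity and Entropy columns in the table above appear to be linked by the standard identity perplexity = 2^entropy, with entropy in bits. The sketch below checks that reading against the reported rows. It is a consistency check under that assumption, not the pipeline's own code, and small gaps are expected because the entropies are rounded to two decimals.

```python
def perplexity_from_entropy(entropy_bits: float) -> float:
    """Perplexity implied by an entropy given in bits."""
    return 2.0 ** entropy_bits


# (entropy, reported perplexity) pairs copied from the table above.
reported = {
    "2-gram word": (11.60, 3105),
    "2-gram subword": (8.42, 342),
    "3-gram word": (12.20, 4698),
    "3-gram subword": (10.70, 1659),
    "4-gram word": (13.05, 8483),
    "4-gram subword": (12.49, 5744),
}

# Relative difference between the implied and the reported perplexity.
relative_errors = {
    name: abs(perplexity_from_entropy(h) - ppl) / ppl
    for name, (h, ppl) in reported.items()
}
```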

|

| 138 |

|

| 139 |

### Top 5 N-grams by Size

|

| 140 |

|

| 141 |

+

**2-grams (Word):**

|

| 142 |

+

|

| 143 |

+

| Rank | N-gram | Count |

|

| 144 |

+

|------|--------|-------|

|

| 145 |

+

| 1 | `gì siŏh` | 6,258 |

|

| 146 |

+

| 2 | `siŏh ciáh` | 6,232 |

|

| 147 |

+

| 3 | `mī guók` | 3,385 |

|

| 148 |

+

| 4 | `sê mī` | 3,191 |

|

| 149 |

+

| 5 | `gì gông` | 3,000 |

|

| 150 |

+

|

| 151 |

+

**3-grams (Word):**

|

| 152 |

+

|

| 153 |

+

| Rank | N-gram | Count |

|

| 154 |

+

|------|--------|-------|

|

| 155 |

+

| 1 | `gì siŏh ciáh` | 5,413 |

|

| 156 |

+

| 2 | `sê mī guók` | 3,173 |

|

| 157 |

+

| 3 | `siŏh ciáh gông` | 3,000 |

|

| 158 |

+

| 4 | `ciáh gông gì` | 2,557 |

|

| 159 |

+

| 5 | `gông gì gông` | 2,557 |

|

| 160 |

+

|

| 161 |

+

**4-grams (Word):**

|

| 162 |

|

| 163 |

| Rank | N-gram | Count |

|

| 164 |

|------|--------|-------|

|

| 165 |

+

| 1 | `gì siŏh ciáh gông` | 3,000 |

|

| 166 |

+

| 2 | `ciáh gông gì gông` | 2,557 |

|

| 167 |

+

| 3 | `siŏh ciáh gông gì` | 2,557 |

|

| 168 |

+

| 4 | `county sê mī guók` | 1,971 |

|

| 169 |

+

| 5 | `gông sê mī guók` | 1,029 |

|

| 170 |

|

| 171 |

+

**2-grams (Subword):**

|

| 172 |

|

| 173 |

| Rank | N-gram | Count |

|

| 174 |

|------|--------|-------|

|

| 175 |

+

| 1 | `n g` | 146,797 |

|

| 176 |

+

| 2 | `_ g` | 59,970 |

|

| 177 |

+

| 3 | `g -` | 55,946 |

|

| 178 |

+

| 4 | `g _` | 55,139 |

|

| 179 |

+

| 5 | `_ s` | 41,311 |

|

| 180 |

|

| 181 |

+

**3-grams (Subword):**

|

| 182 |

|

| 183 |

| Rank | N-gram | Count |

|

| 184 |

|------|--------|-------|

|

| 185 |

+

| 1 | `n g -` | 55,920 |

|

| 186 |

+

| 2 | `n g _` | 55,025 |

|

| 187 |

+

| 3 | `_ g ì` | 23,090 |

|

| 188 |

+

| 4 | `g ì _` | 22,312 |

|

| 189 |

+

| 5 | `_ s i` | 14,134 |

|

| 190 |

+

|

| 191 |

+

**4-grams (Subword):**

|

| 192 |

+

|

| 193 |

+

| Rank | N-gram | Count |

|

| 194 |

+

|------|--------|-------|

|

| 195 |

+

| 1 | `_ g ì _` | 22,161 |

|

| 196 |

+

| 2 | `_ s ê _` | 13,231 |

|

| 197 |

+

| 3 | `n g _ g` | 11,336 |

|

| 198 |

+

| 4 | `i ŏ h _` | 10,632 |

|

| 199 |

+

| 5 | `_ s i ŏ` | 9,391 |

|

| 200 |

|

| 201 |

|

| 202 |

### Key Findings

|

| 203 |

|

| 204 |

+

- **Best Perplexity:** 2-gram (subword) with 342

|

| 205 |

- **Entropy Trend:** Decreases with larger n-grams (more predictable)

|

| 206 |

+

- **Coverage:** Top-1000 patterns cover ~56% of corpus

|

| 207 |

- **Recommendation:** 4-gram or 5-gram for best predictive performance

|

| 208 |

|

| 209 |

---

|

|

|

|

| 211 |

|

| 212 |

|

| 213 |

|

| 214 |

+

|

| 215 |

+

|

| 216 |

|

| 217 |

|

| 218 |

### Results

|

| 219 |

|

| 220 |

+

| Context | Variant | Avg Entropy | Perplexity | Branching Factor | Unique Contexts | Predictability |

|

| 221 |

+

|---------|---------|-------------|------------|------------------|-----------------|----------------|

|

| 222 |

+

| **1** | Word | 0.4882 | 1.403 | 4.73 | 29,670 | 51.2% |

|

| 223 |

+

| **1** | Subword | 0.3461 | 1.271 | 2.92 | 25,622 | 65.4% |

|

| 224 |

+

| **2** | Word | 0.3187 | 1.247 | 1.80 | 139,308 | 68.1% |

|

| 225 |

+

| **2** | Subword | 0.2753 | 1.210 | 1.79 | 74,780 | 72.5% |

|

| 226 |

+

| **3** | Word | 0.1201 | 1.087 | 1.23 | 249,012 | 88.0% |

|

| 227 |

+

| **3** | Subword | 0.2343 | 1.176 | 1.69 | 133,665 | 76.6% |

|

| 228 |

+

| **4** | Word | 0.0526 🏆 | 1.037 | 1.09 | 301,670 | 94.7% |

|

| 229 |

+

| **4** | Subword | 0.2290 | 1.172 | 1.53 | 225,577 | 77.1% |

|

| 230 |

|

| 231 |

+

### Generated Text Samples (Word-based)

|

| 232 |

|

| 233 |

+

Below are text samples generated from each word-based Markov chain model:

|

| 234 |

|

| 235 |

**Context Size 1:**

|

| 236 |

|

| 237 |

+

1. `gì hiŏng 蘆洋鄉 bìng dēng có̤i kăi sṳ̄ gó nâ háng nè̤ng gó̤ lō̤ 法老 gái`

|

| 238 |

+

2. `sê dṳ̆ng huà ìng chê 邢臺市 lòng cĭ 退之 sê ĕng diŏh adelaide ô 2 nguŏk`

|

| 239 |

+

3. `siŏh bĭk cék éng sáuk īng lĭk â̤ dé̤ṳng buŏng nàng áng cê sê turkic ngṳ̄`

|

| 240 |

|

| 241 |

**Context Size 2:**

|

| 242 |

|

| 243 |

+

1. `gì siŏh cṳ̄ng lòi ĭng ôi sĭng ô 79 ciáh mŭk sṳ̆ 佳木斯 sê dṳ̆ng guók sĭng`

|

| 244 |

+

2. `siŏh ciáh chê hăk kṳ̆ 市轄區 lĭk sṳ̄ diē sié sê siŏh cṳ̄ng ciŏng muòng dò̤ lā̤`

|

| 245 |

+

3. `mī guók montana gì siŏh ciáh gông gì gông`

|

| 246 |

|

| 247 |

**Context Size 3:**

|

| 248 |

|

| 249 |

+

1. `gì siŏh ciáh duâi kṳ̆ gì duâi kṳ̆`

|

| 250 |

+

2. `sê mī guók kansas gì siŏh ciáh cê mō̤ diŏh eta gì piăng âu gâe̤ng sigma gì sèng`

|

| 251 |

+

3. `siŏh ciáh gông gì gông`

|

| 252 |

|

| 253 |

**Context Size 4:**

|

| 254 |

|

| 255 |

+

1. `gì siŏh ciáh gông gì gông`

|

| 256 |

+

2. `siŏh ciáh gông gì gông`

|

| 257 |

+

3. `county sê mī guók nebraska gì siŏh ciáh gông gì gông`
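
The samples above can be approximated with a plain word-level Markov chain. This is a minimal sketch, assuming each context of `context_size` tokens maps to the list of tokens observed after it. `build_chain`, `generate`, and `branching_factor` are illustrative names, and the toy corpus is a single phrase taken from the samples, not the real training data.

```python
import random
from collections import defaultdict


def build_chain(tokens, context_size):
    """Map each context tuple to the tokens that followed it."""
    chain = defaultdict(list)
    for i in range(len(tokens) - context_size):
        context = tuple(tokens[i:i + context_size])
        chain[context].append(tokens[i + context_size])
    return chain


def generate(chain, seed, max_tokens, rng):
    """Extend the seed by sampling successors until none are known."""
    out = list(seed)
    k = len(seed)
    while len(out) < max_tokens:
        successors = chain.get(tuple(out[-k:]))
        if not successors:
            break
        out.append(rng.choice(successors))
    return out


def branching_factor(chain):
    """Average number of distinct successors per context."""
    return sum(len(set(s)) for s in chain.values()) / len(chain)


toy_tokens = "gì siŏh ciáh gông gì gông".split()
chain_ctx1 = build_chain(toy_tokens, 1)
chain_ctx2 = build_chain(toy_tokens, 2)
sample = generate(chain_ctx2, ("gì", "siŏh"), 6, random.Random(0))
```

Even on this toy input the branching factor is lower for context size 2 than for context size 1, the same direction as the Branching Factor column in the Results table.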

|

| 258 |

+

|

| 259 |

+

|

| 260 |

+

### Generated Text Samples (Subword-based)

|

| 261 |

+

|

| 262 |

+

Below are text samples generated from each subword-based Markov chain model:

|

| 263 |

+

|

| 264 |

+

**Context Size 1:**

|

| 265 |

+

|

| 266 |

+

1. `_ônià_hô̤_sênty)_`

|

| 267 |

+

2. `gì_ciônièng,_sṳ̄:`

|

| 268 |

+

3. `ngăngôner_g-ccáh`

|

| 269 |

+

|

| 270 |

+

**Context Size 2:**

|

| 271 |

+

|

| 272 |

+

1. `nguô_ka_cĭ_gì_dâ̤_`

|

| 273 |

+

2. `_gì_sié-sê_hŏk-cê`

|

| 274 |

+

3. `g-hĭ_(獨聯體)_gông_n`

|

| 275 |

+

|

| 276 |

+

**Context Size 3:**

|

| 277 |

+

|

| 278 |

+

1. `ng-hèng_biéng,_mac`

|

| 279 |

+

2. `ng_adahoma_gì_(兩個聲`

|

| 280 |

+

3. `_gì_siōng-dĕ̤ng-ŭk_`

|

| 281 |

+

|

| 282 |

+

**Context Size 4:**

|

| 283 |

+

|

| 284 |

+

1. `_gì_«sṳ̀ng-kṳ̆_dĕk-bi`

|

| 285 |

+

2. `_sê_„發現更大的世界“)_có̤_c`

|

| 286 |

+

3. `ng_găk_chăng-muò_(𧋘`

|

| 287 |

|

| 288 |

|

| 289 |

### Key Findings

|

| 290 |

|

| 291 |

+

- **Best Predictability:** Context-4 (word) with 94.7% predictability

|

| 292 |

- **Branching Factor:** Decreases with context size (more deterministic)

|

| 293 |

+

- **Memory Trade-off:** Larger contexts require more storage (225,577 contexts)

|

| 294 |

- **Recommendation:** Context-3 or Context-4 for text generation

|

| 295 |

|

| 296 |

---

|

|

|

|

| 306 |

|

| 307 |

| Metric | Value |

|

| 308 |

|--------|-------|

|

| 309 |

+

| Vocabulary Size | 9,559 |

|

| 310 |

+

| Total Tokens | 467,385 |

|

| 311 |

+

| Mean Frequency | 48.89 |

|

| 312 |

| Median Frequency | 3 |

|

| 313 |

+

| Frequency Std Dev | 395.71 |
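
The Mean Frequency row is consistent with dividing Total Tokens by Vocabulary Size. A small sketch of that arithmetic follows, with a hypothetical `frequency_stats` helper showing how such statistics could be derived from a token list; the pipeline's actual code is not shown in the source.

```python
import statistics
from collections import Counter


def frequency_stats(tokens):
    """Basic frequency statistics over a list of word tokens."""
    counts = Counter(tokens)
    freqs = list(counts.values())
    return {
        "vocabulary_size": len(counts),
        "total_tokens": sum(freqs),
        "mean_frequency": sum(freqs) / len(freqs),
        "median_frequency": statistics.median(freqs),
    }


# Check against the values reported in the table above.
reported_mean = round(467_385 / 9_559, 2)

toy_stats = frequency_stats(["a", "a", "a", "b", "b", "c"])
```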

|

| 314 |

|

| 315 |

### Most Common Words

|

| 316 |

|

| 317 |

| Rank | Word | Frequency |

|

| 318 |

|------|------|-----------|

|

| 319 |

+

| 1 | gì | 23,295 |

|

| 320 |

+

| 2 | sê | 14,068 |

|

| 321 |

+

| 3 | siŏh | 9,247 |

|

| 322 |

+

| 4 | gông | 9,087 |

|

| 323 |

+

| 5 | guók | 8,549 |

|

| 324 |

+

| 6 | ciáh | 7,131 |

|

| 325 |

+

| 7 | nièng | 5,854 |

|

| 326 |

+

| 8 | ngṳ̄ | 5,277 |

|

| 327 |

+

| 9 | sié | 4,616 |

|

| 328 |

+

| 10 | gáu | 4,179 |

|

| 329 |

|

| 330 |

### Least Common Words (from vocabulary)

|

| 331 |

|

| 332 |

| Rank | Word | Frequency |

|

| 333 |

|------|------|-----------|

|

| 334 |

+

| 1 | woolridge | 2 |

|

| 335 |

+

| 2 | imperiyası | 2 |

|

| 336 |

+

| 3 | abş | 2 |

|

| 337 |

+

| 4 | çox | 2 |

|

| 338 |

+

| 5 | dünyada | 2 |

|

| 339 |

+

| 6 | bütün | 2 |

|

| 340 |

+

| 7 | 嘉祿 | 2 |

|

| 341 |

+

| 8 | 六一路 | 2 |

|

| 342 |

+

| 9 | 神壇樹 | 2 |

|

| 343 |

+

| 10 | 신단수 | 2 |

|

| 344 |

|

| 345 |

### Zipf's Law Analysis

|

| 346 |

|

| 347 |

| Metric | Value |

|

| 348 |

|--------|-------|

|

| 349 |

| Zipf Coefficient | 1.3995 |

|

| 350 |

+

| R² (Goodness of Fit) | 0.957431 |

|

| 351 |

| Adherence Quality | **excellent** |
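
A Zipf coefficient and R² like the ones above are commonly obtained by fitting a straight line to log-frequency against log-rank. The sketch below uses ordinary least squares in pure Python. It is an assumption that the pipeline fits this way, and `fit_zipf` is a hypothetical name.

```python
import math


def fit_zipf(frequencies):
    """Return (coefficient, r_squared) for log f = a - s * log r."""
    freqs = sorted(frequencies, reverse=True)
    xs = [math.log(rank) for rank in range(1, len(freqs) + 1)]
    ys = [math.log(f) for f in freqs]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ss_res = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    # The Zipf coefficient is the negated slope of the log-log line.
    return -slope, 1.0 - ss_res / ss_tot


# Synthetic frequencies that follow a power law with exponent 1.4 exactly.
ideal = [1000.0 / rank ** 1.4 for rank in range(1, 201)]
coefficient, r_squared = fit_zipf(ideal)
```

On real word counts the fit is never exact, so R² falls below 1, as with the 0.957431 reported above.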

|

| 352 |

|

| 353 |

### Coverage Analysis

|

| 354 |

|

| 355 |

| Top N Words | Coverage |

|

| 356 |

|-------------|----------|

|

| 357 |

+

| Top 100 | 52.2% |

|

| 358 |

+

| Top 1,000 | 91.7% |

|

| 359 |

+

| Top 5,000 | 98.0% |

|

| 360 |

+

| Top 10,000 | 0.0% |
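
Top-N coverage is typically the share of all token occurrences accounted for by the N most frequent words. A minimal sketch under that assumption, with `top_n_coverage` as an illustrative name:

```python
def top_n_coverage(frequencies, n):
    """Fraction of all occurrences covered by the n most frequent words."""
    ranked = sorted(frequencies, reverse=True)
    total = sum(ranked)
    if total == 0:
        raise ValueError("frequencies must sum to a positive value")
    return sum(ranked[:n]) / total


toy_frequencies = [5, 3, 2]
coverage_top1 = top_n_coverage(toy_frequencies, 1)
coverage_all = top_n_coverage(toy_frequencies, 10)
```

In this sketch an N larger than the vocabulary simply covers everything. The vocabulary table lists 9,559 entries, fewer than 10,000; the source does not say how the pipeline handles that case.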

|

| 361 |

|

| 362 |

### Key Findings

|

| 363 |

|

| 364 |

+

- **Zipf Compliance:** R²=0.9574 indicates excellent adherence to Zipf's law

|

| 365 |

+

- **High Frequency Dominance:** Top 100 words cover 52.2% of corpus

|

| 366 |

+

- **Long Tail:** -441 words needed for remaining 100.0% coverage

|

| 367 |

|

| 368 |

---

|

| 369 |

## 5. Word Embeddings Evaluation

|

|

|

|

| 376 |

|

| 377 |

|

| 378 |

|

|

|

|

| 379 |

|

| 380 |

+

### 5.1 Cross-Lingual Alignment

|

| 381 |

+

|

| 382 |

+

> *Note: Multilingual alignment visualization not available for this language.*

|

| 383 |

+

|

| 384 |

+

|

| 385 |

+

### 5.2 Model Comparison

|

| 386 |

+

|

| 387 |

+

| Model | Dimension | Isotropy | Semantic Density | Alignment R@1 | Alignment R@10 |

|

| 388 |

+

|-------|-----------|----------|------------------|---------------|----------------|

|

| 389 |

+

| **mono_32d** | 32 | 0.5551 🏆 | 0.4156 | N/A | N/A |

|

| 390 |

+

| **mono_64d** | 64 | 0.1856 | 0.4055 | N/A | N/A |

|

| 391 |

+

| **mono_128d** | 128 | 0.0279 | 0.4128 | N/A | N/A |

|

| 392 |

|

| 393 |

### Key Findings

|

| 394 |

|

| 395 |

+

- **Best Isotropy:** mono_32d with 0.5551 (more uniform distribution)

|

| 396 |

+

- **Semantic Density:** Average pairwise similarity of 0.4113. Lower values indicate better semantic separation.

|

| 397 |

+

- **Alignment Quality:** No aligned models evaluated in this run.

|

| 398 |

+

- **Recommendation:** 128d aligned for best cross-lingual performance

|

| 399 |

+

|

| 400 |

+

---

|

| 401 |

+

## 6. Morphological Analysis (Experimental)

|

| 402 |

+

|

| 403 |

+

> ⚠️ **Warning:** This language shows low morphological productivity. The statistical signals used for this analysis may be noisy or less reliable than for morphologically rich languages.

|

| 404 |

+

|

| 405 |

+

This section presents an automated morphological analysis derived from the statistical divergence between word-level and subword-level models. By analyzing where subword predictability spikes and where word-level coverage fails, we can infer linguistic structures without supervised data.

|

| 406 |

+

|

| 407 |

+

### 6.1 Productivity & Complexity

|

| 408 |

+

|

| 409 |

+

| Metric | Value | Interpretation | Recommendation |

|

| 410 |

+

|--------|-------|----------------|----------------|

|

| 411 |

+

| Productivity Index | **0.000** | Low morphological productivity | ⚠️ Likely unreliable |

|

| 412 |

+

| Idiomaticity Gap | **-1.000** | Low formulaic content | - |

|

| 413 |

+

|

| 414 |

+

### 6.2 Affix Inventory (Productive Units)

|

| 415 |

+

|

| 416 |

+

These are the most productive prefixes and suffixes identified by sampling the vocabulary for global substitutability patterns. A unit is considered an affix if stripping it leaves a valid stem that appears in other contexts.

|

| 417 |

+

|

| 418 |

+

*No productive affixes detected.*

|

| 419 |

+

|

| 420 |

+

|

| 421 |

+

### 6.3 Bound Stems (Lexical Roots)

|

| 422 |

+

|

| 423 |

+

Bound stems are high-frequency subword units that are semantically cohesive but rarely appear as standalone words. These often correspond to the 'core' of a word that requires inflection or derivation to be valid.

|

| 424 |

+

|

| 425 |

+

| Stem | Cohesion | Substitutability | Examples |

|

| 426 |

+

|------|----------|------------------|----------|

|

| 427 |

+

| `áung` | 1.97x | 9 contexts | táung, láung, dáung |

|

| 428 |

+

| `âung` | 1.96x | 9 contexts | câung, bâung, hâung |

|

| 429 |

+

| `iăng` | 1.80x | 7 contexts | hiăng, siăng, giăng |

|

| 430 |

+

| `iāng` | 1.55x | 8 contexts | liāng, biāng, ciāng |

|

| 431 |

+

|

| 432 |

+

### 6.4 Affix Compatibility (Co-occurrence)

|

| 433 |

+

|

| 434 |

+

This table shows which prefixes and suffixes most frequently co-occur on the same stems, revealing the 'stacking' rules of the language's morphology.

|

| 435 |

+

|

| 436 |

+

*No significant affix co-occurrences detected.*

|

| 437 |

+

|

| 438 |

+

|

| 439 |

+

### 6.5 Recursive Morpheme Segmentation

|

| 440 |

+

|

| 441 |

+

Using **Recursive Hierarchical Substitutability**, we decompose complex words into their constituent morphemes. This approach handles nested affixes (e.g., `prefix-prefix-root-suffix`).

|

| 442 |

+

|

| 443 |

+

*Insufficient data for recursive segmentation.*

|

| 444 |

+

|

| 445 |

+

|

| 446 |

+

### 6.6 Linguistic Interpretation

|

| 447 |

+

|

| 448 |

+

> **Automated Insight:**

|

| 449 |

+

The language CDO appears to be more isolating or has a highly fixed vocabulary. Word-level models perform nearly as well as subword models, indicating fewer productive morphological processes.

|

| 450 |

|

| 451 |

---

|

| 452 |

+

## 7. Summary & Recommendations

|

| 453 |

|

| 454 |

|

| 455 |

|

|

|

|

| 457 |

|

| 458 |

| Component | Recommended | Rationale |

|

| 459 |

|-----------|-------------|-----------|

|

| 460 |

+

| Tokenizer | **64k BPE** | Best compression (2.89x) |

|

| 461 |

+

| N-gram | **2-gram** | Lowest perplexity (342) |

|

| 462 |

+

| Markov | **Context-4** | Highest predictability (94.7%) |

|

| 463 |

| Embeddings | **100d** | Balanced semantic capture and isotropy |

|

| 464 |

|

| 465 |

+

|

| 466 |

---

|

| 467 |

## Appendix: Metrics Glossary & Interpretation Guide

|

| 468 |

|

|

|

|

| 652 |

author = {Kamali, Omar},

|

| 653 |

title = {Wikilangs: Open NLP Models for Wikipedia Languages},

|

| 654 |

year = {2025},

|

| 655 |

+

doi = {10.5281/zenodo.18073153},

|

| 656 |

+

publisher = {Zenodo},

|

| 657 |

url = {https://huggingface.co/wikilangs},

|

| 658 |

institution = {Omneity Labs}

|

| 659 |

}

|

|

|

|

| 669 |

- 🤗 Models: [huggingface.co/wikilangs](https://huggingface.co/wikilangs)

|

| 670 |

- 📊 Data: [wikipedia-monthly](https://huggingface.co/datasets/omarkamali/wikipedia-monthly)

|

| 671 |

- 👤 Author: [Omar Kamali](https://huggingface.co/omarkamali)

|

| 672 |

+

- 🤝 Sponsor: [Featherless AI](https://featherless.ai)

|

| 673 |

---

|

| 674 |

*Generated by Wikilangs Models Pipeline*

|

| 675 |

|

| 676 |

+

*Report Date: 2026-01-03 09:43:04*

|

models/embeddings/monolingual/cdo_128d.bin

CHANGED

|

@@ -1,3 +1,3 @@

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

-

oid sha256:

|

| 3 |

-

size

|

|

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:8c366500bae149b287db9cc735aecf709e56990e0f1dd949aa01f743c5b542bf

|

| 3 |

+

size 1030106435
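The binary files in this commit are stored as Git LFS pointers: three `key value` lines giving the spec version, the object id, and the size in bytes. A minimal sketch of reading such a pointer follows; the helper name `parse_lfs_pointer` is hypothetical, and the pointer text is copied from the new side of the diff above.

```python
def parse_lfs_pointer(text):
    """Parse a Git LFS pointer file into version, hash algorithm, digest and size."""
    fields = {}
    for line in text.strip().splitlines():
        # Each line is "<key> <value>", separated by a single space.
        key, _, value = line.partition(" ")
        fields[key] = value
    # The oid has the form "<hash algorithm>:<hex digest>".
    algo, _, digest = fields["oid"].partition(":")
    return {
        "version": fields["version"],
        "hash_algo": algo,
        "digest": digest,
        "size": int(fields["size"]),
    }


pointer_text = """version https://git-lfs.github.com/spec/v1
oid sha256:8c366500bae149b287db9cc735aecf709e56990e0f1dd949aa01f743c5b542bf
size 1030106435
"""
pointer = parse_lfs_pointer(pointer_text)
print(pointer["hash_algo"], pointer["size"])
```

The `size` field is the byte length of the real file, so it can be compared with a downloaded `cdo_128d.bin` before the SHA-256 digest is checked.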

|

models/embeddings/monolingual/cdo_128d_metadata.json

CHANGED

|

@@ -3,11 +3,13 @@

|

|

| 3 |

"dimension": 128,

|

| 4 |

"version": "monolingual",

|

| 5 |

"training_params": {

|

| 6 |

-

"

|

| 7 |

"min_count": 5,

|

| 8 |

"window": 5,

|

| 9 |

"negative": 5,

|

| 10 |

-

"epochs": 5

|

|

|

|

|

|

|

| 11 |

},

|

| 12 |

-

"vocab_size":

|

| 13 |

}

|

|

|

|

| 3 |

"dimension": 128,

|

| 4 |

"version": "monolingual",

|

| 5 |

"training_params": {

|

| 6 |

+

"algorithm": "skipgram",

|

| 7 |

"min_count": 5,

|

| 8 |

"window": 5,

|

| 9 |

"negative": 5,

|

| 10 |

+

"epochs": 5,

|

| 11 |

+

"encoding_method": "rope",

|

| 12 |

+

"dim": 128

|

| 13 |

},

|

| 14 |

+

"vocab_size": 5854

|

| 15 |

}

|

models/embeddings/monolingual/cdo_32d.bin

CHANGED

|

@@ -1,3 +1,3 @@

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

-

oid sha256:

|

| 3 |

-

size

|

|

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:9ee34c3106d7321309452b983807ebe84c3a153af734404b31b03ac0f6076d5c

|

| 3 |

+

size 257610563

|

models/embeddings/monolingual/cdo_32d_metadata.json

CHANGED

|

@@ -3,11 +3,13 @@

|

|

| 3 |

"dimension": 32,

|

| 4 |

"version": "monolingual",

|

| 5 |

"training_params": {

|

| 6 |

-

"

|

| 7 |

"min_count": 5,

|

| 8 |

"window": 5,

|

| 9 |

"negative": 5,

|

| 10 |

-

"epochs": 5

|

|

|

|

|

|

|

| 11 |

},

|

| 12 |

-

"vocab_size":

|

| 13 |

}

|

|

|

|

| 3 |

"dimension": 32,

|

| 4 |

"version": "monolingual",

|

| 5 |

"training_params": {

|

| 6 |

+

"algorithm": "skipgram",

|

| 7 |

"min_count": 5,

|

| 8 |

"window": 5,

|

| 9 |

"negative": 5,

|

| 10 |

+

"epochs": 5,

|

| 11 |

+

"encoding_method": "rope",

|

| 12 |

+

"dim": 32

|

| 13 |

},

|

| 14 |

+

"vocab_size": 5854

|

| 15 |

}

|

models/embeddings/monolingual/cdo_64d.bin

CHANGED

|

@@ -1,3 +1,3 @@

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

-

oid sha256:

|

| 3 |

-

size

|

|

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:aad5fc0dca0e268941025dbedc1373061213d3a2602f82f5fb36a6a8ac4c5600

|

| 3 |

+

size 515109187

|

models/embeddings/monolingual/cdo_64d_metadata.json

CHANGED

|

@@ -3,11 +3,13 @@

|

|

| 3 |

"dimension": 64,

|

| 4 |

"version": "monolingual",

|

| 5 |

"training_params": {

|

| 6 |

-

"

|

| 7 |

"min_count": 5,

|

| 8 |

"window": 5,

|

| 9 |

"negative": 5,

|

| 10 |

-

"epochs": 5

|

|

|

|

|

|

|

| 11 |

},

|

| 12 |

-

"vocab_size":

|

| 13 |

}

|

|

|

|

| 3 |

"dimension": 64,

|

| 4 |

"version": "monolingual",

|

| 5 |

"training_params": {

|

| 6 |

+

"algorithm": "skipgram",

|

| 7 |

"min_count": 5,

|

| 8 |

"window": 5,

|

| 9 |

"negative": 5,

|

| 10 |

+

"epochs": 5,

|

| 11 |

+

"encoding_method": "rope",

|

| 12 |

+

"dim": 64

|

| 13 |

},

|

| 14 |

+

"vocab_size": 5854

|

| 15 |

}
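The three embedding sizes reported in the LFS pointers above grow exactly linearly with the dimension. The sketch below works through that arithmetic using only the `size` values and the `vocab_size` from the metadata. The interpretation in the comments (float32 storage, a large hashed-bucket table plus two vocabulary-sized matrices) is an assumption that happens to fit the numbers; the source does not state it.

```python
# Byte sizes from the Git LFS pointers, keyed by embedding dimension.
sizes = {32: 257610563, 64: 515109187, 128: 1030106435}
vocab_size = 5854  # from the *_metadata.json files

# Fit size = intercept + slope * dim from the two smallest models.
slope = (sizes[64] - sizes[32]) // (64 - 32)  # bytes per dimension
intercept = sizes[32] - slope * 32            # dimension-independent bytes

# Check that the fitted line reproduces every reported size exactly.
predicted = {dim: intercept + slope * dim for dim in sizes}

# Assumption: 4-byte float32 values, so slope / 4 is the number of stored rows.
rows = slope // 4
print(slope, intercept, rows)
```

Under the float32 assumption the files hold 2,011,708 rows, which equals 2,000,000 + 2 × 5,854. That is consistent with, but does not prove, a layout of 2,000,000 hashed subword rows plus input and output vectors for each of the 5,854 vocabulary words.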

|

models/subword_markov/cdo_markov_ctx1_subword.parquet

CHANGED

|

@@ -1,3 +1,3 @@

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

-

oid sha256:

|

| 3 |

-

size

|

|

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:41c23bda939ee89e9ea22c7d220f77ade97cf29b5706a8ccea6a5a1649c35d5c

|

| 3 |

+

size 588804

|

models/subword_markov/cdo_markov_ctx1_subword_metadata.json

CHANGED

|

@@ -2,6 +2,6 @@

|

|

| 2 |

"context_size": 1,

|

| 3 |

"variant": "subword",

|

| 4 |

"language": "cdo",

|

| 5 |

-

"unique_contexts":

|

| 6 |

-

"total_transitions":

|

| 7 |

}

|

|

|

|

| 2 |

"context_size": 1,

|

| 3 |

"variant": "subword",

|

| 4 |

"language": "cdo",

|

| 5 |

+

"unique_contexts": 25622,

|

| 6 |

+

"total_transitions": 2209713

|

| 7 |

}

|

models/subword_markov/cdo_markov_ctx2_subword.parquet

CHANGED

|

@@ -1,3 +1,3 @@

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

-

oid sha256:

|

| 3 |

-

size

|

|

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:635b5359d40f1fb9f72a9835efb0cf9b06c7ece40db0ae41691aa56bcf42a68f

|

| 3 |

+

size 1373275

|

models/subword_markov/cdo_markov_ctx2_subword_metadata.json

CHANGED

|

@@ -2,6 +2,6 @@

|

|

| 2 |

"context_size": 2,

|

| 3 |

"variant": "subword",

|

| 4 |

"language": "cdo",

|

| 5 |

-

"unique_contexts":

|

| 6 |

-

"total_transitions":

|

| 7 |

}

|

|

|

|

| 2 |

"context_size": 2,

|

| 3 |

"variant": "subword",

|

| 4 |

"language": "cdo",

|

| 5 |

+

"unique_contexts": 74780,

|

| 6 |

+

"total_transitions": 2199286

|

| 7 |

}

|

models/subword_markov/cdo_markov_ctx3_subword.parquet

CHANGED

|

@@ -1,3 +1,3 @@

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

-

oid sha256:

|

| 3 |

-

size

|

|

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:edbc276446953ce254374098ddc144b562d74f64b6dc6b037712625c3474bcc7

|

| 3 |

+

size 2410456

|

models/subword_markov/cdo_markov_ctx3_subword_metadata.json

CHANGED

|

@@ -2,6 +2,6 @@

|

|

| 2 |

"context_size": 3,

|

| 3 |

"variant": "subword",

|

| 4 |

"language": "cdo",

|

| 5 |

-

"unique_contexts":

|

| 6 |

-

"total_transitions":

|

| 7 |

}

|

|

|

|

| 2 |

"context_size": 3,

|

| 3 |

"variant": "subword",

|

| 4 |

"language": "cdo",

|

| 5 |

+

"unique_contexts": 133665,

|

| 6 |

+

"total_transitions": 2188859

|

| 7 |

}

|

models/subword_markov/cdo_markov_ctx4_subword.parquet

CHANGED

|

@@ -1,3 +1,3 @@

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

-

oid sha256:

|

| 3 |

-

size

|

|

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:73b83a95594a9de41178ee150977a694d8fe0fc9528952db1f4037278640135b

|

| 3 |

+

size 3940722

|

models/subword_markov/cdo_markov_ctx4_subword_metadata.json

CHANGED

|

@@ -2,6 +2,6 @@

|

|

| 2 |

"context_size": 4,

|

| 3 |

"variant": "subword",

|

| 4 |

"language": "cdo",

|

| 5 |

-

"unique_contexts":

|

| 6 |

-

"total_transitions":

|

| 7 |

}

|

|

|

|

| 2 |

"context_size": 4,

|

| 3 |

"variant": "subword",

|

| 4 |

"language": "cdo",

|

| 5 |

+

"unique_contexts": 225577,

|

| 6 |

+

"total_transitions": 2178432

|

| 7 |

}

|

models/subword_ngram/cdo_2gram_subword.parquet

CHANGED

|

@@ -1,3 +1,3 @@

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

-

oid sha256:

|

| 3 |

-

size

|

|

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:07438dbbdf7df68795d2567c55c6704ba9e2aa37a1e974f3796653e4ee46d715

|

| 3 |

+

size 93187

|

models/subword_ngram/cdo_2gram_subword_metadata.json

CHANGED

|

@@ -2,6 +2,6 @@

|

|

| 2 |

"n": 2,

|

| 3 |

"variant": "subword",

|

| 4 |

"language": "cdo",

|

| 5 |

-

"unique_ngrams":

|

| 6 |

-

"total_ngrams":

|

| 7 |

}

|

|

|

|

| 2 |

"n": 2,

|

| 3 |

"variant": "subword",

|

| 4 |

"language": "cdo",

|

| 5 |

+

"unique_ngrams": 6912,

|

| 6 |

+

"total_ngrams": 2209713

|

| 7 |

}

|

models/subword_ngram/cdo_3gram_subword.parquet

CHANGED

|

@@ -1,3 +1,3 @@

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

-

oid sha256:

|

| 3 |

-

size

|

|

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:9bc12fdb56f63acfec879929e218e1b08b69ed6bc6caaf23de5405818299a15a

|

| 3 |

+

size 290607

|

models/subword_ngram/cdo_3gram_subword_metadata.json

CHANGED

|

@@ -2,6 +2,6 @@

|

|

| 2 |

"n": 3,

|

| 3 |

"variant": "subword",

|

| 4 |

"language": "cdo",

|

| 5 |

-

"unique_ngrams":

|

| 6 |

-

"total_ngrams":

|

| 7 |

}

|

|

|

|

| 2 |

"n": 3,

|

| 3 |

"variant": "subword",

|

| 4 |

"language": "cdo",

|

| 5 |

+

"unique_ngrams": 21000,

|

| 6 |

+

"total_ngrams": 2199286

|

| 7 |

}

|

models/subword_ngram/cdo_4gram_subword.parquet

CHANGED

|

@@ -1,3 +1,3 @@

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

-

oid sha256:

|

| 3 |

-

size

|

|

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:d7bbf6e008db71c8cab5f01f7b94dece221cc12e30b6c0bbde60267e28ad3d1b

|

| 3 |

+

size 893634

|

models/subword_ngram/cdo_4gram_subword_metadata.json

CHANGED

|

@@ -2,6 +2,6 @@

|

|

| 2 |

"n": 4,

|

| 3 |

"variant": "subword",

|

| 4 |

"language": "cdo",

|

| 5 |

-

"unique_ngrams":

|

| 6 |

-

"total_ngrams":

|

| 7 |

}

|

|

|

|

| 2 |

"n": 4,

|

| 3 |

"variant": "subword",

|

| 4 |

"language": "cdo",

|

| 5 |

+

"unique_ngrams": 69193,

|

| 6 |

+

"total_ngrams": 2188859

|

| 7 |

}

|

models/tokenizer/cdo_tokenizer_32k.model

CHANGED

|

@@ -1,3 +1,3 @@

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

-

oid sha256:

|

| 3 |

-

size

|

|

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:e4ea04b7b5ac9eb6c0c47f8743b9ec573da4a66b11329b62767916c896096283

|

| 3 |

+

size 659402

|

models/tokenizer/cdo_tokenizer_32k.vocab

CHANGED

|

The diff for this file is too large to render.

See raw diff

|

|

|

models/tokenizer/cdo_tokenizer_64k.model

CHANGED

|

@@ -1,3 +1,3 @@

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

-

oid sha256:

|

| 3 |

-

size

|

|

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:543fc6291e9f68ca726ae3c62da2bf8a9f2e8964f61ac8c8f47e7620b30680d4

|

| 3 |

+

size 1252522

|

models/tokenizer/cdo_tokenizer_64k.vocab

CHANGED

|

The diff for this file is too large to render.

See raw diff

|

|

|

models/vocabulary/cdo_vocabulary.parquet

CHANGED

|

@@ -1,3 +1,3 @@

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

-

oid sha256:

|

| 3 |

-

size

|

|

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:13d428e7f371fae4d1817f7fd0cad404bb08c4eebfeba6b19d2159ec0858966d

|

| 3 |

+

size 161421

|

models/vocabulary/cdo_vocabulary_metadata.json

CHANGED

|

@@ -1,16 +1,17 @@

|

|

| 1 |

{

|

| 2 |

"language": "cdo",

|

| 3 |

-

"vocabulary_size":

|

|

|

|

| 4 |

"statistics": {

|

| 5 |

-

"type_token_ratio": 0.

|

| 6 |

"coverage": {

|

| 7 |

-

"top_100": 0.

|

| 8 |

-

"top_1000": 0.

|

| 9 |

-

"top_5000": 0.

|

| 10 |

-

"top_10000": 0.

|

| 11 |

},

|

| 12 |

-

"hapax_count":

|

| 13 |

-

"hapax_ratio": 0.

|

| 14 |

-

"total_documents":

|

| 15 |

}

|

| 16 |

}

|

|

|

|

| 1 |

{

|

| 2 |

"language": "cdo",

|

| 3 |

+

"vocabulary_size": 9559,

|

| 4 |

+

"variant": "full",

|

| 5 |

"statistics": {

|

| 6 |

+

"type_token_ratio": 0.06153581253100364,

|

| 7 |

"coverage": {

|

| 8 |

+

"top_100": 0.4998278145152364,

|

| 9 |

+

"top_1000": 0.8788593121599849,

|

| 10 |

+

"top_5000": 0.9388721030817102,

|

| 11 |

+

"top_10000": 0.9589624594646672

|

| 12 |

},

|

| 13 |

+

"hapax_count": 20461,

|

| 14 |

+

"hapax_ratio": 0.6815789473684211,

|

| 15 |

+

"total_documents": 10427

|

| 16 |

}

|

| 17 |

}
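The vocabulary statistics above can be cross-checked against one another. The sketch below reproduces `hapax_ratio` from `hapax_count` and `vocabulary_size`, then checks `type_token_ratio` against a token count inferred from the word 2-gram metadata elsewhere in this commit. Both formulas are inferred from the arithmetic rather than documented: they assume that hapaxes are counted separately from `vocabulary_size`, and that the token count equals the word 2-gram total plus one per document.

```python
# Values copied from cdo_vocabulary_metadata.json.
vocabulary_size = 9559
hapax_count = 20461
reported_hapax_ratio = 0.6815789473684211
reported_ttr = 0.06153581253100364
total_documents = 10427

# Value copied from cdo_2gram_word_metadata.json ("total_ngrams").
word_bigrams = 477419

# Assumption: total types = non-hapax vocabulary + hapaxes.
total_types = vocabulary_size + hapax_count
hapax_ratio = hapax_count / total_types

# Assumption: a document with n tokens yields n - 1 bigrams,
# so the token count is bigrams + documents.
total_tokens = word_bigrams + total_documents
type_token_ratio = total_types / total_tokens

print(total_types, total_tokens, hapax_ratio, type_token_ratio)
```

Read this way, about 68% of the 30,020 distinct word types occur exactly once, while the 100 most frequent words already cover roughly half of all tokens (`top_100` ≈ 0.50).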

|

models/word_markov/cdo_markov_ctx1_word.parquet

CHANGED

|

@@ -1,3 +1,3 @@

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

-

oid sha256:

|

| 3 |

-

size

|

|

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:b7c52cf701393a62ef55eb76e071bcc146cb575dcba8d7de8f629c6f59a0ffa9

|

| 3 |

+

size 1378282

|

models/word_markov/cdo_markov_ctx1_word_metadata.json

CHANGED

|

@@ -2,6 +2,6 @@

|

|

| 2 |

"context_size": 1,

|

| 3 |

"variant": "word",

|

| 4 |

"language": "cdo",

|

| 5 |

-

"unique_contexts":

|

| 6 |

-

"total_transitions":

|

| 7 |

}

|

|

|

|

| 2 |

"context_size": 1,

|

| 3 |

"variant": "word",

|

| 4 |

"language": "cdo",

|

| 5 |

+

"unique_contexts": 29670,

|

| 6 |

+

"total_transitions": 477419

|

| 7 |

}

|

models/word_markov/cdo_markov_ctx2_word.parquet

CHANGED

|

@@ -1,3 +1,3 @@

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

-

oid sha256:

|

| 3 |

-

size

|

|

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:4e2d34224b73bb7d088188c6354a0a9b2ed3b721bfecbe369bd09f27bc4bf8eb

|

| 3 |

+

size 3117924

|

models/word_markov/cdo_markov_ctx2_word_metadata.json

CHANGED

|

@@ -2,6 +2,6 @@

|

|

| 2 |

"context_size": 2,

|

| 3 |

"variant": "word",

|

| 4 |

"language": "cdo",

|

| 5 |

-

"unique_contexts":

|

| 6 |

-

"total_transitions":

|

| 7 |

}

|

|

|

|

| 2 |

"context_size": 2,

|

| 3 |

"variant": "word",

|

| 4 |

"language": "cdo",

|

| 5 |

+

"unique_contexts": 139308,

|

| 6 |

+

"total_transitions": 466992

|

| 7 |

}

|

models/word_markov/cdo_markov_ctx3_word.parquet

CHANGED

|

@@ -1,3 +1,3 @@

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

-

oid sha256:

|

| 3 |

-

size

|

|

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:751ecd29db06623ef008eb11136a6de55e6177de5d5106326042cf155bf67096

|

| 3 |

+

size 4876570

|

models/word_markov/cdo_markov_ctx3_word_metadata.json

CHANGED

|

@@ -2,6 +2,6 @@

|

|

| 2 |

"context_size": 3,

|

| 3 |

"variant": "word",

|

| 4 |

"language": "cdo",

|

| 5 |

-

"unique_contexts":

|

| 6 |

-

"total_transitions":

|

| 7 |

}

|

|

|

|

| 2 |

"context_size": 3,

|

| 3 |

"variant": "word",

|

| 4 |

"language": "cdo",

|

| 5 |

+

"unique_contexts": 249012,

|

| 6 |

+

"total_transitions": 456565

|

| 7 |

}

|

models/word_markov/cdo_markov_ctx4_word.parquet

CHANGED

|

@@ -1,3 +1,3 @@

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

-

oid sha256:

|

| 3 |

-

size

|

|

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:be6fc8ceb5ad611ae4227c2c3e9eb01458860d29280986707129fb49af2ed1fc

|

| 3 |

+

size 5983737

|

models/word_markov/cdo_markov_ctx4_word_metadata.json

CHANGED

|

@@ -2,6 +2,6 @@

|

|

| 2 |

"context_size": 4,

|

| 3 |

"variant": "word",

|

| 4 |

"language": "cdo",

|

| 5 |

-

"unique_contexts":

|

| 6 |

-

"total_transitions":

|

| 7 |

}

|

|

|

|

| 2 |

"context_size": 4,

|

| 3 |

"variant": "word",

|

| 4 |

"language": "cdo",

|

| 5 |

+

"unique_contexts": 301670,

|

| 6 |

+

"total_transitions": 446138

|

| 7 |

}
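In both Markov variants, `total_transitions` drops by the same amount each time the context grows by one token, and that amount equals `total_documents` from the vocabulary metadata. The sketch below checks this. The explanation in the comments, that a document with n tokens yields n - k transitions at context size k, is an assumption that fits the numbers and is not stated in the source.

```python
# total_transitions from the *_markov_ctx{k}_*_metadata.json files.
subword_transitions = {1: 2209713, 2: 2199286, 3: 2188859, 4: 2178432}
word_transitions = {1: 477419, 2: 466992, 3: 456565, 4: 446138}

# total_documents from cdo_vocabulary_metadata.json.
total_documents = 10427


def drops(transitions):
    """Return the decrease in transitions for each one-step increase in context size."""
    return [transitions[k] - transitions[k + 1] for k in sorted(transitions)[:-1]]


# Assumption: a document with n tokens contributes n - k transitions at context
# size k, so every extra context token costs exactly one transition per document.
subword_drops = drops(subword_transitions)
word_drops = drops(word_transitions)
print(subword_drops, word_drops)
```

The constant drop also suggests that no document is shorter than five tokens at either granularity, since a shorter document would stop losing transitions once it ran out of them.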

|

models/word_ngram/cdo_2gram_word.parquet

CHANGED

|

@@ -1,3 +1,3 @@

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

-

oid sha256:

|

| 3 |

-

size

|

|

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:1665b5acdc0653c9ed337ac32fede9cd787b10dd4061bd94b629fa966ee9aaf1

|

| 3 |

+

size 178246

|

models/word_ngram/cdo_2gram_word_metadata.json

CHANGED

|

@@ -2,6 +2,6 @@

|

|

| 2 |

"n": 2,

|

| 3 |

"variant": "word",

|

| 4 |

"language": "cdo",

|

| 5 |

-

"unique_ngrams":

|

| 6 |

-

"total_ngrams":

|

| 7 |

}

|

|

|

|

| 2 |

"n": 2,

|

| 3 |

"variant": "word",

|

| 4 |

"language": "cdo",

|

| 5 |

+

"unique_ngrams": 11679,

|

| 6 |

+

"total_ngrams": 477419

|

| 7 |

}

|

models/word_ngram/cdo_3gram_word.parquet

CHANGED

|

@@ -1,3 +1,3 @@

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

-

oid sha256:

|

| 3 |

-

size

|

|

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:0dbe777e25174fb6b91ddeadf4d3f6969ac19328aeeb43492d068cc47fffedb9

|

| 3 |

+

size 311342

|

models/word_ngram/cdo_3gram_word_metadata.json

CHANGED

|

@@ -2,6 +2,6 @@

|

|

| 2 |

"n": 3,

|

| 3 |

"variant": "word",

|

| 4 |

"language": "cdo",

|

| 5 |

-

"unique_ngrams":

|

| 6 |

-

"total_ngrams":

|

| 7 |

}

|

|

|

|

| 2 |

"n": 3,

|

| 3 |

"variant": "word",

|

| 4 |

"language": "cdo",

|

| 5 |

+

"unique_ngrams": 17954,

|

| 6 |

+

"total_ngrams": 466992

|

| 7 |

}

|

models/word_ngram/cdo_4gram_word.parquet

CHANGED

|

@@ -1,3 +1,3 @@

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

-

oid sha256:

|

| 3 |

-

size

|

|

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:11d7a87e924b2a61e7e8acb9a1eb51754eb4c044273e29e85c59b97269584a38

|

| 3 |

+

size 556300

|

models/word_ngram/cdo_4gram_word_metadata.json

CHANGED

|

@@ -2,6 +2,6 @@

|

|

| 2 |

"n": 4,

|

| 3 |

"variant": "word",

|

| 4 |

"language": "cdo",

|

| 5 |

-

"unique_ngrams":

|

| 6 |

-

"total_ngrams":

|

| 7 |

}

|

|

|

|

| 2 |

"n": 4,

|

| 3 |

"variant": "word",

|

| 4 |

"language": "cdo",

|

| 5 |

+

"unique_ngrams": 30938,

|

| 6 |

+

"total_ngrams": 456565

|

| 7 |

}

|

visualizations/embedding_isotropy.png

CHANGED

|

|

visualizations/embedding_norms.png

CHANGED

|

|

visualizations/embedding_similarity.png

CHANGED

|

|

visualizations/markov_branching.png

CHANGED

|

|

visualizations/markov_contexts.png

CHANGED

|

|

visualizations/markov_entropy.png

CHANGED

|

|

visualizations/model_sizes.png

CHANGED

|

|

visualizations/nearest_neighbors.png

CHANGED

|

|

visualizations/ngram_coverage.png

CHANGED

|

|