Gujarati - Wikilangs Models

Comprehensive Research Report & Full Ablation Study

This repository contains NLP models trained and evaluated by Wikilangs on Gujarati Wikipedia data. The report covers tokenizers, n-gram models, Markov chains, vocabulary statistics, and word embeddings.

📋 Repository Contents

Models & Assets

- Tokenizers (8k, 16k, 32k, 64k)

- N-gram models (2, 3, 4, 5-gram)

- Markov chains (context of 1, 2, 3, 4 and 5)

- Subword N-gram and Markov chains

- Embeddings in various sizes and dimensions (aligned and unaligned)

- Language Vocabulary

- Language Statistics

Analysis and Evaluation

- 1. Tokenizer Evaluation

- 2. N-gram Model Evaluation

- 3. Markov Chain Evaluation

- 4. Vocabulary Analysis

- 5. Word Embeddings Evaluation

- 6. Morphological Analysis (Experimental)

- 7. Summary & Recommendations

- Metrics Glossary

- Visualizations Index

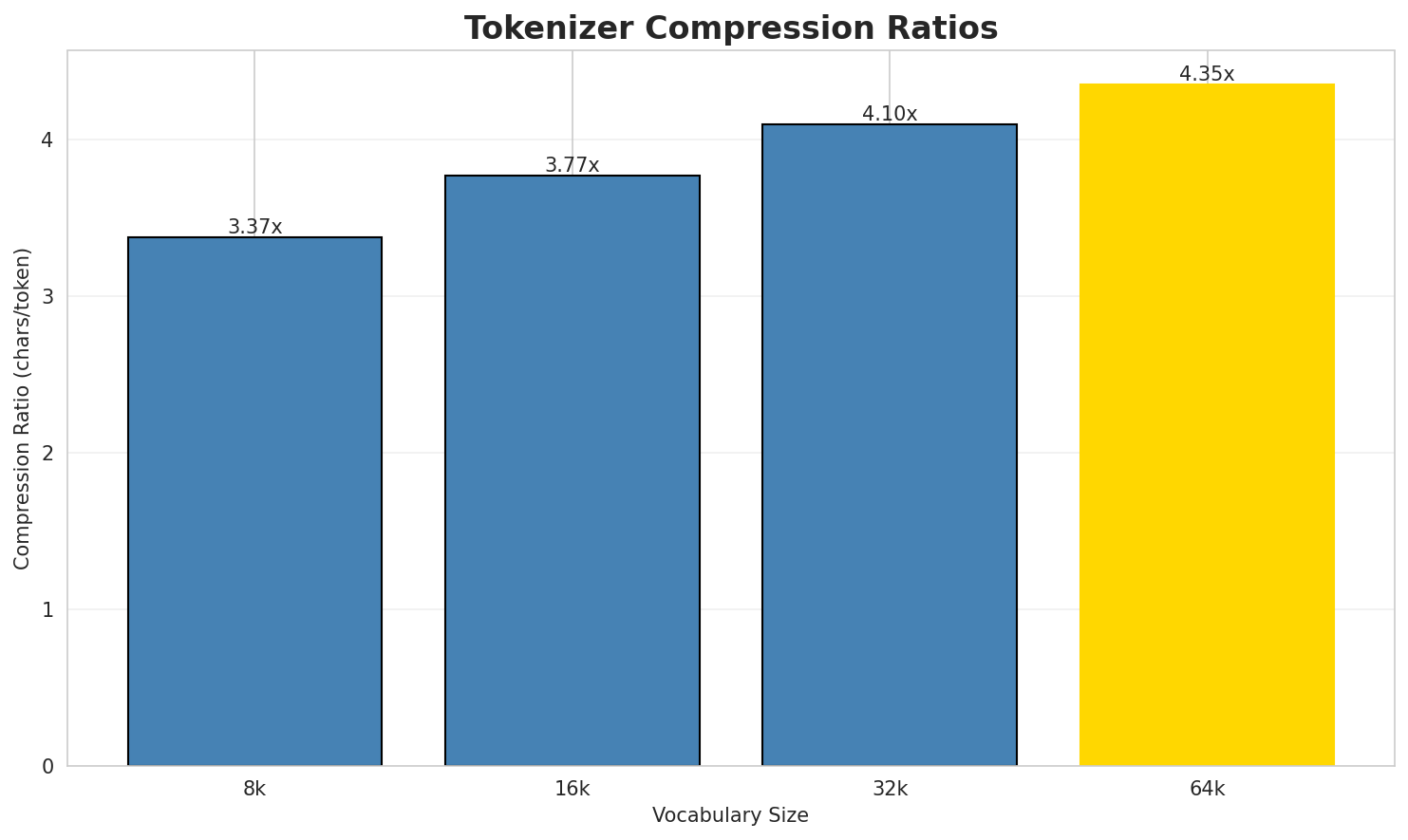

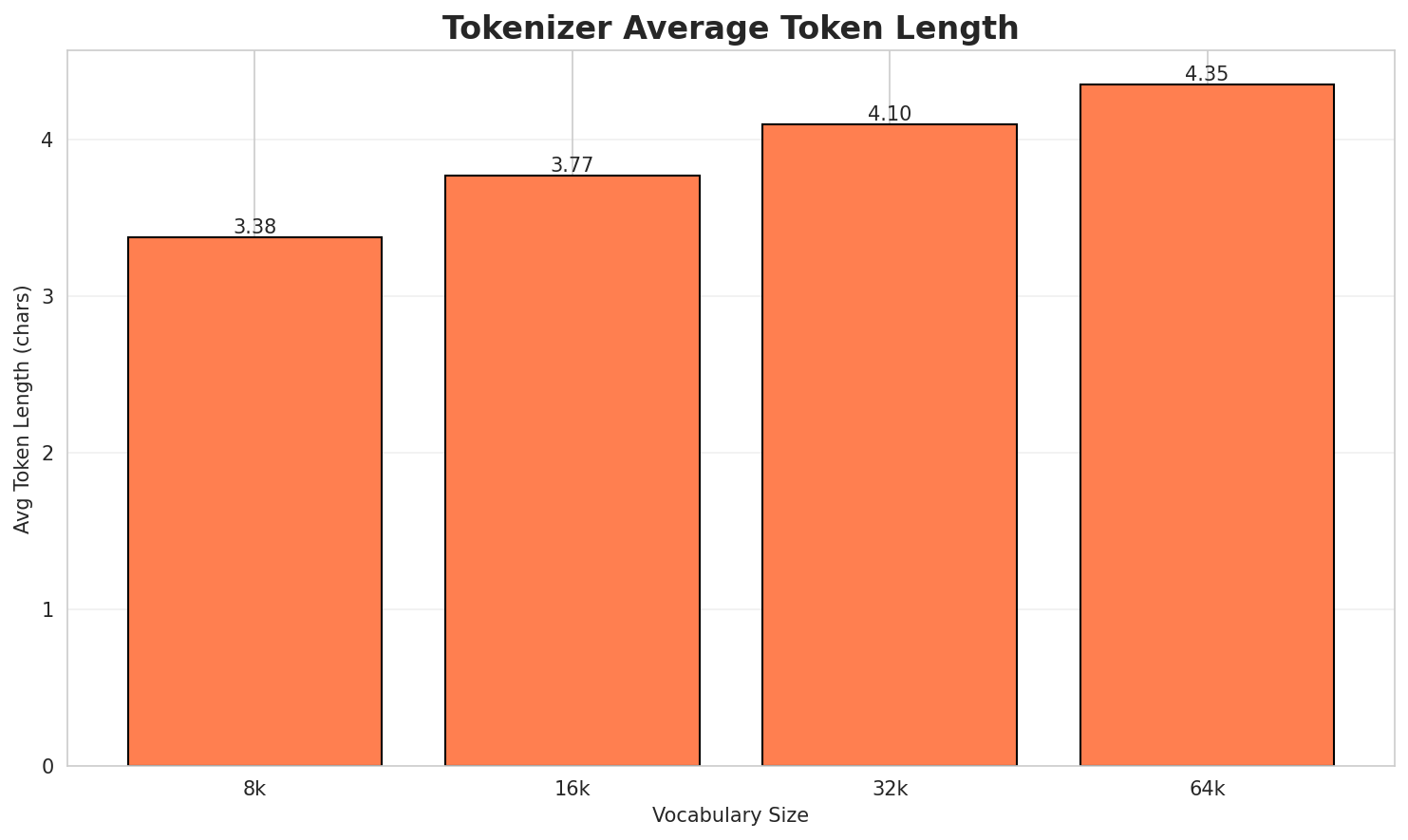

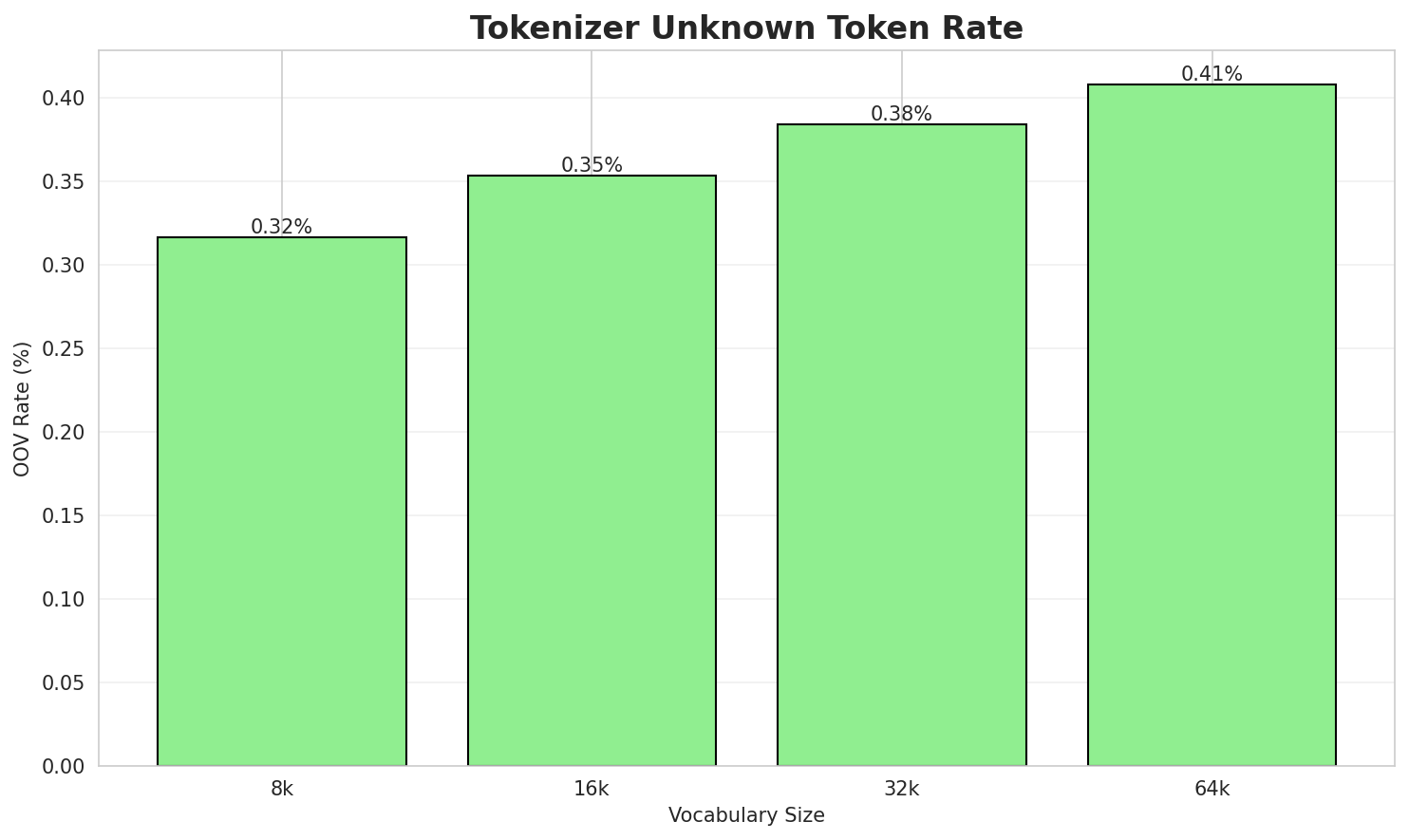

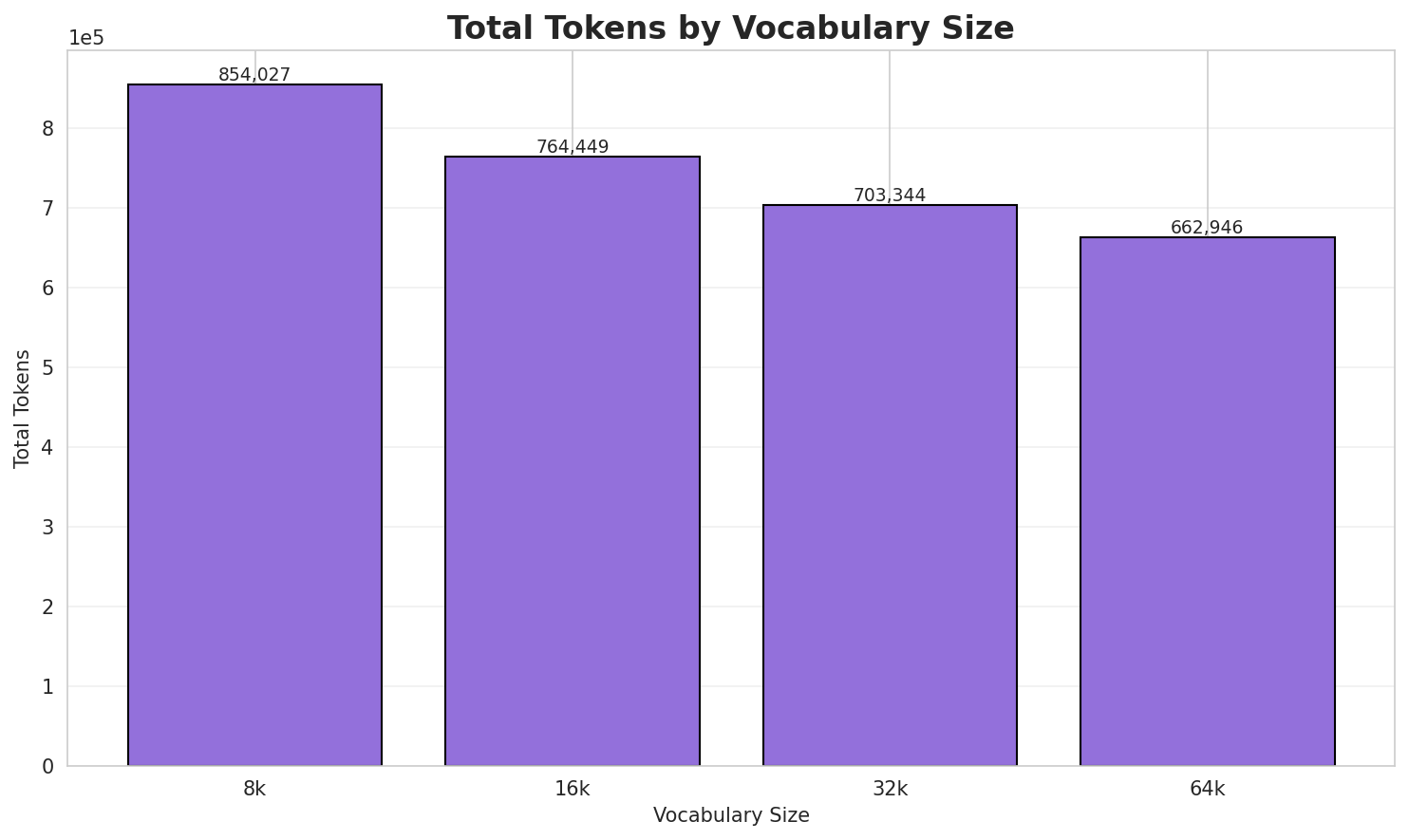

1. Tokenizer Evaluation

Results

| Vocab Size | Compression | Avg Token Len | UNK Rate | Total Tokens |

|---|---|---|---|---|

| 8k | 3.375x | 3.38 | 0.3164% | 854,027 |

| 16k | 3.770x | 3.77 | 0.3535% | 764,449 |

| 32k | 4.098x | 4.10 | 0.3842% | 703,344 |

| 64k | 4.347x 🏆 | 4.35 | 0.4076% | 662,946 |

Tokenization Examples

Below are sample sentences tokenized with each vocabulary size:

Sample 1: સિકર ભારત દેશના પશ્ચિમ ભાગમાં આવેલા રાજસ્થાન રાજ્યનું એક નગર છે. સિકરમાં સિકર જિ...

| Vocab | Tokens | Count |
|---|---|---|
| 8k | ▁સિક ર ▁ભારત ▁દેશના ▁પશ્ચિમ ▁ભાગમાં ▁આવેલા ▁રાજસ્થાન ▁રાજ્યનું ▁એક ... (+11 more) | 21 |
| 16k | ▁સિક ર ▁ભારત ▁દેશના ▁પશ્ચિમ ▁ભાગમાં ▁આવેલા ▁રાજસ્થાન ▁રાજ્યનું ▁એક ... (+11 more) | 21 |
| 32k | ▁સિકર ▁ભારત ▁દેશના ▁પશ્ચિમ ▁ભાગમાં ▁આવેલા ▁રાજસ્થાન ▁રાજ્યનું ▁એક ▁નગર ... (+9 more) | 19 |
| 64k | ▁સિકર ▁ભારત ▁દેશના ▁પશ્ચિમ ▁ભાગમાં ▁આવેલા ▁રાજસ્થાન ▁રાજ્યનું ▁એક ▁નગર ... (+9 more) | 19 |

Sample 2: હસમુખ પટેલ ગુજરાતના અમરઇવાડી લોકસભા મત વિસ્તારમાંથી લોકસભા ચૂંટણીમાં ભારતીય જનત...

| Vocab | Tokens | Count |
|---|---|---|
| 8k | ▁હ સ મુખ ▁પટેલ ▁ગુજરાતના ▁અમર ▁ઇ વાડી ▁લોકસભા ▁મત ... (+14 more) | 24 |
| 16k | ▁હસમુખ ▁પટેલ ▁ગુજરાતના ▁અમર ▁ઇ વાડી ▁લોકસભા ▁મત ▁વિસ્તારમાંથી ▁લોકસભા ... (+11 more) | 21 |
| 32k | ▁હસમુખ ▁પટેલ ▁ગુજરાતના ▁અમર ▁ઇ વાડી ▁લોકસભા ▁મત ▁વિસ્તારમાંથી ▁લોકસભા ... (+11 more) | 21 |
| 64k | ▁હસમુખ ▁પટેલ ▁ગુજરાતના ▁અમર ▁ઇ વાડી ▁લોકસભા ▁મત ▁વિસ્તારમાંથી ▁લોકસભા ... (+11 more) | 21 |

Sample 3: જય હિન્દ ગુજરાતી ભાષાનું એક રોજીંદુ સમાચારપત્ર છે. બાહ્ય કડીઓ જય હિન્દ વેબસાઇટ સ...

| Vocab | Tokens | Count |
|---|---|---|
| 8k | ▁જય ▁હિન્ દ ▁ગુજરાતી ▁ભાષાનું ▁એક ▁રો જી ંદુ ▁સમાચાર ... (+11 more) | 21 |
| 16k | ▁જય ▁હિન્ દ ▁ગુજરાતી ▁ભાષાનું ▁એક ▁રોજી ંદુ ▁સમાચારપત્ર ▁છે ... (+8 more) | 18 |
| 32k | ▁જય ▁હિન્દ ▁ગુજરાતી ▁ભાષાનું ▁એક ▁રોજી ંદુ ▁સમાચારપત્ર ▁છે . ... (+6 more) | 16 |
| 64k | ▁જય ▁હિન્દ ▁ગુજરાતી ▁ભાષાનું ▁એક ▁રોજી ંદુ ▁સમાચારપત્ર ▁છે . ... (+6 more) | 16 |

Key Findings

- Best Compression: 64k achieves 4.347x compression

- Lowest UNK Rate: 8k with 0.3164% unknown tokens

- Trade-off: Larger vocabularies improve compression but increase model size, and the UNK rate rises slightly (0.3164% at 8k to 0.4076% at 64k)

- Recommendation: 32k vocabulary provides optimal balance for production use
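The Results table can be cross-checked with a few lines of arithmetic. The sketch below assumes that Compression is characters per token (as the glossary defines it) and that all four tokenizers were evaluated on the same text; under those assumptions, compression times total tokens should give roughly the same character count in every row.

```python
# Rows copied from the Results table: (vocab, compression in chars/token, total tokens).
rows = [
    ("8k", 3.375, 854_027),
    ("16k", 3.770, 764_449),
    ("32k", 4.098, 703_344),
    ("64k", 4.347, 662_946),
]

# If compression = chars / tokens, then chars = compression * tokens
# should be nearly identical for every tokenizer run on the same text.
implied_chars = {vocab: ratio * tokens for vocab, ratio, tokens in rows}

for vocab, chars in implied_chars.items():
    print(f"{vocab}: ~{chars:,.0f} characters")

# Relative gap between the largest and smallest implied character count.
spread = max(implied_chars.values()) / min(implied_chars.values()) - 1
print(f"relative spread: {spread:.4%}")
```

All four rows imply about 2.88 million characters, so the reported ratios and token counts are mutually consistent up to rounding.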

2. N-gram Model Evaluation

Results

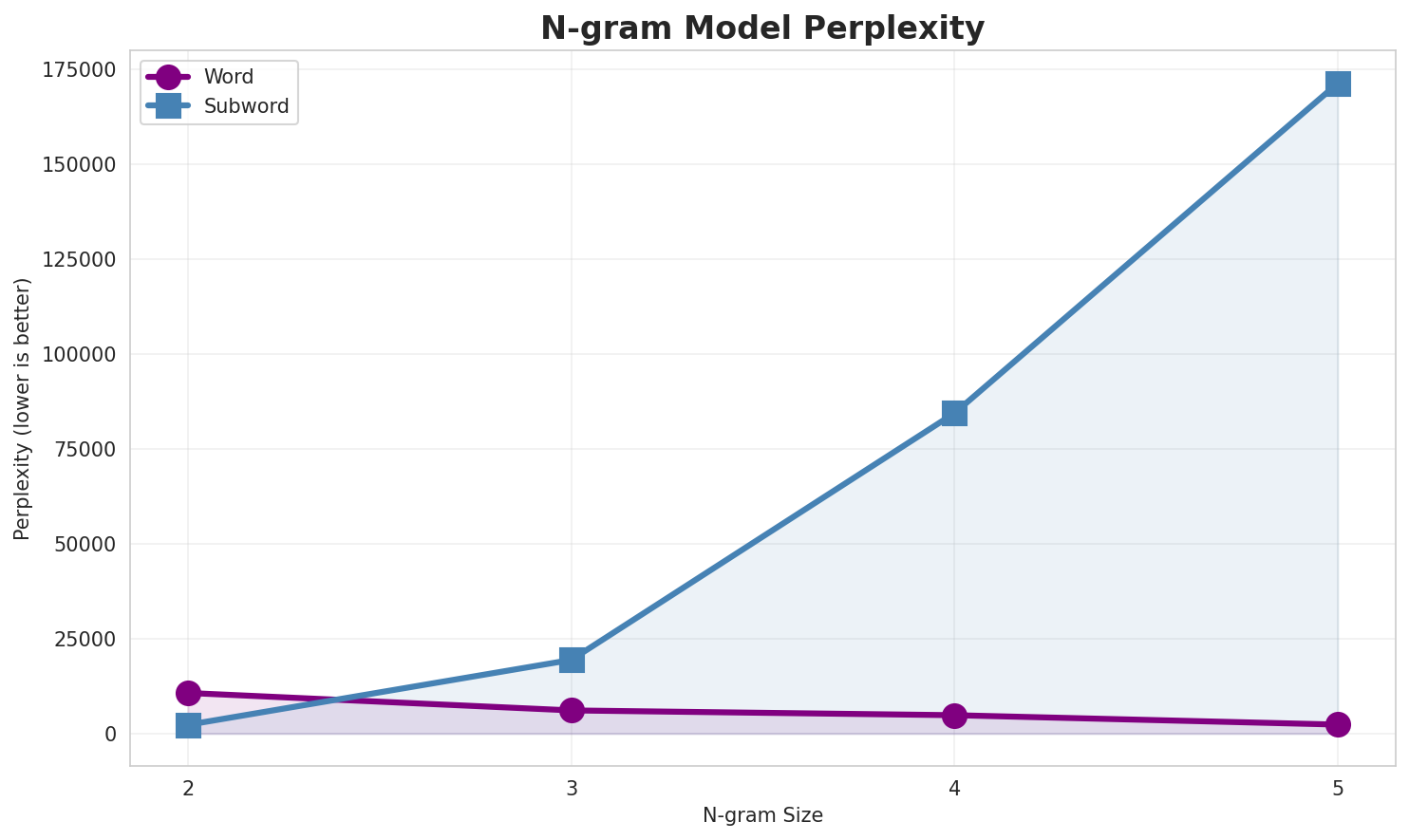

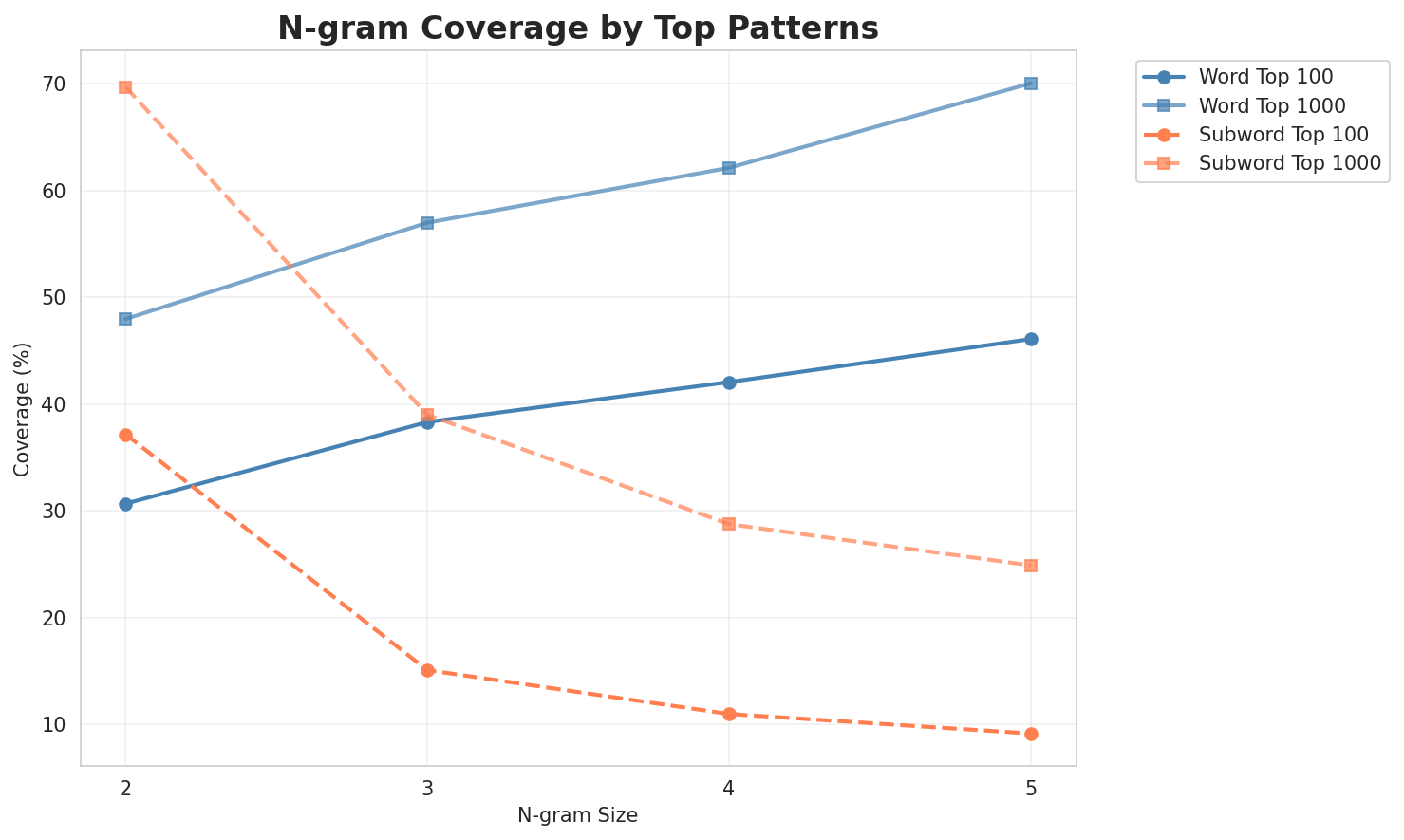

| N-gram | Variant | Perplexity | Entropy | Unique N-grams | Top-100 Coverage | Top-1000 Coverage |

|---|---|---|---|---|---|---|

| 2-gram | Word | 10,698 | 13.39 | 125,204 | 30.7% | 48.0% |

| 2-gram | Subword | 2,297 🏆 | 11.17 | 62,671 | 37.2% | 69.7% |

| 3-gram | Word | 6,094 | 12.57 | 126,108 | 38.3% | 57.0% |

| 3-gram | Subword | 19,412 | 14.24 | 340,527 | 15.1% | 39.0% |

| 4-gram | Word | 4,835 | 12.24 | 174,488 | 42.1% | 62.1% |

| 4-gram | Subword | 84,467 | 16.37 | 1,351,931 | 11.0% | 28.7% |

| 5-gram | Word | 2,358 | 11.20 | 99,029 | 46.1% | 70.1% |

| 5-gram | Subword | 171,415 | 17.39 | 2,092,817 | 9.1% | 24.9% |

Top 5 N-grams by Size

2-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | છે આ | 59,392 |
| 2 | તેમ જ | 50,730 |
| 3 | આવે છે | 34,138 |
| 4 | આ ગામમાં | 33,314 |
| 5 | ભાગમાં આવેલા | 31,103 |

3-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | છે આ ગામમાં | 33,162 |
| 2 | કરવામાં આવે છે | 19,146 |
| 3 | પશ્ચિમ ભાગમાં આવેલા | 18,413 |
| 4 | ભારત દેશના પશ્ચિમ | 18,409 |
| 5 | દેશના પશ્ચિમ ભાગમાં | 18,400 |

4-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | ભારત દેશના પશ્ચિમ ભાગમાં | 18,398 |
| 2 | દેશના પશ્ચિમ ભાગમાં આવેલા | 18,367 |
| 3 | પશ્ચિમ ભાગમાં આવેલા ગુજરાત | 17,959 |
| 4 | ભાગમાં આવેલા ગુજરાત રાજ્યના | 17,656 |
| 5 | લોકોનો મુખ્ય વ્યવસાય ખેતી | 16,402 |

5-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | ભારત દેશના પશ્ચિમ ભાગમાં આવેલા | 18,365 |
| 2 | દેશના પશ્ચિમ ભાગમાં આવેલા ગુજરાત | 17,934 |
| 3 | પશ્ચિમ ભાગમાં આવેલા ગુજરાત રાજ્યના | 17,654 |
| 4 | ગામના લોકોનો મુખ્ય વ્યવસાય ખેતી | 16,093 |
| 5 | લોકોનો મુખ્ય વ્યવસાય ખેતી ખેતમજૂરી | 16,009 |

2-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | . _ | 427,913 |
| 2 | , _ | 392,494 |
| 3 | _ આ | 387,226 |
| 4 | માં _ | 379,145 |
| 5 | _ અ | 336,386 |

3-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | _ છે . | 235,479 |
| 2 | છે . _ | 225,048 |
| 3 | _ અ ને | 167,804 |
| 4 | અ ને _ | 165,365 |
| 5 | માં _ આ | 149,526 |

4-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | _ છે . _ | 224,981 |
| 2 | _ અ ને _ | 164,399 |
| 3 | માં _ આ વે | 110,493 |
| 4 | . _ આ _ | 68,884 |
| 5 | વા માં _ આ | 67,045 |

5-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | _ છે . _ આ | 62,651 |
| 2 | છે . _ આ _ | 57,724 |
| 3 | _ આ વે લા _ | 56,800 |
| 4 | માં _ આ વે લા | 53,829 |
| 5 | _ તે મ _ જ | 50,773 |

Key Findings

- Best Perplexity: 2-gram (subword) with 2,297

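- Entropy Trend: Word-level entropy decreases as n grows (13.39 to 11.20 bits), while subword entropy increases (11.17 to 17.39 bits)

- Coverage: Top-1000 coverage ranges from 24.9% (5-gram subword) to 70.1% (5-gram word)

- Recommendation: 4-gram or 5-gram for word-level models, where perplexity is lowest (4,835 and 2,358); for subword models, perplexity is lowest at 2-gram (2,297)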

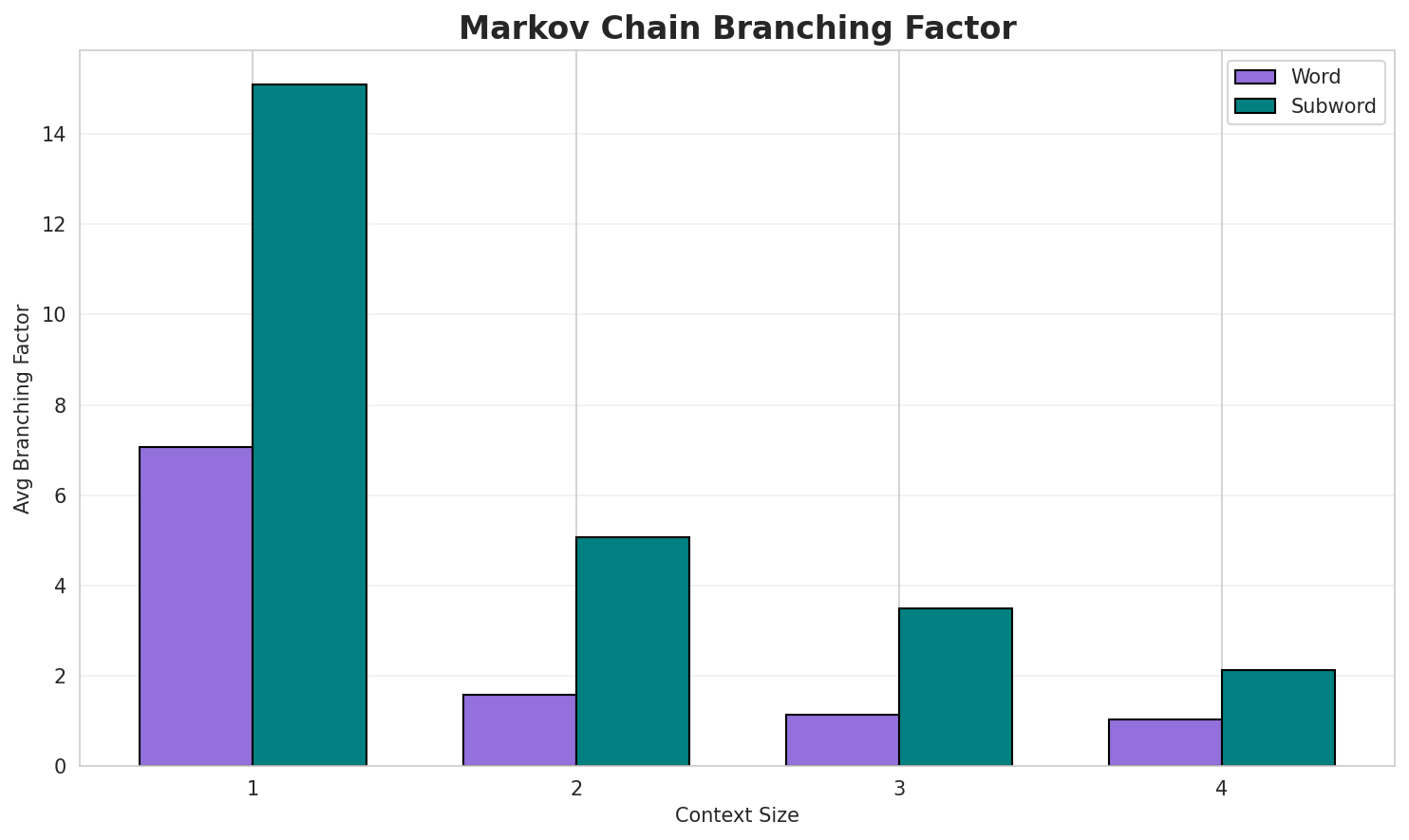

3. Markov Chain Evaluation

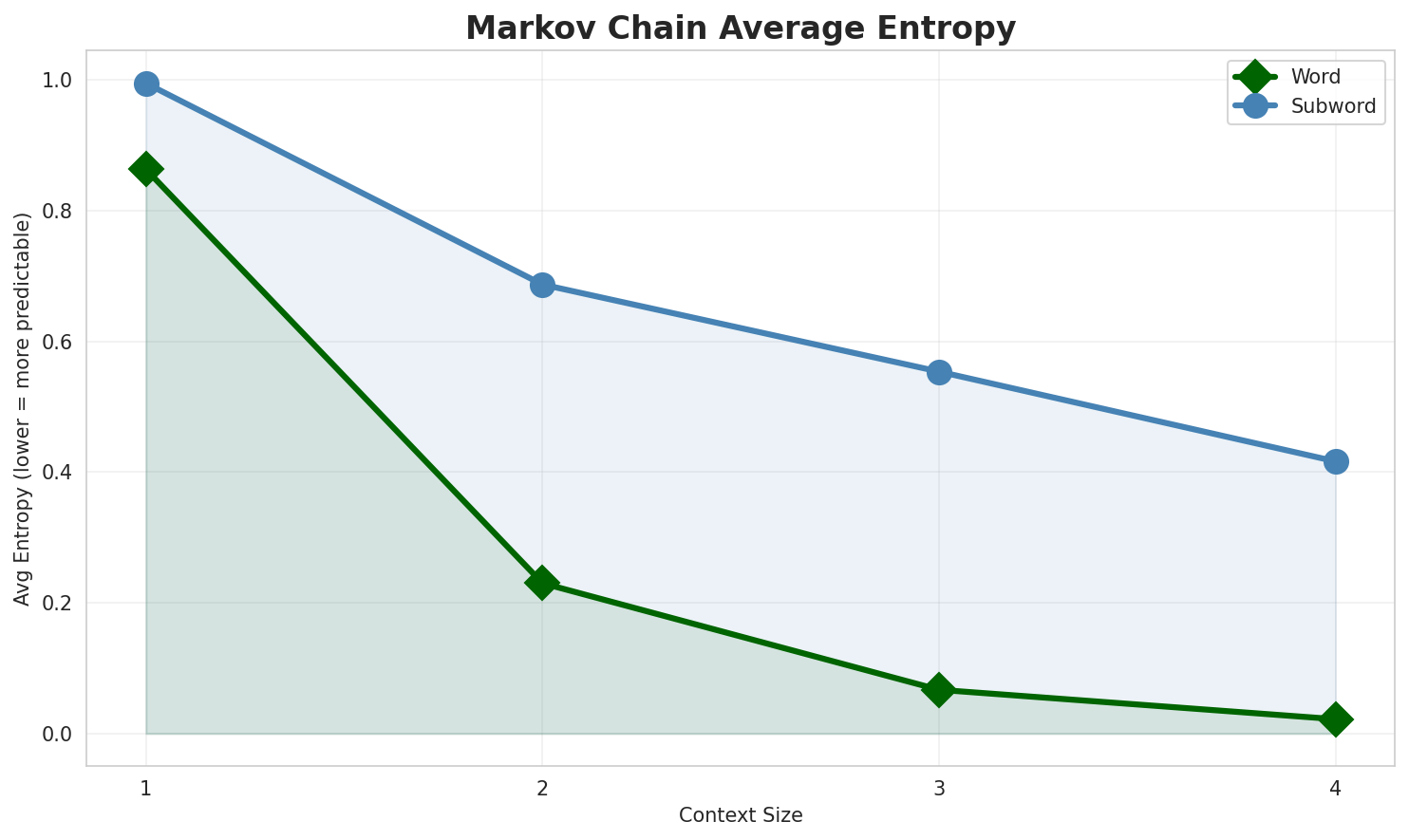

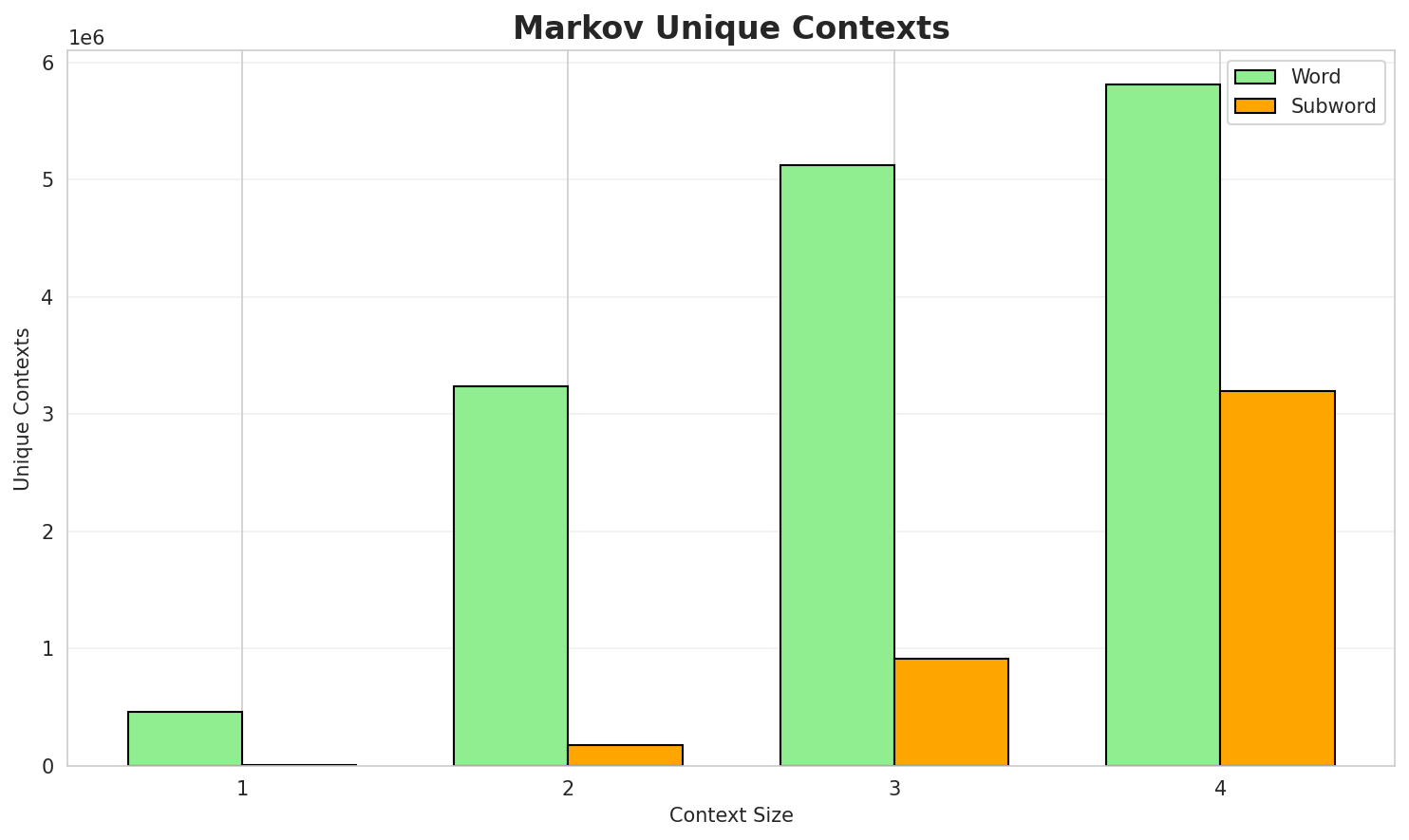

Results

| Context | Variant | Avg Entropy | Perplexity | Branching Factor | Unique Contexts | Predictability |

|---|---|---|---|---|---|---|

| 1 | Word | 0.8634 | 1.819 | 7.06 | 458,692 | 13.7% |

| 1 | Subword | 0.9952 | 1.993 | 15.09 | 11,981 | 0.5% |

| 2 | Word | 0.2300 | 1.173 | 1.59 | 3,234,951 | 77.0% |

| 2 | Subword | 0.6872 | 1.610 | 5.08 | 180,749 | 31.3% |

| 3 | Word | 0.0669 | 1.047 | 1.13 | 5,125,144 | 93.3% |

| 3 | Subword | 0.5539 | 1.468 | 3.49 | 917,629 | 44.6% |

| 4 | Word | 0.0220 🏆 | 1.015 | 1.04 | 5,806,762 | 97.8% |

| 4 | Subword | 0.4165 | 1.335 | 2.13 | 3,199,363 | 58.3% |

Generated Text Samples (Word-based)

Below are text samples generated from each word-based Markov chain model:

Context Size 1:

- છે તે સામેલ કરવાનો છે એઆરડીએ arda ના વેટરર્નસ ઘડાયેલા સ્તંભો વિમલ સંધિવિગ્રહિક દામોદર ઠાકરસી મહિલા

- અને એ ઇ સ્મિથ એંથની જીડેંસ મિશેલ ચાર્લ્સ સ્ટેઇનમેટ્ઝ અને ગાયક તરીકે પસંદ નથી તેમણે સ્ટેલીના

- આ ગામમાં પ્રાથમિક શાળા તેમ જ દૂધની ડેરી જેવી સવલતો પ્રાપ્ય થયેલી છે સંદર્ભ કર્યા છે

Context Size 2:

- છે આ ગામ સ્થાપિત સહયોગ કુષ્ટ યજ્ઞ ટ્રસ્ટ નામની સંસ્થા પણ જીવનસંચારવાદ પણ શીખવે છે તે દરમિયાન

- તેમ જ અન્ય શાકભાજીના પાકની ખેતી કરવામાં આવે છે આ શહેરની અર્થતંત્રએ ભારતમા દસમો ક્રમ ધરાવે છે

- આવે છે જો કે બંગાળીઓ વિશે કેટલીક કાલ્પનિક વાર્તાઓ ડબ્લ્યુડબ્લ્યુઇમાં બિહાઇન્ડ ધ પેઇન્ટેડ સ્માઇલ માં ...

Context Size 3:

- છે આ ગામમાં પ્રાથમિક શાળા આંગણવાડી પંચાયતઘર દૂધની ડેરી વગેરે સવલતો પ્રાપ્ય છે ગામના લોકો વ્યવસાયમાં ...

- કરવામાં આવે છે આ તત્વનું નામ મેન્ડેલિવીયમ ને શુદ્ધ અને ઉપયોગિ રસાયણ શાસ્તની આંતરરાષ્ટ્રીય સંસ્થા દ્વ...

- પશ્ચિમ ભાગમાં આવેલા ગુજરાત રાજ્યના મધ્ય ભાગમાં આવેલા પંચમહાલ જિલ્લામાં આવેલા કુલ ૬ છ તાલુકાઓ પૈકીના ...

Context Size 4:

- ભારત દેશના પશ્ચિમ ભાગમાં આવેલા ગુજરાત રાજ્યમાં આવેલા સૌરાષ્ટ્ર વિસ્તારમાં આવેલા પોરબંદર જિલ્લામાં આવ...

- દેશના પશ્ચિમ ભાગમાં આવેલા ગુજરાત રાજ્યના દક્ષિણ ભાગમાં આવેલા તાપી જિલ્લાના કુલ ૭ સાત તાલુકાઓ પૈકીના ...

- પશ્ચિમ ભાગમાં આવેલા ગુજરાત રાજ્યના ઉત્તર પૂર્વ ભાગમાં આવેલા અરવલ્લી જિલ્લામાં આવેલા કુલ ૬ છ તાલુકાઓ ...

Generated Text Samples (Subword-based)

Below are text samples generated from each subword-based Markov chain model:

Context Size 1:

_મેજયેલી_રોજના_અને_અને_રજકોનો_તા_દરાજ્યની_માટેની_કર_hie_સ્વતંત્ર્ય_તાં_પદ્ધની

Context Size 2:

._૧૯_રોહિતો_અને_ભારત_મા,_entના_પાકની_ઉત્તરાધિક__આર_સૂર્યના_મૃત્યુ_ગાંધીનગર,

Context Size 3:

_છે._આ_ગામમાં_મુખ્યત્વે_આદિવાસીછે._તાલુકો_ખેતી_કરવાની_પણ__અનેક_આવેલા_અભ્યાસ_દ્વારા_પહોં

Context Size 4:

_છે._ગોડ્ડામાં_ગોડ્ડા_જિલ્લામાં_આવેલા__અને_ક્લાસિકલ_માર્ગ_પર_એમિગાઃ_માં_આવે_ત્યારે_બાસાલ્ટિક_કે_દારૂનુ_

Key Findings

- Best Predictability: Context-4 (word) with 97.8% predictability

- Branching Factor: Decreases with context size (more deterministic)

- Memory Trade-off: Larger contexts require more storage (at context 4: 5,806,762 unique word contexts and 3,199,363 unique subword contexts)

- Recommendation: Context-3 or Context-4 for text generation

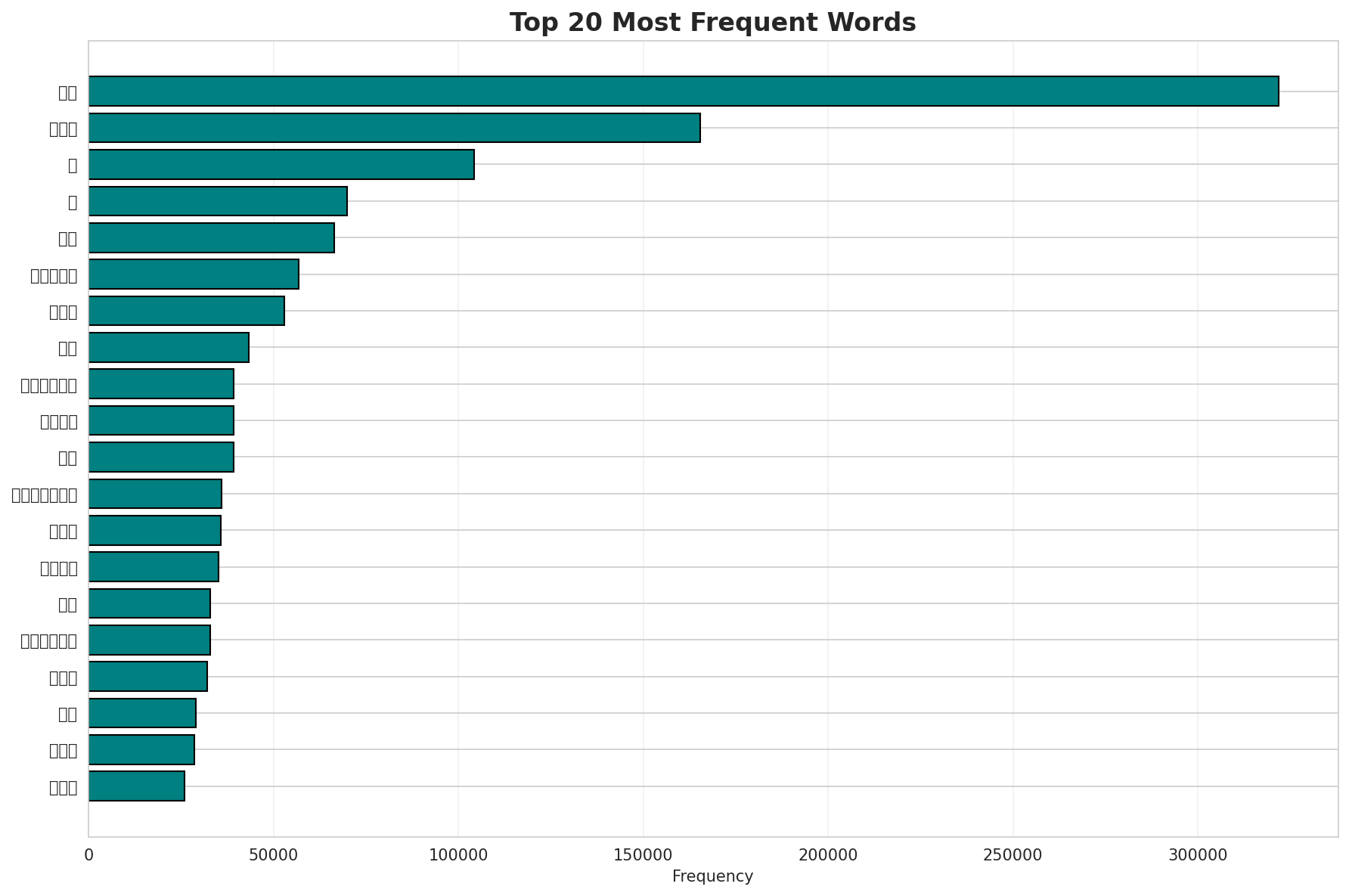

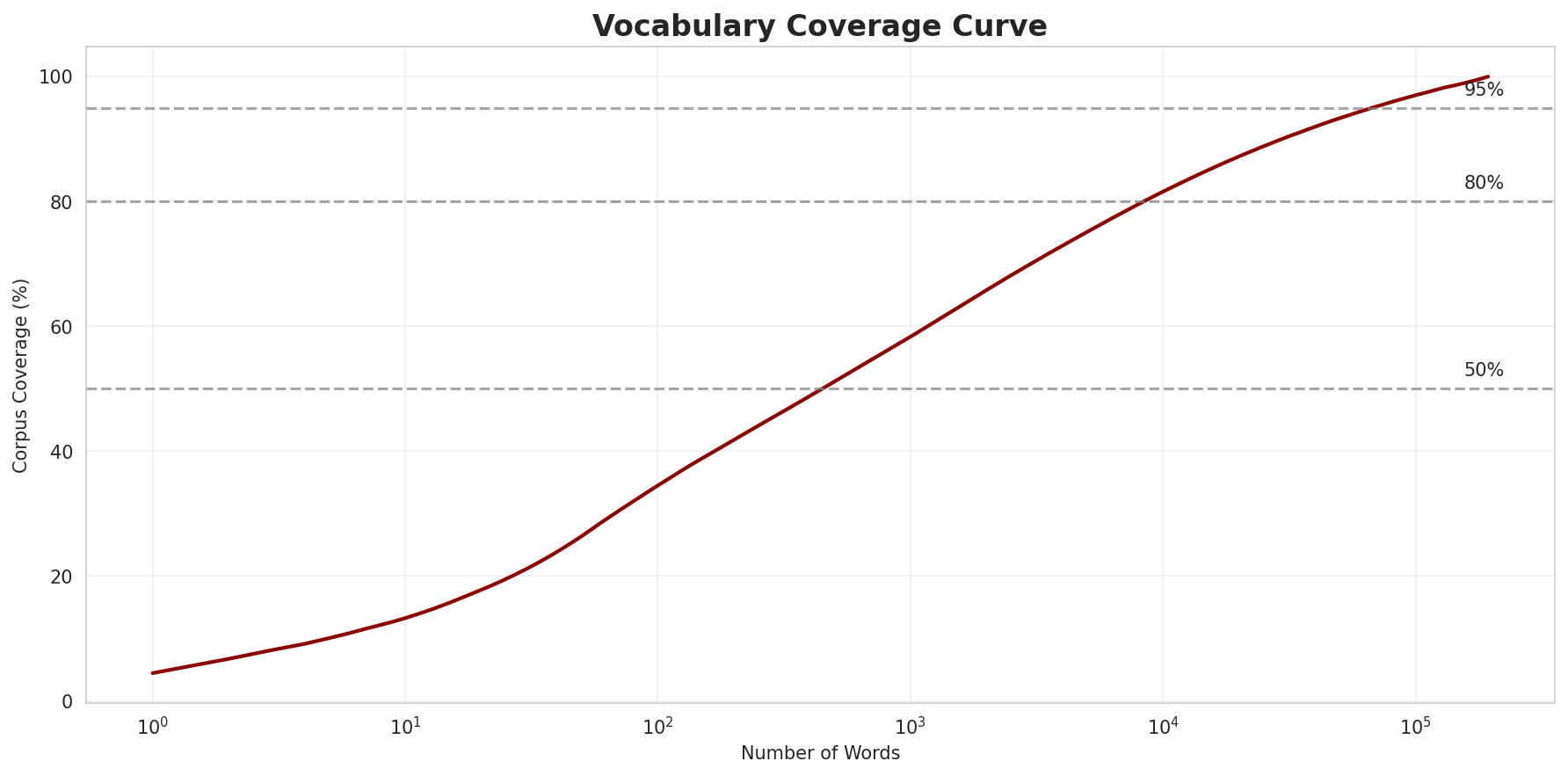

4. Vocabulary Analysis

Statistics

| Metric | Value |

|---|---|

| Vocabulary Size | 193,639 |

| Total Tokens | 7,238,200 |

| Mean Frequency | 37.38 |

| Median Frequency | 4 |

| Frequency Std Dev | 1013.36 |

Most Common Words

| Rank | Word | Frequency |

|---|---|---|

| 1 | છે | 321,831 |

| 2 | અને | 165,458 |

| 3 | આ | 104,369 |

| 4 | જ | 69,831 |

| 5 | એક | 66,411 |

| 6 | આવેલા | 56,885 |

| 7 | તેમ | 53,009 |

| 8 | કે | 43,256 |

| 9 | ગામમાં | 39,326 |

| 10 | માટે | 39,310 |

Least Common Words (from vocabulary)

| Rank | Word | Frequency |

|---|---|---|

| 1 | લંપુરને | 2 |

| 2 | શ્રુહાદ | 2 |

| 3 | ફાર્મહાઉસમાં | 2 |

| 4 | જંબુદ્દીવપણ્ણત્તિ | 2 |

| 5 | જંબુસામિચરિઉ | 2 |

| 6 | સુધર્મસ્વામી | 2 |

| 7 | જંબુને | 2 |

| 8 | વૈરાગ્યવિરોધી | 2 |

| 9 | જંબુની | 2 |

| 10 | સુધર્માસ્વામીએ | 2 |

Zipf's Law Analysis

| Metric | Value |

|---|---|

| Zipf Coefficient | 1.0403 |

| R² (Goodness of Fit) | 0.997228 |

| Adherence Quality | excellent |

Coverage Analysis

| Top N Words | Coverage |

|---|---|

| Top 100 | 34.5% |

| Top 1,000 | 58.3% |

| Top 5,000 | 75.1% |

| Top 10,000 | 81.6% |

Key Findings

- Zipf Compliance: R²=0.9972 indicates excellent adherence to Zipf's law

- High Frequency Dominance: Top 100 words cover 34.5% of corpus

- Long Tail: 183,639 words needed for remaining 18.4% coverage

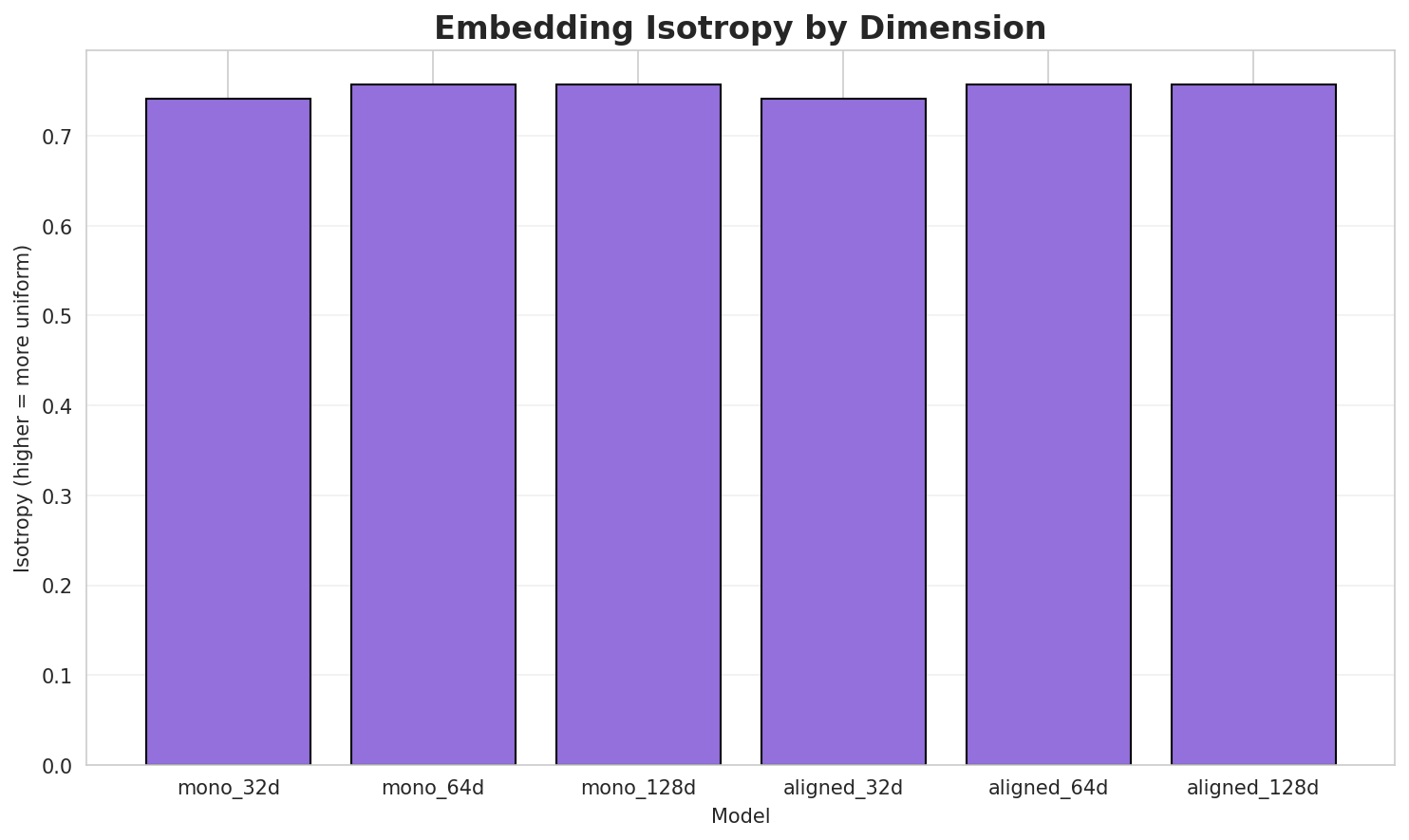

5. Word Embeddings Evaluation

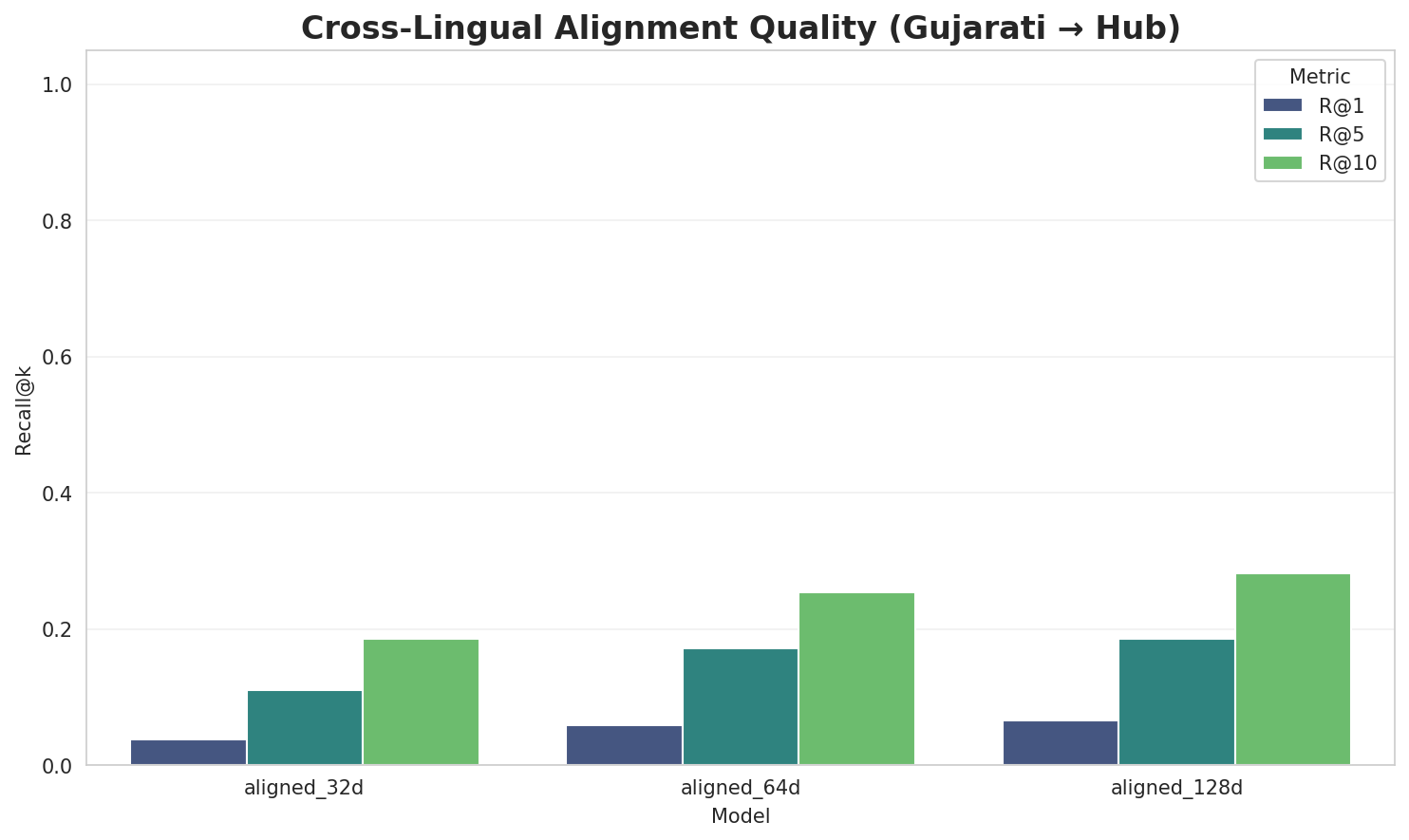

5.1 Cross-Lingual Alignment

5.2 Model Comparison

| Model | Dimension | Isotropy | Semantic Density | Alignment R@1 | Alignment R@10 |

|---|---|---|---|---|---|

| mono_32d | 32 | 0.7412 | 0.3587 | N/A | N/A |

| mono_64d | 64 | 0.7575 | 0.2728 | N/A | N/A |

| mono_128d | 128 | 0.7575 🏆 | 0.2077 | N/A | N/A |

| aligned_32d | 32 | 0.7412 | 0.3577 | 0.0380 | 0.1860 |

| aligned_64d | 64 | 0.7575 | 0.2690 | 0.0580 | 0.2540 |

| aligned_128d | 128 | 0.7575 | 0.2042 | 0.0660 | 0.2820 |

Key Findings

- Best Isotropy: the 64d and 128d models tie at 0.7575, ahead of 32d at 0.7412 (higher means a more uniform distribution)

- Semantic Density: Average pairwise similarity of 0.2783. Lower values indicate better semantic separation.

- Alignment Quality: Aligned models achieve up to 6.6% R@1 in cross-lingual retrieval.

- Recommendation: 128d aligned for best cross-lingual performance
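The R@1 and R@10 columns are recall-at-k scores for cross-lingual retrieval. The following is a minimal sketch of how such a score can be computed, assuming cosine-similarity nearest-neighbour search against a gold translation dictionary; the function names, the toy vectors, and the English target vocabulary are illustrative assumptions, not the pipeline's actual evaluation code or data.

```python
import math

def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0

def recall_at_k(source_vecs, target_vecs, gold, k):
    """Fraction of source words whose gold translation is among the k nearest targets."""
    hits = 0
    for src_word, tgt_word in gold.items():
        query = source_vecs[src_word]
        ranked = sorted(
            target_vecs,
            key=lambda w: cosine(query, target_vecs[w]),
            reverse=True,
        )
        if tgt_word in ranked[:k]:
            hits += 1
    return hits / len(gold)

# Toy 2-d vectors (hypothetical values chosen for illustration only).
source = {"પાણી": [1.0, 0.1], "ઘર": [0.1, 1.0]}
target = {"water": [0.9, 0.2], "house": [0.8, 0.3], "tree": [0.0, 1.0]}
gold = {"પાણી": "water", "ઘર": "house"}

r_at_1 = recall_at_k(source, target, gold, k=1)
r_at_2 = recall_at_k(source, target, gold, k=2)
print(r_at_1, r_at_2)
```

In this toy setup only one of the two source words retrieves its translation at rank 1, while both do within the top 2, which mirrors how R@10 in the table is always at least as high as R@1.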

6. Morphological Analysis (Experimental)

This section presents an automated morphological analysis derived from the statistical divergence between word-level and subword-level models. By analyzing where subword predictability spikes and where word-level coverage fails, we can infer linguistic structures without supervised data.

6.1 Productivity & Complexity

| Metric | Value | Interpretation | Recommendation |

|---|---|---|---|

| Productivity Index | 3.181 | High morphological productivity | Reliable analysis |

| Idiomaticity Gap | 1.816 | High formulaic/idiomatic content | - |

6.2 Affix Inventory (Productive Units)

These are the most productive prefixes and suffixes identified by sampling the vocabulary for global substitutability patterns. A unit is considered an affix if stripping it leaves a valid stem that appears in other contexts.
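The stripping criterion can be illustrated with a small sketch. It simplifies "a valid stem that appears in other contexts" to "the stem is itself a vocabulary word", which is an assumption made here for illustration; the function name and the `max_len` parameter are hypothetical and do not describe the pipeline's implementation.

```python
from collections import Counter

def suffix_productivity(vocab, max_len=2):
    """For each candidate suffix, count the words whose remaining stem is also in the vocabulary."""
    vocab = set(vocab)
    counts = Counter()
    for word in vocab:
        for n in range(1, max_len + 1):
            if len(word) > n:
                stem, suffix = word[:-n], word[-n:]
                # Simplified validity check: the stem must be a known word.
                if stem in vocab:
                    counts[suffix] += 1
    return counts

# Latin-script words taken from the segmentation examples in this report.
words = ["festival", "festivals", "citation", "citations",
         "convention", "conventional", "nation"]
counts = suffix_productivity(words)
print(counts.most_common())
```

Note that Python slices strings by code point, so on Gujarati text this sketch could separate a consonant from its dependent vowel sign; a grapheme-aware segmentation step would be needed before applying it to the Gujarati vocabulary.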

Productive Prefixes

| Prefix | Examples |
|---|---|
| -સ | સંસ્કૃતભાષામાં, સામાન્યત, સ્થપાતી |
| -ક | કોબોલ્ડ, કાલક્રમાનુસાર, કાળજીપુર્વક |
| -મ | મિંઢા, મહાવીરને, માયથિકલ |
| -પ | પોપચા, પાડવા, પંચગવ્ય |
| -બ | બૉલની, બલાઢા, બિન્કસ |
| -વ | વાસ્તે, વાલીના, વિદેશોની |
| -ર | રેટની, રાસમસ, રેતીયાની |
| -અ | અવસ્થાના, અપ્રમાણિકતાનો, અતુલનીય |

Productive Suffixes

| Suffix | Examples |
|---|---|
| -ર | કાલક્રમાનુસાર, ઈન્કાર, છાપર |
| -ન | નાસારિસ્તાન, ફિઝિશ્યન, અંકન |
| -સ | બિન્કસ, રાસમસ, ન્યૂટ્સ |
| -ક | આસ્ક, કાળજીપુર્વક, ટેલિફોનિક |
| -લ | માયથિકલ, ખારોલ, લીવરપૂલ |
| -ટ | ઓરિએન્ટ, બેરોનેટ, કન્ફ્લિક્ટ |
| -એ | શાલ્વએ, વેબએ, ટેસ્લાએ |
| -s | guianensis, libraries, rhodes |

6.3 Bound Stems (Lexical Roots)

Bound stems are high-frequency subword units that are semantically cohesive but rarely appear as standalone words. These often correspond to the 'core' of a word that requires inflection or derivation to be valid.

| Stem | Cohesion | Substitutability | Examples |
|---|---|---|---|
| tion | 3.61x | 29 contexts | motion, nation, notion |
| atio | 3.66x | 25 contexts | ratio, nation, station |
| indi | 3.58x | 24 contexts | india, hindi, indic |
| વનગર | 3.22x | 14 contexts | ઇવનગર, ધુવનગર, ભાવનગર |
| નવલક | 3.19x | 5 contexts | નવલકથા, નવલકથાએ, નવલકથાઓ |

6.4 Affix Compatibility (Co-occurrence)

This table shows which prefixes and suffixes most frequently co-occur on the same stems, revealing the 'stacking' rules of the language's morphology.

| Prefix | Suffix | Frequency | Examples |
|---|---|---|---|
| -ક | -ન | 26 words | કુલન, ક્રુશિયન |
| -સ | -સ | 25 words | સ્પીકર્સ, સેટેલાઇટ્સ |
| -ક | -સ | 22 words | કનેક્શન્સ, કાલપેર્સ |
| -ક | -ર | 22 words | કેલનર, કૃષ્ણકુમાર |
| -સ | -ક | 18 words | સબસોનિક, સ્ટ્રેટેજીક |
| -સ | -ર | 17 words | સુમેર, સ્ટ્રાઈકર |
| -પ | -ર | 17 words | પાઉડર, પીયર |
| -સ | -ન | 17 words | સફરજન, સવેન |
| -મ | -ન | 16 words | માર્જીન, મિત્રસેન |
| -પ | -ન | 16 words | પરાધીન, પ્રણોદન |

6.5 Recursive Morpheme Segmentation

Using Recursive Hierarchical Substitutability, we decompose complex words into their constituent morphemes. This approach handles nested affixes (e.g., prefix-prefix-root-suffix).

| Word | Suggested Split | Confidence | Stem |
|---|---|---|---|
| conventional | convention-al | 4.5 | convention |
| કસોટીઓમાં | ક-સ-ોટીઓમાં | 4.5 | ોટીઓમાં |
| એનડબલ્યુએ | એનડબલ્યુ-એ | 4.5 | એનડબલ્યુ |
| ઇન્સ્ટ્રુમેન્ટલ | ઇન્સ્ટ્રુમેન્ટ-લ | 4.5 | ઇન્સ્ટ્રુમેન્ટ |
| અવિશ્વાસની | અ-વિશ્વાસની | 4.5 | વિશ્વાસની |
| હેલેનીકોન | હેલેનીકો-ન | 4.5 | હેલેનીકો |
| manifestations | manifestation-s | 4.5 | manifestation |
| ટેકનોલોજીએ | ટેકનોલોજી-એ | 4.5 | ટેકનોલોજી |
| festivals | festival-s | 4.5 | festival |
| citations | citation-s | 4.5 | citation |
| લક્ષણોમાં | લ-ક્ષણોમાં | 4.5 | ક્ષણોમાં |
| પહોંચાડવામાં | પ-હ-ોંચાડવામાં | 4.5 | ોંચાડવામાં |
| બિનસહસંયોજક | બ-િનસહસંયોજ-ક | 3.0 | િનસહસંયોજ |
| રુખમાબાઈને | ર-ુખમાબાઈને | 1.5 | ુખમાબાઈને |
| ભવિષ્યકથન | ભવિષ્યકથ-ન | 1.5 | ભવિષ્યકથ |

6.6 Linguistic Interpretation

Automated Insight: Gujarati shows high morphological productivity. The subword models are significantly more efficient than the word models, suggesting a rich system of affixation or compounding.

Note on Idiomaticity: The high Idiomaticity Gap suggests a large number of frequent multi-word expressions or formulaic sequences that are statistically distinct from their component parts.

7. Summary & Recommendations

Production Recommendations

| Component | Recommended | Rationale |

|---|---|---|

| Tokenizer | 64k BPE | Best compression (4.35x) |

| N-gram | 2-gram (subword) | Lowest perplexity (2,297) |

| Markov | Context-4 | Highest predictability (97.8%) |

| Embeddings | 128d (aligned) | Best cross-lingual retrieval (6.6% R@1) with the highest isotropy (0.7575) |

Appendix: Metrics Glossary & Interpretation Guide

This section provides definitions, intuitions, and guidance for interpreting the metrics used throughout this report.

Tokenizer Metrics

Compression Ratio

Definition: The ratio of characters to tokens (chars/token). Measures how efficiently the tokenizer represents text.

Intuition: Higher compression means fewer tokens needed to represent the same text, reducing sequence lengths for downstream models. A 3x compression means ~3 characters per token on average.

What to seek: Higher is generally better for efficiency, but extremely high compression may indicate overly aggressive merging that loses morphological information.

Average Token Length (Fertility)

Definition: Mean number of characters per token produced by the tokenizer.

Intuition: Reflects the granularity of tokenization. Longer tokens capture more context but may struggle with rare words; shorter tokens are more flexible but increase sequence length.

What to seek: Balance between 2-5 characters for most languages. Arabic/morphologically-rich languages may benefit from slightly longer tokens.

Unknown Token Rate (OOV Rate)

Definition: Percentage of tokens that map to the unknown/UNK token, indicating words the tokenizer cannot represent.

Intuition: Lower OOV means better vocabulary coverage. High OOV indicates the tokenizer encounters many unseen character sequences.

What to seek: Below 1% is excellent; below 5% is acceptable. BPE tokenizers typically achieve very low OOV due to subword fallback.
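As a minimal sketch of the two ratio metrics above, the function below computes compression and UNK rate from a text and its token list. It assumes characters are counted as Unicode code points and that the unknown token is spelled `<unk>`; both are assumptions, since the report does not state how the pipeline counts characters or names its UNK token.

```python
def tokenizer_metrics(text, tokens, unk_token="<unk>"):
    """Compression (chars per token) and UNK rate (percent) for one tokenized text."""
    n_tokens = len(tokens)
    n_unk = sum(1 for t in tokens if t == unk_token)
    return {
        "compression": len(text) / n_tokens,   # len() counts Unicode code points
        "unk_rate_pct": 100.0 * n_unk / n_tokens,
    }

# First two words of Sample 3 with the 8k segmentation shown in this report.
text = "જય હિન્દ"
tokens = ["▁જય", "▁હિન્", "દ"]
metrics = tokenizer_metrics(text, tokens)
print(metrics)

# A made-up example containing one unknown token.
with_unk = tokenizer_metrics("abcd", ["ab", "<unk>"])
print(with_unk)
```

Counting code points rather than grapheme clusters matters for Gujarati, where a single visible syllable can span several code points, so absolute compression values depend on which unit is chosen.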

N-gram Model Metrics

Perplexity

Definition: Measures how "surprised" the model is by test data. Mathematically: 2^(cross-entropy). Lower values indicate better prediction.

Intuition: If perplexity is 100, the model is as uncertain as if choosing uniformly among 100 options at each step. A perplexity of 10 means effectively choosing among 10 equally likely options.

What to seek: Lower is better. Perplexity typically decreases with larger n-grams (more context), although the trend can reverse, as it does for the subword models in Section 2. Values vary widely by language and corpus size.

Entropy

Definition: Average information content (in bits) needed to encode the next token given the context. Related to perplexity: perplexity = 2^entropy.

Intuition: High entropy means high uncertainty/randomness; low entropy means predictable patterns. Natural language typically has entropy between 1-4 bits per character.

What to seek: Lower entropy indicates more predictable text patterns. Entropy should decrease as n-gram size increases.
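The relation perplexity = 2^entropy can be checked against the N-gram Results table. The sketch below uses four rows copied from that table; small differences are expected because the entropy column is rounded to two decimals.

```python
# (label, entropy in bits, reported perplexity), copied from the N-gram Results table.
rows = [
    ("2-gram word", 13.39, 10_698),
    ("2-gram subword", 11.17, 2_297),
    ("3-gram word", 12.57, 6_094),
    ("5-gram subword", 17.39, 171_415),
]

def perplexity_from_entropy(bits):
    """Perplexity implied by an entropy value given in bits."""
    return 2.0 ** bits

relative_errors = {}
for label, entropy, reported in rows:
    implied = perplexity_from_entropy(entropy)
    relative_errors[label] = abs(implied - reported) / reported
    print(f"{label}: implied {implied:,.0f} vs reported {reported:,}")
```

Each implied value lands within one percent of the reported perplexity, which is consistent with the two columns being derived from the same cross-entropy.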

Coverage (Top-K)

Definition: Percentage of corpus occurrences explained by the top K most frequent n-grams.

Intuition: High coverage with few patterns indicates repetitive/formulaic text; low coverage suggests diverse vocabulary usage.

What to seek: Depends on use case. For language modeling, moderate coverage (40-60% with top-1000) is typical for natural text.
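Top-K coverage follows directly from n-gram counts. This is a small sketch under the assumption that coverage is the share of all n-gram occurrences taken by the K most frequent n-grams; the helper names and the toy token sequence are illustrative.

```python
from collections import Counter

def ngrams(tokens, n):
    """Yield consecutive n-grams as tuples."""
    return zip(*(tokens[i:] for i in range(n)))

def top_k_coverage(tokens, n, k):
    """Share of n-gram occurrences accounted for by the k most frequent n-grams."""
    counts = Counter(ngrams(tokens, n))
    total = sum(counts.values())
    if total == 0:
        return 0.0
    covered = sum(count for _, count in counts.most_common(k))
    return covered / total

tokens = "a b a b a c".split()
cov_top1 = top_k_coverage(tokens, n=2, k=1)
cov_top2 = top_k_coverage(tokens, n=2, k=2)
print(cov_top1, cov_top2)
```

The toy sequence has five bigram occurrences, two of which are the most frequent bigram, so the top-1 coverage is 0.4 and the top-2 coverage is 0.8.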

Markov Chain Metrics

Average Entropy

Definition: Mean entropy across all contexts, measuring average uncertainty in next-word prediction.

Intuition: Lower entropy means the model is more confident about what comes next. Context-1 has high entropy (many possible next words); Context-4 has low entropy (few likely continuations).

What to seek: Decreasing entropy with larger context sizes. Very low entropy (<0.1) indicates highly deterministic transitions.

Branching Factor

Definition: Average number of unique next tokens observed for each context.

Intuition: High branching = many possible continuations (flexible but uncertain); low branching = few options (predictable but potentially repetitive).

What to seek: Branching factor should decrease with context size. Values near 1.0 indicate nearly deterministic chains.
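A minimal sketch of a Markov chain with its branching factor and average entropy is shown below. It assumes an unweighted mean over contexts and raw Shannon entropy in bits; whether the pipeline weights contexts by frequency or normalizes the entropy is not stated in this report, so treat those choices as assumptions.

```python
import math
from collections import Counter, defaultdict

def build_chain(tokens, order):
    """Map each context (tuple of `order` tokens) to a Counter of next tokens."""
    chain = defaultdict(Counter)
    for i in range(len(tokens) - order):
        context = tuple(tokens[i:i + order])
        chain[context][tokens[i + order]] += 1
    return chain

def branching_factor(chain):
    """Average number of distinct next tokens per context."""
    return sum(len(nexts) for nexts in chain.values()) / len(chain)

def average_entropy(chain):
    """Unweighted mean over contexts of the next-token entropy in bits."""
    total = 0.0
    for nexts in chain.values():
        n = sum(nexts.values())
        total += -sum((c / n) * math.log2(c / n) for c in nexts.values())
    return total / len(chain)

tokens = "a b a c a b".split()
chain1 = build_chain(tokens, order=1)
chain2 = build_chain(tokens, order=2)
print(branching_factor(chain1), average_entropy(chain1))
print(branching_factor(chain2), average_entropy(chain2))
```

Even on this toy sequence the order-2 chain is fully deterministic (branching factor 1.0, entropy 0), while the order-1 chain is not, which is the same direction of change the Results table shows as context size grows.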

Predictability

Definition: Derived metric: (1 - normalized_entropy) × 100%. Indicates how deterministic the model's predictions are.

Intuition: 100% predictability means the next word is always certain; 0% means completely random. Real text falls between these extremes.

What to seek: Higher predictability for text generation quality, but too high (>98%) may produce repetitive output.
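The formula can be checked against the Markov Results table. The sketch assumes that the table's Avg Entropy column already holds the normalized entropy, an assumption the numbers are consistent with: applying (1 - entropy) × 100 to each row reproduces the Predictability column to within rounding.

```python
# (context, variant, avg entropy, reported predictability %), copied from the Markov Results table.
rows = [
    (1, "Word", 0.8634, 13.7),
    (1, "Subword", 0.9952, 0.5),
    (2, "Word", 0.2300, 77.0),
    (2, "Subword", 0.6872, 31.3),
    (3, "Word", 0.0669, 93.3),
    (3, "Subword", 0.5539, 44.6),
    (4, "Word", 0.0220, 97.8),
    (4, "Subword", 0.4165, 58.3),
]

def predictability(normalized_entropy):
    """Predictability in percent, as defined in the glossary."""
    return (1.0 - normalized_entropy) * 100.0

max_gap = 0.0
for context, variant, entropy, reported in rows:
    computed = predictability(entropy)
    max_gap = max(max_gap, abs(computed - reported))
    print(f"context {context} ({variant}): computed {computed:.2f}%, reported {reported}%")
```

The largest difference between computed and reported values is about 0.05 percentage points, which is what rounding of the published figures would produce.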

Vocabulary & Zipf's Law Metrics

Zipf's Coefficient

Definition: The slope of the log-log plot of word frequency vs. rank. Zipf's law predicts a slope of approximately -1; Section 4 reports the coefficient as a positive value (1.0403).

Intuition: A coefficient near -1 indicates the corpus follows natural language patterns where a few words are very common and most words are rare.

What to seek: Values between -0.8 and -1.2 indicate healthy natural language distribution. Deviations may suggest domain-specific or artificial text.
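The slope and R² can be estimated with an ordinary least-squares fit of log frequency against log rank. The sketch below uses only the standard library and a synthetic, perfectly Zipfian frequency list; the function name is illustrative, and the pipeline's actual fitting procedure (for example, any rank cutoffs) is not described in this report.

```python
import math

def zipf_fit(frequencies):
    """Least-squares slope and R^2 of log(frequency) against log(rank)."""
    freqs = sorted(frequencies, reverse=True)
    xs = [math.log(rank) for rank in range(1, len(freqs) + 1)]
    ys = [math.log(f) for f in freqs]
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    r_squared = (sxy * sxy) / (sxx * syy)
    return slope, r_squared

# Synthetic frequencies that follow f(rank) = 1000 / rank exactly.
ideal = [1000 / rank for rank in range(1, 201)]
slope, r_squared = zipf_fit(ideal)
print(slope, r_squared)
```

For the synthetic list the fit returns a slope of -1 and an R² of 1 up to floating-point error. Section 4 lists the coefficient as 1.0403, presumably the magnitude of this slope.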

R² (Coefficient of Determination)

Definition: Measures how well the linear fit explains the frequency-rank relationship. Ranges from 0 to 1.

Intuition: R² near 1.0 means the data closely follows Zipf's law; lower values indicate deviation from expected word frequency patterns.

What to seek: R² > 0.95 is excellent; > 0.99 indicates near-perfect Zipf adherence typical of large natural corpora.

Vocabulary Coverage

Definition: Cumulative percentage of corpus tokens accounted for by the top N words.

Intuition: Shows how concentrated word usage is. If top-100 words cover 50% of text, the corpus relies heavily on common words.

What to seek: Top-100 covering 30-50% is typical. Higher coverage indicates more repetitive text; lower suggests richer vocabulary.

Word Embedding Metrics

Isotropy

Definition: Measures how uniformly distributed vectors are in the embedding space. Computed as the ratio of minimum to maximum singular values.

Intuition: High isotropy (near 1.0) means vectors spread evenly in all directions; low isotropy means vectors cluster in certain directions, reducing expressiveness.

What to seek: Higher isotropy generally indicates better-quality embeddings. Values > 0.1 are reasonable; > 0.3 is good. Lower-dimensional embeddings tend to have higher isotropy.

Average Norm

Definition: Mean magnitude (L2 norm) of word vectors in the embedding space.

Intuition: Indicates the typical "length" of vectors. Consistent norms suggest stable training; high variance may indicate some words are undertrained.

What to seek: Relatively consistent norms across models. The absolute value matters less than consistency (low std deviation).

Cosine Similarity

Definition: Measures angular similarity between vectors, ranging from -1 (opposite) to 1 (identical direction).

Intuition: Words with similar meanings should have high cosine similarity. This is the standard metric for semantic relatedness in embeddings.

What to seek: Semantically related words should score > 0.5; unrelated words should be near 0. Synonyms often score > 0.7.

t-SNE Visualization

Definition: t-Distributed Stochastic Neighbor Embedding - a dimensionality reduction technique that preserves local structure for visualization.

Intuition: Clusters in t-SNE plots indicate groups of semantically related words. Spread indicates vocabulary diversity; tight clusters suggest semantic coherence.

What to seek: Meaningful clusters (e.g., numbers together, verbs together). Avoid over-interpreting distances - t-SNE preserves local, not global, structure.

General Interpretation Guidelines

- Compare within model families: Metrics are most meaningful when comparing models of the same type (e.g., 8k vs 64k tokenizer).

- Consider trade-offs: Better performance on one metric often comes at the cost of another (e.g., compression vs. OOV rate).

- Context matters: Optimal values depend on downstream tasks. Text generation may prioritize different metrics than classification.

- Corpus influence: All metrics are influenced by corpus characteristics. Wikipedia text differs from social media or literature.

- Language-specific patterns: Morphologically rich languages (like Arabic) may show different optimal ranges than analytic languages.

Visualizations Index

| Visualization | Description |

|---|---|

| Tokenizer Compression | Compression ratios by vocabulary size |

| Tokenizer Fertility | Average token length by vocabulary |

| Tokenizer OOV | Unknown token rates |

| Tokenizer Total Tokens | Total tokens by vocabulary |

| N-gram Perplexity | Perplexity by n-gram size |

| N-gram Entropy | Entropy by n-gram size |

| N-gram Coverage | Top pattern coverage |

| N-gram Unique | Unique n-gram counts |

| Markov Entropy | Entropy by context size |

| Markov Branching | Branching factor by context |

| Markov Contexts | Unique context counts |

| Zipf's Law | Frequency-rank distribution with fit |

| Vocab Frequency | Word frequency distribution |

| Top 20 Words | Most frequent words |

| Vocab Coverage | Cumulative coverage curve |

| Embedding Isotropy | Vector space uniformity |

| Embedding Norms | Vector magnitude distribution |

| Embedding Similarity | Word similarity heatmap |

| Nearest Neighbors | Similar words for key terms |

| t-SNE Words | 2D word embedding visualization |

| t-SNE Sentences | 2D sentence embedding visualization |

| Position Encoding | Encoding method comparison |

| Model Sizes | Storage requirements |

| Performance Dashboard | Comprehensive performance overview |

About This Project

Data Source

Models trained on wikipedia-monthly - a monthly snapshot of Wikipedia articles across 300+ languages.

Project

A project by Wikilangs - Open-source NLP models for every Wikipedia language.

Maintainer

Citation

If you use these models in your research, please cite:

@misc{wikilangs2025,

author = {Kamali, Omar},

title = {Wikilangs: Open NLP Models for Wikipedia Languages},

year = {2025},

doi = {10.5281/zenodo.18073153},

publisher = {Zenodo},

url = {https://huggingface.co/wikilangs},

institution = {Omneity Labs}

}

License

MIT License - Free for academic and commercial use.

Links

- 🌐 Website: wikilangs.org

- 🤗 Models: huggingface.co/wikilangs

- 📊 Data: wikipedia-monthly

- 👤 Author: Omar Kamali

- 🤝 Sponsor: Featherless AI

Generated by Wikilangs Models Pipeline

Report Date: 2026-01-10 00:30:07