language: ig

language_name: Igbo

language_family: atlantic_yoruba_igbo

tags:

- wikilangs

- nlp

- tokenizer

- embeddings

- n-gram

- markov

- wikipedia

- feature-extraction

- sentence-similarity

- tokenization

- n-grams

- markov-chain

- text-mining

- fasttext

- babelvec

- vocabulous

- vocabulary

- monolingual

- family-atlantic_yoruba_igbo

license: mit

library_name: wikilangs

pipeline_tag: text-generation

datasets:

- omarkamali/wikipedia-monthly

dataset_info:

name: wikipedia-monthly

description: Monthly snapshots of Wikipedia articles across 300+ languages

metrics:

- name: best_compression_ratio

type: compression

value: 3.745

- name: best_isotropy

type: isotropy

value: 0.8093

- name: vocabulary_size

type: vocab

value: 0

generated: 2026-01-10T00:00:00.000Z

Igbo - Wikilangs Models

Comprehensive Research Report & Full Ablation Study

This repository contains NLP models trained and evaluated by Wikilangs, specifically on Igbo Wikipedia data. We analyze tokenizers, n-gram models, Markov chains, vocabulary statistics, and word embeddings.

📋 Repository Contents

Models & Assets

- Tokenizers (8k, 16k, 32k, 64k)

- N-gram models (2, 3, 4, 5-gram)

- Markov chains (context of 1, 2, 3, 4 and 5)

- Subword N-gram and Markov chains

- Embeddings in various sizes and dimensions (aligned and unaligned)

- Language Vocabulary

- Language Statistics

Analysis and Evaluation

- 1. Tokenizer Evaluation

- 2. N-gram Model Evaluation

- 3. Markov Chain Evaluation

- 4. Vocabulary Analysis

- 5. Word Embeddings Evaluation

- 6. Morphological Analysis (Experimental)

- 7. Summary & Recommendations

- Metrics Glossary

- Visualizations Index

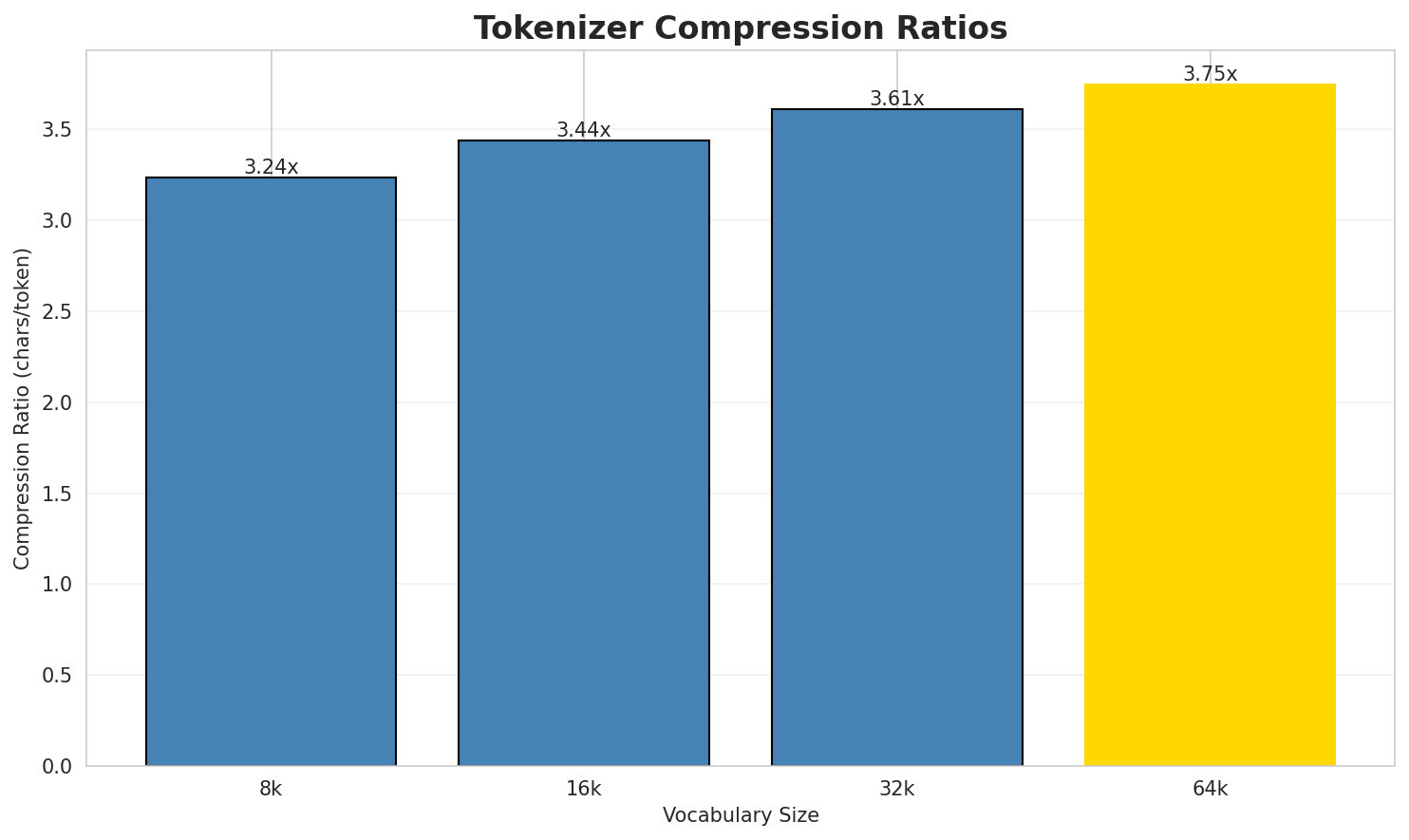

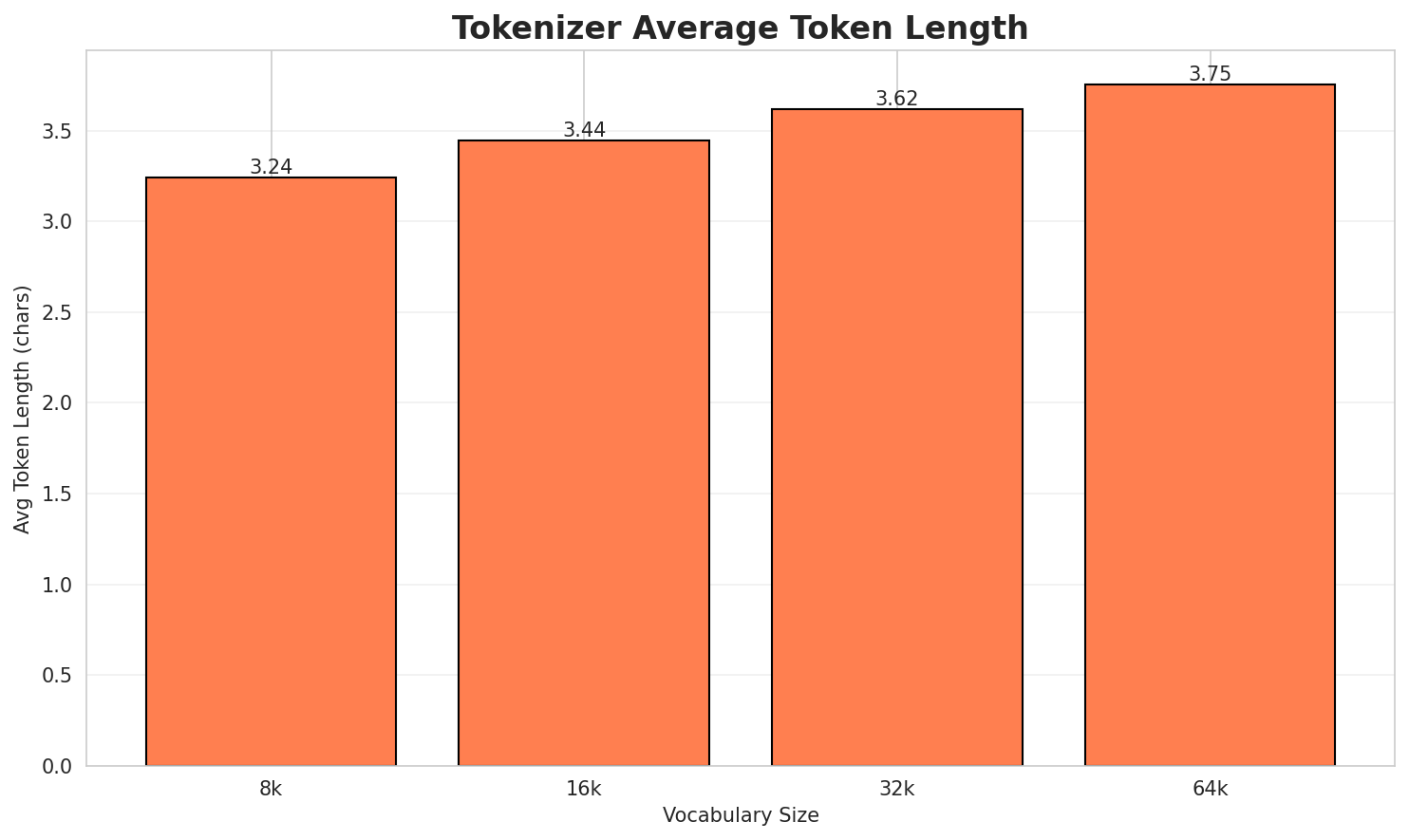

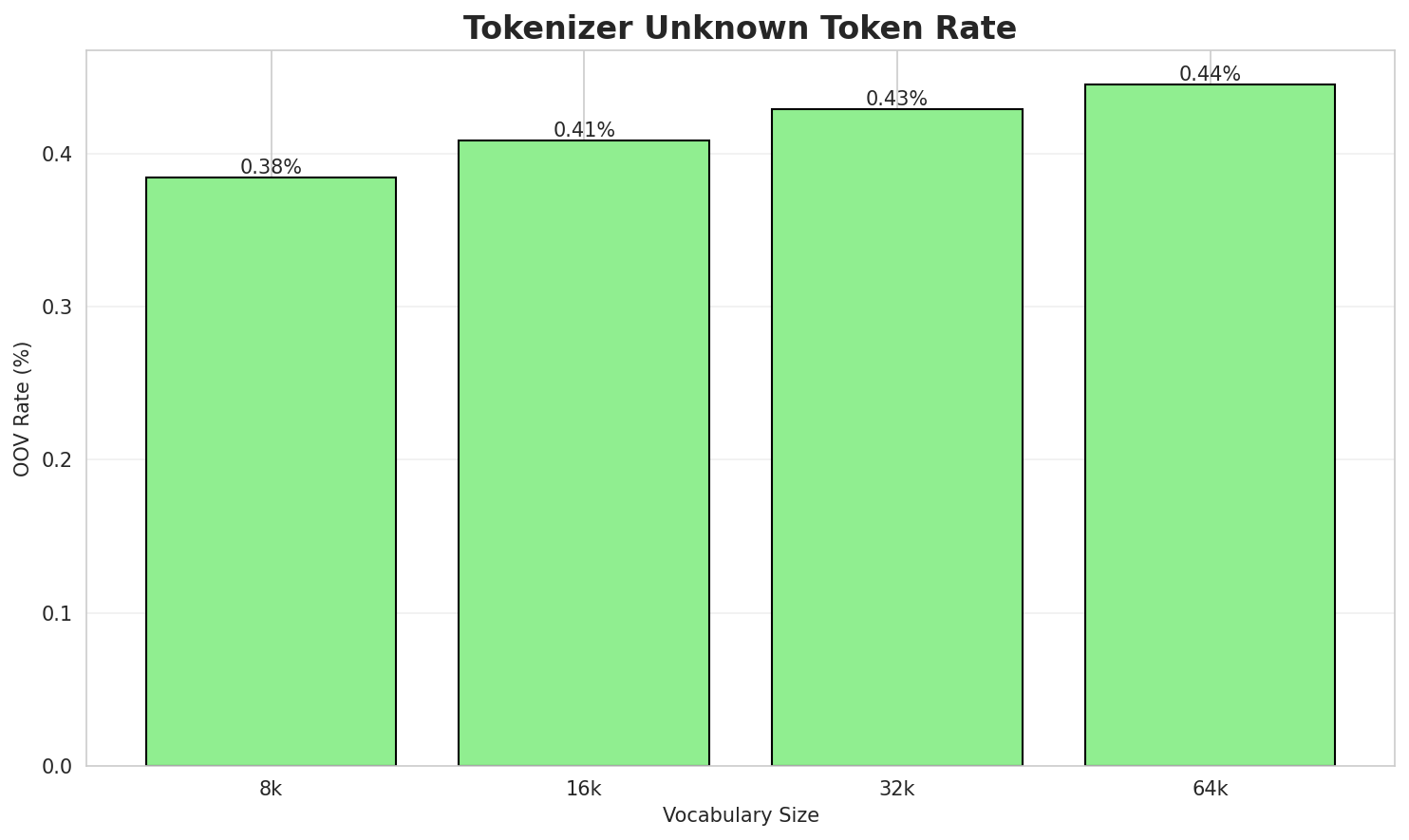

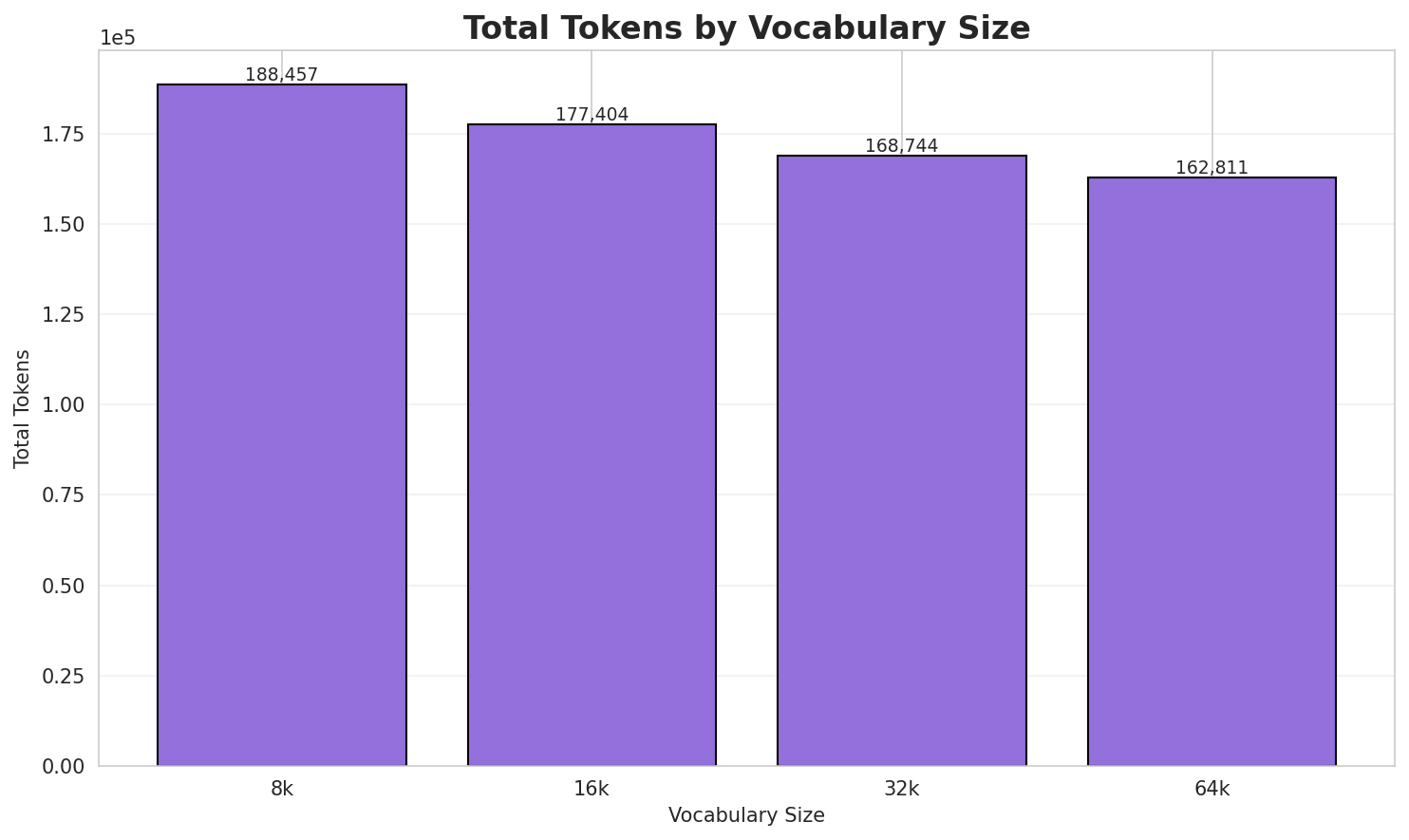

1. Tokenizer Evaluation

Results

| Vocab Size | Compression | Avg Token Len | UNK Rate | Total Tokens |

|---|---|---|---|---|

| 8k | 3.236x | 3.24 | 0.3842% | 188,457 |

| 16k | 3.437x | 3.44 | 0.4081% | 177,404 |

| 32k | 3.614x | 3.62 | 0.4291% | 168,744 |

| 64k | 3.745x 🏆 | 3.75 | 0.4447% | 162,811 |

Tokenization Examples

Below are sample sentences tokenized with each vocabulary size:

Sample 1: Duli bu nwere ike izo aka na: Duli, Ardabil, Iran Duli, Hamadan, Iran Duli, Nepa...

| Vocab | Tokens | Count |
|---|---|---|
| 8k | ▁du li ▁bu ▁nwere ▁ike ▁izo ▁aka ▁na : ▁du ... (+31 more) | 41 |
| 16k | ▁du li ▁bu ▁nwere ▁ike ▁izo ▁aka ▁na : ▁du ... (+31 more) | 41 |
| 32k | ▁du li ▁bu ▁nwere ▁ike ▁izo ▁aka ▁na : ▁du ... (+31 more) | 41 |
| 64k | ▁du li ▁bu ▁nwere ▁ike ▁izo ▁aka ▁na : ▁du ... (+31 more) | 41 |

Sample 2: Purukotó (Purucotó) bụ asụsụ Cariban na-apụ n'anya . Kaufman debere ya na ngalab...

| Vocab | Tokens | Count |
|---|---|---|
| 8k | ▁pu ru ko t ó ▁( pu ru co t ... (+30 more) | 40 |
| 16k | ▁puru kot ó ▁( puru co tó ) ▁bụ ▁asụsụ ... (+24 more) | 34 |
| 32k | ▁puru kot ó ▁( puru co tó ) ▁bụ ▁asụsụ ... (+22 more) | 32 |
| 64k | ▁puru kot ó ▁( puru co tó ) ▁bụ ▁asụsụ ... (+22 more) | 32 |

Sample 3: Manombai (nke a dị ka Wokam) bụ otu n'ime Asụsụ Aru, nke ndị bi na Aru Islands, ...

| Vocab | Tokens | Count |
|---|---|---|
| 8k | ▁man om bai ▁( nke ▁a ▁dị ▁ka ▁wo ka ... (+24 more) | 34 |
| 16k | ▁man om bai ▁( nke ▁a ▁dị ▁ka ▁wo kam ... (+23 more) | 33 |
| 32k | ▁man om bai ▁( nke ▁a ▁dị ▁ka ▁wo kam ... (+23 more) | 33 |
| 64k | ▁man om bai ▁( nke ▁a ▁dị ▁ka ▁wo kam ... (+23 more) | 33 |

Key Findings

- Best Compression: 64k achieves 3.745x compression

- Lowest UNK Rate: 8k with 0.3842% unknown tokens

- Trade-off: Larger vocabularies improve compression but increase model size

- Recommendation: 32k vocabulary provides optimal balance for production use

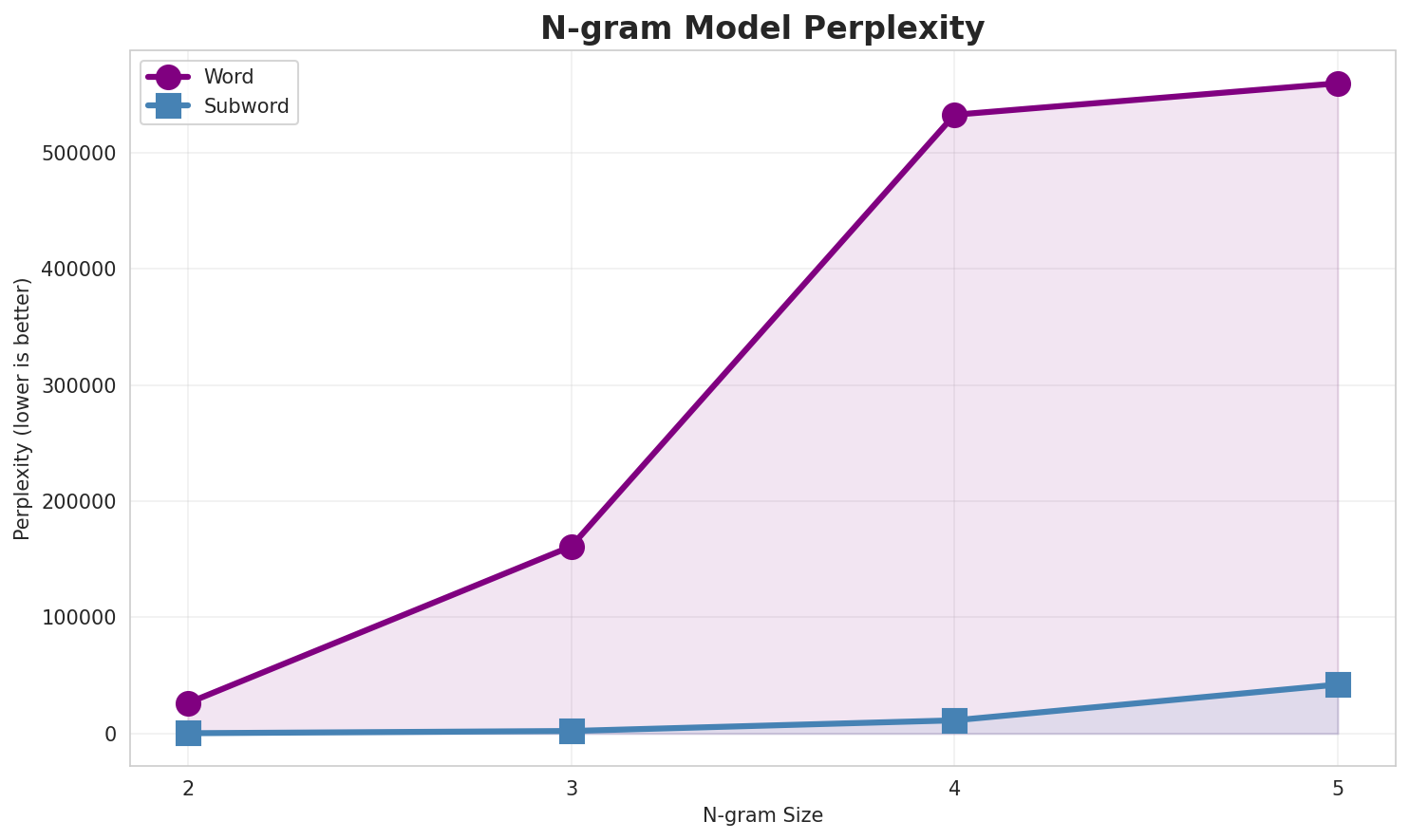

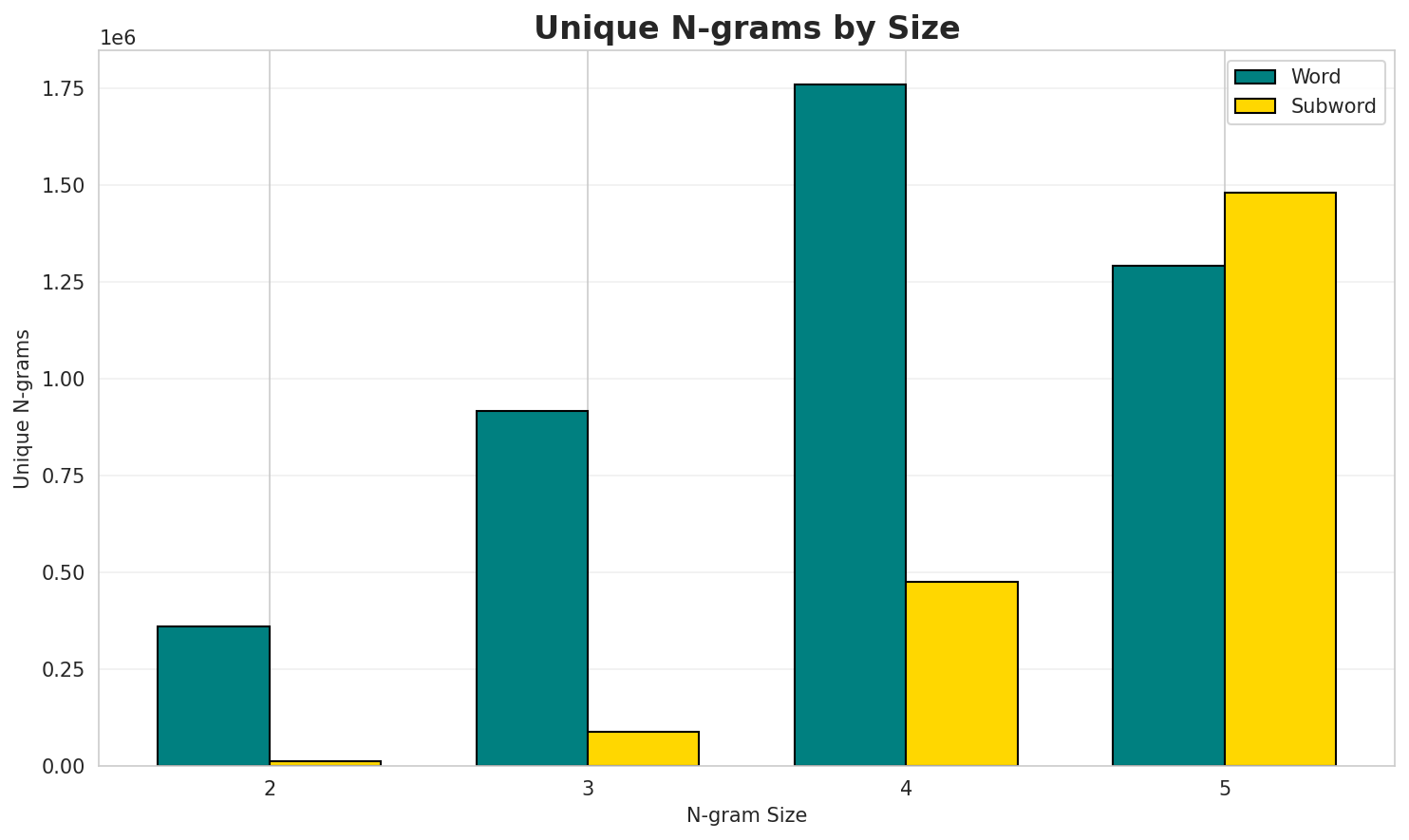

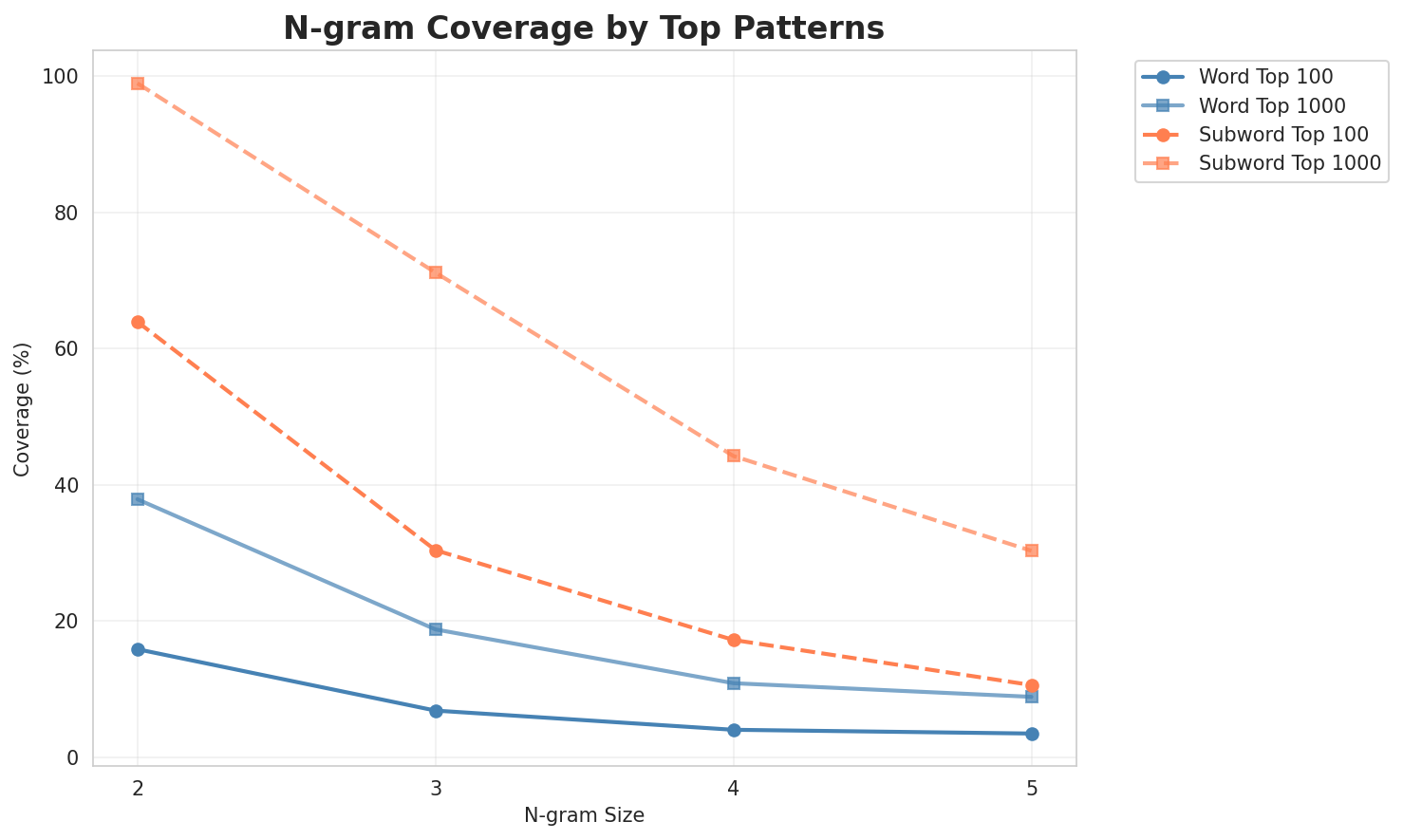

2. N-gram Model Evaluation

Results

| N-gram | Variant | Perplexity | Entropy | Unique N-grams | Top-100 Coverage | Top-1000 Coverage |

|---|---|---|---|---|---|---|

| 2-gram | Word | 26,246 | 14.68 | 359,156 | 15.9% | 37.9% |

| 2-gram | Subword | 280 🏆 | 8.13 | 12,173 | 64.0% | 99.0% |

| 3-gram | Word | 161,068 | 17.30 | 916,288 | 6.8% | 18.8% |

| 3-gram | Subword | 2,183 | 11.09 | 87,468 | 30.4% | 71.2% |

| 4-gram | Word | 532,594 | 19.02 | 1,757,879 | 4.0% | 10.9% |

| 4-gram | Subword | 11,363 | 13.47 | 475,134 | 17.2% | 44.2% |

| 5-gram | Word | 559,672 | 19.09 | 1,291,016 | 3.5% | 8.9% |

| 5-gram | Subword | 42,173 | 15.36 | 1,479,265 | 10.6% | 30.3% |

Top 5 N-grams by Size

2-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | dị ka | 140,163 |
| 2 | a na | 112,277 |
| 3 | ọ bụ | 105,148 |
| 4 | ya na | 99,998 |
| 5 | site na | 75,118 |

3-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | ma ọ bụ | 47,538 |
| 2 | dị ka onye | 33,165 |
| 3 | dị iche iche | 22,236 |
| 4 | ndi di ndụ | 19,640 |
| 5 | na eme ihe | 19,264 |

4-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | mmadụ ndi di ndụ | 17,108 |
| 2 | òtù mmadụ ndi di | 17,101 |
| 3 | na eme ihe nkiri | 13,842 |
| 4 | akụkọ ihe mere eme | 12,735 |
| 5 | dị ka onye na | 9,212 |

5-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | òtù mmadụ ndi di ndụ | 17,099 |
| 2 | onye na eme ihe nkiri | 6,973 |
| 3 | òtù pages with unreviewed translations | 4,329 |
| 4 | e dere n ala ala | 4,004 |
| 5 | ihe e dere n ala | 3,927 |

2-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | _ n | 5,638,183 |
| 2 | a _ | 5,376,024 |
| 3 | e _ | 4,318,368 |
| 4 | n a | 2,708,872 |
| 5 | _ a | 2,215,860 |

3-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | _ n a | 2,367,266 |
| 2 | n a _ | 1,687,800 |
| 3 | a _ n | 1,387,006 |
| 4 | e _ n | 1,187,243 |
| 5 | _ n k | 938,041 |

4-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | _ n a _ | 1,567,660 |
| 2 | _ n k e | 743,366 |
| 3 | n k e _ | 735,578 |
| 4 | _ n a - | 656,811 |
| 5 | a _ n a | 579,489 |

5-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | _ n k e _ | 722,504 |
| 2 | _ n d ị _ | 399,246 |
| 3 | _ i h e _ | 373,739 |
| 4 | _ n a - e | 351,252 |
| 5 | a _ n a _ | 349,914 |

Key Findings

- Best Perplexity: 2-gram (subword) with 280

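- Entropy Trend: Increases with n-gram size in the results above (word: 14.68 to 19.09 bits; subword: 8.13 to 15.36 bits)

- Coverage: Top-1000 coverage ranges from 99.0% (subword 2-gram) down to 8.9% (word 5-gram); subword 5-grams cover ~30% of the corpus

- Recommendation: 4-gram or 5-gram when longer context is needed; note that the 2-gram subword model has the lowest measured perplexity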
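The word and subword counts in this section can be approximated with a plain counter. The following is a minimal sketch, assuming whitespace tokenization for words and character-level units for subwords, with `_` standing in for a space as the subword rows suggest. The function names are illustrative and are not part of the Wikilangs pipeline.

```python
from collections import Counter

def word_ngrams(text, n):
    """Count word-level n-grams using whitespace tokenization (an assumption)."""
    words = text.lower().split()
    return Counter(tuple(words[i:i + n]) for i in range(len(words) - n + 1))

def char_ngrams(text, n):
    """Count character-level n-grams, writing each space as '_'."""
    chars = "_".join(text.lower().split())
    return Counter(chars[i:i + n] for i in range(len(chars) - n + 1))

# Toy input, not taken from the corpus.
sample = "a b a b"
print(word_ngrams(sample, 2).most_common(1))
print(char_ngrams(sample, 2).most_common(2))
```

Ranking the resulting counters with `most_common(5)` gives tables of the same shape as the top-5 lists above.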

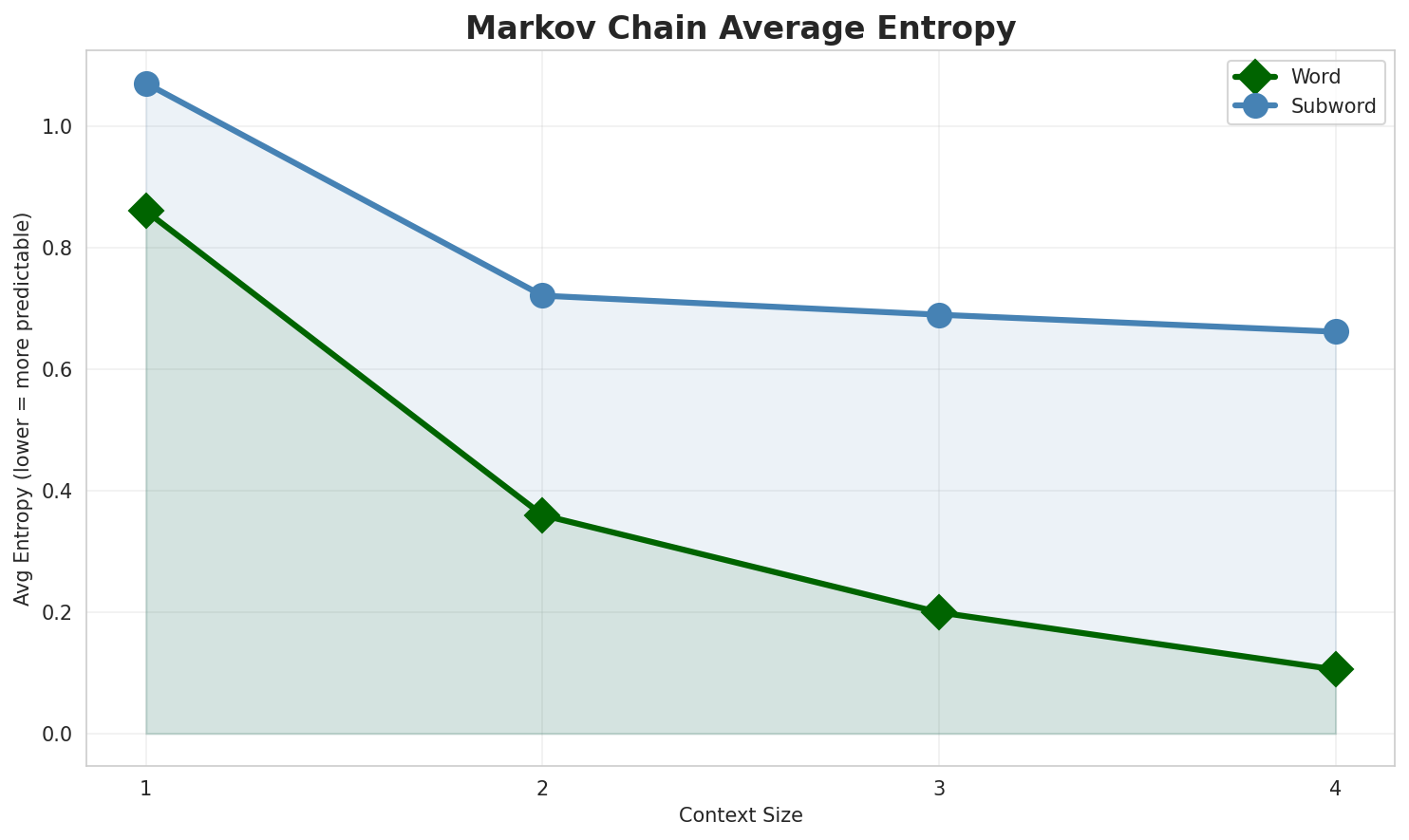

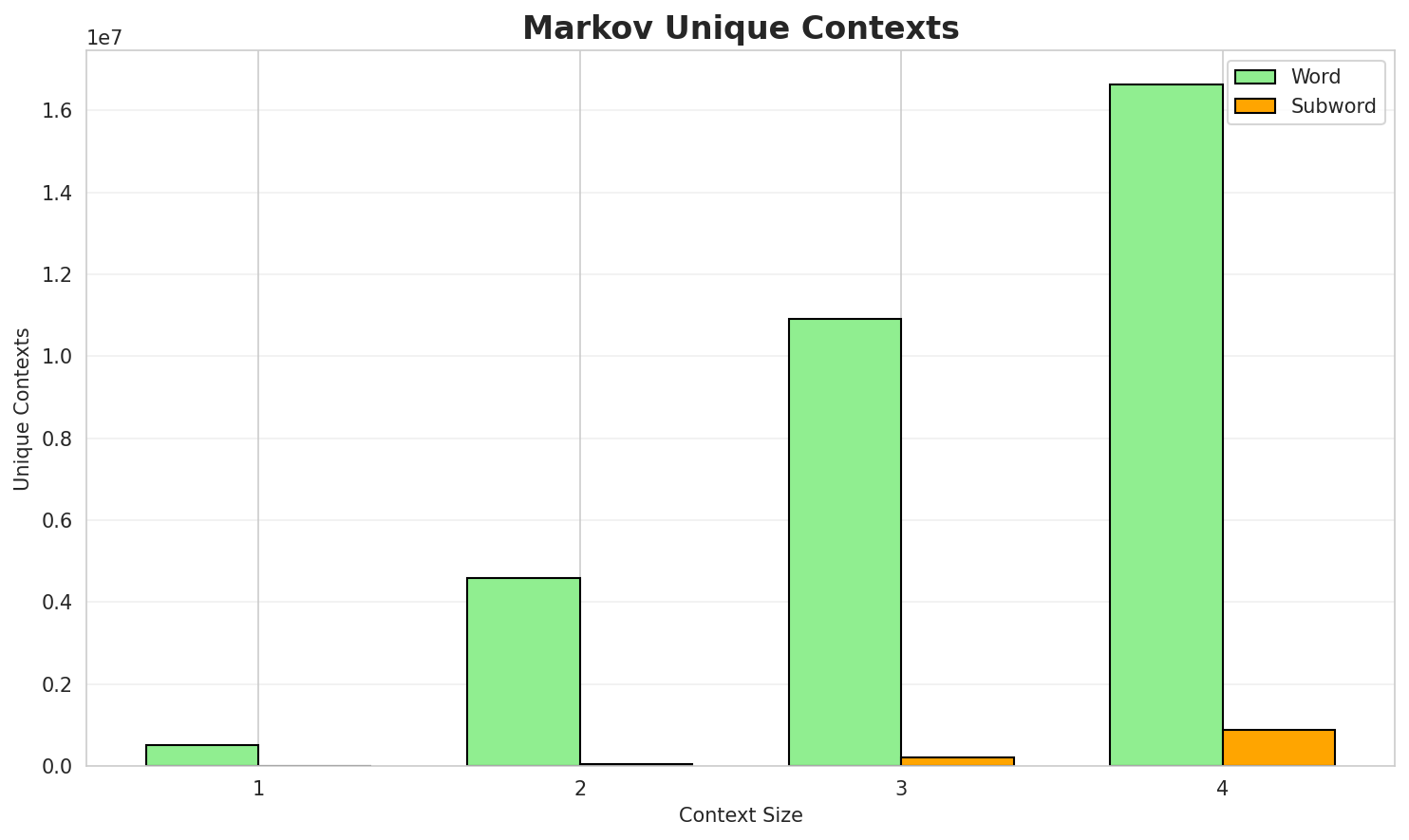

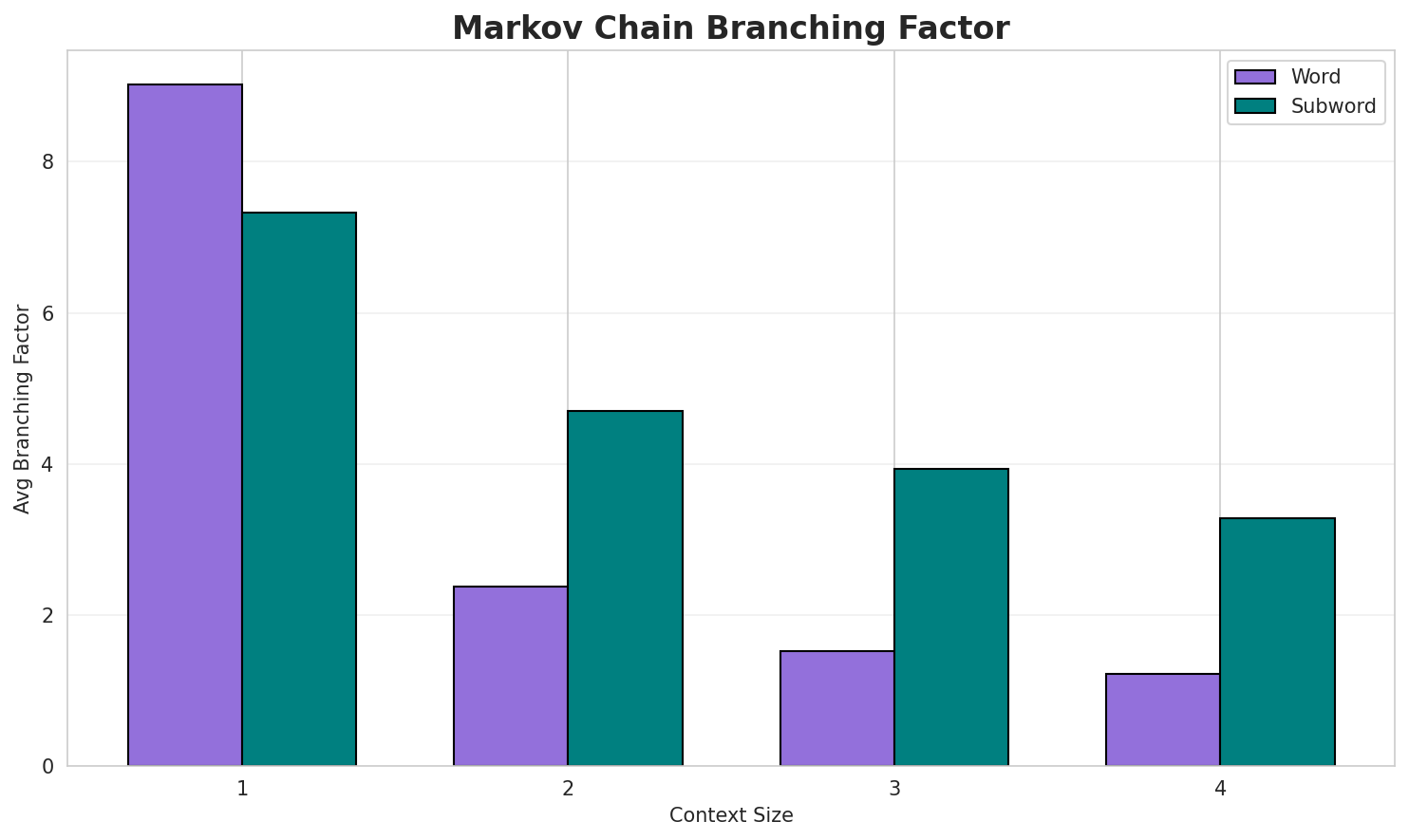

3. Markov Chain Evaluation

Results

| Context | Variant | Avg Entropy | Perplexity | Branching Factor | Unique Contexts | Predictability |

|---|---|---|---|---|---|---|

| 1 | Word | 0.8607 | 1.816 | 9.02 | 510,524 | 13.9% |

| 1 | Subword | 1.0714 | 2.101 | 7.32 | 6,437 | 0.0% |

| 2 | Word | 0.3599 | 1.283 | 2.38 | 4,598,546 | 64.0% |

| 2 | Subword | 0.7215 | 1.649 | 4.70 | 47,137 | 27.9% |

| 3 | Word | 0.1996 | 1.148 | 1.52 | 10,914,867 | 80.0% |

| 3 | Subword | 0.6901 | 1.613 | 3.94 | 221,281 | 31.0% |

| 4 | Word | 0.1054 🏆 | 1.076 | 1.21 | 16,623,256 | 89.5% |

| 4 | Subword | 0.6621 | 1.582 | 3.28 | 871,504 | 33.8% |

Generated Text Samples (Word-based)

Below are text samples generated from each word-based Markov chain model:

Context Size 1:

na mmemme ahụ n ọtụtụ ndị dugara na abụọ nke 302 west sepik province nke anke na kaduna kama nke ndị agha ebumnuche na ndị na otu a na ya olulun ime ndị o kwuru na ahụ na eto ya niile na dholuo okpukpe n etiti

Context Size 2:

dị ka nke abụọ marathon nke etiopia onye otu bọọdụ na achọ ọfịs dabere na ike araromirea na enyo enyo ébé ọ bi na ya jide nche anwụ nke all progressives congress apcọ bụ akụkụ nke machar colony akụkụ nke usoro nke na ezere ọkwa nna ya bụ 531

Context Size 3:

ma ọ bụ tin ore ihe ndị fọdụrụ na german army dina na nzuzo na eduga na nkwupụta

dị ka onye edemede na onye na ezisa ozi ọma na ghana ebe ọ mmụta akwụkwọ na adịbeghị

dị iche iche nke a ga enyocha n ihu nyocha nke chọpụtara ụzọ agha oke ala nke dara

Context Size 4:

òtù mmadụ ndi di ndụ òtù pages with unreviewed translations __lead_section__ áká_ịkẹngạ thumb ihe ej...

na eme ihe nkiri kacha mma na ọrụ dị mkpa nke ala ala dị n ibéetiti ahụ áká_èkpè thumb

akụkọ ihe mere eme na muizenberg cape town mbipụta abụ m na efe efe carapace doo wop girls of

Generated Text Samples (Subword-based)

Below are text samples generated from each subword-based Markov chain model:

Context Size 1:

_i_natọ_nọ_ndonaa_ngbụ_ondiy_ma_e_i_nnropana-e_ụ

Context Size 2:

_ng_porosii_nke_aa_ọdụ_na_ka_hasụ_e_12.2,_ndihe_ọzọ

Context Size 3:

_na-ụdị_nwunyere_ona_nke_na_gọzi_na_a_nke_umuagest_6_k

Context Size 4:

_na_baltham_taa_aː__nke_12,_ndị_burugbnke_ọrụ_egypt_mara_

Key Findings

- Best Predictability: Context-4 (word) with 89.5% predictability

- Branching Factor: Decreases with context size (more deterministic)

- Memory Trade-off: Larger contexts require more storage (at context size 4: 871,504 unique contexts for the subword model, 16,623,256 for the word model)

- Recommendation: Context-3 or Context-4 for text generation
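To illustrate how a fixed-context Markov chain produces samples like those in this section, here is a minimal sketch. It assumes the chain stores every observed follower of each context and samples in proportion to the observed counts; `build_chain` and `generate` are hypothetical names, and the released models may be stored and sampled differently.

```python
import random
from collections import defaultdict

def build_chain(tokens, context_size):
    """Map each context (a tuple of `context_size` tokens) to its observed next tokens."""
    chain = defaultdict(list)
    for i in range(len(tokens) - context_size):
        chain[tuple(tokens[i:i + context_size])].append(tokens[i + context_size])
    return chain

def generate(chain, seed, length, rng=None):
    """Extend `seed` (whose length must equal the context size) by sampling followers."""
    rng = rng or random.Random(0)
    out = list(seed)
    k = len(seed)
    for _ in range(length):
        followers = chain.get(tuple(out[-k:]))
        if not followers:  # unseen context: stop early
            break
        out.append(rng.choice(followers))
    return out

# Toy token stream, not taken from the corpus.
tokens = ["a", "b", "c", "a", "b", "d"]
chain = build_chain(tokens, 2)
print(generate(chain, ("a", "b"), 3))
```

Storing followers as a list keeps duplicates, so `rng.choice` samples in proportion to frequency without a separate probability table; a count-based mapping would be more compact for large corpora.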

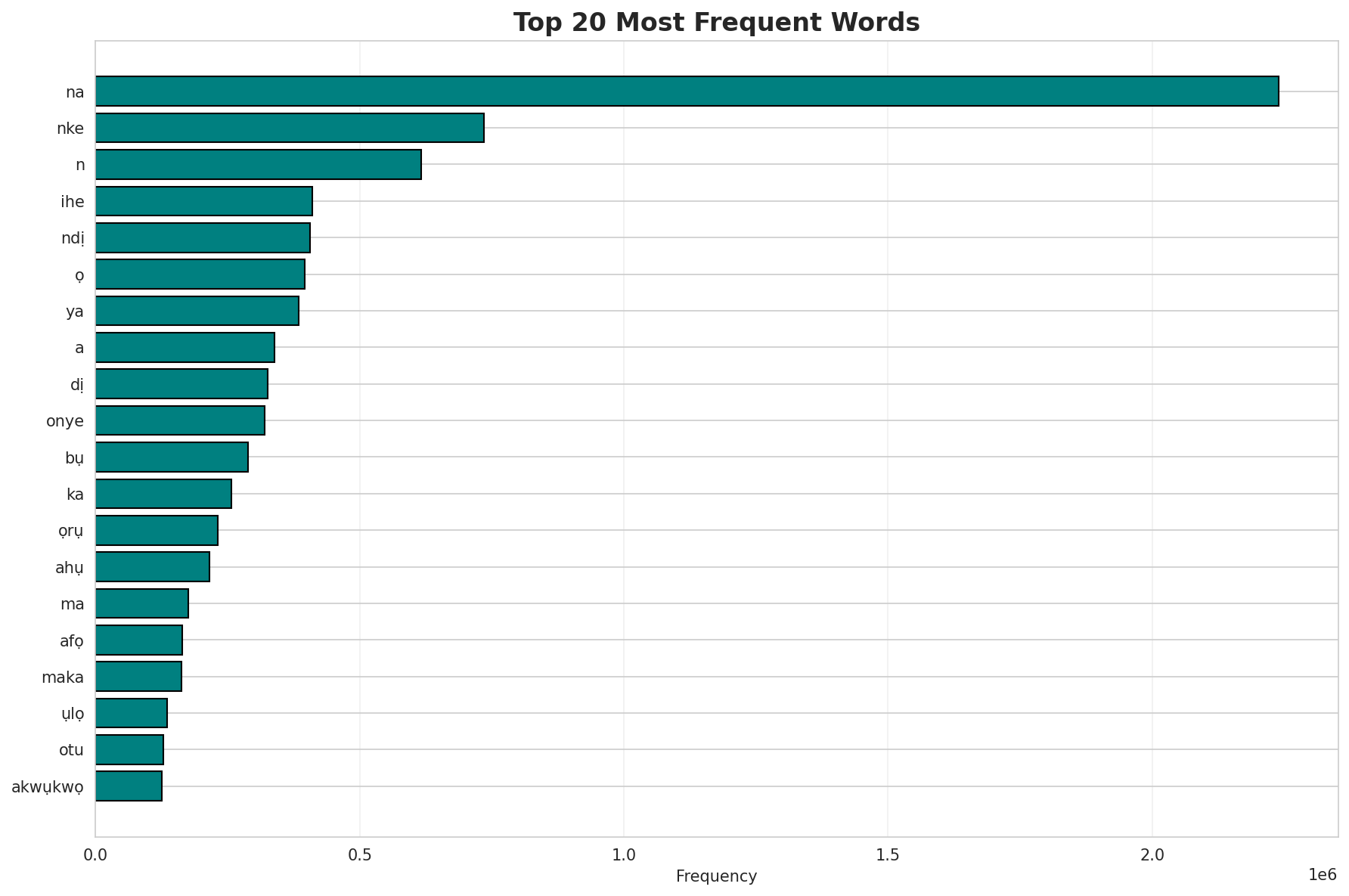

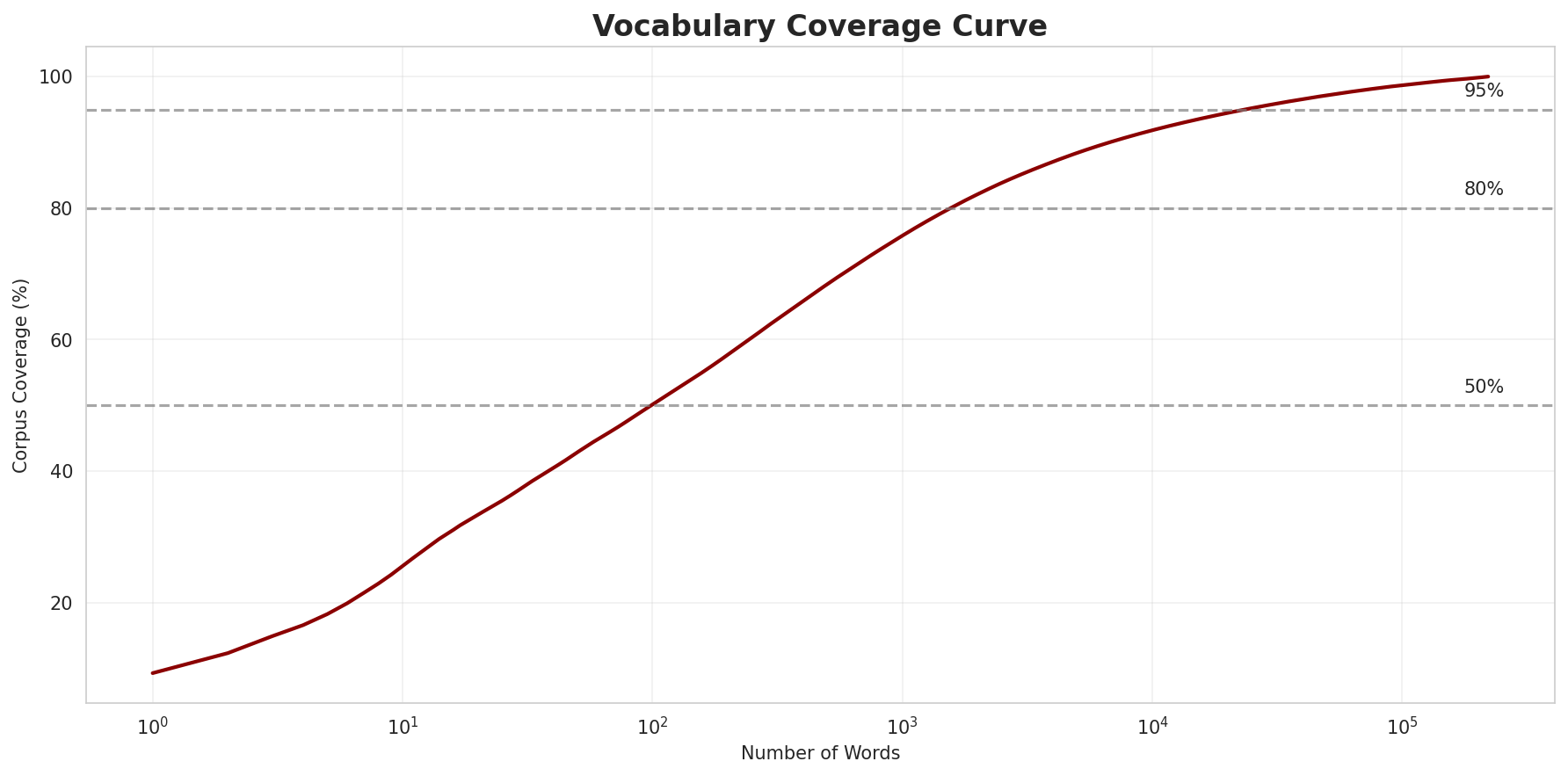

4. Vocabulary Analysis

Statistics

| Metric | Value |

|---|---|

| Vocabulary Size | 220,608 |

| Total Tokens | 24,129,478 |

| Mean Frequency | 109.38 |

| Median Frequency | 4 |

| Frequency Std Dev | 5866.90 |

Most Common Words

| Rank | Word | Frequency |

|---|---|---|

| 1 | na | 2,239,768 |

| 2 | nke | 735,052 |

| 3 | n | 615,909 |

| 4 | ihe | 410,419 |

| 5 | ndị | 405,283 |

| 6 | ọ | 395,253 |

| 7 | ya | 384,400 |

| 8 | a | 339,042 |

| 9 | dị | 325,019 |

| 10 | onye | 319,693 |

Least Common Words (from vocabulary)

| Rank | Word | Frequency |

|---|---|---|

| 1 | agbalagbo | 2 |

| 2 | akpalagu | 2 |

| 3 | okwule | 2 |

| 4 | otuogene | 2 |

| 5 | ovili | 2 |

| 6 | anyansi | 2 |

| 7 | ifediorah | 2 |

| 8 | chidalu | 2 |

| 9 | okebo | 2 |

| 10 | pdna | 2 |

Zipf's Law Analysis

| Metric | Value |

|---|---|

| Zipf Coefficient | 1.2680 |

| R² (Goodness of Fit) | 0.992771 |

| Adherence Quality | excellent |

Coverage Analysis

| Top N Words | Coverage |

|---|---|

| Top 100 | 50.1% |

| Top 1,000 | 75.8% |

| Top 5,000 | 88.4% |

| Top 10,000 | 91.8% |

Key Findings

- Zipf Compliance: R²=0.9928 indicates excellent adherence to Zipf's law

- High Frequency Dominance: Top 100 words cover 50.1% of corpus

- Long Tail: 210,608 words needed for remaining 8.2% coverage
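The headline figures in this section can be cross-checked with simple arithmetic. The sketch below uses only numbers reported in the tables above; the variable names are illustrative.

```python
vocab_size = 220_608
total_tokens = 24_129_478

# Mean frequency is total tokens divided by vocabulary size.
mean_frequency = total_tokens / vocab_size
print(round(mean_frequency, 2))  # compare with the reported 109.38

# Words outside the top 10,000 and the share of tokens they account for.
tail_words = vocab_size - 10_000
tail_coverage = round(100.0 - 91.8, 1)
print(tail_words, tail_coverage)
```

The gap between the mean frequency (about 109) and the median (4) reflects the same skew: a few very frequent words pull the mean far above what a typical word sees.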

5. Word Embeddings Evaluation

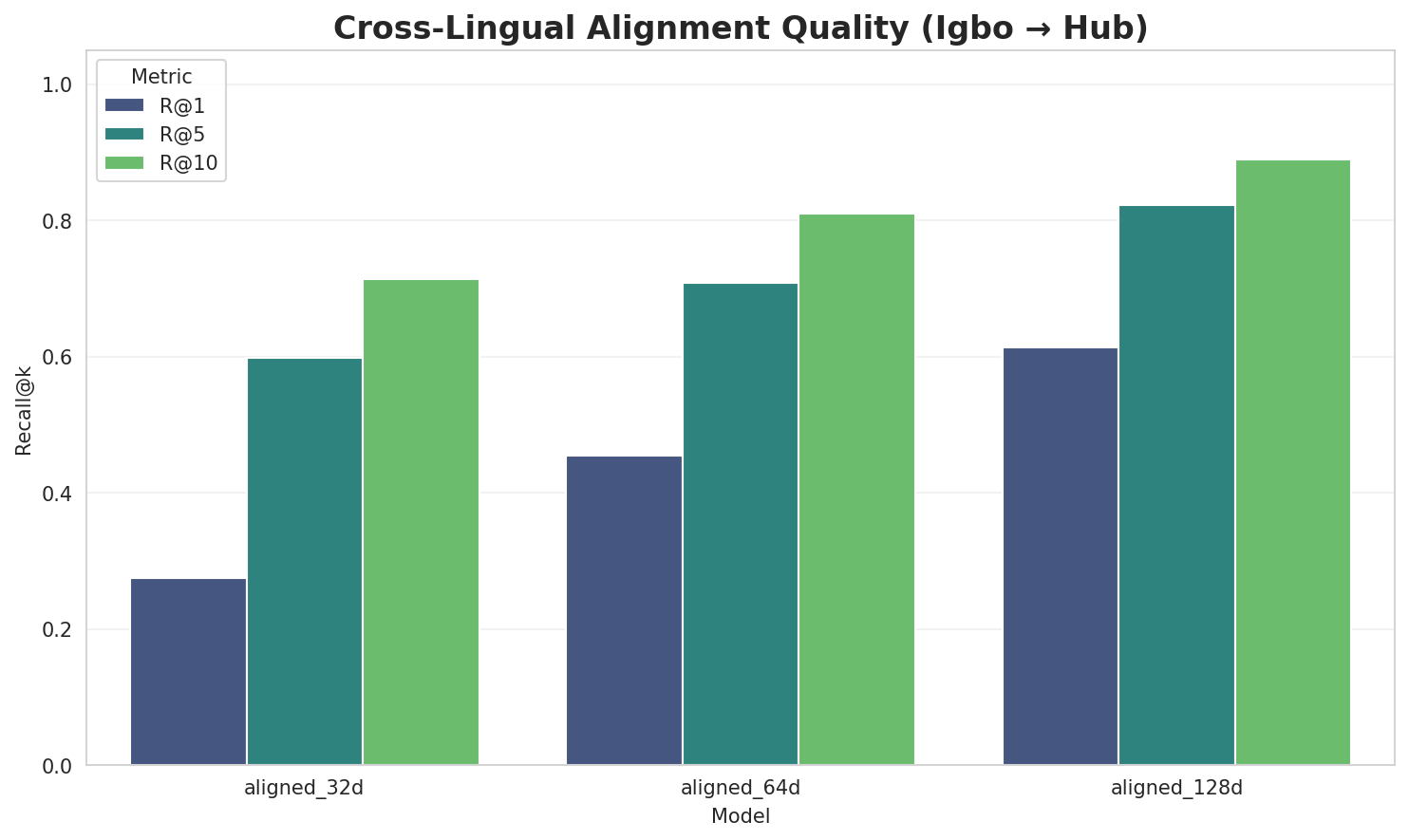

5.1 Cross-Lingual Alignment

5.2 Model Comparison

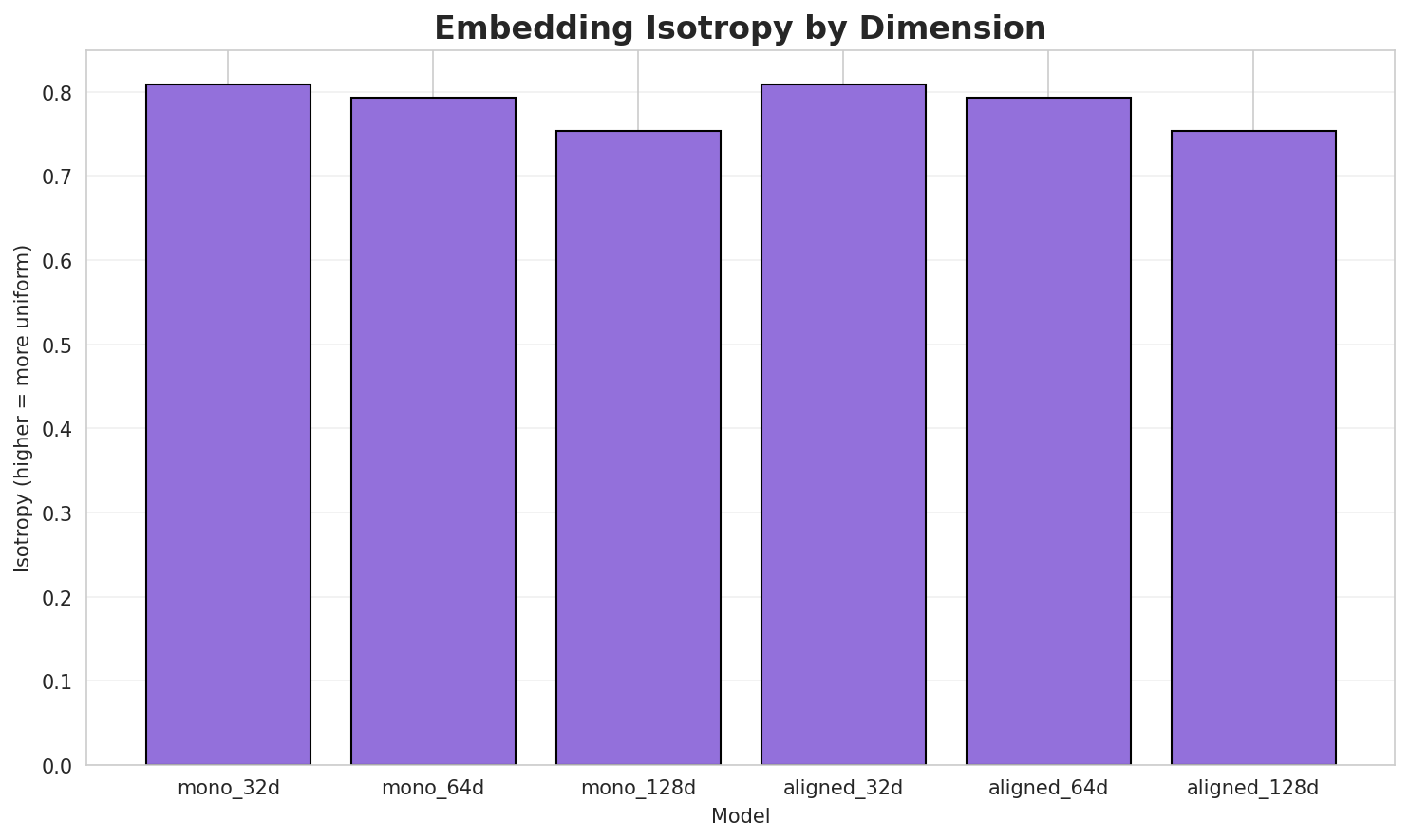

| Model | Dimension | Isotropy | Semantic Density | Alignment R@1 | Alignment R@10 |

|---|---|---|---|---|---|

| mono_32d | 32 | 0.8093 | 0.4233 | N/A | N/A |

| mono_64d | 64 | 0.7925 | 0.3195 | N/A | N/A |

| mono_128d | 128 | 0.7531 | 0.2578 | N/A | N/A |

| aligned_32d | 32 | 0.8093 🏆 | 0.4482 | 0.2740 | 0.7140 |

| aligned_64d | 64 | 0.7925 | 0.3263 | 0.4540 | 0.8100 |

| aligned_128d | 128 | 0.7531 | 0.2597 | 0.6140 | 0.8900 |

Key Findings

- Best Isotropy: the 32d models (mono_32d and aligned_32d) with 0.8093 (more uniform distribution)

- Semantic Density: Average pairwise similarity of 0.3391. Lower values indicate better semantic separation.

- Alignment Quality: Aligned models achieve up to 61.4% R@1 in cross-lingual retrieval.

- Recommendation: 128d aligned for best cross-lingual performance
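The report does not spell out how Alignment R@1 and R@10 are computed. A common reading is recall at k in a retrieval task, sketched below under the assumptions that each query word has exactly one gold translation and that candidates are ranked by cosine similarity. All names and vectors here are hypothetical.

```python
import math

def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0

def recall_at_k(queries, targets, gold, k):
    """Fraction of queries whose gold target index is among the k most similar targets."""
    hits = 0
    for qi, q in enumerate(queries):
        ranked = sorted(range(len(targets)),
                        key=lambda ti: cosine(q, targets[ti]), reverse=True)
        if gold[qi] in ranked[:k]:
            hits += 1
    return hits / len(queries)

# Toy vectors: two queries, three candidate targets.
queries = [[1.0, 0.0], [0.0, 1.0]]
targets = [[0.9, 0.1], [0.2, 0.8], [0.7, 0.7]]
gold = [0, 2]
print(recall_at_k(queries, targets, gold, 1), recall_at_k(queries, targets, gold, 2))
```

Under this reading, the gap between R@1 and R@10 in the table indicates how often the correct translation is nearby but not ranked first.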

6. Morphological Analysis (Experimental)

This section presents an automated morphological analysis derived from the statistical divergence between word-level and subword-level models. By analyzing where subword predictability spikes and where word-level coverage fails, we can infer linguistic structures without supervised data.

6.1 Productivity & Complexity

| Metric | Value | Interpretation | Recommendation |

|---|---|---|---|

| Productivity Index | 5.000 | High morphological productivity | Reliable analysis |

| Idiomaticity Gap | -0.708 | Low formulaic content | - |

6.2 Affix Inventory (Productive Units)

These are the most productive prefixes and suffixes identified by sampling the vocabulary for global substitutability patterns. A unit is considered an affix if stripping it leaves a valid stem that appears in other contexts.
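The stripping criterion described above can be sketched as follows. This is a simplified illustration for prefixes only, assuming that a "valid stem" means another word in the same vocabulary; it does not reproduce the sampling or substitutability scoring used in the report, and `productive_prefixes` is a hypothetical name.

```python
from collections import Counter

def productive_prefixes(vocab, max_len=2, min_stem=2):
    """Count prefixes whose removal leaves another word from the same vocabulary."""
    words = set(vocab)
    counts = Counter()
    for w in words:
        for n in range(1, max_len + 1):
            stem = w[n:]
            if len(stem) >= min_stem and stem in words:
                counts[w[:n]] += 1
    return counts

# Toy vocabulary, not taken from the corpus.
vocab = ["play", "replay", "do", "redo", "undo"]
print(productive_prefixes(vocab).most_common())
```

A purely string-based test like this also fires on accidental matches, which may explain why single letters and loanword fragments appear in the tables that follow.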

Productive Prefixes

| Prefix | Examples |
|---|---|
| -a | agathon, aboudia, ankusha |
| -m | mertsalov, millionaire, müttererholungsverein |
| -n | naimdb, nasril, nwpl |
| -ma | malitereihe, matsumoto, mackerdhuj |
| -s | schnee, shabaka, shuaibiu |
| -b | beloved, bourguiba, brunhild |
| -k | kechie, kareem, kilolo |
| -e | edekọrọ, eribake, edremoda |

Productive Suffixes

| Suffix | Examples |
|---|---|
| -e | kechie, millionaire, ghọtahie |
| -a | yulia, hekka, bourguiba |
| -s | hypochlorous, pleiades, morcus |
| -n | müttererholungsverein, fleischman, agathon |
| -i | wabehi, hajjaji, adefarati |
| -r | mountaineer, leaver, br |
| -o | turbo, wamco, kilolo |
| -t | chiat, rajput, zuidoost |

6.3 Bound Stems (Lexical Roots)

Bound stems are high-frequency subword units that are semantically cohesive but rarely appear as standalone words. These often correspond to the 'core' of a word that requires inflection or derivation to be valid.

| Stem | Cohesion | Substitutability | Examples |
|---|---|---|---|
| atio | 2.41x | 79 contexts | ation, ratio, patio |
| fric | 2.53x | 46 contexts | afric, frick, friche |
| nati | 2.46x | 46 contexts | natij, inati, natie |
| epụt | 2.22x | 64 contexts | kepụta, ndepụt, mepụta |
| alit | 1.92x | 109 contexts | alita, alito, palit |
| kwad | 2.39x | 40 contexts | kwadi, kwado, kwada |
| wany | 1.95x | 71 contexts | wanyä, nwany, wanye |
| gbas | 2.08x | 54 contexts | gbasa, egbas, ịgbasa |
| nwan | 1.93x | 73 contexts | nwany, enwan, nwana |
| ụtar | 2.04x | 56 contexts | ụtara, ụtarị, tụtara |
| ọpụt | 1.94x | 68 contexts | ọpụta, kọpụta, họpụta |
| nwet | 2.21x | 39 contexts | nweta, nwetụ, nwete |

6.4 Affix Compatibility (Co-occurrence)

This table shows which prefixes and suffixes most frequently co-occur on the same stems, revealing the 'stacking' rules of the language's morphology.

| Prefix | Suffix | Frequency | Examples |
|---|---|---|---|
| -a | -a | 92 words | amazônia, arema |
| -m | -e | 74 words | montefiore, mmachineke |
| -m | -s | 70 words | marthinus, missionaries |
| -m | -a | 69 words | mgbasasa, mëhneja |
| -a | -e | 69 words | adae, adamorobe |
| -s | -s | 66 words | schreiners, strives |
| -a | -s | 62 words | antiperspirants, autonomous |
| -s | -e | 55 words | stalemate, sute |
| -k | -a | 53 words | kadina, katọkwara |
| -s | -a | 51 words | spelaea, shadia |

6.5 Recursive Morpheme Segmentation

Using Recursive Hierarchical Substitutability, we decompose complex words into their constituent morphemes. This approach handles nested affixes (e.g., prefix-prefix-root-suffix).

| Word | Suggested Split | Confidence | Stem |
|---|---|---|---|
| avanzadoras | avanzador-a-s | 7.5 | a |
| commutata | commu-ta-ta | 7.5 | ta |
| starfruit | starfru-i-t | 7.5 | i |
| johnsonmain | johnsonm-a-in | 7.5 | a |
| maniapoto | maniapo-t-o | 7.5 | t |
| hollywoodland | hollywoodl-an-d | 7.5 | an |
| camptoceras | camptoce-ra-s | 7.5 | ra |
| expressway | express-wa-y | 7.5 | wa |
| minnijean | minnij-e-an | 7.5 | e |
| multiflora | multifl-o-ra | 7.5 | o |
| christened | christe-n-ed | 7.5 | n |
| westfälisch | westfälis-c-h | 7.5 | c |
| caballero | ca-baller-o | 6.0 | baller |
| personnel | person-ne-l | 6.0 | person |
| ameringer | ameri-ng-er | 6.0 | ameri |

6.6 Linguistic Interpretation

Automated Insight: The language Igbo shows high morphological productivity. The subword models are significantly more efficient than word models, suggesting a rich system of affixation or compounding.

7. Summary & Recommendations

Production Recommendations

| Component | Recommended | Rationale |

|---|---|---|

| Tokenizer | 64k BPE | Best compression (3.75x) |

| N-gram | 2-gram | Lowest perplexity (280) |

| Markov | Context-4 | Highest predictability (89.5%) |

| Embeddings | 100d | Balanced semantic capture and isotropy |

Appendix: Metrics Glossary & Interpretation Guide

This section provides definitions, intuitions, and guidance for interpreting the metrics used throughout this report.

Tokenizer Metrics

Compression Ratio

Definition: The ratio of characters to tokens (chars/token). Measures how efficiently the tokenizer represents text.

Intuition: Higher compression means fewer tokens needed to represent the same text, reducing sequence lengths for downstream models. A 3x compression means ~3 characters per token on average.

What to seek: Higher is generally better for efficiency, but extremely high compression may indicate overly aggressive merging that loses morphological information.
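As a worked check of the chars/token definition, multiplying each reported compression ratio by its total token count should recover roughly the same character count for the evaluation text. The sketch below uses the figures from the tokenizer results table; `compression_ratio` is an illustrative helper, not the pipeline's function.

```python
def compression_ratio(text, tokens):
    """Characters per token: len(text) / len(tokens)."""
    return len(text) / len(tokens)

# Reported (compression, total tokens) pairs from the tokenizer results table.
reported = {"8k": (3.236, 188_457), "16k": (3.437, 177_404),
            "32k": (3.614, 168_744), "64k": (3.745, 162_811)}

# compression x tokens should give a similar character count for every vocabulary.
implied_chars = {name: ratio * count for name, (ratio, count) in reported.items()}
for name, chars in implied_chars.items():
    print(name, round(chars))
```

All four products land near 610,000 characters, which is consistent with the four tokenizers having been evaluated on the same text.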

Average Token Length (Fertility)

Definition: Mean number of characters per token produced by the tokenizer.

Intuition: Reflects the granularity of tokenization. Longer tokens capture more context but may struggle with rare words; shorter tokens are more flexible but increase sequence length.

What to seek: Balance between 2-5 characters for most languages. Arabic/morphologically-rich languages may benefit from slightly longer tokens.

Unknown Token Rate (OOV Rate)

Definition: Percentage of tokens that map to the unknown/UNK token, indicating words the tokenizer cannot represent.

Intuition: Lower OOV means better vocabulary coverage. High OOV indicates the tokenizer encounters many unseen character sequences.

What to seek: Below 1% is excellent; below 5% is acceptable. BPE tokenizers typically achieve very low OOV due to subword fallback.
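The rate itself is a simple proportion. A minimal sketch, assuming the unknown symbol is written `<unk>` (the actual symbol depends on the tokenizer configuration):

```python
def unk_rate(tokens, unk_token="<unk>"):
    """Percentage of tokens equal to the unknown-token symbol."""
    if not tokens:
        return 0.0
    return 100.0 * sum(t == unk_token for t in tokens) / len(tokens)

# Toy token list: one unknown out of four tokens.
print(unk_rate(["▁du", "li", "<unk>", "▁bu"]))
```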

N-gram Model Metrics

Perplexity

Definition: Measures how "surprised" the model is by test data. Mathematically: 2^(cross-entropy). Lower values indicate better prediction.

Intuition: If perplexity is 100, the model is as uncertain as if choosing uniformly among 100 options at each step. A perplexity of 10 means effectively choosing among 10 equally likely options.

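What to seek: Lower is better. On held-out text, perplexity typically decreases with larger n-grams (more context); the values in Section 2 instead rise with n, which is what one would expect if they are derived from the entropy of the n-gram frequency distribution. Values vary widely by language and corpus size.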

Entropy

Definition: Average information content (in bits) needed to encode the next token given the context. Related to perplexity: perplexity = 2^entropy.

Intuition: High entropy means high uncertainty/randomness; low entropy means predictable patterns. Natural language typically has entropy between 1-4 bits per character.

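What to seek: Lower entropy indicates more predictable text patterns. Conditional next-token entropy should decrease as n-gram size increases; the entropies reported in Section 2 increase with n and appear to describe the n-gram distribution as a whole.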
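The relation perplexity = 2^entropy can be illustrated with a small sketch. It computes the Shannon entropy of a plain frequency distribution, which is one possible reading of the metric; the report does not state exactly how its values were scored, so treat the helper names and the setup as assumptions.

```python
import math
from collections import Counter

def entropy_bits(counts):
    """Shannon entropy (in bits) of the empirical distribution given by `counts`."""
    total = sum(counts.values())
    return -sum((c / total) * math.log2(c / total) for c in counts.values())

def perplexity(counts):
    """Perplexity as 2 raised to the entropy."""
    return 2 ** entropy_bits(counts)

# Four equally likely items: 2 bits of entropy, perplexity 4.
uniform = Counter({"a": 1, "b": 1, "c": 1, "d": 1})
print(entropy_bits(uniform), perplexity(uniform))

# Reported entropies from the n-gram results (2-gram subword and 2-gram word).
print(2 ** 8.13, 2 ** 14.68)
```

The last line gives values close to the reported perplexities of 280 and 26,246; the small differences are consistent with the entropies being rounded to two decimals.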

Coverage (Top-K)

Definition: Percentage of corpus occurrences explained by the top K most frequent n-grams.

Intuition: High coverage with few patterns indicates repetitive/formulaic text; low coverage suggests diverse vocabulary usage.

What to seek: Depends on use case. For language modeling, moderate coverage (40-60% with top-1000) is typical for natural text.
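Top-K coverage follows directly from the definition. A minimal sketch with an illustrative helper name and toy counts:

```python
from collections import Counter

def top_k_coverage(counts, k):
    """Percentage of all occurrences accounted for by the k most frequent items."""
    total = sum(counts.values())
    top = sum(c for _, c in counts.most_common(k))
    return 100.0 * top / total

# Toy counts: 10 occurrences in total.
counts = Counter({"a": 6, "b": 2, "c": 1, "d": 1})
print(top_k_coverage(counts, 1), top_k_coverage(counts, 2))
```

The same function applies to single words, which is how the cumulative vocabulary coverage figures can be read.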

Markov Chain Metrics

Average Entropy

Definition: Mean entropy across all contexts, measuring average uncertainty in next-word prediction.

Intuition: Lower entropy means the model is more confident about what comes next. Context-1 has high entropy (many possible next words); Context-4 has low entropy (few likely continuations).

What to seek: Decreasing entropy with larger context sizes. Very low entropy (<0.1) indicates highly deterministic transitions.

Branching Factor

Definition: Average number of unique next tokens observed for each context.

Intuition: High branching = many possible continuations (flexible but uncertain); low branching = few options (predictable but potentially repetitive).

What to seek: Branching factor should decrease with context size. Values near 1.0 indicate nearly deterministic chains.
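Average entropy and branching factor can both be read off the transition counts of a chain. The sketch below assumes an unweighted mean over contexts, as the definitions above suggest; whether the report weights contexts by frequency is not stated, and `transition_stats` is a hypothetical name.

```python
import math
from collections import Counter, defaultdict

def transition_stats(tokens, context_size):
    """Return (average entropy in bits, branching factor) over all observed contexts."""
    followers = defaultdict(Counter)
    for i in range(len(tokens) - context_size):
        followers[tuple(tokens[i:i + context_size])][tokens[i + context_size]] += 1

    entropies = []
    for nxt in followers.values():
        total = sum(nxt.values())
        entropies.append(-sum((c / total) * math.log2(c / total) for c in nxt.values()))
    avg_entropy = sum(entropies) / len(entropies)
    branching = sum(len(nxt) for nxt in followers.values()) / len(followers)
    return avg_entropy, branching

# Toy stream: "a" is followed by "b" or "c" equally often; "b" and "c" always lead to "a".
tokens = "a b a c a b a c".split()
print(transition_stats(tokens, 1))
```

In the toy stream, one context has two equally likely followers (1 bit) and two contexts are deterministic (0 bits), so the average entropy is 1/3 bit and the branching factor is 4/3.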

Predictability

Definition: Derived metric: (1 - normalized_entropy) × 100%. Indicates how deterministic the model's predictions are.

Intuition: 100% predictability means the next word is always certain; 0% means completely random. Real text falls between these extremes.

What to seek: Higher predictability for text generation quality, but too high (>98%) may produce repetitive output.
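The definition above does not say how the entropy is normalized. The values in the Markov results table are consistent with using the average entropy directly and clamping negative results at zero; this is an observation from the reported numbers, not a documented formula, and `predictability` below is an illustrative helper.

```python
def predictability(avg_entropy):
    """Assumed form: (1 - entropy), clamped at 0, expressed as a percentage."""
    return max(0.0, 1.0 - avg_entropy) * 100.0

# (average entropy, reported predictability %) pairs from the Markov results table.
reported = [(0.8607, 13.9), (1.0714, 0.0), (0.3599, 64.0), (0.7215, 27.9),
            (0.1996, 80.0), (0.6901, 31.0), (0.1054, 89.5), (0.6621, 33.8)]

for h, p in reported:
    print(h, predictability(h), p)
```

Each computed value agrees with the reported percentage to within rounding, including the 0.0% for the context-1 subword model, whose average entropy exceeds 1 bit.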

Vocabulary & Zipf's Law Metrics

Zipf's Coefficient

Definition: The slope of the log-log plot of word frequency vs. rank. Zipf's law predicts this should be approximately -1.

Intuition: A coefficient near -1 indicates the corpus follows natural language patterns where a few words are very common and most words are rare.

What to seek: Values between -0.8 and -1.2 indicate healthy natural language distribution. Deviations may suggest domain-specific or artificial text.

R² (Coefficient of Determination)

Definition: Measures how well the linear fit explains the frequency-rank relationship. Ranges from 0 to 1.

Intuition: R² near 1.0 means the data closely follows Zipf's law; lower values indicate deviation from expected word frequency patterns.

What to seek: R² > 0.95 is excellent; > 0.99 indicates near-perfect Zipf adherence typical of large natural corpora.
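The slope and R² can be obtained with an ordinary least-squares fit in log-log space. This is a minimal sketch of that procedure using only the standard library, with synthetic frequencies; the report's exact fitting procedure (for example, any rank cutoff) is not specified, and the report lists the coefficient as a positive number, presumably the magnitude of the slope.

```python
import math

def zipf_fit(frequencies):
    """Least-squares fit of log(freq) = intercept + slope * log(rank); returns (slope, r2)."""
    freqs = sorted(frequencies, reverse=True)
    xs = [math.log(rank) for rank in range(1, len(freqs) + 1)]
    ys = [math.log(f) for f in freqs]
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = my - slope * mx
    ss_res = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - my) ** 2 for y in ys)
    return slope, 1.0 - ss_res / ss_tot

# Synthetic frequencies that follow freq = 1000 / rank exactly.
slope, r2 = zipf_fit([1000 / r for r in range(1, 201)])
print(slope, r2)
```

For the synthetic input the fit recovers a slope of about -1 with R² of about 1, which is the ideal Zipfian case the definitions describe.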

Vocabulary Coverage

Definition: Cumulative percentage of corpus tokens accounted for by the top N words.

Intuition: Shows how concentrated word usage is. If top-100 words cover 50% of text, the corpus relies heavily on common words.

What to seek: Top-100 covering 30-50% is typical. Higher coverage indicates more repetitive text; lower suggests richer vocabulary.

Word Embedding Metrics

Isotropy

Definition: Measures how uniformly distributed vectors are in the embedding space. Computed as the ratio of minimum to maximum singular values.

Intuition: High isotropy (near 1.0) means vectors spread evenly in all directions; low isotropy means vectors cluster in certain directions, reducing expressiveness.

What to seek: Higher isotropy generally indicates better-quality embeddings. Values > 0.1 are reasonable; > 0.3 is good. Lower-dimensional embeddings tend to have higher isotropy.

Average Norm

Definition: Mean magnitude (L2 norm) of word vectors in the embedding space.

Intuition: Indicates the typical "length" of vectors. Consistent norms suggest stable training; high variance may indicate some words are undertrained.

What to seek: Relatively consistent norms across models. The absolute value matters less than consistency (low std deviation).

Cosine Similarity

Definition: Measures angular similarity between vectors, ranging from -1 (opposite) to 1 (identical direction).

Intuition: Words with similar meanings should have high cosine similarity. This is the standard metric for semantic relatedness in embeddings.

What to seek: Semantically related words should score > 0.5; unrelated words should be near 0. Synonyms often score > 0.7.
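Cosine similarity, and an average over all pairs of vectors (which is how the semantic density figure in Section 5 is described), can be sketched as follows. The vectors are toy values and the function names are illustrative.

```python
import math
from itertools import combinations

def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length, non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def average_pairwise_similarity(vectors):
    """Mean cosine similarity over all unordered pairs of vectors."""
    pairs = list(combinations(vectors, 2))
    return sum(cosine_similarity(u, v) for u, v in pairs) / len(pairs)

# Toy vectors: two orthogonal axes and their diagonal.
vectors = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
print(cosine_similarity(vectors[0], vectors[1]))
print(average_pairwise_similarity(vectors))
```

Computing all pairs is quadratic in the number of vectors, so for a full vocabulary a random sample of pairs would be the practical choice.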

t-SNE Visualization

Definition: t-Distributed Stochastic Neighbor Embedding - a dimensionality reduction technique that preserves local structure for visualization.

Intuition: Clusters in t-SNE plots indicate groups of semantically related words. Spread indicates vocabulary diversity; tight clusters suggest semantic coherence.

What to seek: Meaningful clusters (e.g., numbers together, verbs together). Avoid over-interpreting distances - t-SNE preserves local, not global, structure.

General Interpretation Guidelines

- Compare within model families: Metrics are most meaningful when comparing models of the same type (e.g., 8k vs 64k tokenizer).

- Consider trade-offs: Better performance on one metric often comes at the cost of another (e.g., compression vs. OOV rate).

- Context matters: Optimal values depend on downstream tasks. Text generation may prioritize different metrics than classification.

- Corpus influence: All metrics are influenced by corpus characteristics. Wikipedia text differs from social media or literature.

- Language-specific patterns: Morphologically rich languages (like Arabic) may show different optimal ranges than analytic languages.

Visualizations Index

| Visualization | Description |

|---|---|

| Tokenizer Compression | Compression ratios by vocabulary size |

| Tokenizer Fertility | Average token length by vocabulary |

| Tokenizer OOV | Unknown token rates |

| Tokenizer Total Tokens | Total tokens by vocabulary |

| N-gram Perplexity | Perplexity by n-gram size |

| N-gram Entropy | Entropy by n-gram size |

| N-gram Coverage | Top pattern coverage |

| N-gram Unique | Unique n-gram counts |

| Markov Entropy | Entropy by context size |

| Markov Branching | Branching factor by context |

| Markov Contexts | Unique context counts |

| Zipf's Law | Frequency-rank distribution with fit |

| Vocab Frequency | Word frequency distribution |

| Top 20 Words | Most frequent words |

| Vocab Coverage | Cumulative coverage curve |

| Embedding Isotropy | Vector space uniformity |

| Embedding Norms | Vector magnitude distribution |

| Embedding Similarity | Word similarity heatmap |

| Nearest Neighbors | Similar words for key terms |

| t-SNE Words | 2D word embedding visualization |

| t-SNE Sentences | 2D sentence embedding visualization |

| Position Encoding | Encoding method comparison |

| Model Sizes | Storage requirements |

| Performance Dashboard | Comprehensive performance overview |

About This Project

Data Source

Models trained on wikipedia-monthly - a monthly snapshot of Wikipedia articles across 300+ languages.

Project

A project by Wikilangs - Open-source NLP models for every Wikipedia language.

Maintainer

Citation

If you use these models in your research, please cite:

@misc{wikilangs2025,

author = {Kamali, Omar},

title = {Wikilangs: Open NLP Models for Wikipedia Languages},

year = {2025},

doi = {10.5281/zenodo.18073153},

publisher = {Zenodo},

url = {https://huggingface.co/wikilangs},

institution = {Omneity Labs}

}

License

MIT License - Free for academic and commercial use.

Links

- 🌐 Website: wikilangs.org

- 🤗 Models: huggingface.co/wikilangs

- 📊 Data: wikipedia-monthly

- 👤 Author: Omar Kamali

- 🤝 Sponsor: Featherless AI

Generated by Wikilangs Models Pipeline

Report Date: 2026-01-10 05:45:06