Ingush - Wikilangs Models

Comprehensive Research Report & Full Ablation Study

This repository contains NLP models trained and evaluated by Wikilangs, specifically on Ingush Wikipedia data. We analyze tokenizers, n-gram models, Markov chains, vocabulary statistics, and word embeddings.

📋 Repository Contents

Models & Assets

- Tokenizers (8k, 16k, 32k, 64k)

- N-gram models (2, 3, 4, 5-gram)

- Markov chains (context of 1, 2, 3, 4 and 5)

- Subword N-gram and Markov chains

- Embeddings in various sizes and dimensions (aligned and unaligned)

- Language Vocabulary

- Language Statistics

Analysis and Evaluation

- 1. Tokenizer Evaluation

- 2. N-gram Model Evaluation

- 3. Markov Chain Evaluation

- 4. Vocabulary Analysis

- 5. Word Embeddings Evaluation

- 6. Morphological Analysis (Experimental)

- 7. Summary & Recommendations

- Metrics Glossary

- Visualizations Index

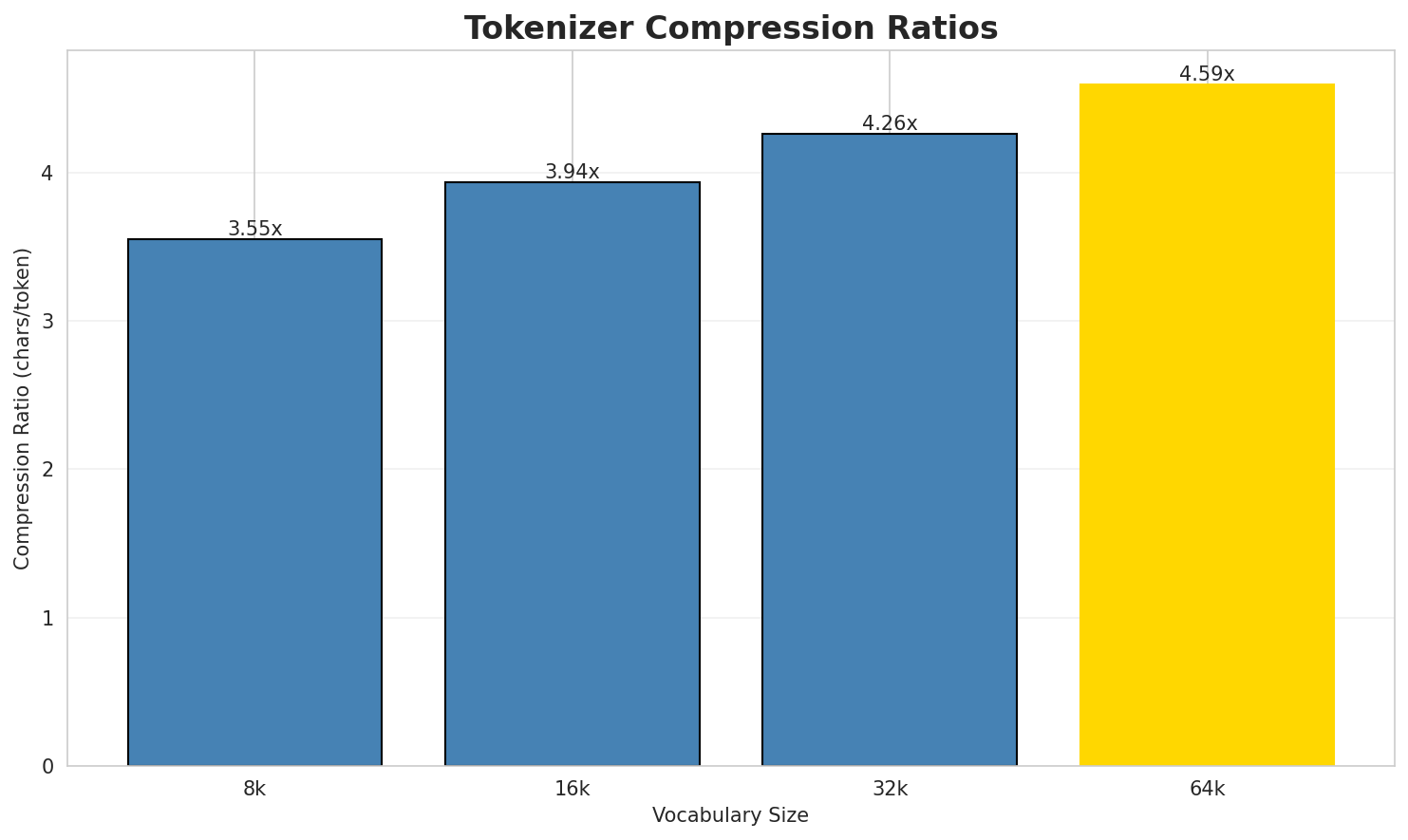

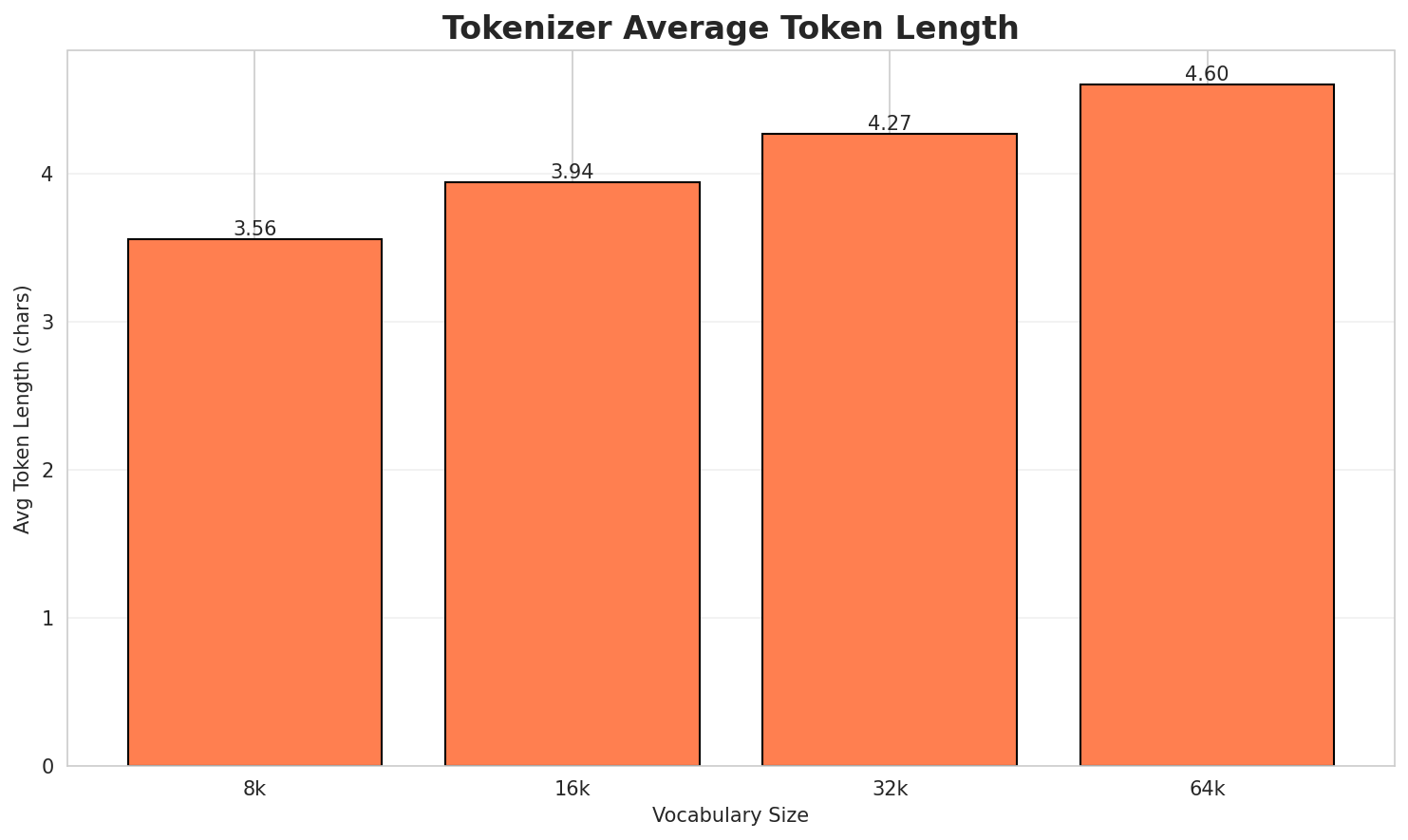

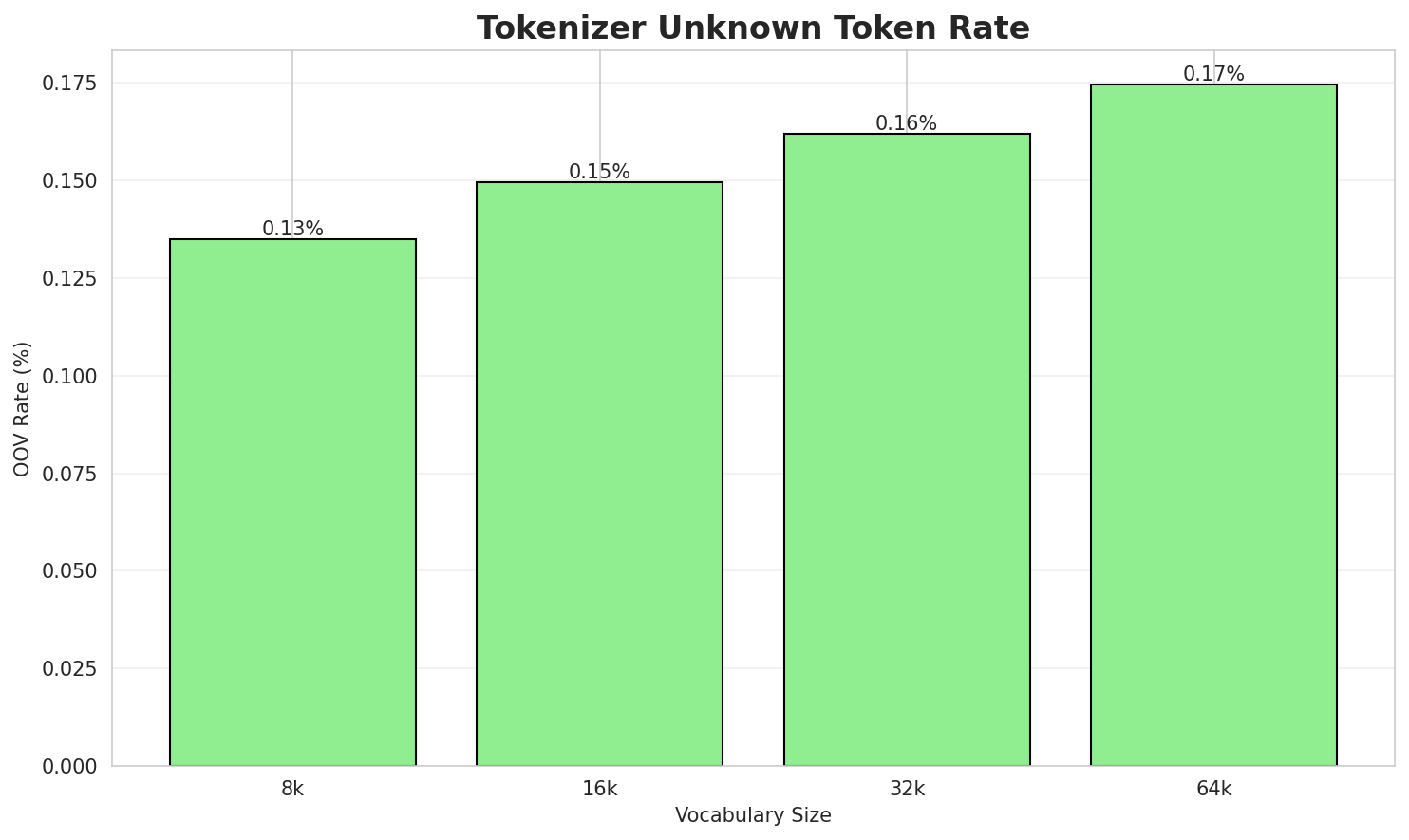

1. Tokenizer Evaluation

Results

| Vocab Size | Compression | Avg Token Len | UNK Rate | Total Tokens |

|---|---|---|---|---|

| 8k | 3.549x | 3.56 | 0.1349% | 201,601 |

| 16k | 3.935x | 3.94 | 0.1496% | 181,782 |

| 32k | 4.258x | 4.27 | 0.1619% | 168,012 |

| 64k | 4.589x 🏆 | 4.60 | 0.1745% | 155,892 |

Tokenization Examples

Below are sample sentences tokenized with each vocabulary size:

Sample 1: Ме́ксика ( ), официальни — Мексикахой Хетта ШтаташМИД России | | МЕКСИКА () — па...

| Vocab | Tokens | Count |
|---|---|---|
| 8k | ▁ме ́ кс ика ▁( ▁), ▁официальни ▁— ▁мекс ика ... (+19 more) | 29 |
| 16k | ▁ме ́ кс ика ▁( ▁), ▁официальни ▁— ▁мексика хой ... (+17 more) | 27 |
| 32k | ▁ме ́ кс ика ▁( ▁), ▁официальни ▁— ▁мексика хой ... (+16 more) | 26 |
| 64k | `▁ме́ксика ▁( ▁), ▁официальни ▁— ▁мексикахой ▁хетта ▁штаташмид ▁россии ▁ \| ... (+11 more)` | |

Sample 2: Нотр-Дам-де-Пари е Парижа Даьла Наьна Элгац (, ) — Париже йоалла католикий элгац...

| Vocab | Tokens | Count |
|---|---|---|
| 8k | ▁н от р - дам - де - п ари ... (+27 more) | 37 |
| 16k | ▁нот р - дам - де - пари ▁е ▁пари ... (+21 more) | 31 |
| 32k | ▁нот р - дам - де - пари ▁е ▁парижа ... (+20 more) | 30 |
| 64k | ▁нотр - дам - де - пари ▁е ▁парижа ▁даьла ... (+18 more) | 28 |

Sample 3: «Нийсхо» (я) () — шера гӀалгӀашкара хьаяьккха́ ГӀалме шахьар юха ГӀалгӀай Респуб...

| Vocab | Tokens | Count |
|---|---|---|
| 8k | ▁« нийс хо » ▁( я ) ▁() ▁— ▁шера ... (+25 more) | 35 |
| 16k | ▁« нийс хо » ▁( я ) ▁() ▁— ▁шера ... (+20 more) | 30 |
| 32k | ▁« нийсхо » ▁( я ) ▁() ▁— ▁шера ▁гӏалгӏаш ... (+17 more) | 27 |
| 64k | ▁« нийсхо » ▁( я ) ▁() ▁— ▁шера ▁гӏалгӏашкара ... (+15 more) | 25 |

Key Findings

- Best Compression: 64k achieves 4.589x compression

- Lowest UNK Rate: 8k with 0.1349% unknown tokens

- Trade-off: Larger vocabularies improve compression but increase model size

- Recommendation: 32k balances compression (4.258x) against vocabulary size; 64k gives the highest compression (4.589x) when model size is not a constraint

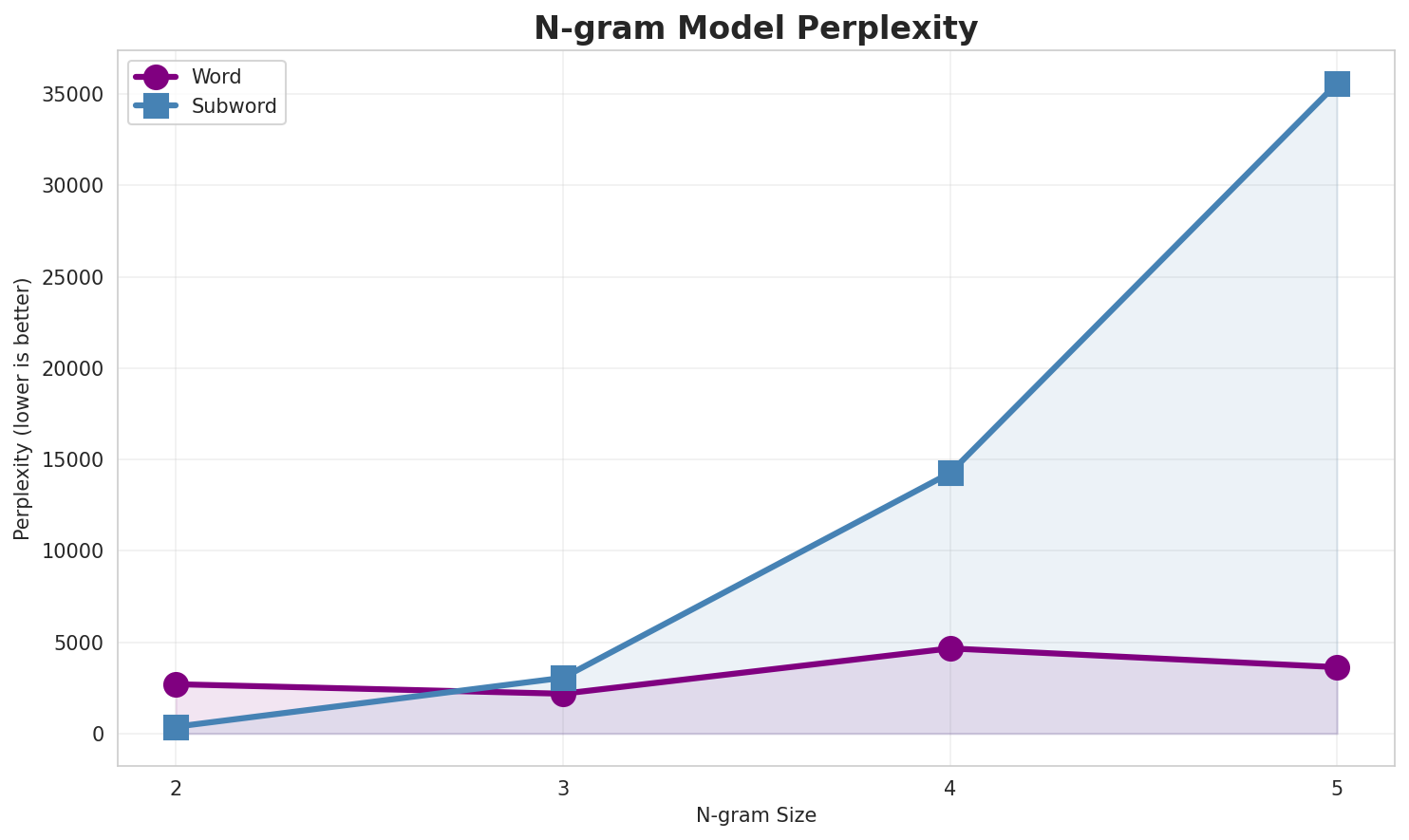

2. N-gram Model Evaluation

Results

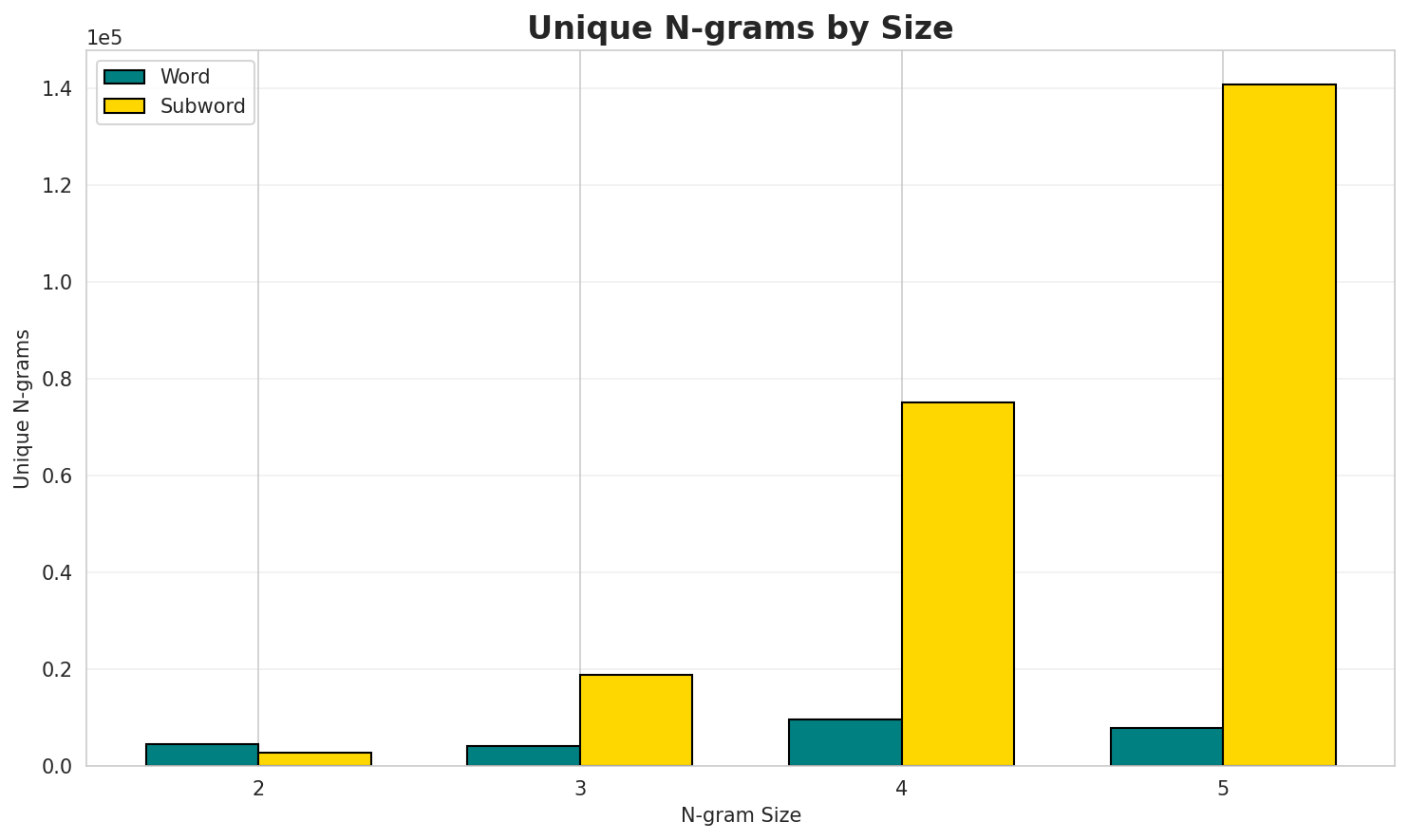

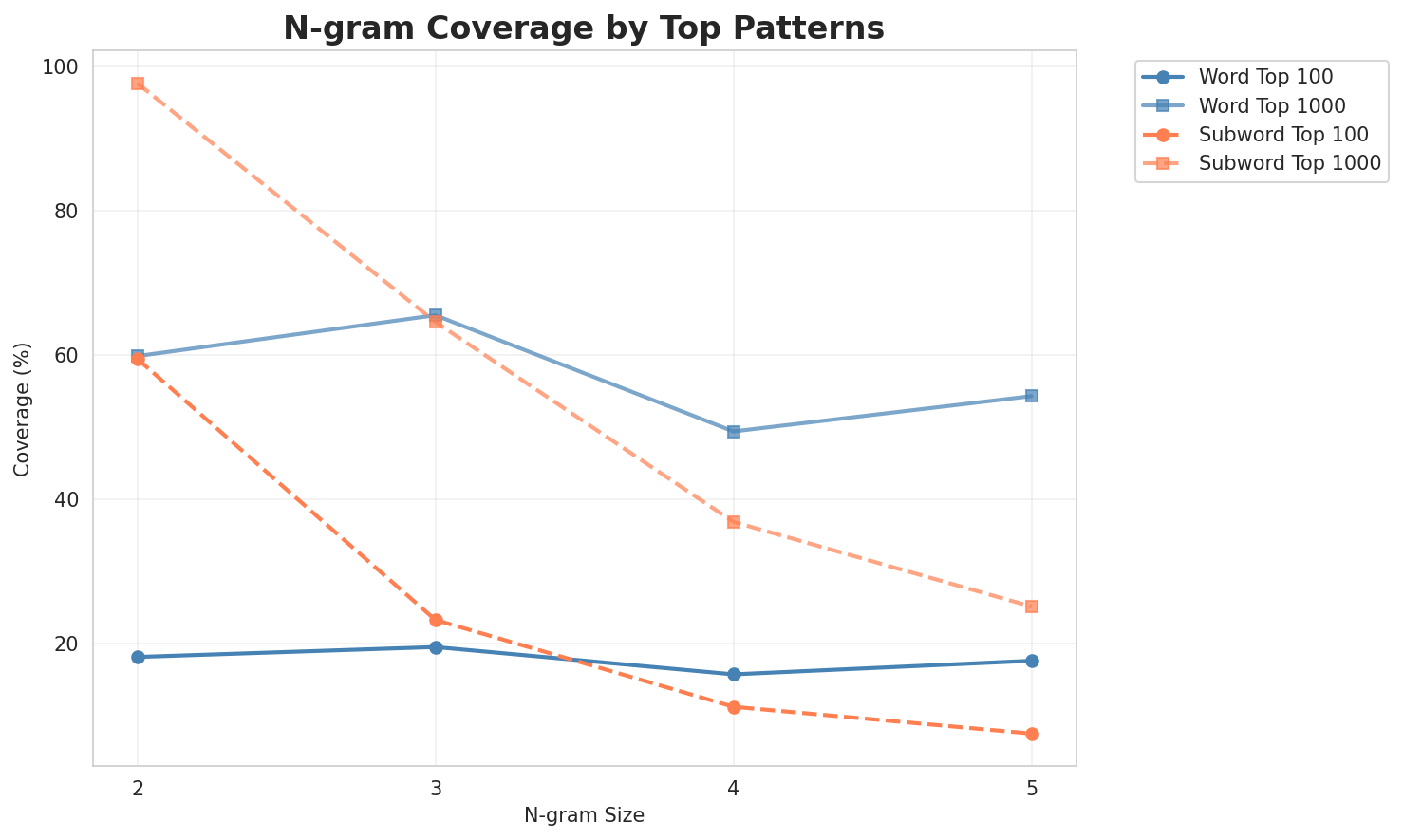

| N-gram | Variant | Perplexity | Entropy | Unique N-grams | Top-100 Coverage | Top-1000 Coverage |

|---|---|---|---|---|---|---|

| 2-gram | Word | 2,700 | 11.40 | 4,486 | 18.1% | 59.8% |

| 2-gram | Subword | 374 🏆 | 8.55 | 2,693 | 59.4% | 97.6% |

| 3-gram | Word | 2,178 | 11.09 | 4,133 | 19.5% | 65.5% |

| 3-gram | Subword | 3,053 | 11.58 | 18,826 | 23.3% | 64.6% |

| 4-gram | Word | 4,659 | 12.19 | 9,587 | 15.7% | 49.4% |

| 4-gram | Subword | 14,259 | 13.80 | 75,178 | 11.2% | 36.9% |

| 5-gram | Word | 3,632 | 11.83 | 7,779 | 17.6% | 54.3% |

| 5-gram | Subword | 35,588 | 15.12 | 140,686 | 7.5% | 25.1% |

Top 5 N-grams by Size

2-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | белгалдаккхар тӏатовжамаш | 415 |
| 2 | гӏалгӏай мехка | 328 |
| 3 | з хь | 315 |
| 4 | вай з | 307 |
| 5 | хьажа иштта | 255 |

3-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | вай з хь | 307 |
| 2 | шераш вай з | 232 |
| 3 | нах баха моттигаш | 153 |
| 4 | хь шераш вай | 130 |
| 5 | з хь шераш | 130 |

4-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | шераш вай з хь | 232 |
| 2 | вай з хь шераш | 130 |
| 3 | з хь шераш вай | 130 |
| 4 | хь шераш вай з | 130 |
| 5 | шахьара нах баха моттигаш | 130 |

5-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | вай з хь шераш вай | 130 |
| 2 | з хь шераш вай з | 130 |
| 3 | хь шераш вай з хь | 130 |
| 4 | шераш вай з хь шераш | 117 |
| 5 | гӏа шераш вай з хь | 100 |

2-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | а _ | 75,922 |
| 2 | а р | 27,088 |
| 3 | ӏ а | 26,314 |
| 4 | а л | 24,378 |
| 5 | р а | 24,271 |

3-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | х ь а | 13,086 |
| 2 | г ӏ а | 13,029 |
| 3 | а ш _ | 11,108 |
| 4 | р а _ | 10,332 |
| 5 | ч а _ | 9,547 |

4-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | а р а _ | 4,962 |
| 2 | а ч а _ | 4,074 |
| 3 | _ х ь а | 3,915 |
| 4 | г ӏ а л | 3,870 |
| 5 | а г ӏ а | 3,736 |

5-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | х и н н а | 3,488 |
| 2 | _ х и н н | 3,331 |
| 3 | г ӏ а л г | 3,121 |
| 4 | ӏ а л г ӏ | 3,119 |
| 5 | а л г ӏ а | 3,111 |

Key Findings

- Best Perplexity: 2-gram (subword) with 374

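- Entropy Trend: Entropy does not fall as n grows on this corpus. Subword entropy rises from 8.55 (2-gram) to 15.12 (5-gram), and word-level entropy stays between 11.09 and 12.19, which suggests data sparsity at higher orders

- Coverage: Top-1000 coverage ranges from 25.1% (5-gram subword) to 97.6% (2-gram subword)

- Recommendation: By perplexity, the subword 2-gram is the strongest model; the 4-gram and 5-gram models capture longer context but show markedly higher perplexity on this corpus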

3. Markov Chain Evaluation

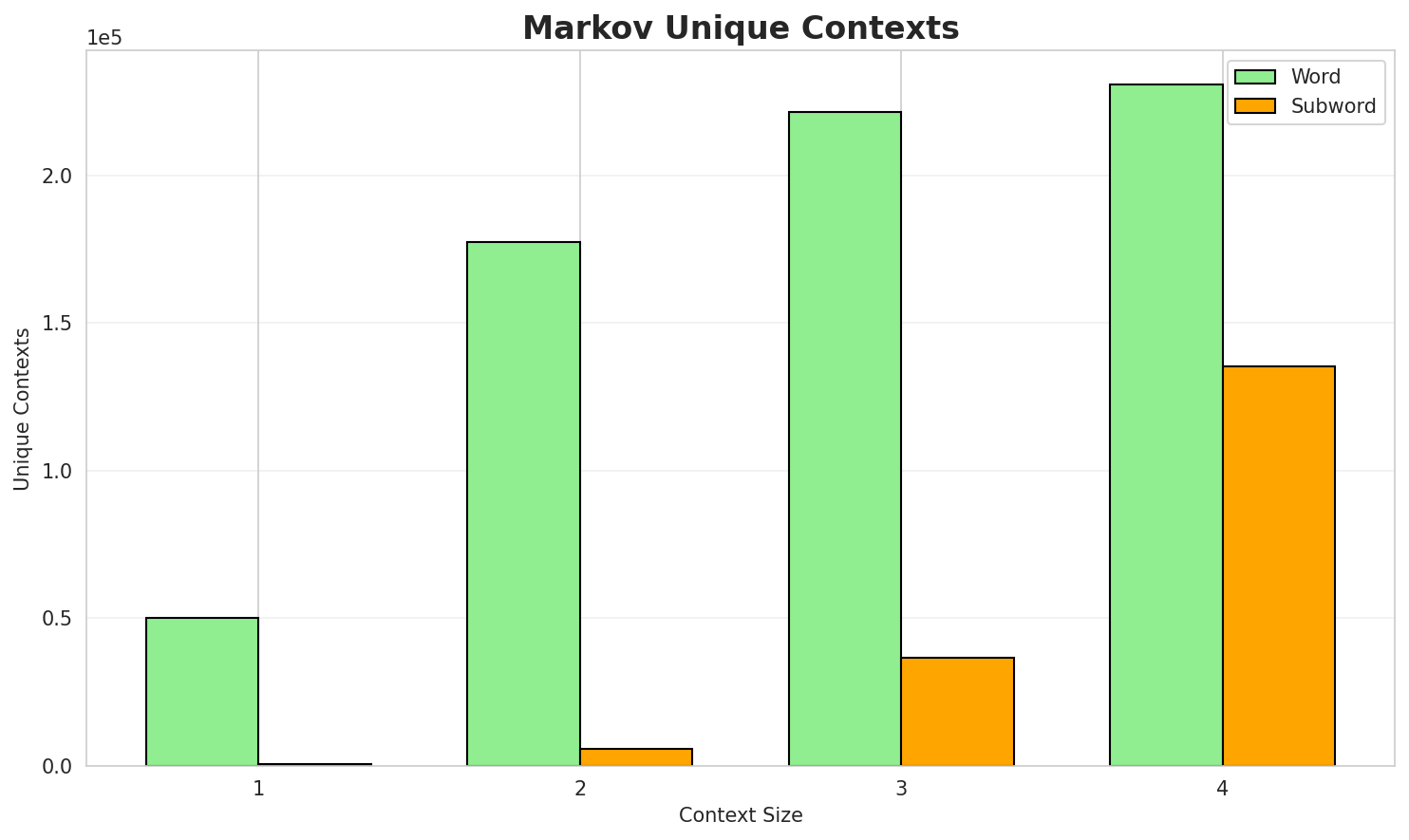

Results

| Context | Variant | Avg Entropy | Perplexity | Branching Factor | Unique Contexts | Predictability |

|---|---|---|---|---|---|---|

| 1 | Word | 0.6550 | 1.575 | 3.54 | 50,260 | 34.5% |

| 1 | Subword | 1.2189 | 2.328 | 9.47 | 622 | 0.0% |

| 2 | Word | 0.1442 | 1.105 | 1.26 | 177,219 | 85.6% |

| 2 | Subword | 1.1111 | 2.160 | 6.21 | 5,892 | 0.0% |

| 3 | Word | 0.0357 | 1.025 | 1.05 | 221,229 | 96.4% |

| 3 | Subword | 0.8323 | 1.781 | 3.70 | 36,562 | 16.8% |

| 4 | Word | 0.0120 🏆 | 1.008 | 1.02 | 230,572 | 98.8% |

| 4 | Subword | 0.5706 | 1.485 | 2.34 | 135,317 | 42.9% |

Generated Text Samples (Word-based)

Below are text samples generated from each word-based Markov chain model:

Context Size 1:

а долаш ший йоазонашта юкъе лелаш хул цхьайолча хана денз цун бизнес дегӏакхувлара дукха мехкарий ам...я лоам жӏайраха шахьаре я аьдагӏий мотт хьехаш аьлте юрта хьисапе моттиг хиннай шера мальсагов дошлу...гӏалгӏай мохк баьккха́ ва́гӏача моастагӏчунга паргӏата ма дарра аьлча гӏалгӏаша къаьстта кубчий пхьа...

Context Size 2:

белгалдаккхар тӏатовжамаш чеботарев а и робакидзеи даьча тохкамех фаьппий кхаькхалоех е ӏадатех а е ...гӏалгӏай мехка паччахьалкъен филармони хамхой ахьмада цӏерагӏа я юрт ларс жӏайрахой баьха моттиг улл...з хь 590 гӏа шераш 390 гӏа шераш vii бӏаьшу 600 гӏа шераш вай з хь xcix

Context Size 3:

вай з хь шераш вай з хь xxx xxix xxviii xxvii xxvi xxv xxiv xxiii xxii xxi 2шераш вай з хь 830 гӏа шераш вай з хь шераш вай з хь 7 шу i бӏаьшераз хь шераш вай з хь шераш вай з хь шераш вай з хь шераш вай з хь

Context Size 4:

шераш вай з хь 720 гӏа шераш вай з хь 50 гӏа шераш вай з хь шераш вай зхь шераш вай з хь шераш вай з хь 400 гӏа шераш вай з хь шераш вай з хьз хь шераш вай з хь xiv бӏаьшу вай з хь тӏатовжамаш
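The word-based samples above can be reproduced in spirit with a small context table. The following is a minimal sketch, not the pipeline's actual generator: `build_chain`, `generate`, the toy corpus, and the seed are all hypothetical names and values chosen for illustration.

```python
import random
from collections import defaultdict

def build_chain(tokens, context_size):
    """Map each context (a tuple of `context_size` tokens) to the observed next tokens."""
    chain = defaultdict(list)
    for i in range(len(tokens) - context_size):
        context = tuple(tokens[i:i + context_size])
        chain[context].append(tokens[i + context_size])
    return chain

def generate(chain, seed_context, length, rng):
    """Extend seed_context by sampling followers; stop early at an unseen context."""
    output = list(seed_context)
    context_size = len(seed_context)
    for _ in range(length):
        followers = chain.get(tuple(output[-context_size:]))
        if not followers:
            break
        output.append(rng.choice(followers))
    return output

# Toy corpus built around the frequent phrase from Section 2 (illustrative only).
tokens = "шераш вай з хь шераш вай з хь 400 гӏа шераш вай з хь".split()
chain = build_chain(tokens, context_size=2)
sample = generate(chain, ("вай", "з"), length=8, rng=random.Random(0))
print(" ".join(sample))
```

Because most contexts in such a table have a single follower, generation quickly falls into a loop, which matches the repetitive output seen at context sizes 3 and 4.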

Generated Text Samples (Subword-based)

Below are text samples generated from each subword-based Markov chain model:

Context Size 1:

аш_дндолега_афал_коа,_—_«цхьермиоаллг_пргӏе._мап

Context Size 2:

а_сийчеи_бе._хьа_архойиха_худжамаӏӏайча_хаязыкнофи_

Context Size 3:

хьалкхар_тӏа_—_«бӏгӏалаходкуменна_бааш_лелал_ха́ннай._б

Context Size 4:

ара_арахой_2_обознаача_между_из,_нохчи_хьаяхача_багарга_х

Key Findings

- Best Predictability: Context-4 (word) with 98.8% predictability

- Branching Factor: Decreases with context size (more deterministic)

- Memory Trade-off: Larger contexts require more storage (230,572 unique word contexts and 135,317 unique subword contexts at context size 4)

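- Recommendation: Context-3 or Context-4 for text generation, bearing in mind that the word-based samples at these sizes loop on the frequent phrase "шераш вай з хь"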

4. Vocabulary Analysis

Statistics

| Metric | Value |

|---|---|

| Vocabulary Size | 19,260 |

| Total Tokens | 235,079 |

| Mean Frequency | 12.21 |

| Median Frequency | 3 |

| Frequency Std Dev | 72.65 |

Most Common Words

| Rank | Word | Frequency |

|---|---|---|

| 1 | а | 6,393 |

| 2 | я | 2,455 |

| 3 | гӏалгӏай | 2,253 |

| 4 | из | 2,010 |

| 5 | шера | 1,966 |

| 6 | да | 1,931 |

| 7 | и | 1,329 |

| 8 | белгалдаккхар | 1,258 |

| 9 | в | 1,233 |

| 10 | тӏа | 1,139 |

Least Common Words (from vocabulary)

| Rank | Word | Frequency |

|---|---|---|

| 1 | ориентальни | 2 |

| 2 | балтий | 2 |

| 3 | лорала́ | 2 |

| 4 | кхерамзеи | 2 |

| 5 | wie | 2 |

| 6 | дарбанчаш | 2 |

| 7 | легализаци | 2 |

| 8 | целители | 2 |

| 9 | практикаш | 2 |

| 10 | лоралгахь | 2 |

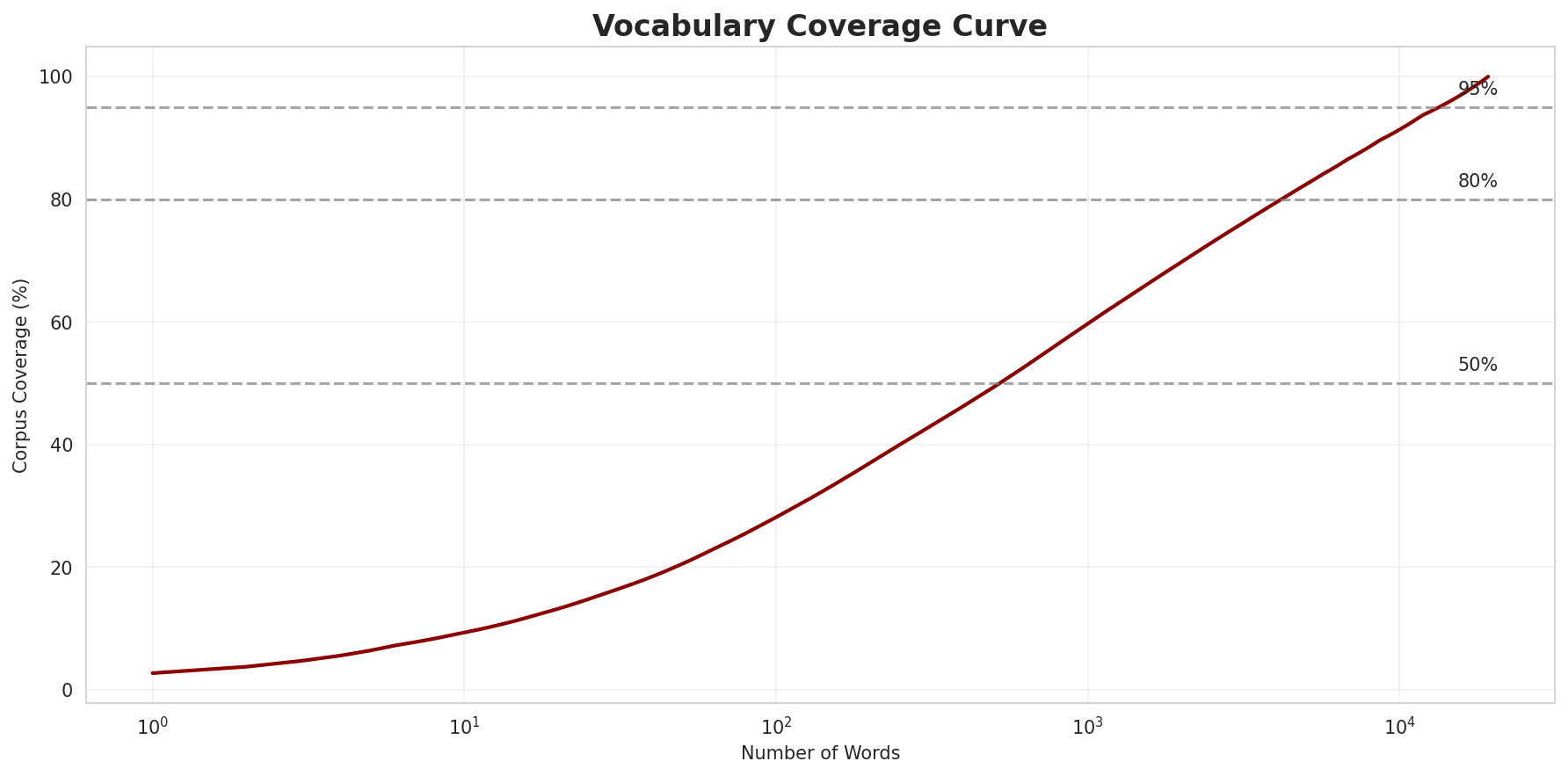

Zipf's Law Analysis

| Metric | Value |

|---|---|

| Zipf Coefficient | 1.0116 |

| R² (Goodness of Fit) | 0.991479 |

| Adherence Quality | excellent |

Coverage Analysis

| Top N Words | Coverage |

|---|---|

| Top 100 | 28.1% |

| Top 1,000 | 59.7% |

| Top 5,000 | 82.4% |

| Top 10,000 | 91.3% |

Key Findings

- Zipf Compliance: R²=0.9915 indicates excellent adherence to Zipf's law

- High Frequency Dominance: Top 100 words cover 28.1% of corpus

- Long Tail: The 9,260 words beyond the top 10,000 account for only the remaining 8.7% of tokens

5. Word Embeddings Evaluation

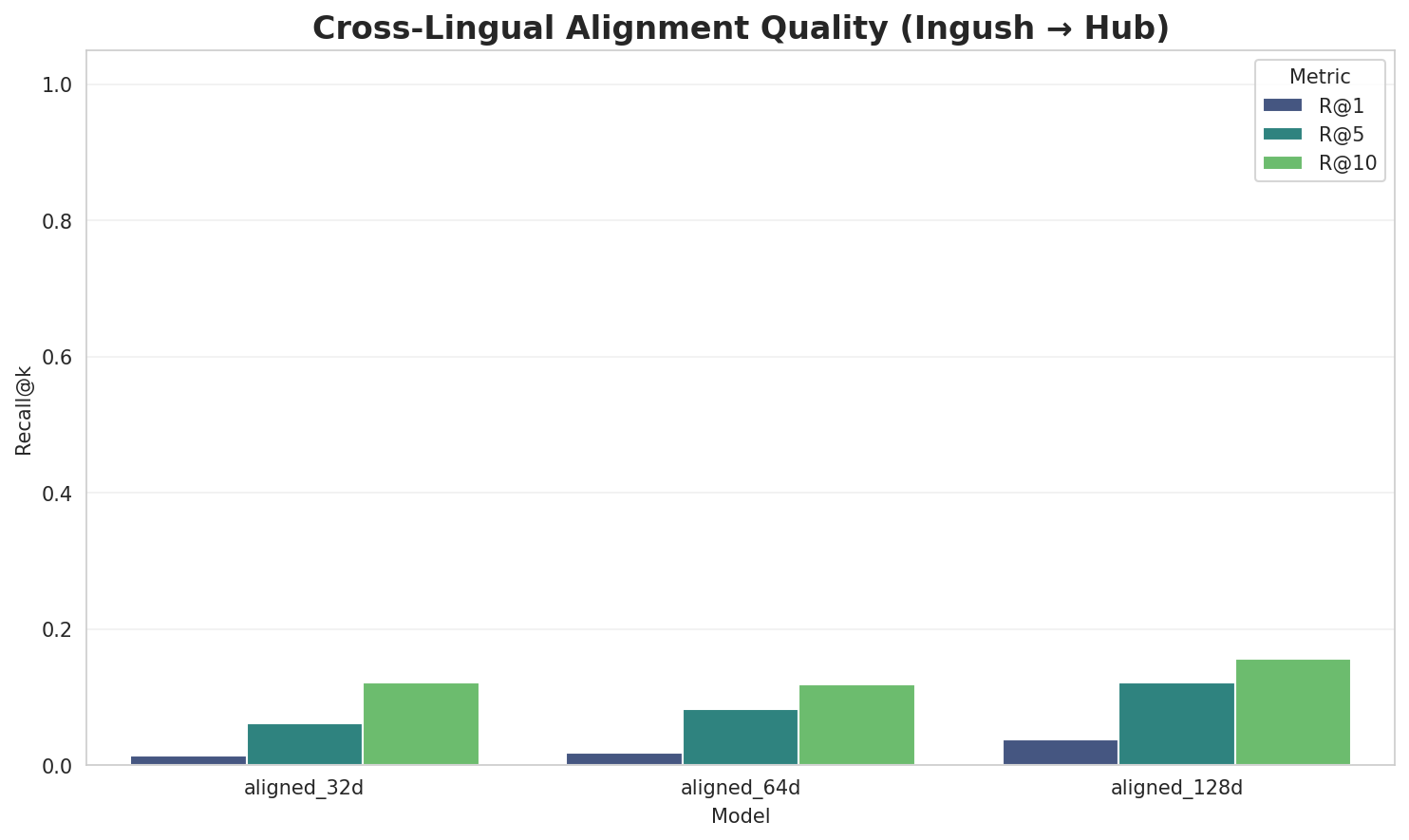

5.1 Cross-Lingual Alignment

5.2 Model Comparison

| Model | Dimension | Isotropy | Semantic Density | Alignment R@1 | Alignment R@10 |

|---|---|---|---|---|---|

| mono_32d | 32 | 0.7882 🏆 | 0.3485 | N/A | N/A |

| mono_64d | 64 | 0.3727 | 0.3608 | N/A | N/A |

| mono_128d | 128 | 0.0496 | 0.3296 | N/A | N/A |

| aligned_32d | 32 | 0.7882 | 0.3541 | 0.0140 | 0.1220 |

| aligned_64d | 64 | 0.3727 | 0.3473 | 0.0180 | 0.1180 |

| aligned_128d | 128 | 0.0496 | 0.3275 | 0.0380 | 0.1560 |

Key Findings

- Best Isotropy: mono_32d with 0.7882 (more uniform distribution)

- Semantic Density: Average pairwise similarity of 0.3446. Lower values indicate better semantic separation.

- Alignment Quality: Aligned models achieve up to 3.8% R@1 in cross-lingual retrieval.

- Recommendation: 128d aligned for best cross-lingual performance
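The report does not spell out how Alignment R@1 and R@10 are computed. Assuming they are the usual retrieval recall at k (the share of source words whose gold translation appears among the top k nearest target words), a minimal sketch looks like this. The function name and the toy data are hypothetical.

```python
def recall_at_k(ranked_candidates, gold, k):
    """Fraction of queries whose gold translation appears in the top-k candidates."""
    hits = sum(
        1
        for query, candidates in ranked_candidates.items()
        if gold[query] in candidates[:k]
    )
    return hits / len(ranked_candidates)

# Hypothetical retrieval output: source word -> target words ranked by similarity.
ranked = {
    "a": ["x", "y", "z"],
    "b": ["y", "x", "z"],
    "c": ["z", "y", "x"],
    "d": ["y", "z", "x"],
}
gold = {"a": "x", "b": "x", "c": "x", "d": "w"}

r_at_1 = recall_at_k(ranked, gold, 1)
r_at_3 = recall_at_k(ranked, gold, 3)
print(r_at_1, r_at_3)
```

Under this reading, the reported R@1 of 0.0380 for aligned_128d means that 3.8% of queries retrieve the correct translation as their nearest neighbor.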

6. Morphological Analysis (Experimental)

This section presents an automated morphological analysis derived from the statistical divergence between word-level and subword-level models. By analyzing where subword predictability spikes and where word-level coverage fails, we can infer linguistic structures without supervised data.

6.1 Productivity & Complexity

| Metric | Value | Interpretation | Recommendation |

|---|---|---|---|

| Productivity Index | 5.000 | High morphological productivity | Reliable analysis |

| Idiomaticity Gap | 1.160 | High formulaic/idiomatic content | - |

6.2 Affix Inventory (Productive Units)

These are the most productive prefixes and suffixes identified by sampling the vocabulary for global substitutability patterns. A unit is considered an affix if stripping it leaves a valid stem that appears in other contexts.
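The stripping criterion described above can be sketched as follows. This is a simplified illustration, not the pipeline's method: it treats "a valid stem" as "the stem is itself in the vocabulary", and `productive_suffixes`, `max_len`, and `min_stems` are hypothetical names. The toy vocabulary uses Latin-script placeholder words.

```python
from collections import defaultdict

def productive_suffixes(vocabulary, max_len=3, min_stems=2):
    """Return suffixes whose removal leaves a stem that is itself in the vocabulary."""
    vocab = set(vocabulary)
    stems_by_suffix = defaultdict(set)
    for word in vocab:
        # Try suffix lengths 1..max_len, always leaving at least one stem character.
        for n in range(1, min(max_len, len(word) - 1) + 1):
            stem, suffix = word[:-n], word[-n:]
            if stem in vocab:
                stems_by_suffix[suffix].add(stem)
    # Keep only suffixes that attach to at least `min_stems` distinct stems.
    return {
        suffix: sorted(stems)
        for suffix, stems in stems_by_suffix.items()
        if len(stems) >= min_stems
    }

toy_vocab = ["walk", "walks", "walked", "talk", "talks", "talked", "go"]
result = productive_suffixes(toy_vocab)
print(result)
```

The same loop applied to `word[:n]` and `word[n:]` would give a prefix inventory.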

Productive Prefixes

| Prefix | Examples |
|---|---|
| -д | дийхка, довта, даьржаи |
| -к | классификациям, кӏориганаькъан, кодекса |
| -с | сулак, сомали, статьяш |
| -б | бунак, берашта, белгалъеш |
| -м | мусульманами, мухтарова, мальта |
| -а | астрале, аьтта, амхарой |
| -т | тӏахьелхаш, тайпового, тийна |
| -хьа | хьалхадоахаш, хьалхашкарча, хьаста |

Productive Suffixes

| Suffix | Examples |
|---|---|
| -а | община, мухтарова, юххьанцарча |
| -и | мусульманами, жигули, экзотермически |
| -й | лезгинский, регулярный, амхарой |
| -аш | тӏахьелхаш, воагӏаш, яхараш |
| -ш | тӏахьелхаш, воагӏаш, яхараш |
| -е | астрале, йолае, ӏомаде |
| -ий | лезгинский, къарший, советский |
| -ча | юххьанцарча, йолалуча, хьогденнача |

6.3 Bound Stems (Lexical Roots)

Bound stems are high-frequency subword units that are semantically cohesive but rarely appear as standalone words. These often correspond to the 'core' of a word that requires inflection or derivation to be valid.

| Stem | Cohesion | Substitutability | Examples |
|---|---|---|---|
| ккха | 1.67x | 69 contexts | яккха, йоккха, аьккха |
| ькъа | 1.96x | 30 contexts | шаькъа, ӏаькъа, даькъа |
| хьар | 1.58x | 67 contexts | пхьар, хьарп, хьарме |
| амаш | 1.70x | 45 contexts | тамаш, замаш, ӏамаш |
| хача | 1.85x | 28 contexts | яхача, ухача, кхача |
| инна | 1.92x | 24 contexts | хинна, шинна, хиннар |
| аькъ | 1.78x | 30 contexts | наькъ, даькъ, шаькъа |
| аккх | 1.89x | 24 contexts | боаккх, воаккх, чаккхе |
| кхар | 1.70x | 33 contexts | кхарт, декхар, акхаре |
| ахьа | 1.38x | 55 contexts | кхахьа, арахьа, дахьаш |
| лгал | 1.78x | 21 contexts | кулгал, белгал, белгала |
| хинн | 1.93x | 16 contexts | хинна, хиннар, хиннад |

6.4 Affix Compatibility (Co-occurrence)

This table shows which prefixes and suffixes most frequently co-occur on the same stems, revealing the 'stacking' rules of the language's morphology.

| Prefix | Suffix | Frequency | Examples |
|---|---|---|---|
| -д | -а | 176 words | длина, дешагара |
| -к | -а | 148 words | кӏезигагӏа, кепагӏа |
| -б | -а | 110 words | баьлча, бийтта |
| -м | -а | 104 words | малхбоалега, мукха |
| -г | -а | 91 words | гӏалгӏайченна, галашкархоша |
| -т | -а | 80 words | тайпарча, тӏаргамара |
| -а | -а | 79 words | арадийна, арахецарца |
| -п | -а | 67 words | принципаца, произведенеша |
| -с | -а | 61 words | секретара, сша |
| -к | -и | 59 words | клавиши, критически |

6.5 Recursive Morpheme Segmentation

Using Recursive Hierarchical Substitutability, we decompose complex words into their constituent morphemes. This approach handles nested affixes (e.g., prefix-prefix-root-suffix).

| Word | Suggested Split | Confidence | Stem |
|---|---|---|---|
| шоллагӏчох | шоллагӏч-о-х | 7.5 | о |
| кепатехача | кепатех-а-ча | 7.5 | а |
| кулгалдара | кулгалд-а-ра | 7.5 | а |
| баьцакомар | баьцако-м-ар | 7.5 | м |
| дӏатиллай | дӏатилл-а-й | 7.5 | а |
| наькъахои | наькъах-о-и | 7.5 | о |
| исбахьлен | исбахь-л-ен | 7.5 | л |
| кириллицай | кириллиц-а-й | 7.5 | а |
| латтандаь | латтанд-а-ь | 7.5 | а |
| гӏалгӏашкара | гӏалгӏаш-ка-ра | 7.5 | ка |
| гӏоазотаца | гӏоазот-а-ца | 7.5 | а |
| моттигашкара | моттигаш-ка-ра | 7.5 | ка |
| хьаракаца | хьара-ка-ца | 7.5 | ка |
| малхбоалехьаи | малхбоалехь-а-и | 7.5 | а |
| республикаца | республик-а-ца | 7.5 | а |

6.6 Linguistic Interpretation

Automated Insight: Ingush shows high morphological productivity. The subword models are significantly more efficient than the word models, suggesting a rich system of affixation or compounding.

Note on Idiomaticity: The high Idiomaticity Gap suggests a large number of frequent multi-word expressions or formulaic sequences that are statistically distinct from their component parts.

7. Summary & Recommendations

Production Recommendations

| Component | Recommended | Rationale |
|---|---|---|
| Tokenizer | 64k BPE | Best compression (4.59x) |
| N-gram | 2-gram (subword) | Lowest perplexity (374) |
| Markov | Context-4 (word) | Highest predictability (98.8%) |
| Embeddings | 128d (aligned) | Best cross-lingual retrieval (3.8% R@1); 32d has the highest isotropy (0.7882) |

Appendix: Metrics Glossary & Interpretation Guide

This section provides definitions, intuitions, and guidance for interpreting the metrics used throughout this report.

Tokenizer Metrics

Compression Ratio

Definition: The ratio of characters to tokens (chars/token). Measures how efficiently the tokenizer represents text.

Intuition: Higher compression means fewer tokens needed to represent the same text, reducing sequence lengths for downstream models. A 3x compression means ~3 characters per token on average.

What to seek: Higher is generally better for efficiency, but extremely high compression may indicate overly aggressive merging that loses morphological information.
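As a small worked example of the definition above, the sketch below computes characters per token for one tokenized fragment and then checks that the Section 1 table is internally consistent: compression times total tokens should give roughly the same character count for every vocabulary size. The helper names are hypothetical; the token split is the 64k row of Sample 2, and the figures are copied from the results table.

```python
def compression_ratio(text, tokens):
    """Characters of raw text per token produced."""
    return len(text) / len(tokens)

def average_token_length(tokens):
    """Mean token length in characters, counting the word-boundary marker."""
    return sum(len(t) for t in tokens) / len(tokens)

text = "нотр-дам-де-пари"
tokens = ["▁нотр", "-", "дам", "-", "де", "-", "пари"]
ratio = compression_ratio(text, tokens)
avg_len = average_token_length(tokens)
print(round(ratio, 3), round(avg_len, 3))

# (compression, total tokens) for 8k, 16k, 32k, 64k, from the Section 1 table.
reported = [(3.549, 201_601), (3.935, 181_782), (4.258, 168_012), (4.589, 155_892)]
implied_chars = [compression * total for compression, total in reported]
print([round(c) for c in implied_chars])
```

All four rows imply an evaluation text of roughly 715 thousand characters, so the compression and token-count columns agree with each other.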

Average Token Length (Fertility)

Definition: Mean number of characters per token produced by the tokenizer.

Intuition: Reflects the granularity of tokenization. Longer tokens capture more context but may struggle with rare words; shorter tokens are more flexible but increase sequence length.

What to seek: Balance between 2-5 characters for most languages. Arabic/morphologically-rich languages may benefit from slightly longer tokens.

Unknown Token Rate (OOV Rate)

Definition: Percentage of tokens that map to the unknown/UNK token, indicating words the tokenizer cannot represent.

Intuition: Lower OOV means better vocabulary coverage. High OOV indicates the tokenizer encounters many unseen character sequences.

What to seek: Below 1% is excellent; below 5% is acceptable. BPE tokenizers typically achieve very low OOV due to subword fallback.

N-gram Model Metrics

Perplexity

Definition: Measures how "surprised" the model is by test data. Mathematically: 2^(cross-entropy). Lower values indicate better prediction.

Intuition: If perplexity is 100, the model is as uncertain as if choosing uniformly among 100 options at each step. A perplexity of 10 means effectively choosing among 10 equally likely options.

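What to seek: Lower is better. With enough training data, perplexity usually decreases with larger n-grams (more context); on a small corpus, sparsity can push it up instead, as the subword results in Section 2 show. Values vary widely by language and corpus size.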

Entropy

Definition: Average information content (in bits) needed to encode the next token given the context. Related to perplexity: perplexity = 2^entropy.

Intuition: High entropy means high uncertainty/randomness; low entropy means predictable patterns. Natural language typically has entropy between 1-4 bits per character.

What to seek: Lower entropy indicates more predictable text patterns. Entropy tends to decrease as n-gram size increases when the corpus is large enough to support the longer contexts.
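The relation perplexity = 2^entropy can be checked directly against the Section 2 table. This is a minimal sketch with hypothetical helper names; the (entropy, perplexity) pairs are copied from the report, and small differences come from the entropy being rounded to two decimals.

```python
import math

def perplexity_from_entropy(entropy_bits):
    """Perplexity implied by an entropy given in bits."""
    return 2.0 ** entropy_bits

def entropy_from_perplexity(perplexity):
    """Entropy in bits implied by a perplexity."""
    return math.log2(perplexity)

# (entropy, reported perplexity) pairs from the N-gram results table.
reported = [(8.55, 374), (11.40, 2700), (11.09, 2178), (15.12, 35588)]
for entropy_bits, reported_ppl in reported:
    implied = perplexity_from_entropy(entropy_bits)
    print(f"H={entropy_bits:5.2f}  implied={implied:9.1f}  reported={reported_ppl}")
```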

Coverage (Top-K)

Definition: Percentage of corpus occurrences explained by the top K most frequent n-grams.

Intuition: High coverage with few patterns indicates repetitive/formulaic text; low coverage suggests diverse vocabulary usage.

What to seek: Depends on use case. For language modeling, moderate coverage (40-60% with top-1000) is typical for natural text.
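Top-K coverage follows directly from n-gram counts. The sketch below is illustrative only: `ngrams` and `top_k_coverage` are hypothetical helpers, and the token sequence is a toy example rather than corpus data.

```python
from collections import Counter

def ngrams(tokens, n):
    """All contiguous n-token windows, as tuples."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]

def top_k_coverage(counts, k):
    """Share of all n-gram occurrences accounted for by the k most frequent n-grams."""
    total = sum(counts.values())
    covered = sum(count for _, count in counts.most_common(k))
    return covered / total

tokens = "a b a b a c a b".split()
counts = Counter(ngrams(tokens, 2))
top1 = top_k_coverage(counts, 1)
top2 = top_k_coverage(counts, 2)
print(round(top1, 3), round(top2, 3))
```

The same function applied to unigram counts gives the vocabulary coverage figures reported in Section 4.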

Markov Chain Metrics

Average Entropy

Definition: Mean entropy across all contexts, measuring average uncertainty in next-word prediction.

Intuition: Lower entropy means the model is more confident about what comes next. Context-1 has high entropy (many possible next words); Context-4 has low entropy (few likely continuations).

What to seek: Decreasing entropy with larger context sizes. Very low entropy (<0.1) indicates highly deterministic transitions.

Branching Factor

Definition: Average number of unique next tokens observed for each context.

Intuition: High branching = many possible continuations (flexible but uncertain); low branching = few options (predictable but potentially repetitive).

What to seek: Branching factor should decrease with context size. Values near 1.0 indicate nearly deterministic chains.
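Average entropy and branching factor can both be read off a table of next-token counts per context. The following sketch assumes an unweighted mean over contexts, which the report does not confirm; the function names and toy sequence are hypothetical.

```python
import math
from collections import Counter, defaultdict

def transition_counts(tokens, context_size):
    """Count next tokens for every context of `context_size` tokens."""
    table = defaultdict(Counter)
    for i in range(len(tokens) - context_size):
        context = tuple(tokens[i:i + context_size])
        table[context][tokens[i + context_size]] += 1
    return table

def branching_factor(table):
    """Mean number of distinct next tokens per context."""
    return sum(len(followers) for followers in table.values()) / len(table)

def average_entropy(table):
    """Unweighted mean of per-context next-token entropy, in bits."""
    def entropy(followers):
        total = sum(followers.values())
        return -sum((c / total) * math.log2(c / total) for c in followers.values())
    return sum(entropy(followers) for followers in table.values()) / len(table)

tokens = "a b a c a b a c".split()
table = transition_counts(tokens, context_size=1)
bf = branching_factor(table)
avg_h = average_entropy(table)
print(round(bf, 3), round(avg_h, 3))
```

In the toy sequence only the context `a` has two possible continuations, so the branching factor is 4/3 and the average entropy is 1/3 bit.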

Predictability

Definition: Derived metric: (1 - normalized_entropy) × 100%. Indicates how deterministic the model's predictions are.

Intuition: 100% predictability means the next word is always certain; 0% means completely random. Real text falls between these extremes.

What to seek: Higher predictability for text generation quality, but too high (>98%) may produce repetitive output.
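The report does not say how the entropy is normalized. The values in the Section 3 table are consistent with clipping the average entropy to the range 0 to 1 bit before applying the formula; the sketch below uses that reading, which is an inference from the table and not a confirmed definition.

```python
def predictability(avg_entropy_bits):
    """(1 - normalized entropy) * 100, assuming normalization clips entropy to [0, 1]."""
    normalized = min(max(avg_entropy_bits, 0.0), 1.0)
    return (1.0 - normalized) * 100.0

# (average entropy, reported predictability %) pairs from the Markov results table.
rows = [(0.6550, 34.5), (1.2189, 0.0), (0.1442, 85.6), (0.8323, 16.8), (0.0120, 98.8)]
for avg_entropy, reported_pct in rows:
    print(f"H={avg_entropy:.4f}  computed={predictability(avg_entropy):5.1f}  reported={reported_pct}")
```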

Vocabulary & Zipf's Law Metrics

Zipf's Coefficient

Definition: The slope of the log-log plot of word frequency vs. rank. Zipf's law predicts this should be approximately -1. The Zipf Coefficient in Section 4 (1.0116) is reported as the positive magnitude of this slope.

Intuition: A coefficient near -1 indicates the corpus follows natural language patterns where a few words are very common and most words are rare.

What to seek: Values between -0.8 and -1.2 indicate healthy natural language distribution. Deviations may suggest domain-specific or artificial text.

R² (Coefficient of Determination)

Definition: Measures how well the linear fit explains the frequency-rank relationship. Ranges from 0 to 1.

Intuition: R² near 1.0 means the data closely follows Zipf's law; lower values indicate deviation from expected word frequency patterns.

What to seek: R² > 0.95 is excellent; > 0.99 indicates near-perfect Zipf adherence typical of large natural corpora.
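Both Zipf metrics come from one least-squares line fitted to log frequency against log rank. This is a minimal sketch under that assumption; `zipf_fit` is a hypothetical name, and the input is synthetic data that follows Zipf's law exactly, not the Ingush vocabulary.

```python
import math

def zipf_fit(frequencies):
    """Least-squares fit of log(frequency) on log(rank); returns (slope, r_squared)."""
    freqs = sorted(frequencies, reverse=True)
    xs = [math.log(rank) for rank in range(1, len(freqs) + 1)]
    ys = [math.log(f) for f in freqs]
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    r_squared = (sxy * sxy) / (sxx * syy)
    return slope, r_squared

# Synthetic frequencies proportional to 1/rank.
synthetic = [1000.0 / rank for rank in range(1, 201)]
slope, r_squared = zipf_fit(synthetic)
print(round(slope, 4), round(r_squared, 4))
```

A coefficient reported as a positive number, as in Section 4, corresponds to `-slope` here.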

Vocabulary Coverage

Definition: Cumulative percentage of corpus tokens accounted for by the top N words.

Intuition: Shows how concentrated word usage is. If top-100 words cover 50% of text, the corpus relies heavily on common words.

What to seek: Top-100 covering 30-50% is typical. Higher coverage indicates more repetitive text; lower suggests richer vocabulary.

Word Embedding Metrics

Isotropy

Definition: Measures how uniformly distributed vectors are in the embedding space. Computed as the ratio of minimum to maximum singular values.

Intuition: High isotropy (near 1.0) means vectors spread evenly in all directions; low isotropy means vectors cluster in certain directions, reducing expressiveness.

What to seek: Higher isotropy generally indicates better-quality embeddings. Values > 0.1 are reasonable; > 0.3 is good. Lower-dimensional embeddings tend to have higher isotropy.

Average Norm

Definition: Mean magnitude (L2 norm) of word vectors in the embedding space.

Intuition: Indicates the typical "length" of vectors. Consistent norms suggest stable training; high variance may indicate some words are undertrained.

What to seek: Relatively consistent norms across models. The absolute value matters less than consistency (low std deviation).

Cosine Similarity

Definition: Measures angular similarity between vectors, ranging from -1 (opposite) to 1 (identical direction).

Intuition: Words with similar meanings should have high cosine similarity. This is the standard metric for semantic relatedness in embeddings.

What to seek: Semantically related words should score > 0.5; unrelated words should be near 0. Synonyms often score > 0.7.
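For reference, cosine similarity needs only a dot product and two norms. The sketch below uses plain Python lists and toy vectors; it is not tied to the embedding files in this repository.

```python
import math

def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length, non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

same = cosine_similarity([1.0, 2.0], [2.0, 4.0])       # parallel vectors
orthogonal = cosine_similarity([1.0, 0.0], [0.0, 1.0])  # perpendicular vectors
opposite = cosine_similarity([1.0, 0.0], [-1.0, 0.0])   # opposite directions
print(same, orthogonal, opposite)
```

Averaging this value over sampled word pairs gives a figure of the kind reported as Semantic Density in Section 5, assuming that column is a mean pairwise cosine similarity.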

t-SNE Visualization

Definition: t-Distributed Stochastic Neighbor Embedding - a dimensionality reduction technique that preserves local structure for visualization.

Intuition: Clusters in t-SNE plots indicate groups of semantically related words. Spread indicates vocabulary diversity; tight clusters suggest semantic coherence.

What to seek: Meaningful clusters (e.g., numbers together, verbs together). Avoid over-interpreting distances - t-SNE preserves local, not global, structure.

General Interpretation Guidelines

- Compare within model families: Metrics are most meaningful when comparing models of the same type (e.g., 8k vs 64k tokenizer).

- Consider trade-offs: Better performance on one metric often comes at the cost of another (e.g., compression vs. OOV rate).

- Context matters: Optimal values depend on downstream tasks. Text generation may prioritize different metrics than classification.

- Corpus influence: All metrics are influenced by corpus characteristics. Wikipedia text differs from social media or literature.

- Language-specific patterns: Morphologically rich languages (like Arabic) may show different optimal ranges than analytic languages.

Visualizations Index

| Visualization | Description |

|---|---|

| Tokenizer Compression | Compression ratios by vocabulary size |

| Tokenizer Fertility | Average token length by vocabulary |

| Tokenizer OOV | Unknown token rates |

| Tokenizer Total Tokens | Total tokens by vocabulary |

| N-gram Perplexity | Perplexity by n-gram size |

| N-gram Entropy | Entropy by n-gram size |

| N-gram Coverage | Top pattern coverage |

| N-gram Unique | Unique n-gram counts |

| Markov Entropy | Entropy by context size |

| Markov Branching | Branching factor by context |

| Markov Contexts | Unique context counts |

| Zipf's Law | Frequency-rank distribution with fit |

| Vocab Frequency | Word frequency distribution |

| Top 20 Words | Most frequent words |

| Vocab Coverage | Cumulative coverage curve |

| Embedding Isotropy | Vector space uniformity |

| Embedding Norms | Vector magnitude distribution |

| Embedding Similarity | Word similarity heatmap |

| Nearest Neighbors | Similar words for key terms |

| t-SNE Words | 2D word embedding visualization |

| t-SNE Sentences | 2D sentence embedding visualization |

| Position Encoding | Encoding method comparison |

| Model Sizes | Storage requirements |

| Performance Dashboard | Comprehensive performance overview |

About This Project

Data Source

Models trained on wikipedia-monthly - a monthly snapshot of Wikipedia articles across 300+ languages.

Project

A project by Wikilangs - Open-source NLP models for every Wikipedia language.

Maintainer

Citation

If you use these models in your research, please cite:

@misc{wikilangs2025,

author = {Kamali, Omar},

title = {Wikilangs: Open NLP Models for Wikipedia Languages},

year = {2025},

doi = {10.5281/zenodo.18073153},

publisher = {Zenodo},

url = {https://huggingface.co/wikilangs},

institution = {Omneity Labs}

}

License

MIT License - Free for academic and commercial use.

Links

- 🌐 Website: wikilangs.org

- 🤗 Models: huggingface.co/wikilangs

- 📊 Data: wikipedia-monthly

- 👤 Author: Omar Kamali

- 🤝 Sponsor: Featherless AI

Generated by Wikilangs Models Pipeline

Report Date: 2026-01-10 04:22:21