Khmer - Wikilangs Models

Comprehensive Research Report & Full Ablation Study

This repository contains NLP models trained and evaluated by Wikilangs, specifically on Khmer Wikipedia data. We analyze tokenizers, n-gram models, Markov chains, vocabulary statistics, and word embeddings.

📋 Repository Contents

Models & Assets

- Tokenizers (8k, 16k, 32k, 64k)

- N-gram models (2, 3, 4, 5-gram)

- Markov chains (context of 1, 2, 3, 4 and 5)

- Subword N-gram and Markov chains

- Embeddings in various sizes and dimensions (aligned and unaligned)

- Language Vocabulary

- Language Statistics

Analysis and Evaluation

- 1. Tokenizer Evaluation

- 2. N-gram Model Evaluation

- 3. Markov Chain Evaluation

- 4. Vocabulary Analysis

- 5. Word Embeddings Evaluation

- 6. Morphological Analysis (Experimental)

- 7. Summary & Recommendations

- Metrics Glossary

- Visualizations Index

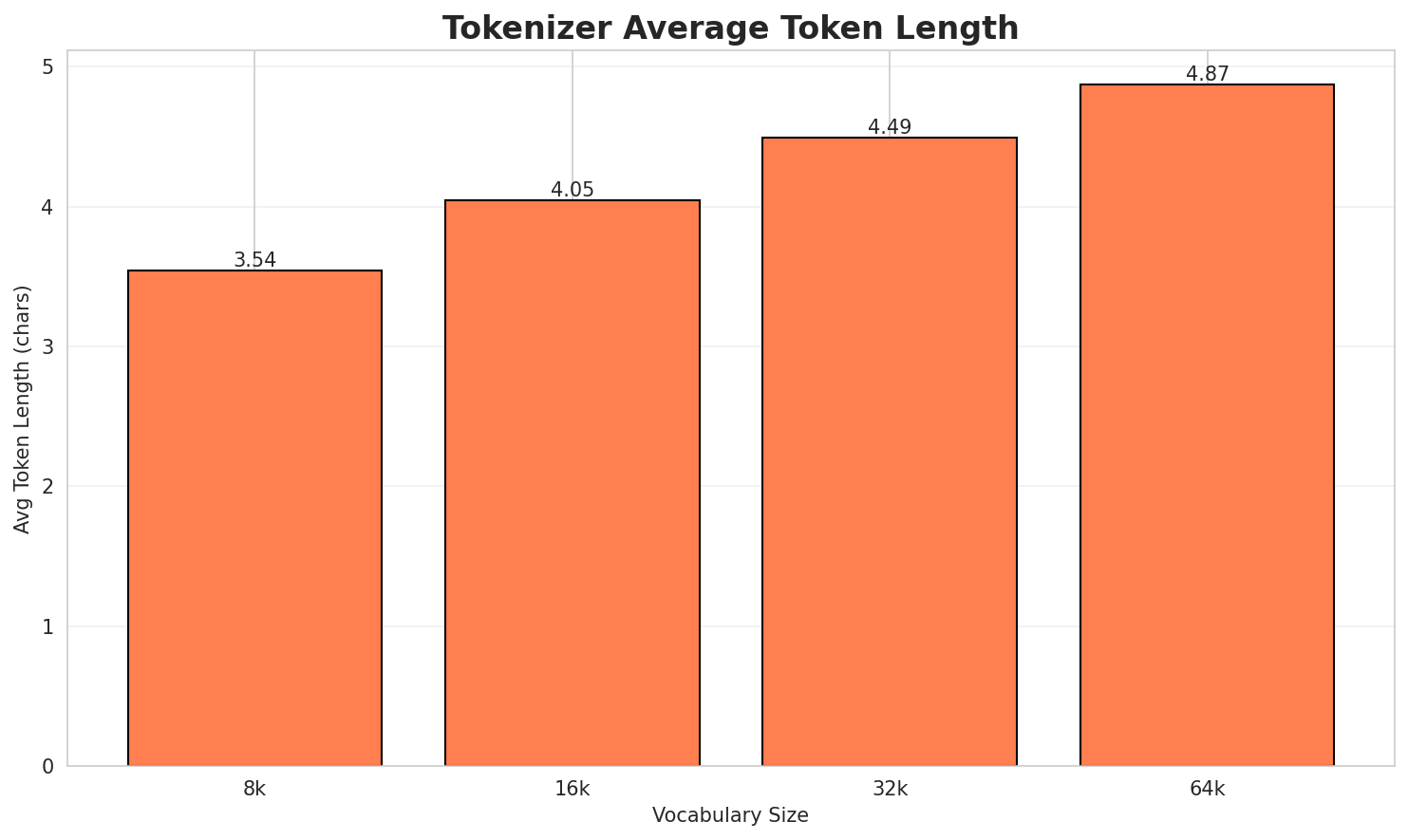

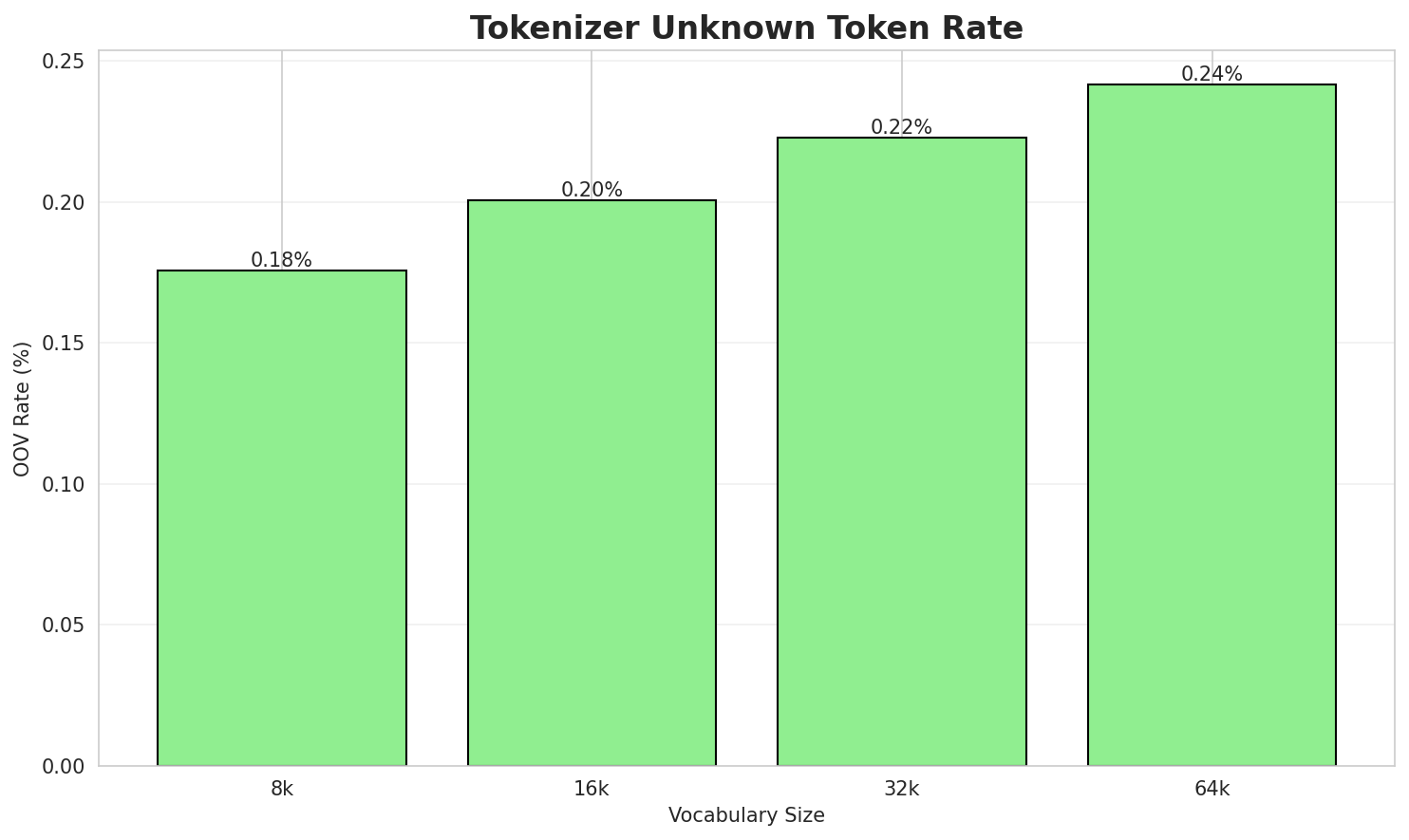

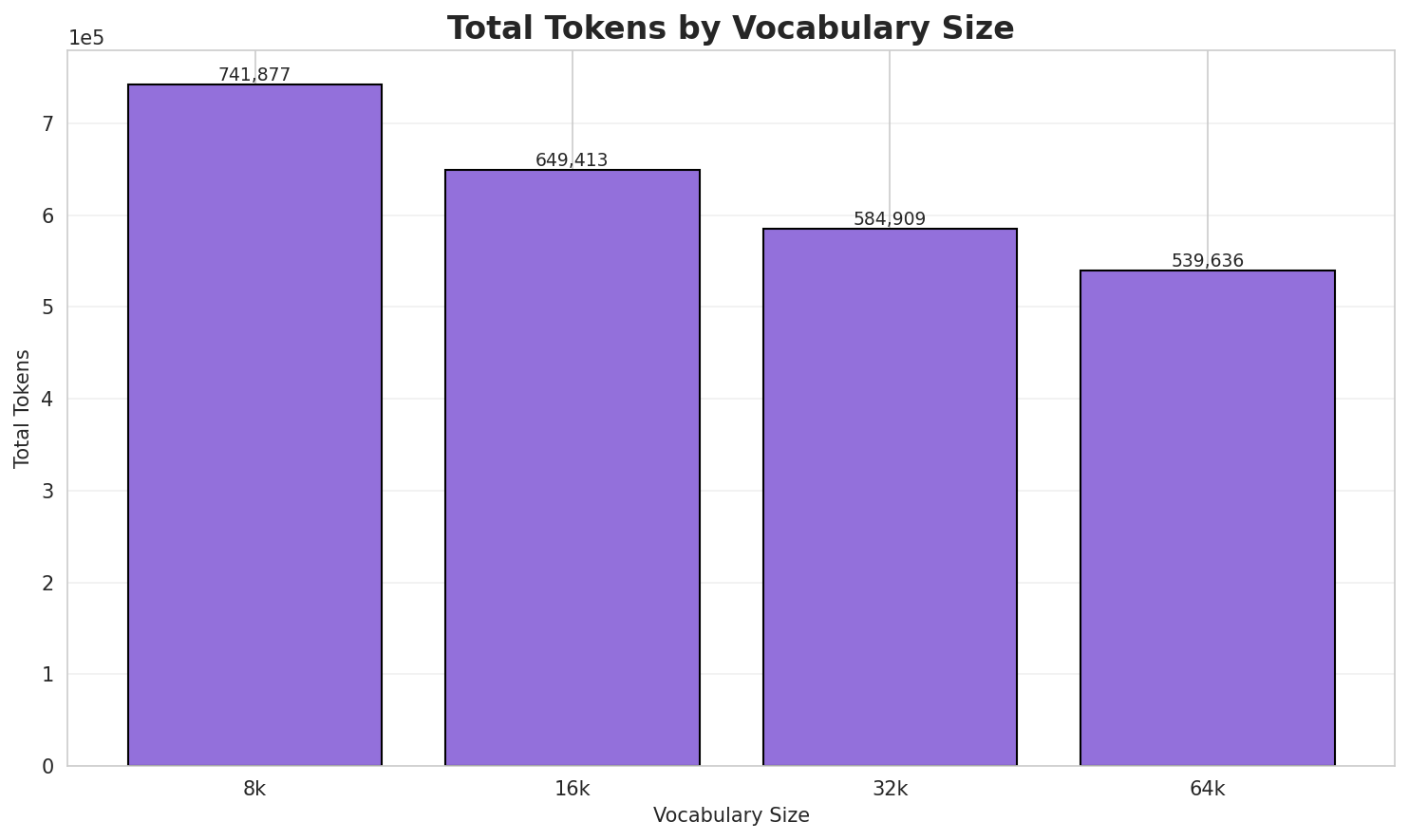

1. Tokenizer Evaluation

Results

| Vocab Size | Compression | Avg Token Len | UNK Rate | Total Tokens |

|---|---|---|---|---|

| 8k | 3.556x | 3.54 | 0.1756% | 741,877 |

| 16k | 4.063x | 4.05 | 0.2006% | 649,413 |

| 32k | 4.511x | 4.49 | 0.2228% | 584,909 |

| 64k | 4.889x 🏆 | 4.87 | 0.2415% | 539,636 |
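As a quick consistency check, compression (characters per token) multiplied by total tokens should recover roughly the same character count for every vocabulary size, assuming all four tokenizers were evaluated on the same text. The sketch below uses only the numbers from the table above; the variable names are illustrative.

```python
# Rows copied from the results table: (vocab, compression, total_tokens).
rows = [
    ("8k", 3.556, 741_877),
    ("16k", 4.063, 649_413),
    ("32k", 4.511, 584_909),
    ("64k", 4.889, 539_636),
]

# compression = characters / tokens, so characters = compression * tokens.
implied_chars = {vocab: ratio * tokens for vocab, ratio, tokens in rows}

for vocab, chars in implied_chars.items():
    print(f"{vocab}: ~{chars:,.0f} characters")

# Relative spread between the largest and smallest estimate.
lo, hi = min(implied_chars.values()), max(implied_chars.values())
spread = (hi - lo) / lo
print(f"relative spread: {spread:.4%}")
```

All four estimates land close to 2.64 million characters, which suggests the rows are directly comparable.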

Tokenization Examples

Below are sample sentences tokenized with each vocabulary size:

Sample 1: សាវតារ ភូមិតាបឹបនេះយើងពុំបានជ្រាបច្បាស់ទេ ។ តែយើងបានដឹងថាក្នុងភូមិនេះមានទួលកប់ខ្...

| Vocab | Tokens | Count |
|---|---|---|
| 8k | ▁សាវ តារ ▁ភូមិ ត ាប ឹប នេះ យើង ពុំ បាន ... (+24 more) | 34 |
| 16k | ▁សាវ តារ ▁ភូមិ តាប ឹប នេះ យើង ពុំ បាន ជ្រាប ... (+21 more) | 31 |
| 32k | ▁សាវ តារ ▁ភូមិ តាប ឹប នេះ យើង ពុំបាន ជ្រាប ច្បាស់ ... (+17 more) | 27 |
| 64k | ▁សាវតារ ▁ភូមិ តាប ឹប នេះយើង ពុំបាន ជ្រាប ច្បាស់ទេ ▁។ ▁តែ ... (+13 more) | 23 |

Sample 2: ៖ ឃុំស៊ុង ឃុំមានជ័យ ឃុំសំឡូត ឃុំកំពង់ល្ពៅ ឃុំអូរសំរិល ឃុំតាតោក ឃុំតាសាញ សូមមើលផង...

| Vocab | Tokens | Count |
|---|---|---|
| 8k | ▁៖ ▁ឃុំ ស៊ ុង ▁ឃុំ មានជ័យ ▁ឃុំ សំ ឡ ូត ... (+18 more) | 28 |
| 16k | ▁៖ ▁ឃុំ ស៊ ុង ▁ឃុំមានជ័យ ▁ឃុំ សំឡូត ▁ឃុំកំពង់ ល ្ពៅ ... (+13 more) | 23 |
| 32k | ▁៖ ▁ឃុំ ស៊ុង ▁ឃុំមានជ័យ ▁ឃុំ សំឡូត ▁ឃុំកំពង់ ល្ពៅ ▁ឃុំអូរ សំរ ... (+10 more) | 20 |
| 64k | ▁៖ ▁ឃុំ ស៊ុង ▁ឃុំមានជ័យ ▁ឃុំ សំឡូត ▁ឃុំកំពង់ល្ពៅ ▁ឃុំអូរ សំរិល ▁ឃុំ ... (+7 more) | 17 |

Sample 3: ម៉ៃឃើលអាចសំដៅលើ៖ ម៉ៃឃើល ហ្វារ៉ាដេយ ម៉ៃឃើល ចាកសាន់ ម៉ៃឃើល វីកឃឺវី

| Vocab | Tokens | Count |
|---|---|---|
| 8k | ▁ម៉ ៃ ឃ ើល អាច សំដៅលើ ៖ ▁ម៉ ៃ ឃ ... (+22 more) | 32 |
| 16k | ▁ម៉ៃឃើល អាច សំដៅលើ៖ ▁ម៉ៃឃើល ▁ហ ្វារ ៉ា ដ េយ ▁ម៉ៃឃើល ... (+8 more) | 18 |
| 32k | ▁ម៉ៃឃើល អាចសំដៅលើ៖ ▁ម៉ៃឃើល ▁ហ្វារ ៉ា ដេយ ▁ម៉ៃឃើល ▁ចាក សាន់ ▁ម៉ៃឃើល ... (+4 more) | 14 |
| 64k | ▁ម៉ៃឃើល អាចសំដៅលើ៖ ▁ម៉ៃឃើល ▁ហ្វារ៉ាដេយ ▁ម៉ៃឃើល ▁ចាក សាន់ ▁ម៉ៃឃើល ▁វីក ឃឺ ... (+1 more) | 11 |

Key Findings

- Best Compression: 64k achieves 4.889x compression

- Lowest UNK Rate: 8k with 0.1756% unknown tokens

- Trade-off: Larger vocabularies improve compression but increase model size

- Recommendation: 32k vocabulary provides optimal balance for production use

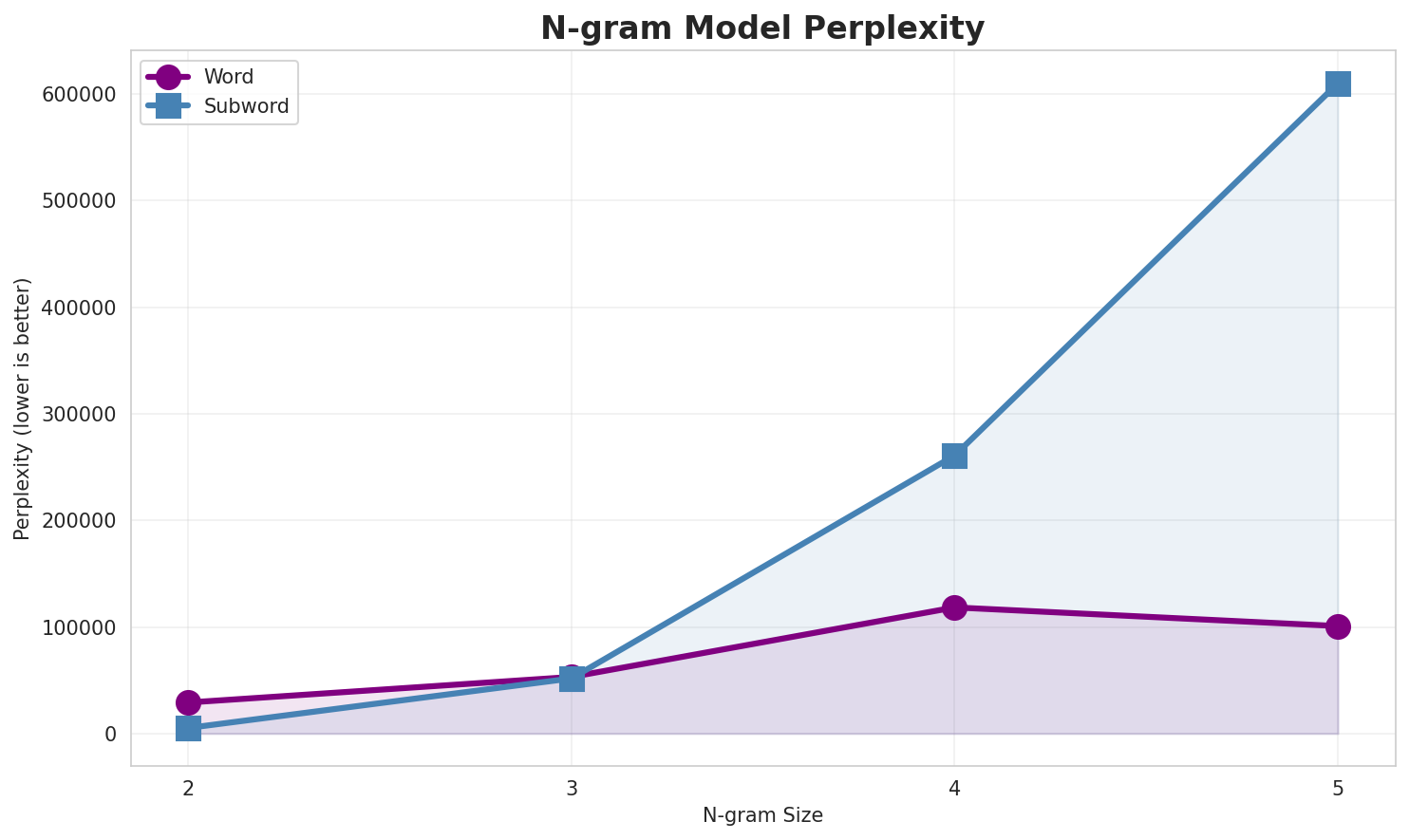

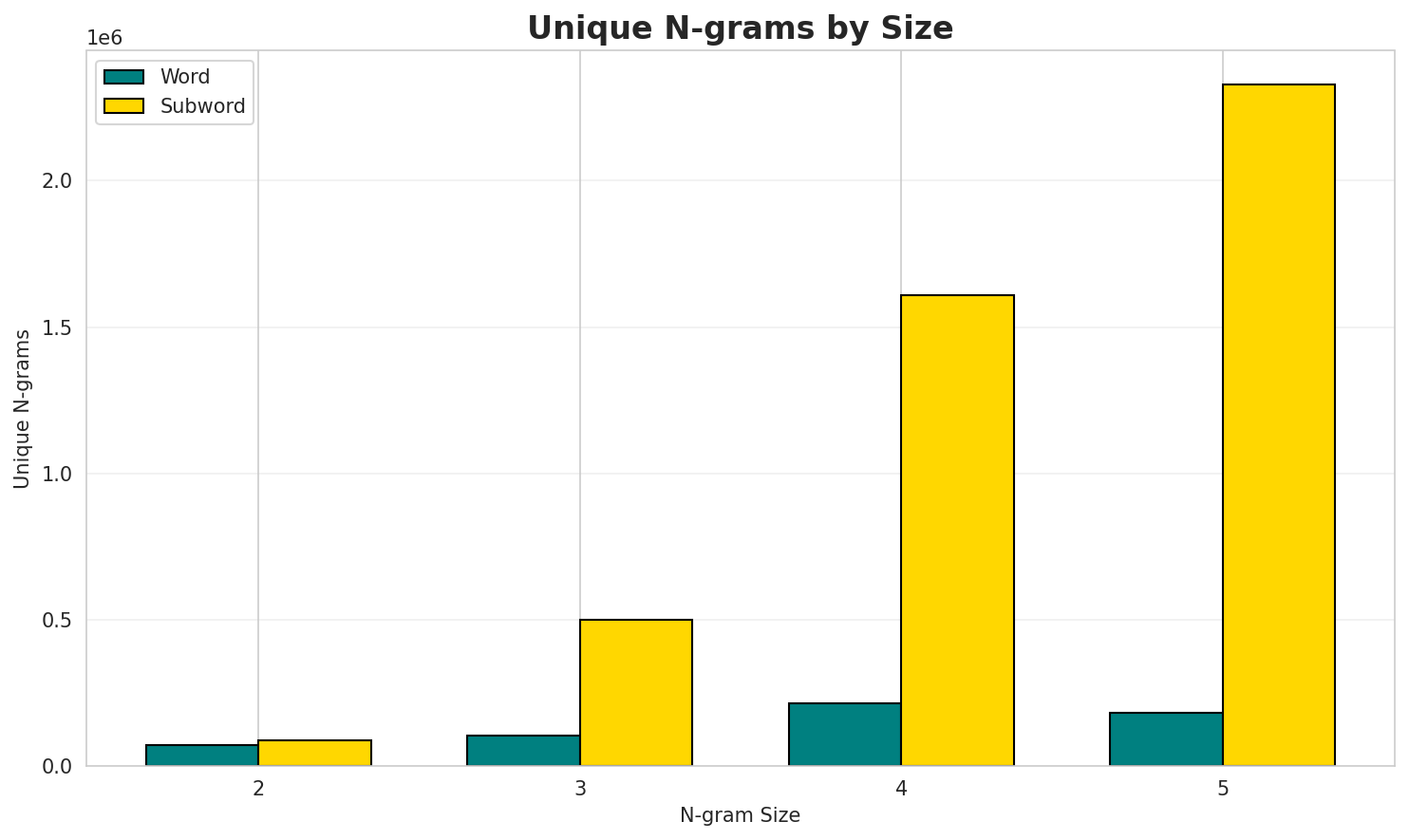

2. N-gram Model Evaluation

Results

| N-gram | Variant | Perplexity | Entropy | Unique N-grams | Top-100 Coverage | Top-1000 Coverage |

|---|---|---|---|---|---|---|

| 2-gram | Word | 29,102 | 14.83 | 72,055 | 8.9% | 24.7% |

| 2-gram | Subword | 5,212 🏆 | 12.35 | 88,256 | 22.4% | 57.4% |

| 3-gram | Word | 53,084 | 15.70 | 103,452 | 6.4% | 17.4% |

| 3-gram | Subword | 51,695 | 15.66 | 499,965 | 8.2% | 24.3% |

| 4-gram | Word | 118,314 | 16.85 | 213,260 | 4.3% | 12.7% |

| 4-gram | Subword | 260,843 | 17.99 | 1,609,249 | 4.4% | 12.4% |

| 5-gram | Word | 100,822 | 16.62 | 180,877 | 4.2% | 13.0% |

| 5-gram | Subword | 609,986 | 19.22 | 2,327,771 | 3.0% | 8.0% |

Top 5 N-grams by Size

2-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | example example | 21,905 |
| 2 | of the | 4,908 |
| 3 | ត្រូវ បាន | 3,687 |
| 4 | នៅ ក្នុង | 3,249 |
| 5 | ព្រះ អង្គ | 2,574 |

3-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | example example example | 10,790 |
| 2 | villageភូមិ villageភូមិ villageភូមិ | 1,612 |
| 3 | ត្រូវ បាន គេ | 1,169 |
| 4 | ៤៩៣ ប្រ ក | 995 |
| 5 | សាសនា ព្រះពុទ្ធសាសនា វត្ត | 640 |

4-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | example example example example | 1,615 |
| 2 | villageភូមិ villageភូមិ villageភូមិ villageភូមិ | 1,380 |
| 3 | អនុវិទ្យាល័យ សាសនា ព្រះពុទ្ធសាសនា វត្ត | 558 |
| 4 | បឋមសិក្សា អនុវិទ្យាល័យ សាសនា ព្រះពុទ្ធសាសនា | 536 |
| 5 | អប់រំ បឋមសិក្សា អនុវិទ្យាល័យ សាសនា | 535 |

5-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | villageភូមិ villageភូមិ villageភូមិ villageភូមិ villageភូមិ | 1,151 |
| 2 | អប់រំ បឋមសិក្សា អនុវិទ្យាល័យ សាសនា ព្រះពុទ្ធសាសនា | 535 |
| 3 | បឋមសិក្សា អនុវិទ្យាល័យ សាសនា ព្រះពុទ្ធសាសនា វត្ត | 528 |
| 4 | e លិច w ត្បូង s | 455 |
| 5 | n កើត e លិច w | 454 |

2-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | ។ _ | 199,513 |
| 2 | បា ន | 145,143 |
| 3 | ង _ | 128,650 |
| 4 | កា រ | 123,593 |
| 5 | e _ | 121,925 |

3-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | _ និ ង | 83,168 |
| 2 | _ ។ _ | 67,258 |
| 3 | រ ប ស់ | 64,716 |
| 4 | _ ដែ ល | 42,564 |
| 5 | _ t h | 39,828 |

4-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | m p l e | 34,032 |
| 2 | p l e _ | 33,694 |
| 3 | _ e x a | 33,362 |
| 4 | a m p l | 33,310 |
| 5 | e x a m | 33,310 |

5-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | _ e x a m | 33,301 |
| 2 | a m p l e | 33,292 |
| 3 | e x a m p | 33,273 |
| 4 | x a m p l | 33,273 |
| 5 | m p l e _ | 33,105 |

Key Findings

- Best Perplexity: 2-gram (subword) with 5,212

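- Entropy Trend: Increases with n-gram size in this evaluation (word: 14.83 at 2-gram, 16.85 at 4-gram, 16.62 at 5-gram; subword: 12.35 at 2-gram to 19.22 at 5-gram), likely reflecting sparse higher-order counts

- Coverage: Top-1000 patterns cover between 8.0% (5-gram subword) and 57.4% (2-gram subword) of the corpus

- Recommendation: 2-gram (subword) gives the best predictive performance measured here; the 4-gram and 5-gram models have substantially higher perplexity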

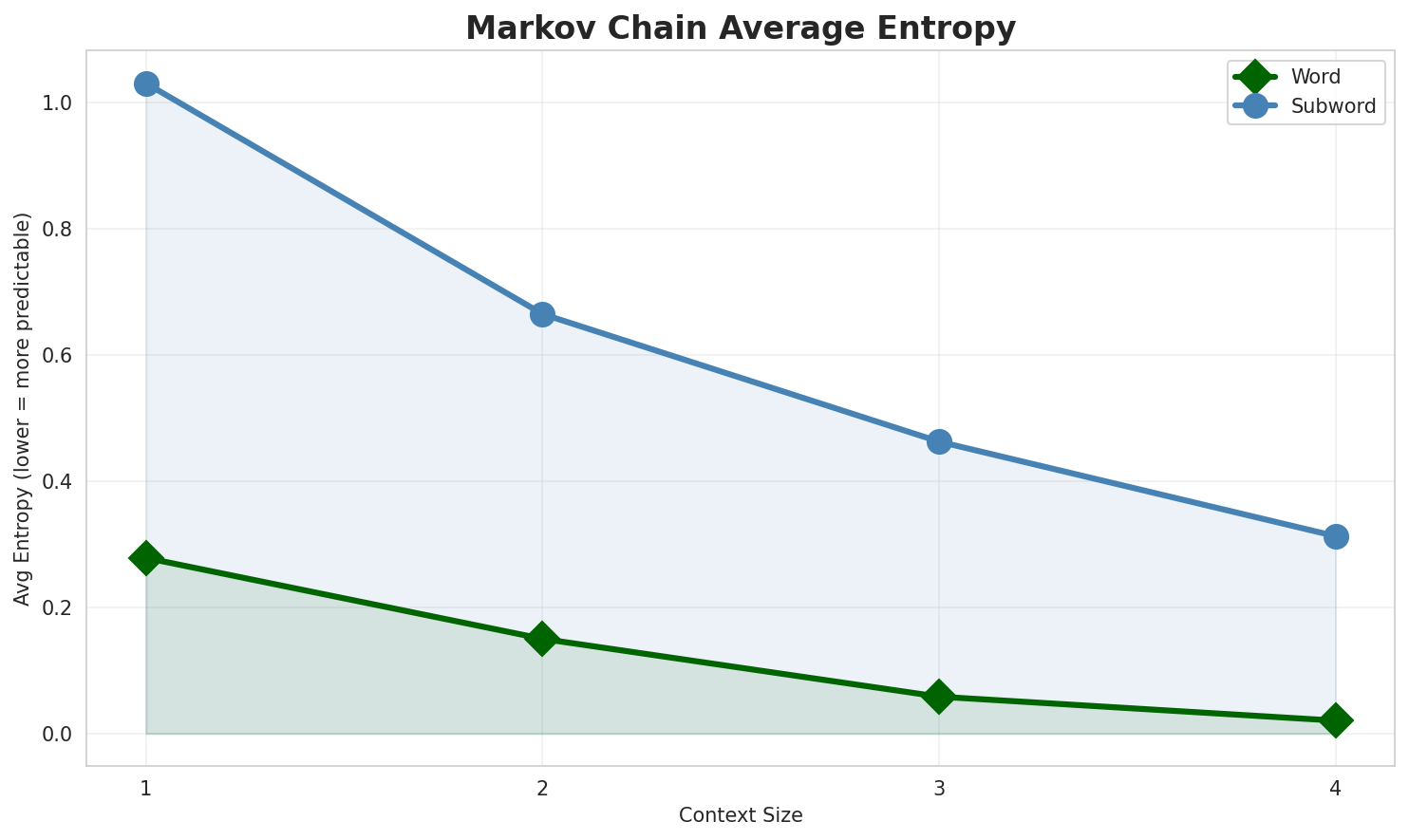

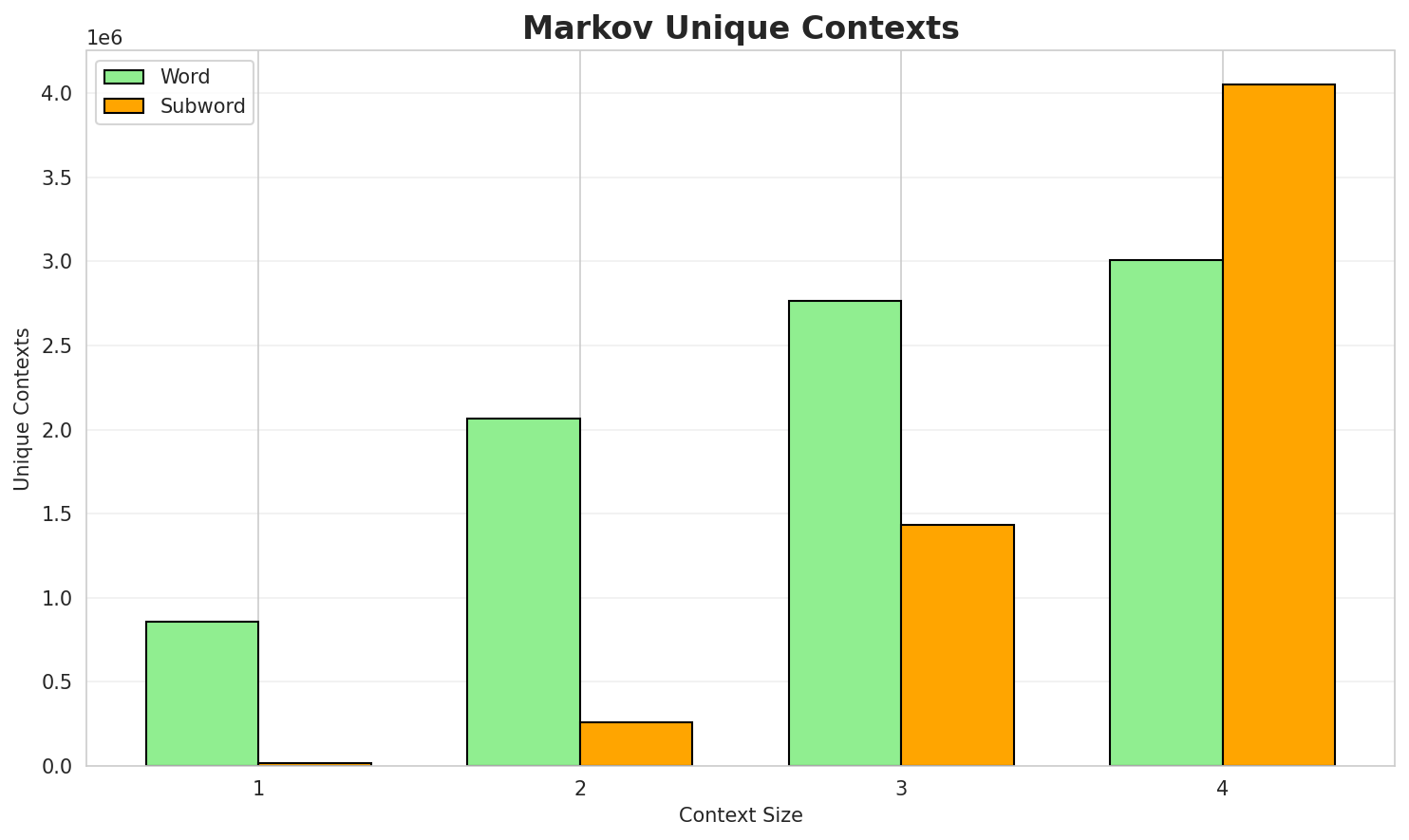

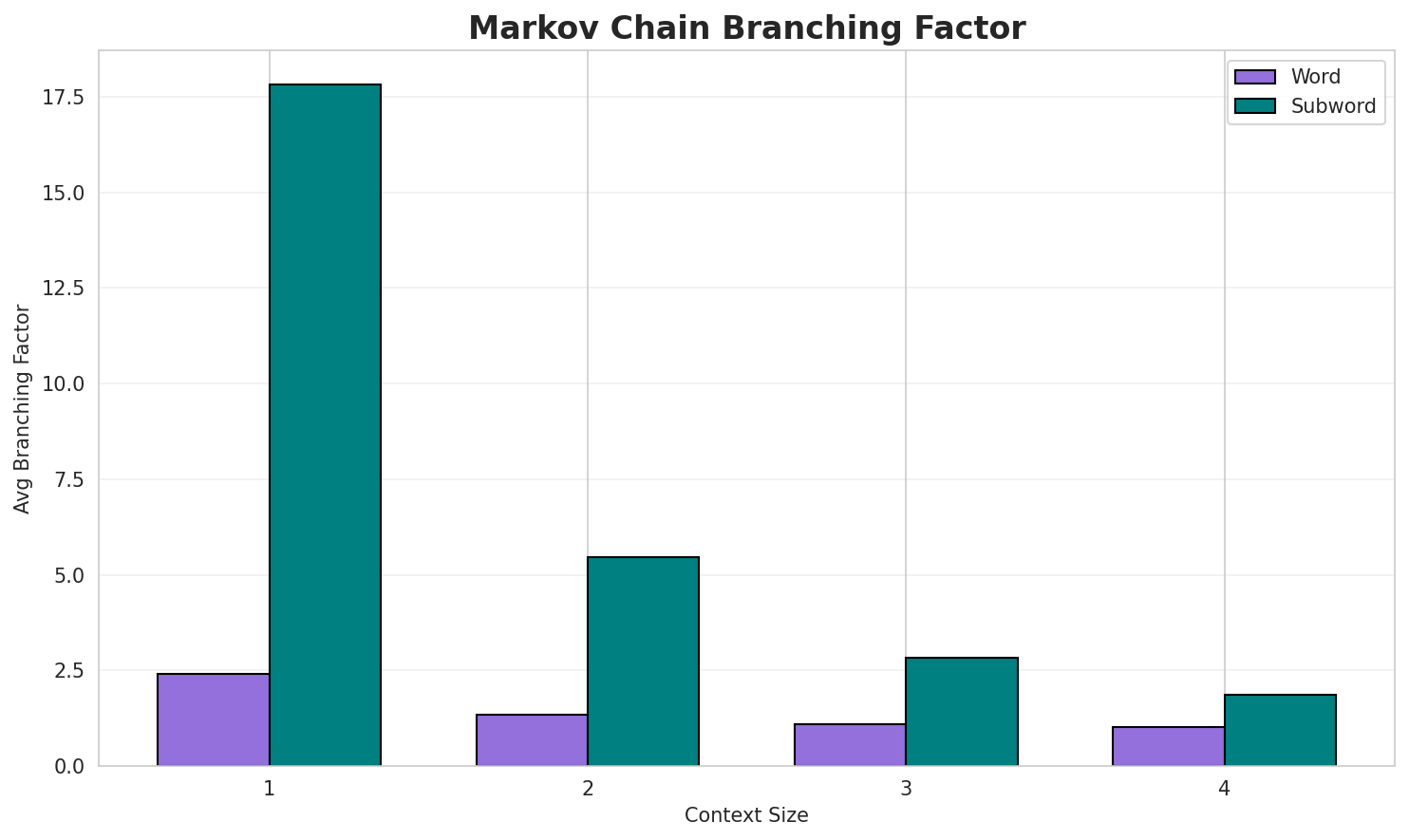

3. Markov Chain Evaluation

Results

| Context | Variant | Avg Entropy | Perplexity | Branching Factor | Unique Contexts | Predictability |

|---|---|---|---|---|---|---|

| 1 | Word | 0.2782 | 1.213 | 2.41 | 859,644 | 72.2% |

| 1 | Subword | 1.0301 | 2.042 | 17.81 | 14,759 | 0.0% |

| 2 | Word | 0.1500 | 1.110 | 1.34 | 2,064,587 | 85.0% |

| 2 | Subword | 0.6645 | 1.585 | 5.47 | 262,778 | 33.5% |

| 3 | Word | 0.0584 | 1.041 | 1.09 | 2,764,478 | 94.2% |

| 3 | Subword | 0.4625 | 1.378 | 2.82 | 1,436,052 | 53.8% |

| 4 | Word | 0.0205 🏆 | 1.014 | 1.03 | 3,007,497 | 98.0% |

| 4 | Subword | 0.3127 | 1.242 | 1.86 | 4,049,871 | 68.7% |

Generated Text Samples (Word-based)

Below are text samples generated from each word-based Markov chain model:

Context Size 1:

និង ឡាវ ព្រះឧបជ្ឈាហ៍ ទេពវង្ស សម្តេច ព្រះអភិសិរីសុគន្ធាមហាសង្ឃរាជាធិបតី សម្តេចព្រះមហាសង្ឃរាជ បួរ គ្រី...example example example example ៧ លោកស្រី គាត់ បាន សម្រាប់ និកាយ ហ្សេន តានត្រិក និងដែនដីបរិសុទ្ធ ដែន...the united states union premier league cup នេះក៏ជាការប្រកួតផ្លូវការណ៍ក្រោមការគ្រប់គ្រងរបស់ cambodian...

Context Size 2:

example example example ៣ example example ២៧ example example ១១ example example ៧ example example ex...of the mahayana idea that such an attack scenario dynamically shall make use of both the dmtត្រូវ បាន អភិវឌ្ឍន សម្រាប់ kde 3 បាន ការ តែង តាំង ជា អភិបាល នៃ តំបន់អុីវាណូ ហ្វ្រែនគីវស៍ ក្នុង នាម

Context Size 3:

example example example ៤១ example example example ៦ example example example ១២ example example exam...villageភូមិ villageភូមិ villageភូមិ villageភូមិ village ព្រំប្រទល់នៃ ទិសខាងកើត e ខាងត្បូង s ខាងលិច w...ត្រូវ បាន គេ ធ្វើ តេស្ដ នៅ ក្នុង ថ្នាក់ b និង c គឺជារង្វាស់នៃជ្រុងនៃ ត្រីកោណ ដែលមាន ក្រលាផ្ទៃ f និង ...

Context Size 4:

example example example example ៣ ស្រី ៨ example example example ៣៣ example example example ៩ exampl...villageភូមិ villageភូមិ villageភូមិ villageភូមិ villageភូមិ villageភូមិ villageភូមិ village ព្រំប្រទ...អនុវិទ្យាល័យ សាសនា ព្រះពុទ្ធសាសនា វត្ត ផ្សារ រមណីដ្ឋាន ឯកសារពិគ្រោះ គណកម្មការជាតិរៀបចំការបោះឆ្នោត ខេ...

Generated Text Samples (Subword-based)

Below are text samples generated from each subword-based Markov chain model:

Context Size 1:

_plovon_(ហៅថានេះសេចក្តីច្បាប់ជាជន៍ជាគួរលាវបាទទួងថា_មាគរ_ck_និងសែន

Context Size 2:

។_rel.2_សង្ខិត្តំ។]_(_sបានលទ្ធផលស្គាល់ច្បាស់លាស់_។_សង_ត្រឡប់យកមន្រ្តីខុទ្ទកាល័យ_និ

Context Size 3:

_និង_កម្រិត។_ផ្លូវថូម៉ាស"_(r_។_នាម៉ឺនពិធីមាំថែមទៀតផងរបស់វីតាមីន_atter_leve

Context Size 4:

mple_៥០_និងប្រទេសអូស្រ្ដាលី_កេនple_example_example_example_example_ex
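How samples like these can be produced is sketched below. This is a minimal illustration of order-k Markov generation on a toy token list, not the pipeline's actual generator; the function names `build_chain` and `generate` are hypothetical.

```python
import random
from collections import defaultdict

def build_chain(tokens, context_size):
    """Map each context (tuple of tokens) to the list of observed next tokens."""
    chain = defaultdict(list)
    for i in range(len(tokens) - context_size):
        context = tuple(tokens[i:i + context_size])
        chain[context].append(tokens[i + context_size])
    return chain

def generate(chain, seed, length, rng):
    """Extend `seed` by sampling next tokens until `length` tokens or a dead end."""
    out = list(seed)
    k = len(seed)  # the seed must have exactly context_size tokens
    while len(out) < length:
        candidates = chain.get(tuple(out[-k:]))
        if not candidates:
            break
        out.append(rng.choice(candidates))
    return out

# Toy corpus in which every 2-token context has a single continuation.
tokens = "a b a c a b a c a b".split()
chain = build_chain(tokens, context_size=2)
sample = generate(chain, seed=("a", "b"), length=8, rng=random.Random(0))
print(" ".join(sample))
```

Because each context in the toy corpus has only one continuation, the output cycles through the same pattern, which mirrors the repeated `example example ...` runs in the samples above.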

Key Findings

- Best Predictability: Context-4 (word) with 98.0% predictability

- Branching Factor: Decreases with context size (more deterministic)

- Memory Trade-off: Larger contexts require more storage (4,049,871 contexts)

- Recommendation: Context-3 or Context-4 for text generation

4. Vocabulary Analysis

Statistics

| Metric | Value |

|---|---|

| Vocabulary Size | 168,571 |

| Total Tokens | 2,917,143 |

| Mean Frequency | 17.31 |

| Median Frequency | 3 |

| Frequency Std Dev | 265.83 |

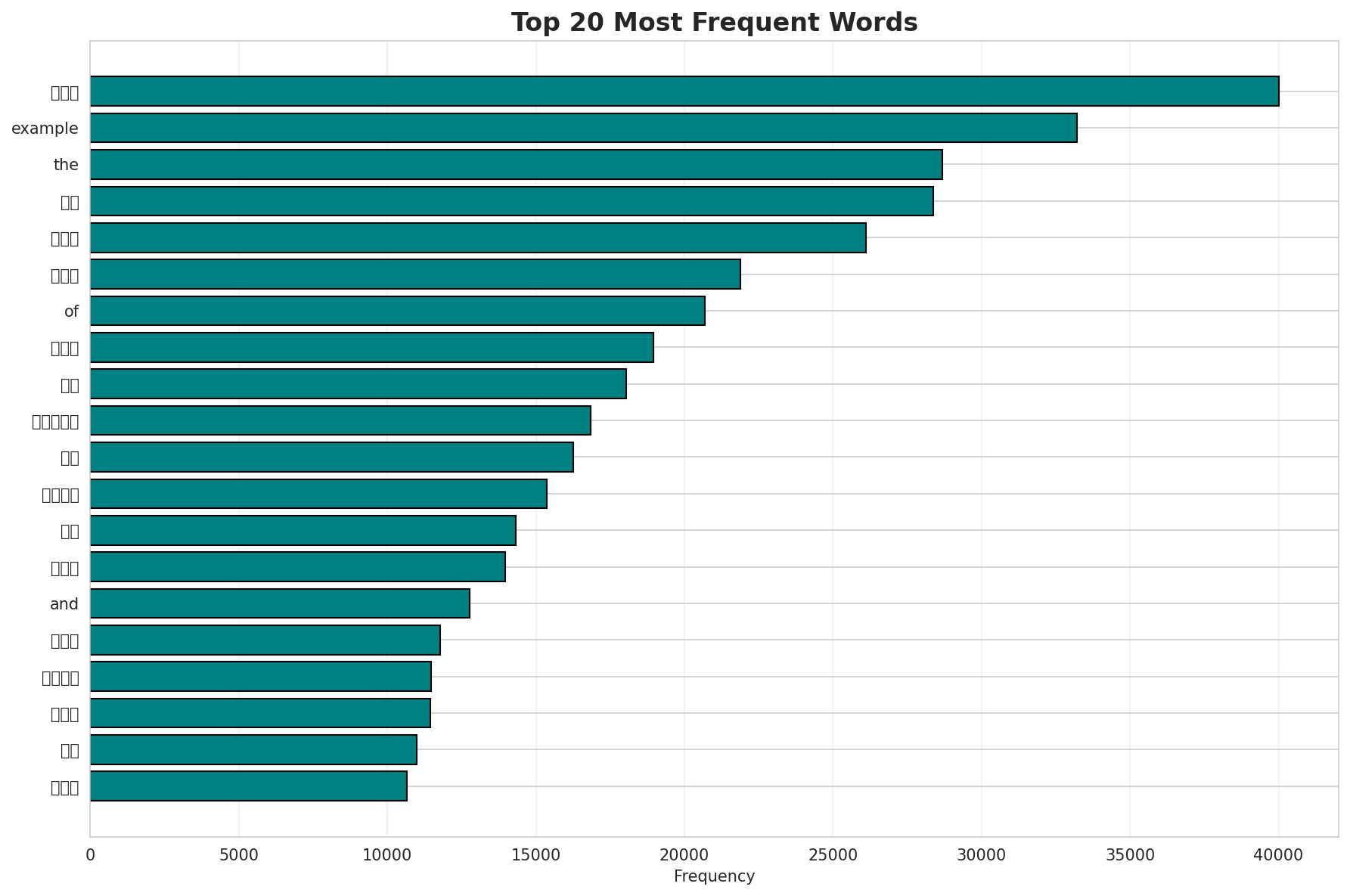

Most Common Words

| Rank | Word | Frequency |

|---|---|---|

| 1 | និង | 40,023 |

| 2 | example | 33,205 |

| 3 | the | 28,680 |

| 4 | ជា | 28,379 |

| 5 | បាន | 26,100 |

| 6 | មាន | 21,881 |

| 7 | of | 20,677 |

| 8 | ដែល | 18,961 |

| 9 | នៅ | 18,044 |

| 10 | ក្នុង | 16,838 |

Least Common Words (from vocabulary)

| Rank | Word | Frequency |

|---|---|---|

| 1 | កេលីម៉ាន់តាន់ | 2 |

| 2 | สทิงพระ | 2 |

| 3 | ទេសបាលតំបន់ | 2 |

| 4 | វត្តច័ន្ទ | 2 |

| 5 | និងការអភិវឌ្ឍខ្លួនឯង | 2 |

| 6 | milliontimes | 2 |

| 7 | អក្សរចិនបុរាណ | 2 |

| 8 | នៅលើផ្ទៃខាងក្រោយងងឹត | 2 |

| 9 | វគ្គជម្រុះជុំទី៣ | 2 |

| 10 | wagnalls | 2 |

Zipf's Law Analysis

| Metric | Value |

|---|---|

| Zipf Coefficient | 1.0175 |

| R² (Goodness of Fit) | 0.996035 |

| Adherence Quality | excellent |

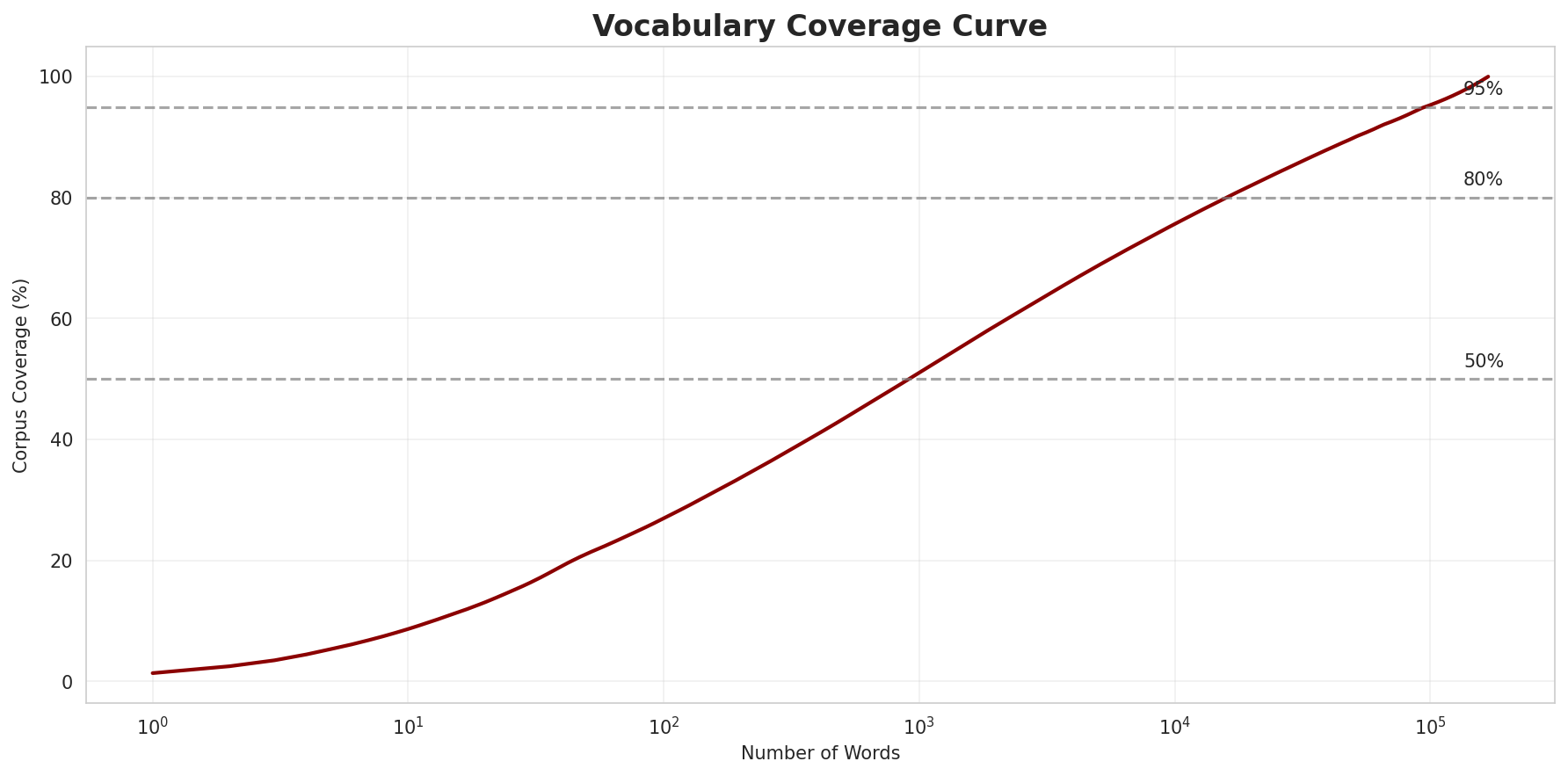

Coverage Analysis

| Top N Words | Coverage |

|---|---|

| Top 100 | 27.0% |

| Top 1,000 | 51.0% |

| Top 5,000 | 68.7% |

| Top 10,000 | 75.6% |

Key Findings

- Zipf Compliance: R²=0.9960 indicates excellent adherence to Zipf's law

- High Frequency Dominance: Top 100 words cover 27.0% of corpus

- Long Tail: 158,571 words needed for remaining 24.4% coverage
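The figures in this section can be cross-checked with simple arithmetic. The sketch below assumes the mean frequency is total tokens divided by vocabulary size and that the long tail is everything outside the top 10,000 words; both assumptions reproduce the reported numbers.

```python
vocab_size = 168_571
total_tokens = 2_917_143
top_10k_coverage = 75.6  # percent, from the coverage table

mean_frequency = total_tokens / vocab_size
tail_words = vocab_size - 10_000
tail_coverage = 100.0 - top_10k_coverage

print(f"mean frequency: {mean_frequency:.2f}")
print(f"tail: {tail_words:,} words cover {tail_coverage:.1f}%")
```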

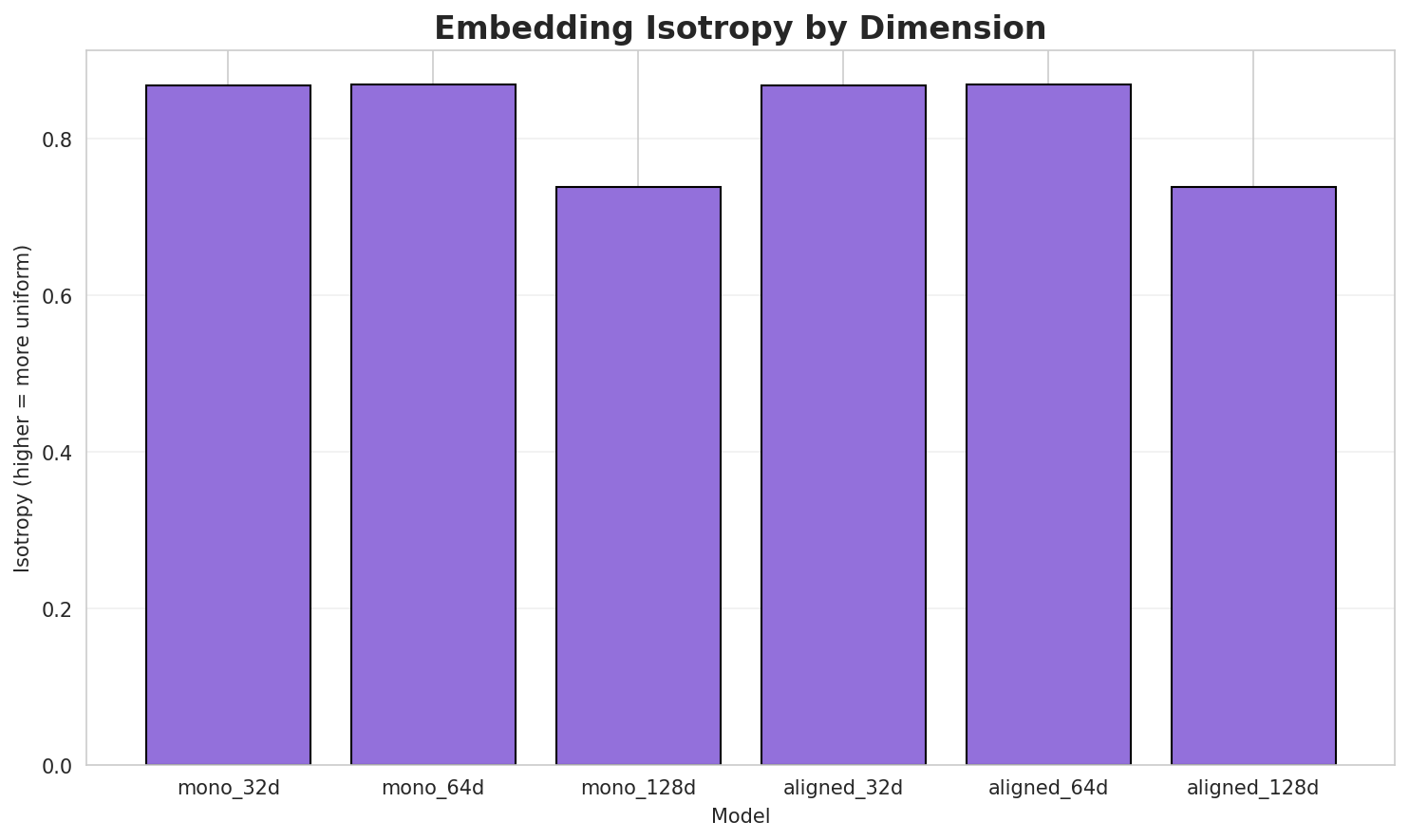

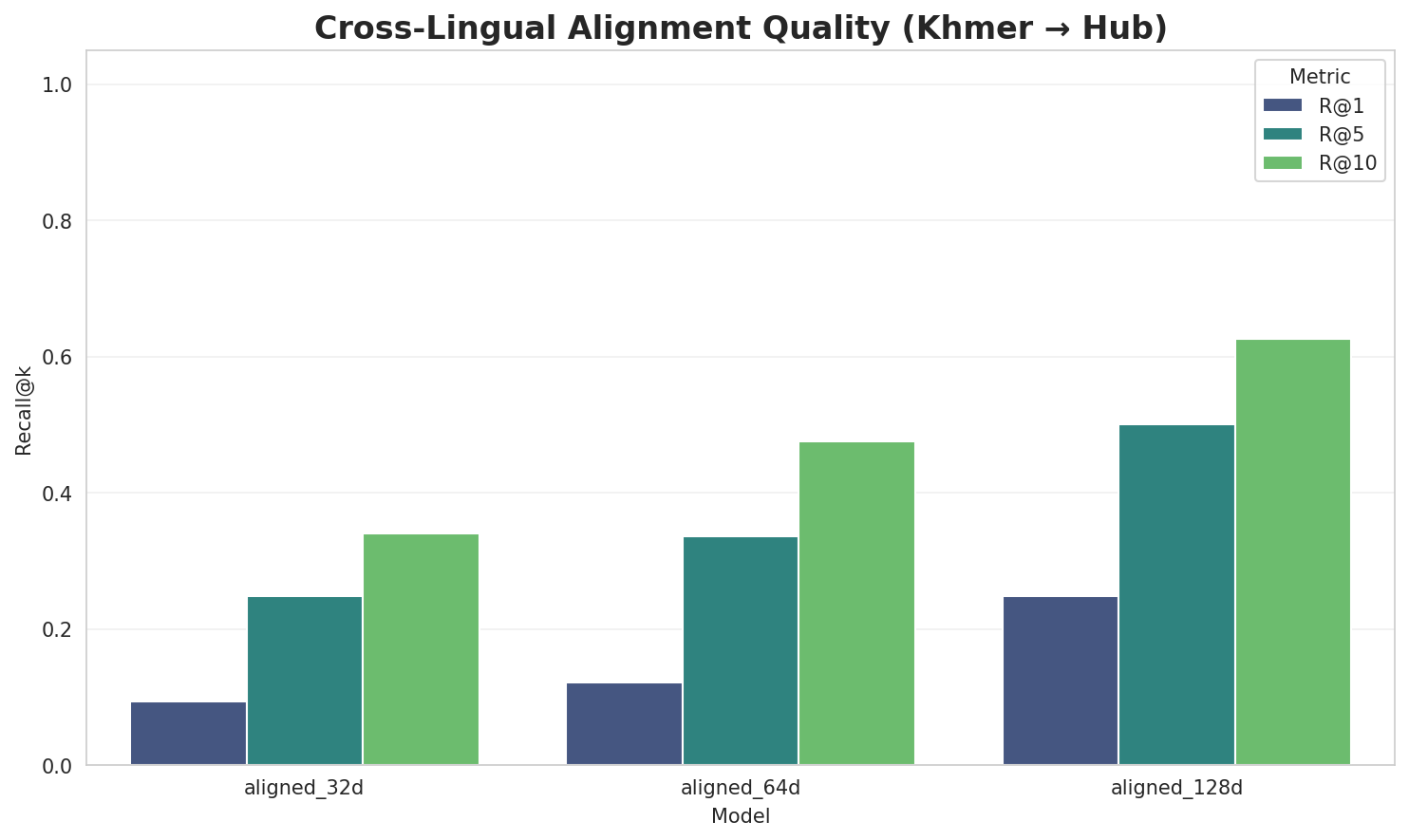

5. Word Embeddings Evaluation

5.1 Cross-Lingual Alignment

5.2 Model Comparison

| Model | Dimension | Isotropy | Semantic Density | Alignment R@1 | Alignment R@10 |

|---|---|---|---|---|---|

| mono_32d | 32 | 0.8684 | 0.3333 | N/A | N/A |

| mono_64d | 64 | 0.8701 🏆 | 0.2501 | N/A | N/A |

| mono_128d | 128 | 0.7385 | 0.2098 | N/A | N/A |

| aligned_32d | 32 | 0.8684 | 0.3316 | 0.0940 | 0.3400 |

| aligned_64d | 64 | 0.8701 | 0.2521 | 0.1220 | 0.4760 |

| aligned_128d | 128 | 0.7385 | 0.2166 | 0.2480 | 0.6260 |

Key Findings

- Best Isotropy: mono_64d with 0.8701 (more uniform distribution)

- Semantic Density: Average pairwise similarity of 0.2656. Lower values indicate better semantic separation.

- Alignment Quality: Aligned models achieve up to 24.8% R@1 in cross-lingual retrieval.

- Recommendation: 128d aligned for best cross-lingual performance
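The report does not define R@1 and R@10. A common reading is recall at k: the fraction of source words whose reference translation appears among the k nearest target vectors. The sketch below illustrates that reading on toy 2-D vectors; the data, the `gold` mapping, and the function names are hypothetical.

```python
import math

def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm

def recall_at_k(source_vecs, target_vecs, gold, k):
    """Fraction of source words whose gold target is among the k nearest targets."""
    hits = 0
    for src_word, src_vec in source_vecs.items():
        ranked = sorted(
            target_vecs,
            key=lambda t: cosine(src_vec, target_vecs[t]),
            reverse=True,
        )
        if gold[src_word] in ranked[:k]:
            hits += 1
    return hits / len(source_vecs)

source_vecs = {"s1": [1.0, 0.0], "s2": [0.0, 1.0], "s3": [1.0, 0.1]}
target_vecs = {"t1": [0.9, 0.1], "t2": [0.1, 0.9], "t3": [1.0, 0.2]}
gold = {"s1": "t1", "s2": "t2", "s3": "t3"}

r1 = recall_at_k(source_vecs, target_vecs, gold, k=1)
r2 = recall_at_k(source_vecs, target_vecs, gold, k=2)
print(f"R@1 = {r1:.3f}, R@2 = {r2:.3f}")
```

In this toy case `s3` is slightly closer to `t1` than to its reference `t3`, so it counts as a miss at k=1 and a hit at k=2.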

6. Morphological Analysis (Experimental)

This section presents an automated morphological analysis derived from the statistical divergence between word-level and subword-level models. By analyzing where subword predictability spikes and where word-level coverage fails, we can infer linguistic structures without supervised data.

6.1 Productivity & Complexity

| Metric | Value | Interpretation | Recommendation |

|---|---|---|---|

| Productivity Index | 5.000 | High morphological productivity | Reliable analysis |

| Idiomaticity Gap | 0.614 | High formulaic/idiomatic content | - |

6.2 Affix Inventory (Productive Units)

These are the most productive prefixes and suffixes identified by sampling the vocabulary for global substitutability patterns. A unit is considered an affix if stripping it leaves a valid stem that appears in other contexts.

Productive Prefixes

| Prefix | Examples |
|---|---|
| -ស | សឧត្តរំ, ស្វាហ៊ីលី, សម្បកក្រៅរុំ |
| -ប | បានដល់ការទាយគតិរបស់ព្រះសិទ្ធត្ថរាជកុមារ, បឋមជ្ឈានតោ, ប្រាសាទបាក់បែកនៅខាងក្រោយនៃវត្តស្រីមឿងនៅវាំងចន្ទន៍ភាគកណ្ដាល |
| -ក | ក្រាំងចិន, ក្រមាខ្មែរ, ក្នុងកាលខាងក្រោយ |
| -អ | អង្គុយក្នុងទីសមគួរហើយ, អេអូនីសេ, អូរាំងអាស្លី |
| -ន | និងបន្លែ, នៃម៉ាស់សរុបនៃប្រព័ន្ធព្រះអាទិត្យ, និងបរិវារមួយក្រុមបានភៀសទៅជ្រកកោនក្នុងប្រទេសសៀមជាមួយព្រះ |
| -ម | មានប្រាសាទ, ម្យ៉ាងទៀតសោត, មានឱកាស |
| -s | supra, sharia, signals |
| -រ | រមែងសញ្ជប់សញ្ជឹង, រណ្តៅតូច, របស់ព្រះពុទ្ធមួយភាគដែរ |

Productive Suffixes

| Suffix | Examples |
|---|---|
| -ង | រមែងសញ្ជប់សញ្ជឹង, ត្បូងពណ៌បៃតង, ដើម្បីនឹង |
| -យ | អង្គុយក្នុងទីសមគួរហើយ, ធ្វើឱ្យជាស្ថានទីរីករាយ, គ្មានមន្ទីរពេទ្យ |
| -ន | ក្រាំងចិន, យោន, គឺមិនមាន |
| -រ | បានដល់ការទាយគតិរបស់ព្រះសិទ្ធត្ថរាជកុមារ, ក្រមាខ្មែរ, របស់ព្រះពុទ្ធមួយភាគដែរ |
| -ត | គឺមិនមាននិមិត្ត, ម្យ៉ាងទៀតសោត, និងរារាំងការពង្រីកខ្លួនរបស់ចិនបន្តទៅទៀត |
| -ក | នៃតំបន់ប្រាសាទសំបូរព្រៃគុក, ក្នុងសំដីរបស់អ្នក, និងចក |
| -ម | ទៅកាន់មនុស្សទាំងអស់ក្នុងសង្គម, ដូចជាកោះត្រល់ជាដើម, ទឹកនោមផ្អែម |
| -s | nicolas, thoughts, characters |

6.3 Bound Stems (Lexical Roots)

Bound stems are high-frequency subword units that are semantically cohesive but rarely appear as standalone words. These often correspond to the 'core' of a word that requires inflection or derivation to be valid.

| Stem | Cohesion | Substitutability | Examples |
|---|---|---|---|
| ight | 2.39x | 50 contexts | fight, night, sight |
| tion | 2.28x | 46 contexts | option, nation, lotion |
| ment | 2.30x | 39 contexts | cement, moment, mental |
| atio | 2.39x | 33 contexts | ratio, nation, horatio |
| nter | 2.15x | 37 contexts | enter, inter, winter |
| inte | 2.29x | 29 contexts | intel, inter, winter |
| stor | 2.31x | 27 contexts | story, jstor, storm |
| ctio | 2.40x | 23 contexts | action, section, actions |
| illa | 2.19x | 27 contexts | illam, villa, silla |
| ubli | 2.35x | 19 contexts | dublin, public, publié |
| pres | 2.24x | 22 contexts | press, ypres, presse |
| iver | 2.18x | 22 contexts | liver, river, waiver |

6.4 Affix Compatibility (Co-occurrence)

This table shows which prefixes and suffixes most frequently co-occur on the same stems, revealing the 'stacking' rules of the language's morphology.

| Prefix | Suffix | Frequency | Examples |
|---|---|---|---|
| -ប | -ន | 50 words | បណ្តាញសាកលវិទ្យាល័យអាស៊ាន, បង្ហាញខ្លួន |
| -ក | -ង | 49 words | ការប្រើដំណរក្នុង, ក្រាំងខ្លុង |
| -ប | -យ | 46 words | បានត្រាស់សេចក្តីនេះរួចហើយ, បន្សាយ |
| -ន | -យ | 44 words | និងសម្តែងដោយ, និងបានយសសក្ដិគ្រប់សព្វណាស់ទៅហើយ |
| -ក | -យ | 40 words | កម្លាំងថយ, ក៏ពោលពាក្យ |
| -ក | -ន | 39 words | កៈទឿន, ការឈ្លានពានរបស់ជប៉ុន |
| -ន | -ង | 38 words | និងចៅប្រមាញ់វិងស៊ុង, និងនៅសងខាង |
| -ន | -រ | 37 words | និងវិចិត្រសិល្បៈខេត្តព្រះវិហារ, នាយសមុទ្រ |
| -ស | -ន | 36 words | សីលជាស្ពាន, សារពត័មាន |
| -ស | -រ | 35 words | សុពាហុត្ថេរ, សភិយត្ថេរ |

6.5 Recursive Morpheme Segmentation

Using Recursive Hierarchical Substitutability, we decompose complex words into their constituent morphemes. This approach handles nested affixes (e.g., prefix-prefix-root-suffix).

| Word | Suggested Split | Confidence | Stem |
|---|---|---|---|
| abdagases | abdaga-s-es | 7.5 | s |
| នៅពីក្រោយខ្នង | នៅពីក្រោយខ្-ន-ង | 7.5 | ន |
| tlaxcaltecas | tlaxcalteca-s | 4.5 | tlaxcalteca |
| instrumental | instrument-al | 4.5 | instrument |
| អន្តរជាតិ | អ-ន-្តរជាតិ | 4.5 | ្តរជាតិ |
| អបដិក្កូលេ | អ-បដិក្កូលេ | 4.5 | បដិក្កូលេ |
| scholarships | scholarship-s | 4.5 | scholarship |
| ស្រមោចហែរ | ស្រមោចហែ-រ | 4.5 | ស្រមោចហែ |
| replacements | replacement-s | 4.5 | replacement |
| ពួកសត្វតែងមាន | ព-ួកសត្វតែងមា-ន | 3.0 | ួកសត្វតែងមា |
| grancrest | grancr-es-t | 3.0 | grancr |
| ប្រទាញសងខាង | ប្រទាញសងខា-ង | 1.5 | ប្រទាញសងខា |
| ក្នុងថ្ងៃនេះបាន | ក្នុងថ្ងៃនេះបា-ន | 1.5 | ក្នុងថ្ងៃនេះបា |
| vidyādhara | vidyādhar-a | 1.5 | vidyādhar |
| ក្រុមហាម៉ាស់ | ក-្រុមហាម៉ាស់ | 1.5 | ្រុមហាម៉ាស់ |

6.6 Linguistic Interpretation

Automated Insight: Khmer shows high morphological productivity on these metrics. The subword models are significantly more efficient than the word models, suggesting a rich system of affixation or compounding.

Note on Idiomaticity: The high Idiomaticity Gap suggests a large number of frequent multi-word expressions or formulaic sequences that are statistically distinct from their component parts.

7. Summary & Recommendations

Production Recommendations

| Component | Recommended | Rationale |

|---|---|---|

| Tokenizer | 64k BPE | Best compression (4.89x) |

| N-gram | 2-gram | Lowest perplexity (5,212) |

| Markov | Context-4 | Highest predictability (98.0%) |

| Embeddings | 128d (aligned) | Best cross-lingual retrieval (R@1 24.8%, R@10 62.6%) |

Appendix: Metrics Glossary & Interpretation Guide

This section provides definitions, intuitions, and guidance for interpreting the metrics used throughout this report.

Tokenizer Metrics

Compression Ratio

Definition: The ratio of characters to tokens (chars/token). Measures how efficiently the tokenizer represents text.

Intuition: Higher compression means fewer tokens needed to represent the same text, reducing sequence lengths for downstream models. A 3x compression means ~3 characters per token on average.

What to seek: Higher is generally better for efficiency, but extremely high compression may indicate overly aggressive merging that loses morphological information.
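A minimal sketch of the ratio as defined above. It assumes characters are counted as Unicode code points via `len()`; the report does not say whether it counts code points, bytes, or grapheme clusters, and for Khmer those counts differ. The sample text and token list are made up.

```python
def compression_ratio(text, tokens):
    """Characters per token: len(text) divided by the number of tokens."""
    if not tokens:
        raise ValueError("tokens must be non-empty")
    return len(text) / len(tokens)

# Hypothetical tokenization of a short English string.
text = "tokenizers split text"
tokens = ["▁token", "izers", "▁split", "▁text"]

ratio = compression_ratio(text, tokens)
print(f"{ratio:.2f} chars/token")
```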

Average Token Length (Fertility)

Definition: Mean number of characters per token produced by the tokenizer.

Intuition: Reflects the granularity of tokenization. Longer tokens capture more context but may struggle with rare words; shorter tokens are more flexible but increase sequence length.

What to seek: Balance between 2-5 characters for most languages. Arabic/morphologically-rich languages may benefit from slightly longer tokens.

Unknown Token Rate (OOV Rate)

Definition: Percentage of tokens that map to the unknown/UNK token, indicating words the tokenizer cannot represent.

Intuition: Lower OOV means better vocabulary coverage. High OOV indicates the tokenizer encounters many unseen character sequences.

What to seek: Below 1% is excellent; below 5% is acceptable. BPE tokenizers typically achieve very low OOV due to subword fallback.
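The rate can be computed directly from encoded token ids. In this sketch the unknown-token id of 0 and the id sequence are hypothetical; a real tokenizer exposes its own UNK id.

```python
def unk_rate(token_ids, unk_id):
    """Percentage of token ids equal to the unknown-token id."""
    if not token_ids:
        return 0.0
    unknown = sum(1 for t in token_ids if t == unk_id)
    return 100.0 * unknown / len(token_ids)

# Made-up encoded sequence in which id 0 stands for UNK.
ids = [5, 17, 0, 42, 8, 0, 13, 99, 21, 7]
rate = unk_rate(ids, unk_id=0)
print(f"{rate:.1f}% unknown")
```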

N-gram Model Metrics

Perplexity

Definition: Measures how "surprised" the model is by test data. Mathematically: 2^(cross-entropy). Lower values indicate better prediction.

Intuition: If perplexity is 100, the model is as uncertain as if choosing uniformly among 100 options at each step. A perplexity of 10 means effectively choosing among 10 equally likely options.

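What to seek: Lower is better. With enough training data, perplexity usually decreases with larger n-grams (more context); on sparse corpora it can rise instead, as in the Section 2 results. Values vary widely by language and corpus size.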

Entropy

Definition: Average information content (in bits) needed to encode the next token given the context. Related to perplexity: perplexity = 2^entropy.

Intuition: High entropy means high uncertainty/randomness; low entropy means predictable patterns. Natural language typically has entropy between 1-4 bits per character.

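What to seek: Lower entropy indicates more predictable text patterns. Entropy is expected to decrease as n-gram size increases when data is plentiful; an increase, as in the Section 2 results, points to sparse higher-order counts.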
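The relation perplexity = 2^entropy can be checked against the 2-gram rows of the Section 2 results table. The sketch below is only a worked conversion; small gaps to the reported perplexities are presumably due to the entropy values being rounded to two decimals.

```python
import math

def perplexity_from_entropy(entropy_bits):
    return 2.0 ** entropy_bits

def entropy_from_perplexity(perplexity):
    return math.log2(perplexity)

# Entropy values taken from the 2-gram rows of the results table.
word_ppl = perplexity_from_entropy(14.83)     # reported perplexity: 29,102
subword_ppl = perplexity_from_entropy(12.35)  # reported perplexity: 5,212

print(f"word 2-gram:    {word_ppl:,.0f}")
print(f"subword 2-gram: {subword_ppl:,.0f}")
print(f"entropy implied by 5,212: {entropy_from_perplexity(5212):.2f} bits")
```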

Coverage (Top-K)

Definition: Percentage of corpus occurrences explained by the top K most frequent n-grams.

Intuition: High coverage with few patterns indicates repetitive/formulaic text; low coverage suggests diverse vocabulary usage.

What to seek: Depends on use case. For language modeling, moderate coverage (40-60% with top-1000) is typical for natural text.
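Top-K coverage follows directly from n-gram counts. This is a minimal sketch on a toy token list, assuming coverage is measured over n-gram occurrences; the helper names are illustrative.

```python
from collections import Counter

def ngrams(tokens, n):
    """All contiguous n-token windows, as tuples."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]

def top_k_coverage(counts, k):
    """Percentage of all n-gram occurrences covered by the k most frequent n-grams."""
    total = sum(counts.values())
    top = sum(count for _, count in counts.most_common(k))
    return 100.0 * top / total

tokens = "a b a b a c a b d e".split()
bigram_counts = Counter(ngrams(tokens, 2))

coverage_top1 = top_k_coverage(bigram_counts, k=1)
coverage_top2 = top_k_coverage(bigram_counts, k=2)
print(f"top-1: {coverage_top1:.1f}%  top-2: {coverage_top2:.1f}%")
```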

Markov Chain Metrics

Average Entropy

Definition: Mean entropy across all contexts, measuring average uncertainty in next-word prediction.

Intuition: Lower entropy means the model is more confident about what comes next. Context-1 has high entropy (many possible next words); Context-4 has low entropy (few likely continuations).

What to seek: Decreasing entropy with larger context sizes. Very low entropy (<0.1) indicates highly deterministic transitions.
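A sketch of the metric on a toy sequence, reading "mean entropy across all contexts" literally as an unweighted mean; whether the pipeline weights contexts by frequency is not stated, so treat that as an assumption.

```python
import math
from collections import Counter, defaultdict

def transition_counts(tokens, context_size):
    """Count next tokens for every context of `context_size` tokens."""
    counts = defaultdict(Counter)
    for i in range(len(tokens) - context_size):
        context = tuple(tokens[i:i + context_size])
        counts[context][tokens[i + context_size]] += 1
    return counts

def average_entropy(counts):
    """Unweighted mean over contexts of the next-token entropy in bits."""
    entropies = []
    for nexts in counts.values():
        total = sum(nexts.values())
        probs = [c / total for c in nexts.values()]
        entropies.append(sum(p * math.log2(1.0 / p) for p in probs))
    return sum(entropies) / len(entropies)

tokens = "a b a c a b a c".split()
h1 = average_entropy(transition_counts(tokens, 1))
h2 = average_entropy(transition_counts(tokens, 2))
print(f"context 1: {h1:.4f} bits, context 2: {h2:.4f} bits")
```

A context with only one observed continuation contributes zero entropy, so a model with many rarely seen contexts can report a very low average without being a good predictor of unseen text.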

Branching Factor

Definition: Average number of unique next tokens observed for each context.

Intuition: High branching = many possible continuations (flexible but uncertain); low branching = few options (predictable but potentially repetitive).

What to seek: Branching factor should decrease with context size. Values near 1.0 indicate nearly deterministic chains.
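The definition translates into a few lines of counting. This is a minimal sketch on a toy sequence, and the function name is illustrative.

```python
from collections import defaultdict

def branching_factor(tokens, context_size):
    """Average number of distinct next tokens per observed context."""
    successors = defaultdict(set)
    for i in range(len(tokens) - context_size):
        context = tuple(tokens[i:i + context_size])
        successors[context].add(tokens[i + context_size])
    return sum(len(s) for s in successors.values()) / len(successors)

tokens = "a b a c a b a c".split()
bf1 = branching_factor(tokens, 1)  # "a" is followed by "b" or "c"; "b" and "c" only by "a"
bf2 = branching_factor(tokens, 2)  # every 2-token context has one continuation
print(f"context 1: {bf1:.2f}, context 2: {bf2:.2f}")
```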

Predictability

Definition: Derived metric: (1 - normalized_entropy) × 100%. Indicates how deterministic the model's predictions are.

Intuition: 100% predictability means the next word is always certain; 0% means completely random. Real text falls between these extremes.

What to seek: Higher predictability for text generation quality, but too high (>98%) may produce repetitive output.
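The report does not say how the entropy is normalized. The Section 3 table, however, appears consistent with using the average entropy in bits directly and clamping the result at 0%; the sketch below checks that reading against all eight rows. The clamp is an assumption inferred from the 0.0% entry.

```python
def predictability(avg_entropy):
    """(1 - entropy) as a percentage, clamped to the range 0..100 (assumed)."""
    return max(0.0, min(100.0, (1.0 - avg_entropy) * 100.0))

# (average entropy, reported predictability %) from the Section 3 results table.
rows = [
    (0.2782, 72.2), (1.0301, 0.0),
    (0.1500, 85.0), (0.6645, 33.5),
    (0.0584, 94.2), (0.4625, 53.8),
    (0.0205, 98.0), (0.3127, 68.7),
]

max_gap = max(abs(predictability(h) - reported) for h, reported in rows)
print(f"largest difference from the table: {max_gap:.2f} percentage points")
```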

Vocabulary & Zipf's Law Metrics

Zipf's Coefficient

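Definition: The slope of the log-log plot of word frequency vs. rank. Zipf's law predicts this should be approximately -1. The Zipf coefficient in Section 4 (1.0175) is listed as a positive number, which appears to be the magnitude of this slope.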

Intuition: A coefficient near -1 indicates the corpus follows natural language patterns where a few words are very common and most words are rare.

What to seek: Values between -0.8 and -1.2 indicate healthy natural language distribution. Deviations may suggest domain-specific or artificial text.

R² (Coefficient of Determination)

Definition: Measures how well the linear fit explains the frequency-rank relationship. Ranges from 0 to 1.

Intuition: R² near 1.0 means the data closely follows Zipf's law; lower values indicate deviation from expected word frequency patterns.

What to seek: R² > 0.95 is excellent; > 0.99 indicates near-perfect Zipf adherence typical of large natural corpora.
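Both the Zipf slope and R² come from one least-squares line through the (log rank, log frequency) points. The sketch below uses synthetic frequencies that follow f = 1000 / rank exactly, so the expected slope is -1; it is an illustration of the fit, not the pipeline's code.

```python
import math

def zipf_fit(frequencies):
    """Least-squares line through (log rank, log frequency); returns (slope, r_squared)."""
    freqs = sorted(frequencies, reverse=True)
    xs = [math.log(rank) for rank in range(1, len(freqs) + 1)]
    ys = [math.log(f) for f in freqs]
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ss_res = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return slope, 1.0 - ss_res / ss_tot

# Synthetic, perfectly Zipfian frequencies for ranks 1..200.
freqs = [1000.0 / r for r in range(1, 201)]
slope, r2 = zipf_fit(freqs)
print(f"slope = {slope:.4f}, R^2 = {r2:.6f}")
```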

Vocabulary Coverage

Definition: Cumulative percentage of corpus tokens accounted for by the top N words.

Intuition: Shows how concentrated word usage is. If top-100 words cover 50% of text, the corpus relies heavily on common words.

What to seek: Top-100 covering 30-50% is typical. Higher coverage indicates more repetitive text; lower suggests richer vocabulary.

Word Embedding Metrics

Isotropy

Definition: Measures how uniformly distributed vectors are in the embedding space. Computed as the ratio of minimum to maximum singular values.

Intuition: High isotropy (near 1.0) means vectors spread evenly in all directions; low isotropy means vectors cluster in certain directions, reducing expressiveness.

What to seek: Higher isotropy generally indicates better-quality embeddings. Values > 0.1 are reasonable; > 0.3 is good. Lower-dimensional embeddings tend to have higher isotropy.
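To make the singular-value ratio concrete, the sketch below computes it for toy 2-D vectors using the closed-form eigenvalues of the 2x2 matrix XᵀX. Real 32 to 128 dimensional embeddings would need a numerical SVD (for example `numpy.linalg.svd`) instead, and whether the pipeline centers the vectors first is not stated; this example does not center them.

```python
import math

def isotropy_2d(vectors):
    """Ratio of smallest to largest singular value for a list of 2-D vectors."""
    # Entries of the 2x2 Gram matrix X^T X = [[a, b], [b, c]].
    a = sum(x * x for x, _ in vectors)
    b = sum(x * y for x, y in vectors)
    c = sum(y * y for _, y in vectors)
    # Closed-form eigenvalues of a symmetric 2x2 matrix.
    mid = (a + c) / 2.0
    radius = math.sqrt(((a - c) / 2.0) ** 2 + b * b)
    lam_max = mid + radius
    lam_min = max(mid - radius, 0.0)  # guard against tiny negative rounding
    # Singular values of X are the square roots of the eigenvalues of X^T X.
    return math.sqrt(lam_min) / math.sqrt(lam_max)

even = isotropy_2d([(1.0, 0.0), (0.0, 1.0), (-1.0, 0.0), (0.0, -1.0)])
stretched = isotropy_2d([(2.0, 0.0), (0.0, 1.0), (-2.0, 0.0), (0.0, -1.0)])
print(f"even spread: {even:.2f}, stretched along x: {stretched:.2f}")
```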

Average Norm

Definition: Mean magnitude (L2 norm) of word vectors in the embedding space.

Intuition: Indicates the typical "length" of vectors. Consistent norms suggest stable training; high variance may indicate some words are undertrained.

What to seek: Relatively consistent norms across models. The absolute value matters less than consistency (low std deviation).

Cosine Similarity

Definition: Measures angular similarity between vectors, ranging from -1 (opposite) to 1 (identical direction).

Intuition: Words with similar meanings should have high cosine similarity. This is the standard metric for semantic relatedness in embeddings.

What to seek: Semantically related words should score > 0.5; unrelated words should be near 0. Synonyms often score > 0.7.
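A minimal sketch of cosine similarity, together with the average pairwise similarity that Section 5 reports as semantic density. The toy vectors are made up, and averaging over all unordered pairs is an assumption about how that figure is computed.

```python
import math
from itertools import combinations

def cosine_similarity(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def mean_pairwise_similarity(vectors):
    """Average cosine similarity over all unordered pairs of vectors."""
    pairs = list(combinations(vectors, 2))
    return sum(cosine_similarity(u, v) for u, v in pairs) / len(pairs)

vectors = [(1.0, 0.0), (0.0, 1.0), (1.0, 1.0)]
same_direction = cosine_similarity((1.0, 2.0), (2.0, 4.0))
density = mean_pairwise_similarity(vectors)
print(f"same direction: {same_direction:.3f}, mean pairwise: {density:.4f}")
```

Cosine similarity ignores vector length, so `(1, 2)` and `(2, 4)` score as identical in direction.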

t-SNE Visualization

Definition: t-Distributed Stochastic Neighbor Embedding - a dimensionality reduction technique that preserves local structure for visualization.

Intuition: Clusters in t-SNE plots indicate groups of semantically related words. Spread indicates vocabulary diversity; tight clusters suggest semantic coherence.

What to seek: Meaningful clusters (e.g., numbers together, verbs together). Avoid over-interpreting distances - t-SNE preserves local, not global, structure.

General Interpretation Guidelines

- Compare within model families: Metrics are most meaningful when comparing models of the same type (e.g., 8k vs 64k tokenizer).

- Consider trade-offs: Better performance on one metric often comes at the cost of another (e.g., compression vs. OOV rate).

- Context matters: Optimal values depend on downstream tasks. Text generation may prioritize different metrics than classification.

- Corpus influence: All metrics are influenced by corpus characteristics. Wikipedia text differs from social media or literature.

- Language-specific patterns: Morphologically rich languages (like Arabic) may show different optimal ranges than analytic languages.

Visualizations Index

| Visualization | Description |

|---|---|

| Tokenizer Compression | Compression ratios by vocabulary size |

| Tokenizer Fertility | Average token length by vocabulary |

| Tokenizer OOV | Unknown token rates |

| Tokenizer Total Tokens | Total tokens by vocabulary |

| N-gram Perplexity | Perplexity by n-gram size |

| N-gram Entropy | Entropy by n-gram size |

| N-gram Coverage | Top pattern coverage |

| N-gram Unique | Unique n-gram counts |

| Markov Entropy | Entropy by context size |

| Markov Branching | Branching factor by context |

| Markov Contexts | Unique context counts |

| Zipf's Law | Frequency-rank distribution with fit |

| Vocab Frequency | Word frequency distribution |

| Top 20 Words | Most frequent words |

| Vocab Coverage | Cumulative coverage curve |

| Embedding Isotropy | Vector space uniformity |

| Embedding Norms | Vector magnitude distribution |

| Embedding Similarity | Word similarity heatmap |

| Nearest Neighbors | Similar words for key terms |

| t-SNE Words | 2D word embedding visualization |

| t-SNE Sentences | 2D sentence embedding visualization |

| Position Encoding | Encoding method comparison |

| Model Sizes | Storage requirements |

| Performance Dashboard | Comprehensive performance overview |

About This Project

Data Source

Models trained on wikipedia-monthly - a monthly snapshot of Wikipedia articles across 300+ languages.

Project

A project by Wikilangs - Open-source NLP models for every Wikipedia language.

Maintainer

Citation

If you use these models in your research, please cite:

@misc{wikilangs2025,

author = {Kamali, Omar},

title = {Wikilangs: Open NLP Models for Wikipedia Languages},

year = {2025},

doi = {10.5281/zenodo.18073153},

publisher = {Zenodo},

url = {https://huggingface.co/wikilangs},

institution = {Omneity Labs}

}

License

MIT License - Free for academic and commercial use.

Links

- 🌐 Website: wikilangs.org

- 🤗 Models: huggingface.co/wikilangs

- 📊 Data: wikipedia-monthly

- 👤 Author: Omar Kamali

- 🤝 Sponsor: Featherless AI

Generated by Wikilangs Models Pipeline

Report Date: 2026-01-10 08:23:26