Macedonian - Wikilangs Models

Comprehensive Research Report & Full Ablation Study

This repository contains NLP models trained and evaluated by Wikilangs, specifically on Macedonian Wikipedia data. We analyze tokenizers, n-gram models, Markov chains, vocabulary statistics, and word embeddings.

📋 Repository Contents

Models & Assets

- Tokenizers (8k, 16k, 32k, 64k)

- N-gram models (2, 3, 4, 5-gram)

- Markov chains (context of 1, 2, 3, 4 and 5)

- Subword N-gram and Markov chains

- Embeddings in various sizes and dimensions (aligned and unaligned)

- Language Vocabulary

- Language Statistics

Analysis and Evaluation

- 1. Tokenizer Evaluation

- 2. N-gram Model Evaluation

- 3. Markov Chain Evaluation

- 4. Vocabulary Analysis

- 5. Word Embeddings Evaluation

- 6. Morphological Analysis (Experimental)

- 7. Summary & Recommendations

- Metrics Glossary

- Visualizations Index

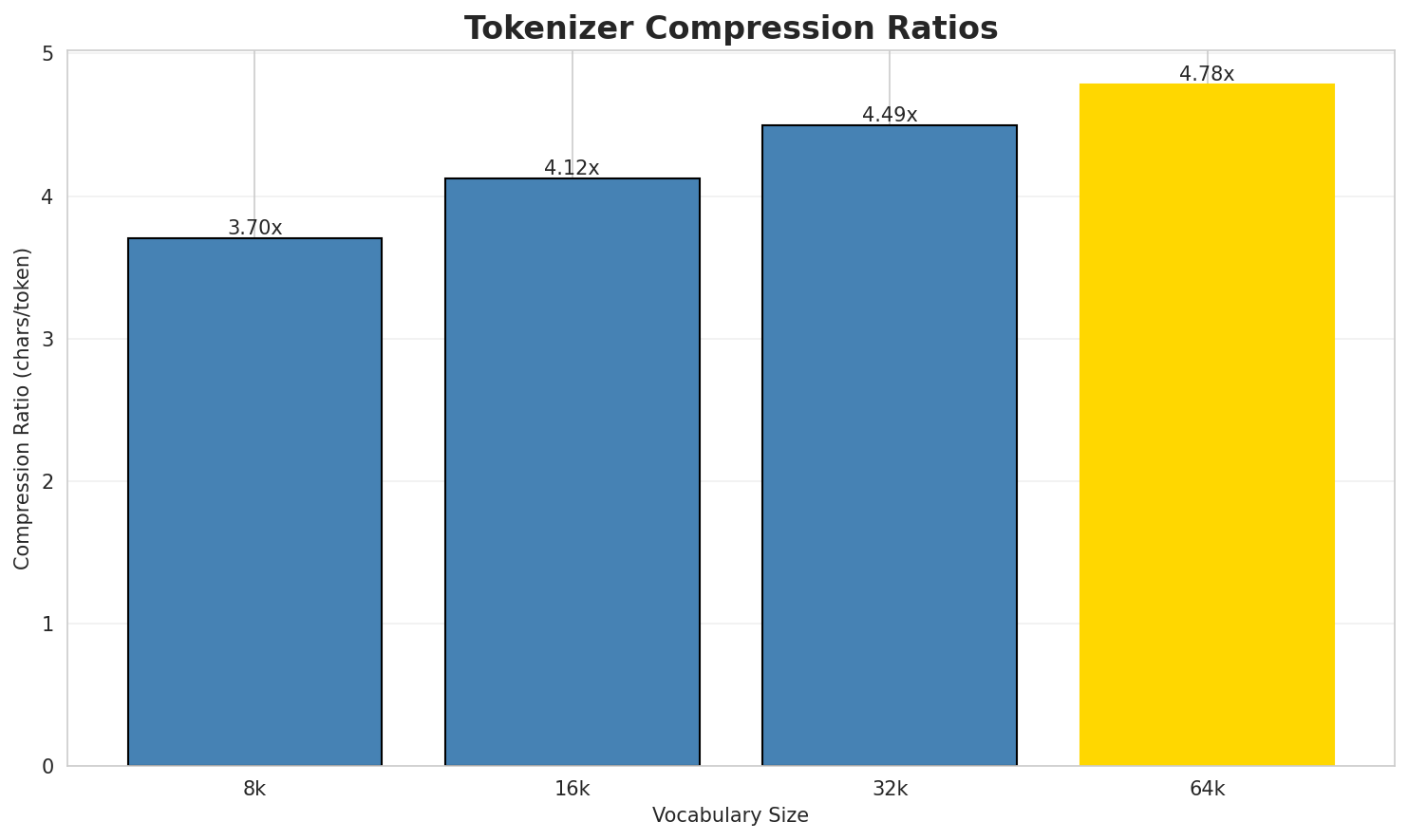

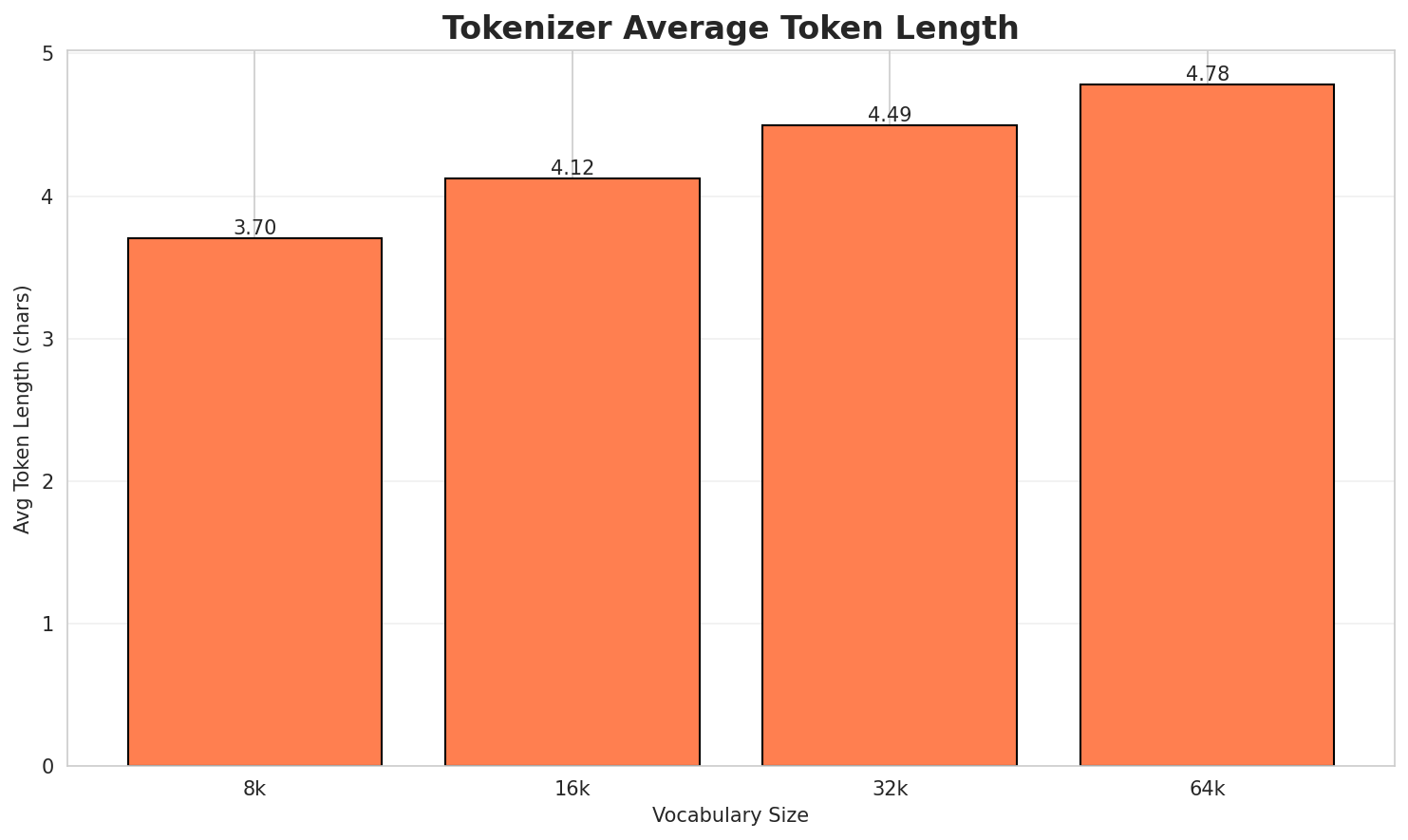

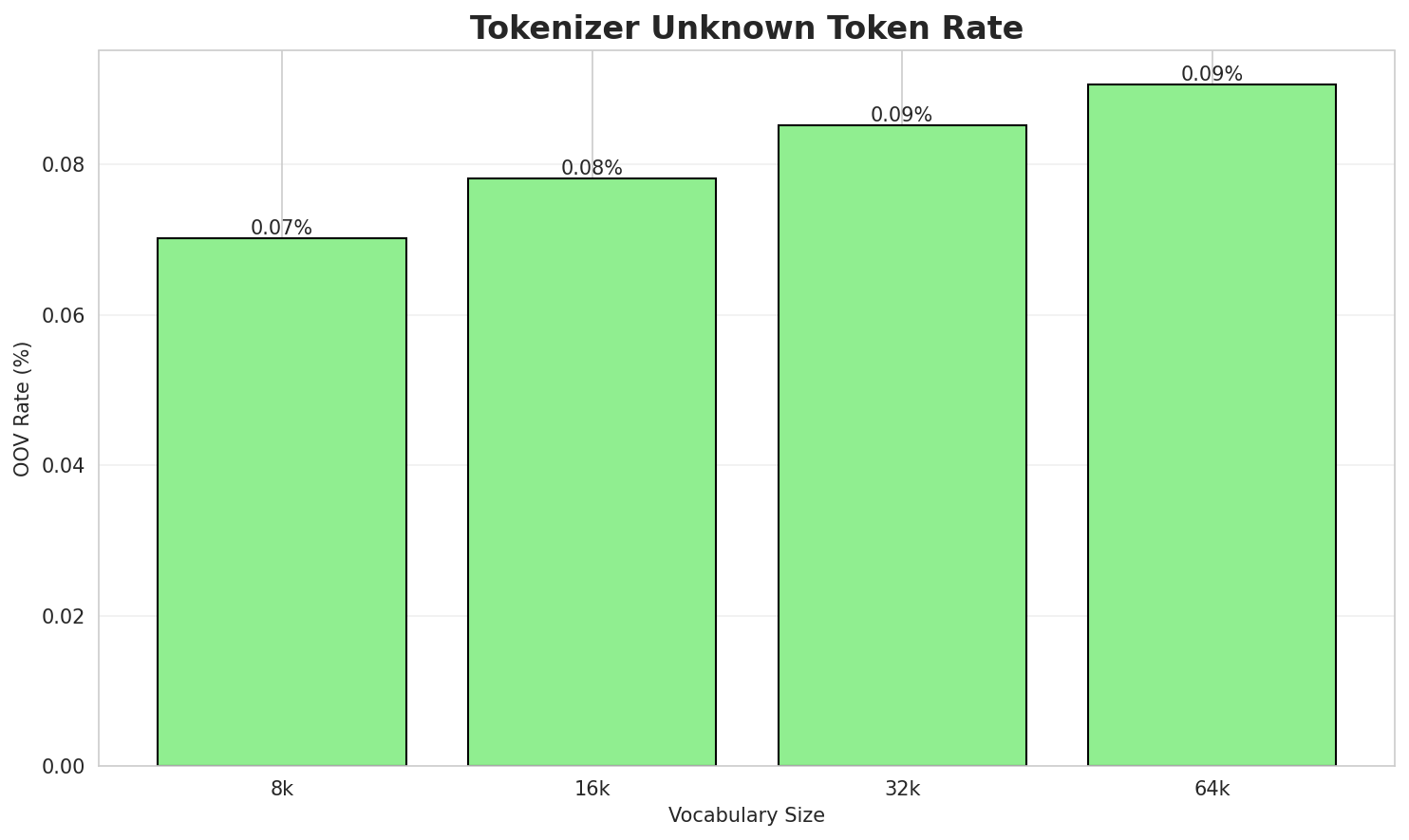

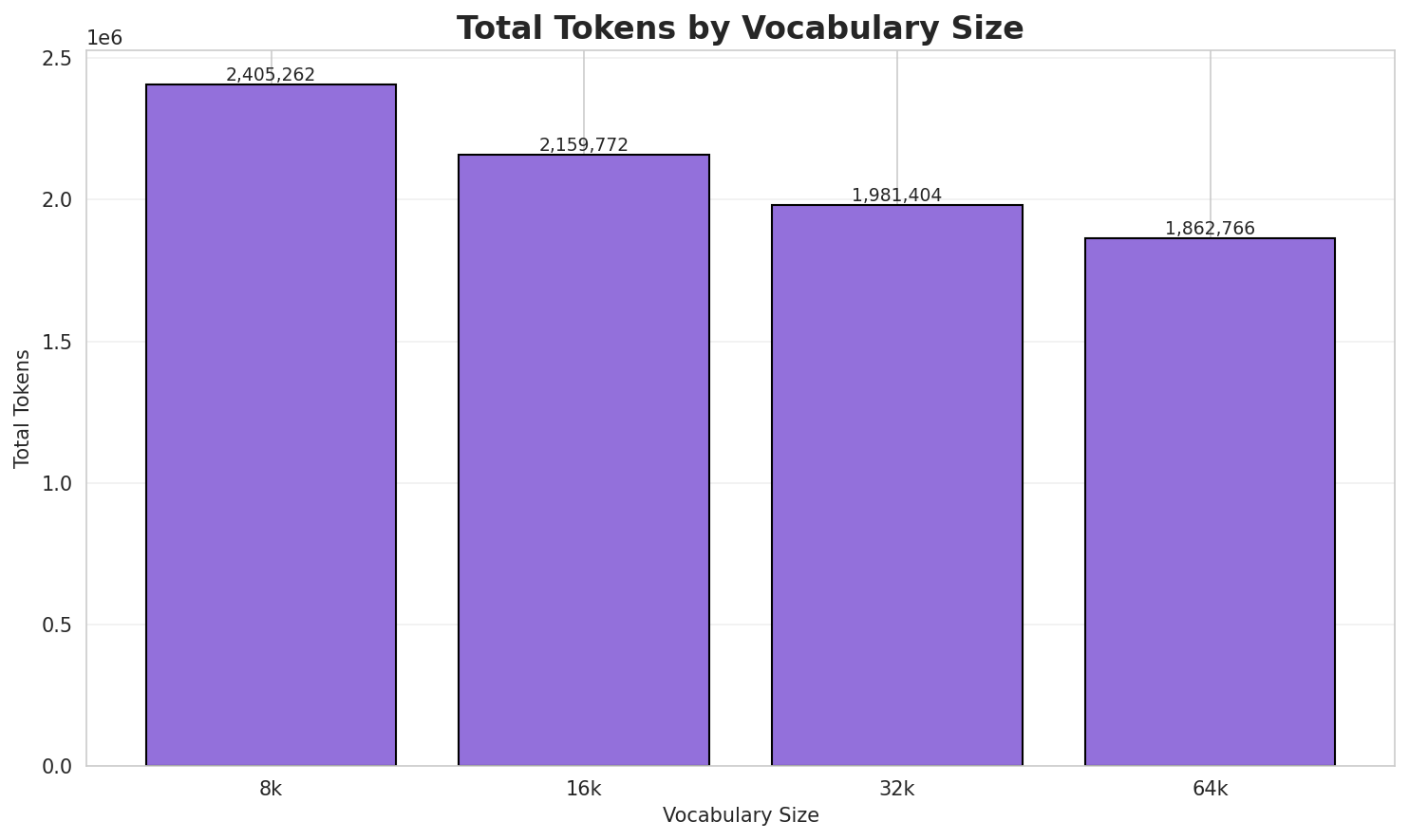

1. Tokenizer Evaluation

Results

| Vocab Size | Compression | Avg Token Len | UNK Rate | Total Tokens |

|---|---|---|---|---|

| 8k | 3.702x | 3.70 | 0.0702% | 2,405,262 |

| 16k | 4.123x | 4.12 | 0.0782% | 2,159,772 |

| 32k | 4.494x | 4.49 | 0.0852% | 1,981,404 |

| 64k | 4.780x 🏆 | 4.78 | 0.0906% | 1,862,766 |

Tokenization Examples

Below are sample sentences tokenized with each vocabulary size:

Sample 1: година во архитектурата содржи некои значајни настани. Настани во архитектурата ...

| Vocab | Tokens | Count |
|---|---|---|
| 8k | ▁година ▁во ▁архитектурата ▁содржи ▁некои ▁значајни ▁настани . ▁настани ▁во ... (+3 more) | 13 |
| 16k | ▁година ▁во ▁архитектурата ▁содржи ▁некои ▁значајни ▁настани . ▁настани ▁во ... (+3 more) | 13 |
| 32k | ▁година ▁во ▁архитектурата ▁содржи ▁некои ▁значајни ▁настани . ▁настани ▁во ... (+3 more) | 13 |
| 64k | ▁година ▁во ▁архитектурата ▁содржи ▁некои ▁значајни ▁настани . ▁настани ▁во ... (+3 more) | 13 |

Sample 2: година во архитектурата содржи некои значајни настани. Настани во архитектурата

| Vocab | Tokens | Count |
|---|---|---|
| 8k | ▁година ▁во ▁архитектурата ▁содржи ▁некои ▁значајни ▁настани . ▁настани ▁во ... (+1 more) | 11 |
| 16k | ▁година ▁во ▁архитектурата ▁содржи ▁некои ▁значајни ▁настани . ▁настани ▁во ... (+1 more) | 11 |
| 32k | ▁година ▁во ▁архитектурата ▁содржи ▁некои ▁значајни ▁настани . ▁настани ▁во ... (+1 more) | 11 |
| 64k | ▁година ▁во ▁архитектурата ▁содржи ▁некои ▁значајни ▁настани . ▁настани ▁во ... (+1 more) | 11 |

Sample 3: 31 мај — 151-иот ден во годината според грегоријанскиот календар (152-и во прест...

| Vocab | Tokens | Count |
|---|---|---|
| 8k | ▁ 3 1 ▁мај ▁— ▁ 1 5 1 - ... (+33 more) | 43 |
| 16k | ▁ 3 1 ▁мај ▁— ▁ 1 5 1 - ... (+32 more) | 42 |
| 32k | ▁ 3 1 ▁мај ▁— ▁ 1 5 1 - ... (+32 more) | 42 |
| 64k | ▁ 3 1 ▁мај ▁— ▁ 1 5 1 - ... (+32 more) | 42 |

Key Findings

- Best Compression: 64k achieves 4.780x compression

- Lowest UNK Rate: 8k with 0.0702% unknown tokens

- Trade-off: Larger vocabularies improve compression but increase model size

- Recommendation: 32k vocabulary is a middle ground between compression (4.494x) and vocabulary size; 64k gives the highest compression (4.780x)

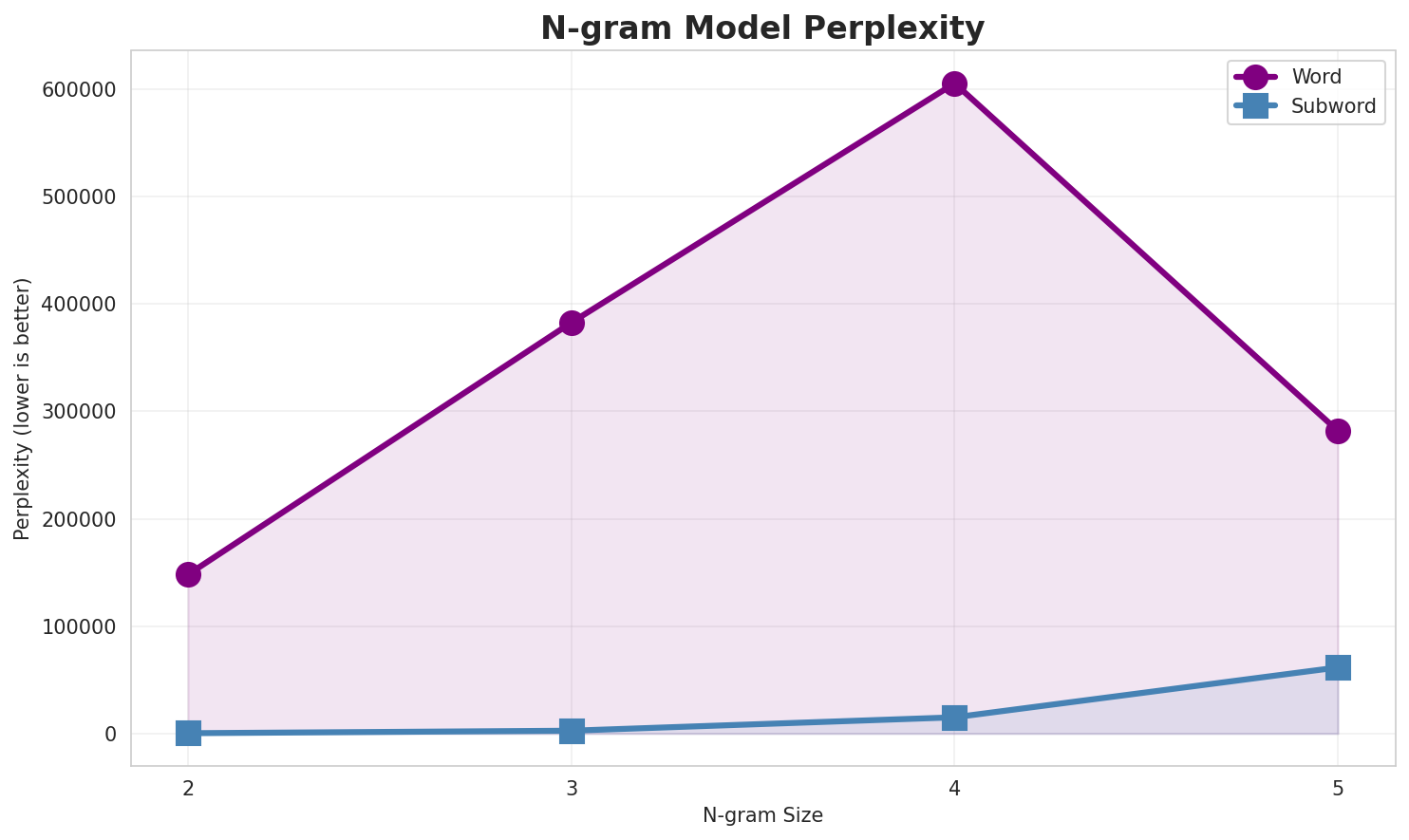

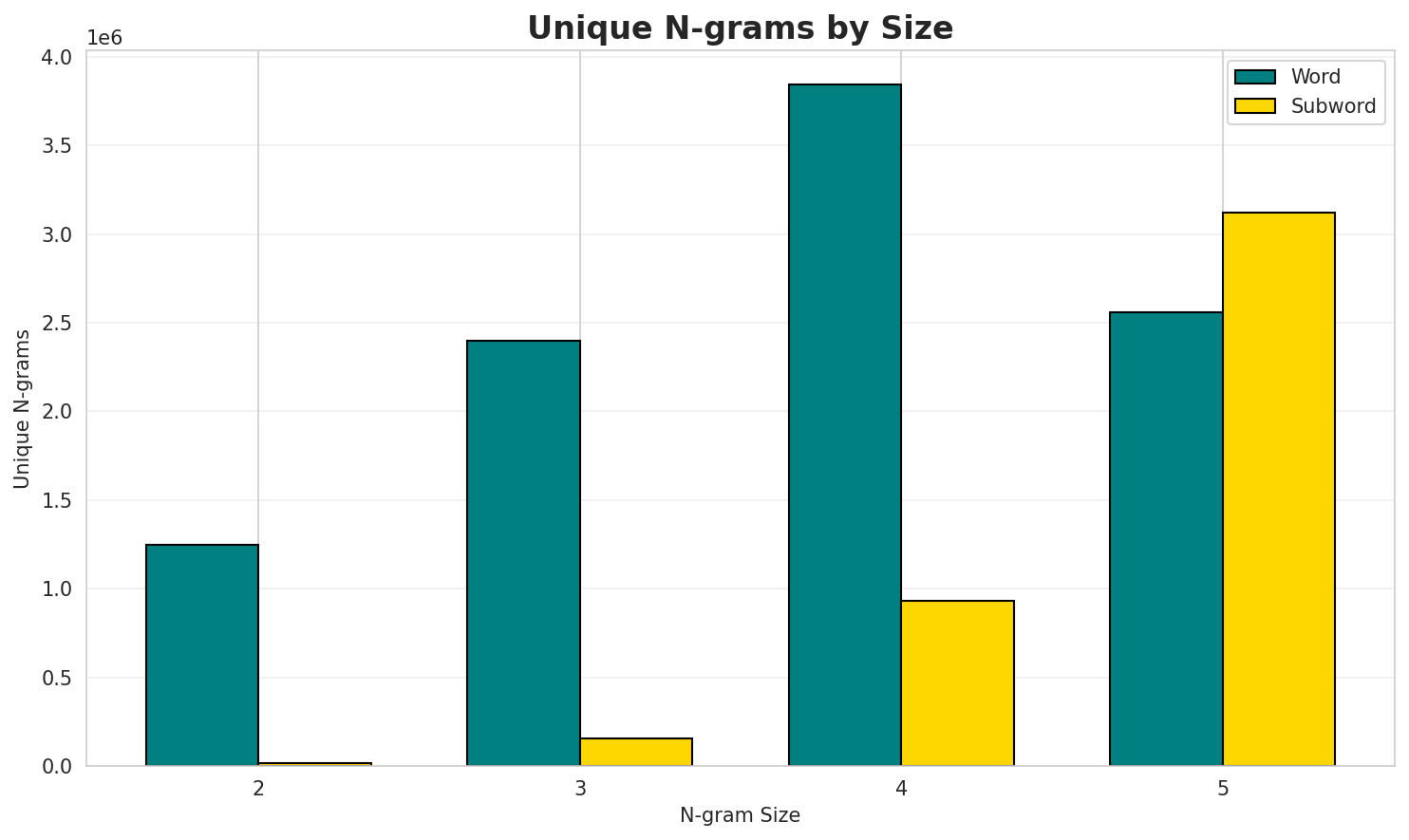

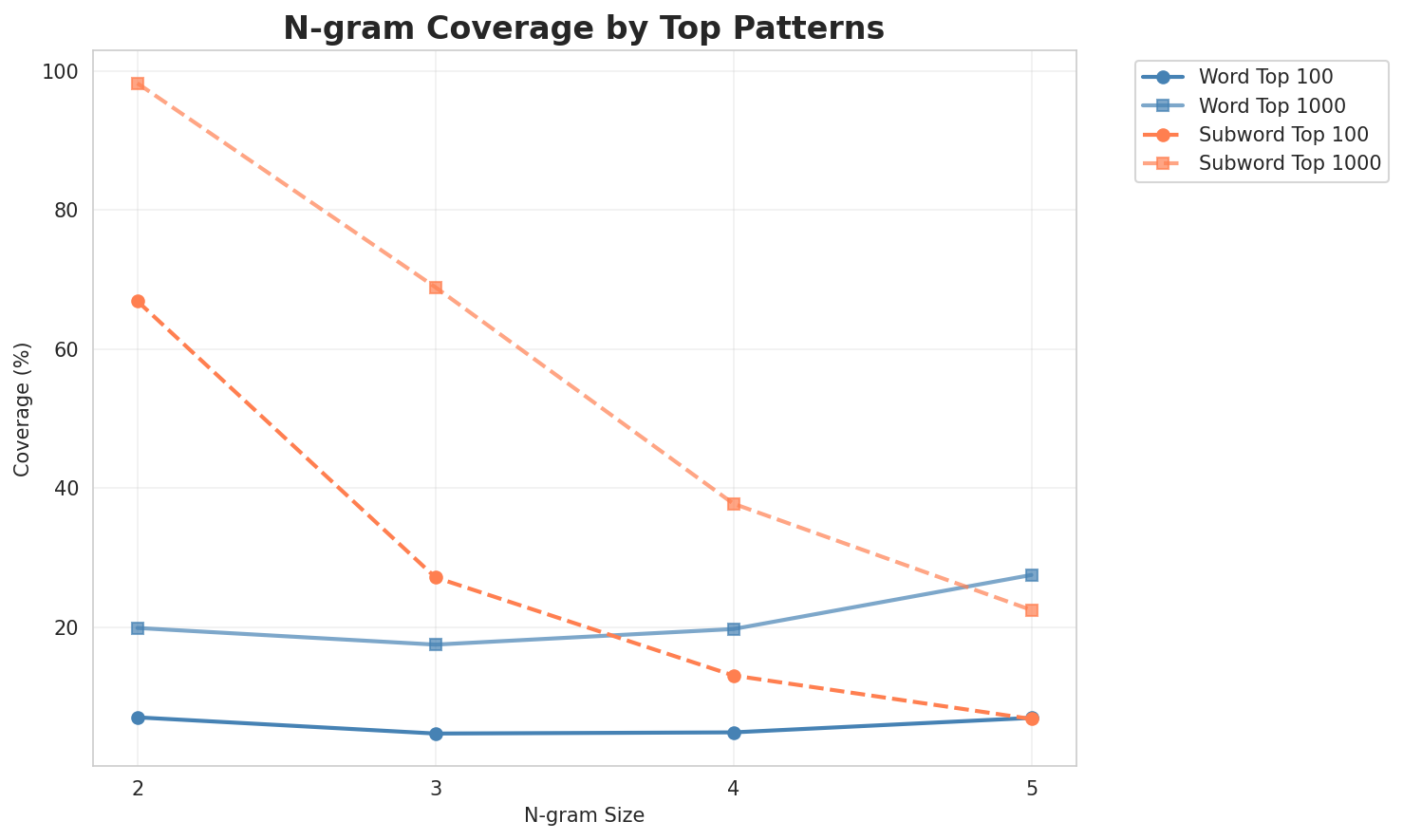

2. N-gram Model Evaluation

Results

| N-gram | Variant | Perplexity | Entropy | Unique N-grams | Top-100 Coverage | Top-1000 Coverage |

|---|---|---|---|---|---|---|

| 2-gram | Word | 148,118 | 17.18 | 1,246,589 | 7.0% | 19.9% |

| 2-gram | Subword | 310 🏆 | 8.28 | 17,556 | 66.9% | 98.2% |

| 3-gram | Word | 382,752 | 18.55 | 2,398,097 | 4.7% | 17.5% |

| 3-gram | Subword | 2,638 | 11.37 | 153,828 | 27.1% | 68.9% |

| 4-gram | Word | 605,602 | 19.21 | 3,842,232 | 4.8% | 19.7% |

| 4-gram | Subword | 15,114 | 13.88 | 929,390 | 13.0% | 37.7% |

| 5-gram | Word | 281,875 | 18.10 | 2,561,910 | 6.9% | 27.5% |

| 5-gram | Subword | 61,546 | 15.91 | 3,120,609 | 6.8% | 22.4% |

Top 5 N-grams by Size

2-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | во година | 270,904 |
| 2 | да се | 185,526 |
| 3 | може да | 82,758 |
| 4 | исто така | 74,629 |
| 5 | година во | 71,130 |

3-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | од страна на | 47,837 |
| 2 | п н е | 45,911 |
| 3 | за време на | 45,528 |
| 4 | во текот на | 44,568 |
| 5 | може да се | 38,713 |

4-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | г п н е | 26,767 |
| 2 | во текот на и | 13,167 |
| 3 | година од страна на | 13,039 |
| 4 | база на податоци на | 10,253 |
| 5 | е вклучен и во | 10,177 |

5-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | новиот општ каталог на длабоконебесни | 10,166 |
| 2 | општ каталог на длабоконебесни тела | 10,166 |
| 3 | тоа е вклучен и во | 10,165 |
| 4 | е вклучен и во други | 10,165 |
| 5 | вршено од повеќе истражувачи па | 10,165 |

2-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | а _ | 16,220,828 |
| 2 | н а | 9,755,201 |
| 3 | о _ | 8,545,001 |
| 4 | и _ | 8,299,189 |
| 5 | _ н | 7,088,266 |

3-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | н а _ | 5,782,550 |
| 2 | _ н а | 5,471,722 |
| 3 | _ в о | 2,895,397 |
| 4 | в о _ | 2,774,290 |
| 5 | а т а | 2,545,500 |

4-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | _ н а _ | 3,968,376 |
| 2 | _ в о _ | 2,496,500 |
| 3 | а т а _ | 2,159,054 |
| 4 | и т е _ | 1,510,803 |
| 5 | _ о д _ | 1,503,838 |

5-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | а _ н а _ | 1,123,156 |
| 2 | _ г о д и | 801,639 |
| 3 | г о д и н | 793,128 |
| 4 | о д и н а | 717,809 |
| 5 | а _ в о _ | 641,767 |

Key Findings

- Best Perplexity: 2-gram (subword) with 310

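- Entropy Trend: Entropy rises with n-gram size in the results above (subword: 8.28 at 2-gram to 15.91 at 5-gram; word: 17.18 at 2-gram to 19.21 at 4-gram, then 18.10 at 5-gram)

- Coverage: Top-1000 coverage ranges from 17.5% (word 3-gram) to 98.2% (subword 2-gram); the 5-gram subword model covers 22.4%

- Recommendation: 4-gram or 5-gram when longer context matters; the 2-gram subword model has the lowest reported perplexity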
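The word and subword tables above can be approximated with a few lines of counting code. The sketch below is a minimal illustration, not the Wikilangs pipeline: it assumes lowercase whitespace tokenization for words and, for subwords, character n-grams in which each space is written as `_` (inferred from the table entries). The function names `word_ngrams` and `char_ngrams` are hypothetical.

```python
from collections import Counter

def word_ngrams(text: str, n: int) -> Counter:
    """Count word-level n-grams using lowercase whitespace tokenization (an assumption)."""
    words = text.lower().split()
    return Counter(" ".join(words[i:i + n]) for i in range(len(words) - n + 1))

def char_ngrams(text: str, n: int) -> Counter:
    """Count character n-grams, writing each space as '_' (an assumption)."""
    chars = "_".join(text.lower().split())
    return Counter(chars[i:i + n] for i in range(len(chars) - n + 1))

sample = "може да се каже дека може да се види"
print(word_ngrams(sample, 3).most_common(1))
print(char_ngrams(sample, 2).most_common(3))
```

Sorting the resulting counters with `most_common` yields rankings of the same shape as the top-5 tables in this section.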

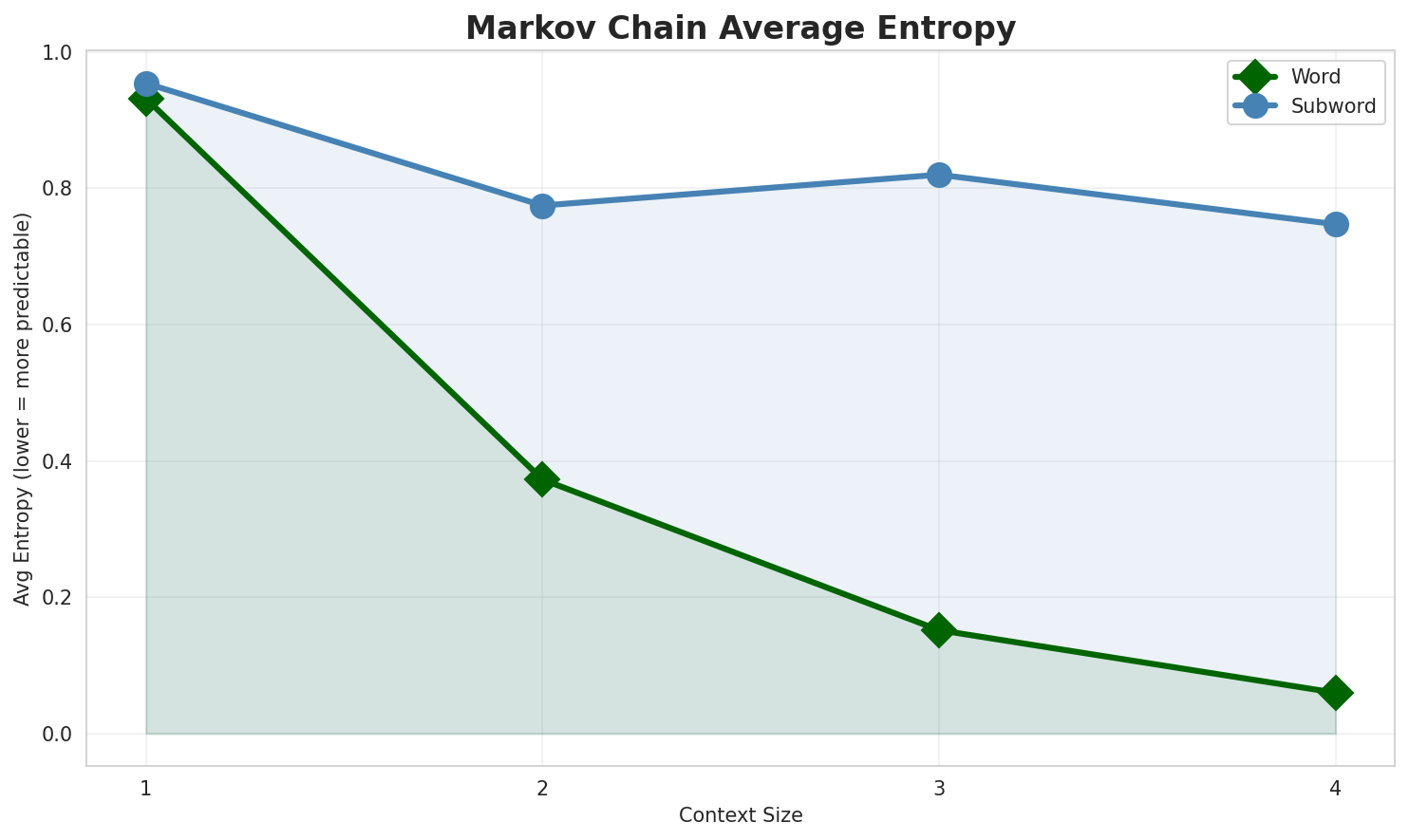

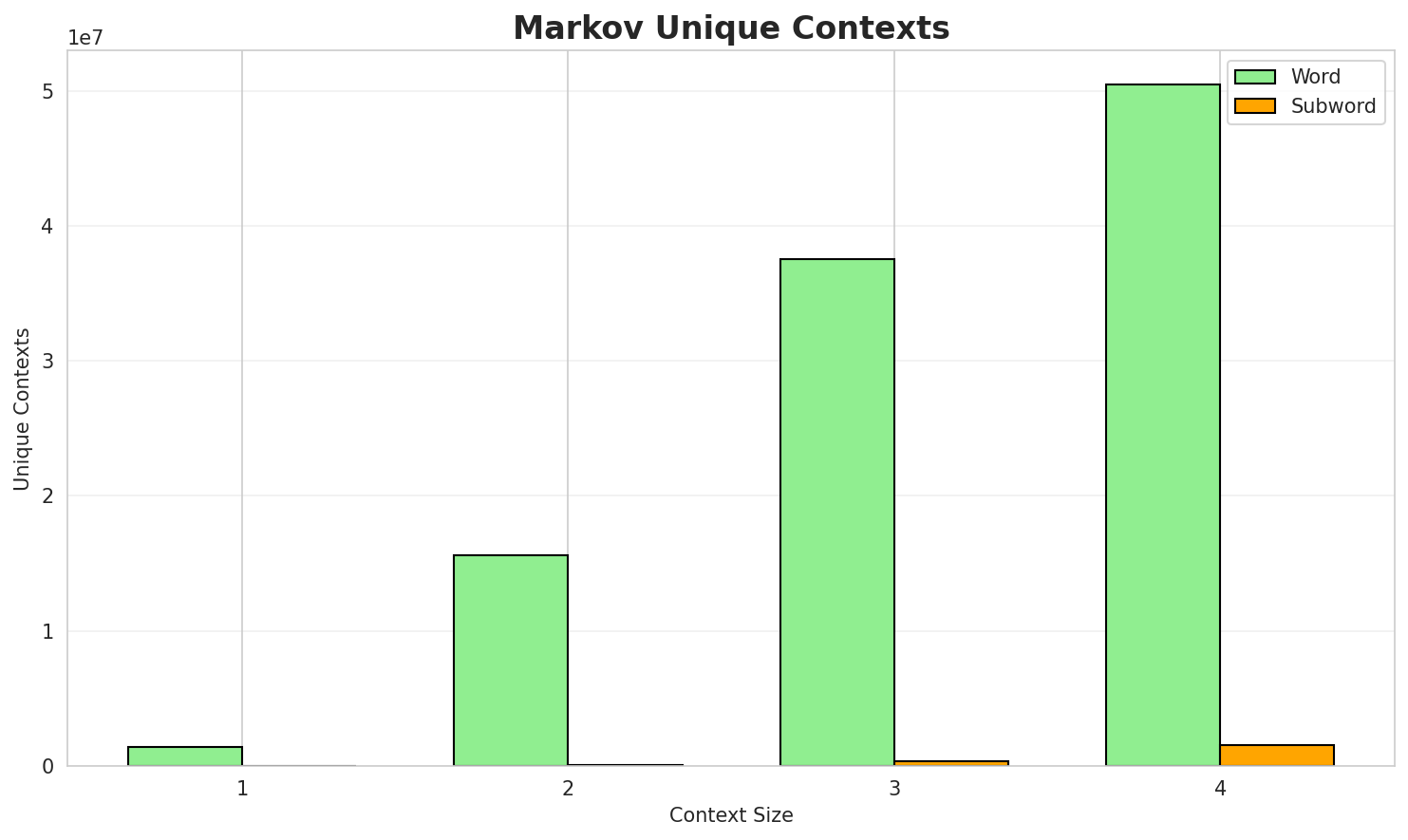

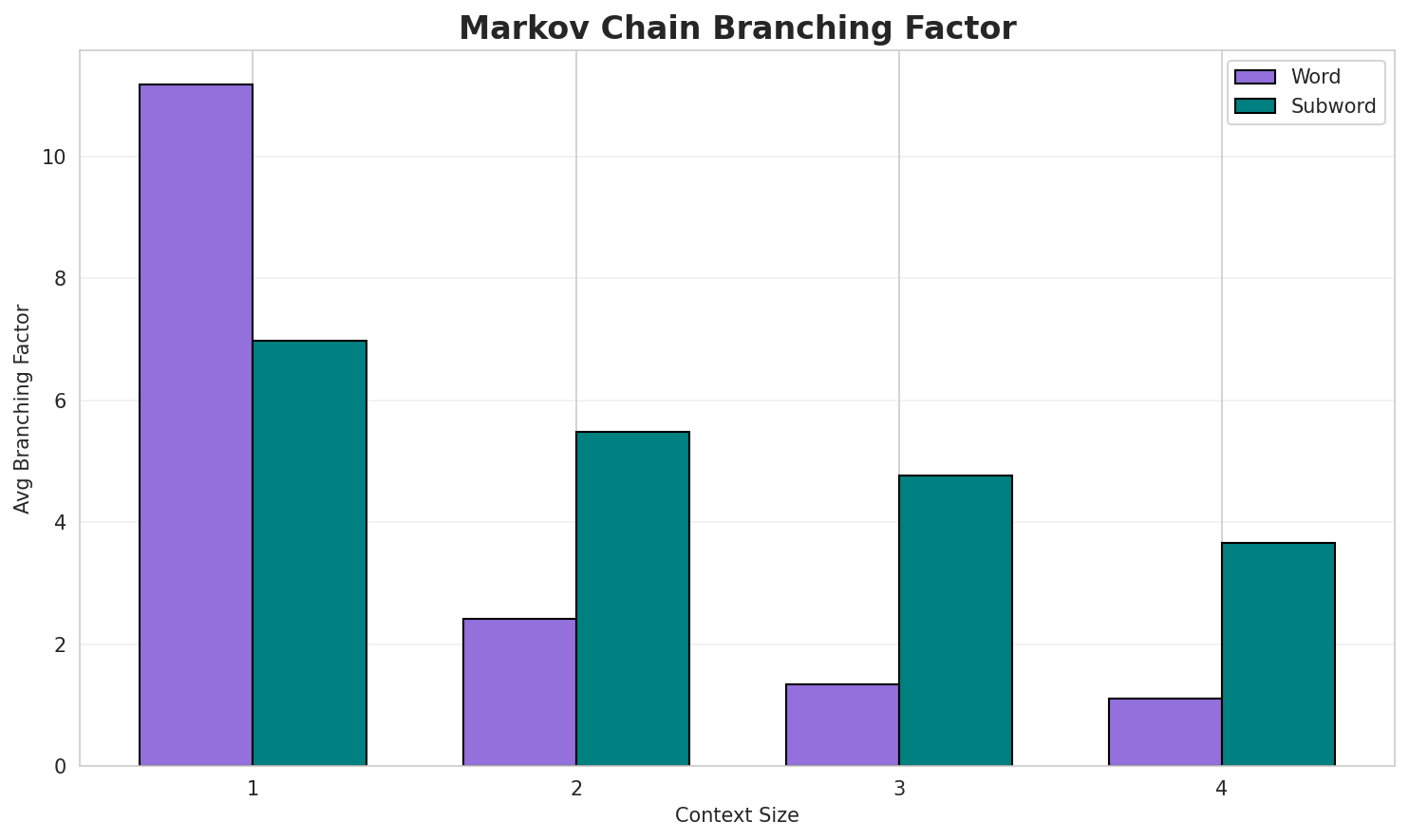

3. Markov Chain Evaluation

Results

| Context | Variant | Avg Entropy | Perplexity | Branching Factor | Unique Contexts | Predictability |

|---|---|---|---|---|---|---|

| 1 | Word | 0.9313 | 1.907 | 11.18 | 1,397,869 | 6.9% |

| 1 | Subword | 0.9537 | 1.937 | 6.98 | 8,643 | 4.6% |

| 2 | Word | 0.3725 | 1.295 | 2.41 | 15,610,954 | 62.7% |

| 2 | Subword | 0.7745 | 1.711 | 5.49 | 60,305 | 22.6% |

| 3 | Word | 0.1516 | 1.111 | 1.34 | 37,555,740 | 84.8% |

| 3 | Subword | 0.8197 | 1.765 | 4.77 | 330,722 | 18.0% |

| 4 | Word | 0.0598 🏆 | 1.042 | 1.11 | 50,433,239 | 94.0% |

| 4 | Subword | 0.7470 | 1.678 | 3.67 | 1,576,045 | 25.3% |

Generated Text Samples (Word-based)

Below are text samples generated from each word-based Markov chain model:

Context Size 1:

на минотаурот најстарото болничко лекување на електронот може да го ставаат во ноември се од овиево г п н е независна држава за аварите да се случиле неколку минути по неколкуи надгледувајќи радикални реакции како и главен увозник skycom сад и во романија историјата како ugc

Context Size 2:

во година во полска и украина реката е 117 км2 дитмаршен 132 965 1 861 година пред

да се натпреварува водачи на земјата развојот на препарати за атрофичната кожа многу поширок опфат т...

може да има изразени оддавања на стронциум и алуминиум изопрооксиди соодветно првиот е анонимното ск...

Context Size 3:

од страна на данците кои се подолги од аксијалната пиридилна ga n врска со должини на страните а

за време на вечерата иван илич е веќе многу пијан кога линдорф влегува со пејачката стела и го

во текот на 367 и 368 исламска година настани 1 јануари ссср започнува со својата хуманитарна активн...

Context Size 4:

г п н е според продолжениот јулијански календар истата трае во текот на и година според асирскиот ка...

во текот на и година според асирскиот календар во којшто мерењето на времето започнува со 622 година...

година од страна на бугарските истражувачи генеричките лекови го формираат столбот на локалната екон...

Generated Text Samples (Subword-based)

Below are text samples generated from each subword-based Markov chain model:

Context Size 1:

_о_зна_скан_идо_а._во_и_нина_н_сова_дичеме_на_ка

Context Size 2:

а_изенизвине_на_мна_доне_перетски_о_улигна_арларист

Context Size 3:

на_јанско-лисковек_на_ост_попрата_же_во_френ_каквиот_п

Context Size 4:

_на_чашките_заливор_во_сличн_кривале_дата_долна_свињарски

Key Findings

- Best Predictability: Context-4 (word) with 94.0% predictability

- Branching Factor: Decreases with context size (more deterministic)

- Memory Trade-off: Larger contexts require more storage (context size 4 has 50,433,239 unique word contexts and 1,576,045 unique subword contexts)

- Recommendation: Context-3 or Context-4 for text generation
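To make the generation procedure concrete, here is a minimal sketch of sampling from a fixed-context Markov chain. It is an illustration under stated assumptions, not the code that produced the samples above: `build_chain` and `generate` are hypothetical helpers, successors are sampled in proportion to their observed counts, and generation stops at an unseen context instead of backing off.

```python
import random
from collections import defaultdict

def build_chain(tokens, k):
    """Map each k-token context to the list of tokens observed after it."""
    chain = defaultdict(list)
    for i in range(len(tokens) - k):
        chain[tuple(tokens[i:i + k])].append(tokens[i + k])
    return chain

def generate(chain, seed, length, rng):
    """Extend `seed` by sampling successors until `length` tokens or a dead end."""
    out = list(seed)
    k = len(seed)
    while len(out) < length:
        successors = chain.get(tuple(out[-k:]))
        if not successors:
            break  # unseen context: stop rather than back off
        out.append(rng.choice(successors))
    return out

tokens = "во текот на годината во текот на војната во текот на летото".split()
chain = build_chain(tokens, 2)
print(" ".join(generate(chain, ("во", "текот"), 8, random.Random(0))))
```

With larger `k` the chain reproduces longer stretches of the training text, which matches the low branching factors reported for word contexts of size 3 and 4.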

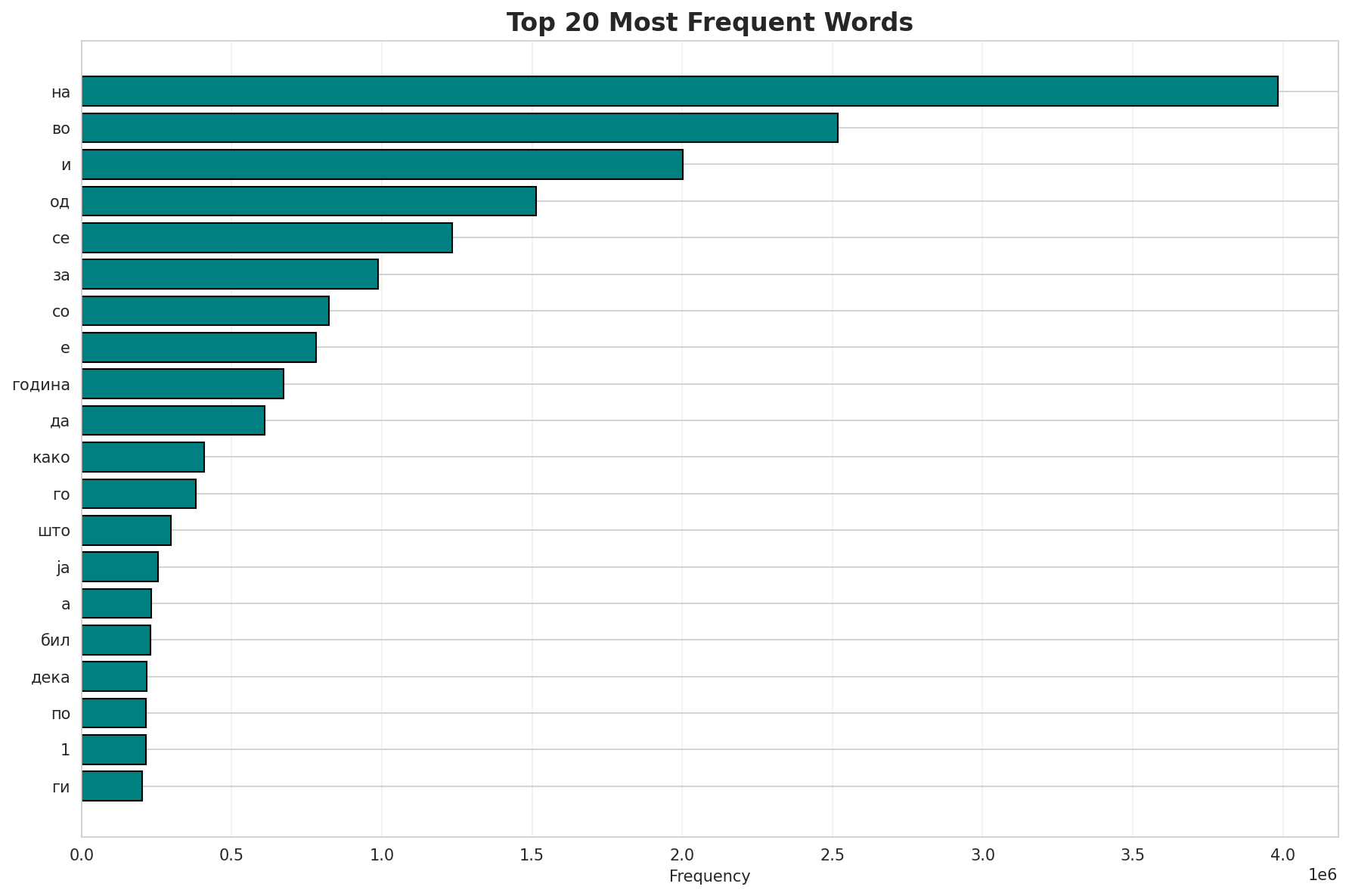

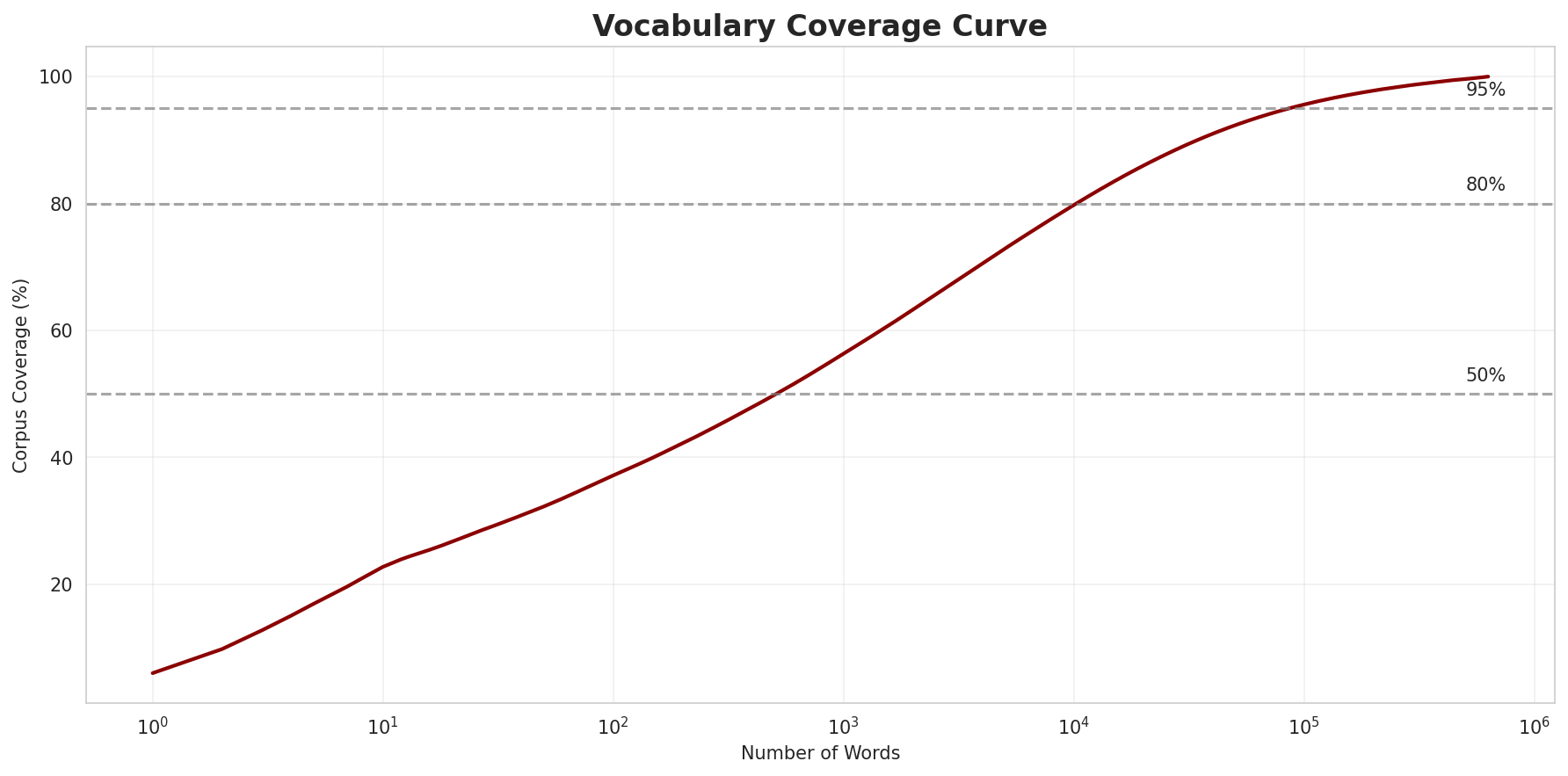

4. Vocabulary Analysis

Statistics

| Metric | Value |

|---|---|

| Vocabulary Size | 629,840 |

| Total Tokens | 66,539,192 |

| Mean Frequency | 105.64 |

| Median Frequency | 4 |

| Frequency Std Dev | 7439.52 |

Most Common Words

| Rank | Word | Frequency |

|---|---|---|

| 1 | на | 3,984,194 |

| 2 | во | 2,517,366 |

| 3 | и | 2,001,305 |

| 4 | од | 1,514,717 |

| 5 | се | 1,235,287 |

| 6 | за | 987,031 |

| 7 | со | 823,175 |

| 8 | е | 782,070 |

| 9 | година | 672,383 |

| 10 | да | 610,844 |

Least Common Words (from vocabulary)

| Rank | Word | Frequency |

|---|---|---|

| 1 | калеуче | 2 |

| 2 | chiloé | 2 |

| 3 | преживениот | 2 |

| 4 | делевиш | 2 |

| 5 | platessoides | 2 |

| 6 | pleco | 2 |

| 7 | метарма | 2 |

| 8 | алалаона | 2 |

| 9 | octodecimguttata | 2 |

| 10 | домбасл | 2 |

Zipf's Law Analysis

| Metric | Value |

|---|---|

| Zipf Coefficient | 0.9604 |

| R² (Goodness of Fit) | 0.996757 |

| Adherence Quality | excellent |

Coverage Analysis

| Top N Words | Coverage |

|---|---|

| Top 100 | 37.1% |

| Top 1,000 | 56.3% |

| Top 5,000 | 72.8% |

| Top 10,000 | 79.8% |

Key Findings

- Zipf Compliance: R²=0.9968 indicates excellent adherence to Zipf's law

- High Frequency Dominance: Top 100 words cover 37.1% of corpus

- Long Tail: 619,840 words needed for remaining 20.2% coverage

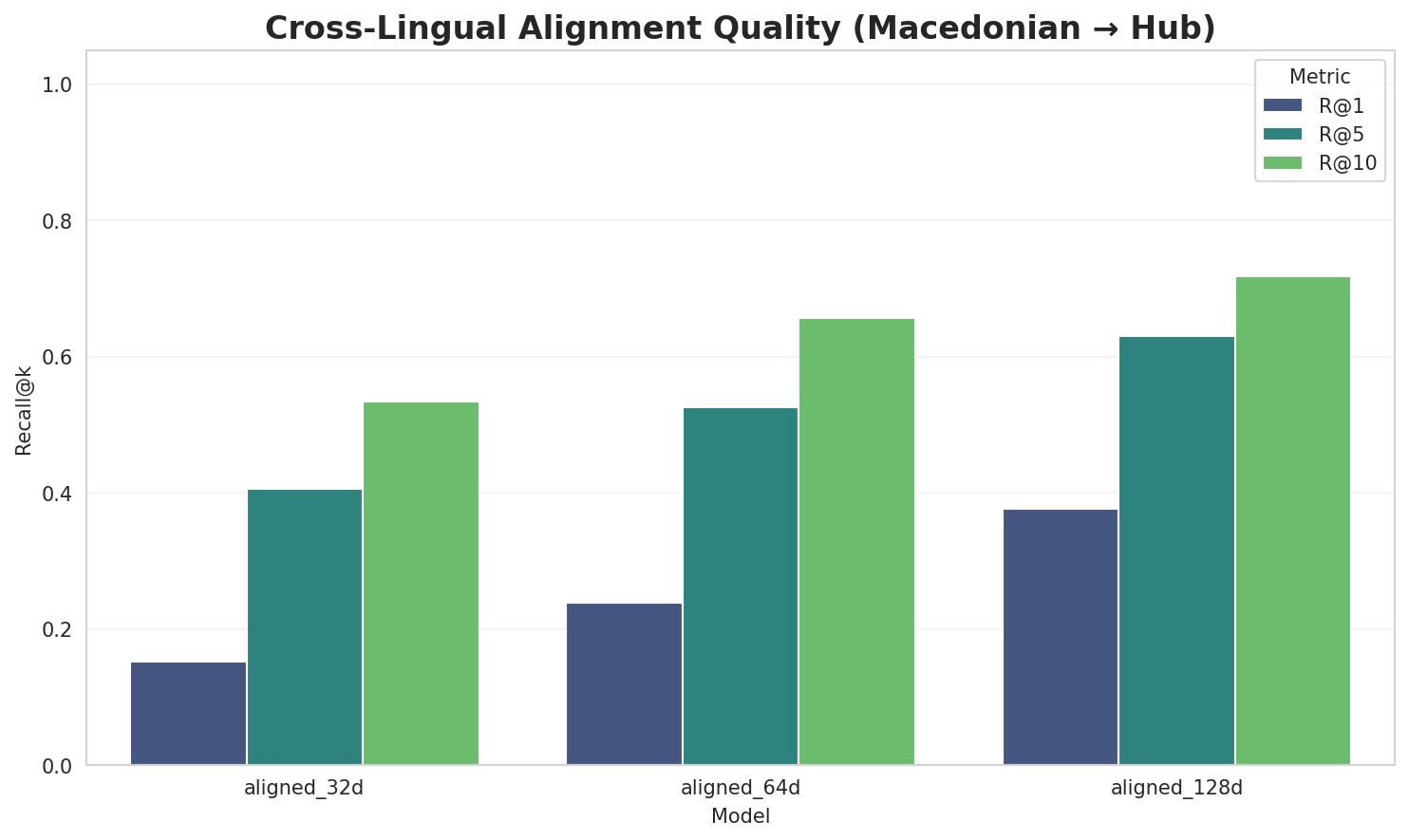

5. Word Embeddings Evaluation

5.1 Cross-Lingual Alignment

5.2 Model Comparison

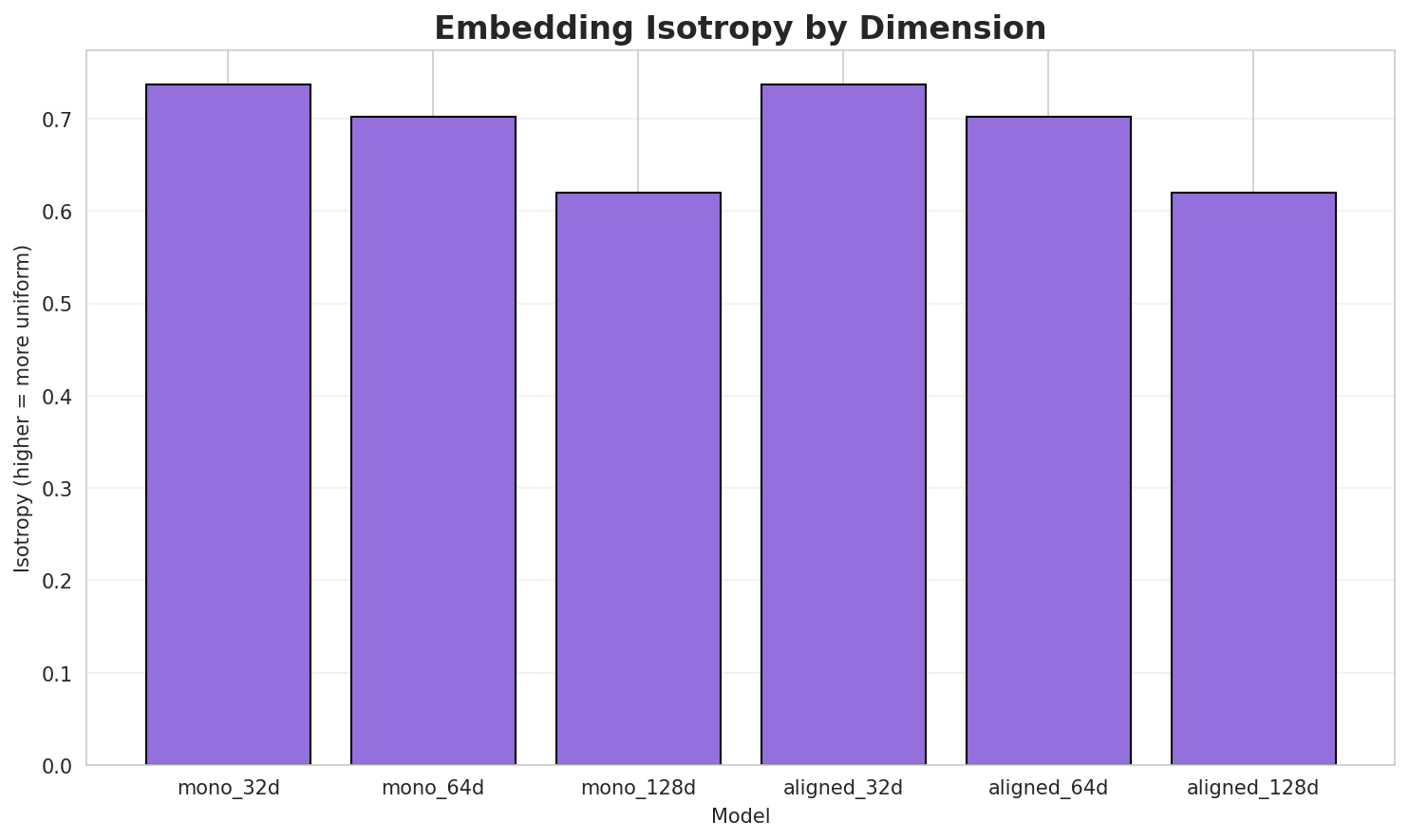

| Model | Dimension | Isotropy | Semantic Density | Alignment R@1 | Alignment R@10 |

|---|---|---|---|---|---|

| mono_32d | 32 | 0.7374 | 0.3633 | N/A | N/A |

| mono_64d | 64 | 0.7024 | 0.2990 | N/A | N/A |

| mono_128d | 128 | 0.6203 | 0.2691 | N/A | N/A |

| aligned_32d | 32 | 0.7374 🏆 | 0.3635 | 0.1520 | 0.5340 |

| aligned_64d | 64 | 0.7024 | 0.2953 | 0.2380 | 0.6560 |

| aligned_128d | 128 | 0.6203 | 0.2655 | 0.3760 | 0.7180 |

Key Findings

- Best Isotropy: aligned_32d with 0.7374 (more uniform distribution)

- Semantic Density: Average pairwise similarity of 0.3093. Lower values indicate better semantic separation.

- Alignment Quality: Aligned models achieve up to 37.6% R@1 in cross-lingual retrieval.

- Recommendation: 128d aligned for best cross-lingual performance

6. Morphological Analysis (Experimental)

This section presents an automated morphological analysis derived from the statistical divergence between word-level and subword-level models. By analyzing where subword predictability spikes and where word-level coverage fails, we can infer linguistic structures without supervised data.

6.1 Productivity & Complexity

| Metric | Value | Interpretation | Recommendation |

|---|---|---|---|

| Productivity Index | 5.000 | High morphological productivity | Reliable analysis |

| Idiomaticity Gap | 0.225 | High formulaic/idiomatic content | - |

6.2 Affix Inventory (Productive Units)

These are the most productive prefixes and suffixes identified by sampling the vocabulary for global substitutability patterns. A unit is considered an affix if stripping it leaves a valid stem that appears in other contexts.
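The substitutability criterion can be sketched as follows. This is a simplified illustration, not the report's actual procedure: it treats a suffix as productive when the stem left after stripping it also occurs with a different ending or as a standalone word. The function name `productive_suffixes` and the `max_len` and `min_stem` thresholds are assumptions.

```python
from collections import defaultdict

def productive_suffixes(vocab, max_len=3, min_stem=3):
    """Rank suffixes by how many stems they share with at least one other ending."""
    endings_by_stem = defaultdict(set)
    for word in vocab:
        for cut in range(1, max_len + 1):
            stem, suffix = word[:-cut], word[-cut:]
            if len(stem) >= min_stem:
                endings_by_stem[stem].add(suffix)
    scores = defaultdict(int)
    for stem, endings in endings_by_stem.items():
        # The stem counts as attested elsewhere if it takes another ending
        # or appears as a word on its own.
        if len(endings) > 1 or stem in vocab:
            for suffix in endings:
                scores[suffix] += 1
    return sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))

vocab = {"реверс", "реверсот", "реверсите", "рудник", "рудникот", "рудниците"}
print(productive_suffixes(vocab)[:3])
```

On this toy vocabulary the definite suffix `от` ranks first, but spurious single-letter endings also score, so a real analysis would need frequency thresholds on a much larger vocabulary.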

Productive Prefixes

| Prefix | Examples |
|---|---|
| -с | стенливил, североисточен, салминен |
| -ка | кантрел, кач, католски |
| -а | аргас, азилантите, акнисте |
| -ма | мамуци, мажените, маринадата |
| -по | помориска, подјазичната, положат |
| -к | кантрел, кладошница, коинон |
| -ко | коинон, кошланд, копродукт |
| -s | sbordone, superluminal, stralsunder |

Productive Suffixes

| Suffix | Examples |
|---|---|
| -а | душевина, електроника, пијаната |
| -и | издатоци, рудници, гликобелковини |
| -е | најневообичаените, субкултурните, царице |
| -те | најневообичаените, субкултурните, регистраторите |
| -та | пијаната, проверката, подјазичната |
| -т | еукариот, реверсот, меѓупарламентарниот |
| -от | еукариот, реверсот, меѓупарламентарниот |
| -о | витешкото, интимно, пароло |

6.3 Bound Stems (Lexical Roots)

Bound stems are high-frequency subword units that are semantically cohesive but rarely appear as standalone words. These often correspond to the 'core' of a word that requires inflection or derivation to be valid.

| Stem | Cohesion | Substitutability | Examples |
|---|---|---|---|
| уваа | 2.43x | 85 contexts | уваат, чуваа, жуваат |
| увањ | 2.04x | 160 contexts | лување, рување, чување |
| увал | 2.00x | 172 contexts | увала, јувал, дувал |
| ијат | 1.76x | 300 contexts | лијат, хијат, ријат |
| ички | 1.82x | 235 contexts | кички, нички, лички |
| кедо | 2.77x | 33 contexts | македо, алкедо, македон |
| ањет | 2.27x | 71 contexts | рањето, вањето, кањете |
| нски | 1.58x | 402 contexts | ронски, менски, ренски |
| анск | 1.34x | 935 contexts | канск, анска, данск |
| иски | 1.56x | 353 contexts | киски, тиски, писки |
| инск | 1.39x | 722 contexts | пинск, инско, минск |
| онск | 1.41x | 510 contexts | ронски, јонско, шонски |

6.4 Affix Compatibility (Co-occurrence)

This table shows which prefixes and suffixes most frequently co-occur on the same stems, revealing the 'stacking' rules of the language's morphology.

| Prefix | Suffix | Frequency | Examples |
|---|---|---|---|
| -п | -а | 118 words | пресбикуза, пентесилеја |
| -с | -а | 108 words | самбра, скаса |
| -п | -и | 79 words | повелбени, пољани |
| -п | -е | 76 words | питите, поиде |
| -к | -а | 74 words | клитика, куиксама |
| -с | -и | 70 words | сукотаи, сапрофитии |
| -с | -е | 67 words | служите, софите |
| -по | -а | 66 words | поситна, почесна |
| -а | -а | 64 words | адарсана, аеторема |
| -б | -а | 62 words | безлисна, бозонската |

6.5 Recursive Morpheme Segmentation

Using Recursive Hierarchical Substitutability, we decompose complex words into their constituent morphemes. This approach handles nested affixes (e.g., prefix-prefix-root-suffix).

| Word | Suggested Split | Confidence | Stem |
|---|---|---|---|
| клинетите | клинет-и-те | 7.5 | и |
| карантанците | карантанц-и-те | 7.5 | и |
| ҫемҫелӗхпалли | ҫемҫелӗхпал-л-и | 7.5 | л |
| кедровата | кедров-а-та | 7.5 | а |
| тркачката | тркач-ка-та | 7.5 | ка |
| пантотенат | пантотен-а-т | 7.5 | а |
| наранџито | наранџ-и-то | 7.5 | и |
| епросартан | епросар-та-н | 7.5 | та |
| стивенсовиот | стивенсов-и-от | 7.5 | и |
| организирано | организир-а-но | 7.5 | а |
| евроазијците | евроазијц-и-те | 7.5 | и |
| епистазата | епистаз-а-та | 7.5 | а |
| страдачите | страдач-и-те | 7.5 | и |
| поштарината | поштарин-а-та | 7.5 | а |
| дебатирано | дебатир-а-но | 7.5 | а |

6.6 Linguistic Interpretation

Automated Insight: Macedonian shows high morphological productivity. The subword models are significantly more efficient than the word models, suggesting a rich system of affixation or compounding.

7. Summary & Recommendations

Production Recommendations

| Component | Recommended | Rationale |

|---|---|---|

| Tokenizer | 64k BPE | Best compression (4.78x) |

| N-gram | 2-gram (subword) | Lowest perplexity (310) |

| Markov | Context-4 (word) | Highest predictability (94.0%) |

| Embeddings | 128d aligned | Best cross-lingual retrieval (R@1 0.3760) |

Appendix: Metrics Glossary & Interpretation Guide

This section provides definitions, intuitions, and guidance for interpreting the metrics used throughout this report.

Tokenizer Metrics

Compression Ratio

Definition: The ratio of characters to tokens (chars/token). Measures how efficiently the tokenizer represents text.

Intuition: Higher compression means fewer tokens needed to represent the same text, reducing sequence lengths for downstream models. A 3x compression means ~3 characters per token on average.

What to seek: Higher is generally better for efficiency, but extremely high compression may indicate overly aggressive merging that loses morphological information.

Average Token Length (Fertility)

Definition: Mean number of characters per token produced by the tokenizer.

Intuition: Reflects the granularity of tokenization. Longer tokens capture more context but may struggle with rare words; shorter tokens are more flexible but increase sequence length.

What to seek: Balance between 2-5 characters for most languages. Arabic/morphologically-rich languages may benefit from slightly longer tokens.

Unknown Token Rate (OOV Rate)

Definition: Percentage of tokens that map to the unknown/UNK token, indicating words the tokenizer cannot represent.

Intuition: Lower OOV means better vocabulary coverage. High OOV indicates the tokenizer encounters many unseen character sequences.

What to seek: Below 1% is excellent; below 5% is acceptable. BPE tokenizers typically achieve very low OOV due to subword fallback.
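The tokenizer metrics above reduce to simple ratios. The following sketch computes the compression ratio (which, by the definitions above, is also the average token length) and the UNK rate for one tokenized text. The function name `tokenizer_metrics` and the `<unk>` token string are assumptions, and the report does not state whether it counts characters or bytes; this sketch counts characters.

```python
def tokenizer_metrics(text, tokens, unk_token="<unk>"):
    """Return (compression ratio in chars per token, UNK rate in percent)."""
    if not tokens:
        raise ValueError("tokens must be non-empty")
    compression = len(text) / len(tokens)
    unk_rate = 100.0 * sum(t == unk_token for t in tokens) / len(tokens)
    return compression, unk_rate

text = "година во архитектурата"
tokens = ["▁година", "▁во", "▁архитектурата"]
compression, unk_rate = tokenizer_metrics(text, tokens)
print(f"{compression:.3f}x, UNK {unk_rate:.4f}%")
```

Because this example has one token per word, its ratio is higher than the corpus-level figures in Section 1, which average over many rarer words split into several pieces.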

N-gram Model Metrics

Perplexity

Definition: Measures how "surprised" the model is by test data. Mathematically: 2^(cross-entropy). Lower values indicate better prediction.

Intuition: If perplexity is 100, the model is as uncertain as if choosing uniformly among 100 options at each step. A perplexity of 10 means effectively choosing among 10 equally likely options.

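What to seek: Lower is better. Next-token perplexity usually falls as n grows because the model sees more context, although the values reported in Section 2 rise with n-gram size (for example, subword 2-gram 310 vs. subword 5-gram 61,546). Values vary widely by language and corpus size.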

Entropy

Definition: Average information content (in bits) needed to encode the next token given the context. Related to perplexity: perplexity = 2^entropy.

Intuition: High entropy means high uncertainty/randomness; low entropy means predictable patterns. Natural language typically has entropy between 1-4 bits per character.

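What to seek: Lower entropy indicates more predictable text patterns. Entropy is generally expected to decrease as n-gram size increases, although in Section 2 it rises from 8.28 (subword 2-gram) to 15.91 (subword 5-gram).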
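The relation perplexity = 2^entropy can be checked directly. The sketch below computes Shannon entropy from a table of counts and converts it to perplexity. Whether the report uses exactly this estimator is not stated, so treat `entropy_bits` and `perplexity` as hypothetical helpers.

```python
import math
from collections import Counter

def entropy_bits(counts):
    """Shannon entropy (bits) of the empirical distribution given by `counts`."""
    total = sum(counts.values())
    return -sum((c / total) * math.log2(c / total) for c in counts.values())

def perplexity(entropy):
    """Perplexity as 2 raised to the entropy in bits."""
    return 2.0 ** entropy

# Consistency check against the 2-gram subword row (entropy 8.28, perplexity 310);
# the result differs slightly because the table entropy is rounded.
print(perplexity(8.28))

counts = Counter({"а_": 2, "на": 1, "о_": 1})
print(entropy_bits(counts), perplexity(entropy_bits(counts)))
```

The same conversion applies to the Markov table: an average entropy of 0.9313 bits corresponds to a perplexity of about 1.907.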

Coverage (Top-K)

Definition: Percentage of corpus occurrences explained by the top K most frequent n-grams.

Intuition: High coverage with few patterns indicates repetitive/formulaic text; low coverage suggests diverse vocabulary usage.

What to seek: Depends on use case. For language modeling, moderate coverage (40-60% with top-1000) is typical for natural text.
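Top-K coverage is a cumulative share of occurrences. A minimal sketch, assuming the n-gram or word counts are already available as a `Counter` (the function name `top_k_coverage` and the toy counts are illustrative only):

```python
from collections import Counter

def top_k_coverage(counts, k):
    """Percent of all occurrences covered by the k most frequent items."""
    total = sum(counts.values())
    if total == 0:
        return 0.0
    covered = sum(c for _, c in counts.most_common(k))
    return 100.0 * covered / total

counts = Counter({"на": 50, "во": 30, "и": 15, "калеуче": 5})
print(top_k_coverage(counts, 2))
```

The same function applies to the vocabulary coverage figures, with words in place of n-grams.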

Markov Chain Metrics

Average Entropy

Definition: Mean entropy across all contexts, measuring average uncertainty in next-word prediction.

Intuition: Lower entropy means the model is more confident about what comes next. Context-1 has high entropy (many possible next words); Context-4 has low entropy (few likely continuations).

What to seek: Decreasing entropy with larger context sizes. Very low entropy (<0.1) indicates highly deterministic transitions.

Branching Factor

Definition: Average number of unique next tokens observed for each context.

Intuition: High branching = many possible continuations (flexible but uncertain); low branching = few options (predictable but potentially repetitive).

What to seek: Branching factor should decrease with context size. Values near 1.0 indicate nearly deterministic chains.

Predictability

Definition: Derived metric: (1 - normalized_entropy) × 100%. Indicates how deterministic the model's predictions are.

Intuition: 100% predictability means the next word is always certain; 0% means completely random. Real text falls between these extremes.

What to seek: Higher predictability for text generation quality, but too high (>98%) may produce repetitive output.
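The three Markov metrics can be computed from successor counts. The sketch below is one possible reading of the definitions, not the report's implementation: it averages entropy and branching factor over contexts without weighting by context frequency, and it leaves the entropy normalization to the caller. The reported values are consistent with using the average entropy directly, since (1 - 0.0598) × 100% is approximately 94.0%. The function names are hypothetical.

```python
import math
from collections import Counter, defaultdict

def markov_metrics(tokens, k):
    """Return (average entropy in bits, branching factor) over all k-token contexts."""
    successors = defaultdict(Counter)
    for i in range(len(tokens) - k):
        successors[tuple(tokens[i:i + k])][tokens[i + k]] += 1
    if not successors:
        raise ValueError("need more than k tokens")
    entropies, branches = [], []
    for nxt in successors.values():
        total = sum(nxt.values())
        entropies.append(-sum(c / total * math.log2(c / total) for c in nxt.values()))
        branches.append(len(nxt))  # number of distinct next tokens
    n = len(successors)
    return sum(entropies) / n, sum(branches) / n

def predictability(normalized_entropy):
    """(1 - normalized entropy) x 100%, following the glossary definition."""
    return (1.0 - normalized_entropy) * 100.0

tokens = "а б а в а б а в".split()
avg_h, branching = markov_metrics(tokens, 1)
print(avg_h, branching, predictability(0.0598))
```

In the toy sequence only the context `а` has two possible successors, so the unweighted average entropy is one third of a bit.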

Vocabulary & Zipf's Law Metrics

Zipf's Coefficient

Definition: The slope of the log-log plot of word frequency vs. rank. Zipf's law predicts this should be approximately -1.

Intuition: A coefficient near -1 indicates the corpus follows natural language patterns where a few words are very common and most words are rare.

What to seek: Values between -0.8 and -1.2 indicate healthy natural language distribution. Deviations may suggest domain-specific or artificial text.

R² (Coefficient of Determination)

Definition: Measures how well the linear fit explains the frequency-rank relationship. Ranges from 0 to 1.

Intuition: R² near 1.0 means the data closely follows Zipf's law; lower values indicate deviation from expected word frequency patterns.

What to seek: R² > 0.95 is excellent; > 0.99 indicates near-perfect Zipf adherence typical of large natural corpora.
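A Zipf fit is an ordinary least-squares line through the log-log rank-frequency points. The sketch below returns the slope and R² for a list of word frequencies. It assumes base-10 logarithms and an unweighted fit over all ranks, and `zipf_fit` is a hypothetical name. The report lists the coefficient as a positive number (0.9604), which presumably is the magnitude of this slope.

```python
import math

def zipf_fit(frequencies):
    """Least-squares fit of log10(freq) against log10(rank); returns (slope, r_squared)."""
    freqs = sorted(frequencies, reverse=True)
    xs = [math.log10(rank) for rank in range(1, len(freqs) + 1)]
    ys = [math.log10(f) for f in freqs]
    mean_x, mean_y = sum(xs) / len(xs), sum(ys) / len(ys)
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    syy = sum((y - mean_y) ** 2 for y in ys)
    slope = sxy / sxx
    r_squared = (sxy * sxy) / (sxx * syy)
    return slope, r_squared

# Synthetic frequencies that follow freq = 1000 / rank exactly.
slope, r2 = zipf_fit([1000 / r for r in range(1, 51)])
print(slope, r2)
```

For the synthetic input the slope is -1 and R² is 1 up to floating-point error. Real corpora deviate at both ends of the rank range.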

Vocabulary Coverage

Definition: Cumulative percentage of corpus tokens accounted for by the top N words.

Intuition: Shows how concentrated word usage is. If top-100 words cover 50% of text, the corpus relies heavily on common words.

What to seek: Top-100 covering 30-50% is typical. Higher coverage indicates more repetitive text; lower suggests richer vocabulary.

Word Embedding Metrics

Isotropy

Definition: Measures how uniformly distributed vectors are in the embedding space. Computed as the ratio of minimum to maximum singular values.

Intuition: High isotropy (near 1.0) means vectors spread evenly in all directions; low isotropy means vectors cluster in certain directions, reducing expressiveness.

What to seek: Higher isotropy generally indicates better-quality embeddings. Values > 0.1 are reasonable; > 0.3 is good. Lower-dimensional embeddings tend to have higher isotropy.

Average Norm

Definition: Mean magnitude (L2 norm) of word vectors in the embedding space.

Intuition: Indicates the typical "length" of vectors. Consistent norms suggest stable training; high variance may indicate some words are undertrained.

What to seek: Relatively consistent norms across models. The absolute value matters less than consistency (low std deviation).

Cosine Similarity

Definition: Measures angular similarity between vectors, ranging from -1 (opposite) to 1 (identical direction).

Intuition: Words with similar meanings should have high cosine similarity. This is the standard metric for semantic relatedness in embeddings.

What to seek: Semantically related words should score > 0.5; unrelated words should be near 0. Synonyms often score > 0.7.
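Cosine similarity is the dot product of two vectors divided by the product of their L2 norms. A dependency-free sketch, using short toy vectors in place of the report's 32, 64 or 128-dimensional embeddings:

```python
import math

def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length vectors."""
    if len(u) != len(v):
        raise ValueError("vectors must have the same length")
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        raise ValueError("cosine similarity is undefined for zero vectors")
    return dot / (norm_u * norm_v)

print(cosine_similarity([1.0, 0.0], [0.0, 1.0]))  # orthogonal vectors
print(cosine_similarity([1.0, 2.0], [2.0, 4.0]))  # same direction
```

Because the measure ignores vector length, differences in embedding norm do not affect it.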

t-SNE Visualization

Definition: t-Distributed Stochastic Neighbor Embedding - a dimensionality reduction technique that preserves local structure for visualization.

Intuition: Clusters in t-SNE plots indicate groups of semantically related words. Spread indicates vocabulary diversity; tight clusters suggest semantic coherence.

What to seek: Meaningful clusters (e.g., numbers together, verbs together). Avoid over-interpreting distances - t-SNE preserves local, not global, structure.

General Interpretation Guidelines

- Compare within model families: Metrics are most meaningful when comparing models of the same type (e.g., 8k vs 64k tokenizer).

- Consider trade-offs: Better performance on one metric often comes at the cost of another (e.g., compression vs. OOV rate).

- Context matters: Optimal values depend on downstream tasks. Text generation may prioritize different metrics than classification.

- Corpus influence: All metrics are influenced by corpus characteristics. Wikipedia text differs from social media or literature.

- Language-specific patterns: Morphologically rich languages (like Arabic) may show different optimal ranges than analytic languages.

Visualizations Index

| Visualization | Description |

|---|---|

| Tokenizer Compression | Compression ratios by vocabulary size |

| Tokenizer Fertility | Average token length by vocabulary |

| Tokenizer OOV | Unknown token rates |

| Tokenizer Total Tokens | Total tokens by vocabulary |

| N-gram Perplexity | Perplexity by n-gram size |

| N-gram Entropy | Entropy by n-gram size |

| N-gram Coverage | Top pattern coverage |

| N-gram Unique | Unique n-gram counts |

| Markov Entropy | Entropy by context size |

| Markov Branching | Branching factor by context |

| Markov Contexts | Unique context counts |

| Zipf's Law | Frequency-rank distribution with fit |

| Vocab Frequency | Word frequency distribution |

| Top 20 Words | Most frequent words |

| Vocab Coverage | Cumulative coverage curve |

| Embedding Isotropy | Vector space uniformity |

| Embedding Norms | Vector magnitude distribution |

| Embedding Similarity | Word similarity heatmap |

| Nearest Neighbors | Similar words for key terms |

| t-SNE Words | 2D word embedding visualization |

| t-SNE Sentences | 2D sentence embedding visualization |

| Position Encoding | Encoding method comparison |

| Model Sizes | Storage requirements |

| Performance Dashboard | Comprehensive performance overview |

About This Project

Data Source

Models trained on wikipedia-monthly - a monthly snapshot of Wikipedia articles across 300+ languages.

Project

A project by Wikilangs - Open-source NLP models for every Wikipedia language.

Maintainer

Citation

If you use these models in your research, please cite:

@misc{wikilangs2025,
  author = {Kamali, Omar},
  title = {Wikilangs: Open NLP Models for Wikipedia Languages},
  year = {2025},
  doi = {10.5281/zenodo.18073153},
  publisher = {Zenodo},
  url = {https://huggingface.co/wikilangs},
  institution = {Omneity Labs}
}

License

MIT License - Free for academic and commercial use.

Links

- 🌐 Website: wikilangs.org

- 🤗 Models: huggingface.co/wikilangs

- 📊 Data: wikipedia-monthly

- 👤 Author: Omar Kamali

- 🤝 Sponsor: Featherless AI

Generated by Wikilangs Models Pipeline

Report Date: 2026-01-10 18:37:02