Sakizaya - Wikilangs Models

Comprehensive Research Report & Full Ablation Study

This repository contains NLP models trained and evaluated by Wikilangs, specifically on Sakizaya Wikipedia data. We analyze tokenizers, n-gram models, Markov chains, vocabulary statistics, and word embeddings.

📋 Repository Contents

Models & Assets

- Tokenizers (8k, 16k, 32k, 64k)

- N-gram models (2, 3, 4, 5-gram)

- Markov chains (context of 1, 2, 3, 4 and 5)

- Subword N-gram and Markov chains

- Embeddings in various sizes and dimensions (aligned and unaligned)

- Language Vocabulary

- Language Statistics

Analysis and Evaluation

- 1. Tokenizer Evaluation

- 2. N-gram Model Evaluation

- 3. Markov Chain Evaluation

- 4. Vocabulary Analysis

- 5. Word Embeddings Evaluation

- 6. Morphological Analysis (Experimental)

- 7. Summary & Recommendations

- Metrics Glossary

- Visualizations Index

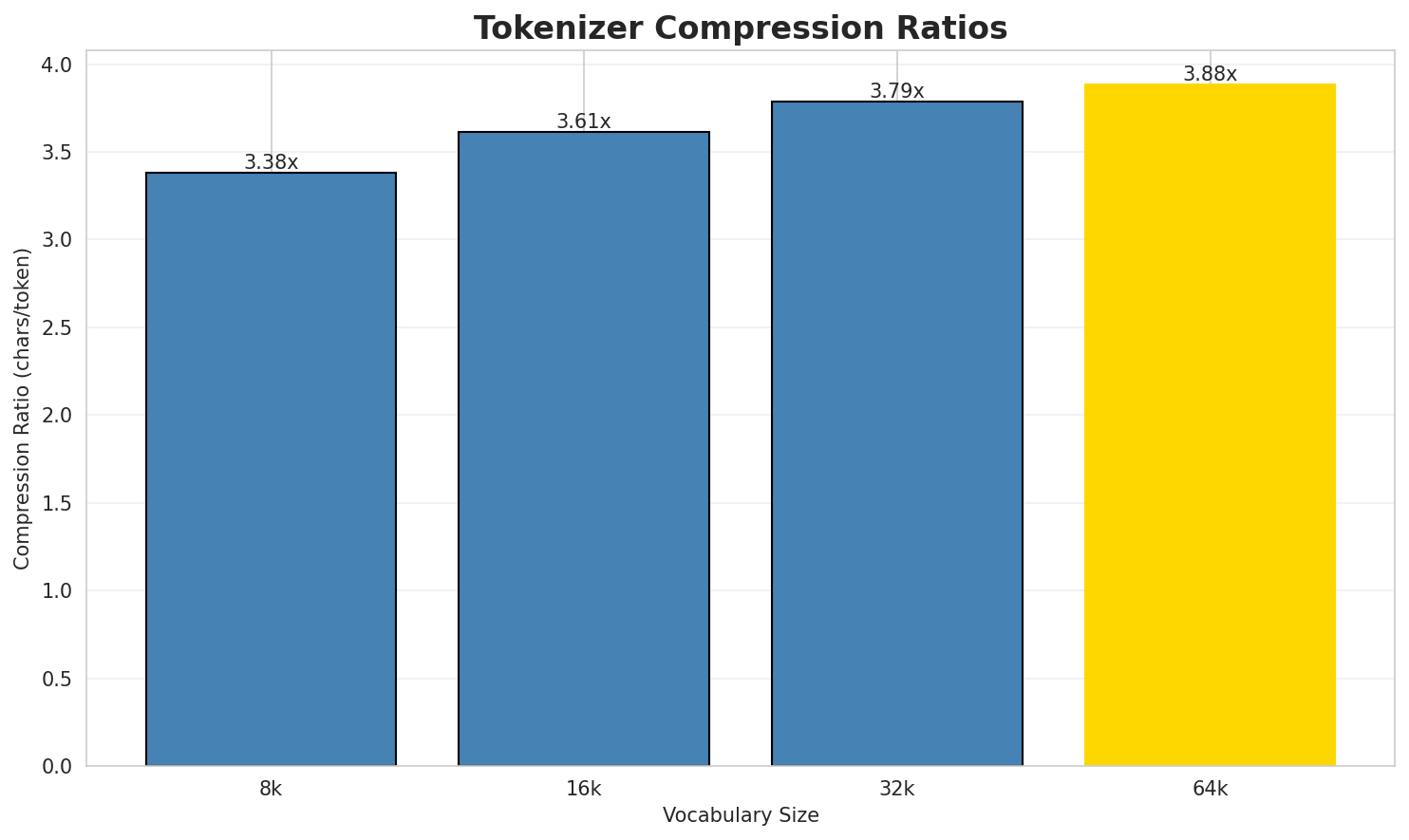

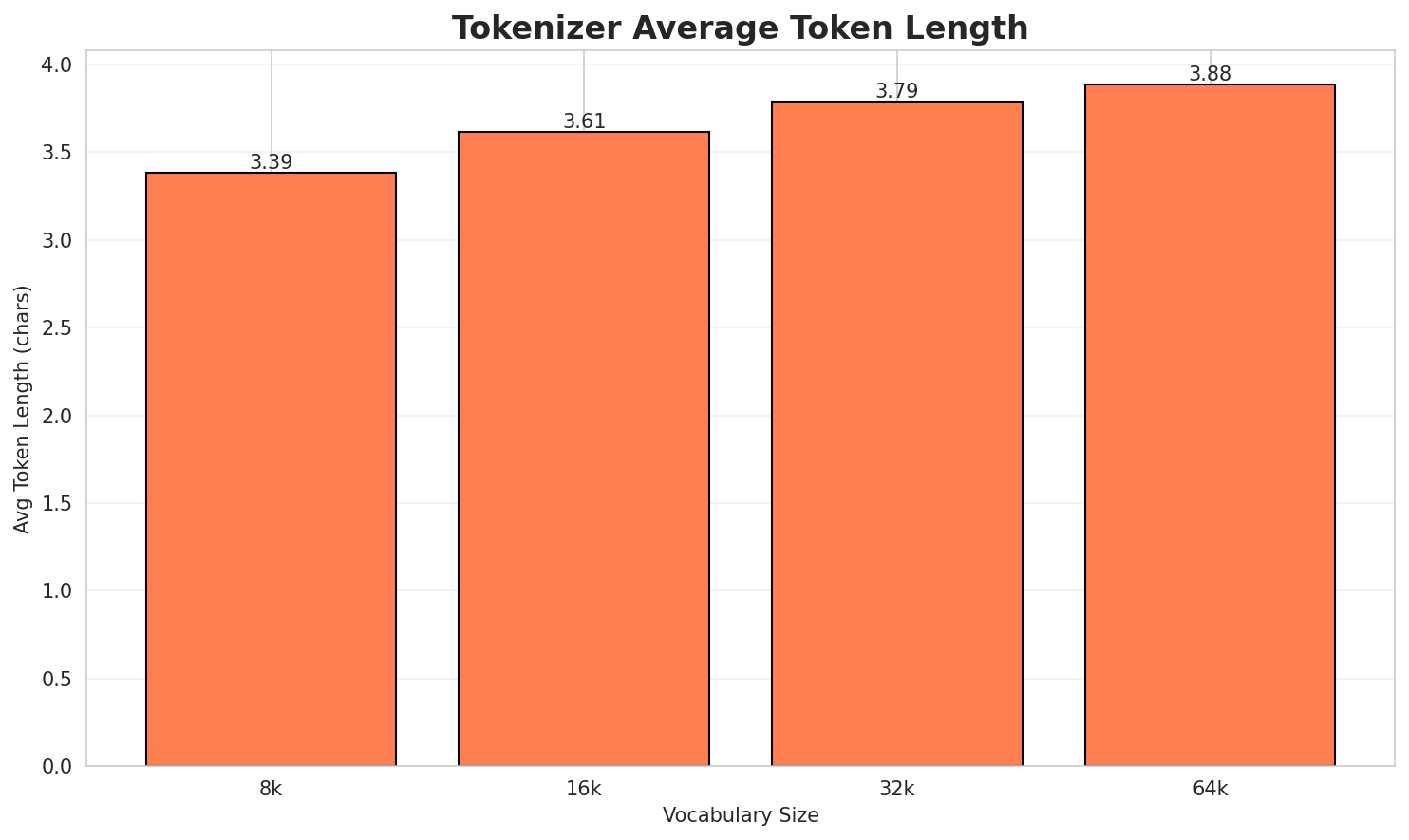

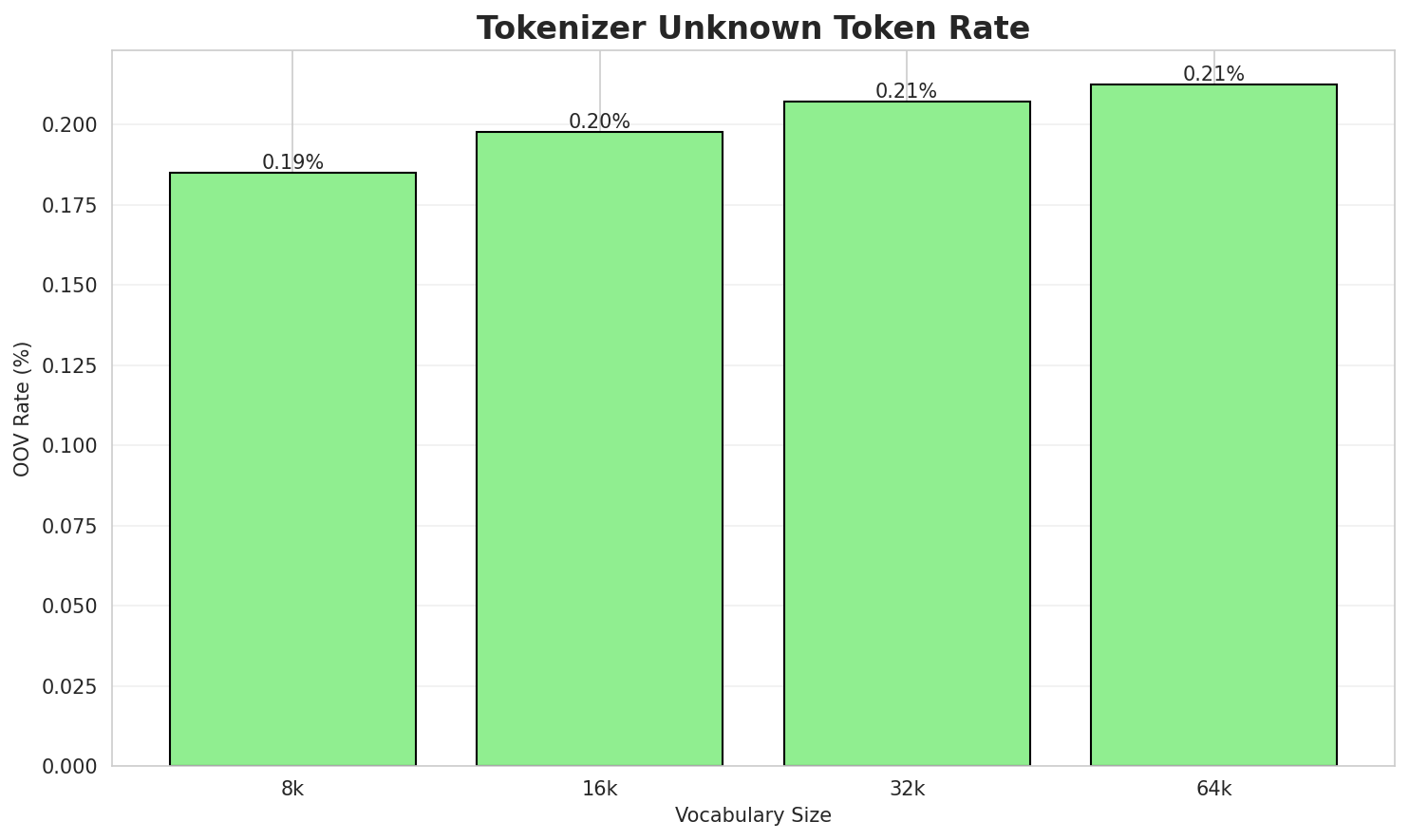

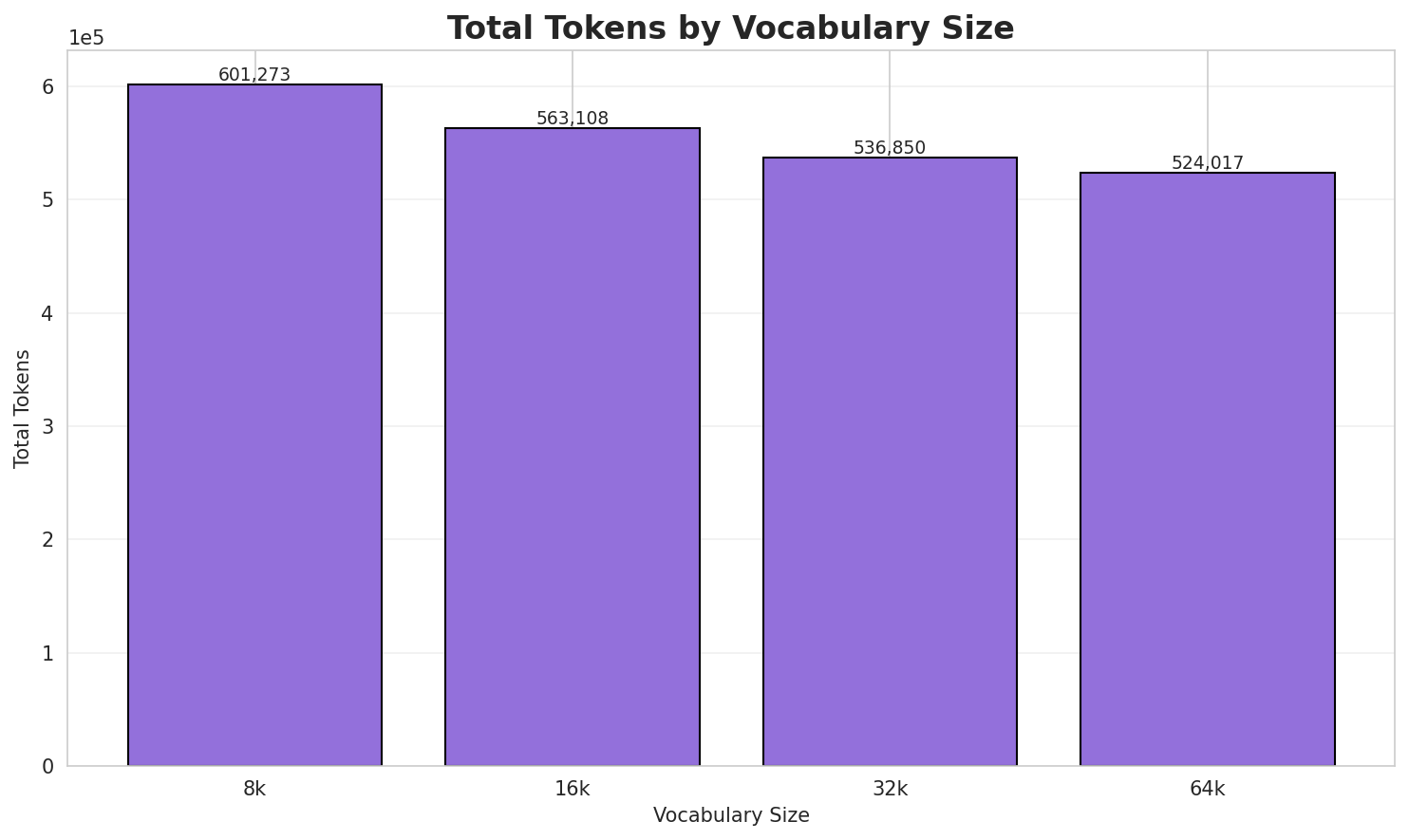

1. Tokenizer Evaluation

Results

| Vocab Size | Compression | Avg Token Len | UNK Rate | Total Tokens |

|---|---|---|---|---|

| 8k | 3.383x | 3.39 | 0.1851% | 601,273 |

| 16k | 3.613x | 3.61 | 0.1977% | 563,108 |

| 32k | 3.789x | 3.79 | 0.2073% | 536,850 |

| 64k | 3.882x 🏆 | 3.88 | 0.2124% | 524,017 |

Tokenization Examples

Below are sample sentences tokenized with each vocabulary size:

Sample 1: (kamu nu hulam:照顧) diput tu babalaki. 照顧老人。 malalitin tu ihekalay atu zumaay a n...

| Vocab | Tokens | Count |

|---|---|---|

| 8k | ▁( kamu ▁nu ▁hulam : 照 顧 ) ▁d iput ... (+16 more) | 26 |

| 16k | ▁( kamu ▁nu ▁hulam : 照顧 ) ▁d iput ▁tu ... (+14 more) | 24 |

| 32k | ▁( kamu ▁nu ▁hulam : 照顧 ) ▁diput ▁tu ▁babalaki ... (+12 more) | 22 |

| 64k | ▁( kamu ▁nu ▁hulam : 照顧 ) ▁diput ▁tu ▁babalaki ... (+11 more) | 21 |

Sample 2: (kasatubangan:u kamu nu Hulam:被殖民、被奴隸 pasatubangan:讓他做奴隸)

| Vocab | Tokens | Count |

|---|---|---|

| 8k | ▁( kas atu bangan : u ▁kamu ▁nu ▁hulam : ... (+17 more) | 27 |

| 16k | ▁( kas atu bangan : u ▁kamu ▁nu ▁hulam : ... (+17 more) | 27 |

| 32k | ▁( kas atu bangan : u ▁kamu ▁nu ▁hulam : ... (+16 more) | 26 |

| 64k | ▁( kas atubangan : u ▁kamu ▁nu ▁hulam : 被 ... (+9 more) | 19 |

Sample 3: kamu nu hulam:掉下 tinaku a kamu mihetik 掉下 mihetik kaku tu kalisiw i ginko. 我去銀行提...

| Vocab | Tokens | Count |

|---|---|---|

| 8k | ▁kamu ▁nu ▁hulam : 掉 下 ▁tinaku ▁a ▁kamu ▁mih ... (+29 more) | 39 |

| 16k | ▁kamu ▁nu ▁hulam : 掉 下 ▁tinaku ▁a ▁kamu ▁mih ... (+26 more) | 36 |

| 32k | ▁kamu ▁nu ▁hulam : 掉 下 ▁tinaku ▁a ▁kamu ▁mih ... (+26 more) | 36 |

| 64k | ▁kamu ▁nu ▁hulam : 掉下 ▁tinaku ▁a ▁kamu ▁mihetik ▁ ... (+21 more) | 31 |

Key Findings

- Best Compression: 64k achieves 3.882x compression

- Lowest UNK Rate: 8k with 0.1851% unknown tokens

- Trade-off: Larger vocabularies improve compression (3.383x at 8k to 3.882x at 64k) but increase model size and slightly raise the UNK rate (0.1851% to 0.2124%)

- Recommendation: 32k vocabulary provides optimal balance for production use
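
As a quick sanity check on the results table, the sketch below multiplies each compression ratio by its total token count. It assumes compression is measured as characters per token (as the glossary defines it) and that all four tokenizers were evaluated on the same text; under those assumptions the four products should agree closely. The variable and function names are illustrative only.

```python
# Rows copied from the tokenizer results table: (vocab, compression, total tokens).
rows = [
    ("8k", 3.383, 601_273),
    ("16k", 3.613, 563_108),
    ("32k", 3.789, 536_850),
    ("64k", 3.882, 524_017),
]


def implied_chars(compression: float, total_tokens: int) -> float:
    """Character count implied by compression = chars / tokens (assumed definition)."""
    return compression * total_tokens


estimates = {vocab: implied_chars(c, t) for vocab, c, t in rows}

# Relative gap between the largest and smallest estimate.
spread = (max(estimates.values()) - min(estimates.values())) / min(estimates.values())

for vocab, chars in estimates.items():
    print(f"{vocab}: ~{chars:,.0f} characters")
print(f"relative spread: {spread:.4%}")
```

The estimates all land near 2.03 million characters, which suggests the table rows are mutually consistent up to rounding of the compression column.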

2. N-gram Model Evaluation

Results

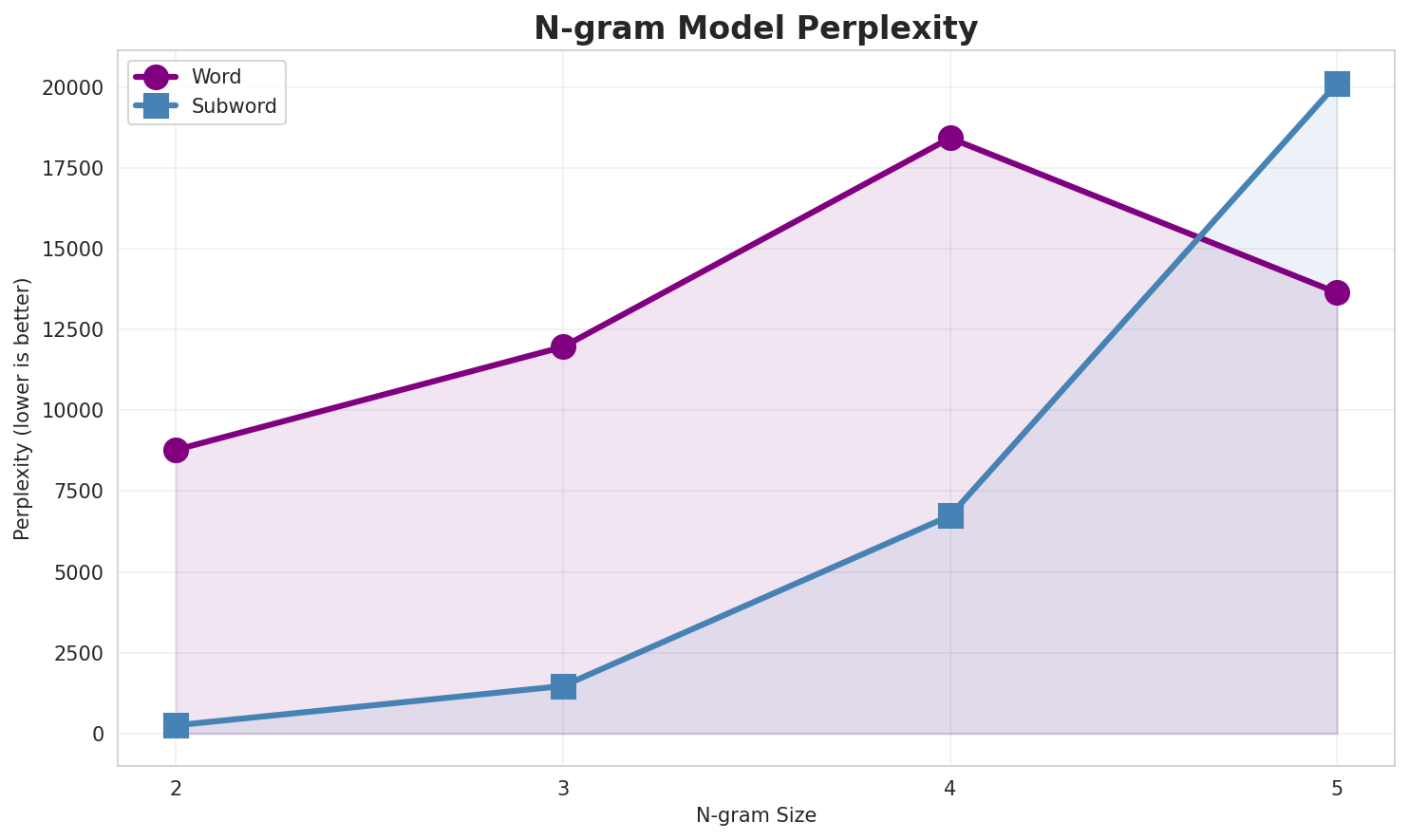

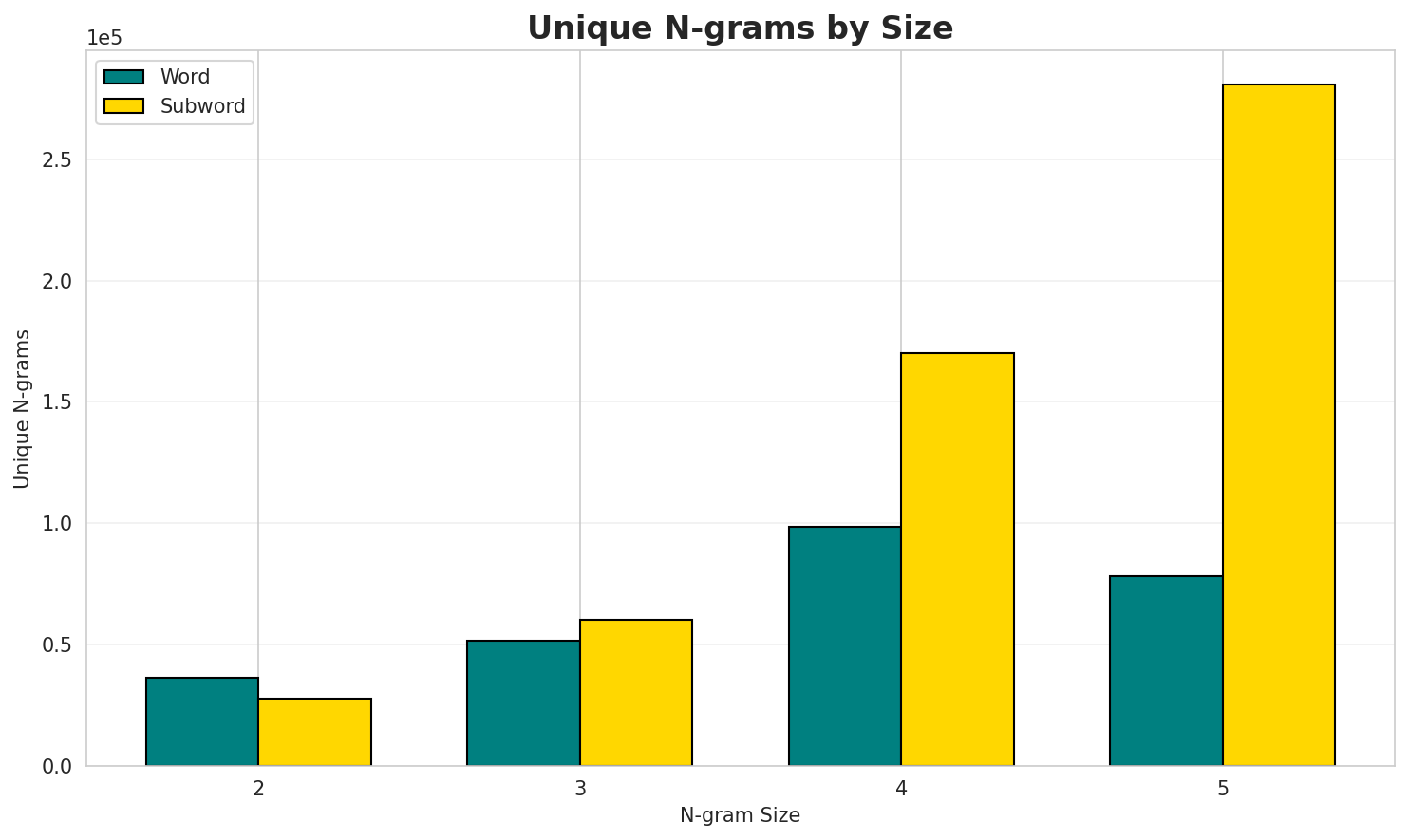

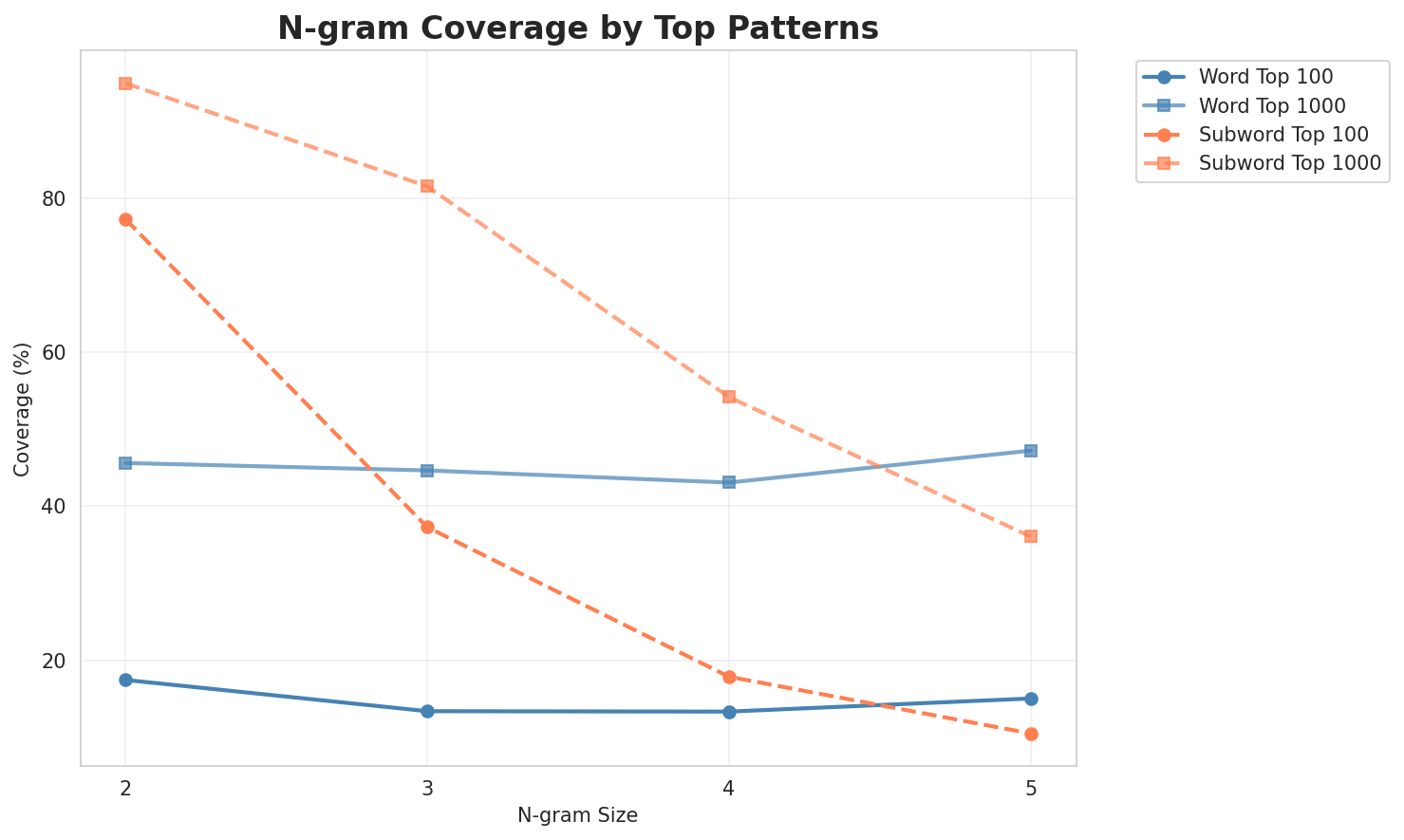

| N-gram | Variant | Perplexity | Entropy | Unique N-grams | Top-100 Coverage | Top-1000 Coverage |

|---|---|---|---|---|---|---|

| 2-gram | Word | 8,778 | 13.10 | 36,425 | 17.4% | 45.6% |

| 2-gram | Subword | 254 🏆 | 7.99 | 27,613 | 77.3% | 95.0% |

| 3-gram | Word | 11,965 | 13.55 | 51,761 | 13.4% | 44.7% |

| 3-gram | Subword | 1,471 | 10.52 | 60,255 | 37.3% | 81.6% |

| 4-gram | Word | 18,427 | 14.17 | 98,389 | 13.3% | 43.1% |

| 4-gram | Subword | 6,740 | 12.72 | 170,144 | 17.8% | 54.2% |

| 5-gram | Word | 13,641 | 13.74 | 78,197 | 15.0% | 47.2% |

| 5-gram | Subword | 20,122 | 14.30 | 280,627 | 10.5% | 36.0% |

Top 5 N-grams by Size

2-grams (Word):

| Rank | N-gram | Count |

|---|---|---|

| 1 | a tademaw | 9,781 |

| 2 | a mihcaan | 6,305 |

| 3 | sa u | 4,975 |

| 4 | idaw ku | 4,643 |

| 5 | ku tademaw | 4,369 |

3-grams (Word):

| Rank | N-gram | Count |

|---|---|---|

| 1 | kamu nu hulam | 1,808 |

| 2 | nasulitan nasakamuan atu | 1,789 |

| 3 | namakayniay a nasulitan | 1,789 |

| 4 | a nasulitan nasakamuan | 1,789 |

| 5 | nasakamuan atu natinengan | 1,757 |

4-grams (Word):

| Rank | N-gram | Count |

|---|---|---|

| 1 | a nasulitan nasakamuan atu | 1,789 |

| 2 | namakayniay a nasulitan nasakamuan | 1,778 |

| 3 | nasulitan nasakamuan atu natinengan | 1,755 |

| 4 | atu zumaay a natinengan | 1,673 |

| 5 | tu ihekalay atu zumaay | 1,466 |

5-grams (Word):

| Rank | N-gram | Count |

|---|---|---|

| 1 | namakayniay a nasulitan nasakamuan atu | 1,778 |

| 2 | a nasulitan nasakamuan atu natinengan | 1,755 |

| 3 | tu ihekalay atu zumaay a | 1,465 |

| 4 | malalitin tu ihekalay atu zumaay | 1,463 |

| 5 | ihekalay atu zumaay a natinengan | 1,462 |

2-grams (Subword):

| Rank | N-gram | Count |

|---|---|---|

| 1 | u _ | 357,853 |

| 2 | a n | 299,562 |

| 3 | a _ | 290,493 |

| 4 | a y | 241,409 |

| 5 | _ a | 215,000 |

3-grams (Subword):

| Rank | N-gram | Count |

|---|---|---|

| 1 | a y _ | 143,914 |

| 2 | _ a _ | 137,006 |

| 3 | a n _ | 126,871 |

| 4 | t u _ | 101,083 |

| 5 | _ s a | 100,121 |

4-grams (Subword):

| Rank | N-gram | Count |

|---|---|---|

| 1 | _ n u _ | 84,566 |

| 2 | _ t u _ | 65,522 |

| 3 | _ k u _ | 59,832 |

| 4 | a y _ a | 54,817 |

| 5 | y _ a _ | 47,865 |

5-grams (Subword):

| Rank | N-gram | Count |

|---|---|---|

| 1 | a y _ a _ | 47,058 |

| 2 | _ a t u _ | 22,206 |

| 3 | t a d e m | 21,403 |

| 4 | a d e m a | 21,335 |

| 5 | d e m a w | 21,328 |

Key Findings

- Best Perplexity: 2-gram (subword) with 254

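- Entropy Trend: Entropy rises with n-gram size on this corpus. Subword entropy grows from 7.99 bits (2-gram) to 14.30 bits (5-gram); word-level entropy grows from 13.10 (2-gram) to 14.17 (4-gram) before dipping to 13.74 (5-gram)

- Coverage: Top-1000 coverage ranges from 36.0% (5-gram subword) to 95.0% (2-gram subword)

- Recommendation: The 2-gram subword model has the lowest perplexity; 4-gram or 5-gram models capture longer context but score higher perplexity on this corpus, so they are worth considering only when longer context matters more than perplexity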

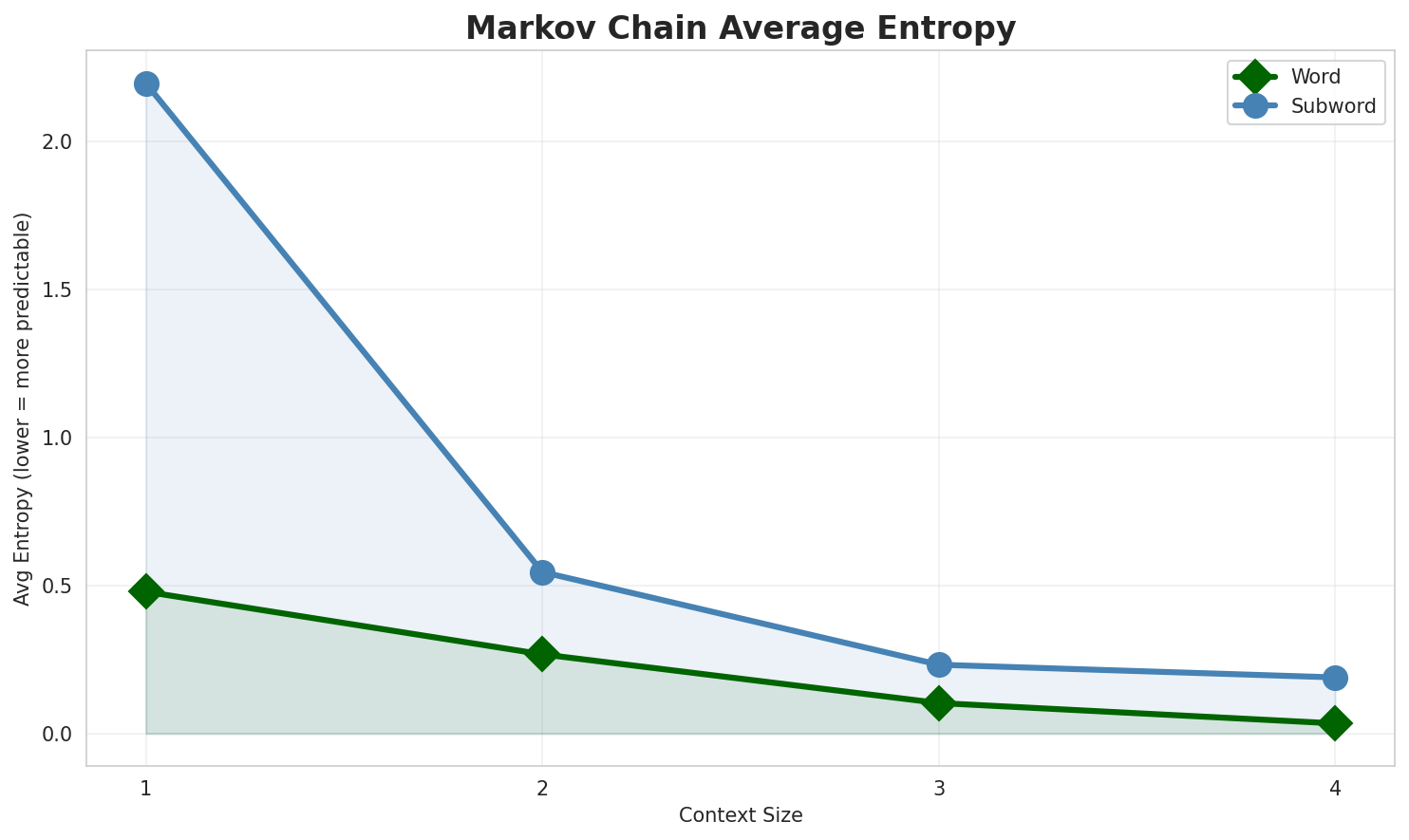

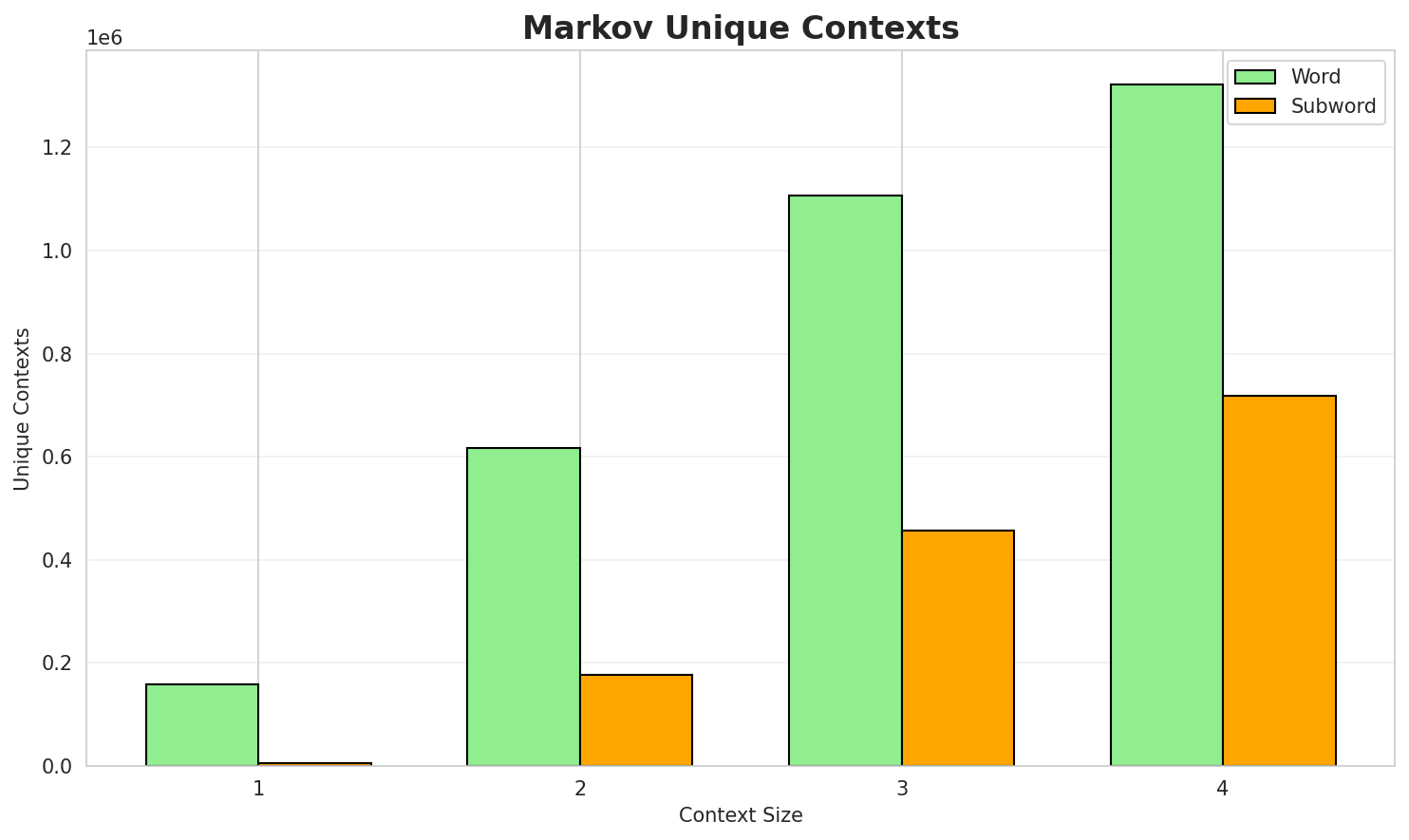

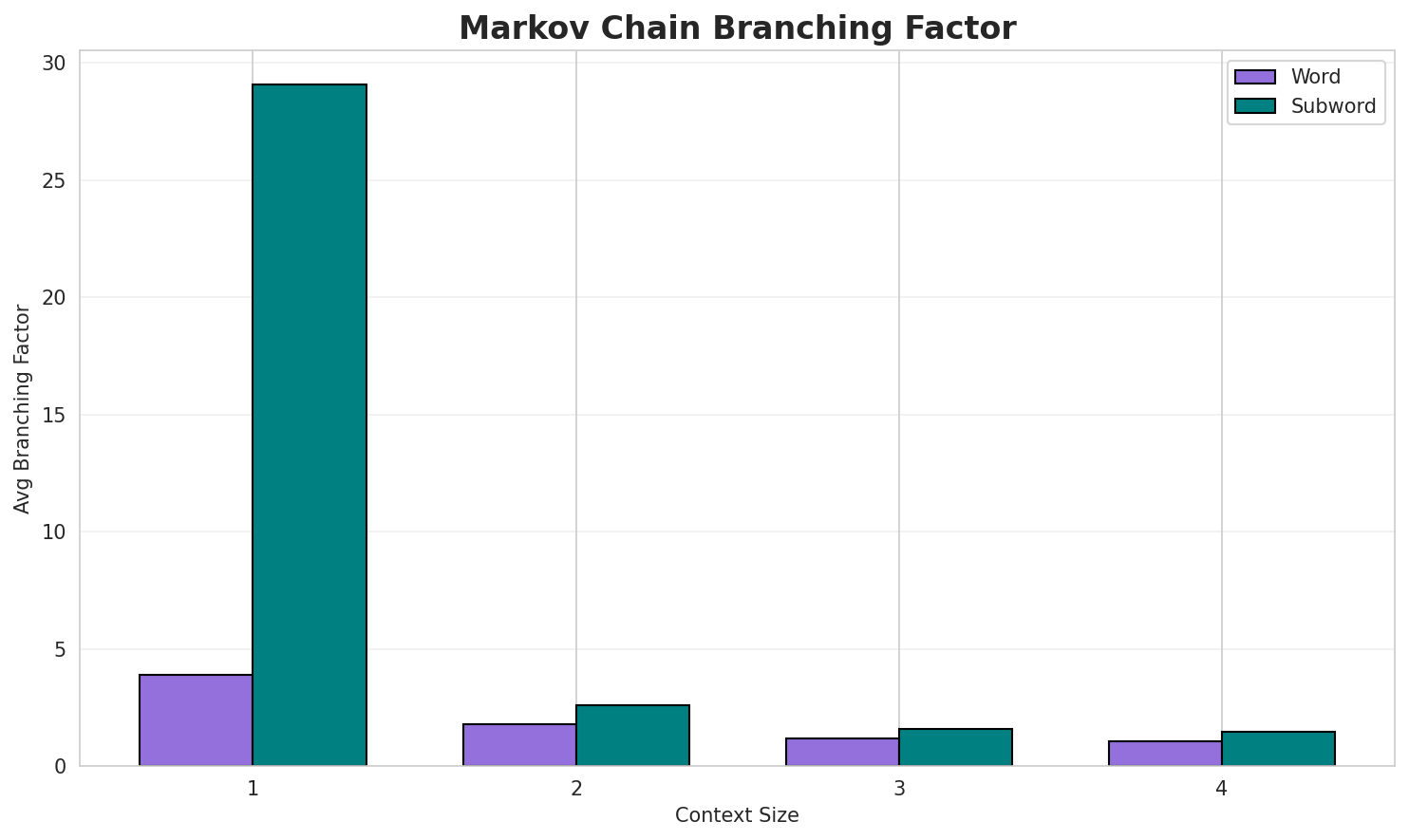

3. Markov Chain Evaluation

Results

| Context | Variant | Avg Entropy | Perplexity | Branching Factor | Unique Contexts | Predictability |

|---|---|---|---|---|---|---|

| 1 | Word | 0.4793 | 1.394 | 3.89 | 158,896 | 52.1% |

| 1 | Subword | 2.1979 | 4.588 | 29.06 | 6,068 | 0.0% |

| 2 | Word | 0.2677 | 1.204 | 1.80 | 616,064 | 73.2% |

| 2 | Subword | 0.5459 | 1.460 | 2.59 | 176,243 | 45.4% |

| 3 | Word | 0.1031 | 1.074 | 1.20 | 1,105,652 | 89.7% |

| 3 | Subword | 0.2326 | 1.175 | 1.58 | 456,451 | 76.7% |

| 4 | Word | 0.0342 🏆 | 1.024 | 1.06 | 1,321,192 | 96.6% |

| 4 | Subword | 0.1897 | 1.141 | 1.47 | 718,822 | 81.0% |

Generated Text Samples (Word-based)

Below are text samples generated from each word-based Markov chain model:

Context Size 1:

a kamu nu sakizaya 940 sejek 9 位由執政黨與反對黨分別任命之參議員組成 任期五年 每五年舉行一次普選 malawi sa cacay ademiad mapatay im...nu u miliyaway a cidekay 南島語族 saan ya a kawaw panay有專屬的工作tu 報刊會涼 u siwkay nu sakizaya 鄒族 cou uici itan 卑南 triyatriyaran 阿美 bu a sapaluma

Context Size 2:

a tademaw silecaday a lalangawan lisin kamu atu kabanaan si kalilidan tumuk saca babalaki mililid tu...a mihcaan u nananuman nikaidaw atu sapatakekal hamin i cung ku u pu se su wi alesensa u moyan putiput tina dadiw sa nasulitan ni tuku sayun nay pabalucu ay a cidekay ku

Context Size 3:

kamu nu hulam a pu ha ce a kakitidaan atu nu sakay kinkuay i paris 巴黎 kina ia nasulitan nasakamuan atu natinengan lists of national basketball association sapuyu en nba u amis ...nasulitan nasakamuan atu natinengan 參考來源 ː malaalitin tu i hekalay atu zumaay a natinengan list of c...

Context Size 4:

a nasulitan nasakamuan atu natinengan lists of national basketball association players alvan adams 阿...namakayniay a nasulitan nasakamuan atu natinengan 撒奇萊雅族語詞典 原住民族委員會線上字詞典 花蓮縣政府nasulitan nasakamuan atu natinengan 中國高等植物資料庫全庫 中國科學院微生物研究所 行政院原住民族委員會 原住民族藥用植物 花序數位典藏國家型科技計畫 應用服務分項...

Generated Text Samples (Subword-based)

Below are text samples generated from each subword-based Markov chain model:

Context Size 1:

abu_mit_in._iw-b_uzay_ng”,isasanude_cihcatu_a_ay

Context Size 2:

u_macay_a_nida_pianaydaw-mici_paana_casa_luayinipah

Context Size 3:

ay_izaw_nan_藝術家mis_a_nidaw_masa_micaan_cuduc_tu_pyria_

Context Size 4:

_nu_siyhu_ku_kapah__tu_takuwanikeliday_ku_akuti’_nu_baluc

Key Findings

- Best Predictability: Context-4 (word) with 96.6% predictability

- Branching Factor: Decreases with context size (more deterministic)

- Memory Trade-off: Larger contexts require more storage (at context size 4: 1,321,192 unique word contexts and 718,822 unique subword contexts)

- Recommendation: Context-3 or Context-4 for text generation

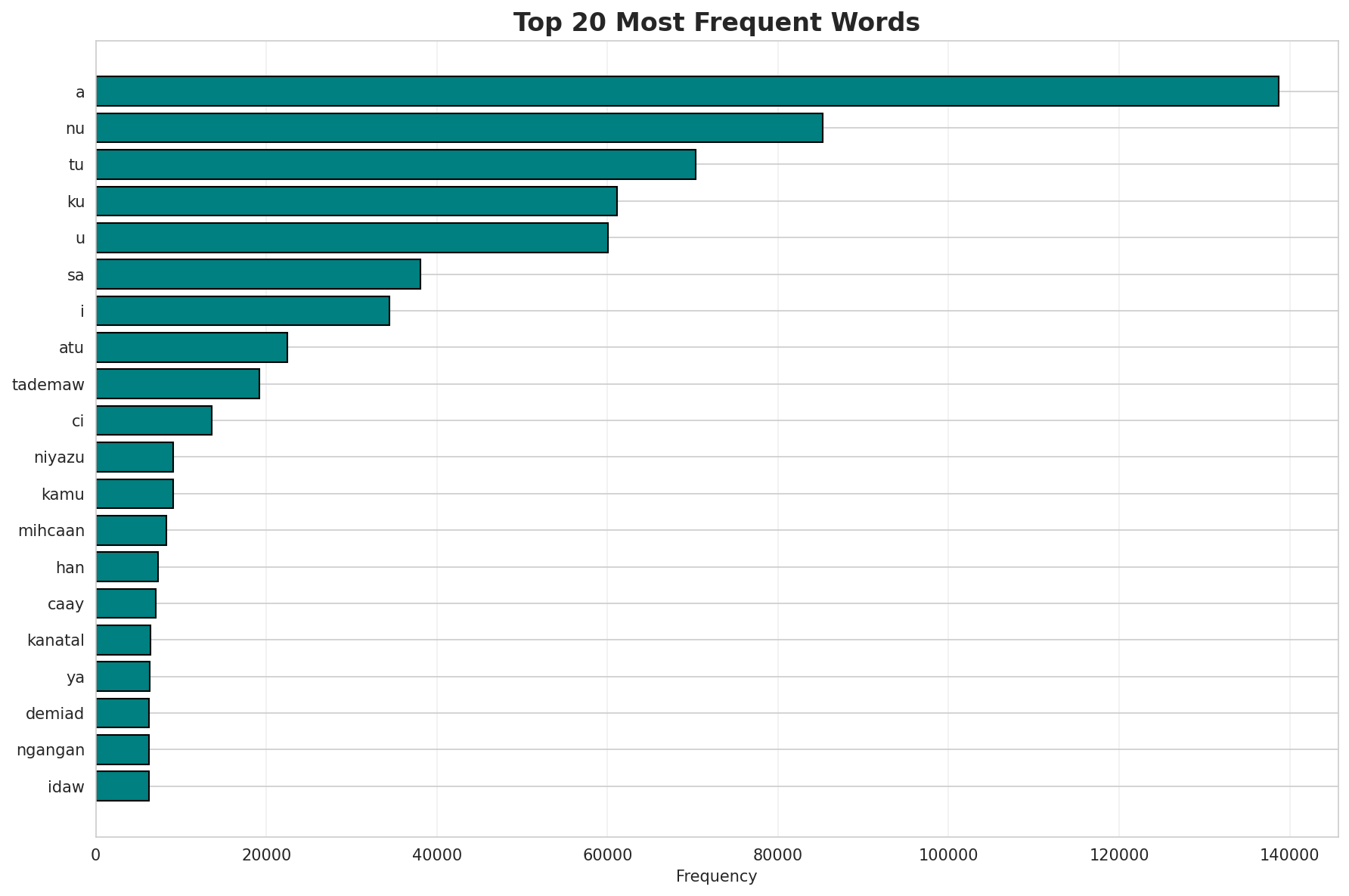

4. Vocabulary Analysis

Statistics

| Metric | Value |

|---|---|

| Vocabulary Size | 51,046 |

| Total Tokens | 1,702,988 |

| Mean Frequency | 33.36 |

| Median Frequency | 3 |

| Frequency Std Dev | 928.70 |

Most Common Words

| Rank | Word | Frequency |

|---|---|---|

| 1 | a | 138,739 |

| 2 | nu | 85,232 |

| 3 | tu | 70,354 |

| 4 | ku | 61,136 |

| 5 | u | 60,011 |

| 6 | sa | 38,061 |

| 7 | i | 34,413 |

| 8 | atu | 22,437 |

| 9 | tademaw | 19,177 |

| 10 | ci | 13,592 |

Least Common Words (from vocabulary)

| Rank | Word | Frequency |

|---|---|---|

| 1 | lengat | 2 |

| 2 | 屋頂的裂縫 | 2 |

| 3 | pulukelin | 2 |

| 4 | kulisimas | 2 |

| 5 | pingki | 2 |

| 6 | matulakay | 2 |

| 7 | kalimicu | 2 |

| 8 | 的未來 | 2 |

| 9 | pisasapi | 2 |

| 10 | sadihkuay | 2 |

Zipf's Law Analysis

| Metric | Value |

|---|---|

| Zipf Coefficient | 1.1985 |

| R² (Goodness of Fit) | 0.993933 |

| Adherence Quality | excellent |

Coverage Analysis

| Top N Words | Coverage |

|---|---|

| Top 100 | 49.3% |

| Top 1,000 | 75.3% |

| Top 5,000 | 88.1% |

| Top 10,000 | 92.1% |

Key Findings

- Zipf Compliance: R²=0.9939 indicates excellent adherence to Zipf's law

- High Frequency Dominance: Top 100 words cover 49.3% of corpus

- Long Tail: 41,046 words needed for remaining 7.9% coverage
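
The figures in the findings above follow from the statistics and coverage tables by simple arithmetic. The sketch below reproduces them; all inputs are copied from the tables, and the derived average frequency of a tail word is an illustrative extra that does not appear in the report.

```python
# Values copied from the vocabulary statistics and coverage tables.
vocab_size = 51_046
total_tokens = 1_702_988
coverage_top_10k = 0.921  # share of tokens covered by the 10,000 most frequent words

# Mean frequency is total tokens divided by distinct words.
mean_frequency = total_tokens / vocab_size

# The long tail is everything beyond the top 10,000 words.
tail_types = vocab_size - 10_000
tail_coverage = 1.0 - coverage_top_10k

# Illustrative extra (not in the report): average frequency of a tail word.
tail_mean_frequency = tail_coverage * total_tokens / tail_types

print(f"mean frequency: {mean_frequency:.2f}")
print(f"tail: {tail_types:,} words cover {tail_coverage:.1%} of tokens")
print(f"average tail-word frequency: {tail_mean_frequency:.2f}")
```

The gap between the mean frequency (about 33) and the median (3) reflects the heavy skew that Zipf's law predicts.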

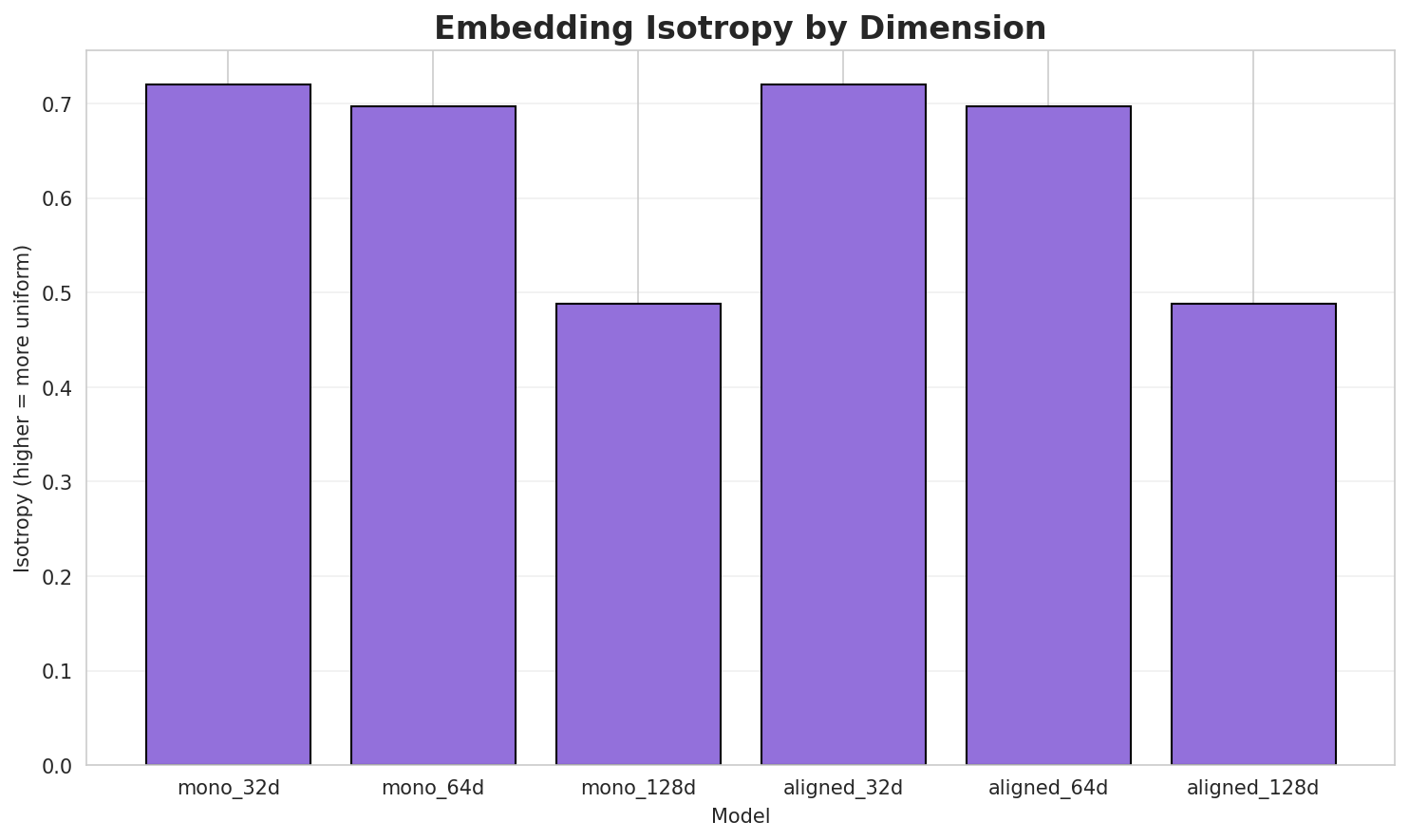

5. Word Embeddings Evaluation

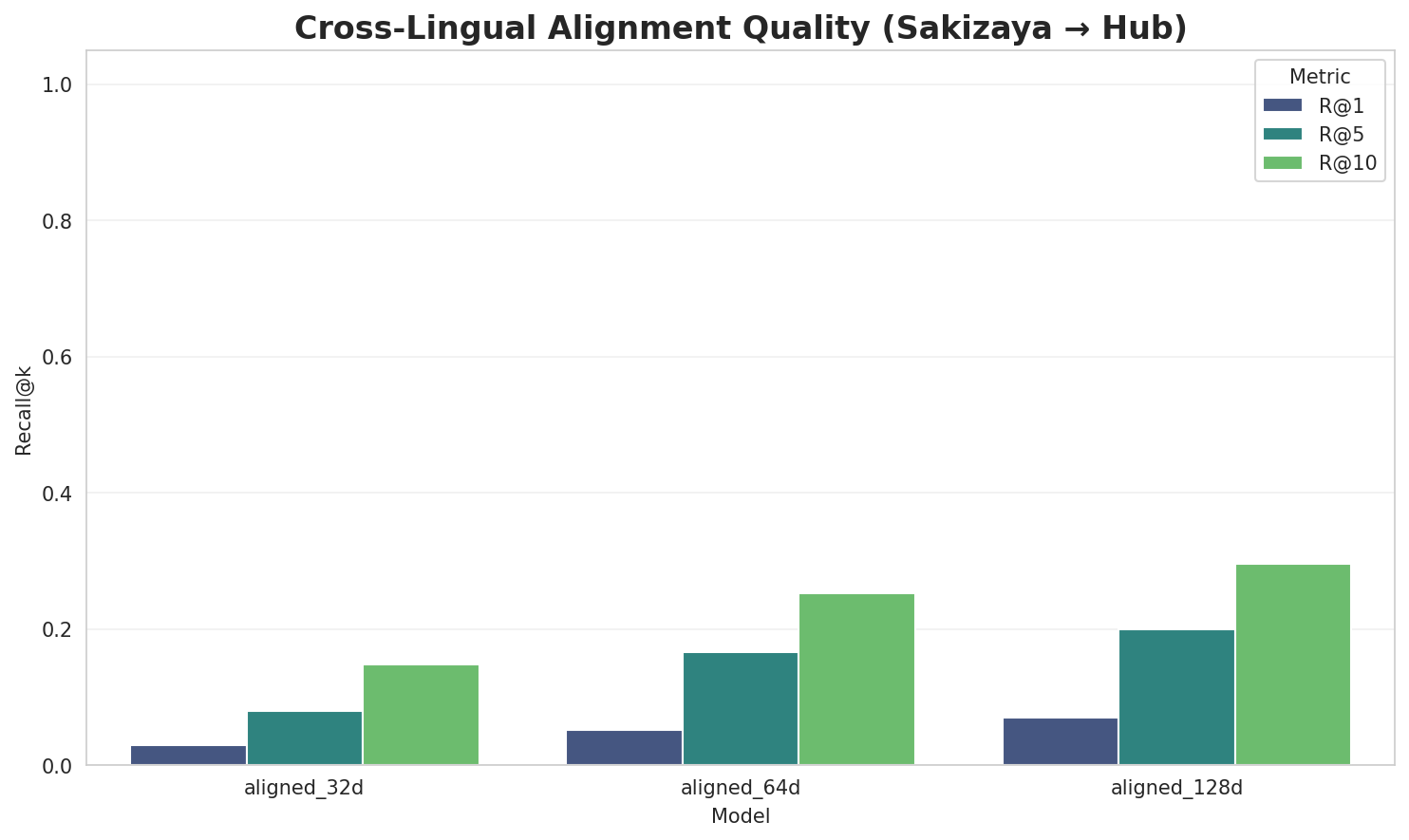

5.1 Cross-Lingual Alignment

5.2 Model Comparison

| Model | Dimension | Isotropy | Semantic Density | Alignment R@1 | Alignment R@10 |

|---|---|---|---|---|---|

| mono_32d | 32 | 0.7206 | 0.3585 | N/A | N/A |

| mono_64d | 64 | 0.6971 | 0.2873 | N/A | N/A |

| mono_128d | 128 | 0.4883 | 0.2402 | N/A | N/A |

| aligned_32d | 32 | 0.7206 🏆 | 0.3548 | 0.0300 | 0.1480 |

| aligned_64d | 64 | 0.6971 | 0.2750 | 0.0520 | 0.2520 |

| aligned_128d | 128 | 0.4883 | 0.2443 | 0.0700 | 0.2960 |

Key Findings

- Best Isotropy: The 32d models (mono_32d and aligned_32d tie at 0.7206), indicating a more uniform distribution

- Semantic Density: Average pairwise similarity of 0.2934. Lower values indicate better semantic separation.

- Alignment Quality: Aligned models achieve up to 7.0% R@1 in cross-lingual retrieval.

- Recommendation: 128d aligned for best cross-lingual performance
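
The report does not spell out how R@1 and R@10 are computed. The sketch below shows the conventional definition of recall@k for cross-lingual retrieval, assuming each source vector has exactly one correct target and candidates are ranked by cosine similarity. The function names and toy vectors are hypothetical and are not taken from the Wikilangs pipeline.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length, non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def recall_at_k(source_vecs, target_vecs, gold, k):
    """Fraction of source items whose gold target is among the k nearest targets.

    gold[i] is the index in target_vecs of the correct match for source_vecs[i].
    """
    hits = 0
    for i, src in enumerate(source_vecs):
        ranked = sorted(
            range(len(target_vecs)),
            key=lambda j: cosine(src, target_vecs[j]),
            reverse=True,
        )
        if gold[i] in ranked[:k]:
            hits += 1
    return hits / len(source_vecs)


# Toy 2-d vectors: the first two sources align well, the third does not.
sources = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
targets = [[0.9, 0.1], [0.1, 0.9], [1.0, -1.0]]
gold = [0, 1, 2]

r_at_1 = recall_at_k(sources, targets, gold, k=1)
r_at_3 = recall_at_k(sources, targets, gold, k=3)
print(f"R@1 = {r_at_1:.3f}, R@3 = {r_at_3:.3f}")
```

Under this definition, an R@1 of 0.0700 would mean that the correct translation is the single nearest neighbour for 7% of the evaluated word pairs.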

6. Morphological Analysis (Experimental)

This section presents an automated morphological analysis derived from the statistical divergence between word-level and subword-level models. By analyzing where subword predictability spikes and where word-level coverage fails, we can infer linguistic structures without supervised data.

6.1 Productivity & Complexity

| Metric | Value | Interpretation | Recommendation |

|---|---|---|---|

| Productivity Index | 5.000 | High morphological productivity | Reliable analysis |

| Idiomaticity Gap | 0.310 | High formulaic/idiomatic content | - |

6.2 Affix Inventory (Productive Units)

These are the most productive prefixes and suffixes identified by sampling the vocabulary for global substitutability patterns. A unit is considered an affix if stripping it leaves a valid stem that appears in other contexts.
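
The stripping test described above can be sketched as follows. This is a simplified illustration, not the pipeline's actual algorithm: it only checks whether removing a candidate prefix leaves another vocabulary word, and it keeps prefixes that do so for at least `min_stems` distinct stems. The function name, the threshold, and the toy word list are assumptions; the prefixed toy forms are constructed for illustration and are not claimed to be attested Sakizaya words.

```python
from collections import defaultdict


def productive_prefixes(vocab, candidates, min_stems=2):
    """Return candidate prefixes whose removal yields a known word often enough."""
    known = set(vocab)
    stems_by_prefix = defaultdict(set)
    for word in known:
        for prefix in candidates:
            if not word.startswith(prefix):
                continue
            stem = word[len(prefix):]
            # The stem must itself be a vocabulary word (empty stems never are).
            if stem in known:
                stems_by_prefix[prefix].add(stem)
    return {
        prefix: sorted(stems)
        for prefix, stems in stems_by_prefix.items()
        if len(stems) >= min_stems
    }


# Toy vocabulary; "masulit" and "matineng" are constructed examples.
toy_vocab = ["sulit", "tineng", "masulit", "matineng", "mipelu", "kamu"]
result = productive_prefixes(toy_vocab, candidates=["ma", "mi"])
print(result)
```

Here `ma` qualifies because stripping it leaves two known stems, while `mi` does not because `pelu` is absent from the toy vocabulary. The same check applied to word endings would give a suffix inventory.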

Productive Prefixes

| Prefix | Examples |

|---|---|

| -ma | masakiketay, mabunal, mata目 |

| -ka | kadiceman, kasikawaw, kaniket |

| -pa | pabelien, pakalaliw, pacukeday |

| -sa | saicelangan, sakatu, sakaudipan |

| -mi | mipelu, mipuputay, mingaayay |

| -a | ak, amuawaw, anuyaan |

| -s | saicelangan, sʉhlʉnganʉ, sakatu |

| -m | mipelu, muoli, masakiketay |

Productive Suffixes

| Suffix | Examples |

|---|---|

| -n | pabelien, saicelangan, anuyaan |

| -an | saicelangan, anuyaan, kadiceman |

| -ay | umahicaay, masakiketay, mipuputay |

| -y | umahicaay, masakiketay, mipuputay |

| -a | yaciyana, yita, esperança |

| -ng | pisasing, ninaimelang, inng |

| -g | pisasing, ninaimelang, inng |

| -u | mipelu, sakatu, swu |

6.3 Bound Stems (Lexical Roots)

Bound stems are high-frequency subword units that are semantically cohesive but rarely appear as standalone words. These often correspond to the 'core' of a word that requires inflection or derivation to be valid.

| Stem | Cohesion | Substitutability | Examples |

|---|---|---|---|

| ulit | 1.96x | 76 contexts | sulit, kulit, asulit |

| atin | 1.96x | 71 contexts | latin, yatin, matin |

| inen | 1.96x | 69 contexts | yinen, bineng, tineng |

| tade | 2.10x | 42 contexts | tadek, taden, tadem |

| dema | 2.08x | 40 contexts | demaw, demad, demak |

| emia | 2.16x | 34 contexts | emiad, demia, demiad |

| awan | 1.69x | 92 contexts | tawan, dawan, awang |

| tine | 2.29x | 27 contexts | tineng, atineng, utineng |

| demi | 2.21x | 29 contexts | demia, demied, kudemi |

| hcaa | 2.19x | 28 contexts | ihcaan, mihcaa, mhcaan |

| anan | 1.56x | 108 contexts | canan, nanan, panan |

| anat | 2.28x | 18 contexts | canata, kanatl, kanata |

6.4 Affix Compatibility (Co-occurrence)

This table shows which prefixes and suffixes most frequently co-occur on the same stems, revealing the 'stacking' rules of the language's morphology.

| Prefix | Suffix | Frequency | Examples |

|---|---|---|---|

| -ma | -y | 218 words | mapasimaay, mapatidengay |

| -ma | -ay | 211 words | mapasimaay, mapatidengay |

| -ka | -n | 148 words | kasaupuan, kalalulan |

| -ka | -an | 141 words | kasaupuan, kalalulan |

| -sa | -n | 122 words | sakalihalayan, sakayduhan |

| -mi | -y | 120 words | mitatibay, micacuy |

| -mi | -ay | 116 words | mitatibay, mibelinay |

| -pa | -n | 114 words | pazen, pasilisian |

| -sa | -an | 93 words | sakalihalayan, sakayduhan |

| -sa | -y | 72 words | sapisahemay, sakasiidaay |

6.5 Recursive Morpheme Segmentation

Using Recursive Hierarchical Substitutability, we decompose complex words into their constituent morphemes. This approach handles nested affixes (e.g., prefix-prefix-root-suffix).

| Word | Suggested Split | Confidence | Stem |

|---|---|---|---|

| nikuwanay | nikuw-an-ay | 7.5 | an |

| asasemaan | asase-ma-an | 7.5 | ma |

| maytebanay | mayteb-an-ay | 7.5 | an |

| sakaputun | sakapu-tu-n | 7.5 | tu |

| sapaiyuwan | sapaiyu-w-an | 7.5 | w |

| kasasudang | kasasu-da-ng | 7.5 | da |

| binacadana | binacad-an-a | 7.5 | an |

| nipikisaan | nipikis-a-an | 7.5 | a |

| lalaliyunan | lalaliyu-n-an | 7.5 | n |

| tadatabaki | ta-da-tabaki | 7.5 | tabaki |

| namakaadih | na-ma-kaadih | 7.5 | kaadih |

| amasasetul | a-ma-sasetul | 7.5 | sasetul |

| mamamelawan | ma-ma-melawan | 7.5 | melawan |

| tadaadidi | ta-da-adidi | 7.5 | adidi |

| malalawlaw | malalaw-l-aw | 7.5 | l |

6.6 Linguistic Interpretation

Automated Insight: Sakizaya shows high morphological productivity. The subword models are significantly more efficient than the word models, suggesting a rich system of affixation or compounding.

Note on Idiomaticity: The high Idiomaticity Gap suggests a large number of frequent multi-word expressions or formulaic sequences that are statistically distinct from their component parts.

7. Summary & Recommendations

Production Recommendations

| Component | Recommended | Rationale |

|---|---|---|

| Tokenizer | 64k BPE | Best compression (3.88x) |

| N-gram | 2-gram | Lowest perplexity (254) |

| Markov | Context-4 | Highest predictability (96.6%) |

| Embeddings | 128d (aligned) | Best cross-lingual alignment (R@1 of 7.0%) among the evaluated 32d, 64d, and 128d models |

Appendix: Metrics Glossary & Interpretation Guide

This section provides definitions, intuitions, and guidance for interpreting the metrics used throughout this report.

Tokenizer Metrics

Compression Ratio

Definition: The ratio of characters to tokens (chars/token). Measures how efficiently the tokenizer represents text.

Intuition: Higher compression means fewer tokens needed to represent the same text, reducing sequence lengths for downstream models. A 3x compression means ~3 characters per token on average.

What to seek: Higher is generally better for efficiency, but extremely high compression may indicate overly aggressive merging that loses morphological information.

Average Token Length (Fertility)

Definition: Mean number of characters per token produced by the tokenizer.

Intuition: Reflects the granularity of tokenization. Longer tokens capture more context but may struggle with rare words; shorter tokens are more flexible but increase sequence length.

What to seek: Balance between 2-5 characters for most languages. Arabic/morphologically-rich languages may benefit from slightly longer tokens.

Unknown Token Rate (OOV Rate)

Definition: Percentage of tokens that map to the unknown/UNK token, indicating words the tokenizer cannot represent.

Intuition: Lower OOV means better vocabulary coverage. High OOV indicates the tokenizer encounters many unseen character sequences.

What to seek: Below 1% is excellent; below 5% is acceptable. BPE tokenizers typically achieve very low OOV due to subword fallback.

N-gram Model Metrics

Perplexity

Definition: Measures how "surprised" the model is by test data. Mathematically: 2^(cross-entropy). Lower values indicate better prediction.

Intuition: If perplexity is 100, the model is as uncertain as if choosing uniformly among 100 options at each step. A perplexity of 10 means effectively choosing among 10 equally likely options.

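What to seek: Lower is better. Perplexity typically decreases with larger n-grams (more context) when enough training data is available; on small corpora, data sparsity can reverse the trend, as in the n-gram results of this report. Values vary widely by language and corpus size.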

Entropy

Definition: Average information content (in bits) needed to encode the next token given the context. Related to perplexity: perplexity = 2^entropy.

Intuition: High entropy means high uncertainty/randomness; low entropy means predictable patterns. Natural language typically has entropy between 1-4 bits per character.

What to seek: Lower entropy indicates more predictable text patterns. Entropy is expected to decrease as n-gram size increases, provided the corpus is large enough to estimate the longer n-grams reliably.
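
The relation perplexity = 2^entropy can be checked against the n-gram results table. The sketch below converts a few reported entropy values (in bits) and compares them with the reported perplexities; small gaps are expected because the table rounds entropy to two decimals. The function name is illustrative.

```python
def perplexity_from_entropy(entropy_bits: float) -> float:
    """Perplexity implied by an entropy given in bits."""
    return 2.0 ** entropy_bits


# (label, reported entropy, reported perplexity) copied from the n-gram table.
reported = [
    ("2-gram word", 13.10, 8_778),
    ("2-gram subword", 7.99, 254),
    ("5-gram subword", 14.30, 20_122),
]

relative_errors = []
for label, entropy, perplexity in reported:
    implied = perplexity_from_entropy(entropy)
    error = abs(implied - perplexity) / perplexity
    relative_errors.append(error)
    print(f"{label}: implied {implied:,.0f} vs reported {perplexity:,} ({error:.2%} off)")
```

The same conversion applies to the Markov chain table, where an average entropy of 0.0342 bits corresponds to a perplexity of about 1.024.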

Coverage (Top-K)

Definition: Percentage of corpus occurrences explained by the top K most frequent n-grams.

Intuition: High coverage with few patterns indicates repetitive/formulaic text; low coverage suggests diverse vocabulary usage.

What to seek: Depends on use case. For language modeling, moderate coverage (40-60% with top-1000) is typical for natural text.
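
A minimal sketch of the top-K coverage computation, assuming coverage is the share of all n-gram occurrences accounted for by the K most frequent n-grams. The helper names are illustrative, and the toy token sequence is assembled from words that appear in this report; it is not a real sentence.

```python
from collections import Counter


def ngrams(tokens, n):
    """All contiguous n-grams of a token list, as tuples."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


def top_k_coverage(items, k):
    """Share of occurrences explained by the k most frequent items."""
    counts = Counter(items)
    total = sum(counts.values())
    if total == 0:
        return 0.0
    covered = sum(count for _, count in counts.most_common(k))
    return covered / total


# Toy sequence built from frequent words in the report (not a real sentence).
tokens = "a tademaw a mihcaan a tademaw ku tademaw".split()
bigrams = ngrams(tokens, 2)

coverage_top1 = top_k_coverage(bigrams, k=1)
print(f"{len(bigrams)} bigrams, top-1 coverage = {coverage_top1:.3f}")
```

The most frequent bigram occurs twice among seven, so top-1 coverage is 2/7. With K at least as large as the number of distinct n-grams, coverage reaches 100%.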

Markov Chain Metrics

Average Entropy

Definition: Mean entropy across all contexts, measuring average uncertainty in next-word prediction.

Intuition: Lower entropy means the model is more confident about what comes next. Context-1 has high entropy (many possible next words); Context-4 has low entropy (few likely continuations).

What to seek: Decreasing entropy with larger context sizes. Very low entropy (<0.1) indicates highly deterministic transitions.

Branching Factor

Definition: Average number of unique next tokens observed for each context.

Intuition: High branching = many possible continuations (flexible but uncertain); low branching = few options (predictable but potentially repetitive).

What to seek: Branching factor should decrease with context size. Values near 1.0 indicate nearly deterministic chains.
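
To make the two definitions above concrete, the sketch below builds a small word-level Markov chain and computes its branching factor and average entropy. It assumes an unweighted mean over contexts; the report does not state whether contexts are weighted by frequency, so treat that choice as an assumption. All names are illustrative.

```python
import math
from collections import Counter, defaultdict


def build_chain(tokens, order):
    """Map each context (tuple of `order` tokens) to a Counter of next tokens."""
    chain = defaultdict(Counter)
    for i in range(len(tokens) - order):
        context = tuple(tokens[i:i + order])
        chain[context][tokens[i + order]] += 1
    return chain


def branching_factor(chain):
    """Average number of distinct next tokens per context."""
    return sum(len(nexts) for nexts in chain.values()) / len(chain)


def average_entropy(chain):
    """Unweighted mean, over contexts, of next-token entropy in bits."""
    total = 0.0
    for nexts in chain.values():
        n = sum(nexts.values())
        total -= sum((c / n) * math.log2(c / n) for c in nexts.values())
    return total / len(chain)


# Toy sequence built from words in the report (not a real sentence).
tokens = ["a", "tademaw", "a", "mihcaan", "a", "tademaw"]

chain1 = build_chain(tokens, order=1)
chain2 = build_chain(tokens, order=2)
for order, chain in ((1, chain1), (2, chain2)):
    print(order, round(branching_factor(chain), 3), round(average_entropy(chain), 3))
```

Even on this toy input, moving from context size 1 to 2 drops the branching factor to 1.0 and the entropy to 0, mirroring the trend in the results table. On a small corpus, part of that drop comes from long contexts being seen only once rather than from the language being more predictable.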

Predictability

Definition: Derived metric: (1 - normalized_entropy) × 100%. Indicates how deterministic the model's predictions are.

Intuition: 100% predictability means the next word is always certain; 0% means completely random. Real text falls between these extremes.

What to seek: Higher predictability for text generation quality, but too high (>98%) may produce repetitive output.
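
The report does not document how entropy is normalized. The Markov chain results table is, however, consistent with the simplest reading: predictability = max(0, 1 - average entropy) × 100, that is, entropy measured against 1 bit and clamped at zero. The sketch below checks this inferred formula against every row of the table; it is an observation about the published numbers, not a confirmed description of the pipeline.

```python
def predictability(avg_entropy_bits: float) -> float:
    """Inferred formula (assumption): clamp 1 - H to [0, 1], as a percentage."""
    return max(0.0, min(1.0, 1.0 - avg_entropy_bits)) * 100.0


# (average entropy, reported predictability %) copied from the Markov table.
table = [
    (0.4793, 52.1), (2.1979, 0.0),
    (0.2677, 73.2), (0.5459, 45.4),
    (0.1031, 89.7), (0.2326, 76.7),
    (0.0342, 96.6), (0.1897, 81.0),
]

max_gap = max(abs(predictability(h) - reported) for h, reported in table)
print(f"largest gap between inferred and reported values: {max_gap:.2f} points")
```

If this reading is right, any context with an average entropy above 1 bit is reported as 0.0% predictable, which would explain the context-1 subword row.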

Vocabulary & Zipf's Law Metrics

Zipf's Coefficient

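Definition: The slope of the log-log plot of word frequency vs. rank. Zipf's law predicts this should be approximately -1. This report lists the coefficient as a positive magnitude (1.1985 corresponds to a slope of about -1.2).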

Intuition: A coefficient near -1 indicates the corpus follows natural language patterns where a few words are very common and most words are rare.

What to seek: Values between -0.8 and -1.2 indicate healthy natural language distribution. Deviations may suggest domain-specific or artificial text.

R² (Coefficient of Determination)

Definition: Measures how well the linear fit explains the frequency-rank relationship. Ranges from 0 to 1.

Intuition: R² near 1.0 means the data closely follows Zipf's law; lower values indicate deviation from expected word frequency patterns.

What to seek: R² > 0.95 is excellent; > 0.99 indicates near-perfect Zipf adherence typical of large natural corpora.
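
A minimal sketch of how a Zipf coefficient and its R² can be obtained: an ordinary least-squares line fitted to log(frequency) against log(rank). The pipeline's exact fitting procedure is not described in the report, so this is one plausible approach rather than the method used. The synthetic frequencies are generated with a known exponent of 1.2 so the fit can be verified.

```python
import math


def fit_zipf(frequencies):
    """Return (slope, r_squared) of a least-squares fit in log-log space."""
    freqs = sorted(frequencies, reverse=True)
    xs = [math.log(rank) for rank in range(1, len(freqs) + 1)]
    ys = [math.log(freq) for freq in freqs]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ss_res = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return slope, 1.0 - ss_res / ss_tot


# Synthetic, perfectly Zipfian frequencies with exponent 1.2.
synthetic = [1000.0 / rank ** 1.2 for rank in range(1, 501)]
slope, r_squared = fit_zipf(synthetic)
print(f"slope = {slope:.4f}, R^2 = {r_squared:.6f}")
```

Real word counts deviate from a straight line at both ends of the rank range, which is why reported R² values fall slightly below 1.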

Vocabulary Coverage

Definition: Cumulative percentage of corpus tokens accounted for by the top N words.

Intuition: Shows how concentrated word usage is. If top-100 words cover 50% of text, the corpus relies heavily on common words.

What to seek: Top-100 covering 30-50% is typical. Higher coverage indicates more repetitive text; lower suggests richer vocabulary.

Word Embedding Metrics

Isotropy

Definition: Measures how uniformly distributed vectors are in the embedding space. Computed as the ratio of minimum to maximum singular values.

Intuition: High isotropy (near 1.0) means vectors spread evenly in all directions; low isotropy means vectors cluster in certain directions, reducing expressiveness.

What to seek: Higher isotropy generally indicates better-quality embeddings. Values > 0.1 are reasonable; > 0.3 is good. Lower-dimensional embeddings tend to have higher isotropy.

Average Norm

Definition: Mean magnitude (L2 norm) of word vectors in the embedding space.

Intuition: Indicates the typical "length" of vectors. Consistent norms suggest stable training; high variance may indicate some words are undertrained.

What to seek: Relatively consistent norms across models. The absolute value matters less than consistency (low std deviation).

Cosine Similarity

Definition: Measures angular similarity between vectors, ranging from -1 (opposite) to 1 (identical direction).

Intuition: Words with similar meanings should have high cosine similarity. This is the standard metric for semantic relatedness in embeddings.

What to seek: Semantically related words should score > 0.5; unrelated words should be near 0. Synonyms often score > 0.7.
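
For reference, a small self-contained sketch of cosine similarity, together with the mean pairwise similarity that the embeddings section describes as semantic density. Whether the pipeline averages over all word pairs or over a sample is not stated, so the all-pairs average here is an assumption, as are the function names and toy vectors.

```python
import math
from itertools import combinations


def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        raise ValueError("cosine similarity is undefined for zero vectors")
    return dot / (norm_u * norm_v)


def mean_pairwise_similarity(vectors):
    """Average cosine similarity over all unordered pairs of vectors."""
    pairs = list(combinations(vectors, 2))
    return sum(cosine_similarity(u, v) for u, v in pairs) / len(pairs)


# Toy vectors: two identical directions and one orthogonal direction.
toy_vectors = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]
density = mean_pairwise_similarity(toy_vectors)
print(f"mean pairwise similarity = {density:.3f}")
```

Of the three pairs, one is identical in direction and two are orthogonal, so the mean is 1/3. Lower averages indicate that vectors are spread further apart.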

t-SNE Visualization

Definition: t-Distributed Stochastic Neighbor Embedding - a dimensionality reduction technique that preserves local structure for visualization.

Intuition: Clusters in t-SNE plots indicate groups of semantically related words. Spread indicates vocabulary diversity; tight clusters suggest semantic coherence.

What to seek: Meaningful clusters (e.g., numbers together, verbs together). Avoid over-interpreting distances - t-SNE preserves local, not global, structure.

General Interpretation Guidelines

- Compare within model families: Metrics are most meaningful when comparing models of the same type (e.g., 8k vs 64k tokenizer).

- Consider trade-offs: Better performance on one metric often comes at the cost of another (e.g., compression vs. OOV rate).

- Context matters: Optimal values depend on downstream tasks. Text generation may prioritize different metrics than classification.

- Corpus influence: All metrics are influenced by corpus characteristics. Wikipedia text differs from social media or literature.

- Language-specific patterns: Morphologically rich languages (like Arabic) may show different optimal ranges than analytic languages.

Visualizations Index

| Visualization | Description |

|---|---|

| Tokenizer Compression | Compression ratios by vocabulary size |

| Tokenizer Fertility | Average token length by vocabulary |

| Tokenizer OOV | Unknown token rates |

| Tokenizer Total Tokens | Total tokens by vocabulary |

| N-gram Perplexity | Perplexity by n-gram size |

| N-gram Entropy | Entropy by n-gram size |

| N-gram Coverage | Top pattern coverage |

| N-gram Unique | Unique n-gram counts |

| Markov Entropy | Entropy by context size |

| Markov Branching | Branching factor by context |

| Markov Contexts | Unique context counts |

| Zipf's Law | Frequency-rank distribution with fit |

| Vocab Frequency | Word frequency distribution |

| Top 20 Words | Most frequent words |

| Vocab Coverage | Cumulative coverage curve |

| Embedding Isotropy | Vector space uniformity |

| Embedding Norms | Vector magnitude distribution |

| Embedding Similarity | Word similarity heatmap |

| Nearest Neighbors | Similar words for key terms |

| t-SNE Words | 2D word embedding visualization |

| t-SNE Sentences | 2D sentence embedding visualization |

| Position Encoding | Encoding method comparison |

| Model Sizes | Storage requirements |

| Performance Dashboard | Comprehensive performance overview |

About This Project

Data Source

Models trained on wikipedia-monthly - a monthly snapshot of Wikipedia articles across 300+ languages.

Project

A project by Wikilangs - Open-source NLP models for every Wikipedia language.

Citation

If you use these models in your research, please cite:

@misc{wikilangs2025,

author = {Kamali, Omar},

title = {Wikilangs: Open NLP Models for Wikipedia Languages},

year = {2025},

doi = {10.5281/zenodo.18073153},

publisher = {Zenodo},

url = {https://huggingface.co/wikilangs},

institution = {Omneity Labs}

}

License

MIT License - Free for academic and commercial use.

Links

- 🌐 Website: wikilangs.org

- 🤗 Models: huggingface.co/wikilangs

- 📊 Data: wikipedia-monthly

- 👤 Author: Omar Kamali

- 🤝 Sponsor: Featherless AI

Generated by Wikilangs Models Pipeline

Report Date: 2026-01-11 00:15:31