language: uk

language_name: Ukrainian

language_family: slavic_east

tags:

- wikilangs

- nlp

- tokenizer

- embeddings

- n-gram

- markov

- wikipedia

- feature-extraction

- sentence-similarity

- tokenization

- n-grams

- markov-chain

- text-mining

- fasttext

- babelvec

- vocabulous

- vocabulary

- monolingual

- family-slavic_east

license: mit

library_name: wikilangs

pipeline_tag: text-generation

datasets:

- omarkamali/wikipedia-monthly

dataset_info:

name: wikipedia-monthly

description: Monthly snapshots of Wikipedia articles across 300+ languages

metrics:

- name: best_compression_ratio

type: compression

value: 4.642

- name: best_isotropy

type: isotropy

value: 0.7906

- name: vocabulary_size

type: vocab

value: 0

generated: 2026-01-11T00:00:00.000Z

Ukrainian - Wikilangs Models

Comprehensive Research Report & Full Ablation Study

This repository contains NLP models trained and evaluated by Wikilangs, specifically on Ukrainian Wikipedia data. We analyze tokenizers, n-gram models, Markov chains, vocabulary statistics, and word embeddings.

📋 Repository Contents

Models & Assets

- Tokenizers (8k, 16k, 32k, 64k)

- N-gram models (2, 3, 4, 5-gram)

- Markov chains (context of 1, 2, 3, 4 and 5)

- Subword N-gram and Markov chains

- Embeddings in various sizes and dimensions (aligned and unaligned)

- Language Vocabulary

- Language Statistics

Analysis and Evaluation

- 1. Tokenizer Evaluation

- 2. N-gram Model Evaluation

- 3. Markov Chain Evaluation

- 4. Vocabulary Analysis

- 5. Word Embeddings Evaluation

- 6. Morphological Analysis (Experimental)

- 7. Summary & Recommendations

- Metrics Glossary

- Visualizations Index

1. Tokenizer Evaluation

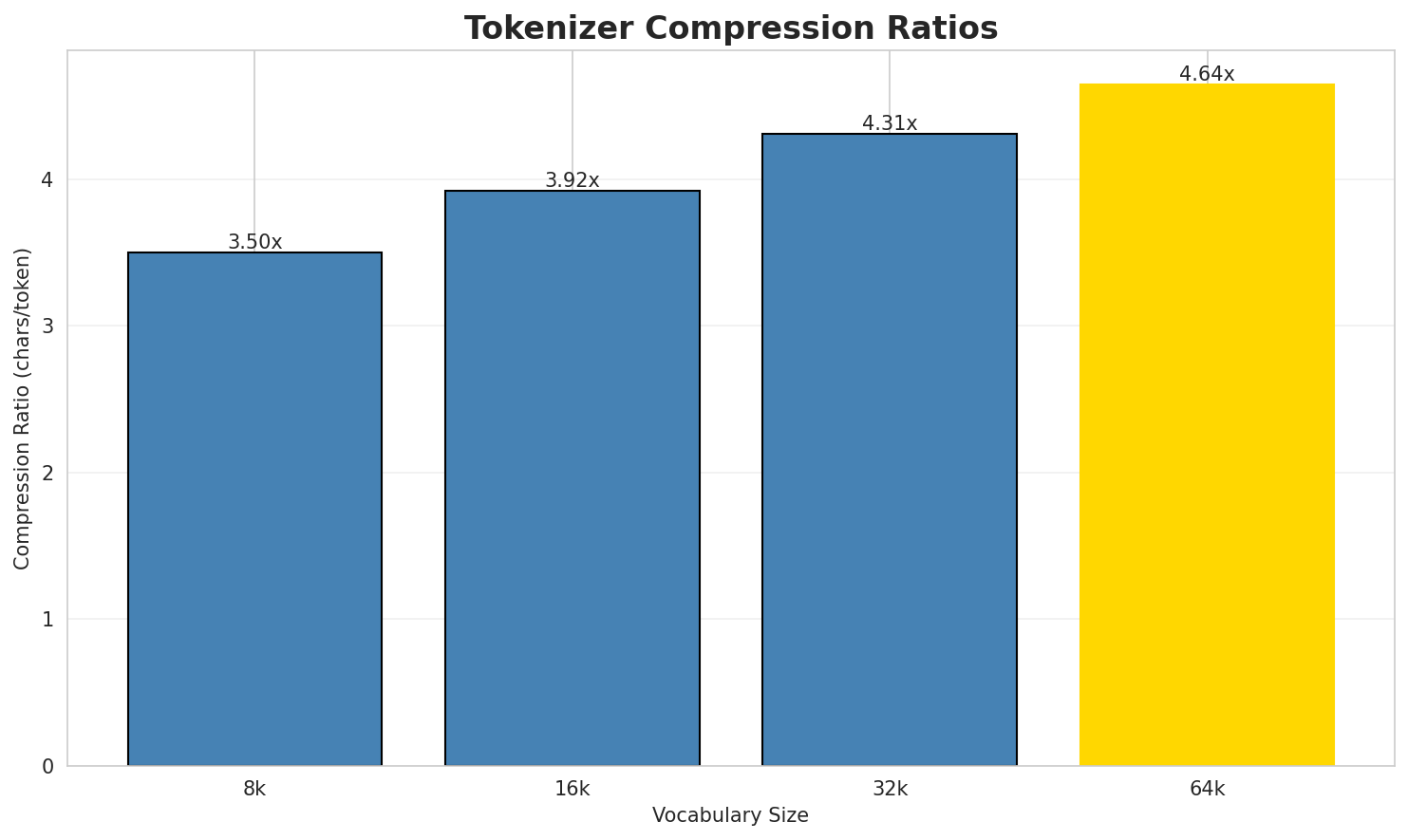

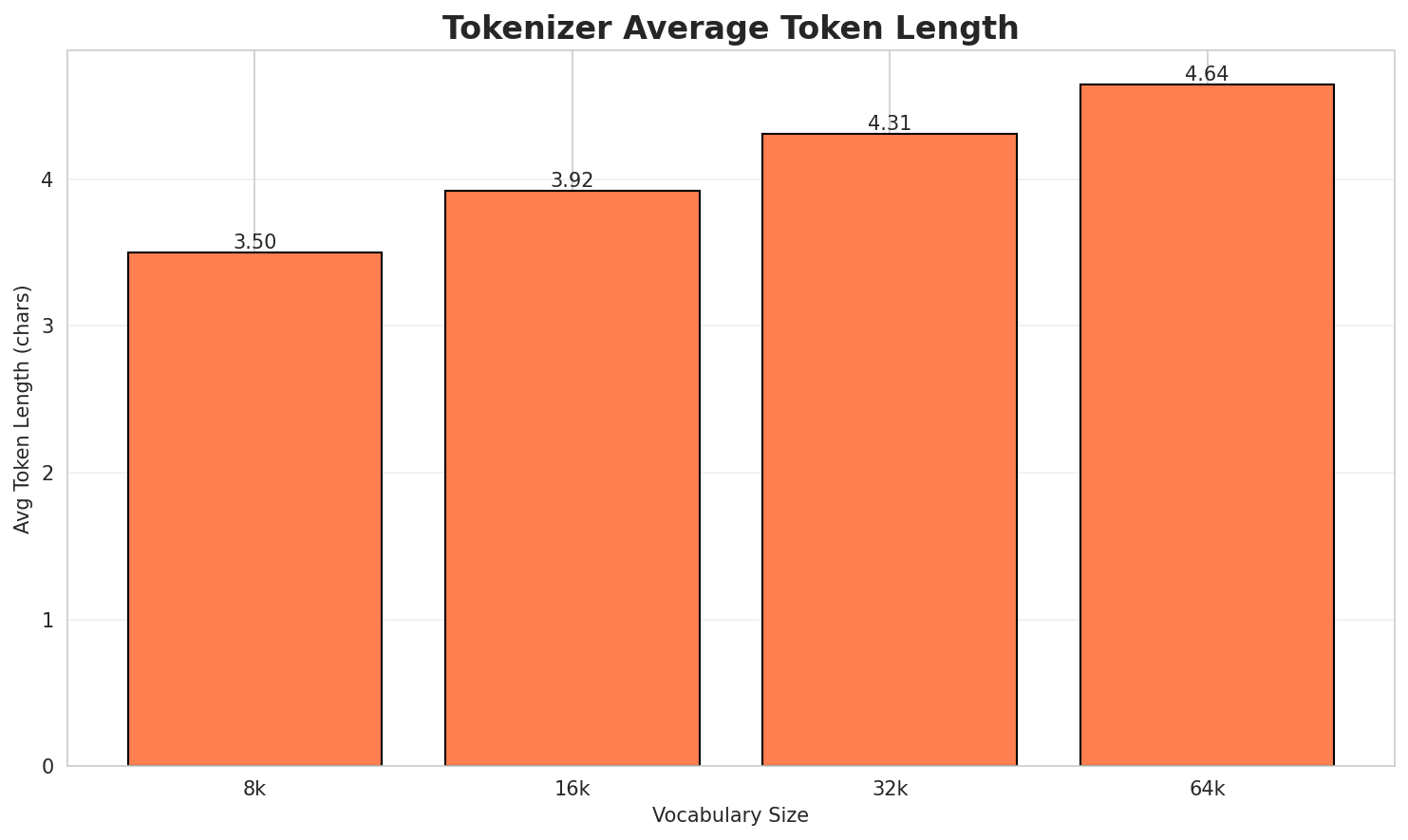

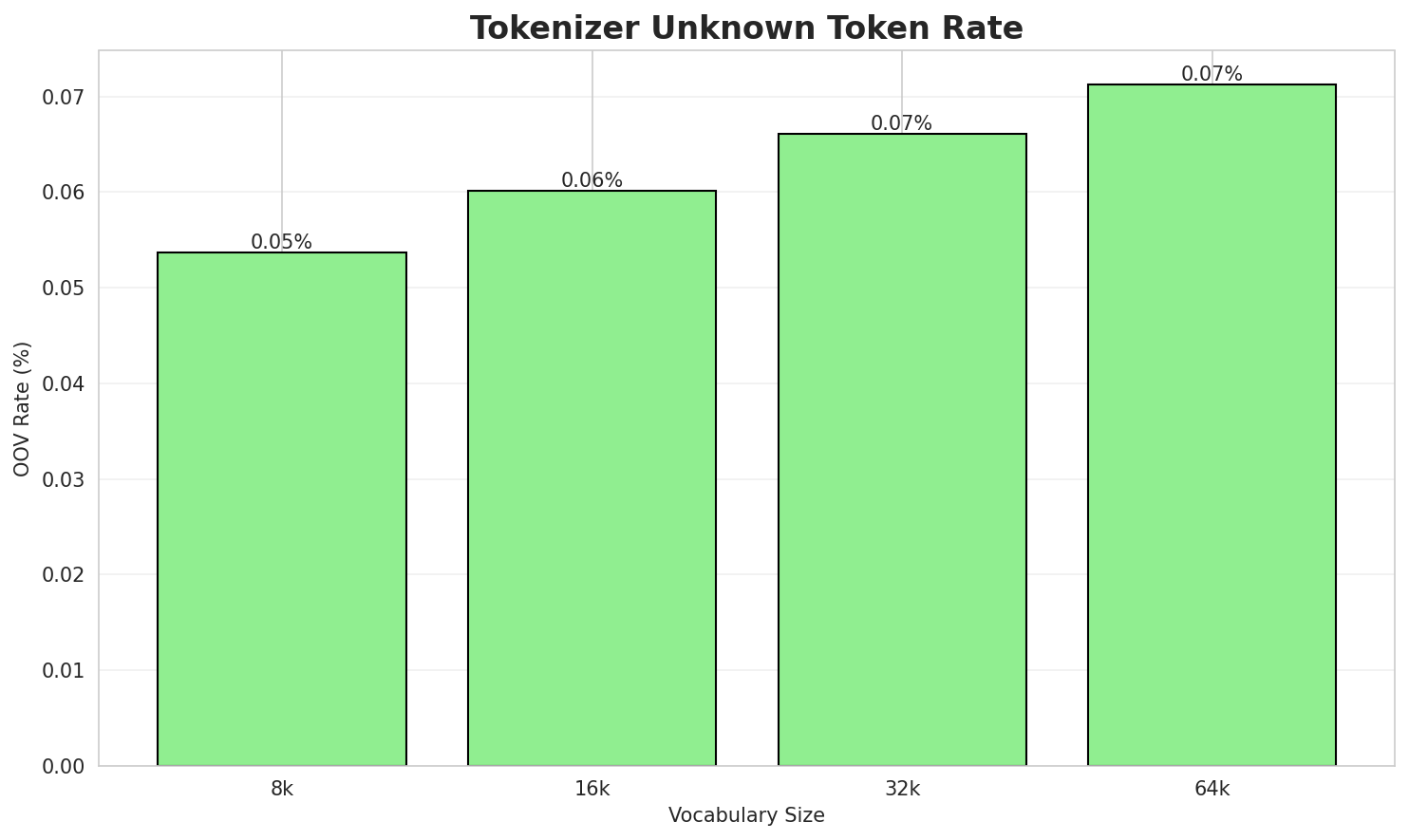

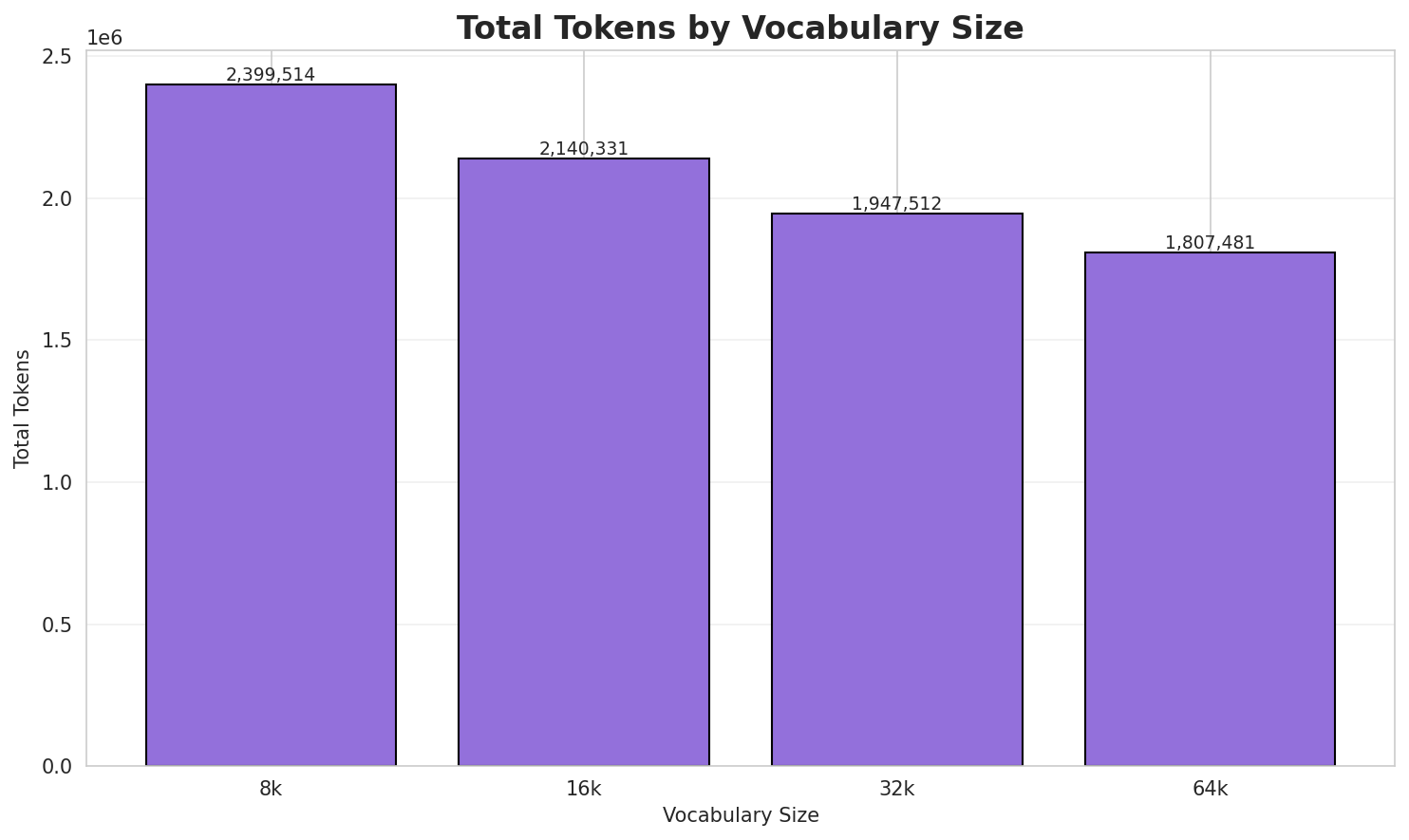

Results

| Vocab Size | Compression | Avg Token Len | UNK Rate | Total Tokens |

|---|---|---|---|---|

| 8k | 3.497x | 3.50 | 0.0536% | 2,399,514 |

| 16k | 3.921x | 3.92 | 0.0601% | 2,140,331 |

| 32k | 4.309x | 4.31 | 0.0661% | 1,947,512 |

| 64k | 4.642x 🏆 | 4.64 | 0.0712% | 1,807,481 |

Tokenization Examples

Below are sample sentences tokenized with each vocabulary size:

Sample 1: Шлепаков: Шлепаков Арнольд Миколайович — історик. Шлепаков Микола Степанович — ф...

| Vocab | Tokens | Count |

|---|---|---|

| 8k | ▁ш ле па ков : ▁ш ле па ков ▁ар ... (+17 more) | 27 |

| 16k | ▁ш ле па ков : ▁ш ле па ков ▁арно ... (+15 more) | 25 |

| 32k | ▁шле па ков : ▁шле па ков ▁арнольд ▁миколайович ▁— ... (+11 more) | 21 |

| 64k | ▁шлепаков : ▁шлепаков ▁арнольд ▁миколайович ▁— ▁історик . ▁шлепаков ▁микола ... (+5 more) | 15 |

Sample 2: Села: Біївці — Київська область, Обухівський район Біївці — Полтавська область, ...

| Vocab | Tokens | Count |

|---|---|---|

| 8k | ▁села : ▁бі їв ці ▁— ▁київська ▁область , ▁обу ... (+12 more) | 22 |

| 16k | ▁села : ▁бі їв ці ▁— ▁київська ▁область , ▁обухівський ... (+10 more) | 20 |

| 32k | ▁села : ▁бі ївці ▁— ▁київська ▁область , ▁обухівський ▁район ... (+8 more) | 18 |

| 64k | ▁села : ▁бі ївці ▁— ▁київська ▁область , ▁обухівський ▁район ... (+8 more) | 18 |

Sample 3: Апіоніни (Насіннеїди, Грушовидки) — це підродина жуків з родини Апіоніди (Apioni...

| Vocab | Tokens | Count |

|---|---|---|

| 8k | ▁а пі оні ни ▁( на сі н не ї ... (+27 more) | 37 |

| 16k | ▁а пі оні ни ▁( на сін не їди , ... (+23 more) | 33 |

| 32k | ▁а пі оні ни ▁( на сін не їди , ... (+22 more) | 32 |

| 64k | ▁а пі оні ни ▁( насін не їди , ▁гру ... (+19 more) | 29 |

Key Findings

- Best Compression: 64k achieves 4.642x compression

- Lowest UNK Rate: 8k with 0.0536% unknown tokens

- Trade-off: Larger vocabularies improve compression but increase model size

- Recommendation: 32k vocabulary provides optimal balance for production use

2. N-gram Model Evaluation

Results

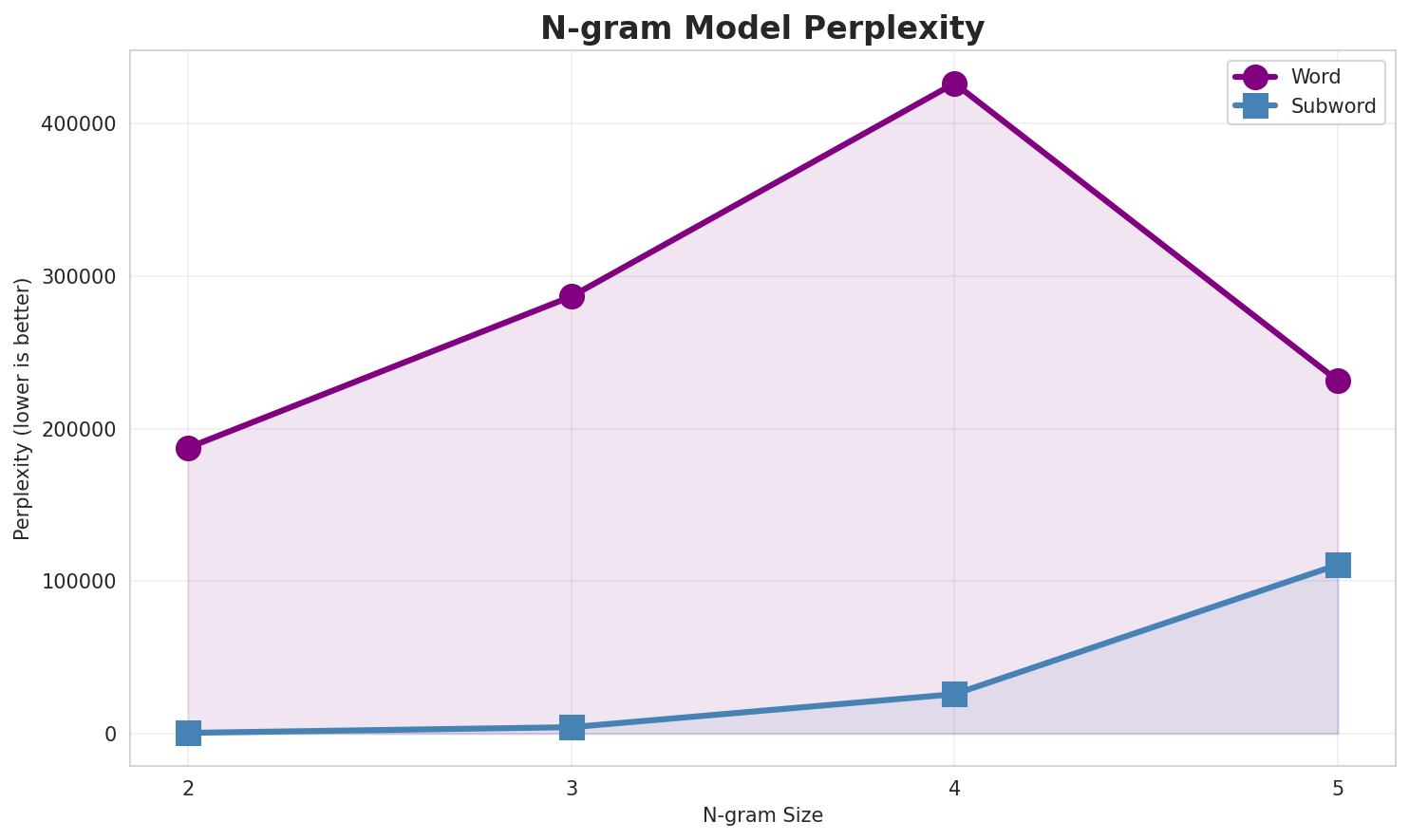

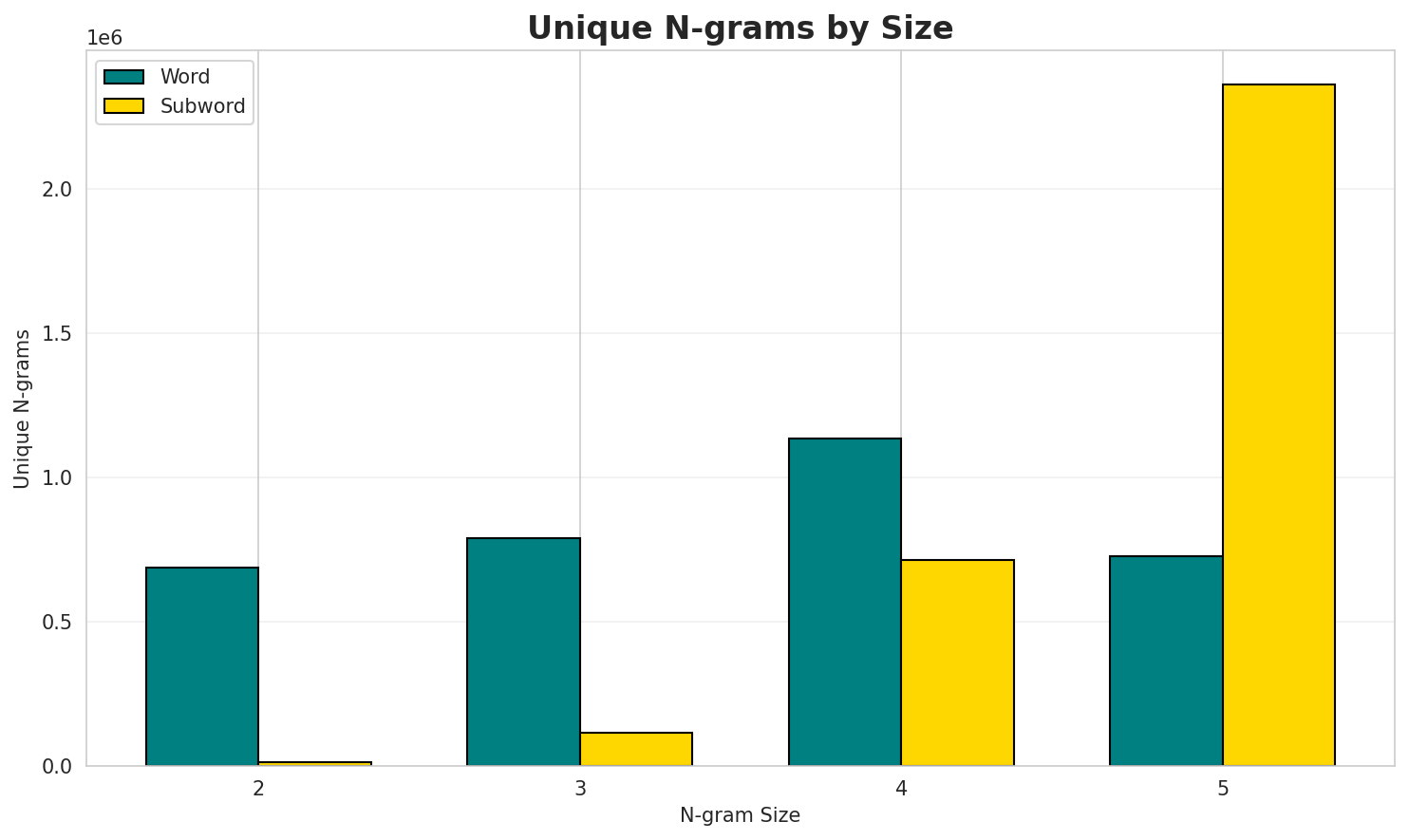

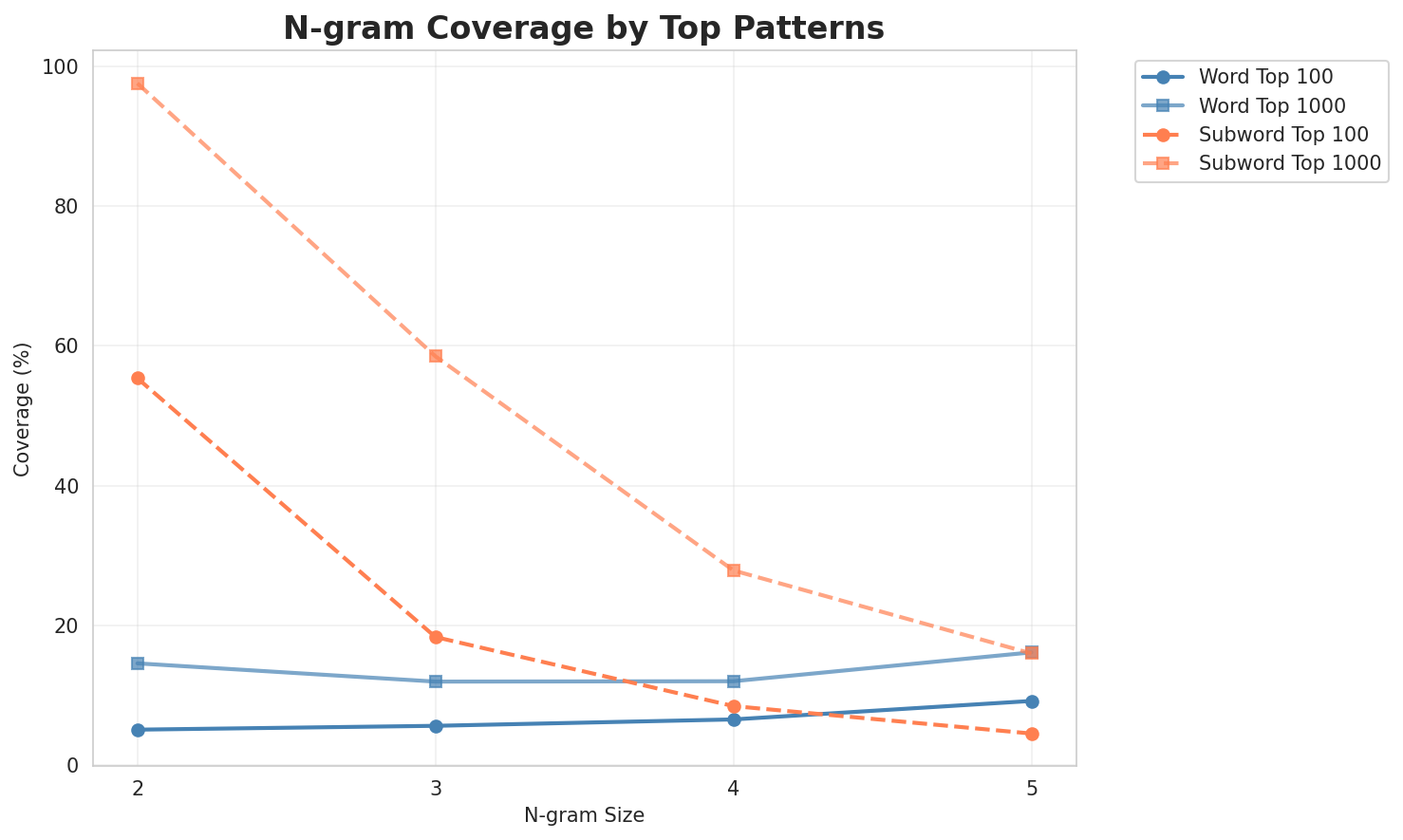

| N-gram | Variant | Perplexity | Entropy | Unique N-grams | Top-100 Coverage | Top-1000 Coverage |

|---|---|---|---|---|---|---|

| 2-gram | Word | 187,448 | 17.52 | 685,840 | 5.0% | 14.5% |

| 2-gram | Subword | 437 🏆 | 8.77 | 13,081 | 55.4% | 97.6% |

| 3-gram | Word | 286,638 | 18.13 | 787,827 | 5.6% | 11.9% |

| 3-gram | Subword | 4,150 | 12.02 | 116,111 | 18.3% | 58.5% |

| 4-gram | Word | 426,525 | 18.70 | 1,132,759 | 6.5% | 12.0% |

| 4-gram | Subword | 25,826 | 14.66 | 714,146 | 8.4% | 27.8% |

| 5-gram | Word | 231,506 | 17.82 | 725,209 | 9.1% | 16.1% |

| 5-gram | Subword | 110,683 | 16.76 | 2,359,262 | 4.5% | 15.9% |

Top 5 N-grams by Size

2-grams (Word):

| Rank | N-gram | Count |

|---|---|---|

| 1 | у році | 39,132 |

| 2 | під час | 21,948 |

| 3 | ic в | 21,270 |

| 4 | а також | 20,792 |

| 5 | в україні | 18,087 |

3-grams (Word):

| Rank | N-gram | Count |

|---|---|---|

| 1 | ic в базі | 12,721 |

| 2 | оригінальному новому загальному | 10,477 |

| 3 | в оригінальному новому | 10,475 |

| 4 | новому загальному каталозі | 10,473 |

| 5 | до н е | 8,904 |

4-grams (Word):

| Rank | N-gram | Count |

|---|---|---|

| 1 | в оригінальному новому загальному | 10,475 |

| 2 | оригінальному новому загальному каталозі | 10,468 |

| 3 | ic в оригінальному новому | 8,549 |

| 4 | новому загальному каталозі ic | 7,477 |

| 5 | загальному каталозі ic в | 6,124 |

5-grams (Word):

| Rank | N-gram | Count |

|---|---|---|

| 1 | в оригінальному новому загальному каталозі | 10,468 |

| 2 | ic в оригінальному новому загальному | 8,549 |

| 3 | оригінальному новому загальному каталозі ic | 7,477 |

| 4 | новому загальному каталозі ic в | 6,124 |

| 5 | бази даних про об єкти | 5,241 |

2-grams (Subword):

| Rank | N-gram | Count |

|---|---|---|

| 1 | _ п | 2,788,984 |

| 2 | а _ | 2,782,956 |

| 3 | _ в | 2,478,604 |

| 4 | , _ | 2,402,312 |

| 5 | . _ | 2,316,510 |

3-grams (Subword):

| Rank | N-gram | Count |

|---|---|---|

| 1 | _ н а | 1,039,254 |

| 2 | с ь к | 1,024,566 |

| 3 | _ п р | 870,352 |

| 4 | _ п о | 858,794 |

| 5 | н а _ | 850,334 |

4-grams (Subword):

| Rank | N-gram | Count |

|---|---|---|

| 1 | о г о _ | 679,817 |

| 2 | н н я _ | 490,022 |

| 3 | _ н а _ | 413,243 |

| 4 | с ь к о | 409,920 |

| 5 | _ п р о | 378,210 |

5-grams (Subword):

| Rank | N-gram | Count |

|---|---|---|

| 1 | к р а ї н | 282,501 |

| 2 | у к р а ї | 252,628 |

| 3 | е н н я _ | 250,361 |

| 4 | _ у к р а | 236,337 |

| 5 | н о г о _ | 219,776 |

Key Findings

- Best Perplexity: 2-gram (subword) with 437

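- Entropy Trend: Increases with n-gram size for subword models (8.77 to 16.76 bits); word models stay between 17.52 and 18.70 bits with no monotonic trend

- Coverage: Top-1000 patterns cover 11.9% to 16.1% of the corpus for word n-grams and 15.9% to 97.6% for subword n-grams

- Recommendation: Subword 2-gram for lowest perplexity (437); 4-gram or 5-gram when longer context matters more than perplexity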

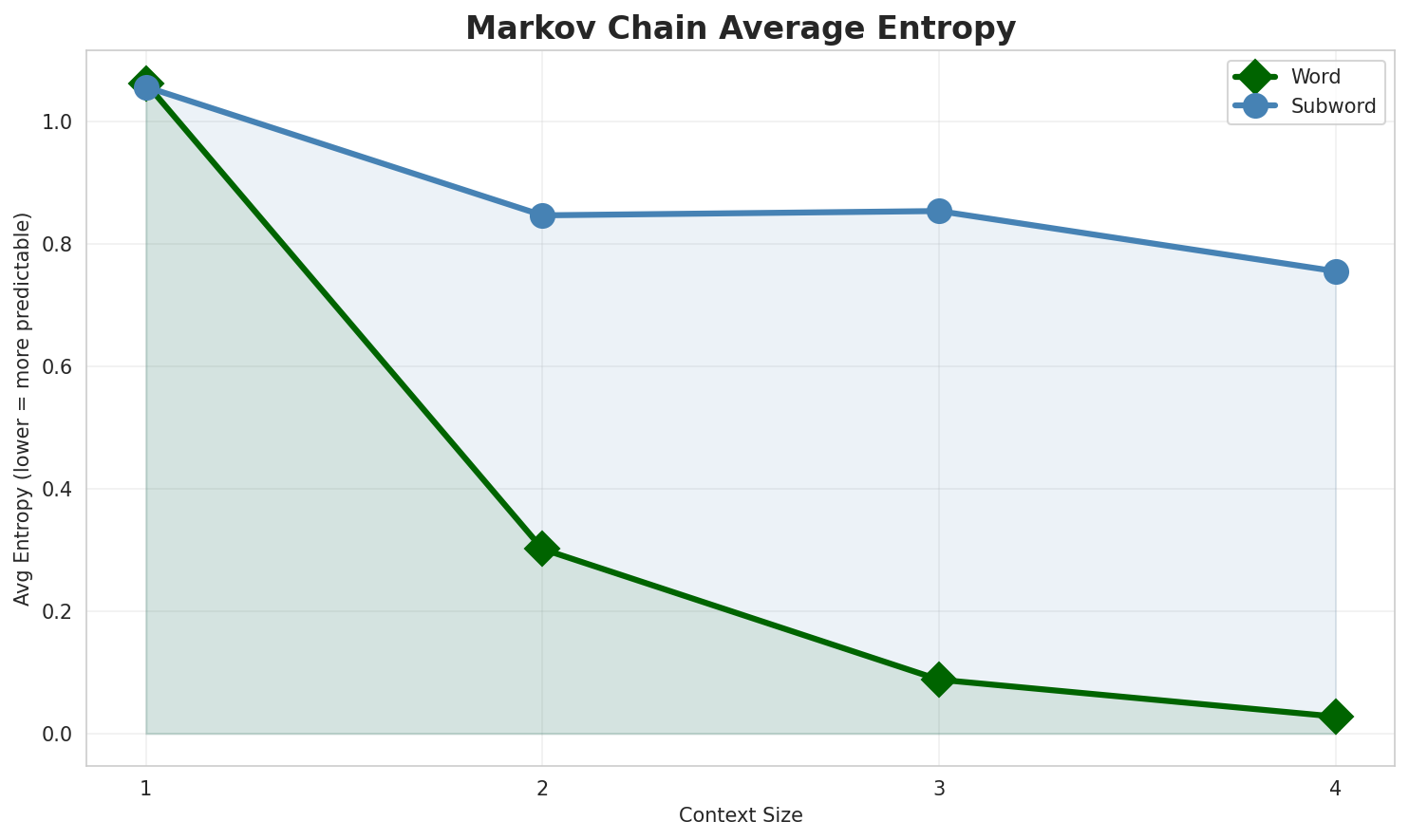

3. Markov Chain Evaluation

Results

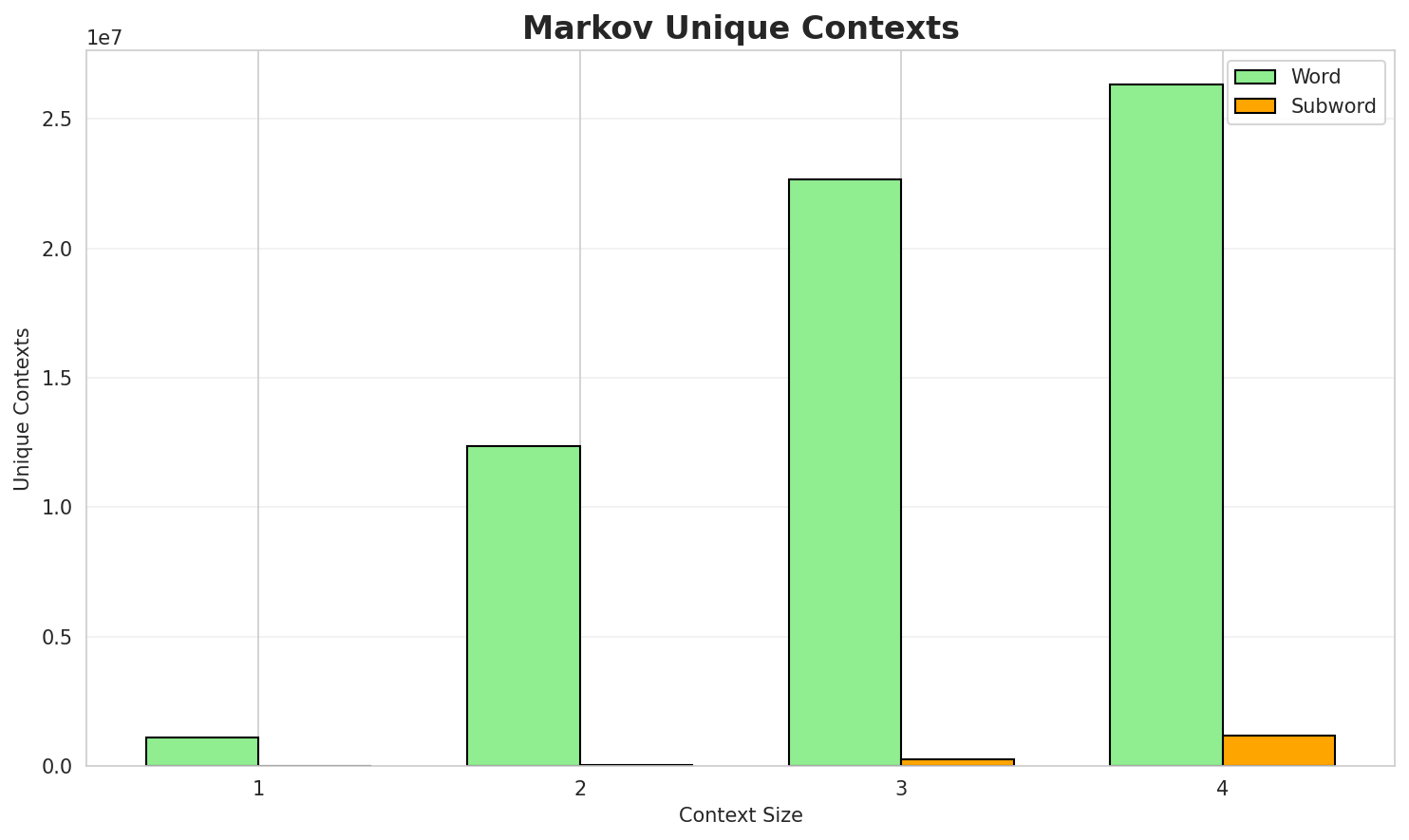

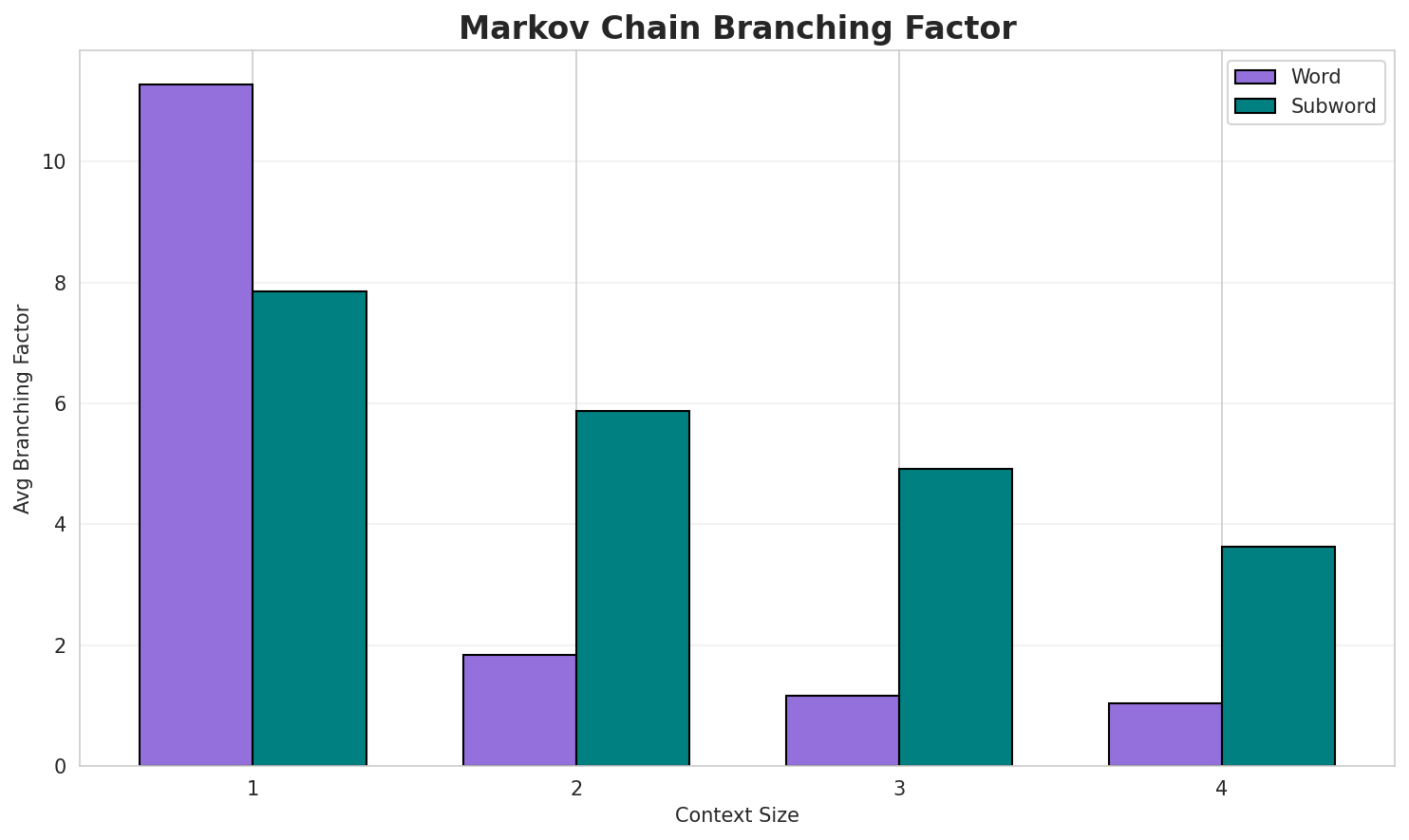

| Context | Variant | Avg Entropy | Perplexity | Branching Factor | Unique Contexts | Predictability |

|---|---|---|---|---|---|---|

| 1 | Word | 1.0632 | 2.089 | 11.27 | 1,098,688 | 0.0% |

| 1 | Subword | 1.0573 | 2.081 | 7.85 | 5,267 | 0.0% |

| 2 | Word | 0.3016 | 1.233 | 1.83 | 12,375,104 | 69.8% |

| 2 | Subword | 0.8473 | 1.799 | 5.87 | 41,346 | 15.3% |

| 3 | Word | 0.0881 | 1.063 | 1.16 | 22,683,749 | 91.2% |

| 3 | Subword | 0.8543 | 1.808 | 4.91 | 242,807 | 14.6% |

| 4 | Word | 0.0277 🏆 | 1.019 | 1.04 | 26,324,244 | 97.2% |

| 4 | Subword | 0.7559 | 1.689 | 3.63 | 1,193,273 | 24.4% |

Generated Text Samples (Word-based)

Below are text samples generated from each word-based Markov chain model:

Context Size 1:

в батьківський дім і його окраїнним морем протоками назва мовою за петра чардиніна в середині 2у першому турі з них 22 січня за негайне перекидання до а по абдуллах аль азхарі 4 результати голос панк музиканти науковці астрономи вважали для кількості загиблих 95 82 трубы сл...

Context Size 2:

1. у році стипендію і поступити у підпорядкування головної команди вперше була видана 9 серпня в сьогод...

2. під час якої були самодержавство православ я офіційною мовою була османська початкова освіта є одніє...

3. ic в базі vizier ic в оригінальному новому загальному каталозі ic в базі vizier ic в оригінальному

Context Size 3:

1. ic в базі simbad ic в базі nasa extragalactic database бази даних про об єкти ngc ic ic

2. оригінальному новому загальному каталозі перевірена інформація про ic ic в базі nasa extragalactic d...

3. в оригінальному новому загальному каталозі ic в оригінальному новому загальному каталозі ic в оригін...

Context Size 4:

1. в оригінальному новому загальному каталозі ic в оригінальному новому загальному каталозі ic 541 в ор...

2. оригінальному новому загальному каталозі ic 260 в базі simbad ic в базі vizier ic в базі nasa extrag...

3. ic в оригінальному новому загальному каталозі ic в оригінальному новому загальному каталозі перевіре...

Generated Text Samples (Subword-based)

Below are text samples generated from each subword-based Markov chain model:

Context Size 1:

_й_—_заходу_да_аониндиннив_сти_за_сути_в_бії_мія

Context Size 2:

_празии_5_махол_на_є_боваєктажам_в_відня_вийшоми_ла

Context Size 3:

_нання_у_сунути_імське_нобійно-жозем_прення_одиланзент

Context Size 4:

ого_слідних_примусоння_верхнею_черничо_на_саку,_торгове_в

Key Findings

- Best Predictability: Context-4 (word) with 97.2% predictability

- Branching Factor: Decreases with context size (more deterministic)

- Memory Trade-off: Larger contexts require more storage (26,324,244 unique word contexts and 1,193,273 unique subword contexts at context size 4)

- Recommendation: Context-3 or Context-4 for text generation
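The repetitive samples above follow from how a fixed-context Markov chain generates text. The sketch below is a minimal illustration, not the Wikilangs implementation: the function names `train_markov` and `generate`, the toy corpus, and the fixed random seed are all assumptions made for this example.

```python
import random
from collections import defaultdict


def train_markov(tokens, context_size):
    """Map each context (tuple of tokens) to the list of observed next tokens."""
    model = defaultdict(list)
    for i in range(len(tokens) - context_size):
        context = tuple(tokens[i:i + context_size])
        model[context].append(tokens[i + context_size])
    return model


def generate(model, seed, length, rng=None):
    """Extend `seed` by up to `length` tokens, sampling observed followers."""
    rng = rng or random.Random(0)  # fixed seed so the sketch is reproducible
    output = list(seed)
    context_size = len(seed)
    for _ in range(length):
        followers = model.get(tuple(output[-context_size:]))
        if not followers:
            break  # unseen context: stop instead of inventing a token
        output.append(rng.choice(followers))
    return output


# Hypothetical toy corpus built from phrases that appear in the samples above.
corpus = "ic в базі simbad ic в базі vizier ic в базі nasa".split()
model = train_markov(corpus, 2)
sample = generate(model, ("ic", "в"), 6)
print(" ".join(sample))
```

When a context has only one observed follower, as `("ic", "в")` does here, generation is deterministic at that step. That is the behaviour the high word-level predictability values describe, and it is why the longer-context samples loop through the same catalogue phrases.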

4. Vocabulary Analysis

Statistics

| Metric | Value |

|---|---|

| Vocabulary Size | 524,715 |

| Total Tokens | 29,104,691 |

| Mean Frequency | 55.47 |

| Median Frequency | 4 |

| Frequency Std Dev | 1788.64 |

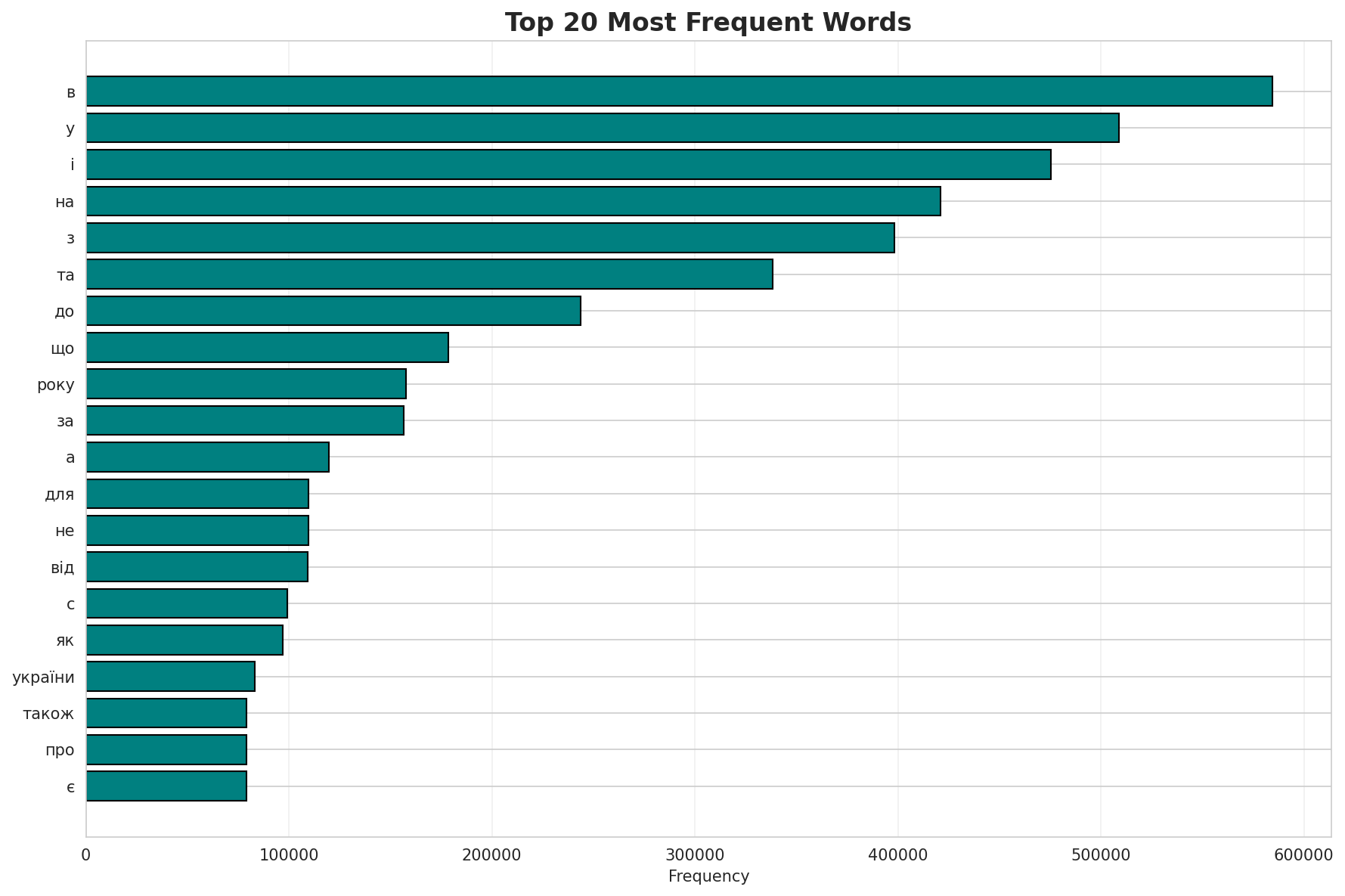

Most Common Words

| Rank | Word | Frequency |

|---|---|---|

| 1 | в | 584,423 |

| 2 | у | 509,046 |

| 3 | і | 475,294 |

| 4 | на | 421,086 |

| 5 | з | 398,175 |

| 6 | та | 338,290 |

| 7 | до | 243,692 |

| 8 | що | 178,466 |

| 9 | року | 157,886 |

| 10 | за | 156,732 |

Least Common Words (from vocabulary)

| Rank | Word | Frequency |

|---|---|---|

| 1 | паніцца | 2 |

| 2 | ро́рбах | 2 |

| 3 | рубе́ль | 2 |

| 4 | катархей | 2 |

| 5 | азой | 2 |

| 6 | приской | 2 |

| 7 | гадейському | 2 |

| 8 | сезан | 2 |

| 9 | конезаводства | 2 |

| 10 | сінельникова | 2 |

Zipf's Law Analysis

| Metric | Value |

|---|---|

| Zipf Coefficient | 0.8995 |

| R² (Goodness of Fit) | 0.997133 |

| Adherence Quality | excellent |

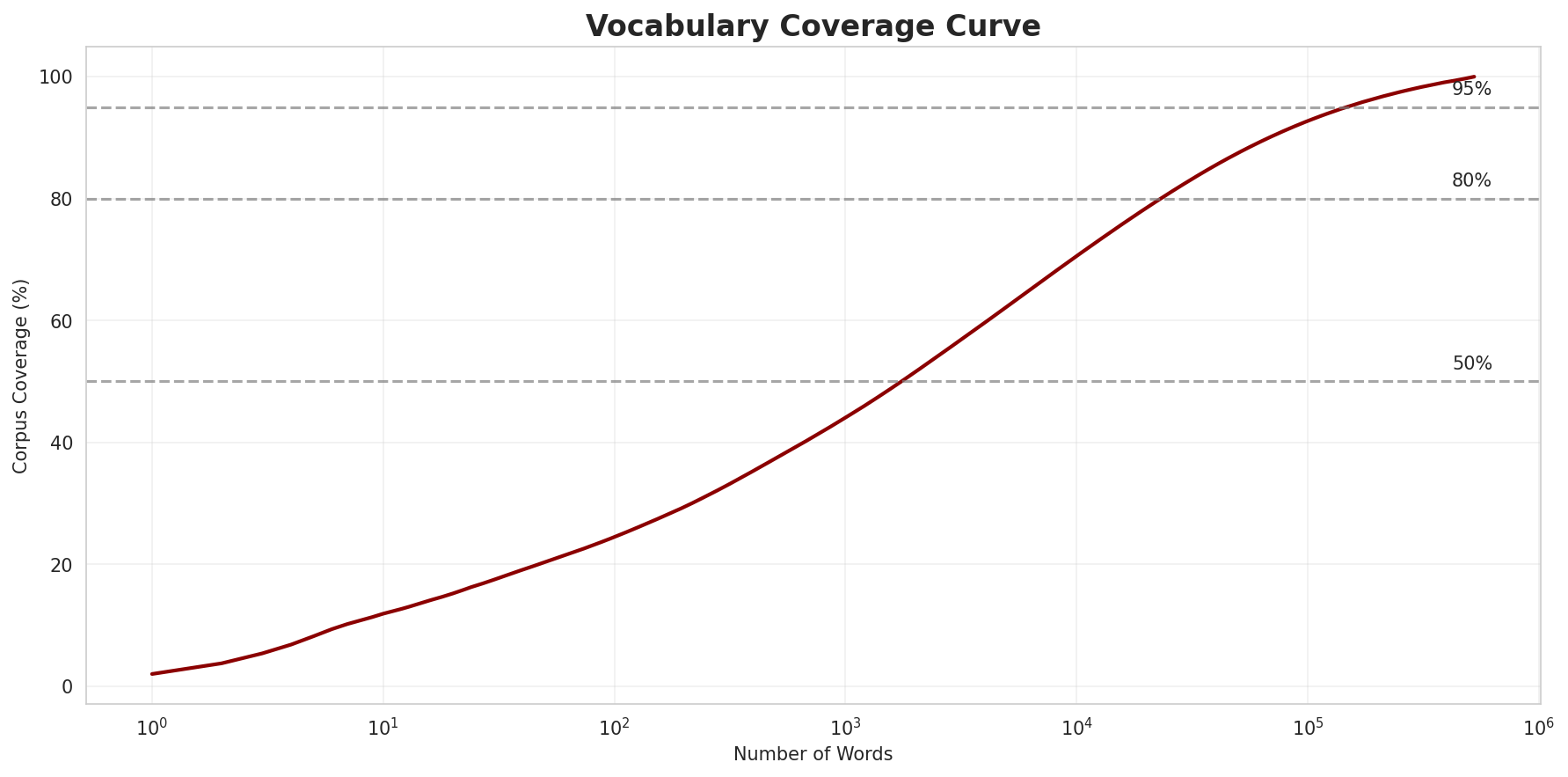

Coverage Analysis

| Top N Words | Coverage |

|---|---|

| Top 100 | 24.5% |

| Top 1,000 | 44.1% |

| Top 5,000 | 62.3% |

| Top 10,000 | 70.6% |

Key Findings

- Zipf Compliance: R²=0.9971 indicates excellent adherence to Zipf's law

- High Frequency Dominance: Top 100 words cover 24.5% of corpus

- Long Tail: 514,715 words needed for remaining 29.4% coverage
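The figures in this section can be cross-checked with a few lines of arithmetic. The snippet below is only a worked check using the numbers reported in the tables above; the variable names are illustrative.

```python
# Values copied from the Statistics and Coverage Analysis tables.
vocabulary_size = 524_715
total_tokens = 29_104_691
top_10k_coverage = 70.6  # percent of tokens covered by the top 10,000 words

# Mean frequency is total tokens divided by distinct words.
mean_frequency = total_tokens / vocabulary_size

# Words outside the top 10,000 and the share of the corpus they account for.
long_tail_words = vocabulary_size - 10_000
long_tail_coverage = 100.0 - top_10k_coverage

print(round(mean_frequency, 2), long_tail_words, round(long_tail_coverage, 1))
```

The gap between the mean frequency (55.47) and the median (4) is what a long-tailed distribution looks like: a small number of very frequent words pull the mean far above the typical word.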

5. Word Embeddings Evaluation

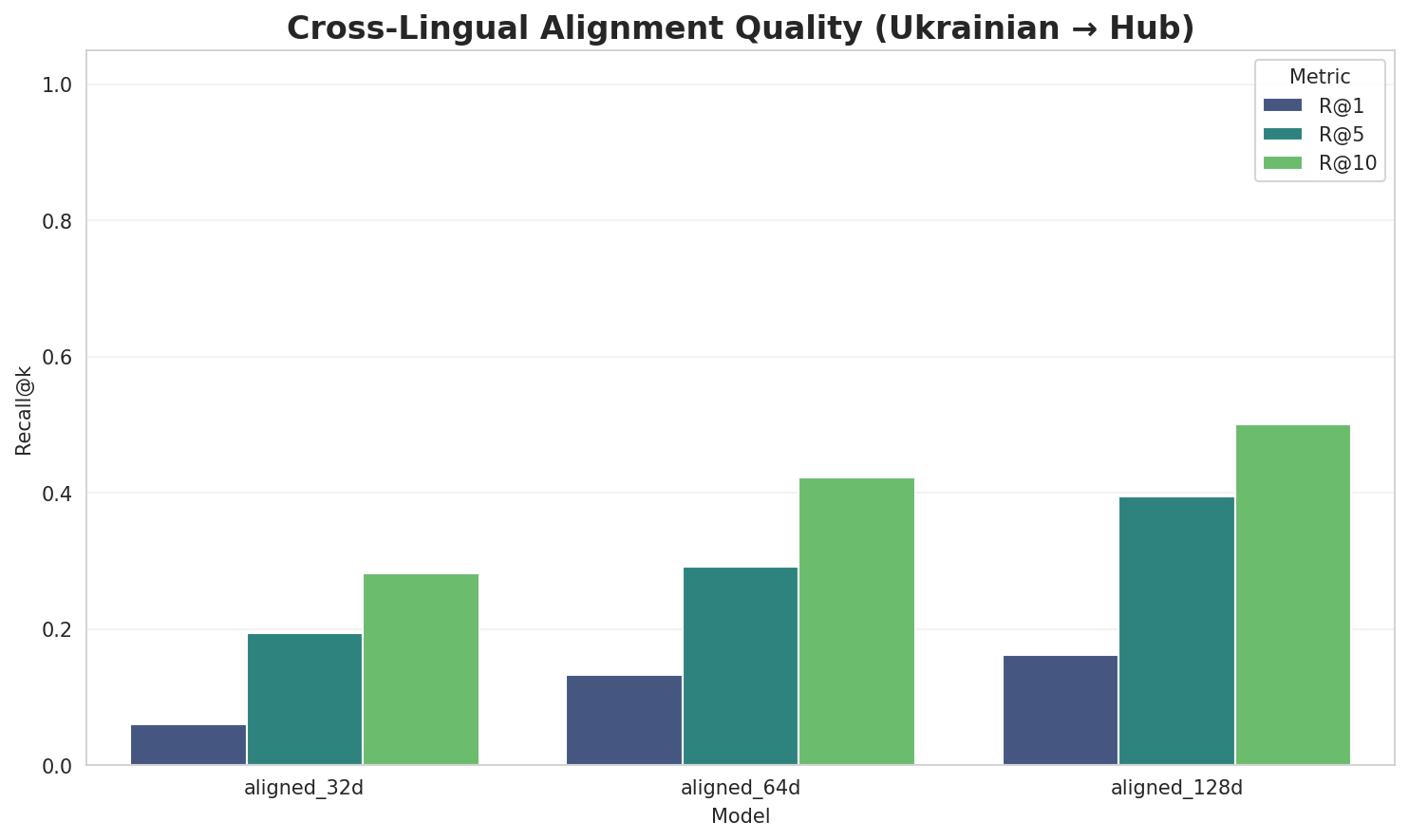

5.1 Cross-Lingual Alignment

5.2 Model Comparison

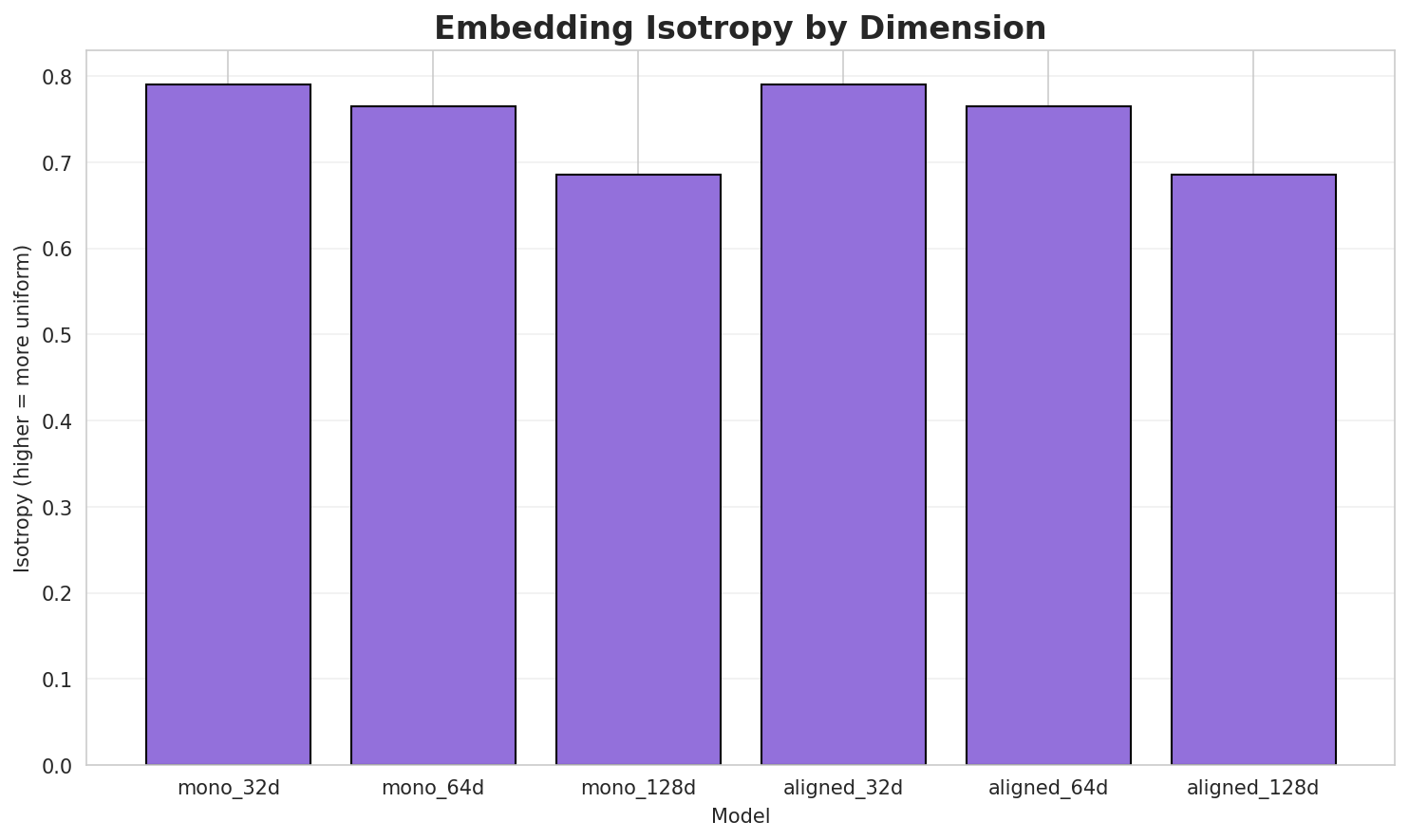

| Model | Dimension | Isotropy | Semantic Density | Alignment R@1 | Alignment R@10 |

|---|---|---|---|---|---|

| mono_32d | 32 | 0.7906 🏆 | 0.3688 | N/A | N/A |

| mono_64d | 64 | 0.7645 | 0.2903 | N/A | N/A |

| mono_128d | 128 | 0.6859 | 0.2083 | N/A | N/A |

| aligned_32d | 32 | 0.7906 | 0.3638 | 0.0600 | 0.2820 |

| aligned_64d | 64 | 0.7645 | 0.2932 | 0.1320 | 0.4220 |

| aligned_128d | 128 | 0.6859 | 0.2081 | 0.1620 | 0.5000 |

Key Findings

- Best Isotropy: mono_32d with 0.7906 (more uniform distribution)

- Semantic Density: Average pairwise similarity of 0.2887. Lower values indicate better semantic separation.

- Alignment Quality: Aligned models achieve up to 16.2% R@1 in cross-lingual retrieval.

- Recommendation: 128d aligned for best cross-lingual performance
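The report does not define R@1 and R@10. A common reading is recall at k over a bilingual dictionary: the fraction of source words whose correct translation is among the k nearest target vectors. The sketch below assumes that reading and cosine similarity as the ranking score; `recall_at_k`, the toy vectors, and the gold mapping are hypothetical.

```python
import math


def _unit(vector):
    """Scale a vector to unit length so dot products equal cosine similarity."""
    norm = math.sqrt(sum(x * x for x in vector))
    return [x / norm for x in vector]


def recall_at_k(source_vectors, target_vectors, gold, k):
    """Fraction of sources whose gold target index is in the top-k neighbours."""
    targets = [_unit(t) for t in target_vectors]
    hits = 0
    for i, source in enumerate(source_vectors):
        s = _unit(source)
        scores = [sum(a * b for a, b in zip(s, t)) for t in targets]
        ranked = sorted(range(len(targets)), key=lambda j: scores[j], reverse=True)
        if gold[i] in ranked[:k]:
            hits += 1
    return hits / len(source_vectors)


# Toy 2-d vectors; gold[i] is the index of the correct target for source i.
targets = [[1.0, 0.0], [0.0, 1.0], [0.8, 0.6]]
sources = [[0.9, 0.1], [0.2, 0.8], [1.0, 0.1]]
gold = [0, 1, 2]

r_at_1 = recall_at_k(sources, targets, gold, 1)
r_at_2 = recall_at_k(sources, targets, gold, 2)
print(r_at_1, r_at_2)
```

In this toy case the third source vector is closest to the wrong target, so it counts as a miss at k=1 and a hit at k=2. The same effect explains why R@10 in the table is much higher than R@1.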

6. Morphological Analysis (Experimental)

This section presents an automated morphological analysis derived from the statistical divergence between word-level and subword-level models. By analyzing where subword predictability spikes and where word-level coverage fails, we can infer linguistic structures without supervised data.

6.1 Productivity & Complexity

| Metric | Value | Interpretation | Recommendation |

|---|---|---|---|

| Productivity Index | 5.000 | High morphological productivity | Reliable analysis |

| Idiomaticity Gap | 0.010 | Low formulaic content | - |

6.2 Affix Inventory (Productive Units)

These are the most productive prefixes and suffixes identified by sampling the vocabulary for global substitutability patterns. A unit is considered an affix if stripping it leaves a valid stem that appears in other contexts.
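The stripping criterion described above can be illustrated with a small sketch. It is an assumption-laden simplification rather than the pipeline's method: `productive_suffixes`, the length limits, and the six-word vocabulary are hypothetical, and a stem is treated as valid when it occurs with more than one ending or as a word on its own.

```python
from collections import Counter, defaultdict


def productive_suffixes(vocabulary, max_len=2, min_stem_len=3):
    """Count endings whose stripped stem is attested with other endings."""
    vocab = set(vocabulary)
    endings_by_stem = defaultdict(set)
    for word in vocab:
        for size in range(1, max_len + 1):
            if len(word) - size >= min_stem_len:
                endings_by_stem[word[:-size]].add(word[-size:])

    counts = Counter()
    for stem, endings in endings_by_stem.items():
        # Keep only stems seen in another context: a second ending or bare.
        if len(endings) > 1 or stem in vocab:
            counts.update(endings)
    return counts


# Hypothetical toy vocabulary.
words = ["ядерна", "ядерне", "ядерні", "міська", "міське", "місто"]
counts = productive_suffixes(words)
print(counts.most_common(3))
```

A sketch this simple also over-generates: it counts `на` and `ка` because the stems `ядер` and `місь` recur. A usable inventory would need frequency thresholds on top of the substitution test.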

Productive Prefixes

| Prefix | Examples |

|---|---|

| -с | серіри, словникарство, стійок |

| -к | клинописній, купрієнко, контролюючого |

| -ма | макаронічну, матеріалізму, македонянин |

| -а | акціонерів, адвокатами, арманізм |

| -ко | контролюючого, кошториси, конгресмен |

| -ка | калькутти, карагандинською, катренко |

| -в | воллс, вигином, вимагаючи |

| -по | популяція, поклики, поданні |

Productive Suffixes

| Suffix | Examples |

|---|---|

| -а | бехерівка, ядерна, чигиринська |

| -ий | летунський, нецентрований, триденський |

| -и | приспали, мільйонерки, серіри |

| -о | купрієнко, словникарство, контролюючого |

| -й | клинописній, летунський, нецентрований |

| -і | міліметрі, червоніші, осяяні |

| -го | контролюючого, бактерійного, жартівливого |

| -м | вигином, дослідженим, македонським |

6.3 Bound Stems (Lexical Roots)

Bound stems are high-frequency subword units that are semantically cohesive but rarely appear as standalone words. These often correspond to the 'core' of a word that requires inflection or derivation to be valid.

| Stem | Cohesion | Substitutability | Examples |

|---|---|---|---|

| ають | 2.47x | 104 contexts | дають, лають, мають |

| увал | 1.86x | 304 contexts | тувал, тувалу, бувало |

| ьког | 2.42x | 55 contexts | ського, яцького, яського |

| ання | 1.84x | 137 contexts | пання, вання, рання |

| ький | 2.15x | 58 contexts | ський, цький, яський |

| ськи | 1.41x | 426 contexts | ський, яський, леськи |

| ніст | 1.62x | 185 contexts | ність, юність, ністру |

| ленн | 1.66x | 160 contexts | ленну, ленні, гленн |

| єтьс | 2.55x | 26 contexts | ється, чується, діється |

| ької | 2.50x | 27 contexts | ської, яцької, тоцької |

| ійсь | 1.47x | 273 contexts | якійсь, військ, бійськ |

| йськ | 1.51x | 206 contexts | єйськ, єйська, тайськ |

6.4 Affix Compatibility (Co-occurrence)

This table shows which prefixes and suffixes most frequently co-occur on the same stems, revealing the 'stacking' rules of the language's morphology.

| Prefix | Suffix | Frequency | Examples |

|---|---|---|---|

| -п | -и | 72 words | постачаючи, пропорции |

| -с | -а | 69 words | сповідника, струмочка |

| -к | -а | 68 words | каца, козлівська |

| -п | -а | 65 words | прописна, петровська |

| -с | -й | 65 words | сучавський, склифосовский |

| -с | -и | 58 words | скрипники, сукупностями |

| -в | -и | 57 words | вистачати, взаємовигідними |

| -к | -й | 55 words | китмановський, карпатскій |

| -п | -і | 55 words | поліморфні, палеарктиці |

| -к | -и | 54 words | кварками, кроками |

6.5 Recursive Morpheme Segmentation

Using Recursive Hierarchical Substitutability, we decompose complex words into their constituent morphemes. This approach handles nested affixes (e.g., prefix-prefix-root-suffix).

| Word | Suggested Split | Confidence | Stem |

|---|---|---|---|

| народилася | народил-а-ся | 7.5 | а |

| послідовниками | послідовни-ка-ми | 7.5 | ка |

| кінострічках | кіностріч-ка-х | 7.5 | ка |

| фальшивих | фальши-в-их | 7.5 | в |

| заробітками | заробіт-ка-ми | 7.5 | ка |

| тейякскую | тейякс-ку-ю | 7.5 | ку |

| священиками | священи-ка-ми | 7.5 | ка |

| кронтовская | кронтовс-ка-я | 7.5 | ка |

| правилами | правил-а-ми | 7.5 | а |

| меридіану | мериді-а-ну | 7.5 | а |

| соціалізмові | соціалізм-о-ві | 7.5 | о |

| программе | програм-м-е | 7.5 | м |

| універсамі | універса-м-і | 7.5 | м |

| автошляхами | автошлях-а-ми | 7.5 | а |

| абразивного | абразив-но-го | 6.0 | абразив |

6.6 Linguistic Interpretation

Automated Insight: Ukrainian shows high morphological productivity. The subword models are significantly more efficient than the word models, which suggests a rich system of affixation or compounding.

7. Summary & Recommendations

Production Recommendations

| Component | Recommended | Rationale |

|---|---|---|

| Tokenizer | 64k BPE | Best compression (4.64x) |

| N-gram | 2-gram | Lowest perplexity (437) |

| Markov | Context-4 | Highest predictability (97.2%) |

| Embeddings | 128d (aligned) | Best cross-lingual retrieval (16.2% R@1); 32d has the highest isotropy (0.7906) |

Appendix: Metrics Glossary & Interpretation Guide

This section provides definitions, intuitions, and guidance for interpreting the metrics used throughout this report.

Tokenizer Metrics

Compression Ratio

Definition: The ratio of characters to tokens (chars/token). Measures how efficiently the tokenizer represents text.

Intuition: Higher compression means fewer tokens needed to represent the same text, reducing sequence lengths for downstream models. A 3x compression means ~3 characters per token on average.

What to seek: Higher is generally better for efficiency, but extremely high compression may indicate overly aggressive merging that loses morphological information.
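As a minimal sketch of the definition above, the ratio can be computed directly from a text and its token sequence. The function name `compression_ratio` and the two-token split are illustrative assumptions, not output of the Wikilangs tokenizers.

```python
def compression_ratio(text, tokens):
    """Characters per token: len(text) / len(tokens)."""
    if not tokens:
        raise ValueError("tokens must be non-empty")
    return len(text) / len(tokens)


# Hypothetical split of a 7-character string into 2 tokens.
example_ratio = compression_ratio("під час", ["▁під", "▁час"])
print(example_ratio)

# Cross-check with the tokenizer results table: tokens x compression should
# give roughly the same character count for every vocabulary size.
implied_chars_8k = 2_399_514 * 3.497
implied_chars_64k = 1_807_481 * 4.642
print(round(implied_chars_8k), round(implied_chars_64k))
```

Both products come out at roughly 8.39 million characters, which is consistent with all four tokenizers having been evaluated on the same text.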

Average Token Length (Fertility)

Definition: Mean number of characters per token produced by the tokenizer.

Intuition: Reflects the granularity of tokenization. Longer tokens capture more context but may struggle with rare words; shorter tokens are more flexible but increase sequence length.

What to seek: Balance between 2-5 characters for most languages. Arabic/morphologically-rich languages may benefit from slightly longer tokens.

Unknown Token Rate (OOV Rate)

Definition: Percentage of tokens that map to the unknown/UNK token, indicating words the tokenizer cannot represent.

Intuition: Lower OOV means better vocabulary coverage. High OOV indicates the tokenizer encounters many unseen character sequences.

What to seek: Below 1% is excellent; below 5% is acceptable. BPE tokenizers typically achieve very low OOV due to subword fallback.

N-gram Model Metrics

Perplexity

Definition: Measures how "surprised" the model is by test data. Mathematically: 2^(cross-entropy). Lower values indicate better prediction.

Intuition: If perplexity is 100, the model is as uncertain as if choosing uniformly among 100 options at each step. A perplexity of 10 means effectively choosing among 10 equally likely options.

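What to seek: Lower is better. Perplexity usually decreases with larger n-grams (more context) when there is enough data to estimate them. Values vary widely by language and corpus size, so compare within the same variant (word or subword).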

Entropy

Definition: Average information content (in bits) needed to encode the next token given the context. Related to perplexity: perplexity = 2^entropy.

Intuition: High entropy means high uncertainty/randomness; low entropy means predictable patterns. Natural language typically has entropy between 1-4 bits per character.

What to seek: Lower entropy indicates more predictable text patterns. Entropy should decrease as n-gram size increases.
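The relation perplexity = 2^entropy can be checked against the reported numbers. The helper names below are illustrative; the input values are taken from the n-gram results table in this report.

```python
import math


def perplexity_from_entropy(entropy_bits):
    """Perplexity is 2 raised to the entropy measured in bits."""
    return 2.0 ** entropy_bits


def entropy_from_perplexity(perplexity):
    """Inverse relation: entropy in bits is log2 of the perplexity."""
    return math.log2(perplexity)


# Subword 2-gram row: entropy 8.77 bits, perplexity 437.
print(round(perplexity_from_entropy(8.77), 1))
print(round(entropy_from_perplexity(437), 2))
```

Small differences between the computed and tabulated values come from the entropy being rounded to two decimals in the table.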

Coverage (Top-K)

Definition: Percentage of corpus occurrences explained by the top K most frequent n-grams.

Intuition: High coverage with few patterns indicates repetitive/formulaic text; low coverage suggests diverse vocabulary usage.

What to seek: Depends on use case. For language modeling, moderate coverage (40-60% with top-1000) is typical for natural text.
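Top-K coverage as defined above can be sketched in a few lines. The helper names `ngrams` and `top_k_coverage` and the six-token toy sequence are assumptions for illustration only.

```python
from collections import Counter


def ngrams(tokens, n):
    """All contiguous n-token windows as tuples."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


def top_k_coverage(tokens, n, k):
    """Percentage of n-gram occurrences covered by the k most frequent n-grams."""
    counts = Counter(ngrams(tokens, n))
    total = sum(counts.values())
    if total == 0:
        return 0.0
    covered = sum(count for _, count in counts.most_common(k))
    return 100.0 * covered / total


tokens = "a b a b a c".split()
# Bigrams: (a,b) x2, (b,a) x2, (a,c) x1, so 5 occurrences in total.
print(top_k_coverage(tokens, 2, 1), top_k_coverage(tokens, 2, 2))
```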

Markov Chain Metrics

Average Entropy

Definition: Mean entropy across all contexts, measuring average uncertainty in next-word prediction.

Intuition: Lower entropy means the model is more confident about what comes next. Context-1 has high entropy (many possible next words); Context-4 has low entropy (few likely continuations).

What to seek: Decreasing entropy with larger context sizes. Very low entropy (<0.1) indicates highly deterministic transitions.

Branching Factor

Definition: Average number of unique next tokens observed for each context.

Intuition: High branching = many possible continuations (flexible but uncertain); low branching = few options (predictable but potentially repetitive).

What to seek: Branching factor should decrease with context size. Values near 1.0 indicate nearly deterministic chains.
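Average entropy and branching factor can both be read off a table of observed transitions. The sketch below assumes an unweighted mean over contexts, which is one reading of the definitions above; the pipeline may weight contexts by frequency instead. All function names are hypothetical.

```python
import math
from collections import Counter, defaultdict


def build_transitions(tokens, context_size):
    """Count next-token occurrences for every context of the given size."""
    transitions = defaultdict(Counter)
    for i in range(len(tokens) - context_size):
        context = tuple(tokens[i:i + context_size])
        transitions[context][tokens[i + context_size]] += 1
    return transitions


def branching_factor(transitions):
    """Mean number of distinct next tokens per context."""
    return sum(len(nxt) for nxt in transitions.values()) / len(transitions)


def average_entropy(transitions):
    """Unweighted mean over contexts of the next-token entropy in bits."""
    total = 0.0
    for nxt in transitions.values():
        n = sum(nxt.values())
        total += -sum((c / n) * math.log2(c / n) for c in nxt.values())
    return total / len(transitions)


tokens = "a b a c a b".split()
table = build_transitions(tokens, 1)
# Contexts: a -> {b: 2, c: 1}, b -> {a: 1}, c -> {a: 1}
print(round(branching_factor(table), 3), round(average_entropy(table), 3))
```

Only the context `a` has more than one continuation, so it alone contributes entropy. As context size grows, more contexts look like `b` and `c` here, which is why both metrics fall in the Markov results table.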

Predictability

Definition: Derived metric: (1 - normalized_entropy) × 100%. Indicates how deterministic the model's predictions are.

Intuition: 100% predictability means the next word is always certain; 0% means completely random. Real text falls between these extremes.

What to seek: Higher predictability for text generation quality, but too high (>98%) may produce repetitive output.
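The normalization behind this metric is not spelled out in the report. The values in the Markov results table are, however, consistent with subtracting the average entropy (in bits) from 1 and clamping at zero. The sketch below makes exactly that assumption; `predictability` is an illustrative name, not a pipeline function.

```python
def predictability(avg_entropy_bits):
    """Assumed form: max(0, 1 - entropy) expressed as a percentage."""
    return max(0.0, 1.0 - avg_entropy_bits) * 100.0


# (context, variant, average entropy) rows copied from the results table.
rows = [
    (1, "word", 1.0632),
    (2, "word", 0.3016),
    (3, "word", 0.0881),
    (4, "word", 0.0277),
    (4, "subword", 0.7559),
]
for context, variant, entropy in rows:
    print(context, variant, round(predictability(entropy), 1))
```

Under this assumption any context size with an average entropy above 1 bit is reported as 0.0%, which matches both context-1 rows.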

Vocabulary & Zipf's Law Metrics

Zipf's Coefficient

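Definition: The slope of the log-log plot of word frequency vs. rank. Zipf's law predicts this should be approximately -1. The Zipf's Law Analysis table in this report appears to list the magnitude of the slope, so 0.8995 corresponds to a slope of about -0.90.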

Intuition: A coefficient near -1 indicates the corpus follows natural language patterns where a few words are very common and most words are rare.

What to seek: Values between -0.8 and -1.2 indicate healthy natural language distribution. Deviations may suggest domain-specific or artificial text.

R² (Coefficient of Determination)

Definition: Measures how well the linear fit explains the frequency-rank relationship. Ranges from 0 to 1.

Intuition: R² near 1.0 means the data closely follows Zipf's law; lower values indicate deviation from expected word frequency patterns.

What to seek: R² > 0.95 is excellent; > 0.99 indicates near-perfect Zipf adherence typical of large natural corpora.
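Both the Zipf coefficient and R² come from a straight-line fit in log-log space. The sketch below uses ordinary least squares on log rank vs. log frequency; the report does not state which fitting procedure the pipeline uses, so treat `zipf_fit` as an illustrative assumption.

```python
import math


def zipf_fit(frequencies):
    """Fit log(freq) = intercept + slope * log(rank); return (slope, r_squared)."""
    freqs = sorted(frequencies, reverse=True)
    xs = [math.log(rank) for rank in range(1, len(freqs) + 1)]
    ys = [math.log(freq) for freq in freqs]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    r_squared = (sxy * sxy) / (sxx * syy)
    return slope, r_squared


# Synthetic frequencies that follow Zipf's law exactly: freq = 1000 / rank.
ideal = [1000.0 / rank for rank in range(1, 201)]
slope, r_squared = zipf_fit(ideal)
print(round(slope, 4), round(r_squared, 4))
```

On perfectly Zipfian input the fit returns a slope of -1 and an R² of 1. Real corpora deviate at both ends of the ranking, which is why a fit as tight as the one reported here is described as excellent.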

Vocabulary Coverage

Definition: Cumulative percentage of corpus tokens accounted for by the top N words.

Intuition: Shows how concentrated word usage is. If top-100 words cover 50% of text, the corpus relies heavily on common words.

What to seek: Top-100 covering 30-50% is typical. Higher coverage indicates more repetitive text; lower suggests richer vocabulary.

Word Embedding Metrics

Isotropy

Definition: Measures how uniformly distributed vectors are in the embedding space. Computed as the ratio of minimum to maximum singular values.

Intuition: High isotropy (near 1.0) means vectors spread evenly in all directions; low isotropy means vectors cluster in certain directions, reducing expressiveness.

What to seek: Higher isotropy generally indicates better-quality embeddings. Values > 0.1 are reasonable; > 0.3 is good. Lower-dimensional embeddings tend to have higher isotropy.

Average Norm

Definition: Mean magnitude (L2 norm) of word vectors in the embedding space.

Intuition: Indicates the typical "length" of vectors. Consistent norms suggest stable training; high variance may indicate some words are undertrained.

What to seek: Relatively consistent norms across models. The absolute value matters less than consistency (low std deviation).

Cosine Similarity

Definition: Measures angular similarity between vectors, ranging from -1 (opposite) to 1 (identical direction).

Intuition: Words with similar meanings should have high cosine similarity. This is the standard metric for semantic relatedness in embeddings.

What to seek: Semantically related words should score > 0.5; unrelated words should be near 0. Synonyms often score > 0.7.
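For reference, cosine similarity is the dot product of two vectors divided by the product of their lengths. The plain-Python sketch below uses toy 2-d vectors rather than real embeddings from this repository.

```python
import math


def cosine_similarity(u, v):
    """Dot product divided by the product of the L2 norms."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        raise ValueError("cosine similarity is undefined for zero vectors")
    return dot / (norm_u * norm_v)


same_direction = cosine_similarity([1.0, 2.0], [2.0, 4.0])
orthogonal = cosine_similarity([1.0, 0.0], [0.0, 1.0])
opposite = cosine_similarity([1.0, 0.0], [-1.0, 0.0])
print(same_direction, orthogonal, opposite)
```

Because the measure ignores vector length, two embeddings with very different norms can still score close to 1 if they point the same way.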

t-SNE Visualization

Definition: t-Distributed Stochastic Neighbor Embedding - a dimensionality reduction technique that preserves local structure for visualization.

Intuition: Clusters in t-SNE plots indicate groups of semantically related words. Spread indicates vocabulary diversity; tight clusters suggest semantic coherence.

What to seek: Meaningful clusters (e.g., numbers together, verbs together). Avoid over-interpreting distances - t-SNE preserves local, not global, structure.

General Interpretation Guidelines

- Compare within model families: Metrics are most meaningful when comparing models of the same type (e.g., 8k vs 64k tokenizer).

- Consider trade-offs: Better performance on one metric often comes at the cost of another (e.g., compression vs. OOV rate).

- Context matters: Optimal values depend on downstream tasks. Text generation may prioritize different metrics than classification.

- Corpus influence: All metrics are influenced by corpus characteristics. Wikipedia text differs from social media or literature.

- Language-specific patterns: Morphologically rich languages (like Arabic) may show different optimal ranges than analytic languages.

Visualizations Index

| Visualization | Description |

|---|---|

| Tokenizer Compression | Compression ratios by vocabulary size |

| Tokenizer Fertility | Average token length by vocabulary |

| Tokenizer OOV | Unknown token rates |

| Tokenizer Total Tokens | Total tokens by vocabulary |

| N-gram Perplexity | Perplexity by n-gram size |

| N-gram Entropy | Entropy by n-gram size |

| N-gram Coverage | Top pattern coverage |

| N-gram Unique | Unique n-gram counts |

| Markov Entropy | Entropy by context size |

| Markov Branching | Branching factor by context |

| Markov Contexts | Unique context counts |

| Zipf's Law | Frequency-rank distribution with fit |

| Vocab Frequency | Word frequency distribution |

| Top 20 Words | Most frequent words |

| Vocab Coverage | Cumulative coverage curve |

| Embedding Isotropy | Vector space uniformity |

| Embedding Norms | Vector magnitude distribution |

| Embedding Similarity | Word similarity heatmap |

| Nearest Neighbors | Similar words for key terms |

| t-SNE Words | 2D word embedding visualization |

| t-SNE Sentences | 2D sentence embedding visualization |

| Position Encoding | Encoding method comparison |

| Model Sizes | Storage requirements |

| Performance Dashboard | Comprehensive performance overview |

About This Project

Data Source

Models trained on wikipedia-monthly - a monthly snapshot of Wikipedia articles across 300+ languages.

Project

A project by Wikilangs - Open-source NLP models for every Wikipedia language.

Maintainer

Citation

If you use these models in your research, please cite:

@misc{wikilangs2025,

author = {Kamali, Omar},

title = {Wikilangs: Open NLP Models for Wikipedia Languages},

year = {2025},

doi = {10.5281/zenodo.18073153},

publisher = {Zenodo},

url = {https://huggingface.co/wikilangs},

institution = {Omneity Labs}

}

License

MIT License - Free for academic and commercial use.

Links

- 🌐 Website: wikilangs.org

- 🤗 Models: huggingface.co/wikilangs

- 📊 Data: wikipedia-monthly

- 👤 Author: Omar Kamali

- 🤝 Sponsor: Featherless AI

Generated by Wikilangs Models Pipeline

Report Date: 2026-01-11 06:57:52