
How to use wikilangs/vls with fastText:

    from huggingface_hub import hf_hub_download
    import fasttext

    # Download model.bin from the Hub, then load it with fastText
    model = fasttext.load_model(hf_hub_download("wikilangs/vls", "model.bin"))


West Flemish - Wikilangs Models

Comprehensive Research Report & Full Ablation Study

This repository contains NLP models trained and evaluated by Wikilangs, specifically on West Flemish Wikipedia data. We analyze tokenizers, n-gram models, Markov chains, vocabulary statistics, and word embeddings.

📋 Repository Contents

Models & Assets

- Tokenizers (8k, 16k, 32k, 64k)

- N-gram models (2, 3, 4, 5-gram)

- Markov chains (context of 1, 2, 3, 4 and 5)

- Subword N-gram and Markov chains

- Embeddings in various sizes and dimensions (aligned and unaligned)

- Language Vocabulary

- Language Statistics

Analysis and Evaluation

- 1. Tokenizer Evaluation

- 2. N-gram Model Evaluation

- 3. Markov Chain Evaluation

- 4. Vocabulary Analysis

- 5. Word Embeddings Evaluation

- 6. Morphological Analysis (Experimental)

- 7. Summary & Recommendations

- Metrics Glossary

- Visualizations Index

1. Tokenizer Evaluation

Results

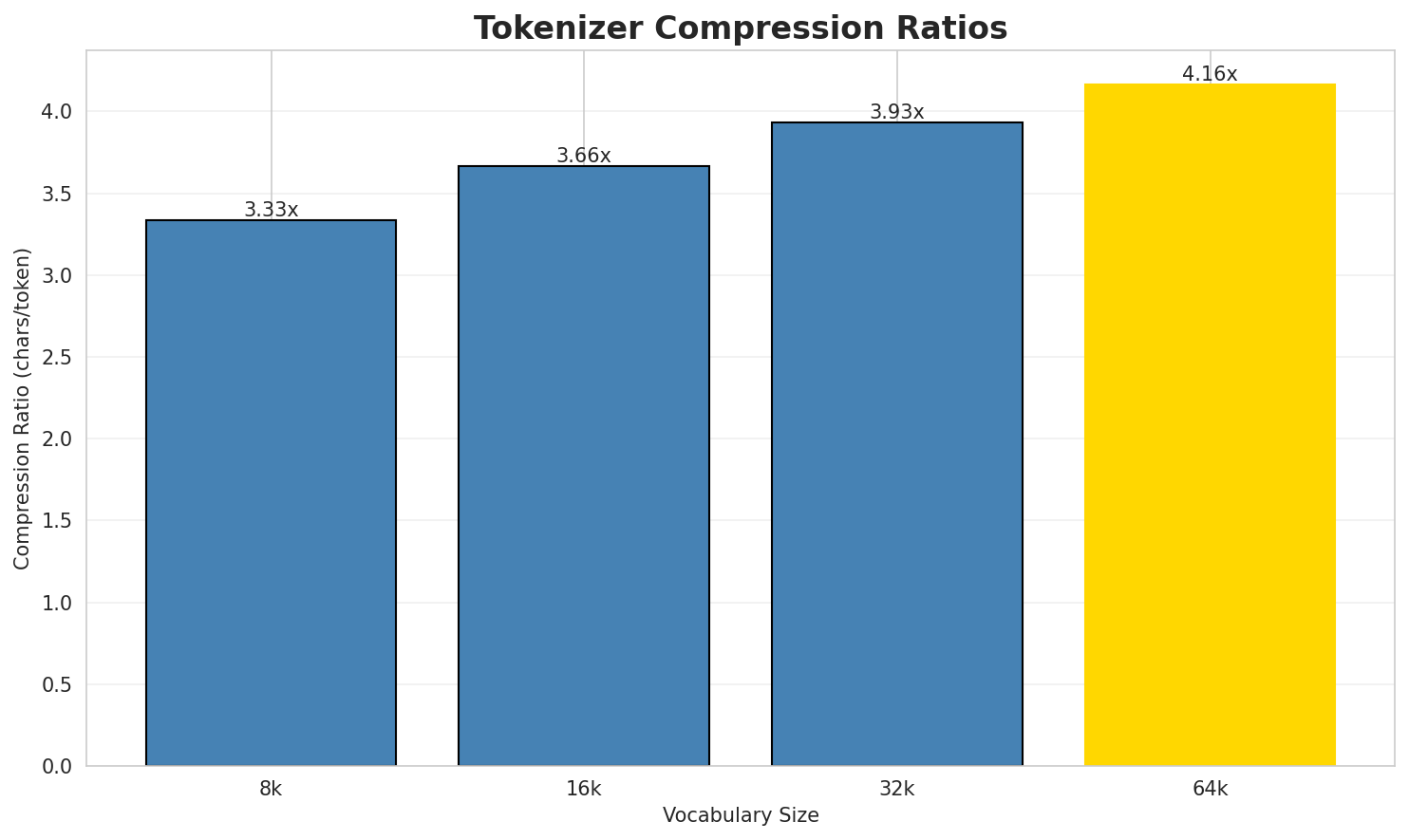

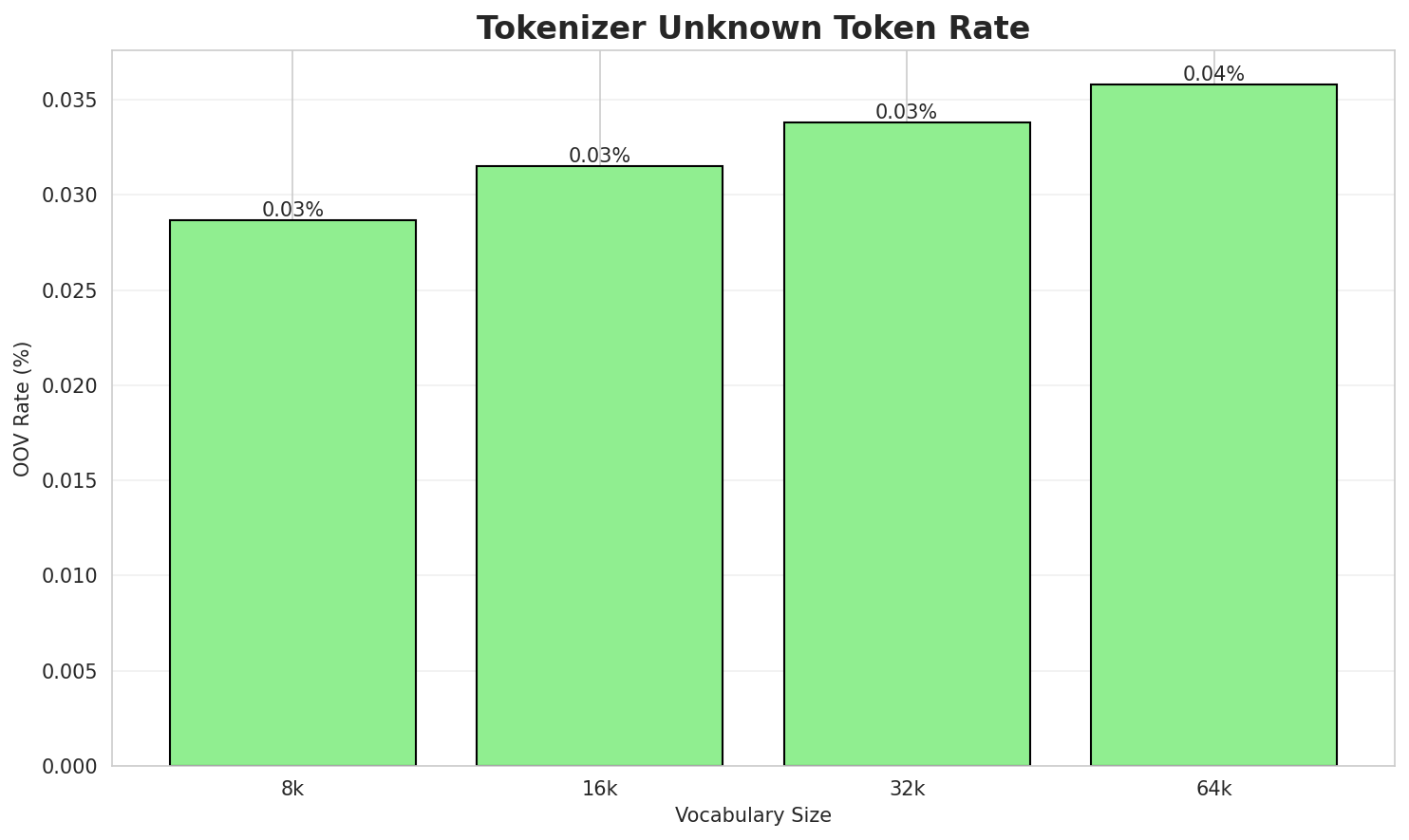

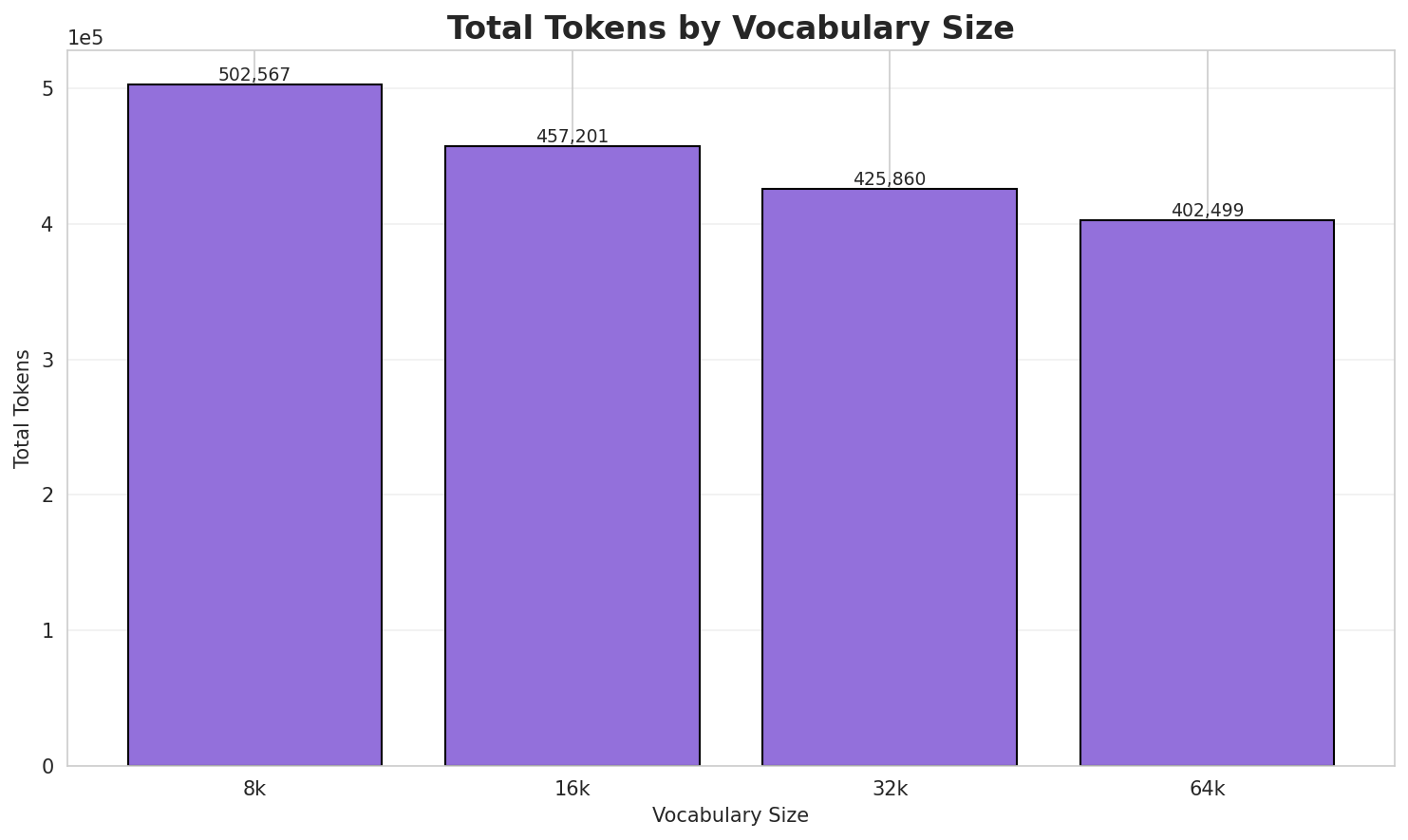

| Vocab Size | Compression | Avg Token Len | UNK Rate | Total Tokens |

|---|---|---|---|---|

| 8k | 3.334x | 3.34 | 0.0287% | 502,567 |

| 16k | 3.665x | 3.67 | 0.0315% | 457,201 |

| 32k | 3.934x | 3.94 | 0.0338% | 425,860 |

| 64k | 4.163x 🏆 | 4.17 | 0.0358% | 402,499 |
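As a quick consistency check on the table above: the glossary defines compression as characters per token, so multiplying each compression ratio by its total token count should give roughly the same character count for every vocabulary size, provided all four tokenizers were evaluated on the same text. The sketch below does that arithmetic. The implied character count is an inference from the reported figures, not a number stated by the pipeline.

```python
# Rows copied from the results table: (vocab size, compression, total tokens).
rows = [
    ("8k", 3.334, 502_567),
    ("16k", 3.665, 457_201),
    ("32k", 3.934, 425_860),
    ("64k", 4.163, 402_499),
]

# compression = characters / tokens, so characters ~= compression * tokens.
implied_chars = {name: ratio * tokens for name, ratio, tokens in rows}

for name, chars in implied_chars.items():
    print(f"{name}: ~{chars:,.0f} characters")

# Relative spread between the largest and smallest estimate.
low, high = min(implied_chars.values()), max(implied_chars.values())
spread = (high - low) / low
print(f"relative spread: {spread:.4%}")
```

All four estimates land near 1.68 million characters, which suggests the rows are mutually consistent and differ only by rounding of the compression ratio.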

Tokenization Examples

Below are sample sentences tokenized with each vocabulary size:

Sample 1: Achtntwientig is 't getal 28, e nateurlik getal achter zeevnetwientig en voorn n...

| Vocab | Tokens | Count |
|---|---|---|
| 8k | ▁a chtn tw ientig ▁is ▁' t ▁getal ▁ 2 ... (+18 more) | 28 |
| 16k | ▁a chtn tw ientig ▁is ▁' t ▁getal ▁ 2 ... (+18 more) | 28 |
| 32k | ▁a chtn twientig ▁is ▁' t ▁getal ▁ 2 8 ... (+13 more) | 23 |
| 64k | ▁achtn twientig ▁is ▁' t ▁getal ▁ 2 8 , ... (+12 more) | 22 |

Sample 2: de volksnoame van de gemêente Ôostrôzebeke e dêelgemêente van Stoan, zie: Rôzebe...

| Vocab | Tokens | Count |
|---|---|---|
| 8k | ▁de ▁volk sn oame ▁van ▁de ▁gemêente ▁ôostrôzebeke ▁e ▁dêelgemêente ... (+10 more) | 20 |
| 16k | ▁de ▁volk sn oame ▁van ▁de ▁gemêente ▁ôostrôzebeke ▁e ▁dêelgemêente ... (+10 more) | 20 |
| 32k | ▁de ▁volk snoame ▁van ▁de ▁gemêente ▁ôostrôzebeke ▁e ▁dêelgemêente ▁van ... (+8 more) | 18 |
| 64k | ▁de ▁volk snoame ▁van ▁de ▁gemêente ▁ôostrôzebeke ▁e ▁dêelgemêente ▁van ... (+8 more) | 18 |

Sample 3: Paltoga (Russisch: Палтога) is e dorp in Rusland in 't district Vytegorsky (obla...

| Vocab | Tokens | Count |
|---|---|---|
| 8k | ▁pal t og a ▁( russisch : ▁ п а ... (+37 more) | 47 |
| 16k | ▁pal t og a ▁( russisch : ▁ п а ... (+35 more) | 45 |
| 32k | ▁pal t oga ▁( russisch : ▁ п ал то ... (+29 more) | 39 |
| 64k | ▁palt oga ▁( russisch : ▁ п ал то г ... (+28 more) | 38 |

Key Findings

- Best Compression: 64k achieves 4.163x compression

- Lowest UNK Rate: 8k with 0.0287% unknown tokens

- Trade-off: Larger vocabularies improve compression but increase model size

- Recommendation: 32k vocabulary provides optimal balance for production use

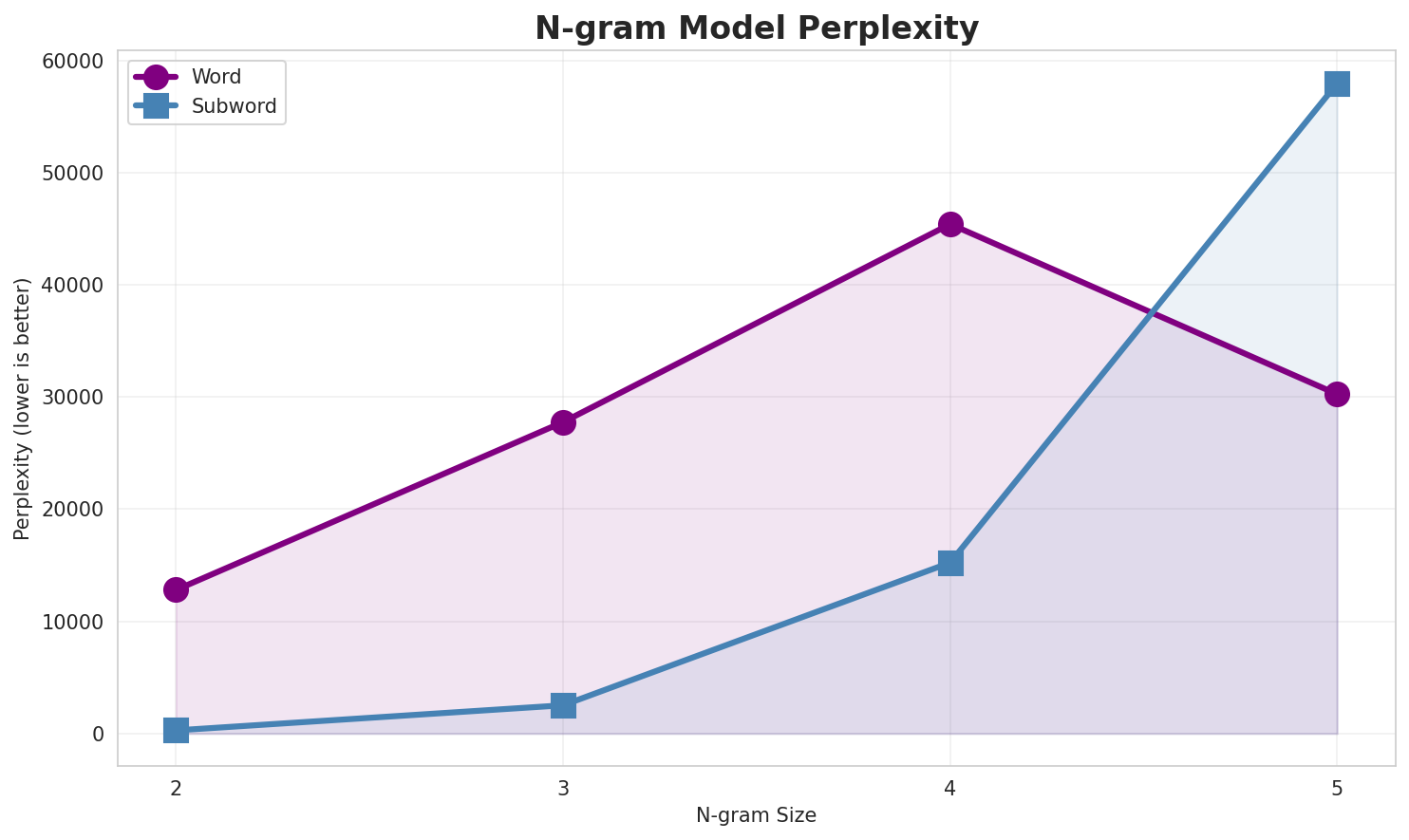

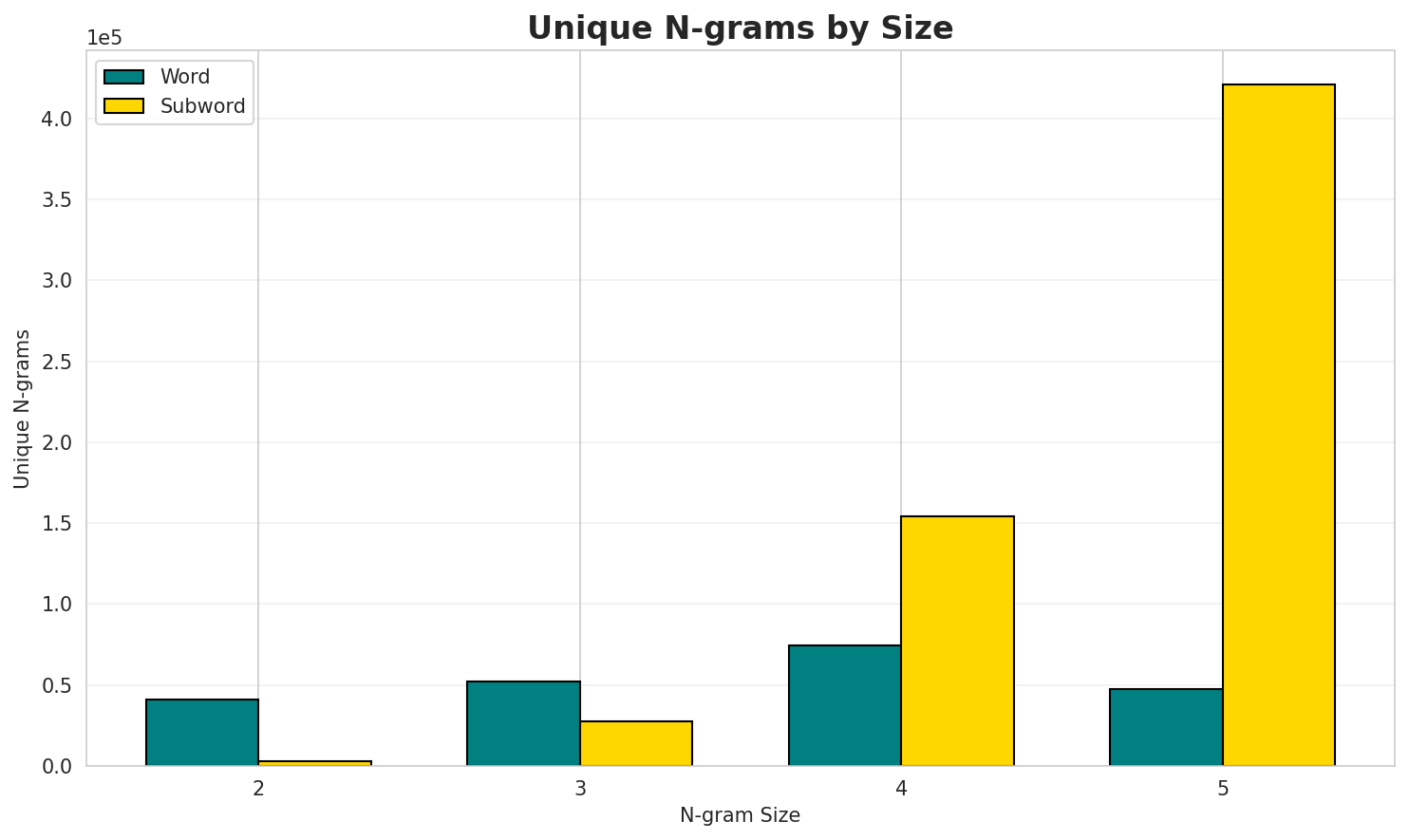

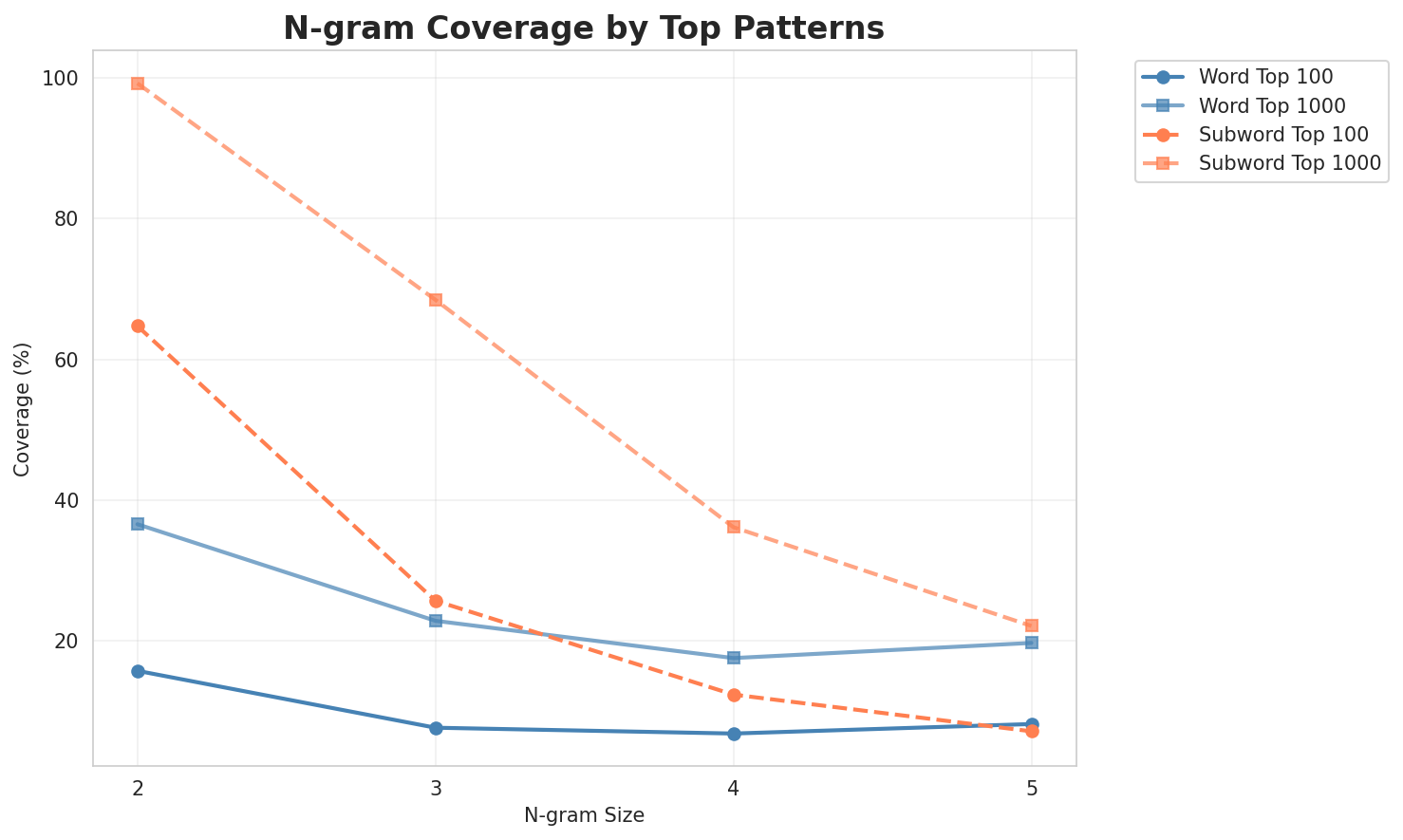

2. N-gram Model Evaluation

Results

| N-gram | Variant | Perplexity | Entropy | Unique N-grams | Top-100 Coverage | Top-1000 Coverage |

|---|---|---|---|---|---|---|

| 2-gram | Word | 12,804 | 13.64 | 41,132 | 15.7% | 36.6% |

| 2-gram | Subword | 282 🏆 | 8.14 | 3,241 | 64.7% | 99.2% |

| 3-gram | Word | 27,763 | 14.76 | 51,974 | 7.7% | 22.9% |

| 3-gram | Subword | 2,519 | 11.30 | 27,863 | 25.7% | 68.5% |

| 4-gram | Word | 45,411 | 15.47 | 74,505 | 6.8% | 17.6% |

| 4-gram | Subword | 15,236 | 13.90 | 154,373 | 12.4% | 36.1% |

| 5-gram | Word | 30,248 | 14.88 | 47,265 | 8.2% | 19.7% |

| 5-gram | Subword | 57,965 | 15.82 | 420,619 | 7.2% | 22.1% |

Top 5 N-grams by Size

2-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | van de | 15,489 |
| 2 | in de | 10,285 |
| 3 | in t | 6,874 |
| 4 | van t | 5,995 |
| 5 | en de | 3,723 |

3-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | joar in de | 850 |
| 2 | van t joar | 791 |
| 3 | bouwkundig erfgoed in | 765 |
| 4 | in west vloandern | 742 |
| 5 | t joar is | 714 |

4-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | t joar is t | 693 |
| 2 | eeuwe volgenst de christelikke | 526 |
| 3 | volgenst de christelikke joartellienge | 526 |
| 4 | noa bouwkundig erfgoed in | 354 |
| 5 | t ende van t | 337 |

5-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | eeuwe volgenst de christelikke joartellienge | 526 |
| 2 | t ende van t joar | 304 |
| 3 | volgenst de christelikke joartellienge gebeurtenissn | 292 |
| 4 | lyste van bouwkundig erfgoed in | 251 |
| 5 | toet t ende van t | 250 |

2-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | n _ | 399,169 |
| 2 | e _ | 395,658 |
| 3 | e r | 217,859 |
| 4 | e n | 214,189 |
| 5 | d e | 208,906 |

3-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | _ d e | 123,266 |
| 2 | d e _ | 116,073 |
| 3 | a n _ | 97,189 |
| 4 | e n _ | 96,860 |
| 5 | _ v a | 80,611 |

4-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | _ d e _ | 90,909 |
| 2 | _ v a n | 76,359 |
| 3 | v a n _ | 74,288 |
| 4 | _ i n _ | 52,878 |
| 5 | n _ d e | 48,858 |

5-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | _ v a n _ | 73,086 |
| 2 | n _ d e _ | 39,289 |
| 3 | a n _ d e | 22,613 |
| 4 | v a n _ d | 21,346 |
| 5 | e _ v a n | 19,923 |

Key Findings

- Best Perplexity: 2-gram (subword) with 282

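- Entropy Trend: Increases with n-gram size in this evaluation (subword: 8.14 bits for 2-grams, rising to 15.82 for 5-grams); word-level entropy peaks at 4-grams (15.47) and dips for 5-grams (14.88)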

- Coverage: Top-1000 coverage ranges from 17.6% (word 4-grams) to 99.2% (subword 2-grams); for subword 5-grams it is ~22%

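- Recommendation: By perplexity, the subword 2-gram model performs best on this corpus (282); 4-gram and 5-gram models capture longer context but show higher perplexity here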

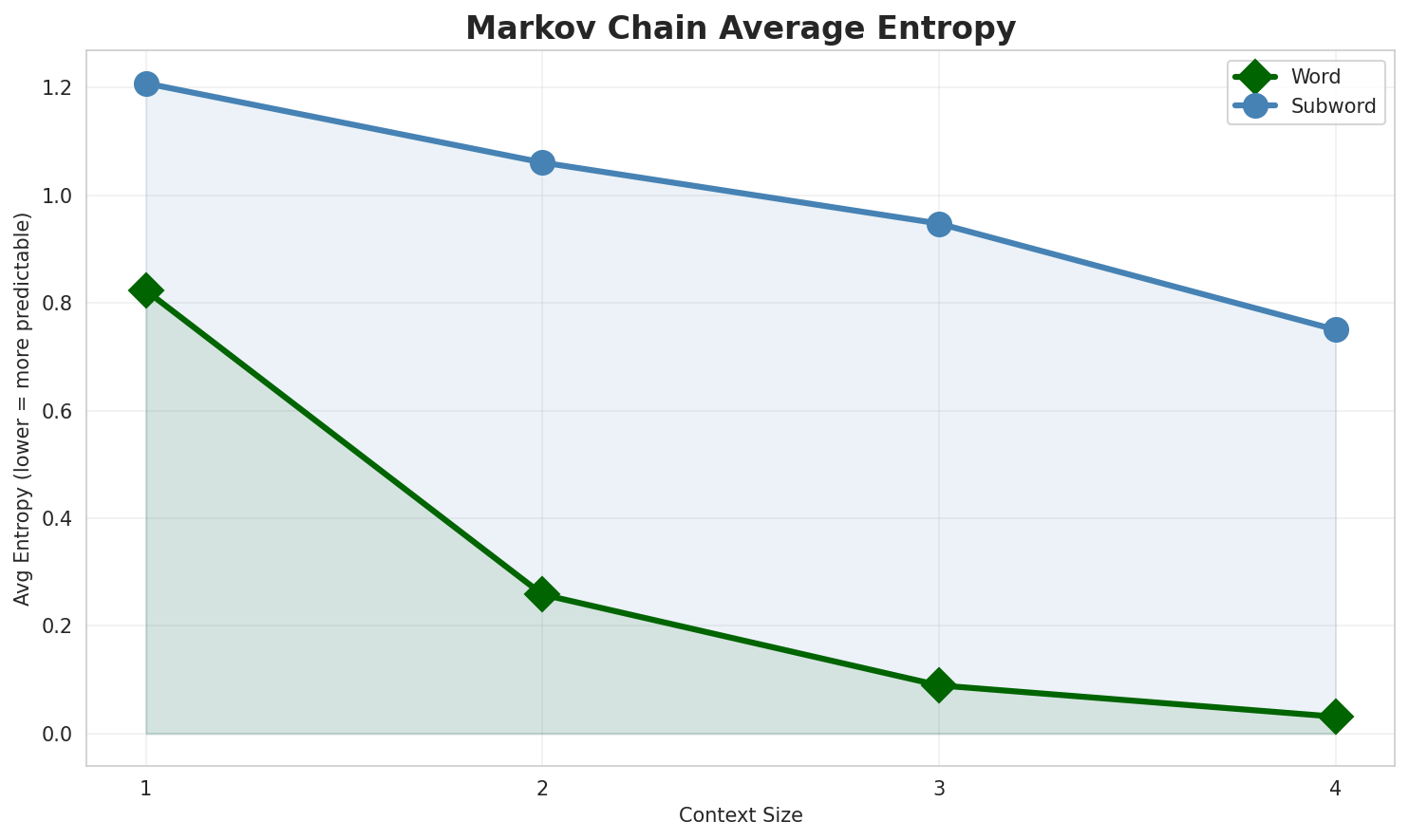

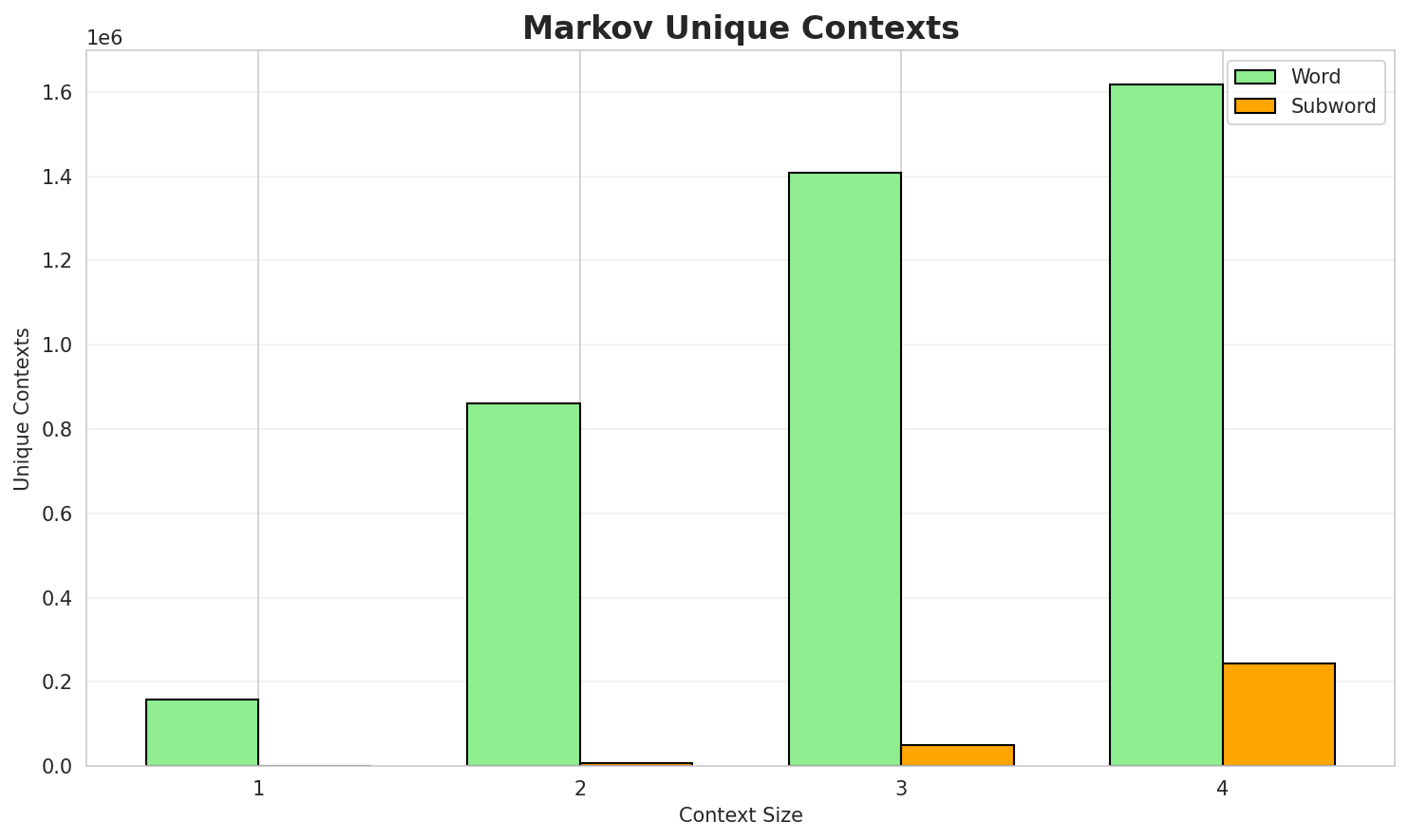

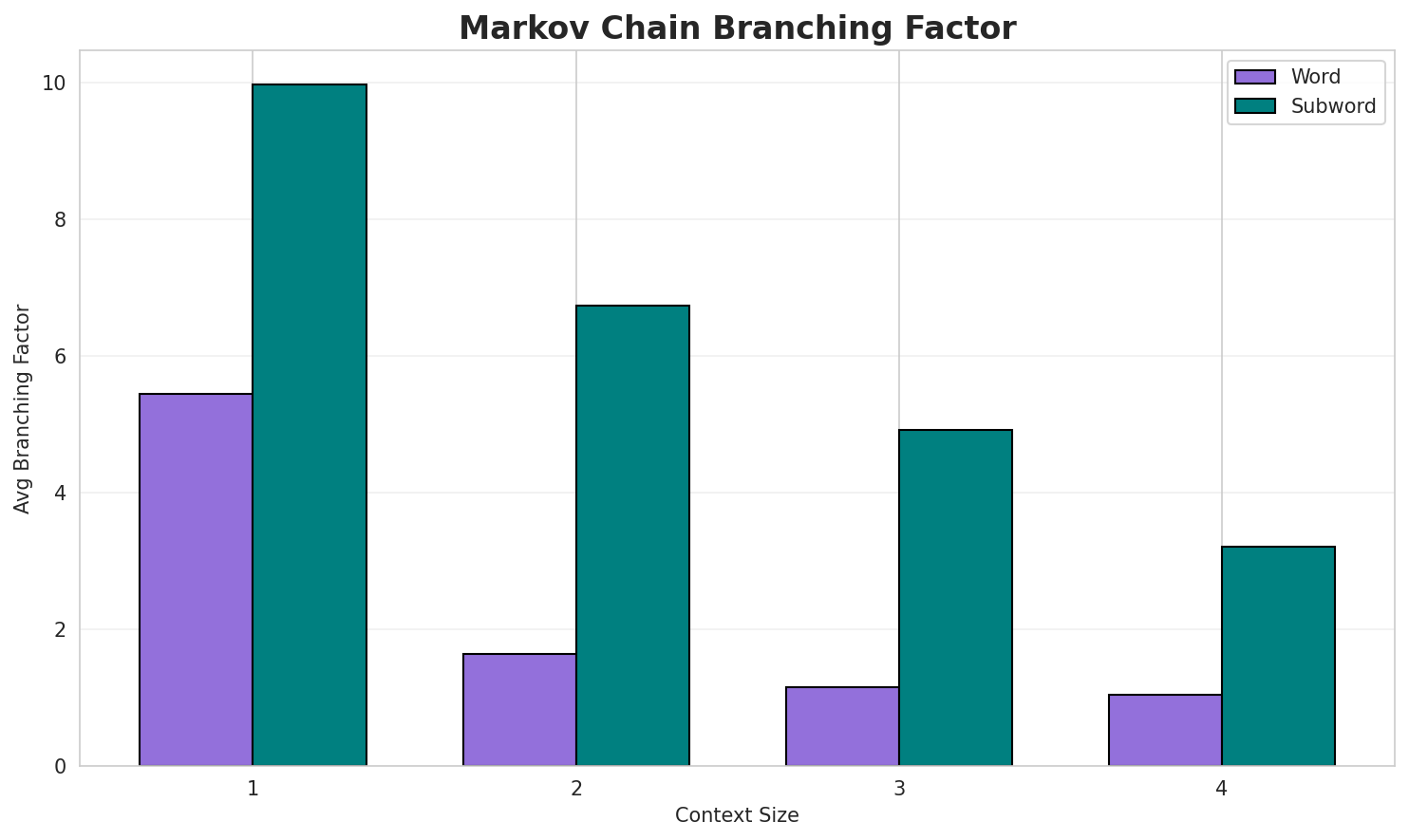

3. Markov Chain Evaluation

Results

| Context | Variant | Avg Entropy | Perplexity | Branching Factor | Unique Contexts | Predictability |

|---|---|---|---|---|---|---|

| 1 | Word | 0.8228 | 1.769 | 5.44 | 158,804 | 17.7% |

| 1 | Subword | 1.2080 | 2.310 | 9.96 | 735 | 0.0% |

| 2 | Word | 0.2583 | 1.196 | 1.64 | 860,998 | 74.2% |

| 2 | Subword | 1.0608 | 2.086 | 6.74 | 7,322 | 0.0% |

| 3 | Word | 0.0895 | 1.064 | 1.15 | 1,409,019 | 91.1% |

| 3 | Subword | 0.9474 | 1.928 | 4.92 | 49,306 | 5.3% |

| 4 | Word | 0.0313 🏆 | 1.022 | 1.05 | 1,616,997 | 96.9% |

| 4 | Subword | 0.7502 | 1.682 | 3.21 | 242,577 | 25.0% |

Generated Text Samples (Word-based)

Below are text samples generated from each word-based Markov chain model:

Context Size 1:

- de wyk van t nôordn gruujn dikkers in kontrast me 3 juli gin êen of mêercellig

- van yper wunt en nieuw ryk in de kustvlaktn groene bewegienge wordn ze egliek nie kost

- in ip t volgn nog 293 noa bouwkundig erfgoed bevern en mêer tyd toen ze van

Context Size 2:

- van de verênigde stoatn busschn

- in de dertiende êeuwe dus vès ipgedolvn gebied o den ôostkant van de verênigde stoatn en kanada

- in t ôostn an ciney in noamn in en je viel italië were binn de stad stroomde

Context Size 3:

- joar in de 13e of 14e êeuwe en van de 50 000 en 120 000 beschreevn sôortn varieern

- van t joar geboorn pontormo gabriel fahrenheit gustaaf flamen emiel lauwers bob dylan gestorvn jozef...

- bouwkundig erfgoed in tiegem in west vloandern t es eignlyk nen ouden arm van den aa t grenst

Context Size 4:

- t joar is t 80e joar in de 10e eeuwe volgenst de christelikke joartellienge mmxii is e schrikkeljoar...

- volgenst de christelikke joartellienge gebeurtenissn 25 april hertog jan zounder vrêes legt an d ips...

- eeuwe volgenst de christelikke joartellienge gebeurtenissn april 5 de west vlamsche coureur gaston r...

Generated Text Samples (Subword-based)

Below are text samples generated from each subword-based Markov chain model:

Context Size 1:

_scoe_taz,_we_vee,_scar-êli²_manndstoone_zogers_

Context Size 2:

n_'t_vroegroudt_ae_priens)_gië_e_serd_ipparem_moste

Context Size 3:

_de_vanasamuele_(>de_piegouwne_refeuan_beken_deel_rede

Context Size 4:

_de_schopinidad_er__van_mandsche_kenmevan_flandn_ip_ne_bu

Key Findings

- Best Predictability: Context-4 (word) with 96.9% predictability

- Branching Factor: Decreases with context size (more deterministic)

- Memory Trade-off: Larger contexts require more storage (at context size 4: 242,577 subword contexts and 1,616,997 word contexts)

- Recommendation: Context-3 or Context-4 for text generation

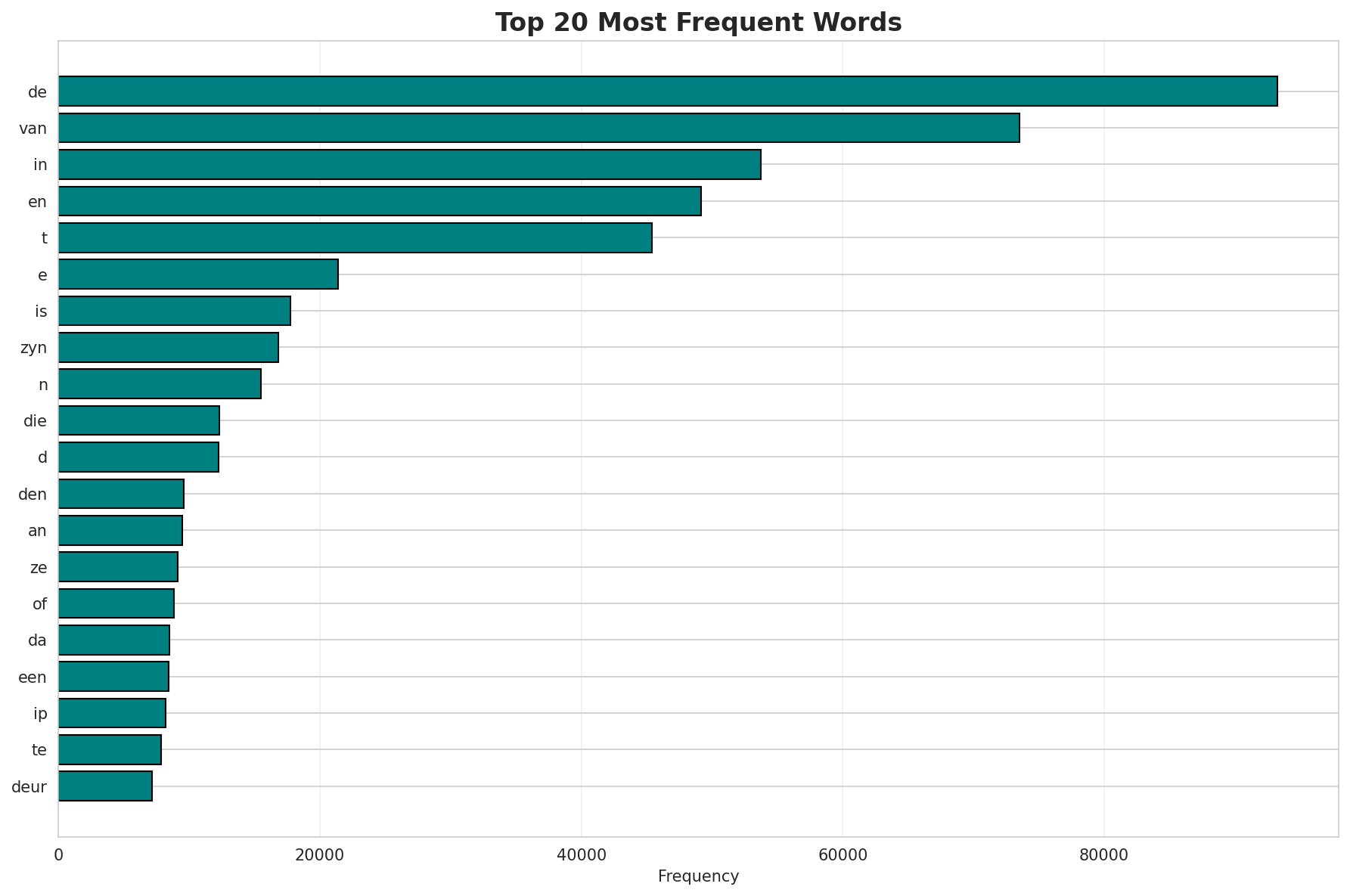

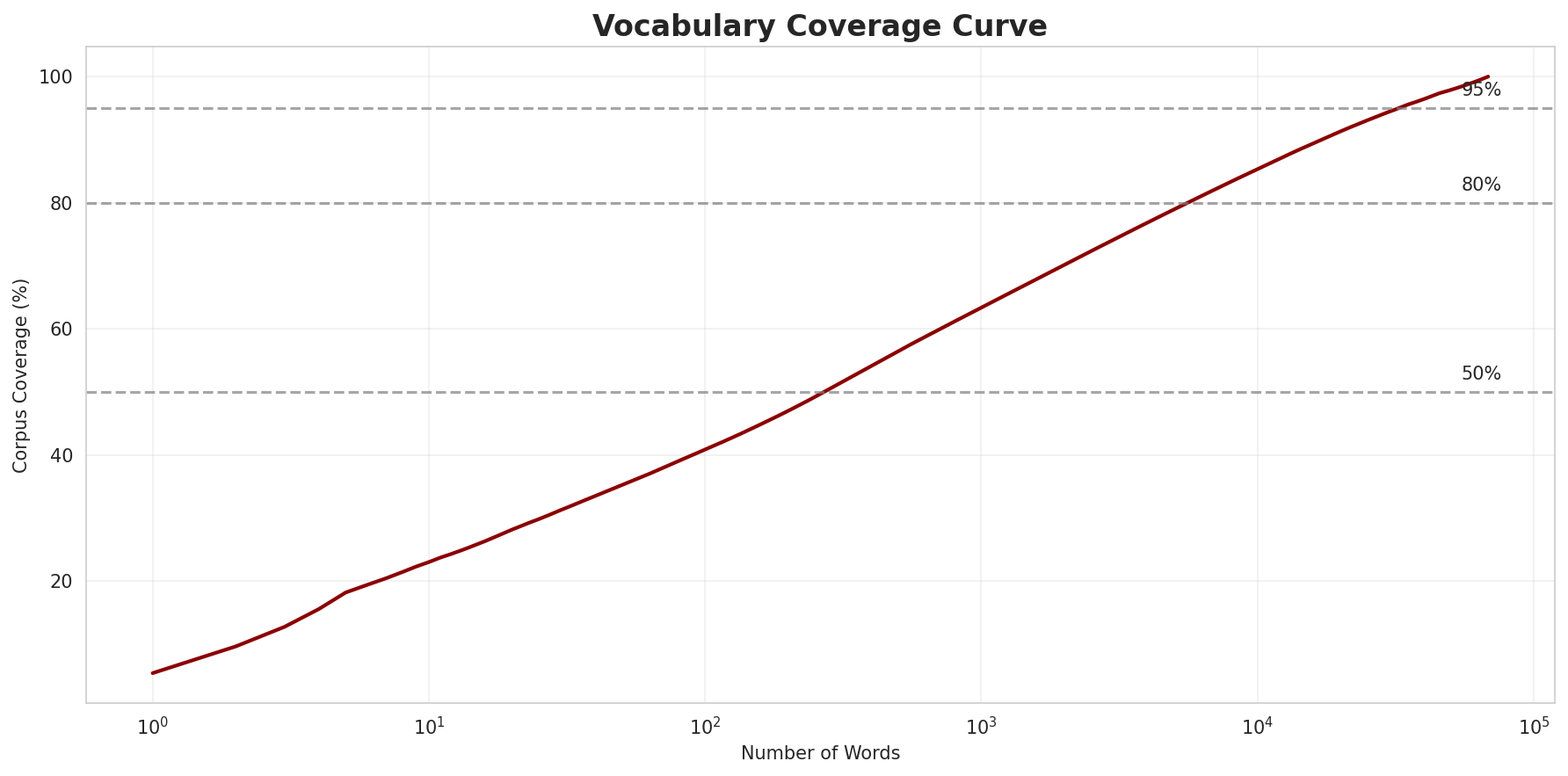

4. Vocabulary Analysis

Statistics

| Metric | Value |

|---|---|

| Vocabulary Size | 68,458 |

| Total Tokens | 1,735,026 |

| Mean Frequency | 25.34 |

| Median Frequency | 4 |

| Frequency Std Dev | 600.62 |

Most Common Words

| Rank | Word | Frequency |

|---|---|---|

| 1 | de | 93,287 |

| 2 | van | 73,544 |

| 3 | in | 53,708 |

| 4 | en | 49,180 |

| 5 | t | 45,426 |

| 6 | e | 21,400 |

| 7 | is | 17,745 |

| 8 | zyn | 16,831 |

| 9 | n | 15,475 |

| 10 | die | 12,301 |

Least Common Words (from vocabulary)

| Rank | Word | Frequency |

|---|---|---|

| 1 | myzeqe | 2 |

| 2 | seman | 2 |

| 3 | rumn | 2 |

| 4 | peshkopi | 2 |

| 5 | dibër | 2 |

| 6 | города | 2 |

| 7 | uytvoernde | 2 |

| 8 | stoatssecretoarisn | 2 |

| 9 | soamnstellinge | 2 |

| 10 | mph | 2 |

Zipf's Law Analysis

| Metric | Value |

|---|---|

| Zipf Coefficient | 1.0178 |

| R² (Goodness of Fit) | 0.998718 |

| Adherence Quality | excellent |

Coverage Analysis

| Top N Words | Coverage |

|---|---|

| Top 100 | 40.8% |

| Top 1,000 | 63.3% |

| Top 5,000 | 79.0% |

| Top 10,000 | 85.3% |

Key Findings

- Zipf Compliance: R²=0.9987 indicates excellent adherence to Zipf's law

- High Frequency Dominance: Top 100 words cover 40.8% of corpus

- Long Tail: The 58,458 words beyond the top 10,000 account for only the remaining 14.7% of coverage
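The derived figures in this section follow directly from the reported statistics. The short sketch below reproduces the mean frequency and the long-tail numbers; it uses only values from the tables above and does no new measurement.

```python
# Values copied from the Statistics and Coverage Analysis tables.
vocabulary_size = 68_458
total_tokens = 1_735_026
top_10k_coverage = 85.3  # percent of corpus covered by the top 10,000 words

# Mean frequency is total tokens divided by the number of distinct words.
mean_frequency = total_tokens / vocabulary_size

# The long tail is everything beyond the top 10,000 words.
tail_words = vocabulary_size - 10_000
tail_coverage = 100.0 - top_10k_coverage

print(f"mean frequency: {mean_frequency:.2f}")
print(f"tail words: {tail_words:,}")
print(f"tail coverage: {tail_coverage:.1f}%")
```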

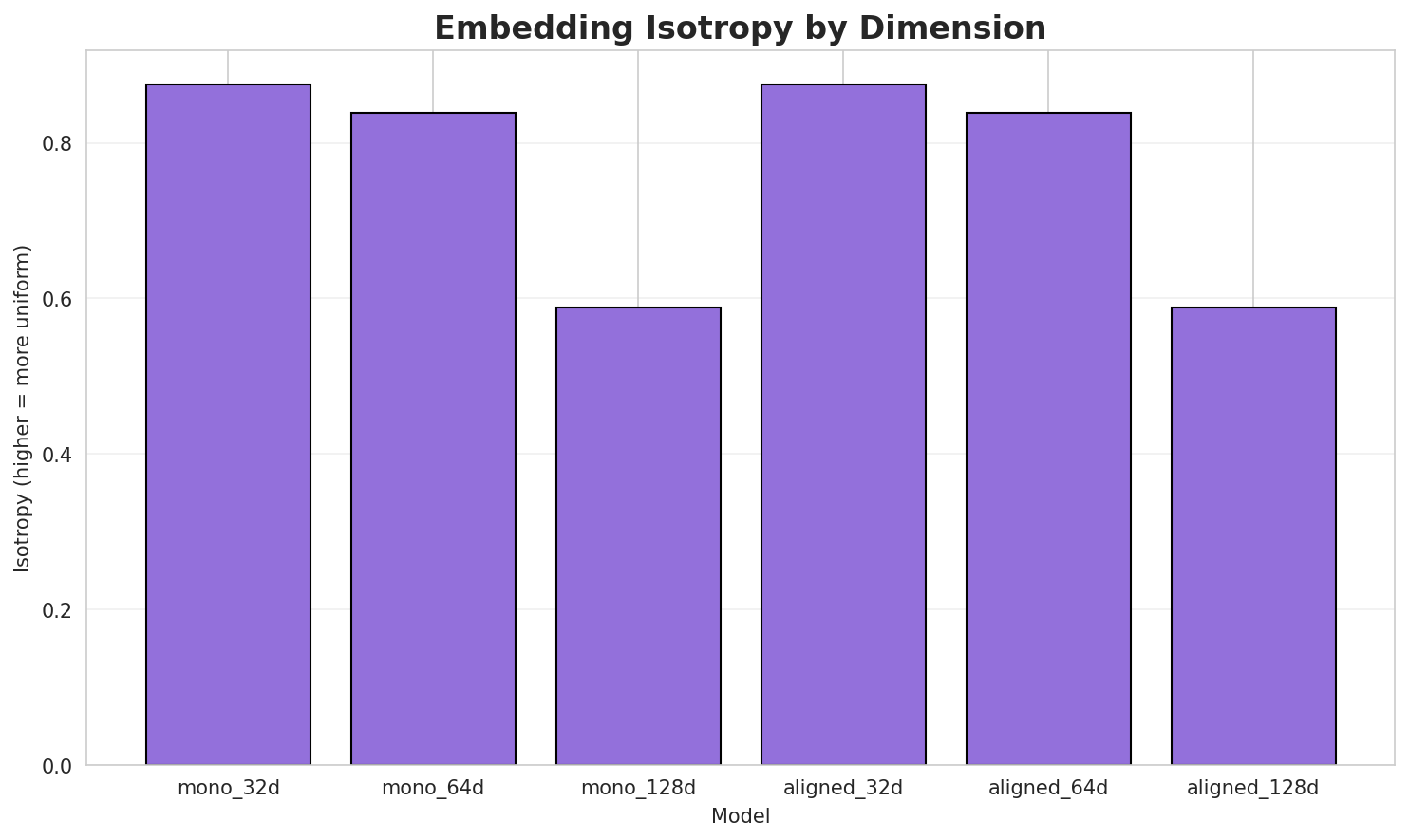

5. Word Embeddings Evaluation

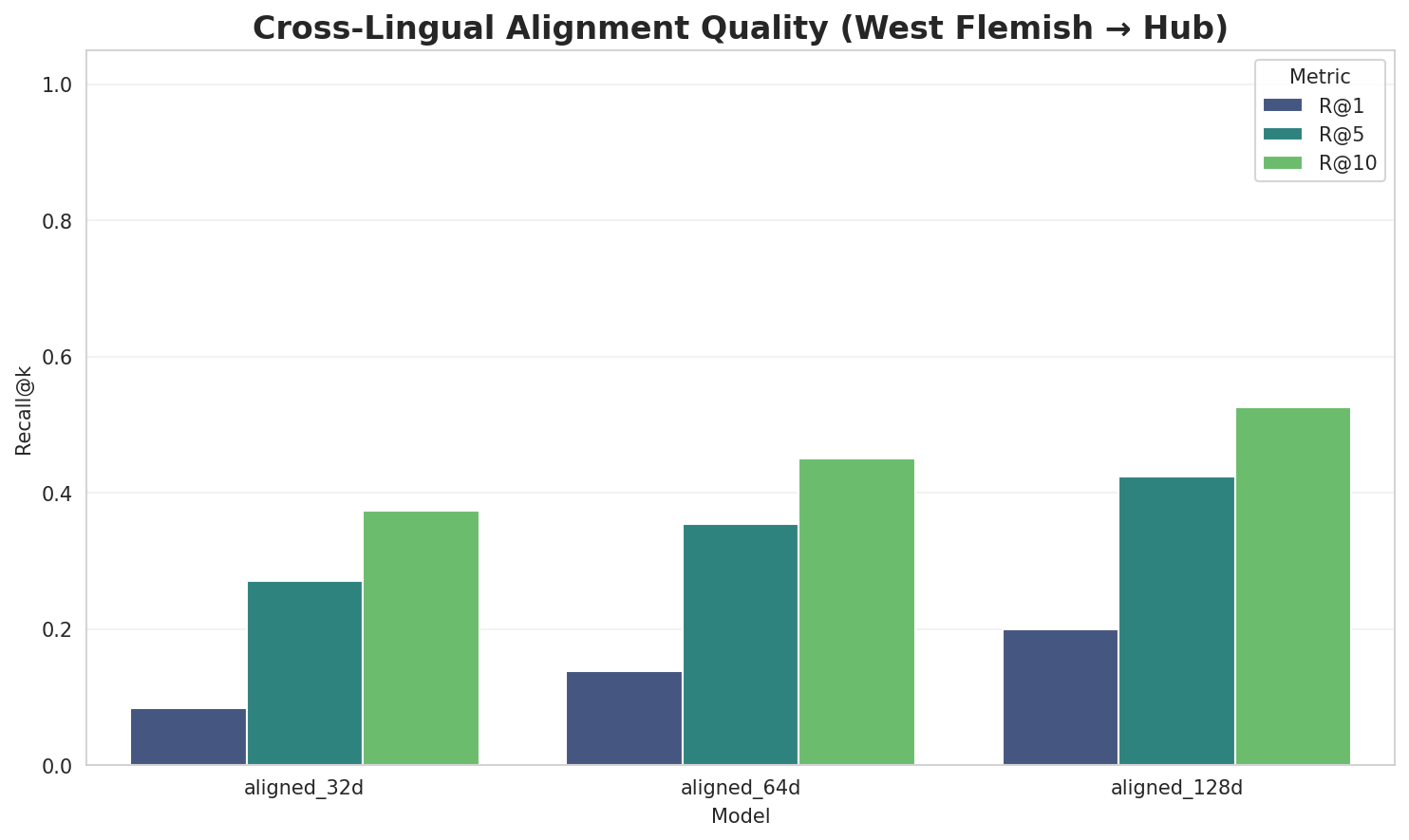

5.1 Cross-Lingual Alignment

5.2 Model Comparison

| Model | Dimension | Isotropy | Semantic Density | Alignment R@1 | Alignment R@10 |

|---|---|---|---|---|---|

| mono_32d | 32 | 0.8756 🏆 | 0.3181 | N/A | N/A |

| mono_64d | 64 | 0.8383 | 0.2517 | N/A | N/A |

| mono_128d | 128 | 0.5888 | 0.2007 | N/A | N/A |

| aligned_32d | 32 | 0.8756 | 0.3113 | 0.0840 | 0.3740 |

| aligned_64d | 64 | 0.8383 | 0.2465 | 0.1380 | 0.4500 |

| aligned_128d | 128 | 0.5888 | 0.2020 | 0.2000 | 0.5260 |

Key Findings

- Best Isotropy: mono_32d with 0.8756 (more uniform distribution)

- Semantic Density: Average pairwise similarity of 0.2550. Lower values indicate better semantic separation.

- Alignment Quality: Aligned models achieve up to 20.0% R@1 in cross-lingual retrieval.

- Recommendation: 128d aligned for best cross-lingual performance

6. Morphological Analysis (Experimental)

This section presents an automated morphological analysis derived from the statistical divergence between word-level and subword-level models. By analyzing where subword predictability spikes and where word-level coverage fails, we can infer linguistic structures without supervised data.

6.1 Productivity & Complexity

| Metric | Value | Interpretation | Recommendation |

|---|---|---|---|

| Productivity Index | 5.000 | High morphological productivity | Reliable analysis |

| Idiomaticity Gap | -0.109 | Low formulaic content | - |

6.2 Affix Inventory (Productive Units)

These are the most productive prefixes and suffixes identified by sampling the vocabulary for global substitutability patterns. A unit is considered an affix if stripping it leaves a valid stem that appears in other contexts.

Productive Prefixes

| Prefix | Examples |
|---|---|
| -s | soôrtn, schwaben, schick |
| -b | binnstroomde, bolivië, biezelehe |
| -a | arenaria, addington, amazing |
| -ge | gelanceerd, gezeyd, gevoenn |
| -o | oendregienk, ogtepunt, omwald |
| -be | bewaren, bees, bedek |
| -k | kurs, kommiesje, koopman |
| -d | dié, donetsk, darling |

Productive Suffixes

| Suffix | Examples |
|---|---|
| -e | underne, binnstroomde, poginge |
| -n | soôrtn, fryslân, hopeweunn |
| -s | zothuus, kurs, cervantes |
| -t | ogtepunt, varlet, capaciteit |
| -en | conservatieven, schwaben, bewaren |
| -d | vervolgd, omwald, tulband |
| -ge | poginge, franstalige, lancerienge |
| -r | elektrotoer, êesteminister, hour |
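The affix criterion described above (stripping the unit leaves a valid stem) can be illustrated with a deliberately simplified sketch. It assumes that "valid stem" means "the stem is itself a vocabulary word", which is narrower than the substitutability sampling the pipeline describes. The function name, the `min_stem_length` parameter, and the toy vocabulary are all hypothetical.

```python
def productive_suffix_words(vocabulary, suffix, min_stem_length=3):
    """Return words ending in `suffix` whose remaining stem is also in the vocabulary."""
    if not suffix:
        raise ValueError("suffix must not be empty")
    vocab = set(vocabulary)
    matches = []
    for word in vocab:
        if not word.endswith(suffix):
            continue
        stem = word[: -len(suffix)]
        # Simplifying assumption: a stem is "valid" if it is a word on its own.
        if len(stem) >= min_stem_length and stem in vocab:
            matches.append(word)
    return sorted(matches)

# Hypothetical toy vocabulary, not taken from the model's vocabulary file.
toy_vocabulary = ["kerk", "kerke", "werk", "werke", "berke", "stad"]
print(productive_suffix_words(toy_vocabulary, "e"))
```

In this toy vocabulary, `berke` is excluded because `berk` is not a listed word. A real analysis would also need a frequency threshold, which is one reason single-letter units in the tables above should be read with caution.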

6.3 Bound Stems (Lexical Roots)

Bound stems are high-frequency subword units that are semantically cohesive but rarely appear as standalone words. These often correspond to the 'core' of a word that requires inflection or derivation to be valid.

| Stem | Cohesion | Substitutability | Examples |
|---|---|---|---|
| enge | 2.33x | 50 contexts | engel, oenger, mengel |
| sche | 1.68x | 141 contexts | schee, asche, vasche |
| chte | 1.60x | 115 contexts | achte, echte, vichte |
| fran | 2.05x | 37 contexts | frank, franz, frang |
| schi | 1.77x | 65 contexts | schip, schie, schid |
| icht | 1.56x | 114 contexts | richt, licht, vicht |
| isch | 1.83x | 51 contexts | ischl, visch, vischn |
| hter | 1.94x | 38 contexts | ahter, echter, achter |
| nder | 1.41x | 150 contexts | ander, under, onder |
| elik | 1.72x | 51 contexts | gelik, tielik, feliks |
| oate | 1.77x | 40 contexts | zoate, oater, moate |
| erke | 1.54x | 66 contexts | kerke, berke, werke |

6.4 Affix Compatibility (Co-occurrence)

This table shows which prefixes and suffixes most frequently co-occur on the same stems, revealing the 'stacking' rules of the language's morphology.

| Prefix | Suffix | Frequency | Examples |
|---|---|---|---|
| -s | -e | 169 words | subklasse, sukerziekte |
| -b | -e | 149 words | bulskampstroate, beschoafde |
| -s | -n | 125 words | skorsenelen, steeën |
| -b | -n | 114 words | blokkn, behunn |
| -k | -e | 108 words | kunstacademie, kassie |
| -m | -e | 100 words | muuzee, multiple |
| -o | -n | 95 words | oafbusschn, ofebrookn |
| -o | -e | 91 words | ounbevlekte, omriengende |
| -d | -e | 90 words | dagtemprateure, duytstoalige |
| -a | -e | 88 words | adresse, ansluutienge |

6.5 Recursive Morpheme Segmentation

Using Recursive Hierarchical Substitutability, we decompose complex words into their constituent morphemes. This approach handles nested affixes (e.g., prefix-prefix-root-suffix).

| Word | Suggested Split | Confidence | Stem |
|---|---|---|---|
| fermenteren | fermenter-e-n | 7.5 | e |
| benoaderd | benoa-de-rd | 7.5 | de |
| bruggelingen | bruggeling-e-n | 7.5 | e |
| romantiek | romanti-e-k | 7.5 | e |
| vruchtvlees | vruchtv-le-es | 7.5 | le |
| treuzelen | treuze-le-n | 7.5 | le |
| vluchters | vlucht-e-rs | 7.5 | e |
| resources | resourc-e-s | 7.5 | e |
| splenters | splent-e-rs | 7.5 | e |
| ipbryngsten | ipbryngst-e-n | 7.5 | e |
| vienkezetters | vienkezett-e-rs | 7.5 | e |
| knobbeltjes | knobbeltj-e-s | 7.5 | e |
| beweegboar | beweegbo-a-r | 7.5 | a |
| schoonhoven | schoonhov-e-n | 7.5 | e |
| donspluumtjes | donspluumtj-e-s | 7.5 | e |

6.6 Linguistic Interpretation

Automated Insight: West Flemish shows high morphological productivity. The subword models are significantly more efficient than the word models, which suggests a rich system of affixation or compounding.

7. Summary & Recommendations

Production Recommendations

| Component | Recommended | Rationale |

|---|---|---|

| Tokenizer | 64k BPE | Best compression (4.16x) |

| N-gram | 2-gram | Lowest perplexity (282) |

| Markov | Context-4 | Highest predictability (96.9%) |

| Embeddings | 100d | Balanced semantic capture and isotropy |

Appendix: Metrics Glossary & Interpretation Guide

This section provides definitions, intuitions, and guidance for interpreting the metrics used throughout this report.

Tokenizer Metrics

Compression Ratio

Definition: The ratio of characters to tokens (chars/token). Measures how efficiently the tokenizer represents text.

Intuition: Higher compression means fewer tokens needed to represent the same text, reducing sequence lengths for downstream models. A 3x compression means ~3 characters per token on average.

What to seek: Higher is generally better for efficiency, but extremely high compression may indicate overly aggressive merging that loses morphological information.

Average Token Length (Fertility)

Definition: Mean number of characters per token produced by the tokenizer.

Intuition: Reflects the granularity of tokenization. Longer tokens capture more context but may struggle with rare words; shorter tokens are more flexible but increase sequence length.

What to seek: Balance between 2-5 characters for most languages. Arabic/morphologically-rich languages may benefit from slightly longer tokens.

Unknown Token Rate (OOV Rate)

Definition: Percentage of tokens that map to the unknown/UNK token, indicating words the tokenizer cannot represent.

Intuition: Lower OOV means better vocabulary coverage. High OOV indicates the tokenizer encounters many unseen character sequences.

What to seek: Below 1% is excellent; below 5% is acceptable. BPE tokenizers typically achieve very low OOV due to subword fallback.
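The two ratio-style tokenizer metrics defined above can be computed from a text and its token list as in the minimal sketch below. It assumes compression counts every character of the raw text (including spaces) and that the unknown token is spelled `<unk>`; neither detail is confirmed by this report, and `tokenizer_metrics` is a hypothetical helper name. The tokens come from the 8k row of Sample 2 in the tokenizer section.

```python
def tokenizer_metrics(text, tokens, unk_token="<unk>"):
    """Return (compression ratio, UNK rate in percent) for one tokenized text."""
    if not tokens:
        raise ValueError("tokens must not be empty")
    # Compression: characters of raw text per produced token.
    compression = len(text) / len(tokens)
    # UNK rate: share of tokens equal to the (assumed) unknown-token string.
    unk_rate = 100.0 * sum(token == unk_token for token in tokens) / len(tokens)
    return compression, unk_rate

text = "de volksnoame van de gemêente"
tokens = ["▁de", "▁volk", "sn", "oame", "▁van", "▁de", "▁gemêente"]

compression, unk_rate = tokenizer_metrics(text, tokens)
print(f"compression: {compression:.2f} chars/token, UNK rate: {unk_rate:.2f}%")
```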

N-gram Model Metrics

Perplexity

Definition: Measures how "surprised" the model is by test data. Mathematically: 2^(cross-entropy). Lower values indicate better prediction.

Intuition: If perplexity is 100, the model is as uncertain as if choosing uniformly among 100 options at each step. A perplexity of 10 means effectively choosing among 10 equally likely options.

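What to seek: Lower is better. In principle, perplexity decreases with larger n-grams (more context), but on a small corpus data sparsity can reverse the trend, as in the results above, where perplexity rises from 2-grams to 4-grams for both variants. Values vary widely by language and corpus size.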

Entropy

Definition: Average information content (in bits) needed to encode the next token given the context. Related to perplexity: perplexity = 2^entropy.

Intuition: High entropy means high uncertainty/randomness; low entropy means predictable patterns. Natural language typically has entropy between 1-4 bits per character.

What to seek: Lower entropy indicates more predictable text patterns. Entropy is expected to decrease as n-gram size increases when enough training data is available; with sparse data it can increase instead.
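The relation perplexity = 2^entropy can be checked against the n-gram results reported earlier. The sketch below inverts it with a base-2 logarithm and compares the result with each reported entropy; small gaps are expected because both columns are rounded.

```python
from math import log2

# (model, reported perplexity, reported entropy in bits) from the n-gram results table.
ngram_rows = [
    ("2-gram word", 12_804, 13.64),
    ("2-gram subword", 282, 8.14),
    ("3-gram word", 27_763, 14.76),
    ("3-gram subword", 2_519, 11.30),
    ("4-gram word", 45_411, 15.47),
    ("4-gram subword", 15_236, 13.90),
    ("5-gram word", 30_248, 14.88),
    ("5-gram subword", 57_965, 15.82),
]

def entropy_from_perplexity(perplexity):
    """Invert perplexity = 2 ** entropy."""
    return log2(perplexity)

max_gap = 0.0
for name, perplexity, entropy in ngram_rows:
    recovered = entropy_from_perplexity(perplexity)
    max_gap = max(max_gap, abs(recovered - entropy))
    print(f"{name}: log2({perplexity}) = {recovered:.2f} (reported {entropy:.2f})")

print(f"largest gap: {max_gap:.4f} bits")
```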

Coverage (Top-K)

Definition: Percentage of corpus occurrences explained by the top K most frequent n-grams.

Intuition: High coverage with few patterns indicates repetitive/formulaic text; low coverage suggests diverse vocabulary usage.

What to seek: Depends on use case. For language modeling, moderate coverage (40-60% with top-1000) is typical for natural text.
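Top-K coverage as defined above can be sketched in a few lines. The helper names `ngrams` and `top_k_coverage` and the sample sentence are illustrative assumptions; the pipeline's own counting rules (casing, punctuation handling) are not documented here.

```python
from collections import Counter

def ngrams(tokens, n):
    """Return all consecutive n-token tuples from a token list."""
    return list(zip(*(tokens[i:] for i in range(n))))

def top_k_coverage(items, k):
    """Fraction of all occurrences explained by the k most frequent items."""
    counts = Counter(items)
    total = sum(counts.values())
    covered = sum(count for _, count in counts.most_common(k))
    return covered / total

# Illustrative sentence, not taken from the evaluation corpus.
words = "van de stad en van de kerke en van t joar".split()
bigrams = ngrams(words, 2)

print(f"top-2 bigram coverage: {top_k_coverage(bigrams, 2):.0%}")
print(f"top-1 word coverage:   {top_k_coverage(words, 1):.0%}")
```

The same function applies to the vocabulary coverage figures, since a word is simply a 1-gram.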

Markov Chain Metrics

Average Entropy

Definition: Mean entropy across all contexts, measuring average uncertainty in next-word prediction.

Intuition: Lower entropy means the model is more confident about what comes next. Context-1 has high entropy (many possible next words); Context-4 has low entropy (few likely continuations).

What to seek: Decreasing entropy with larger context sizes. Very low entropy (<0.1) indicates highly deterministic transitions.

Branching Factor

Definition: Average number of unique next tokens observed for each context.

Intuition: High branching = many possible continuations (flexible but uncertain); low branching = few options (predictable but potentially repetitive).

What to seek: Branching factor should decrease with context size. Values near 1.0 indicate nearly deterministic chains.
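To make average entropy and branching factor concrete, here is a minimal sketch that builds a word-level Markov chain and computes both metrics. It assumes an unweighted mean over contexts, which matches the wording of the definitions above but is not confirmed as the pipeline's exact method. The function names and the sample sentence are hypothetical.

```python
from collections import Counter, defaultdict
from math import log2

def build_chain(tokens, k):
    """Map each k-token context to a Counter of the tokens observed after it."""
    chain = defaultdict(Counter)
    for i in range(len(tokens) - k):
        chain[tuple(tokens[i:i + k])][tokens[i + k]] += 1
    return chain

def branching_factor(chain):
    """Average number of distinct next tokens per context."""
    return sum(len(nxt) for nxt in chain.values()) / len(chain)

def average_entropy(chain):
    """Unweighted mean of the per-context next-token entropy, in bits."""
    total = 0.0
    for nxt in chain.values():
        n = sum(nxt.values())
        total -= sum((c / n) * log2(c / n) for c in nxt.values())
    return total / len(chain)

# Illustrative sentence, not taken from the evaluation corpus.
tokens = "van de stad en van de kerke en van t joar".split()
chain1 = build_chain(tokens, 1)
chain2 = build_chain(tokens, 2)

for k, chain in ((1, chain1), (2, chain2)):
    print(f"context {k}: branching={branching_factor(chain):.3f}, "
          f"entropy={average_entropy(chain):.3f} bits")
```

Even on this tiny input, both metrics drop when the context grows from one word to two, which is the same direction as the word-level results in the Markov chain section.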

Predictability

Definition: Derived metric: (1 - normalized_entropy) × 100%. Indicates how deterministic the model's predictions are.

Intuition: 100% predictability means the next word is always certain; 0% means completely random. Real text falls between these extremes.

What to seek: Higher predictability for text generation quality, but too high (>98%) may produce repetitive output.
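The report does not spell out how entropy is normalized for the predictability metric. The values in the Markov results table are, however, consistent with clipping (1 - average entropy) at zero, and the perplexity column is consistent with 2^entropy. The sketch below checks both against the reported rows; the `predictability` function encodes that inferred rule and is an assumption, not the pipeline's documented formula.

```python
# (context, variant, avg entropy, reported perplexity, reported predictability %)
markov_rows = [
    (1, "word", 0.8228, 1.769, 17.7),
    (1, "subword", 1.2080, 2.310, 0.0),
    (2, "word", 0.2583, 1.196, 74.2),
    (2, "subword", 1.0608, 2.086, 0.0),
    (3, "word", 0.0895, 1.064, 91.1),
    (3, "subword", 0.9474, 1.928, 5.3),
    (4, "word", 0.0313, 1.022, 96.9),
    (4, "subword", 0.7502, 1.682, 25.0),
]

def predictability(avg_entropy):
    """Assumed rule inferred from the table: clip (1 - entropy) at zero, as a percentage."""
    return max(0.0, 1.0 - avg_entropy) * 100.0

perplexity_gaps = []
predictability_gaps = []
for context, variant, entropy, perplexity, reported in markov_rows:
    perplexity_gaps.append(abs(2 ** entropy - perplexity))
    predictability_gaps.append(abs(predictability(entropy) - reported))
    print(f"context {context} ({variant}): 2^H = {2 ** entropy:.3f}, "
          f"predictability = {predictability(entropy):.1f}%")
```

Under this reading, any model whose average entropy exceeds 1 bit is reported as 0.0% predictable, which would explain the subword rows at context sizes 1 and 2.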

Vocabulary & Zipf's Law Metrics

Zipf's Coefficient

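Definition: The slope of the log-log plot of word frequency vs. rank. Zipf's law predicts this should be approximately -1. The value of 1.0178 reported in this document corresponds to the magnitude of that slope (a slope of about -1.02).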

Intuition: A coefficient near -1 indicates the corpus follows natural language patterns where a few words are very common and most words are rare.

What to seek: Values between -0.8 and -1.2 indicate healthy natural language distribution. Deviations may suggest domain-specific or artificial text.
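A Zipf fit of this kind is an ordinary least-squares line through the points (log rank, log frequency). The sketch below is one plausible way to compute the slope and R²; the function name `zipf_fit` is hypothetical, and the report does not state whether its pipeline fits all ranks or applies any binning.

```python
from math import log

def zipf_fit(frequencies):
    """Fit log(frequency) = slope * log(rank) + intercept; return (slope, r_squared)."""
    freqs = sorted(frequencies, reverse=True)
    xs = [log(rank) for rank in range(1, len(freqs) + 1)]
    ys = [log(freq) for freq in freqs]
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    # For simple linear regression, R^2 equals the squared correlation.
    r_squared = (sxy * sxy) / (sxx * syy)
    return slope, r_squared

# Synthetic, perfectly Zipfian frequencies: f(rank) = 1000 / rank.
ideal = [1000 / rank for rank in range(1, 51)]
slope, r_squared = zipf_fit(ideal)
print(f"slope = {slope:.4f}, R^2 = {r_squared:.4f}")
```

On real word counts the input would be the list of per-word frequencies; it needs at least two distinct positive values, otherwise the variances in the denominator are zero.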

R² (Coefficient of Determination)

Definition: Measures how well the linear fit explains the frequency-rank relationship. Ranges from 0 to 1.

Intuition: R² near 1.0 means the data closely follows Zipf's law; lower values indicate deviation from expected word frequency patterns.

What to seek: R² > 0.95 is excellent; > 0.99 indicates near-perfect Zipf adherence typical of large natural corpora.

Vocabulary Coverage

Definition: Cumulative percentage of corpus tokens accounted for by the top N words.

Intuition: Shows how concentrated word usage is. If top-100 words cover 50% of text, the corpus relies heavily on common words.

What to seek: Top-100 covering 30-50% is typical. Higher coverage indicates more repetitive text; lower suggests richer vocabulary.

Word Embedding Metrics

Isotropy

Definition: Measures how uniformly distributed vectors are in the embedding space. Computed as the ratio of minimum to maximum singular values.

Intuition: High isotropy (near 1.0) means vectors spread evenly in all directions; low isotropy means vectors cluster in certain directions, reducing expressiveness.

What to seek: Higher isotropy generally indicates better-quality embeddings. Values > 0.1 are reasonable; > 0.3 is good. Lower-dimensional embeddings tend to have higher isotropy.

Average Norm

Definition: Mean magnitude (L2 norm) of word vectors in the embedding space.

Intuition: Indicates the typical "length" of vectors. Consistent norms suggest stable training; high variance may indicate some words are undertrained.

What to seek: Relatively consistent norms across models. The absolute value matters less than consistency (low std deviation).

Cosine Similarity

Definition: Measures angular similarity between vectors, ranging from -1 (opposite) to 1 (identical direction).

Intuition: Words with similar meanings should have high cosine similarity. This is the standard metric for semantic relatedness in embeddings.

What to seek: Semantically related words should score > 0.5; unrelated words should be near 0. Synonyms often score > 0.7.
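For reference, cosine similarity between two vectors is their dot product divided by the product of their L2 norms. The dependency-free sketch below uses made-up vectors, not embeddings from these models.

```python
from math import sqrt

def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length, non-zero vectors."""
    if len(u) != len(v):
        raise ValueError("vectors must have the same length")
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = sqrt(sum(a * a for a in u))
    norm_v = sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

# Made-up vectors for illustration only.
print(cosine_similarity([1.0, 2.0], [2.0, 4.0]))   # same direction
print(cosine_similarity([1.0, 0.0], [0.0, 1.0]))   # orthogonal
print(cosine_similarity([1.0, 0.0], [-1.0, 0.0]))  # opposite direction
```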

t-SNE Visualization

Definition: t-Distributed Stochastic Neighbor Embedding - a dimensionality reduction technique that preserves local structure for visualization.

Intuition: Clusters in t-SNE plots indicate groups of semantically related words. Spread indicates vocabulary diversity; tight clusters suggest semantic coherence.

What to seek: Meaningful clusters (e.g., numbers together, verbs together). Avoid over-interpreting distances - t-SNE preserves local, not global, structure.

General Interpretation Guidelines

- Compare within model families: Metrics are most meaningful when comparing models of the same type (e.g., 8k vs 64k tokenizer).

- Consider trade-offs: Better performance on one metric often comes at the cost of another (e.g., compression vs. OOV rate).

- Context matters: Optimal values depend on downstream tasks. Text generation may prioritize different metrics than classification.

- Corpus influence: All metrics are influenced by corpus characteristics. Wikipedia text differs from social media or literature.

- Language-specific patterns: Morphologically rich languages (like Arabic) may show different optimal ranges than analytic languages.

Visualizations Index

| Visualization | Description |

|---|---|

| Tokenizer Compression | Compression ratios by vocabulary size |

| Tokenizer Fertility | Average token length by vocabulary |

| Tokenizer OOV | Unknown token rates |

| Tokenizer Total Tokens | Total tokens by vocabulary |

| N-gram Perplexity | Perplexity by n-gram size |

| N-gram Entropy | Entropy by n-gram size |

| N-gram Coverage | Top pattern coverage |

| N-gram Unique | Unique n-gram counts |

| Markov Entropy | Entropy by context size |

| Markov Branching | Branching factor by context |

| Markov Contexts | Unique context counts |

| Zipf's Law | Frequency-rank distribution with fit |

| Vocab Frequency | Word frequency distribution |

| Top 20 Words | Most frequent words |

| Vocab Coverage | Cumulative coverage curve |

| Embedding Isotropy | Vector space uniformity |

| Embedding Norms | Vector magnitude distribution |

| Embedding Similarity | Word similarity heatmap |

| Nearest Neighbors | Similar words for key terms |

| t-SNE Words | 2D word embedding visualization |

| t-SNE Sentences | 2D sentence embedding visualization |

| Position Encoding | Encoding method comparison |

| Model Sizes | Storage requirements |

| Performance Dashboard | Comprehensive performance overview |

About This Project

Data Source

Models trained on wikipedia-monthly - a monthly snapshot of Wikipedia articles across 300+ languages.

Project

A project by Wikilangs - Open-source NLP models for every Wikipedia language.

Maintainer

Citation

If you use these models in your research, please cite:

    @misc{wikilangs2025,
      author = {Kamali, Omar},
      title = {Wikilangs: Open NLP Models for Wikipedia Languages},
      year = {2025},
      doi = {10.5281/zenodo.18073153},
      publisher = {Zenodo},
      url = {https://huggingface.co/wikilangs},
      institution = {Omneity Labs}
    }

License

MIT License - Free for academic and commercial use.

Links

- 🌐 Website: wikilangs.org

- 🤗 Models: huggingface.co/wikilangs

- 📊 Data: wikipedia-monthly

- 👤 Author: Omar Kamali

- 🤝 Sponsor: Featherless AI

Generated by Wikilangs Models Pipeline

Report Date: 2026-01-11 03:19:22