---
language: xmf
language_name: Mingrelian
language_family: kartvelian
tags:
- wikilangs
- nlp
- tokenizer
- embeddings
- n-gram
- markov
- wikipedia
- feature-extraction
- sentence-similarity
- tokenization
- n-grams
- markov-chain
- text-mining
- fasttext
- babelvec
- vocabulous
- vocabulary
- monolingual
- family-kartvelian
license: mit
library_name: wikilangs
pipeline_tag: text-generation
datasets:
- omarkamali/wikipedia-monthly
dataset_info:
  name: wikipedia-monthly
  description: Monthly snapshots of Wikipedia articles across 300+ languages
metrics:
- name: best_compression_ratio
  type: compression
  value: 4.27
- name: best_isotropy
  type: isotropy
  value: 0.8723
- name: vocabulary_size
  type: vocab
  value: 0
generated: 2026-01-11T00:00:00.000Z
---

Mingrelian - Wikilangs Models

Comprehensive Research Report & Full Ablation Study

This repository contains NLP models trained and evaluated by Wikilangs, specifically on Mingrelian Wikipedia data. We analyze tokenizers, n-gram models, Markov chains, vocabulary statistics, and word embeddings.

📋 Repository Contents

Models & Assets

- Tokenizers (8k, 16k, 32k, 64k)

- N-gram models (2, 3, 4, 5-gram)

- Markov chains (context of 1, 2, 3, 4 and 5)

- Subword N-gram and Markov chains

- Embeddings in various sizes and dimensions (aligned and unaligned)

- Language Vocabulary

- Language Statistics

Analysis and Evaluation

- 1. Tokenizer Evaluation

- 2. N-gram Model Evaluation

- 3. Markov Chain Evaluation

- 4. Vocabulary Analysis

- 5. Word Embeddings Evaluation

- 6. Morphological Analysis (Experimental)

- 7. Summary & Recommendations

- Metrics Glossary

- Visualizations Index

1. Tokenizer Evaluation

Results

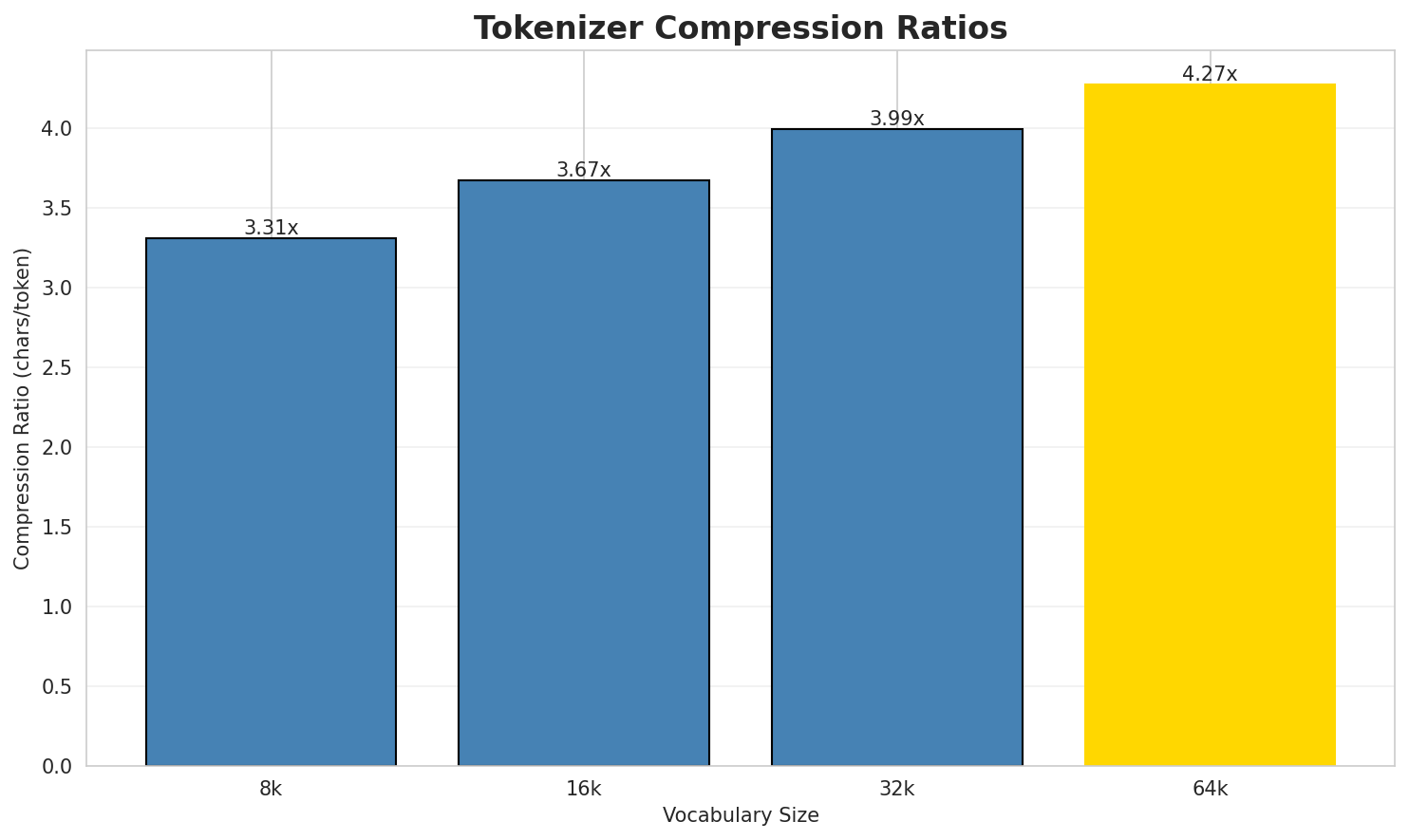

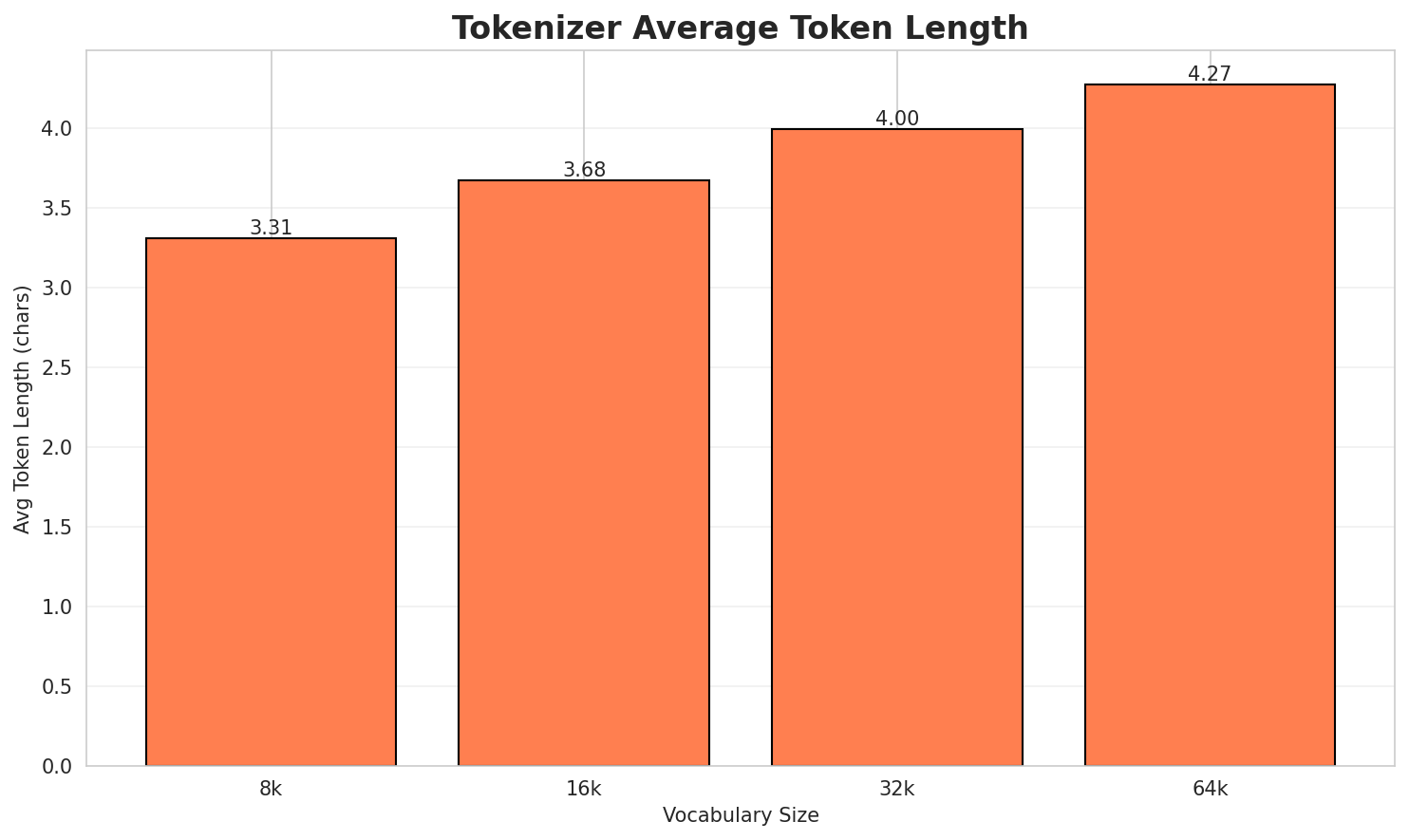

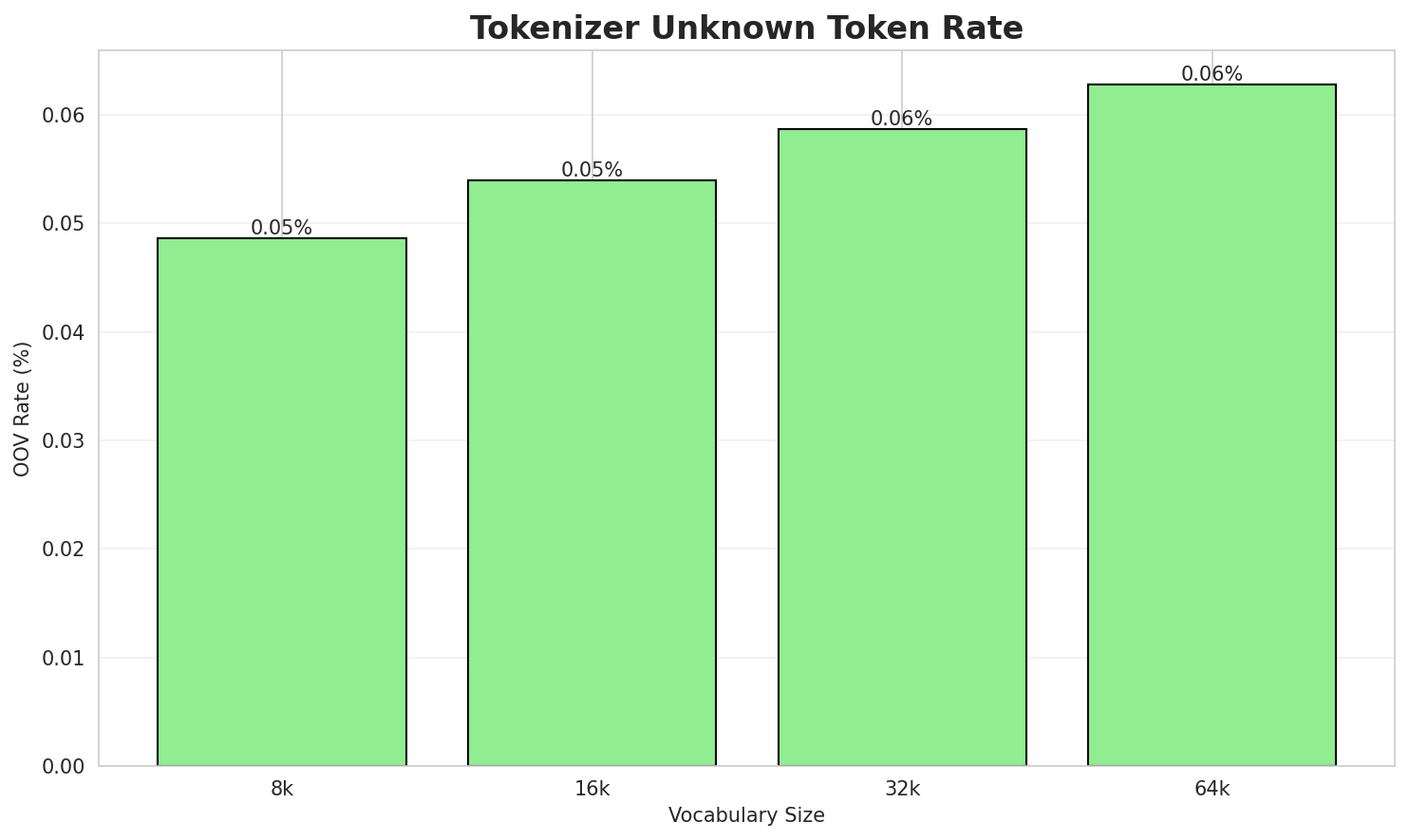

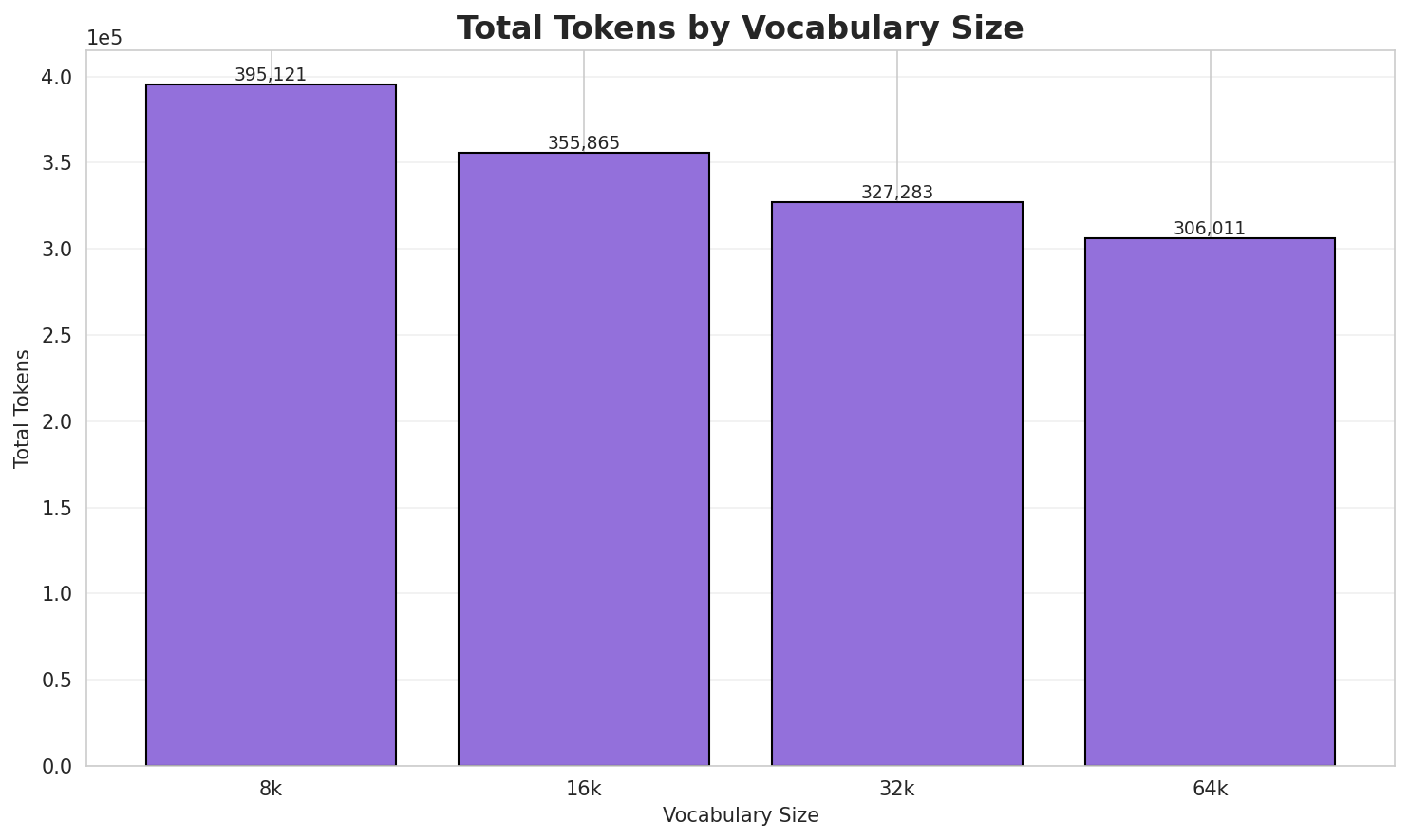

| Vocab Size | Compression | Avg Token Len | UNK Rate | Total Tokens |
|---|---|---|---|---|
| 8k | 3.307x | 3.31 | 0.0486% | 395,121 |
| 16k | 3.672x | 3.68 | 0.0540% | 355,865 |
| 32k | 3.993x | 4.00 | 0.0587% | 327,283 |
| 64k | 4.270x 🏆 | 4.27 | 0.0627% | 306,011 |

Tokenization Examples

Below are sample sentences tokenized with each vocabulary size:

Sample 1: — ახალი წანეფიშ ეჭარუაშახ 821 წანა. მოლინეფი დუნაბადი ნაღურა კატეგორია:

| Vocab | Tokens | Count |
|---|---|---|
| 8k | ▁— ▁ახალი ▁წანეფიშ ▁ეჭარუაშახ ▁ 8 2 1 ▁წანა . ... (+5 more) | 15 |
| 16k | ▁— ▁ახალი ▁წანეფიშ ▁ეჭარუაშახ ▁ 8 2 1 ▁წანა . ... (+5 more) | 15 |
| 32k | ▁— ▁ახალი ▁წანეფიშ ▁ეჭარუაშახ ▁ 8 2 1 ▁წანა . ... (+5 more) | 15 |
| 64k | ▁— ▁ახალი ▁წანეფიშ ▁ეჭარუაშახ ▁ 8 2 1 ▁წანა . ... (+5 more) | 15 |

Sample 2: წანა — ჯვ. წ. XIII ოშწანურაშ ჯვ. წ. რანწკო 4-ა წანა. ახალი წანეფიშ ეჭარუაშახ წან...

| Vocab | Tokens | Count |
|---|---|---|
| 8k | ▁წანა ▁— ▁ჯვ . ▁წ . ▁xiii ▁ოშწანურაშ ▁ჯვ . ... (+19 more) | 29 |
| 16k | ▁წანა ▁— ▁ჯვ . ▁წ . ▁xiii ▁ოშწანურაშ ▁ჯვ . ... (+19 more) | 29 |
| 32k | ▁წანა ▁— ▁ჯვ . ▁წ . ▁xiii ▁ოშწანურაშ ▁ჯვ . ... (+19 more) | 29 |
| 64k | ▁წანა ▁— ▁ჯვ . ▁წ . ▁xiii ▁ოშწანურაშ ▁ჯვ . ... (+19 more) | 29 |

Sample 3: — ახალი წანეფიშ ეჭარუაშახ 319 წანა. მოლინეფი დუნაბადი ნაღურა კატეგორია:

| Vocab | Tokens | Count |
|---|---|---|
| 8k | ▁— ▁ახალი ▁წანეფიშ ▁ეჭარუაშახ ▁ 3 1 9 ▁წანა . ... (+5 more) | 15 |
| 16k | ▁— ▁ახალი ▁წანეფიშ ▁ეჭარუაშახ ▁ 3 1 9 ▁წანა . ... (+5 more) | 15 |
| 32k | ▁— ▁ახალი ▁წანეფიშ ▁ეჭარუაშახ ▁ 3 1 9 ▁წანა . ... (+5 more) | 15 |
| 64k | ▁— ▁ახალი ▁წანეფიშ ▁ეჭარუაშახ ▁ 3 1 9 ▁წანა . ... (+5 more) | 15 |

Key Findings

- Best Compression: 64k achieves 4.270x compression

- Lowest UNK Rate: 8k with 0.0486% unknown tokens

- Trade-off: Larger vocabularies improve compression but increase model size

- Recommendation: 32k vocabulary provides optimal balance for production use

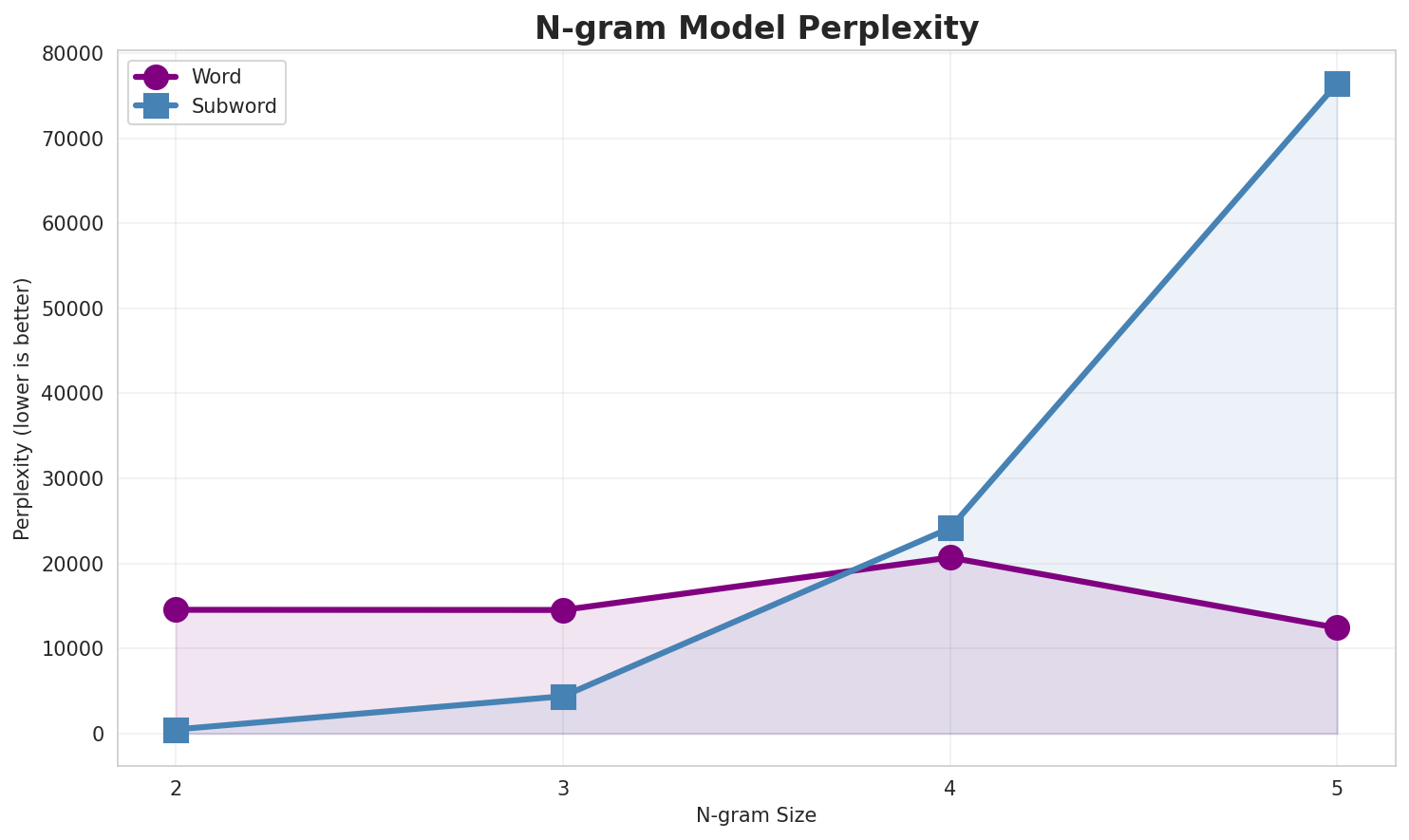

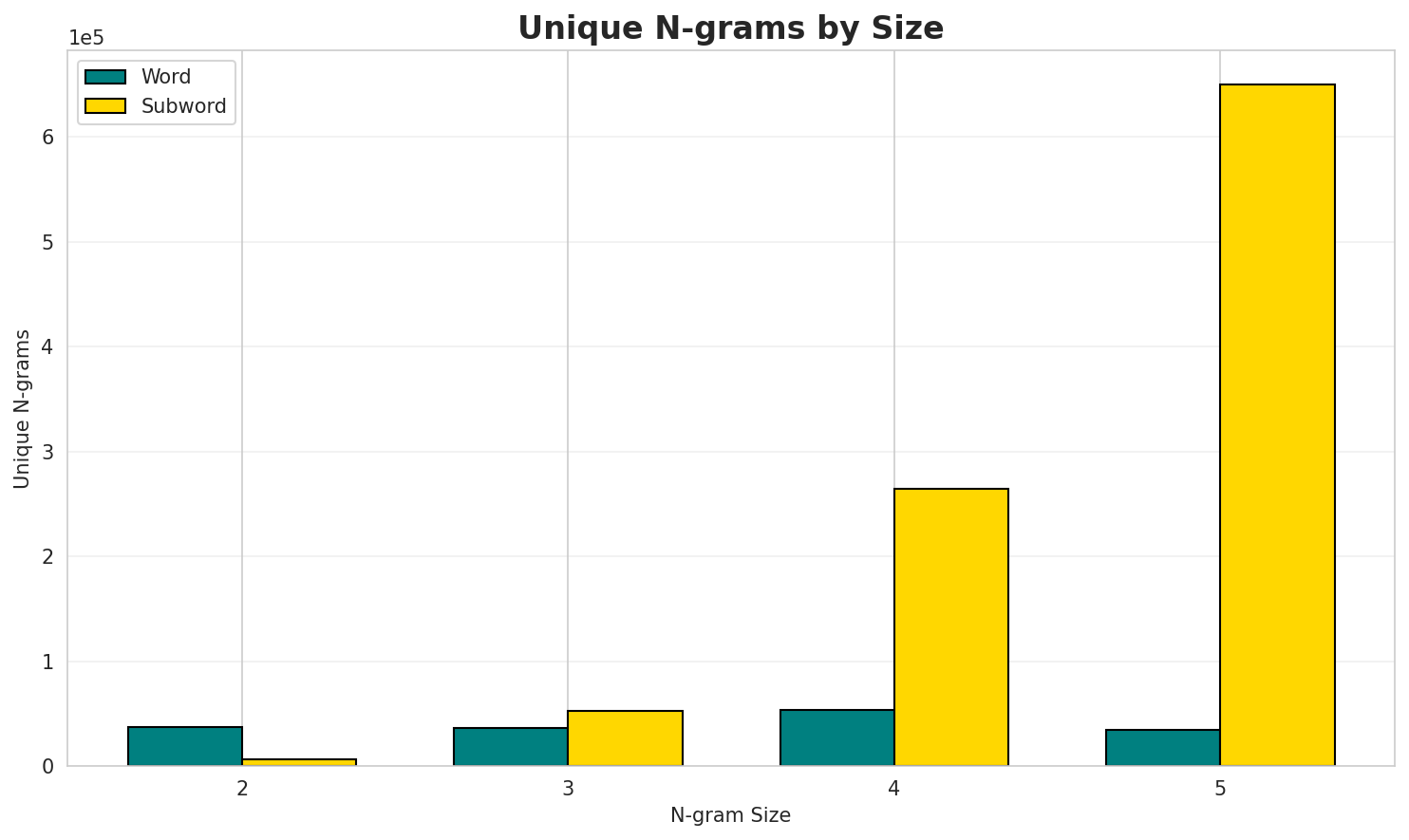

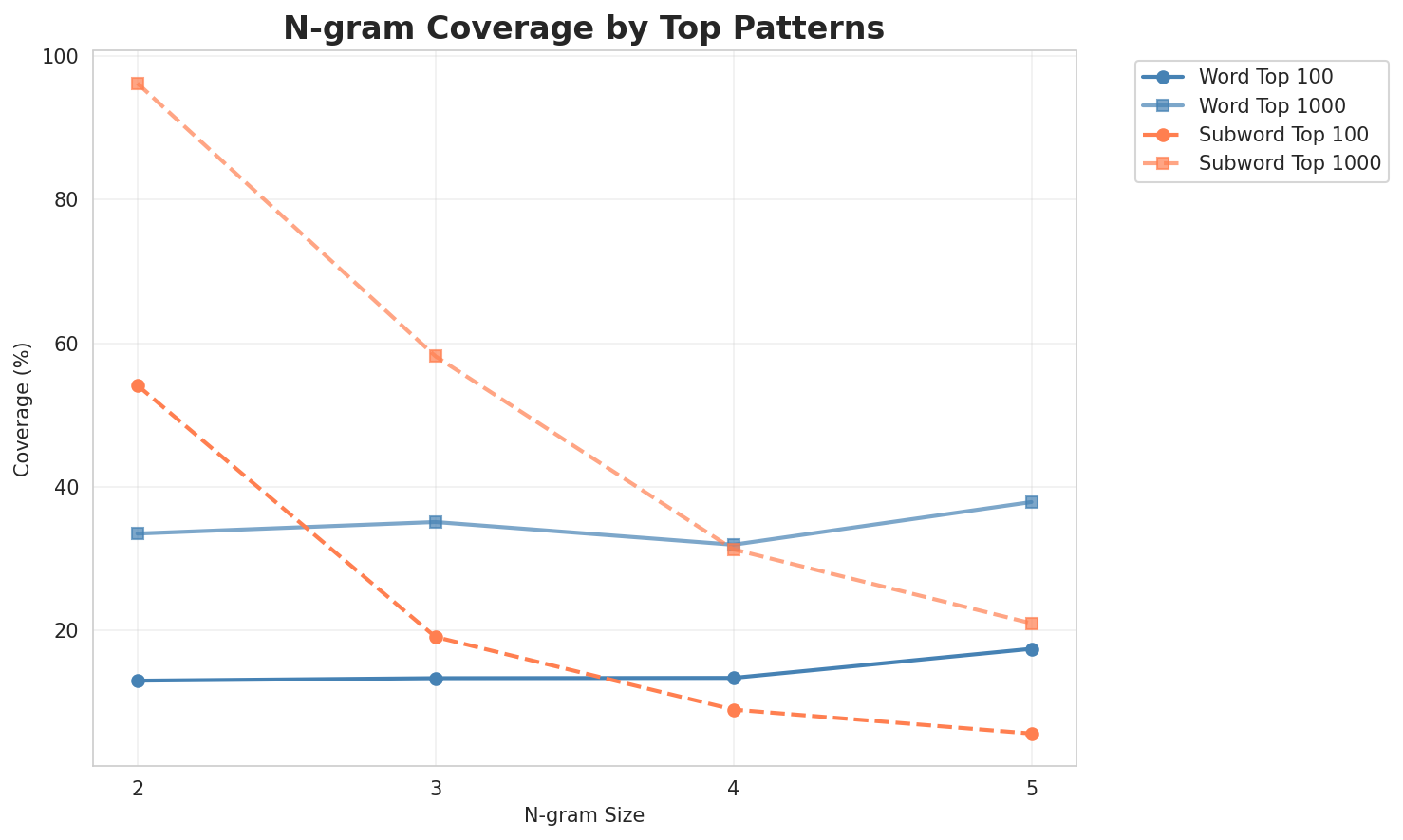

2. N-gram Model Evaluation

Results

| N-gram | Variant | Perplexity | Entropy | Unique N-grams | Top-100 Coverage | Top-1000 Coverage |
|---|---|---|---|---|---|---|
| 2-gram | Word | 14,545 | 13.83 | 37,338 | 12.9% | 33.4% |
| 2-gram | Subword | 483 🏆 | 8.92 | 6,848 | 54.1% | 96.3% |
| 3-gram | Word | 14,526 | 13.83 | 36,176 | 13.3% | 35.0% |
| 3-gram | Subword | 4,386 | 12.10 | 52,208 | 19.0% | 58.2% |
| 4-gram | Word | 20,697 | 14.34 | 53,331 | 13.3% | 31.9% |
| 4-gram | Subword | 24,158 | 14.56 | 264,428 | 8.9% | 31.2% |
| 5-gram | Word | 12,424 | 13.60 | 34,098 | 17.4% | 37.8% |
| 5-gram | Subword | 76,448 | 16.22 | 649,486 | 5.5% | 20.9% |

Top 5 N-grams by Size

2-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | რესურსეფი ინტერნეტის | 10,643 |
| 2 | ჯვ წ | 2,869 |
| 3 | ართ ართი | 2,539 |
| 4 | of the | 2,084 |
| 5 | ქოძირით თაშნეშე | 1,913 |

3-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | მოლინეფი დუნაბადი ნაღურა | 1,341 |
| 2 | დუნაბადი ნაღურა კატეგორია | 1,341 |
| 3 | ახალი წანეფიშ ეჭარუაშახ | 1,200 |
| 4 | წანა მოლინეფი დუნაბადი | 1,191 |
| 5 | ოფიციალური ვებ ხასჷლა | 717 |

4-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | მოლინეფი დუნაბადი ნაღურა კატეგორია | 1,336 |
| 2 | წანა მოლინეფი დუნაბადი ნაღურა | 1,191 |
| 3 | ღურთუთა ფურთუთა მელახი პირელი | 660 |
| 4 | ფურთუთა მელახი პირელი მესი | 658 |
| 5 | ეკენია გჷმათუთა გერგობათუთა ქირსეთუთა | 656 |

5-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | წანა მოლინეფი დუნაბადი ნაღურა კატეგორია | 1,191 |
| 2 | ღურთუთა ფურთუთა მელახი პირელი მესი | 654 |
| 3 | ფურთუთა მელახი პირელი მესი მანგი | 647 |
| 4 | მელახი პირელი მესი მანგი კვირკვე | 646 |
| 5 | მანგი კვირკვე მარაშინათუთა ეკენია გჷმათუთა | 642 |

2-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | ი _ | 316,429 |
| 2 | შ _ | 280,108 |
| 3 | ა ნ | 206,994 |
| 4 | ა რ | 189,457 |
| 5 | რ ი | 178,820 |

3-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | ი შ _ | 142,356 |
| 2 | ე ფ ი | 121,504 |
| 3 | ა შ _ | 105,502 |
| 4 | ლ ი _ | 74,000 |
| 5 | _ დ ო | 69,476 |

4-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | _ დ ო _ | 54,635 |
| 2 | ე ფ ი _ | 51,940 |
| 3 | ე ფ ი შ | 38,103 |
| 4 | _ წ ა ნ | 37,247 |
| 5 | ფ ი შ _ | 35,972 |

5-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | ე ფ ი შ _ | 35,235 |
| 2 | _ წ ა ნ ა | 29,928 |
| 3 | , _ ნ ა მ | 16,612 |
| 4 | _ ნ ა მ უ | 15,215 |
| 5 | წ ა ნ ა შ | 14,803 |

Key Findings

- Best Perplexity: 2-gram (subword) with 483

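- Entropy Trend: Subword entropy rises steadily with n-gram order (8.92 to 16.22 bits) as longer units become sparser; word-level entropy stays roughly flat (13.60 to 14.34 bits)

- Coverage: Top-1000 coverage ranges from 20.9% (5-gram subword) to 96.3% (2-gram subword); the word-level models stay between 31.9% and 37.8%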

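- Recommendation: 2-gram (subword) gives the lowest perplexity on this corpus; higher orders are likely limited by data sparsity (unique subword n-grams grow from 6,848 to 649,486)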

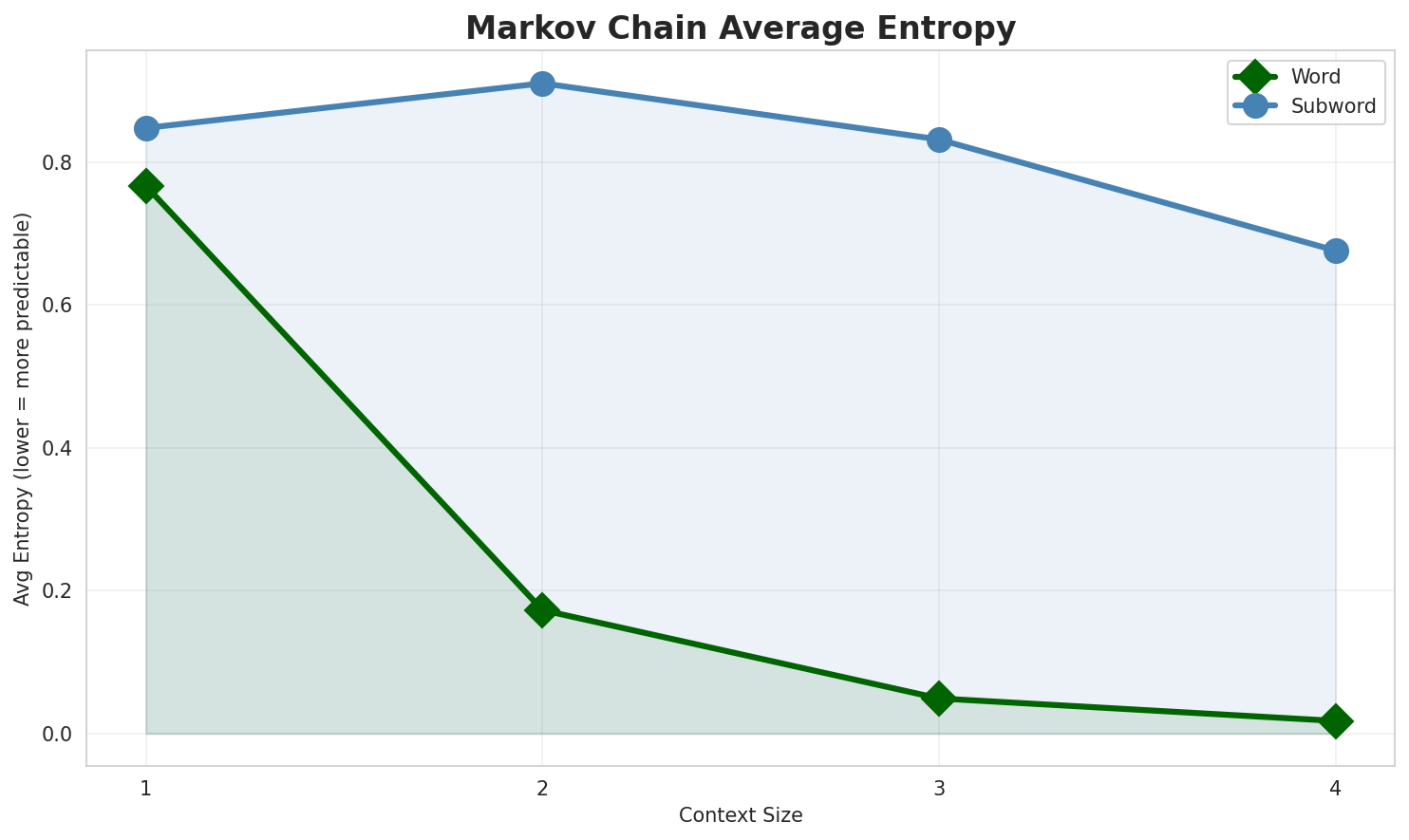

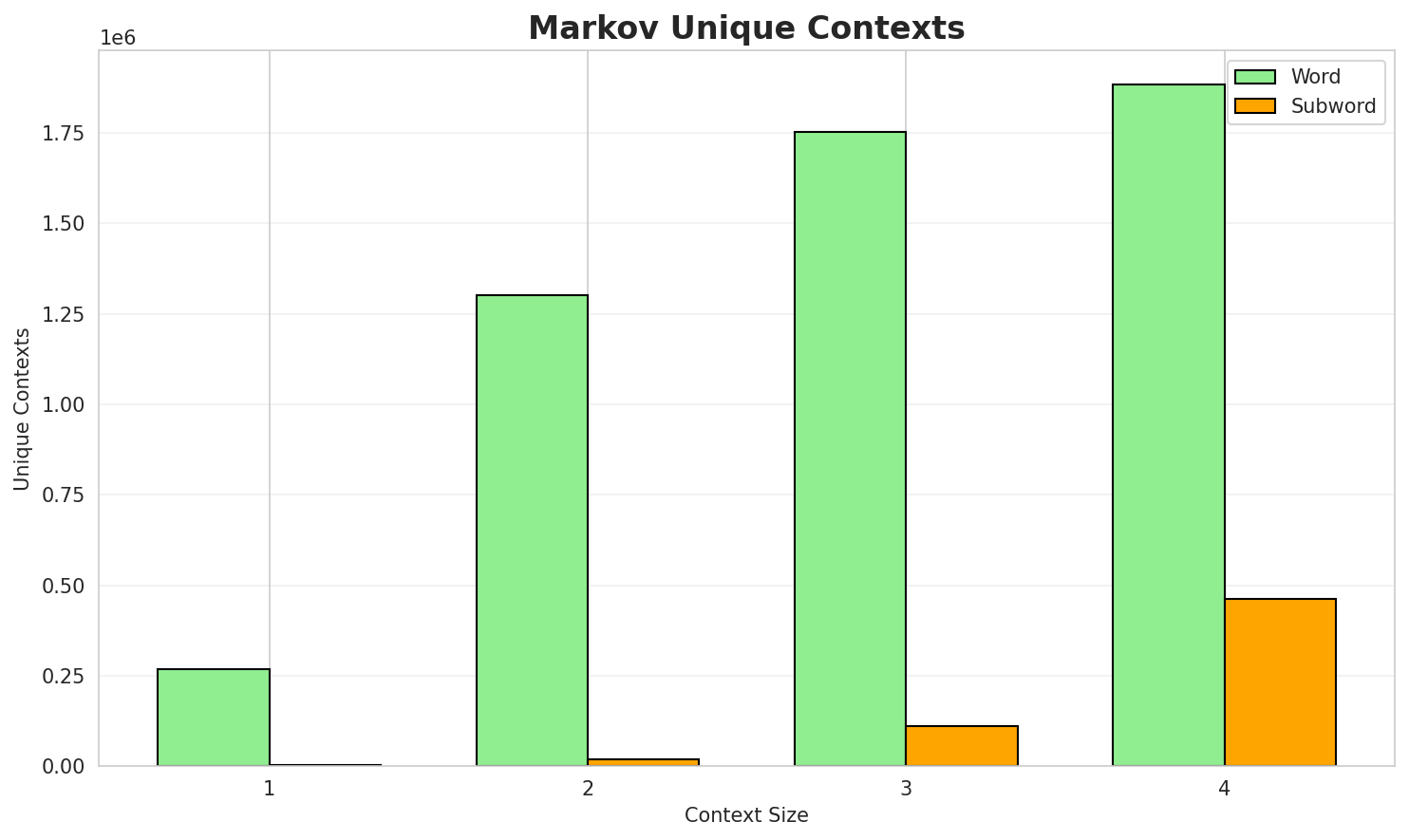

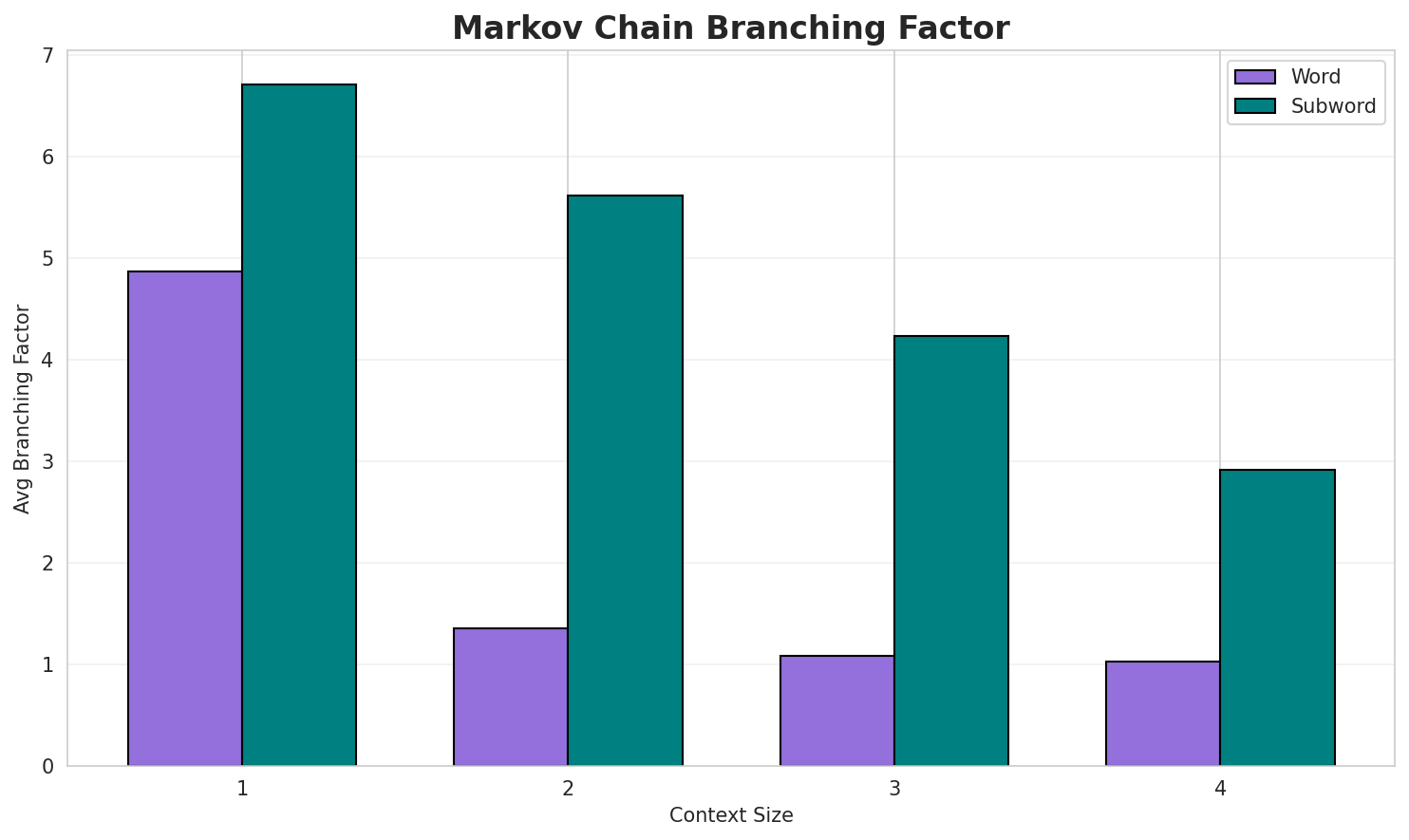

3. Markov Chain Evaluation

Results

| Context | Variant | Avg Entropy | Perplexity | Branching Factor | Unique Contexts | Predictability |
|---|---|---|---|---|---|---|
| 1 | Word | 0.7664 | 1.701 | 4.87 | 268,026 | 23.4% |
| 1 | Subword | 0.8477 | 1.800 | 6.70 | 2,905 | 15.2% |
| 2 | Word | 0.1728 | 1.127 | 1.35 | 1,300,406 | 82.7% |
| 2 | Subword | 0.9102 | 1.879 | 5.61 | 19,472 | 9.0% |
| 3 | Word | 0.0491 | 1.035 | 1.08 | 1,752,396 | 95.1% |
| 3 | Subword | 0.8316 | 1.780 | 4.23 | 109,244 | 16.8% |
| 4 | Word | 0.0176 🏆 | 1.012 | 1.03 | 1,882,972 | 98.2% |
| 4 | Subword | 0.6760 | 1.598 | 2.91 | 461,858 | 32.4% |

Generated Text Samples (Word-based)

Below are text samples generated from each word-based Markov chain model:

Context Size 1:

დო მალურიე აკოანჯარაფა ავტომობილეფი ლეგენდარულო გინართინუ ირდიხას ექიაქო შეთმოფხვადუთ ინფორმაციას მუ...რე გენუა ღურჷ პუბლიკაციაშე ოთხი თარანგელოზიშე უნჩაშო წანეფს ჯავახიშვილი შ აკაკი გელოვანი მითოლოგიური...წანას ქიანაქ ტურისტეფიშ დო მუსხირენ წანას რანკექ მუშობა რსულაშე ახალ ზელანდიას პროვინცია ადმინისტრაც...

Context Size 2:

რესურსეფი ინტერნეტის უილიამ ბლეიკიშ ციტატას მიარცხუ if the doors delacorte press isbn eden paul gene...ჯვ წ 293 261 თიშენი ნამდა თაქ მუდგაზმარენ აბანობურ ფლროაშ დო ფაუნაშ გოვითარაფაშ დო გჷმორინაფაშ ნება ...ართ ართი მუკნაჭარას ნამუსჷთ წანას მიკრობიოლოგი ანტონ ვან ლევენჰუკი ინგლ antonie van leeuwenhoek დ 24...

Context Size 3:

მოლინეფი დუნაბადი ნაღურა კატეგორია მოლინეფი დუნაბადი ნაღურა კატეგორია მოლინეფი დუნაბადი ნაღურა კატეგ...ახალი წანეფიშ ეჭარუაშახ 576 წანა მოლინეფი დუნაბადი ნაღურა კატეგორია მოლინეფი დუნაბადი ნაღურა კატეგორ...წანა მოლინეფი დუნაბადი ნაღურა კატეგორია მოლინეფი დუნაბადი ნაღურა კატეგორია მოლინეფი დუნაბადი ნაღურა ...

Context Size 4:

წანა მოლინეფი დუნაბადი ნაღურა კატეგორია მოლინეფი დუნაბადი ნაღურა კატეგორია მოლინეფი დუნაბადი ნაღურა ...ღურთუთა ფურთუთა მელახი პირელი მესი მანგი კვირკვე მარაშინათუთა ეკენია გჷმათუთა გერგობათუთა ქირსეთუთა ...ფურთუთა მელახი პირელი მესი მანგი კვირკვე მარაშინათუთა ეკენია გჷმათუთა გერგობათუთა ქირსეთუთა 22 ქირსე...
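The report does not publish its generation code. The following is a minimal sketch that assumes a plain count-based chain which samples from observed continuations (the names `build_chain` and `generate` are hypothetical). On a toy corpus built from the recurring category footer, it shows how a high-order chain falls into the same loop visible in the Context Size 3 and 4 samples:

```python
import random
from collections import defaultdict


def build_chain(words, order):
    """Hypothetical helper: map each run of `order` words to the words that follow it."""
    chain = defaultdict(list)
    for i in range(len(words) - order):
        chain[tuple(words[i:i + order])].append(words[i + order])
    return chain


def generate(chain, seed, length, rng=None):
    """Extend `seed` (a tuple of `order` words) up to `length` words.

    Sampling from the raw follower list weights each continuation by its count.
    """
    rng = rng or random.Random(0)
    order = len(seed)
    out = list(seed)
    while len(out) < length:
        followers = chain.get(tuple(out[-order:]))
        if not followers:  # context never seen in training: stop early
            break
        out.append(rng.choice(followers))
    return out


# Toy corpus built from the category footer that dominates the samples above.
corpus = "მოლინეფი დუნაბადი ნაღურა კატეგორია მოლინეფი დუნაბადი ნაღურა კატეგორია".split()
chain = build_chain(corpus, order=3)
sample = generate(chain, ("მოლინეფი", "დუნაბადი", "ნაღურა"), length=8)
print(" ".join(sample))
```

Every order-3 context in this toy corpus has exactly one continuation, so the output simply cycles. This is a small-scale version of the repetition that accompanies the near-1.0 branching factors of the larger word contexts.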

Generated Text Samples (Subword-based)

Below are text samples generated from each subword-based Markov chain model:

Context Size 1:

_fatanacis_გლიურაზი_9075_280_ას_ი_ნტე“._გმანი_რმ

Context Size 2:

ი_მელანმოჭმენიამბშ_ლჷ_დელი_მოშწანკანი_მუე-ტემელუას_

Context Size 3:

იშ_ეპისკი_ანურეატიეფი_ართალი_წანერი_აშ_გოძველ_ურთუალეს

Context Size 4:

_დო_ჯოხოდჷ_ვითოში._ეფი_(ინგლისარიშ_ნუტეფიშ_მონწყუ_ბიგნეფი

Key Findings

- Best Predictability: Context-4 (word) with 98.2% predictability

- Branching Factor: Decreases with context size (more deterministic)

- Memory Trade-off: Larger contexts require more storage (1,882,972 unique contexts for Context-4 word; 461,858 for Context-4 subword)

- Recommendation: Context-3 or Context-4 for text generation

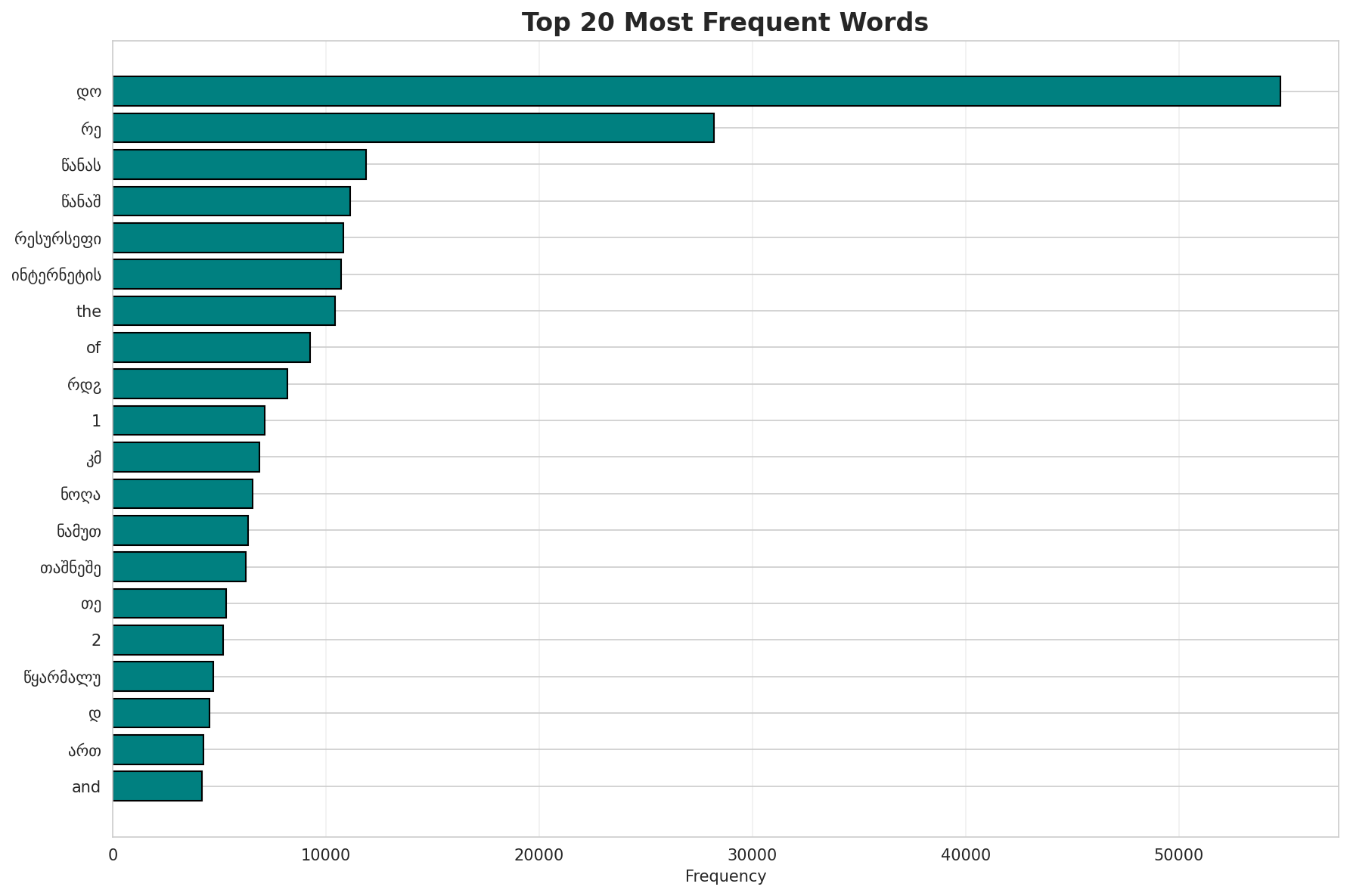

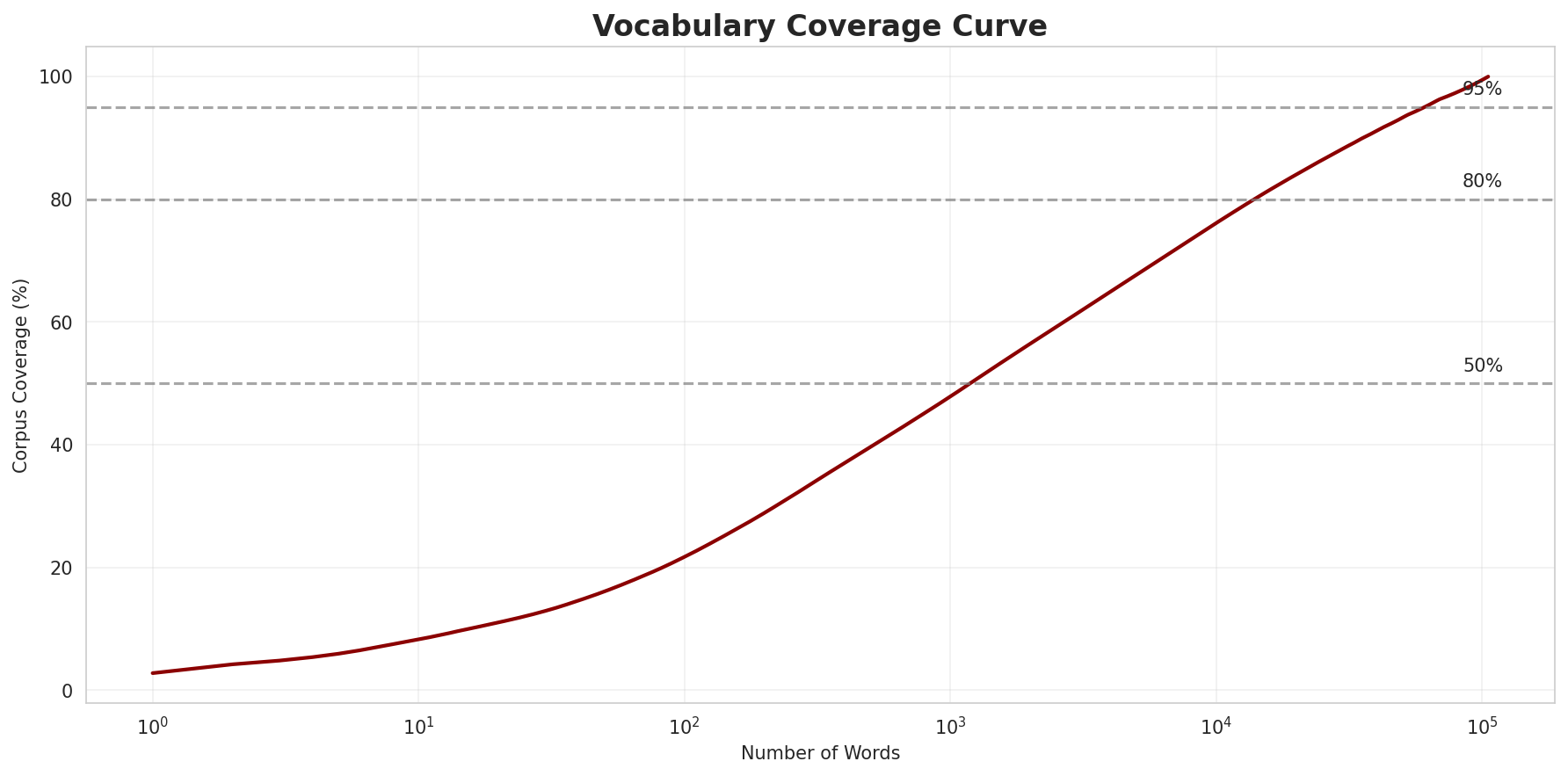

4. Vocabulary Analysis

Statistics

| Metric | Value |
|---|---|
| Vocabulary Size | 105,542 |
| Total Tokens | 1,961,354 |
| Mean Frequency | 18.58 |
| Median Frequency | 3 |
| Frequency Std Dev | 236.83 |
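These statistics can be computed from a tokenized corpus roughly as follows. This is a sketch with a hypothetical helper name. Two details are assumptions: a minimum-count filter (`min_count=2`), inferred from every least-common word having frequency 2, and the use of the population standard deviation, since the report does not say which kind it uses. The reported mean is consistent with Total Tokens divided by Vocabulary Size:

```python
import statistics
from collections import Counter


def vocab_stats(tokens, min_count=2):
    """Hypothetical helper: frequency statistics over word types.

    `min_count` drops rare types; the value 2 is an assumption based on the
    least-common words in the report all having frequency 2.
    """
    freqs = [c for c in Counter(tokens).values() if c >= min_count]
    return {
        "vocabulary_size": len(freqs),
        "total_tokens": sum(freqs),
        "mean_frequency": sum(freqs) / len(freqs),
        "median_frequency": statistics.median(freqs),
        "frequency_std_dev": statistics.pstdev(freqs),  # population std dev (assumed)
    }


# Mean frequency from the reported totals: 1,961,354 tokens / 105,542 types.
print(round(1_961_354 / 105_542, 2))  # 18.58, matching the table
```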

Most Common Words

| Rank | Word | Frequency |
|---|---|---|
| 1 | დო | 54,771 |
| 2 | რე | 28,199 |
| 3 | წანას | 11,878 |
| 4 | წანაშ | 11,129 |
| 5 | რესურსეფი | 10,818 |
| 6 | ინტერნეტის | 10,733 |
| 7 | the | 10,417 |
| 8 | of | 9,251 |
| 9 | რდჷ | 8,188 |
| 10 | 1 | 7,138 |

Least Common Words (from vocabulary)

| Rank | Word | Frequency |
|---|---|---|
| 1 | რაროტონგას | 2 |
| 2 | efo | 2 |
| 3 | ჟირულ | 2 |
| 4 | ლეგიონური | 2 |
| 5 | ანტონესკუშ | 2 |
| 6 | მოსლიქ | 2 |
| 7 | ფბრ | 2 |
| 8 | შპეერიშ | 2 |
| 9 | შერერიქ | 2 |
| 10 | კანემანი | 2 |

Zipf's Law Analysis

| Metric | Value |
|---|---|
| Zipf Coefficient | 0.9583 |
| R² (Goodness of Fit) | 0.995191 |
| Adherence Quality | excellent |

Coverage Analysis

| Top N Words | Coverage |
|---|---|
| Top 100 | 21.7% |
| Top 1,000 | 47.9% |
| Top 5,000 | 67.7% |
| Top 10,000 | 76.1% |

Key Findings

- Zipf Compliance: R²=0.9952 indicates excellent adherence to Zipf's law

- High Frequency Dominance: Top 100 words cover 21.7% of corpus

- Long Tail: The remaining 95,542 words (beyond the top 10,000) account for the last 23.9% of the corpus

5. Word Embeddings Evaluation

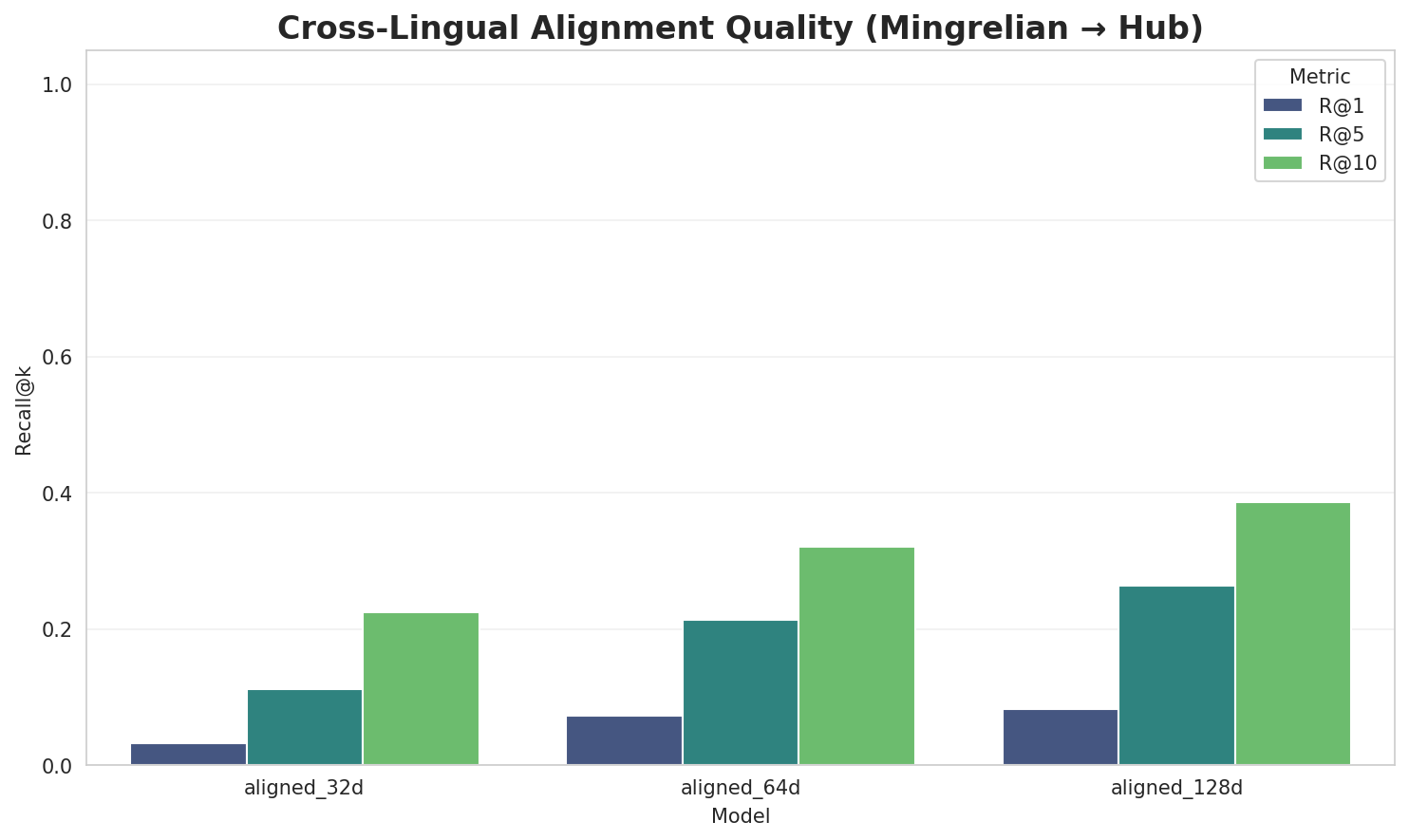

5.1 Cross-Lingual Alignment

5.2 Model Comparison

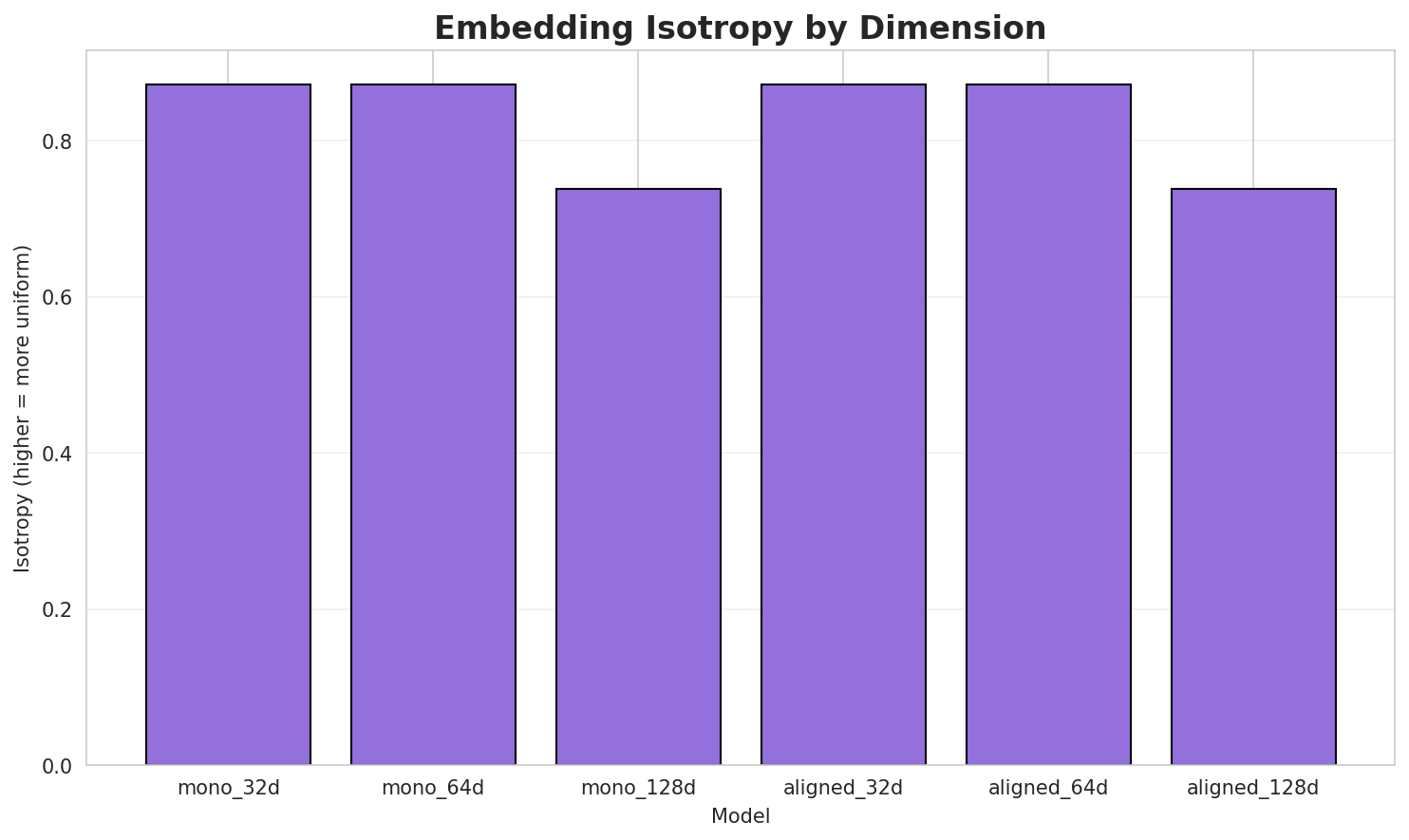

| Model | Dimension | Isotropy | Semantic Density | Alignment R@1 | Alignment R@10 |
|---|---|---|---|---|---|
| mono_32d | 32 | 0.8716 | 0.3197 | N/A | N/A |
| mono_64d | 64 | 0.8723 🏆 | 0.2350 | N/A | N/A |
| mono_128d | 128 | 0.7382 | 0.1853 | N/A | N/A |
| aligned_32d | 32 | 0.8716 | 0.3267 | 0.0320 | 0.2240 |
| aligned_64d | 64 | 0.8723 | 0.2335 | 0.0720 | 0.3200 |
| aligned_128d | 128 | 0.7382 | 0.1809 | 0.0820 | 0.3860 |

Key Findings

- Best Isotropy: mono_64d with 0.8723 (more uniform distribution)

- Semantic Density: Average pairwise similarity is 0.2469 across the six models (range 0.1809 to 0.3267). Lower values indicate better semantic separation.

- Alignment Quality: Aligned models achieve up to 8.2% R@1 in cross-lingual retrieval.

- Recommendation: 128d aligned for best cross-lingual performance

6. Morphological Analysis (Experimental)

This section presents an automated morphological analysis derived from the statistical divergence between word-level and subword-level models. By analyzing where subword predictability spikes and where word-level coverage fails, we can infer linguistic structures without supervised data.

6.1 Productivity & Complexity

| Metric | Value | Interpretation | Recommendation |
|---|---|---|---|
| Productivity Index | 5.000 | High morphological productivity | Reliable analysis |
| Idiomaticity Gap | 0.809 | High formulaic/idiomatic content | - |

6.2 Affix Inventory (Productive Units)

These are the most productive prefixes and suffixes identified by sampling the vocabulary for global substitutability patterns. A unit is considered an affix if stripping it leaves a valid stem that appears in other contexts.
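The affix criterion above (stripping a unit must leave a stem that also appears elsewhere) can be sketched as a simple substitutability check. This is not the pipeline's actual procedure. The helper names, the `min_stem_len` threshold, the reading of "appears in other contexts" as "occurs bare or with a different ending in the vocabulary", and the toy vocabulary are all assumptions:

```python
def suffix_support(vocab, suffix, min_stem_len=2):
    """Hypothetical helper: words whose stem (word minus `suffix`) recurs elsewhere."""
    vocab = set(vocab)
    supported = []
    for word in sorted(vocab):
        if not word.endswith(suffix) or len(word) - len(suffix) < min_stem_len:
            continue
        stem = word[: len(word) - len(suffix)]
        # The stem must occur bare or with some other ending (brute force, O(V^2)).
        if any(other != word and other.startswith(stem) for other in vocab):
            supported.append(word)
    return supported


def prefix_support(vocab, prefix, min_stem_len=2):
    """Mirror image of suffix_support: reverse every string and reuse it."""
    reversed_hits = suffix_support([w[::-1] for w in vocab], prefix[::-1], min_stem_len)
    return [w[::-1] for w in reversed_hits]


# Toy vocabulary (illustrative only).
vocab = ["ლოშიშ", "ლოში", "კარდამონიშ", "კარდამონი", "ზუღაშ", "საკონდიტრო", "კონდიტრო"]
print(suffix_support(vocab, "იშ"))
print(prefix_support(vocab, "სა"))
```

A real run would count supported words per candidate affix and keep the highest-scoring ones; the brute-force inner loop would need an index (for example a trie) at the scale of a 105,542-word vocabulary.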

Productive Prefixes

| Prefix | Examples |
|---|---|
| ა- | ანკლავი, ახვალამას, ალაბამაშ |
| მ- | მოლუსკეფიშ, მიწონუ, მერცხილი |
| მა- | მანჩურალიშ, მაბირე, მაიერჰოფი |
| ს- | სოკომპა, საკონდიტრო, სათავადო |
| გ- | გუდაუთას, გეოლოგიქ, გეორგიოს |
| სა- | საკონდიტრო, სათავადო, სარაი |
| კ- | კინოშე, კონტრ, კარდამონიშ |
| ბ- | ბანკირი, ბრელშა, ბამიანი |

Productive Suffixes

| Suffix | Examples |
|---|---|
| -ი | ანკლავი, პიჩი, ბანკირი |
| -შ | ფორტეპიანოშ, ლოშიშ, მოლუსკეფიშ |
| -იშ | ლოშიშ, მოლუსკეფიშ, კარდამონიშ |
| -ს | გუდაუთას, ახვალამას, ფურთუთას |
| -ა | ბრელშა, ეკონომიკა, სოკომპა |
| -ლი | მერცხილი, რეკორდული, გჷშმაკოროცხალი |
| -აშ | ზუღაშ, ალაბამაშ, ჯანთხილუაშ |
| -რი | ბანკირი, ჯალპაიგური, ბედინერი |

6.3 Bound Stems (Lexical Roots)

Bound stems are high-frequency subword units that are semantically cohesive but rarely appear as standalone words. These often correspond to the 'core' of a word that requires inflection or derivation to be valid.

| Stem | Cohesion | Substitutability | Examples |
|---|---|---|---|
| ალურ | 1.96x | 86 contexts | ცალურ, გალურ, ალურე |
| ანეფ | 1.65x | 147 contexts | წანეფ, წანეფც, ხანეფც |
| რეფი | 1.65x | 143 contexts | ერეფი, არეფი, ცირეფი |
| ნეფი | 1.55x | 148 contexts | თნეფი, ენეფი, ინეფი |
| ლეფი | 1.55x | 139 contexts | შლეფი, დღლეფი, თულეფი |
| ობაშ | 1.86x | 48 contexts | ტობაშ, ნობაშ, უობაში |
| ტეფი | 1.60x | 78 contexts | ჩიტეფი, კეტეფი, ერტეფი |
| ნტერ | 1.83x | 44 contexts | ნტერი, ინტერ, ნტერო |
| რმალ | 1.98x | 29 contexts | ქარმალი, ქარმალქ, წყარმალ |
| ურსე | 2.19x | 19 contexts | კურსეფი, კურსეფს, რსურსეფი |
| ტერნ | 1.91x | 25 contexts | ტერნი, შტერნი, სტერნი |
| უეფი | 1.44x | 66 contexts | ჭუეფი, კუეფი, სუეფი |

6.4 Affix Compatibility (Co-occurrence)

This table shows which prefixes and suffixes most frequently co-occur on the same stems, revealing the 'stacking' rules of the language's morphology.

| Prefix | Suffix | Frequency | Examples |
|---|---|---|---|
| მ- | -ი | 174 words | მუნაღელი, მიზანტროპი |
| ა- | -ი | 150 words | ანდალუსიარი, ალიფი |
| მ- | -შ | 136 words | მარსულებერეფიშ, მიოცენიშ |
| კ- | -ი | 110 words | კარერი, კუჩხეფი |
| გ- | -ი | 103 words | გიბრალტარი, გჷმორკვიაფილი |
| მ- | -ს | 91 words | მონძეეფს, მანუსკრიპტეფს |
| კ- | -შ | 87 words | კირქუაშ, კონგილიოშ |
| ბ- | -ი | 87 words | ბრაზავილი, ბურჟი |
| მ- | -იშ | 87 words | მარსულებერეფიშ, მიოცენიშ |
| ს- | -ი | 86 words | სტარი, სუმერი |

6.5 Recursive Morpheme Segmentation

Using Recursive Hierarchical Substitutability, we decompose complex words into their constituent morphemes. This approach handles nested affixes (e.g., prefix-prefix-root-suffix).

| Word | Suggested Split | Confidence | Stem |
|---|---|---|---|
| განვითარების | განვითარებ-ი-ს | 7.5 | ი |
| ტრაგედიას | ტრაგედ-ი-ას | 7.5 | ი |
| მანერჰეიმი | მანერჰე-ი-მი | 7.5 | ი |
| აკოდგინელი | აკოდგინ-ე-ლი | 7.5 | ე |
| ქვერსემიაშ | ქვერსემ-ი-აშ | 7.5 | ი |
| დეფინიციათ | დეფინიც-ი-ათ | 7.5 | ი |
| ვიკივოიაჟის | ვიკივოიაჟ-ი-ს | 7.5 | ი |
| აპლიკაცია | აპლიკაც-ი-ა | 7.5 | ი |
| მუჭომეფითიე | მუჭომეფით-ი-ე | 7.5 | ი |
| ბანჯარმასინი | ბანჯარმა-სი-ნი | 7.5 | სი |
| ჟირსქესამიე | ჟირსქესამ-ი-ე | 7.5 | ი |
| ქუდასქიდჷ | ქუდასქ-ი-დჷ | 7.5 | ი |
| რეზერვაცია | რეზერვაც-ი-ა | 7.5 | ი |
| აეროპორტიე | აეროპორტ-ი-ე | 7.5 | ი |
| დისტილაცია | დისტილაც-ი-ა | 7.5 | ი |

6.6 Linguistic Interpretation

Automated Insight: Mingrelian shows high morphological productivity. Its subword models reach far lower perplexity than its word models (483 versus 14,545 for 2-grams), suggesting a rich system of affixation or compounding.

Note on Idiomaticity: The high Idiomaticity Gap suggests a large number of frequent multi-word expressions or formulaic sequences that are statistically distinct from their component parts.

7. Summary & Recommendations

Production Recommendations

| Component | Recommended | Rationale |
|---|---|---|
| Tokenizer | 64k BPE | Best compression (4.27x) |
| N-gram | 2-gram (subword) | Lowest perplexity (483) |
| Markov | Context-4 (word) | Highest predictability (98.2%) |
| Embeddings | 64d | Highest isotropy (0.8723); aligned_128d gives the best cross-lingual recall |

Appendix: Metrics Glossary & Interpretation Guide

This section provides definitions, intuitions, and guidance for interpreting the metrics used throughout this report.

Tokenizer Metrics

Compression Ratio

Definition: The ratio of characters to tokens (chars/token). Measures how efficiently the tokenizer represents text.

Intuition: Higher compression means fewer tokens needed to represent the same text, reducing sequence lengths for downstream models. A 3x compression means ~3 characters per token on average.

What to seek: Higher is generally better for efficiency, but extremely high compression may indicate overly aggressive merging that loses morphological information.
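A minimal sketch of the definition follows. The function name is hypothetical, and whether the pipeline counts whitespace as characters is not stated. Inverting the definition on the tokenizer results table (characters = compression × total tokens) gives about 1.31 million characters for all four vocabulary sizes. This is consistent with the tokenizers having been scored on the same text:

```python
from typing import Sequence


def compression_ratio(text: str, tokens: Sequence[str]) -> float:
    """Characters of input text per produced token (chars/token)."""
    return len(text) / len(tokens)


# (compression, total tokens) copied from the tokenizer results table.
ROWS = {"8k": (3.307, 395_121), "16k": (3.672, 355_865),
        "32k": (3.993, 327_283), "64k": (4.270, 306_011)}

for vocab, (ratio, total_tokens) in ROWS.items():
    # Implied character count; about 1.31 million for every row.
    print(vocab, round(ratio * total_tokens))
```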

Average Token Length (Fertility)

Definition: Mean number of characters per token produced by the tokenizer.

Intuition: Reflects the granularity of tokenization. Longer tokens capture more context but may struggle with rare words; shorter tokens are more flexible but increase sequence length.

What to seek: Values between 2 and 5 characters are typical for most languages. Morphologically rich languages (such as Arabic) may benefit from slightly longer tokens.
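Here is a sketch of the definition with a hypothetical helper name. The report does not say whether the `▁` word-boundary marker shown in the tokenization examples counts toward a token's length; the `marker` parameter below assumes it does not:

```python
def average_token_length(tokens, marker="\u2581"):
    """Mean characters per token, not counting the word-boundary marker (assumed)."""
    lengths = [len(tok.replace(marker, "")) for tok in tokens]
    return sum(lengths) / len(lengths)


# Tokens taken from Sample 1: "▁წანა" (4 chars), "." (1), "▁ახალი" (5).
print(average_token_length(["\u2581წანა", ".", "\u2581ახალი"]))
```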

Unknown Token Rate (OOV Rate)

Definition: Percentage of tokens that map to the unknown/UNK token, indicating words the tokenizer cannot represent.

Intuition: Lower OOV means better vocabulary coverage. High OOV indicates the tokenizer encounters many unseen character sequences.

What to seek: Below 1% is excellent; below 5% is acceptable. BPE tokenizers typically achieve very low OOV due to subword fallback.
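The rate is a simple proportion over token ids. In this sketch, `unk_id=0` is an assumption: it is SentencePiece's default unknown-token id, but the report does not state which id its tokenizers use:

```python
def unk_rate(token_ids, unk_id=0):
    """Percentage of token ids equal to `unk_id` (0 assumed, SentencePiece's default)."""
    return 100.0 * sum(1 for t in token_ids if t == unk_id) / len(token_ids)


print(unk_rate([0, 5, 7, 0]))  # 50.0
# At the 8k tokenizer's reported 0.0486%, about 192 of its 395,121 tokens are UNK.
print(round(395_121 * 0.0486 / 100))
```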

N-gram Model Metrics

Perplexity

Definition: Measures how "surprised" the model is by test data. Mathematically: 2^(cross-entropy). Lower values indicate better prediction.

Intuition: If perplexity is 100, the model is as uncertain as if choosing uniformly among 100 options at each step. A perplexity of 10 means effectively choosing among 10 equally likely options.

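What to seek: Lower is better. Given enough data, perplexity decreases with larger n-grams (more context); on smaller corpora, sparsity can reverse this trend, as with the subword models in this report. Values vary widely by language and corpus size.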
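A minimal sketch makes the relationship concrete (function names are illustrative). Given the probability the model assigned to each token of held-out text, cross-entropy is the mean negative log2 probability, and perplexity is 2 raised to that value. Applying this to the table's 2-gram subword entropy recovers the reported perplexity of 483, up to rounding of the entropy:

```python
import math


def cross_entropy_bits(probs):
    """Mean negative log2 probability assigned to each observed token."""
    return -sum(math.log2(p) for p in probs) / len(probs)


def perplexity(probs):
    """2 ** cross-entropy: the effective number of equally likely choices."""
    return 2 ** cross_entropy_bits(probs)


# Always assigning 0.1 to the observed token behaves like choosing among 10 options.
print(perplexity([0.1] * 20))
# The table's 2-gram subword entropy of 8.92 bits corresponds to a perplexity near 483.
print(round(2 ** 8.92))
```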

Entropy

Definition: Average information content (in bits) needed to encode the next token given the context. Related to perplexity: perplexity = 2^entropy.

Intuition: High entropy means high uncertainty/randomness; low entropy means predictable patterns. Natural language typically has entropy between 1 and 4 bits per character.

What to seek: Lower entropy indicates more predictable text patterns. Entropy typically decreases as n-gram size increases when the corpus is large enough.

Coverage (Top-K)

Definition: Percentage of corpus occurrences explained by the top K most frequent n-grams.

Intuition: High coverage with few patterns indicates repetitive/formulaic text; low coverage suggests diverse vocabulary usage.

What to seek: Depends on use case. For language modeling, moderate coverage (40-60% with top-1000) is typical for natural text.
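Top-K coverage can be sketched as follows (helper names are hypothetical). The function works on any token sequence, so the same code applies to word-level or character-level n-grams:

```python
from collections import Counter


def ngrams(tokens, n):
    """All contiguous n-token windows, as tuples."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


def top_k_coverage(tokens, n, k):
    """Percentage of all n-gram occurrences accounted for by the k most frequent n-grams."""
    counts = Counter(ngrams(tokens, n))
    covered = sum(count for _, count in counts.most_common(k))
    return 100.0 * covered / sum(counts.values())


words = "a b a b a c".split()
# Bigrams: (a,b) x2, (b,a) x2, (a,c) x1; the top one covers 2 of 5.
print(top_k_coverage(words, n=2, k=1))  # 40.0
```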

Markov Chain Metrics

Average Entropy

Definition: Mean entropy across all contexts, measuring average uncertainty in next-word prediction.

Intuition: Lower entropy means the model is more confident about what comes next. Context-1 has high entropy (many possible next words); Context-4 has low entropy (few likely continuations).

What to seek: Decreasing entropy with larger context sizes. Very low entropy (<0.1) indicates highly deterministic transitions.
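Here is a sketch of average entropy for an order-k chain. The report does not say whether contexts are averaged uniformly or weighted by how often they occur; this version assumes a uniform (unweighted) mean:

```python
import math
from collections import Counter, defaultdict


def transition_counts(tokens, order):
    """Count next-token occurrences for every context of `order` tokens."""
    table = defaultdict(Counter)
    for i in range(len(tokens) - order):
        table[tuple(tokens[i:i + order])][tokens[i + order]] += 1
    return table


def average_entropy(table):
    """Unweighted mean, over contexts, of the next-token entropy in bits (assumed averaging)."""
    entropies = []
    for followers in table.values():
        total = sum(followers.values())
        entropies.append(-sum((c / total) * math.log2(c / total) for c in followers.values()))
    return sum(entropies) / len(entropies)


# Context "a" is followed by b or c (1 bit); context "b" always by a (0 bits).
table = transition_counts("a b a c".split(), order=1)
print(average_entropy(table))  # 0.5
```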

Branching Factor

Definition: Average number of unique next tokens observed for each context.

Intuition: High branching = many possible continuations (flexible but uncertain); low branching = few options (predictable but potentially repetitive).

What to seek: Branching factor should decrease with context size. Values near 1.0 indicate nearly deterministic chains.
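A matching sketch for branching factor counts distinct continuations per context and averages them. The uniform averaging over contexts is an assumption:

```python
from collections import defaultdict


def branching_factor(tokens, order):
    """Mean number of distinct next tokens per observed context (uniform average, assumed)."""
    followers = defaultdict(set)
    for i in range(len(tokens) - order):
        followers[tuple(tokens[i:i + order])].add(tokens[i + order])
    return sum(len(nexts) for nexts in followers.values()) / len(followers)


print(branching_factor("a b a c".split(), order=1))  # 1.5: "a" -> {b, c}, "b" -> {a}
print(branching_factor("a b a c".split(), order=2))  # 1.0: every context is deterministic
```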

Predictability

Definition: Derived metric: (1 - normalized_entropy) × 100%. Indicates how deterministic the model's predictions are.

Intuition: 100% predictability means the next word is always certain; 0% means completely random. Real text falls between these extremes.

What to seek: Higher predictability for text generation quality, but too high (>98%) may produce repetitive output.
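The report does not spell out how `normalized_entropy` is computed. Plugging the Avg Entropy column of the Markov table directly into the formula reproduces every Predictability value to within 0.05 percentage points, so the sketch below uses it that way. Treat this as an inference from the table rather than documented pipeline behavior:

```python
# (Avg Entropy, reported Predictability %) copied from the Markov chain table.
MARKOV_ROWS = {
    ("1", "Word"): (0.7664, 23.4), ("1", "Subword"): (0.8477, 15.2),
    ("2", "Word"): (0.1728, 82.7), ("2", "Subword"): (0.9102, 9.0),
    ("3", "Word"): (0.0491, 95.1), ("3", "Subword"): (0.8316, 16.8),
    ("4", "Word"): (0.0176, 98.2), ("4", "Subword"): (0.6760, 32.4),
}


def predictability(normalized_entropy):
    """(1 - normalized_entropy) x 100%, per the definition above."""
    return (1.0 - normalized_entropy) * 100.0


max_gap = max(abs(predictability(h) - reported) for h, reported in MARKOV_ROWS.values())
print(f"largest gap: {max_gap:.2f} percentage points")
```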

Vocabulary & Zipf's Law Metrics

Zipf's Coefficient

Definition: The slope of the log-log plot of word frequency vs. rank. Zipf's law predicts this should be approximately -1.

Intuition: A coefficient near -1 indicates the corpus follows natural language patterns where a few words are very common and most words are rare.

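What to seek: Values between -0.8 and -1.2 indicate a healthy natural-language distribution. This report lists the coefficient as a positive magnitude (0.9583), which falls in the equivalent range. Deviations may suggest domain-specific or artificial text.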

R² (Coefficient of Determination)

Definition: Measures how well the linear fit explains the frequency-rank relationship. Ranges from 0 to 1.

Intuition: R² near 1.0 means the data closely follows Zipf's law; lower values indicate deviation from expected word frequency patterns.

What to seek: R² > 0.95 is excellent; > 0.99 indicates near-perfect Zipf adherence typical of large natural corpora.
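Both the Zipf coefficient and R² come from an ordinary least-squares line through the (log rank, log frequency) points. The dependency-free sketch below uses a hypothetical function name. The slope it returns is negative for Zipfian data, and on a perfectly Zipfian list it gives a slope of -1 and an R² of 1:

```python
import math


def zipf_fit(frequencies):
    """Least-squares fit of log(frequency) on log(rank); returns (slope, r_squared)."""
    freqs = sorted(frequencies, reverse=True)
    xs = [math.log(rank) for rank in range(1, len(freqs) + 1)]
    ys = [math.log(f) for f in freqs]
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ss_res = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return slope, 1 - ss_res / ss_tot


slope, r2 = zipf_fit([1000 / rank for rank in range(1, 101)])
print(round(slope, 6), round(r2, 6))
```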

Vocabulary Coverage

Definition: Cumulative percentage of corpus tokens accounted for by the top N words.

Intuition: Shows how concentrated word usage is. If top-100 words cover 50% of text, the corpus relies heavily on common words.

What to seek: Top-100 covering 30-50% is typical. Higher coverage indicates more repetitive text; lower suggests richer vocabulary.

Word Embedding Metrics

Isotropy

Definition: Measures how uniformly distributed vectors are in the embedding space. Computed as the ratio of minimum to maximum singular values.

Intuition: High isotropy (near 1.0) means vectors spread evenly in all directions; low isotropy means vectors cluster in certain directions, reducing expressiveness.

What to seek: Higher isotropy generally indicates better-quality embeddings. Values > 0.1 are reasonable; > 0.3 is good. Lower-dimensional embeddings tend to have higher isotropy.
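A direct sketch of the definition uses NumPy's SVD. The report does not state whether it mean-centers or normalizes the vectors before the decomposition; this version uses them as given:

```python
import numpy as np


def singular_value_isotropy(embeddings):
    """Ratio of the smallest to the largest singular value of the embedding matrix."""
    singular_values = np.linalg.svd(np.asarray(embeddings, dtype=float), compute_uv=False)
    return float(singular_values.min() / singular_values.max())


print(singular_value_isotropy(np.eye(4)))                  # perfectly isotropic
print(singular_value_isotropy(np.diag([1.0, 2.0, 4.0])))   # about 0.25: stretched along one axis
```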

Average Norm

Definition: Mean magnitude (L2 norm) of word vectors in the embedding space.

Intuition: Indicates the typical "length" of vectors. Consistent norms suggest stable training; high variance may indicate some words are undertrained.

What to seek: Relatively consistent norms across models. The absolute value matters less than consistency (low std deviation).
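Here is a short NumPy sketch of the average norm and its spread. The use of the population standard deviation (NumPy's default) is an assumption:

```python
import numpy as np


def norm_stats(embeddings):
    """Mean and (population) standard deviation of the rows' L2 norms."""
    norms = np.linalg.norm(np.asarray(embeddings, dtype=float), axis=1)
    return float(norms.mean()), float(norms.std())


print(norm_stats([[3.0, 4.0], [6.0, 8.0]]))  # (7.5, 2.5): norms are 5 and 10
```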

Cosine Similarity

Definition: Measures angular similarity between vectors, ranging from -1 (opposite) to 1 (identical direction).

Intuition: Words with similar meanings should have high cosine similarity. This is the standard metric for semantic relatedness in embeddings.

What to seek: Semantically related words should score > 0.5; unrelated words should be near 0. Synonyms often score > 0.7.
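Cosine similarity takes only a few lines of plain Python. This is a sketch; production code would typically vectorize it with NumPy:

```python
import math


def cosine_similarity(u, v):
    """cos(angle) = (u . v) / (|u| |v|); ranges from -1 to 1."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


print(cosine_similarity([1.0, 0.0], [0.0, 1.0]))   # 0.0 (orthogonal)
print(cosine_similarity([1.0, 0.0], [-1.0, 0.0]))  # -1.0 (opposite)
```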

t-SNE Visualization

Definition: t-Distributed Stochastic Neighbor Embedding - a dimensionality reduction technique that preserves local structure for visualization.

Intuition: Clusters in t-SNE plots indicate groups of semantically related words. Spread indicates vocabulary diversity; tight clusters suggest semantic coherence.

What to seek: Meaningful clusters (e.g., numbers together, verbs together). Avoid over-interpreting distances - t-SNE preserves local, not global, structure.

General Interpretation Guidelines

- Compare within model families: Metrics are most meaningful when comparing models of the same type (e.g., 8k vs 64k tokenizer).

- Consider trade-offs: Better performance on one metric often comes at the cost of another (e.g., compression vs. OOV rate).

- Context matters: Optimal values depend on downstream tasks. Text generation may prioritize different metrics than classification.

- Corpus influence: All metrics are influenced by corpus characteristics. Wikipedia text differs from social media or literature.

- Language-specific patterns: Morphologically rich languages (like Arabic) may show different optimal ranges than analytic languages.

Visualizations Index

| Visualization | Description |
|---|---|
| Tokenizer Compression | Compression ratios by vocabulary size |
| Tokenizer Fertility | Average token length by vocabulary |
| Tokenizer OOV | Unknown token rates |
| Tokenizer Total Tokens | Total tokens by vocabulary |
| N-gram Perplexity | Perplexity by n-gram size |
| N-gram Entropy | Entropy by n-gram size |
| N-gram Coverage | Top pattern coverage |
| N-gram Unique | Unique n-gram counts |
| Markov Entropy | Entropy by context size |
| Markov Branching | Branching factor by context |
| Markov Contexts | Unique context counts |
| Zipf's Law | Frequency-rank distribution with fit |
| Vocab Frequency | Word frequency distribution |
| Top 20 Words | Most frequent words |
| Vocab Coverage | Cumulative coverage curve |
| Embedding Isotropy | Vector space uniformity |
| Embedding Norms | Vector magnitude distribution |
| Embedding Similarity | Word similarity heatmap |
| Nearest Neighbors | Similar words for key terms |
| t-SNE Words | 2D word embedding visualization |
| t-SNE Sentences | 2D sentence embedding visualization |
| Position Encoding | Encoding method comparison |
| Model Sizes | Storage requirements |
| Performance Dashboard | Comprehensive performance overview |

About This Project

Data Source

Models trained on wikipedia-monthly - a monthly snapshot of Wikipedia articles across 300+ languages.

Project

A project by Wikilangs - Open-source NLP models for every Wikipedia language.

Maintainer

Citation

If you use these models in your research, please cite:

@misc{wikilangs2025,
  author = {Kamali, Omar},
  title = {Wikilangs: Open NLP Models for Wikipedia Languages},
  year = {2025},
  doi = {10.5281/zenodo.18073153},
  publisher = {Zenodo},
  url = {https://huggingface.co/wikilangs},
  institution = {Omneity Labs}
}

License

MIT License - Free for academic and commercial use.

Links

- 🌐 Website: wikilangs.org

- 🤗 Models: huggingface.co/wikilangs

- 📊 Data: wikipedia-monthly

- 👤 Author: Omar Kamali

- 🤝 Sponsor: Featherless AI

Generated by Wikilangs Models Pipeline

Report Date: 2026-01-11 05:18:26