Improve model card: Add pipeline tag, library name, license, abstract, and project details

#1

by nielsr HF Staff - opened

README.md

CHANGED

|

@@ -1,5 +1,48 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

**VideoSSR-8B** is a multimodal large language model (MLLM) fine-tuned from `Qwen-VL-8B-Instruct` for enhanced video understanding. It is trained using a novel **Video Self-Supervised Reinforcement Learning (VideoSSR)** framework, which generates its own high-quality training data directly from videos, eliminating the need for manual annotation.

|

| 2 |

|

| 3 |

- **Base Model:** `Qwen-VL-8B-Instruct`

|

| 4 |

- **Paper:** [VideoSSR: Video Self-Supervised Reinforcement Learning](https://arxiv.org/abs/2511.06281)

|

| 5 |

-

- **Code:** [https://github.com/lcqysl/VideoSSR](https://github.com/lcqysl/VideoSSR)

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

pipeline_tag: video-text-to-text

|

| 3 |

+

library_name: transformers

|

| 4 |

+

license: apache-2.0

|

| 5 |

+

---

|

| 6 |

+

|

| 7 |

+

# VideoSSR: Video Self-Supervised Reinforcement Learning

|

| 8 |

+

|

| 9 |

+

[](https://arxiv.org/abs/2511.06281)

|

| 10 |

+

[](https://huggingface.co/yhx12/VideoSSR)

|

| 11 |

+

[](https://huggingface.co/datasets/yhx12/VideoSSR-30k)

|

| 12 |

+

[](https://huggingface.co/datasets/yhx12/VIUBench)

|

| 13 |

+

|

| 14 |

**VideoSSR-8B** is a multimodal large language model (MLLM) fine-tuned from `Qwen-VL-8B-Instruct` for enhanced video understanding. It is trained using a novel **Video Self-Supervised Reinforcement Learning (VideoSSR)** framework, which generates its own high-quality training data directly from videos, eliminating the need for manual annotation.

|

| 15 |

|

| 16 |

- **Base Model:** `Qwen-VL-8B-Instruct`

|

| 17 |

- **Paper:** [VideoSSR: Video Self-Supervised Reinforcement Learning](https://arxiv.org/abs/2511.06281)

|

| 18 |

+

- **Code/Project Page:** [https://github.com/lcqysl/VideoSSR](https://github.com/lcqysl/VideoSSR)

|

| 19 |

+

|

| 20 |

+

## Paper Abstract

|

| 21 |

+

|

| 22 |

+

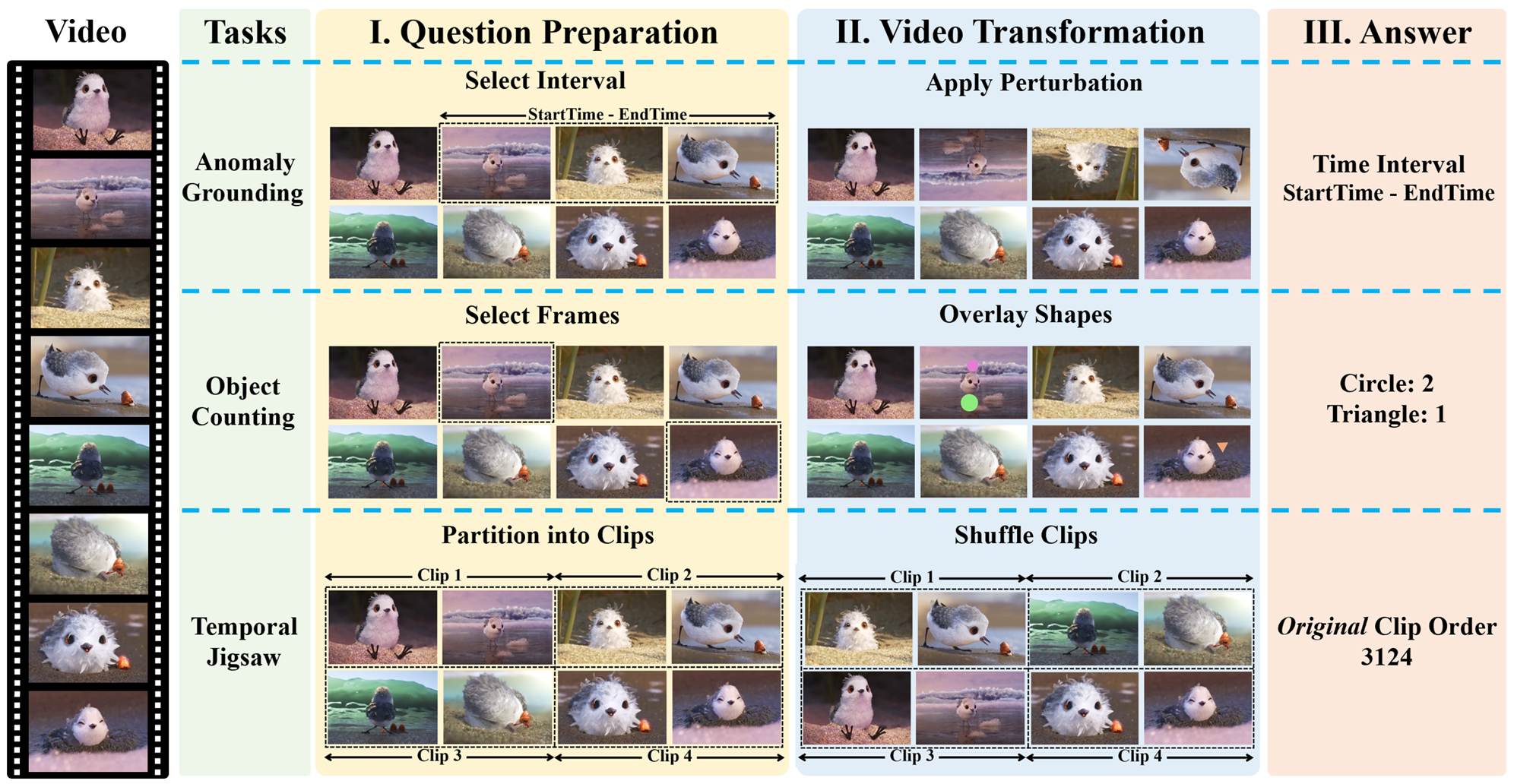

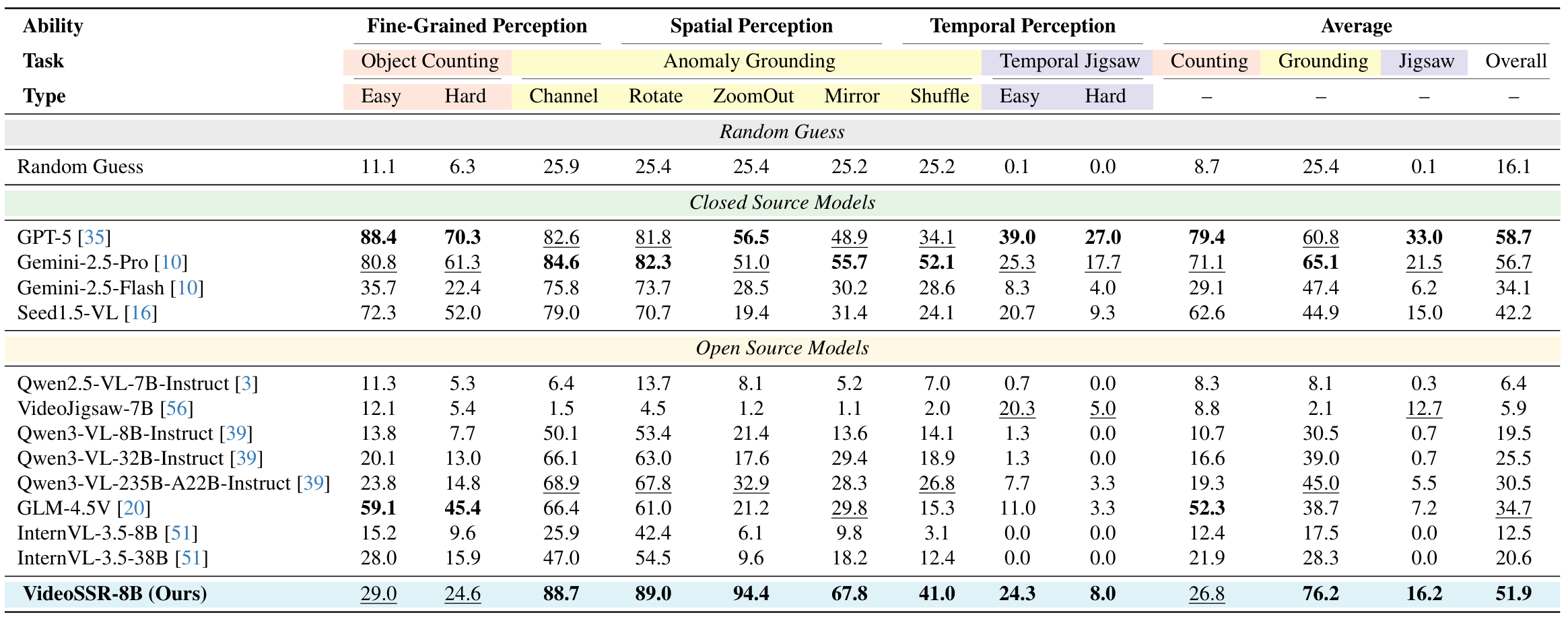

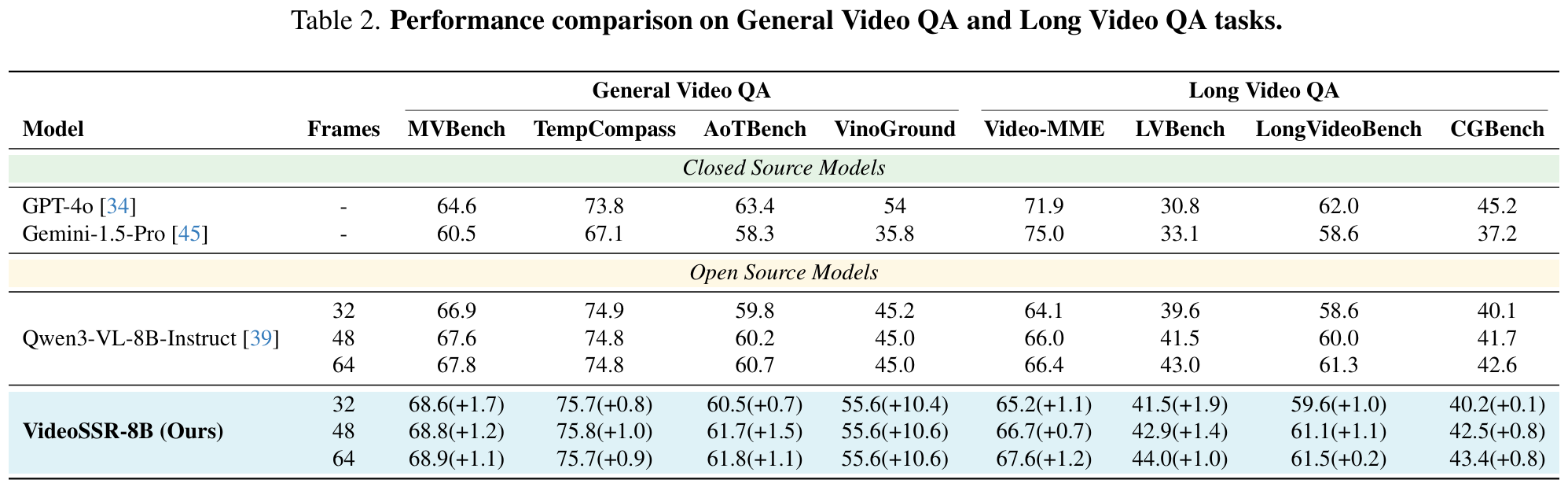

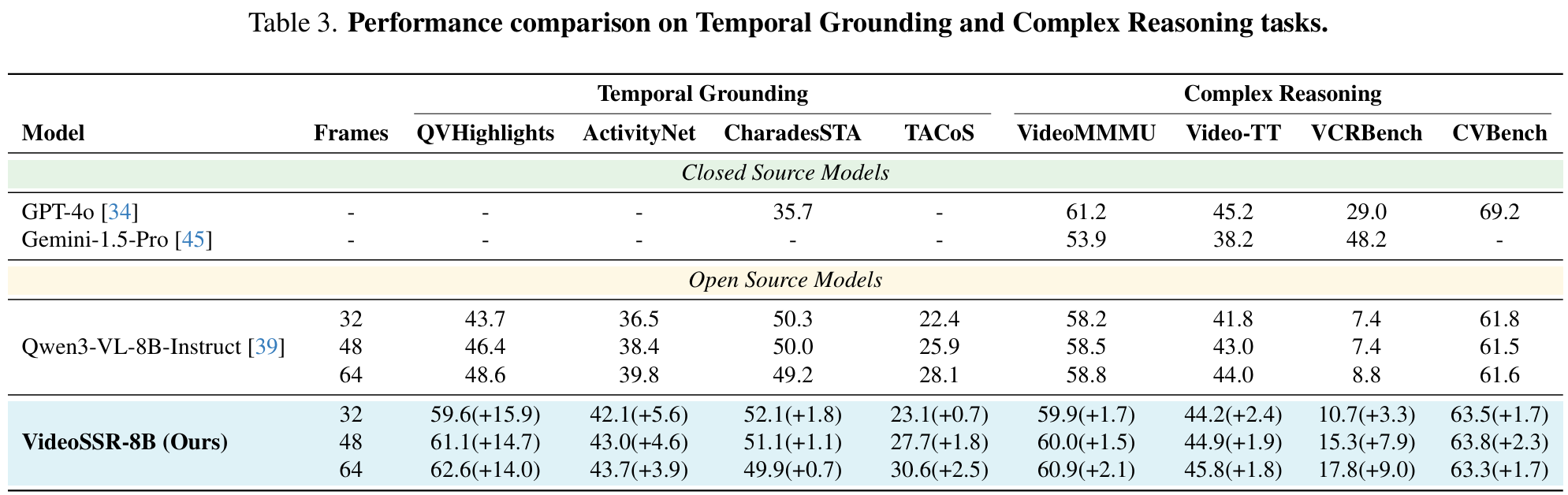

Reinforcement Learning with Verifiable Rewards (RLVR) has substantially advanced the video understanding capabilities of Multimodal Large Language Models (MLLMs). However, the rapid progress of MLLMs is outpacing the complexity of existing video datasets, while the manual annotation of new, high-quality data remains prohibitively expensive. This work investigates a pivotal question: Can the rich, intrinsic information within videos be harnessed to self-generate high-quality, verifiable training data? To investigate this, we introduce three self-supervised pretext tasks: Anomaly Grounding, Object Counting, and Temporal Jigsaw. We construct the Video Intrinsic Understanding Benchmark (VIUBench) to validate their difficulty, revealing that current state-of-the-art MLLMs struggle significantly on these tasks. Building upon these pretext tasks, we develop the VideoSSR-30K dataset and propose VideoSSR, a novel video self-supervised reinforcement learning framework for RLVR. Extensive experiments across 17 benchmarks, spanning four major video domains (General Video QA, Long Video QA, Temporal Grounding, and Complex Reasoning), demonstrate that VideoSSR consistently enhances model performance, yielding an average improvement of over 5%. These results establish VideoSSR as a potent foundational framework for developing more advanced video understanding in MLLMs. The code is available at this https URL .

|

| 23 |

+

|

| 24 |

+

**Authors:** Zefeng He, Xiaoye Qu, Yafu Li, Siyuan Huang, Daizong Liu, Yu Cheng

|

| 25 |

+

|

| 26 |

+

## Related Hugging Face Resources

|

| 27 |

+

|

| 28 |

+

* **Dataset:** [VideoSSR-30K](https://huggingface.co/datasets/yhx12/VideoSSR-30k)

|

| 29 |

+

* **Benchmark:** [VIUBench](https://huggingface.co/datasets/yhx12/VIUBench)

|

| 30 |

+

|

| 31 |

+

## Model Details

|

| 32 |

+

|

| 33 |

+

VideoSSR is a novel framework designed to enhance the video understanding capabilities of Multimodal Large Language Models (MLLMs). Instead of relying on prohibitively expensive manually annotated data or biased model-annotated data, VideoSSR harnesses the rich, intrinsic information within videos to generate high-quality, verifiable training data. We introduce three self-supervised pretext tasks: Anomaly Grounding, Object Counting, and Temporal Jigsaw. Building upon these tasks, we construct the VideoSSR-30K dataset and train models with Reinforcement Learning with Verifiable Rewards (RLVR), establishing a potent foundational framework for developing more advanced video understanding in MLLMs.

|

| 34 |

+

|

| 35 |

+

### Pretext Tasks

|

| 36 |

+

|

| 37 |

+

|

| 38 |

+

### VIUBench

|

| 39 |

+

To rigorously test the capabilities of modern MLLMs on fundamental video understanding, we introduce the **V**ideo **I**ntrinsic **U**nderstanding **Bench**mark (**VIUBench**). This benchmark is systematically constructed from our three self-supervised pretext tasks: Anomaly Grounding, Object Counting, and Temporal Jigsaw. It specifically evaluates a model's ability to reason about intrinsic video properties—such as temporal coherence and fine-grained details—independent of external annotations. Our results show that VIUBench poses a significant challenge even for the most advanced models, highlighting a critical area for improvement and validating the effectiveness of our approach.

|

| 40 |

+

|

| 41 |

+

|

| 42 |

+

### Performance Highlights

|

| 43 |

+

|

| 44 |

+

|

| 45 |

+

|

| 46 |

+

## Acknowledgement

|

| 47 |

+

|

| 48 |

+

This work was developed upon **[verl](https://github.com/volcengine/verl)**. We also thank the great work of **[Visual Jigsaw](https://github.com/penghao-wu/visual_jigsaw)** for the inspiration.

|