NSER-IBVS: Efficient Self-Supervised Neuro-Analytic Visual Servoing for Real-time Quadrotor Control

ICCV 2025 Workshops - Oral Presentation

Sebastian Mocanu · Sebastian-Ion Nae · Mihai-Eugen Barbu · Marius Leordeanu

Model Description

This repository contains pre-trained models for NSER-IBVS (Numerically Stable Efficient Reduced Image-Based Visual Servoing), a self-supervised framework for vision-based quadrotor control.

The system uses a teacher-student architecture in which a compact 1.7M-parameter student network learns to control a drone by imitating an analytical IBVS teacher. The student runs inference roughly 11x faster than the teacher (540 FPS vs. 48 FPS) while maintaining comparable accuracy.

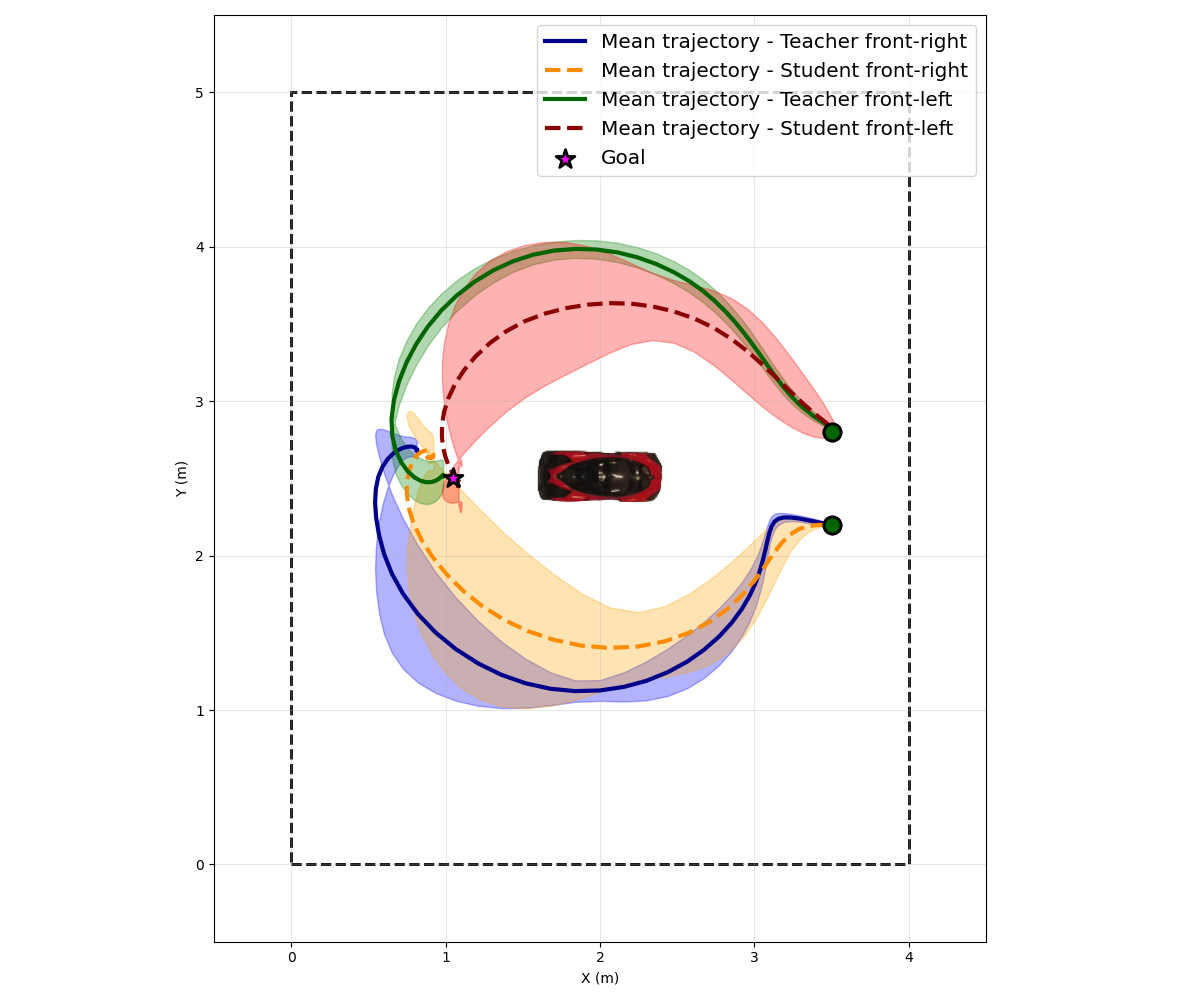

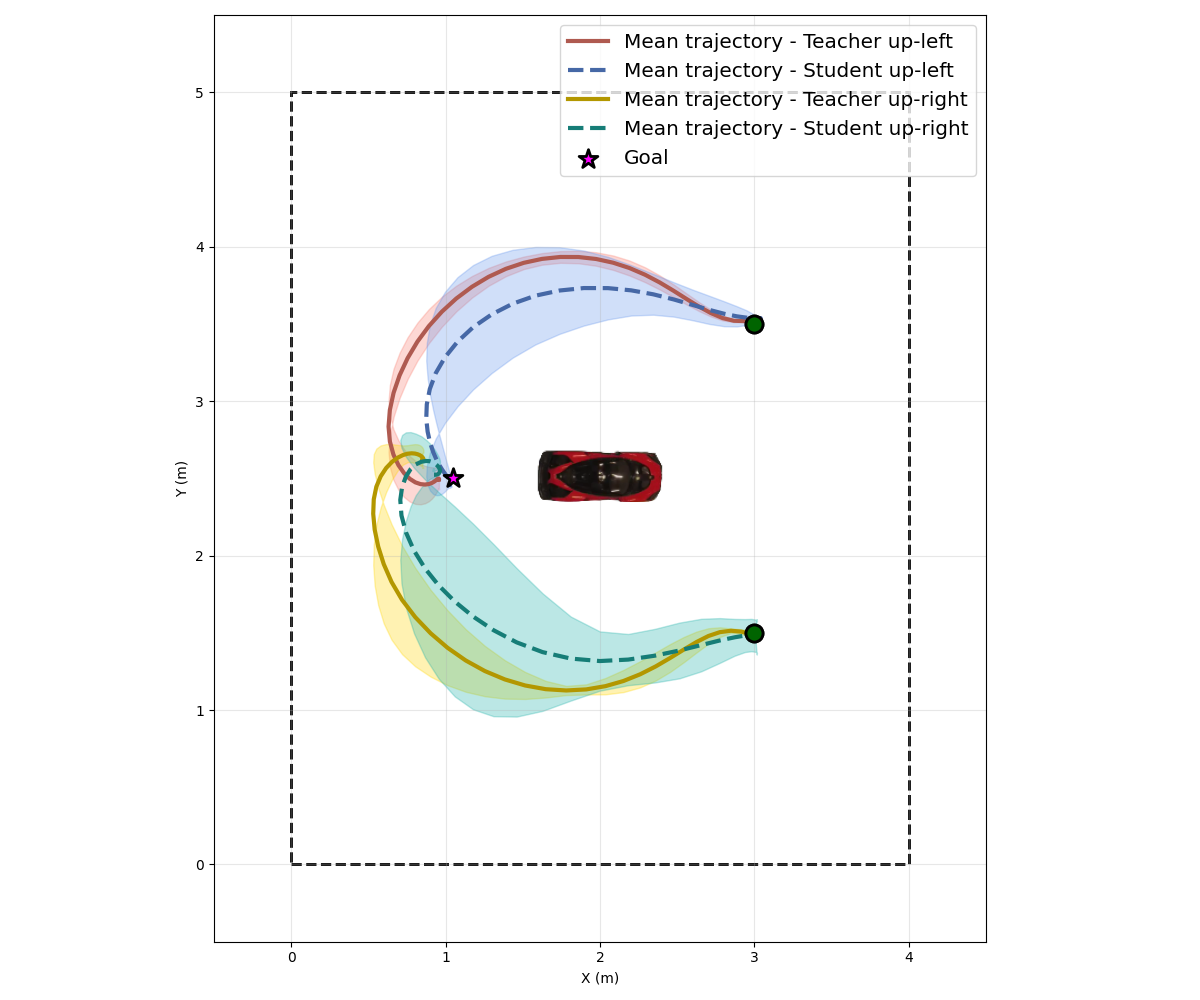

Teacher vs. student trajectories from two starting points (front view and up view).

Intended Use

- Primary use: Vision-based autonomous drone control for target tracking

- Target users: Robotics researchers, drone developers, computer vision practitioners

- Out of scope: Production deployment without proper safety validation

Limitations

- Trained primarily on vehicle targets; other objects may require fine-tuning

- Performance degrades in extreme lighting conditions or heavy occlusion

- Tested only with the Parrot Anafi 4K drone; the models and system output piloting commands for Parrot drones

Models

Teacher Pipeline Models

| Model | File | Parameters | Description |
|---|---|---|---|
| YOLOv11 Segmentation (sim) | 29_05_best__yolo11n-seg_sim_car_bunker__all.pt | 2.84M | Vehicle segmentation for simulator |
| YOLOv11 Segmentation (real) | real-yolo-car-full-segmentation.pt | 2.84M | Vehicle segmentation for real-world |
| Mask Splitter (sim) | mask_splitter-epoch_10-dropout_0-low_x2-and-high_x0_quality_early_stop.pt | 1.94M | Anterior-posterior mask splitting (simulator) |
| Mask Splitter (real) | mask_splitter-epoch_10-dropout_0-_x2_real_early_stop.pt | 1.94M | Anterior-posterior mask splitting (real-world) |

Student Models

| Model | File | Parameters | Description |
|---|---|---|---|
| Student (sim→real) | student_model_sim_on_real_world_distribution.pth | 1.7M | Trained on sim, normalized for real-world |
| Student (fine-tuned) | student_real_pretrained_augX3_80_runs.pth | 1.7M | Fine-tuned on real-world data |

Usage

Installation

git clone --recursive https://github.com/SpaceTime-Vision-Robotics-Laboratory/nser-ibvs-drone.git

cd nser-ibvs-drone

python3 -m venv ./venv

source venv/bin/activate

python -m pip install --upgrade pip

python -m pip install -r requirements.txt

python -m pip install -e .

Run the tests to verify imports and functionality:

python -m unittest discover ./tests

Loading Models

YOLO Segmentation:

from mask_splitter.yolo_model import YoloSegmentation

# Initialize YOLO model

yolo = YoloSegmentation(
    model_path="real-yolo-car-full-segmentation.pt",
    confidence_threshold=0.7,
)

# Segment an image

annotated_frame, binary_mask = yolo.segment_image(frame)

# Get detection info

results = yolo.detect(frame)

target = yolo.find_best_target_box(results)

print(f"Confidence: {target.confidence}, Center: {target.center}")

Mask-Splitter Network

import cv2

from mask_splitter.nn.infer import MaskSplitterInference

# Initialize the model

splitter = MaskSplitterInference(
    model_path="mask_splitter-epoch_10-dropout_0-_x2_real_early_stop.pt",
    device="cuda",
    image_size=(360, 640),
    confidence_threshold=0.5,
)

# Load image and mask

image = cv2.imread("frame-path.png")

mask = cv2.imread("mask-path.png", cv2.IMREAD_GRAYSCALE)

# Run inference

front_mask, back_mask = splitter.infer(image, mask)

# Visualize results

splitter.visualize(image, front_mask, back_mask)
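A quick sanity check on the split can be useful before feeding the two masks to a controller. The sketch below is not part of the repository: `split_consistency` is a hypothetical helper, and it assumes that the masks are array-like, share the same shape, and mark foreground with nonzero values. It reports how much of the vehicle mask the two parts cover and how much they overlap, using a toy mask in place of real `splitter.infer` output.

```python
import numpy as np


def split_consistency(mask, front_mask, back_mask):
    """Return (coverage, overlap) for a front/back split of a binary mask.

    coverage: fraction of vehicle pixels claimed by either part.
    overlap:  fraction of vehicle pixels claimed by both parts.
    Hypothetical helper; assumes nonzero pixels are foreground.
    """
    vehicle = np.asarray(mask) > 0
    front = np.asarray(front_mask) > 0
    back = np.asarray(back_mask) > 0

    total = int(vehicle.sum())
    if total == 0:
        return 0.0, 0.0  # empty vehicle mask: nothing to split

    coverage = float(((front | back) & vehicle).sum()) / total
    overlap = float((front & back & vehicle).sum()) / total
    return coverage, overlap


# Toy data: a 4x6 vehicle region split down the middle.
mask = np.zeros((6, 8), dtype=np.uint8)
mask[1:5, 1:7] = 255
front = np.zeros_like(mask)
back = np.zeros_like(mask)
front[1:5, 1:4] = 255
back[1:5, 4:7] = 255

coverage, overlap = split_consistency(mask, front, back)
print(f"coverage={coverage:.2f}, overlap={overlap:.2f}")
```

A coverage near 1 with an overlap near 0 suggests the two parts partition the vehicle cleanly; what thresholds are acceptable in practice is left open here.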

Student Network

Direct usage:

import torch

from nser_ibvs_drone.distiled_network.drone_command_regressor import DroneCommandRegressor

# Load model

model = DroneCommandRegressor()

model.load_model("student_model_sim_on_real_world_distribution.pth")

model.eval()

# Input: RGB image tensor [B, 3, H, W]

# Output: velocity commands [vx, vy, vyaw]
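The `[B, 3, H, W]` RGB layout differs from what `cv2.imread` returns (an `H x W x 3` array in BGR order), so a conversion step is needed when calling the regressor directly. The following is a minimal sketch of that conversion with NumPy only. The helper name `frame_to_batch`, the scaling to `[0, 1]`, and the 360x640 frame size (borrowed from the Mask-Splitter example) are assumptions; the preprocessing the student model actually expects may differ.

```python
import numpy as np


def frame_to_batch(frame_bgr):
    """Convert an HxWx3 uint8 BGR frame to a 1x3xHxW float32 RGB array."""
    frame = np.asarray(frame_bgr)
    if frame.ndim != 3 or frame.shape[2] != 3:
        raise ValueError(f"expected an HxWx3 frame, got shape {frame.shape}")
    rgb = frame[:, :, ::-1]                    # BGR -> RGB
    chw = np.transpose(rgb, (2, 0, 1))         # HWC -> CHW
    scaled = chw.astype(np.float32) / 255.0    # assumption: inputs in [0, 1]
    return np.ascontiguousarray(scaled[np.newaxis, ...])  # add batch dimension


# Synthetic frame standing in for cv2.imread output: pure blue in BGR order.
frame = np.zeros((360, 640, 3), dtype=np.uint8)
frame[..., 0] = 255
batch = frame_to_batch(frame)
print(batch.shape, batch.dtype)
```

The resulting array can be wrapped with `torch.from_numpy(batch)` before being passed to the model.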

Or with Student Engine:

import cv2

from nser_ibvs_drone.distiled_network.distil_engine import StudentEngine

student_model_path = "student_model_sim_on_real_world_distribution.pth"

model_engine = StudentEngine(student_model_path)

frame = cv2.imread("frame-path.png")

commands = model_engine.predict(frame)
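To get a rough throughput figure on your own hardware, a simple timing loop around the prediction call is usually enough. The sketch below is an illustration only, not the benchmarking procedure used for the reported numbers: `measure_fps` is a hypothetical helper, and `dummy_predict` stands in for `model_engine.predict` so that the example runs without the model.

```python
import time


def measure_fps(predict, frame, warmup=10, iterations=100):
    """Return the average number of predict(frame) calls per second."""
    for _ in range(warmup):
        predict(frame)  # warm-up calls are excluded from the timing

    start = time.perf_counter()
    for _ in range(iterations):
        predict(frame)
    elapsed = time.perf_counter() - start
    return iterations / elapsed if elapsed > 0 else float("inf")


# Stand-in predictor (hypothetical) returning a fixed (vx, vy, vyaw) triple.
def dummy_predict(frame):
    return (0.0, 0.0, 0.0)


fps = measure_fps(dummy_predict, frame=None)
print(f"{fps:.1f} FPS")
```

On a GPU, kernels run asynchronously, so timings of this kind can look optimistic unless the device is synchronized (for example with `torch.cuda.synchronize()`) before the clock is read.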

For complete inference pipelines and for running the algorithms on drones, see the documentation in the GitHub repository.

Performance

| Metric | Teacher (NSER-IBVS) | Student Network |

|---|---|---|

| Inference Speed | 48.3 FPS | 540.8 FPS |

| Parameters | 4.78M | 1.7M |

| Mean Error (Sim) | 29.76 px | 14.26 px |

| IoU (Sim) | 0.522 | 0.752 |

| Mean Error (Real) | 29.96 px | 33.33 px |

| IoU (Real) | 0.627 | 0.591 |
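The headline ratios follow directly from the table. As a small worked example, using only the numbers reported above, the snippet below derives the speedup, the per-frame latency, and the parameter ratio, and checks that the teacher's parameter count matches the sum of its two networks from the Models section (2.84M for YOLOv11 plus 1.94M for the Mask Splitter).

```python
# Values copied from the Performance and Models tables.
teacher_fps, student_fps = 48.3, 540.8
teacher_params_m, student_params_m = 4.78, 1.7
yolo_params_m, splitter_params_m = 2.84, 1.94

speedup = student_fps / teacher_fps                 # throughput ratio
teacher_ms = 1000.0 / teacher_fps                   # per-frame latency in ms
student_ms = 1000.0 / student_fps
param_ratio = teacher_params_m / student_params_m   # teacher size vs. student
teacher_sum_m = yolo_params_m + splitter_params_m   # teacher pipeline total

print(f"speedup: {speedup:.1f}x")
print(f"latency: teacher {teacher_ms:.2f} ms, student {student_ms:.2f} ms")
print(f"parameter ratio: {param_ratio:.2f}x")
print(f"teacher pipeline parameters: {teacher_sum_m:.2f}M")
```

This gives a speedup of about 11.2x, in line with the "11x" figure, with the student taking under 2 ms per frame against roughly 21 ms for the teacher pipeline.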

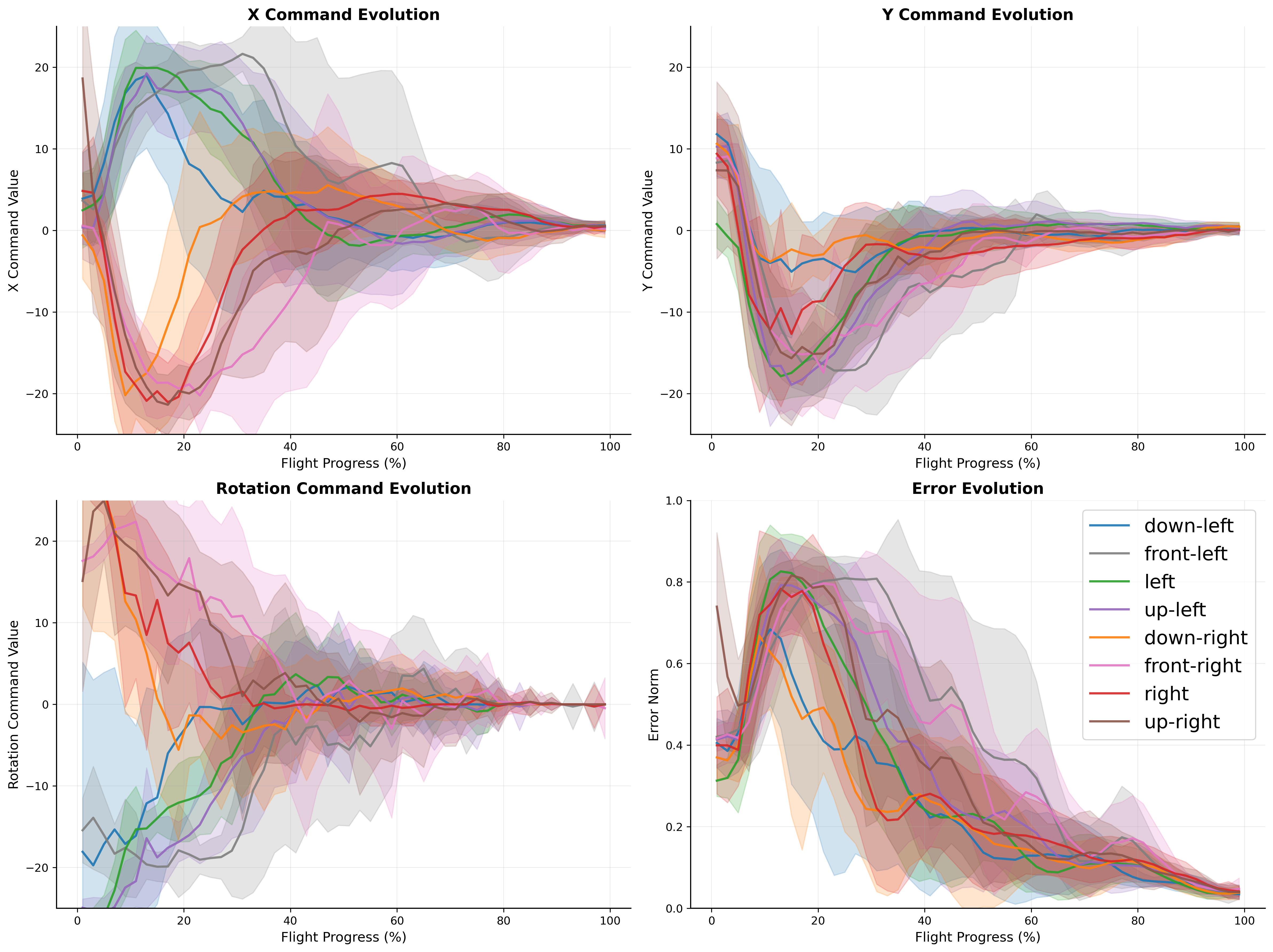

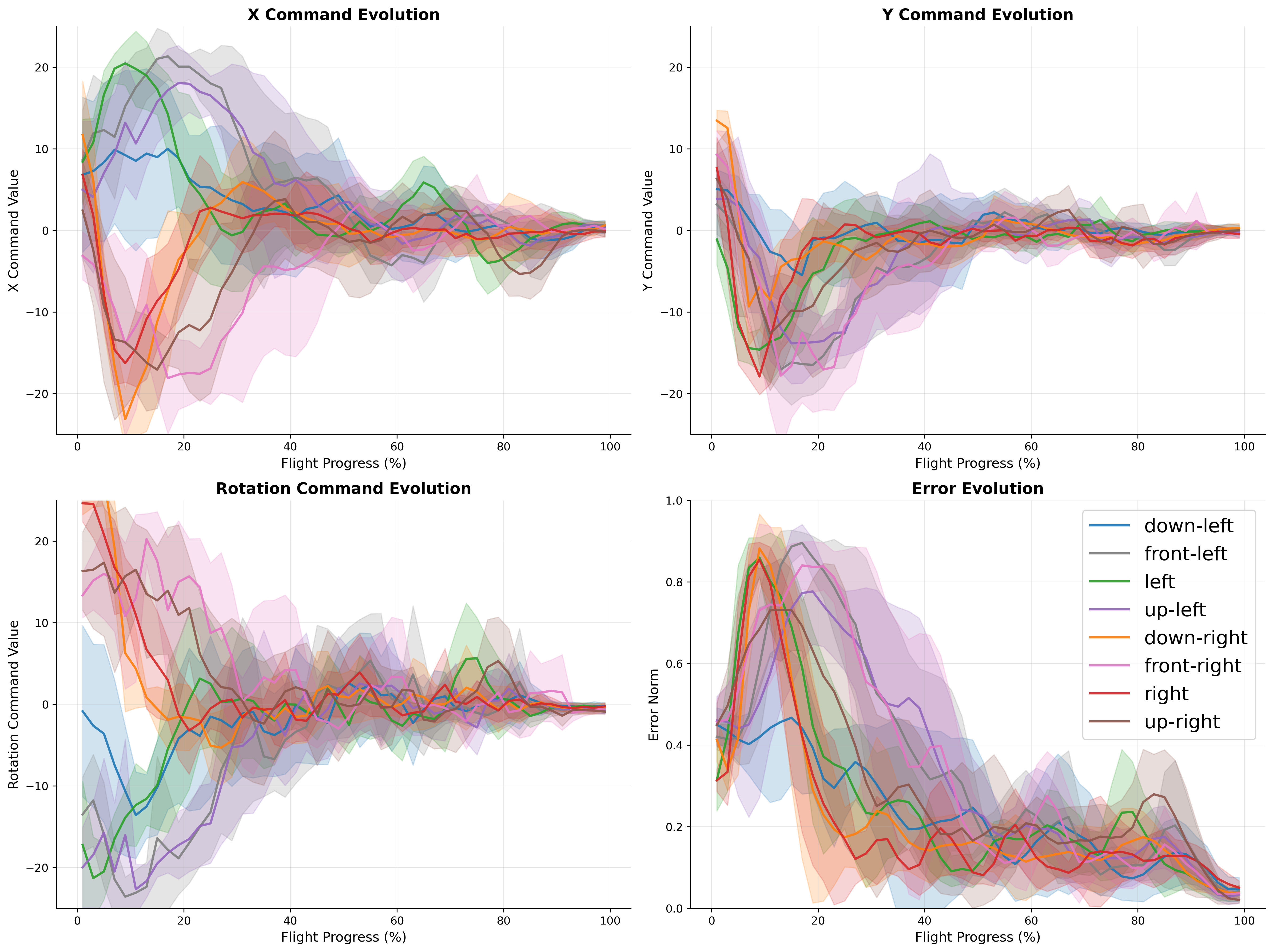

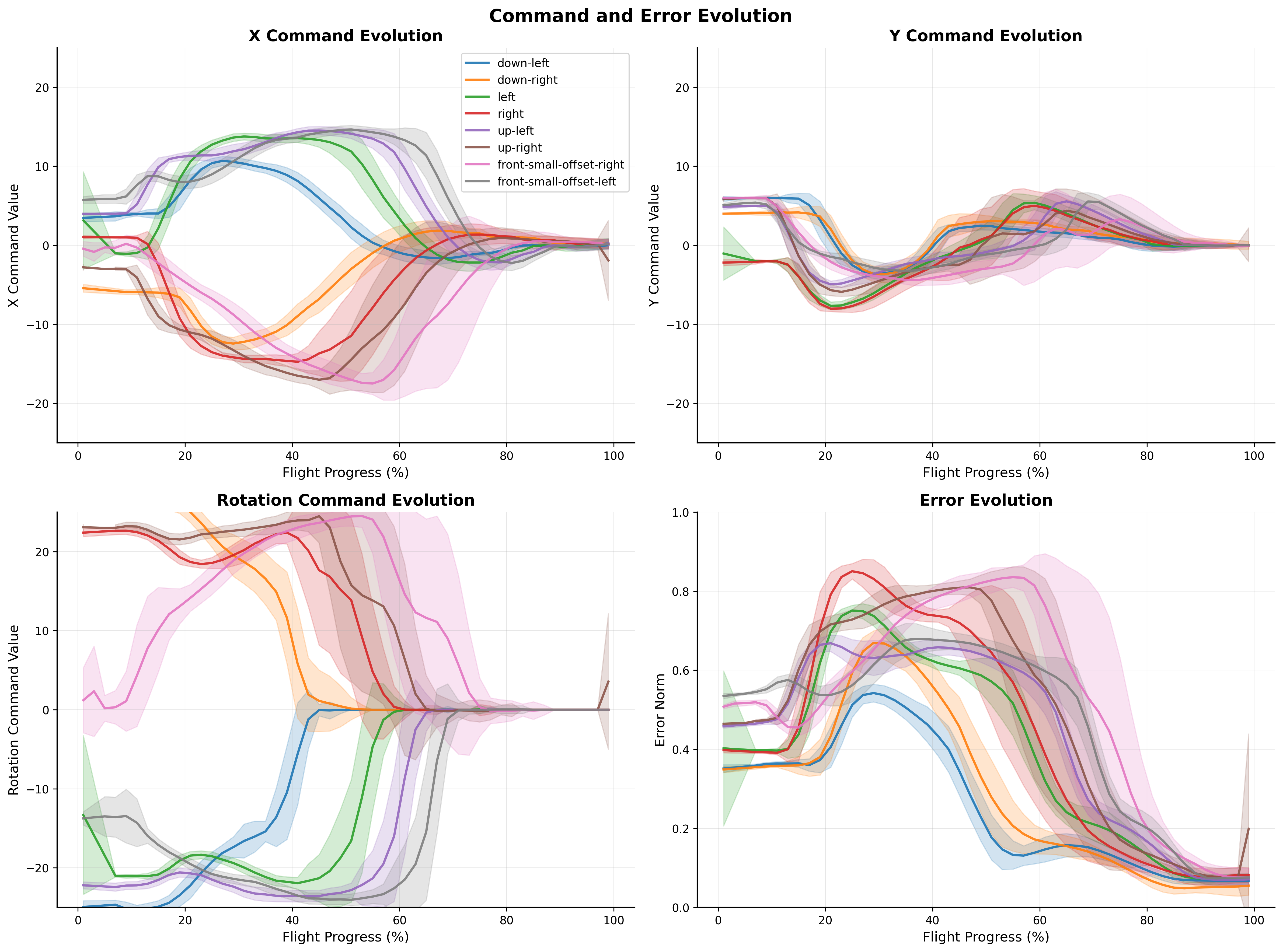

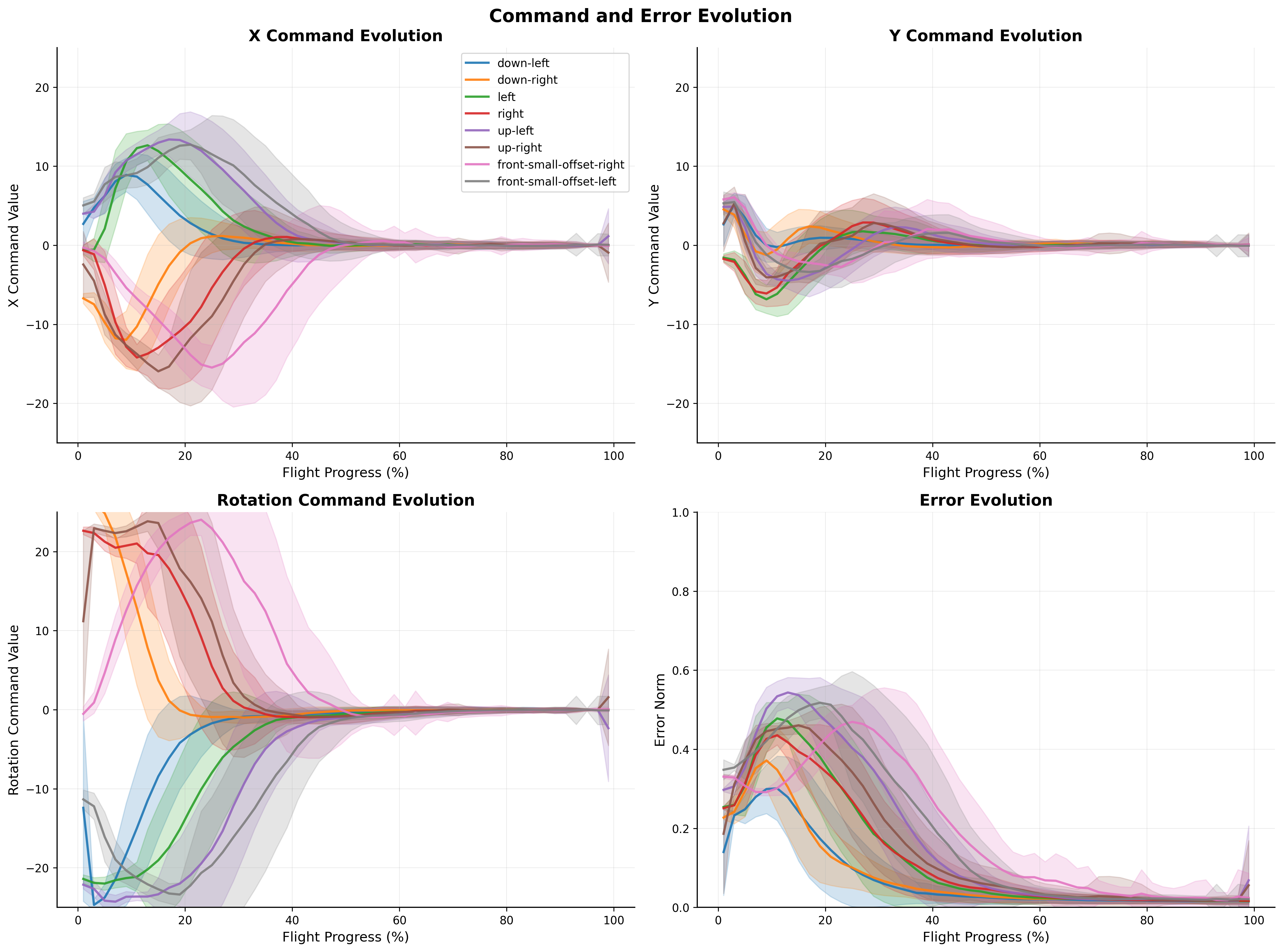

Control commands and error evolution over time, shown for four flights:

- Real-World Flight - Teacher (IBVS)
- Real-World Flight - Student
- Digital-Twin Flight - Teacher (IBVS)
- Digital-Twin Flight - Student

Training Data

Training data and the custom UE4 simulator environment are available:

- Mask-Splitter training data (Hugging Face): brittleru/nser-ibvs-mask-splitter-dataset

- Training Data (Google Drive)

- UE4 Bunker Environment

Citation

@InProceedings{Mocanu_2025_ICCV,

author = {Mocanu, Sebastian and Nae, Sebastian-Ion and Barbu, Mihai-Eugen and Leordeanu, Marius},

title = {Efficient Self-Supervised Neuro-Analytic Visual Servoing for Real-time Quadrotor Control},

booktitle = {Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV) Workshops},

month = {October},

year = {2025},

pages = {1744-1753}

}

License

Links

| Resource | Link |

|---|---|

| Paper | ICCV 2025 Open Access |

| arXiv | 2507.19878 |

| Code | GitHub |

| Website | Project Page |

| Poster |

