Title: How Far Are LLMs from Professional Poker Players? Revisiting Game-Theoretic Reasoning with Agentic Tool Use

URL Source: https://arxiv.org/html/2602.00528

Published Time: Tue, 03 Feb 2026 01:29:19 GMT

Minhua Lin 1 Enyan Dai 2 Hui Liu 3 Xianfeng Tang 3 Yuliang Yan 2 Zhenwei Dai 3

Jingying Zeng 3 Zhiwei Zhang 1 Fali Wang 1 Hongcheng Gao 4 Chen Luo 2

Xiang Zhang 1 Qi He 5 Suhang Wang 1

1 The Pennsylvania State University 2 HKUST (GZ) 3 Amazon 4 Tsinghua University 5 Microsoft

Abstract

As Large Language Models (LLMs) are increasingly applied in high-stakes domains, their ability to reason strategically under uncertainty becomes critical. Poker provides a rigorous testbed, requiring not only strong actions but also principled, game-theoretic reasoning. In this paper, we conduct a systematic study of LLMs in multiple realistic poker tasks, evaluating both gameplay outcomes and reasoning traces. Our analysis reveals LLMs fail to compete against traditional algorithms and identifies three recurring flaws: reliance on heuristics, factual misunderstandings, and a “knowing–doing” gap where actions diverge from reasoning. An initial attempt with behavior cloning and step-level reinforcement learning improves reasoning style but remains insufficient for accurate game-theoretic play. Motivated by these limitations, we propose ToolPoker, a tool-integrated reasoning framework that combines external solvers for GTO-consistent actions with more precise professional-style explanations. Experiments demonstrate that ToolPoker achieves state-of-the-art gameplay while producing reasoning traces that closely reflect game-theoretic principles.

1 Introduction

Large Language Models (LLMs) are increasingly deployed in high-stakes domains such as cybersecurity (Ameri et al., 2021) and strategic decision-making (Jiang et al., 2023), where success requires not only factual recall but also reasoning under uncertainty and informed decision-making. A natural testbed for these abilities is game-playing, which combines reasoning, planning, and opponent modeling. Poker is especially suitable as a canonical incomplete-information game (Harsanyi, 1995), requiring players to act with hidden information, estimate opponents’ ranges, and anticipate future outcomes. Importantly, professional players succeed not only by choosing strong actions, but by reasoning in a game-theoretic manner (Brown and Sandholm, 2019), grounding decisions in equilibrium principles while adapting to opponents. Thus, to play like professionals, one must not only act optimally but also think strategically. Evaluating LLMs in poker requires going beyond win rate and examining whether their reasoning traces reflect principled strategic thinking.

Motivated by this, we ask: How far are LLMs from professional poker players? Several recent studies have explored LLMs in such game-theoretic games. For instance, GTBench (Duan et al., 2024) and PokerBench (Zhuang et al., 2025) focus on gameplay outcomes and show that LLMs struggle to compete. Suspicion-Agent (Guo et al., 2023) uses theory-of-mind prompting in Leduc Hold’em, with GPT-4 surpassing neural baselines such as NFSP (Heinrich and Silver, 2016), but still falls short of equilibrium-based methods like CFR+ (Zinkevich et al., 2007). GameBot (Lin et al., 2025) examines reasoning steps but only measures correctness. While insightful, these works focus narrowly on outcomes, offering limited understanding of why LLMs succeed or fail.

To fill this gap, we conduct a systematic study of LLMs in poker, analyzing both gameplay and reasoning traces. Our analysis shows that LLMs consistently underperform traditional baselines, ranging from reinforcement learning (RL) methods such as NFSP (Heinrich and Silver, 2016) to equilibrium-based solvers such as CFR+ (Tammelin, 2014), due to three key reasoning flaws: (i) Heuristic reasoning: LLMs often rely on shallow heuristics rather than rigorous game-theoretic principles. (ii) Factual misunderstanding: LLMs sometimes misjudge fundamental aspects of the game, such as hand strength, pot odds, or opponent range estimation, leading to systematically flawed reasoning. (iii) Knowing–doing gap: even when LLMs articulate sound reasoning, their final actions often deviate from it, exposing a gap between knowledge expression and decision execution.

To investigate whether these flaws can be mitigated internally, we attempt a two-stage framework: (i) behavior cloning (BC) on expert reasoning traces to instill game-theoretic principles, and (ii) RL fine-tuning with step-level rewards. While this improves fluency and expert-like reasoning style, it remains insufficient for precise derivations or competitive gameplay, underscoring LLMs’ fundamental limitations in game-theoretic tasks.

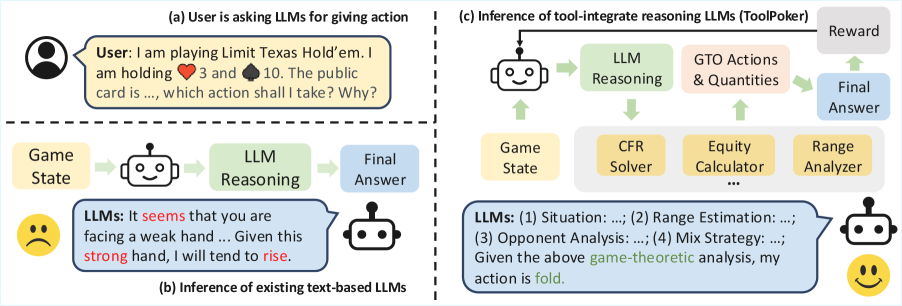

Figure 1: Illustration of ToolPoker and its advantages over LLMs using internal policies.

Motivated by these limitations, we pursue an alternative direction: leveraging LLMs’ strength in tool use. However, achieving this integration in poker is non-trivial and challenging: (i) Multi-tool dependency. Accurate game-theoretic reasoning often requires multiple solvers (e.g., action and equity solvers), and naively teaching LLMs to invoke these tools across multi-turn poker scenarios leads to error propagation and unstable training. (ii) High data cost. Collecting large-scale reasoning traces augmented with solver calls requires expensive LLM annotation and careful domain-specific tool invocation, making it prohibitively costly to build.

To address these challenges, we introduce ToolPoker, the first tool-integrated reasoning (TIR) framework for imperfect-information games (Fig. 1), which teaches LLMs to call external poker solvers to provide game-theoretic optimal (GTO) actions and supporting quantities such as equity and hand ranges for accurate expert-level explanations. (i) We design a unified tool interface that consolidates solver functionalities into a single API, returning all quantities in one query to simplify tool use and stabilize training. (ii) We construct a small-scale expert-level reasoning dataset (Sec. 4.1) inspired by the thought process of professional players, and programmatically augment it with standardized tool invocation templates and execution outputs, ensuring high quality and reducing annotation cost. This also provides a robust foundation for the subsequent RL training in TIR. By combining GTO-guaranteed computation with human-like reasoning, ToolPoker overcomes fundamental weaknesses of policy-only training and moves LLMs closer to professional-level play. Experiments across multiple poker tasks demonstrate that ToolPoker both achieves state-of-the-art gameplay performance and produces reasoning traces that align much more closely with game-theoretic principles.

Our main contributions are summarized as follows: (i) We conduct the first systematic study of LLMs in poker, revealing fundamental reasoning flaws such as heuristic bias, factual misunderstanding, and knowing–doing gaps. (ii) We make an initial attempt to improve LLMs’ internal policies through a two-stage RL framework. While effective at improving reasoning style, this approach remains insufficient for GTO reasoning and accurate game-theoretic derivation. (iii) We introduce ToolPoker, a tool-integrated reasoning framework that leverages external solvers to guarantee GTO-consistent actions while enabling LLMs to generate precise, professional-style explanations. (iv) Extensive experiments show that ToolPoker achieves state-of-the-art gameplay performance and produces reasoning traces that align closely with professional game-theoretic principles.

2 Backgrounds and Preliminaries

Two-Player Imperfect Information Poker Games. In this paper, we explore using LLMs to play poker with imperfect information. Following prior work (Guo et al., 2023; Huang et al., 2024), we focus on three widely studied two-player variants of increasing complexity: Kuhn Poker, Leduc Hold’em, and Limit Texas Hold’em. Their backgrounds and rules are given in Appendix B.

Game-theoretic Reasoning. In poker, professional players go beyond heuristics or pattern recognition by systematically evaluating equity, ranges, and pot odds within a game-theoretic framework, guiding them toward actions that converge to Nash equilibrium. An example of such professional-style reasoning is in Appendix B.6, with further details on Nash equilibrium in Appendix B.5.

Problem Statement. We model a two-player poker game as a partially observable Markov decision process (POMDP) $(\mathcal{S},\mathcal{A},\mathcal{T},\mathcal{R},\Omega,O)$, where $\mathcal{S}=\{s^{t}:1\leq t\leq T\}$ is the set of true states, $T$ is the maximum number of turns, $\mathcal{A}$ is the action space, $\mathcal{T}$ is the transition function, $\mathcal{R}$ is the reward function, $\Omega$ denotes the observation space, and $O$ represents the observation function. At time $t$, the state is $s^{t}=\{s^{t}_{\text{pub}},s^{t}_{\text{pri}(i)},s^{t}_{\text{pri}(\neg i)}\}$, where $s^{t}_{\text{pub}}$ denotes public information (e.g., community cards, betting), and $s^{t}_{\text{pri}(i)}$ and $s^{t}_{\text{pri}(\neg i)}$ are the private cards of player $i$ and the opponent, respectively. Each player $i$ partially observes $o_{i}^{t}=(s^{t}_{\text{pub}},s^{t}_{\text{pri}(i)})\in\Omega$ and conditions on its history $h_{i}^{t}=(o_{i}^{1},a_{i}^{1},\ldots,o_{i}^{t})$ to choose an action $a_{i}^{t}\sim\mu_{\theta}^{i}(\cdot\mid f(h_{i}^{t}))$, where $f$ is a prompt template that converts game states into natural language task descriptions. A full trajectory is $\tau=(s^{1},a_{1}^{1},a_{2}^{1},r_{1}^{1},r_{2}^{1},\ldots,s^{T},a_{1}^{T},a_{2}^{T},r_{1}^{T},r_{2}^{T})$. The objective for player $i$ is to learn a policy $\mu_{\theta}^{i}$ that maximizes the cumulative reward $\sum_{t=1}^{T}r_{i}^{t}$ in the game.
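The split between the true state and each player's observation can be illustrated with a minimal Python sketch. The names `State` and `observe`, and the card and betting strings, are hypothetical and chosen only for illustration; they are not part of the paper's implementation.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class State:
    """True state s^t: public information plus both players' private cards."""
    public: Tuple[str, ...]    # s_pub: community cards and betting so far
    private: Tuple[str, str]   # (s_pri(0), s_pri(1)): one private hand per player


def observe(state: State, player: int) -> Tuple[Tuple[str, ...], str]:
    """Observation o_i^t = (s_pub, s_pri(i)); the opponent's hand stays hidden."""
    return (state.public, state.private[player])


# Illustrative state: one public card and one bet, two private cards.
s = State(public=("Qh", "bet:2"), private=("Ks", "Jd"))
o0 = observe(s, 0)
o1 = observe(s, 1)
```

Both players see the same public component, while each observation contains only that player's own private card, which is what makes the game imperfect-information.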

3 Are LLMs Good at Poker? A Preliminary Analysis

In this section, to understand the capabilities of LLMs in playing poker games, we conduct a preliminary analysis to provide initial evidence regarding the strengths and weaknesses of LLMs compared to traditional algorithms for imperfect-information games.

3.1 Experimental Setup

Tasks. To quantitatively evaluate the performance of LLMs in poker, we consider two widely studied and popular poker games, Leduc Hold’em and Limit Texas Hold’em (Brown et al., 2019; Steinberger, 2019; Guo et al., 2023), both implemented in the RLCard environment (Zha et al., 2021a).

Comparison Methods. Following Guo et al. (2023), we consider four traditional baselines for imperfect information games: NFSP (Heinrich and Silver, 2016), DQN (Mnih et al., 2015), DMC (Zha et al., 2021b), and CFR+ (Tammelin, 2014). NFSP and DMC are self-play RL methods tailored to imperfect information games, while CFR+ provides a game-theoretic guarantee of convergence to the Nash equilibrium. For the more complex Limit Texas Hold’em environment, where CFR+ is computationally prohibitive, we instead adopt DeepCFR (Brown et al., 2019), a scalable neural extension of CFR+. These baselines cover diverse strategic paradigms, allowing us to assess LLMs against a broad range of opponent types. Details are provided in Appendix C.1.

Evaluation Protocol. To ensure the robustness of our evaluation, we run a series of 50 games with fixed random seeds and fixed player positions. We then rerun the 50 games with the same random seeds but with the positions of the compared methods switched. We use earned chips as the gameplay metric. In each individual game, each player starts with 100 chips, the small blind is 1 chip, and the big blind is 2 chips.
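The paired-seed, swapped-position protocol can be sketched as follows. This is a minimal illustration under stated assumptions: `play_game` is a hypothetical callable standing in for an actual environment rollout (for example in RLCard), and `toy_game` is a made-up stand-in whose outcome depends only on the seed.

```python
import random
from typing import Callable, Sequence

# Hypothetical signature: play_game(first, second, seed) returns the chips
# won by `first` (negative if it lost) in one game dealt from `seed`.
PlayGame = Callable[[str, str, int], float]


def paired_chip_gain(a: str, b: str, play_game: PlayGame, seeds: Sequence[int]) -> float:
    """Net chips won by `a` over len(seeds) games in each seat (2 * len(seeds) total)."""
    total = 0.0
    for seed in seeds:
        total += play_game(a, b, seed)  # `a` plays in the first position
        total -= play_game(b, a, seed)  # same deal, positions switched
    return total


def toy_game(first: str, second: str, seed: int) -> float:
    """Stand-in environment: a seeded coin flip for 2 chips that favors the first seat."""
    rng = random.Random(seed)
    return 2.0 if rng.random() < 0.6 else -2.0


gain = paired_chip_gain("llm", "cfr", toy_game, seeds=range(50))
```

Because `toy_game` ignores which agent sits where, the seat advantage cancels exactly and `gain` is zero. With real agents, a nonzero total under this pairing reflects differences in play rather than seat order or the luck of the deal.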

3.2 Comparison with Traditional Method

Setting. We evaluate a suite of representative LLMs spanning a wide range of parameter scales, including Qwen2.5-3B, Qwen2.5-7B, Qwen2.5-72B (Qwen, 2024), Qwen3-8B (Yang et al., 2025), Llama3-8B (Grattafiori et al., 2024), GPT-4.1-mini (OpenAI, 2025), GPT-4o (Hurst et al., 2024), and o4-mini (OpenAI, 2024). For the open-source models, we adopt the instruction-following versions. These models are evaluated against the aforementioned traditional baselines.

Results Analysis. Table 1 reports the average chip gain of different LLMs against traditional methods in both Leduc Hold’em and Limit Texas Hold’em. From the table, we observe that (i) Most vanilla LLMs, particularly open-source models with smaller scales, underperform relative to traditional methods. This highlights the limited effectiveness of state-of-the-art LLMs in poker. (ii) CFR+ consistently outperforms all LLMs, including strong closed-source models such as GPT-4o and o4-mini. This is expected, as CFR+ explicitly targets Nash equilibrium strategies, underscoring the importance of game-theoretic reasoning in imperfect-information games. (iii) Against non-equilibrium baselines (i.e., NFSP, DQN, DMC), some large-scale and closed-source LLMs demonstrate competitive or superior performance. For instance, GPT-4o achieves +41.5, +60.5, and −22 chip outcomes against NFSP, DQN, and DMC, respectively. In contrast, small open-source LLMs (e.g., Qwen2.5-3B) exhibit severe losses across all baselines (e.g., −143.5, −161, and −124 chips). These results suggest that while LLMs cannot approximate Nash equilibrium strategies, sufficiently large models can exploit non-equilibrium opponents.

Table 1: Comparison of various vanilla LLMs against different traditional algorithms trained in Leduc Hold’em and Limit Texas Hold’em environments. Each method plays 100 games with varying random seeds and alternated player positions. Results report net chip gains. In Leduc Hold’em, values range from 1 to 14 chips; in Limit Texas Hold’em, they range from 1 to 99 chips. Bold and underline indicate the best and worst performance in each column, respectively. The “Avg.” columns summarize LLMs’ mean performance across the four traditional baselines.

3.3 In-depth Analysis: Decomposing Reasoning Flaws of LLMs

To understand why LLMs fail to compete with traditional methods in poker, we conduct an in-depth analysis of their reasoning processes. Specifically, we first present several case studies that highlight three key flaws in LLM reasoning, followed by a quantitative analysis to further validate and interpret these observations.

Case Study of LLMs’ Reasoning Flaws. To probe LLMs’ decision-making, we examine their reasoning traces in specific scenarios against baseline opponents. Representative cases from Qwen2.5-3B and GPT-4o are shown in Tables 13 and 14 in Appendix C.2. From these examples, we identify three recurrent flaws: (i) Heuristic Reasoning. LLMs frequently rely on heuristic-driven reasoning, making decisions based on surface-level patterns or intuitive analogies rather than on rigorous game-theoretic principles. In contrast, the Nash-equilibrium algorithm CFR+ consistently achieves the strongest performance, underscoring the value of game-theoretic reasoning in imperfect-information games like poker. The absence of such equilibrium-oriented reasoning substantially constrains the gameplay performance of LLMs. These two findings indicate that while LLMs are capable of articulating plausible strategic reasoning, their actual decision-making remains constrained by executional inconsistencies and heuristic biases. These limitations ultimately hinder their effectiveness in complex poker games that require advanced strategic reasoning capabilities. (ii) Factual Misunderstanding. LLMs often ground their reasoning in intuitive analogies, making them prone to misjudging fundamental aspects of the game, such as hand strength or opponent range estimation. These factual inaccuracies can cascade into flawed reasoning chains and ultimately suboptimal actions. For example, as shown in Tab. 14, GPT-4o incorrectly judged (♠K, ♣10) as weak and preferred folding. However, an equity calculator shows this hand has about 60% equity, indicating it is relatively strong. (iii) Knowing–Doing Gap. LLMs often exhibit a mismatch between articulated reasoning and final actions. For instance, in Tab. 13, Qwen2.5-3B correctly reasons that (♣3, ♡10) is not a strong hand and that folding is optimal, yet it proceeds to raise. Such inconsistencies reveal a breakdown between reasoning and execution. Additional case studies are provided in Appendix C.2.
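To make the factual-misunderstanding case concrete, the standard pot-odds comparison shows why a hand with about 60% equity is rarely a fold. The sketch below uses the textbook break-even rule (call when equity exceeds the call's share of the final pot). The pot and call sizes are hypothetical, since the specific bet sizes of the Tab. 14 example are not reproduced here, and the expected-value formula ignores future betting rounds.

```python
def required_equity(pot: float, to_call: float) -> float:
    """Break-even equity for a call: the call's share of the final pot."""
    return to_call / (pot + to_call)


def call_ev(equity: float, pot: float, to_call: float) -> float:
    """Expected chips from calling, ignoring future betting (a simplification)."""
    return equity * pot - (1.0 - equity) * to_call


# Hypothetical spot: 6 chips already in the pot, 2 chips to call, 60% equity.
threshold = required_equity(pot=6, to_call=2)
ev = call_ev(equity=0.60, pot=6, to_call=2)
```

Here the break-even equity is 25%, far below 60%, so calling has clearly positive expected value under these assumed sizes, and folding would give up that value.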

Quantitative Analysis of LLMs’ Reasoning Flaws. To validate the reasoning flaws observed in case studies, we adopt the LLM-as-a-Judge framework (Dubois et al., 2023). We design three metrics: heuristic reasoning (HR), factual alignment (FA), and action–reasoning consistency (AC), and score each reasoning trace on a 0–2 scale using GPT-4.1-mini as the judge. Metric definitions, judge prompts, and human–LLM agreement are in Appendix C.3 and C.5. For each model, we sample 20 traces and evaluate Qwen2.5-3B/7B/72B, GPT-4.1-mini, and o4-mini. To ensure the reliability of LLM-based judging, we manually curate 20 professional-style reasoning traces and have the LLM judge score them. We observe high agreement with human judgement and include it as a reference (see Appendix C.5).

Table 2: LLM-as-a-Judge score (0–2) evaluating reasoning traces of various LLMs in Leduc Hold’em and Limit Texas Hold’em. Bold and underlined numbers indicate the best and worst performance, respectively.

We report results in Tab. 2. Three key findings are observed: (i) Reasoning flaws persist across all models. Qwen2.5-3B scores only 0.53 HR, 0.18 FA, and 1.53 AC, while o4-mini, the strongest model, reaches 1.80/1.56/1.85, still below perfect consistency. This shows systemic heuristic, factual, and knowing–doing flaws in LLMs. (ii) Scaling improves but does not eliminate flaws. Larger models (Qwen2.5-72B, o4-mini) improve all metrics, but significant FA and AC gaps remain, showing scale alone cannot achieve professional-level reasoning. (iii) Action–reasoning consistency remains imperfect. AC stabilizes around 1.53–1.87, below the professional baseline of 2.0, with o4-mini still exhibiting knowing–doing mismatches. Full details are in Appendix C.4.

Overall, these findings quantitatively reinforce our case studies: despite improvements in scale and instruction tuning, current LLMs remain far from professional-level poker reasoning. They continue to exhibit heuristic biases, factual misunderstandings, and executional inconsistencies that fundamentally limit their game-theoretic reasoning capabilities.

4 Can We Improve LLMs in Poker? Failures and Insights

Building on the preliminary analysis of LLM limitations in poker, we next explore how to improve their ability to both act and reason like professional players. A natural starting point is supervised fine-tuning (SFT) on expert gameplay. However, while obtaining expert actions is straightforward using established solvers such as CFR+, constructing large-scale datasets with high-quality reasoning traces is extremely costly, making pure SFT impractical at scale. For instance, Wang et al. (2025) report that mastering even simplified poker games like Leduc Hold’em requires at least 400k action-only instances. Adding reasoning traces would multiply both time and financial costs, rendering such datasets infeasible to construct. To address this, inspired by recent progress in RL for enhancing LLM reasoning (Guo et al., 2025) and by traditional RL for poker (Heinrich and Silver, 2016), we make an initial attempt with a two-stage framework, BC-RIRL, that combines behavior cloning (BC) with regret-inspired policy optimization (RIRL). In the first stage, BC aims to provide a small but valuable foundation of expert play and reasoning. In the second stage, RIRL refines these policies toward GTO play under Nash-equilibrium-based supervision.

4.1 Behavior Cloning

We first leverage BC to expose LLMs to professional-style reasoning. Following recent advances in reasoning-augmented datasets (Muennighoff et al., 2025) and inspired by professional players’ thought process (Appendix B.6), we curate a dataset of professional-level trajectories $\mathcal{D}_{b}=\{(h^{t},a^{t},r^{t})\}$, where $h^{t}$ is the full interaction history up to time $t$ and $a^{t}$ is the corresponding expert response. Expert actions $a^{t}$ are obtained by querying the state-of-the-art CFR+ solver (Tammelin, 2014) with $h^{t}$, ensuring alignment with Nash-equilibrium play. Reasoning traces $r^{t}$ are generated using an LLM guided by domain-specific prompt templates covering key concepts such as hand equity, pot odds, and opponent ranges, to mimic the explanatory style of professional players. The construction prompts and dataset examples are in Appendix D.3. To ensure dataset quality, we implement an automated pipeline that (i) checks consistency between the annotated actions and CFR+ outputs, and (ii) filters out low-quality samples using our HR/FA/AC metrics. After filtering, we obtain a compact dataset of approximately 5k reasoning-augmented samples, which is then used to fine-tune the LLM policy $\pi_{\theta}$ via supervised fine-tuning (SFT) to imitate expert responses:

$$\mathcal{L}_{\text{BC}}=-\mathbb{E}_{(h^{t},a^{t})\sim\mathcal{D}_{b}}\left[\log\pi_{\theta}(a^{t}\mid h^{t})\right]. \tag{1}$$

This imitation phase grounds the LLM in domain knowledge and equips it with basic game-theoretic reasoning capability. As shown in Sec. 4.3, BC primarily serves as a warm start, providing a crucial foundation for the subsequent RL stage.
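The imitation objective in Eq. (1) is a mean negative log-likelihood of expert responses under the policy. The sketch below evaluates it on a toy tabular policy. The histories, action names, and probabilities are made up for illustration; in actual LLM fine-tuning, the log-probability of a response is the sum of its token log-probabilities rather than a table lookup.

```python
import math
from typing import Dict, List, Tuple


def bc_loss(policy: Dict[str, Dict[str, float]], data: List[Tuple[str, str]]) -> float:
    """Mean negative log-likelihood of expert responses under the policy."""
    return -sum(math.log(policy[h][a]) for h, a in data) / len(data)


# Toy policy pi_theta(a | h) over two histories (made-up probabilities).
policy = {
    "h1": {"raise": 0.5, "call": 0.25, "fold": 0.25},
    "h2": {"raise": 0.1, "call": 0.1, "fold": 0.8},
}
expert = [("h1", "raise"), ("h2", "fold")]
loss = bc_loss(policy, expert)
```

The loss shrinks toward zero as the policy places more probability on the expert responses, which is the sense in which BC pulls the model toward solver-consistent behavior.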

4.2 Regret-Inspired RL Fine-Tuning

As an initial attempt to refine policies beyond imitation, we explore a regret-inspired reinforcement learning (RIRL) framework. To overcome the sparse and noisy outcome-based rewards in multi-turn poker games such as Leduc Hold’em and Texas Hold’em, we experiment with a step-level regret-guided reward that leverages signals from a pre-trained CFR solver to guide LLMs to minimize cumulative regret and converge toward the Nash equilibrium. Full details of RIRL are in Appendix D.1.

Regret-guided Reward Design. Motivated by CFR’s success in approaching Nash equilibrium in poker (Sec. 3.2), we optimize LLMs via regret minimization. Our key idea is to compute cumulative regrets from a pre-trained CFR solver and normalize them into fine-grained reward signals that capture each action’s relative contribution. For a policy $\pi_{\theta}$ acting as player $i$, the reward of action $a_{i}^{t}$ is defined as:

$$R(a_{i}^{t})=\frac{r_{t}(a_{i}^{t})-\text{mean}\left(\{r_{t}(a_{j})\}_{j=1}^{|\mathcal{A}|}\right)}{F_{\text{norm}}\left(\{r_{t}(a_{j})\}_{j=1}^{|\mathcal{A}|}\right)}, \tag{2}$$

where $F_{\text{norm}}$ denotes a normalization factor, chosen as the standard deviation in our implementation, and $r_{t}(a_{i}^{t})$ is the cumulative regret of action $a_{i}^{t}$, indicating how much better or worse it performs compared to the current mixture strategy across time.
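The normalization in Eq. (2) amounts to standardizing the per-action cumulative regrets at a decision point. A minimal sketch follows, with several assumptions: the regret values are made up, the population standard deviation is used for $F_{\text{norm}}$ (the text does not say whether population or sample deviation is meant), and a small `eps` is added to avoid division by zero when all regrets coincide.

```python
from statistics import mean, pstdev
from typing import Dict


def regret_rewards(regrets: Dict[str, float], eps: float = 1e-8) -> Dict[str, float]:
    """Standardize per-action cumulative regrets into step-level rewards."""
    values = list(regrets.values())
    mu = mean(values)
    sigma = pstdev(values)  # population standard deviation used as F_norm
    # eps guards against division by zero when all regrets are equal
    # (an assumption of this sketch, not stated in the paper).
    return {a: (r - mu) / (sigma + eps) for a, r in regrets.items()}


# Made-up cumulative regrets from a solver at one decision point.
rewards = regret_rewards({"fold": -3.0, "call": 0.0, "raise": 3.0})
```

Actions with above-average regret receive positive reward and those below average receive negative reward, so every decision step carries a graded signal instead of waiting for the final chip outcome.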

Fine-tuning Objective. Based on this signal, we fine-tune the LLM policy via PPO (Schulman et al., 2017) with the following clipped RL objective:

$$\mathcal{L}_{\text{PPO}}(\theta)=-\mathbb{E}_{x\sim\mathcal{D}_{s},\,y\sim\pi_{old}(\cdot|x)}\left[\min\left(\frac{\pi_{\theta}(y|x)}{\pi_{old}(y|x)}A,\ \text{clip}\left(\frac{\pi_{\theta}(y|x)}{\pi_{old}(y|x)},1-\epsilon,1+\epsilon\right)A\right)-\beta\,\mathbb{D}_{\text{KL}}\left(\pi_{\theta}(\cdot|x)\,\|\,\pi_{ref}(\cdot|x)\right)\right], \tag{3}$$

where $\pi_{\theta}$ and $\pi_{old}$ denote the current and previous policy models, respectively. $\epsilon$ is the clipping threshold. $\pi_{ref}$ is the reference policy that regularizes the update of $\pi_{\theta}$ via a KL-divergence penalty, measured by $\mathbb{D}_{\text{KL}}$ and weighted by $\beta$. Generalized Advantage Estimation (GAE) (Schulman et al., 2015) is used to estimate the advantage $A$. $x$ denotes the input samples drawn from $\mathcal{D}_{s}$, which is composed of trajectories generated by the current policy $\pi_{\theta}$. $y$ is the output generated by the policy LLM $\pi_{\theta}(\cdot|x)$. The trajectory collection procedure is introduced in Appendix D.4.
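The clipped objective can be sketched per sample in plain Python. This follows the standard PPO form of Schulman et al. (2017), in which the clipped ratio is also multiplied by the advantage. The probability ratios, advantages, KL values, and the defaults `eps=0.2` and `beta=0.01` are illustrative assumptions, not the paper's settings.

```python
from typing import Sequence


def ppo_clip_loss(ratios: Sequence[float], advantages: Sequence[float],
                  kl_terms: Sequence[float], eps: float = 0.2,
                  beta: float = 0.01) -> float:
    """Negative clipped surrogate with a KL penalty, averaged over samples."""
    total = 0.0
    for ratio, adv, kl in zip(ratios, advantages, kl_terms):
        clipped = max(1.0 - eps, min(ratio, 1.0 + eps))
        surrogate = min(ratio * adv, clipped * adv)  # pessimistic bound
        total += surrogate - beta * kl
    return -total / len(ratios)


# ratio = pi_theta(y|x) / pi_old(y|x); all values below are made up.
loss = ppo_clip_loss(ratios=[1.5, 0.5], advantages=[1.0, -1.0], kl_terms=[0.0, 0.0])
```

In the first sample the ratio 1.5 is clipped to 1.2, capping the credit for a good action; in the second the ratio 0.5 is clipped to 0.8, so the penalty for a bad action is not understated. Both effects limit how far one update can move the policy from $\pi_{old}$.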

4.3 Experiment Analysis

Experimental Setup. Following the settings in Sec. 3.1, we implement BC-RIRL by fine-tuning LLMs with both BC and RIRL, and compare against traditional algorithms as well as LLM-based approaches. For traditional baselines, we adopt NFSP, DQN, DMC, and CFR+, consistent with Sec. 3.1. For LLM-based baselines, in addition to direct prompting without fine-tuning, we consider two variants: (i) BC-SPRL, which fine-tunes LLMs through BC and self-play RL with sparse outcome-based rewards, and (ii) RIRL, which fine-tunes LLMs with RIRL alone, without the BC stage. Further details of SPRL are in Appendix E. Other settings, including the evaluation metrics, follow those in Sec. 3.1. The implementation details are in Appendix D.5.

Comparison Results. We fine-tune Qwen2.5-7B with BC-RIRL and compare against traditional algorithms and vanilla LLMs. The gameplay and reasoning results are reported in Tab. 3 and Tab. 4.

Gameplay. (i) All RL-based fine-tuning variants improve performance in Kuhn Poker, showing that both outcome- and regret-based feedback provide useful signals in simple environments. (ii) In Leduc Hold’em, BC-RIRL outperforms direct prompting and BC-SPRL (e.g., +17.0 chips vs. GPT-4.1-mini) but still trails CFR+ (−34.0 chips), indicating that dense regret feedback is more effective than sparse outcome rewards in complex poker games, yet insufficient for equilibrium-level play. (iii) Pure RIRL without the BC stage does not yield improvements in Leduc Hold’em (−64.5 chips vs. GPT-4.1-mini), highlighting BC as a necessary foundation.

Reasoning. (i) RIRL consistently improves HR and AC (e.g., 1.93 HR and 1.90 AC in Leduc Hold’em vs. 1.80/1.85 for o4-mini), reducing heuristic flaws and the knowing–doing gap. (ii) RIRL gains only marginal improvement in FA (1.12, 0.87, and 1.65 for RIRL, Qwen2.5-7B, and o4-mini, respectively), showing that factual misunderstandings remain the main limitation. Together with the case studies, these results indicate that while BC-RIRL improves strategic reasoning and action–reasoning alignment, factual misunderstandings remain a notable challenge. The full analysis is in Appendix D.2.

Takeaway. Our experiments validate that current LLMs are inherently weak at strategic reasoning in game-theoretic tasks. RL fine-tuning with step-level or outcome-based rewards yields modest gameplay gains but still lags behind traditional methods like CFR. Importantly, while our two-stage approach helps LLMs imitate professional reasoning styles, they continue to struggle with precise derivation such as equity and hand ranges. This reveals a fundamental limitation: LLMs alone cannot yet achieve both GTO actions and precise reasoning. To bridge this gap, we next explore augmenting LLMs with tool use, leveraging their natural strength in tool invocation to support GTO-consistent actions and precise game-theoretic reasoning.

Table 3: Comparison of fine-tuning methods against traditional algorithms and vanilla LLMs in the Kuhn Poker and Leduc Hold’em environments. Other settings follow those in Tab. 1. Bold and underlined numbers indicate the best and worst performance, respectively.

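Opponent columns: traditional methods (NFSP, DQN, DMC, CFR+) and vanilla LLMs (Qwen2.5-3B, Qwen2.5-7B, GPT-4.1-mini, o4-mini).

| Game | Method | NFSP | DQN | DMC | CFR+ | Qwen2.5-3B | Qwen2.5-7B | GPT-4.1-mini | o4-mini | Avg. |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Kuhn | Qwen2.5-7B | -22.0 | -53.0 | -33.0 | -36.0 | +26 | - | -41 | -43 | -28.8 |
| Kuhn | Qwen2.5-7B (RIRL) | -14.0 | +3.0 | +10.0 | -5.0 | +43.0 | +8.0 | -1.0 | -11.0 | +4.1 |
| Kuhn | Qwen2.5-7B (BC-SPRL) | +6.0 | -6.0 | +13.0 | -14.0 | +32.0 | +23.0 | +22.0 | +10.0 | +10.7 |
| Kuhn | Qwen2.5-7B (BC-RIRL) | +4.0 | +8.0 | +11.0 | -2.0 | +57.0 | +27.0 | +21.0 | +11.0 | +17.1 |
| Leduc Hold’em | Qwen2.5-7B | -57.5 | -93.0 | -73.0 | -68.5 | +48.5 | - | -59.5 | -32.5 | -47.9 |
| Leduc Hold’em | Qwen2.5-7B (RIRL) | -42.5 | -80 | -59.5 | -55.0 | +52.0 | +12.0 | +2.5 | -18.5 | -23.6 |
| Leduc Hold’em | Qwen2.5-7B (BC-SPRL) | -93.0 | -154.5 | -95.5 | -103.5 | +2.0 | -18.0 | -64.5 | -54.5 | -72.6 |
| Leduc Hold’em | Qwen2.5-7B (BC-RIRL) | -37.0 | -64.5 | -43.5 | -34.0 | +54.0 | +28.5 | +17.0 | +1.0 | -9.8 |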

Table 4: LLM-as-a-Judge score (0–2) evaluating reasoning traces of various LLMs in two realistic poker tasks. Bold and underlined numbers indicate the best and worst performance, respectively.

5 ToolPoker: Game-theoretic Reasoning with Agentic Tool Use

Table 5: Comparison of various LLM-based methods against different traditional algorithms trained in Leduc Hold’em and Limit Texas Hold’em environments. Other settings follow those in Tab. 1. Bold and underline indicate the best and worst performance in each column, respectively.

Building on our analysis in Sec. 4, which highlights the limitations of LLMs in producing GTO actions and precise game-theoretic reasoning, we propose ToolPoker, a tool-integrated reasoning (TIR) framework that exploits LLMs’ strength in tool use, enabling them to call external poker solvers to improve the quality of their actions and reasoning (Fig. 1). To make this tool usage stable and effective, we introduce a unified tool interface that consolidates multiple poker solvers (e.g., CFR and equity calculators) into a single API, reducing the interaction to a single-turn tool call. On the training side, we adopt a two-stage strategy: first, behavior cloning on a code-augmented dataset to teach the model when and how to call external tools; and second, reinforcement learning with a composite reward to further optimize solver integration and reasoning quality.

5.1 Tool-Integrated Game-theoretic Reasoning in Poker

Rollout Process. To enable GTO-consistent TIR, we design a structured prompt template (Tab. 21) to guide the LLM to leverage external poker solvers for game-theoretic reasoning. Concretely, given a policy LLM $\pi_{\theta}$ acting as player $i$ at time $t$, $\pi_{\theta}$ generates a reasoning trace enclosed in tags. To obtain GTO actions and other quantities, $\pi_{\theta}$ issues a query in tags, which calls the unified solver interface and returns results wrapped in tags. These outputs are then incorporated into the reasoning trace before $\pi_{\theta}$ produces the final action $a_{i}^{t}$ within tags.

Unified Tool Interface. Obtaining GTO actions and supporting quantities (e.g., equity, pot odds, and range distributions) often requires multiple tool calls, such as a CFR solver and an equity calculator. To simplify and stabilize training, we unify these functionalities into a single standardized interface that provides both the solver’s actions and auxiliary statistics for game-theoretic reasoning.
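A unified interface of this kind can be sketched for Leduc Hold’em, where equity is small enough to enumerate exactly. Everything named here (`leduc_equity`, `SolverResult`, `query_solver`, and the injected `action_solver` callable) is hypothetical and not the paper's API. The equity is computed against a uniformly random opponent card rather than a solver-derived range, which is a simplification, and the lambda merely stands in for a lookup into a pre-trained CFR policy.

```python
from dataclasses import dataclass
from itertools import permutations
from typing import Callable, Optional

RANK = {"J": 0, "Q": 1, "K": 2}
DECK = ["J", "J", "Q", "Q", "K", "K"]  # Leduc Hold'em: two suits of J, Q, K


def leduc_equity(hero: str, public: Optional[str] = None) -> float:
    """Exact win probability (ties count half) vs. a uniformly random opponent card."""
    rest = DECK.copy()
    rest.remove(hero)
    if public is None:
        # Board not dealt yet: enumerate every (opponent card, board card) deal.
        deals = [(rest[i], rest[j]) for i, j in permutations(range(len(rest)), 2)]
    else:
        rest.remove(public)
        deals = [(opp, public) for opp in rest]
    score = 0.0
    for opp, board in deals:
        hero_key = (hero == board, RANK[hero])  # a pair beats any high card
        opp_key = (opp == board, RANK[opp])
        score += 1.0 if hero_key > opp_key else 0.5 if hero_key == opp_key else 0.0
    return score / len(deals)


@dataclass
class SolverResult:
    action: str      # action returned by the injected action solver
    equity: float
    pot_odds: float


def query_solver(hero: str, public: Optional[str], pot: float, to_call: float,
                 action_solver: Callable[[str, Optional[str]], str]) -> SolverResult:
    """Hypothetical unified call: one query returns the action and supporting quantities."""
    return SolverResult(
        action=action_solver(hero, public),
        equity=leduc_equity(hero, public),
        pot_odds=to_call / (pot + to_call),
    )


# Placeholder standing in for a pre-trained CFR policy lookup.
result = query_solver("K", None, pot=4, to_call=2, action_solver=lambda h, p: "raise")
```

Returning the action together with equity and pot odds in one structure means the model issues a single call per decision, which matches the single-query design described above.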

5.2 Training Algorithm

BC for TIR. To construct high-quality TIR data without incurring prohibitive annotation cost, we build an automated pipeline that programmatically augments the reasoning dataset from Sec. 4.1 with standardized tool invocation templates (e.g., ) and execution outputs (e.g., ). The resulting dataset $\mathcal{D}_{c}$ is then used to train ToolPoker via SFT, teaching LLMs how to invoke tools for game-theoretic reasoning. A concrete example and the details of the automatic pipeline are in Tab. 22 in Appendix G.2.

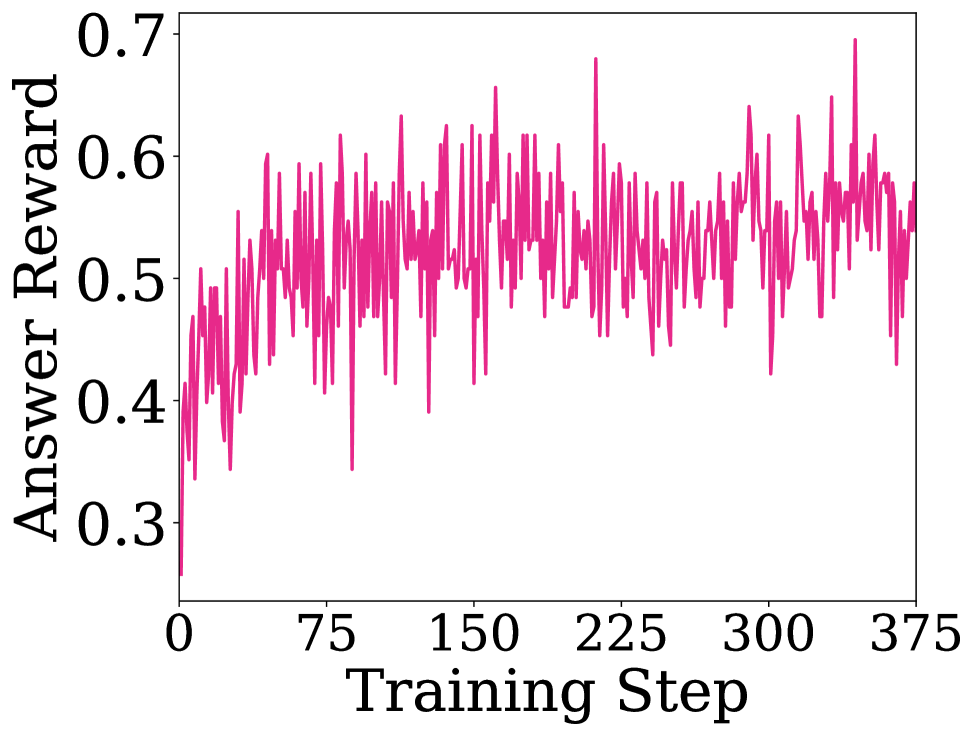

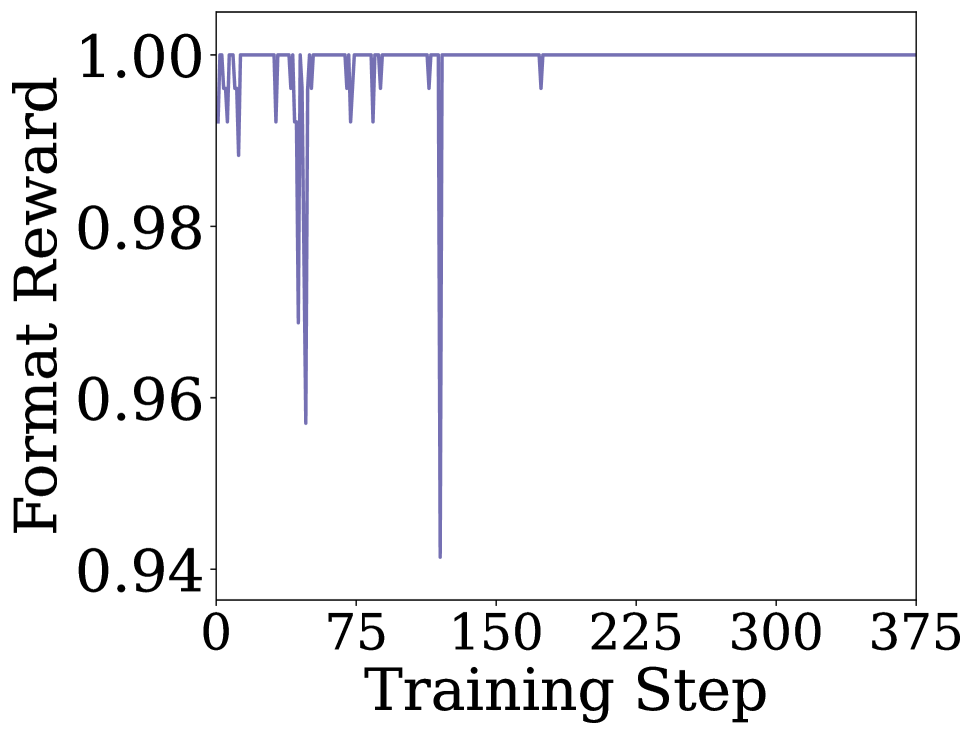

RL Fine-tuning. We train ToolPoker with PPO (Schulman et al., 2017), where the objective function is defined in Eq. (8). To better support TIR, we follow ReTool (Feng et al., 2025a) and integrate external poker solvers into the LLM policy $\pi_{\theta}$, enabling multi-turn real-time tool use that provides GTO-consistent actions and supporting quantities from external tools. To guide the training, we design a composite reward function. Formally, given player $i$ at time step $t$, the reward is defined as

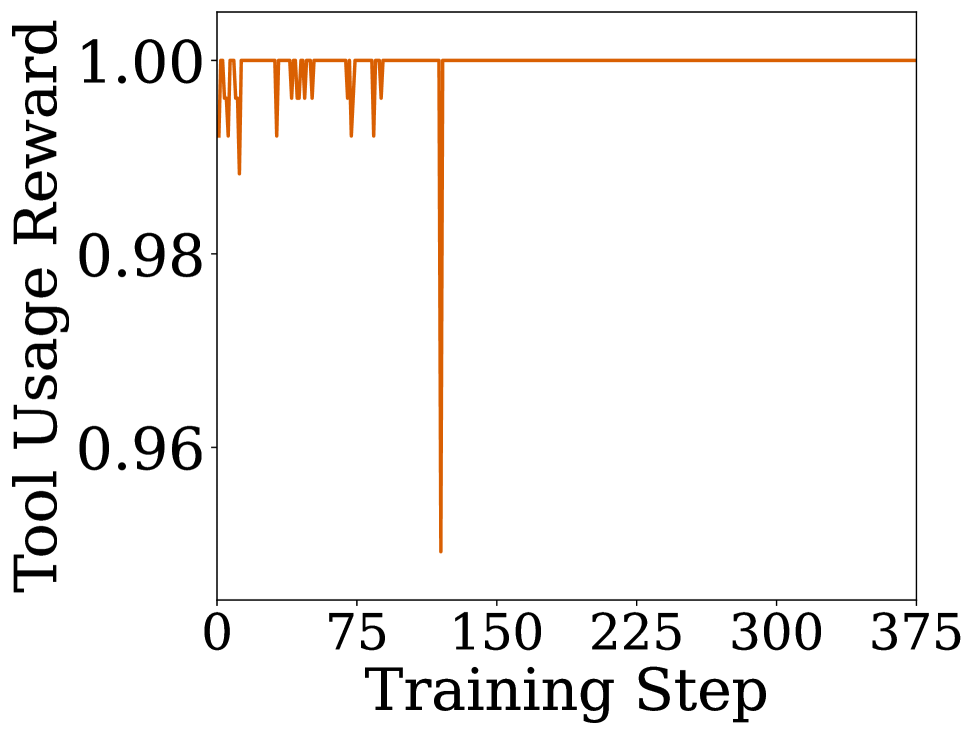

$$R(a_{i}^{t},\hat{a}_{i}^{t},\rho_{i}^{t})=R_{\text{answer}}(a_{i}^{t},\hat{a}_{i}^{t})+\alpha_{f}\cdot R_{\text{format}}(\rho_{i}^{t})+\alpha_{t}\cdot R_{\text{tool}}(\rho_{i}^{t}), \tag{4}$$

where $a_{i}^{t}$ is the ground-truth action from the CFR solver, $\hat{a}_{i}^{t}$ is the model-predicted action, and $\rho_{i}^{t}$ is the generated reasoning trace. Here, $R_{\text{answer}}$, $R_{\text{format}}$, and $R_{\text{tool}}$ correspond to the answer reward, format reward, and tool-execution reward, respectively, ensuring that ToolPoker not only outputs GTO-consistent actions but also generates structured reasoning traces with effective tool usage. $\alpha_{f}$ and $\alpha_{t}$ are the weights that balance the impact of the format and tool-execution rewards. More details of these reward functions are in Appendix G.3. The fine-tuning algorithm is in Alg.1 of Appendix G.4.
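As a rough illustration of how the three terms in Eq.(4) combine, the sketch below assumes each component reward is a binary indicator and uses placeholder weights. Both are assumptions made here for illustration; the actual component definitions are those in Appendix G.3, and the function name `composite_reward` is hypothetical.

```python
def composite_reward(
    pred_action: str,
    solver_action: str,
    format_ok: bool,
    tool_ok: bool,
    alpha_f: float = 0.1,   # placeholder weight, not taken from the paper
    alpha_t: float = 0.1,   # placeholder weight, not taken from the paper
) -> float:
    """R = R_answer + alpha_f * R_format + alpha_t * R_tool (shape of Eq. 4).

    Assumption: each component is a 0/1 indicator.
    """
    r_answer = 1.0 if pred_action == solver_action else 0.0  # matches solver action
    r_format = 1.0 if format_ok else 0.0                     # trace follows the template
    r_tool = 1.0 if tool_ok else 0.0                         # tool call executed successfully
    return r_answer + alpha_f * r_format + alpha_t * r_tool


# Correct action, well-formed trace, but a failed tool call.
print(composite_reward("call", "call", True, False, alpha_f=0.5, alpha_t=0.25))  # 1.5
```

Keeping the format and tool terms as small additive bonuses means a correct action still dominates the signal, while malformed traces or failed tool calls are mildly penalized by comparison.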

5.3 Experimental Results

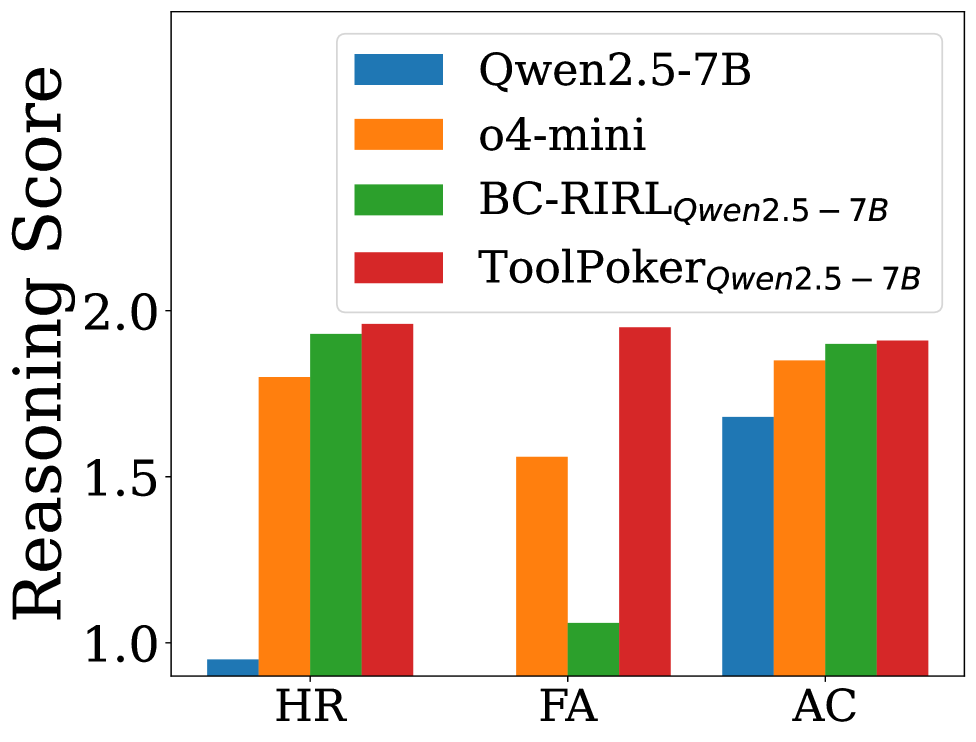

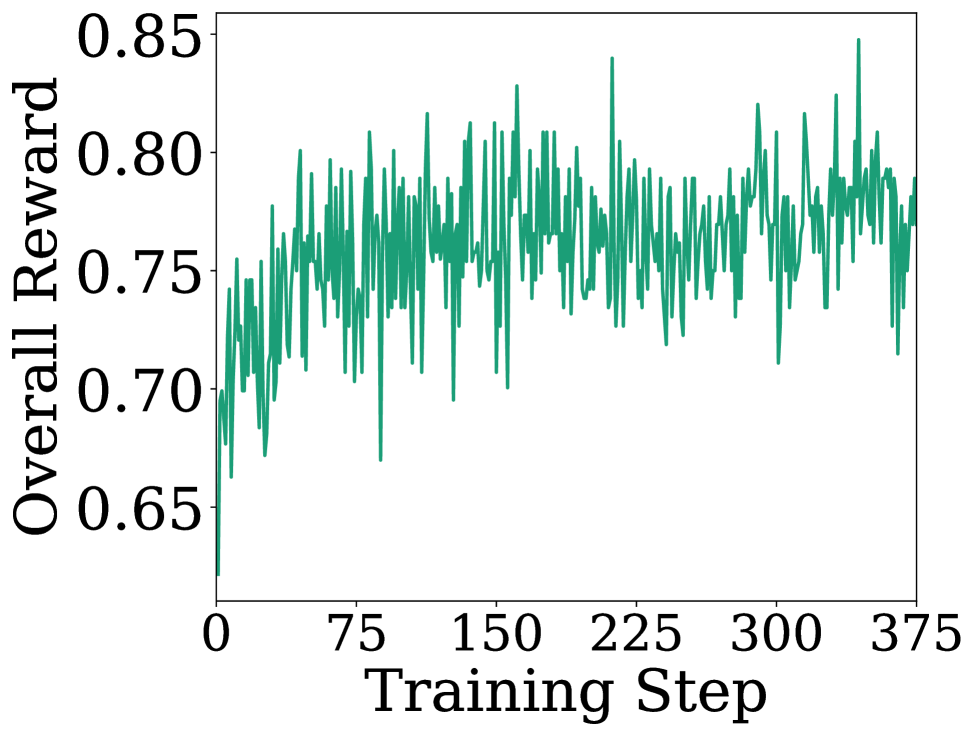

(a) Reasoning - Leduc

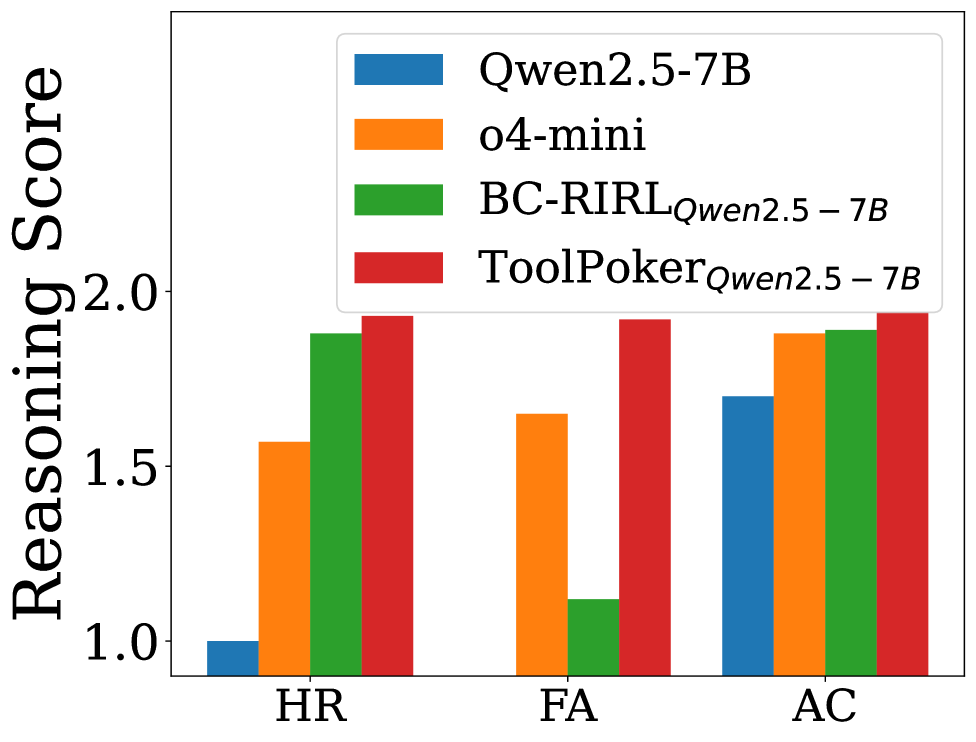

(b) Reasoning - Limit

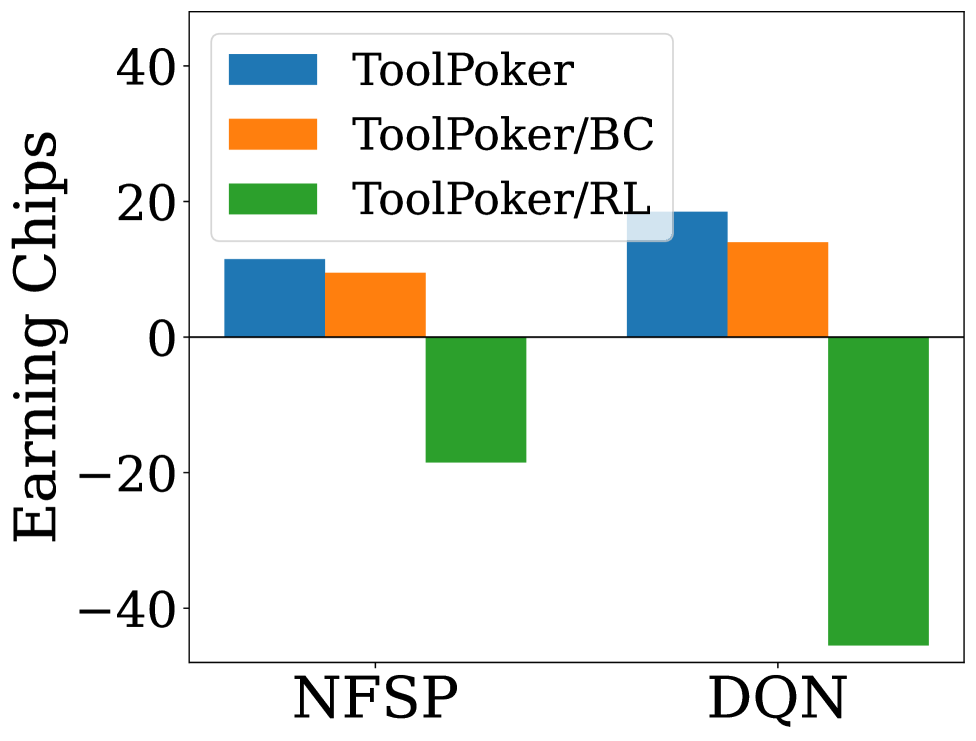

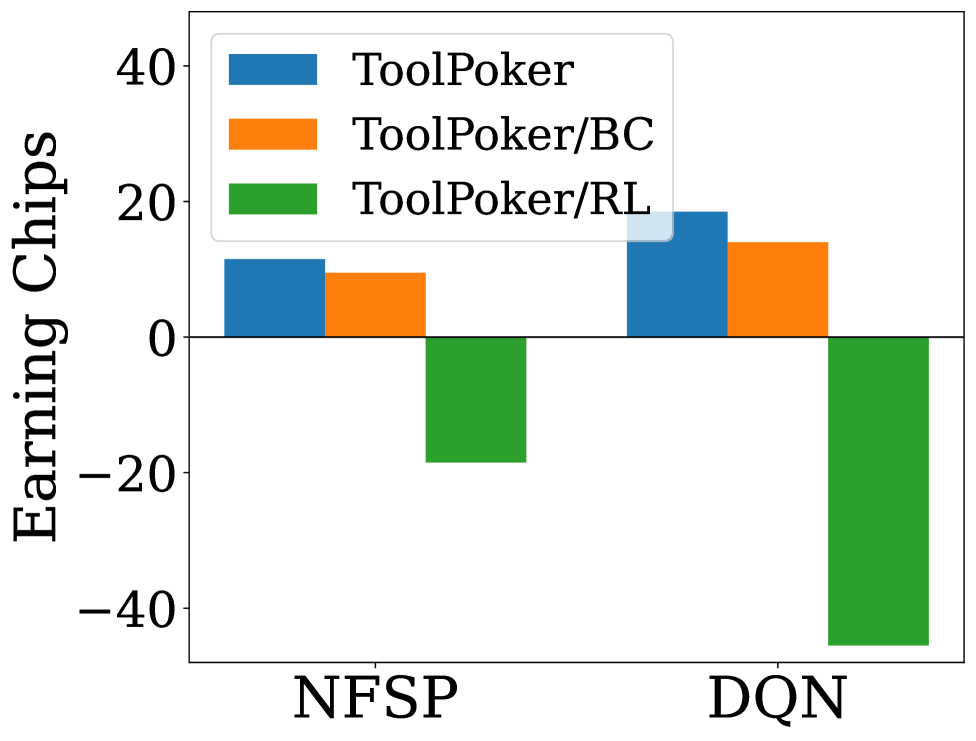

(c) Ablation - Gameplay

(d) Ablation - Reasoning

Figure 2: Results for ToolPoker: (a) and (b) present reasoning analysis in Leduc Hold’em and Limit Texas Hold’em; (c) and (d) show ablation studies on gameplay and reasoning in Leduc Hold’em.

Evaluation Setup. We conduct evaluations on two realistic and complex poker tasks, Leduc Hold’em and Limit Texas Hold’em. We compare ToolPoker with the following baselines: (i) Traditional algorithms: NFSP, DQN, DMC, and CFR; (ii) Vanilla LLMs: Qwen2.5-7B, Qwen2.5-72B, and o4-mini; (iii) Fine-tuning-based baseline: BC-RIRL. Other settings follow those in Sec.4.3. More implementation details of ToolPoker are in Appendix G.5.

Gameplay Performance. We first explore the gameplay performance of ToolPoker. Qwen2.5-7B is the base model for fine-tuning. We compare ToolPoker with BC-RIRL and three vanilla LLMs, Qwen2.5-7B, Qwen2.5-72B and o4-mini, with the comparison results reported in Tab.5. Two key findings emerge: (i) ToolPoker achieves state-of-the-art gameplay performance against traditional algorithms. For instance, ToolPoker obtains $+60.5$, $+63.0$ and $+61.5$ chips against NFSP, DQN and DMC in Limit Texas Hold’em, while BC-RIRL obtains $-77.5$, $-82.5$ and $-80.5$ chips against them. This indicates the effectiveness of ToolPoker in calling the CFR solver to obtain GTO-consistent actions. (ii) ToolPoker slightly underperforms CFR but remains comparable in both poker environments. Specifically, ToolPoker obtains $-3.0$ and $-5.0$ chips against CFR+ and DeepCFR in both Leduc Hold’em and Limit Texas Hold’em, which are minor losses. We attribute this gap to occasional tool-calling errors: although ToolPoker executes the CFR solver with a high success rate to obtain GTO-consistent actions, such errors are inevitable.

Reasoning Quality. To assess whether ToolPoker also improves reasoning, we employ the LLM-as-a-Judge framework following the settings in Sec.4.3. Fig.2 (a) and (b) summarize the results across three metrics. Two observations emerge: (i) ToolPoker achieves near-perfect scores across all three metrics, outperforming all baselines and approaching professional levels. This indicates that, beyond delivering state-of-the-art gameplay performance, ToolPoker also enables LLMs to generate precise and logically consistent reasoning traces grounded in game-theoretic principles. (ii) Compared with BC-RIRL, ToolPoker yields substantially higher FA scores. This demonstrates the importance of leveraging external solvers: while BC-RIRL can articulate plausible reasoning, it often lacks accurate auxiliary quantities (e.g., equities, ranges). In contrast, ToolPoker grounds its reasoning in solver-derived calculations, ensuring rigor and internal consistency.

Ablation Studies. To understand the impact of each component in ToolPoker, we implement two ablated variants: (i) ToolPoker/BC: removes BC and learns tool use only via RL; (ii) ToolPoker/RL: discards RL fine-tuning and relies solely on BC. We measure both gameplay performance (against NFSP and DQN) and reasoning quality in Leduc Hold’em, with results shown in Fig.2 (c) and (d). The full ToolPoker achieves the strongest overall performance, while the variants reveal complementary weaknesses. Specifically: (i) ToolPoker/BC suffers from lower HR and weaker gameplay, suggesting it can query the solver but fails to internalize game-theoretic reasoning patterns; (ii) ToolPoker/RL attains higher HR but performs poorly in gameplay and FA/AC, indicating it imitates reasoning superficially without aligning with GTO-consistent actions. These results highlight that BC provides the foundation for TIR, while RL fine-tuning aligns solver execution with GTO actions and precise derivation. Together, they enable ToolPoker to learn not only how to call the solver, but also how to integrate outputs into coherent, professional-style reasoning traces. More discussions are in AppendixG.6.

6 Related Work

Strategic Reasoning in LLMs. Recent studies have examined LLMs in game-theoretic settings, including poker(Duan et al., 2024; Zhai et al., 2024; Zhuang et al., 2025; Wang et al., 2025). Unlike prior work that primarily evaluates gameplay outcomes, we also analyze the reasoning process, identifying why LLMs fail to achieve GTO play. Moreover, we introduce the first TIR framework that leverages poker solvers for professional-level gameplay. Further discussion is in AppendixA.1.

Tool Learning on LLMs. TIR equips LLMs with external tools for domains such as math and web search(Gao et al., 2023; Jin et al., 2025), which are typically fully observed and single-agent. In contrast, ToolPoker extends TIR to imperfect-information games, integrating poker solvers to ensure GTO actions and rigorous reasoning. Full details on RL and TIR are in Appendix A.2 and A.3.

7 Conclusions and Future Works

In this paper, we revisit strategic reasoning in LLMs through poker with imperfect information. Our analysis shows that current LLMs fall short of professional-level play, exhibiting heuristic biases, factual misunderstandings, and a knowing–doing gap between their reasoning and actions. An initial attempt with BC and RIRL partially reduces heuristic flaws but is still not enough for precise game-theoretic derivations or competitive gameplay. To address this, we introduce ToolPoker, a TIR framework that leverages LLMs’ strength in tool use to incorporate external poker solvers. ToolPoker enables models not only to call solvers for GTO actions but also to ground their rigorous and accurate game-theoretic reasoning in solver outputs. Experiments across multiple poker tasks show that ToolPoker achieves state-of-the-art gameplay performance and produces reasoning traces that align closely with professional game-theoretic principles. Our research paves the way for further exploration of TIR in more complex strategic settings, shifting the focus beyond solely improving models’ internal policies. Further discussion of future works is provided in AppendixI.

8 Ethics Statement

This paper studies LLMs in the context of poker as a rigorous benchmark for strategic reasoning under uncertainty. While poker involves gambling in practice, our experiments are conducted entirely in simulated environments without any financial transactions or human participants. Thus, this research does not pose risks related to gambling addiction or monetary harm.

Our contributions focus on methodology and evaluation. We study the reasoning capabilities of LLMs, propose new training frameworks, and benchmark them against both traditional algorithms and LLM-based methods. These findings aim to deepen understanding of LLM reasoning in imperfect-information games, with potential implications for broader domains such as cybersecurity and negotiation. We acknowledge that advanced poker agents could, if misused, be deployed in real-money contexts. To mitigate this risk, we release code and datasets solely for research purposes, emphasizing their use as benchmarks for safe and reproducible evaluation.

Finally, we ensured that no personally identifiable or sensitive human data were used in this work. All datasets are synthetically generated using poker solvers or LLMs. We believe the potential benefits of this paper, including advancing understanding of the limitations of LLMs’ reasoning, improving the design of tool-augmented AI, and supporting safer deployment in high-stakes domains, clearly outweigh the minimal risks.

9 Reproducibility Statement

We have made every effort to ensure reproducibility. The details of our proposed methods, including model architectures, training objectives, and hyperparameters, are provided in Sec.4 and Sec.5. Experimental setups, including datasets, preprocessing steps, and evaluation protocols, are described in Sec.3.1, Sec.4.3, and Sec.5.3, with additional details in the Appendix. Our code is publicly available at https://anonymous.4open.science/r/ToolPoker-797E.

References

- CyBERT: cybersecurity claim classification by fine-tuning the bert language model. Journal of Cybersecurity and Privacy, pp.615–637. Cited by: §1.

- M. Bowling, N. Burch, M. Johanson, and O. Tammelin (2015)Heads-up limit hold’em poker is solved. Science 347 (6218), pp.145–149. Cited by: §B.3.

- N. Brown, A. Lerer, S. Gross, and T. Sandholm (2019)Deep counterfactual regret minimization. In International conference on machine learning, pp.793–802. Cited by: 5th item, §3.1, §3.1.

- N. Brown and T. Sandholm (2019)Superhuman ai for multiplayer poker. Science 365 (6456), pp.885–890. Cited by: 5th item, §1.

- W. Chen, X. Ma, X. Wang, and W. W. Cohen (2022)Program of thoughts prompting: disentangling computation from reasoning for numerical reasoning tasks. arXiv preprint arXiv:2211.12588. Cited by: §A.3.

- Y. Chen, Y. Liu, J. Zhou, Y. Hao, J. Wang, Y. Zhang, and C. Fan (2025)R1-code-interpreter: training llms to reason with code via supervised and reinforcement learning. arXiv preprint arXiv:2505.21668. Cited by: §A.2.

- A. Costarelli, M. Allen, R. Hauksson, G. Sodunke, S. Hariharan, C. Cheng, W. Li, J. Clymer, and A. Yadav (2024)Gamebench: evaluating strategic reasoning abilities of llm agents. arXiv preprint arXiv:2406.06613. Cited by: §A.1.

- D. Das, D. Banerjee, S. Aditya, and A. Kulkarni (2024)MATHSENSEI: a tool-augmented large language model for mathematical reasoning. arXiv preprint arXiv:2402.17231. Cited by: §A.3.

- J. Duan, R. Zhang, J. Diffenderfer, B. Kailkhura, L. Sun, E. Stengel-Eskin, M. Bansal, T. Chen, and K. Xu (2024)Gtbench: uncovering the strategic reasoning capabilities of llms via game-theoretic evaluations. Advances in Neural Information Processing Systems 37, pp.28219–28253. Cited by: §A.1, §H.1, §1, §6.

- Y. Dubois, X. Li, R. Taori, T. Zhang, I. Gulrajani, J. Ba, C. Guestrin, P. Liang, and T. Hashimoto (2023)AlpacaFarm: a simulation framework for methods that learn from human feedback. In Thirty-seventh Conference on Neural Information Processing Systems, Cited by: §C.4, §3.3.

- J. Feng, S. Huang, X. Qu, G. Zhang, Y. Qin, B. Zhong, C. Jiang, J. Chi, and W. Zhong (2025a)Retool: reinforcement learning for strategic tool use in llms. arXiv preprint arXiv:2504.11536. Cited by: §A.3, §G.5, §G.7.1, §G.7.1, §G.7.2, §5.2.

- L. Feng, Z. Xue, T. Liu, and B. An (2025b)Group-in-group policy optimization for llm agent training. arXiv preprint arXiv:2505.10978. Cited by: §A.2.

- L. Gao, A. Madaan, S. Zhou, U. Alon, P. Liu, Y. Yang, J. Callan, and G. Neubig (2023)Pal: program-aided language models. In International Conference on Machine Learning, pp.10764–10799. Cited by: §A.3, §6.

- Z. Gou, Z. Shao, Y. Gong, yelong shen, Y. Yang, M. Huang, N. Duan, and W. Chen (2024)ToRA: a tool-integrated reasoning agent for mathematical problem solving. In The Twelfth International Conference on Learning Representations, Cited by: §A.3.

- A. Grattafiori, A. Dubey, A. Jauhri, A. Pandey, A. Kadian, A. Al-Dahle, A. Letman, A. Mathur, A. Schelten, A. Vaughan, et al. (2024)The llama 3 herd of models. arXiv preprint arXiv:2407.21783. Cited by: §3.2.

- D. Guo, D. Yang, H. Zhang, J. Song, R. Zhang, R. Xu, Q. Zhu, S. Ma, P. Wang, X. Bi, et al. (2025)Deepseek-r1: incentivizing reasoning capability in llms via reinforcement learning. arXiv preprint arXiv:2501.12948. Cited by: §A.2, §D.1, §4.

- J. Guo, B. Yang, P. Yoo, B. Y. Lin, Y. Iwasawa, and Y. Matsuo (2023)Suspicion-agent: playing imperfect information games with theory of mind aware gpt-4. arXiv preprint arXiv:2309.17277. Cited by: §A.1, 2nd item, 3rd item, §H.1, §1, §2, §3.1, §3.1.

- A. Gupta (2023)Are chatgpt and gpt-4 good poker players?–a pre-flop analysis. arXiv preprint arXiv:2308.12466. Cited by: §A.1.

- J. C. Harsanyi (1995)Games with incomplete information. The American Economic Review, pp.291–303. Cited by: §1.

- J. Heinrich and D. Silver (2016)Deep reinforcement learning from self-play in imperfect-information games. arXiv preprint arXiv:1603.01121. Cited by: §A.1, 1st item, §D.1, Appendix E, §1, §1, §3.1, §4.

- N. Herr, F. Acero, R. Raileanu, M. Perez-Ortiz, and Z. Li Large language models are bad game theoretic reasoners: evaluating performance and bias in two-player non-zero-sum games. In ICML 2024 Workshop on LLMs and Cognition, Cited by: §A.1.

- C. Huang, Y. Cao, Y. Wen, T. Zhou, and Y. Zhang (2024)PokerGPT: an end-to-end lightweight solver for multi-player texas hold’em via large language model. arXiv preprint arXiv:2401.06781. Cited by: §A.1, §2.

- A. Hurst, A. Lerer, A. P. Goucher, A. Perelman, A. Ramesh, A. Clark, A. Ostrow, A. Welihinda, A. Hayes, A. Radford, et al. (2024)Gpt-4o system card. arXiv preprint arXiv:2410.21276. Cited by: §3.2.

- H. Jiang, L. Ge, Y. Gao, J. Wang, and R. Song (2023)Large language model for causal decision making. arXiv preprint arXiv:2312.17122. Cited by: §1.

- B. Jin, H. Zeng, Z. Yue, J. Yoon, S. Arik, D. Wang, H. Zamani, and J. Han (2025)Search-r1: training llms to reason and leverage search engines with reinforcement learning. arXiv preprint arXiv:2503.09516. Cited by: §A.3, §G.5, §6.

- H. W. Kuhn (2016)A simplified two-person poker. Contributions to the Theory of Games 1, pp.97–103. Cited by: §B.1.

- W. Lin, J. Roberts, Y. Yang, S. Albanie, Z. Lu, and K. Han (2025)GAMEBoT: transparent assessment of LLM reasoning in games. In Proceedings of the 63rd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), W. Che, J. Nabende, and M. T. Pilehvar (Eds.), pp.7656–7682. Cited by: §1.

- Y. Meng, M. Xia, and D. Chen (2024)Simpo: simple preference optimization with a reference-free reward. Advances in Neural Information Processing Systems 37, pp.124198–124235. Cited by: §A.2.

- V. Mnih, K. Kavukcuoglu, D. Silver, A. A. Rusu, J. Veness, M. G. Bellemare, A. Graves, M. Riedmiller, A. K. Fidjeland, G. Ostrovski, et al. (2015)Human-level control through deep reinforcement learning. nature 518 (7540), pp.529–533. Cited by: 2nd item, §3.1.

- N. Muennighoff, Z. Yang, W. Shi, X. L. Li, L. Fei-Fei, H. Hajishirzi, L. Zettlemoyer, P. Liang, E. Candès, and T. Hashimoto (2025)S1: simple test-time scaling. arXiv preprint arXiv:2501.19393. Cited by: §4.1.

- J. F. Nash Jr (1950)Equilibrium points in n-person games. Proceedings of the national academy of sciences 36 (1), pp.48–49. Cited by: Definition B.1.

- OpenAI (2024)OpenAI o3 and o4-mini system card. Cited by: §3.2.

- OpenAI (2025)Gpt-4.1 system card. Cited by: §C.4, §3.2.

- Qwen (2024)Qwen2.5: a party of foundation models. Cited by: §3.2.

- R. Rafailov, A. Sharma, E. Mitchell, C. D. Manning, S. Ermon, and C. Finn (2023)Direct preference optimization: your language model is secretly a reward model. Advances in neural information processing systems 36, pp.53728–53741. Cited by: §A.2.

- T. Schick, J. Dwivedi-Yu, R. Dessì, R. Raileanu, M. Lomeli, E. Hambro, L. Zettlemoyer, N. Cancedda, and T. Scialom (2023)Toolformer: language models can teach themselves to use tools. Advances in Neural Information Processing Systems 36, pp.68539–68551. Cited by: §G.7.1, §G.7.1.

- J. Schulman, P. Moritz, S. Levine, M. Jordan, and P. Abbeel (2015)High-dimensional continuous control using generalized advantage estimation. arXiv preprint arXiv:1506.02438. Cited by: §D.1, Appendix E, §G.4, §4.2.

- J. Schulman, F. Wolski, P. Dhariwal, A. Radford, and O. Klimov (2017)Proximal policy optimization algorithms. arXiv preprint arXiv:1707.06347. Cited by: §A.2, §D.1, Appendix E, §4.2, §5.2.

- Z. Shao, P. Wang, Q. Zhu, R. Xu, J. Song, X. Bi, H. Zhang, M. Zhang, Y. Li, Y. Wu, et al. (2024)Deepseekmath: pushing the limits of mathematical reasoning in open language models. arXiv preprint arXiv:2402.03300. Cited by: Appendix E.

- G. Sheng, C. Zhang, Z. Ye, X. Wu, W. Zhang, R. Zhang, Y. Peng, H. Lin, and C. Wu (2024)HybridFlow: a flexible and efficient rlhf framework. arXiv preprint arXiv: 2409.19256. Cited by: §G.5.

- E. Steinberger (2019)Single deep counterfactual regret minimization. arXiv preprint arXiv:1901.07621. Cited by: §3.1.

- O. Tammelin (2014)Solving large imperfect information games using cfr+. arXiv preprint arXiv:1407.5042. Cited by: 4th item, §D.5, §1, §3.1, §4.1.

- T. Vu, M. Iyyer, X. Wang, N. Constant, J. Wei, J. Wei, C. Tar, Y. Sung, D. Zhou, Q. Le, et al. (2023)Freshllms: refreshing large language models with search engine augmentation. arXiv preprint arXiv:2310.03214. Cited by: §A.3.

- W. Wang, F. Bie, J. Chen, D. Zhang, S. Huang, E. Kharlamov, and J. Tang (2025)Can large language models master complex card games?. arXiv preprint arXiv:2509.01328. Cited by: §A.1, §G.5, §H.1, §4, §6.

- Z. Wei, W. Yao, Y. Liu, W. Zhang, Q. Lu, L. Qiu, C. Yu, P. Xu, C. Zhang, B. Yin, et al. (2025)Webagent-r1: training web agents via end-to-end multi-turn reinforcement learning. arXiv preprint arXiv:2505.16421. Cited by: §A.2.

- T. Xiao, Y. Yuan, Z. Chen, M. Li, S. Liang, Z. Ren, and V. G. Honavar (2025)SimPER: a minimalist approach to preference alignment without hyperparameters. arXiv preprint arXiv:2502.00883. Cited by: §A.2.

- Z. Xu, Z. Wu, Y. Zhou, A. Feng, K. Zhou, S. Woo, K. Ramnath, Y. Tian, X. Qi, W. Qiu, et al. (2025)Beyond correctness: rewarding faithful reasoning in retrieval-augmented generation. arXiv preprint arXiv:2510.13272. Cited by: 1st item.

- A. Yang, A. Li, B. Yang, B. Zhang, B. Hui, B. Zheng, B. Yu, C. Gao, C. Huang, C. Lv, et al. (2025)Qwen3 technical report. arXiv preprint arXiv:2505.09388. Cited by: §3.2.

- S. Yao, J. Zhao, D. Yu, N. Du, I. Shafran, K. R. Narasimhan, and Y. Cao (2022)React: synergizing reasoning and acting in language models. In The eleventh international conference on learning representations, Cited by: §G.7.1.

- T. Zaciragic, A. Plaat, and K. J. Batenburg (2025)Analysis of bluffing by dqn and cfr in leduc hold’em poker. arXiv preprint arXiv:2509.04125. Cited by: §B.2.

- D. Zha, K. Lai, S. Huang, Y. Cao, K. Reddy, J. Vargas, A. Nguyen, R. Wei, J. Guo, and X. Hu (2021a)RLCard: a platform for reinforcement learning in card games. In Proceedings of the Twenty-Ninth International Joint Conference on Artificial Intelligence, Cited by: §3.1.

- D. Zha, J. Xie, W. Ma, S. Zhang, X. Lian, X. Hu, and J. Liu (2021b)Douzero: mastering doudizhu with self-play deep reinforcement learning. In international conference on machine learning, pp.12333–12344. Cited by: 2nd item, 3rd item, §3.1.

- S. Zhai, H. Bai, Z. Lin, J. Pan, P. Tong, Y. Zhou, A. Suhr, S. Xie, Y. LeCun, Y. Ma, et al. (2024)Fine-tuning large vision-language models as decision-making agents via reinforcement learning. Advances in neural information processing systems 37, pp.110935–110971. Cited by: §A.1, §6.

- R. Zhang, Z. Xu, C. Ma, C. Yu, W. Tu, W. Tang, S. Huang, D. Ye, W. Ding, Y. Yang, et al. (2024)A survey on self-play methods in reinforcement learning. arXiv preprint arXiv:2408.01072. Cited by: Appendix E.

- E. Zhao, R. Yan, J. Li, K. Li, and J. Xing (2022)Alphaholdem: high-performance artificial intelligence for heads-up no-limit poker via end-to-end reinforcement learning. In Proceedings of the AAAI conference on artificial intelligence, Vol. 36, pp.4689–4697. Cited by: §D.1.

- Y. Zheng, D. Fu, X. Hu, X. Cai, L. Ye, P. Lu, and P. Liu (2025)Deepresearcher: scaling deep research via reinforcement learning in real-world environments. arXiv preprint arXiv:2504.03160. Cited by: §A.3.

- R. Zhuang, A. Gupta, R. Yang, A. Rahane, Z. Li, and G. Anumanchipalli (2025)Pokerbench: training large language models to become professional poker players. In Proceedings of the AAAI Conference on Artificial Intelligence, Vol. 39, pp.26175–26182. Cited by: §A.1, §H.1, §1, §6.

- M. Zinkevich, M. Johanson, M. Bowling, and C. Piccione (2007)Regret minimization in games with incomplete information. Advances in neural information processing systems 20. Cited by: §B.2, 4th item, §1.

Appendix A Full Details of Related Works

A.1 Strategic Reasoning in LLMs

With the rapid progress of LLMs’ cognitive capabilities, recent studies have begun to investigate their potential for strategic reasoning in game-theoretic settings(Duan et al., 2024; Gupta, 2023; Huang et al., 2024; Zhuang et al., 2025; Wang et al., 2025). GTBench(Duan et al., 2024) introduces a comprehensive benchmark covering a variety of games to assess LLMs’ ability to follow equilibrium principles. Gupta (2023) provides one of the first empirical evaluations of GPT-4 and ChatGPT in poker, revealing systematic deviations from GTO gameplay. Guo et al. (2023) explore theory-of-mind (ToM) prompting in Leduc Hold’em, showing that GPT-4 with ToM reasoning can outperform neural baselines such as NFSP(Heinrich and Silver, 2016). PokerGPT(Huang et al., 2024) fine-tunes LLMs on poker-specific data and observes improvements in gameplay, while PokerBench(Zhuang et al., 2025) constructs a benchmark on No-Limit Hold’em. More recently, Wang et al. (2025) curate large-scale action-only datasets (more than 400k examples) and demonstrate gains in card games by fine-tuning LLMs on such data. Additional works(Costarelli et al., 2024; Herr et al.) also investigate gameplay performance and biases of LLMs in other strategic games, such as Tic-Tac-Toe and Prisoner’s Dilemma. In addition to exploring strategic reasoning in text-based settings, Zhai et al. (2024) extend this line of work to the multimodal domain by fine-tuning large vision–language models (VLMs) with RL. Their approach leverages CoT-style intermediate reasoning to guide VLMs through multi-step decision-making tasks, including poker, and demonstrates that RL can enable VLMs to effectively explore and execute visual–textual reasoning sequences.

Our work differs in two key aspects: (i) unlike prior works that mainly evaluate or improve LLMs’ actions, we further analyze their reasoning process, asking how LLMs think before acting and why they fail to achieve GTO play; and (ii) rather than relying on internal policies alone, we propose the first tool-integrated reasoning framework that leverages poker solvers, enabling both equilibrium-consistent actions and professional-style game-theoretic reasoning.

A.2 Reinforcement Learning

Reinforcement Learning (RL) has emerged as a powerful mechanism for enhancing the reasoning abilities of LLMs. In the context of LLMs, RL was first introduced through Reinforcement Learning from Human Feedback (RLHF) to align outputs with human preferences via algorithms such as Proximal Policy Optimization (PPO)(Schulman et al., 2017). Subsequent works proposed more advanced techniques such as Direct Preference Optimization (DPO)(Rafailov et al., 2023), SimPO(Meng et al., 2024), and SimPER(Xiao et al., 2025), which improve the stability and efficiency of RL training. More recently, researchers have explored both outcome-based rewards(Guo et al., 2025) and step-level rewards(Feng et al., 2025b) to improve problem-solving in domains such as mathematical reasoning(Guo et al., 2025), code generation(Chen et al., 2025), and web retrieval(Wei et al., 2025). In this work, we investigate RL for imperfect-information games, where sparse outcomes, hidden states, and adversarial dynamics make reward design particularly challenging. Our analysis shows that both outcome-based and step-level RL signals are ineffective at improving LLMs’ internal policies in poker, motivating the use of solver-derived, regret-inspired signals as more reliable feedback.

A.3 Tool-Integrated Reasoning of LLMs

Tool-integrated reasoning (TIR) has emerged as a promising approach to extend the capabilities of LLMs. Prior works demonstrate improvements in domains requiring precise computation or external knowledge, including mathematical calculation(Das et al., 2024), programming(Chen et al., 2022), and web search(Vu et al., 2023). Early studies such as PAL(Gao et al., 2023) prompt LLMs to generate code for execution, while ToRA(Gou et al., 2024) curates tool-use trajectories and applies imitation learning to train tool invocation. More recently, RL has been explored as an effective framework to improve TIR(Jin et al., 2025; Feng et al., 2025a; Zheng et al., 2025). For instance, Search-R1(Jin et al., 2025) enables search-engine queries for QA, ReTool(Feng et al., 2025a) improves mathematical reasoning with a code sandbox, and DeepResearcher(Zheng et al., 2025) scales multi-hop retrieval and tool orchestration. Despite these advances, existing TIR research largely targets fully observed, single-agent tasks. In contrast, poker involves stochasticity, hidden information, and adversarial dynamics, where tools must compute equilibrium-consistent strategies and counterfactual values rather than deterministic answers. To the best of our knowledge, ToolPoker is the first TIR framework for imperfect-information games. It integrates external poker solvers into LLMs, teaching them how to invoke solvers and grounding their reasoning traces in solver outputs. This ensures rigorous, precise game-theoretic reasoning and GTO-consistent play, bridging prior works on strategic reasoning, RL, and TIR.

Appendix B Background and Rules of Poker

In this section, we introduce the poker variants studied in our work. These games are widely used in the literature as benchmarks for imperfect-information reasoning because they balance tractability with the core challenges of hidden information, sequential decision-making, and stochasticity.

B.1 Kuhn Poker

Kuhn poker(Kuhn, 2016) is a minimalistic poker game designed to capture the essence of imperfect-information decision-making in a tractable form. The game is played with only three cards (e.g., Jack, Queen, King) and two players. Each player antes one chip, and a single betting round follows. Each player receives one private card, and the third card remains hidden.

Players can either check/bet (if no bet has been made) or call/fold (if a bet has been made). Because of its small size—only a handful of information sets—Kuhn poker admits closed-form solutions, including simple Nash equilibrium strategies that mix between bluffing with weak hands and value betting with strong hands. Despite its simplicity, it highlights the central strategic dilemma of poker: balancing deception and value extraction under hidden information.
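The rules above are small enough to write down as a payoff function. The sketch below assumes the standard Kuhn setup of a 1-chip ante and a 1-chip bet, and uses a hypothetical encoding of betting sequences (`k` = check, `b` = bet, `c` = call, `f` = fold); the function name `terminal_payoff` is illustrative only.

```python
# Card strength in three-card Kuhn poker (Jack < Queen < King).
RANK = {"J": 0, "Q": 1, "K": 2}

# Terminal betting sequences: k = check, b = bet, c = call, f = fold.
TERMINALS = {"kk", "bc", "bf", "kbc", "kbf"}


def terminal_payoff(history: str, card1: str, card2: str) -> int:
    """Net chips won by player 1, assuming a 1-chip ante and a 1-chip bet.

    card1 and card2 must differ, since the deck holds one card of each rank.
    """
    if history not in TERMINALS:
        raise ValueError(f"not a terminal history: {history}")
    if history == "bf":       # player 2 folds to player 1's bet
        return 1
    if history == "kbf":      # player 1 folds to player 2's bet
        return -1
    stake = 1 if history == "kk" else 2   # chips each player has committed
    return stake if RANK[card1] > RANK[card2] else -stake


print(terminal_payoff("bc", "K", "Q"))    # 2
print(terminal_payoff("kbf", "K", "J"))   # -1
```

With only five terminal sequences and six possible deals, the whole game tree can be enumerated by hand, which is why closed-form equilibrium strategies exist for this game.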

B.2 Leduc Hold’em

Leduc Hold’em(Zaciragic et al., 2025) is a widely studied poker variant that extends Kuhn by introducing multiple betting rounds and public information. The game is played with a small deck of six cards consisting of two suits and three ranks. Each player antes one chip and receives a single private card. A first round of betting occurs, after which a single public card is revealed. A second round of betting then follows.

The addition of the public card dramatically increases strategic depth: players must update beliefs about opponents’ ranges as new information is revealed, balance bluffing and value bets across streets, and plan actions that maximize long-term expected value. Although still small enough for exact or approximate equilibrium computation (e.g., via CFR(Zinkevich et al., 2007)), Leduc captures essential poker phenomena such as semi-bluffing, slow-playing, and range narrowing, making it a standard benchmark for algorithmic and LLM-based poker research.
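The effect of the public card on hand strength can be sketched in a few lines. The example assumes the common Leduc convention that a private card pairing the public card beats any unpaired card, and that otherwise the higher rank wins. The rank names (J, Q, K), suit letters, and the function name `leduc_winner` are illustrative assumptions.

```python
from itertools import product

RANKS = "JQK"   # three ranks, lowest to highest (assumed naming)
SUITS = "sh"    # two suits (assumed naming)
DECK = [rank + suit for rank, suit in product(RANKS, SUITS)]  # six cards


def leduc_winner(private1: str, private2: str, public: str) -> int:
    """Return 1 or 2 for the winning player at showdown, or 0 for a tie."""
    r1, r2, rp = private1[0], private2[0], public[0]
    # A pair with the public card beats any unpaired hand. Both players
    # cannot pair at once, because each rank appears only twice in the deck.
    if r1 == rp and r2 != rp:
        return 1
    if r2 == rp and r1 != rp:
        return 2
    # Otherwise compare private ranks; equal ranks split the pot.
    i1, i2 = RANKS.index(r1), RANKS.index(r2)
    if i1 > i2:
        return 1
    if i2 > i1:
        return 2
    return 0


print(leduc_winner("Js", "Kh", "Jh"))  # 1: the Jack pairs the board
print(leduc_winner("Qs", "Kh", "Js"))  # 2: no pair, King high wins
```

The first call shows why beliefs must be updated after the public card: the weakest private card becomes the strongest hand once it pairs the board.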

B.3 Limit Texas Hold’em

Limit Texas Hold’em(Bowling et al., 2015) is a more realistic and complex poker variant that is closely related to the full game of Texas Hold’em, which is the most popular poker format in practice. The deck consists of 52 standard playing cards. Each player is dealt two private hole cards, and up to five public community cards are revealed in stages: the flop (three cards), the turn (one card), and the river (one card). At each stage, players take turns acting in one of several betting rounds.

Unlike No-Limit Hold’em, bet sizes in Limit Hold’em are fixed and restricted to small or big bets depending on the round. Each hand therefore unfolds as a sequence of structured betting decisions, but the state space remains extremely large compared to Kuhn or Leduc. The presence of multiple streets, large range interactions, and complex pot-odds considerations makes Limit Hold’em a significantly more challenging testbed for LLMs and reinforcement learning algorithms. Professional-level play in this environment demands mastery of equilibrium-based reasoning as well as opponent exploitation, skills that current LLMs struggle to replicate.

B.4 Additional Details of Background and Preliminary

B.5 Game-theoretic Reasoning

In poker, game-theoretic reasoning grounded in Nash Equilibrium is essential for professional-level play. A Nash Equilibrium represents a stable outcome in which each player’s strategy is an optimal response to the others. Formally:

Definition B.1(Nash Equilibrium(Nash Jr, 1950)).

A Nash Equilibrium is a strategy profile in a game where no player can unilaterally improve their payoff by deviating from their current strategy, assuming the other players’ strategies remain unchanged. Formally, a strategy profile $(a_{1}^{*},a_{2}^{*},\ldots,a_{n}^{*})$ is a Nash Equilibrium if, for every player $i$:

$$U_{i}(a_{i}^{*},a_{-i}^{*})\geq U_{i}(a_{i},a_{-i}^{*}),\quad\forall a_{i}\in A_{i} \tag{5}$$

where $A_{i}$ denotes the set of feasible actions for player $i$, $U_{i}$ is the utility function (expected payoff) of player $i$, and $a_{-i}^{*}$ represents the equilibrium strategies of all players other than $i$.
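The inequality in the definition can be checked mechanically for a small two-player game. The sketch below tests pure-strategy profiles only, using a Prisoner’s Dilemma payoff matrix as an illustrative example; equilibria in poker are generally mixed, so this shows the no-profitable-deviation condition rather than a poker solver. The function name `is_pure_nash` and the payoff values are assumptions for illustration.

```python
from typing import List

Matrix = List[List[float]]


def is_pure_nash(u1: Matrix, u2: Matrix, row: int, col: int) -> bool:
    """Check whether (row, col) satisfies the no-deviation inequality.

    u1[r][c] and u2[r][c] are the payoffs to players 1 and 2 when
    player 1 plays row r and player 2 plays column c.
    """
    # Player 1 cannot gain by switching rows while the column stays fixed.
    p1_ok = all(u1[row][col] >= u1[r][col] for r in range(len(u1)))
    # Player 2 cannot gain by switching columns while the row stays fixed.
    p2_ok = all(u2[row][col] >= u2[row][c] for c in range(len(u2[row])))
    return p1_ok and p2_ok


# Prisoner's Dilemma: action 0 = cooperate, action 1 = defect.
U1 = [[-1, -3], [0, -2]]
U2 = [[-1, 0], [-3, -2]]

print(is_pure_nash(U1, U2, 1, 1))  # True: mutual defection is stable
print(is_pure_nash(U1, U2, 0, 0))  # False: each player gains by defecting
```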

Rather than relying solely on heuristics or pattern recognition, professional players systematically evaluate equity, ranges, and pot odds within a game-theoretic framework, thereby providing an optimal action. An illustrative example of such game-theoretic reasoning in practice is in AppendixB.6.

B.6 Professional Players in Poker

To illustrate how professional poker players think, we provide a real example from the blog of a well-known Texas Hold’em professional player (https://www.partypoker.com/blog/en/its-the-same-game-but-it-isnt.html). Unlike casual players who rely on intuition, professionals systematically evaluate a wide range of factors before acting, including:

- Game context: What are the stack sizes, pot size, and stack-to-pot ratio?

- Ranges: What range of hands should I continue with? What range does my opponent have? How does the board interact with these ranges, and which player benefits most?

- Board texture and big hands: Who holds the larger share of strong hands in this spot?

- Mixed strategies: What is my optimal mix between actions (e.g., 3-betting vs. calling, check-calling vs. check-raising)?

- Bet sizing: How many bet sizes do I need here (e.g., two sizes such as 30% pot and 90% pot)? Which size does my hand prefer relative to my overall range?

- Randomization: How do I randomize between actions to stay balanced (e.g., using a chip marker to decide frequencies)?

- Opponent modeling: What is my opponent’s likely response to my bet? What physical tells, history, or reads do I have? At what strategic level are they operating, and what exploits should I consider?

This example shows that professional play is grounded in equilibrium-based reasoning, probabilistic mixing, and careful opponent modeling, far beyond heuristic or surface-level decision making.
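Several of the quantities in the checklist above reduce to simple arithmetic. The sketch below computes a stack-to-pot ratio, the minimum equity needed to call a bet (pot odds), and samples an action from a mixed strategy. All numbers, function names, and the 70/30 mix are hypothetical values chosen for illustration; they are not taken from the blog post or from the paper.

```python
import random

def stack_to_pot_ratio(effective_stack, pot):
    """SPR: how many pot-sized bets remain behind."""
    return effective_stack / pot

def required_equity(pot_before_call, call_amount):
    """Minimum equity for a break-even call.

    pot_before_call already includes the opponent's bet.
    """
    return call_amount / (pot_before_call + call_amount)

def sample_action(mixed_strategy, rng):
    """Draw one action according to the given action -> probability map."""
    actions = list(mixed_strategy)
    weights = [mixed_strategy[a] for a in actions]
    return rng.choices(actions, weights=weights, k=1)[0]

# Hypothetical spot: 300 chips behind, 100 in the pot, opponent bets 50.
spr = stack_to_pot_ratio(effective_stack=300, pot=100)
needed = required_equity(pot_before_call=150, call_amount=50)

# Hypothetical mixed strategy for one hand class.
mix = {"check-call": 0.7, "check-raise": 0.3}
rng = random.Random(0)  # seeded only to make the example repeatable
action = sample_action(mix, rng)

print(spr, needed, action)
```

Here the caller risks 50 to win a final pot of 200, so a call breaks even at 25% equity; the random draw plays the same role as the physical randomization device mentioned in the list.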

Our behavior cloning datasets are designed with these principles in mind, encouraging LLMs to reason through such questions. Details of the text-only BC dataset curation and the TIR-enabled BC dataset curation are provided in Appendix D.3 and Appendix G.2, respectively.

Appendix C Additional Details of Preliminary Analysis in Sec.3

C.1 Comparison Methods

To comprehensively evaluate the performance of LLMs in playing poker, we consider both traditional RL-based baselines and rule-based solver baselines. RL methods serve as learning-based references that have been widely applied to imperfect-information games, while rule-based solvers provide near-equilibrium strategies that approximate ground truth. Specifically, we include the following methods:

- NFSP (Heinrich and Silver, 2016): Neural Fictitious Self-Play is a pioneering framework for learning approximate Nash equilibria in imperfect-information games. It combines reinforcement learning to approximate best responses with supervised learning to approximate average strategies, enabling agents to learn directly from self-play experience.

- DQN (Mnih et al., 2015): Deep Q-Network was one of the first breakthroughs in deep RL for sequential decision-making. Although originally designed for perfect-information environments such as Atari, subsequent works (Zha et al., 2021b; Guo et al., 2023) have adopted it as a baseline for imperfect-information games, including poker.

- DMC (Zha et al., 2021b): The Deep Monte Carlo (DMC) algorithm was originally proposed for the Chinese card game DouDizhu. It leverages large-scale self-play with Monte Carlo policy optimization and demonstrates strong performance in complex imperfect-information card games. Following prior works (Zha et al., 2021b; Guo et al., 2023), we adapt DMC as a baseline for poker.

- CFR+ (Tammelin, 2014): Counterfactual Regret Minimization (CFR) (Zinkevich et al., 2007) is a foundational algorithm for solving imperfect-information games, converging to a Nash equilibrium by iteratively minimizing counterfactual regret at each information set. CFR+ enhances CFR with regret-matching+ (cumulative regrets are floored at zero), alternating updates, and weighted strategy averaging, greatly accelerating convergence. It has become the de facto standard solver in large-scale poker domains and serves as a strong rule-based baseline in our evaluation.

- DeepCFR (Brown et al., 2019): Building on CFR, DeepCFR employs neural function approximation to replace tabular regret tables, thereby generalizing across information sets. While CFR+ is provably effective, its computational cost grows prohibitively in large games such as Texas Hold’em. DeepCFR addresses this limitation by learning regret values via neural networks, making it applicable to larger domains and forming the basis of superhuman agents such as Libratus (Brown and Sandholm, 2019).
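The CFR family of solvers listed above shares one core step: turning cumulative regrets at an information set into a strategy via regret matching. The sketch below shows that step for a single decision point, together with the vanilla and the zero-floored ("+") regret accumulation rules. It is a simplified illustration with made-up regret values, not the solver configuration used in the paper, and it omits counterfactual reach weighting and strategy averaging.

```python
def regret_matching(cumulative_regrets):
    """Play each action in proportion to its positive cumulative regret.

    Falls back to the uniform strategy when no action has positive regret.
    """
    positive = [max(r, 0.0) for r in cumulative_regrets]
    total = sum(positive)
    if total > 0:
        return [p / total for p in positive]
    n = len(cumulative_regrets)
    return [1.0 / n] * n

def cfr_update(cumulative_regrets, instantaneous_regrets):
    """Vanilla CFR accumulation: regrets may become negative."""
    return [c + r for c, r in zip(cumulative_regrets, instantaneous_regrets)]

def cfr_plus_update(cumulative_regrets, instantaneous_regrets):
    """Regret-matching+ accumulation: floor the running sum at zero."""
    return [max(c + r, 0.0)
            for c, r in zip(cumulative_regrets, instantaneous_regrets)]

# Hypothetical regrets for three actions (fold, call, raise).
strategy = regret_matching([2.0, -1.0, 2.0])
vanilla = cfr_update([1.0, 0.0], [-3.0, 2.0])
floored = cfr_plus_update([1.0, 0.0], [-3.0, 2.0])
print(strategy, vanilla, floored)
```

Flooring at zero lets an action that was bad in the past regain probability as soon as it starts to look good again, which is one intuition for the faster convergence reported for CFR+.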

C.2 Case Studies of LLMs’ Reasoning Flaws

We provide examples from Qwen2.5-3B and GPT-4o in Tab. 13 and Tab. 14 to illustrate why LLMs fail at playing poker. From these tables, we consistently observe three limitations of LLMs in playing poker: (i) Heuristic Reasoning; (ii) Factual Misunderstanding; and (iii) Knowing-Doing Gap. The detailed analysis of these case studies can be found in Sec. 3.3.

C.3 Evaluation Metrics of the LLM-as-a-Judge for LLMs’ Reasoning

In the LLM-as-a-Judge approach used for the quantitative analysis of LLMs’ reasoning traces in Sec. 3.3, we use the following three metrics to quantify the three identified reasoning flaws:

- Heuristic Reasoning Score (HR): The judge prompt template is provided in Tab. 15.

- Factual Alignment Score (FA): The judge prompt template is provided in Tab. 16.

- Action-reasoning Consistency Score (AC): The judge prompt template is provided in Tab. 17.

C.4 Full Details of Quantitative Analysis

To further validate the reasoning flaws observed in case studies, we adopt an LLM-as-a-Judge framework (Dubois et al., 2023). Specifically, we design three metrics: heuristic reasoning (HR), factual alignment (FA), and action–reasoning consistency (AC). Each generated reasoning trace is scored by three independent LLM judges on a 0–2 scale for each metric. GPT-4.1-mini (OpenAI, 2025) is used as the judge model. The metric definitions and judge prompts are in Appendix C.3.

From the table, we observe that: (i) Reasoning flaws persist across all models. All evaluated LLMs demonstrate varying degrees of heuristic reasoning, factual misunderstanding, and knowing–doing gaps. For instance, Qwen2.5-3B obtains only 0.53 HR, 0.18 FA, and 1.53 AC, indicating weak factual grounding and limited strategic reasoning. Even the strongest model, o4-mini, which achieves 1.80 HR, 1.56 FA, and 1.85 AC, still falls short of perfect action–reasoning consistency. This confirms that these flaws are systemic and persist across models. (ii) Scaling improves but does not eliminate reasoning flaws. Larger and more powerful models, such as Qwen2.5-72B and o4-mini, generally achieve higher scores on all three metrics than their lightweight variants. This suggests that increased scale and instruction tuning enhance the ability of LLMs to approximate game-theoretic reasoning and avoid factual mistakes. Nevertheless, the persistence of non-trivial gaps, particularly in FA and AC, indicates that scaling alone is insufficient to reach professional-level game-theoretic reasoning. (iii) Action–reasoning consistency remains imperfect. AC scores are relatively stable across models (1.53–1.87) yet remain below the professional baseline of 2.0. Even the strongest model, o4-mini, reaches 1.85 but still shows knowing–doing gaps where reasoning diverges from action. To directly assess this, we compute mismatch proportions in Appendix C.5, which align with the AC values and confirm AC as both a valid proxy for, and evidence of, the knowing–doing gap.
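The scoring protocol in this subsection (three judge scores per trace, each in {0, 1, 2}, for each of HR, FA, and AC) can be summarized per model with a simple aggregation. The sketch below assumes that judge scores are averaged per trace and then averaged over traces; the paper does not spell out the aggregation rule, so this averaging, the function names, and the example scores are all assumptions.

```python
from statistics import mean

METRICS = ("HR", "FA", "AC")

def trace_score(judge_scores):
    """Average the judges' 0-2 scores for one reasoning trace.

    judge_scores -- list of dicts (one per judge) mapping metric -> 0, 1, or 2
    """
    for scores in judge_scores:
        for metric in METRICS:
            if scores[metric] not in (0, 1, 2):
                raise ValueError(f"{metric} score must be 0, 1, or 2")
    return {m: mean([s[m] for s in judge_scores]) for m in METRICS}

def model_score(traces):
    """Average the per-trace scores over all traces of one model."""
    per_trace = [trace_score(t) for t in traces]
    return {m: mean([p[m] for p in per_trace]) for m in METRICS}

# Made-up judge outputs for two traces, three judges each.
traces = [
    [{"HR": 2, "FA": 1, "AC": 2},
     {"HR": 2, "FA": 1, "AC": 2},
     {"HR": 1, "FA": 1, "AC": 2}],
    [{"HR": 0, "FA": 0, "AC": 2},
     {"HR": 0, "FA": 0, "AC": 2},
     {"HR": 0, "FA": 0, "AC": 2}],
]
summary = model_score(traces)
print(summary)
```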

C.5 Human-in-the-Loop Evaluation for LLMs’ Reasoning

To validate the reliability of LLM-based judging, we conduct a human-in-the-loop evaluation. Drawing on professional-style reasoning (Appendix B.6) and our behavior cloning prompt template (Appendix D.3), we use GPT-5 to curate 20 reasoning traces and have them scored by the LLM judges. All of these traces receive the maximum score of 2, showing strong alignment with human judgments, which we include as a reference for our analysis.

C.6 Calibration and Validation of our LLM-as-a-Judge Score

In this subsection, we detail how we calibrate and validate our LLM-as-a-Judge scores. Judge calibration. In Appendix C.3, we apply the LLM-as-a-Judge approach with three metrics, Heuristic Reasoning (HR), Factual Alignment (FA), and Action–reasoning Consistency (AC), each on a 0–2 scale. To calibrate this scale, we iteratively refined the HR/FA/AC rubrics and judge prompts using a small pilot set of representative hands.

- General procedure. We collect a small set of clearly good, medium, and poor reasoning traces for each dimension, manually assign target scores (0/1/2), and refine the textual criteria until the judge consistently reproduces the correct scores.

- HR calibration. We anchor the “0/1/2” rubric using examples that are (i) purely heuristic, (ii) partially grounded but inconsistent, and (iii) strongly aligned with game-theoretic principles (e.g., pot odds, range interactions).

- FA calibration. We provide objective poker quantities (equities, ranges, pot odds) from external solvers and instruct the judge to score only factual correctness.

- AC calibration. We explicitly instruct the judge to verify that the reasoning logically implies the same action as the final decision.

Judge validation. Following the protocol in Sec. 3.3, we manually curate 20 professional-style reasoning traces and have them scored by the LLM judges. All of these traces receive the maximum score of 2, showing strong alignment with human judgments.

Sensitivity and inter-rater LLM agreement. Our LLM-as-a-Judge results in Tab.2, Tab.4, and Fig.2 are consistent across two distinct poker environments (Leduc and Limit Hold’em), indicating that the judge is not domain-sensitive.

To further assess inter-rater agreement, we re-evaluate ToolPoker’s Limit Hold’em reasoning traces using GPT-5 as the judge (instead of the GPT-4.1-mini judge used in the main paper). All settings follow Section 5.3. The results are reported in Tab.6. From the table, we observe close agreement between the two judge models, validating the robustness of our evaluation and reducing concerns about prompt sensitivity or model-specific bias.

Table 6: Inter-rater agreement: LLM-as-a-Judge scores (0–2) on ToolPoker’s reasoning traces in Limit Texas Hold’em. We compare the original judge (GPT-4.1-mini) with another judge (GPT-5).

Appendix D Full Details of BC-RIRL

D.1 Full Details of Regret-Inspired RL Fine-Tuning

While BC helps LLMs imitate expert play, its limited dataset size and imitation-based nature make it insufficient for professional-level performance. As an initial attempt to refine policies beyond imitation, we explore a regret-inspired reinforcement learning (RIRL) framework. Prior approaches in both traditional RL (Heinrich and Silver, 2016; Zhao et al., 2022) and LLM-based RL (Guo et al., 2025) typically rely on outcome-based rewards (e.g., win/loss). However, in poker, and especially in multi-round games such as Leduc Hold’em and Texas Hold’em, these sparse and noisy signals fail to capture the contribution of individual actions. To address this, we experiment with a step-level regret-guided reward that leverages signals from a pre-trained CFR solver, aligning fine-tuning with the principle that minimizing cumulative regret drives convergence to the Nash equilibrium.

Regret-guided Reward Design. Inspired by our analysis in Sec. 3.2, which highlights CFR as the state-of-the-art algorithm for approaching Nash equilibrium in imperfect-information games, we explore optimizing LLMs through regret minimization. Our key idea is to compute cumulative regrets with CFR and transform them into fine-grained reward signals that estimate each action’s contribution. For a policy $\pi_{\theta}$ acting as player $i$, the cumulative regret of action $a_{i}^{t}$ at time $t$ is defined as:

$$r_{t}(a_{i}^{t})=r_{t-1}(a_{i}^{t})+I_{t}(a_{i}^{t}),\quad I_{t}(a_{i}^{t})=u(\sigma_{t}^{a_{i}^{t}},\sigma_{t}^{-i})-u(\sigma_{t}),\tag{6}$$

where $\sigma_{t}$ denotes the strategy profile at time $t$, $\sigma_{t}^{-i}$ is the opponents’ strategy, $u(\sigma_{t})$ is the expected utility under $\sigma_{t}$, and $u(\sigma_{t}^{a_{i}^{t}},\sigma_{t}^{-i})$ is the utility when player $i$ deviates to action $a_{i}^{t}$. The instantaneous regret $I_{t}(a_{i}^{t})$ measures how much better or worse $a_{i}^{t}$ performs relative to the current mixture strategy, while $r_{t}(a_{i}^{t})$ aggregates this over time. To compare actions within the same decision point, we normalize regrets into a relative reward signal:

$$R(a_{i}^{t})=\frac{r_{t}(a_{i}^{t})-\text{mean}\left(\{r_{t}(a_{j})\}_{j=1}^{|\mathcal{A}|}\right)}{F_{\text{norm}}\left(\{r_{t}(a_{j})\}_{j=1}^{|\mathcal{A}|}\right)},\tag{7}$$

where $F_{\text{norm}}$ denotes a normalization factor, chosen as the standard deviation in our implementation.
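The two steps above (accumulating regrets and normalizing them into a per-action reward) can be sketched for a single decision point as follows. The utilities are made-up numbers standing in for solver outputs; using the population standard deviation and a small `eps` in the denominator are assumptions of this sketch, since the text only states that the normalization factor is the standard deviation.

```python
from statistics import mean, pstdev

def instantaneous_regrets(action_utilities, strategy):
    """I_t(a) = u(deviate to a) - u(current strategy) at one decision point."""
    strategy_utility = sum(p * u for p, u in zip(strategy, action_utilities))
    return [u - strategy_utility for u in action_utilities]

def accumulate(previous_regrets, inst_regrets):
    """r_t(a) = r_{t-1}(a) + I_t(a)."""
    return [r + i for r, i in zip(previous_regrets, inst_regrets)]

def normalized_rewards(cumulative_regrets, eps=1e-8):
    """R(a) = (r_t(a) - mean) / std over the actions at this decision point.

    eps guards against division by zero when all regrets are equal
    (an assumption of this sketch).
    """
    mu = mean(cumulative_regrets)
    sigma = pstdev(cumulative_regrets)
    return [(r - mu) / (sigma + eps) for r in cumulative_regrets]

# Hypothetical utilities for (fold, call, raise) under a uniform strategy.
utilities = [-1.0, 0.0, 1.0]
uniform = [1 / 3, 1 / 3, 1 / 3]

inst = instantaneous_regrets(utilities, uniform)
cumulative = accumulate([0.0, 0.0, 0.0], inst)
rewards = normalized_rewards(cumulative)
print(rewards)
```

Because the rewards are centered and scaled within each decision point, an action is rewarded only relative to the alternatives available at that state, which is what makes the signal step-level rather than outcome-level.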

Fine-tuning Objective. Based on this signal, we fine-tune the LLM policy via PPO (Schulman et al., 2017) with the following clipped RL objective:

$$\mathcal{L}_{\text{PPO}}(\theta)=-\mathbb{E}_{x\sim\mathcal{D}_{s},\,y\sim\pi_{old}(\cdot|x)}\left[\min\left(\frac{\pi_{\theta}(y|x)}{\pi_{old}(y|x)}A,\ \text{clip}\left(\frac{\pi_{\theta}(y|x)}{\pi_{old}(y|x)},1-\epsilon,1+\epsilon\right)A\right)-\beta\,\mathbb{D}_{\text{KL}}\left(\pi_{\theta}(\cdot|x)\,\|\,\pi_{ref}(\cdot|x)\right)\right],\tag{8}$$

where $\pi_{\theta}$ and $\pi_{old}$ denote the current and previous policy models, respectively, and $\epsilon$ is the clipping hyperparameter. $\pi_{ref}$ is the reference policy that regularizes the update of $\pi_{\theta}$ via a KL-divergence penalty, measured by $\mathbb{D}_{\text{KL}}$ and weighted by $\beta$. Generalized Advantage Estimation (GAE) (Schulman et al., 2015) is used to compute the advantage estimate $A$. $x$ denotes the input samples drawn from $\mathcal{D}_{s}$, which is composed of trajectories generated by the current policy $\pi_{\theta}$, and $y$ is the output generated by the policy LLM $\pi_{\theta}(\cdot|x)$. The trajectory collection procedure is detailed in Appendix D.4.
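To make the clipped objective concrete, the sketch below evaluates the loss for individual samples from log-probabilities. It uses the standard PPO form, in which the clipped ratio is also multiplied by the advantage, and it approximates the KL term by the sample log-ratio between the current and reference policies. The KL estimator, the default values of `clip_eps` and `beta`, and the function names are assumptions for illustration rather than the paper's training configuration.

```python
import math

def ppo_sample_loss(logp_new, logp_old, logp_ref, advantage,
                    clip_eps=0.2, beta=0.01):
    """Clipped PPO loss with a KL penalty for one sampled output y given x.

    logp_new -- log pi_theta(y|x) under the current policy
    logp_old -- log pi_old(y|x) under the policy that generated y
    logp_ref -- log pi_ref(y|x) under the frozen reference policy
    """
    ratio = math.exp(logp_new - logp_old)
    clipped_ratio = max(1.0 - clip_eps, min(ratio, 1.0 + clip_eps))
    surrogate = min(ratio * advantage, clipped_ratio * advantage)
    kl_estimate = logp_new - logp_ref  # single-sample estimate (assumption)
    return -(surrogate - beta * kl_estimate)

def ppo_batch_loss(samples, clip_eps=0.2, beta=0.01):
    """Average the per-sample loss over (logp_new, logp_old, logp_ref, A) tuples."""
    losses = [ppo_sample_loss(n, o, r, a, clip_eps, beta)
              for n, o, r, a in samples]
    return sum(losses) / len(losses)

# Ratio of 1.5 with a positive advantage: the gain is capped at 1 + clip_eps.
capped = ppo_sample_loss(math.log(1.5), 0.0, 0.0, advantage=1.0, beta=0.0)
# Same ratio with a negative advantage: the unclipped (worse) term is kept.
uncapped = ppo_sample_loss(math.log(1.5), 0.0, 0.0, advantage=-1.0, beta=0.0)
print(capped, uncapped)
```

The asymmetry between the two cases is the point of taking the minimum: the policy cannot profit from moving far beyond the clipping range, but it is still fully penalized for moving in a harmful direction.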

D.2 Full Details of Comparison Results

We evaluate whether BC-RIRL improves LLMs’ poker performance by fine-tuning Qwen2.5-7B and comparing against both traditional methods and vanilla LLMs. Results in Kuhn Poker and Leduc Hold’em are reported in Tab. 3. We highlight three key findings: (i) All RL-based fine-tuning variants improve performance in Kuhn Poker. This suggests that both outcome-based and regret-guided feedback provide useful learning signals in simple environments with limited strategy space. (ii) BC-RIRL surpasses direct prompting and BC-SPRL in Leduc Hold’em, though it still trails traditional algorithms such as CFR+. For example, BC-RIRL gains 17.0 chips against GPT-4.1-mini, while still losing 34.0 chips against CFR+. This indicates that regret-guided dense feedback is more effective than sparse outcome-based rewards in complex tasks, but is insufficient to reach equilibrium-level play. (iii) Pure RIRL without the BC stage does not yield improvements in Leduc Hold’em. For instance, BC-RIRL and BC-SPRL gain +17.0 and −64.5 chips against GPT-4.1-mini, respectively. This underscores the importance of BC in establishing a strong foundation of expert-like reasoning before RL fine-tuning.